Abstract

To improve postschool outcomes for students with disabilities, research in secondary transition has increasingly aimed to identify in-school experiences that contribute to positive outcomes after high school. Although research on postschool success for this population has recently identified seven new predictors, operational definitions and essential program characteristics remain missing. Therefore, the purpose of this Delphi study was to operationally define the seven newest predictors of postschool success in secondary transition and identify their essential program characteristics. Experts in the field of secondary transition reached consensus on an operational definition and a set of essential program characteristics for each of the seven newest predictors of postschool success. These definitions and essential program characteristics provide additional information needed to develop, implement, and evaluate effective secondary transition programs. Moreover, these results continue to move research to practice in meaningful and actionable ways. Suggestions for further research and implications are discussed.

In the evidence-based era of secondary transition (Test & Fowler, 2018), research has increasingly aimed to identify in-school experiences that contribute to positive outcomes after high school for students who experience disability. The need for this work is grounded in decades of research showing that students who experience disability have poorer outcomes than their peers who do not experience disability. The search to identify circumstances and experiences that contribute to positive postschool outcomes, primarily employment outcomes, began in the 1980s as researchers conducted follow-up and follow-along studies (see Alverson et al., 2010) with individuals who received or were receiving special education services following the enactment of the Education for All Handicapped Children Act (1975). Passage of the Individuals with Disabilities Education Improvement Act (IDEIA) in 2004 mandated the individualized education program include “. . . a statement of the special education and related services and supplementary aids and services, based on peer-reviewed research to the extent practicable” (34 C.F.R. §300.320). In response to the need for peer-reviewed research, Test et al. (2009) published a seminal systematic literature review in which 16 evidence-based predictors of postschool success (PPSS) were identified from high-quality correlational research. In general, a predictor is “used to estimate, forecast, or project future events or circumstances” (American Psychological Association, n.d.). In the context of secondary transition PPSS, the in-school experiences identified by Test et al. reflected a variety of programs (e.g., vocational education), skills (e.g., social skills), and activities (e.g., community experiences) shown to increase the likelihood of students achieving a positive outcome related to further education, employment, and/or independent living after high school.

Following the foundational work of Test et al. (2009), Mazzotti and colleagues (2016, 2021) conducted two systematic literature reviews in which they identified seven additional PPSS (goal setting, parent expectations, psychological empowerment, self-realization, technology skills, travel skills, and youth autonomy) and found further evidence supporting one or more of the original 16 predictors. To date, 23 predictors have been identified as supporting positive postschool outcomes based on high-quality, correlational research.

Absent from the original research studies were operational definitions with essential program characteristics that school practitioners could use to develop, implement, or evaluate existing secondary transition programs. To remedy this gap in the literature, Rowe et al. (2015) used a Delphi process to operationally define the original 16 predictors and identify their essential program characteristics. This work contributed to efforts to move research to practice (e.g., Trainor et al., 2020) by providing a structure for practitioners at the state, district, and school levels to develop, implement, and evaluate secondary transition programs using the best available evidence. Furthermore, operationally defining predictors and identifying their program characteristics is consistent with the formation of evidence-based education, in which research findings are identified and made available to educators and other practitioners in a form that can be implemented (Alverson, 2020; Cook & Cook, 2013; Davies, 1999).

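Although research on postschool success for youth and young adults experiencing disability has continued to evolve and additional predictors continue to be described, operational definitions and essential program characteristics remain missing from the literature base on which the predictors were determined. Therefore, the purpose of this study was to operationally define the seven newest predictors and identify their essential program characteristics. The research questions for this study were: (1) What is an operational definition for each of the seven most recently identified PPSS? and (2) Based on the operational definition identified through consensus of experts in a Delphi procedure, what are the essential program characteristics for each predictor?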

Method

Delphi Technique

We used a Delphi technique to establish consensus on the operational definitions and essential program characteristics of the seven new PPSS in secondary transition. Delphi is a group processing procedure used to solicit input from experts and reach consensus (Barrios et al., 2021) through a structured and systematic approach to data collection, particularly when empirical data are otherwise unavailable (Linstone & Turoff, 2002). A primary feature of the Delphi is anonymous expert opinions given through iterative and structured rounds of input and voting (Diamond et al., 2014). Strengths of the technique include a structured communication method for knowledgeable individuals to express diverse perspectives and ongoing feedback, the ability to revise previous contributions throughout the process, and ensuring anonymity of expert panel members (Hsu & Sandford, 2007).

Procedure

The Delphi technique consisted of (a) identifying and securing experts; (b) creating and distributing an electronic questionnaire for voting; (c) soliciting experts’ input regarding the operational definition and program characteristics; (d) analyzing responses, including revising statements based on experts’ input; and (e) reaching consensus by the experts. Steps (b) through (d) continued until consensus was reached on a definition and each characteristic.

Identifying and Securing Experts

Experts for this study met at least one of the following criteria: (a) an author of scholarly, peer-reviewed content on one or more of the seven predictors being operationalized, (b) expertise related to a specific youth population (e.g., youth who drop out, disability group), or a specific postschool outcome category (e.g., postsecondary education, employment); or (c) a practitioner (e.g., secondary special or general education teacher, service provider) with 10 or more years of service to secondary age youth who experience disability.

Potential experts were identified from among (a) the authors of the studies used to establish the predictor, (b) other researchers with expertise in a specific predictor area, (c) advisory board members for the National Technical Assistance Center on Transition: the Collaborative, and/or (d) practitioners working with schools and students. Initial and follow-up invitations to participate in the study were sent to 96 experts (n = 42 researchers; n = 54 practitioners), of whom 35 (n = 6 researchers and n = 29 practitioners) consented, via an approved Institutional Review Board process, to participate in the study. This number is consistent with the commonly reported number of participants in the literature (see Hsu & Sandford, 2007; Shang, 2023). Over time, four consented practitioner participants withdrew for unknown reasons, leaving 31 participants. Of these, 19 were practitioners at the local school or district level, eight had expertise in postsecondary employment and/or education, seven identified expertise related to intellectual and developmental disabilities, 13 specified expertise in more than one disability category (e.g., autism and specific learning disability, behavioral health, significant complex needs, all disabilities), and one specified expertise in visual impairment. In total, 24 identified as women; 27 identified as Caucasian; 17 reported having a master’s degree; four identified as experiencing a disability; and 18 reported being the parent, or in the parent role, of a child experiencing disability (see Table 1).

Demographics for Expert Panel Participants.

Notes. The expert panel included a total of 31 participants, 25 (81%) of whom were practitioners, and 6 (19%) of whom were researchers. Participants were counted in more than one category for practitioner role and researcher expertise as appropriate.

Creating and Distributing the Questionnaire

We created each questionnaire within Qualtrics, an online survey platform. In round 0, we entered verbatim descriptors and/or definitions from the original studies and literature reviews used to identify the seven new predictors (see Mazzotti et al., 2016, 2021) into a textbox question format. Experts were asked “How would you operationally define [predictor] for secondary transition programs?” and “What are the program characteristics of [predictor] for secondary transition programs?” Each question was followed by an open textbox with unlimited character space to allow experts to respond to the question separately. In subsequent rounds, responses were organized using the rank order, multiple choice question, and matrix table formats with an open textbox for respondents’ comments accompanying each phrase. Subsequent rounds required experts to indicate their choice/s by ranking, selecting, and voting on definitions and characteristics.

We distributed each questionnaire via email containing a Qualtrics link. The questionnaire was available to experts for 7 to 28 days, with the voting window being longer during the early voting rounds when experts had more phrases to consider. Reminders and thank you prompts were sent to the experts midway through each voting window and a few days prior to closing the survey when the voting window was long (Dillman, 2007). During voting, respondents could return to the survey to continue or revise their responses as frequently as needed. To mitigate survey ordering effects, both the predictor survey blocks and corresponding phrases were randomized (Perreault, 1975).

Soliciting Input

With each round of input and voting (i.e., rank ordering definitions and essential program characteristics), experts were asked to review the phrase, add what they thought was missing, suggest revisions, or argue for or against the phrasing. Space was provided following each phrase, as well as at the end of the predictor set, for experts to provide input on a specific phrase (e.g., elaborate, provide a justification), or make a general comment.

Analyzing Responses

In all rounds of voting, once the voting window closed, predictors were assigned to pairs within the research team, and analysis followed three general steps. Researchers looked at the results individually, then in pairs, and finally as a group. Individually, researchers structured the data for easy review by (a) transposing experts’ comments from a vertical view to a horizontal view in an Excel spreadsheet for improved readability; (b) calculating a total score for each phrase by summing the rank orders assigned to a phrase by all experts; and (c) creating a line graph for each predictor from lowest total score to highest total score (i.e., most to least preferred ranking) for visual analysis (Kennedy, 2005; Rowe et al., 2015). Each data point on the line graph represented a different phrase for the given predictor. For example, if the predictor had 16 phrases for a given round, there were 16 data points on the graph, each representing the total score of one phrase. Use of visual analysis is routine in single-subject research to determine trend, stability, and level (Lane & Gast, 2013). Similarly, it is used in exploratory factor analysis and principal component analysis when examining a scree plot to determine when the line no longer shows a steep incline or decline (i.e., significant change; Cattell, 1966; Tabachnick & Fidell, 2007), and has been used in other Delphi studies (see Beiderbeck et al., 2021). Specific to this Delphi, we examined each predictor graph to determine the point at which there was a visually definitive difference in rank scores in order to confirm cut points. Individual researchers made minor edits (e.g., corrected typos), added a consistent stem (e.g., [predictor] refers to . . .), or drafted initial responses to comments (e.g., combine items c and d).

Next, the researchers met in pairs and (a) identified a potential cut point based on visual analysis; (b) discussed experts’ comments; and (c) compared notes for possible revisions in response to comments. When a pair could not decide on a revision, the full research team discussed options and made the decision. Research pairs calculated and reviewed cut scores for each predictor. Cut scores were incorporated to complement the visual analysis when determining which phrases to retain. There is currently no consensus in the Delphi literature on how to determine cut points within each successive round. Diamond et al. (2014) found the most common consensus method was by percent agreement with percentages ranging from 50% to 97% and a median threshold of 75%. We used a combined approach of identifying the 75% cut point for each predictor (i.e., retaining definitions and characteristics that fell within the top 25%) and visually analyzing data to ensure each mathematical cut point was logical given the dataset. To calculate cut points, we multiplied the number of expert responses by the number of definition statements presented for each predictor, which gave the least preferred (i.e., highest) possible total score. We then subtracted the number of responses, divided that number by four, and added back the number of expert responses, which represented the most preferred (i.e., lowest) possible total score. For example, to identify the numerical cutoff for the top 25% of a predictor with six definitional phrases and 25 reviewer responses in one round, the calculation would be as follows: the most preferred score would be 25 × 1 = 25 (if all 25 reviewers ranked the phrase as 1), and the least preferred score would be 25 × 6 = 150 (if all 25 reviewers ranked the phrase as 6). Therefore, the top 25% is calculated as (150 − 25)/4 + 25 = 56.25, or approximately 56.
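The cut point arithmetic described above can be sketched in a few lines of Python. This is an illustrative sketch only: the function name and parameter names are hypothetical and do not come from the study, and the visual analysis that accompanied each numerical cut point is not represented.

```python
def top_quartile_cut_point(n_responses: int, n_phrases: int) -> float:
    """Return the total rank score marking the top 25% of the possible range.

    Hypothetical helper that follows the arithmetic in the text:
    (worst possible total - best possible total) / 4 + best possible total.
    """
    best_total = n_responses * 1           # every expert ranks the phrase 1
    worst_total = n_responses * n_phrases  # every expert ranks the phrase last
    return (worst_total - best_total) / 4 + best_total


# Worked example from the text: six definitional phrases, 25 responses.
cut_point = top_quartile_cut_point(n_responses=25, n_phrases=6)
print(cut_point)  # 56.25, which rounds to the 56 reported in the text
```

Phrases whose summed rank score fell at or below this value would sit within the top 25% of the possible score range for that predictor and round.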

Finally, the entire research team met and visually analyzed the graphs to determine the natural breaking points compared to the mathematical cut score to confirm our decision to include or exclude a phrase. We then discussed and agreed on revisions to incorporate into the next voting round. Although as a research team, we did not set an a priori criterion of 100% agreement when making a revision, this was our intuitive practice when crafting a revision. We continued re-wording the phrase until we agreed on the revision. During team review, we employed best practices in qualitative research by maintaining an audit trail; using multiple perspectives for data interpretation; acknowledging researcher perspectives, beliefs, and biases; and returning to the verbatim comments when questions arose (Brantlinger et al., 2005; Whittemore et al., 2001). To ensure experts’ voices were maintained and centered throughout the revision process, we used [brackets] and strikethrough to show experts our additions and deletions, respectively. After revising the phrases, we reviewed experts’ comments to ensure revisions were consistent with their suggestions. Specific procedures for each round are described under data analysis.

Reaching Consensus

Consensus was attained through an iterative process of experts responding to a series of questionnaires. An a priori consensus threshold was set at 75% agreement based on recent reviews of Delphi thresholds (Barrios et al., 2021; Diamond et al., 2014). Across all voting rounds, the percentage of agreement was calculated as the number of “accept” votes divided by the number of “accept” votes plus the number of “reject” votes times 100.
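As a minimal sketch of the agreement calculation, the following Python computes the percentage of "accept" votes and compares it with the 75% threshold. The function names and the vote counts in the example are hypothetical, and treating a result exactly at 75% as meeting consensus is an assumption; the text does not state how a tie with the threshold was handled.

```python
def percent_agreement(accept: int, reject: int) -> float:
    """Accept votes divided by accept plus reject votes, times 100."""
    total = accept + reject
    if total == 0:
        raise ValueError("at least one accept or reject vote is required")
    return 100 * accept / total


def meets_consensus(accept: int, reject: int, threshold: float = 75.0) -> bool:
    """Assumption: agreement at or above the threshold counts as consensus."""
    return percent_agreement(accept, reject) >= threshold


# Hypothetical vote counts, for illustration only.
print(percent_agreement(20, 5))  # 80.0
print(meets_consensus(20, 5))    # True
print(meets_consensus(18, 7))    # False (72% agreement)
```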

Data Analysis

Data analysis differed between the identification of the definition and the essential program characteristics. The predictor definitions required participants to reach consensus on a single definition, yet each definition would have multiple corresponding essential program characteristics without a minimum or maximum set number. Therefore, it was up to the experts to identify the essential program characteristics for each predictor based on what they believed would be needed to implement or evaluate the predictor, given the agreed-upon definition. Experts first reached consensus on the definitions and then on the essential program characteristics.

Defining Predictors

Round 0

In round 0, we distributed descriptors to the experts with two prompts: (a) how would you operationally define [the predictor] for secondary transition programs?; and (b) what program characteristics would be needed if the predictor were to be implemented in a secondary transition program? Experts were told they could repeat the language/phrase used in the original research, expand, revise, or delete the language provided, or introduce new language when writing a definition. They were not limited in the number of definitions or program characteristics they could recommend. In total, experts suggested 25 or 26 phrases for each definition (N = 181 phrases) and 24 or 25 characteristic phrases per predictor (N = 174 phrases).

Analysis in round 0 consisted of the research team (a) reviewing comments for each suggested definition and characteristic, (b) separating phrases when a suggestion contained multiple, independent phrases (e.g., “do an internet search, using apps to be more productive, Microsoft Office suite, & Google Workspace”), (c) eliminating duplications, (d) adding a consistent stem, and (e) making grammatical edits. For example, when defining parent expectations, the suggestion: “the need to define what after high school looks like for each student/family depending on needs and environment” was revised to read, “[Parent expectations refers to] defin[ing] what after high school looks like for each student/family depending on needs and environment.” We used [brackets] and strikethrough, respectively, to show revisions made by the research team. Only duplicated phrases were eliminated in round 0.

Round 1

In round 1, experts were given between 19 and 26 definition phrases per predictor and between 20 and 74 characteristics per predictor, after eliminating duplications. Considering first the definition phrases, then the characteristic phrases, experts were asked to rank the phrases from most preferred (rank of 1) to least preferred. We analyzed data by (a) summing the ranks experts assigned to a phrase to obtain a total ranked score for each phrase (e.g., 1 + 10 + 1 + 9 + 1 + 3 + 7 + 17 + 7 + 4 + 1 + 7 + 24 + 14 + 3 = 109); (b) graphing the total ranked scores from smallest to largest (most to least preferred) in a line graph; (c) calculating a numerical cut point to identify the top 25% of phrases; (d) conducting visual analysis in tandem with examining the numerical cut point to determine which phrases to retain; (e) examining clusters of phrases identified through the visual analysis to ensure key constructs were reflected; and (f) reviewing comments to determine whether and, if so, how to revise phrases for further voting.
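Steps (a) and (b) above, summing ranks and ordering phrases from most to least preferred, can be sketched as follows. The ranks for `phrase_a` are the 15 values from the example in the text; the other two phrases, all phrase labels, and the function names are hypothetical and included only so the ordering has something to sort.

```python
def total_rank_scores(ranks_by_phrase: dict[str, list[int]]) -> dict[str, int]:
    """Sum the ranks that experts assigned to each phrase."""
    return {phrase: sum(ranks) for phrase, ranks in ranks_by_phrase.items()}


def order_by_preference(totals: dict[str, int]) -> list[tuple[str, int]]:
    """Sort phrases from smallest total (most preferred) to largest."""
    return sorted(totals.items(), key=lambda item: item[1])


ranks_by_phrase = {
    # Ranks taken from the worked example in the text (sum is 109).
    "phrase_a": [1, 10, 1, 9, 1, 3, 7, 17, 7, 4, 1, 7, 24, 14, 3],
    # Hypothetical ranks, for illustration only.
    "phrase_b": [2] * 15,
    "phrase_c": [20] * 15,
}

totals = total_rank_scores(ranks_by_phrase)
ordered = order_by_preference(totals)
print(ordered)  # [('phrase_b', 30), ('phrase_a', 109), ('phrase_c', 300)]
```

The ordered totals are the values that would be plotted on the line graph for visual analysis and compared against the numerical cut point.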

When results of the visual analysis and numerical cut scores were different, or when there was a cluster of definitions or characteristics with a rank score close to the cut point, we returned to the data and reviewed experts’ comments and the individual phrases within the cluster to determine whether an outlying statement was similar or different from those included within the cut range (i.e., the lowest score to the cut point). When the outlying phrase was similar to those within the range, we analyzed the outlying phrase for words or phrases to include in a similar definition within the cut range. For example, in the self-realization predictor, the lowest scoring definition (i.e., representing the most preferred definition) was scored 59, and there was a cluster of eight definitions with total rank scores between 79 and 118. The visual analysis cut point was 118, and the calculated cut point was 91. We interpreted visually clustered data as an indicator for further analysis due to data instability (Kennedy, 2005). In these instances, we analyzed data for similarities and differences, and extended the cut-off range when the visual cluster contained unique data. To determine where to make the cut point, we reviewed each definition and any comments in the cluster of scores between 79 and 118. With this review, we determined that two of the eight definitions within the cluster were unique and that six definitions could be combined into an existing definition. Thus, the final cut point was 118, which resulted in four unique definitions for the experts to consider in round 2.

At the end of round 1, given the large number of phrases being considered for the definitions and characteristics, and the need to determine characteristics based on a set definition, we disseminated only the definition phrases in round 2. In this way, focus was on defining the predictor, and then identifying corresponding characteristics based on the consensus definition. This process was consistent with the procedures used by Rowe et al. (2015).

Round 2

In round 2, experts were given between 1 and 6 proposed definitions per predictor and asked to either (a) vote to accept/reject the proposed definition when there was only one proposed definition to consider, or (b) rank order each definition from most preferred (i.e., rank of 1) to least preferred when there were multiple definitions to consider. When voting to accept or reject the proposed definition, analysis consisted of calculating the percent of agreement and reviewing any comments for potential changes that would necessitate further voting. For predictors not being voted on, we followed the steps outlined in round 1 for analysis. We incorporated minor word edits into the proposed definitions based on experts’ suggestions when consensus was not met. Experts reached consensus on the definition of travel skills in round 2.

Round 3

In round 3, experts were given between 1 and 3 proposed definitions per predictor. Analysis followed the same steps outlined in previous rounds. When voting to accept or reject a proposed definition, analysis consisted of calculating the experts’ percent of agreement and reviewing any comments for potential changes that would necessitate further voting. At this point in voting, experts’ comments/suggested revisions related to one or two phrases in each proposed definition (e.g., [use the word] “capacity,” or “demonstrating versus acting”; or “belief versus perception”). The research team edited the phrases in alignment with experts’ comments and disseminated the revisions for voting. After round 3, experts reached consensus on the definitions for goal setting and technology skills.

Round 4

In round 4, experts were given 1 to 2 proposed definitions for the remaining predictors and asked to vote to accept or reject each one. When voting, analysis consisted of calculating the experts’ percent of agreement consistent with prior calculations. When consensus was not met, minor word edits were incorporated into the proposed definitions consistent with experts’ suggestions (e.g., adding/excluding the word “capacity”) for consideration in the next voting round. After round 4, experts reached consensus on the definitions for parent expectations, self-realization, and psychological empowerment.

Round 5

In round 5, experts were given two proposed definitions for youth autonomy and asked to vote to accept or reject each one. Based on comments received in round 4, experts were also asked to indicate whether they preferred the term “independently” or the phrase “with a sense of self-governance” in the proposed definitions. The use of these two phrases was about equally represented in the comments in round 4. Analysis for consensus consisted of calculating the percent of agreement for the proposed definitions. Since consensus was not met for either proposed definition, we crafted a definition that combined both definitions, incorporating experts’ comments from the two rejected definitions, and disseminated it for voting.

Round 6

In round 6, experts were given one proposed definition for youth autonomy and asked to vote to accept or reject it. We continued providing space for the experts to comment and suggest edits. Analysis consisted of calculating agreement and reviewing comments. After round 6, experts reached consensus on the definition of youth autonomy.

Identifying Essential Program Characteristics

Round 1

After round 0, described previously, experts had suggested 174 characteristics statements. Many of the suggested statements contained multiple independent statements. For example, an expert suggested the program characteristics for psychological empowerment were, “Provide opportunities for self-exploration; provide opportunities to learn skills/behaviors that will support impact of personal outcomes; provide opportunities to practice skills/behaviors that support impact of personal outcomes; provide opportunities to evaluate and adjust skills/behaviors to support impact of personal outcomes.” To ensure experts considered each statement separately, we disaggregated statements with multiple independent phrases and eliminated duplications. This resulted in 285 independent phrases (20 to 74 phrases per predictor) to consider. We also corrected typos, added a stem as appropriate, and again used [brackets] and strikethrough to indicate changes made by the researchers. In round 1, experts were asked to rank the characteristics from most preferred (ranked as 1) to least preferred.

Analysis of the characteristics in round 1 consisted of (a) summing the ranks assigned to each phrase to obtain the total rank score; (b) graphing the total rank scores from smallest to largest and conducting a visual analysis to see how the phrases were distributed based on rank order; (c) examining experts’ comments and suggestions to determine whether and how to revise phrases for further voting; and (d) eliminating phrases based on duplications. We did not calculate or apply a cut point for the characteristics at any point. The goal was to identify essential program characteristics needed to implement or evaluate a program, not to reduce the number of characteristics to a predetermined, arbitrary number of characteristics.

Round 2

In round 2, experts were given the consensus definition along with the

Essential [meant] the characteristic must be evident for the program to be considered as (or consistent with) a predictor. This characteristic is necessary for implementation and evaluation of the program’s effectiveness. Ancillary [meant] that the characteristic complements, or adds to, the program, but is not required for implementation or evaluation (i.e., nice addition that expands or diversifies the predictor). Not relevant/exclude from list [meant] the characteristic did not align with the operational definition of the predictor and was not required for program implementation and/or evaluation. (Rowe et al., 2015, p. 5)

We provided space after each phrase and at the end of the list for experts to suggest a revision, argue for or against a characteristic, provide a justification, make a general comment, or add any missing characteristic.

Analysis of round 2 consisted of (a) counting the number of essential, ancillary, and not relevant votes per phrase; (b) examining comments; (c) revising phrases as suggested by the experts (e.g., combine 13 and 17); and (d) eliminating phrases based on the number of votes in each category. When the majority of votes for a phrase were “not relevant,” the phrase was eliminated; “provide opportunities for transition assessments, communication and collaboration” is representative of a phrase experts deemed “not relevant.” Overall, few characteristic phrases met this criterion. When the numbers of votes in the three categories were nearly equal (e.g., 6, 7, and 5, respectively), we examined the phrase and any corresponding comments to determine whether the language and/or construct was reflected in another characteristic, and then either (a) eliminated the phrase because it was duplicative (e.g., “very similar to parent expectations 16, prefer 16 due to clarity”), or (b) revised it based on comments (e.g., “informed choice should be used here”) and sent it out for another round of voting.
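The vote tallying and triage described above might be sketched as follows. Several details here are assumptions rather than the study's decision rules: "majority" is read as more than half of the votes cast, "nearly equal" is approximated as a spread of at most two votes between the largest and smallest category counts, and the label for phrases that meet neither condition is hypothetical. The actual review relied on experts' comments, which this sketch does not model.

```python
from collections import Counter

CATEGORIES = ("essential", "ancillary", "not relevant")


def tally(votes: list[str]) -> dict[str, int]:
    """Count the votes a phrase received in each of the three categories."""
    counts = Counter(votes)
    return {category: counts.get(category, 0) for category in CATEGORIES}


def triage(votes: list[str], near_equal_spread: int = 2) -> str:
    """Suggest a next step for a phrase based on its vote counts.

    Assumptions: majority means more than half of all votes, and
    near_equal_spread is a hypothetical tolerance for "nearly equal".
    """
    counts = tally(votes)
    total = sum(counts.values())
    if total == 0:
        return "no votes"
    if counts["not relevant"] > total / 2:
        return "eliminate"
    if max(counts.values()) - min(counts.values()) <= near_equal_spread:
        return "review comments"
    return "carry forward"


# The near-equal split mentioned in the text: 6, 7, and 5 votes.
split = ["essential"] * 6 + ["ancillary"] * 7 + ["not relevant"] * 5
print(tally(split))   # {'essential': 6, 'ancillary': 7, 'not relevant': 5}
print(triage(split))  # review comments
```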

Round 3

In round 3, experts were given the operational definition and

Round 4

In round 4, experts were given the operational definition and

Results

Response Rate

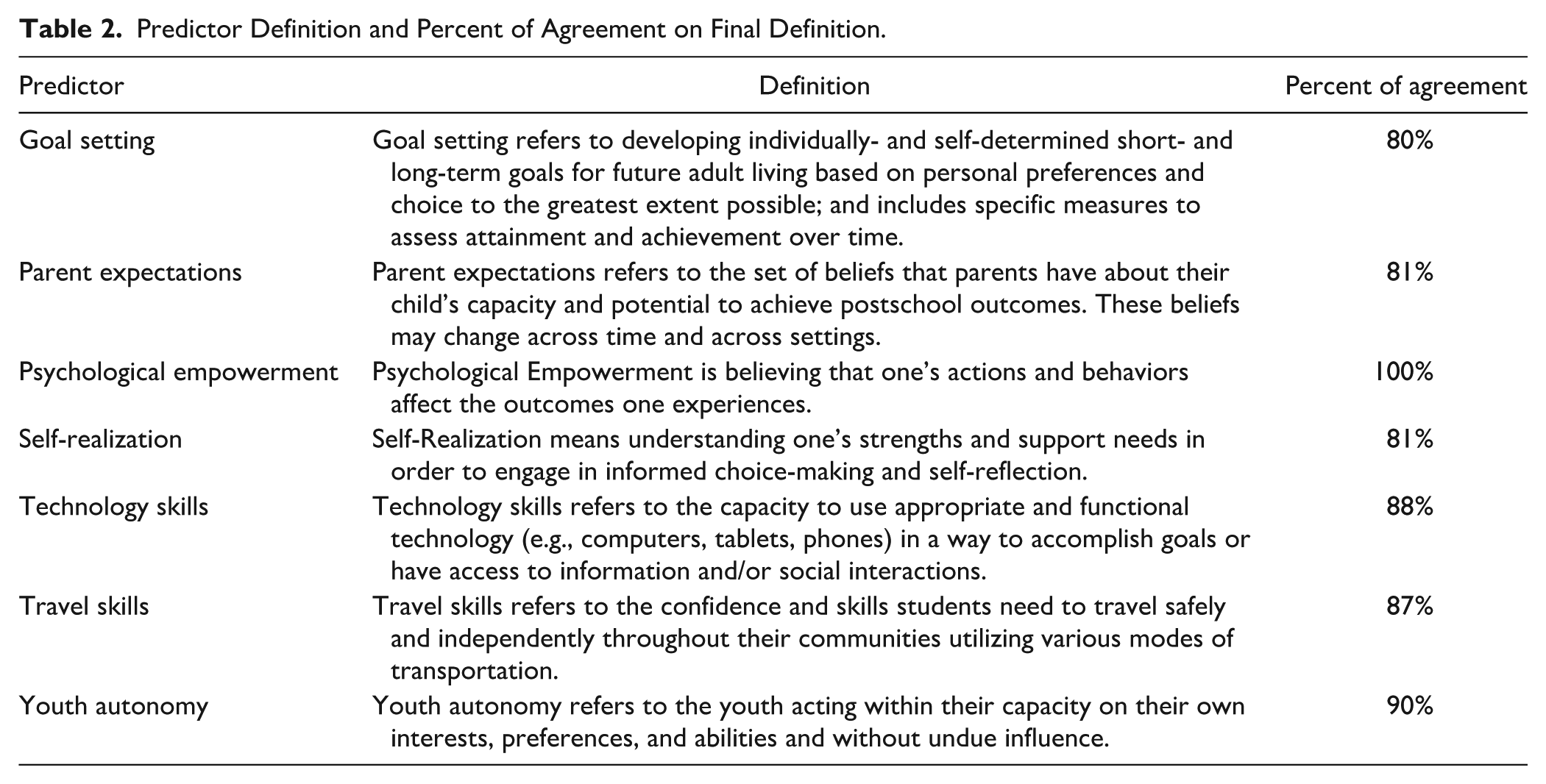

The response rate across the 10 rounds of voting was 71%, with a range of 48% to 90%. Wu et al. (2022) found average response rates for online surveys to be 44.1%. Experts reached consensus at the a priori threshold of 75% agreement on an operational definition for the seven new predictors after six rounds of voting. Agreement percentages ranged from 80% to 100% on each definition (see Table 2). For the essential program characteristics, experts reached consensus after four rounds of voting and agreement percentages ranged from 81% to 100% on each individual essential program characteristic.

Predictor Definition and Percent of Agreement on Final Definition.

Research Questions

This study answered two research questions: (1) What is an operational definition for each of the seven most recently identified PPSS? and (2) Based on the operational definition identified through consensus of experts in a Delphi procedure, what are the essential program characteristics for each predictor? Table 3 contains the operational definition for each of the seven most recently identified PPSS and the essential program characteristics for each predictor as identified by the experts in this study. Supplemental Table S1 includes the 23 PPSS with operational definitions.

Operational Definitions and Essential Program Characteristics of the Seven New Predictors.

Note. IEP = Individualized Education Program.

Discussion

This study operationally defined the seven newest PPSS and identified essential program characteristics for each predictor based on experts reaching consensus using a Delphi technique. Experts in transition for youth experiencing disability reached consensus through an iterative process that invited comments, contributions, modifications, and feedback from diverse and intersecting roles in secondary transition (e.g., parent, researcher, disability identity, area of disability expertise, practitioner).

Limitations

These results should be considered within the context of the following limitations. First, this Delphi technique was conducted entirely online over a period of approximately nine months. In-person voting and conversing would have expedited the voting process and likely resulted in different phrasing of definitions and program characteristics. We structured each questionnaire to give experts space and opportunity to argue for or against particular wording, make suggestions, and voice preferences. We used [brackets] and strikethrough to (a) provide feedback to experts, (b) be transparent in the changes made by the research team, and (c) center experts’ voices. Nevertheless, an in-person process and conversations may have resulted in slightly different wording.

Second, lack of experts’ diversity specific to race and gender is a limitation despite purposefully setting criteria a priori to identify experts based on a variety of expertise (e.g., knowledge of predictor area/s, disability, experience); experts with different lived experiences may have contributed differently to the final definitions and essential program characteristics. All Delphi studies are recognized to have limitations relative to inclusion criteria and selection of experts (Linstone & Turoff, 2002) and this study was not immune. It is possible individuals with different lived experience, race, and/or gender experience the predictors and/or essential program characteristics differently, and therefore would operationalize them differently. Shogren et al. (2018) found students’ self-determination scores varied by race-ethnicity, disability, and socio-economic-status (via proxy measure free/reduced lunch participation). In a phenomenological study with Black students diagnosed with intellectual and developmental disabilities and their families, Scott et al. (2021) reported participants’ perceived obstacles to self-determination were due to race. Implementing the predictors and essential program characteristics by applying a person-family interdependent approach within the context of systems-level interventions (e.g., home, school, work, community) will ensure more inclusive and culturally responsive transition practices (Achola & Greene, 2016).

Third, the overall response rates for each round of voting ranged from 48% to 90%. Response rates were variable despite targeted communication and reminders encouraging experts to complete voting. More participating experts may have resulted in more diverse statements and, ultimately, different definitions and/or essential program characteristics. Further, the Delphi technique may conceal relative hesitancy to reach consensus. For example, one expert accepted a psychological empowerment characteristic in round 3 but noted removing a “dignity of risk” qualifier from the characteristic might result in an unintended consequence of diluting or misinterpreting best practices for psychological empowerment. In later voting rounds for the characteristics, the binary agree or disagree option may have obscured important nuances that inform the strength of convergence and consensus (Goodman, 1987).

Lastly, no research study is free from bias. The research team was an integrated interpreting instrument of this study (Brantlinger et al., 2005). We took several purposeful steps to reduce personal bias and establish trustworthiness in the findings. Specifically, we attempted to plan all procedural decision rules a priori, worked in pairs and as a team when making decisions, and acknowledged and disclosed personal biases as they arose. Nevertheless, these steps may not have precluded researcher bias from influencing decisions related to interpretation and integration of expert comments and feedback.

Implications for Further Research

Building on the findings from this Delphi study and those from Rowe et al. (2015), further research is needed in several areas. First, it is important to determine whether the experts’ operational definitions are consistent with empirical research related to each construct. Specifically, existing research should be examined, or additional empirical research conducted, to determine whether the characteristics identified by the experts are empirically associated with improvements in the predictor (i.e., increased parent expectations, increased psychological empowerment, goal setting, and so forth). Furthermore, if the operational definitions and essential program characteristics are to inform development, implementation, and evaluation of secondary transition programs, then they need to be written in plain, nontechnical language. It is vital to confirm that the definitions and characteristics are written such that practitioners understand the construct and can identify, create, and implement instructional experiences that build opportunities and skill development for students.

Second, research is needed to confirm the extent to which these essential program characteristics are readily evident in practice. Investigating the absence, presence, and feasibility of the essential program characteristics with a broader and culturally and linguistically diverse group of educators, service providers, parents, and students situated in naturally occurring environments will further validate the essential program characteristics identified by the experts engaged in this study. Third, measuring effectiveness, paired with fidelity of implementation, of the essential program characteristics is needed to extend research into practice through actionable and procedural steps. As part of measuring effectiveness, researchers should examine the quality of students’ experiences within the implementation and evaluation of the predictors and the corresponding essential program characteristics. Kutscher and Tuckwiller (2020) reported that former students “. . . emphasized the quality of their relationships [emphasis original] with professionals who respected and encouraged them to pursue their goals” (p. 110). Lastly, empirical research is needed to investigate whether a relationship exists between students experiencing the predictors and characteristics while in high school (i.e., as planned through intentional implementation of the predictors in a student’s individualized education program [IEP]) and students achieving their postsecondary goals stated in the IEP. This research is needed to examine whether there is a link between compliance, malleable program factors, and postschool outcomes. Individual student-level interventions need to be linked to systems-level infrastructure, supports, and interventions to ensure that opportunities are created for students to access and that students are, in fact, engaging in experiences that increase skill attainment and the likelihood of achieving positive postschool outcomes.

Implications for Policy and Practice

The results of this study have implications for policy and practice. Operational definitions and essential program characteristics provide consistent and shared language for describing and discussing the predictors across disciplines and stakeholder groups in the context of both policy and practice. Whether writing policy, setting goals with individual students, or identifying goal-setting curricula resources, operational definitions promote common understanding and further reduce the research-to-practice gap. Similarly, the essential program characteristics provide a universal starting point for developing, implementing, and evaluating effective programs.

Germane to policy, implementation of some predictors and characteristics may require new or strengthened policy initiatives and collaboration among local, state, and national entities to reach full implementation. For example, experts included language related to informed choice in the definition of self-realization. Informed choice has been a central principle of the Rehabilitation Services Administration (RSA) employment policy since 1992 (RSA, 2001) and is evident in Employment First initiatives (e.g., Minnesota Department of Human Services, 2016). Within education policy, specifically IDEIA (2004) and its accompanying regulations, informed choice is not delineated. Focusing informed choice at the student level, as implied through the language of the essential program characteristics for self-realization, may require changes in policy for it to become widespread, common practice. Such a policy would have far-reaching implications for training, services, and resources to students, families, and practitioners (Mitchell, 2015).

The technology skills predictor also has implications for policy and practice. Technology skills in the 21st century are a necessity (Captain, 2023; Li, 2022). Yet, for individuals who experience disability, access to the technology needed to gain crucial skills is plagued with imbalances (Tyson, 2015), imbalances that became even more apparent during the COVID-19 pandemic. Bakaniene et al. (2023) found that students experiencing disability faced many technological challenges with online learning, including lack of access to technology and inequities in resources. Recognizing the importance of technology skills, the experts in our study called for student access to current technological platforms, programs, software, and hardware that are reasonably available. Action at the policy level, paired with fiscal resources, will be needed to ensure students experiencing disability have the resources necessary to develop technology skills for the 21st-century workplace. Implementing this essential program characteristic will have practical implications, too. Significant fiscal investments in equipment, software, and professional development for staff will be needed. Staff will need to be savvy in their own technology skills to support students’ learning and access.

It is hard to have a conversation about travel skills without also including transportation in general, and more specifically, challenges and barriers associated with transportation for individuals experiencing disabilities. The essential program characteristics associated with travel skills include explicit examples of wide-ranging modes of transport (e.g., from skateboarding to using public transportation to driving). The particular travel skills necessary to access and use each mode of transport are unique and must be considered within the context of the individual and their current and future community. Some travel and transportation options (e.g., public transportation) should prompt stakeholder groups (e.g., youth, families, practitioners, and policy makers) to examine what is available, feasible, and needed within their community to accommodate the travel requirements of diverse users. Community-based transportation innovations (e.g., adaptive cycling programs or rentals, ride-shares) may need to be created or expanded through policy initiatives to meet the needs of diverse consumers. Identifying travel- or transportation-specific barriers and possible solutions may necessitate policy revisions. In an analysis of state Medicaid waivers to identify transportation services provided to people who experience intellectual and developmental disabilities (IDD), Friedman and Rizzolo (2016) found “the majority of [Medicaid Home and Community-Based Services] 1915(c) waivers provided transportation for people with IDD through [bulk services or transportation-specific services]; however, this transportation was often limited to very specific purposes such as to and from supported employment” (p. 173). They suggested revisions to states’ Medicaid waivers to allow flexibility in the allocation of service dollars to support individuals’ needs and facilitate broader community access.

At the practice level, by supporting secondary transition programs with high-quality research- and evidence-based practices, the essential program characteristics provide touchstones for developing, implementing, and evaluating the effectiveness of existing experiences. A practice-level implication is the need to align secondary transition programs with the predictors and essential program characteristics. This effort should be multidisciplinary in nature to determine the extent to which each predictor and characteristic is currently implemented, which are not, and who will be responsible for developing an implementation plan. Multidisciplinary teams should be tasked with prioritizing implementation of predictors and essential program characteristics based on student performance data, resource availability, policy alignment, and other relevant data (e.g., graduation and dropout, career and technical education). While all predictors and characteristics are essential for successful program implementation, certain characteristics may be of greater importance than others for an individual team and require more timely attention. It is also important to note that some predictors may have additional program characteristics given the unique context of students, schools, and communities.

Conclusion

The evidence-based era of secondary transition is well established as the major federal policies supporting secondary education and transition—IDEIA (2004), Every Student Succeeds Act (2015), Strengthening Career and Technical Education for the 21st Century Act (Perkins V; 2018), and Workforce Innovation and Opportunity Act (2014)—call for the identification and use of effective interventions and strategies grounded in peer-reviewed and evidence-based research. This study supports those efforts by identifying the operational definitions and essential program characteristics for the seven newest secondary transition predictors that support postschool success—goal setting, parent expectations, psychological empowerment, self-realization, technology skills, travel skills, and youth autonomy. Furthermore, the results of this study provide practitioners, administrators, and other stakeholders with actionable elements for developing, implementing, expanding, and evaluating secondary transition-focused education programs to ensure students who experience disability are prepared for further education, employment, and independent living, including community engagement.

Supplemental Material

Supplemental material, sj-docx-1-cde-10.1177_21651434241271408 for Operationalizing Predictors of Postschool Success in Secondary Transition: A Delphi Study by Charlotte Y. Alverson, Kyle Reardon, Christina D. Howard, Gerrit Wiebe, Catherine H. Fowler, Dawn A. Rowe and Valerie L. Mazzotti in Career Development and Transition for Exceptional Individuals

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The research reported in this article was partially supported by funding through Cooperative Agreement Number H326E200003 with the U.S. Department of Education, Office of Special Education Programs and the Rehabilitation Services Administration. Opinions expressed herein do not necessarily reflect the position or policy of the U.S. Department of Education nor does mention of trade names, commercial products, or organizations imply endorsement by the U.S. Department of Education.

References
