Abstract

Promoting self-determination is essential to effective transition services and supports. The Goal Setting Challenge App (GSC App) was developed to deliver self-determination instruction via technology, building on the evidence-based Self-Determined Learning Model of Instruction (SDLMI). This article presents data on goal attainment outcomes for students with disabilities who participated in a small, cluster-randomized controlled trial (C-RCT) of the GSC App during the 2020 to 2021 academic year and the ongoing COVID-19 pandemic. Findings suggest it is highly probable the GSC App enhances student transition goal attainment outcomes after one semester, with students three times more likely to attain their self-identified transition goals in the GSC App than in the business-as-usual condition. The impact of COVID-19 on implementation and sample loss is described, as are implications for research and practice.

Promoting and enhancing self-determination is recognized as a best practice in secondary transition for students with disabilities (Rowe et al., 2021). Leaving high school with higher levels of self-determination is a predictor of postschool success (Mazzotti et al., 2021; Shogren et al., 2015). Multiple skills and abilities can be a focus of instruction during secondary education leading to enhanced self-determination, including goal setting and attainment skills (Shogren et al., 2015). However, there are ongoing challenges to implementing evidence-based self-determination instruction in schools with sufficient fidelity (Shogren, Raley et al., 2021). The COVID-19 pandemic further exacerbated challenges associated with equitable access to effective instruction to prepare students to set and go after their goals, building self-determination abilities, skills, and attitudes, as they transition from school to adult life (Rowe et al., 2020; Toste et al., 2021).

One evidence-based practice to support the development of self-determination for transition-age students is the Self-Determined Learning Model of Instruction (SDLMI; Shogren et al., 2018). The SDLMI is an instructional model teachers overlay on their instruction to promote self-regulated problem solving as students learn to self-direct the process of setting and going after their goals. When applied in the context of transition planning, secondary teachers focus on supporting students to identify goals, develop action plans, and evaluate their progress toward their transition goals across domains (e.g., high school completion, postschool employment, ongoing education, and community participation) to determine what adjustments are needed to move forward toward valued adult outcomes. The ultimate goal of the SDLMI is that the student will learn to apply the Student Questions to the goal setting and attainment process in an increasingly self-directed way as they iteratively engage in the SDLMI, working toward progressively more complex goals, navigating barriers to goal attainment, and seeking out needed supports. A growing body of research has established impacts of the SDLMI on student self-determination, goal attainment, and postschool outcomes (Hagiwara et al., 2017). The SDLMI provides a means for teachers to target key skills such as goal setting, problem solving, decision-making, and self-advocacy that are recognized as essential to college and career readiness and postschool success (Mazzotti et al., 2021; Morningstar et al., 2017), and researchers have found that students and teachers perceive the SDLMI as leading to increased goal attainment outcomes for students with disabilities (Shogren, Burke, Antosh et al., 2019; Shogren, Hicks et al., 2021). Overall, goal setting, decision-making, and self-advocacy (targeted skills within the SDLMI) have been identified as research-based predictors of positive postschool employment and education success for youth with disabilities (Mazzotti et al., 2016, 2021).

The SDLMI was originally designed as a model of instruction for teachers (Wehmeyer et al., 2000). Teachers, after being trained to facilitate the SDLMI, play a key role in delivering Educational Supports aligned with the Teacher Objectives. For example, when the SDLMI is implemented in classrooms with students focused on transition planning, instruction is typically organized into 30- to 45-min lessons delivered 2 to 3 times per week (Burke et al., 2019; Shogren, Burke, & Raley, 2019). Each lesson focuses on one of the Student Questions, and instructional materials provide strategies and resources (e.g., PowerPoints and activities) teachers can use to support students as they answer each Student Question. Student Question responses are documented, and over a semester, students work through the 12 Student Questions. This process is repeated across semesters to enable students to set new goals or revise existing goals or action plans; thereby, students continue to grow in their goal attainment and self-determination as they approach the transition to adulthood. Implementation of the SDLMI is driven by three core components: 12 Student Questions linked to Teacher Objectives and Educational Supports. The 12 Student Questions are organized into three phases (i.e., Phase 1–Set a Goal; Phase 2–Take Action; and Phase 3–Adjust Goal or Plan). During each phase, students work through four Student Questions that guide them through solving the overall problem targeted in that specific phase (e.g., Phase 1–What is my goal?). The Teacher Objectives provide guidance for teachers on what they are trying to achieve in supporting students to answer each question (e.g., enable the student to communicate preferences, interests, beliefs, and values); and Educational Supports are instructional strategies (e.g., decision-making instruction and self-advocacy instruction) that are used to enable teachers to meet the Teacher Objectives.

Early research suggested the feasibility of multimedia SDLMI delivery (Mazzotti et al., 2012, 2013), and technological advances have created opportunities for the development of standalone applications that can promote individualized learning experiences for students with disabilities while delivering content aligned with the core components of the SDLMI. The Goal-Setting Challenge (GSC) App (Shogren & Mazzotti, 2020) was conceptualized as a technology-based solution for teaching abilities, skills, and attitudes associated with self-determination, including goal attainment skills, using the SDLMI framework. The GSC App consists of 14 lessons organized around the 12 Student Questions, with two introductory lessons on key self-determination concepts and terms. The lessons included in the GSC App were designed to meet the Teacher Objectives associated with each Student Question and provide critical Educational Supports using content and activities delivered through the GSC App. Each of the 14 lessons takes approximately 20 min to complete, and the GSC App was designed using features of universal design for learning (UDL) and integrated culturally responsive practices (e.g., including characters representing multiple cultural identities, and using examples that are relevant and reflect diverse funds of knowledge [Gay, 2018]). Accessibility features (e.g., text-to-speech, options to type or record responses, and responses in one lesson pulled forward to the next lesson) were built into the GSC App. The development of the GSC App and integration of best practices in instructional design are further described in Mazzotti et al. (2022).

Initial feasibility studies of the GSC App suggested students could engage with the GSC App with minimal support from teachers (Mazzotti et al., in press). While this research was disrupted by the COVID-19 pandemic, data collected prior to March 2020 suggested teachers felt the GSC App was practical and easy to implement, and that it targeted critical skills aligned with transition planning. Students also showed initial gains in self-determination content knowledge as they began engaging with the GSC App, and some students continued using the GSC App at home when virtual learning supports were established in Spring 2020. However, ongoing research is needed to explore the impact of the GSC App on student outcomes, including transition goal attainment given the role of goal setting and attainment in building self-determination skills and abilities. The purpose of the present study was to provide initial data on the goal attainment outcomes of students who participated in a small cluster-randomized controlled trial (C-RCT) of the GSC App completed during the 2020 to 2021 academic year and the ongoing COVID-19 pandemic. Our primary research question was as follows: How probable is it that the GSC App enhances student transition goal attainment outcomes after a semester of implementation?

Method

The purpose of this study was to explore the possibility that the GSC App will enhance student transition goal attainment outcomes (analyses on other exploratory outcome measures with different data collection schedules are ongoing). Specifically, this study used a C-RCT to explore the probability that the GSC App enhanced student transition goal attainment outcomes at the end of one semester of implementation during the 2020 to 2021 school year. Schools served as units of randomization (i.e., schools were randomly assigned to the GSC App treatment or business-as-usual [BAU] condition) to preclude contamination. Five schools in the U.S. Midwest were recruited in Summer 2020 and were randomized to either the GSC App group (n = 3 schools) or the BAU group (n = 2 schools) to implement in Fall 2020. In the U.S. Southeast, 10 schools were recruited in Fall 2020 to implement in Spring 2021 and were randomized to GSC App (n = 5 schools) and BAU (n = 5 schools) groups. Two Southeast schools, both in the BAU group, withdrew before training because of increased pandemic-related challenges (e.g., teachers leaving their role, lack of time, and focus on other academic priorities during virtual instruction). An additional school in the GSC App group completed training but withdrew before baseline data collection was initiated for similar pandemic-related reasons.

The loss of three schools out of the original 15 randomized schools led to a 20.0% overall school sample loss, prior to baseline data collection. Two schools were in the BAU condition (28.6% BAU school sample loss), and one school was in the GSC App condition (12.5% GSC App school sample loss). This led to a 16.1% (= 28.6%−12.5%) differential treatment sample loss at the school level, with sample loss larger in BAU schools. Because sample loss at the school level was assumed to be due to the pandemic in both GSC App and BAU groups, we did not include these missing schools (and the teachers and students in them) in our unexplained attrition counts, aligned with What Works Clearinghouse (WWC) Standards (2020).

In the 12 schools that did not withdraw prior to baseline data collection, there were originally 20 teachers recruited to participate, but six (30.0%) teachers (all in the GSC App group) withdrew before any baseline data could be collected. Each teacher reported that they had too many pandemic-related demands to take on any new activities, although other teachers in each of the 12 schools continued participation. Because this teacher sample loss was also assumed to be related to the pandemic, we did not include these missing teachers in our unexplained attrition counts per WWC Standards (2020). Thus, our study included 14 teacher implementers in 12 schools; 178 students (92 students in seven GSC App schools and 86 students in five BAU schools) provided assent to participate in the study. Of these 178 students who assented to participate and completed baseline measures, 55 (30.9% of total student sample) did not contribute goal attainment outcome data at the end of the semester of implementation (33 students in GSC App condition and 22 students in BAU condition), leading to 123 students with outcome data for the present analyses (see Supplemental Table S1 in the online supplemental materials for further details on student-level attrition across schools). Although we suspect factors related to the pandemic were likely at play for at least some of these students, we could not verify this was the case on an individual basis, as we could with schools and teachers. Therefore, we classified these 55 students as unexplained attrition. This led to no unexplained attrition among schools or teachers, but a 30.9% unexplained overall attrition rate among students and a 10.3% unexplained differential group attrition rate (25.6% student attrition in BAU; 35.9% student attrition in GSC App). As described in the Analysis Plan subsection (below), we implemented countermeasures against unexplained attrition in this analysis, including a sensitivity analysis.
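The school-level and student-level sample loss figures reported above can be reproduced with a few lines of arithmetic. The sketch below is only an illustration: the function name `attrition_rates` and the group labels are hypothetical, and the counts are taken directly from the text (8 GSC App and 7 BAU schools randomized, with 1 and 2 lost; 92 GSC App and 86 BAU students at baseline, with 33 and 22 lost).

```python
def attrition_rates(randomized, lost):
    """Return overall loss (%), per-group loss (%), and the absolute differential.

    `randomized` and `lost` are dicts keyed by group label (hypothetical labels).
    """
    overall = 100 * sum(lost.values()) / sum(randomized.values())
    by_group = {g: 100 * lost[g] / randomized[g] for g in randomized}
    differential = max(by_group.values()) - min(by_group.values())
    return overall, by_group, differential


# School level: counts as reported in the text.
school = attrition_rates({"GSC": 8, "BAU": 7}, {"GSC": 1, "BAU": 2})

# Student level: counts as reported in the text.
student = attrition_rates({"GSC": 92, "BAU": 86}, {"GSC": 33, "BAU": 22})

for label, (overall, by_group, diff) in {"school": school, "student": student}.items():
    print(label, round(overall, 1), {g: round(r, 1) for g, r in by_group.items()}, round(diff, 1))
```

Rounded to one decimal place, these computations match the reported 20.0% overall and 16.1% differential school sample loss, and the 30.9% overall and 10.3% differential student attrition.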

Setting and Sample

The 12 schools were in rural (n = 7; 58.3%), suburban (n = 4; 33.3%), and urban (n = 1; 8.3%) areas and varied in their overall student population (M = 907, SD = 554). Due to the ongoing COVID-19 pandemic, 10 schools utilized fully virtual learning models during the study, and two schools in the Southeast utilized a hybrid learning model with students rotating between face-to-face and virtual instruction each day. Once the university Institutional Review Board (IRB) provided approval and district and school support for participation was obtained, one to two teachers and the students they supported with a current Individualized Education Program (IEP) that included transition services were identified. A waiver of consent was granted by the university IRB in which parents/guardians received an information statement about the research project and contact information if they had questions or would prefer their child not participate in the research project. If parents/guardians did not communicate they preferred their child to be excluded from the research project, student assent was collected, consistent with approved IRB protocols.

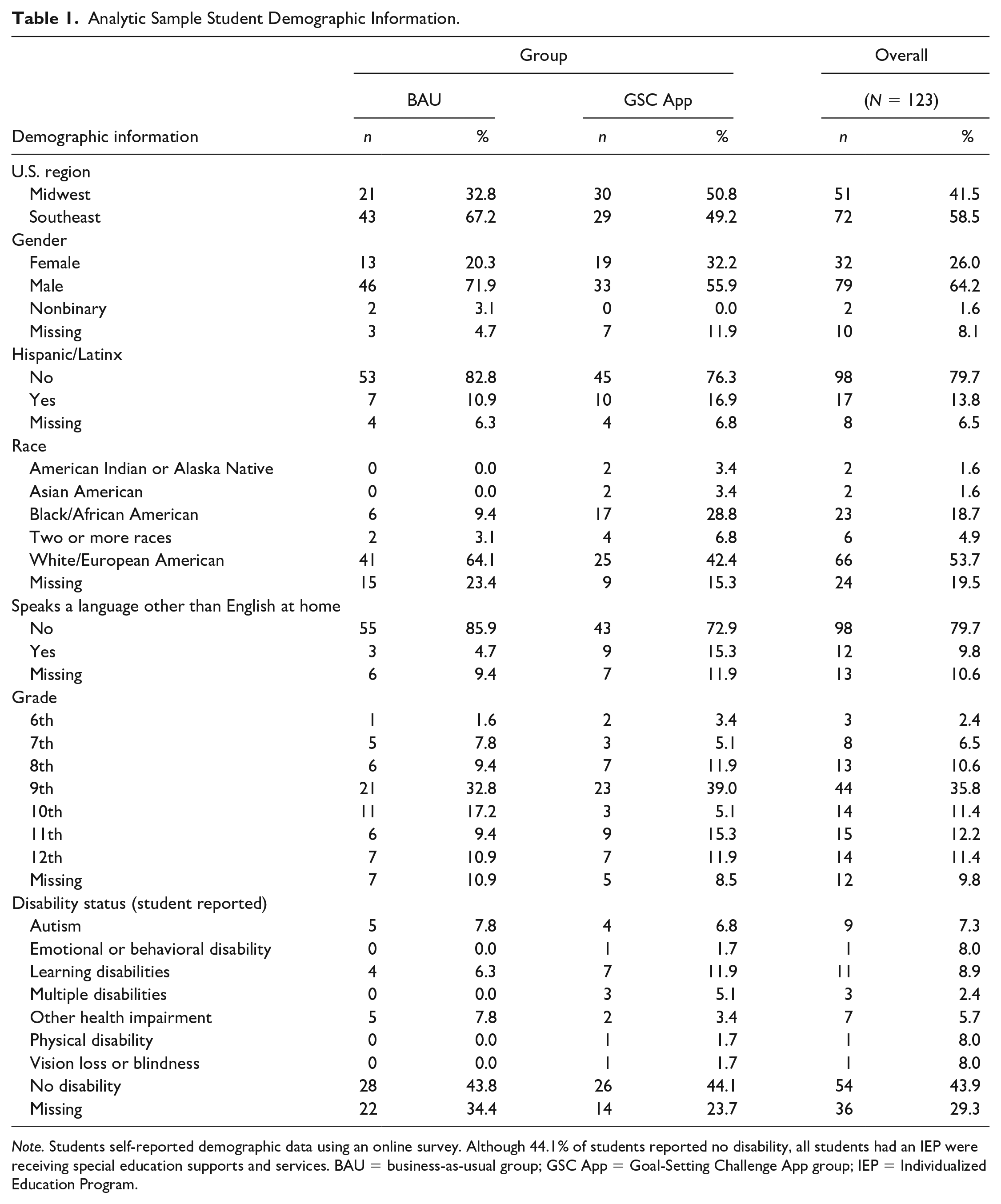

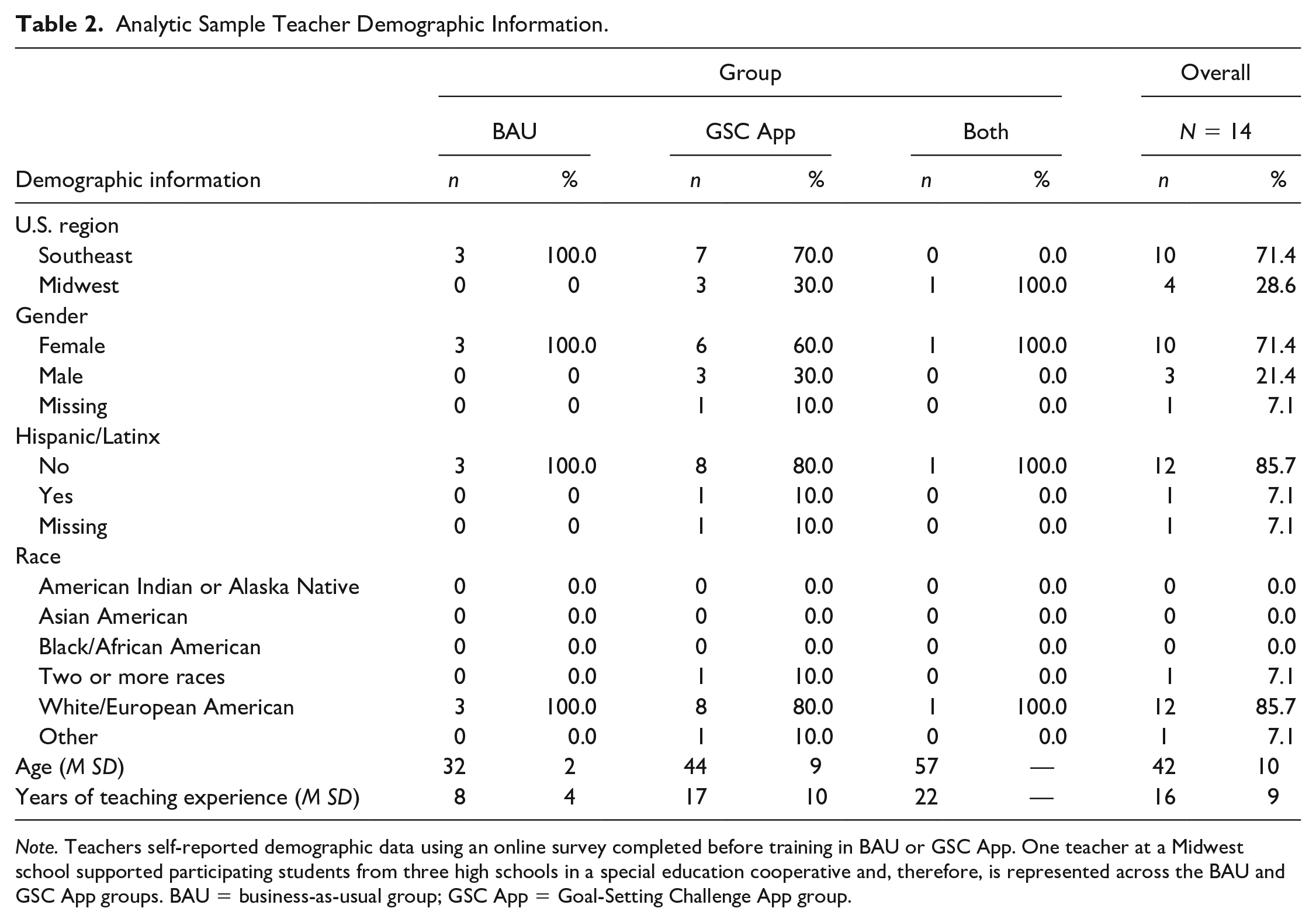

As described previously, the analytic sample—after accounting for sample attrition—included 123 students (n = 64 in BAU group; n = 59 in GSC App group) across 12 schools and 14 teachers, including one teacher who worked across three schools. In Table 1, we present the demographics of these 123 transition-age students who received special education services. Demographic information was collected from students via a self-report measure at baseline. A majority of students identified as male (64.2%) and White/European American (53.7%). All students had an IEP, according to school administrative records; however, when asked to self-report on their disability status, a large percentage of students self-reported not having any disability (44.1%) or skipped the question (29.3%) on the self-report demographic measure. Among the 26.6% of students who self-identified as having a disability, students most commonly identified as having learning disabilities (8.9%), autism (7.3%), or other health impairments (5.7%). Teachers also provided demographic information via a self-report survey before engaging in the GSC App or BAU training (see Table 2). A majority of teachers identified as female (71.4%) and White/European American (85.7%). The average age of teachers was 42 years old, and the average years of teaching experience across both groups was 16.

Table 1. Analytic Sample Student Demographic Information.

Note. Students self-reported demographic data using an online survey. Although 44.1% of students reported no disability, all students had an IEP and were receiving special education supports and services. BAU = business-as-usual group; GSC App = Goal-Setting Challenge App group; IEP = Individualized Education Program.

Table 2. Analytic Sample Teacher Demographic Information.

Note. Teachers self-reported demographic data using an online survey completed before training in BAU or GSC App. One teacher at a Midwest school supported participating students from three high schools in a special education cooperative and, therefore, is represented across the BAU and GSC App groups. BAU = business-as-usual group; GSC App = Goal-Setting Challenge App group.

Procedures

Training and Ongoing Support for BAU Schools

The research team conducted a 2- to 3-hr virtual training at the start of the semester with special educators at schools assigned to the BAU condition to control for training and ensure effective completion of the preassessments and postassessments (i.e., Self-Determination Inventory: Student Report [SDI:SR; Shogren & Wehmeyer, 2017] and Goal Attainment Scaling [GAS; Kiresuk et al., 1994]). Training was conducted at the start of the semester for which schools were implementing (i.e., Fall 2020 for Midwest schools and Spring 2021 for Southeast schools). Business-as-usual training consisted of (a) an introduction to self-determination and the SDLMI; (b) an overview of the GSC App project; (c) a description of data collection responsibilities and schedule (i.e., complete the SDI:SR and GAS at the beginning and end of the semester); and (d) an overview of how to use the SDI:SR and GAS with students, including practice with the assessments. Following training, BAU teachers continued to provide originally planned instruction to students (e.g., employment-related skills, social skills, and academic skills) with no additional supports from the research team related to self-determination instruction, aside from supports to collect data on the outcome measures. Research team members communicated with BAU teachers once or twice a month to stay engaged and support data collection at the beginning and end of the semester.

Training and Ongoing Support for GSC App Schools

The research team conducted a 4- to 6-hr virtual training with special educators assigned to the GSC App condition at the start of the semester for which they were implementing (i.e., Fall 2020 for Midwest schools and Spring 2021 for Southeast schools). The GSC App condition training included the same training components as the BAU condition described previously, as well as additional information on navigating the GSC App. Additional training components included (a) an introduction to the GSC App lessons and how each lesson related to the SDLMI; (b) a review of technical aspects and embedded supports in the GSC App (e.g., text-to-speech, options to type or record responses); (c) strategies and practice for how to introduce the GSC App to students and monitor progress throughout the semester; and (d) developing the implementation schedule and planning for GSC App implementation. Teachers were also provided with the GSC App User’s Guide (Shogren, Mazzotti et al., 2022) that integrated key information from training and provided details on accessing ongoing support from the research team to troubleshoot implementation issues. Before the end of the training or shortly after individual meetings with teachers, teachers finalized their GSC App implementation schedules in which they identified the days of the week and times they would support students in logging into the GSC App and completing lessons. During implementation, research team members were in communication with teachers approximately once per week. This communication included troubleshooting issues, joining virtual learning sessions when invited by teachers to support students to log in and troubleshoot engagement with the GSC App, and completing data collection activities at the beginning and end of the semester.

GSC App Intervention Components

As described previously, the GSC App was designed to deliver the SDLMI in 14 lessons using online instructional technology, including two introductory lessons followed by 12 lessons aligned with the Student Questions within the three SDLMI phases (i.e., Phase 1–Set a Goal; Phase 2–Take Action; and Phase 3–Adjust Goal or Plan; Shogren et al., 2018). Accessibility and engagement features embedded in the GSC App (e.g., multiple student response options) and delivery of SDLMI core components (Student Questions, Teacher Objectives, and Educational Supports) were developed using best practices in instructional technology as well as sustained input from end-users (i.e., students) and their teachers (see Mazzotti et al., 2022 for further information). Each lesson was designed to take approximately 20 min to complete, and, in this study, students completed one to two GSC App lessons each week during virtual or hybrid instruction.

During virtual sessions, teachers supported students via a virtual platform (i.e., Zoom, WebEx, Google Meet, and Canvas by Instructure) to log into the GSC App and complete the targeted lesson. Similar procedures were used for hybrid GSC App sessions, except for teachers supporting some students face-to-face while supporting other students virtually. During online synchronous instruction, teachers provided links to the GSC App and SDI:SR/GAS data collection platforms, assigned GSC App lessons and tasks to students, reminded students to complete the GSC App lessons, and provided their usernames and passwords, if needed. Also, if students were unsure what a specific activity was asking them to do or wanted support to use the accessibility features (e.g., speech-to-text), the teacher was available to answer their questions either in person (if hybrid) or via the virtual platform. Two teachers met individually with their students weekly via the virtual platform to discuss each student’s progress in the GSC App. Based on student attendance, most students engaged in the GSC App as a small group with other students in their class who were participating in the project; however, if a student was unable to attend a class session to engage in the GSC App with the group, teachers met individually with that particular student to support engagement in the GSC App. If a student missed one or more GSC App sessions, the teacher worked with a member of the research team to identify how they could support the student to complete the lessons needed and stay on track with the class implementation schedule. Even with these supports, student attrition occurred (as described subsequently), either because students stopped attending or participating in virtual instruction, or because they participated so infrequently, they could not maintain progress in the GSC App consistent with the class implementation schedule.

Procedural Fidelity and GSC App Dosage

During the trial, the GSC App and accompanying resources (e.g., training materials) were made accessible only to teachers and students in schools assigned to the GSC App group. To obtain access to the GSC App, participants needed an assigned account with a unique username and password. The GSC App collects data on user histories (e.g., time in the GSC App, completion of activities, and responses to Student Questions). The expectation was that during the semester, students would complete at least one cycle of the GSC App (i.e., 14 lessons). In the GSC App group (n = 59), eight students started but did not complete the full challenge. They got to, on average, the 11th lesson (of the 14 lessons; 78.6%; SD = 2.3 lessons; range 6–14) during the semester. This leaves 48 students (81.4%) who completed all 14 lessons (i.e., the full challenge) and three students who never accessed the App. The 48 students who completed the full challenge spent an average of 189.9 min in the App (SD = 93.5 min), with more time spent in Phase 1 (M = 65.9 min, SD = 48.9) than Phase 2 (M = 50.0 min, SD = 22.3) or Phase 3 (M = 42.9 min, SD = 15.3). Nineteen students began working on a second cycle of the GSC App.

Variables and Measures

GSC App Group

A binary indicator variable (coded as 0/1) documented whether schools were assigned to the GSC App group (coded as 1) or the BAU group (coded as 0). This variable was the independent variable in the analysis. The research team adhered to intent-to-treat principles when coding this variable.

Transition Goal Attainment Outcomes

To measure goal attainment by students in the GSC App (GSC App group) or students engaging in routine transition planning (BAU group), Goal Attainment Scaling (GAS; Kiresuk et al., 1994) was used. Goal Attainment Scaling has been widely used in education research (Carr, 1979; Ruble et al., 2012; Shogren, Dean et al., 2021), including as an outcome variable in SDLMI research (e.g., Shogren, Burke, Antosh et al., 2019; Shogren, Hicks et al., 2021). In the present study, after completing lesson four, which focused on Student Question 4 (in Phase 1: Set a Goal), students in the GSC App group were supported by teachers to complete GAS via an online survey in which they provided a description of their goal and developed a rating rubric consistent with GAS protocols. With support from their teachers, students in the BAU group completed the online GAS survey at approximately the same time in the semester as the GSC App group, using a goal from their transition plan as required for all students receiving special education supports and services, and established a GAS rating rubric.

As per GAS protocols, a rubric was created to “scale” goal attainment outcomes personalized to the goal, ranging from −2 for much less than expected, −1 for somewhat less than expected, 0 for expected, +1 for somewhat more than expected, and +2 for much more than expected. At the end of the semester, students in both groups were prompted to rate their goal attainment on the GAS rubric. They also had the option to indicate they decided not to complete their goal. Only students who created their GAS rubric and provided a rating at the end of one semester (including students who reported they decided not to complete their goal, subsequently called goal abandonment) were included in the present analyses.

For the purposes of analysis, a categorical variable (coded as “unattained,” “attained,” and “not applicable”) was created to reflect student ratings of their goal attainment outcomes. “Attained” was coded when students rated their goal attainment outcome as either meeting (0) or exceeding (+1, +2) expectations. “Unattained” was coded when students rated their goal attainment outcome as not meeting expectations (−1, −2). “Not applicable” (N/A) was coded when students reported they could not rate goal attainment outcomes at the end of the semester because of goal abandonment. This variable was the dependent variable in the present analysis.
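The coding rule described above can be written as a short function. This is a minimal sketch, not the study's actual data-processing code: the function name `code_goal_outcome` is hypothetical, and representing goal abandonment as `None` is an assumption made for illustration.

```python
from typing import Optional


def code_goal_outcome(rating: Optional[int]) -> str:
    """Map a GAS rating to the analysis category.

    `rating` is an integer from -2 to +2, or None when the student reported
    abandoning the goal (an assumed representation, not from the source).
    """
    if rating is None:
        return "not applicable"
    if rating not in (-2, -1, 0, 1, 2):
        raise ValueError(f"GAS ratings range from -2 to +2, got {rating}")
    # Meeting (0) or exceeding (+1, +2) expectations counts as attained.
    return "attained" if rating >= 0 else "unattained"


for r in (-2, -1, 0, 1, 2, None):
    print(r, "->", code_goal_outcome(r))
```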

Disability Status

Table 1 provides demographic information from students. Upon noting significant discrepancies between administrative classification of students as receiving special education services (i.e., all students had an IEP per administrative records) and students’ self-identification as having a disability, we chose to explore self-reported disability status as a moderator of transition goal attainment outcomes, given previous studies suggesting differences in SDLMI trial outcomes based on the use of administrative disability classification data versus student self-report data (Shogren, Pace et al., 2022). We classified students into one of three categories: (a) students who self-reported they had a disability, (b) students who self-reported they did not have a disability, and (c) students who did not respond to the question about disability status.

Analysis Plan

Multilevel Hurdle Model

Data on goal attainment outcomes were hierarchically structured; in other words, data came from students (Level 1) nested in teachers (Level 2) nested in schools (Level 3), necessitating multilevel modeling. Multilevel modeling is appropriate for hierarchically structured data because it yields inferences that account for correlations of outcomes within clusters (Gelman & Hill, 2007). Data were also sequentially structured, meaning only students who decided to complete their goals (Stage 1) were able to rate their attainment of them (Stage 2), necessitating hurdle modeling. Hurdle modeling is appropriate for data generated from multistage processes in which outcomes at earlier stages are necessary conditions for outcomes at later stages (Cragg, 1971). In this case, not abandoning goals (Stage 1 outcome) was a necessary condition to attaining them (Stage 2 outcome). We thus selected a three-level hurdle model (students nested in teachers nested in schools) as our primary approach to regressing goal attainment outcomes on trial group (GSC App vs. BAU), wherein interlinked probabilities of abandoning goals and attaining goals at or exceeding expectations were modeled in one comprehensive analysis.
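The two-stage logic of a hurdle model can be made concrete with a small sketch showing how the Stage 1 and Stage 2 probabilities combine into probabilities for the three observable outcomes. This illustration ignores the multilevel structure and the regression inputs entirely; the function name and the example probabilities are hypothetical and are not estimates from this study.

```python
def hurdle_category_probs(p_abandon: float, p_attain_given_kept: float) -> dict:
    """Combine the two hurdle stages into three outcome probabilities.

    p_abandon: probability a student abandons the goal (fails Stage 1).
    p_attain_given_kept: probability of attaining the goal at or above
        expectations, given the goal was not abandoned (Stage 2).
    """
    for p in (p_abandon, p_attain_given_kept):
        if not 0.0 <= p <= 1.0:
            raise ValueError("probabilities must lie in [0, 1]")
    p_kept = 1.0 - p_abandon
    return {
        "abandoned": p_abandon,
        "attained": p_kept * p_attain_given_kept,
        "unattained": p_kept * (1.0 - p_attain_given_kept),
    }


# Illustrative values only (not study estimates).
probs = hurdle_category_probs(p_abandon=0.2, p_attain_given_kept=0.5)
print(probs)
```

Because the three category probabilities always sum to one, the same outcome data can also be described as a single three-category variable, which is why the staged and categorical views of the data are interchangeable in this setting.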

Bayesian Inference

Because of the relatively small number of schools available in this trial (n = 12), we decided to use Bayesian inference instead of null hypothesis testing. Null hypothesis testing is a zero-sum decision aimed at rejecting null hypotheses in one test. Bayesian induction instead only aims to update the relative probabilities of competing hypotheses, without deciding to reject or accept hypotheses. Moreover, Bayesian updating allows probabilities arrived at in one study to be further improved in the next study, which gives a natural route for sets of studies to build upon each other (Howson & Urbach, 2006). Thus, a major benefit of Bayesian induction is that these types of probabilistic inferences are valid with any sample size (van de Schoot et al., 2015), even though sample sizes will naturally be reflected in the amount of uncertainty in these probabilistic inferences.

Bayesian Model Averaging

To address our primary research question (How probable is it that the GSC App enhances student transition goal attainment outcomes after a semester of implementation?), we calculated the probability that the GSC App enhanced student transition goal attainment outcomes after a semester of implementation, following a multiple-step procedure. First, we fit a series of models representing different group effects: (

Among these four models,

Sensitivity Analysis for Unexplained Student Attrition

Although pandemic-related factors were assumed to explain attrition at the school and teacher levels, they cannot be assumed to fully explain the loss of 55 (30.9%) of the 178 students across the 12 retained schools and 14 retained teachers. Unexplained attrition can bias effect sizes. To evaluate this threat, we conducted an attrition sensitivity analysis (Frank, 2000). Specifically, we systematically imputed missing data to examine whether the direction of the effect size changed based on the total number of “attained” outcomes imputed to students who did not contribute outcome data in the BAU condition and the total number of “unattained” outcomes imputed to students who did not contribute outcome data in the GSC App condition. Among students in the BAU condition who did not contribute outcome data, the total number of imputed “attained” outcomes could range from 0 (min) to 22 (max), for a total of 23 possibilities. Among students in the GSC App condition who did not contribute outcome data, the total number of imputed “unattained” outcomes could range from 0 (min) to 33 (max), for a total of 34 possibilities. Crossing these two sets of possibilities yielded 782 (= 23 × 34) different imputed data sets. We reran the analysis using each imputed data set and counted the number of times the effect size changed direction. This sensitivity analysis showed that in 96.7% of the imputed data sets, the effect size matched the direction of the obtained output (i.e., positive). The remaining scenarios (less than 3.3%) showed that rather extreme conditions would need to prevail in both the BAU and GSC App groups to reverse the direction of the effect (e.g., almost all students lost in the BAU group needed “attained” imputed outcomes while almost all students lost in the GSC App group needed “unattained” imputed outcomes; see Supplemental Figure S1 in the online supplemental materials). Thus, while attrition sensitivity analysis cannot guarantee causality, it shows that the estimated direction of the effect size is relatively robust against the overall and differential student attrition across groups in the analytic sample, boosting confidence in results. Technical details can be found in the accompanying SAS 9.4 scripts on the OSF website and Supplemental Figure S2.
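The grid of imputation scenarios described above can be sketched as follows. This is a simplified illustration, not the authors' SAS procedure: it enumerates the 23 × 34 scenarios and checks the direction of a plain difference in attained proportions rather than refitting the multilevel model. The function names are hypothetical, and treating the remaining missing students in each group as receiving the opposite outcome is an assumption.

```python
from itertools import product

MISSING_BAU = 22  # BAU students without outcome data (from the text)
MISSING_GSC = 33  # GSC App students without outcome data (from the text)

# Each scenario: (number of "attained" imputed in BAU, number of "unattained" imputed in GSC App).
grid = list(product(range(MISSING_BAU + 1), range(MISSING_GSC + 1)))
print(len(grid))  # 782


def direction_preserved(att_gsc, n_gsc, att_bau, n_bau, k_bau, k_gsc):
    """Return True if the GSC App attained rate still exceeds the BAU rate.

    att_* / n_*: observed attained counts and group sizes (supplied by the caller).
    k_bau: missing BAU students imputed as "attained" (rest assumed "unattained").
    k_gsc: missing GSC App students imputed as "unattained" (rest assumed "attained").
    """
    bau_rate = (att_bau + k_bau) / (n_bau + MISSING_BAU)
    gsc_rate = (att_gsc + (MISSING_GSC - k_gsc)) / (n_gsc + MISSING_GSC)
    return gsc_rate > bau_rate


def share_preserved(att_gsc, n_gsc, att_bau, n_bau):
    """Proportion of the 782 scenarios in which the effect direction is unchanged."""
    kept = sum(direction_preserved(att_gsc, n_gsc, att_bau, n_bau, kb, kg) for kb, kg in grid)
    return kept / len(grid)
```

With the observed attained counts and group sizes passed to `share_preserved`, the returned proportion plays the same role as the 96.7% figure reported above, although a simple rate comparison will not necessarily reproduce the model-based result.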

Results

Primary Research Question: How Probable Is It That the GSC App Enhances Student Transition Goal Attainment Outcomes After a Semester of Implementation?

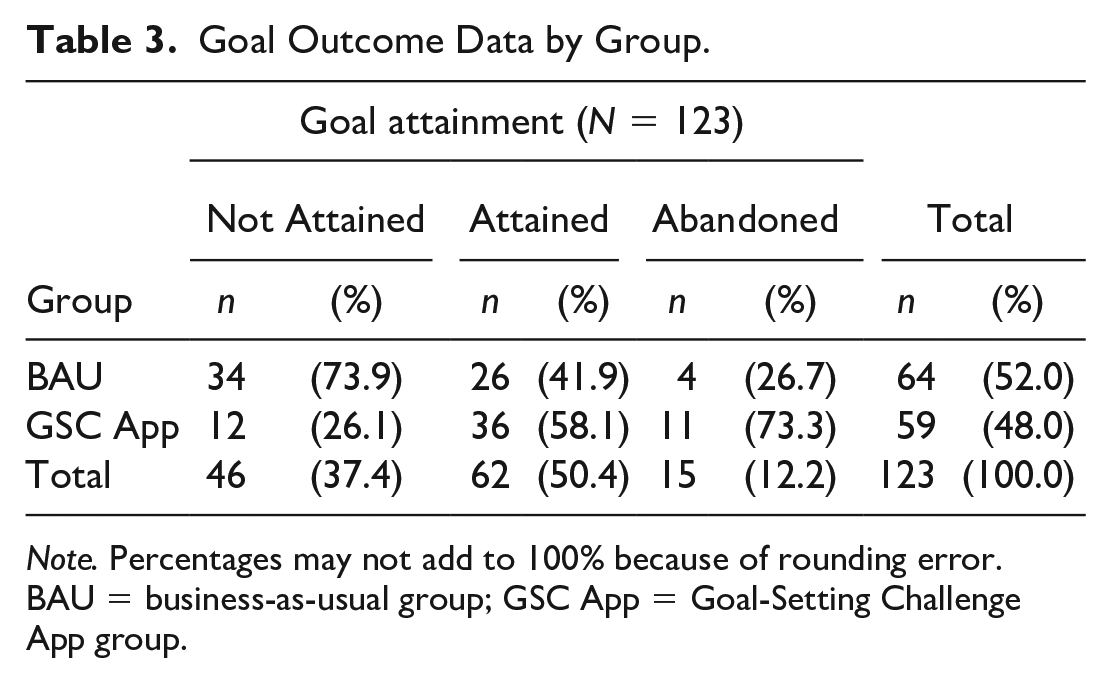

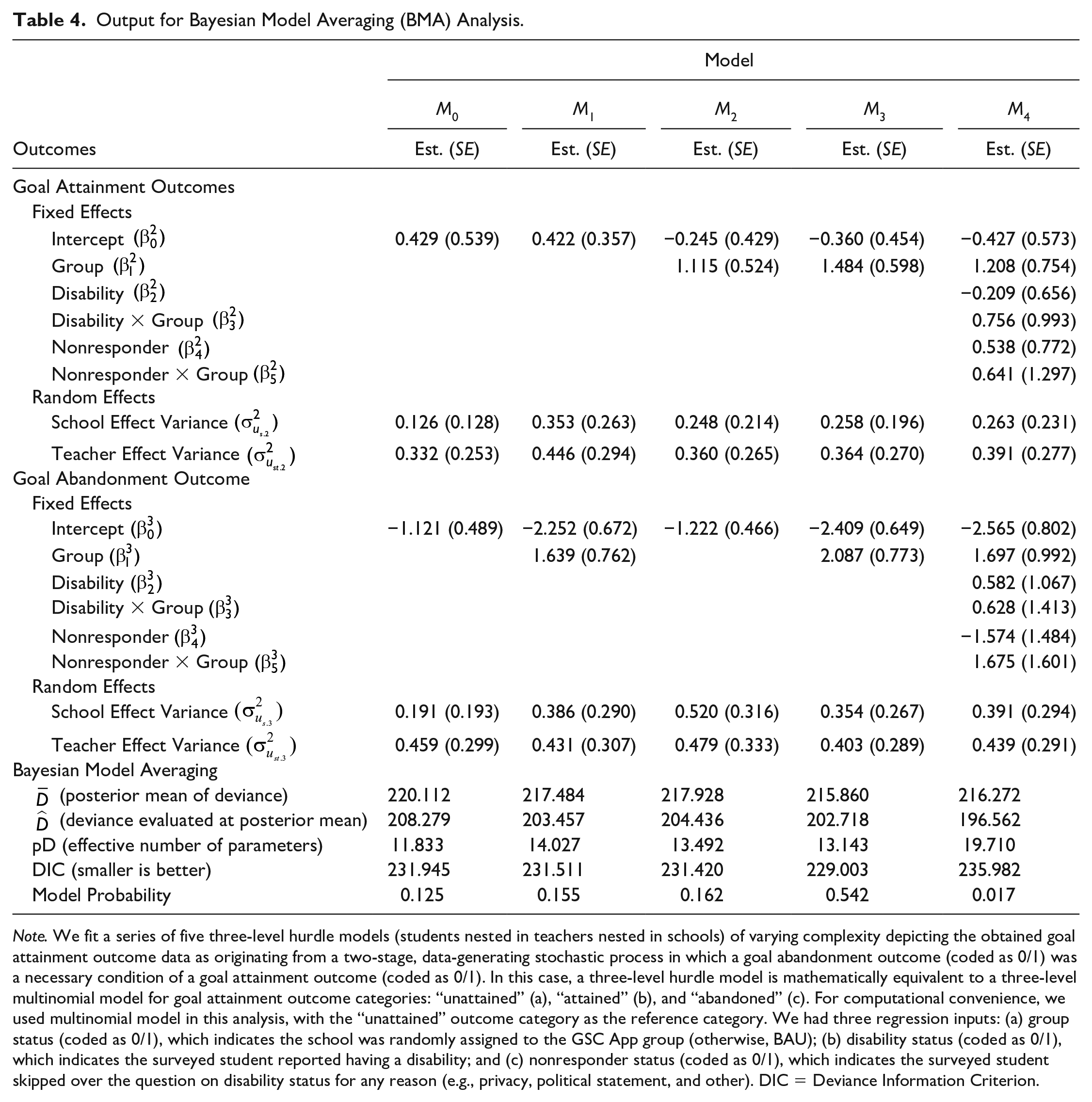

Table 3 shows sample statistics summarizing goal attainment outcome data across the BAU and GSC App groups, and Table 4 provides the result of BMA analysis. Despite not yet being able to accept or reject any models, BMA analysis is 72.1% confident that the correct model contains a positive group effect on goal attainment outcomes. In this case, this probability is equivalent to the sum of model probabilities of

Table 3. Goal Outcome Data by Group.

Note. Percentages may not add to 100% because of rounding error. BAU = business-as-usual group; GSC App = Goal-Setting Challenge App group.

Table 4. Output for Bayesian Model Averaging (BMA) Analysis.

Note. We fit a series of five three-level hurdle models (students nested in teachers nested in schools) of varying complexity depicting the obtained goal attainment outcome data as originating from a two-stage, data-generating stochastic process in which a goal abandonment outcome (coded as 0/1) was a necessary condition of a goal attainment outcome (coded as 0/1). In this case, a three-level hurdle model is mathematically equivalent to a three-level multinomial model for goal attainment outcome categories: “unattained” (a), “attained” (b), and “abandoned” (c). For computational convenience, we used multinomial model in this analysis, with the “unattained” outcome category as the reference category. We had three regression inputs: (a) group status (coded as 0/1), which indicates the school was randomly assigned to the GSC App group (otherwise, BAU); (b) disability status (coded as 0/1), which indicates the surveyed student reported having a disability; and (c) nonresponder status (coded as 0/1), which indicates the surveyed student skipped over the question on disability status for any reason (e.g., privacy, political statement, and other). DIC = Deviance Information Criterion.
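The equivalence between the two-stage hurdle process and the three-category multinomial model mentioned in the note can be sketched as follows. The notation is an assumption introduced here for illustration and is not taken from the original analysis: π denotes the probability that a goal is abandoned, and θ denotes the probability that a goal is attained given that it was not abandoned. Covariates and the teacher- and school-level random effects are omitted.

```latex
\begin{aligned}
P(\text{abandoned})  &= \pi, \\
P(\text{attained})   &= (1-\pi)\,\theta, \\
P(\text{unattained}) &= (1-\pi)\,(1-\theta), \\[4pt]
\log\frac{P(\text{attained})}{P(\text{unattained})}  &= \log\frac{\theta}{1-\theta}, \\
\log\frac{P(\text{abandoned})}{P(\text{unattained})} &= \log\frac{\pi}{1-\pi} - \log(1-\theta).
\end{aligned}
```

The three category probabilities sum to 1, and the two log-odds relative to “unattained” are the quantities that a multinomial model with “unattained” as the reference category describes.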

Interestingly, although not necessarily expected, BMA analysis is 71.4% confident that the correct model contains a positive group effect on goal abandonment outcomes. In this case, this probability is equivalent to the sum of

Follow-Up Research Question: Is It Probable That Self-Reported Disability Status Is a Moderator of Goal-Attainment Outcomes by Group?

The model shown in the last column in Table 4,

Discussion

The present findings support the promise of the GSC App, a technology-delivered version of the SDLMI, for impacting student transition goal-attainment outcomes, compared with BAU in transition planning. The preliminary estimate of a threefold increase in the probability of students attaining their transition goals when using the GSC App during an academic semester of implementation is very promising, as it suggests positive impacts similar to or even greater than teacher-delivered instruction, particularly after one semester (Shogren, Burke, Antosh et al., 2019). Furthermore, like the teacher-delivered SDLMI, the GSC App was designed to be used over multiple semesters, enabling students to build on their experiences setting goals, creating action plans to achieve their goals, and self-evaluating their progress iteratively. There is a need for ongoing, longitudinal research on the impacts of the GSC App.

In addition, the results suggest the importance of exploring goal abandonment in future research, as the most probable model suggested students in the GSC App group were slightly more likely to abandon goals (i.e., stated at the end of the semester that they decided not to complete their goal). While this initially might not seem like a positive outcome, part of the SDLMI includes students learning to identify and work toward goals that are the “right match” with their preferences, interests, beliefs, and values (Shogren, Hicks et al., 2021). Early on, when first utilizing abilities and skills associated with self-determination within the context of transition planning, students may find they need to adjust their goals as they increasingly self-direct the goal-attainment process. Therefore, these findings may suggest the GSC App supported students to critically consider their goals, action plans, and self-evaluation processes as they engaged in the three phases of the SDLMI, identifying when they needed to revise their goal, an opportunity students do not typically have with goals identified on their transition plan. Although longitudinal implementation of the GSC App was beyond the scope of the current analysis, when the GSC App is used iteratively, students can change or refine their goal the next time they work through the GSC App. Future research should explore the focus of students’ transition goals using the GSC App across semesters to identify whether students in fact revise their goals after goal abandonment in following semesters and if this leads to positive outcomes over time. This work could inform how teachers support students to use the GSC App to learn from each iteration of the SDLMI, continually building abilities, skills, and attitudes associated with self-determination and working toward transition goals they identify as meaningful in their lives. 
While these initial findings provide compelling information to drive future research and considerations for practice, there are limitations of the current analyses that must be considered. In the following sections, we describe these limitations, COVID-19–related impacts, and directions for future research and practice.

Limitations

This study was designed to provide an initial evaluation of the potential efficacy of the GSC App on transition goal-attainment outcomes for secondary students receiving special education services. Goal-attainment outcomes came from sets of nested units (students [Level 1], teachers [Level 2], and schools [Level 3]), and there was one instance of cross-classification in which one teacher served in three schools. To account for this complexity, multilevel modeling procedures were used to carry the additional uncertainty arising from the nested and cross-classified data into the inferences from this study. Given the reduced number of schools available in the analytic sample (n = 12), as well as the small number of teachers, including one teacher who worked across three schools in a rural district, we restricted inferences to model probabilities within a Bayesian induction framework. This approach is valid with any sample size, and it allows for the examination of the probability that there was an effect of the GSC App on students’ transition goal-attainment outcomes without first needing to reject the null hypothesis. However, while this framework makes it possible to weigh the probabilities of different models and quantify the probability of a group effect, the logic of Bayesian induction calls for the posterior probabilities emerging from this study to serve as prior probabilities in future studies, sharpening the knowledge base. Thus, ongoing work is needed to replicate findings in new, larger samples to make more precise inferences about effect sizes, reject the null hypothesis, and identify important moderators. Nonetheless, the findings from this study provide preliminary evidence and support for this future work.

Unexplained student attrition was 30.9% in the overall sample (25.6% in the BAU group and 35.9% in the GSC App group). This is an important limitation of this analysis, as unexplained attrition may bias effect size estimates. To be clear, the extent to which the COVID-19 pandemic explains this attrition is unknown. However, even in the highly unlikely event that none of this unexplained attrition was related to the pandemic, the positive direction of the effect of GSC App implementation is a relatively robust finding. Our attrition sensitivity analysis suggested that, except under the most extreme conditions (nearly all missing outcomes in the GSC App group reflecting low goal attainment and nearly all missing outcomes in the BAU group reflecting high goal attainment), the main conclusions drawn in this study would not change. Finally, because of the small sample size, we were not able to fully and robustly explore sociodemographic characteristics of students, teachers, or schools that may have influenced transition goal-attainment outcomes. Future work should be designed to address equity and ensure that students are not marginalized in the design and implementation of self-determination interventions in school systems. Previous research has identified this as an issue when the experiences of racially and ethnically marginalized students, and the alignment of instruction with their funds of knowledge and valued outcomes, are not explicitly examined (Shogren, Scott et al., 2021). There is also a need to further explore implementation in rural schools, where teachers often serve students across multiple schools, as occurred in our sample.

COVID-19–Related Impacts

As noted in the description of the sample, challenges for schools, teachers, and students related to the COVID-19 pandemic led to school sample loss. Although 15 schools were randomized to either the GSC App group or the BAU group, only 12 schools contributed data to this analysis. Of the three schools lost before any data collection, one had been randomized to the GSC App group and two to the BAU group. Because the COVID-19 challenges schools experienced affected both the GSC App and BAU groups, the lost schools were counted as sample loss rather than unexplained attrition (WWC, 2020). However, even within the 12 schools that did participate in the study, there were ongoing and significant impacts on teacher and student engagement, reflected in higher teacher and student sample loss rates across conditions than seen in any previous research with the SDLMI (e.g., Shogren, Burke, Antosh et al., 2019; Shogren, Hicks et al., 2021). For example, six teachers ceased participation in the study after training and baseline data collection. The stories behind these teacher and student sample losses vary, given unique circumstances; however, the unifying theme is the extraordinary challenges caused by the pandemic. For example, one teacher dropped out before starting the GSC App because of discomfort with novel online instructional resources, which was exacerbated by the new demands of the COVID-19 pandemic, while other teachers reported feeling overwhelmed and stressed by the idea of adding another task to their list of instructional activities. These observations are consistent with studies of teacher stress during the pandemic (Klapproth et al., 2020; Pressley, 2021). Because this sample loss among teachers was related to the pandemic, we again classified it as sample loss rather than unexplained attrition.
After removing explainable sample loss at the school and teacher levels, we were left with no unexplained attrition among schools or teachers but a worrisome unexplained attrition rate among students of 30.9% (with a 10.3% unexplained differential attrition rate between groups). These attrition rates point to the significant impacts of the COVID-19 pandemic on student learning opportunities in transition and the critical need to intervene rapidly to address these lost opportunities (Rowe et al., 2020).
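As a quick check on how the overall and differential attrition rates relate, the arithmetic can be sketched in Python. The lost-student counts (55 overall, 22 in BAU, 33 in GSC App) and the total of 178 students are reported in the text. The group sizes of 86 (BAU) and 92 (GSC App) are assumptions, back-calculated so that they reproduce the reported group rates and sum to 178. The helper `attrition_rate` is a hypothetical name used only for this illustration.

```python
def attrition_rate(lost, enrolled):
    """Share of enrolled students who did not contribute outcome data."""
    return lost / enrolled


# Counts of lost students and the overall total are reported in the text.
# ASSUMPTION: group sizes 86 (BAU) and 92 (GSC App) are back-calculated
# from the reported group attrition rates; they are not stated directly.
overall = attrition_rate(55, 178)
bau = attrition_rate(22, 86)
gsc_app = attrition_rate(33, 92)
differential = abs(gsc_app - bau)  # gap between the two group rates

for label, rate in [
    ("Overall", overall),
    ("BAU", bau),
    ("GSC App", gsc_app),
    ("Differential", differential),
]:
    print(f"{label}: {rate:.1%}")
# Overall: 30.9%
# BAU: 25.6%
# GSC App: 35.9%
# Differential: 10.3%
```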

Although it would be unwise to interpret the exact value of the effect size obtained in this study as the literal truth given unexplained attrition among students, overlap of teachers and schools, and reduced sample sizes, we can still say there was probably a positive effect of a meaningful magnitude given the use of Bayesian induction and sensitivity analysis. Moreover, from a practical perspective, the attrition rates themselves provide important insight into technology-delivered intervention during virtual learning. Before the COVID-19 pandemic disruptions, the GSC App was designed to be delivered via technology but in an in-person setting. Implementation plans for the 2020 to 2021 academic year had to be changed more drastically for the GSC App group than for the BAU group because of the additional supports that had to be in place for teachers and students to navigate engagement in technology-delivered instruction during virtual instruction (e.g., logging into the GSC App while also logged onto a virtual learning platform). Teachers had to troubleshoot issues with students online rather than during in-person instruction in the classroom, which required a different set of competencies and placed new demands on teachers (Neece et al., 2020; Rowe et al., 2020). Furthermore, not all teachers were prepared for or confident in supporting students via technology, particularly as teachers were essentially supporting students to engage in technology via technology, because the GSC App platform and the virtual learning platforms (e.g., Zoom, WebEx, and Google Meet) were not integrated. These issues must be considered as we determine the ongoing impact of virtual learning on students with disabilities, as it is likely there were inequities in students’ access to and engagement in instruction based on a variety of factors (Neece et al., 2020). It is worth noting that even under these conditions, more than 81.0% of the students in the GSC App group completed all GSC App lessons during the semester.

The promising findings, even during these unprecedented delivery conditions, suggest the potential of the GSC App to positively impact transition goal-attainment outcomes, an issue that is likely to become increasingly important given the impacts of the pandemic, particularly on students who did not engage or engaged inconsistently in virtual learning. Ongoing work will be needed to test the impacts of the GSC App as schools transition back to in-person learning. As a teacher using the GSC App in a Southeast state noted during exit interviews, “I would love to do it again next year [2021-2022 academic year] when they’re in class and be able to sit down with them all the time and us like walk through it together. So, I know it’s more uniform and everybody’s doing the same things at the same time.”

Implications for Research and Practice

As noted, ongoing work is needed to replicate these findings and test the longitudinal impacts of ongoing exposure to goal-focused self-determination and transition instruction using the GSC App. Research is also needed on the various ways that students can engage with the GSC App, including during in-person group instruction, independently while working on transition goals at school or at home, and during virtual instruction. A related question is the level of support that students, particularly students with more extensive support needs, and their teachers need to navigate and work through the GSC App. While the GSC App was designed to be largely self-directed, with multiple built-in accessibility features (see Mazzotti et al., 2022), the degree to which teacher support is needed remains to be determined. During virtual learning, for example, students often needed support logging into the GSC App, remembering passwords, and navigating to the login screen. Furthermore, additional research is needed to systematically explore the factors that influence engagement of diverse learners with the GSC App, ensuring that the GSC App includes features that advance equity and combat marginalization in transition services, a long-standing issue in the field (Trainor et al., 2008, 2020). Given work suggesting self-determination interventions can lead to decreases in racially and ethnically marginalized students’ self-determination, particularly when strengths, cultural identities, and asset-based approaches are not infused in self-determination instruction (Scott et al., 2021; Shogren, Scott et al., 2021), ongoing focus needs to be placed on evaluating whether the GSC App leads to equitable outcomes or whether additional work is needed to ensure the cultural responsivity of the GSC App.
Another issue that needs further attention is exploring students’ self-perceptions of their disability and its impact on self-determination and goal-attainment outcomes, as previous work has suggested students’ disability awareness and identity influence outcomes of SDLMI instruction (Shogren, Pace et al., 2022). We did not replicate these findings in the current analyses, and the small sample size may have been a limiting factor.

There is also a need to explore the impacts of the combined use of the GSC App along with teacher support for goals and self-determination instruction during other instructional activities. Many of the features of the teacher-delivered SDLMI could be integrated into transition instruction to support and generalize content students are learning in the GSC App. Some combination of teacher- and technology-delivered supports and instruction may be critical to impact outcomes, particularly given the complexity of transition planning and goal attainment. Combining instructional components from the teacher-delivered SDLMI with the GSC App may be a complementary approach, providing teachers and students the benefits of both formats (e.g., using the GSC App to decrease implementation time demands, and using teacher-delivered SDLMI for personalized supports based on students’ strengths and support needs).

Conclusion

Given the ongoing impacts of the COVID-19 pandemic on student learning opportunities and transition outcomes, there is a critical need to rapidly explore tools that show promise to enhance teachers’ practices and student outcomes. The GSC App shows this promise and should be further researched to support student transition goal attainment during pandemic recovery and beyond. Ongoing issues related to prioritizing self-determination and transition instruction as well as teacher training and time to implement self-determination instruction and technology-based supports will need to be addressed in this ongoing work. As one teacher from a Midwest state reflected, “Helping a kid learn how to set a goal is worthwhile in my book, and what it comes down to is the nuts and bolts and that’s what [the GSC App] does: it helps them, you know, with that and even thinking about what a goal is. When you talk with kids and they say they don’t have any goals, it’s like ‘Yes, you do! You just don’t know [how] to put the word goal with what you want.’ So, connecting those two helps kids, and I think [it] is part of our job.”

Footnotes

Authors’ Note

The opinions expressed are those of the authors and do not represent views of the Institute or the U.S. Department of Education.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The research reported here was supported by the Institute of Education Sciences, U.S. Department of Education, through Grant R324A180012 to The University of Kansas.

References
