Abstract

This study compares the effects of practicing new test questions and reviewing old test questions on the exam scores of sophomore students in English-Chinese translation courses, aiming to provide empirical evidence for optimizing teaching strategies. A quasi-experimental design was adopted: two intact parallel classes (90 sophomores in total) were assigned by coin flip to an experimental group (n = 45, practicing new questions) and a control group (n = 45, reviewing old questions). Both groups received 16 weeks of practice, with pre-test and post-test scores collected to measure learning gains. Statistical analyses included paired t-tests (to assess intra-group pre-post improvements), Welch’s t-test, and one-way ANOVA, supplemented by effect size calculations (Cohen’s d, η²) to evaluate practical significance. Both strategies significantly improved translation scores, and the experimental group showed a larger gain. Baseline equivalence was confirmed (t = −0.574, df = 88, p = .5676, Cohen’s d = 0.121). Post-test comparisons revealed that the experimental group outperformed the control group (t = −4.162, df = 78.55, p < .001, Cohen’s d = 0.877). Pedagogically, we recommend a 16-week schedule grounded in the study’s core findings, namely that (1) new question practice is more effective for skill expansion and (2) old question review is more effective for skill consolidation, while also balancing the constraints of Chinese higher education. The schedule allocates 70% of class time to new questions in Weeks 1 to 4, a 50% new and 50% old mix in Weeks 5 to 8 (competence expansion), 30% new and 70% old questions in Weeks 9 to 12 (consolidation), and 40% new and 60% old questions in Weeks 13 to 16 (final review).

Plain Language Summary

This study compared how doing new English-Chinese translation test questions and reviewing old ones affect sophomore students’ exam scores, to help improve teaching. It used a quasi-experiment: 90 students from two similar classes formed two groups (45 each). The experimental group practiced new questions, and the control group reviewed old ones, both for 16 weeks. Pre-test and post-test scores were collected, and statistical tests such as paired t-tests and ANOVA were used to analyze the results, plus effect sizes to check practical value. Results showed both methods boosted scores, but the new-question group improved more. The two groups had similar baseline (pre-test) scores. At post-test, the new-question group scored higher, and ANOVA confirmed their bigger gains. Practicing new questions worked better, especially for flexible paragraph translation and sight translation. The study suggests a 16-week class schedule: 70% new questions (Weeks 1–4), 50% each (Weeks 5–8), 70% old questions (Weeks 9–12), and 60% old questions (Weeks 13–16).

Keywords

Introduction

In university education, cultivating students’ comprehensive capabilities (Lamo, 2019) and raising their academic performance (Adekunle et al., 2024) are central goals. Among the diverse range of courses, English-Chinese translation courses play a pivotal role in nurturing students’ cross-cultural communication skills (Yujuan, 2023). In an era of increasing globalization, the ability to translate proficiently between English and Chinese is not only a fundamental requirement for students’ future career development but also an essential means for them to engage in international exchanges and cooperation (Y. Zhao et al., 2024).

A substantial body of research has explored the relationship between learning strategies and students’ academic performance (Donker et al., 2014; Neroni et al., 2019). Memory theory (Murray, 2012) and knowledge transfer theory (Nokes, 2009) are fundamental to understanding this relationship. Memory theory (Sumrall et al., 2016), which encompasses concepts such as short-term and long-term memory, emphasizes the encoding, storage, and retrieval of information. In translation learning, students need to remember vocabulary, grammar rules, and translation techniques. Mnemonic devices, a form of memory-enhancing strategy, help students retain new vocabulary, which in turn improves their performance in translation tasks involving word-level comprehension and production. Knowledge transfer theory posits that learning in one context can influence performance in another related context (Herfeld & Lisciandra, 2019). In translation courses, students who have mastered basic skills in simple sentence translation can potentially transfer these skills to more complex paragraph- or text-level translation. Previous studies have shown that students who actively engage in transfer-promoting strategies, such as analyzing similarities and differences between translation tasks, tend to achieve higher exam scores. The study (Polack & Miller, 2022) synthesizes the finding that retrieving previously studied material enhances long-term performance. It also outlines the history, applications, and open questions of the testing effect, concluding that its robustness challenges learning theories to explain how retrieval processes boost future performance. Over the past 20 years, test-enhanced learning has flourished, and many core questions are now answered. The work (Pan et al., 2024) gathers leading scholars to chart next directions: (1) integrating retrieval practice with elaborative or generative activities; (2) under-studied strategies such as pre-testing, spaced retrieval, forward testing, and successive relearning; (3) optimizing self-regulated and contextual practice testing; and (4) promoting student and educator acceptance of testing as a learning tool. The work (Chen et al., 2025) links the testing effect (superior retention after retrieval than after restudy) to predictive learning, where the mismatch between a generated answer and its feedback (prediction error) drives learning. Using three experiments and an associative memory network, it shows that only models embedding predictive learning capture the full pattern of testing-related gains when feedback is provided.

In teaching translation courses, educators are constantly in search of effective learning strategies (Wen et al., 2023). The goal is to enhance students’ translation proficiency, enabling them to convey information accurately and fluently between the two languages, and at the same time to improve their performance in related examinations (Mei & Chen, 2022). Practicing new test questions and reviewing old test questions are two prevalent strategies adopted by teachers and students alike (Binks, 2018; Yang et al., 2019). Nationwide classroom data confirm that both strategies are embedded in daily instruction. The study (A. Zhao et al., 2025) tracked 157 Chinese primary-school language-arts teachers for one academic year and found that repeated use of the same task types (e.g., personal narratives, summaries) remained the dominant pattern, with teachers’ fall-semester beliefs significantly predicting spring-semester practice, illustrating the inertia of “old-item” routines. Complementarily, the work (Homa et al., 2019) showed that non-repeating, novel instances did not slow concept acquisition and even produced marginally superior transfer, suggesting that continuous new-item exposure is at least equally effective. In EFL higher education, the study (Aljabri, 2024) randomly assigned 270 Saudi university students to repeated-study versus repeated-testing conditions; delayed tests revealed significantly higher retention under repeated testing of the same prose passage, supplying causal evidence for the “old-item retrieval” advantage. The work (Zhang & Zuber, 2020) further demonstrated that semantic repetition benefited memory only when learners passively read the items, whereas active enactment nullified the repetition effect, implying that active processing (e.g., translation) may reduce the unique utility of old-item review. The study (Max & Baldwin, 2010) compared repeated versus novel utterances in a speech-motor paradigm; after 24 h, fluency gains were retained only for the repeated utterances, underscoring the long-term value of practicing identical sequences. Conversely, novel utterances showed no retention, indicating that purely “new-item” practice without any repetition may fail to consolidate. Taken together, these studies indicate that both approaches are widespread and educationally consequential; nevertheless, no investigation has directly contrasted repeated-item retrieval with novel-item practice within the same English–Chinese translation course, leaving the optimization of classroom time allocation unresolved. The present experiment addresses this empirical gap.

To clarify the scope of the study, we explicitly define its two core learning strategies:

Practicing new test questions: Engaging with unseen translation materials selected from recent academic journals and aligned with the course’s skill objectives. These materials feature novel contexts, domain-specific vocabulary, and syntactic structures that students have not encountered in class lectures or homework assignments.

Reviewing old test questions: Re-engaging with previously completed translation materials from the course’s homework sets and unit tests. This process requires students to independently re-translate the texts, annotate errors, and revise based on instructor feedback, rather than merely recalling previously correct answers.

Existing research has not provided a clear-cut answer as to which strategy is more effective in promoting students’ translation abilities and improving their exam scores. This knowledge gap leaves teachers in a dilemma when formulating teaching plans and students in a dilemma when choosing learning methods. Sophomores were selected as the research participants for three key reasons. First, they have completed 1 to 2 semesters of basic English language courses (e.g., intensive reading, academic writing) and an introductory translation course, equipping them with the minimum proficiency to engage meaningfully with both new and old question practice; this avoids the confound of students being too novice to handle novel tasks or too advanced to show measurable gains. Second, sophomores are transitioning from sentence-level translation to more complex paragraph- and text-level translation. At this transitional stage, practice strategy choices have the most significant impact on long-term translation competence, making it the ideal window to compare strategy effectiveness. Third, as second-year students, they share similar academic backgrounds and limited exposure to specialized translation strategies, which reduces pre-existing differences in strategy familiarity that could skew experimental results.

This study is designed to fill the aforementioned research gap. Specifically, its primary objective is to conduct a systematic and empirical comparative analysis of the impacts of practicing new test questions and reviewing old test questions on the exam scores of sophomore students in English-Chinese translation courses. By doing so, the research aims to provide solid empirical evidence for the optimization of teaching strategies in this particular course. The insights gained from this study will help teachers make more informed decisions regarding how to allocate teaching time between new question practice and old question review. For students, it will offer guidance on choosing the most suitable learning approach based on their own learning styles and goals. Ultimately, this research endeavors to contribute to the improvement of teaching quality and learning efficiency in English-Chinese translation courses, thereby promoting the overall development of students’ translation capabilities.

Literature Review

Related Research on Practicing New Test Questions and Reviewing Old Test Questions

Practicing New Test Questions

In various academic disciplines, practicing new test questions has been widely regarded as a means to expose students to novel knowledge and different types of problems (Ditta et al., 2020). In science education, new problems often require students to apply existing knowledge in innovative ways, thereby expanding their problem-solving skills (Okolie et al., 2022). In translation courses, new test questions can stimulate students’ creativity and critical thinking: students are forced to grapple with unfamiliar texts, which encourages them to explore different translation strategies (Kozhevnikova, 2014). The study (Max & Baldwin, 2010) demonstrated that repeated utterances produced significantly greater fluency retention after 24 h than novel utterances, indicating that prior practice on the same material is critical for lasting motor learning. The study (Homa et al., 2019) showed that non-repeating training instances did not slow concept acquisition and even marginally improved transfer, suggesting that continuous exposure to new exemplars can be at least as effective as repetition. The study (Zhang & Zuber, 2020) found that semantic repetition boosted memory only for read items, whereas enacted items were unaffected, implying that active processing (like enacting or translating) may immunize learning against the benefit, or cost, of repetition.

In addition, the study (Dawadi, 2020) reported that the majority (70%) of students were motivated to learn English in the pre-test context, whereas 54% of the students were discouraged from learning English in the post-test context. This implies that the students preferred new testing methods to traditional review approaches. The pre-test might have stimulated students’ motivation through its novelty or unique design, which brought a sense of freshness and a desire for challenge. In the post-test situation, by contrast, the significant decline in students’ enthusiasm could be attributed to the dull and repetitive nature of the review process, which made students lose interest and thus revealed their preference for new testing forms. However, this strategy is not without its drawbacks. Without a firm base of foundational knowledge, students might find it arduous to comprehend and tackle novel problems. Moreover, the lack of systematic review could result in weak retention of crucial concepts.

Reviewing Old Test Questions

Reviewing old test questions has long been recognized as an effective strategy for reinforcing learned knowledge. For example, in mathematics, returning to and re-solving old problems enables students to discern problem-solving patterns (Yen & Lee, 2011). This practice not only enhances their performance on similar problems but also facilitates the understanding of more intricate concepts (Zimmermann, 2016). The study (Elgort & Nation, 2010) demonstrated that a method for learning second language (L2) vocabulary that involves repeatedly retrieving the form and meaning of words can prompt the acquisition of specific aspects of vocabulary knowledge. The work (Flores et al., 2023) put forward a predictor of word learning that highlights the role of prior knowledge in leveraging known words to learn additional words. Their study also explored item-based variability in vocabulary development, making use of lexical properties of distributional statistics obtained from a large corpus of child-directed speech. Repeated retrieval is effective in part because it fortifies the neural connections related to these words, allowing for more efficient recall from long-term memory, as Luk et al. (2020) pointed out. The study (Buchin & Mulligan, 2022) manipulated learners’ prior knowledge across two scientific domains and found that the memorial advantage of retrieval practice over restudy remained virtually identical for both high- and low-knowledge texts, indicating that repeated testing of previously studied material is effective regardless of students’ entry level. The study (Aljabri, 2024) showed that Saudi EFL learners in a repeated-testing condition recalled slightly less immediately than those who restudied, yet after 1 week the testing cohort significantly outperformed the restudy cohort, supplying causal evidence that old-item retrieval beats mere re-reading in delayed retention. A year-long tracking study (A. Zhao et al., 2025) revealed that Chinese primary-school language arts teachers maintained highly stable, balanced writing routines throughout the academic year; their fall-semester pedagogical beliefs significantly predicted spring-semester practices, underscoring the inertia of retrieval-oriented repetition once established.

Across disciplines and learning contexts, the evidence on practicing new test questions and reviewing old test questions points to clear, complementary roles for each strategy. Reviewing old test questions consistently emerges as a powerful tool for solidifying foundational learning: in mathematics, it helps students identify recurring problem-solving patterns; in L2 vocabulary acquisition, it strengthens long-term retention of word meanings through repeated retrieval; and in translation, it reinforces mastery of grammar rules, familiar translation techniques, and error correction—though this strength can become a limitation if review devolves into rote recall, restricting learners’ ability to adapt to unexpected tasks. Practicing new test questions, by contrast, stands out for fostering adaptive and higher-order skills: in science education, it pushes students to apply existing knowledge in innovative ways; in language learning, it exposes learners to authentic, novel contexts that build cultural awareness and lexical inferencing; and in translation, it encourages exploration of flexible strategies for unfamiliar texts—from navigating cross-domain terminology to adjusting to new genre conventions—though it may create unnecessary barriers for learners with underdeveloped foundational knowledge. Together, these insights underscore that neither strategy is universally superior; instead, their value depends on the learning goals at hand—whether prioritizing accuracy and retention (old questions) or flexibility and adaptive thinking (new questions)—a balance particularly critical for skill-intensive fields like translation.

In general, the above-mentioned studies raise critical, unaddressed questions: Do old questions still prioritize accuracy in cross-linguistic tasks (e.g., English-Chinese sentence translation)? Can new questions enhance the unique higher-order skills required for translation (e.g., paragraph context adaptation, sight translation)?
In translation courses, revisiting old translation exercises helps students to solidify their understanding of grammar rules, vocabulary usage, and translation techniques that they have already encountered. The repetition involved in reviewing old questions can also enhance students’ confidence and speed in answering similar questions in exams. By analyzing their previous mistakes during the review process, students can learn from their errors and avoid making the same mistakes again. However, if the review is done in a rote-learning manner, it may limit students’ ability to handle new and unexpected translation challenges.

Research Gaps and the Entry Point of This Study

The core research gap guiding this study is a broader lack of comparative research on two widely used pedagogies (practicing new test questions and reviewing old test questions) across educational contexts, a gap that is particularly consequential in skill-intensive domains like translation. While existing education research has validated the effectiveness of each strategy in isolation, few studies have directly compared the two to identify which better supports learning gains, regardless of the subject area. This comparative void is not unique to translation but is acutely felt in disciplines that demand both accuracy and flexibility, where instructors must decide how to allocate limited class time. Our study focuses on English-Chinese translation not because “other courses have more research” but because translation serves as an ideal context to address this broader educational gap: it integrates linguistic, cognitive, and contextual skills, making it a rigorous testbed to explore how each pedagogy affects distinct learning outcomes. By comparing the two strategies in translation, we aim to help fill a longstanding gap in general educational research while also providing actionable guidance for translation educators.

This study aims to fill this gap through targeted empirical research. By focusing on a specific group of students (sophomores) in a particular course (English-Chinese translation), we can provide more detailed and practical insights into the effectiveness of these two strategies. The results will not only contribute to the theoretical understanding of translation learning strategies but also offer practical guidance for teachers and students in English-Chinese translation courses.

In general, this study aims to address the following three research questions (RQ):

Methodology

Research Design

The present study employed a quasi-experimental design. The independent variable was the learning strategy, with two levels: practicing new test questions (assigned to the experimental group) and reviewing old test questions (assigned to the control group). The dependent variable was the students’ exam scores in the English-Chinese translation course. The design is quasi-experimental because the two groups were naturally formed parallel classes, so individual students were not randomly assigned. Pre-test results nevertheless indicated baseline equivalence (t = −0.574, df = 88, p = .5676), which helps control selection bias.

The design examines the effect of learning strategy (independent variable) on translation scores (dependent variable). Figure 1 illustrates the study’s workflow. To protect the internal validity of the experiment, other variables were strictly controlled in the sampling process (as shown in Figure 1): both groups of students were taught by the same instructor, followed the same teaching syllabus, and had an equal amount of class time. This was intended to minimize confounding factors, other than the learning strategies being tested, that could influence the students’ performance.

Figure 1. Sampling and group assignment flowchart.

As shown in Figure 1, two parallel classes were selected from a university’s sophomore English-Chinese translation courses. These classes were filtered for homogeneity (same instructor, identical syllabus, similar baseline translation ability) to control for confounding variables. A coin flip was used to randomly assign one class to the experimental group (n = 45, practicing new questions) and the other to the control group (n = 45, reviewing old questions). This randomization eliminated subjective bias in group allocation. Both groups completed a pre-test (Week 1) to confirm baseline equivalence, a 16-week practice (Weeks 2–17), and a post-test (Week 18) to measure learning gains. Paired t-tests, Welch’s t-test, and one-way ANOVA were used to analyze data, with effect sizes calculated to evaluate practical significance.

Research Subjects

A total of 90 sophomore students from two parallel classes in a university were selected as the research subjects. These two classes were chosen because they were taught by the same teacher and had a similar academic background in terms of previous English-Chinese translation course performance and overall language proficiency. Group assignment was determined by a coin flip that designated one class as the experimental group (n = 45) and the other as the control group (n = 45), eliminating subjective bias in allocation. Sample size was calculated using G*Power 3.1, with parameters set to α = .05, power (1 − β) = .80, and effect size f = 0.25 (Kang, 2021). The minimum required sample size was 78, so the sample of 90 met statistical power requirements. As shown in Table 1, baseline comparisons revealed no significant differences between the two groups in gender, age, English proficiency (CET-4), or translation learning experience (all p > .05), supporting group equivalence on these measured characteristics.

Table 1. Demographic Characteristics and Baseline Comparisons of the Two Groups.

As shown in Table 1, the control group had 18 male and 27 female students, while the experimental group had 16 male and 29 female students (χ² = 0.18, p = .67), indicating no gender imbalance. The control group had a mean age of 20.3 ± 0.8 years and the experimental group a mean age of 20.5 ± 0.7 years (t = 1.12, p = .27), showing no significant age difference. Scores on the CET-4, a national English proficiency test in China, were 482 ± 33 for the control group and 485 ± 32 for the experimental group (t = 0.41, p = .68), confirming similar language foundations. In addition, 10 students in the control group and 12 in the experimental group had prior translation learning experience (χ² = 0.31, p = .58), indicating no significant pre-existing difference in strategy familiarity.
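For readers who want to check a 2 × 2 comparison of this kind by hand, the following is a minimal sketch of the Pearson chi-square statistic without continuity correction. The function name `chi_square_2x2` is a hypothetical helper introduced here for illustration; the study itself reports using SPSS, and values computed this way may differ slightly from the reported ones depending on rounding and software options.

```python
def chi_square_2x2(a, b, c, d):
    """Pearson chi-square (no continuity correction) for the table [[a, b], [c, d]]."""
    n = a + b + c + d
    row1, row2 = a + b, c + d
    col1, col2 = a + c, b + d
    return n * (a * d - b * c) ** 2 / (row1 * row2 * col1 * col2)


# Gender counts reported in the text:
# control 18 male / 27 female, experimental 16 male / 29 female.
gender_chi2 = chi_square_2x2(18, 27, 16, 29)
print(round(gender_chi2, 3))  # 0.189
```

With one degree of freedom, a statistic this small is far below the conventional critical value of 3.84 at α = .05, which is consistent with the non-significant gender difference described above.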

Prior to the experiment, a pre-test was administered to all students. The results of this pre-test were analyzed using statistical methods to ensure that there were no significant differences in the initial translation abilities between the two groups. This step was crucial in establishing the homogeneity of the two groups at the start of the experiment, which further enhanced the reliability of the experimental results.

Research Tools

Teaching Materials

To ensure consistency with the course syllabus and experimental validity, teaching materials for both groups were aligned with the English-Chinese translation course’s core objectives but differed in novelty. Details of the teaching materials are as follows:

The “Old” materials were drawn from the previous semester’s homework (Weeks 1–8) and unit tests (Units 1–4), so students had already mastered the content and could devote the experiment to error analysis and revision rather than initial learning. The mix matches current course priorities: 30% sentence translation, 50% paragraph translation, 20% oral translation. Tasks target foundational skills: sentences emphasize high-frequency academic phrases (“sustainable development,” “data analysis”) and basic shifts; paragraphs use familiar genres with few culture-specific references, such as news reports on education policy or academic excerpts on language learning, 180 to 220 words each, two per session; oral texts are 140 to 160-word popular-science passages with simple syntax, one per session. Sentence length is fixed at 15 to 20 words, with 20 sentences per set. All items were verified against current skill goals; a sample paragraph on “online education trends” comes from Unit 2’s test in English-Chinese Translation: A Practical Course (Chinese version).

The “New” items were selected from 2022 to 2024 issues of periodicals such as Chinese Translators Journal and The Economist, and from authentic professional briefs released by the UN and the World Bank. These texts were completely unseen by students, yet they match the course goals while embedding fresh contexts, novel lexis, and new cultural cues. The instructional focus is adaptive transfer. Sentence tasks supply domain-specific terms and present knotty syntactic problems that force students to reorder English absolute constructions into Chinese clauses. Paragraph tasks cover AI-ethics news, cross-cultural abstracts, and smart-city specifications; each passage contains 200 to 240 words, two passages are offered per session, and they carry moderate culture-specific references such as “digital yuan.” Oral tasks present 160 to 180-word sight-translation scripts that demand real-time reformulation. Sentence length is fixed at 18 to 25 words, and every set contains 20 sentences. A typical example is the January 2024 Economist paragraph on AI ethics in healthcare; it explains that AI revolutionizes diagnosis through big-data pattern recognition, but it also warns that biased training data may lead to inequitable treatment, prompting regulators to draft transparency rules.

Test Papers

The 100-point test paper comprised three parts: sentence translation (30 points, 20 items), assessing basic grammar and vocabulary application; paragraph translation (50 points, 2 passages: one news text and one academic text), evaluating context-dependent translation skills; and oral translation (20 points, 1 scientific text), measuring real-time translation flexibility. Scoring weights aligned with course priorities, with paragraph translation weighted highest.

Two experts independently scored the test papers, and reliability was acceptable, with Cronbach’s α = .85 (pre-test) and α = .83 (post-test).
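As an illustration of how a reliability coefficient of this kind can be obtained, the sketch below computes Cronbach’s α under the assumption that each rater is treated as one “item” and each test paper as one case. That assumption, the helper name `cronbach_alpha`, and the scores are all hypothetical; the source does not describe how α was derived, and the numbers are not study data.

```python
from statistics import variance


def cronbach_alpha(ratings):
    """Cronbach's alpha for a list of raters.

    ratings: one list of scores per rater, all covering the same
    papers in the same order.
    """
    k = len(ratings)
    # Total score per paper, summed across raters.
    totals = [sum(scores) for scores in zip(*ratings)]
    # Sum of the per-rater sample variances.
    rater_variance = sum(variance(r) for r in ratings)
    return k / (k - 1) * (1 - rater_variance / variance(totals))


# Hypothetical scores for five papers (illustration only, not study data).
rater_a = [70, 75, 80, 85, 90]
rater_b = [72, 74, 83, 84, 88]
alpha = cronbach_alpha([rater_a, rater_b])
print(round(alpha, 2))
```

When the two raters agree perfectly the coefficient equals 1, and it falls as their scores diverge, so values in the .80s indicate that the two sets of marks largely rank the papers the same way.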

Data Collection and Analysis

Data Collection

The exam scores of the experimental group and the control group were collected at the beginning (pre-test) and end (post-test) of the semester. The pre-test scores were used to confirm the initial equivalence of the two groups, while the post-test scores were the main data for evaluating the effectiveness of the two learning strategies.

Data Analysis

The collected data were analyzed using the Statistical Package for the Social Sciences (SPSS). Independent-samples t-tests compared the scores of the experimental group and the control group at both the beginning and the end of the semester: at pre-test, to determine whether the two groups differed significantly in translation ability (ensuring that the experimental conditions were met), and at post-test, to assess the differential effects of the two learning strategies.

The paired-samples t-test was used to analyze the pre-and post-test score changes within each group. This allowed for an examination of whether each learning strategy led to a significant improvement in students’ translation scores over the course of the semester. By applying these statistical methods, the study was able to draw valid conclusions about the impacts of practicing new test questions and reviewing old test questions on students’ exam scores in the English-Chinese translation course.

Shapiro-Wilk tests confirmed that pre-test and post-test scores in both groups were normally distributed (control group pre-test: W = 0.965, p = .197; experimental group post-test: W = 0.960, p = .118), satisfying the assumptions of parametric tests. Levene’s tests showed homogeneous variances for pre-test scores (F = 0.956, p = .331) but heterogeneous variances for post-test scores (F = 5.408, p = .022). Welch’s t-test was therefore used for post-test inter-group comparisons to avoid bias. Effect sizes (Cohen’s d for t-tests, η² for ANOVA) were calculated for all results to evaluate the practical significance of differences. Cohen’s d > 0.8 and η² > 0.14 were considered large effects (Diener, 2010).
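The large-effect cutoffs above can be read against the conventional Cohen scale. The following is a minimal sketch, not the authors' procedure: the function names are hypothetical, and the small and medium cutoffs (0.2 and 0.5 for d; 0.01 and 0.06 for η²) are the conventional benchmarks, which this section does not itself state.

```python
def classify_cohens_d(d: float) -> str:
    """Label a Cohen's d value using conventional benchmarks (assumed here)."""
    magnitude = abs(d)  # the sign only encodes the direction of the difference
    if magnitude >= 0.8:
        return "large"
    if magnitude >= 0.5:
        return "medium"
    if magnitude >= 0.2:
        return "small"
    return "negligible"


def classify_eta_squared(eta_sq: float) -> str:
    """Label an eta-squared value using conventional benchmarks (assumed here)."""
    if eta_sq >= 0.14:
        return "large"
    if eta_sq >= 0.06:
        return "medium"
    if eta_sq >= 0.01:
        return "small"
    return "negligible"


# Effect sizes reported in this article.
print(classify_cohens_d(0.121))     # baseline comparison
print(classify_cohens_d(0.877))     # post-test comparison
print(classify_eta_squared(0.644))  # ANOVA on score improvement
```

Under these assumed cutoffs, the baseline difference falls below the "small" threshold, while the post-test difference and the ANOVA effect both fall in the "large" band.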

Results

Descriptive Statistics of Pre-test and Post-Test Scores

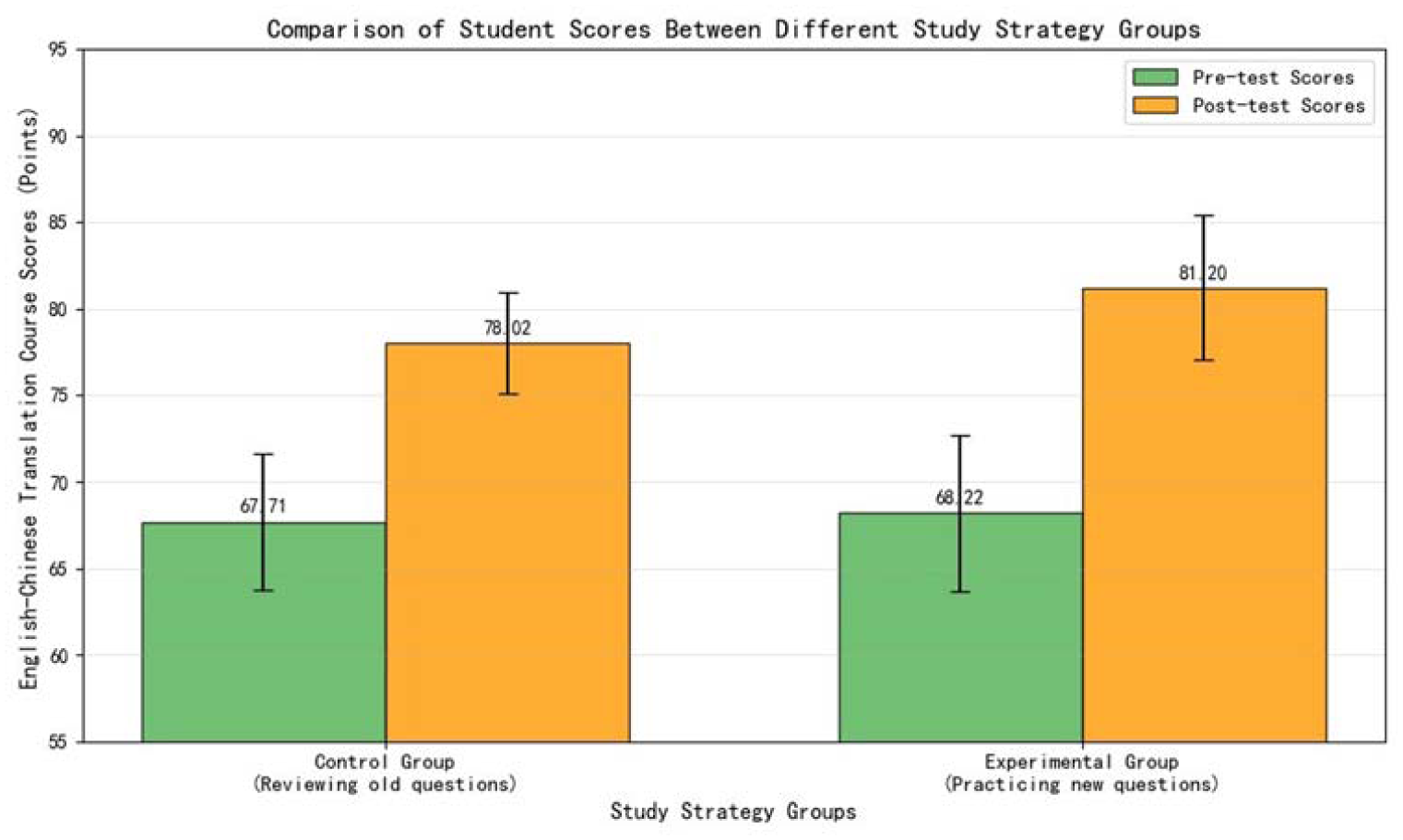

Descriptive statistics for pre-test and post-test scores in both the control and experimental groups are presented in Table 2 and Figure 2. At the baseline (pre-test), the control group (reviewing old questions) had a mean score of 67.71 ± 3.92, while the experimental group (practicing new questions) had a mean score of 68.22 ± 4.51. After 16 weeks, the control group’s mean post-test score increased to 78.02 ± 2.93, and the experimental group’s mean post-test score rose to 81.20 ± 4.20. The score improvement (post-test minus pre-test) was 10.31 ± 1.13 for the control group and 12.98 ± 0.83 for the experimental group, indicating that both strategies contributed to score gains, with the experimental group showing a larger average improvement.

Table 2. Descriptive Statistics of Pre-Test and Post-Test Scores for the Two Groups.

Figure 2. Pre-test and post-test score comparison between groups (with 95% CI).

Intra-Group Pre-test Versus Post-Test Comparisons

To determine if each practice strategy independently led to significant improvements in translation performance, paired t-tests were conducted within each group to compare pre-test and post-test scores (as shown in Table 3).

Table 3. Intra-Group Pre-Test and Post-Test Score Comparison Results.

For the control group (reviewing old test questions), the mean pre-test score was 67.71 ± 3.92. After the 16-week intervention, the mean post-test score increased to 78.02 ± 2.93, representing a net improvement of 10.31 ± 1.13 points. The paired t-test revealed that this improvement was statistically highly significant (t = 60.431, df = 44, p < .001), with an extremely large effect size (d = 9.009). This indicates that reviewing old questions had a substantial and practically meaningful impact on enhancing students’ translation performance.

For the experimental group (practicing new test questions), the mean pre-test score was 68.22 ± 4.51, and the mean post-test score rose to 81.20 ± 4.20, showing a larger net improvement of 12.98 ± 0.83 points. This improvement was also statistically highly significant (t = 103.755, df = 44, p < .001), with an even larger effect size (d = 15.467) than that observed in the control group. This confirms that practicing new questions was not only effective but also produced a more pronounced effect on improving translation scores.

Collectively, these results demonstrate that both strategies significantly improved students’ English-Chinese translation scores. However, the experimental group, which practiced new questions, showed a greater magnitude of improvement.
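The paired statistics above can be approximately reconstructed from the reported summary values. The sketch below is a plausibility check under stated assumptions, not the authors' SPSS procedure: it assumes the "±" values on the improvements are standard deviations of the difference scores, and that the reported d is the standardized mean difference d_z = t / √n. The article states neither, and the function names are hypothetical.

```python
import math


def paired_t_from_summary(mean_diff: float, sd_diff: float, n: int):
    """Paired t-test from difference-score summaries: returns (t, df, d_z)."""
    standard_error = sd_diff / math.sqrt(n)
    t = mean_diff / standard_error
    d_z = mean_diff / sd_diff  # standardized mean of the difference scores
    return t, n - 1, d_z


def d_z_from_t(t: float, n: int) -> float:
    """Recover d_z from a paired t statistic (d_z = t / sqrt(n))."""
    return t / math.sqrt(n)


# Reported gains (mean, SD of gain, n) for each group.
for label, mean_diff, sd_diff, reported_t in [
    ("control", 10.31, 1.13, 60.431),
    ("experimental", 12.98, 0.83, 103.755),
]:
    t, df, d_z = paired_t_from_summary(mean_diff, sd_diff, 45)
    print(f"{label}: t = {t:.2f} (df = {df}), d_z = {d_z:.2f}; "
          f"d_z from reported t = {d_z_from_t(reported_t, 45):.3f}")
```

Because the inputs are rounded to two decimals, the reconstructed t values differ from the reported ones by roughly one percent, whereas t / √n applied to the reported t values matches the reported effect sizes to three decimals.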

Inter-Group Comparisons of Pre-test and Post-Test Scores

Pre-Test (Baseline) Comparison

To ensure the internal validity of the study, it was crucial to confirm that the two groups were comparable in terms of their initial translation ability before the intervention (as shown in Table 4). An independent samples t-test on pre-test scores revealed no statistically significant difference between the control group’s mean pre-test score (67.71 ± 3.92) and the experimental group’s mean pre-test score (68.22 ± 4.51; t = −0.574, df = 88, p = .5676). The effect size was very small (d = 0.121), indicating minimal practical difference between the groups at baseline. This homogeneity confirms that any subsequent differences observed in post-test scores can be reasonably attributed to the different practice strategies implemented, rather than pre-existing disparities in ability.
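The baseline comparison can be checked from the reported means and standard deviations. This is a minimal sketch assuming equal group sizes (n = 45 each) and a pooled-standard-deviation Cohen's d; the function names are hypothetical, and small deviations from the reported values are expected because the inputs are rounded.

```python
import math


def pooled_sd_equal_n(sd1: float, sd2: float) -> float:
    """Pooled standard deviation for two groups of equal size."""
    return math.sqrt((sd1 ** 2 + sd2 ** 2) / 2)


def student_t_equal_n(m1: float, sd1: float, m2: float, sd2: float, n: int):
    """Independent-samples t-test (equal variances, equal n): (t, df, d)."""
    sp = pooled_sd_equal_n(sd1, sd2)
    d = (m1 - m2) / sp              # Cohen's d with pooled SD
    t = d / math.sqrt(2 / n)        # t = d / sqrt(1/n + 1/n)
    return t, 2 * n - 2, d


# Control (old questions) vs. experimental (new questions) at pre-test.
t, df, d = student_t_equal_n(67.71, 3.92, 68.22, 4.51, 45)
print(f"t = {t:.3f}, df = {df}, |d| = {abs(d):.3f}")
```

The negative sign of t simply reflects that the control mean is entered first; the effect size is reported in absolute terms in the text.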

Table 4. Inter-Group Pre-Test Score Comparison Results.

Post-Test Comparison

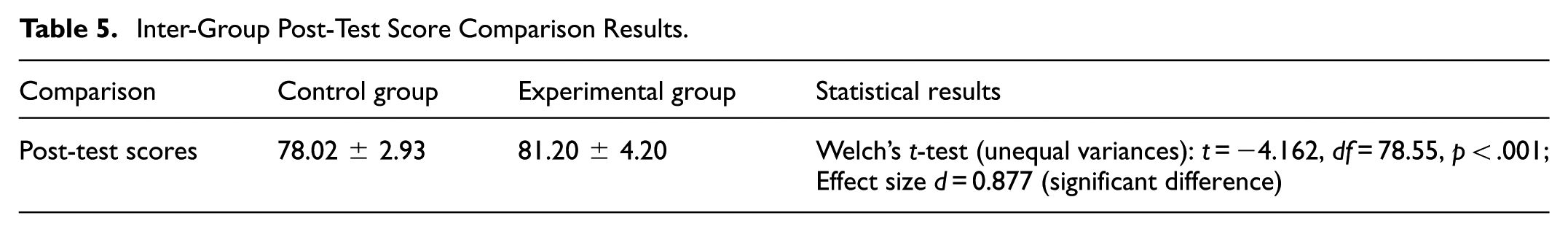

After the intervention period, an inter-group comparison of post-test scores was performed to directly evaluate the relative effectiveness of the two strategies (as shown in Table 5).

Table 5. Inter-Group Post-Test Score Comparison Results.

Prior to the t-test, Levene’s test for equality of variances indicated that the assumption of equal variances was violated (F = 5.408, p = .022), so Welch’s t-test (correcting for unequal variances) was used. The results (as shown in Table 5) showed that the experimental group achieved a significantly higher mean post-test score (81.20 ± 4.20) compared to the control group (78.02 ± 2.93), with a statistically highly significant difference (t = −4.162, df = 78.55, p < .001) and a large effect size (d = 0.877). This finding further supports the conclusion that practicing new test questions is a more effective strategy for improving overall translation exam performance than reviewing old test questions.
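Welch's correction can be illustrated with the reported post-test summaries. The sketch below computes the Welch t statistic and the Welch-Satterthwaite degrees of freedom from means, standard deviations and group sizes; the function names are hypothetical, and the results are expected to match the reported values only up to the rounding of the inputs.

```python
import math


def welch_t_from_summary(m1, sd1, n1, m2, sd2, n2):
    """Welch's t-test from summary statistics: returns (t, df)."""
    v1 = sd1 ** 2 / n1  # squared standard error of group 1 mean
    v2 = sd2 ** 2 / n2  # squared standard error of group 2 mean
    t = (m1 - m2) / math.sqrt(v1 + v2)
    # Welch-Satterthwaite approximation to the degrees of freedom.
    df = (v1 + v2) ** 2 / (v1 ** 2 / (n1 - 1) + v2 ** 2 / (n2 - 1))
    return t, df


def cohens_d_equal_n(m1, sd1, m2, sd2):
    """Cohen's d using the root mean of the two variances (equal n)."""
    return (m1 - m2) / math.sqrt((sd1 ** 2 + sd2 ** 2) / 2)


# Control (old questions) vs. experimental (new questions) at post-test.
t, df = welch_t_from_summary(78.02, 2.93, 45, 81.20, 4.20, 45)
d = cohens_d_equal_n(78.02, 2.93, 81.20, 4.20)
print(f"t = {t:.3f}, df = {df:.2f}, |d| = {abs(d):.3f}")
```

Note that the corrected degrees of freedom fall below the 88 used for the equal-variance test, which is what makes the Welch test more conservative when variances differ.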

ANOVA Analysis of Score Improvement

To quantify the extent to which the choice of practice strategy explained the variance in observed score improvements, a one-way ANOVA was conducted with practice strategy (new questions vs. old questions) as the independent variable and score improvement (post-test minus pre-test) as the dependent variable. The ANOVA results revealed a highly significant main effect of strategy type (F = 158.877, df = 1, 88, p < .001), with a very large effect size (η² = 0.644). This indicates that approximately 64.4% of the total variance in students’ score improvements can be explained by the difference in practice strategies employed, underscoring the substantial impact of strategy selection on learning outcomes in translation pedagogy.
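For a one-way ANOVA, η² can be recovered from the F statistic and its degrees of freedom, since η² = SS_between / SS_total = (F · df_between) / (F · df_between + df_within). A minimal sketch with a hypothetical function name, using only the values reported above:

```python
import math


def eta_squared_from_f(f_stat: float, df_between: int, df_within: int) -> float:
    """Eta squared for a one-way ANOVA, derived from F and its df."""
    explained = f_stat * df_between
    return explained / (explained + df_within)


eta_sq = eta_squared_from_f(158.877, 1, 88)
print(f"eta squared = {eta_sq:.3f}")

# With only two groups, the ANOVA is equivalent to an equal-variance
# t-test on the gain scores, with |t| = sqrt(F).
print(f"equivalent |t| = {math.sqrt(158.877):.3f}")
```

This reproduces the reported effect size from the reported F and degrees of freedom, so the two figures are internally consistent.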

Discussion

Answers to the RQs

Theoretical Implications

The findings of this study contribute to three core theories, moving beyond practical teaching suggestions to advance theoretical understanding.

(1) Skill acquisition theory (Button et al., 2008) posits that learning progresses through three stages: cognitive, associative, and autonomous. The old question group’s significant improvements (d = 9.009) align with the associative stage: repeated review of familiar content reduces errors and strengthens stimulus-response connections, consolidating foundational skills. In contrast, the new question group’s larger gains (d = 15.467) reflect progression toward the autonomous stage, because novel tasks require learners to integrate rules flexibly. This extends skill acquisition theory by demonstrating that different practice strategies target distinct stages of skill development, with new questions accelerating the transition to higher-order autonomous performance. This is a key insight for translation pedagogy, which often prioritizes both accuracy and flexibility.

(2) Cognitive load theory (Ouwehand et al., 2025) emphasizes that learning is optimized when instructional design aligns with working memory capacity. The old question group’s focus on familiar content minimizes extraneous cognitive load, allowing learners to allocate resources to error correction and skill refinement, which explains its effectiveness for consolidating basics. For the new question group, the novel texts require learners to engage in deep processing rather than passive recall. Our results show that this germane load, when appropriately calibrated, leads to superior learning outcomes, challenging the misconception that novel tasks overwhelm learners. Instead, the findings support the theory’s recent extension that germane load is critical for developing complex skills like translation, where flexibility and adaptability are essential.

(3) Testing effect theory (Greving & Richter, 2018) argues that retrieval practice (testing) is more effective than re-studying for retention. Our study extends this theory by distinguishing between familiar content and new content in testing. The latter’s larger effect size (d = 15.467 vs. 9.009) demonstrates that testing with new questions not only reinforces existing knowledge but also requires learners to apply it to novel contexts. For translation pedagogy, this means the testing effect is amplified when tests require flexible application rather than mere repetition, which provides a theoretical basis for integrating novel practice tasks into assessment.

Furthermore, the findings should be interpreted within the socio-cultural and educational context of Chinese higher education, which shapes the effectiveness of the two strategies. First, China’s examination-oriented education system emphasizes standardized test performance, where familiarity with question types (via old question review) and adaptability to novel tasks (via new question practice) are both critical. The experimental group’s superior performance may reflect the complementary nature of these demands: new question practice aligns with the increasing diversity of translation tasks in national exams (e.g., CET-6 translation sections), while old question review reinforces the foundational accuracy required by exam scoring rubrics. Second, teacher-centered instruction, a common feature of Chinese classrooms, ensures consistent implementation of the teaching—all students received uniform guidance on strategy use, which may have amplified the observed effects compared to student-centered contexts where strategy application varies. Additionally, collectivist learning culture encourages peer learning: students in the experimental group often discussed novel translation challenges collaboratively, while the control group shared error-correction experiences—both of which may have enhanced strategy effectiveness. These contextual factors suggest that while the core finding (new question practice yields greater gains) may be generalizable to other examination-oriented, teacher-centered educational systems, the magnitude of the effect may vary in individualistic or student-centered contexts. Future cross-cultural studies should explore how cultural and educational norms moderate the relative effectiveness of the two strategies.

Cognitive Mechanisms

The superior performance of the New Question group is driven by three cognitive mechanisms triggered by “desirable difficulties” (Bjork et al., 2013). First, contextual variability (genre, syntax, culture) broadens retrieval cues and builds a flexible strategy repertoire. Second, adaptive errors in novel tasks promote metacognitive reflection and error-driven learning, enhancing metalinguistic awareness. Third, repeated strategy adjustments train cognitive flexibility, which is essential for switching between comprehension and production across text types. These mechanisms collectively offer an explanation for the New Question group’s significant score improvement (12.98 points) and for the large effect of practice strategy on score gains (η² = 0.644).

Practicing new questions introduced such difficulties: unfamiliar text genres, cross-domain terminology, and novel syntactic structures forced students to move beyond rote recall and engage in deeper cognitive processing—such as adjusting translation strategies to fit new contexts or resolving ambiguities in unknown vocabulary.

In contrast, old question review, when not paired with deliberate analysis, risked creating an “illusion of mastery” (Bjork et al., 2013). Students in the control group often relied on memorized solutions to previously encountered questions, limiting their ability to adapt to new tasks. This mechanism explains why the experimental group outperformed the control group in sight translation—a task requiring real-time adaptation to unfamiliar content—with mean scores of 16.8 ± 2.1 versus 14.2 ± 2.5.

Practical Implications

Based on the effect size data and contextual constraints of Chinese higher education (e.g., 4 class hours per week for translation courses, average class size of 45 students, and heavy teacher workloads), we refine the 16-week stage-specific schedule to ensure feasibility:

Weeks 1 to 4 (Foundation Building): 70% new question practice (2.8 class hours/week) and 30% old question review (1.2 class hours/week). New questions are selected from open-access resources to reduce teacher preparation time; old questions are compiled from existing textbook exercises to avoid additional workload. To address class size challenges, group work is adopted: students complete new question practice in groups of 5, with teachers providing targeted feedback to 3 to 4 groups per class.

The Old Question group’s slight superiority in foundational skill retention justifies prioritizing familiar content in the early stages. Old questions should include previously taught sentence structures and high-frequency vocabulary, allowing students to consolidate basics with minimal cognitive load. The 30% new questions introduce gentle desirable difficulties to prevent rote learning, laying the groundwork for flexibility.

Weeks 5 to 8 (Competence Expansion): 50/50 mix (2 class hours each). New questions focus on genre-specific tasks aligned with national exam requirements (e.g., news and academic texts); old questions are revisited via error-analysis worksheets, which students complete independently before class—teachers only review common errors in class to save time.

As students master basics, balancing strategies leverages their complementary strengths. Old questions review key sentence-level skills, while New Questions expand to paragraph-length texts—targeting the contextual flexibility where the new question group excelled (35% vs. 22% relative improvement). This phase includes guided reflection on new question strategies to reinforce metacognitive awareness.

Weeks 9 to 12 (Consolidation): 30% new questions (1.2 class hours) and 70% old questions (2.8 class hours). Old questions are organized into thematic packages (e.g., technical terminology translation) and shared via learning management systems to facilitate self-directed review; new questions are designed as short, focused tasks (e.g., 15 min sight translation exercises) to fit limited class time.

New questions include sight translation of technical texts, cross-cultural content, and mixed-genre practice (e.g., switching between academic and news texts) to simulate real-world translation demands. Old Questions are limited to targeted review of persistent gaps to maintain accuracy without hindering flexibility.

Weeks 13 to 16 (Final Review): 40% new questions (1.6 class hours) and 60% old questions (2.4 class hours). New questions simulate exam conditions (e.g., 30 min paragraph translation tasks); old questions prioritize persistent errors identified throughout the semester, with teachers providing one-on-one feedback during office hours to address individual needs.

The post-test’s focus on integrated skills requires students to apply strategies flexibly. New questions mirror the post-test’s structure (sentence, paragraph, oral translation) with novel, authentic content to build confidence in handling unseen tasks—directly aligning with the new question group’s superior post-test performance. Old questions are restricted to high-priority foundational skills to ensure no critical gaps remain.

To further reduce teacher workload, we recommend leveraging existing teaching resources (e.g., university translation corpora, student homework archives) for old question compilation, and collaborating with other translation instructors to share new question banks. This contextualized schedule balances effectiveness with feasibility, ensuring it can be implemented within the constraints of Chinese higher education. In addition, for students with weaker English or translation foundations, we recommend increasing old question review to 60% in the first 4 weeks, gradually reducing to 50% by Week 8, to ensure foundational mastery before introducing more challenging new content.
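The class-hour figures in the schedule follow directly from the stated 4 class hours per week. The sketch below tabulates them; the data structure and function name are hypothetical, and the percentages are those given for each stage above.

```python
WEEKLY_CLASS_HOURS = 4  # stated constraint for translation courses

# (weeks, stage, percentage of class time spent on new questions)
SCHEDULE = [
    ("Weeks 1 to 4", "Foundation Building", 70),
    ("Weeks 5 to 8", "Competence Expansion", 50),
    ("Weeks 9 to 12", "Consolidation", 30),
    ("Weeks 13 to 16", "Final Review", 40),
]


def weekly_hours(new_pct: int, total_hours: float = WEEKLY_CLASS_HOURS):
    """Split weekly class hours into (new-question, old-question) hours."""
    new_hours = round(total_hours * new_pct / 100, 1)
    old_hours = round(total_hours - new_hours, 1)
    return new_hours, old_hours


for weeks, stage, new_pct in SCHEDULE:
    new_hours, old_hours = weekly_hours(new_pct)
    print(f"{weeks} ({stage}): {new_pct}% new = {new_hours} h, "
          f"{100 - new_pct}% old = {old_hours} h per week")
```

The same helper can be used to derive hours for the adjusted split suggested for students with weaker foundations, for example `weekly_hours(40)` for a 40% new and 60% old mix.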

Conclusion

This study investigated the comparative effectiveness of two learning strategies—practicing new test questions and reviewing old test questions—on student performance in an English-Chinese translation course, using a quasi-experimental design with 90 sophomore students over 16 weeks. The findings, validated through rigorous statistical analyses (paired t-tests, Welch’s t-test, and one-way ANOVA), provide clear empirical insights for translation pedagogy.

First, both strategies significantly enhanced translation scores, confirming the value of structured practice in translation learning. The control group (old question review) demonstrated a substantial pre-post improvement (10.31 points, t = 60.431, df = 44, p < .001), while the experimental group (new question practice) achieved an even larger gain (12.98 points, t = 103.755, df = 44, p < .001). Post-test comparisons, corrected for unequal variances, further confirmed the experimental group’s superior performance (t = −4.162, df = 78.55, p < .001), with a large effect size (Cohen’s d = 0.877) indicating practical significance. Second, the strategies exhibited distinct strengths in skill development. Old question review was more effective for consolidating foundational skills, as evidenced by the control group’s 28% improvement in fixed-sentence translation, which reflects mastery of basic grammar and vocabulary. In contrast, new question practice excelled in fostering knowledge expansion and translation flexibility, driving a 35% improvement in paragraph translation and higher scores in unfamiliar sight translation tasks (16.8 ± 2.1 vs. 14.2 ± 2.5) for the experimental group. This differentiation supports the need for targeted strategy selection based on specific learning goals. Third, the findings inform an evidence-based, stage-specific pedagogical approach. Given that new question practice yielded 1.26 times the improvement of old question review (12.98 vs. 10.31 points), we recommend a 16-week schedule that balances the two strategies: 70% new questions in Weeks 1 to 4 (foundation building), a 50/50 mix in Weeks 5 to 8 (competence expansion), 70% old questions in Weeks 9 to 12 (consolidation), and a 40/60 mix of new and old questions in Weeks 13 to 16 (final review). For students with weaker English or translation foundations, increasing old question review to 60% in the initial phase is advised to ensure foundational mastery before introducing more challenging new content.

Limitations to Generalizability

The generalizability of the findings is constrained by three key factors related to the sample and context. First, participants were second-year Chinese university students with homogeneous English proficiency and an exam-oriented learning focus. In settings with native English speakers or non-exam curricula—such as literary translation programs, where nuanced expression and cultural adaptation are prioritized—old question review may play a more valuable role, as repeated revision of complex passages could refine stylistic precision.

Second, the study focused on English-Chinese translation, a language pair with distinct structural differences (e.g., subject-verb-object vs. topic-comment word order, and culture-specific idioms). These differences may have amplified the “challenge effect” of new questions, as students had to navigate greater linguistic distance. Whether the results apply to more structurally similar language pairs (e.g., English-French) remains untested.

Third, the study was conducted in a single university, limiting variability in teaching context (e.g., class size, instructor experience). Cross-institutional replications would help validate the robustness of the findings.

Another limitation is the inability to fully control for unmeasured confounding variables, including students’ prior exposure to translation strategies and external study habits.

Limitations and Future Research Directions

Despite its contributions, this study has several limitations. First, the sample was limited to 90 sophomore students from a single Chinese university with homogeneous English proficiency. This limits generalizability to students with lower or higher proficiency or those from different educational backgrounds (e.g., liberal arts vs. science majors). Second, the study focused on English-Chinese translation, a language pair with distinct structural differences. Whether the findings apply to structurally similar language pairs (e.g., English-French) or to other non-Indo-European languages requires further testing. Third, the study measured short-term effects (16 weeks) but did not assess long-term retention, which is critical for translation skills that require sustained mastery. Fourth, we did not measure students’ cognitive load during strategy implementation, which could explain why new question practice was more effective for paragraph translation but less so for sentence translation. Finally, unmeasured confounding variables may have influenced results, despite baseline equivalence.

Future research should address these gaps by: (1) Extending the study to 2 to 3 semesters and including follow-up tests to measure long-term retention; (2) Designing three-group experiments (pure new, pure old, interleaved) to identify the optimal practice mix; (3) Integrating eye-tracking or think-aloud protocols to analyze cognitive processing differences—such as gaze patterns when handling new versus old translation questions—and unpack why new question practice fosters greater flexibility. Additionally, cross-cultural and cross-linguistic studies would help determine the generalizability of the proposed teaching schedule.

Overall, this study contributes to translation education research by demonstrating the differential benefits of new and old question practice and providing actionable teaching guidelines. Future work should explore long-term retention of skills and the potential of interleaved practice to optimize strategy combinations, further refining evidence-based translation pedagogy.

Supplemental Material

Supplemental material, sj-docx-1-sgo-10.1177_21582440261423827 for The Effects of Old and New Test Question Strategies on Student Academic Performance in Chinese-English Translation Classes by Yinjia Wan and Jian Lian in SAGE Open

Footnotes

Acknowledgements

We appreciate the work of the editors and reviewers.

Ethical Considerations

This study was approved by the Ethics Committee of Shandong Management University.

Consent to Participate

Written informed consent was obtained from all participants.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

The dataset used in this study can be provided by the corresponding author upon reasonable request.

Supplemental Material

Supplemental material for this article is available online.

References
