Abstract

Peer assessment has gained increasing attention as an effective instructional approach that enhances students’ learning competence. However, its effectiveness largely depends on the quality of peer feedback. To enhance the quality of feedback, it is essential to develop methodological tools that can hierarchically evaluate the different levels of feedback produced by students. This study developed a coding framework for analyzing peer feedback quality and applied it to authentic data from two rounds of peer assessment conducted in high school science classes to both validate the framework and explore students’ feedback patterns. A total of 1,960 peer feedback comments were collected from 49 male 11th-grade students. A seven-level peer feedback coding framework was constructed based on prior literature, and its hierarchical structure was validated through Rasch analysis. Using the same dataset, the distribution, consistency, and changes in student feedback were also examined. The results confirmed the statistical validity of the hierarchical structure. Students most frequently provided low-level feedback that merely verified the appropriateness of responses, while high-level feedback that identified errors or suggested improvements was relatively rare. Student feedback also showed consistency both within items and across the overall assessment, but an overall decline in feedback quality was observed in the second round. These findings demonstrate that the developed framework can serve as a reliable tool for diagnosing and evaluating students’ feedback competence. Moreover, the study highlights the need for structured instructional support to promote high-quality feedback and provides practical implications for designing and evaluating peer assessment–based instructional interventions.

Keywords

Introduction

Traditionally, assessment has been regarded as the exclusive responsibility of teachers, who have played a central role in evaluating student performance, assigning grades, and determining the direction of instruction. In this process, learners have typically been positioned as passive recipients of assessment, rather than as active participants. However, with the growing emphasis on learner-centered education, increasing attention has been paid to the importance of student agency in the assessment process (Tractenberg, 2021; Tzenios, 2022). Furthermore, there has been a shift in perspective that recognizes assessment not merely as a tool for measuring learning outcomes, but as a key pedagogical practice that supports and promotes learning (Black & Wiliam, 1998; Black & Wiliam, 2018). This evolving understanding has led to a reconceptualization of the nature and role of assessment in education. In this context, the significance of formative assessment, or assessment for learning, has been widely acknowledged, as it emphasizes the role of assessment in facilitating learning and fostering student growth. As learner-centered approaches and broader conceptions of assessment continue to gain prominence, peer assessment—in which students take an active role in evaluating one another’s work—has emerged as an effective means of implementing student-centered assessment practices (Dolezal et al., 2018; Ibarra-Sáiz et al., 2020).

Peer assessment refers to an activity in which learners of equal status evaluate one another’s work or learning processes by assigning scores and providing feedback (Topping, 1998; Wu & Schunn, 2021). This practice moves away from traditional teacher-led assessment by actively involving students in the evaluation process, with the goal of enhancing learning (Ibarra-Sáiz et al., 2020). Peer assessment has generally been reported to have a positive impact on student learning (H. Li et al., 2020; Yan et al., 2022). The process of peer assessment provides opportunities to foster critical thinking skills in both assessors and recipients (Jiang et al., 2023; F. Y. Yu & Wu, 2013; Zeng & Ravindran, 2025). As assessors, students are required to analyze their peers’ work and make logical judgments about its strengths and weaknesses, while as recipients, they must critically evaluate the feedback they receive and determine whether and how to integrate it into their own learning. Through this process, both assessors and recipients can broaden their thinking, which can in turn lead to the generation of new ideas and the enhancement of creativity (Maybee et al., 2025; Zhang et al., 2021). Moreover, peer assessment can also contribute to enhancing students’ metacognitive awareness by encouraging them to reflect on their own learning and monitor their learning processes more effectively (Ebrahimi et al., 2021; Loureiro & Gomes, 2023).

However, the effectiveness of peer assessment largely depends on the quality of the feedback provided, as feedback can have both positive and negative effects on learning (Hattie & Timperley, 2007; Wu & Schunn, 2021). While some feedback offers learners valuable information that guides the improvement of their own work, other feedback may be vague, inappropriate, or overly superficial; such feedback may fail to contribute meaningfully to student development, may be disregarded, or may even hinder the learning process (Bader et al., 2019; S. Yu et al., 2019). This issue is particularly pronounced given that the feedback providers are not expert teachers but students with limited experience. In fact, students often have little prior experience with structured feedback, and providing feedback to peers can be a novel and unfamiliar task. Students face significant cognitive challenges when providing feedback (S. Yu, 2021; Zhan, 2024), and many researchers agree that peer assessment requires adequate training to be effective (Fleckney et al., 2025; H. Li et al., 2020; Q. Lu et al., 2021; Wei & Liu, 2024).

To maximize the positive learning effects of peer assessment, a strategic approach that focuses on enhancing the quality of feedback is required. Research on feedback has significantly advanced our understanding of the mechanisms through which feedback promotes learning by identifying the conditions for effective feedback and clarifying the importance of specific characteristics (Hattie & Timperley, 2007; Nelson & Schunn, 2009; Shute, 2008). These studies have provided a strong theoretical foundation for feedback research and have informed various intervention studies aimed at improving the quality of student feedback in educational settings (Alqassab et al., 2018; Gan & Hattie, 2014; Latifi et al., 2023; Panadero et al., 2013). At the same time, however, much of this prior work has either remained at the level of theoretical discussion or concentrated primarily on individual characteristics of effective feedback. As a result, comparatively less attention has been devoted to systematically examining the diverse forms of feedback that students actually produce in peer assessment contexts.

To enhance the quality of feedback provided by students in peer assessment, it is necessary to conduct a detailed analysis of the feedback that students actually generate in such contexts and to identify how it diverges from ideal forms of feedback, so that appropriate support can be provided. In other words, the starting point for improving peer feedback is to identify the characteristics of student-generated feedback and to evaluate its quality. To this end, it is essential to develop and validate methodological tools for quantitatively analyzing the levels of peer feedback. Such tools can help objectively capture the characteristics of student feedback and support learners in delivering more effective feedback, thereby contributing to establishing peer assessment as a meaningful learning experience. A clearer understanding of how students provide feedback in authentic peer assessment contexts is also crucial for identifying appropriate ways to support and advance peer assessment practices. Accordingly, the present study aims to establish a systematic method for analyzing the quality of peer feedback. For this purpose, a coding framework was developed to classify and quantify the types and levels of peer feedback, and its validity was examined using data collected in real educational settings. The study addresses the following research questions:

Literature Review

Shifts in Feedback Perspectives and Peer Assessment

Feedback refers to information provided to learners about their performance or understanding by various agents such as teachers, peers, or the learners themselves, and it exerts a powerful influence on learning and achievement (Hattie & Timperley, 2007). As a core component of formative assessment, feedback helps learners recognize their current level of performance and guides them toward the next stage of learning (Black & Wiliam, 1998).

In recent years, the concept of feedback literacy has reconceptualized feedback not as a one-way transmission from teacher to student but as an active process in which learners interpret and utilize feedback to regulate their own learning (Carless & Boud, 2018). Within this perspective, peer assessment has emerged as a valuable learning activity that promotes the development of students’ feedback literacy. In peer assessment, students simultaneously take on the roles of both feedback receivers and providers, engaging more deeply in the evaluative process than when they merely receive feedback, thereby gaining a variety of learning benefits (Reinholz, 2016).

Effects and Challenges of Peer Assessment

Specifically, by receiving feedback from peers, learners become aware of perspectives different from their own, identify weaknesses in their understanding or performance, and gain opportunities for improvement, while by providing feedback, they actively engage in critical thinking, interpretation and application of evaluation criteria, reflection, and learning transfer (D. Nicol et al., 2014). In addition, peer assessment is inherently a social activity (Van Gennip et al., 2010) that contributes to the development of social and communication skills, negotiation and diplomatic abilities, and teamwork, which can be applied in various contexts (Topping, 2009). In this sense, peer assessment serves as an effective learning activity that helps students develop key competencies required for lifelong learning.

However, without sufficient training and guidance, the learning benefits of peer assessment mentioned above are unlikely to materialize. For most students, peer assessment is an unfamiliar learning activity, and they often face considerable cognitive challenges during participation. In particular, students may not fully understand how to provide effective feedback or recognize its importance, which can lower the quality of feedback and limit the educational value of peer assessment (Bader et al., 2019; He & Gao, 2023). Therefore, to implement peer assessment effectively, it is essential to provide students with adequate training and establish appropriate scaffolding to support the feedback process (Wanner & Palmer, 2018).

Existing Coding Frameworks and Measurement Gaps

The quality of feedback provided by students is a key factor that determines the learning effectiveness of peer assessment (Rotsaert et al., 2018; Zong et al., 2021). Therefore, it is essential to systematically evaluate the quality of student feedback. Previous studies on peer feedback have developed coding frameworks based on the core characteristics of effective feedback to assess feedback quality (Hovardas et al., 2014; Nelson & Schunn, 2009; Patchan & Schunn, 2015; Wu & Schunn, 2020). However, these frameworks have not sufficiently captured the heterogeneity and diversity of less effective feedback observed in authentic classroom contexts, and there has been limited empirical validation of whether different types of feedback form a hierarchical structure. As a result, there remains a lack of concise and valid measurement criteria for determining where an individual student’s feedback lies along the continuum of quality.

Instructional Interventions for Enhancing Feedback Quality

Various instructional approaches have been proposed to improve the quality of feedback that students provide during peer assessment. Representative examples include peer feedback training (H. Li et al., 2020; Sippel, 2024) and feedback scripting (Gan & Hattie, 2014; Latifi et al., 2023). Peer feedback training refers to an instructional approach in which teachers systematically guide students on the procedures of peer assessment and on how to compose effective feedback. Feedback scripting, on the other hand, provides students with sentence stems or structural prompts that they can use when writing feedback, enabling them to produce more specific and organized comments. The shared goal of these approaches is to help students understand the requirements of peer assessment tasks and to support them in generating effective feedback.

However, such instructional approaches generally adopt a “template-first” orientation, in which students are guided to produce feedback using checklists or sentence stems derived from the general characteristics of effective feedback. While this approach is useful for establishing minimum standards, it has limitations in diagnosing each student’s current level of feedback competence and determining what kinds of support are needed for further development. For instance, some students may need greater support in diagnosing errors, whereas others may require guidance in providing evidence or suggesting actionable solutions. Yet diagnostic mechanisms capable of systematically identifying and addressing these differentiated needs remain underdeveloped. This gap between diagnosis and prescription constrains the design of scaffolding tailored to individual students’ feedback competence and often results in uniform and superficial feedback instruction in classroom practice.

Research Gap and Perspective of the Present Study

Previous studies have identified the educational benefits of peer assessment and proposed various instructional interventions to enhance the quality of student feedback, which remains a central challenge in peer assessment. However, most prior research has focused on the core characteristics of effective feedback, paying relatively little attention to the diversity and qualitative variation of less effective feedback produced by students in authentic classroom contexts. Furthermore, existing feedback interventions have primarily emphasized uniform standards or templates rather than diagnosing learners’ current levels of feedback competence. Consequently, these approaches provide limited insight into how individual students’ feedback skills can be systematically assessed or progressively supported through appropriate scaffolding.

This study seeks to address these limitations by developing a coding framework that enables the hierarchical analysis of the qualitative levels of various types of feedback generated by students. Through this framework, the study aims to establish a methodological foundation for diagnosing learners’ feedback performance and to provide practical implications for designing level-specific feedback instruction and tailored pedagogical interventions.

Methods

Development of a Coding Framework

Drawing on previous research on feedback, a coding framework was developed and refined to systematically analyze the types and levels of peer feedback.

First, the types of peer feedback were classified based on their content. To establish this classification, the peer feedback typology proposed by Seo and Jeon (2012) was consulted (Table 1). Seo and Jeon (2012) presented a framework that clearly categorized peer feedback into distinct types and analyzed the validity of feedback content by examining whether each type of feedback was appropriate and meaningful for task performance. While much of the existing literature has emphasized the presence or absence of individual elements of effective feedback, their typology enables classification based on the overall nature of feedback. This feature was particularly useful for the present study, as it allowed us to capture not only elaborated forms of feedback but also simpler or more superficial comments such as general impressions and basic judgments of correctness, thereby covering the full range of feedback produced by students. Thus, this typology provided an appropriate foundation for classifying student feedback.

However, new patterns of feedback were observed in students’ actual responses that were not adequately captured by the original categories. To address this, additional categories were introduced. Furthermore, since the purpose of this study was to examine the qualitative levels of feedback, the categories were revised to align with the analytical goals. As a result, the classification system was expanded to allow for finer-grained analysis of diverse feedback content.

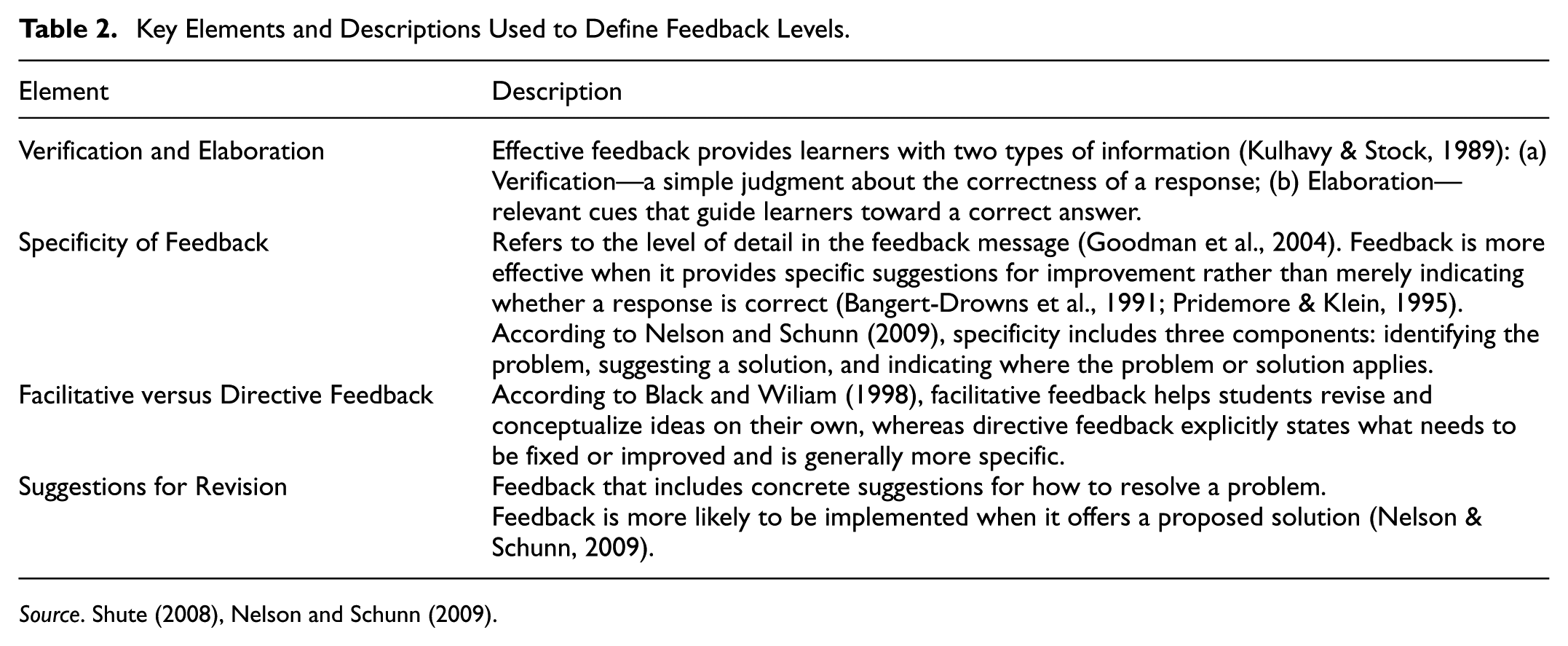

The purpose of this study was not only to classify student feedback into distinct types but also to develop a hierarchical framework that differentiates feedback from lower-level to higher-level forms. Seo and Jeon (2012) examined the validity of each feedback type by assessing its appropriateness for task performance, and Seo (2012) investigated the extent to which such feedback was accepted by recipients. However, these studies did not provide a theoretical account of which characteristics make certain feedback more sophisticated and educationally desirable. Building on their typology, the present study drew on Shute (2008), who synthesized key characteristics of effective feedback, and Nelson and Schunn (2009), who empirically demonstrated how particular features of feedback facilitate understanding and enactment, to establish feedback levels. These two studies are widely cited in the feedback literature and provide complementary theoretical and empirical foundations for constructing a hierarchical classification of feedback according to both its content and its level of elaboration. Based on these insights, a hierarchical framework was designed to capture how student feedback progresses from basic responses to increasingly specific and elaborated forms. Table 2 summarizes the key elements used to define the levels of peer feedback.

Table 2. Key Elements and Descriptions Used to Define Feedback Levels.

Finally, the coding framework was developed by combining two criteria—types and levels of feedback—and its validity was evaluated by experts in science education and assessment, as well as by practicing teachers.

Implementation of Peer Assessment and Feedback Data Collection

To validate the coding framework developed in this study and to analyze students’ feedback patterns, peer assessment was implemented. The participants were 49 male 11th-grade students from a science-specialized high school in Gangwon Province, South Korea. After completing the first year of high school, they applied for and were admitted to the science-specialized track, indicating a strong interest in science. In their first year of high school, they had completed basic courses in physics, chemistry, biology, and earth science, but they had no prior experience with peer assessment. The sample was obtained through convenience sampling.

This study analyzed peer feedback data generated through an educational program implemented at the request of the school as part of regular instructional activities; the program was one of a range of science-related programs that the school offers. The data were anonymized before being provided to the researchers, so the researchers had no access to personally identifiable information, which minimized any potential risk to participants. The researchers did not introduce any additional intervention beyond the educational program itself.

As a result, the study posed no more than minimal risk to participants and was expected to contribute to improved understanding and practice related to peer feedback in educational contexts. Participants were informed in advance that their feedback data might be used for research purposes, and participation was voluntary and could be declined at any time without any negative consequences. Because the study involved only normal educational practices and posed no more than minimal risk, informed consent from individual participants was waived in accordance with Section 8.05 of the APA Ethical Principles of Psychologists and Code of Conduct.

The procedure followed the steps illustrated in Figure 1. First, a scientific reasoning test was developed to serve as the basis for the peer assessment task. After student responses were collected, the peer assessment activity was conducted using the PEER system. To explore potential changes in feedback patterns, a second round of peer assessment was carried out 5 weeks later using a different scientific reasoning task and the same procedure.

Figure 1. Overview of the data collection and analysis process.

Development of the Scientific Reasoning Test

Before implementing peer assessment, a scientific reasoning test was developed to provide students with a basis for generating responses to be evaluated. The test was designed to assess students’ scientific inquiry skills, such as hypothesis formulation and experimental design. Two versions of the test, Form A and Form B, were created, each consisting of four items. For each version, a model answer and an open-ended rubric were developed to support the evaluation of student responses. Figure 2 presents an example item from the scientific reasoning test (Item 2 from Form A), and Figure 3 shows the corresponding model answer and evaluation rubric. All other test items, along with their model answers and evaluation rubrics, are provided in Appendix A.

Figure 2. Question 2 from Form A of the scientific reasoning test.

Figure 3. Model answer and evaluation rubric for question 2 from Form A.

The open-ended rubric was designed as an evaluation tool applicable across various item types. Rather than assigning scores solely based on the presence or absence of specific content, the rubric emphasizes a holistic evaluation of the overall structure and validity of student responses. Excessively detailed scoring criteria may lead students to produce formulaic feedback during peer assessment. Therefore, to allow for a wider range of feedback expressions and to analyze diverse feedback patterns, this study adopted an open-ended rubric. The rubric was developed to assess the validity of students’ conclusions and the reasoning provided to support them.

Collection of Student Responses

The first round of peer assessment was conducted using Form A of the scientific reasoning test. Students submitted their answers and justifications for each item via Google Forms. The collected data were compiled and stored in Excel for subsequent analysis. Students submitted their responses using student ID numbers, which were subsequently replaced with unique identification codes. All data were anonymized for analysis, and no personally identifiable information was retained.
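The replacement of student ID numbers with identification codes can be illustrated with a minimal sketch. The source does not describe the actual procedure or code format, so the function names, the `student_id` field, and the `S001`-style codes below are all hypothetical.

```python
import secrets


def build_code_map(student_ids, prefix="S"):
    """Map each student ID to a unique code that does not reveal the ID.

    The code format (prefix plus a three-digit number) is an assumption
    made for illustration only.
    """
    unique_ids = sorted(set(student_ids))
    numbers = list(range(1, len(unique_ids) + 1))
    # Shuffle so that code order carries no information about ID order.
    secrets.SystemRandom().shuffle(numbers)
    return {sid: f"{prefix}{n:03d}" for sid, n in zip(unique_ids, numbers)}


def anonymize(rows, code_map):
    """Return copies of the rows with 'student_id' replaced by 'code'."""
    anonymized = []
    for row in rows:
        new_row = {k: v for k, v in row.items() if k != "student_id"}
        new_row["code"] = code_map[row["student_id"]]
        anonymized.append(new_row)
    return anonymized
```

Keeping the mapping separate from the anonymized rows means the analysis file itself holds no identifiable information.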

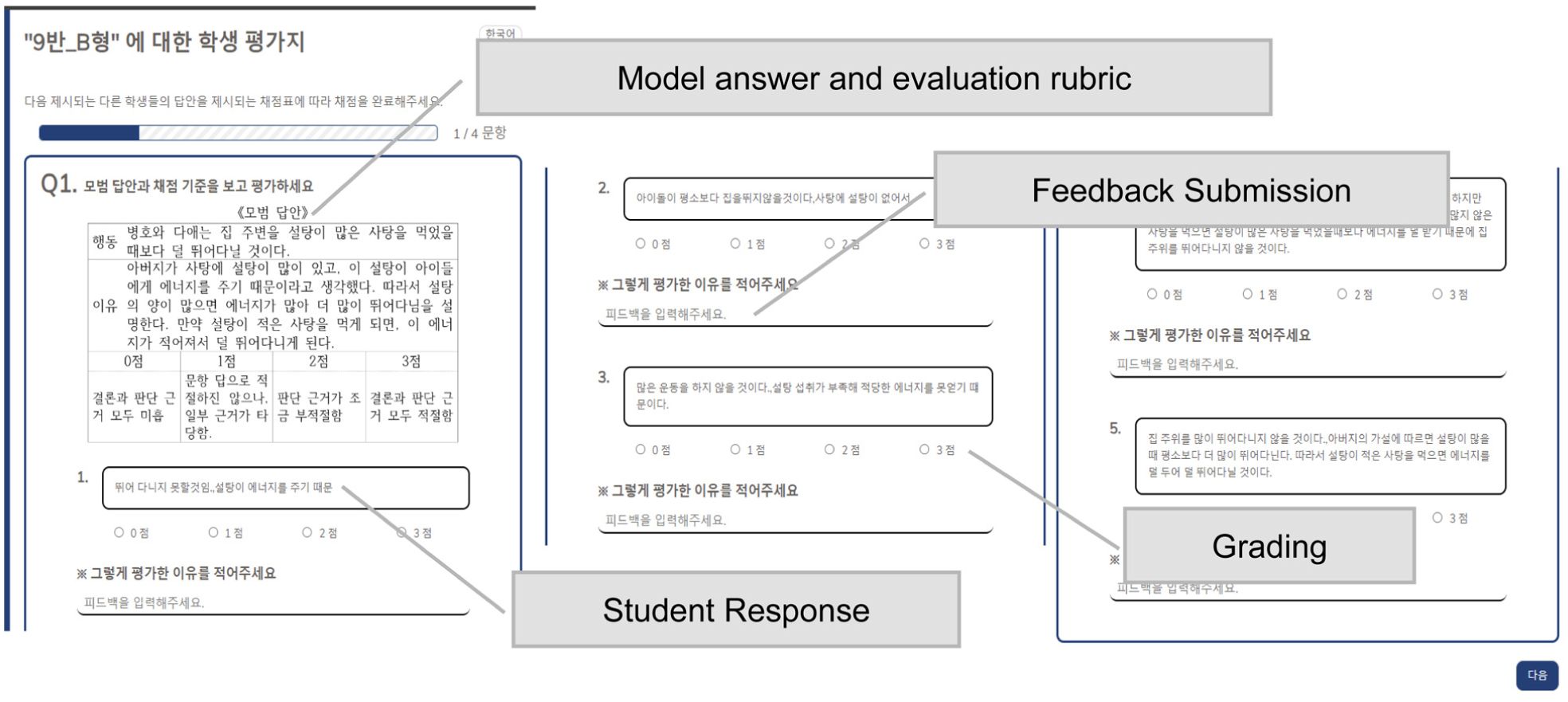

Implementation of Peer Assessment Using the PEER System

Following the collection of student responses, peer assessment was conducted using the PEER (Peer Evaluation Encouraging Reflection) system, which is designed to facilitate peer assessment efficiently in online environments. The system was used to generate peer assessment forms and to provide students with individualized links for accessing the assessments (Figure 4).

Figure 4. Screenshot of the peer assessment interface in the PEER system.

Each student evaluated and provided written feedback on the responses of five randomly assigned peers for each item, meaning that each response was reviewed by five different reviewers. The evaluation was conducted using a 4-point scale (0–3), based on the provided model answers and the open-ended rubric. Respondents and evaluators were anonymized, so students did not know whose responses they were evaluating or who had provided feedback on their own work. As with the collection of responses, all peer assessment results were anonymized and compiled in Excel. Individual files containing the average score per item and peer feedback were distributed to students so they could review the results of their evaluations. No specific training was provided on how to give feedback or what constitutes effective feedback. This decision was made to observe how students naturally engaged in peer assessment and the range of feedback levels they produced in an authentic setting.
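The source does not describe how the PEER system draws its random assignments. The following sketch shows one hypothetical scheme that satisfies the stated constraints: each student reviews five peers, each response receives five reviews, and no student reviews their own response. The function name and the ring-based approach are assumptions, not the system's documented behavior.

```python
import random


def assign_reviewers(student_codes, k=5, seed=None):
    """Assign each student k peers to review using a shuffled ring.

    student_codes must be unique. Returns a dict mapping each reviewer
    to the list of peers whose responses they review.
    """
    n = len(student_codes)
    if not 0 < k < n:
        raise ValueError("k must be between 1 and n - 1")
    ring = list(student_codes)
    random.Random(seed).shuffle(ring)
    # The student at position i reviews the next k students around the
    # ring, so nobody reviews themselves and every response is reviewed
    # by exactly k different students.
    return {
        ring[i]: [ring[(i + offset) % n] for offset in range(1, k + 1)]
        for i in range(n)
    }
```

Calling the function once per item with a different seed would give a fresh random assignment for each item, as the procedure above implies.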

Implementation of the Second Peer Assessment

The second round of peer assessment was conducted 5 weeks after the initial implementation. Applying the same procedure twice was intended to observe whether students’ prior experience with the peer assessment process influenced their feedback patterns. The 5-week interval between the two rounds of testing was determined by practical constraints associated with the school’s academic schedule, including the timing of examinations. In addition, while this study was intended to explore the effect of repeated tasks, only two rounds of peer assessment could be implemented due to the same constraints, which should be acknowledged as a limitation. The second round followed the same procedure as the first, using Form B of the scientific reasoning test.
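The reported total of 1,960 feedback comments is consistent with the design described above, assuming that every student completed every assigned review in both rounds. The short check below makes the arithmetic explicit; the function name is illustrative.

```python
def expected_comment_count(students, peers_per_item, items_per_form, rounds):
    """Number of comments if every assigned review is completed."""
    return students * peers_per_item * items_per_form * rounds


total = expected_comment_count(
    students=49,        # participants
    peers_per_item=5,   # peers reviewed per item
    items_per_form=4,   # items in Form A and in Form B
    rounds=2,           # first and second peer assessment
)
print(total)  # 1960
```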

Data Analysis

All feedback data were coded by two researchers using the developed coding framework. Inter-rater reliability was assessed using Cohen’s kappa, yielding substantial agreement (κ = .804) according to Landis and Koch’s (1977) benchmarks. Any discrepancies during the coding process were resolved through discussion. In addition, the number of characters in each feedback comment was analyzed as a supplementary indicator of feedback quality. This approach was based on the assumption that longer feedback tends to include more useful information and may be more beneficial than shorter comments (Patchan et al., 2018). However, longer comments do not necessarily guarantee usefulness, as they may contain redundant or overly elaborate content (Patchan et al., 2018). Therefore, the overall quality of feedback was evaluated by considering both the content-based coding results and the length of the comments.
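For readers unfamiliar with the statistic, the sketch below shows how unweighted Cohen's kappa can be computed from two coders' level assignments, together with a simple character count. The example codes are invented for illustration and are not data from this study. How characters were counted in the study (for example, whether whitespace was included) is not stated, so `comment_length` reflects one assumed convention.

```python
from collections import Counter


def cohens_kappa(codes_a, codes_b):
    """Unweighted Cohen's kappa for two raters coding the same comments."""
    if len(codes_a) != len(codes_b) or not codes_a:
        raise ValueError("both raters must code the same non-empty set")
    n = len(codes_a)
    # Observed proportion of comments on which the raters agree.
    observed = sum(a == b for a, b in zip(codes_a, codes_b)) / n
    # Agreement expected by chance from each rater's marginal frequencies.
    freq_a, freq_b = Counter(codes_a), Counter(codes_b)
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if expected == 1.0:
        raise ValueError("kappa is undefined when chance agreement is 1")
    return (observed - expected) / (1 - expected)


def comment_length(comment):
    """Character count, ignoring leading and trailing whitespace (assumed)."""
    return len(comment.strip())


# Invented example: two raters disagree on one of ten comments.
rater_a = [0, 1, 2, 2, 3, 4, 5, 6, 2, 2]
rater_b = [0, 1, 2, 2, 3, 4, 5, 6, 2, 3]
print(round(cohens_kappa(rater_a, rater_b), 3))  # 0.877
```

Because the feedback levels are ordered, a weighted kappa would also be a defensible choice; the unweighted form is shown here only because the text reports Cohen's kappa without further qualification.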

Based on the results of the feedback type classification, the following analyses were conducted. First, Rasch analysis was carried out to examine the validity of the developed coding framework, specifically focusing on the categorization of feedback types and the hierarchical structure that reflects increasing levels of sophistication along a learning progression. Item fit was evaluated using Infit MNSQ and Outfit MNSQ statistics, with acceptable values defined between 0.5 and 1.5 (Boone et al., 2014). The reliability of the measurement tool was assessed using person reliability and item reliability indices. Internal consistency was examined using Cronbach’s alpha (α). All Rasch analyses were performed using Winsteps version 4.1.0.
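To make the fit criteria concrete, the sketch below computes Infit and Outfit MNSQ for a single item under the dichotomous Rasch model and checks them against the 0.5 to 1.5 range. This is a simplification for illustration: the analyses in this study were run in Winsteps, and the model specification used there is not reproduced here. All function names are hypothetical.

```python
import math


def rasch_probability(theta, b):
    """Probability of a positive response for ability theta, difficulty b."""
    return 1.0 / (1.0 + math.exp(-(theta - b)))


def item_fit(responses, thetas, b):
    """Return (infit, outfit) mean-square statistics for one item.

    responses: 0/1 observations, one per person
    thetas:    person measures in logits, same order as responses
    b:         item difficulty in logits
    """
    resid_sq_sum = 0.0   # sum of squared raw residuals
    variance_sum = 0.0   # sum of model variances
    z_sq_sum = 0.0       # sum of squared standardized residuals
    for x, theta in zip(responses, thetas):
        p = rasch_probability(theta, b)
        variance = p * (1.0 - p)
        resid_sq = (x - p) ** 2
        resid_sq_sum += resid_sq
        variance_sum += variance
        z_sq_sum += resid_sq / variance
    infit = resid_sq_sum / variance_sum    # information-weighted
    outfit = z_sq_sum / len(responses)     # unweighted, outlier-sensitive
    return infit, outfit


def within_fit_range(value, lower=0.5, upper=1.5):
    """Check a mean-square value against the acceptable range."""
    return lower <= value <= upper
```

Values near 1.0 indicate that observed responses vary about as much as the model predicts, which is why the acceptable range is centered on 1.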

Second, the distribution patterns of feedback types were examined to identify the overall tendencies in the peer feedback. Third, correlations of feedback types across items and of comment lengths across items were analyzed to assess the consistency of students’ feedback. All correlation analyses were conducted using SPSS 30. Finally, to explore the influence of students’ prior feedback experience on their feedback patterns, differences in feedback types and comment length between the first and second rounds of peer assessment were compared.
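The distribution and consistency analyses can be sketched as follows. The source does not state which correlation coefficient was computed in SPSS, so the use of Pearson's r here is an assumption; a rank-based coefficient would be a reasonable alternative for ordinal feedback levels. The function names are illustrative.

```python
import math
from collections import Counter


def level_distribution(levels, n_levels=7):
    """Proportion of comments coded at each level from 0 to n_levels - 1."""
    if not levels:
        raise ValueError("no coded comments supplied")
    counts = Counter(levels)
    total = len(levels)
    return {lvl: counts.get(lvl, 0) / total for lvl in range(n_levels)}


def pearson_r(xs, ys):
    """Pearson correlation between two equally long numeric sequences."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sd_x = math.sqrt(sum((x - mean_x) ** 2 for x in xs))
    sd_y = math.sqrt(sum((y - mean_y) ** 2 for y in ys))
    if sd_x == 0 or sd_y == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / (sd_x * sd_y)
```

For the consistency analysis, `xs` and `ys` would hold each student's mean feedback level (or mean comment length) on two different items, with students in the same order in both sequences.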

Results and Discussion

Development of a Coding Framework for Analyzing Peer Feedback

The coding framework for analyzing peer feedback developed in this study is presented in Table 3. Based on the typology proposed by Seo and Jeon (2012), three additional types were added—Irrelevant Feedback, Correctness-Focused Feedback, and Rubric-Based Feedback—while the original category of Question Feedback was excluded. The introduction of these new categories was necessary to capture feedback patterns that frequently appeared in students’ actual responses but were not sufficiently accounted for by the original typology. Because the framework of Seo and Jeon (2012) was developed in the context of writing tasks, it required modification to better reflect typical assessment contexts where correct answers are present. Moreover, in peer assessments of tasks with definitive answers, model answers and rubrics are generally provided, which give rise to additional feedback patterns that also needed to be considered. These modifications enabled the framework to more comprehensively capture the variety of feedback likely to occur in peer assessment. Question Feedback, by contrast, varied widely in its level depending on content and, in most cases, could be recoded into other categories. Therefore, this category was removed in order to establish a clearer hierarchy of feedback levels. Finally, the feedback level framework was designed to reflect key characteristics of effective feedback, as discussed by Shute (2008) and Nelson and Schunn (2009). It captures the progression of student feedback from basic responses to more elaborated and sophisticated forms.

Table 3. A 7-Level Coding Framework for Analyzing Peer Feedback.

Level 0, Irrelevant Feedback, includes comments that are unrelated to the peer’s response and lack both verification and elaboration, making them unlikely to contribute meaningfully to learning. Level 1, General Impression Feedback, expresses the reviewer’s subjective impressions or vague opinions about the response. While it may include a minimal element of verification, such feedback generally lacks persuasiveness and is unlikely to offer meaningful information to the recipient. Level 2, Correctness-Focused Feedback, involves simple judgments about whether the response is right or wrong. From this level, verification begins to appear in a meaningful form. However, neither Level 1 nor Level 2 includes elaboration, and for this reason, they are categorized as lower levels of feedback.

Beginning with Level 3, Rubric-Based Feedback, feedback comments go beyond simple verification and include elaborative elements that provide more concrete information for improving the peer’s answer. This type of feedback evaluates the appropriateness of the peer’s conclusion and supporting reasoning based on the rubric criteria, offering cues for improvement rather than merely judging the correctness of the response. Previous research has shown that providing rubrics is effective in peer assessment practices (D. J. Nicol & Macfarlane-Dick, 2006; Panadero et al., 2013) and has emphasized that explicit reference to rubrics or standards is a key characteristic of effective feedback (Hattie & Timperley, 2007; Steiss et al., 2024). However, because Rubric-Based Feedback does not explicitly suggest how to revise or supplement the answer, it can be characterized as facilitative rather than directive.

Rubric-Based Feedback evaluates a peer’s response and suggests general directions for improvement; however, it does not explicitly identify specific errors or offer concrete revision strategies. For instance, feedback such as “the reasoning is insufficient” does not clarify which part of the response lacks reasoning, making it difficult for the recipient to determine exactly what to revise and how. When such specific information is lacking, feedback is less likely to be accepted or lead to meaningful revision. Therefore, for peer feedback to be used effectively, it is important to clearly identify areas in need of improvement and provide more detailed guidance on how to revise them.

From Level 4, Error Identification Feedback, onward, feedback is classified as directive in nature. Error Identification Feedback points out specific errors or areas that need revision, helping peers recognize deficiencies in their responses and encouraging them to make corrections. However, this type of feedback typically stops at identifying the problem and does not provide explanations as to why the issue is incorrect or why revision is needed. As a result, although the recipient may become aware of the error, they may struggle to determine how to revise it or feel less compelled to act on the feedback.

Peer feedback is a process in which the assessor explains the reasoning behind their judgment of a peer’s response and provides justification for its validity. Feedback does not become effective simply by being delivered. Feedback becomes effective only when it is understood and internalized by the recipient, thereby enabling productive improvement (D. J. Nicol & Macfarlane-Dick, 2006). Thus, feedback should be viewed as a persuasive process that logically explains why the recipient should accept it. To ensure effective feedback, the reasons for the evaluation must be clearly communicated so that the recipient can understand and meaningfully engage with the feedback. Level 5, Commentary Feedback, involves identifying specific strengths or weaknesses in the peer’s response, explaining the rationale behind those judgments, and presenting the reviewer’s perspective in a logical and structured manner. This type of feedback is generally regarded as more elaborate and persuasive than lower-level feedback.

Revision is considered one of the most challenging and least frequently occurring features of discourse (Berland & Reiser, 2011). In the context of peer feedback, revision refers to suggesting alternatives or offering directions for elaborating the content. While peer assessment activities typically ask students to evaluate their peers’ responses, revision may be understood as a more self-initiated and cognitively demanding process, as it involves unsolicited and elaborative contributions. Feedback that includes revision goes beyond simple error identification by providing concrete suggestions for improvement. Accordingly, Level 6, Suggestion with Alternative Feedback, is regarded as the most complex type of feedback in the framework.

The coding framework for feedback developed in this study aligns with the well-established feedback model proposed by Hattie and Timperley (2007). According to their model, effective feedback should progressively address three key questions: (a) Where am I going? (What are the goals?), (b) How am I going? (What progress is being made toward the goal?), and (c) Where to next? (What activities need to be undertaken to make better progress?). In the coding framework developed in this study, Correctness-Focused Feedback (Level 2) and Rubric-Based Feedback (Level 3) primarily address the first question by clarifying the goals of the task. Error Identification Feedback (Level 4) and Commentary Feedback (Level 5) additionally respond to the second question by diagnosing the learner’s current state and highlighting the gap between the current performance and the intended goals. Finally, Suggestion with Alternative Feedback (Level 6) extends to address the third question, offering concrete alternatives for making greater progress.

On the other hand, Hattie and Timperley (2007) note that feedback at the self or personal level rarely focuses on these three questions and is therefore unlikely to be effective. In this regard, Irrelevant Feedback (Level 0) and General Impression Feedback (Level 1) in our framework contain little task-specific information and thus cannot be considered effective feedback. Taken together, this coding framework reflects the core characteristics of effective feedback emphasized in prior theory and further contributes by differentiating feedback into a progressive hierarchy of types and levels. This enables a more fine-grained analysis of student-generated feedback.

To validate the developed coding framework, Rasch analysis was conducted using actual peer assessment data. For each of the eight items administered during the first and second rounds of peer assessment, the five peer feedback comments provided by each student were classified according to the established feedback types. The average level score of a student’s five comments per item was calculated and used in the analysis. The analysis examined whether the data fit the Rasch model framework, thereby providing evidence for the validity of the coding framework in terms of both the categorization of feedback types and the hierarchical structure that reflects increasing levels of sophistication along a learning progression.
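The scoring step described above can be sketched as follows. This is a minimal illustration, not the authors' actual procedure or software: the record layout, the identifiers (`S01`, `A1`), and the function name `average_level_per_item` are hypothetical, and the toy data are invented for demonstration.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical coded records: (student_id, item_id, level), with level in 0..6.
coded_comments = [
    ("S01", "A1", 3), ("S01", "A1", 3), ("S01", "A1", 1), ("S01", "A1", 5), ("S01", "A1", 3),
    ("S01", "A2", 1), ("S01", "A2", 1), ("S01", "A2", 2), ("S01", "A2", 1), ("S01", "A2", 1),
]


def average_level_per_item(records):
    """Return {(student_id, item_id): mean level of that student's comments on the item}."""
    grouped = defaultdict(list)
    for student_id, item_id, level in records:
        if not 0 <= level <= 6:
            raise ValueError(f"level {level} is outside the seven-level framework (0-6)")
        grouped[(student_id, item_id)].append(level)
    return {key: mean(levels) for key, levels in grouped.items()}


item_scores = average_level_per_item(coded_comments)
```

Each resulting value is the per-item score that would enter a student-by-item matrix for the Rasch analysis; how non-integer averages were handled in the model is not stated in the text and is left open here.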

The analysis showed that all item fit statistics (MnSq) fell within acceptable ranges (Infit MnSq: 0.78–1.42; Outfit MnSq: 0.82–1.41) (Table 4). Cronbach’s alpha was .80, indicating a satisfactory level of internal consistency. Person reliability and item reliability were 0.77 and 0.88, respectively, both of which are considered acceptable. These results support the statistical validity and reliability of the seven-level coding framework developed in this study for evaluating the quality of peer feedback.

Rasch item fit statistics for the 7-level peer feedback coding framework.
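For readers less familiar with the fit statistics reported above, the conventional definitions of the item-level mean-square statistics are given below. This is a general sketch under the usual Rasch notation; the text does not state which Rasch model variant or software was used, so the symbols are assumptions introduced here for illustration.

```latex
% x_{ni}: observed score of person n on item i
% E_{ni}: model-expected score; W_{ni}: model variance of x_{ni}
% N: number of persons
z_{ni} = \frac{x_{ni} - E_{ni}}{\sqrt{W_{ni}}}, \qquad
\text{Outfit MnSq}_i = \frac{1}{N}\sum_{n=1}^{N} z_{ni}^{2}, \qquad
\text{Infit MnSq}_i = \frac{\sum_{n=1}^{N} W_{ni}\, z_{ni}^{2}}{\sum_{n=1}^{N} W_{ni}}
```

Both statistics have an expected value of 1 when the data fit the model. Outfit is the unweighted mean of squared standardized residuals and is therefore more sensitive to outlying responses, whereas Infit weights each residual by its model variance.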

Results of Peer Feedback Analysis

Distribution of Peer Feedback Types

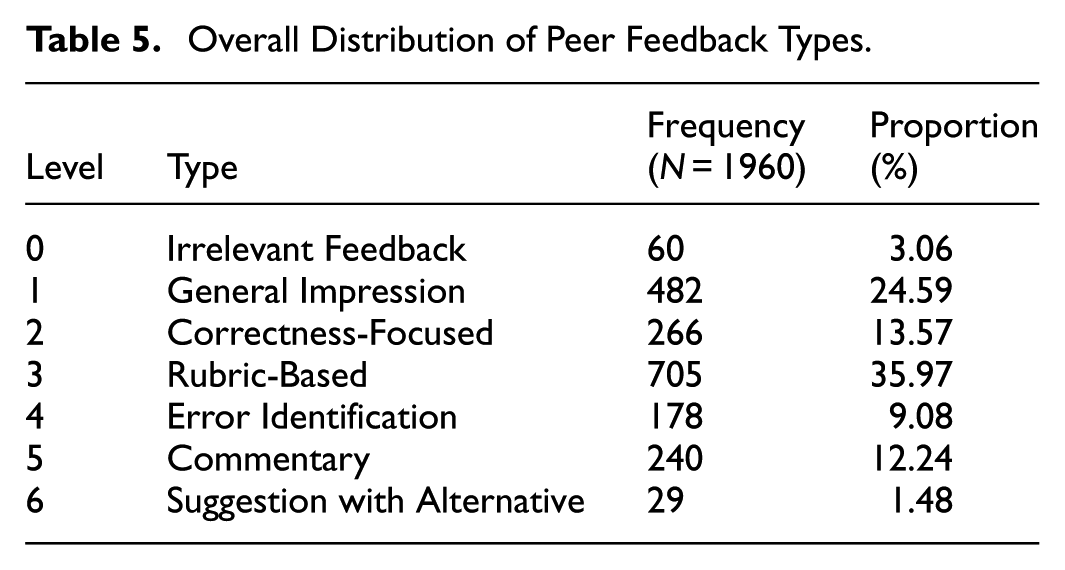

Table 5 presents the overall distribution of peer feedback types provided by students across the two rounds of peer assessment. Among all feedback types, rubric-based feedback (Level 3) constituted the largest proportion, accounting for 35.97% of the total. This type of feedback, which closely follows the evaluation rubric, may have been easier for students to produce than other types, as the rubric provided a clear direction for evaluation and likely guided students’ responses. D. J. Nicol and Macfarlane-Dick (2006) emphasized that providing rubrics helps clarify what constitutes good performance, thereby supporting desirable feedback practices. In this sense, rubrics explicitly specify task goals and the standards for judging them, effectively addressing the first key question in Hattie and Timperley’s (2007) model: “Where am I going?” The results of this study suggest that students actively relied on rubrics when composing feedback, indicating that the provision of rubrics strongly shaped the way feedback was produced. These results are also consistent with prior research reporting students’ positive perceptions of rubrics in peer assessment (Cockett & Jackson, 2018; Wang, 2014). Thus, providing rubrics can be considered a relatively straightforward but effective intervention strategy for enhancing the quality of student feedback.

Overall Distribution of Peer Feedback Types.

Following Rubric-Based feedback (Level 3), the next most frequently observed types were: General Impression (Level 1, 24.59%), Correctness-Focused (Level 2, 13.57%), Commentary (Level 5, 12.24%), Error Identification (Level 4, 9.08%), Irrelevant Feedback (Level 0, 3.06%), and Suggestion with Alternative (Level 6, 1.48%). While General Impression and Correctness-Focused feedback accounted for relatively high proportions, directive feedback that explicitly identifies areas for revision or improvement was less frequently observed.

This finding suggests that while students can relatively easily provide general impressions or simple judgments of correctness, they encounter greater difficulties when required to identify specific errors or propose directions for revision. Several factors may account for this outcome. First, from a cognitive perspective, providing higher-level feedback requires considerably more complex cognitive processing. Recording impressions or judging correctness in a peer’s response involves little cognitive load. However, identifying problems in a response, articulating the gap between current performance and the intended goals, and suggesting directions for improvement all demand substantially more sophisticated processing. Hayes et al. (1987) reported that, compared with higher-ability writers, lower-ability writers detected fewer problems, paid less attention to global issues, had fewer strategies for addressing those issues, and were less likely to choose effective strategies for revision. This aligns with findings from many studies on feedback in writing tasks, which show that students often provide feedback focused on superficial features (Seo & Jeon, 2012; S. Yu, 2021). Such evidence underscores the need for scaffolding, such as providing students with training in peer assessment or the implementation of feedback scripts. It is unrealistic to expect students to produce feedback at a teacher- or expert-like level without any support. It is therefore important to explicitly guide students in understanding what information constitutes effective feedback and in recognizing which aspects may be missing from the feedback they provide.

However, the tendency of students to provide low-level feedback cannot be explained solely by a lack of cognitive ability. Instead, motivational factors may have played a more substantial role in shaping their feedback behavior. In this study, the peer assessment activity was not reflected in students’ final grades and had no direct connection to high-stakes examinations. In line with this, Gaynor (2020) found that when peer assessment is not graded, students tend to engage with the task strategically and often demonstrate low levels of participation. Moreover, no procedures were implemented that would allow students to see how their feedback was utilized, such as opportunities to revise their work based on feedback, to evaluate the usefulness of the feedback they received, or to respond to feedback providers. As a result, students may not have perceived a compelling need to invest the cognitive effort required to produce more elaborated feedback. This issue is discussed in greater detail in the section “Comparison of Feedback Types and Comment Lengths Between the First and Second Peer Assessments.”

In addition, the cultural characteristics of the group in which this study was conducted may have influenced the observed feedback patterns. First, the East Asian educational context is strongly oriented toward examination performance, which may have led students to perceive peer assessment more as a task focused on evaluating correctness than as an interactive and developmental dialogue. In a study of Chinese students, Zhan (2019) also reported that participants tended to view feedback as a conventional procedure rather than as an opportunity for improvement, and recognized its evaluative and encouraging functions more than its developmental ones. This suggests that, being accustomed to learning environments that emphasize correctness linked to summative assessments, students may have paid less attention to aspects of feedback that highlight differences between the criteria and the responses or that explain why the response falls short of the criteria, as well as to suggestions for improvement.

Second, Confucian traditions widely embedded in many Asian societies emphasize respect for authority, maintenance of harmony, and avoidance of conflict in public contexts (Chiu, 2009; Zhan, 2019). As a result, directly pointing out peers’ errors or suggesting revisions may be perceived as socially uncomfortable or as potentially harmful to relationships. Such cultural factors may have led students to prefer low-level feedback limited to general praise or correctness judgments, rather than more specific and critical feedback. Therefore, the feedback patterns observed in this study may reflect not only students’ individual abilities or motivation but also cultural elements characteristic of the East Asian context.

On the other hand, the greater frequency of commentary feedback compared to error identification feedback—which was positioned at a lower level in the coding framework—may be attributed to several factors. First, because error identification involves directly pointing out specific mistakes in a peer’s response, students may have felt uncomfortable providing such feedback out of concern that the recipient might perceive it negatively. As noted above, since this study was conducted in an East Asian educational context, such tendencies may have been further amplified. Arnold et al. (2005) reported that students tend to avoid giving negative evaluations out of fear that it may hurt their peers’ feelings or damage group relationships. In contrast, commentary feedback, which presents evaluative opinions rather than directly asserting that an error has occurred, may have been perceived as less confrontational and therefore psychologically less burdensome for students to provide.

Second, students may have found it difficult to accurately identify errors and may have been reluctant to risk providing incorrect evaluations, fearing that their own judgment could be perceived as flawed. Students feel burdened by concerns about the usefulness and accuracy of their feedback and experience a lack of confidence in their own abilities as feedback providers (S. Yu, 2021). In contrast, commentary feedback—rather than definitively pointing out specific errors—allows students to offer their own interpretations, making it a less risky and more comfortable option that does not require definitive judgments about correctness.

Additionally, the flexibility afforded in writing commentary feedback may have influenced students’ preferences. While error identification requires clearly articulating a specific mistake, commentary feedback allows for more open-ended observations, enabling students to comment freely on strengths or weaknesses they noticed in their peers’ responses. Due to this flexibility, commentary feedback may have been easier to produce, making it a more appealing option for students.

Consistency of Peer Feedback

To examine the consistency of peer feedback within individual items, an analysis was conducted to determine how consistently each student applied the same feedback type across the five peer comments they provided for a given item. Specifically, the number of distinct feedback types used by each student per item was calculated, and the results are presented in Table 6.

Number of Distinct Feedback Types Per Student Within Each Item.

In this study, consistency in peer feedback refers to the extent to which a student repeatedly employed the same type of feedback, reflecting whether a consistent evaluative standard was applied. For example, if a student’s five comments for a given item were classified as (1), (4), (1), (1), and (1), the number of distinct feedback types would be recorded as 2. A smaller number of distinct types indicates greater consistency in feedback use, whereas a larger number suggests that the student employed a variety of feedback types.
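The consistency measure defined above can be expressed compactly in code. The following is a minimal sketch; the function names and the toy cases are hypothetical and serve only to illustrate the counting rule.

```python
from collections import Counter


def distinct_type_count(levels):
    """Number of distinct feedback types among one student's comments on one item."""
    return len(set(levels))


def consistency_distribution(per_item_levels):
    """Percentage of student-item cases that used 1, 2, 3, ... distinct types."""
    counts = Counter(distinct_type_count(levels) for levels in per_item_levels)
    total = sum(counts.values())
    return {k: 100 * v / total for k, v in sorted(counts.items())}


# The example from the text: five comments coded (1), (4), (1), (1), (1).
example = [1, 4, 1, 1, 1]
n_types = distinct_type_count(example)

# Invented cases, one list of five coded comments per student-item pair.
cases = [[1, 4, 1, 1, 1], [3, 3, 3, 3, 3], [1, 2, 3, 3, 5], [2, 2, 2, 2, 3]]
distribution = consistency_distribution(cases)
```

Note that this measure counts how many types were used, not how far apart they are in the hierarchy: a student alternating between Levels 1 and 6 receives the same count as one alternating between Levels 1 and 2.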

The analysis revealed that 38.52% of responses involved the use of only one feedback type, and 43.37% involved two types. In contrast, the use of three or more feedback types within a single item was relatively rare. These results indicate that most students tended to provide peer feedback in a consistent manner within each item.

In addition, a correlation analysis was conducted to examine whether students provided feedback consistently across the entire assessment. To this end, each student’s average feedback type and average number of characters were calculated based on five peer feedback comments per item, across all eight items included in Forms A and B of the scientific reasoning test. Table 7 presents the Spearman correlation coefficients for average feedback types by item, and Table 8 presents the Pearson correlation coefficients for average feedback length by item.

Spearman Correlation Coefficients for Average Feedback Types by Item.

Pearson Correlation Coefficients for Average Feedback Length by Item.
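The two coefficients used in this analysis can be illustrated with a short, dependency-free sketch. It is not the authors' analysis code: the function names and the per-student averages below are invented, and the Spearman coefficient is computed here as the Pearson correlation of average ranks, which is the standard way of handling ties.

```python
from math import sqrt


def pearson(x, y):
    """Pearson product-moment correlation between two equal-length sequences."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("inputs must have equal length of at least 2")
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sqrt(sum((a - mx) ** 2 for a in x))
    sy = sqrt(sum((b - my) ** 2 for b in y))
    if sx == 0 or sy == 0:
        raise ValueError("correlation is undefined for constant input")
    return cov / (sx * sy)


def average_ranks(values):
    """Ranks starting at 1; tied values share the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        shared = (start + end) / 2 + 1
        for k in range(start, end + 1):
            ranks[order[k]] = shared
        start = end + 1
    return ranks


def spearman(x, y):
    """Spearman rank correlation: Pearson correlation of the average ranks."""
    return pearson(average_ranks(x), average_ranks(y))


# Hypothetical per-student average feedback levels on two items.
item1_avg = [3.0, 1.2, 2.4, 4.0, 1.0]
item2_avg = [2.8, 1.0, 2.6, 3.6, 1.4]
rho = spearman(item1_avg, item2_avg)
```

A rank-based coefficient suits the averaged feedback levels because the levels are ordinal, whereas character counts are on a ratio scale and can reasonably be compared with the Pearson coefficient.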

As indicated by the correlation analysis, students tended to maintain consistency in both feedback types and comment lengths within the same version of the scientific reasoning test. In other words, students provided feedback in a consistent manner not only within individual items but also across the entire assessment. This pattern suggests that students may have relied on stable cognitive approaches grounded in personal beliefs or internal criteria when generating feedback. These findings align with Patchan and Schunn (2015), who reported that the content of peer feedback is often shaped by individuals’ beliefs about feedback and is associated with the evaluator’s level of competence, as well as with Gao et al. (2025), who demonstrated that consistency in delivering particular feedback functions can be influenced by individual factors such as feedback strategies and feedback literacy.

These results carry important educational implications. The quality of student feedback appears to depend more on individual feedback competence than on writing ability or task-related knowledge, indicating that it can be substantially improved through appropriate instructional interventions. The feedback framework proposed in this study provides a useful foundation for diagnosing students’ current feedback levels and for designing tailored interventions to enhance the quality of peer feedback.

Comparison of Feedback Types and Comment Lengths Between the First and Second Peer Assessments

To examine the impact of peer assessment experience on students’ feedback practices, changes in feedback types and comment lengths between the first and second rounds of peer assessment were analyzed. This analysis aimed to determine whether students maintained consistent feedback patterns over time—just as they had demonstrated consistency across items and across the entire assessment during the first round—or whether their feedback became more elaborated or, conversely, more simplified in the second round. Table 9 presents the distribution of feedback types observed in the first and second peer assessments.

Distribution of Feedback Types in the First and Second Peer Assessments.

In the first round of peer assessment, the most frequent feedback type was Rubric-Based (Level 3), followed by Commentary (Level 5), General Impression (Level 1), Correctness-Focused (Level 2), Error Identification (Level 4), Irrelevant Feedback (Level 0), and Suggestion with Alternative (Level 6). In the second round, the distribution shifted, with General Impression (Level 1) being the most common, followed by Rubric-Based (Level 3), Correctness-Focused (Level 2), Commentary (Level 5), Error Identification (Level 4), Irrelevant Feedback (Level 0), and Suggestion with Alternative (Level 6).

In the second round of peer assessment, there was an overall decrease in feedback types that involved elaboration (Levels 3, 4, 5, and 6) and an increase in those that involved only verification (Levels 1 and 2), compared to the first round. In particular, Commentary feedback (Level 5) and Error Identification feedback (Level 4) showed substantial declines of 8.97 and 6.32 percentage points, respectively, while General Impression feedback (Level 1) increased substantially, by 20.82 percentage points. These results suggest that, in the second assessment, students were more inclined to choose relatively simple types of feedback when composing their comments.
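The round-to-round comparison can be sketched as a difference between two percentage distributions. The code below is illustrative only: the function names are hypothetical and the two lists of coded levels are invented, not drawn from the study's data.

```python
from collections import Counter


def level_percentages(levels, n_levels=7):
    """Percentage of comments at each level 0..n_levels-1."""
    if not levels:
        raise ValueError("no comments to summarize")
    counts = Counter(levels)
    return [100 * counts.get(level, 0) / len(levels) for level in range(n_levels)]


def percentage_point_change(first_round, second_round):
    """Second-round share minus first-round share, per level."""
    first = level_percentages(first_round)
    second = level_percentages(second_round)
    return [b - a for a, b in zip(first, second)]


# Invented coded levels for ten comments in each round.
round1 = [3, 3, 5, 1, 4, 3, 5, 2, 3, 1]
round2 = [1, 1, 3, 1, 2, 1, 3, 2, 1, 5]
change = percentage_point_change(round1, round2)
```

Because each round's shares sum to 100, the changes across levels sum to zero, so a rise in one feedback type is necessarily offset by declines in others.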

In addition, a comparison of the average number of characters in peer feedback between the first and second assessments revealed a substantial decrease in the second round. Excluding spaces, the average number of characters per feedback comment was 14.3 in the first assessment, but decreased to 8.3 in the second. Among the 49 participating students, only 6 (12.2%) showed an increase in their average character count, while the remaining 43 students (87.8%) showed a decrease.

Accordingly, the overall quality of student feedback declined in the second round of peer assessment compared to the first. This decline may reflect a decrease in students’ motivation and engagement when composing feedback. Students’ successful engagement in tasks requires that they hold passionate and positive feelings toward those tasks (Munns & Woodward, 2006). Bryant and Carless (2010) reported that although peer assessment is a skill requiring practice and repetition, students may experience excessive familiarity and a consequent loss of interest when it is repeatedly conducted in the same manner. In this study, the first round of peer assessment may have been perceived as a novel and motivating activity, whereas the repetition of a similar task in the second round may have resulted in decreased interest.

Moreover, although individual feedback was provided after the first round, students were not required to revise their responses based on the comments received. This may have led them to underestimate the purpose and importance of peer assessment or to perceive the feedback as having little practical utility. In addition, the fact that the outcomes of peer assessment were not reflected in grades and were not directly related to high-stakes examinations may have further weakened students’ motivation. As Bryant and Carless (2010) pointed out, in contexts such as Asian educational settings where examination performance is highly emphasized, peer assessment activities that are not directly connected to examinations risk being perceived as unnecessary or even meaningless. As a result, peer assessment may have been treated as a mere procedural task, prompting students to engage in it superficially and to produce shorter and less meaningful feedback.

Conclusion and Implications

This study aimed to develop a coding framework for systematically classifying and quantifying the types and levels of peer feedback, and to apply this framework to peer assessment data collected in real classroom settings to analyze students’ feedback patterns. To achieve this, a seven-level classification system was designed based on prior research on feedback, and two rounds of peer assessment were conducted to collect student feedback data. The collected data were then used to validate the coding framework and to analyze the distribution, consistency, and changes in the types of feedback provided by students.

Previous studies have primarily focused on identifying the characteristics of effective feedback, whereas the heterogeneity and diversity of less effective feedback observed in authentic classroom settings have received relatively little attention. This study extends the discussion beyond the notion of effective feedback by systematically categorizing the types of feedback that students actually produce and presenting their hierarchical structure as observed in real school contexts. The classification system of the developed coding framework integrates and clarifies existing conceptual discussions on feedback quality (Nelson & Schunn, 2009; Shute, 2008). Furthermore, Rasch analysis supported the ordered hierarchy of the framework, suggesting that feedback competence has a hierarchical and developmental nature and that the generation of feedback involves increasingly complex cognitive processes.

In addition, the coding framework developed in this study provides a methodological foundation for evaluating students’ feedback competence. The feedback produced by students showed consistent patterns not only within individual items but also across the overall assessment. This finding suggests that the quality of peer feedback may be influenced more by individual students’ feedback competence than by the characteristics of specific items or contextual factors of the assessment. Taken together, these results indicate that each student possesses a certain level of feedback competence, which can be further developed through targeted instructional interventions. The proposed framework can therefore be used as a diagnostic tool to assess students’ feedback competence and to monitor its development over time.

However, given the overall decline in feedback quality observed in the second round of peer assessment compared to the first, further investigation is needed to determine whether the observed consistency in feedback patterns truly reflects students’ individual competencies or is instead shaped by specific items or assessment contexts. Future research should aim to clarify whether feedback consistency is more strongly influenced by student ability or contextual factors—and, if the former, to identify which specific aspects of students’ ability most significantly contribute to high-quality feedback.

From a practical perspective, the findings of this study provide a concrete foundation for designing instructional interventions aimed at improving the quality of peer assessment. This coding framework has the potential to serve as an effective tool for both assessing and improving students’ peer feedback competency. First, it may be used diagnostically to systematically measure and analyze students’ feedback performance within peer assessment contexts. This enables clearer evaluation of individual students’ feedback levels and allows for the identification of specific aspects that may be lacking in their current feedback. Second, it can inform the development of instructional resources and training programs aimed at helping students provide higher-quality feedback. Instruction that includes opportunities to explore various types of feedback and reflect on the characteristics of effective peer responses can deepen students’ understanding of feedback and support them in establishing their own criteria for effective feedback. Ultimately, this framework may help students deliver more specific and meaningful feedback in peer assessment activities.

Third, the coding framework can be used to evaluate the effectiveness of instructional interventions by comparing student feedback before and after targeted feedback instruction. The framework enables the quantitative measurement of changes in students’ feedback practices, thereby supporting a systematic analysis of how specific pedagogical interventions influence the quality of peer feedback. Based on such analyses, more effective instructional strategies for teaching feedback can be designed, ultimately helping to make peer assessment a more meaningful learning experience. Therefore, the findings of this study may inform various educational practices aimed at enhancing the quality of peer assessment and support students in providing more effective feedback and using it actively in their learning process.

The feedback patterns observed in this study underscore the need for the diagnostic use of the coding framework and highlight key considerations for designing effective peer assessment. In the absence of instructional scaffolding, students frequently provided feedback that was limited to general impressions or judgments of correctness, which may reduce the educational value of peer assessment. These tendencies can be explained by a combination of cognitive, motivational, and cultural factors. Prior research has shown that assigning only scores in peer assessment yields limited learning benefits, whereas combining scores with qualitative feedback leads to more positive outcomes (Huisman et al., 2019; J. Lu & Law, 2012). In light of this, feedback that merely verifies the appropriateness of responses is unlikely to provide meaningful educational value. For peer assessment to function as a meaningful learning experience, structured instructional support is essential to help students clearly understand the purpose and role of feedback. In particular, explicit guidance should be provided to enable students to move beyond simple verification and to generate more specific and constructive feedback.

Furthermore, because students’ interest and motivation also influence the quality of feedback, the design of peer assessment should incorporate strategies that enhance students’ perceived value of the activity. This may include clarifying its purpose and significance, diversifying peer assessment formats to sustain engagement, or embedding peer assessment into exam-oriented educational contexts so that students perceive it as a useful opportunity to prepare for high-stakes assessments (Bryant & Carless, 2010). Such approaches can encourage students to regard peer assessment not as a superficial procedure but as a valuable and integral part of their learning.

Finally, as intelligent technologies are adopted in education at an accelerating pace, it is important to consider the potential of integrating artificial intelligence (AI) into online peer assessment environments. Previous studies have reported that online peer assessment can be effective in terms of ensuring anonymity and procedural efficiency (L. Li & Gao, 2016; Zhan et al., 2023), while the potential of AI-supported feedback provision has also been actively explored (Guo et al., 2025; Hooda et al., 2022; Steiss et al., 2024). Combining the coding framework developed in this study with AI support could allow for real-time diagnosis of students’ feedback, provide suggestions for improvement, and offer examples of feedback at different levels, thereby supporting students in generating more elaborated feedback. Such an approach may serve as a means of providing essential scaffolding in peer assessment while simultaneously reducing teachers’ workload. Moreover, because this framework can be applied not only to student feedback but also to feedback provided by teachers or AI systems, it has the potential to function as a universal tool for systematically analyzing feedback quality across sources and for identifying strategies for improvement.

Limitations and Future Directions

One limitation of this study is that the peer assessment process involved limited interaction among students. The study focused primarily on developing and validating a coding framework for analyzing peer feedback, and peer assessment was conducted solely for the purpose of collecting student feedback data. However, peer assessment is inherently a social process in which learners engage in mutual interaction to learn from and support one another, and interpersonal variables have been shown to play a significant role in facilitating learning (Van Gennip et al., 2010). Despite this, students in the present study were not sufficiently provided with opportunities to exchange ideas, engage in discussion, or share feedback during the peer assessment process.

Therefore, future research should conceptualize peer assessment not as an isolated individual task but as an integral component of collaborative learning. By applying the developed coding framework in contexts where students actively engage with one another, researchers can conduct in-depth investigations into how the quality of peer feedback evolves through interpersonal interaction.

In addition, the coding framework developed in this study focused on the cognitive aspects of feedback and did not take into account emotional elements such as praise or criticism. However, affective components of feedback may significantly influence learners’ receptiveness to feedback, motivation, and self-efficacy, ultimately contributing to improved learning outcomes (J. Lu & Law, 2012). Therefore, future research should adopt a multidimensional feedback analysis framework that incorporates both content and emotional dimensions to enable a more comprehensive understanding of the impact of peer feedback.

Finally, this study was conducted with a limited participant group, which may constrain the generalizability of the findings to diverse educational contexts and conditions. Therefore, future research should aim to include participants with a wider range of ages, genders, and learning backgrounds in order to more thoroughly evaluate the applicability of the proposed coding framework. In addition, due to the constraints of the school schedule, peer assessment was conducted only twice, with a 5-week interval between them. This limited the extent to which the influence of students’ prior peer assessment experiences on subsequent peer assessments could be fully examined. Furthermore, as this study relied solely on students’ feedback products as the source of data, it was constrained in its ability to directly investigate learners’ motivation and perceptions during the peer assessment process. As a result, the findings reported in this study may have been influenced not only by peer assessment experiences but also by a range of external factors. To identify these influences more clearly, it is necessary to conduct multiple iterations of peer assessment and to examine students’ motivation, engagement, and perceptions in a more comprehensive manner.

Appendix A

Ethical Considerations

This study analyzed peer feedback data generated through an educational program implemented at the request of the school as part of regular instructional activities. The school is a science-specialized high school that offers a range of science-related programs, and this program was one of those activities. The data were anonymized before being provided to the researchers so that no personally identifiable information was available, thereby minimizing any potential risk to participants. The researchers did not introduce any additional intervention beyond the educational program itself and did not have access to any personally identifiable information. Participants were informed in advance that their feedback data might be used for research purposes, and they were free to decline participation without any negative consequences. As a result, there was no foreseeable psychological, social, legal, or educational risk to participants, and therefore formal ethical approval was deemed not necessary.

Consent to Participate

Informed written or verbal consent was not obtained from individual participants because the study involved only the analysis of anonymized data generated through regular educational activities and presented no more than minimal risk. According to Section 8.05 of the APA Ethical Principles of Psychologists and Code of Conduct, informed consent may be waived when research involves only normal educational practices conducted in educational settings and presents no more than minimal risk of harm or distress to participants. As this study met those conditions and confidentiality was fully protected, informed consent was waived.

Author Contributions

Seoyeon Lee conceptualized the paper, analyzed the data, and wrote the manuscript. Minsu Ha contributed to data analysis and supervised the study.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the National Research Foundation of Korea (NRF) grant funded by the Korea government (MSIT) (NRF-2023R1A2C1003475) and by the ‘Science Education in Infosphere (SEI)’ funded by the four-stage BK21.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

The datasets generated during and/or analyzed during the current study are available from the corresponding author on reasonable request.