Abstract

To enhance learner engagement in traditional science communication, we propose an innovative detection framework based on long short-term memory (LSTM) networks. This framework equips AI robots with the capability to actively track fluctuations in learner interest by combining sentiment-driven analysis and adaptive prediction mechanisms. First, we evaluate emotional attitudes on platforms such as YouTube and Bilibili, utilizing an emotion scoring system to capture shifts in learner preferences and interest levels, thereby creating a curated evaluation dataset for science content. Next, we enhance the robot’s facial emotion recognition performance during real-time interaction through convolutional neural network (CNN) training, which enables more accurate and personalized content recommendations. Finally, we integrate these features through an LSTM network, allowing the AI robot to dynamically assess and respond to learner interest. This comprehensive framework enhances emotional feedback mechanisms, enabling precise and real-time content recommendations. It also optimizes the role of AI robots as interactive science communicators to boost scientific literacy and engagement. This study contributes to science communication by enhancing the predictability of learner interest. It enables AI robots to interpret emotional responses to scientific texts with greater precision and personalization.

Plain Language Summary

This study aims to address the challenge of low engagement among non-expert learners in traditional science communication. The research team developed a dual-stream learning model combining emotion-driven analysis with an adaptive prediction mechanism, enabling an AI robot to intelligently respond to learners’ changing interests during real-time interactions. The study first analyzed viewers’ emotional responses to scientific content on platforms such as YouTube and Bilibili, constructing a sentiment-filtered dataset to capture non-expert learners’ evolving interests and preferences. Next, the AI robot was trained using a CNN to accurately recognize learners’ facial emotions during interactions, enabling more personalized content recommendations. Finally, an LSTM was incorporated to enable the AI robot to dynamically assess and predict learners’ interest levels. We found that this framework can more accurately recommend scientific content in real time, helping enhance engagement and interactivity in science communication. The findings offer practical insights for science education, aiming to enhance learners’ immersion and interactive experiences.

Keywords

Introduction

With the rapid advancement of emerging science and technology, social media has gradually become a mainstream channel for disseminating scientific knowledge to non-expert audiences. Science communication, as an important part of interdisciplinary scientific education, plays a vital role in improving the scientific literacy of non-professional learners (Wood, 2023). It has evolved into a key method for establishing positive public relations between readers and scientists (Johnston, 2020, pp. 20–24), enhancing public scientific literacy (Sharon & Baram-Tsabari, 2020), and nurturing the technological innovation capabilities of the younger generation (Bilotta et al., 2021). As the importance of science education continues to grow, the need for rapid dissemination of scientific knowledge has become increasingly urgent. However, at present, scientific knowledge is primarily disseminated among professional scholars, and general learners lack both interest in and pathways for learning it. Although groups such as journalists attempt to interpret complex scientific concepts in more accessible language, differences in their disciplinary backgrounds (Villagrán Sánchez & López Pan, 2025) and ideological positions (Hardy et al., 2019) can compromise the accuracy of science communication. As a result, understanding scientists’ original perspectives remains a considerable challenge for the general public (Ashwell, 2016). To some extent, this has undermined the quality of science communication and diminished the efficiency of knowledge transmission. Therefore, extending scientific communication from specialized scholars to non-specialists through new technological means such as robots is a crucial step in further enhancing public interest in scientific knowledge.

In the digital age, science communication for non-specialist learners should attend to the personalized features of knowledge dissemination. It should be tailored to the real-time emotional states and interest preferences of diverse learners, which can strongly stimulate the interest of non-specialist learners with diverse academic backgrounds and varying professional orientations. In this context, AI robots, as a new form of human-machine interaction, are gradually participating in various tasks within the field of science communication. Owing to their advanced capabilities in human-robot interaction (HRI) and autonomous dialog, AI robots can serve as learning partners (W. Wang et al., 2019), robotic tutors (Kurtz & Kohen-Vacs, 2024), and educational assistants (Guggemos et al., 2020), and can fulfill other roles in educational activities. AI robots stimulate learners’ interest by employing techniques such as adaptive feedback (Alam & Mohanty, 2022; Xie et al., 2022), interactive gestures (Uluer et al., 2015), and voice conversations (Lubold et al., 2016). They provide learners with entertainment value during the actual learning process (Bogue, 2022; Gong et al., 2022), while also enhancing skills through active voice interaction (Yoon et al., 2019), thereby boosting students’ learning interest. As AI robots engage in increasingly profound emotional interactions with learners, they must undertake more complex and specific tasks. Given the high interactive value of AI robots in education, leveraging human-machine interaction to promote popular science content can facilitate the broader dissemination of specialized scientific information. Therefore, AI robots need more immediate and precise detection frameworks to serve as auxiliary tools for scientific communication.

Some studies have focused on enhancing human-machine interaction within learning environments by integrating data-driven techniques, including machine learning algorithms, AI robots, and affective computing techniques. Existing studies focus on the intervention of artificial intelligence technology in the external learning environment, such as learning behavior and motivation. From the perspective of AI robots’ assistance in learning, AI robots have been effectively identified as learning companions, demonstrating potential in supporting early childhood education (Neumann, 2020), enhancing students’ writing abilities (Chandra et al., 2020), stimulating students’ debating skills (Yun et al., 2023), and fostering increased learning confidence (Kanda et al., 2004). Moreover, other studies have further enhanced robot systems by focusing on altering the behavior techniques (Alam, 2022) and interaction feedback systems (Crompton et al., 2018) of AI robots to strengthen HRI. From the perspective of robot infrastructure design, various traditional data technologies are beginning to be incorporated into the human-machine interaction design of AI robots, such as multi-viewpoint patterns (Kanade & Yamada, 2003), probabilistic approaches (Stassopoulou & Dikaiakos, 2009), and image sets (Cevikalp & Triggs, 2010). With the continuous advancement of data analysis methods, machine learning techniques are gradually being integrated into the interactive analysis of AI robots. This allows AI robots to perform feature extraction and recognition through methods such as recurrent neural networks (RNN; Qi et al., 2021), decision trees (Li et al., 2021), convolutional neural networks (CNN; Jaiswal & Nandi, 2020), transfer learning (Modi & Bohara, 2021), LSTM models (Wei et al., 2024), and naive Bayes classifiers (Maswadi et al., 2021). This enables AI robots to accomplish more complex interactive tasks in learning environments.

Although some existing machine learning techniques have played a positive role in the interactivity of AI robots, there are still limitations in terms of precision. The output interactivity of an AI robot in a specific learning environment has been analyzed; however, the comprehensive correlation among its program input, data integration, and output interaction is often overlooked. Moreover, most machine learning methods have not yet revealed the relationship between learner emotion recognition and learning preference classification, which limits the AI robot’s ability to recognize, predict, and provide feedback on learner status in a learning environment. In this study, “learning” primarily refers to how non-expert audiences engage with and interpret scientific content, particularly their emotional and cognitive responses to the language, themes, and conceptual structures within scientific texts. AI robots can assist readers in bridging the gaps in understanding that often arise from ambiguously defined scientific concepts.

To improve the interaction accuracy of AI robots in scientific communication, this paper proposes a detection framework. The main idea of this framework is to classify learners’ emotional preferences for popular science videos in online media. Using the improved SO-PMI algorithm, the classified data packages are then integrated into an AI robot for preliminary learning. Subsequently, real-time recognition of learners’ study states is achieved with a CNN. The framework integrates personalized recommendation paths for human-machine collaboration in scientific communication into a comprehensive analytical framework, and carries out the analysis with different data approaches.

The contributions of this paper are summarized as follows.

(1) Feature Classification and Real-time Detection. This research addresses the characteristics of non-expert learners by collecting and constructing preliminary data packages from online science communication videos. We employ a joint dual-stream LSTM model for secondary image detection, achieving real-time monitoring of learners’ engagement states during science communication.

(2) Framework for Precise Human-Robot Interaction (HRI). By integrating K-means and emotion recognition, the framework aligns input features from science videos with HRI responses. The framework accounts for the multifaceted emotional responses learners may experience when engaging with scientific content—such as topic-related interest, cognitive strain, and varying levels of comprehension. At the same time, it addresses the widespread issue of information overload in online science communication, thereby enhancing the flexibility and responsiveness of interactive feedback.

(3) Enhanced Engagement through Emotion-Aware AI. The CNN-LSTM-based AI robot framework we propose is designed to boost interest in science communication among non-expert learners. The optimized interaction strategy enables AI robots to identify emotional states such as cognitive overload and difficulty in understanding perspectives. Based on this, AI robots provide personalized science content to support more accurate interpretation during communication.

In this study, the integration of different data approaches presents a significant advancement in reinforcing the role of AI robots as interactive learning companions. This paper introduces a detection framework for AI robots that emphasizes the interaction between learners and robots to achieve precise science learning outcomes. This study focuses on the learning needs of non-expert learners as reflected in their individualized comprehension patterns, emotional responses, and levels of cognitive load when engaging with science communication. By analyzing multi-dimensional data such as facial expression dynamics, emotion scores, and interest fluctuation patterns observed in non-expert learners while watching science videos, the AI robot can intelligently adapt its interaction strategies and content delivery to better match the learners’ level of comprehension. More specifically, the emotional interaction capabilities of AI robots enable them to engage in physical interaction with non-professional learners. Configuring the personalized traits of learners significantly enhances the programming of AI robots for scientific communication with different types of learners. The aim is to improve the responsiveness and reaction speed of AI robots when faced with the complex learning states of learners, thus aligning more closely with their personalized learning needs.

The remainder of this paper is organized as follows. Section 2 examines interactions such as comments and likes on different popular science videos from the YouTube and Bilibili online learning platforms. Using sentiment analysis, it derives labels that describe non-professional learners’ knowledge structure, learning styles, interests, and learning needs within various courses. In Section 3, personalized label attributes are integrated using a CNN. Human-machine interaction output and learner state recognition are achieved by establishing a collection of facial emotion images. Section 4 provides the conclusion.

The Present Study

Technologies such as affective computing and artificial intelligence provide a new way to examine changes in the learning status of non-professional learners during scientific communication. Therefore, this study aims to analyze the real-time emotional changes of non-professional learners as they learn scientific content, to classify learners and compute their emotions, and thereby to enable AI robots to assist non-professional learners in learning and understanding accurate scientific knowledge.

This study seeks to address the following research questions:

(1) How can AI robots integrate behavioral and facial features to achieve real-time recognition of informal learners’ emotional states?

(2) How can the personalized response effect and user engagement of AI robots be improved in scientific communication scenarios?

Research Method

Framework of the CNN-LSTM-Based AI Robot

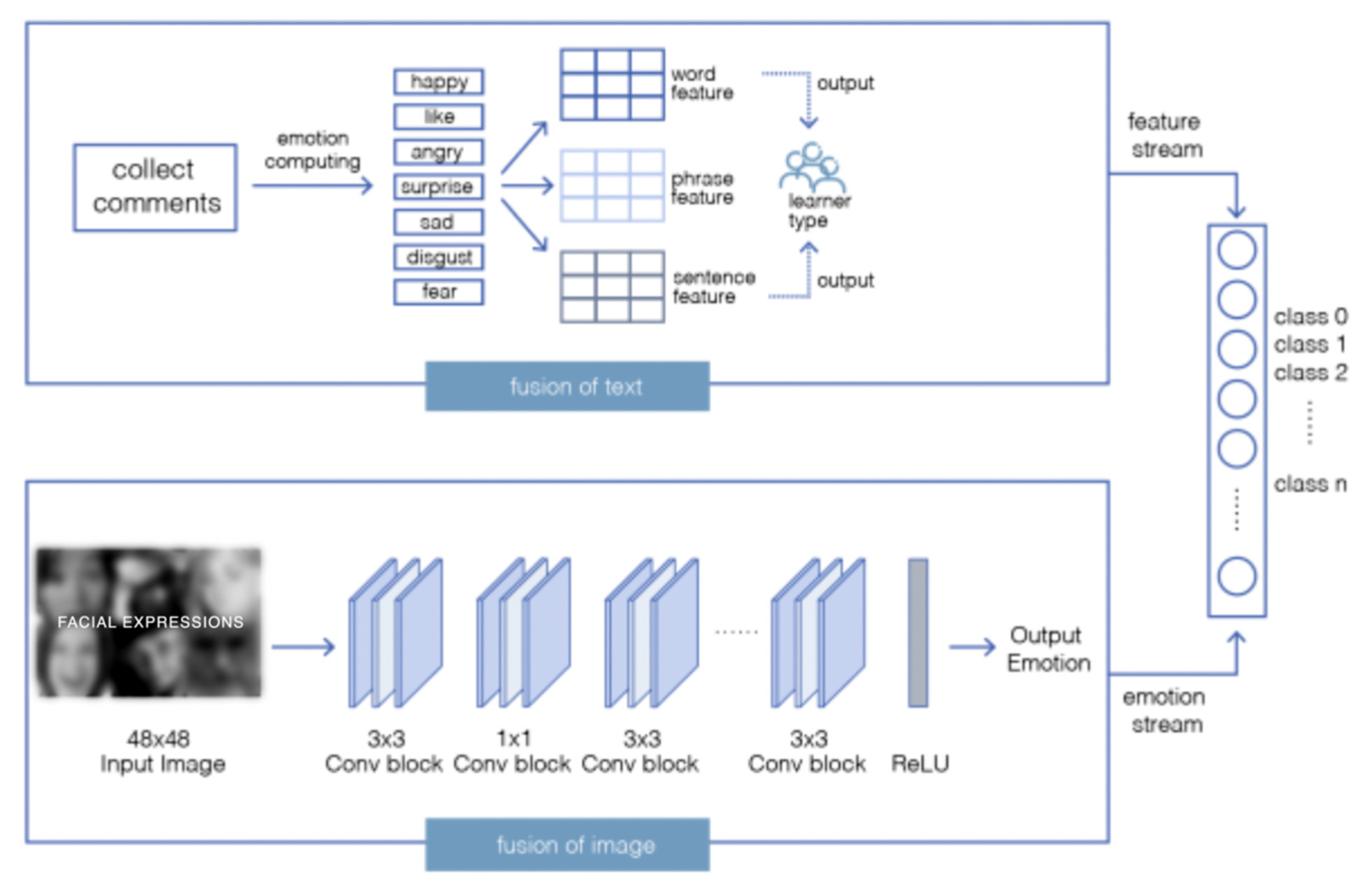

This study presents a system architecture for an AI robot designed to facilitate scientific communication with non-professional learners. Figure 1 shows the detection framework. The detection framework we propose to support the robot’s learning-companionship procedure requires a large dataset of learners’ emotional preferences for science communication. Therefore, this study first captured the learning preferences of learners based on big data from online learning platforms. Next, the collected data was subjected to preprocessing steps such as cleaning, clustering, and feature labeling to construct the initial learner profile. Following this, the integrated collection of categorized learner portrait models was implemented in the AI robot. To identify learners’ emotional responses, the system uses a CNN for facial expression recognition. The recognized expressions and behavior-related features are used to form multimodal input sequences. These sequences are then processed through a two-stream recurrent model to predict the learner’s real-time state. We used the CNN-LSTM-based AI robot to facilitate real-time augmented participation with learners in science communication. By combining facial expressions and behavioral cues, the system enables the AI robot to adjust its communication strategy in real time, thereby enhancing both responsiveness and personalization in the process of popular science communication. Its architectural design supports continuous learning and adaptation, resulting in a more effective informal learning experience.

Detection framework of the CNN-LSTM-based AI robot, with example FER2013 images.

Dataset of Learners in Science Communication

By collecting data from online learning platforms such as YouTube and Bilibili, AI robots can initially understand learners’ attitudes toward science communication. Learners’ emotional responses to science communication videos are viewed as a multidimensional psychological process, encompassing complex states such as interest level, cognitive load, and frustration with understanding. Therefore, in collecting emotional data, we consider not only the sentiment polarity of user comments but also engagement metrics such as like ratio, topic response frequency, and the temporal characteristics of emotional expressions, in order to more comprehensively capture the dynamic emotional states of learners. To initially capture learners’ emotional features regarding existing scientific knowledge videos for AI robots, it is particularly vital to collect relevant datasets available from public websites using Hash operation. The construction of learner evaluations on scientific knowledge videos in online media represents a rapid and effective approach. Through this approach, it is possible to capture learners’ latest emotional attitudes (Watson et al., 2023) and learning states (Gong, 2023; Yukselturk & Top, 2013) in various scientific communications.

First, we constructed a learner portrait label framework from the dimensions of learners’ general characteristics and attributes, learning effect attributes, and behavioral characteristics. Second, basic data and bullet screen data of relevant science communication videos were collected through data acquisition. We manually annotated and filtered relevant content, and selected the Beautiful Soup module to parse HTML and XML. Preliminary data extraction was conducted on relevant data provided by learners. Third, based on the time intervals, volatility, positive sentiment of comments, and the episodic nature of emotional expressions, we filtered basic information, thematic features, emotional content, and the correlation of information behavior between learners and scientific communication. Fourth, the original data was cleaned by eliminating missing values and irrelevant entries, resulting in the construction of the preliminary data package. In this process, noise that affected the subject words related to the video topic features was removed, and the cleaned data was collected.

Learning Preference Labels and Features of Learners

Emotional Attitude Training

Processing learners’ learning-preference data is an effective method for discovering personalized behavioral characteristics (Hou et al., 2020). It can be used to build group interaction target labels, thereby achieving precise content recommendations.

Therefore, this study first classified the expressions of emotional evaluations made by learners in scientific knowledge videos. Utilizing the sentiment dictionary from Dalian University of Technology (DUoT; Lin et al., 2020) as the foundation for sentiment analysis, we supplemented it with custom emotion seed words. This approach led to the expansion of the emotional lexicon related to science communication. To capture the emotional nuances in learners’ textual responses, we adopted a fine-grained sentiment classification system that divided emotions into seven primary categories—like, happy, anger, sadness, fear, disgust, and surprise—along with 21 secondary subcategories. Each emotion word was assigned an initial intensity score on a five-level scale (1, 3, 5, 7, 9), which provided more detailed granularity compared to conventional sentiment dictionaries. Furthermore, every emotion word was classified into one of three polarity types: neutral (0), positive (1), or negative (2). We further utilized the SO-PMI algorithm to define the terms used by learners as either positive or negative (Q. Wang et al., 2021). SO-PMI selected a

After calculation, the results of SO-PMI (H1) showed the emotional tendency. Learners’ different emotional tendencies toward scientific knowledge were effectively calculated. It was defined as follows:
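For reference, the textbook form of SO-PMI can be written as below. This is a sketch under the assumption that the improved variant used in this study keeps the standard structure. The seed sets $P$ (positive) and $N$ (negative) and the probability estimates $p(\cdot)$ are notation introduced here for illustration only and are not taken from the source.

$$
\mathrm{PMI}(w_1, w_2) = \log_2 \frac{p(w_1, w_2)}{p(w_1)\,p(w_2)}
$$

$$
\text{SO-PMI}(w) = \sum_{s \in P} \mathrm{PMI}(w, s) \;-\; \sum_{s \in N} \mathrm{PMI}(w, s)
$$

Under this form, a candidate word with $\text{SO-PMI}(w) > 0$ leans positive, one with $\text{SO-PMI}(w) < 0$ leans negative, and a value near zero indicates no clear tendency.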

Clustering and Building Sentiment Dictionary

We further utilize an unsupervised K-means to perform emotional computing and classification. Unsupervised machine learning algorithms can be employed to analyze the content of texts generated by learners in scientific knowledge (Chen et al., 2023; Fiorini et al., 2020).

First, we built the extended sentiment dictionary using SO-PMI. As mentioned above, we used DUoT as the base source because it includes sentiment words in Chinese. A large number of words related to scientific communication uttered by learners were also added. Together they formed an expanded sentiment dictionary, including positive words such as “like” and “happy” and negative words such as “surprise,” “fear,” “sad,” “anger,” and “disgust.” We categorized sentiment polarity into two classes, where 1 represents positive and 2 represents negative. Emotional intensity took the values 1, 3, 5, 7, and 9, where a higher number indicates a greater intensity of the emotion.
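The dictionary expansion step can be illustrated with a minimal sketch of textbook SO-PMI, computed from document-level co-occurrence. It is not the improved variant used in this study: the function names, the toy comments, the seed words, and the choice to treat unseen word pairs as contributing zero are all assumptions made for illustration.

```python
import math
from collections import Counter


def so_pmi(word, docs, pos_seeds, neg_seeds):
    """Textbook SO-PMI from document-level co-occurrence counts."""
    doc_sets = [set(doc) for doc in docs]
    n_docs = len(doc_sets)
    doc_freq = Counter(tok for doc in doc_sets for tok in doc)

    def pmi(a, b):
        joint = sum(1 for doc in doc_sets if a in doc and b in doc)
        if joint == 0:
            # Assumption: an unseen pair contributes 0 rather than -infinity.
            return 0.0
        p_joint = joint / n_docs
        p_a = doc_freq[a] / n_docs
        p_b = doc_freq[b] / n_docs
        return math.log2(p_joint / (p_a * p_b))

    positive = sum(pmi(word, seed) for seed in pos_seeds)
    negative = sum(pmi(word, seed) for seed in neg_seeds)
    return positive - negative


def polarity_label(score, tol=1e-9):
    """Map a score to polarity codes: 1 positive, 2 negative, 0 neutral."""
    if score > tol:
        return 1
    if score < -tol:
        return 2
    return 0


# Hypothetical, already-segmented comments (not data from the study).
comments = [
    ["fascinating", "like", "experiment"],
    ["fascinating", "happy"],
    ["confusing", "sad"],
    ["confusing", "anger", "experiment"],
]
pos_seeds = ["like", "happy"]
neg_seeds = ["sad", "anger"]

labels = {
    w: polarity_label(so_pmi(w, comments, pos_seeds, neg_seeds))
    for w in ["fascinating", "confusing", "experiment"]
}
print(labels)
```

In this toy corpus, a word that co-occurs only with positive seeds is coded 1, a word that co-occurs only with negative seeds is coded 2, and a word that co-occurs equally with both is coded 0. A real pipeline would compute the counts over the full segmented comment corpus.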

Next, unsupervised K-means was used to classify the types of learners and to explore the underlying structure of their emotional reactions to science communication content. The algorithm segmented the data into clusters based on sentiment features, aiming to minimize the sum of Euclidean distances between data points and their respective cluster centers. The unsupervised K-means objective is defined in Formula (a1) in Appendix A. This process automatically formed different emotion-type groups based on learners’ emotional characteristics when facing scientific communication content, which provided support for subsequent personalized recommendations and robot response strategies. The silhouette coefficient was used as a metric to evaluate the effectiveness of the K-means clustering. The silhouette coefficient for a single sample E was calculated as shown in Formula (a2) of Appendix A. For each point E, the average distance to the other points within its own cluster was denoted as v, and the average distance from the point to all points in the nearest cluster to which it did not belong was denoted as g. The silhouette coefficient ranges from −1 to 1; the larger the value, the better the clustering. By comparing the average silhouette coefficient under different numbers of clusters, we determined the optimal number of clusters to capture the natural distribution of learners’ affective states.
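The cluster-count selection described above can be sketched in plain Python. The silhouette score below uses the standard definition s = (g − v) / max(v, g) with the symbols v and g from the text, which Formula (a2) is assumed to match. The sample points, the deterministic initialization, and the function names are illustrative assumptions, not the study's data or code.

```python
import math


def euclidean(a, b):
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def kmeans(points, k, iterations=20):
    """Plain K-means; returns one cluster label per point."""
    # Assumption: deterministic start from k points spaced evenly in the list.
    centers = [points[i * len(points) // k] for i in range(k)]
    labels = [0] * len(points)
    for _ in range(iterations):
        # Assignment step: nearest center by Euclidean distance.
        labels = [
            min(range(k), key=lambda c: euclidean(p, centers[c]))
            for p in points
        ]
        # Update step: move each center to the mean of its members.
        for c in range(k):
            members = [p for p, lab in zip(points, labels) if lab == c]
            if members:
                centers[c] = tuple(
                    sum(axis) / len(members) for axis in zip(*members)
                )
    return labels


def mean_silhouette(points, labels):
    """Average of s = (g - v) / max(v, g) over all points."""
    clusters = sorted(set(labels))
    scores = []
    for i, p in enumerate(points):
        own = [q for j, q in enumerate(points)
               if labels[j] == labels[i] and j != i]
        if not own:
            scores.append(0.0)  # usual convention for one-member clusters
            continue
        v = sum(euclidean(p, q) for q in own) / len(own)
        g = float("inf")
        for c in clusters:
            if c == labels[i]:
                continue
            other = [q for j, q in enumerate(points) if labels[j] == c]
            g = min(g, sum(euclidean(p, q) for q in other) / len(other))
        scores.append((g - v) / max(v, g))
    return sum(scores) / len(scores)


# Hypothetical (sentiment score, intensity) pairs, not data from the study.
points = [
    (0.10, 1.0), (0.15, 1.2), (0.20, 0.9),
    (0.50, 5.0), (0.55, 5.1), (0.50, 4.8),
    (0.90, 9.0), (0.95, 8.8), (0.90, 9.2),
]
scores = {k: mean_silhouette(points, kmeans(points, k)) for k in range(2, 5)}
best_k = max(scores, key=scores.get)
print(scores, best_k)
```

With these well-separated toy points, the average silhouette is highest at three clusters. On real data the curve is usually flatter, so the comparison across candidate values of k matters more than any single score.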

Therefore, the learning states of learners in scientific communication were identified through the establishment of the aforementioned emotion dictionary, including indicators such as learning activity, concentration duration, and content preferences. Based on the analysis of learners’ emotional and behavioral data, a preliminary classification of learner types in the context of scientific communication was developed.

Experimental Model for Real-Time Augmented Participation in Training AI Robot

By classifying online scientific communication learners, the AI robot initially acquired the learning characteristics associated with each type of learner. The facial expressions in this study were not treated as isolated expressions, but as immediate emotional reactions caused by the learners’ understanding of and preference for scientific content while watching popular science videos. These expressions reflected the learners’ interest, confusion, or involvement in the video content. To improve the robustness of the emotion recognition model, we also used public facial expression databases to expand the model training data, making the model more adaptable to diverse emotional patterns. Therefore, to further enhance the real-time interaction between the AI robot and learners, a CNN was employed to construct a dataset of facial expressions in science communication (as shown in Figure 2; Goodfellow et al., 2013).

CNN for emotion recognition.

The CNN detected learners’ emotional changes using activation functions to automatically segment images (Hassaballah & Awad, 2020, pp. 5–6). We used labeled data from public datasets to train the CNN. Input images of 48 × 48 pixels were used for facial expression recognition, which covered a suitable face area. This size was relatively small, facilitating the capture of facial details and variations in features. The input consisted of 13 separable convolutional layers with 3 × 3 kernels and a stride of 2. The output featured alternating 1 × 1 and 3 × 3 convolutional kernels, and was connected to four convolutional layers.

The formula (3) indicated that feature maps of the (1-1) layer convolving with filter

Using the chain rule, the entire training process of the CNN involved calculating the partial derivatives of the loss function with respect to the weight associated with each unit. In this way, we achieved real-time tracking of learners’ emotional changes for the AI robot.
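To make the layer arithmetic concrete, the following sketch applies a single generic 3 × 3 convolution with stride 2 and a ReLU activation to a 48 × 48 input. It is not the network described above: the padding value, the averaging kernel, and the function names are assumptions chosen for illustration, since the source does not state the padding or reproduce its convolution formula.

```python
def conv_output_size(n, kernel, stride, padding=0):
    """Spatial size after a convolution: floor((n + 2p - k) / s) + 1."""
    return (n + 2 * padding - kernel) // stride + 1


def conv2d_relu(image, kernel, stride=1):
    """Valid (unpadded) 2D convolution followed by ReLU, on nested lists."""
    k = len(kernel)
    out_size = conv_output_size(len(image), k, stride)
    output = []
    for r in range(out_size):
        row = []
        for c in range(out_size):
            acc = sum(
                image[r * stride + i][c * stride + j] * kernel[i][j]
                for i in range(k)
                for j in range(k)
            )
            row.append(max(0.0, acc))  # ReLU activation
        output.append(row)
    return output


# Hypothetical 48 x 48 grayscale input and a 3 x 3 averaging kernel.
image = [[1.0] * 48 for _ in range(48)]
kernel = [[1.0 / 9.0] * 3 for _ in range(3)]

feature_map = conv2d_relu(image, kernel, stride=2)
print(len(feature_map), len(feature_map[0]))  # spatial size without padding
print(conv_output_size(48, 3, 2, padding=1))  # spatial size with padding 1
```

Without padding, a 3 × 3 kernel at stride 2 reduces 48 × 48 to 23 × 23. With one pixel of padding the result is 24 × 24, which is why the padding choice matters when stacking many strided layers on a small input.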

Joint Two-Stream LSTM Model for Integration

Considering that the learner features and facial expressions lacked correlation information, we further built the joint two-stream LSTM model to resolve the accuracy problem in HRI during the process of scientific communication. The joint two-stream LSTM model was an effective model capable of simultaneously handling two distinct types of data streams, namely learner features and facial expressions (Zhao et al., 2020). The model effectively paid different levels of attention to the feature streams and emotion streams.

First, we preprocessed two distinct types of data streams. Given the sequence of behavioral feature streams of learners as

Each time step was represented by t, which the hidden states for the feature streams and the emotion streams were

In this way, the detailed information of learners’ features and facial expressions was integrated by the joint two-stream LSTM model to enhance the temporal dependence between features via attention weights. The AI robot achieved real-time prediction of learners’ states and feature types during the process of scientific communication.
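For readers unfamiliar with the recurrence, the standard LSTM cell update is given below in its generic textbook form. It is not a reconstruction of the equations used in this study. Here $x_t$ is the input at time step $t$, $h_t$ the hidden state, $c_t$ the cell state, $\sigma$ the logistic sigmoid, and $\odot$ element-wise multiplication. The weight and bias symbols are generic notation. In a two-stream setting, it is assumed that each stream applies the same recurrence with its own parameters.

$$
\begin{aligned}
f_t &= \sigma\left(W_f\,[h_{t-1}, x_t] + b_f\right) \\
i_t &= \sigma\left(W_i\,[h_{t-1}, x_t] + b_i\right) \\
\tilde{c}_t &= \tanh\left(W_c\,[h_{t-1}, x_t] + b_c\right) \\
c_t &= f_t \odot c_{t-1} + i_t \odot \tilde{c}_t \\
o_t &= \sigma\left(W_o\,[h_{t-1}, x_t] + b_o\right) \\
h_t &= o_t \odot \tanh(c_t)
\end{aligned}
$$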

Establishment of Experimental Model and Data Analysis

Dataset Construction of Learner Features Based on Facial Analysis

As online learning platforms gradually become the mainstream informal learning method, this study selected Bilibili and YouTube as the preliminary popular science data sources. First, we collected learner comments, likes, and bookmarks on related popular science videos using the request library, focusing on those with the highest numbers of comments and likes. The lxml library was used to parse HTML/XML data. Based on the data we collected, we categorized the science communication topics of interest to learners into three types: science enlightenment, science and technology, and science culture. Second, the obtained 27,939 pieces of text data were preprocessed with the HanLP and jieba segmentation libraries. Duplicate and invalid content in the text was cleaned using the pandas library. After the above steps, 7,272 pieces of valid data were obtained. We then combined those data to build the sentiment dictionary, and calculated learners’ emotion frequency distribution statistics for science enlightenment, science and technology, and science culture.
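The deduplication and invalid-content filtering step can be sketched as follows. This is a minimal stdlib-only illustration that assumes case-insensitive duplicate matching and whitespace normalization. It does not use pandas, HanLP, or jieba, and the function name and sample comments are hypothetical.

```python
import re


def clean_comments(raw_comments):
    """Drop missing, empty, and duplicate comments, preserving order."""
    seen = set()
    cleaned = []
    for text in raw_comments:
        if text is None:
            continue  # missing value
        # Collapse runs of whitespace and trim both ends.
        normalized = re.sub(r"\s+", " ", text).strip()
        if not normalized:
            continue  # invalid (empty) entry
        key = normalized.lower()
        if key in seen:
            continue  # duplicate of an earlier comment
        seen.add(key)
        cleaned.append(normalized)
    return cleaned


# Hypothetical raw comments (not data from the study).
sample = ["Great  video!", None, "great video!", "   ", "Too hard to follow"]
cleaned_sample = clean_comments(sample)
print(cleaned_sample)
```

A production pipeline would apply further, domain-specific filters (for example, removing off-topic entries), which may explain reductions as large as the one reported above.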

Next, the SnowNLP library was used to train a sentiment model for emotion analysis by inputting positive and negative text samples. The distribution of learners’ emotional values was shown in Figure 3a, and the learners’ attitude toward the different types of science communication was also calculated in Figure 3b. Figure 3a featured a histogram that normalized the frequency of learners’ emotional scores on a scale from 0 to 1. Scores closer to 1 meant more positive emotions toward the scientific content, while scores nearer to 0 implied more negative emotions. Figure 3b further evaluated the emotions associated with three types of scientific communication. As depicted in the chart, the “Science and Technology” category achieved the highest average sentiment score at 0.5936, followed by “Science Enlightenment” with a score of 0.5503, and “Science Culture” received the lowest score at 0.5359. These results suggested that learners exhibited varying levels of receptiveness toward different scientific communication topics.

(a) Sentiment value calculation and (b) sentiment scores for different scientific communication categories.

Last, we conducted cluster analysis on learners of science communication. The calculation was performed with unsupervised K-means, and the results are shown in Figure 4a. In Figure 4a, the silhouette score ranged from −1 to 1. The closer the score was to 1, the better the clustering effect. We observed that the best clustering occurred with three clusters, at a silhouette score of 0.89276266. The results of positive and negative emotional evaluations are presented according to the three optimal clusters, as shown in Figure 4b. This illustration revealed the spatial distribution characteristics of emotional preferences. It further suggested that classifying learners into three categories resulted in the best data segmentation. According to Table 1, the three types of scientific communication learners were vigilant learners, challenger learners, and optimistic learners. Different types of learners had different learning characteristics when facing scientific communication content. Among the three types, the challenger learner had the highest level of learning attention, reaching 87%. This type of learner was more interested in science enlightenment content, actively interacted with other types of learners, and showed moderately positive emotional characteristics. Meanwhile, the vigilant learner and the optimistic learner showed interest in science and technology and science culture, with learning activity levels reaching 14% and 63%, respectively. The effective clustering of these learning data gave AI robots preliminary data on the learning characteristics of participating groups in scientific communication.

(a) Learner type clustering and (b) comparison of salient features of each category.

Learner Characteristic Type.

Comparative Analysis of SO-PMI Emotion Polarity Detection Accuracy

Kaggle FER-2013 (Goodfellow et al., 2013) and AffectNet (Mollahosseini et al., 2019) were selected in this study to enhance the accuracy and generalizability of emotion recognition. Kaggle FER-2013, used as the public test set, consisted of 35,887 images covering seven facial expressions: fear, disgust, anger, happiness, neutrality, sadness, and surprise. AffectNet is a significantly larger and more comprehensive dataset, comprising over 420,000 images with diverse emotional expressions. The integration of AffectNet with FER-2013 provided a richer variety of emotional cues and more refined annotations, enabling the model to capture subtle facial expression nuances. This fusion enhanced the model’s performance in recognizing emotions across diverse and complex scenarios, thereby advancing the robustness and applicability of emotion recognition in human-robot interaction contexts. We trained the model on the dataset with a batch size of 64, as set via the “batch_size” parameter, and an initial learning rate of 0.001. This configuration led to higher model accuracy. The training results were obtained after 30 epochs. After evaluation, Table 2 displayed the model’s accuracy in predicting real-time learner emotions. Further analysis through the confusion matrix (Figure 5) revealed that the accuracy for predicting “Surprise” was 92%, the highest observed value, whereas the prediction rate for “Sad” was relatively weak, at 88%. The predictions for “Neutral,” “Happy,” and “Angry” fell in between, with respective rates of 91%, 91%, and 90%. Therefore, in the real-time detection process involved in HRI, this model exhibited relatively accurate emotion recognition capabilities and can be rapidly integrated into robots for detecting individuals’ real-time learning statuses.
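Per-emotion rates of this kind are typically read from the diagonal of a row-normalized confusion matrix. The sketch below shows that calculation under the assumption that rows are true labels and columns are predictions. The counts are toy values chosen for illustration and are not results from this study.

```python
def per_class_recall(matrix, labels):
    """Fraction of each true class that was predicted correctly."""
    recalls = {}
    for i, label in enumerate(labels):
        row_total = sum(matrix[i])
        recalls[label] = matrix[i][i] / row_total if row_total else 0.0
    return recalls


def overall_accuracy(matrix):
    """Correct predictions divided by all predictions."""
    correct = sum(matrix[i][i] for i in range(len(matrix)))
    total = sum(sum(row) for row in matrix)
    return correct / total


# Toy counts: rows are true labels, columns are predicted labels.
labels = ["Happy", "Sad", "Surprise"]
matrix = [
    [8, 1, 1],
    [2, 7, 1],
    [0, 1, 9],
]

recalls = per_class_recall(matrix, labels)
accuracy = overall_accuracy(matrix)
print(recalls, accuracy)
```

Reading the off-diagonal cells of the weakest row shows which emotions are being confused with it, which is often more actionable than the headline accuracy.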

Accuracy Comparison of Different Models.

Confusion matrix of average accuracy.

Results

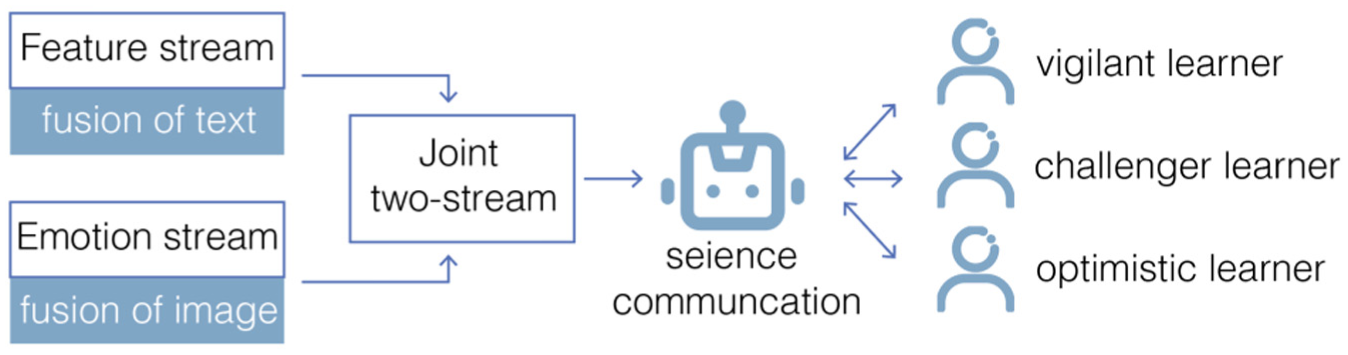

Because our main objective is real-time interaction and state detection between AI robots and informal learners in specific types of learning exchanges, we chose a dual-stream architecture that preserves a feature stream and an emotion stream. An attention-enhanced LSTM network was used to learn the different features, and the two streams were computed separately. For the feature stream, text embedding was used to convert the learner’s features into high-dimensional vectors, which were then fed into an LSTM network. The input was a 236-dimensional vector, the batch size was 54, the initial learning rate was set to 0.001 to obtain the best accuracy, and training ran for 20 epochs. For the emotion stream, the facial expression model trained in the previous step was used to obtain real-time changes in facial emotions, and the resulting features were fed into a second LSTM network. The emotion stream took a 7 × 7 × 512 convolutional feature cube and a 4,096-dimensional feature vector as input, with a learning rate of 0.001 and 20 training epochs. The feature stream and emotion stream were then fused by vector concatenation, and three types of learners were classified at the decision level. As shown in Figure 6, fusing the text-based feature stream with the image-based emotion stream showed promising results. The AI robot recognized different types of learners through the real-time acquisition of multiple features, thereby enabling effective interaction of AI robots in scientific communication.
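The concatenation-based fusion and three-way classification described above can be sketched in plain Python as follows. This is an illustration under stated assumptions, not the study’s implementation: the random vectors stand in for each stream’s learned representation, the sizes simply reuse the input dimensions given in the text because the LSTM hidden sizes are not stated, and the single linear layer with softmax is a hypothetical decision layer with untrained weights.

```python
import math
import random

FEATURE_DIM = 236    # feature-stream vector size mentioned in the text
EMOTION_DIM = 4096   # emotion-stream vector size mentioned in the text
NUM_TYPES = 3        # three learner types


def concat_fuse(feature_vec, emotion_vec):
    """Fuse the two streams by plain vector concatenation."""
    return list(feature_vec) + list(emotion_vec)


def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def classify(fused, weights, biases):
    """Apply one linear layer followed by softmax to the fused vector."""
    scores = [
        sum(w * x for w, x in zip(row, fused)) + b
        for row, b in zip(weights, biases)
    ]
    return softmax(scores)


rng = random.Random(0)
# Random stand-ins for the two stream representations (not real data).
feature_vec = [rng.uniform(-1, 1) for _ in range(FEATURE_DIM)]
emotion_vec = [rng.uniform(-1, 1) for _ in range(EMOTION_DIM)]
fused = concat_fuse(feature_vec, emotion_vec)

# Untrained, randomly initialised decision layer (hypothetical).
weights = [[rng.gauss(0, 0.01) for _ in range(len(fused))] for _ in range(NUM_TYPES)]
biases = [0.0] * NUM_TYPES

probs = classify(fused, weights, biases)
print(len(fused), len(probs))
```

Concatenation keeps both representations intact and leaves it to the decision layer to weigh them, which is why the fused vector has 236 + 4,096 = 4,332 entries in this sketch.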

Joint two-stream LSTM process.

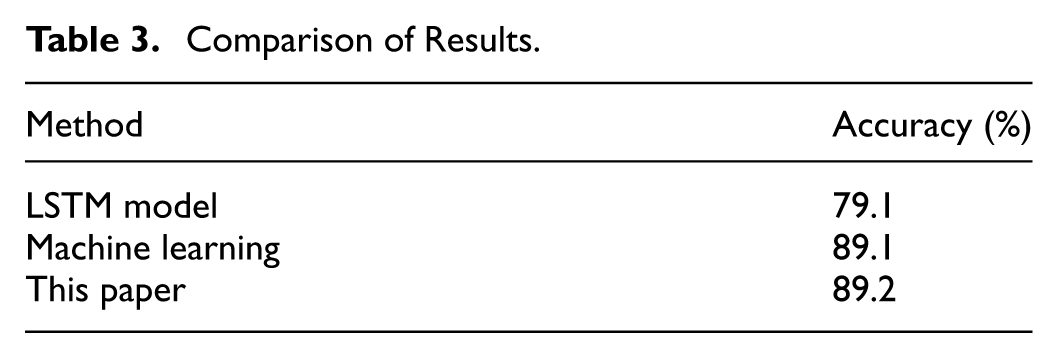

Meanwhile, this study compared the CNN-LSTM-based method with other methods and found that the proposed model achieved a higher accuracy, 89.2% (Table 3). The CNN-LSTM-based method enables AI robots to capture informal learners’ emotional preferences toward scientific communication more accurately and to classify the learning characteristics of each type of learner. Furthermore, AI robots could detect changes in learners’ states in real time and match them to learner types during face-to-face scientific communication with informal learners.

Comparison of Results.

Discussion

This study aims to assist informal learners in bridging the gap between scientific knowledge and personal understanding through the use of AI robots, enabling more precise and tailored dissemination of science education content. To achieve this, a CNN-LSTM-based framework was developed to support real-time detection and classification of learners’ emotional states and behavioral traits while they interact with science videos. By incorporating the FER-2013 and AffectNet public datasets, the system improves its ability to recognize nuanced emotional states. Figure 4a shows the improvement in the model’s emotion recognition accuracy over multiple iterations. Figure 4b shows the improvement in recall, indicating that the emotion recognition system generalizes well to dynamic learner behavior. Notably, it achieved a peak accuracy of 92% in identifying the “surprise” emotion, demonstrating improved robustness over baseline models. Owing to its responsiveness and adaptability, the framework is well suited for deployment in real-time AI robot systems aimed at informal science communication.

Furthermore, in terms of feature extraction, this study jointly models informal learners’ behavioral characteristics and facial expressions by combining text embedding with image convolutional features. The fused two-stream data enables the framework to classify informal learners into distinct types in scientific communication. It performs well in distinguishing learner types such as challengers, optimists, and vigilant learners, with a silhouette coefficient of 0.892, indicating strong clustering effectiveness. In particular, as Figures 3a and 3b show, the learner types differ clearly in emotional response, and challenger learners are more active in expressing multiple emotional categories, reflecting their high participation in the process of scientific communication. Notably, challenger learners had the highest engagement scores in this study. This result is closely related to the cognitive style of this type of non-professional learner: challenger learners tend to be more sensitive to complex or novel information and are more inclined to express opinions, ask questions, or show stronger motivation to participate during interactions. Therefore, the design of AI robots can prioritize emotional feedback recognition and adaptation strategies for challenger learners, thereby improving the efficiency of interaction.

Overall, the results indicate that the proposed framework generalizes well across diverse learner emotional patterns and holds promise for supporting responsive human-robot interaction in science education contexts.

Conclusion

To enhance the precision of interactions in science communication environments, this paper presents a novel AI robot detection framework based on CNN-LSTM. By performing emotion analysis on behavioral data collected from learning platforms, the framework identifies and categorizes learner characteristics. Using CNN methods, we train a facial emotion detection model and integrate the dual features so that AI robots can monitor learners’ real-time learning states during science communication. This enables AI robots to analyze the content preferences of various learner types and optimize the effectiveness of human-robot interaction. The CNN-LSTM-based framework enriches science communication scenarios by allowing AI to capture the interests of informal learners more accurately and align them precisely with science communication resources, thereby significantly enhancing engagement and learning outcomes.

Limitations of the Study

While the model performs strongly in both recognition and classification tasks, future improvements could focus on enhancing multimodal fusion strategies and dynamic adaptation to learners’ evolving states. In addition, the current model relies mainly on public datasets and online video comments, fused with collected samples, and therefore cannot fully capture the real-time emotional states of all non-professional learners in science communication. Subsequent research should expand the sample to cover groups of different ages, cultures, and educational backgrounds, both to improve the universality and generalization ability of the model and to deepen our understanding of how science communication connects with these audiences.

Future Prospects

The CNN-LSTM two-stream emotion recognition framework proposed in this study shows good real-time recognition and interactive feedback in scientific communication scenarios for non-professional learners, but several directions merit further exploration. Future research should broaden its perspective and conduct longitudinal studies that include brain wave changes in non-professional learners to achieve more accurate detection. Corresponding studies could also be carried out at different educational stages to provide targeted scientific communication for different ages and learning needs. In terms of application scenarios, the emotion recognition framework of AI robots could be extended to popular science education in primary or special education settings to achieve broader educational value.

Practical Implications

This study provides a new pathway for integrating artificial intelligence into informal science education, assisting informal learners in acquiring personalized scientific knowledge. It helps to bridge the knowledge gap that often arises from the complexity of scientists’ expertise or the varying knowledge backgrounds of intermediaries such as journalists. By recognizing the learning states, emotional fluctuations, and cognitive biases of informal learners as they engage with science communication content, AI robots can offer effective, personalized explanations and guidance in real time. This not only enhances non-professional learners’ understanding of and interest in scientific information but also improves the efficiency of interaction and promotes educational equity in the science communication process.

Appendix A

The unsupervised K-means was defined as:

where n is the total number of data points.
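A common way to write the K-means objective, stated here as an assumption rather than the exact form used in this study, is the within-cluster sum of squared distances. The symbols $x_i$ (the $i$-th data point), $K$ (the number of clusters), and $\mu_k$ (the centroid of cluster $k$) are introduced for illustration:

$$
J = \sum_{i=1}^{n} \min_{1 \le k \le K} \lVert x_i - \mu_k \rVert^2
$$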

The silhouette coefficient for a single sample, denoted as E, was calculated as:

ReLU was used to pass any positive value of the input neuron x unchanged and to return zero otherwise. The formula was as follows:
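In its standard form, with $f$ as an illustrative name for the activation, ReLU can be written as:

$$
f(x) = \max(0, x)
$$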

For each point E, the average distance to the other points within its own cluster is denoted as v. The average distance from the point to all points in the nearest cluster that it is not a part of is denoted as g.
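With v and g defined this way, the standard silhouette coefficient for a point E is $s(E) = (g - v) / \max(v, g)$, where the name $s$ is introduced here for illustration. The following Python sketch computes it for one point; the helper names, the Euclidean distance, and the toy data are assumptions, not the study’s code.

```python
import math


def mean_distance(point, others):
    """Average Euclidean distance from point to each point in others."""
    return sum(math.dist(point, o) for o in others) / len(others)


def silhouette(point, own_cluster, other_clusters):
    """Silhouette of one point: (g - v) / max(v, g).

    own_cluster holds the other members of the point's cluster (the point
    itself excluded); other_clusters is a list of the remaining clusters.
    """
    if not own_cluster:
        return 0.0  # common convention for a singleton cluster
    v = mean_distance(point, own_cluster)                      # intra-cluster distance
    g = min(mean_distance(point, c) for c in other_clusters)   # nearest other cluster
    denom = max(v, g)
    return (g - v) / denom if denom else 0.0


# Toy 2-D data, purely illustrative.
E = (0.0, 0.0)
own = [(0.0, 1.0), (1.0, 0.0)]
others = [[(4.0, 0.0), (0.0, 4.0)], [(10.0, 10.0)]]

score = silhouette(E, own, others)
print(round(score, 3))
```

Values close to 1 indicate that a point sits much nearer to its own cluster than to the nearest neighboring cluster; the coefficient reported for a clustering is typically the mean of these per-point values.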

Mathematical details of the two-stream LSTM fusion. The model is defined as follows:
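One standard formulation consistent with the concatenation-based fusion described in the Results, given as an assumption rather than the authors’ exact definition and omitting the attention mechanism, is shown below. All symbols are introduced for illustration: $x^{f}_t$ and $x^{e}_t$ are the feature-stream and emotion-stream inputs at step $t$, $h^{f}_t$ and $h^{e}_t$ are the corresponding hidden states, $T$ is the final step, $W$ and $b$ are the parameters of the decision layer, and $\hat{y}$ is the predicted distribution over the three learner types.

$$
h^{f}_t = \mathrm{LSTM}_f\left(x^{f}_t, h^{f}_{t-1}\right), \qquad
h^{e}_t = \mathrm{LSTM}_e\left(x^{e}_t, h^{e}_{t-1}\right)
$$

$$
z = \left[h^{f}_T ; h^{e}_T\right], \qquad
\hat{y} = \mathrm{softmax}(W z + b)
$$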

Acknowledgements

The authors would like to thank all the participants who generously contributed their time and effort to this study. During the preparation of this work, the authors used ChatGPT to partly improve the language.

Ethical Considerations

This study involves the use of publicly available facial expression datasets, FER-2013 and AffectNet, both of which are widely used for academic research and do not contain personally identifiable information. Additionally, facial data collected by the authors were gathered with the informed consent of all participants, in accordance with institutional guidelines. All data were anonymized to ensure privacy and confidentiality.

Consent to Participate

Informed consent to participate in this study was obtained from all participants prior to data collection. Participants gave their consent by completing a paper-and-pencil questionnaire that included an informed consent form explaining the purpose of the study, the voluntary nature of participation, and the assurance of data confidentiality. Completion of the questionnaire was considered an indication of informed consent.

Consent for Publication

This study does not contain any person’s data in any form (including individual details, images, or videos).

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was funded by the Arts Project of the National Social Science Fund of China under grant 25CG240, the Jiangsu Provincial Social Science Fund Project under grant 25YSC007, the Nanjing Institute of Technology Talent Introduction Research Start-up Fund Project under grant YKJ202455, the National Natural Science Foundation of China under grant 52305024, the Natural Science Foundation of Jiangsu Province under grant BK20230928, the Nanjing Institute of Technology Postgraduate Education and Teaching Reform Project under grant 2025YJYJG05, the Nanjing Institute of Technology Higher Education Research Project under grant 2025GJZC38, the Jiangsu University Philosophy and Social Science Research General Project under grant 2025SJYB0311, and the Digital Intelligence Committee for Arts, Physical Education, and Aesthetic Education of the China Educational Technology Association Research Project under grant YTM2025-M02.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

The datasets generated during and/or analyzed during the current study are not publicly available due to ethical considerations.