Abstract

This study investigates high school students’ preferences for English writing feedback from three sources: non-native English teachers, native English teachers, and generative AI (ChatGPT), exploring which source students prefer and identifying factors influencing these preferences. A mixed-methods approach was employed with 20 high school students from an English newspaper club in Korea. Participants received feedback from all three providers based on a standardized rubric. Data collection included semi-structured interviews and Likert-scale surveys. Qualitative data were analyzed through thematic analysis using the constant comparative method, while quantitative data were analyzed using descriptive statistics, Kruskal–Wallis tests, and Dunn’s post hoc analysis. Students rated teacher–ChatGPT collaborative feedback highest, followed by native English teacher feedback, ChatGPT feedback (unaware of AI source), non-native English teacher feedback, and ChatGPT feedback (aware of AI source). Native teachers were valued for linguistic naturalness and cultural insights, non-native teachers for empathetic guidance and shared backgrounds, and ChatGPT for systematic and accessible feedback. Yet concerns emerged regarding AI’s limited contextual sensitivity, emotional depth, and data privacy. Findings highlight the potential of teacher–AI collaboration, suggesting that AI’s technical precision and teachers’ contextual expertise can complement each other. The study extends the concept of “more knowledgeable others” to AI and proposes hybrid feedback models that balance efficiency, reliability, and personalization in EFL contexts.

Introduction

In today’s globalized society, English communication skills are recognized as essential competences, and English writing skills in particular are a central element of international communication, academic achievement, and professional development (Bai et al., 2021; Kawinkoonlasate, 2021). In this context, effective English writing instruction in the high school curriculum provides students with a foundation for success on the global stage. Appropriate feedback is a key element of such instruction and plays a critical role in improving learners’ overall language skills and facilitating second language acquisition (Bitchener & Ferris, 2012; Hyland & Hyland, 2006). This is because positive feedback reinforces learners’ desirable behavior, while negative or corrective feedback raises their awareness of errors (Hattie & Clarke, 2018; Hattie & Timperley, 2007). Nevertheless, because writing ability encompasses a broader range of performance-based elements than other language skills (organization, content, style, grammar, vocabulary, and mechanics), providing feedback on writing is particularly complex and requires careful attention (Ferris & Hedgcock, 2023; Hyland, 2019; Hyland & Hyland, 2019).

Accordingly, researchers have categorized feedback types and examined their effects to provide more effective feedback to learners (Chan & Luo, 2022; Hyland & Hyland, 2019; Kluger & DeNisi, 1996). As a representative example, feedback has been classified into self-feedback, peer feedback, and teacher feedback based on its source (Diab, 2016; Tian & Zhou, 2020). Among these, teacher feedback has been considered important in the learning process due to its authoritative insights (Carless et al., 2023; Cheng & Liu, 2022; Tian & Zhou, 2020), but it often faces limitations in scalability, real-time delivery, and personalization due to constraints on teachers’ time and resources (Ferguson, 2011; Ferris, 2014; Yu et al., 2021). Advances in digital technology have brought innovations to education that may help address these limitations (Haleem et al., 2022).

Particularly, technology-assisted feedback has been emerging as a promising alternative to overcome the limitations of traditional feedback by providing immediate, personalized, and actionable insights through digital tools, complementing the interaction between learners and teachers, and enhancing the feedback process while maintaining the fundamental teacher-student dynamic (Delante, 2017; Kim, 2018; Zou et al., 2023). Recently, generative AI (Gen-AI) programs such as ChatGPT have garnered attention as feedback providers by simulating interactive dialogue, providing context-sensitive explanations, and delivering real-time feedback tailored to individual learners’ needs. They not only complement traditional teacher feedback but also open up new possibilities for educational dialogue and guidance (Bahroun et al., 2023; Jeon & Lee, 2023; Steiss et al., 2024; Teng, 2025).

In this way, the modern educational environment emphasizes the importance of customized education tailored to individual learners’ needs and preferences, and seeks to realize this through diverse feedback sources. Particularly, understanding learners’ feedback preferences is critical for personalizing the learning process and enhancing learning efficiency (Pardo et al., 2019). This understanding helps students more actively accept the feedback they receive and become more immersed in learning (Carless & Boud, 2018). Therefore, providing feedback grounded in students’ preferences is an essential factor that should be considered for improving educational outcomes (Zeevy-Solovey, 2024).

Nevertheless, while existing research has extensively explored the effects of various feedback types and sources (Bitchener et al., 2005; Espasa et al., 2022; Nelson & Schunn, 2009; Niu et al., 2021; Tam, 2025), there is a scarcity of studies that simultaneously compare multiple feedback providers—such as non-native English teachers, native English teachers, and Gen-AI (ChatGPT)—in the context of high school English writing education, and that deeply explore high school students’ preferences for these three feedback sources and how these preferences influence their learning experiences.

To address this research gap, this study selected these three feedback providers as comparison targets. This is because each provider represents a core feedback source that learners actually encounter in contemporary English education environments, and has been discussed in prior studies for its unique educational values and limitations (Cheng & Zhang, 2021; Fitria, 2023; Rao & Yu, 2021). In this study, the term “feedback preference” refers to students’ subjective value judgments and emotional responses toward specific feedback providers, and is defined as a concept that includes the extent to which they perceive the feedback as useful, as well as the likelihood that they will actually incorporate the feedback into their learning.

Based on this foundation, this study extends theoretical perspectives on feedback by integrating them with the latest understandings of AI-mediated pedagogy. By exploring students’ perceptions of AI’s impact on the feedback process, it will enrich theoretical discussions about the educational potential of AI-based tools and their effective integration. On a practical level, this study is expected to support English educators in designing learner-centered strategies by offering insights into students’ feedback preferences. This would enable the provision of feedback that more effectively reflects individual learners’ needs and preferences, contributing to qualitative improvements in educational outcomes.

Accordingly, this study investigates which feedback source learners prefer and how such preferences influence their learning experiences by comparing non-native English teachers, native English teachers, and ChatGPT. By simultaneously comparing these three feedback sources, this study aims to understand more comprehensively the patterns of feedback preferences in high school students’ English writing learning and, on that basis, to derive practical implications for designing effective English writing instruction. The research questions are as follows:

RQ1. Which feedback providers do high school students prefer in English writing learning?

RQ2. What specific factors influence high school students’ feedback preferences?

Literature Review

The Role and Types of Writing Feedback in Second Language Learning

Effective feedback plays a decisive role in enhancing learners’ language proficiency (Brookhart, 2017; Hyland & Hyland, 2019). According to Vygotsky’s sociocultural theory, effective feedback is crucial in facilitating the development of learners’ language skills within their zone of proximal development (Ellis, 2009; Vygotsky, 1978). In particular, second language writing is a complex process that requires the integration of various elements such as organization, content, style, grammar, vocabulary, and mechanical aspects (Ferris & Hedgcock, 2023). Therefore, appropriate feedback is essential to help learners recognize their mistakes and reinforce desirable behaviors (Hattie & Gan, 2011; Metcalfe, 2017).

In other words, writing feedback is an important formative assessment tool that provides immediate evaluation of the learners’ work (Lee, 2017; Nicol & Macfarlane-Dick, 2006), reinforcing their strengths and helping them recognize errors (Ferris, 2011). It is also a significant educational tool that provides specific guidance on both the learner’s writing process and output (Hyland, 2019). Writing feedback is generally classified into corrective feedback and formative feedback based on its purpose and effect (Ellis, 2009; Shute, 2008), and classified into self-feedback, peer feedback, and teacher feedback depending on the feedback provider (Diab, 2016; Tian & Zhou, 2020). Each type of feedback has its own strengths and weaknesses, and contributes to the development of learners’ writing skills in different ways (Chandler, 2003).

Characteristics of Teacher Feedback

Among them, teacher feedback has traditionally been considered an essential source of feedback because teachers’ expertise enables them to accurately diagnose students’ errors and provide specific directions for improvement (Al-Bashir et al., 2016; Ferris, 2014). However, individual teachers face time and resource constraints in providing specific, individualized feedback to each student (Henderson et al., 2019), and maintaining consistency in feedback can be challenging (Carless & Boud, 2018). Additionally, teacher feedback can vary significantly depending on teacher characteristics, including pedagogical training, teaching experience, feedback style, and linguistic background, such as whether the teacher is a native or non-native English speaker (Li & Vuono, 2019). Among these, studies on teachers’ linguistic backgrounds demonstrate the complementary strengths of different feedback sources.

For instance, feedback from native English teachers is valued for presenting exemplary language use and effectively conveying the nuances and complexities of the language, which help learners develop more sophisticated language skills (Cheng & Zhang, 2021). In contrast, feedback from non-native English teachers provides empathy and insight into the language learning process, with the advantage of understanding learners’ errors and offering personalized guidance based on shared linguistic and cultural backgrounds (Rao & Yu, 2021). These perspectives suggest that both native and non-native English teachers bring unique and valuable contributions to the feedback process, each supporting students’ language development in distinctive ways.

The Emergence of AI-Based Feedback

As a complement to these variances and limitations of traditional feedback, the advancement of digital technologies, particularly AI, is presenting new possibilities in the field of second language writing education (Huang et al., 2023; Tsai et al., 2024). Gen-AI based tools such as ChatGPT have the potential to support the development of learners’ writing skills by providing immediate and personalized feedback while efficiently managing time and resources through large-scale data analysis (Baidoo-Anu & Ansah, 2023; Fitria, 2023; Kikalishvili, 2023; Yan, 2023). In line with this trend, recent studies have shown that learners tend to highly value the immediacy and personalized features of AI-generated feedback, which can enhance learners’ motivation and engagement compared to traditional feedback methods (Song & Song, 2023).

However, recent studies point out that current AI systems are still subject to issues such as hallucination (plausible-sounding but incorrect or nonsensical content), lack of contextual awareness, and ethical limitations, which may restrict their ability to accurately interpret student errors or provide consistently reliable feedback (Banihashem et al., 2024; Su et al., 2023). Additionally, the lack of emotional interaction required during the learning process has also been noted (Wu et al., 2021). Some studies acknowledge the continuous improvement of AI capabilities but argue that professional teacher feedback is still necessary (Kong & Yang, 2024).

Research Gap and the Need for This Study

In this way, while research comparing the advantages and disadvantages of AI feedback and teacher feedback has been actively conducted, it is important to recognize that different feedback sources not only influence the content and quality of feedback but can also significantly shape how learners perceive and prefer the feedback, which in turn directly affects learners’ feedback acceptance and learning outcomes. Nevertheless, existing research has examined the characteristics of teacher feedback and the effects of AI feedback separately, or compared them only partially, with few studies directly and simultaneously comparing learners’ preferences for feedback from native English teachers, non-native English teachers, and AI. Moreover, most studies on feedback preference in second language writing have been conducted with students in higher education (Cheng & Zhang, 2021; Kılıçkaya, 2022; Zhang et al., 2021), and research targeting high school students remains scarce.

Accordingly, this study was designed to fill this research gap by comparatively evaluating the strengths and weaknesses of feedback provided by non-native English teachers, native English teachers, and Gen-AI (ChatGPT), and to contribute to improving existing educational methods and establishing effective feedback provision strategies through an in-depth comparative analysis of educational outcomes based on high school learners’ feedback preferences.

Theoretical Framework

This study is grounded in social constructivist ontology, viewing language learning not as a simple individual cognitive process, but as a meaning-constructing process within social interaction and cultural context (Lantolf & Thorne, 2006; Vygotsky, 1978). In particular, the zone of proximal development (ZPD) refers to the range of tasks that learners cannot perform alone but can achieve with the assistance of a more knowledgeable other (MKO), and feedback serves as a key mediating tool that facilitates learning within the ZPD (Aljaafreh & Lantolf, 1994). From this perspective, this study regards non-native English teachers, native English teachers, and ChatGPT as different forms of MKO, considering them as interactive partners who can contribute to language development by supporting learners’ writing growth.

Furthermore, this study adopts a mixed methods design following a pragmatic approach and applies a phenomenological perspective (Creswell & Plano Clark, 2017). The phenomenological perspective enables in-depth exploration of how learners experience different feedback sources and what meanings they assign to those experiences. By complementarily combining objective measurement through surveys and statistical analysis with subjective experience interpretation through interviews and qualitative analysis, this approach allows for a comprehensive understanding of the complex patterns of feedback preferences that cannot be fully captured through a single method.

Methodology

Research Design

This study employed a mixed methods approach to explore in depth high school students’ perceptions and preferences regarding English writing feedback. Within the quantitative strand, students’ preferences for different feedback sources were measured and statistically compared. Within the qualitative strand, a phenomenological approach was adopted to understand participants’ subjective experiences and interpretations by investigating their perceptions of different feedback sources (Cilesiz, 2011).

To construct a more systematic research procedure and ensure reliability, this study referenced and adapted the key steps from prior studies (Diab, 2015; Zacharias, 2007). These steps included: (1) collecting participants’ texts, (2) preparing feedback from multiple sources, (3) providing the feedback to participants, and (4) investigating participants’ preferences through interviews or surveys.

Research Participants

The research participants consisted of 20 high school students (12 in the 2nd year, 8 in the 3rd year) who were engaged in an English newspaper-making club activity at a high school in Korea. They were recruited through convenience sampling. All participants had been involved in the English newspaper club for at least 1 to 2 years, and demonstrated high interest and engagement in English learning. Their English academic achievement placed them in the top 11% to 20% nationally, corresponding to the B2–C1 level of the Common European Framework of Reference for Languages (CEFR).

Research Procedure

In mid-April 2024, all participants submitted two drafts of English newspaper articles (see Supplemental Material 1). Subsequently, participants received feedback from three different sources (non-native teacher, native teacher, and ChatGPT) for each piece of their manuscripts. Feedback from all three sources was provided based on the same criteria from Ferris and Hedgcock’s (2023) ESL composition profile analytical rubric (see Supplemental Material 2), ensuring consistency and comparability across feedback types.

The order of feedback provision was intentionally arranged as follows: non-native English teacher → native English teacher → ChatGPT. This sequence was designed to reflect the educational environment students typically experience and to explore learners’ perceptions of and responses to AI feedback in a natural and realistic context, using human teacher feedback as a reference point. The non-native and native English teachers provided both oral and written feedback in face-to-face meetings with the students, while ChatGPT feedback was provided in written form only (see Supplemental Material 3). All feedback was utilized as positive data that could help learners specifically understand and further develop their writing outcomes.

A crucial aspect of the procedure involved methodological deception regarding the AI feedback. Initially, the third feedback was introduced as feedback from “another teacher” rather than disclosing that it was generated by AI, in order to observe students’ unbiased reactions. Subsequently, it was revealed that the feedback had actually been provided by ChatGPT, and once again the students’ perceptions and reactions were investigated. This approach allowed for the examination of both students’ unbiased responses to AI feedback and their perceptions after being informed of its source. In this context, “awareness” was operationally defined as the state of recognizing that the feedback provider is AI, and differences in students’ perceptions regarding changes in reliability, usefulness, and application intention before and after awareness of AI feedback were compared.

Information on Feedback Providers

In this study, the non-native English teacher feedback was provided by a Korean English teacher who holds a PhD in English Education, with 10 years of experience teaching English in high schools, 8 years of experience teaching English writing, and 5 years of experience supervising the English newspaper club. The native English teacher feedback was provided by an American English teacher pursuing a master’s degree in TESOL with a major in English literature. This teacher holds a Teaching English as a Foreign Language (TEFL) certificate and has extensive experience with 10 years of English teaching, 8 years of English writing instruction, and 8 years of guiding the English newspaper club. The AI feedback was provided using ChatGPT-4 developed by OpenAI, and to generate feedback consistent with human teacher feedback standards, Ferris and Hedgcock’s (2023) ESL composition profile analytical rubric was pre-entered into the prompts (see Supplemental Material 4).

Data Collection

Data collection included two main methods: semi-structured interviews and a Likert-scale survey. Semi-structured interviews were conducted to examine students’ perceptions of and reactions to feedback from different sources (see Appendix 1). Each interview lasted approximately 60 to 70 min, and follow-up interviews were conducted with students whose responses required further discussion during the analysis process. During the interviews, students were asked to express their degree of preference for feedback from different sources using a 7-point Likert scale (1 = strongly disagree, 7 = strongly agree) across the following five categories: (1) non-native English teacher feedback, (2) native English teacher feedback, (3) ChatGPT feedback (unaware of AI source), (4) ChatGPT feedback (aware of AI source), and (5) Teacher–ChatGPT collaborative feedback. All interviews were recorded with participants’ consent and subsequently transcribed.

Data Analysis

For the qualitative data, the interview data were systematically analyzed through transcription and coding. The coding was based on Corbin and Strauss’s (2014) constant comparative method. The researcher repeatedly read and analyzed the data and conceptualized meaningful statements by naming them. The coding process proceeded in three stages: open coding (extracting and categorizing concepts from meaningful statements), axial coding (integrating similar concepts by comparing similarities and differences among extracted concepts), and selective coding (deriving and refining the core categories through comprehensive analysis and integration). The coding was conducted by the primary researcher. To enhance trustworthiness and credibility, member checking was employed by sharing the coding results with the participants for verification, and the module convener (supervising professor) reviewed the coding process and results to ensure validity.

For the quantitative data, the statistical analysis program SPSS version 28.0 was employed. First, descriptive statistics (mean, standard deviation, total score) were calculated for each feedback source based on the 7-point Likert scale scores. Then, the Kruskal–Wallis test, a non-parametric statistical method that does not assume normal distribution, was conducted to analyze differences between groups. Dunn’s test was subsequently applied as a post-hoc analysis to identify specific differences across groups.
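The descriptive statistics and the Kruskal–Wallis step described above can be sketched in a few lines of Python. The study itself used SPSS 28.0, so the sketch below is only an illustrative standard-library re-implementation under stated assumptions: the ratings in `ratings` are hypothetical placeholder values (not the study's data), and the function names `average_ranks` and `kruskal_wallis` are introduced here purely for illustration.

```python
from collections import Counter
from itertools import chain
from statistics import mean, stdev


def average_ranks(values):
    """Rank values 1..N; tied values share the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j to the end of the current run of tied values.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        tied_rank = (i + j) / 2 + 1  # mean of 1-based positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = tied_rank
        i = j + 1
    return ranks


def kruskal_wallis(groups):
    """Return (H, df) for a list of score lists, with the tie correction."""
    pooled = list(chain.from_iterable(groups))
    n = len(pooled)
    ranks = average_ranks(pooled)
    total, start = 0.0, 0
    for g in groups:
        rank_sum = sum(ranks[start:start + len(g)])
        total += rank_sum ** 2 / len(g)
        start += len(g)
    h = 12 / (n * (n + 1)) * total - 3 * (n + 1)
    # Likert data contain many ties, so H is divided by the tie correction.
    ties = sum(t ** 3 - t for t in Counter(pooled).values())
    correction = 1 - ties / (n ** 3 - n)
    return h / correction, len(groups) - 1


# Hypothetical 7-point ratings (NOT the study's data), one list per source.
ratings = {
    "non-native teacher": [4, 5, 4, 3, 5],
    "native teacher": [6, 5, 6, 6, 5],
    "teacher-ChatGPT collaboration": [7, 7, 6, 7, 7],
}

for source, scores in ratings.items():
    print(f"{source}: M={mean(scores):.2f}, SD={stdev(scores):.2f}, total={sum(scores)}")

h, df = kruskal_wallis(list(ratings.values()))
print(f"Kruskal-Wallis H={h:.2f}, df={df}")
```

Because the test works on ranks rather than raw scores, it does not require the ratings to be normally distributed, which is the property the paragraph above relies on.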

Ethical Considerations

This study prioritized the rights and welfare of participants. Prior to participation, written consent was obtained; the consent form clearly explained the research purpose, procedures, assurance of anonymity, the principle of voluntary participation, and the right to withdraw at any stage. Additionally, participants were assured that their personal information would be used only for research purposes. All information that could identify participants was anonymized, and all data were to be securely stored and disposed of after the completion of the study.

Notably, this study employed methodological deception by initially introducing ChatGPT feedback as feedback from “another teacher” in order to explore students’ unbiased and natural reactions to AI feedback. This procedure was deemed essential to achieving the research objectives and was conducted after official review and approval from the author’s University Ethics Committee, as well as with permission from the institution where the research took place. Immediately after the feedback provision was completed, all participants were provided with a debriefing procedure that detailed the nature and reasons for the deception, and disclosed the true purpose of the research to ensure participants’ full understanding. Additionally, follow-up guidance was provided to adequately address any questions or concerns participants might have.

Findings

Preference Comparison (RQ1—Quantitative Results)

To address the first research question, “Which feedback providers do high school students prefer in English writing learning?” a survey was conducted during the interviews using a 7-point Likert scale (1 = strongly disagree, 7 = strongly agree). Students rated their preferences for five feedback sources: (1) non-native English teacher, (2) native English teacher, (3) ChatGPT feedback (unaware of AI source), (4) ChatGPT feedback (aware of AI source), and (5) Teacher–ChatGPT collaborative feedback. The results are as follows.

First, according to descriptive statistics, students preferred the Teacher–ChatGPT collaborative feedback the most, followed by native English teacher feedback, ChatGPT feedback (unaware of AI source), non-native English teacher feedback, and finally ChatGPT feedback (aware of AI source; see Table 1).

Results of Descriptive Statistics.

To determine whether the differences in preferences among feedback types were statistically significant, a Kruskal–Wallis test was conducted. The test statistic was 51.63 with a p-value of less than .001 at 4 degrees of freedom, confirming significant differences in ranks between groups. The relatively large test statistic (51.63) further indicated a substantial difference in score distributions among feedback sources (see Table 2).

Result of Kruskal–Wallis Test.

Note. *** indicates p < .001.
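As a quick arithmetic check on the reported Kruskal–Wallis result: under the usual large-sample approximation, the test statistic is referred to a chi-square distribution with k − 1 degrees of freedom (here 5 − 1 = 4), and for even degrees of freedom the upper-tail probability has a simple closed form. The sketch below assumes that this chi-square approximation is what the software reported; the function name `chi2_sf_even_df` is illustrative only.

```python
from math import exp, factorial


def chi2_sf_even_df(x, df):
    """Upper-tail probability P(X > x) for a chi-square variable with even df.

    Uses the closed form exp(-x/2) * sum_{i < df/2} (x/2)**i / i!.
    """
    if df <= 0 or df % 2 != 0:
        raise ValueError("closed form shown here only covers even, positive df")
    half = x / 2
    return exp(-half) * sum(half ** i / factorial(i) for i in range(df // 2))


# Values reported in the text: H = 51.63 with 4 degrees of freedom.
p_value = chi2_sf_even_df(51.63, 4)
print(f"p = {p_value:.2e}")  # on the order of 1e-10
print(p_value < 0.001)       # consistent with the reported p < .001
```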

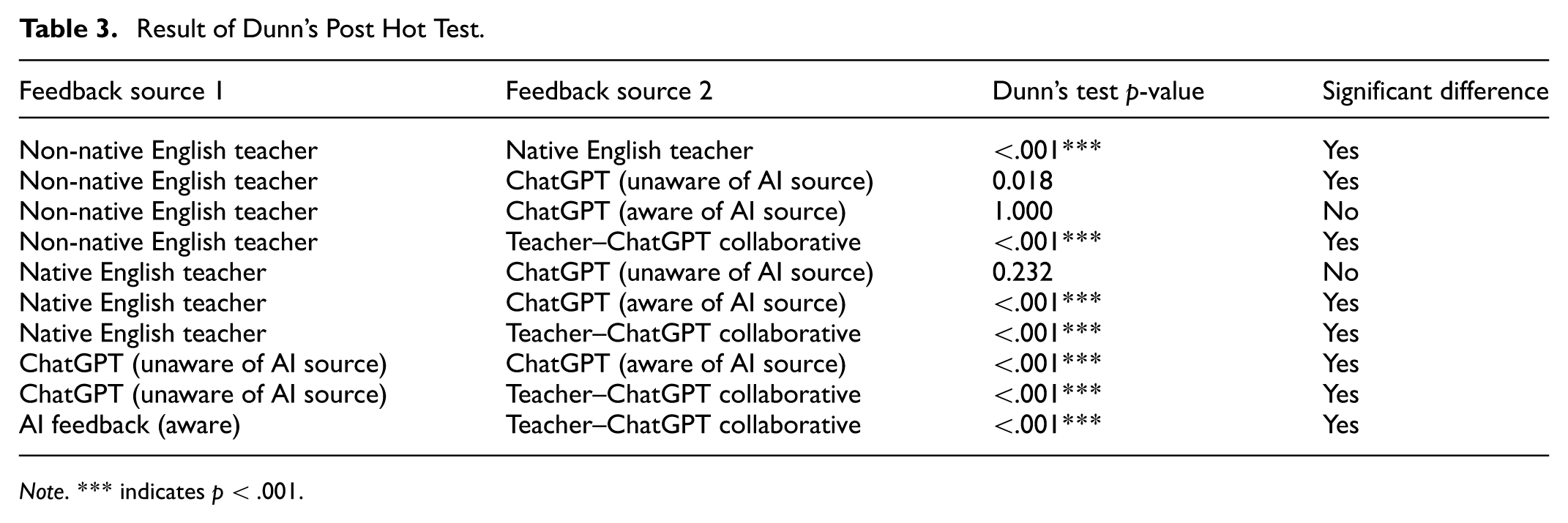

To identify which specific groups had significant differences, Dunn’s post hoc test was performed. Most group pairs showed significant differences in preferences; however, no statistically significant differences were found for two pairs: non-native English teacher feedback versus ChatGPT feedback (aware of AI source), and native English teacher feedback versus ChatGPT feedback (unaware of AI source; see Table 3).

Result of Dunn’s Post Hoc Test.

Note. *** indicates p < .001.
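For readers who want to see how the pairwise comparisons work, Dunn's test compares the mean ranks of each pair of groups using a normal approximation. The following is a minimal standard-library sketch, not the SPSS procedure used in the study. The function names, the hypothetical ratings, and the optional Bonferroni adjustment are all assumptions made for illustration; the text does not state whether or how the p-values were adjusted.

```python
from collections import Counter
from itertools import chain, combinations
from math import erfc, sqrt


def average_ranks(values):
    """Rank values 1..N; tied values share the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return ranks


def dunn_test(groups, bonferroni=True):
    """Return {(i, j): (z, p)} for every pair of groups (two-sided p)."""
    pooled = list(chain.from_iterable(groups))
    n = len(pooled)
    ranks = average_ranks(pooled)
    # Mean rank of each group within the pooled ranking.
    mean_ranks, start = [], 0
    for g in groups:
        mean_ranks.append(sum(ranks[start:start + len(g)]) / len(g))
        start += len(g)
    # Rank variance term, reduced when tied scores are present.
    ties = sum(t ** 3 - t for t in Counter(pooled).values())
    variance = n * (n + 1) / 12 - ties / (12 * (n - 1))
    pairs = list(combinations(range(len(groups)), 2))
    results = {}
    for i, j in pairs:
        se = sqrt(variance * (1 / len(groups[i]) + 1 / len(groups[j])))
        z = (mean_ranks[i] - mean_ranks[j]) / se
        p = erfc(abs(z) / sqrt(2))  # two-sided normal tail probability
        if bonferroni:
            # Assumed adjustment: multiply by the number of comparisons.
            p = min(1.0, p * len(pairs))
        results[(i, j)] = (z, p)
    return results


# Hypothetical 7-point ratings (NOT the study's data), one list per source.
example = [[4, 5, 4, 3, 5], [6, 5, 6, 6, 5], [7, 7, 6, 7, 7]]
for (i, j), (z, p) in dunn_test(example).items():
    print(f"group {i} vs group {j}: z={z:.2f}, adjusted p={p:.4f}")
```

A pair is flagged as significantly different when its p-value falls below the chosen threshold; pairs with similar mean ranks, such as the two non-significant pairs reported above, produce z values close to zero.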

Particularly noteworthy is the absence of a significant difference between non-native English teacher feedback and ChatGPT feedback (aware of AI source). This does not necessarily indicate that students perceived the two feedback types as equal in quality. Rather, the finding may reflect students’ complex perceptions after becoming aware of AI as the feedback provider: they acknowledged its strengths while simultaneously recognizing its limitations across various aspects.

Specific Factors Influencing Preferences (RQ2—Qualitative Results)

To address the second research question, “What specific factors influence high school students’ feedback preferences?” qualitative data collected through semi-structured interviews were analyzed. During the analysis process, student responses were classified into five major categories: (1) educational approach and methodology, (2) linguistic feedback characteristics, (3) relational and cultural aspects, (4) accessibility and usability, and (5) limitations and challenges. For systematic comparison across feedback sources, the results are presented in the following order: (1) non-native English teacher, (2) native English teacher, (3) ChatGPT feedback (unaware of AI source), (4) ChatGPT feedback (aware of AI source), and (5) Teacher–ChatGPT collaborative feedback. Not all five categories are discussed for each feedback source; instead, the analysis highlights the most prominent characteristics identified in the qualitative data. Additionally, the number of students who mentioned each theme is provided in parentheses to indicate the significance of each topic.

Feedback from the Non-Native English Teacher

Educational Approach and Methodology

In terms of educational approach and methodology, the non-native English teacher focused on grammar and structure, pointing out students’ grammatical errors and explaining correct sentence structures in order to enhance students’ language skills. The non-native English teacher also provided advice on topic selection and content organization to help students search for information effectively and structure their content. In particular, to help students understand the genre of newspaper article writing, the teacher explained the concept of genre, specific article types, and formatting methods so that students could grasp the technical aspects of article writing (mentioned by 12 students).

It was my first time writing for an English newspaper, so it helped when the (non-native English) teacher explained what a newspaper article is and how to structure the content based on the topic. [Participant G, 1st interview, April 22, 2024, translated by researcher]

Additionally, the non-native English teacher respected students’ autonomy in the writing process by allowing maximum freedom throughout, which was highly beneficial. However, students expressed disappointment that the educational approach and methods centered too much on explaining grammatical errors and sentence structure, and there was relatively insufficient feedback on essential elements and formats, such as how to write newspaper articles, the overall context of articles, and topic selection (noted by 6 students).

It was good that the (non-native) English teacher corrected grammatical errors and sentence structures, but there was a lack of feedback on the overall context or how to write in terms of the topic and how to structure the writing. [Participant A, 1st interview, April 16, 2024, translated by researcher]

Linguistic Feedback Characteristics

By using Korean, the language shared with the students, the non-native English teacher facilitated smooth communication. This greatly helped create an environment where students felt more comfortable communicating with the teacher and could focus solely on the feedback content. However, because the Korean school curriculum offers little instruction in writing, and in news article writing in particular, students experienced confusion and difficulties at the beginning of the activity. Despite the teacher’s brief explanations, this hindered students from establishing a clear direction and smoothly carrying out the overall activity (highlighted by 4 students).

We had never properly learned how to write compositions at school, especially for news articles. I didn’t know how to approach selecting a topic or structuring the writing… The (non-native) English teacher provided some explanations but only roughly, which was unsatisfying. And having to write the article in English was very burdensome… [Participant C, 1st interview, April 18, 2024, translated by researcher]

Relational and Cultural Aspects

The non-native teacher provided 1:1 personalized feedback considering individual students’ characteristics and career paths. This individualized approach was based on a close relationship and mutual understanding between students and the teacher. By providing tailored advice considering each student’s future plans and interests, the teacher guided students to explore and develop content related to their future goals more deeply. This was a great advantage not only for the students’ writing but also for their overall career exploration (reported by 7 students).

Being close with the (non-native English) teacher, who was interested in me and knew my career goals, allowed me to discuss article topics while also getting career advice related to the content… [Participant D, 2nd interview, April 26, 2024, translated by researcher]

Feedback from the Native English Teacher

Educational Approach and Methodology

The native English teacher introduced example-based learning, an approach that enhanced students’ ability to think critically and self-correct by observing good and bad examples. In addition, students were encouraged to participate in the revision process by collaboratively correcting mistakes made during writing and repeatedly reviewing them. Furthermore, by systematically explaining how to write a news article, how an article is organized, and how its content develops, the teacher helped students understand the structure and content of the text more deeply and ultimately improve their fundamental writing skills (mentioned by 5 students).

It was a good opportunity when the (native English) teacher showed us examples of well-written and poorly written articles and systematically explained the structure of news articles. So, I could understand not only individual sentences but also the overall structure of the text, and learn how to develop the content more logically. [Participant F, 1st interview, April 16, 2024, translated by researcher]

Linguistic Feedback Characteristics

The native English teacher focused on instilling naturalness in students’ language use. This was primarily achieved through proofreading: refining expressions so that they sounded natural in English, correcting grammatical errors, and smoothing awkward sentences. Additionally, the native English teacher helped students gain a deeper understanding of the English language by explaining the cultural background of English-speaking countries, clarifying linguistic nuances, and correcting contextual errors (identified by 10 students).

I was surprised when the (native English) teacher pointed out an awkward expression that I thought was correct. The teacher explained that while it sounds natural in Korea, it could be understood very differently in the United States, and even went on to explain the cultural differences behind it. [Participant B, 1st interview, April 18, 2024, translated by researcher]

Relational and Cultural Aspects

The presence of a native English teacher was a novel experience for the students. In an environment where opportunities to converse with native English speakers were very limited, students were intrigued and engaged by the very experience of conversing with one. Moreover, the native English teacher, who had a diverse cultural background, introduced characteristics of English-speaking cultures based on personal experience. This greatly assisted students in understanding other cultures when writing, comparing them with their own culture, and reflecting the differences in their writing. These cultural exchanges played an important role in enhancing students’ linguistic abilities and intercultural competence (noted by 10 students).

It’s not common to have classes with native English teachers. So having the opportunity to converse with a native English teacher during the club activity is a huge advantage. [Participant H, 1st interview, April 22, 2024, translated by researcher]

The (native English) teacher explained how people in English-speaking cultures perceive my topic. It was good to compare my culture with his (English-speaking) cultures, and increase my cultural awareness. [Participant G, 1st interview, April 16, 2024, translated by researcher]

Limitations and Challenges

The language barrier posed the biggest challenge in communication between the native English teacher and students. Communication difficulties often made it hard for students to receive feedback, and meaning was sometimes misunderstood. Interpretation was frequently required to address these problems, suggesting the need for continuous efforts to facilitate communication and highlighting how crucial smooth communication is for effective feedback. Additionally, students regretted that feedback from the native English teacher was provided only once or twice per semester, which they considered insufficient. They emphasized that their writing skills could have improved further with continuous, periodic feedback (reported by 9 students).

We received native English teacher feedback just once or twice per semester. But if he could come once a month or more periodically, I think the advantages of feedback from a native English teacher would be maximized. [Participant A, 2nd interview, April 26, 2024, translated by researcher]

ChatGPT Feedback (Students Were Unaware that the Provider was ChatGPT)

Accessibility and Usability

The ChatGPT feedback was visually well-organized, providing excellent visual accessibility, and the specific revision instructions helped students easily identify and clearly understand problematic areas in their writing. These characteristics not only assisted students in thoroughly reviewing and revising their work but also enabled them to clearly recognize strengths and weaknesses of their writing, ultimately facilitating overall improvement of learners’ writing skills by allowing them to effectively address areas requiring improvement (highlighted by 7 students).

Sometimes I’m not sure what to even ask about, or don’t realize what I got wrong. But receiving such detailed, systematic feedback could make me realize what areas are lacking… [Participant B, 1st interview, April 17, 2024, translated by researcher]

As ChatGPT feedback was provided in document form, students could continuously refer to and repeatedly utilize it, supporting continuity in the learning process. This implies that ChatGPT feedback can promote sustained learning and growth beyond mere one-time information provision, contributing to achieving long-term learning goals. Furthermore, students could review and understand the feedback at their own pace, without being bound by specific times or situations. This provides students with a learning environment where they can internalize corrections through repeated exposure to written feedback and develop a deep understanding in the process (identified by 4 students).

When getting 1:1 feedback from the native English teacher, the feedback can end in that moment. But with written materials, I can refer to them later when writing and think “Oh, this was an issue!” and correct them… [Participant F, 1st interview, April 16, 2024, translated by researcher]

With specific instructions provided along with ChatGPT feedback, students had the opportunity to self-manage their learning process, think independently, and find ways to improve their writing on their own. In this process, students could actively participate in learning while developing critical thinking, problem-solving skills, and a sense of responsibility for their own learning—this particularly seemed advantageous for students who showed stronger engagement with their learning. Conversely, ChatGPT feedback alone may not be sufficient for some students, suggesting that its effective use may depend on learners’ self-directed engagement and motivation, as well as appropriate teacher support (mentioned by 4 students).

However, if feedback is given in writing, highly motivated students might be fine, but less motivated ones might stop at the feedback stage and not proceed to revisions. [Participant E, 1st interview, April 16, 2024, translated by researcher]

One of the biggest advantages of face-to-face feedback is that learning content is remembered longer through immediate correction and interaction. Students can ask questions directly and receive answers immediately during the feedback process (reported by 6 students).

When getting face-to-face feedback from the teacher, those parts are more memorable. [Participant H, 1st interview, April 22, 2024, translated by researcher]

Limitations and Challenges

ChatGPT feedback limits such direct communication opportunities, making it difficult for students to resolve questions immediately and leaving them to struggle with interpretation, especially for complex concepts or content that may be misunderstood. When receiving feedback solely in written form, students may fail to grasp its intention accurately and have difficulty reflecting it appropriately in their revisions. Nevertheless, ChatGPT feedback has the advantage of being well organized, giving students the opportunity to review and reflect on the feedback content carefully (noted by 6 students).

When receiving written feedback on complex or difficult content, it was challenging to revise on my own without detailed in-person guidance from the teacher. [Participant J, 1st interview, April 16, 2024, translated by researcher]

ChatGPT Feedback (Students Were Aware that the Provider was ChatGPT)

Linguistic Feedback Characteristics

While ChatGPT feedback offered many advantages as a technical tool, it could not replace the need for direct educational feedback from a human teacher. Students felt that ChatGPT feedback lacked the ability to capture human nuances and emotional contexts, indicating that AI could not achieve the depth and quality of sensitive feedback that human teachers can provide. Additionally, students emphasized the greater importance of direct feedback from human teachers in an educational context. Although AI can effectively provide some technical feedback, since the ultimate readers of students’ articles are human, insights and interactions from human teachers are considered crucial elements determining the quality of the learning experience (mentioned by 14 students).

The final readers of a news article are human, right? My feelings and opinions could be included, which I think is an issue the teacher, a human, should read and judge. I don’t think AI can grasp the subtle nuances. [Participant G, 1st interview, April 22, 2024, translated by researcher]

Limitations and Challenges

There was an overall skeptical perception of the feedback provided by ChatGPT. It was judged that AI has limitations in fully understanding and reflecting subtle human emotional nuances or contexts. Additionally, students said that the feedback from AI was sometimes perceived as too mechanical or interpreted in a contextually inappropriate manner, lacking in-depth understanding compared to feedback from human teachers. These limitations appeared to impact the overall credibility of the feedback. Moreover, participants noted that AI lacks the ability to form personal relationships with students or deeply understand their individual experiences and tendencies, potentially limiting its capacity to provide feedback carefully tailored to each student’s needs and preferences (reported by 9 students).

AI doesn’t know what career I hope for or what I’m interested in. And it doesn’t know my weaknesses, strengths, history, or deeply understand my dispositions to reflect that in feedback. [Participant L, 2nd interview, April 26, 2024, translated by researcher]

ChatGPT provided effective feedback, particularly on grammar, spelling, and structure, which greatly helped students identify and correct technical errors in their writing. However, despite its usefulness in many technical aspects, AI still showed weaker understanding than humans in certain contexts, sometimes misinterpreting context or providing incorrect explanations, which indicates areas in which it does not yet match human judgment. Additionally, a major issue was that AI sometimes produced plausible-sounding feedback based on nonexistent information. Such errors led learners to question the accuracy and reliability of AI-provided feedback, further highlighting the importance of critical judgment from both learners and teachers (highlighted by 8 students).

It creates a situation where I have to re-verify facts and re-investigate, so I probably won’t use it. If the teacher used AI for feedback, I would start with being suspicious. [Participant K, 1st interview, April 22, 2024, translated by researcher]

Students raised concerns about the risk of their work and personal information being exposed externally, because AI processes their articles by storing data on its servers. This data could include sensitive student information, and if improperly managed or exposed, it could seriously violate student privacy, so students called for robust data protection and security. Furthermore, data processed and stored by AI could be reused in the AI’s learning process. In this context, students responded that using the data without their consent might violate the Personal Information Protection Act, so clear consent procedures and transparent information provision were deemed necessary (identified by 4 students).

The feedback AI provides is based on information stored in big data. What if the content of the articles I write gets stored on AI’s servers and provided as information or feedback to others? My personal information could be revealed, and what about the copyright of my work? [Participant I, 1st interview, April 17, 2024, translated by researcher]

Teacher–ChatGPT Collaborative Feedback

Linguistic Feedback Characteristics

Students responded very positively to the synergy that integrated technical complementarity and human depth in feedback. Based on the technical analysis by ChatGPT, which provides highly accurate and efficient feedback in grammatical and structural aspects, teachers can offer more systematic and detailed feedback. While AI handles the technical details, teachers take charge of the depth of context and expression in the writing. This collaborative approach enables teachers to provide students with contextual understanding, cultural sensitivity, and a deep understanding of individual students, helping to enrich and develop students’ writing in more meaningful ways. Students also mentioned that even for technical feedback, teachers basing their feedback on AI’s analysis could lead to more systematic feedback (noted by 11 students).

I think the teacher could sufficiently compensate for AI’s shortcoming areas, like appropriately providing cultural or contextual nuances. [Participant B, 1st interview, April 17, 2024, translated by researcher]

Accessibility and Usability

Students expressed the expectation that teachers, by utilizing AI, could delegate the accurate analysis of technical aspects such as grammar, spelling, and sentence structure to AI. This would allow teachers to use their time and resources more efficiently, reducing their workload and enabling them to spend more time interacting with students, developing educational content, and focusing more deeply on individual students’ needs. Additionally, since AI-provided feedback is not constrained by time or place, students anticipated being able to receive feedback according to their learning schedules and immediately apply it, thereby minimizing potential delays in the learning process. However, there were also concerns that using AI feedback could increase teachers’ workloads, as they would need to verify the accuracy of the feedback provided by AI (reported by 7 students).

If a teacher utilizes AI to provide feedback together, I think it could actually increase the teacher’s workload since they would have to re-verify any plausible information or errors made by AI. [Participant J, 2nd interview, April 26, 2024, translated by researcher]

Limitations and Challenges

Concerns about the reliability of AI-provided feedback and privacy protection were also highlighted as important issues in the teacher–ChatGPT collaborative feedback system. Students mentioned that AI feedback could only be accepted in a limited, collaborative manner for certain genres such as informational writing. They continued to raise reliability issues even for technically focused feedback, where AI is advantageous. Worries were also expressed about teachers becoming overly reliant on AI. Regarding privacy, they argued that as students’ work gets uploaded and stored on AI servers, data leaks could occur, potentially leading to serious privacy violations if personal information is exposed externally. Thus, teachers and institutions must transparently disclose data processing methods, storage durations, access permissions, and similar details to students and parents, and obtain user consent (highlighted by 8 students).

I’m actually worried the teacher may rely too much on AI. Some teachers might just have AI do everything and only read the output. [Participant I, 2nd interview, April 26, 2024, translated by researcher]

Discussion

This study fills a gap in the existing literature by exploring high school students’ preferences and perceptions of English writing feedback. The main findings of this study can be interpreted based on Vygotsky’s social constructivism, zone of proximal development (ZPD), and the concept of more knowledgeable others (MKO). This provides new theoretical and practical insights into how AI, as a new form of MKO or mediating tool, interacts with existing human MKOs to contribute to learners’ development within their ZPD (Aljaafreh & Lantolf, 1994; Lantolf & Thorne, 2006; Vygotsky, 1978).

Quantitative analysis revealed that students rated the teacher–ChatGPT collaborative feedback most positively. This empirically supports previous research suggesting the positive impact of technology-assisted feedback (Delante, 2017; Kim, 2018; Zou et al., 2023). Such a strong preference suggests that two types of MKOs can most effectively facilitate learning within learners’ ZPD by combining their respective strengths to create synergy. AI serves as a “technological MKO,” offering accurate and efficient support in grammatical and structural aspects, while teachers function as “human MKOs” based on contextual understanding, cultural sensitivity, and empathetic support, so that the two play complementary roles. This empirically demonstrates the new possibilities for educational dialogue and guidance proposed by Bahroun et al. (2023), and makes an important theoretical contribution by expanding the MKO concept beyond individual human experts to a collaborative network of diverse expertise sources in the AI integration era.

Students also positively evaluated the visual systematicity, repeatability, immediacy, and personalized nature of AI feedback. This supports Song and Song’s (2023) assertion that AI feedback can increase learning motivation and engagement. However, once the AI source was revealed, students raised concerns regarding lack of contextual understanding, absence of emotional exchange, and privacy protection issues. These findings empirically demonstrate the limitations of AI judgment pointed out by Wu et al. (2021), as well as the balance between efficiency and reliability discussed by Baidoo-Anu and Ansah (2023) and Kikalishvili (2023). Additionally, the lack of a large difference in preference between non-native English teacher feedback and AI feedback (aware) suggests that students simultaneously recognize both the strengths and limitations of AI. This supports Kong and Yang’s (2024) assertion that teachers’ professional feedback remains essential, and serves as empirical evidence showing how important the balance between technological efficiency and human expertise and empathy is for facilitating learning within the ZPD.

Students’ high evaluation of native English teacher feedback underscores the importance of natural language use and explanations of cultural context, which corresponds with Cheng and Zhang’s (2021) emphasis on the effectiveness of native teachers’ exemplary language use and conveyance of linguistic nuances. Conversely, non-native English teachers showed strengths in smooth communication using Korean and providing tailored advice considering each student’s career path and interests. This supports Rao and Yu’s (2021) argument that shared linguistic and cultural backgrounds enable understanding of learners’ errors and provision of customized feedback. Together, this means that native and non-native English teachers perform complementary roles as linguistic-cultural and empathetic-personalized MKOs respectively, expanding learners’ ZPD.

Finally, by focusing on high school students—a relatively underexplored group—this study complements prior research primarily conducted in higher education settings (Cheng & Zhang, 2021; Kılıçkaya, 2022; Zhang et al., 2021). This provides new insights that appropriate types of MKOs and support methods may vary according to learners’ characteristics as well as developmental stages.

Implications

This study provides valuable implications for improving English writing education and maximizing the synergy between human expertise and AI capabilities. The theoretical and practical contribution of this study lies in proposing a multi-layered feedback ecosystem model where different feedback sources complement rather than compete with each other.

First, rather than providing the same feedback to all learners, personalized feedback tailored to individual learners’ characteristics and circumstances is necessary. In other words, to enable students to more actively accept feedback, teachers need to carefully observe learners’ needs and responses while flexibly combining face-to-face and written feedback from non-native (or native) English teachers with AI feedback, leveraging the strengths and addressing the limitations of each source in a balanced manner.

Based on students’ responses, an effective approach would be for teachers to provide feedback tailored to individual characteristics regarding overall writing intentions, content, structure, contextual understanding, and cultural sensitivity, while AI handles technical accuracy and systematic analysis. If such a teacher–AI collaborative approach were to be implemented, it would be necessary to strengthen teachers’ competences in genre knowledge, contextual interpretation, AI literacy, and hybrid pedagogical design. Additionally, it would be educationally meaningful to explore restructuring the writing-feedback-revision cycle to include both teacher and AI, or to investigate personalized feedback methods linked with various assessment approaches. In this process, the fact that students conditionally accept AI underscores the need for transparent integration practices. Therefore, educational institutions should establish clear disclosure policies to help students understand AI’s capabilities and limitations, and consider gradual implementation strategies.

Although this study primarily focused on pedagogical aspects, the findings also suggest several technological directions. For example, developing personalized AI algorithms that analyze individual learners’ writing characteristics, alongside establishing ethical and institutional mechanisms to ensure feedback reliability and personal information protection, could contribute to enhancing AI feedback acceptance. In the long term, policy-level support, such as funding research on teacher–AI collaborative models and developing and disseminating manuals and guidelines for classroom application, could help teacher–technology collaborative feedback systems become stably established within school practice.

Conclusion

This study employed a mixed-methods approach to explore in depth high school students’ perceptions and preferences regarding English writing feedback from different sources: a non-native English teacher, a native English teacher, and ChatGPT. Quantitative analysis showed that students rated teacher–AI collaborative feedback the highest, followed by native English teacher feedback, ChatGPT feedback (unaware of AI source), non-native English teacher feedback, and ChatGPT feedback (aware of AI source).

Qualitative analysis findings revealed that students preferred feedback providers who could properly grasp the intent and context of their writing and offer natural expressions and genre conventions grounded in professional English knowledge. Rather than simple grammar or punctuation corrections, students expected in-depth feedback that comprehensively considered the overall flow, cultural context, and emotional expressions in their writing. Additionally, the need for personalized feedback tailored to individual students’ proficiency levels, interests, and career paths was emphasized. Students expected feedback to be provided periodically within a cyclical and systematic writing-feedback-revision process, and preferred interactive communication rather than one-way delivery. Notably, the collaborative teacher–AI feedback model showed higher preference than all other types, suggesting that the complementary roles of teacher expertise and AI support hold significant potential for learners’ writing development.

However, this study has some limitations. First, due to the limited sample size (N = 20) and research confined to a specific educational context, concerns about data saturation and representativeness of the findings arise, limiting the generalizability of the results. Future research needs to expand and validate the findings by including more diverse school levels and a larger number of students. Second, since feedback was provided in a fixed order, there is a possibility that sequence effects influenced students’ perceptions. Additionally, this study relied primarily on students’ subjective perceptions without directly evaluating the actual quality or accuracy of feedback content. Future research should introduce randomized or counterbalanced feedback ordering, and develop objective feedback quality assessment tools to examine the relationship between subjective preferences and objective effectiveness. Third, this study was limited to conceptually exploring the teacher-AI collaborative feedback model, and application and effect verification in actual classroom contexts were not carried out. Future research should implement this in real classroom settings and empirically analyze its educational effects. Lastly, interaction effects between teacher feedback and AI feedback were not directly analyzed. Employing repeated measures ANOVA or interaction term analysis within regression models could more precisely identify the impact of interactions between the two feedback sources on learners.
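As an illustration of the counterbalanced feedback ordering suggested above, the following is a minimal sketch rather than a procedure used in this study. It builds a cyclic Latin square so that each feedback source appears once in every serial position, and rotates the resulting orders across participants. The condition labels, the participant IDs, and the function names are hypothetical placeholders. Note that a cyclic square balances position only; it does not balance which source immediately precedes which, so carryover effects would need a different design.

```python
from collections import Counter

# Hypothetical condition labels, borrowed from the three feedback sources compared here.
SOURCES = ["non-native teacher", "native teacher", "ChatGPT"]


def latin_square_orders(conditions):
    """Return one ordering per condition; each condition occupies every position exactly once."""
    n = len(conditions)
    return [[conditions[(row + col) % n] for col in range(n)] for row in range(n)]


def assign_orders(participant_ids, conditions):
    """Rotate through the Latin square rows so the orders are used as evenly as possible."""
    orders = latin_square_orders(conditions)
    return {pid: orders[i % len(orders)] for i, pid in enumerate(participant_ids)}


# Placeholder IDs for a sample of 20 participants (assumed, not the study's identifiers).
participants = [f"P{i:02d}" for i in range(1, 21)]
schedule = assign_orders(participants, SOURCES)

# How often each source is delivered first across the sample.
first_position_counts = Counter(order[0] for order in schedule.values())

for pid in participants[:3]:
    print(pid, schedule[pid])
print(dict(first_position_counts))
```

With a sample size that is not a multiple of the number of sources, the first-position counts can differ by at most one, which is the closest to balance this rotation can achieve.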

Overall, this study holds theoretical and practical significance in demonstrating that teachers and AI can function in a complementary rather than competitive relationship in English writing education contexts. The finding that students most positively evaluated a collaborative feedback model combining teacher expertise and AI’s analytical strengths, rather than absolutely preferring a specific feedback provider, suggests that more effective learning environments can be created when various feedback sources perform their unique roles in a complementary way. Through such collaborative approaches, it is possible to provide personalized feedback tailored to individual learners, ultimately contributing to improvements in students’ English writing ability and enriching their overall language learning experience.

Supplemental Material

Supplemental material, sj-pdf-1-sgo-10.1177_21582440251383693 for Exploring High School Students’ Preferences for English Writing Feedback: Non-Native English Teacher vs. Native English Teacher vs. ChatGPT by Whyunyoung Choi in SAGE Open

Acknowledgements

Not applicable.

Ethical Considerations

All procedures followed were in accordance with the ethical standards and principles for conducting research at Lancaster University. Ethical approval was granted by the Educational Research Ethics Committee, Faculty of Arts and Social Sciences, Lancaster University (Application ID: TEL/CH17/Mod1/36676856, 02/04/2024).

Consent to Participate

All participants provided written informed consent prior to participating.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported by open access funding from Lancaster University.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

The data supporting this study can be accessed by contacting the corresponding author. Data will be provided upon request, subject to approval by the university research ethics board. All data have been fully anonymized in compliance with privacy and ethical considerations and are available for research purposes only.

References
