Abstract

Value-added assessment (VAA) is attracting increasing attention as a method to quantify the effectiveness of educational institutions, teachers, and programs by measuring student progress over time. This study uses a bibliometric analysis of 2,046 studies followed by a content analysis of 165 studies to synthesize key findings and offer comprehensive insights. The bibliometric analysis identified five frequently occurring keywords within 205 key nodes, which were grouped into three clusters: development, implementation, and concerns. The content analysis showed that (1) VAA has evolved through three stages: inception, expansion, and maturity; (2) VAA has been implemented to evaluate school and teacher effectiveness, teacher preparation programs, and student achievement; and (3) researchers have raised concerns about VAA’s flawed assumptions, validity and reliability issues, and risks of misuse. Future research is expected to refine VAA methodologies, enhance evidence-based decision-making, focus more on student progress, and incorporate qualitative data into quantitative models. This study contributes to the ongoing discourse on educational assessment and accountability. However, the study is limited by potential biases from relying solely on the Web of Science database and English-language publications.

Keywords

Introduction

Value-added assessment (VAA) is a method for evaluating the effectiveness of educational institutions, teachers, or programs by measuring student progress over a specific period (Levy et al., 2019; McCaffrey & Hamilton, 2007). In recent decades, assessment and evaluation have become central to shaping global educational policies and practices (Bayer et al., 2016). The increasing reliance on large-scale international assessments, such as the Program for International Student Assessment (PISA), has transformed educational accountability into a metapolicy that has significantly influenced school practices, teaching methodologies, curriculum development, and student learning experiences (Breakspear, 2012; Lingard et al., 2013). In this context, countries worldwide have begun to incorporate VAA into their educational accountability systems, given its potential to provide a more comprehensive understanding of educational effectiveness beyond raw achievement scores (OECD, 2008).

The existing literature reviews have primarily focused on VAA’s statistical modeling and specific applications in educational practice (Braun & Wainer, 2006; Hibpshman, 2004; Koedel et al., 2015; McCaffrey et al., 2003; Tekwe et al., 2004; Timmermans et al., 2011; Wiley, 2006). Early models focused on simple year-to-year score changes, while later models adopted hierarchical linear, multilevel, and random effects approaches (McCaffrey et al., 2003; Wiley, 2006). Over time, VAA has been widely applied to evaluate school effectiveness, assess teacher performance, analyze teacher preparation programs, and track student progress (Kim & Lalancette, 2013; Levy et al., 2019). For example, the gain score model compares students’ achievement gains under different teachers within the same school or district, identifying those whose students show the highest progress year-over-year (Anderman et al., 2015). However, these reviews lack a systematic examination of how VAA has evolved across different educational systems and fail to provide a global picture of its historical development, practical implications, research gaps, and emerging trends.
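The gain score model mentioned above can be illustrated with a minimal sketch. The records, teacher labels, and function name below are hypothetical and serve only to show the arithmetic: each student's gain is the current-year score minus the prior-year score, and a teacher's estimate is the average gain of that teacher's students. Operational models add many refinements that this sketch omits.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical records: (teacher_id, prior_year_score, current_year_score).
records = [
    ("T1", 50, 58),
    ("T1", 62, 68),
    ("T2", 55, 57),
    ("T2", 70, 74),
]

def mean_gain_by_teacher(rows):
    """Average (current - prior) score gain for each teacher's students."""
    gains = defaultdict(list)
    for teacher, prior, current in rows:
        gains[teacher].append(current - prior)
    return {teacher: mean(values) for teacher, values in gains.items()}

teacher_gains = mean_gain_by_teacher(records)
```

In this toy data set, the students of the teacher labeled T1 gain more on average than those of T2, which is the comparison the gain score model formalizes.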

This gap is particularly significant given the increasing international interest in evidence-based educational evaluation and accountability. Without a comprehensive synthesis of VAA research, policymakers, educators, and researchers may struggle to interpret its global trajectory, anticipate challenges, and optimize its implementation. To address this gap, this study conducts a longitudinal bibliometric and content analysis that systematically maps VAA’s historical development, its implementation in education, and its key limitations. The aim is to extract key findings for future research and practice and to provide evidence-based guidance for educational evaluation reform. The research questions are as follows:

Question 1: What stages has VAA gone through according to the identified literature?

Question 2: How has VAA been implemented in educational practice?

Question 3: What are the research gaps and potential future research directions for VAA?

Materials and Methods

Data Collection

To ensure inclusive coverage of the literature on VAA, the data were drawn from the Web of Science (WOS) database. WOS exports are compatible with VOSviewer, which supports accurate bibliometric analysis. Our research process adhered to Reed and Baxter’s (2009) guidelines for using reference databases in research synthesis.

To obtain a global overview of VAA, an initial search was conducted in the WOS database using the search term “value-added assessment OR value-added model.” The search was limited to the research areas of “Education” or “Psychology” and covered the period from the database’s inception to June 2025. This search yielded 2,046 results. The author, title, source, and abstract of each record were exported to support subsequent bibliometric analysis.

Following the initial search, a more focused search was carried out within the WOS Core Collection to identify authoritative sources for content analysis. Using the same search term and research areas, and again covering the period from the database’s inception to June 2025, this search returned 554 records. The full records and cited references of these 554 articles were imported into the HistCite analysis tool to identify the 50 most highly cited authoritative publications.

To capture recent developments and broaden the dataset, 50 articles published in the past five years were manually selected for their relevance and completeness. In addition, a snowball sampling method was used to identify further studies by tracing the references cited in the selected articles. Studies were included in this review if they satisfied the following criteria:

Research on VAA in K-12 or higher education (including adult education)

Published as a scientific journal article, a policy document, a declaration, a report, a book, or a book chapter

Available as full text in English

Empirical application or literature review of VAA

Ultimately, 165 studies were selected for in-depth content analysis based on a combination of citation impact, recency, relevance, and richness of content.

This data collection strategy was designed to provide a comprehensive and representative sample of the literature on value-added assessment, balancing broad coverage with a focused analysis of the most impactful and recent contributions to the field. We acknowledge several limitations in our methodology. One challenge was the rapid pace of publication, which made it difficult to keep our analysis fully up to date. Additionally, relying on WOS as the sole data source may have introduced biases, including a preference for English-language publications and the exclusion of policy-related gray literature, which could limit the diversity of perspectives considered. Furthermore, we recognize the inherent bias in content analysis, despite our efforts to ensure rigor in the process. Nevertheless, we made extensive efforts to stay current, comprehensive, and unbiased, while remaining open to diverse sources.

Data Analysis

A mixed-methods approach was employed, combining quantitative bibliometric analysis with qualitative content analysis.

Bibliometric Analysis

Bibliometric analysis is a statistical technique applied to academic publications to provide a quantitative assessment of scholarly literature (D. Chen et al., 2016). Two primary tools were used for the bibliometric component: VOSviewer (version 1.6.20) and HistCite Pro (version 2.1). These tools were selected for their advanced visualization capabilities, efficient data processing, and comprehensive analytical functions.

VOSviewer (n = 2046) was utilized for information visualization, including the construction of co-word network graphs. The objective of this stage was to explore topics related to VAA in educational research. HistCite (n = 554) was employed to analyze literature citation networks, generate citation network graphs, and assess citation relationships and impact. This tool helped identify the distribution of documents by publication year, journals, author contributions, institutional contributions, country participation, and keyword relevance (Rajeswari et al., 2023). As citations grow over time, co-citation analysis contributes to outlining the intellectual structure of a domain through the identification of the most influential research work in that area (Bhatt et al., 2020). Using the results of co-citation analysis, we selected the top 50 co-cited articles out of 554 articles to create a co-citation matrix, which we further processed for a more detailed analysis.

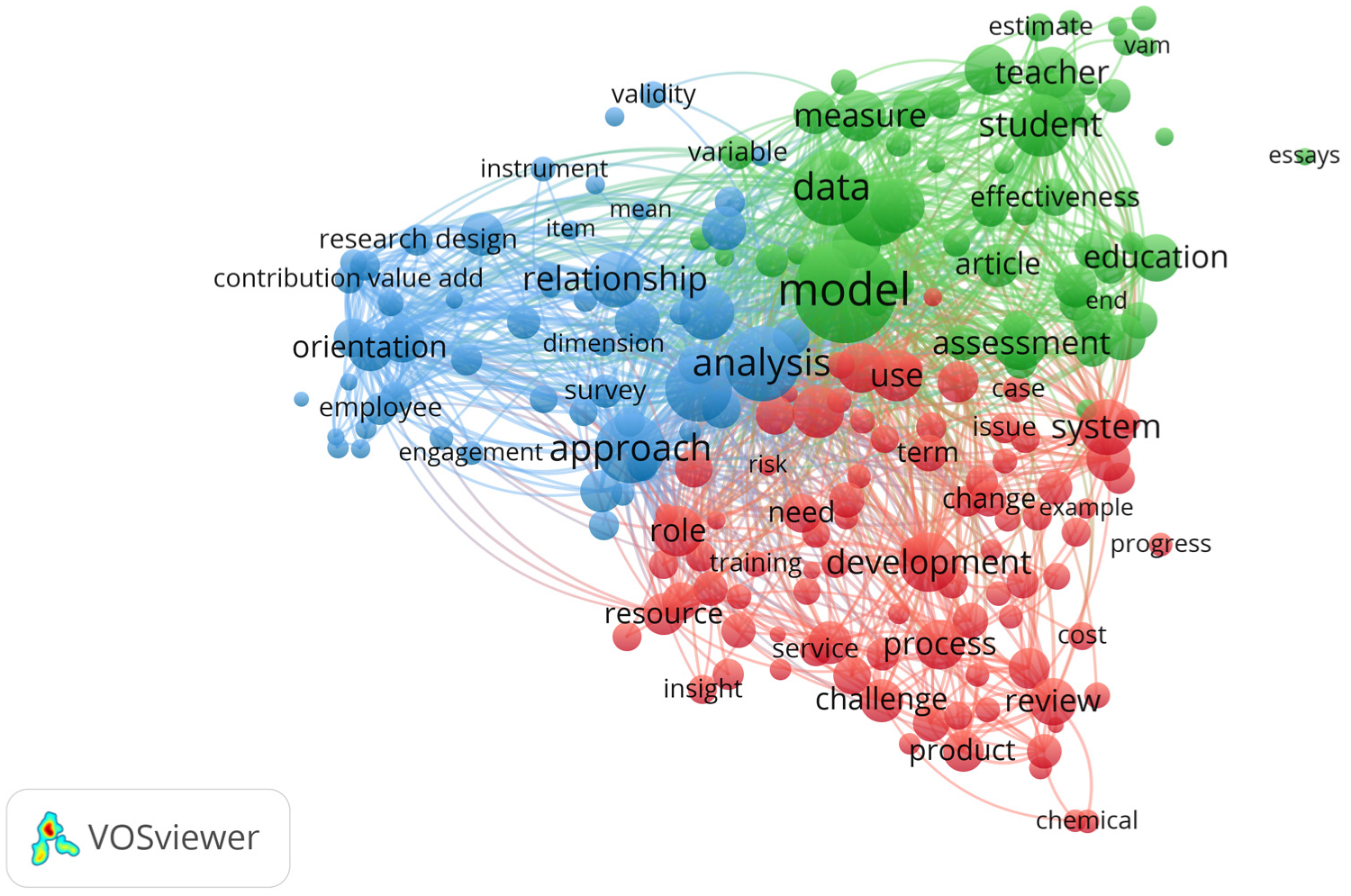

The co-word (keyword co-occurrence) analysis explored topics related to VAA in educational research. Through iterative testing of multiple threshold levels (15, 25, 35, 45, 55), a co-occurrence threshold of 35 was selected because it provided the best balance between thematic clarity and term coverage (Donthu et al., 2021). Applying this threshold to the keywords extracted from the selected articles yielded 205 keywords out of a total of 33,395. Among the most frequently occurring keywords were “development,” “performance,” “application,” “models,” “challenge,” and “quality,” suggesting their central role in shaping the research landscape. These keywords were expected to contribute to the formation of thematic clusters. The analysis produced a network visualization comprising 205 nodes, which were grouped into three distinct clusters (see Figure 1). The clusters were labeled according to their research topics: (a) the development process of VAA, (b) its implementation in educational practices, and (c) the concerns and future trends associated with its use. The following sections provide a detailed discussion of each cluster, incorporating relevant references to highlight the predominant research interests in VAA.

Figure 1. Co-occurrence network of keywords.
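The threshold testing described above can be sketched as a simple sweep. This is an illustration only and does not reproduce VOSviewer's internal procedure: the records, the normalization step, and the choice to count each keyword once per record are assumptions made for the example.

```python
from collections import Counter

# Hypothetical keyword lists, one per exported record.
records = [
    ["value-added", "accountability", "teacher effectiveness"],
    ["Value-Added", "accountability"],
    ["value-added", "school effectiveness"],
    ["accountability", "teacher effectiveness"],
]

def keyword_occurrences(keyword_lists):
    """Count the number of records in which each normalized keyword appears."""
    counts = Counter()
    for keywords in keyword_lists:
        # Assumption: lowercase and de-duplicate within a record.
        counts.update({k.strip().lower() for k in keywords})
    return counts

def retained_per_threshold(counts, thresholds):
    """Map each candidate threshold to the number of keywords that meet it."""
    return {t: sum(1 for n in counts.values() if n >= t) for t in thresholds}

counts = keyword_occurrences(records)
sweep = retained_per_threshold(counts, [1, 2, 3])
```

Raising the threshold shrinks the retained keyword set, so a sweep of this kind makes the trade-off between term coverage and thematic clarity visible before a single value is chosen.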

In addition to keyword analysis, a bibliometric screening of 554 documents from the Web of Science Core Collection was conducted using HistCite. These publications represented contributions from 1,288 authors across 177 journals and 51 countries, incorporating a total of 1,707 keywords. The analysis covered research published between 2011 and 2025. To further analyze VAA research, a co-citation analysis was performed, revealing key scholarly connections. The co-citation network consists of 50 influential academic articles interconnected by 99 co-citation links. The article with the fewest co-citations was cited 5 times, while the one with the most was cited 53 times. The analysis reveals a growing interest in VAA, particularly in academic articles published over the last decade. Co-citations were especially frequent for publications from 2011 to 2015, highlighting an increasing focus on this area of research. Several central academic articles, such as Koedel and Betts (2011), emerge as key nodes in the network, indicating their substantial influence on shaping the field.
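The idea behind a co-citation link can be shown with a short sketch: two works are co-cited whenever they appear together in the reference list of a third article, and the link strength is the number of such citing articles. The reference lists and labels below are hypothetical, and the code is not HistCite's implementation.

```python
from collections import Counter
from itertools import combinations

# Hypothetical reference lists: each inner list holds the works cited by one citing article.
reference_lists = [
    ["A", "B", "C"],
    ["A", "B"],
    ["B", "C"],
]

def co_citation_counts(ref_lists):
    """Count, for every pair of cited works, how many articles cite both."""
    counts = Counter()
    for refs in ref_lists:
        # Sort and de-duplicate so each pair has one canonical key per article.
        for pair in combinations(sorted(set(refs)), 2):
            counts[pair] += 1
    return counts

links = co_citation_counts(reference_lists)
```

Pairs with higher counts form the stronger links of a co-citation network, and works that participate in many such pairs appear as central nodes.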

Content Analysis

To identify the emergent research themes or categories, qualitative content analysis was applied to the literature data (Prashar & Sunder, 2020). Qualitative content analysis is an approach to the empirical, methodologically controlled analysis of selected literature (Lindgren et al., 2020). A total of 165 publications from 1968 to 2025 were analyzed. We carefully reviewed each source, categorizing the information according to emerging themes. For this process, an adapted version of the qualitative content analysis procedure developed by Prashar and Sunder (2020) was applied (see Figure 2). Through this inductive approach, an initial set of 12 subsidiary categories was identified, which were then synthesized into three central categories: developmental stages, implementation in educational practice, and research gaps and future directions.

Figure 2. Flowchart for inductive category development.

The reliability of the analysis was strengthened by using established bibliometric tools and an in-depth content analysis process. This integrated approach provided a comprehensive understanding of developmental trends and academic landscapes within the field, offering critical support for the study’s findings and discussion.

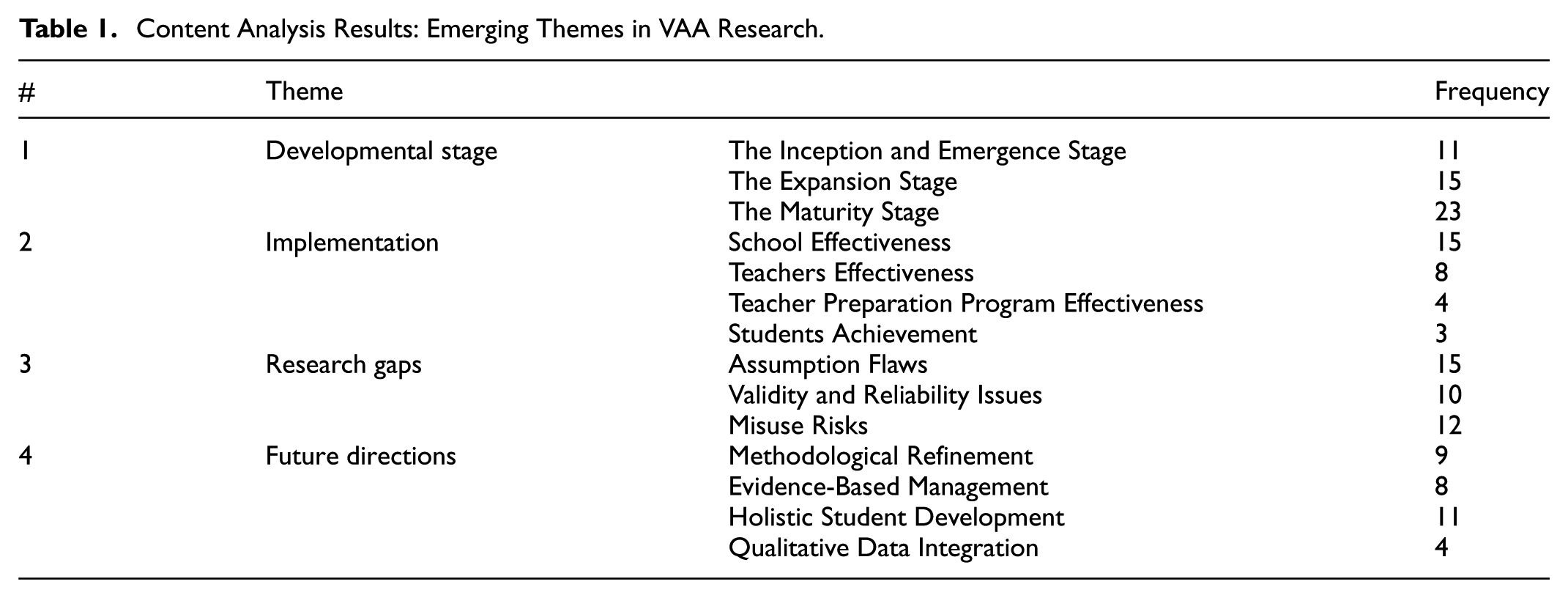

The results of our qualitative content analysis are presented in Table 1, which highlights the key themes emerging from existing research on VAA in educational practice. Our analysis identifies three predominant themes: developmental stages, implementation in educational practice, and research gaps and future directions.

Table 1. Content Analysis Results: Emerging Themes in VAA Research.

Findings and Discussion

The Stages of Value-added Assessment Development

The Inception and Emergence Stage

The term “value-added” originates from economics, where it refers to the process by which an article or product gains additional value at each stage of production (Koedel et al., 2015). VAA’s methodological roots lie in econometrics and educational statistics (Wiley, 2006). This concept was subsequently adopted in education, particularly within educational assessment.

The concept and methodology of value-added assessment in education were initially introduced in the United States, particularly within the domains of educational policy and research. Its intellectual roots can be traced back to Dewey’s (1930) pragmatism, which emphasized learning through experience, the importance of educational outcomes, and the use of evidence to inform teaching and reform. Building on this foundation, American education in the mid-20th century became increasingly shaped by positivist and empiricist traditions, influenced by behavioral psychology and psychometrics.

The evaluation of the incremental value resulting from educational investment has been studied since the 1970s (see Table 2), with a focus on the relationship between educational inputs and student performance in standardized examinations. The inception of value-added assessment can be traced back to the Coleman Report (first released in 1966; see Coleman, 1968), which primarily investigated educational inequality and factors influencing student achievement. The Coleman Report suggested that, aside from the student’s family background, school and teacher quality are the most crucial factors influencing student achievement (Coleman, 1968). Family background plays a more significant role in determining student achievement than school factors do. However, since students cannot change their family background, it is essential to focus on the factors that can be improved, such as school and teacher quality. Although the Coleman Report did not explicitly mention VAA, it laid the groundwork for subsequent research by emphasizing the need to understand how educational inputs, such as teacher quality and school resources, affect student outcomes. At least in the United States, modern research on the “school effect” can be attributed to the Coleman Report (OECD, 2008).

Table 2. VAA in the Inception and Emergence Stage.

Since the publication of the Coleman Report, various studies have highlighted the relationship between educational inputs and student performance in standardized exams (Gansle et al., 2012; D. D. Goldhaber et al., 2013; S. M. Johnson, 2015), thereby contributing to the emergence of the concept of value-added assessment. Among the most seminal contributions was the research conducted by the economist Eric Hanushek, who began to conceptualize teacher effects in economic terms, based on the relationship between inputs (e.g., education status, years of experience) and outputs (i.e., student achievement scores) (Amrein-Beardsley & Holloway, 2017). E. Hanushek (1971) first introduced the term “value-added” within the educational context. In his pioneering study, he described a statistical model for analyzing the effects of teachers on student learning while accounting for students’ prior achievement levels. This groundbreaking work laid the foundation and provided a framework for the development of value-added models. Consequently, value-added models, previously most commonly used in business and agriculture, found their way into the education sector (Amrein-Beardsley & Holloway, 2017). Subsequently, Lindley and Smith (1972) presented a Bayesian approach to the general linear model. This hierarchical parametric structure permits the modeling of multilevel phenomena encountered in school effects research (S. Raudenbush & Bryk, 1986). These pioneering efforts set the stage for the refinement of the methodology of VAA in subsequent stages.

The Expansion Stage

In the 1980s, interest grew in finding more sophisticated methods to assess the effectiveness of schools and teachers. This interest was driven by the rise of the accountability movement in U.S. education policy, marked by reports such as A Nation at Risk (National Commission on Excellence in Education, 1983), which raised concerns about the state of education in the United States. Concurrently, advances in statistical methodology and available data enabled researchers to develop more advanced value-added models. Expanding on the foundational general linear model, one notable development was the hierarchical linear model by S. Raudenbush and Bryk (1986), a sophisticated technique for analyzing nested data structures common in educational research settings.

Throughout the 1990s, test-based accountability gained significance as a central component of education reforms. States increasingly relied on standardized tests to evaluate student performance, holding schools and educators responsible for these outcomes (Fuhrman, 1999). Consequently, the value-added model for evaluating teachers or schools gained popularity. William Sanders emerged as a pioneer in value-added modeling, playing a pivotal role in its development and popularization within the field of education. Sanders and Horn (1994, 1998) developed the Tennessee Value-Added Assessment System (TVAAS), a statistical methodology that shifted the focus from year-end results to student progress, enabling the determination of the effectiveness of school systems, schools, and teachers (Everson, 2017; Hill et al., 2011; Şen et al., 2020; Timmermans et al., 2011). Evidence from the TVAAS database shows that teacher effectiveness is a major determinant of student academic progress and that teachers’ effects on student achievement are both additive and cumulative, with little evidence suggesting that subsequent effective teachers can offset the effects of ineffective ones (Sanders & Horn, 1998). With the adoption of TVAAS, Tennessee became the first state to adopt a statewide accountability system based on VAA. TVAAS was the first generation of the now widely available layered model known as the Educational Value-added Assessment System. Around the same time, a two-stage covariance model called the Dallas value-added accountability system was developed and implemented in Dallas, Texas (Ladd, 1999).

During the expansion stage (see Table 3), applications of VAA in a few jurisdictions, including Tennessee and Dallas, attracted wide interest from researchers and analysts. This interest prompted several member countries of the Organization for Economic Cooperation and Development (OECD), including the United States, the United Kingdom, and Australia, to implement operational teacher and school evaluation systems (Kim & Lalancette, 2013; OECD, 2008). Unlike the teacher accountability measures used in the USA, the UK government provides a contextual value-added model, which accounts for school intake and situation and is the most widely used approach in England for evaluating school quality (Kelly & Downey, 2010; Levy et al., 2019). The Lancashire Value Added Project in the UK exemplifies a relatively successful application of VAA in evaluating school effectiveness (Thomas, 1998). However, despite the enthusiasm surrounding these applications, VAA did not see widespread integration into official state or district evaluation frameworks at this stage, in part because its implementation required extensive computing resources and high-quality longitudinal data that many states and districts did not have (McCaffrey et al., 2004).

Table 3. VAA in the Expansion Stage.

The Maturity Stage

Wide Adoption and Link to High-stakes Decisions

In the early 21st century, value-added assessment emerged as the predominant evaluation paradigm in basic education across the United States, facilitated by federal legislative initiatives and incentive programs. The enactment of the No Child Left Behind Act (NCLB) in 2001 mandated that states receiving federal funding establish standards for proficiency in reading and mathematics for grades 3 through 8, based on performance on standardized assessments (US Congress, 2002). Because of the testing provisions of NCLB, states began building databases containing longitudinal student records, precisely what is required for the application of value-added models (Braun & Wainer, 2006).

With the enactment of NCLB, standards-based reform became widespread throughout the United States. However, standards-based reform evolved under the influence of NCLB and other high-stakes testing policies into what is now known as test-based reform, a system where educators and others rely primarily on tests, rather than standards, to communicate expectations and guide practice (Hamilton et al., 2008). Subsequently, disputes and conflicts arose regarding how academic achievement should be measured and the appropriate role of standardized testing in student evaluations. The Education Value-Added Assessment System, based on the Tennessee Value-Added Assessment System, then gained credibility in numerous districts and states as a means to address the various factors contributing to the challenges associated with NCLB and mitigate much of the controversy (Levy et al., 2019; Misco, 2008; Paige, 2020; Sanders, 2000).

This trend continued with the Race to the Top (RttT), which provided grants to states that adopted reforms such as using student growth measures, including value-added models, for teacher and principal evaluations. Within the framework of the RttT initiative, the United States Department of Education mandated the development of comprehensive educator evaluation systems to enhance teacher and principal effectiveness across participating states and school districts (Ballou & Springer, 2015). Under RttT, states were required to link student test scores to teachers to measure teacher effectiveness and then connect those effectiveness measures back to the teachers’ preparation programs (Brady, 2021; Henry et al., 2012). Given that standardized testing was ubiquitous in U.S. school systems, VAA presented a cost-effective alternative to traditional evaluation methods (Guarino et al., 2015b). Teacher evaluations increasingly incorporated VAA, holding educators accountable for students’ achievement gains.

During the maturity stage of value-added assessment, teacher effectiveness based on value-added models was considered a potential improvement over traditional metrics (such as classroom observations, principal observations, and measures of educational attainment or experience) because it is more objective, less costly to calculate, and less prone to many forms of bias (D. Goldhaber, 2015; Guarino et al., 2015a; Loeb et al., 2014). Student standardized assessment results were intrinsically linked to teacher effectiveness, identifying educators as “highly effective” only if their students achieved exceptional scores on these evaluations (Brady, 2021). Policymakers therefore showed an increasing propensity to use the results of VAA to inform high-stakes teacher personnel decisions (M. T. Johnson et al., 2015; Yeh, 2020). The policy of replacing low-performing teachers with high-performing ones, based on student academic achievement, was viewed as a potential strategy to enhance teaching effectiveness (E. A. Hanushek, 2009). This trend, encompassing teacher hiring, firing, rewards, and promotion, was spurred by relevant policies and legislation compelling states and localities to link teacher performance with compensation, contract renewal, and tenure (D. Goldhaber et al., 2014).

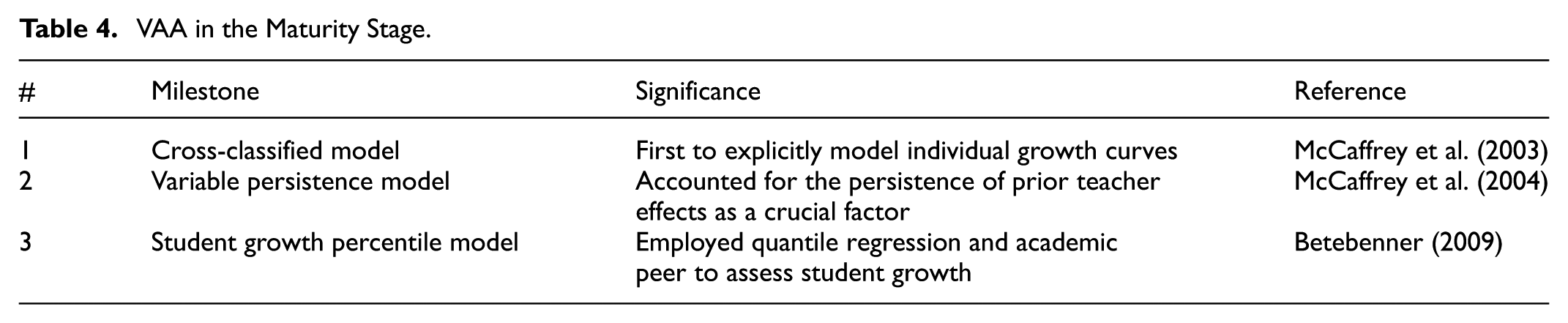

Complex Modeling and Multidimensional Assessment of VAA

The relevant legislation and widespread adoption of VAA paved the way for the further refinement and sophistication of various value-added models in practical applications. Scholars began to focus on the diversity of VA models and the adjustment of covariates in VAA (e.g., Everson, 2017; Levy et al., 2019; McCaffrey et al., 2004). Subsequently, VAA entered a period of complex modeling and multidimensional assessment (see Table 4). For example, in 2002, Raudenbush and Bryk developed the cross-classified model, which uses longitudinal data across subjects, years, and cohorts to analyze persistent teacher effects without attenuation. It was the first to explicitly model individual growth curves (McCaffrey et al., 2003). McCaffrey et al. (2004) proposed the variable persistence model, which posits that the impact of teachers on students’ academic performance gradually diminishes over time. By conducting a comprehensive analysis of data spanning multiple years, subjects, and groups, this model takes into account the persistence of prior teacher effects as a crucial factor. Betebenner (2009) introduced the student growth percentile model, which employs quantile regression to assess student growth by quantifying the relative change in a student’s position within a cohort of academically similar peers. Currently, seven frequently employed models for measuring growth are the gain score model, the residual model, the covariate adjustment model, the student growth percentile model, the TVAAS, the cross-classified model, and the variable persistence model.

Table 4. VAA in the Maturity Stage.
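Of the models listed above, the residual model is the simplest to illustrate beyond the gain score. The following is a minimal sketch under strong simplifying assumptions: a single prior-score predictor, ordinary least squares fitted by hand, and hypothetical records and function names. It shows only the core idea that a teacher's estimate is the average amount by which that teacher's students exceed or fall short of their predicted scores.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical records: (teacher_id, prior_year_score, current_year_score).
rows = [
    ("T1", 40, 52),
    ("T1", 60, 70),
    ("T2", 40, 46),
    ("T2", 60, 64),
]

def fit_line(x, y):
    """Ordinary least squares fit of y = intercept + slope * x."""
    x_bar, y_bar = mean(x), mean(y)
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, y_bar - slope * x_bar

def residual_value_added(records):
    """Mean residual (actual minus predicted current score) per teacher."""
    slope, intercept = fit_line([r[1] for r in records], [r[2] for r in records])
    residuals = defaultdict(list)
    for teacher, prior, current in records:
        residuals[teacher].append(current - (intercept + slope * prior))
    return {teacher: mean(values) for teacher, values in residuals.items()}

value_added = residual_value_added(rows)
```

A positive value means the teacher's students scored above what their prior achievement predicted. The more elaborate models in the list extend this logic with additional covariates, multiple years of data, and multilevel structure.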

The discussion regarding covariates in value-added assessment involves considering additional factors within the model that may influence assessment outcomes. These factors can be categorized as student-level characteristics, family background characteristics, and educational context-level characteristics (Levy et al., 2019). Ballou et al. (2004) modified the TVAAS by introducing controls for student socioeconomic status and demographics. Their results show that accounting for individual student characteristics had minimal impact on estimated teacher effects. However, including school-level poverty measures (percentage of students eligible for free/reduced-price lunch) had a more significant effect. Koedel et al. (2015) suggest that including demographic and socioeconomic factors in value-added models, though minimally impactful overall, can prevent misclassifying teachers in extreme circumstances. The inclusion or exclusion of specific covariates remains a debated topic (Everson, 2017).

Implementation of VAA in Educational Practices

In the domain of educational assessment, VAA was used to measure school effectiveness, teacher effectiveness, teacher preparation program effectiveness, and student achievement (see Table 5). The two most frequent targets of VA models are teachers and schools. For example, Levy et al. (2019) analyzed a total of 370 empirical studies of VAA and found that, for half of the studies, the target of the VAA was the teacher, with the majority of these studies conducted in the USA.

Table 5. The Implementation of VAA.

Originating in the United States, VAA has gained widespread adoption across numerous states, with nearly half of the states either mandating or recommending its use (Kurtz, 2018). Beyond the U.S., VAA has been implemented in several countries, including the United Kingdom and Australia, though with different focuses. In the U.S., VAA has primarily been applied to assess school effectiveness, teacher effectiveness, teacher preparation programs, and student progress. In contrast, in the UK, VAA has become the most widely used approach for evaluating school quality (Kelly & Downey, 2010; Levy et al., 2019). In China, VAA is still in the exploratory phase. As part of the 2020 educational reform, there has been a growing interest in using VAA to evaluate student achievement as a key component of educational assessment (Qin & Zhang, 2022; Zhu & Wu, 2023).

School Effectiveness

School effectiveness refers to an educational institution’s ability to achieve its predetermined goals, particularly in promoting student learning outcomes, development, and well-being (Gao et al., 2025). In the context of school effectiveness, VAA evaluates a school’s impact on students’ achievement over a specific period (see e.g. Leckie et al., 2024; Leckie & Goldstein, 2019; Henderson et al., 2020; Şen et al., 2020). By comparing outcomes after adjusting for varying intake achievement, VAA indicates the relative boost a school offers to a student’s previous level of accomplishment compared to similar students in other schools (Thomas, 1998). For example, employing VAA, Opare-Kumi (2024) showed that students in schools using English as a medium of instruction have lower mathematics test scores compared to students in mother tongue education schools.

Recent studies on school value-added scores focus on isolating the specific contribution of schools to student achievement. Persad and Antoine (2023) quantified the effects of secondary schools on student performance in math and English by controlling for prior attainment and background factors such as socio-economic status, gender, and age. Similarly, Ansari (2024) applied value-added assessment to measure student progress in math, English, and Urdu, isolating the impact of schools on learning gains beyond students’ initial ability levels.

VAA in school effectiveness serves multiple purposes and addresses three major policy goals: school accountability, school improvement, and school choice. Primarily, VAA supports external accountability by quantifying the impact of educational institutions on student learning, thereby holding schools accountable for their role in shaping academic outcomes (Fuhrman, 1999). Additionally, VAA promotes school improvement by providing data to inform decision-making, aiming to contribute to better decisions about educational practices, which, in turn, should lead to improved student achievement (McCaffrey & Hamilton, 2007). Furthermore, VAA facilitates school choice by providing parents and families with performance data on various schools, enabling informed decision-making (Doris et al., 2022; Henderson et al., 2020; OECD, 2008).

Teacher Effectiveness

When applied to teacher effectiveness, VAA aims to measure the impact of individual teachers on students’ achievement over time. In other words, it assesses how much academic progress students make when taught by a particular teacher compared to their peers taught by other teachers (Jerrim et al., 2025). The goal of teacher value-added assessment is to separate the influence of individual teachers from student background characteristics, peer effects, and school effects (Papay, 2011).
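The separation described above is often written as a lagged-score regression. The specification below is a generic illustration with assumed notation. It is not the exact model used in any of the studies cited here.

```latex
A_{ijt} = \lambda A_{i,t-1} + X_{it}^{\top}\beta + \tau_{j} + \varepsilon_{ijt}
```

Here $A_{ijt}$ is the achievement of student $i$ taught by teacher $j$ in year $t$, $A_{i,t-1}$ is the student's prior achievement, $X_{it}$ collects student background, peer, and school characteristics, $\tau_{j}$ is the teacher effect of interest, and $\varepsilon_{ijt}$ is an error term. Under this reading, the teacher's value-added is the part of current achievement that remains attributable to $\tau_{j}$ once prior achievement and the covariates in $X_{it}$ have been accounted for.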

Recent studies highlight that teachers play a critical role in determining students’ academic progress. Ng (2024) examined the effects of tenure on teacher productivity by comparing fourth-year tenured and pre-tenured teachers, finding a decline in math value-added scores after tenure, while English language arts value-added and evaluation ratings remained unchanged. Similarly, Jerrim et al. (2025) emphasized that a substantial portion of students’ progress in reading and math can be attributed to differences between teachers, underscoring the significant impact of teacher assignment on student achievement.

This application of VAA is an educationally and economically meaningful measure (Chetty et al., 2014a, 2014b; Koedel et al., 2015). The existing test score value-added measures are a good proxy for a teacher’s ability to improve students’ test scores (Chetty et al., 2014a). VAA can be utilized to improve educational programs, enhance teacher responsiveness to individual student learning needs, and facilitate curriculum changes in individual classrooms based on student achievement level data (Misco, 2008), thereby improving the overall quality of education. Improving teaching quality is likely to have substantial economic and social benefits. Chetty et al. (2014b) found that a one standard deviation increase in teacher value-added in a single grade level is associated with a 2.2% higher probability of college attendance at age 20 and a 1.3% increase in annual earnings at age 28.

Teacher Preparation Program Effectiveness

Evaluating teacher preparation programs (TPPs) is another accountability reform, alongside school effectiveness and teacher effectiveness. These programs are held accountable for producing effective teachers, as measured by the test score gains of the students those teachers teach (Henry et al., 2012). Emphasizing teacher preparation is attractive as it targets an essential, continuous process that can proactively address issues of teacher effectiveness (Noell et al., 2019). The underlying premise is that effective teacher preparation programs should lead to improved teacher instructional practices, which, in turn, should positively impact student achievement. Systemic efforts to strengthen teacher preparation in Louisiana led to the implementation of the first statewide VAA of TPPs (Noell et al., 2019). Subsequently, a number of states have adopted this system.

Recently, VAA has been increasingly applied to evaluate the effectiveness of teacher preparation. For example, Rhodes and Marder (2024) used value-added models to analyze how different teacher preparation pathways influence student test score gains across multiple grades and subjects. Similarly, B. Chen et al. (2024) examined whether candidates’ demonstrated teaching skills during training could predict their future impact on student achievement, using VAA to establish the link between preparation quality and classroom effectiveness.

Student Achievement

Extending the application of VAA beyond schools, teachers, and TPPs, it can also provide valuable insights into individual student progress. In the context of student ability studies, VAA offers a unique perspective on how much a student has advanced academically. In contrast to summative assessment, this approach shifts its emphasis away from final scores. Instead, it centers on establishing a reference frame starting from each student’s individual level, assessing specific stages in the dynamic changes of students, and considering the factors influencing the ongoing process and transformations (Qin & Zhang, 2022).

Compared to more conventional methods of high-stakes assessment, VAA of academic accomplishment is more closely linked with goal orientation theory because it places greater focus on monitoring student development (Anderman et al., 2010). This alignment is supported by research conducted by Zhu and Wu (2023), which revealed a strong connection between students’ reading abilities in math and their overall academic performance gains, as measured by VAA scores. Students who scored higher on assessments of math reading tended to show greater value-added improvement.

Most importantly, the assessment outcomes generated from VAA hold the potential to positively influence student motivation. Data that truly reflect growth in student learning can be used by educators to augment student motivation (Anderman et al., 2010). Using VAA to assess student growth is particularly important as it provides a more comprehensive and specific view of student progress, potentially boosting confidence and encouraging continued effort. However, a large number of VAA practices still focus on school and teacher accountability, and only a few truly evaluate and focus on student academic growth.

Research Gaps

Despite advancements in its implementation and statistical methodology, VAA in education continues to face significant scrutiny and critique, including on ethical grounds. Extensive debates persist regarding its unreasonable underlying assumptions, the low validity and reliability of its results, and the high-risk application of its outcomes (e.g., Amrein-Beardsley & Close, 2021; Hill et al., 2011; Koedel & Betts, 2011; Papay, 2011; Paufler & Amrein-Beardsley, 2014; Schochet & Chiang, 2013).

Assumption Flaws

VAA rests on the assumption that multiple years of student test-score data can be used to estimate the causal effects of individual schools or teachers on student learning (McCaffrey & Hamilton, 2007). It attempts to isolate the contributions of individual teachers or schools to student achievement (Papay, 2011). However, many researchers have challenged this assumption, arguing that using standardized assessments as the primary measure of accountability and isolating the causal effects of school education or individual teacher influence is problematic, as students’ learning outcomes are influenced by multiple factors (Brady, 2021; Guarino et al., 2015b).

The criticisms of these underlying assumptions arise from the recognition that value-added models should not be interpreted as estimating the causal effects of teachers or schools (Konstantopoulos, 2014; Rothstein, 2009; Rubin et al., 2004). Yeh (2020), for instance, questions the presumed causal relationship between what is labeled the “teacher’s contribution” to student achievement and subsequent improvements in student performance. However, some researchers view value-added scores as the clearest indicators of school/teacher effectiveness and quality, defined as the amount by which schools/teachers increase their students’ achievement test scores over the year (E. A. Hanushek, 2009; Hill et al., 2011; Loeb et al., 2014).

One concern is that good teaching may have long-lasting effects, implying that an increase or decrease in student performance could be attributable to exposure to exceptionally high or low-quality teaching in a prior year rather than the influence of the current teacher (Misco, 2008). Moreover, if the assignment of students to teachers were random, neither the estimation strategy nor the choice of control variables in the model would substantially affect teacher effectiveness estimates (Guarino et al., 2015b). However, since student-teacher assignments are not randomized and students attend schools chosen by their parents, this results in student and family backgrounds being confounded with teacher and school characteristics (M. T. Johnson et al., 2015; Konstantopoulos, 2014). VAA models that do not account for these factors may inaccurately attribute student outcomes solely to the teacher’s influence, leading to biased evaluations (American Educational Research Association, 2015; Hill et al., 2011).
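The confounding described above can be made concrete with a small simulation. The sketch below is illustrative only and does not reproduce any cited study: it assumes that every teacher has the same true effect, that an unobserved factor (here called `family_support`, a hypothetical variable) drives part of each student's gain, and that a naive model scores teachers by their class-average gain.

```python
import numpy as np

def naive_teacher_va(gains, teacher_ids):
    """Class-average gain per teacher, centered on the mean (hypothetical helper)."""
    teachers = np.unique(teacher_ids)
    means = np.array([gains[teacher_ids == t].mean() for t in teachers])
    return means - means.mean()

rng = np.random.default_rng(1)
n_teachers, class_size = 20, 25
n = n_teachers * class_size

# Unobserved background factor; all teachers have an identical true effect of zero.
family_support = rng.normal(0, 1, n)
gains = 1.0 * family_support + rng.normal(0, 1, n)

# Scenario A: students are assigned to teachers at random.
random_assign = rng.permutation(np.repeat(np.arange(n_teachers), class_size))

# Scenario B: students are grouped into classes by the unobserved factor.
sorted_assign = np.empty(n, dtype=int)
sorted_assign[np.argsort(family_support)] = np.repeat(np.arange(n_teachers), class_size)

spread_random = naive_teacher_va(gains, random_assign).std()
spread_sorted = naive_teacher_va(gains, sorted_assign).std()
```

Because no teacher differs from any other in this simulation, any spread in the estimates is spurious. Under random assignment the spread reflects sampling noise only, whereas under sorting the naive estimates differ widely and attribute background differences to teachers.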

Thus, researchers argue that value-added scores represent not only some “true” value that teachers add to student learning but also the influence of previous teachers, errors in measurement, and possible bias stemming from how students are assigned to classrooms and teachers to schools (Hill et al., 2011). Jerrim et al. (2025) found that much of the progress that primary school students make in reading and math is not solely due to the individual teacher they are assigned, but rather due to factors that operate across different teachers. At the same time, it is important to recognize that exam results and test scores alone are not sufficient to make sound judgments about school performance or teacher effectiveness (Thomas, 1998). Taken together, these debates indicate that the assumption that VAA can estimate causal effects and accurately distinguish the effects of teachers and schools is unreasonable.

Validity and Reliability Issues

The search for “accurate, verifiable information” about the effectiveness of teachers and schools has long frustrated educational researchers and practitioners, with many now believing that VAA holds the long-awaited solution to this problem (Wiley, 2006). However, the low stability of value-added scores has become a topic of widespread discussion (e.g., Levy et al., 2019; Yeh, 2020). For example, Rothstein (2009, 2017) found that the value-added model produces biased estimates of teacher contributions to student achievement. Numerous scholars have explored the causes of this instability and the variations in VAA results, uncovering a complex landscape of methodological challenges.

One significant issue is the inconsistency between different VAA models. Sass et al. (2014) observed instances where various VAA models produced different evaluation results for the same teachers or schools. This finding is corroborated by several studies indicating that teacher effect estimates from different value-added models can vary widely, with minimal overlap between teachers identified as high or low performers across different models (e.g. Kurtz, 2018; Newton et al., 2010). That is, depending on the model employed, a teacher may be classified anywhere from top-performing to average or even among the worst, raising serious questions about the reliability of these assessments.
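A simple way to quantify this kind of disagreement is to compare the rankings produced by two models and the overlap between the teachers each model places in its top group. The sketch below uses made-up scores for ten teachers; the helper names (`spearman`, `top_group_overlap`) and the two score vectors are hypothetical and are not drawn from Sass et al. (2014) or any other cited study.

```python
import numpy as np

def ranks(x):
    """Rank positions (0 = lowest); assumes no tied values."""
    return np.argsort(np.argsort(x))

def spearman(a, b):
    """Spearman rank correlation computed from rank positions."""
    return float(np.corrcoef(ranks(a), ranks(b))[0, 1])

def top_group_overlap(a, b, frac=0.2):
    """Share of the top `frac` of teachers under model A also in the top `frac` under model B."""
    k = max(1, int(round(frac * len(a))))
    top_a = set(np.argsort(a)[-k:].tolist())
    top_b = set(np.argsort(b)[-k:].tolist())
    return len(top_a & top_b) / k

# Hypothetical value-added estimates for the same ten teachers under two models.
model_a = np.array([0.9, 0.1, 0.5, -0.3, 0.7, -0.8, 0.2, -0.1, 0.4, -0.6])
model_b = np.array([0.2, 0.3, 0.8, -0.5, 0.6, -0.2, 0.9, -0.4, 0.1, -0.7])

rho = spearman(model_a, model_b)
overlap = top_group_overlap(model_a, model_b)
```

In this invented example the two models share no teachers in their top fifth, even though their overall rankings are not unrelated. This mirrors the pattern described above, in which classification at the extremes is more fragile than the overall correlation might suggest.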

Lockwood et al. (2007) delved deeper into the sources of this variability, finding that using different mathematics achievement measures leads to much greater differences in estimated effects than those caused by choosing various model specifications. Moreover, Papay (2011) highlighted additional sources of potential bias, noting that test timing and measurement errors can introduce inaccuracies in estimating teacher effectiveness, thereby compromising the validity of evaluations. Value-added scores in New York, North Carolina, Los Angeles, and likely other locations are moderately biased due to student sorting, with a magnitude sufficient to result in significant misclassification rates in evaluation systems based on value-added measures (Rothstein, 2017).

The instability of VAA results over time and across different classes presents another significant challenge. Berliner (2014) argued that VAA scores are not stable from class to class or year to year due to the myriad of exogenous variables that impact student achievement in the classroom and may never be stable enough to be used to evaluate teachers. For further refinement, Konstantopoulos (2014) suggested establishing a comprehensive teacher evaluation system in which value-added measures should neither be eliminated nor entirely relied upon.
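Part of this year-to-year instability can be anticipated from sampling noise alone, before any exogenous classroom factors are considered. The following is a minimal signal-plus-noise sketch rather than a model taken from the cited studies. It assumes that a teacher's estimate in year $t$ equals a constant true effect $\theta_j$ plus the average of $n$ independent student-level errors, and the symbols and numerical values are illustrative assumptions.

```latex
\hat{\theta}_{jt} = \theta_j + \bar{\varepsilon}_{jt},
\qquad
\operatorname{Var}(\bar{\varepsilon}_{jt}) = \frac{\sigma_\varepsilon^{2}}{n}

\operatorname{Corr}\bigl(\hat{\theta}_{jt}, \hat{\theta}_{j,t+1}\bigr)
  = \frac{\sigma_\theta^{2}}{\sigma_\theta^{2} + \sigma_\varepsilon^{2}/n}

% Illustrative values: \sigma_\theta = 0.15, \sigma_\varepsilon = 0.80, n = 25
\frac{0.0225}{0.0225 + 0.64/25} = \frac{0.0225}{0.0481} \approx 0.47
```

Under these assumed values, less than half of the variance in a single year's estimate reflects the stable teacher effect, so substantial movement in rankings between years would be expected even if true effectiveness never changed.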

These findings collectively emphasize the low validity and reliability of value-added assessment outcomes. The inconsistencies among different models, the impact of varying test measures, and the influence of external factors all contribute to a scenario where the reliability and validity of VAA are continually questioned.

Misuse Risks

There is substantial controversy regarding the application of value-added assessment results. While some scholars argue that the results of VAA can provide valuable feedback for teachers to enhance instruction and improve educational quality (S. M. Johnson, 2015), others caution against their misuse and potential negative consequences.

The American Educational Research Association (AERA, 2015) warns of biases in value-added score results and the high risks associated with misusing assessment results for high-stakes decision-making, which can have potential negative consequences. This concern is particularly relevant in the context of attempts to link teacher pay and tenure to performance, which not only involves high stakes but also diverges significantly from the original intent of value-added assessments (Caillier, 2010; Hershberg et al., 2004). Recently, studies have raised ongoing concerns about the misuse of VAA in high-stakes teacher evaluations. Legal efforts across the United States to block the use of VAA in high-stakes teacher evaluations, such as decisions on merit pay, tenure, and dismissal, have not succeeded (Amrein-Beardsley, 2023). These challenges highlight persistent doubts about the validity and fairness of such models. Amrein-Beardsley et al. (2023) urge caution in relying on VAA for consequential personnel decisions without stronger empirical support, calling for further research into its interpretation and application. Pivovarova and Amrein-Beardsley (2024) also stress that teachers’ score distributions must be considered when VAA is used alongside other indicators of teacher quality. Together, these studies highlight the need for more responsible, evidence-based integration of VAA into education policy.

In addition to the risks that value-added assessment poses to teacher retention and tenure, the most serious concern is the continuation of NCLB, which emphasizes content mastery above all other curricular components (Misco, 2008). This approach may result in overreliance on standardized testing, potentially leading to unfair evaluation results that overshadow other important factors. Additionally, excessive emphasis on quantitative metrics may incentivize a test-driven approach to teaching, producing misleading effects on school and teacher value-added scores (Ballou & Springer, 2015; D. Goldhaber, 2015).

While VAA results have the potential to provide useful insights, their application must be approached with caution. Wiley (2006) suggested that the results of VAA should not be used as the sole indicator of teacher effectiveness, and high-stakes decisions should not be made primarily based on VAA estimates. Thomas (1998) asserts that monitoring alone does not improve performance, nor does it provide definite distinctions or comparisons. He emphasizes that linking school effectiveness measures to school improvement is a process that starts with analysis but must continue beyond it. Those suggestions highlight the importance of viewing data analysis as a starting point for improvement rather than as the endpoint for an accountability system. A balanced approach that considers multiple educational factors beyond test scores is essential for fair and effective evaluation of schools and teachers.

Future Directions

In response to ongoing criticisms and the growing use of VAA in education, researchers have identified several emerging trends: refining VAA methodologies, enhancing evidence-based decision-making, promoting holistic student development, and integrating qualitative insights into VAA (see Table 6).

Table 6. Research Gaps and Future Directions of VAA.

Methodological Refinement

The most important point to understand is that while certain models have shown utility for targeted applications, no single approach provides an optimal solution universally, nor do they yield results that are reliable enough to warrant their use in high-stakes evaluations of teachers or schools (Hibpshman, 2004; Kim & Lalancette, 2013). Thus, one important direction for future research is the consideration of the cumulative impact of teachers and schools on student achievement, with a cautious approach to applying these results in evidence-based management rather than high-stakes accountability.

Researchers are increasingly focusing on the cumulative impact in evaluating teacher and school value-added scores. Lee and Choi (2024) applied VAA to assess the cumulative influence of teacher effectiveness on student achievement, highlighting its long-term effects on learning disparities. Similarly, Temurtaş and Aktan (2024) used test scores from the same students across three consecutive assessments to estimate teacher value-added scores. At the school level, Gao and Bi (2023) found that school effects were stable and consistent over time, with gross effects models showing greater stability than traditional value-added models. Page et al. (2024) also emphasized the need to account for temporal dependence in school performance, noting that ignoring it reduces estimation efficiency, while incorporating it, even when the dependence is weak, improves accuracy.

Recent studies are calling for future research to adopt a cumulative lens in evaluating teacher impact, school performance, and educational equity (Lee & Choi, 2024). By continuously improving the accuracy and reliability of VAA models, policymakers and educators will be able to place greater confidence in the results and use them more effectively to inform decision-making processes.

Evidence-Based Management

School evaluations and student assessment outcomes can serve as valuable tools for evidence-based management, informing decisions on resource allocation, student promotion and retention, and teacher professional development (Bayer et al., 2016). Amrein-Beardsley et al. (2023) call for further research to critically assess the validity of VAA interpretations to support more responsible and data-driven policy decisions.

Educational improvement should emphasize collaborative, school-wide strategies rather than focusing solely on individual teacher performance (Jerrim et al., 2025). Beyond high-stakes accountability, the application of VAA may also prove useful in understanding the efficacy of different routes into teaching. It may further help assess the impact of teacher-led instruction and classroom discussion on outcomes such as procedural knowledge, self-efficacy, and intrinsic motivation (Boel et al., 2025). Using a dynamic value-added model, Opare-Kumi (2024) found that students in English-medium instruction schools scored lower in mathematics than those taught in their mother tongue. In special education settings, Even and BenDavid-Hadar (2025) used VAA to link student performance gains to principals’ transformational leadership, suggesting that future research and policy should focus on leadership assignments and resource support for disadvantaged students.

Rather than being used solely as an outcome measure, VAA can also serve as a predictive tool. For example, D. Goldhaber et al. (2025) used value-added scores in math to examine how well external evaluations align with actual teacher impact. Similarly, Bertoni et al. (2024) applied VAA to assess the effectiveness of Peru’s teacher evaluation instruments.

Future research should view data analysis as a starting point for improvement, not merely an endpoint for accountability. VAA outcomes can serve as valuable tools for evidence-based management, guiding decisions on resource allocation, student progression, and teacher development.

Holistic Student Development

VAA is undergoing a significant transformation, shifting its primary focus from evaluating teachers and schools to assessing individual student growth. As policymakers increasingly prioritize teacher quality and accountability, there is a growing recognition of the importance of evaluating student growth rather than relying solely on absolute achievement scores (Anderman et al., 2015; Zhu & Wu, 2023). This shift aligns VAA more closely with goal orientation theory, emphasizing the monitoring of student development over time (Anderman et al., 2010).

Although many researchers have recognized the importance of evaluating student growth rather than solely relying on absolute student achievement scores to design accountability systems, many empirical studies on VAA fail to treat students as the primary subjects of assessment. Instead, these studies hold schools and teachers accountable by evaluating the value or growth students have achieved, rather than assessing the students directly.

An emerging trend is focusing on assessing student growth. This student-centered approach addresses some of the reliability and validity issues associated with using VAA for teacher and school accountability. For example, growth models such as the student growth percentile, proposed by Betebenner (2009), evaluate student progress directly, without the need to isolate the specific contributions of teachers or schools. This approach is inherently more equitable, as it recognizes and values progress for all students, considering their starting levels. Moreover, the shift toward measuring student growth mitigates potential misuse of high-stakes evaluation results. VAA, with students as the focus of assessment, measures their progress by tracking learning trajectories and growth trends. The results of this assessment provide positive, incentive-based feedback on student development.
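The idea of evaluating growth relative to a student's own starting level can be sketched as follows. This is a deliberately simplified illustration and not Betebenner's (2009) procedure, which relies on quantile regression; here students are merely grouped into prior-score bins and ranked against peers in the same bin. The function name `simple_growth_percentiles` and the example scores are hypothetical.

```python
import numpy as np

def simple_growth_percentiles(prior, current, n_bins=5):
    """Percentile of each student's current score among peers with similar prior scores.

    A binned approximation for illustration only, not an implementation of
    student growth percentiles as defined in the literature.
    """
    prior = np.asarray(prior, dtype=float)
    current = np.asarray(current, dtype=float)
    # Interior bin edges placed at prior-score quantiles.
    edges = np.quantile(prior, np.linspace(0, 1, n_bins + 1)[1:-1])
    bins = np.digitize(prior, edges)
    out = np.empty(len(prior))
    for b in np.unique(bins):
        idx = np.where(bins == b)[0]
        peers = current[idx]
        # Percent of academic peers with a strictly lower current score.
        out[idx] = [(peers < c).mean() * 100 for c in peers]
    return out

# Six hypothetical students: three with low prior scores, three with high prior scores.
prior = [10, 10, 10, 50, 50, 50]
current = [12, 15, 20, 48, 55, 60]
sgp = simple_growth_percentiles(prior, current, n_bins=2)
```

In this toy example, the low-prior student who reaches 20 and the high-prior student who reaches 60 receive the same growth percentile, because each outperformed the same share of peers who started at a similar level. This is the sense in which a growth-based measure recognizes progress regardless of starting point.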

In addition to shifting the focus of assessment to students’ growth, future research in the field of VAA will likely adopt a more comprehensive perspective on student growth. While VAA has traditionally focused on students’ academic achievement, there is growing recognition of the importance of non-cognitive factors and students’ holistic development. Several skills are relevant to student learning. Non-cognitive outcomes such as motivation, self-efficacy, and collaboration appear to be linked to student achievement, and these factors have received increased attention in recent years (Bayer et al., 2016). Scholars have acknowledged the multidimensional nature of students’ non-cognitive abilities and affective factors within the context of value-added assessment, focusing research on the student level and exploring how various student factors influence their growth (e.g. Aubery & Sahn, 2021; DeAngelis, 2021; Loeb et al., 2019). Recent studies using VAA demonstrate this shift. Li et al. (2024) used VAA to estimate the impact of parental involvement on children’s non-cognitive abilities, controlling for baseline, individual, family, class, and county-level factors. Donaldson et al. (2025) applied VAA to assess how schools contribute to students’ mental wellbeing beyond individual background factors during the transition from primary to secondary school. In higher education, Zhang et al. (2023) used VAA to assess how undergraduate education enhances students’ critical thinking.

Future VAA research should prioritize student assessment to strengthen its educational value and incentive structures. Expanding its scope can help address accountability concerns and support a more comprehensive evaluation system. Specifically, studies could explore integrating social-emotional skills, character traits, and other non-cognitive aspects of student growth. This shift would offer a fuller picture of development and inform teaching strategies that promote well-rounded learning.

Qualitative Data Integration

To date, most research on VAA has been purely quantitative (Hill et al., 2011). This overemphasis on quantitative metrics in VAA neglects essential dimensions such as non-cognitive abilities, creativity, and critical thinking, which can result in a test-oriented approach to teaching. This practical bias leads to unstable results in evaluating schools and teachers, raising concerns about transparency and incentivizing deceptive practices (Amrein-Beardsley & Holloway, 2017). For instance, if a teacher or school is financially rewarded by the state through a merit pay program for maximizing standardized test scores, they may divert resources away from activities that foster character education (DeAngelis, 2021). While using annual student achievement data for educational accountability purposes has some benefits, employing students’ progress within an academic year for systematic formative assessment has been proposed as a more comprehensive method. Formative assessment, unlike high-stakes summative evaluation, seeks to prevent issues proactively instead of merely ensuring accountability or retrospectively reporting outcomes (Cummings et al., 2015; Papay, 2011). Thus, a promising direction for future research in the field of VAA involves the integration of qualitative data that extends beyond traditional standardized tests.

As education evolves to prioritize 21st-century skills and personalized learning, there is a growing emphasis on authentic assessments that reflect real-world tasks and problem-solving abilities. Future research should explore practical mechanisms for integrating qualitative data into VAA to capture students’ holistic development. Students are not merely academic performers but whole persons whose growth includes non-cognitive dimensions such as resilience, collaboration, motivation, and emotional well-being. These aspects, often overlooked in large-scale or standardized tests, can be better assessed through qualitative methods. Qualitative data, including classroom observations, student portfolios, and teacher reflections, can offer valuable insights into teaching practices, student engagement, and instructional quality. For instance, tools like e-portfolios, classroom observations, and interviews allow for process-oriented assessment, documenting students’ learning journeys over time. Student portfolios can showcase progress in creativity or teamwork; classroom observations may reveal engagement and behavioral development; interviews provide insight into self-regulation or goal-setting. By combining these qualitative sources with quantitative VAA data, researchers can construct a more accurate and comprehensive picture of student growth.

Conclusion

VAA has emerged as a crucial method in global educational evaluation, evolving through phases of inception, expansion, maturity and critical reflection. This progression has established a comprehensive system for gauging school and teacher effectiveness while tracking student achievement. VAA’s empirical focus, targeting schools, teachers, programs, and students, emphasizes its versatility and significance in shaping educational policies and practices. Despite its widespread adoption and potential benefits, VAA faces scrutiny. Critics highlight several concerns, such as the reliance on potentially flawed assumptions, questions regarding the validity and reliability of outcomes, risks of misuse, and an overemphasis on quantitative metrics. These limitations may threaten the equity of the individuals being assessed. These criticisms emphasize the need for ongoing research trends, including methodological advancements, evidence-based management within educational institutions, a broader understanding of student growth, and the incorporation of qualitative data.

This study has conducted a comprehensive review of the development, implementation, and concerns of VAA, and it offers implications for policymakers, educators, and researchers. While VAA has gained widespread use in the USA due to supportive policies, its adoption remains limited in many other parts of the world due to a lack of available datasets (Jerrim et al., 2025). To leverage the full potential of VAA in educational assessment, longitudinal data regarding students’ large-scale testing and other background information are needed to facilitate its integration into educational practices (Temurtaş & Aktan, 2024). For policymakers, more national-level policies should be developed to promote the application of VAA in educational practices. For educators, VAA should be incorporated into different types of assessments, such as formative and summative assessments, to ensure a comprehensive evaluation system. For researchers, additional research is necessary to explore the practical implementation of VAA in assessing students’ achievement, helping to clarify misconceptions and reduce errors in educational practice. These insights may guide future research and reforms in educational evaluation.

However, the review has some limitations. Relying on the Web of Science (WoS) database as the sole data source may have introduced potential biases, including a preference for English-language publications and the exclusion of policy-related gray literature, which could limit the diversity of perspectives considered. A cross-database replication is recommended for future research. In addition, the rapid pace of publication makes it challenging to keep the review completely current.

Future research should examine the global applicability of VAA, particularly in low-resource and non-Western contexts, where evidence remains limited due to insufficient data (Jerrim et al., 2025). To track student achievement effectively, countries need to establish comprehensive longitudinal data systems, including large-scale exam results and background information of students. Additionally, integrating qualitative data can support holistic student development. As education systems worldwide face evolving challenges in assessment and accountability, VAA holds potential for promoting more equitable and informed evaluations of educational outcomes.

Footnotes

Ethical Considerations

Not applicable. The authors did not interact with any human participants/subjects or identifiable private information.

Author Contributions

Conceptualization, Chen. J.; validation, Chen. J.; methodology, Chen. J.; formal analysis, Wan. X.; writing - original draft, Chen. J. and Wan. X.; writing - review and editing, Chen. J., Wan. X., and Luo. Z.; supervision, Chen. J.; funding acquisition, Chen. J. All authors have read and agreed to the published version of the manuscript.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The Chongqing Federation of Social Sciences (grant number: 2021NDYB120), the Chongqing Higher Education Association (grant number: CQGJ21B027), and the Chongqing Normal University Doctoral Program (grant number: 23XWB058).

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

The data generated by this study is available from the corresponding author upon reasonable request.