Abstract

This study, which delves into the role of artificial intelligence (AI) in detecting disinformation within media platforms, is of paramount importance in the current digital age. By employing thematic and sentiment analysis of the scientific literature, it addresses crucial technological challenges, the impact of public trust, the significance of high-quality and diverse data, and advanced forms of content manipulation, such as deepfakes and automated accounts (bots). The study’s findings, which reveal prevailing scepticism about the reliability of AI, underscore the need for transparent algorithms and the inclusion of media literacy. Moreover, the study underscores the importance of tackling ethical dilemmas, the value of cross-sectoral collaboration, and the development of more transparent and responsible AI solutions in the fight against disinformation.

Keywords

Introduction

Industry 4.0 has ushered in the era of digital communication, where social media has emerged as a central source of information (de Lima Santos et al., 2024). However, these same networks also facilitate the rapid and widespread dissemination of disinformation, a pressing issue in today’s digital age. Social media platforms such as Facebook, X, Instagram, YouTube, and TikTok enable the rapid spread of content without proper fact-checking (Calvo-Gutiérrez & Marín-Lladó, 2023; Denniss & Lindberg, 2025).

Algorithms that prioritise engagement and emotionally charged content inadvertently facilitate the rapid spread of fake news, often faster than verified news. In this context, the role of AI is pivotal. AI’s ability to swiftly process large volumes of data, detect patterns, and automate fact-checking is a powerful tool in identifying and curtailing the spread of fake news (Paschen, 2020; Shu et al., 2020).

However, the use of AI poses technical, social, and ethical challenges. These include the limited reliability of models, algorithmic opacity (the so-called black box problem), the lack of standardised and diverse data, and unresolved questions about the relationship between privacy and transparency (Kreps et al., 2022; Marsden et al., 2020). In practice, many models are based on culturally or linguistically specific datasets, which limits how well they transfer to other contexts (Ödmark, 2023).

To implement AI solutions effectively in the media environment, it is essential to maintain public trust. This trust rests on users' understanding of, and control over, algorithmic decisions. Where it is absent, the risk of societal resistance to the technology increases (Tandoc & Seet, 2024).

The question also arises of how AI deals with increasingly sophisticated forms of disinformation in practice. These include deepfakes and automatically generated content, such as publications. Consequently, the importance of educational programmes and public initiatives in promoting media literacy must also be problematised (Adjin-Tettey, 2022; Vaccari & Chadwick, 2020).

The study aims to answer five research questions (RQ): (RQ1) what technological and methodological barriers hinder the use of AI to detect disinformation; (RQ2) what role public trust plays and how it can be strengthened; (RQ3) how data quality and diversity affect the performance of models; (RQ4) what approaches can be used to control advanced forms of manipulation; and (RQ5) how educational programmes can reduce the impact of false information in the digital space.

This study combines theoretical insights through a systematic analysis of scientific articles, providing a comprehensive understanding of the challenges and opportunities associated with using AI in the context of preventing the spread of disinformation.

Theoretical Background

Social networks have become the main channel for spreading news and disinformation. This is largely due to their design, which enables content to spread rapidly without proper fact-checking. Algorithms based on user preferences often inadvertently increase the visibility of false information, thereby affecting the reliability of digital sources (Cinelli et al., 2020; Tandoc et al., 2018).

AI, through machine learning, deep learning, and advanced models (e.g. GPT-4, CLIP, Claude, Perplexity), enables the rapid identification of fake content. However, it has limitations: it struggles to interpret ambiguous or satirical content and is often tailored to English only, which reduces its effectiveness in other languages and environments. A further challenge is that the same AI tools can be used to create misinformation (e.g. deepfakes), which complicates detection (Vosoughi et al., 2018; C. Zhang et al., 2019). Detecting disinformation reliably therefore requires developers, linguists, sociologists, and communities to work together, and AI to be adapted to local languages and cultures to avoid systemic errors and unintentional censorship (Shu et al., 2017).

The use of AI also raises significant ethical challenges. The opacity of algorithms, often described as “black boxes,” erodes public trust. There is a risk of private data misuse, as well as the potential for surveillance or censorship, especially in authoritarian countries. The development of explainable AI (XAI) and clear rules to protect privacy are key (Cinelli et al., 2020; Shu et al., 2017).

Data quality and diversity are essential for the accuracy of models; therefore, it is crucial to standardise and improve data quality (Shu et al., 2017; Vosoughi et al., 2018). Successful deployment of AI to detect disinformation requires the cooperation of all stakeholders and the strengthening of media literacy (Lazer et al., 2018).

The theoretical background is complemented by the theories of agenda-setting and of uses and gratifications (need satisfaction), which explain how AI shapes the information environment and reinforces user preferences, leading to information bubbles. Transparency, ethical design, and respect for information diversity are key to mitigating misinformation (Shu et al., 2017).

Research Methodology

Article Selection Criteria

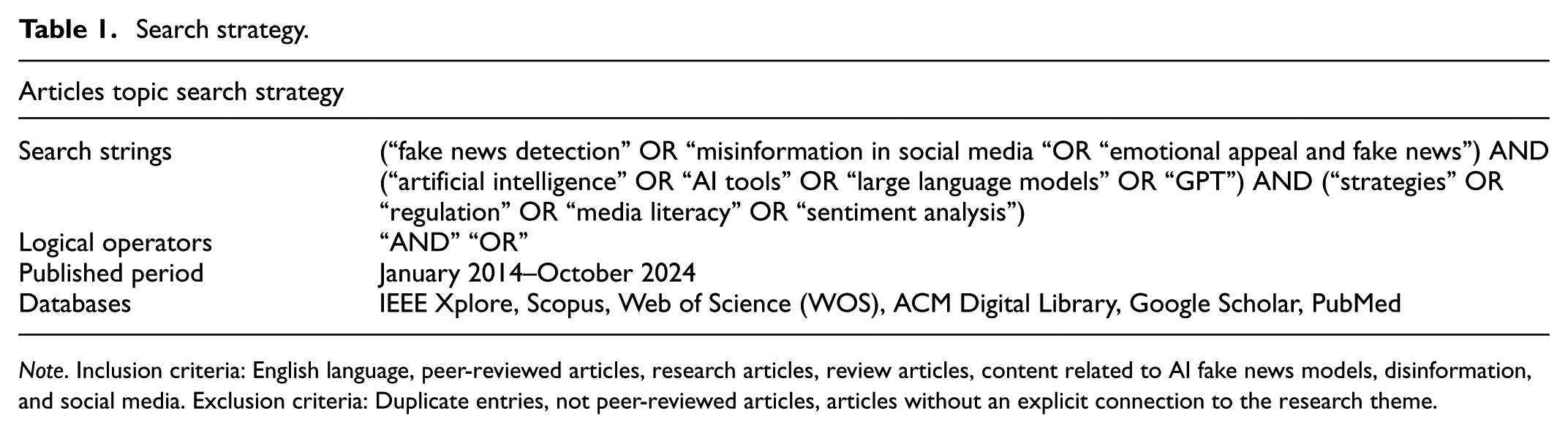

A systematic search strategy was used to find relevant articles. To provide better transparency of the flow of scientific articles, the search strategy itself is presented in Table 1. With this search strategy, we aimed to encompass the widest possible corpus of scientific articles related to the research area: the role of AI in detecting and limiting the spread of disinformation. We placed special emphasis on articles that address topics such as fake news, users' emotional responses, and the impact on health and politics.

Search strategy.

Note. Inclusion criteria: English language, peer-reviewed articles, research articles, review articles, content related to AI fake news models, disinformation, and social media. Exclusion criteria: Duplicate entries, not peer-reviewed articles, articles without an explicit connection to the research theme.

We searched for articles in scientific literature databases, applying inclusion and exclusion criteria. To achieve this, we combined search strings using logical operators (AND and OR; see Table 1), which allowed us to obtain either broader or more specific results.
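As a rough illustration of how logical operators broaden or narrow a search, the sketch below builds two query strings. The term groups and the helper names `or_group` and `and_query` are hypothetical; the strings actually used are those listed in Table 1.

```python
def or_group(terms):
    """Join synonyms with OR and wrap the group in parentheses."""
    quoted = [f'"{term}"' for term in terms]
    return "(" + " OR ".join(quoted) + ")"


def and_query(*groups):
    """Require every concept group to match by joining the groups with AND."""
    return " AND ".join(or_group(group) for group in groups)


# Hypothetical term groups chosen for illustration only.
technology = ["artificial intelligence", "AI"]
phenomenon = ["fake news", "disinformation"]
platform = ["social media"]

# OR inside a group broadens the search; each extra AND group narrows it.
broad_query = and_query(technology, phenomenon)
narrow_query = and_query(technology, phenomenon, platform)

print(broad_query)
print(narrow_query)
```

Adding the `platform` group can only reduce the number of hits, whereas adding a synonym to an existing group can only increase it.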

We structured the search strategy so that the subsequent analysis would include both articles that discuss technical aspects of the research phenomenon and articles that examine the impact of disinformation and approaches to limiting it.

From the preliminary keyword search, 1,239 articles were identified. After applying filters to peer-reviewed English-language articles and removing duplicates, 1,087 articles remained. Further review of the WOS database yielded nine additional articles.

The following phase involved a thorough examination of 1,096 articles by reviewing their titles and abstracts. The ASReview software was used to identify articles relevant to the subject matter, allowing articles to be ranked based on user preferences (Van de Schoot et al., 2021). Ultimately, 462 articles were excluded because they did not explicitly address the connections among AI, fake news, and disinformation. Additionally, 82 articles were dismissed because they lacked clear references to AI, and a further 65 articles were excluded as irrelevant to the topics of fake news and disinformation. The article selection process is presented in Figure 1.

Articles search strategy.

This resulted in a final set of 487 articles published between 2014 and October 31, 2024. The main sources included Communication Research, Journal of Media Studies, AI & Society, IEEE Access, IEEE Transactions on Computational Social Systems, Journal of Information Technology, Frontiers in Psychology, Journalism Practice, Journal of Mass Media Ethics, Atlantic Journal of Communication, Journal of Computational Social Science, American Behavioral Scientist, Newspaper Research Journal, Journal of Social Media and Society, Communication Quarterly, and Critical Studies in Media Communication.
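The selection counts reported above can be checked with a few lines of arithmetic. The sketch below uses only the figures given in the text; the variable names are illustrative.

```python
# Counts taken from the text; variable names are illustrative.
identified = 1239       # records from the preliminary keyword search
after_filters = 1087    # after peer-review/English filters and duplicate removal
added_from_wos = 9      # additional records from the further WOS review

screened = after_filters + added_from_wos

exclusions = {
    "no explicit link among AI, fake news, and disinformation": 462,
    "no clear reference to AI": 82,
    "irrelevant to fake news and disinformation": 65,
}

included = screened - sum(exclusions.values())
removed_by_filters = identified - after_filters

print(f"screened={screened}, excluded={sum(exclusions.values())}, included={included}")
print(f"removed by filters and deduplication={removed_by_filters}")
```

The totals agree with the text: 1,096 records were screened, 609 were excluded, and 487 remained.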

Thematic and Sentiment Analysis

Preparation and Execution of Coding

Four researchers conducted the coding independently. They had predetermined a set of codes and themes, which helped them further review and label the content. Each researcher used the same database and followed uniform coding guidelines when coding.

Throughout all stages of coding, the researchers coordinated regularly to reach a common understanding of the codes, clarify ambiguities, and keep the process consistent. Because discrepancies were resolved as they arose and through discussion, inter-rater reliability was not formally calculated. This approach follows established qualitative research practices, which emphasise that a consistent shared understanding is more important than numerical metrics (Campbell et al., 2013; Saldana, 2013).

Barbour (2001) also emphasises that when researchers are involved in ongoing reflections, coordination, and the formation of common agreements, inter-rater coefficients such as Cohen’s Kappa or Krippendorff’s Alpha are not always necessary or advisable. Nowell et al. (2017) suggest that, rather than focusing on inter-rater coefficients, it is essential to emphasise achieving consensus, a characteristic particularly relevant to interpretative qualitative research.

Preparing Text for Analysis

The texts of the scientific articles were pre-processed before the sentiment analysis and thematic coding. Text pre-processing reduced noise in the data and improved the consistency of linguistic units, ensuring a more reliable and comparable analysis.

The following procedures were performed as part of the text preprocessing process:

Stopwords: common and analytically irrelevant words, such as “the,” “is,” and “in,” were removed. These words carry little meaning for content classification or emotional evaluation.

Lemmatization: words were reduced to their base (dictionary) forms. For example, “writing,” “written,” and “writes” were recognised as the same unit, “write.” This reduced redundancy and improved concept recognition.

Text standardisation: all characters were converted to lowercase, and punctuation marks, numbers, special characters, and HTML tags were removed. This ensured that the algorithms processed all content uniformly, so that superficial variations in form did not affect the results.
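The three procedures above can be sketched as a small pipeline. This is a minimal illustration using only the Python standard library, not the tooling used in the study: `STOPWORDS` and `LEMMAS` are tiny hypothetical stand-ins for a full stopword list and a proper lemmatizer.

```python
import html
import re

# Hypothetical stand-ins so the example is self-contained; a real pipeline
# would use a full stopword list and a dictionary-based lemmatizer.
STOPWORDS = {"the", "is", "in", "a", "of", "and"}
LEMMAS = {"writing": "write", "written": "write", "writes": "write"}


def standardise(text):
    """Lowercase the text and strip HTML tags, digits, and punctuation."""
    text = html.unescape(text)
    text = re.sub(r"<[^>]+>", " ", text)   # remove HTML tags
    text = text.lower()
    # Keep ASCII letters and whitespace only (assumes English-language input).
    return re.sub(r"[^a-z\s]", " ", text)


def preprocess(text):
    """Standardise, drop stopwords, then map each token to its base form."""
    tokens = standardise(text).split()
    tokens = [token for token in tokens if token not in STOPWORDS]
    return [LEMMAS.get(token, token) for token in tokens]


sample = "<p>The author WRITES in 2020; the report is written.</p>"
print(preprocess(sample))
```

For the sample sentence, the tags, the year, and the punctuation disappear, the stopwords are dropped, and both "writes" and "written" collapse to "write".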

Text Analysis Processes

This subsection outlines the processes involved in article analysis.

First Step: Identifying Key Ideas, Themes, and Tones

Key ideas were identified based on a systematic review of the articles, highlighting the following themes:

The spread of disinformation on social media: It has become a prominent concern that necessitates further examination. The rapid dissemination of misinformation and disinformation on social media platforms has the potential to significantly influence public opinion. It is not uncommon for social media platforms, such as Twitter, Facebook, and YouTube, to be utilised as conduits for disseminating misinformation, potentially exacerbating social tensions.

The role of AI in creating and detecting fake news: The emergence of sophisticated AI tools, including GPT models and deep-fake technologies, has facilitated the creation of fake content, thereby exacerbating the issue of disinformation. AI technologies have the potential to be utilised in identifying fake content. Nevertheless, the dependability of these technologies for detecting fake content remains a topic of contention.

Educational and regulatory measures for disinformation limitation: Implementing educational and regulatory measures is a key strategy for restricting the dissemination of disinformation. This theme emphasises the necessity of educating the public on identifying fake news and of regulating the dissemination of disinformation on digital platforms.

The impact of COVID-19 on the spread of health misinformation: The emergence of the global pandemic caused by SARS-CoV-2 has led to a notable increase in the circulation of misinformation, particularly regarding the efficacy and safety of vaccines and the implementation of health measures. A lack of trust in scientific sources and the proliferation of health misinformation can have a significant impact on public health.

Financial motivations for creating fake news: Deceptive content is often generated for financial gain. Those who disseminate fake news frequently seek profits through clicks and advertisements, which suggests that financial gain, rather than the veracity of their assertions, is their primary motivation.

The impact of disinformation on democracy and political processes: Extensive research has examined the influence of misinformation on electoral processes and public political attitudes. These studies have identified the potential for such information to exacerbate conflicts and erode confidence in democratic institutions.

Emotional impact of fake news: It can influence how individuals perceive, process, and disseminate the information they encounter, thereby affecting their interactions with the surrounding environment. The expression of negative emotions, such as disgust and anger, is a common feature of fake news, facilitating its rapid dissemination.

Use of bots and automated accounts to spread disinformation: Deploying automated accounts and bots to propagate misinformation is a significant challenge in digital communications. Bots and automated accounts are commonly used to disseminate content on social media platforms, which extends the reach and impact of misinformation and complicates its containment.

In the subsequent steps of the analysis, more detailed codes were created with the assistance of the initial thematic groups. Structured thematic and sentiment analyses were conducted for each theme.

Second Step: Coding

All references were downloaded from literature databases and organised in an Excel spreadsheet for subsequent analysis. The spreadsheet included the following details for each reference: title, author, journal, abstract, and keywords. Next, the abstracts and full texts of the selected articles were systematically reviewed and coded according to the predefined criteria (Bandara et al., 2015).

The coding process focused on specific dimensions relevant to the research questions, effectively addressing the topics and themes of the articles, thereby achieving the initial objective (Woike, 2007). The objective was to determine whether certain text sections enhance the understanding of key aspects of text analysis, including the influence of artificial intelligence, ethical and legal challenges, misinformation, and digital literacy.

The frequency of text concepts or words is a crucial indicator of central themes. Thematic coherence and pattern repetition were also taken into consideration. Ideas or phrases that conveyed similar concepts or addressed related themes were assigned single codes (Saldaña, 2009). All instances of the same or associated ideas were grouped under a single code to ensure consistency. The codes were designed to capture the essence of the ideas or themes under examination (Williams & Moser, 2019).
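The idea of grouping related phrases under a single code and counting how often each code occurs can be sketched as follows. The coding in this study was carried out by the researchers themselves; the codebook, the passages, and the simple substring matching below are hypothetical and serve only to illustrate the counting logic.

```python
from collections import Counter

# Hypothetical codebook: each code lists phrases treated as the same idea.
CODEBOOK = {
    "deepfakes": ["deepfake", "synthetic video"],
    "bots": ["bot", "automated account"],
    "media literacy": ["media literacy", "digital literacy"],
}


def assign_codes(passage):
    """Return the set of codes whose phrases occur in the passage.

    Plain substring matching is used for brevity; it would also match
    unrelated words such as "both", so real coding needs human judgement.
    """
    lowered = passage.lower()
    return {
        code
        for code, phrases in CODEBOOK.items()
        if any(phrase in lowered for phrase in phrases)
    }


# Hypothetical passages standing in for coded text segments.
passages = [
    "Deepfake detection remains unreliable.",
    "Automated accounts amplify false claims; bots act in coordination.",
    "Digital literacy programmes reduce sharing of synthetic video.",
]

code_counts = Counter()
for passage in passages:
    code_counts.update(assign_codes(passage))

print(code_counts.most_common())
```

Each passage contributes at most once to a code, so the counts reflect how many passages touch an idea rather than how many times a word is repeated.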

Additionally, it was crucial to logically connect the codes to significant themes or concepts within the articles to maintain coherence and consistency in the analysis (Creswell, 2003). The relevance of the selected codes to the overall analysis and conclusions was also taken into consideration. This approach ensured that the final recommendations for both practice and theory were grounded in the most pertinent available information, thereby enhancing the rigour of the process. The initial coding system was preserved throughout the analysis, and new codes were aligned with those initially identified, along with substantive themes. While the authors coded independently, they consulted regularly to address issues or inconsistencies and worked with the same version of the database. As a result, we did not calculate the inter-rater reliability. Table 2 lists the main codes that cover several aspects of the analysed articles.

Themes and Codes.

Source. Authors’ analyses.

The codes identified in Table 2 represent the fundamental concepts of each theme as outlined in the second phase of the analysis. Furthermore, applying codes enabled a comprehensive and systematic examination of the recurring elements in the text. In the third phase, these codes facilitate the recognition of patterns and structures, which assists in the subsequent implementation of the thematic analysis.

Third Step: Themes Design Process

The goal of the third step is to review the codes, identify patterns between them, and form broader thematic clusters (Attride-Stirling, 2001). Within this framework, content-like codes were gradually merged into larger and more meaningful themes that reflected the main emphasis of the analysed articles.

The first stage (i) of the theme generation process involved reviewing the codes for individual themes: we examined each code, identified common elements, and verified whether multiple codes referred to similar ideas. The codes were then grouped into more structured thematic clusters. The second stage (ii) involved forming thematic patterns, that is, determining a broader pattern for each theme based on the similarities and connections between codes, which facilitated the further analysis steps (Roblek & Dimovski, 2024).

Thematic patterns were developed based on the similarities and common elements between the codes, as presented in Table 3.

Theme, Codes, and Theme Description.

Grouping the codes into themes makes the key patterns in the text easier to identify. Each theme comprises several codes that collectively represent specific aspects of the analysed content. The fourth step examined the themes to ascertain their relevance and consistency with the original codes.
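Merging codes into broader themes amounts to a many-to-one mapping followed by an aggregation. The sketch below assumes a hypothetical code-to-theme assignment and invented counts; it does not reproduce Table 3.

```python
from collections import defaultdict

# Hypothetical assignments and counts for illustration, not the study's data.
CODE_TO_THEME = {
    "deepfakes": "The role of AI in disinformation",
    "AI detection": "The role of AI in disinformation",
    "bots": "Role of bots and automated accounts",
    "media literacy": "Educational and regulatory measures",
    "regulation": "Educational and regulatory measures",
}
code_counts = {
    "deepfakes": 4,
    "AI detection": 6,
    "bots": 3,
    "media literacy": 5,
    "regulation": 2,
}

# Collect, for every theme, its member codes and their summed frequency.
themes = defaultdict(lambda: {"codes": [], "frequency": 0})
for code, count in code_counts.items():
    theme = CODE_TO_THEME[code]
    themes[theme]["codes"].append(code)
    themes[theme]["frequency"] += count

# List themes from most to least frequent.
for name, info in sorted(themes.items(), key=lambda item: -item[1]["frequency"]):
    print(f"{name}: frequency={info['frequency']}, codes={info['codes']}")
```

Keeping the member codes alongside each theme makes it straightforward to check, as in the fourth step, that every code has been integrated into a theme.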

Fourth Step: Re-Examining and Re-Evaluating the Previously Discussed Topics

The fourth step was to determine whether the themes adequately addressed all the relevant content of the articles. If this was not the case, the content needed to be supplemented or adapted. Moreover, it was crucial to determine whether all codes had been successfully integrated into existing topics and whether any similarities could facilitate the consolidation or further development of each topic.

The themes were well-conceived and pertinent to the subject matter, demonstrating a clear understanding of the key issues at hand. Each theme addressed a significant aspect of the content being analysed. The verification process demonstrated that each topic adequately addressed the relevant codes pertinent to the specific domains of disinformation or fake news. Thus, no alterations or modifications were required for the topics.

Fifth Step: Identification and Definition of Themes

Each theme was assigned a name in the fifth step and subjected to a comprehensive definition. This approach facilitated an understanding of the concept's significance and its role in clarifying the content under examination. This definition also serves as the foundation for subsequent analytical procedures, including sentiment analysis.

Social media and the spread of disinformation: This theme examines the role of social networks, including Facebook, Twitter, and Instagram, in facilitating the rapid dissemination of disinformation. Furthermore, the role of social influencers, whose considerable reach can inadvertently contribute to the propagation of disinformation, is a crucial element of this field of study.

The role of AI in disinformation: This theme examines the dual role of artificial intelligence (AI) in creating and detecting fake content. It addresses ethical concerns regarding the use of AI, its unreliability in journalism, and the potential benefits of employing AI to detect deepfake content.

Educational and regulatory measures as solutions: This theme proposes enhancing media literacy and implementing legislative and regulatory measures to curtail misinformation. It incorporates strategies to enhance public awareness of disinformation and enact legislation to restrict the dissemination of false content on digital platforms.

Health-related disinformation and COVID-19: This theme examines the impact of misinformation surrounding the COVID-19 pandemic, vaccines, and public health. It covers the influence of misinformation on public confidence in scientific sources, its impact on decisions regarding protective measures and vaccination, and the implications of such decisions for public health.

Commercial motives and sensationalism: This theme examines the financial interests that drive the creation of sensationalist content and fake news to increase traffic and advertising revenue. The subject matter focuses on the profitability of fake news, which is often based on attracting clicks and attention rather than disseminating accurate information.

Political manipulation and the impact of disinformation on democracy: This theme examines how fake news and automated accounts, such as bots, influence political views and create and promote conflict between different social groups. It includes the use of disinformation to influence electoral outcomes and exacerbate social discord.

Emotional impact of fake news: This theme concerns the influence of intense emotions, such as anger and disgust, on the propagation of misinformation. Emotional charges affect how the public perceives news and encourage its dissemination.

Role of bots and automated accounts in spreading disinformation: This theme encompasses the use of bots and automated accounts to disseminate misinformation on social media platforms, where automation enables the rapid dissemination of deceptive content on a larger scale.

The fifth step concludes the thematic network analysis. The eight themes collectively illuminate a complex ecosystem of disinformation and fake news in which social, technological, economic, and political factors are inextricably linked. Many factors contribute to the creation, dissemination, and impact of disinformation in the modern age, including social media, the dynamics of AI technology, emotional manipulation, and regulatory loopholes. Figure 2 illustrates the thematic network within the disinformation ecosystem.

Ecosystem of disinformation and fake news.

Figure 2 depicts the main themes as nodes around the central theme, “Ecosystem of Disinformation.” This overarching theme unifies a range of interrelated sub-themes, including the influence of social networks, the role of artificial intelligence, commercial motives, political manipulation, and other significant factors, with each connection signifying a pertinent relationship or similarity between them. The interconnections between topics, such as the relationships among social media, automation, and bots, illustrate how diverse forces interact and reinforce the dissemination of misinformation.

The sixth step employs thematic definitions to facilitate the subsequent analysis phases. Consequently, the codes, frequencies, and quotes for each theme were collated into a table, after which a sentiment analysis was conducted.

Sixth Step: Themes, Code, Frequency, and Quotes

Table 4 presents an overview of the main topics, frequencies, associated codes, and at least five citations for each topic. These data allow for a more detailed analysis of the key elements of disinformation and fake news. The number of quotations needed for a thorough analysis depends on the complexity of the topic, the length of the text, and the depth of the required analysis (Creswell & Poth, 2017; Wankhade et al., 2022). Following the recommendation that deeper analyses require at least five citations, we included five citations for each topic (Braun & Clarke, 2006; Creswell & Poth, 2017). This approach enables a more comprehensive examination of the various perspectives involved (Patton, 2014). Approximately five citations per topic are, in principle, sufficient for an effective and balanced analysis, although additional citations can offer deeper insights into sentiments and the diversity of opinions. It is essential to include both extreme and middle quotes so that outlying values and a variety of tones are captured and the data are represented accurately. This makes it possible to ascertain which elements carry the most positive or negative tone and to identify the various tones present in the text, including neutral elements (Patton, 2014; Sandelowski, 1995).

Themes, Codes, and Citations.

Frequency: How often a theme is mentioned in the articles indicates its prominence, with more frequently discussed themes receiving greater attention.

Citation: The selected citations elucidate the essential aspects of each theme, thereby facilitating a more nuanced comprehension of the context of each code.

This table presents the frequency of occurrence for each theme, offering insights into the themes that were most prevalent in the analysis.

Seventh Step: Sentiment Analysis

As part of the sentiment analysis, the emotional tone of each topic was classified (e.g. positive, negative, neutral), and we assessed whether this tone is expressed in the text (Boukes et al., 2020). The application of sentiment analysis facilitated the identification of the text’s prevailing emotional tone and attitude (Kratzwald et al., 2018). Moreover, sentiment analysis facilitated the visualisation of sentiment distribution by topic (Gandhi et al., 2023).

Sentiment analysis was conducted using the TextBlob lexicon method (Bonta et al., 2019). This method relies on a list of words with pre-established sentiment values, and each word found in a text contributes to the calculation of the text’s overall tone (Chandrasekaran & Hemanth, 2022). TextBlob is a Python library with a robust and validated lexical base, which allows texts to be classified efficiently by emotional tone (Bonta et al., 2019). The tool is thus suitable for quickly analysing larger text corpora because it calculates sentence polarity and identifies basic emotional tones (positive, negative, neutral) without the need for complex models. However, TextBlob also has limitations that we considered when interpreting the results: (i) it cannot detect sarcasm, irony, or complex rhetorical figures, so it may mislabel certain statements (e.g. as neutral or positive even though they are critical or negative); and (ii) it is less accurate on domain-specific language, such as expressions from politics, media, or healthcare, because its lexicon was built for general language and may misinterpret specific contexts or technical terminology (Chandrasekaran & Hemanth, 2022). Table 5 presents the results of the sentiment analysis for each theme.

To offset these limitations in interpreting the results, we supplemented the sentiment analysis with thematic analysis. This enables a more in-depth understanding of the meanings within the contexts of the articles (Braun & Clarke, 2006). Table 5 presents the sentiment analysis for each theme.
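To make the lexicon principle and its sarcasm limitation concrete, the following sketch scores text against a toy lexicon. It is a simplified stand-in written in plain Python, not TextBlob itself: the `LEXICON` entries and their values are invented for illustration, and plain averaging is an assumption. In TextBlob, the corresponding value is exposed as `TextBlob(text).sentiment.polarity`, and its scores will differ from those produced here.

```python
import re

# Toy lexicon with invented polarity values in [-1, 1] (assumption).
LEXICON = {
    "reliable": 0.6,
    "effective": 0.5,
    "great": 0.8,
    "misleading": -0.6,
    "harmful": -0.7,
    "unreliable": -0.6,
}


def polarity(text):
    """Average the polarity of lexicon words found in the text (0.0 if none)."""
    words = re.findall(r"[a-z]+", text.lower())
    scores = [LEXICON[word] for word in words if word in LEXICON]
    return sum(scores) / len(scores) if scores else 0.0


positive = polarity("Automated detection is effective and reliable.")
negative = polarity("Bots spread harmful and misleading claims.")
# A sarcastic, critical sentence: the lexicon only sees "great" and "reliable".
sarcastic = polarity("Great, another reliable detector that flags satire as fake.")

print(round(positive, 2), round(negative, 2), round(sarcastic, 2))
```

The third sentence is critical in intent yet receives a positive score, because a word-level lexicon has no access to irony. This is the kind of misclassification described in limitation (i).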

Sentiment Analysis for Each Theme.

The explanation of the columns in Table 5:

Average sentiment score: The average sentiment value for each topic, ranging from -1 (completely negative) to 1 (completely positive).

Sentiment category: The tone of each topic is rated based on the average score.

Individual sentiments: The sentiments of the individual quotes are used to calculate the average.
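The relationship between the three columns can be illustrated with a short calculation: individual quote scores are averaged, and the average is mapped to a category. The cut-off values and the quote scores below are assumptions made for the example; the thresholds behind the categories in Table 5 are not stated in the text.

```python
from statistics import mean


def categorise(score, slight=0.05, strong=0.3):
    """Map an average polarity in [-1, 1] to a label.

    The cut-offs `slight` and `strong` are hypothetical defaults.
    """
    if score >= strong:
        return "positive"
    if score >= slight:
        return "slightly positive"
    if score > -slight:
        return "neutral"
    if score > -strong:
        return "slightly negative"
    return "negative"


# Hypothetical quote-level polarities for two themes (five quotes each).
quote_scores = {
    "Theme A": [0.20, -0.10, 0.15, 0.05, 0.10],
    "Theme B": [-0.50, -0.30, -0.40, -0.20, -0.35],
}

summary = {}
for theme, scores in quote_scores.items():
    average = mean(scores)
    summary[theme] = (average, categorise(average))
    print(f"{theme}: average={average:.2f}, category={summary[theme][1]}")
```

Because the category depends entirely on the chosen cut-offs, a theme whose average lies near a boundary can change label under a different threshold, which is worth bearing in mind when comparing "slightly" positive and negative themes.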

To provide a more detailed elucidation of the sentiment analysis results (Table 5), the sentiment tone of each topic must be analysed based on the average sentiment of the quotes (Taboada et al., 2011). The following section presents the principal findings of the sentiment values and their broader impact.

Social media and the spread of disinformation - Average sentiment: slightly positive - Interpretation: The topic addresses the role of social networks as conduits for disseminating disinformation. The somewhat optimistic tone may indicate a degree of neutrality in the text or an emphasis on social networks’ capacity to facilitate the dissemination of information to the public, despite the challenges associated with the propagation of false information. This tone indicates that the impact of social networks is perceived as ambiguous, with the potential to be either beneficial or detrimental.

The role of artificial intelligence in disinformation - Average sentiment: slightly negative - Interpretation: The theme is characterised by a certain degree of negativity, which reflects concerns regarding the dependability of AI. It also highlights the ethical issues that arise when AI is used for detection and content generation. Negative sentiments underscore doubts about AI's reliability in detecting fake news and raise ethical concerns about using this technology in journalism.

Educational and regulatory measures as solutions - Average sentiment: slightly negative - Interpretation: A somewhat negative tone indicates a moderate level of scepticism regarding the efficacy of regulatory measures and educational initiatives in addressing this issue. This may indicate concerns regarding the limited efficacy of legislative measures or a lack of comprehensive educational initiatives.

Health-related disinformation and COVID-19 - Average sentiment: slightly negative - Interpretation: The dissemination of erroneous health information, particularly during the COVID-19 pandemic, has elicited a negative response. This indicates a prevailing concern about the potential adverse effects of disinformation on public health. This theme highlights the decline in public confidence in health resources and risks associated with the circulation of inaccurate health information.

Commercial motives and sensationalism - Average sentiment: slightly positive - Interpretation: The slightly positive tone accompanies texts in which commercial interests play a pivotal role in fuelling sensationalism in the media and journalism. This supports the assertion that financial incentives significantly influence the media. Although sensationalism is not considered an inherently beneficial phenomenon, this tone reflects a balanced perspective on commercial motivations and acknowledges their significance in journalism.

Political manipulation and democratic influence - Average sentiment: negative - Interpretation: The subject matter is perceived as having a markedly adverse quality. This indicates concerns about the influence of disinformation on political processes and democratic institutions. Negative sentiments suggest the manipulation of voter opinion and the polarisation of the public, which ultimately undermines trust in democratic institutions and destabilises society.

Emotional influence and virality of fake news - Average sentiment: slightly negative - Interpretation: A slightly negative sentiment expresses the impact of emotional influences on the dissemination of fake news, which is often driven by negative emotions such as anger and disgust. This tone underscores how emotionally charged headlines and stories amplify the virality of fake news, thereby contributing to the spread of misleading content.

Automation bots in disinformation spread - Average sentiment: slightly negative - Interpretation: The text is written in a somewhat pessimistic tone, indicating concerns about the use of bots and automated accounts to disseminate disinformation. This suggests the presence of technical challenges in identifying and limiting the influence of automated accounts, which in turn contributes to an increase in the volume of fake content.

A generalisation can be made regarding the sentiment analysis of the quotes in question. Most topics were addressed in a negative or slightly negative manner, reflecting the growing awareness of the potential consequences of disinformation in various domains, including health, politics, technology, and social networks. A markedly negative tone characterises the themes of political manipulation and democratic influence. This can be interpreted as a strong opposition to manipulating opinions and exploiting democratic processes.

The analysis yielded two exceptions to the prevailing negative tone: the role of social media in disseminating disinformation and the impact of commercial motives and sensationalism. A slightly positive tone characterises both themes. When examining the role of social media, it is essential to maintain a certain degree of neutrality and acknowledge the dual nature of this phenomenon, which presents a complex set of factors that must be considered. The capacity of social media to disseminate disinformation is counterbalanced by its potential to facilitate the dissemination of accurate information. The slightly positive sentiment was weaker for commercial motives and sensationalism (0.10) than for social media (0.24). This reflects the acceptance of commercial interests as an inevitable aspect of media practice. In contrast, other forms of disinformation, such as political manipulation and health disinformation, are treated with greater scepticism and a negative tone.

A horizontal bar chart was created using MATLAB to display the sentiment scores for the various disinformation and media integrity themes, as illustrated in Figure 3.

Figure 3. Average sentiment scores by theme on disinformation topics.

As illustrated in Figure 3, the bar chart depicts the themes on the vertical axis (y-axis), while the sentiment values range from −0.4 (indicating a markedly negative sentiment) to 0.4 (indicating a markedly positive sentiment). Each topic is associated with a horizontal column where:

Colour of the columns: A colour scale was devised to indicate the polarity of sentiment, with red representing negative sentiment, white indicating neutrality, and blue denoting positive sentiment. This scale allows for rapid assessment of the polarity of a given topic. The scale on the right of the graph depicts sentiment values between −0.4 and 0.4, thereby facilitating the interpretation of the colours.

Column-based labels: The horizontal axis (x-axis) represents the average sentiment score, which facilitates comparison of sentiments across different topics. A negative score indicates that sentiment is predominantly negative, whereas a positive score indicates that sentiment is primarily positive.

Numeric labels next to columns: The numerical labels on the right-hand side of each column indicate the precise sentiment value, thereby facilitating more accurate comprehension and comparison between themes.

The graph in Figure 3 provides a rapid and straightforward visual representation of the sentiments associated with the different disinformation topics, showing which topics are evaluated more negatively or more positively. To illustrate, the topic ‘Political manipulation and democratic influence’ exhibits a relatively negative average rating, indicated in red. The topic ‘Social media and the spread of disinformation’ is rated slightly more favourably, as indicated by the blue bar.
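The colour scale described for Figure 3 can be sketched as a simple linear mapping. The Python snippet below is an illustrative reconstruction only, not the MATLAB code used to produce the figure: the function name `sentiment_to_rgb`, the linear interpolation, and the pure red, white, and blue endpoints are assumptions. Only the −0.4 to 0.4 range and the two example scores (taken from the synthesis in the eighth step) come from the study.

```python
def sentiment_to_rgb(score, limit=0.4):
    """Map a sentiment score to an (r, g, b) tuple, each channel in [0, 1].

    Assumed scale: pure red at -limit, white at 0, pure blue at +limit,
    with linear interpolation in between.
    """
    clamped = max(-limit, min(limit, score))  # keep within the displayed range
    fade = 1.0 - abs(clamped) / limit         # 1.0 at neutral, 0.0 at the extremes
    if clamped < 0:
        return (1.0, fade, fade)              # shades of red for negative sentiment
    return (fade, fade, 1.0)                  # white at 0, shades of blue above it


# Two themes named in the text, with the averages reported in the synthesis step.
examples = {
    "Political manipulation and democratic influence": -0.3,
    "Social media and the spread of disinformation": 0.24,
}
for theme, score in examples.items():
    r, g, b = sentiment_to_rgb(score)
    print(f"{theme}: {score:+.2f} -> rgb({r:.2f}, {g:.2f}, {b:.2f})")
```

Under these assumptions, a score nearer to either end of the range produces a more saturated bar, which is what allows the polarity of a topic to be read at a glance.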

Eighth Step: Integration and Synthesis of Results

The eighth and final step involved integrating and synthesising the data on each topic. This synthesis is based on a combination of thematic and sentiment analyses.

Social media and the spread of disinformation - Key findings: Online platforms, including Facebook, X, Instagram, and TikTok, play a pivotal role in the dissemination of disinformation. These platforms facilitate social influence and virality, enabling the rapid dissemination of misinformation to a large audience. - Sentiment: Slightly positive (0.24); this indicates an ambivalent perception of the impact of social networks, which serve both as instruments for disseminating information and as key channels for the propagation of disinformation.

The role of artificial intelligence in disinformation - Key findings: AI is employed to generate and identify fabricated content. Despite AI’s potential to be an effective tool in combating disinformation, concerns persist regarding its inherent unreliability and the ethical issues associated with its use. - Sentiment: Slightly negative (−0.17); this indicates a sceptical perspective on the utilisation of AI for content creation, accompanied by concerns about ethical considerations and reliability.

Educational and regulatory measures as solutions - Key findings: Education and legislative measures play pivotal roles in combating disinformation. These measures enhance awareness and cultivate critical thinking, which is indispensable for effectively mitigating misinformation. However, the efficacy of these methods remains limited. - Sentiment: Slightly negative (−0.05); this perspective suggests a moderate level of concern regarding the efficacy of these measures in containing the dissemination of disinformation.

Health-related disinformation and COVID-19 - Key findings: The proliferation of disinformation about health, particularly in the context of the ongoing COVID-19 pandemic, has resulted in a notable decline in the level of trust placed in scientific sources. The dissemination of health misinformation poses a significant threat to public health, engendering fear and confusion in the public, which may ultimately lead to detrimental health outcomes. - Sentiment: Slightly negative (−0.11); the prevailing opinion is that there is cause for concern regarding the potential adverse effects of disinformation on health, affecting both individuals and public health.

Commercial motives and sensationalism - Key findings: Commercial interests frequently influence the content of sensationalist and misinformative media. The pursuit of profits by media outlets has led to the publication of sensational news, aimed at increasing readership, which has resulted in a decline in journalistic quality. - Sentiment: Slightly positive (0.10); this sentiment represents a moderate stance on the role of commercial interests in the media landscape, despite the prevalence of sensationalist content.

Political manipulation and democratic influence - Key findings: The impact of political campaigns and disinformation on democratic processes can be discerned in the manipulation of voters’ opinions and the polarisation of the public, ultimately leading to a deficiency of trust in democratic institutions. - Sentiment: Negative (−0.3); the markedly negative sentiment underscores significant concerns about the potential impact of disinformation on democratic and political processes more broadly.

Emotional influence and virality of fake news - Key findings: The rapid transmission of negative emotions, such as anger and disgust, facilitates the propagation of emotionally charged fake news. Consequently, this leads to the accelerated dissemination of disinformation. - Sentiment: Slightly negative (−0.15); the dissemination of fake news is facilitated by the emotional intensity of the content, which can have adverse consequences.

Automation bots in disinformation spread - Key findings: Automated accounts and bots facilitate the proliferation of disinformation, exponentially amplifying the dissemination of false information without human involvement. - Sentiment: Slightly negative (−0.2); the predominant opinion is that there is a concern about the potential impact of bots and automation on the credibility of information disseminated in digital media. This assumption is based on the idea that an increasing prevalence of automated content may result in a decline in the perceived reliability of information in digital environments.

The comprehensive findings of this study, which employed thematic and sentiment analyses of articles, indicate that disinformation is emerging as a significant threat to public health, political stability, and social trust. Most topics show negative or slightly negative sentiments, reflecting a pervasive concern about the impact of misinformation in various contexts. However, exceptions, such as the “Commercial Motives and Sensationalism” theme, indicate a moderate acceptance of commercial interests in the media. Meanwhile, social networks play a complex and multifaceted role in facilitating the rapid dissemination of information and the proliferation of misinformation.
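The pattern summarised above can be checked directly against the eight theme averages reported in the synthesis. The following Python sketch is illustrative: the function name `tone_label` and the 0.25 cut-off between "slightly negative" and "negative" are hypothetical, chosen only because they reproduce the verbal labels used in the text; the study does not state its thresholds.

```python
from statistics import mean

# Average sentiment per theme, as reported in the synthesis above.
THEME_SCORES = {
    "Social media and the spread of disinformation": 0.24,
    "The role of artificial intelligence in disinformation": -0.17,
    "Educational and regulatory measures as solutions": -0.05,
    "Health-related disinformation and COVID-19": -0.11,
    "Commercial motives and sensationalism": 0.10,
    "Political manipulation and democratic influence": -0.3,
    "Emotional influence and virality of fake news": -0.15,
    "Automation bots in disinformation spread": -0.2,
}


def tone_label(score, strong=0.25):
    """Turn a score into a verbal label; `strong` is a hypothetical cut-off."""
    if score == 0:
        return "neutral"
    polarity = "positive" if score > 0 else "negative"
    return polarity if abs(score) >= strong else f"slightly {polarity}"


# Themes whose average sentiment falls below zero.
negative_themes = [theme for theme, s in THEME_SCORES.items() if s < 0]
# Unweighted mean across themes (each theme counts equally).
overall_mean = mean(list(THEME_SCORES.values()))

# List themes from most negative to most positive.
for theme, s in sorted(THEME_SCORES.items(), key=lambda item: item[1]):
    print(f"{s:+.2f}  {tone_label(s):<17}  {theme}")
print(f"{len(negative_themes)} of {len(THEME_SCORES)} themes are negative; "
      f"unweighted mean = {overall_mean:+.2f}")
```

Six of the eight themes fall below zero, and the unweighted mean across themes is about −0.08, which is consistent with the predominantly negative or slightly negative tone described above.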

Research Results and Discussion

The analysis of scientific articles revealed complex interrelationships among technological, social, and ethical dimensions. Using a combination of thematic and sentiment analysis, key themes, emotional responses, and potential systemic effects were identified. The results and discussion section will present the interpretation of the data and provide answers to the research questions, which will then be compared with those from previously published studies. Suggestions for future action follow.

Potential and Ethical Limitations in The Use of AI

In the scientific literature, AI is often defined as a promising solution for identifying disinformation. This finding was also confirmed by the analysis conducted in this study. Machine learning techniques, sentiment analysis, text classification, and bot detection are important in this regard. However, it is essential to emphasise that the results of sentiment analysis reveal a slightly negative tone, which raises doubts about the reliability and transparency of AI systems.

The so-called ‘black box’ effect appears as a central challenge, where internal decision-making processes are non-transparent. Both Tandoc and Seet (2024) and Chen and Shu (2024) emphasise the importance of explainable artificial intelligence (XAI), which allows users to gain insight into how and why the system made a certain decision. These approaches are also supported by existing theory, which warns that technology without explanation can reduce public trust in its use (Abdollahi et al., 2024; Pechtor, 2024; Sousa et al., 2024).

Political Manipulation and Emotional Influence

The most negatively rated topic was political manipulation. It has been found that politicians often employ emotionally charged messages that appeal to fear and anger, which are then spread via bots and automated systems. This synergy between content and technology leads to the polarisation of public opinion and reduces trust in traditional information sources. The work of van der Linden and of Marwick and Lewis supports this finding. These authors have been warning for several years that emotional responses are key to the success of disinformation campaigns.

Marwick and Lewis (2017) argue that digital platforms reward emotionally charged content through their structure. This, in turn, enables the development of a fertile environment for manipulation.

Van der Linden et al. (2021) apply the theory of psychological inoculation to disinformation. Within this framework, they examine the roles of emotional activation and cognitive vulnerability as primary mechanisms in the dissemination of false information.

Roozenbeek and van der Linden (2019) have demonstrated, based on empirical examples, that early exposure to manipulative techniques can reduce their effectiveness.

It is also worth noting that Ferrara (2020) believes that bots are no longer just tools for simple “spam.” Rather, he links their role to the ability to carry out sophisticated campaigns with a psychological impact, especially when aligned with narratives that trigger an emotional response.

As part of the analysis in this study, we note that there is empirical support for the connection between emotional influence and automated spreading mechanisms, such as bots. This opens up a new understanding of this phenomenon, which has not yet been systematically addressed in studies.

Commercial Interests: an Ambivalent Dimension

In the sentiment analysis, the topic of commercial interests was assessed slightly positively. This seems contradictory, since sensationalism is often criticised as one of the main causes of disinformation. However, such an assessment reflects the complexity of the modern media environment, in which editorial decisions are shaped not only by ideological or political influences but also by economic pressures.

In a digital environment where algorithmic metrics of attention, such as clicks, views, and shares, are directly linked to revenue, scenarios arise in which media organisations are compelled to balance commercial success (profit) with informational responsibility (Pickard, 2019). From this perspective, the sensationalist style, although considered problematic, can represent a survival strategy. In practice, this is especially true for smaller or independent media (McManus, 1994).

In the context of analysing the articles, we find that commercial goals influence the structure of the content, for example, in the direction of shorter, emotionally charged, and visually attractive forms. However, based on the analysis of the content, we notice that the texts also mention that market logic is not necessarily harmful, provided it is placed within the framework of ethical guidelines and regulations.

The findings of this study’s analysis of the articles confirm the modern view of media economics theory, highlighting that the sustainability of information sources, particularly those serving the public interest, often relies on effective business models. However, these models must adhere to the principles of social responsibility (Hardy, 2010; Napoli, 2011).

Baker (2002) and McChesney (2008) highlight the danger of excessive commercialisation itself. At the same time, they also believe that in real-world conditions, it is not possible to separate the economic and informational functions of the media. The authors further believe that understanding the ambivalence of commercial interests is important. However, we must be cautious, as these interests can be a condition for manipulation, but are also necessary for the existence of professional journalism.

Answers to Research Questions

This subsection presents the interpretation of the results, provides answers to the research questions, compares our findings with existing literature, and offers suggestions for future action.

Technological and Methodological Barriers to The Use of AI for The Detection of Disinformation

The findings of the thematic analysis demonstrated that technological and methodological constraints represent substantial obstacles to the implementation of AI in the identification of misinformation. In practice, the performance of AI algorithms is contingent on the quality, provenance, and diversity of the data they analyse. The sentiment analysis findings likewise indicated a somewhat negative sentiment, reflecting concerns about the reliability of AI in addressing complex and rapidly evolving patterns of disinformation.

The efficacy of AI-generated results is also contingent on the availability of uniform data. In the absence of such sources, issues of transparency may arise, resulting in a phenomenon known as the “black box.” This has the additional consequence of eroding public trust (Demartini et al., 2020). As Aïmeur et al. (2023) and Kreps et al. (2022) have observed, the technological challenges associated with the rapid evolution of misinformation patterns and the need for scalable algorithms continue to represent a significant barrier to the reliable utilisation of AI.

Public Trust as a Prerequisite for The Successful Implementation of AI Tools

The second research question aimed to determine the influence of public trust on the effective deployment of AI solutions. The results of the thematic analysis indicated that public trust is becoming a crucial factor in the successful deployment of AI in media practice. Sentiment analysis was employed to identify instances of negative sentiments in the context of political manipulation and the impact of disinformation on democratic processes. These cases illustrate the challenges associated with public trust in AI tools for misinformation detection.

To enhance public confidence, it is imperative to ensure that AI instruments do not replace the duties undertaken by journalists, but rather augment them (Bontridder & Poullet, 2021). Similarly, it is incumbent on managers of media organisations to recognise that they are the primary point of contact with AI developers. This approach allows the customisation of tools to align with the specific requirements of each medium, thereby enhancing the transparency of AI-driven information verification processes (Dwivedi et al., 2021).

Data Quality and Diversity as Success Factors for AI Solutions

The third research question aimed to determine the impact of data quality and diversity on the effectiveness of AI solutions in detecting misinformation. The results of the sentiment analysis indicated a negative perception of methodological challenges, which were identified as potential limitations to the effectiveness of AI. This is attributed to the absence of diverse high-quality datasets. However, the results of the thematic analysis indicated that the utilisation of standardised and diverse data enables the attainment of more reliable results. Conversely, homogeneous data present challenges in identifying intricate patterns across diverse cultural contexts (De Angelis et al., 2023; C. Zhang et al., 2019).

It can be concluded that providing diverse data is key to the scalability of AI models. This matters because of the adversarial nature of the field: AI solutions also facilitate the accelerated development of misinformation, whose producers frequently employ novel methods and tactics (Shu et al., 2017). Therefore, it is essential to develop innovative data collection and processing techniques to ensure the efficacy of AI-based solutions in countering disinformation and fake news (Shu et al., 2017).

Effective Approaches to Tackling Advanced Forms of Content Manipulation

Preventing fake news has become an increasingly challenging task for contemporary AI systems, particularly in light of sophisticated manipulations such as deepfake videos and bot-generated content. The thematic network findings indicate that a combination of AI solutions and human oversight can effectively mitigate the impact of these sophisticated forms of disinformation.

Nevertheless, the sentiment analysis revealed a prevalence of negative sentiments, suggesting concern about the potential impact of these manipulations on the public. It is, however, important to emphasise that the results demonstrated that the regular updating of bot detection algorithms and a greater involvement of human judgement have a positive impact on the improvement of AI solutions.

Thus, media organisations and agencies that oversee media markets should ensure that algorithms are more transparent and that regularly updated bot-detection algorithms are implemented. This would have a significant impact on reducing instances of misinformation generated by bots.

The Role of Media Literacy and Awareness-Raising to Reduce The Impact of Disinformation

The final research question focuses on the significance of media literacy and awareness-raising initiatives in mitigating the impact of misinformation within the digital socio-cyber ecosystem. Thematic analysis revealed that outreach programmes and awareness-raising campaigns have a significant impact on individuals’ awareness and capacity to identify misinformation. Nevertheless, the results of the sentiment analysis indicated a slight negative bias, suggesting that current educational programmes are not sufficiently effective in mitigating the impact of disinformation.

Thus, the quality of programmes designed to enhance media literacy is suboptimal and requires further improvement. This would help reduce individuals' vulnerability to disinformation. This is corroborated by studies that have demonstrated that individuals who are aware of the importance of verifying sources are more effective at identifying misinformation, which substantially reduces its dissemination (Adjin-Tettey, 2022; Rubin, 2019).

Thematic Relations and Systemic Effects

The themes identified in this study’s analysis – political manipulation, emotional responses, automation of information flows, commercial pressures, and the role of AI – are not isolated phenomena, but form a complex, intertwined system that shapes the modern digital information environment.

In the discussion, we have already highlighted the finding that political manipulation often stems from emotional dynamics. This means that messages that evoke feelings of anger, fear, outrage, or deprivation trigger cognitive biases, leading to a lower likelihood of critical assessment of information (Martel et al., 2020). Emotional triggering is most often exploited by sensationalist content, which is often supported by a strong visual component or polarising language (Bakir & McStay, 2018). When such signals are amplified by automated mechanisms such as bots, coordinated networks, or virality algorithms, the impact of disinformation increases exponentially (Ferrara, 2020; Vosoughi et al., 2018).

The dynamic effect has systemic consequences, extending beyond individual cases of misinformation. Research to date suggests that prolonged exposure to such communication leads to a decline in trust in the media, science, and public institutions, resulting in a decrease in trust in the functioning of the democratic system (Tucker et al., 2018; Guess et al., 2020). However, the decline in trust is not a one-way process. When the epistemological stability of information channels is disrupted, it is more difficult to re-establish social consensus.

Studies typically address these topics separately. For example, a study may examine the impact of AI on journalism (Diakopoulos, 2019), the role of emotions in the spread of disinformation (Pennycook & Rand, 2018), or the role of bots in political communication. By contrast, this study specifically concerns itself with establishing an intersectional approach. In our opinion, it is necessary to connect insights from the fields of psychology, cognitive science, political science, computer science, and journalism. This approach will enable a deeper understanding of contemporary manipulative practices and guide effective strategies for addressing them.

Chadwick et al. (2021) argue that managing the complex system of disinformation requires a systems analysis. The characteristic of this analysis is that it does not seek solutions in isolated technologies but in coordinated social, technological, and institutional responses. Therefore, AI should not be considered or understood only as a tool, but as part of a system that AI is also obliged to help protect.

In the past, the most prominent examples of AI’s impact were likely the 2016 and 2020 US elections, as well as the COVID-19 pandemic. During the US elections, there was a proliferation of manipulative content on socially sensitive topics, such as race and the migrant crisis, built on emotionally charged rhetoric. The use of AI for audience segmentation and micro-targeting, as seen in the Cambridge Analytica scandal, has led to the proliferation of polarising content (Howard et al., 2018; Issak & Hanna, 2018).

During the pandemic, misinformation spread about vaccines, the origin of the virus, and the effectiveness of government measures. Algorithms designed to achieve maximum engagement often prioritise controversial content. As a result, there was an increase in distrust of science and healthcare (Cinelli et al., 2020; Islam et al., 2020; Roozenbeek et al., 2020). Cinelli et al. (2020) point out that it was precisely this emotional tension – fear, doubt, and anger – that was decisive in the spread of this content.

These examples demonstrate the need to establish ethical standards and regulate the use of AI, as otherwise, there is a risk that digital solutions will exacerbate social insecurity instead of addressing information challenges.

Conclusions

In conclusion, thematic and sentiment analyses of the articles revealed the complexity of using AI to detect disinformation and showed that adaptability, public trust, data quality, and media literacy are key factors to consider in this field of study. It is of utmost importance to employ a multifaceted approach to mitigate the negative impact and consequences of misinformation on society. This approach should be based on technological solutions, legislative measures, educational initiatives, and efforts to foster public trust and confidence. Therefore, it is essential to regularly reiterate these factors to the public, as this is the most effective way to address emerging forms of disinformation on digital platforms, thereby protecting public trust and maintaining the integrity of the information environment.

Limitations of The Study

This study has several limitations. The first limitation is that only scientific articles published between 2014 and October 2024 were included in the analysis. Consequently, these findings may not entirely represent recent research and technological developments. It is worth noting that our analysis was limited to the Western aspects of the issue. It can be reasonably deduced that cultural and societal differences in the acceptance of and access to digital technologies may have significant implications for disparate portrayals of the utilisation and efficacy of AI in detecting disinformation across other regions of the globe. Additionally, this study focused on analysing academic sources written in English, which may result in selection bias, as academic articles in other languages and other academic texts, such as books, book chapters, and conference proceedings, as well as industry and non-academic perspectives, may be underrepresented.

The study is primarily qualitative and interpretive. Therefore, it does not include formal statistical validation of sentiment scores and topic frequencies. However, we suggest that future research incorporate statistical methods, such as chi-square tests for analysing topic frequencies and t-tests or ANOVA for comparing sentiment values across topics. These statistical methods will enhance methodological rigour and enable the testing of the statistical significance of observed patterns. This is important given the increasing methodological hybridity within media phenomenon research.
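As an indication of what such a validation step might involve, the sketch below computes a one-way ANOVA F statistic for sentiment scores grouped by topic. It is a minimal illustration under stated assumptions, not an analysis performed in this study: the function name `one_way_anova_f` is hypothetical, and the per-quote scores are placeholder values invented purely to make the example runnable. Obtaining a p-value additionally requires the F distribution, for which a library routine such as `scipy.stats.f_oneway` could be used.

```python
from statistics import mean


def one_way_anova_f(groups):
    """Return (F, df_between, df_within) for a list of numeric samples."""
    k = len(groups)                      # number of topics
    n = sum(len(g) for g in groups)      # total number of quotes
    if k < 2 or n <= k:
        raise ValueError("need at least two groups and more values than groups")
    means = [mean(g) for g in groups]
    grand_mean = mean([x for g in groups for x in g])
    # Variation of the topic means around the grand mean.
    ss_between = sum(len(g) * (m - grand_mean) ** 2 for g, m in zip(groups, means))
    # Variation of individual quotes around their own topic mean.
    ss_within = sum((x - m) ** 2 for g, m in zip(groups, means) for x in g)
    df_between, df_within = k - 1, n - k
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within


# Placeholder per-quote sentiment scores (NOT the study's data).
placeholder_quotes = {
    "topic_a": [-0.4, -0.3, -0.2],
    "topic_b": [-0.2, -0.1, 0.0],
    "topic_c": [0.1, 0.2, 0.3],
}
f_stat, df_b, df_w = one_way_anova_f(list(placeholder_quotes.values()))
print(f"F({df_b}, {df_w}) = {f_stat:.2f}")
```

A large F value relative to the F distribution with the reported degrees of freedom would suggest that average sentiment differs across topics by more than the variation among quotes within a topic would explain.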

Recommendations for Future Research

Based on the findings of this study, three recommendations are proposed. First, there is a need to improve AI models by implementing innovative solutions that facilitate access to diverse data and enable testing in real-life situations, resulting in greater accuracy and flexibility of the models. Second, further research is required to develop effective strategies for fostering public trust in AI tools designed to detect misinformation while also emphasising the transparency and human control of AI systems. Third, we propose that a longitudinal study in the field of media literacy would be beneficial, as such an approach would facilitate the evaluation of the long-term impact of educational initiatives on individuals' capacities to discern misinformation.

Practical Recommendations

The findings also provide valuable insights for media organisations, AI developers, and regulatory bodies. For example, it has been proposed that there should be a greater degree of collaboration among those working in the field of AI, journalists, and those responsible for regulating this area. The objective of this collaboration would be to adapt AI solutions in a manner that aligns with the requirements of the media industry. Thus, relevant regulatory bodies must establish transparent and clearly defined standards for the disclosure of algorithmic processes. It is particularly important to emphasise social networks, where artificial intelligence plays a significant role in detecting and removing fake content. It would be advantageous for governmental and non-governmental organisations to intensify their efforts to develop initiatives to enhance media literacy. Such an initiative would significantly contribute to the development of critical thinking and analytical skills among citizens, thereby enabling them to assess the reliability of information sources. By equipping the general public with the necessary digital literacy skills and promoting transparency in technology, it is feasible to significantly reduce the proliferation and dissemination of misinformation, which would, in turn, foster a more reliable information ecosystem.

Footnotes

Ethical Considerations

“There are no human participants in this article and informed consent is not required.”

Funding

The author received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

Data sharing not applicable to this article as no datasets were generated or analysed during the current study.