Abstract

In response to the COVID-19 pandemic, specific assessments such as the COVID Stress Scales (CSS) were developed to measure pandemic-specific distress. The present study aimed to further validate the psychometric properties of the CSS and explore relationships between the CSS and measures of well-being. Adults in the U.S. (N = 1,388, 63.3% female, 36.1% male, 58.4% Caucasian) completed the CSS, Satisfaction with Life Scale (SWLS), Patient Health Questionnaire-4 (PHQ-4), Brief Resilient Coping Scale (BRCS), and Cognitive and Affective Mindfulness Scale-Revised (CAMS-R) in June and July of 2020. Confirmatory factor analyses on the CSS were used to examine multiple model solutions. The six-factor solution provided the best fit (CFI = 0.98, TLI = 0.98, RMSEA = 0.06), outperforming the five-factor solution, which showed higher RMSEA (.07) and inadequate item separation according to the Rasch analysis. Rasch analysis found no misfitting items and the data fit the Rasch model. Results of the Differential Item Functioning (DIF) analysis supported gender invariance as only negligible DIF was observed across items. As expected, the CSS was positively correlated with measures of anxiety and depression (PHQ-4) and negatively correlated with life satisfaction (SWLS), resilience (BRCS), and trait mindfulness (CAMS-R). Our results partially replicate the factor structure of the CSS found in different adult samples.

Plain language summary

In this paper, we collected data using the COVID Stress Scales survey. The literature contains competing “models” of the scale: some studies have found that it has six dimensions, or factors, and other studies have found five. We examined these dimensions in our study using methods such as confirmatory factor analysis and Rasch analysis.

Introduction

Major pandemics have been documented throughout history (as early as the Plague of Justinian in 541) and it has been noted that public health measures such as quarantine, isolation, and travel controls have been implemented throughout time to help contain the spread of infectious diseases (Piret & Boivin, 2021). Over time, increased attention has been brought to the adverse mental health impact that pandemics and subsequent public health measures taken to aid in containing outbreaks can have on individuals, such as increased stress, anxiety, and depression symptoms (Soklaridis et al., 2020). The COVID-19 pandemic has been linked to heightened psychological distress worldwide (Pieh et al., 2020; Qiu et al., 2020; Rehman et al., 2021; Rodríguez-Rey et al., 2020; Tsamakis et al., 2020; Vindegaard & Benros, 2020; Wang et al., 2020), and its impact persists in society despite no longer being classified as a public health emergency (Gonzalez, 2023; World Health Organization, 2023).

The COVID-19 pandemic highlighted that mental health professionals in clinical practice need assessment and intervention solutions as they face the unique challenges associated with health-related societal crises such as a pandemic (Elliott et al., 2024; Gruber et al., 2021). Pandemic-specific experiences, such as the fear of becoming infected with the virus, isolation, and being quarantined have been specifically associated with increased psychological distress (Rehman et al., 2021; Taylor et al., 2020; Vindegaard & Benros, 2020). The enduring mental health ramifications from the experiences of the pandemic persist to the present day (Kathirvel, 2020). This calls for continuous evaluation and intervention strategies by mental health professionals to address the evolving needs of affected populations. This persistence is important, given that experiences such as lockdown measures and infection rates, while no longer as prominent, continue to leave lasting impacts on individuals’ well-being (Pennix et al., 2022; Solomou et al., 2024). Measures have been developed to capture the unique impact that these pandemic-specific fears and stressors have on individuals and to aid in assessing those who may need support due to this distress (Ahorsu et al., 2022; McKay et al., 2020; Taylor et al., 2020). In this study, we will examine the psychometric properties of one of the instruments designed to measure the stressors associated with COVID-19.

COVID Stress Scale

As mentioned previously, pandemics have historically led to widespread psychological distress (Piret & Boivin, 2021), yet standardized tools to assess pandemic-specific stress, such as the COVID Stress Scales (CSS), have only recently been developed (Taylor et al., 2020). After reviewing related literature and consulting with health-related anxiety experts, Taylor et al. (2020) identified domains and corresponding scales important for the assessment of pandemic-specific stress: fears about the dangerousness of the virus (Danger scale), fears about physical sources of virus contamination (Contamination scale), fears related to foreigners being a source of the virus (Xenophobia scale), fears about social and economic consequences of the virus (Socioeconomic Consequences scale), compulsive checking behaviors related to the virus (Compulsive Checking scale), and traumatic stress symptoms related to the pandemic (Traumatic Stress scale). The authors initially developed 58 items that were thought to capture these six domains and, after removing five items, were left with 53 initial items. These items were worded in a simplified way that would make adaptation of the items for future pandemics easier (e.g., using the term “the virus” in lieu of COVID-19 specifically).

The psychometric properties of the CSS were originally examined in two large samples of U.S. (N = 3,375) and Canadian (N = 3,479) adults (Taylor et al., 2020). Taylor et al. (2020) used parallel analysis to determine the dimensionality of each scale. For both samples, parallel analysis supported a five rather than a six-factor solution. Each factor corresponded to one of the proposed scales, except for the Danger and Contamination scales, which loaded on a single factor together. Rather than reducing the Danger and Contamination factor to a single six-item scale, all 12 items were retained as two separate scales so that Danger and Contamination could be assessed separately.

Factor Structure

Since its development, the CSS has been adapted and used by researchers to assess distress related to the COVID-19 pandemic globally (e.g., Asmundson et al., 2020), as well as in Mexico (Delgado-Gallegos et al., 2020), Iran (Khosravani et al., 2021), Egypt and Saudi Arabia (Abbady et al., 2021), Palestine (Mahamid et al., 2022), Germany (Kubb & Foran, 2020), the Philippines (Montano & Acebes, 2020), and in a total of 24 languages (Rachor et al., 2023). However, the five-factor solution for the CSS initially identified by Taylor et al. (2020) has not always remained consistent among different samples and adaptations of the CSS. For example, Adamczyk et al. (2021) studied the CSS in Polish and Dutch samples. The authors stated that, consistent with Taylor et al. (2020), the scale showed a five-factor structure rather than a six-factor solution. However, the authors’ data showed the Traumatic Stress and Compulsive Checking scales merged into a single factor in the five-factor solution. In other studies, the Danger and Contamination scales have combined to create a single factor, yielding a five-factor solution (Mertens et al., 2021; Taylor et al., 2020). Taylor et al.’s (2020) final version recommended a five-factor solution, while Mertens et al. (2021) saw a clustering of the items in the Danger and Contamination subscales. Yet, Mahamid et al. (2022) translated the CSS into Arabic and reported a six-factor solution with 31 items in a sample of N = 860 Palestinian adults.

Rasch Analysis

Although prior research has validated the CSS across different populations (Mahamid et al., 2022; Taylor et al., 2020), few studies have examined its item-level psychometric properties using Rasch analysis, limiting our understanding of its internal structure and item difficulty calibration. Rasch modeling is a popular tool in applied health research for exploring the psychometric properties of questionnaires and inventories (Dima, 2018). Recently, it was used to assess the psychometric properties of the Fear of COVID (FCV) scale with Chinese and Pakistani populations (Chen et al., 2021; Ullah et al., 2023) and in the development of a symptom burden questionnaire for long COVID (SBQ-LC; Hughes et al., 2022). Yet, we could not find literature applying Rasch analysis to the CSS. One advantage of Rasch analysis is that the model places a person’s trait level and item difficulty on the same continuum (e.g., logits), which makes it easy for applied researchers to validate the scores of a scale (Boone, 2016; Boone et al., 2013). Research on item selection suggests that Rasch analysis explains a slightly higher percentage of variance than CFA (Chiu et al., 2020). Christensen et al. (2012) recommend the use of both Rasch analysis and CFA when there is a lack of confidence in the items and there is interest in the dimensionality of the measure, as is the case with the CSS subscales (e.g., Danger and Contamination). Finally, Rasch analysis provides information on item fit. Based on this information, researchers can make decisions about revising or removing items, which can be useful for the Danger and Contamination subscales.
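The idea of placing persons and items on one logit continuum can be illustrated with the simplest (dichotomous) form of the Rasch model. The sketch below is for illustration only: the CSS items are polytomous, and the function name and example values are hypothetical rather than taken from the study.

```python
import math


def rasch_probability(theta: float, difficulty: float) -> float:
    """Probability of endorsing a dichotomous item under the Rasch model.

    theta and difficulty are both expressed in logits, so only their
    difference matters.
    """
    return 1.0 / (1.0 + math.exp(-(theta - difficulty)))


# Hypothetical person and item locations (in logits).
p_matched = rasch_probability(theta=0.5, difficulty=0.5)  # person located at the item
p_above = rasch_probability(theta=1.5, difficulty=0.5)    # person one logit above the item
```

When a person's trait level equals the item difficulty, the endorsement probability is .50; the probability rises as the person moves above the item on the shared scale.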

Differential Item Functioning

Scale development has consistently involved examining differential item functioning (DIF) to identify item bias (Desjardins & Bulut, 2018). For example, within COVID-19-specific scale development, Sánchez-Teruel and Robles-Bello (2021) and Stănculescu (2022) examined DIF across gender for the Fear of COVID-19 Scale (FCV-19S) in Spanish and Romanian, respectively, as part of their psychometric evaluations of the instrument. In the CSS literature, only Şahin et al. (2022) conducted a DIF analysis. The authors used gender, the presence or absence of a COVID history, and student status to assess DIF within a sample of Turkish adult volunteers. They reported that the DIF analyses did not find evidence of DIF for the CSS items across gender. Although Şahin et al. (2022) did not detect DIF, further research is needed to establish the gender invariance of the CSS across diverse cultural contexts to support valid measurement and meaningful gender comparisons.

Well-Being Measures

Taylor et al. (2020) administered the Patient Health Questionnaire-4 (PHQ-4), the Short Health Anxiety Inventory (SHAI), the Obsessive-Compulsive Inventory-Revised (OCI-R), the Xenophobia Scale (XS), and the Marlowe-Crowne Social Desirability Scale Short Form (MCSD-SF) to provide convergent and discriminant validity evidence for the CSS. However, they did not include well-being measures. A recent study by Duckering (2022) examined the relationship between the CSS and psychological well-being using psychological inflexibility as a mediator variable and the Psychological Well-Being Scale (PWS) as the outcome variable. That study examined the relationship between the CSS and psychological well-being in 152 psychology students who were predominantly Latinx/Hispanic (69%) and reported a weak correlation. Furthermore, Pheko et al. (2023) studied mental well-being shortly after the beginning of the COVID-19 pandemic in Botswana, Zimbabwe, and Malaysia. The authors found anxiety and loneliness to be moderately and negatively correlated with well-being.

Assessment of well-being and personal strengths alongside measures of psychological distress has been proposed to be helpful and offer more information in clinical practice (e.g., Rashid & Ostermann, 2009). Investigating the relationship between pandemic-specific stress, general distress, and indicators of well-being can better inform our understanding of assessment and treatment efforts during health crises such as a global pandemic (Carver et al., 2021; Gavin et al., 2020; Greenspoon & Saklofske, 2001; Saltzman et al., 2020) as well as inform the development of intervention and prevention targets during present and future pandemics.

Purpose and Rationale

The overall aim of the present study was to examine the psychometric properties of the CSS and the relationships between the CSS and measures of well-being (life satisfaction, resilience, and mindfulness), none of which have been included in the COVID-19 assessment literature. A secondary aim was to examine item performance in the CSS by performing Rasch analysis and differential item functioning (DIF) analysis across gender, both of which have rarely been performed on the CSS. We predicted that the psychometric properties of the CSS, examined using CFA, would be similar to those found in the original study (Taylor et al., 2020). However, based on our literature review, we anticipated examining competing models with different numbers of factors. We also hypothesized that life satisfaction, resilience, and mindfulness would negatively correlate with the CSS (Conversano et al., 2020; Lenzo et al., 2020; Pidgeon & Keye, 2014) and that anxiety and depression would positively correlate with the CSS (Devakumar et al., 2020). Although the CSS was developed to assess stress directly related to the pandemic, little is known about its differential functioning across gender.

Research Questions

How many factors are identified in the CSS?

Using Rasch analysis, how effectively do the items on the CSS measure COVID-19 stress among adults in the U.S.?

Does the CSS exhibit measurement invariance, as examined by differential item functioning, across gender?

Method

Ethical Approval

The general protocol and data collection procedures for this study were approved by the University of Texas at Tyler Institutional Review Board (IRB). Prior to beginning the survey, participants provided informed consent. A component of the informed consent was a review of potential risks and benefits of participation. Participants were informed that some of the survey questions may elicit difficult memories or that they may feel distress. As a component of the informed consent, they were reminded that they may stop participation at any time and were provided with contact information of the principal investigator, faculty sponsor, and IRB contact in the event that there were any questions or concerns about the survey. Participants were informed more than once within the informed consent that their participation in this study was entirely voluntary and that they were not required to answer any questions that they did not want to and could stop participating at any time without penalty. To mitigate risks associated with emotional discomfort or distress that participants may have experienced, at the end of the survey each participant was offered a debriefing summary in which resources were offered to them (i.e., mental and behavioral health resources, behavioral support helpline). Participants were informed that study data were kept confidential and personal information was collected and stored separately from survey information. Each participant’s survey data was identifiable by a unique code for each participant.

Overall, the benefits of this research study were viewed by the IRB to outweigh the minimal risks involved with the potential to promote well-validated assessment tools.

Sampling and Data Collection Procedures

Participants completed the CSS in June–July 2020, a period characterized by peak pandemic uncertainty and widespread lockdowns, making this dataset ideal for assessing acute COVID-related distress. Participants were recruited through social media outlets (Twitter, now X, and Facebook), which may have introduced selection bias by over-representing younger, tech-savvy individuals with internet access. After providing informed consent, participants (N = 1,388) who were 18 years of age or older, resided in the U.S., and were able to read and understand the English language began the survey via Qualtrics between June 22 and July 16, 2020. Participants were incentivized to complete the survey by being entered into a drawing for a $25 Amazon gift card. Participant ages ranged from 18 to 83 (M = 30.7, SD = 10.4). In addition to age, participants were asked a series of demographic questions including race and ethnicity, gender, marital status, and highest level of education completed.

Participants

The majority of participants identified as female (63.3%) and the remaining participants identified as male (36.1%) or other/prefer not to answer (.6%). In response to race and ethnicity, over half of the participants identified as White (58.4%) and the remaining participants identified as Hispanic or Latinx (11.9%), Black or African American (10.0%), American Indian or Alaska Native (7.7%), Asian or Asian American (4.8%), Multi-racial (2.7%), Middle Eastern or North African (1.8%), Native Hawaiian or Other Pacific Islander (1.5%) or preferred not to answer. Most of the participants were married (49.1%) or never married (43.6%) and the remaining participants were either divorced, separated, widowed, or preferred not to answer (7.3%). Most participants had completed a level of education ranging from high school to Bachelor’s degree (cumulative 81.5%) and the remaining participants had either not completed high school (2.4%) or completed an education higher than a Bachelor’s degree (16.1%).

In addition to the demographic questions mentioned above, the survey included questions related to the participant’s COVID-19 pandemic experience (see Table 1 for details).

COVID-19 Pandemic Experiences Reported by Participants.

Measures

COVID Stress Scales

The CSS is a 36-item scale designed to assess COVID-specific distress. The CSS consists of 6 scales including Danger (fear of becoming infected), Contamination (fear of coming into contact with contaminated objects or surfaces), Compulsive Checking (checking for and seeking reassurance for pandemic-related threats), Socioeconomic Consequences (fear of the socioeconomic consequences of the pandemic such as job loss), Xenophobia (fear that foreigners are carriers of the virus), and Traumatic Stress (symptoms of traumatic stress related to the pandemic such as intrusive thoughts and nightmares). Items in each scale are rated on a 5-point Likert-type scale, ranging from 0 (not at all or never) to 4 (extremely or almost always) and were summed for each subscale. Higher scores on each scale are indicative of higher distress levels. The scores of the CSS have demonstrated good reliability and validity in previous studies (e.g., Montano & Acebes, 2020; Taylor et al., 2020) and in the present study. Details about reliability for the CSS in this study are reviewed in detail in the results section.

Patient Health Questionnaire-4

The Patient Health Questionnaire-4 (PHQ-4; Kroenke et al., 2009) is a 4-item self-report scale that was created to assess core symptoms of anxiety and depression that have occurred within the past 2 weeks. Two items screen for depression and two items screen for anxiety. Items are rated on a 4-point Likert-type scale ranging from 0 (not at all) to 3 (nearly every day). Anxiety and depression symptoms can be examined together with a total score of all items or can be assessed separately by examining the anxiety and depression subscales. Kroenke et al. (2009) suggested that the following total combined scores are indicative of symptom elevation: 3 to 5 (mild), 6 to 8 (moderate), 9 to 12 (severe). When examining anxiety and depression separately from each other, the authors suggested that scores of ≥3 for both the anxiety and the depression subscales serve as appropriate cut-points to indicate clinical significance. For example, a score of ≥3 on the depression subscale has demonstrated 83% sensitivity and 90% specificity for major depressive disorder and a score of ≥3 on the anxiety subscale has demonstrated 88% sensitivity and 83% specificity for generalized anxiety disorder (Kroenke et al., 2009). The PHQ-4 has demonstrated good reliability in the past (e.g., Löwe et al., 2010) and in the present study (Cronbach’s α = .80, McDonald’s ω = .80).
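The scoring rules described above can be sketched in a few lines. This is a minimal illustration, not the scoring syntax used in the study: the function name, the returned field names, and the label for totals of 0 to 2 are assumptions, and the anxiety and depression items are passed separately so that no item order is presumed.

```python
def score_phq4(anxiety_items, depression_items):
    """Score the PHQ-4 from two anxiety and two depression responses (each 0-3)."""
    if len(anxiety_items) != 2 or len(depression_items) != 2:
        raise ValueError("Expected two anxiety items and two depression items.")
    responses = list(anxiety_items) + list(depression_items)
    if any(r not in (0, 1, 2, 3) for r in responses):
        raise ValueError("Each response must be an integer from 0 to 3.")

    anxiety = sum(anxiety_items)
    depression = sum(depression_items)
    total = anxiety + depression

    # Total-score bands as listed in the text (3-5, 6-8, 9-12).
    if total >= 9:
        severity = "severe"
    elif total >= 6:
        severity = "moderate"
    elif total >= 3:
        severity = "mild"
    else:
        severity = "below mild range"  # assumed label; the text does not name this band

    return {
        "anxiety": anxiety,
        "depression": depression,
        "total": total,
        "severity": severity,
        "anxiety_flag": anxiety >= 3,        # subscale cut-point of >= 3
        "depression_flag": depression >= 3,  # subscale cut-point of >= 3
    }


# Hypothetical respondent.
example = score_phq4(anxiety_items=[2, 1], depression_items=[3, 1])
```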

Brief Resilient Coping Scale

The Brief Resilient Coping Scale (BRCS; Sinclair & Wallston, 2004) is a 4-item scale that measures how individuals cope with stress and difficulties. The participants responded to 4 items using a 5-point Likert-type scale ranging from 1 (does not describe me at all) to 5 (describes me very well). Item responses were summed and compared to normative cut-offs: 4 to 13 (low resilient copers), 14 to 16 (medium resilient copers), 17 to 20 (high resilient copers). The BRCS has demonstrated acceptable internal consistency with Cronbach’s alpha between .76 and .78 in previous research (Kocalevent et al., 2017; Sinclair & Wallston, 2004), and fair reliability in the present study (α = .69, ω = .69).

Satisfaction with Life Scale

The Satisfaction with Life Scale (SWLS; Diener et al., 1985) is a 5-item scale designed to assess an individual’s overall satisfaction with life. Each item is rated on a 7-point Likert scale ranging from 1 (strongly disagree) to 7 (strongly agree). The scores were summed and compared to normative cut-offs (Pavot & Diener, 2008): 5 to 9 (extremely dissatisfied), 10 to 14 (dissatisfied), 15 to 19 (slightly dissatisfied), 20 (neutral), 21 to 25 (slightly satisfied), 26 to 30 (satisfied), 31 to 35 (extremely satisfied). The SWLS has demonstrated good reliability and validity in previous studies (Pavot et al., 1991; Yun et al., 2019) and demonstrated good reliability in the present study (α = .86, ω = .86).

The Cognitive and Affective Mindfulness Scale-Revised

The Cognitive and Affective Mindfulness Scale-Revised (CAMS-R; Feldman et al., 2007) is a 12-item measure designed to capture trait mindfulness. Participants rate each item on a Likert-type scale from 1 (rarely/not at all) to 4 (almost always). After appropriate reverse-scoring, participant scores were summed. Higher values reflect greater mindful qualities. The CAMS-R has demonstrated acceptable reliability in previous studies (Feldman et al., 2007) and acceptable reliability in this study (α = .74, ω = .74).

Data Analysis

Data management, internal consistency reliability analyses, and correlations were conducted in IBM SPSS Statistics (Version 24; IBM Corp, 2016). Confirmatory factor analyses (CFA) and differential item functioning (DIF) analyses were performed using the lavaan, nFactors, psych, and lordif packages in R (Choi et al., 2016; Raiche & Magis, 2020; R Core Team, 2022; Revelle, 2022; Rosseel, 2012). Rasch analyses were conducted using the Test Analysis Modules (TAM) package and the snowIRT module for jamovi (Jamovi Project, 2021; Robitzsch et al., 2022; Seol, 2023). Each analysis was conducted on the cases with complete data for that analysis.

Considering that both five- and six-factor models have been proposed in the CSS literature (Mahamid et al., 2022; Taylor et al., 2020), we wanted to test both models with our data. The five-factor solutions were formulated from the findings of the original developers of the CSS (Taylor et al., 2020), in which Danger and Contamination items were placed on one factor together, with each of the other scales placed on its own factor. The six-factor solutions were formulated from the findings of other researchers (e.g., Mahamid et al., 2022), in which the items from each of the six scales were placed on their own respective factor. We performed CFA on the following solutions: five-factor, five-factor (higher order), five-factor (bifactor), six-factor, six-factor (higher order), and six-factor (bifactor). We ran these analyses using diagonally weighted least squares (DWLS) estimation, as it has been shown to offer more accurate parameter estimates and model fit that is more robust to non-normality and variable type (Mîndrilă, 2010).

In the current examination, we tested for differential item functioning across male and female respondents using an iterative approach combining item response theory and ordinal logistic regression methodology. This approach uses item response theory techniques (i.e., the graded response model or generalized partial credit model) to estimate each participant’s level of the underlying latent trait assessed by the instrument. Once ability estimates are established, a series of nested ordinal logistic regression models (i.e., ability only; ability and group assignment; ability, group assignment, and ability × group assignment) are estimated, testing relationships between the independent variables and responses for each assessment item. The fit of the nested models for each item is then compared using likelihood ratio tests, and significant differences between models are evidence of uniform DIF (i.e., consistent group differences in the probability of selecting a certain response option regardless of the level of the underlying latent trait), non-uniform DIF (i.e., group differences in the probability of selecting a certain response option that differ across levels of the underlying latent trait), and overall DIF. If DIF is detected in assessment items, the process begins again using modified ability/trait estimates and continues until a stable set of items demonstrating DIF is identified (Choi et al., 2016). This procedure was applied to each of the subscales individually to provide a more accurate assessment of potential item-level bias (De Ayala, 2009; Vallejo-Medina & Sierra, 2015). The magnitude of detected DIF effects was evaluated using Nagelkerke ΔR² and the following criteria: negligible DIF, ΔR² < .035; moderate DIF, ΔR² between .035 and .070; large DIF, ΔR² > .070 (Jodoin & Gierl, 2001).
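The effect-size step of this procedure can be sketched as follows. The code assumes that pseudo-R² values for the three nested models are already available from the fitted regressions, and it uses the conventional pairing of model comparisons (Model 2 vs. 1 for uniform DIF, Model 3 vs. 2 for non-uniform DIF, Model 3 vs. 1 for overall DIF); the function names and the example values are hypothetical, not results from this study.

```python
def classify_dif(delta_r2: float) -> str:
    """Label a Nagelkerke delta-R^2 using the Jodoin and Gierl (2001) cut-offs."""
    if delta_r2 < 0.035:
        return "negligible"
    if delta_r2 <= 0.070:
        return "moderate"
    return "large"


def dif_effect_sizes(r2_model1: float, r2_model2: float, r2_model3: float) -> dict:
    """Pseudo-R^2 changes between the nested models for a single item.

    Model 1: ability only
    Model 2: ability + group
    Model 3: ability + group + ability x group
    """
    return {
        "uniform": r2_model2 - r2_model1,
        "non_uniform": r2_model3 - r2_model2,
        "overall": r2_model3 - r2_model1,
    }


# Hypothetical pseudo-R^2 values for one item.
effects = dif_effect_sizes(0.400, 0.445, 0.450)
labels = {name: classify_dif(value) for name, value in effects.items()}
```

In this made-up example the group main effect adds about .045 to the pseudo-R², which would be labelled moderate uniform DIF, while the interaction term adds a negligible amount.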

Results

Confirmatory Factor Analysis (CFA)

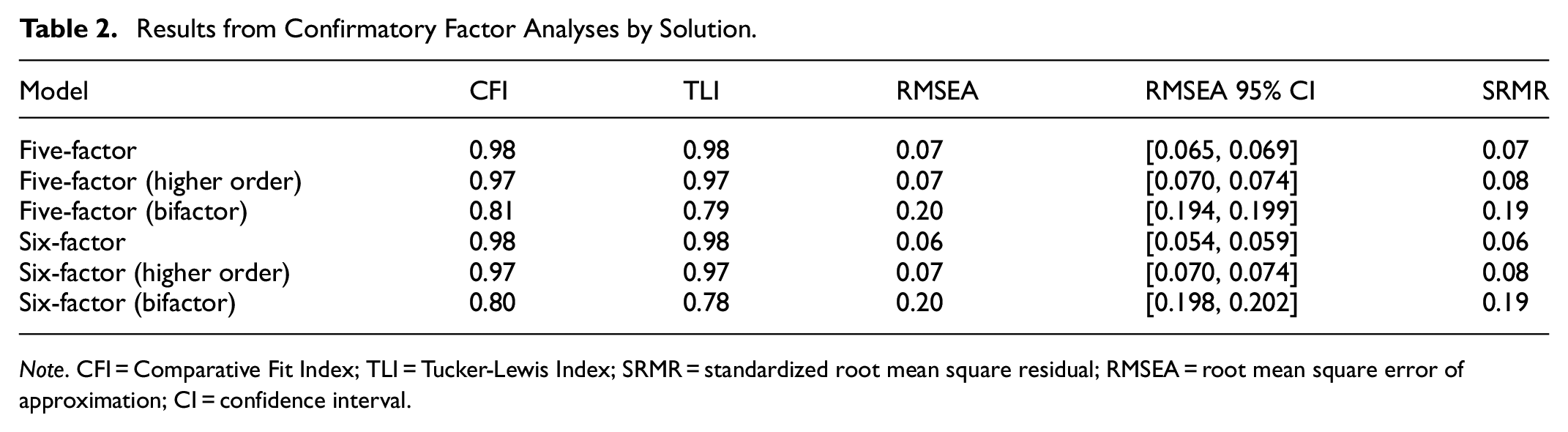

Mardia’s test was conducted to test multivariate normality. The results indicated there were issues with multivariate normality (p < .001), which further supported the use of DWLS estimation when conducting the CFA. Among the models demonstrating acceptable fit, the six-factor model demonstrated the best fit to the data, outperforming the five-factor, five-factor higher-order, and six-factor higher-order models based on model fit indices (see Table 2 for results of each CFA performed).

Results from Confirmatory Factor Analyses by Solution.

Note. CFI = Comparative Fit Index; TLI = Tucker-Lewis Index; SRMR = standardized root mean square residual; RMSEA = root mean square error of approximation; CI = confidence interval.
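For readers less familiar with these indices, the sketch below shows the textbook formulas that relate CFI, TLI, and RMSEA to the chi-square statistics of the tested model and the baseline (null) model. It is an illustration under stated assumptions: the function names and input values are hypothetical, and software such as lavaan may apply scaled or robust variants under DWLS estimation, so the output would not necessarily match the values reported in Table 2.

```python
import math


def rmsea(chi2: float, df: int, n: int) -> float:
    """Root mean square error of approximation (using the N - 1 convention)."""
    return math.sqrt(max(chi2 - df, 0.0) / (df * (n - 1)))


def cfi(chi2_model: float, df_model: int, chi2_base: float, df_base: int) -> float:
    """Comparative fit index relative to the baseline model."""
    d_model = max(chi2_model - df_model, 0.0)
    d_base = max(chi2_base - df_base, d_model)
    return 1.0 if d_base == 0 else 1.0 - d_model / d_base


def tli(chi2_model: float, df_model: int, chi2_base: float, df_base: int) -> float:
    """Tucker-Lewis index relative to the baseline model."""
    ratio_base = chi2_base / df_base
    ratio_model = chi2_model / df_model
    return (ratio_base - ratio_model) / (ratio_base - 1.0)


# Hypothetical chi-square values, degrees of freedom, and sample size.
example_rmsea = rmsea(chi2=200.0, df=100, n=401)
example_cfi = cfi(200.0, 100, 2100.0, 120)
example_tli = tli(200.0, 100, 2100.0, 120)
```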

Internal Consistency Reliability

Internal consistency reliability was measured by Cronbach’s alpha and McDonald’s omega for each CSS subscale. Following established guidelines (George & Mallery, 2019; Streiner, 2010), internal consistency for the CSS scales ranged from acceptable to excellent. The following scales demonstrated excellent internal consistency: Socioeconomic Consequences scale, α = .91, ω = .91 and Xenophobia scale, α = .95, ω = .95. The following scales demonstrated good internal consistency: Contamination scale, α = .88, ω = .88; Traumatic Stress scale, α = .88, ω = .88; and Danger scale, α = .87, ω = .87. The Compulsive Checking scale demonstrated acceptable internal consistency (α = .78, ω = .79).
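As a reminder of what Cronbach's alpha summarizes, the sketch below computes it from a respondent-by-item matrix using the standard formula (the ratio of summed item variances to total-score variance). The function name and the toy data are hypothetical; McDonald's omega requires a fitted factor model and is not shown.

```python
from statistics import variance


def cronbach_alpha(responses):
    """Cronbach's alpha for a list of respondents, each a list of item scores."""
    n_items = len(responses[0])
    if n_items < 2:
        raise ValueError("Alpha requires at least two items.")
    item_columns = list(zip(*responses))  # one tuple of scores per item
    item_variance_sum = sum(variance(column) for column in item_columns)
    total_variance = variance([sum(row) for row in responses])
    return (n_items / (n_items - 1)) * (1.0 - item_variance_sum / total_variance)


# Toy data: four respondents, three items rated 0-4 (hypothetical values).
toy_responses = [
    [0, 1, 0],
    [2, 2, 1],
    [3, 2, 3],
    [4, 4, 3],
]
toy_alpha = cronbach_alpha(toy_responses)
```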

Rasch Analysis

To help us decide between the partial credit model (PCM) and the rating scale model (RSM), we conducted a model comparison analysis prior to the Rasch analysis (Table 3; Andrich, 1978; Masters, 1982). In contrast to the RSM, the PCM does not assume that the threshold structure is fixed across items (Andrich, 1978, 1988; Masters, 1982; Masters & Wright, 1997). The likelihood ratio tests and model comparisons supported the use of the PCM for our dataset based on lower values of the deviance, Akaike’s information criterion (AIC; Akaike, 1987), and the Bayesian information criterion (BIC; Schwarz, 1978).
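The relationship among the three comparison indices can be sketched as follows, using the standard definitions AIC = deviance + 2p and BIC = deviance + p ln(n). All numbers, including the parameter counts, are hypothetical and chosen only to show that the criteria can disagree because BIC penalizes additional parameters more heavily in large samples.

```python
import math


def information_criteria(deviance: float, n_params: int, n_persons: int):
    """Return (AIC, BIC) for a model with the given deviance (-2 log-likelihood)."""
    aic = deviance + 2 * n_params
    bic = deviance + n_params * math.log(n_persons)
    return aic, bic


# Hypothetical deviances and parameter counts for one six-item subscale.
aic_rsm, bic_rsm = information_criteria(deviance=15000.0, n_params=9, n_persons=1000)
aic_pcm, bic_pcm = information_criteria(deviance=14900.0, n_params=24, n_persons=1000)

prefers_pcm = {
    "deviance": 14900.0 < 15000.0,
    "AIC": aic_pcm < aic_rsm,
    "BIC": bic_pcm < bic_rsm,
}
```

In this made-up case the deviance and AIC favor the more flexible PCM while BIC favors the more parsimonious RSM, so two of the three indices point to the PCM.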

Rasch Analysis Model Comparison.

Except for the Compulsive Checking subscale where two out of the three indices suggest PCM.

Local Independence

To assess local independence for the Rasch analysis, we used the MADaQ3 effect size measure of model fit, which represents the average of the absolute values of Q3 (Yen, 1984). Values closer to zero support local independence. The MADaQ3 ranged from 0.045 to 0.118 across the subscales (p < .001), indicating local independence.
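A rough sketch of how a statistic of this kind can be computed from item residuals is given below. It assumes the adjusted form, in which each pairwise Q3 (the correlation between two items' residuals) is centered on the mean Q3 before the absolute values are averaged; the function names and the residual values are hypothetical, and the residuals would in practice come from the fitted Rasch model.

```python
from itertools import combinations
from math import sqrt


def pearson(x, y):
    """Pearson correlation between two equal-length sequences."""
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    ss_x = sum((a - mean_x) ** 2 for a in x)
    ss_y = sum((b - mean_y) ** 2 for b in y)
    return cov / sqrt(ss_x * ss_y)


def mad_adjusted_q3(residuals):
    """Mean absolute adjusted Q3 across all item pairs.

    residuals: one row per person, one column per item, each entry being
    observed score minus model-expected score.
    """
    columns = list(zip(*residuals))
    q3 = [pearson(columns[i], columns[j])
          for i, j in combinations(range(len(columns)), 2)]
    mean_q3 = sum(q3) / len(q3)
    return sum(abs(value - mean_q3) for value in q3) / len(q3)


# Hypothetical residuals for four persons on three items.
toy_residuals = [
    [0.5, -0.2, 0.1],
    [-0.3, 0.4, -0.1],
    [0.1, -0.1, 0.3],
    [-0.3, -0.1, -0.3],
]
toy_madaq3 = mad_adjusted_q3(toy_residuals)
```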

Item Fit

In Rasch analysis, item fit statistics are used to determine whether the data fit the Rasch model (Linacre, 2002). Linacre (2002) provides guidelines for the item fit measures Infit and Outfit (acceptable range: 0.5–1.5). Each of the six scales was examined individually because our CFA results indicated that the subscales should be examined independently. No item in any scale had an Infit or Outfit value outside the range provided by Linacre (2002), as can be seen in Table 4. We paid special attention to the Danger and Contamination scales because of the issues discussed in the literature review and found that item fit was not an issue for either scale, indicating that the items belong to their respective scales. In Table 4, we have also sorted the items within each subscale from the easiest to endorse to the most difficult to endorse. For example, the highest Infit value was observed in the Danger subscale. The item easiest for participants to endorse (i.e., the item requiring the least stress to endorse) was Item 6, “I am worried that social distancing is not enough to keep me safe from the virus” (Infit = 1.171), and the most difficult to endorse was Item 4, “I am worried that I can’t keep my family safe from the virus,” which had the lowest Infit (0.877), suggesting minor variation in item response behavior but no significant misfit. Overall, Rasch analysis confirmed model fit, with all items falling within acceptable Infit/Outfit thresholds.
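The two fit statistics can be sketched from their standard mean-square definitions: Outfit is the average squared standardized residual, and Infit is the information-weighted version (sum of squared residuals divided by the sum of model variances). The function names and toy values below are hypothetical; in practice the expected scores and variances come from the fitted model.

```python
def item_fit(observed, expected, variance):
    """Return (infit, outfit) mean squares for one item across persons.

    observed: observed item scores
    expected: model-expected scores for the same persons
    variance: model variances of the scores for the same persons
    """
    squared_residuals = [(x - e) ** 2 for x, e in zip(observed, expected)]
    outfit = sum(r / w for r, w in zip(squared_residuals, variance)) / len(observed)
    infit = sum(squared_residuals) / sum(variance)
    return infit, outfit


def within_guideline(value: float, low: float = 0.5, high: float = 1.5) -> bool:
    """Check a mean square against the 0.5-1.5 range cited from Linacre (2002)."""
    return low <= value <= high


# Toy dichotomous example in which residual variation matches the model exactly.
toy_infit, toy_outfit = item_fit(
    observed=[1, 0, 1, 0],
    expected=[0.5, 0.5, 0.5, 0.5],
    variance=[0.25, 0.25, 0.25, 0.25],
)
```

Values near 1.0 indicate that the observed variation in responses is about what the model predicts; both Infit values quoted above (1.171 and 0.877) fall inside the guideline range.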

Item Fit Statistics for All Scales.

Person-Item Map

The Person-Item Maps for all the subscales of the CSS can be found in Figure 1. From left to right, the first row of Figure 1 shows the Person-Item Maps for the Danger, Socioeconomic Consequences, and Xenophobia scales, and the second row shows those for the Contamination, Traumatic Stress, and Compulsive Checking scales. In each Person-Item Map, the distribution of respondents’ trait levels appears on the left and the item difficulties appear on the right. The Person-Item Maps indicated that the participant trait distribution (pandemic stress levels) exceeded the range of item difficulties. This was particularly visible in the Xenophobia scale, which showed a slight right skewness. This suggests that a number of participants may experience stress beyond what the CSS is able to capture, particularly in socio-political contexts where pandemic-related xenophobia is heightened. The remaining scales showed an approximately normal distribution of participants’ trait levels (i.e., stress on the trait measured by each subscale).

Person-Item Maps clockwise for the Danger, Socioeconomic Consequences, Xenophobia, Contamination, Traumatic Stress, and Compulsive Checking.

Differential Item Functioning

Results of a DIF analysis of the CSS across gender classification (female vs. male) indicated that most CSS items demonstrated negligible DIF. However, Nagelkerke ΔR² values revealed evidence of moderate uniform DIF for Compulsive Checking Item 1 (i.e., “checked social media posts concerning COVID-19”). Examination of the item characteristic curve indicated that, after controlling for the underlying latent trait measured by the Compulsive Checking items, female participants were more likely than male participants to report checking social media for posts related to COVID-19. The results of the DIF analysis are presented in Table 5.

Differential Item Functioning Analysis Results for CSS Subscales.

Note. Corrected p-value indicating a significant χ² test = .01.

Concurrent Validity

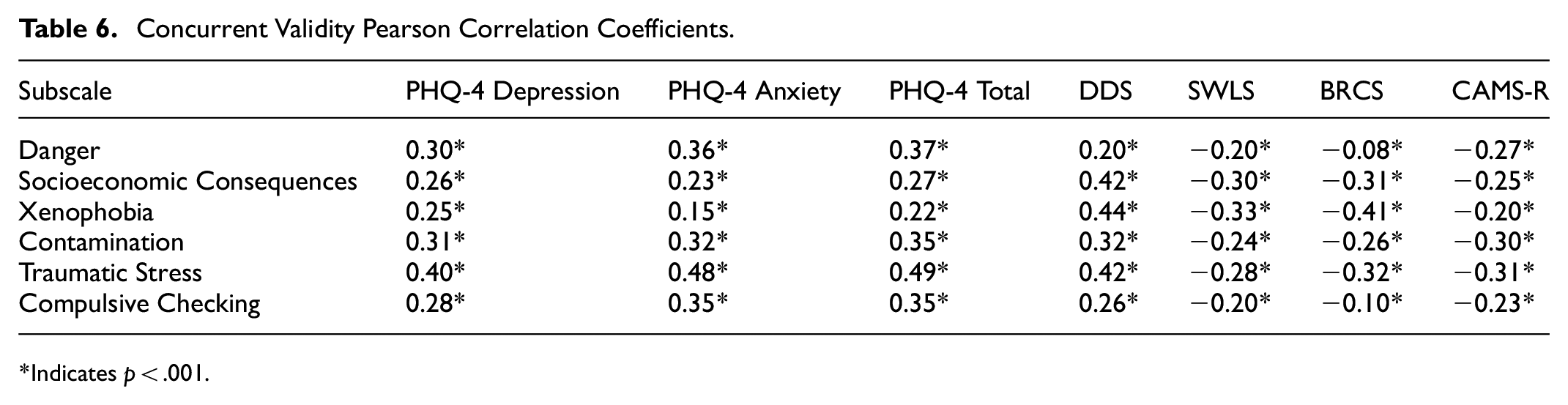

To assess concurrent validity, we examined the Pearson product-moment correlation coefficients between the PHQ-4 and CSS scales. The results supported the concurrent validity of the CSS, with significant, moderate correlations between the CSS and the PHQ-4 (Total: r = .37, p < .001; Depression: r = .30, p < .001; Anxiety: r = .36, p < .001; see Table 6 for full details). These findings indicate that higher pandemic-specific stress is associated with greater general psychological distress. We also examined the correlational relationships between well-being measures and the CSS. The CSS scales were negatively correlated with the SWLS, the BRCS, and the CAMS-R, with correlation coefficients ranging from small to moderate. The strongest correlation was observed between the Traumatic Stress subscale and the PHQ-4 total score (r = .49, p < .001), which could potentially indicate the need for trauma-informed mental health interventions.
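For readers less familiar with the statistic, the following minimal sketch shows how a Pearson product-moment correlation between two sets of scale scores can be computed. The function name and the scores are hypothetical and are not the study data.

```python
import math


def pearson_r(x, y):
    """Pearson product-moment correlation between two equal-length sequences."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    # Sum of cross-products and sums of squares around the means.
    cross = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    ss_x = sum((a - mean_x) ** 2 for a in x)
    ss_y = sum((b - mean_y) ** 2 for b in y)
    return cross / math.sqrt(ss_x * ss_y)


# Hypothetical total scores for six respondents on two measures.
css_total = [12, 30, 45, 51, 66, 80]
phq4_total = [2, 1, 5, 3, 8, 6]
print(round(pearson_r(css_total, phq4_total), 2))
```

A positive coefficient indicates that higher scores on one measure tend to accompany higher scores on the other, and a negative coefficient indicates the opposite pattern, as reported for the well-being measures.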

Concurrent Validity Pearson Correlation Coefficients.

Indicates p < .001.

Discussion

It is well established in the literature that pandemics are associated with higher levels of psychological distress (e.g., Wang et al., 2020). Indeed, the recent COVID-19 pandemic has been associated with pandemic-specific stress, anxiety, depression, and traumatic stress worldwide (e.g., Pieh et al., 2020; Qiu et al., 2020; Rehman et al., 2021).

Previous research utilizing the CSS has encountered issues with the Danger and Contamination subscales, along with discrepancies between six- and five-factor solutions (Mahamid et al., 2022; Mertens et al., 2021). Our results indicated that both a six-factor and a five-factor model fit the data; however, we opted for the six-factor model based on interpretability and to align with the original development of the scale. In their initial CSS paper, Taylor et al. (2020) elected to keep the two scales that loaded on the same factor (Danger and Contamination) as separate scales, reasoning that others may wish to examine Danger and Contamination separately. Despite frequent factor merging in prior studies (Mertens et al., 2021), Rasch analysis in our sample showed distinct item difficulty calibrations between the Contamination and Danger scales, supporting their separation. The discrepancies among findings for the Contamination and Danger scales across CSS studies may be explained by data collection occurring at different phases of the pandemic, or by the two scales being closely related, as other researchers have proposed (e.g., Mahamid et al., 2022; Mertens et al., 2021). Regardless, the accumulation of research showing the merging of the Danger and Contamination scales perhaps indicates that our interpretation of these subscales should change accordingly. Furthermore, our paper was the first to examine the CSS through a Rasch analysis framework; through the item fit exploration, we found no issues with items misfitting in their respective subscales.

In terms of differential item functioning, our results showed that most CSS items did not show evidence of response bias across male and female participants. The general lack of DIF effects is consistent with the findings of Şahin et al. (2022), which suggested that the CSS demonstrated gender invariance in a sample of Turkish adults. However, our DIF analysis did identify one item that might not be functioning as intended: Item 1 of the Compulsive Checking subscale, which assesses the tendency to check social media for posts related to COVID-19, demonstrated a moderate level of uniform DIF. Specifically, female participants were more likely than males to endorse this item, even after controlling for the underlying latent trait measured by the subscale. Prior empirical research has provided evidence of gender differences in the tendency to use social media to obtain health-related information, as well as differences in the type of health-oriented information sought by males and females on social media platforms (Bidmon & Terlutter, 2015; Hassan & Masoud, 2021; Krasnova et al., 2017). Thus, this item may be capturing general information-seeking tendencies rather than specific compulsive social media checking behaviors related to COVID-19.

As we anticipated, our results indicated that anxiety and depression (PHQ-4) were positively correlated with the CSS. As highlighted within the CSS, pandemic-specific distress is often associated with fear and other negative emotions; thus, a positive relationship with anxiety and depression is to be expected. In contrast, well-being indicators such as life satisfaction, resilience, and trait mindfulness were negatively correlated with the CSS in our study. This finding highlights that well-being indicators should be taken into consideration alongside distress to capture the breadth of an individual’s mental health more fully (Carver et al., 2021; Keyes, 2005). For example, an individual who reports high levels of pandemic-related distress may simultaneously report high levels of resilience and life satisfaction. The intervention needs of someone with high levels of pandemic-related distress and high levels of well-being will differ from those of someone with high levels of pandemic-related distress and low levels of well-being. Thus, assessing indicators of well-being in addition to screening for pandemic-related distress could assist those in clinical practice with formulating individualized intervention targets. Without timely identification and intervention, what may begin as pandemic-specific distress can lead to more severe or chronic symptoms of anxiety and depression (Fofana et al., 2020).

Limitations and Future Directions

There are several limitations to the present study. First, the sample was recruited entirely from social media outlets (Twitter, now X, and Facebook) and is therefore not representative of the entire U.S. population, as some individuals do not use social media or have internet access. For example, internet and social media use are impacted by variables such as homelessness and socio-economic status (Guadagno et al., 2013). Second, all data were self-reported, increasing the risk of social desirability bias. Participants may have underreported distress due to stigma surrounding mental health, particularly among male respondents. Future research should conduct longitudinal assessments to determine whether CSS scores remain stable over time, particularly as pandemic stressors shift post-COVID. Longitudinal assessments would also help determine potential effects of long-COVID on responses to the CSS, something that could not be identified or explored in the present study. Third, additional assessments of the concurrent validity of the CSS would be helpful to include in future research. For example, although the PHQ-4 is a strength of the study given its brevity in screening for clinically meaningful anxiety and depression symptoms and its use in the initial validation paper for the CSS (Taylor et al., 2020), other concurrent measures of depression and anxiety would be helpful to include alongside the PHQ-4 in future research.

Clinical Implications and Conclusion

The present study contributed to the literature by including measures of well-being along with measures of distress in examining the psychometric properties of the CSS. The data were collected during the height of the pandemic (summer 2020), and the findings have the potential to offer a more holistic view of mental health during the pandemic. The COVID-19 pandemic was only one of the most recent global pandemics, and as of 2024 the World Health Organization (WHO) continues to track diseases that have the potential to become epidemics (e.g., acute watery diarrhea [AWD], cholera, and malaria, among others). Thus, the development of instruments to assess pandemic-specific stress is useful for public health officials and clinicians. Understanding an individual’s experience of pandemic-related distress in conjunction with other forms of psychological distress that may already be routinely screened for (e.g., anxiety and depression), and in parallel with well-being indicators, can help those in clinical practice guide support and intervention needs. For example, in clinical settings, the CSS can be paired with resilience measures (e.g., the BRCS) to tailor interventions: individuals scoring high on the CSS but also demonstrating high resilience may benefit from mindfulness-based interventions, whereas those with low resilience may require cognitive restructuring techniques. The addition of a pandemic-related distress screening such as the CSS and measures of well-being to routine assessment practices could prove beneficial, not only as society continues to recover from the COVID-19 pandemic, but also in the event of future pandemics.

Footnotes

Ethical Considerations

This study was reviewed and approved by the University of Texas at Tyler Institutional Review Board.

Consent to Participate

Participants were informed about the purpose of the study, their rights as participants, and the voluntary nature of their participation before beginning the online survey. Survey responses were collected anonymously to protect participants’ confidentiality.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

Data are available upon request.