Abstract

This study addresses how Chinese learners of English as a second language (L2) perceive conversations in English materials as compared to speakers of English as a first language (L1). Data were collected through questionnaires completed by 48 participants (28 L2 English learners in China and 20 L1 English speakers in the UK), eliciting evaluations of written versions of two conversations from the International English Language Testing System (IELTS) and the College English Test (CET) Band 6 of China. Overall, learners favored the CET conversation, but L1 speakers preferred the IELTS one. A content analysis uncovered five major attention foci among participants: Language, Discourse, Content, Context, and Learning, with L1 speakers focusing more on the genuineness of language at a macro level than learners, who mostly attended to words and sentence structure. More detailed discourse analysis of lexical items reflecting appraisal highlighted that learners showed high awareness of context-related authenticity and evaluated difficulty with respect to a wide range of aspects, whereas L1 speakers evaluated the authenticity at language and discourse levels and commented on the perceived difficulty of content and learning. Implications for teaching and learning spoken English with materials include the importance of offering explicit information about authenticity and spoken features of conversations that students may otherwise fail to notice.

Introduction

Most undergraduates in China majoring in any discipline except English are required to take the College English Test (CET), an English exam administered by the National College English Testing Committee of China (Zheng & Cheng, 2008). Additionally, those who plan to study abroad or live in English-speaking countries will take the International English Language Testing System (IELTS). To improve their exam scores in listening, students practice items from previous exams and repeatedly listen to conversations in authentic or sample examination papers. As the most common spoken English input for Chinese learners (Hui & Dong, 2019), conversations in CET and IELTS listening tests are representative of spoken English in international and local English materials available in China. Unfortunately, spoken texts in various English materials have been claimed to be inauthentic (Wagner, 2014), contributing to the failure of learners’ speaking skills (Tomlinson, 2008). However, it is unclear how language learners themselves perceive frequently used spoken English materials like CET and IELTS, as compared to native English speakers’ views on these same materials.

Various studies addressing the effects of materials on language learning have shown that learners’ language competence can be developed through using more authentic materials (Montero Perez, 2022) or more difficult ones (Niazifar & Shakibaei, 2019). As these studies focus on learners’ outcomes after using materials, they do not address the learning process itself (Mas-Alcolea & Torres-Purroy, 2022). Thus, it remains unclear how materials with varying degrees of authenticity or difficulty help language input to become intake, contributing to language acquisition by learners’ noticing (Jones & Oakey, 2024). Although language input from materials undergoes the same processes as other types of input in the learners’ minds, studies on language teaching materials rarely investigate the effects of materials as input on language learning (Ellis & Shintani, 2014).

Our study aims to fill this gap by investigating what learners notice and how their attention is driven by the authenticity and difficulty of spoken materials. Our analysis of learners’ evaluations of conversations in CET and IELTS provides insights into how learners consciously perceive spoken materials and estimate their authenticity and difficulty. Noticing language input facilitates acquisition even though it does not guarantee it (Schmidt, 2001). We propose that the efficiency of language teaching can be enhanced by taking into account learners’ attention focus and perceptions while using texts in materials.

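Research addressing materials users typically focuses on teachers, learners, or divergences between them. Some studies exploring learners’ perception or understanding of language employ first language (henceforth “L1”) speakers as a comparison group to second language (“L2”) learners when addressing aspects such as speech segmentation (Dobrego et al., 2023) or compliment responses (Y. Zhang, 2024). However, to our knowledge, no previous study directly juxtaposes perceptions of authenticity and difficulty of spoken text materials in L1 English speakers versus L2 English learners, a comparison that is significant for highlighting features of learners’ interpretations. To address this gap, this study explores how L2 English learners in China perceive conversations in materials, as compared to L1 English speakers. Specifically, the study addresses three research questions (RQs) as follows:

RQ1: How do L1 English speakers and L2 English learners perceive the authenticity and difficulty of conversations in CET and IELTS?

RQ2: What do L1 English speakers and L2 English learners attend to when interpreting the authenticity and difficulty of conversations in CET and IELTS?

RQ3: How is the perception of the authenticity and difficulty of conversations in CET and IELTS reflected through the participants’ attention foci, as expressed in discourse?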

Literature Review

The Authenticity of Materials

Research has identified a lack of authenticity in the way spoken English is represented in materials with respect to spoken features, vocabulary, discourse, or pragmatic information. Textbook conversations incorporate fewer discourse features such as repetition, pausing, and hesitation devices (Gilmore, 2004). Highly frequent lexical bundles among L1 English speakers (Northbrook & Conklin, 2019) and pragmatic knowledge of speech acts are under-represented in textbooks (Pérez-Hernández, 2021). Spoken discourse in textbooks tends to be scripted (Guo & Yao, 2021), and listening texts in language examinations lack characteristics of spoken language, such as backchanneling and repetitions (Rossi & Brunfaut, 2021). Depending on where they are used, materials may furthermore lack context-related authenticity (Gilmore, 2007) regarding the choice of topics or the representation of cultural background. Some textbooks incorporate topics about personal information, which are less common in students’ daily interactions (Siegel, 2014). English exams, moreover, tend to represent certain social and cultural values that may not be adequate for examinees from other parts of the world (Noori & Mirhosseini, 2021). As these concerns are typically not explored from L2 learners’ perspectives, it remains unclear whether the main targeted users of these materials consider them to be relevant and what aspects they would pay attention to.

The Difficulty of Materials

Texts in materials can present challenges for L2 learners with respect to the target language, such as new words, long sentences, or discourse structure, but also concerning nonlinguistic aspects, such as unfamiliar context, genre, topic, or cultural background. Previous research has focused primarily on lexical difficulty and, to a lesser extent, on syntactic or textual difficulty and nonlinguistic aspects.

Based on Nation’s (2022) lexical coverage for unassisted reading comprehension, much work measures the number of words needed to reach 95% or 98% coverage in English textbooks (e.g., Bi, 2020). Many studies also address lexical density (e.g., Tang & Liang, 2021) and the progression of difficulty (e.g., Guo & Yao, 2021). Several studies focus on the general difficulty of texts, involving not only lexical but also syntactic or textual aspects (e.g., Chen, 2016).

Most of this research focuses on written texts in textbooks, especially the lexical difficulty in English textbooks for academic/specific purposes. There are some notable exceptions analyzing the lexical difficulty of oral discourse. Gilmore (2004), for example, compared the lexical density of conversations published in textbooks between 1982 and 1997 and found that more recent publications present a lower vocabulary load for learners with a decreased lexical density. Notably, studies of this kind focus on materials analysis but do not consider learners’ views, thus neglecting the effects of noticing input features in support of language acquisition.

Effects of the Authenticity and Difficulty of Materials

Authentic materials have frequently been shown to be beneficial to language learning. For instance, the use of authentic plays and drama in classroom instruction significantly improved learners’ syntactic complexity and pronunciation accuracy (Floare Bora, 2024). Conversely, inauthentic input from materials may aggravate learners’ problems in using linking adverbs (Leedham & Cai, 2013). Moreover, test takers’ extensive use of testing materials can reinforce L1 social and cultural biases, which are problematic in the context of L2 countries (Noori & Mirhosseini, 2021). By contrast, research on the effects of difficulty yields inconclusive results. After being exposed to reading materials with different levels of difficulty for 3 months, L2 English learners who read more difficult texts significantly outperformed those who read easier ones in reading comprehension (Niazifar & Shakibaei, 2019). Other studies found that learners’ reading comprehension can be constantly improved independently of the difficulty of texts (Chiang, 2015), although students show higher levels of reading anxiety with more difficult texts (Bahmani & Farvardin, 2017).

In studies in this line of research, learners are mostly passive participants, and researchers’ conclusions tend to be drawn from their analysis of language production rather than learning processes (Mas-Alcolea & Torres-Purroy, 2022). Therefore, how specific content in authentic or difficult materials gradually influences language learning remains underexplored. How learners interpret authenticity reflects their understanding of real language use; for instance, learners who are frequently exposed to scripted listening input may have misconceptions about what is natural in spoken language (Liao et al., 2018). This clearly motivates a closer look at perceptions of authenticity.

Materials with authentic language or context may not be suitable for learners, as they may contain undesired challenges. Teachers often adjust the difficulty of materials gradually to suit the level of ability as required, which almost inevitably sacrifices authenticity – creating a trade-off between authenticity and difficulty. Thus, creating a suitable level of authenticity and difficulty in materials poses considerable challenges and may affect learners’ satisfaction and learning progress in various ways. Materials evaluation (ME) research with respect to authenticity and difficulty provides further insights in this area, as summarized next.

Evaluations of the Authenticity and Difficulty of Materials

We reviewed how research on ME has addressed the issues of authenticity and difficulty by examining relevant items in existing evaluation checklists. Two overview tables (Supplemental Appendices S1–2) summarize items from evaluation checklists concerning the authenticity and difficulty of language materials, including those designed for teacher evaluators and learner ones.

Only a few items in the checklists were developed specifically for learners. The interpretation of authenticity, as shown in Supplemental Appendix S1, differs clearly across the checklists. While some researchers consider authentic materials to be those produced by native speakers, others focus on the authenticity of topics or situations, features of natural speech or spoken grammar, and so forth. Most items about difficulty (see Supplemental Appendix S2) relate to learners’ language ability, covering a wide range of issues such as vocabulary, sentence length, cognitive load, or confidence.

Other quantitative or qualitative items addressing authenticity and difficulty do not offer a definition at all but presuppose an understanding of what authenticity and difficulty are. Also, as the evaluation items usually aim at entire textbooks rather than specific texts, they reflect a fairly general, impressionistic view of materials from teachers’ or researchers’ perspectives. Additionally, some investigations of learners’ materials evaluation using methods such as questionnaires, interviews, or field notes also focus on participants’ general perceptions of English textbooks, such as the usefulness (J. Li & Cui, 2020), development (Deng & Wang, 2023), or dynamic use of L2 English materials (X. Zhang, 2023). A more specific exploration of evaluations from L2 English learners’ perspective is needed, motivating our study.

Methodology

Participants

L2 English learners (N = 28; 24 female and four male; mean age = 21) were speakers of Chinese as a first language. They were third-year Business English major students at Xi’an International Studies University. They had learned English for more than 10 years on average and passed the Test for English Majors Grade Four (TEM 4) in China, which is roughly equivalent to B2 or C1 of the Common European Framework of Reference for Languages (CEFR), so they can be considered as high intermediate L2 English learners. L1 English speakers (N = 20; 13 female, three male, four unknown; mean age = 22 for the 16 who provided this information, which was not obligatory) were recruited at Bangor University. Ethical approval was obtained from the Research Ethics Committee of Bangor University (Reference code: LX-1623/AH113) on April 27, 2018. All participants provided written informed consent prior to participating. Participants were free to withdraw from our study at any time and for any reason without consequence. There was no potential risk of harm for participating in our research.

Materials

While conversations are multimodal in nature, relevant research usually singles out one aspect to address in-depth, such as pronunciation (Liao et al., 2018) or subtitles (Montero Perez, 2022) of spoken texts. Our study focuses on learners’ interpretations of linguistic features of spoken texts, using written versions of conversations.

Two conversations used to elicit learners’ interpretation of authenticity and difficulty in materials were excerpted from listening sections of CET and IELTS, respectively (see Supplemental Appendix S3). While selecting conversations, we took authenticity and difficulty into consideration. To ensure comparability, we chose conversations that were similar in mode and context, namely telephone calls in a customer service context. The IELTS conversation encodes more spoken features of authentic conversations (see Supplemental Appendix S4), which is characteristic of IELTS (Hui & Dong, 2019). The difficulty of the conversations was measured by VocabProfilers (Cobb, 2024), which showed that the CET conversation was more challenging in terms of types, lexical demand, and coverage of academic words even though the two conversations are close in length, with 281 and 243 words respectively (see Supplemental Appendix S5).

Data Collection

We designed a questionnaire to explore participants’ perspectives on the authenticity and difficulty of the two selected conversations. The survey showed the two conversations given in Supplemental Appendix S3, followed by nine questions. Both conversations were presented in written form. After reading each conversation, participants first answered the following three questions (twice, i.e., once for each conversation):

Then, they were asked to compare the CET conversation with the IELTS one by answering the following questions:

Both learners and L1 speakers were divided into two groups, group A and group B, respectively. The two conversations in the questionnaires were counterbalanced for different groups to avoid the effects of sequence on their reading. For L1 speakers, the second “you” in question 3 [Model] was changed to “learners,” and “your English study” in question 6 [Preference] became “English learners.”

L1 speakers completed the questionnaire online through Survey Monkey. A prize draw was used as an incentive (Dörnyei & Taguchi, 2012) to invite and encourage participants to take part. Nevertheless, only 20 participants completed the survey, despite as many as 70 starting it. Although this is unfortunate, this number was deemed sufficient for the current purposes of primarily qualitative analysis, with L1 speakers’ data needed for comparison only. Learners were invited to complete the questionnaire in their classroom. They received printed questionnaires from their teacher of English who was entrusted to collect data and completed them in one session. The effective response rate was 100%, unsurprising for group questionnaire administration (Dörnyei & Taguchi, 2012). All participants used their first language (English and Chinese, respectively) when filling in questionnaires, as we aimed to collect participants’ actual views expressed freely in natural language for in-depth analysis of responses, avoiding the risk of constraining learners’ responses based on limited language skills.

Data Analysis

Responses to the first parts of questions 2 [Problems], 3 [Model], 4 [Authenticity], and 6 [Preference] provided quantitative data addressing RQ1 (see the “Perceptions of the Authenticity and Difficulty of Conversations” section below). To address RQs 2 and 3, free-text responses were analyzed at both content and linguistic levels in qualitative and quantitative ways, following Tenbrink’s (2020) approach to Cognitive Discourse Analysis.

RQ2 aims to identify learners’ attention foci while reading conversations in language materials. For this purpose, we identified relevant content categories in free-text responses and examined their quantitative distribution (see the “Attention regarding the authenticity and difficulty of conversations” section below). Free-text responses were analyzed collectively (similar to a small corpus), independent of the specific question that was asked to elicit the data. This differs from traditional content analysis (e.g., Krippendorff, 2018) but is justified as participants provided overlapping answers across all questions, which were regarded as general prompts.

To address RQ3, we examined how participants evaluated aspects of authenticity and difficulty by (qualitatively) identifying lexical items that served as linguistic indicators of these concepts in their responses and examining them (quantitatively) in the context of the content categories in which they occurred. Cognitive features of linguistic choices (such as specific lexical items) have been analyzed in a wealth of literature within cognitive linguistics and beyond; here, we draw specifically on Martin and White’s (2021) approach to appraisal, which is an excellent fit to the evaluative aspects concerning authenticity and difficulty that we aim to draw out from our participants’ discourse data. We adapted and operationalized Martin and White’s (2021) framework in a data-driven way for the current context, as described in more detail below (see the “Characteristics of the perceptions of authenticity and difficulty” section below).

Findings

Perceptions of the Authenticity and Difficulty of Conversations

Authenticity of Conversations

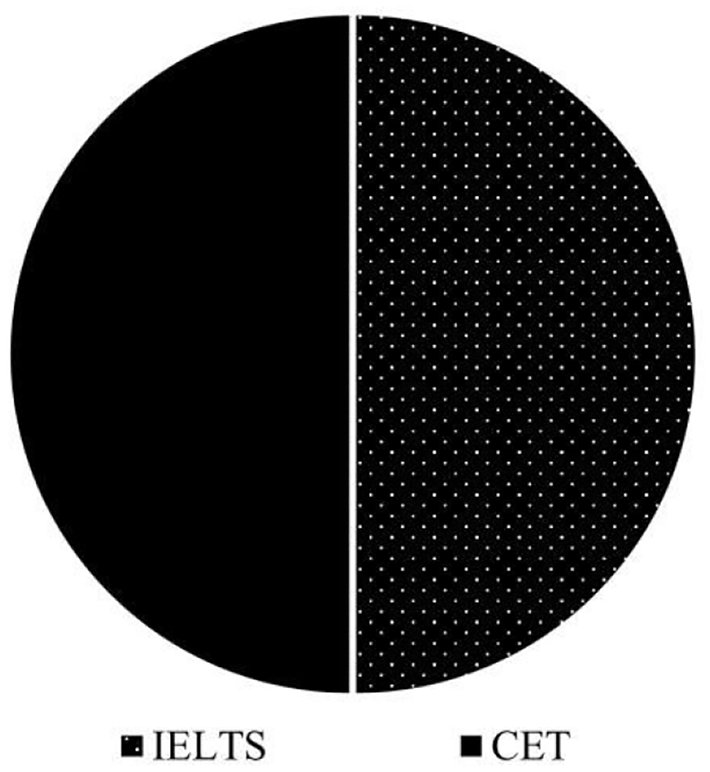

To address the authenticity of English conversations (first part of RQ1), survey question 4 [Authenticity] elicited which conversation appeared closer to real communication for participants. As shown in Figures 1 and 2, half of the L2 English learners (50%) considered the CET example to be a realistic interaction, while the other half thought that the IELTS conversation was authentic. In contrast, most L1 English speakers (80%) agreed that the IELTS conversation was authentic, and none of them chose CET. Four L1 speakers (20%) believed both of them might be authentic to some extent (these responses were excluded from the statistical test). The difference in perceptions of authenticity of conversations between learners and L1 speakers was significant (χ2 (N = 44) = 11.733, p = .001).
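The reported statistic can be checked from the counts implied by the percentages above. The following is a minimal sketch, assuming a Pearson chi-square test without continuity correction on a 2 × 2 table (14 learners choosing CET and 14 choosing IELTS; 0 L1 speakers choosing CET and 16 choosing IELTS, with the four "both" responses excluded). The function names are illustrative and not taken from the study; under these assumptions the statistic rounds to the reported 11.733.

```python
import math


def pearson_chi_square(table):
    """Pearson chi-square statistic (no continuity correction) for a table of counts."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    grand_total = sum(row_totals)
    statistic = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            # Expected count under independence of group and choice.
            expected = row_totals[i] * col_totals[j] / grand_total
            statistic += (observed - expected) ** 2 / expected
    return statistic


def p_value_df1(statistic):
    """Upper-tail p value for a chi-square statistic with one degree of freedom."""
    return math.erfc(math.sqrt(statistic / 2))


# Rows: learners, L1 speakers; columns: chose CET, chose IELTS.
# Counts are derived from the percentages in the text (an assumption).
authenticity_counts = [[14, 14], [0, 16]]
chi2 = pearson_chi_square(authenticity_counts)
p = p_value_df1(chi2)
print(f"chi2 = {chi2:.3f}, p = {p:.4f}")
```

The closed-form p value used here holds only for one degree of freedom, which is the case for any 2 × 2 table.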

Figure 1. L2 learners’ perceptions of authenticity.

Figure 2. L1 speakers’ perceptions of authenticity.

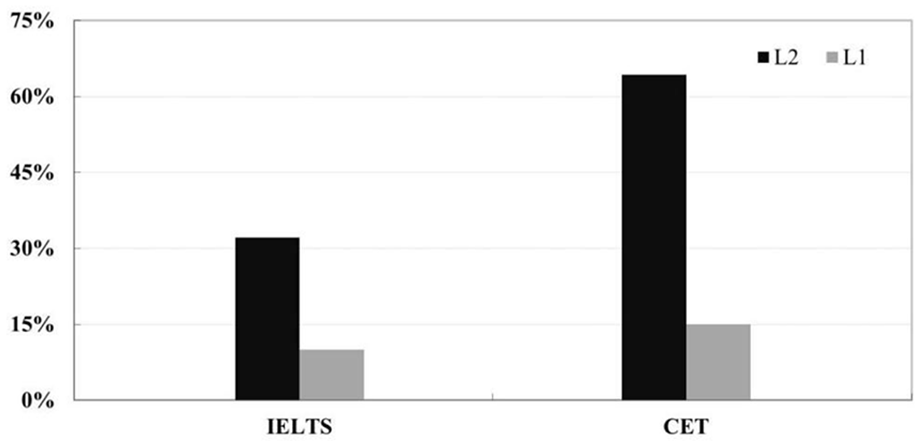

Reported Difficulties

To address the difficulty of English conversations (second part of RQ1), survey question 2 [Problems] asked if participants had any difficulties or problems in understanding the conversations. As shown in Figure 3, nine learners (32%) reported difficulties in understanding the IELTS conversation, as compared to 18 (64%) for the CET conversation. This indicates that both conversations present some difficulties for learners, with CET being significantly more challenging, as confirmed by a Chi-square test (χ2 (N = 56) = 5.793, p = .016). Unsurprisingly, the majority of L1 speakers reported no problems in understanding either conversation (90% for IELTS and 85% for CET). The Chi-square test showed a significant difference in perceptions of difficulties between learners and L1 speakers (χ2 (N = 96) = 14.754, p = .002).
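For a 2 × 2 table, the Pearson statistic also has a closed form, χ² = N(ad − bc)² / ((a + b)(c + d)(a + c)(b + d)), which makes the learner comparison easy to verify by hand. The sketch below assumes that each learner's two judgments are treated as separate observations (as the reported N = 56 suggests) and derives the "no difficulty" counts as 28 minus the reported figures; the function name is illustrative. Under these assumptions the result rounds to the reported 5.793.

```python
def chi_square_2x2(a, b, c, d):
    """Closed-form Pearson chi-square for the 2 x 2 table [[a, b], [c, d]]."""
    n = a + b + c + d
    numerator = n * (a * d - b * c) ** 2
    denominator = (a + b) * (c + d) * (a + c) * (b + d)
    return numerator / denominator


# Rows: IELTS, CET; columns: learners reporting difficulty, learners reporting none.
# 19 = 28 - 9 and 10 = 28 - 18 are derived counts (an assumption).
learner_difficulty_chi2 = chi_square_2x2(9, 19, 18, 10)
print(f"chi2 = {learner_difficulty_chi2:.3f}")
```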

Figure 3. Difficulty in understanding conversations.

Conversations as Models for Learners

To elucidate participants’ general perceptions of English conversations (further informing RQ1), survey question 3 [Model] invited participants to decide separately whether the conversation they had just read was a good model for learning. Question 6 [Preference] asked them to compare the two and decide which one would be a better model. As shown in Figure 4, most learners agreed that both conversations were good models (68% for IELTS and 79% for CET; this apparent difference was not statistically significant). L1 speakers’ responses were more diverse and somewhat more skeptical. Twelve (60%) of the L1 speakers agreed that IELTS was a good model, 6 (30%) neither agreed nor disagreed, and 2 (10%) disagreed. Thirteen (65%) of them also considered the CET conversation to be a good model, 4 (20%) were neutral, and 3 (15%) disagreed. As participants’ neutral choices did not explicitly show a positive or negative attitude, they were excluded from the Chi-square tests, which showed no significant differences in either L1 speakers’ attitudes to conversations or a comparison of learners’ and L1 speakers’ answers.

Figure 4. Agreement with IELTS or CET as good model conversations.

Figure 5 shows that in the direct comparison (Question 6 [Preference]), 18 learners (64%) voted for CET but only 10 (36%) for IELTS. In contrast, 14 L1 speakers (70%) chose IELTS, 4 (20%) chose CET, and 2 (10%) were neutral (these responses were excluded from the Chi-square test). The difference between learners and L1 speakers was significant, as shown by the Chi-square test (χ2(N = 46) = 7.769, p = .005). These results demonstrated that learners assumed the CET conversation to be a better model, whereas L1 speakers preferred the IELTS conversation.

Figure 5. Preference for IELTS or CET as a model conversation.

Attention Regarding the Authenticity and Difficulty of Conversations

Categorization

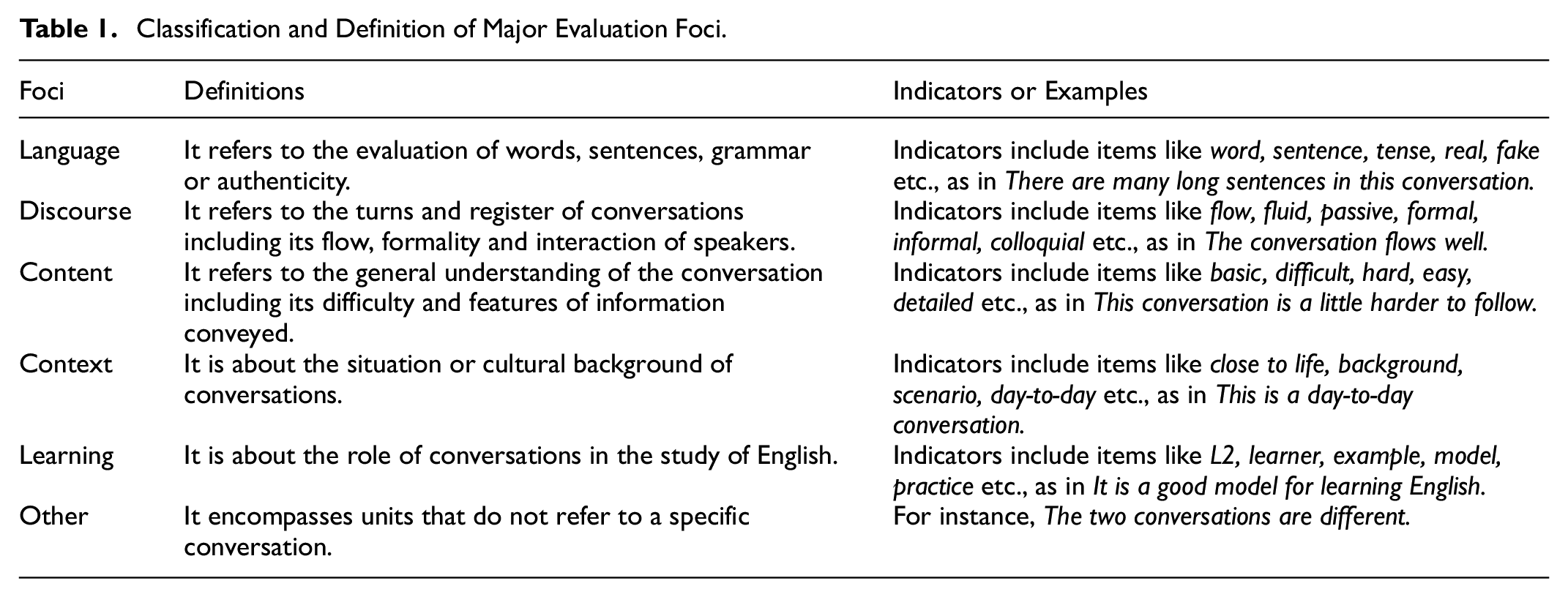

To address RQ2 concerning what L1 speakers and L2 learners attend to when interpreting the authenticity and difficulty of conversations, we now analyze the remaining free-text response data of questions 2 [Problems], 3 [Model], 4 [Authenticity] and 6 [Preference], and answers to question 5 [Differences] to investigate participants’ perceptions of conversations on the content level. Responses to questions 2 [Problems] and 3 [Model] that were simple answers like Yes or No, and responses to questions 4 [Authenticity] and 6 [Preference] that reflect participants’ choices of IELTS and CET like The first conversation or The second one have been analyzed above. As motivated above, we treated all the data as a small corpus instead of analyzing answers to questions one by one. All responses were first segmented into units that showed one single thought or evaluation aspect. The total count of units for content analysis was 722 (432 in Chinese and 290 in English), excluding units not reflecting participants’ attitudes that were produced (as expected) in answer to Question 1 [Understanding], which asked for participants’ general understanding of this conversation (see Supplemental Appendix S6 for details). Then, all units were coded manually according to their content. For instance, the response It can be used to practice listening and speaking and it is close to real life contained two units, namely It can be used to practice listening and speaking, and it is close to real life. The first unit pertained to language learning, whereas the second addressed the context of the conversation. They were therefore coded Learning and Context respectively. Based on iterative scrutiny of the units, six major evaluation foci emerged as follows: Language, Discourse, Content, Context, Learning, and Other (see Table 1 for their classification and definition).
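The segmentation and coding step described above can be pictured as a simple data structure: each response is split by hand into units, and each unit carries exactly one major focus. The sketch below is purely illustrative (the class name, field names, and group labels are assumptions, not the authors' tooling) and encodes the worked example from the text.

```python
from collections import Counter
from dataclasses import dataclass

# The six major evaluation foci named in the text.
MAJOR_FOCI = ("Language", "Discourse", "Content", "Context", "Learning", "Other")


@dataclass(frozen=True)
class CodedUnit:
    """One manually segmented unit with exactly one major focus (hypothetical structure)."""
    text: str
    group: str  # "L2" for learners, "L1" for L1 speakers (labels assumed here)
    focus: str

    def __post_init__(self):
        if self.focus not in MAJOR_FOCI:
            raise ValueError(f"unknown focus: {self.focus}")


# The example response from the text, already split into its two units.
units = [
    CodedUnit("It can be used to practice listening and speaking", "L2", "Learning"),
    CodedUnit("it is close to real life", "L2", "Context"),
]

# Tally units per focus, as done for the distribution analysis.
focus_counts = Counter(unit.focus for unit in units)
print(focus_counts)
```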

Table 1. Classification and Definition of Major Evaluation Foci.

Annotation

The major categories just listed were mutually exclusive, that is, each unit was associated with precisely one of these categories. This coding was done by the first author. To test for inter-coder reliability, a research assistant was trained and asked to double code 149 units of major categories (60 in L1 speakers and 91 in L2 learners), around 20.64% of the total. The inter-coder reliability was then checked by Krippendorff’s Alpha (Krippendorff, 2018), which was computed with an SPSS macro (see Hayes & Krippendorff, 2007). The results showed excellent levels of agreement for L1 speakers’ units (α = .83), learners’ units (α = .86), and the total (α = .84), demonstrating a high level of reliability for the categorization.
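For readers unfamiliar with the statistic, Krippendorff's Alpha compares observed disagreement with the disagreement expected by chance: α = 1 − D_o / D_e. The sketch below is a minimal version for the simplest case, assuming two coders, nominal categories, and no missing codes. It is not the SPSS macro used in the study, and the example labels are hypothetical toy data rather than the study's units.

```python
from collections import Counter


def krippendorff_alpha_nominal(coder_a, coder_b):
    """Krippendorff's alpha for two coders, nominal data, no missing values."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("both coders must label the same non-empty set of units")
    n_values = 2 * len(coder_a)  # every unit contributes two codes
    value_counts = Counter(coder_a) + Counter(coder_b)
    disagreements = sum(1 for a, b in zip(coder_a, coder_b) if a != b)
    # Each disagreeing unit adds two off-diagonal entries to the coincidence matrix.
    observed = 2 * disagreements / n_values
    expected = sum(
        value_counts[c] * value_counts[k]
        for c in value_counts
        for k in value_counts
        if c != k
    ) / (n_values * (n_values - 1))
    if expected == 0:
        raise ValueError("alpha is undefined when only one category is used")
    return 1 - observed / expected


# Hypothetical toy codes for ten units; one disagreement at the fourth unit.
first_coder = ["Language", "Language", "Discourse", "Content", "Context",
               "Learning", "Learning", "Content", "Language", "Discourse"]
second_coder = ["Language", "Language", "Discourse", "Language", "Context",
                "Learning", "Learning", "Content", "Language", "Discourse"]
alpha_toy = krippendorff_alpha_nominal(first_coder, second_coder)
print(f"alpha = {alpha_toy:.2f}")
```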

Major Evaluation Foci Distribution

Thirty-one units from L2 learners and 19 from L1 speakers did not explicitly reflect participants’ attitudes about the conversations and were therefore coded as Other. These were excluded from the analysis of distribution. The frequency of the five main evaluation foci was counted; results are shown in Figure 6. The most striking difference could be observed with respect to Context, mentioned by 24 learners (86%) across 72 units (18%), but only by 7 L1 speakers (35%) across 12 units (4%). Learning was mentioned by 28 learners (100%) in 124 units (31%) and 17 L1 speakers (85%) in 57 units (21%). In contrast, Discourse was mentioned by 18 learners (64%) in 38 units (9%), as compared to 17 L1 speakers (85%) in 61 units (23%). We also compared the distribution of units under each focus between learners and L1 speakers by a Mann-Whitney U test (see Table 2). Learners focused significantly more on Context and Learning than L1 speakers did, whereas L1 speakers paid significantly more attention to Discourse than learners did.
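The unit percentages above follow from dividing each focus count by the number of units remaining after the Other units are removed. The sketch below recomputes them, assuming that the 432 Chinese-language units are the learners' and the 290 English-language units are the L1 speakers' (participants responded in their first language); the variable and function names are illustrative. Under these assumptions the rounded shares match the percentages given in the text.

```python
# Total units per group (assumed from the language split reported earlier).
units_total = {"L2": 432, "L1": 290}
# Units coded as Other, excluded from the distribution analysis.
other_units = {"L2": 31, "L1": 19}
# Unit counts for the three foci discussed in the text.
focus_units = {
    "Context": {"L2": 72, "L1": 12},
    "Learning": {"L2": 124, "L1": 57},
    "Discourse": {"L2": 38, "L1": 61},
}


def unit_share(focus, group):
    """Percentage of a group's analysed units (excluding Other) that fall under a focus."""
    analysed = units_total[group] - other_units[group]
    return 100 * focus_units[focus][group] / analysed


for focus in focus_units:
    print(focus, round(unit_share(focus, "L2")), round(unit_share(focus, "L1")))
```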

Figure 6. Number of participants and units by percentage across major evaluation foci.

Table 2. Distribution of Units Under 5 Foci Between L1 Speakers and L2 Learners.

Note. (i) IQR is the difference between the upper and lower quartiles. (ii) * indicates p values that are statistically significant at the .05 level.
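The Mann-Whitney U test compares two groups by ranking all observations together and summing the ranks per group, which suits skewed per-participant unit counts. The sketch below computes only the U statistics with tie-averaged ranks, not the p value. The per-participant counts are hypothetical, since the individual data are not reported here, and the function names are illustrative.

```python
def average_ranks(values):
    """Ranks starting at 1, with tied values sharing the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        shared_rank = (start + end) / 2 + 1
        for position in range(start, end + 1):
            ranks[order[position]] = shared_rank
        start = end + 1
    return ranks


def mann_whitney_u(sample_a, sample_b):
    """Return (U_a, U_b) for two independent samples."""
    ranks = average_ranks(list(sample_a) + list(sample_b))
    n_a, n_b = len(sample_a), len(sample_b)
    rank_sum_a = sum(ranks[:n_a])
    u_a = rank_sum_a - n_a * (n_a + 1) / 2
    return u_a, n_a * n_b - u_a


# Hypothetical numbers of Context units per participant (not the study's data).
context_units_learners = [3, 2, 4, 1, 3, 2]
context_units_l1 = [0, 1, 0, 2, 0]
u_learners, u_l1 = mann_whitney_u(context_units_learners, context_units_l1)
print(u_learners, u_l1)
```

The smaller of the two U values is the one conventionally compared against critical values or converted to a p value.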

Distribution of Subcategories

Due to the richness of their content, major evaluation foci were further specified into subcategories, yielding altogether 16 specific categories (see Supplemental Appendix S7 for full definitions). The categories reflect differences in perspectives within each major evaluation focus. For instance, the major evaluation focus Language comprises the subcategories of Word, Sentence, Grammar, and Genuineness (a more general, macro-level evaluation of language style). While most units contain only one subcategory, a few refer to two subcategories; for example, This conversation is practical and professional falls into the subcategories of both Application and Business under Learning. Therefore, the total number of subcategory instances differs from the number of units associated with major content categories (as each unit was associated with precisely one major category). To test inter-coder reliability, Krippendorff’s Alpha was therefore not applicable; instead, a proportional agreement was calculated between the first author and the trained research assistant, who coded 143 units (77 subcategories in learners and 66 in L1 speakers), around 20.91% of the total subcategories (684). The result showed 91% agreement in subcategories of learners and 92% in those of L1 speakers.
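Proportional agreement is simply the share of double-coded items on which both coders agree. The sketch below assumes that agreement is counted per unit and requires both coders to assign exactly the same set of subcategories; the text does not specify how units with two subcategories were scored, so this is one plausible reading. The codes shown are hypothetical toy data, and the function name is illustrative.

```python
def proportional_agreement(codes_a, codes_b):
    """Share of units for which both coders assigned the same set of subcategories."""
    if len(codes_a) != len(codes_b) or not codes_a:
        raise ValueError("both coders must code the same non-empty set of units")
    matches = sum(1 for a, b in zip(codes_a, codes_b) if set(a) == set(b))
    return matches / len(codes_a)


# Hypothetical codes for four units; the second unit carries two subcategories
# for the first coder but only one for the second coder.
first_coder = [{"Word"}, {"Application", "Business"}, {"Genuineness"}, {"Familiarity"}]
second_coder = [{"Word"}, {"Application"}, {"Genuineness"}, {"Familiarity"}]
agreement = proportional_agreement(first_coder, second_coder)
print(f"{agreement:.0%}")
```

Unlike Krippendorff's Alpha, this measure does not correct for chance agreement, so it tends to give higher values for the same data.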

Within the major category of Language, there is a significant difference between L1 speakers and learners in the distribution of subcategories (Table 3). Learners mostly attended to language features like word usage and sentence structure, whereas L1 speakers were more concerned about genuineness of language at a macro level. A significant difference between learners and L1 speakers was also found in the subcategories of Content, specifically Problem and Information. While comprehension was a prerequisite for learners’ evaluation of content, L1 speakers were more concerned about the nature of information in terms of its relevance, granularity, etc. Within Context, which was the least frequent category with L1 speakers, learners paid much more attention to familiarity of context than L1 speakers. Finally, most L1 speakers only referred to the Learning of English, whereas learners showed more concern about the application of conversations, business knowledge, and their interest.

Table 3. Subcategories of the Five Evaluation Foci and Chi-Square Tests.

Note. (i) F is the frequency of subcategory units. (ii) P is the percentage of subcategory units to total subcategory units of each focus. (iii) Different subscript letters, a and b, indicate significant differences in the number or proportions of subcategory units between groups; the same letters, a and a, do not indicate significant differences. (iv) Value of Pearson Chi-Square is reported for most cases (i.e., Language, Discourse and Content). Value of Fisher’s Exact Test is reported when more than 20% of cells have an expected count of less than 5 (i.e., Context and Learning). (v) p values relate to the distribution of subcategories under each major evaluation focus, and * indicates statistically significant results.
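The rule in note (iv) for choosing between the Pearson Chi-Square and Fisher's Exact Test can be expressed as a check on expected cell counts. The sketch below is one way to implement that rule; the function names and both example tables are hypothetical and do not reproduce the values in Table 3.

```python
def expected_counts(table):
    """Expected cell counts under independence for a table of observed counts."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    grand_total = sum(row_totals)
    return [[r * c / grand_total for c in col_totals] for r in row_totals]


def needs_fisher_exact(table, threshold=5.0, max_share=0.20):
    """True when more than max_share of cells have an expected count below threshold."""
    cells = [value for row in expected_counts(table) for value in row]
    small_cells = sum(1 for value in cells if value < threshold)
    return small_cells / len(cells) > max_share


# Hypothetical subcategory counts (rows: learners, L1 speakers).
well_populated = [[30, 25], [20, 28]]
sparse = [[10, 2], [3, 1]]
print(needs_fisher_exact(well_populated), needs_fisher_exact(sparse))
```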

Characteristics of the Perceptions of Authenticity and Difficulty

Our third research question, RQ3, asks more specifically how the perception of the authenticity and difficulty of conversations in CET and IELTS is reflected through the participants’ attention foci, as expressed in discourse. To gain further insights into how evaluations were expressed linguistically, we identified words and phrases that fell into the category of Appraisal (Martin & White, 2021). Appraising items were evaluative markers (individual words or phrases) in a content unit which showed participants’ attitudes to conversations, that is, their appreciation of the texts’ qualities or their feelings about them (see Supplemental Appendix S8). For instance, in the unit The second conversation is a more realistic representation of how people talk, more realistic would be selected as an appraising item. There could be one or more appraisal items in one content unit, or none. For instance, in The first one sounds very robotic and unrealistic, both very robotic and unrealistic were selected as appraising items. Here, we first present the overall distribution of appraising items, and then take a closer look at the participants’ appraisal of the perceived authenticity and difficulty of the conversations.

A total of 724 appraising items (306 in L1 speakers and 418 in learners) were identified, of which 189 belong to Language (88 in L1 speakers and 101 in learners), 112 to Discourse (72 in L1 speakers and 40 in learners), 152 to Content (74 in L1 speakers and 78 in learners), 86 to Context (12 in L1 speakers and 74 in learners), and 185 to Learning (60 in L1 speakers and 125 in learners). Their occurrence within the previously identified subcategories of content units is presented in Supplemental Appendices S9–13. Inter-coder reliability in classifying appraising items was checked using Krippendorff’s Alpha (Hayes & Krippendorff, 2007) between the first author and the trained research assistant, who coded 156 items across five major categories (79 in L1 speakers and 77 in learners), around 21.55% of the total items. The results showed excellent levels of agreement for appraising items in L1 speakers’ units (α = .88), learners’ units (α = .85), and the total (α = .86).

Appraising items relating to authenticity were found across subcategories of Language, Discourse, and Context. With L1 speakers, most items are evaluations of Word (e.g., less fillers and unnecessary words), Grammar (e.g., errors), and Genuineness (e.g., realistic and natural) within the Language category (75 of 88, 85.23%), and of Turn (e.g., pauses, hesitations, or fluid) and Register (e.g., formal, colloquial, or casual) within the Discourse category (61 of 72, 84.72%). In contrast, far fewer of the learners’ items refer to the authenticity of Language (26 of 101, 25.74%) and Discourse (22 of 40, 55.00%). Instead, most learners’ items focus on Familiarity of Context, as reflected by frequent phrases such as day-to-day and close to life (53 of 74, 71.62%). This illustrates a higher awareness of the authenticity of Context than of the more specific language and discourse levels.

Likewise, items concerning difficulty emerged across several subcategories of the major foci, including Language, Content, Context, and Learning. L1 speakers’ items primarily refer to Problem of Content (23 of 74, 31.08%; e.g., easy and confusion) or appear sporadically under Learning (6 of 60, 10.00%; e.g., complicated for a learner). Many learners’ items concerning difficulty referred to Content (46 of 78, 58.97%) but also to Word (e.g., new words and don’t know), Sentence (e.g., difficult sentences and uncertain) and Grammar (e.g., analyze subject and verb) of Language (75 of 101, 74.26%), English (e.g., too easy and few to learn) of Learning (21 of 80, 26.25%) and Understanding (e.g., not an easy setting) of Context (8 of 74, 10.81%). These results suggest that learners perceived the difficulty of conversations in more respects than L1 speakers did, particularly regarding the role of difficulty in English learning.

Discussion

We explored how L2 English learners in China respond to spoken text materials in a language learning context as compared to L1 English speakers, addressing perceptions of authenticity and difficulty as reflected through attention foci. Results showed that although learners perceived CET as more difficult than IELTS, they regarded it as a better model for learning English, even though participants had divergent views on its authenticity. By contrast, most L1 speakers believed IELTS was authentic and conducive to language learning. This addresses our RQ1, “How do L1 English speakers and L2 English learners perceive the authenticity and difficulty of conversations in CET and IELTS?,” but also raises questions as to why CET was perceived as better and what learners’ perceptions of authenticity or difficulty are as compared to L1 speakers. The following sections will address RQ2, “What do L1 English speakers and L2 English learners attend to when interpreting the authenticity and difficulty of conversations in CET and IELTS?” and RQ3, “How is the perception of the authenticity and difficulty of conversations in CET and IELTS reflected through the participants’ attention foci, as expressed in discourse?” in turn.

Attention Foci (RQ2)

The items in our questionnaire revolved around authenticity and difficulty without asking participants directly to report what they were paying attention to while reading the texts. Such a question would be difficult to answer, as it pertains to a cognitive process that people are not aware of. Instead, attention focus is revealed by what people say in response, as it is impossible to produce discourse without attending to its content (Ericsson & Simon, 1993).

Our content analysis of the qualitative data identified five evaluation themes that participants focused their attention on, among which Language was the most frequently mentioned category for both learners and L1 speakers. Unlike previous research about authenticity (materials analysis or evaluation) that addressed a diversity of issues such as linguistic features, cultural background, or topics, participants in our study devoted much attention to Language, presumably because learners should have basic linguistic competence and language skills to understand spoken Language (Buck, 2018). Language quality has also been found to be a key theme in learners’ and teachers’ evaluations of locally developed L2 English materials in China (Deng & Wang, 2023). This finding is in line with research on language teachers’ interpretation of authenticity showing that while selecting authentic materials for learners, some teachers were more concerned about the linguistic features of texts than about the learners’ learning context (Emerick, 2019). Also, research on language teachers’ think-aloud comments on the difficulty of four tasks in language textbooks suggests that most teachers considered linguistic factors rather than conceptual or procedural demands when judging and considering adaptations to decrease task difficulty (Révész & Gurzynski-Weiss, 2016). Our research adds to this in that language draws more attention than other factors in speakers and learners of English alike (beyond teachers), while interpreting the authenticity and difficulty of conversations in materials.

However, a closer look at the distribution of the subcategories of Language revealed that learners’ attention to Genuineness of Language differed from that of L1 speakers. While L1 speakers primarily focused on general authenticity, learners disagreed about which conversation was authentic and rarely mentioned the general authenticity of conversations. This makes sense, as L1 speakers could intuitively identify the authenticity of conversations, particularly the IELTS one, which they found more authentic, based on their own knowledge and use of the language. Our results thus support previous findings that most L1 speakers can identify authentic texts “with little hesitation and considerable accuracy” (Porter & Roberts, 1981, p. 37). By contrast, learners’ attention was largely drawn to basic linguistic features for comprehension, as reflected by the many occurrences within subcategories such as Word, Sentence, and Grammar.

Content analysis further revealed that learners focused more on Context than L1 speakers, concurring with findings showing that learners are generally more sensitive about their familiarity with the situational context in language materials than L1 speakers (Siegel, 2014). This makes sense as learners need to develop the ability to integrate context into linguistic processing, including what the interlocutor said earlier as well as the situation, topic, and general background knowledge (Buck, 2018). Teachers, as well, have been found to interpret authenticity based on context, thus taking into account the intended speech community of L2 learners’ future language use (Emerick, 2019). Therefore, a good comprehension involving Language and Context seems to be the prerequisite for learners when evaluating conversations. This is also reflected by the high number of Content subcategories relating to difficulty mentioned by learners.

Furthermore, learners focused more on Learning while L1 speakers paid more attention to Discourse. This might seem somewhat inconsistent with the general view that learners are less critical than experts or teachers in materials evaluation (McGrath, 2021). Since learners in our study had learned English for more than 10 years, they were confident about which aspects of the spoken text might be better for their study, thus being very productive in Learning. Moreover, lexical choices of appraising items under each content category further highlighted divergent interpretations of authenticity and difficulty between learners and L1 speakers, as discussed next.

Perceptions of Authenticity (RQ3a)

Participants’ interpretation of authenticity was identified according to their appraising items, such as real, realistic, and natural, cutting across several content categories, including Language, Discourse, and Context. L1 speakers’ and learners’ differences in perceiving authenticity can be clearly observed in their lexical choices concerning the above major evaluation foci.

L2 participants did not mention some important features of spoken language even though they in general were positive about the language of IELTS. On the language level, a large proportion of learners’ appraising items related to vocabulary, sentence and grammar aspects. Many of their appraising items related to linguistic features like new words, difficult sentences, subordinate clauses, and so on. While L1 speakers pointed to unnecessary words, errors, or real errors in conversations, no learners spotted instances of this kind.

On the Discourse level, many L1 speakers’ items referred to the flow of IELTS, like natural pauses, repetition, or real disfluencies, which rarely appeared in learners’ responses. These features are known to be very common in the natural spoken communication of L1 speakers (Biber et al., 2021), thus contributing decisively to authenticity. While both L1 speakers and some learners agreed that CET was formal and not casual, a few learners favored the flow of CET in which interlocutors provide much more information in each turn than in the case of IELTS, because they considered more information as more interactive. Also, like most learners in the context of classroom language instruction who are exposed to scripted texts from textbooks and talk formally like a book (McCarthy & Carter, 1995), learners in this study rarely mentioned the differences between formal and informal language, as reflected by only a small portion of appraising items concerning Register. Thus, although half of the learners agreed that IELTS was authentic, their perceptions of conversations differed both from L1 speakers and from research findings concerning spoken features of authentic conversations.

Learners were very sensitive to the Context in conversations and showed a clear preference for the situational context of CET, a shopping scenario, which they frequently encounter in their daily lives. This may explain why many learners in our study were positive toward the Context of CET: they found that CET has a daily or familiar setting, while IELTS reflects a business or too professional one. While learners may have been unsure about the authenticity manifested through Language or Discourse, they clearly knew which conversation was closer to their daily life. These observations suggest that the context of conversations may exert substantial influence on learners’ perceptions of authenticity.

Perceptions of Difficulty (RQ3b)

Learners perceived the difficulty of conversations from more aspects than L1 speakers, although L1 speakers recognized the challenges to some extent. However, their attitudes toward the difficulty of spoken texts were different. L1 speakers were generally in favor of IELTS because of its authentic language expressions, natural flow of turns, and characteristics of information. In their view, CET failed to show the above features, except for a few L1 speakers claiming that CET provided more to learn. Many learners favored CET precisely because of its inclusion of more difficult words, expressions, or grammar rules. This supports the notion that learners should be provided with input somewhat beyond their language proficiency (Krashen, 1982), and that more challenging materials appeal to advanced learners. While the IELTS conversation was considered authentic from L1 speakers’ perspective, it did not appeal to many learners because it seemed too easy to be a good model.

Like L1 speakers, a few learners in our study mentioned the differences in register between CET and IELTS, and they all agreed that CET was more written or formal. Learners in general, however, would like to learn using CET, the more difficult conversation, which may distract their attention from the colloquial or casual language use found in daily conversations like IELTS. Moreover, many learners’ appraising items about Learning demonstrated that learners were unsatisfied with IELTS as it provided little help in reading and writing. Generally, this suggests that some learners may tend to treat spoken texts like reading materials, which may result in overlooking the useful, brief, and easy expressions shown in IELTS, based on a preference for more formal materials like CET. Therefore, learners’ perceptions of difficulty raise the question of how to draw their attention to learning and distinguishing spoken and written language in spoken texts like conversations (McCarthy & Carter, 1995), especially when presented in written form as in our study and in some teaching and exam contexts.

Our findings also explain why some highly frequent spoken expressions, like those observed in IELTS or other spoken materials, would be underrepresented in learners’ speech. It has been observed elsewhere that spoken English materials in China lack various spoken features, including minor discourse markers like oh, ah, well, and right, although these are precisely the kinds of texts – spoken texts in listening tests and language materials – that many learners are frequently exposed to (Hui & Dong, 2019). In consequence, highly frequent lexical bundles in textbooks, for which learners had a clear processing advantage in a phrasal judgment task, do not adequately reflect L1 speakers’ formulaic language (Northbrook & Conklin, 2019). Thus, many corpus-based studies of Chinese English learners’ spoken English reported their problems in using discourse markers like I mean (Yuan, 2021), you know (Y. Li, 2019), anyway (Zhu, 2019), etc.

Finally, learners’ preferences for materials seem to be fairly unrelated to their interest, since only a few learners’ items about Learning in our study referred to something as being boring, interesting, or not interesting, consistent with Peacock’s (1997) finding that learners may be motivated by materials for reasons other than personal interest. Considering our research findings, the problems reported in earlier studies may relate to the fact that spoken features of language do not draw much of learners’ attention because they focused more on new and difficult words or sentences, as reflected by appraising items in their responses like too easy, few to learn, or no new words to learn. Haselow (2021) suggested that learners should learn spoken features, including pragmatic markers like actually or I mean, through explicit instruction. Therefore, it may be reasonable to increase learners’ attention to linguistic features of spoken texts through measures like text enhancement (see Benati, 2016).

Implications

Concerning L2 English learners’ interpretation of authenticity and difficulty, our research offers the following implications for language teaching: (1) Even though the L2 participants in our study were high intermediate English learners, they still differed significantly from L1 speakers in their perceptions of authenticity in conversations. This suggests that the skill of perceiving authenticity may develop only at a late stage of foreign language learning. (2) We concur with the idea of Li and Cui (2021) that learners should be guided by teachers to systematically consider the authenticity of spoken language, such as the linguistic features of conversations found by Biber et al. (2021). Although authentic materials have been shown to be beneficial for language learning, the provision of authentic materials without explicit instruction about what makes the language input in materials authentic may not be enough. (3) Providing learners with different versions of conversations from different materials could support and enhance learners’ evaluation of spoken texts toward more native-like understanding, paralleling the concept of High Variability Phonetic Training, which facilitates speech acquisition via speech samples produced by diverse speakers (Saito et al., 2022).

Conclusion

Our research highlights key features of the understanding of authenticity as well as the role of difficulty in spoken texts from a learner perspective, contrasted with first-language speakers’ views. Learners’ attention focused on more specific levels of conversations (e.g., words, grammar) than that of L1 speakers. They attended more to context and learning aspects than to the text itself and found authenticity more in the context than in the linguistic features. They appreciated difficulty as a welcome challenge, despite a certain lack of authenticity on the language level that was more apparent to L1 speakers than to the learners. While this study focused on the differences between learners’ and L1 speakers’ perceptions of materials, the methodology employed here could also be used to investigate how teachers’ perceptions contrast with those of materials writers (as well as learners).

Supplemental Material

Supplemental material, sj-pdf-1-sgo-10.1177_21582440251339659 for Second Language Learners’ Perceptions of Conversations in English Materials by Yu Hui and Thora Tenbrink in SAGE Open

Footnotes

Acknowledgements

The authors would like to express their gratitude to Dr. Wei Wang, associate professor at the Business School of Xi’an International Studies University, for offering help in collecting learners’ data.

Author’s Note

We confirm that the work in this article is original and has not been published previously, that it is not under consideration for publication elsewhere, and that its publication is approved by all authors.

Ethical Considerations

This study was approved by the Research Ethics Committee of Bangor University (Reference code: LX-1623/AH113) on April 27, 2018. All participants provided written informed consent prior to participating.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was funded by the National Research Centre for Foreign Language Teaching Materials (Beijing Foreign Studies University) under grant number ZGWYJCYJ2023ZZ22.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

All relevant data are included in the manuscript and its appendices.
