Abstract

Monitoring at the subnational level has implications for the effectiveness of local governance in the developing world. Using a cross-sectional survey underpinned by the project life cycle model, the paper contributes to understanding essential considerations in developing and implementing subnational monitoring in Africa. Of the 261 District Development Planning Officers targeted, 74 responded to the online Google Forms questionnaire. The study analyzed the data using descriptive statistics, linear regression, and Spearman correlation. Results demonstrate that the monitoring system is excellent in design, but its implementation and reflection stages are weak. The study highlights that planning for subnational monitoring systems, encompassing setting clear objectives, utilizing robust indicators, involving stakeholders, and preparing an elaborate plan for data collection, analysis, communication, and reporting, is a vital ingredient of a monitoring system. Sufficient resources and capacity-building initiatives, including providing on-the-job training, hiring experienced staff, committing financial resources and logistics, and backstopping support, are necessary to implement monitoring activities effectively. An appropriate policy environment, including manuals and legislation, is critical to anchoring and sustaining subnational monitoring systems. Local governments’ monitoring systems should prioritize learning, knowledge sharing, and documentation of lessons learned to facilitate programmatic improvements and replication, and local governments should devote at least 2.5% of the District Assembly Common Fund to financing monitoring and evaluation actions.

Introduction

The paper discusses the design and implementation of monitoring systems at the subnational level to enhance their effectiveness as a development tool in the developing world. The quality of a monitoring system affects its ability to contribute to realizing the outcomes of decentralization. This study therefore analyzes the critical quality issues in the planning, implementation, and reflection stages of subnational monitoring systems to improve understanding of how these systems work at the subnational level of governance.

Decentralization enhances local democracy, which leads to more responsive governments (Chigwata & Ziswa, 2018; Crawford, 2009; Khan Mohmand & Loureir, 2017), increased local-level participation in planning and implementation, and efficient delivery of local services (Chigwata & Ziswa, 2018; Faguet, 2014; Khan Mohmand & Loureir, 2017; Mwihaki, 2018; Smoke, 2015). Decentralization also promotes sustainability in service delivery, increases public accountability (Chigwata & Ziswa, 2018; Faguet, 2014; Khan Mohmand & Loureir, 2017; Mwihaki, 2018), and reduces bureaucracy by simplifying procedures. Despite the investments in and attention to decentralized governance in the developing world, deficits in decentralized government, particularly the disconnection between local political participation and local government accountability, have been highlighted (Chigwata & Ziswa, 2018; Crawford, 2009).

Consequent to the deficits in decentralization and its incompleteness in Africa (Joshi & Schultze-Kraft, 2014), and in the light of the growing need to improve the performance of the public sectors in the developing world, monitoring has gained renewed vigor as a way to enhance public sector accountability by putting in place the mechanisms for holding managers and governments accountable for performance. Akanbang and Bakyieriya (2020) and Sjöstedt (2013) acknowledged monitoring as a viable response to the call on local governments to use resources effectively and efficiently and provide quality services. Its contribution to accountability and learning in the public sector (Porter & Goldman, 2013; Waithera & Wanyoike, 2015) is also recognized. However, monitoring in the developing world and at the subnational level continues to be viewed as merely fulfilling a regulatory requirement (Akanbang et al., 2016; Porter & Goldman, 2013) and compliance with donor demands.

The gap between the policy and practice of monitoring is linked to an inadequate understanding of the theoretical basis for its implementation (Nilsen, 2015). A solid grasp of the theoretical underpinnings of monitoring systems helps establish mechanisms that ensure the achievement of the systems’ goals. This study uniquely contributes to the theoretical foundation of implementing subnational monitoring systems, aiming to improve their quality at the subnational level of governance. This contribution is crucial for understanding and addressing the quality issues in subnational monitoring and identifying the fundamental quality indicators necessary during the design, implementation, and reflection stages to enhance effectiveness.

Drawing on implementation science, the study analyzes the challenges in designing and implementing subnational monitoring systems in African local government contexts. The study identifies quality issues in Ghana’s subnational monitoring systems by examining these systems through the lens of the project life cycle and the process models of implementation science. This approach provides valuable insights into critical issues in designing and implementing monitoring systems within decentralized local government contexts in the developing world. The findings aim to inform policy, research, and practice by highlighting the design, implementation, and reflective processes needed to avoid pitfalls and maximize the benefits of subnational monitoring.

The project life cycle, a standard framework for designing and implementing development interventions (Biggs & Smith, 2003; Golini et al., 2017; Umhlaba Development Services, 2017), comprises various phases or stages implemented iteratively and integratively. Each stage also has clearly defined objectives, information requirements, responsibilities, and critical outputs (Eggers, 2002; Chandurkar et al., 2017). Broadly, the stages begin with needs identification and objectives formulation, through planning and implementation of activities, to the assessment of the outcomes (Biggs & Smith, 2003; Golini et al., 2017; Singh et al., 2017; Umhlaba Development Services, 2017).

The Government of Ghana has implemented several development policies, programs, and projects through local government authorities to reduce poverty in the country. Since the return to democratic governance in 1992, a minimum of 5% of the government’s internally generated funds, called the District Assembly Common Fund, has been made available to the local authorities for project implementation. The National Development Planning Commission (NDPC) developed guidelines for a decentralized monitoring system, which local authorities have implemented countrywide to assess their progress and impact in executing these development interventions (Akanbang, 2021; Akanbang & Bakyieriya, 2020).

Research on monitoring local governance is evolving, with much of the work focused on its role in local governance (Nelson, 2016). Localized studies exist on the benefits and constraining factors of decentralized monitoring (Akanbang & Bakyieriya, 2020) and the effect of integrated decentralized monitoring on water and sanitation services monitoring (Akanbang, 2021). However, many of these are case studies and therefore lack the detail and scope needed to analyze the operationalization of the subnational monitoring system and to provide a broad overview of monitoring practice within the decentralized system of governance. Just as monitoring puts a searchlight on programs or projects to establish vital mechanisms for their success, the design, planning, and implementation processes of monitoring itself need to be subjected to the same scrutiny to realize its full benefits. The study is thus essential in that it contributes to improvements in the design and implementation of subnational monitoring systems, which are instrumental to development effectiveness and accountable governance in the developing world. Two questions drive the study: What are the critical issues in designing and implementing a subnational monitoring system for local governments in deprived contexts? What factors affect the implementation of monitoring systems at the subnational level of governance?

The remaining sections of the paper are organized as follows. The next section presents the project life cycle and its role in designing and implementing a monitoring system, providing the theoretical basis for assessing the monitoring system. A presentation of the study context and methods follows. Section “Results” presents the study’s results, while Section “Discussion” discusses them. Section “Conclusion” draws conclusions from the results and discussion and proffers a way forward for research, policy, and practice on decentralized subnational monitoring.

Theoretical Framework

Nilsen (2015) identified three objectives of implementation theory: describing and guiding the implementation process, understanding and explaining what influences implementation outcomes, and evaluating implementation. Regarding understanding and/or explaining the factors that influence implementation, which is in tandem with this study, Nilsen (2015) identified five groups of theories: process models, determinant frameworks, classic theories, implementation theories, and evaluation frameworks. This study adopts the project life cycle model within the process models to analyze the design and implementation of the Ghana subnational monitoring system. Process models specify the stages of translating a policy, project, or plan into practice. They aim to regulate the implementation process. The project life cycle, as an action model, provides practical guidance in planning and executing programs. Action models elucidate essential aspects considered in implementation practice and usually prescribe stages or steps to follow in translating project initiatives into practice. They are active because they guide or pursue change (Graham et al., 2009, cited in Nilsen, 2015). They generally support the planning and implementation of actions and call for deliberate planning at the initial stages of implementation.

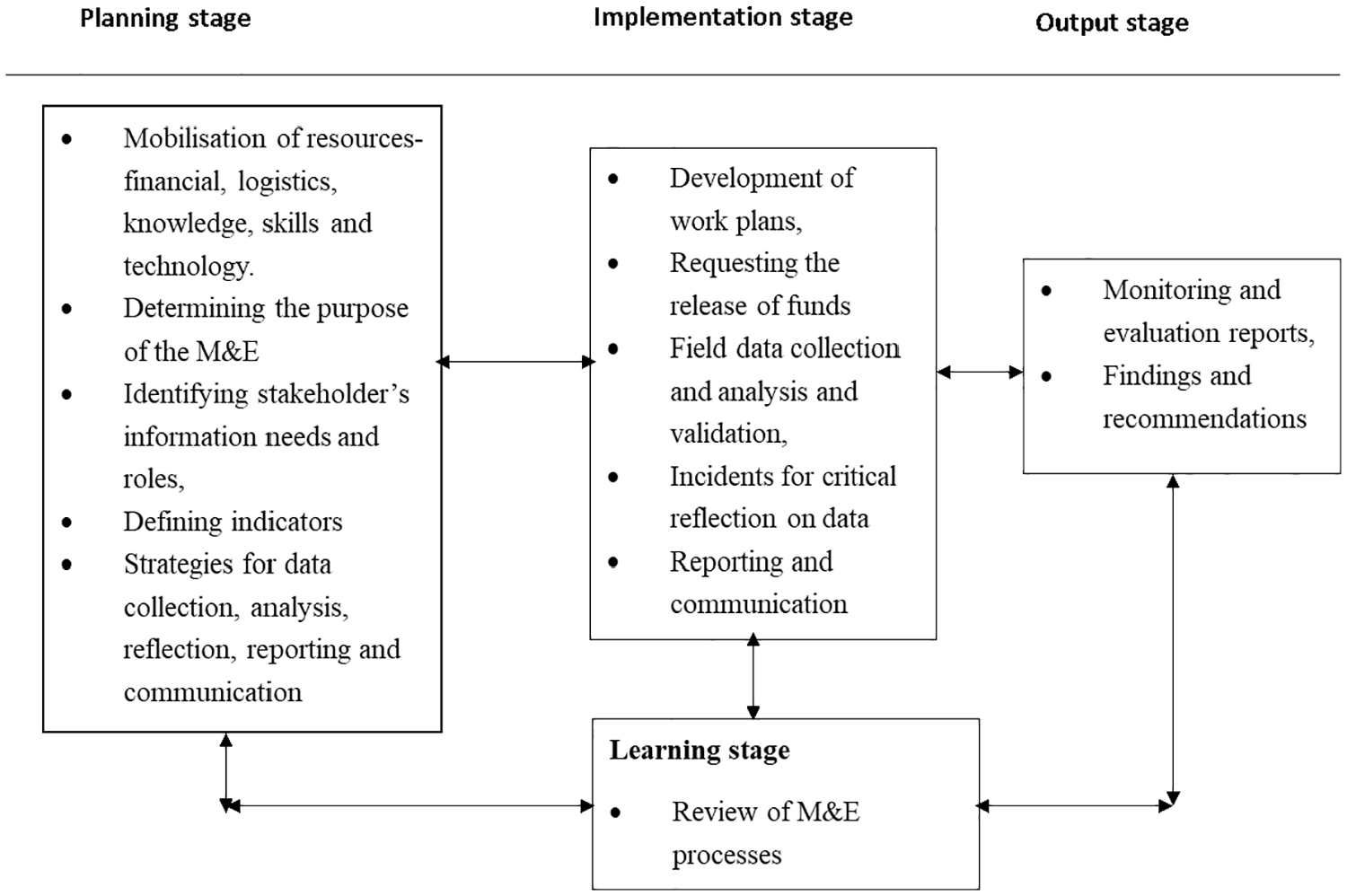

Baum (1970), cited in Golini et al. (2017), introduced the project life cycle into project planning and management. The life cycle breaks down an intervention into phases that connect the beginning of the intervention to its end (Figure 1). The project life cycle comprises various phases or stages delineated and implemented successively in a phased manner. Every intervention has its unique cycle of operation, though the fundamental project life cycle remains the same (Chandurkar et al., 2017). The Cycle consists of some progressive stages that generally begin with needs identification and objectives formulation, through planning and implementation of activities, to the assessment of the outcomes (Biggs & Smith, 2003; Ahonen, 1999 cited in Golini et al., 2017; Umhlaba Development Services, 2017). The stages of the project life cycle link primary policy goals with specific projects and programs and ensure that lessons learned from implementing these projects and programs feed into subsequent planning cycles. It aims to improve the effectiveness of projects, programs, and policies. Broadly, the Cycle is categorized into three phases: planning, implementation, and learning (Ahonen, 1999, cited in Golini et al., 2017).

Figure 1. Project life cycle.

According to Kusek and Rist (2004), Bamberger (2009), and Umhlaba Development Services (2017), the steps involved in designing and implementing a monitoring system include defining the purpose of the monitoring and evaluation (M&E) system; identifying key stakeholders and their information needs; identifying performance questions and indicators; establishing a process for the collection, processing, validation, analysis, and interpretation of data; planning for the reporting, dissemination, and use of M&E information; planning for stakeholder engagement, capacity building, and the conditions for operationalizing the M&E system; and planning the implementation of the M&E system.

In line with the project life cycle, the planning stage of a subnational monitoring system involves the processes of putting the monitoring system in place: determining the purpose of the monitoring system, identifying stakeholders’ information needs and roles, defining indicators, establishing systems for data collection and analysis, for critical reflection, and for communicating and reporting on results, and analyzing conditions and capacities for monitoring (Umhlaba Development Services, 2017). The stage also concerns the mobilization of the resources (financial, logistics, knowledge, skills, and technology) required for the effective functioning of the M&E system. As with any project intervention, this preliminary stage is critical to the success of the monitoring system. Clarity in formulating goals and objectives is crucial to avoid instituting a monitoring architecture that does not contribute meaningfully to project or program success and the empowerment of project beneficiaries. Sociocultural and political issues and context are considered during this stage (Eggers, 2002). Intensive stakeholder engagement at this stage at the subnational level would help identify the key information needs that the monitoring system should meet to inform decision-making, complementing the indicators that are usually determined nationally. It would also reveal the sociocultural and political context issues affecting the system’s effectiveness. The process of critical reflection also deserves close attention to avoid the accumulation of errors that often results in project failure (Eggers, 2002).

The implementation stage corresponds to all the activities geared towards implementing the system—accessing funds for M&E, developing work plans, field data collection and analysis, validation, and incidents for critical reflection and reporting. According to Suggett (2011), the implementation stage determines the success or failure of any intervention. Rahmat (2015) highlights the importance of cooperation, coordination, and commitment during implementation. The need for the implementation process to be dynamic, flexible, and adaptable to changing situations has also been recognized (Eggers, 2002; Rahmat, 2015). Figure 1 depicts the stages of the project life cycle. The level of ambiguity in an intervention will often determine the degree of success of implementation (Signé, 2017; Veronesi & Keasey, 2015). Zhan et al. (2014) highlight how a lack of participation in implementing interventions can pose problems.

West (2005), cited in Signé (2017), added that a lack of stakeholder participation in rulemaking creates implementation difficulties. Politics and conflict (Signé, 2017) and the stability of funding (Akanbang, 2021) affect the effectiveness of the implementation of interventions. Without financing, it is often impossible to mobilize other aspects of an implementation strategy; resources must be available for implementation to succeed. Furthermore, Rahmat (2015) views administrative capacity, organizational structure, public involvement, corruption, and staff capacity in terms of number, knowledge, skills, and commitment as factors that affect the implementation of interventions.

The learning stage consists of a review of the monitoring process. A significant attribute of the project life cycle is its emphasis on learning (Biggs & Smith, 2003; Eggers, 2002). Learning, represented by the monitoring and evaluation stage in the project life cycle, involves making adjustments in response to ongoing events and feeding the experiences into future endeavors (Biggs & Smith, 2003). The learning stage is not a standalone activity but integrated into all the other stages (Eggers, 2002; Ahonen, 1999, cited in Golini et al., 2017). The iterative nature of the project life cycle emphasizes the attainment of planning goals through a stepwise movement instead of a momentary leap from problem to solution (Biggs & Smith, 2003), highlighting the inherent value of monitoring in the project life cycle. Monitoring guides the effective and efficient implementation of interventions in line with the principles of adaptive planning.

This paper uses the project life cycle as a logical model to analyze the design and implementation of Ghana’s subnational monitoring system, thus contributing to the theoretical literature on monitoring implementation, which has not been given sufficient attention in the monitoring literature. In other words, it provides insights into the logical sequence in which the activities involved in the design and implementation of monitoring and evaluation, broadly classified as planning, implementation, and learning, ought to be undertaken. The uniqueness of this study is that it contributes to the theoretical framework for implementing monitoring systems at the subnational level. Various theories and models, including the logical framework, program theory, and theories of change, have explored how monitoring and evaluation can uncover project impacts. However, there is a shortage of research on the theoretical framework for designing and implementing monitoring at the local level of governance. This study, therefore, employs the process models of implementation science to contribute to the scant scientific literature on the implementation science of monitoring. Figure 2 provides a diagrammatic representation of the operationalization of the Cycle in the context of subnational monitoring. A review of the issues considered during the project life cycle in relation to the design and implementation of monitoring systems formed the basis for the questionnaire used to analyze the monitoring system.

Figure 2. Issues involved in the project cycle within the context of subnational monitoring.

Study Context and Methods

Study Context

This section provides the context within which the subnational monitoring study was designed and implemented. Ghana’s local government system constituted the laboratory for the study. Ghana has been implementing a local government system since 1988, and the coming into force of the 1992 constitution strengthened that system. After the 1992 constitution, various pieces of legislation were enacted to enhance local governance in the country, including the Local Governance Act, Act 462 of 1994, now amended as Act 936, 2016, and the National Development Planning System Act, Act 480, 1994, among others. Act 936, 2016, established the Metropolitan, Municipal, and District Assemblies (MMDAs) as local authorities with the mandate of planning for the development of the areas within their jurisdictions, while Act 480, 1994, institutionalized the National Development Planning Commission (NDPC) as the national coordinating body of the Decentralized Development Planning System in Ghana. Specifically, Section 1(2) of Act 480, 1994, stipulates that the decentralized national development planning system shall comprise MMDAs at the district level, Regional Coordinating Councils (RCCs) at the regional level, and sector ministries, agencies, and the NDPC at the national level. The NDPC is the coordinator of the national development planning system. Based on Sections 1 to 11 of Act 480, the key deliverables of the Commission include the development of National Development Policy Frameworks, the development of Planning and M&E Guidelines for district- and sector-level planning and monitoring, and the development of District, Sector, and National M&E Plans.

The RCCs coordinate, monitor, evaluate, and harmonize development activities at the regional level. Specifically, they provide, among others, guidance to the districts in the development and execution of their M&E Plans; evaluate, recommend, and support capacity building and other M&E requirements for the District Assemblies; and facilitate the evaluation of the District Medium Term Plans and recommend policy reviews at all levels. At the district level, which is the focus of this study, the District Planning and Coordinating Unit (DPCU) executes planning, programming, monitoring, evaluation, and coordination functions. To perform its M&E functions effectively, the DPCU should co-opt representatives from other sector agencies, the private sector, and civil society organizations whose inputs will be needed. Regarding monitoring and evaluation, the DPCU undertakes periodic project site inspections, develops indicators for assessing change, prepares progress reports, and disseminates the reports. Figure 3 shows the institutions within the decentralized planning system, the key actors, their roles, and the flow of information concerning subnational monitoring.

Figure 3. Decentralized M&E institutional and reporting framework.

At the community level, elected members form the unit committee. Two groups of technocrats help in the efficient running and provision of services at the local level: those who work in the departments of the assembly (e.g., Agriculture, Health, and Education) and those who work in the district administration, which consists of staff of the Planning and Coordinating Unit, Budget Unit, Administrative Unit, Finance Unit, Internal Audit, and Human Resource Unit. The district department responsible for a project (e.g., the health department or the agriculture department) undertakes its implementation. In most cases, a private service provider or a contractor executes infrastructure projects, while the implementing department monitors and supervises project execution in collaboration with the secretariat of the assembly (consisting of planning, finance, works, etc.) and community-level stakeholders such as the unit committees and assembly members. Each district has an M&E team coordinated by district planning officers, the respondents in this study.

Methods

Survey Design

The research design employs a cross-sectional survey. The cross-sectional design allowed sufficient information to be collected across the country to assess the design and implementation of the decentralized subnational monitoring system implemented countrywide. Such information is instrumental to the policy, practice, and research on monitoring in the developing world. Existing empirical research has chiefly examined subnational monitoring through case studies (Agbenyo et al., 2021; Akanbang, 2021; Akanbang & Bakyieriya, 2020; Sulemana et al., 2018). The survey design thus provided a broader scope and a national perspective on subnational monitoring in Ghana, which is critical to policy and decision-making. The survey was conducted between October and December 2022.

Sampling Design

The study’s target population was coordinators of the district planning coordinating units of the MMDAs. These coordinators are heads of planning officers at the unit. The planning officers coordinating the district’s planning anchor the decentralized subnational monitoring system. There are 261 MMDAs in Ghana, each having a District Planning Coordinating Unit coordinated by the district planning officer. The district development planning officers of the 261 MMDAs were the targeted respondents of the study. Thus, the target population was 261. All 261 heads of district development planning officers were recruited into the study, giving a sample size of 261. However, only 74 (a response rate of 28%) of them answered and returned the questionnaire.
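The response rate reported above follows directly from the two counts given in this section. The short Python sketch below reproduces the arithmetic; the function name `response_rate` is illustrative and not part of the study's materials.

```python
def response_rate(returned: int, invited: int) -> float:
    """Return the response rate as a percentage of invited respondents."""
    if invited <= 0:
        raise ValueError("invited must be a positive count")
    return 100.0 * returned / invited


# Counts reported in this section: 74 returned questionnaires, 261 officers invited.
rate = response_rate(returned=74, invited=261)
print(f"Response rate: {rate:.1f}%")  # prints "Response rate: 28.4%"
```

Rounded to the nearest whole number, this gives the 28% reported in the text.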

Data Collection and Analysis

The primary data collection tool was a structured 5-point Likert-scale questionnaire administered through Google Forms. Information derived from the literature on issues relating to the quality of the design, implementation, and reflection stages of the decentralized subnational monitoring system guided the development of the structured questionnaire. Table 1 contains the issues explored in the questionnaire. The WhatsApp page of the District Development Planners Association of Ghana provided access to the respondents so they could fill out the Google Forms questionnaire. The questionnaire contained elaborate instructions on who was qualified to respond and on the process of filling out the form. The data analysis utilized descriptive statistics (frequencies, percentages, means, etc.), linear regression, and Spearman correlation. The researcher computed the means and standard deviations from the 5-point Likert-scale data, which ranged from 5 (“strongly agree”) to 1 (“strongly disagree”). This computation treated the Likert-scale data as a continuous variable so that the issues explored in the study could be ranked to determine those most fundamental at each stage of the monitoring system. An index was computed for “monitoring design quality” from the issues explored under the monitoring design. This index became the dependent variable of the linear regression analysis to determine the predictive factors of the monitoring system’s design quality. The predictors were the age of the monitoring staff, the age of the district, the number of years of experience, the number of years since monitoring staff last received training, and the number of meetings in the year to plan for monitoring. A Spearman correlation analysis assessed the association among the quality of the design, implementation, and reflection stages of the monitoring system.
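To make the index construction and the Spearman correlation concrete, the following Python sketch works through both on a handful of invented responses. It is an illustration under stated assumptions, not the study's analysis: the text does not say how the index was aggregated, so averaging each respondent's item scores is assumed here, and all data values and function names are hypothetical.

```python
from statistics import mean


def composite_index(item_scores):
    """Average one respondent's Likert item scores (1-5) into a single index.

    Assumption: the paper does not state the aggregation rule; a simple mean is used.
    """
    return mean(item_scores)


def average_ranks(values):
    """Rank values from 1..n, giving tied values the average of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        shared = (start + end) / 2 + 1  # mean of the 1-based positions in the tie group
        for k in range(start, end + 1):
            ranks[order[k]] = shared
        start = end + 1
    return ranks


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    return cov / (var_x * var_y) ** 0.5


def spearman(x, y):
    """Spearman rank correlation: Pearson correlation of the average ranks."""
    return pearson(average_ranks(x), average_ranks(y))


# Hypothetical item scores for five respondents (not survey data).
design_items = [[5, 4, 4], [4, 4, 3], [3, 3, 2], [2, 3, 2], [4, 5, 5]]
implementation_items = [[4, 3, 3], [4, 4, 3], [2, 2, 3], [2, 1, 2], [4, 4, 3]]

design_index = [composite_index(r) for r in design_items]
implementation_index = [composite_index(r) for r in implementation_items]

rho = spearman(design_index, implementation_index)
print(f"Spearman rho (design vs. implementation): {rho:.3f}")
```

Ranking before correlating is what makes the measure suitable for ordinal Likert-derived scores: only the ordering of respondents matters, not the spacing between index values.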

Table 1. Issues in the Design, Implementation, and Reflection Stages of Monitoring.

Source. Author’s construct (2021) based on Akanbang et al. (2019), Chantler et al. (2014), Goldman et al. (2018), Preskill (2018), Kihuha (2018), Kusek and Rist (2004), Njama (2015), Nyamambi (2021), Umhlaba Development Services (2017), and Wang and Hong (2018).

Validity and Reliability of the Questionnaire

A colleague with expertise in monitoring reviewed the draft questionnaire to ensure that the questions measured what they intended to measure. Similarly, the questionnaire was pretested among 10 members of the district planning coordinating unit (planning officers other than the head of the unit) at the district level to ensure its validity and reliability.

Ethical Considerations

The study obtained ethical clearance from the Research Ethics Review Board of the researcher’s institution. The researcher assured respondents of the confidentiality and anonymity of all information collected. The researcher guaranteed their autonomy by indicating they could freely discontinue the survey at any time. The researcher briefly explained the benefits of the study to them. Additionally, the researcher did not solicit personal identifiers such as names and telephone contacts.

Limitations of the Study

The study employed a census approach to reach all members of the target population. However, the response rate of 28% was too low to support the generalization of the findings. The study was also limited to monitoring systems within MMDAs only, not the public sector in Ghana generally.

Results

Background Characteristics of Respondents

Of the respondents, 89.26% were male. Ages ranged from 27 to 55, with a mean of 38.59 ± 6.0047 years. Table 2 shows that the Ashanti Region had the most respondents, while the Greater Accra Region recorded none. The Ashanti Region has the highest number of districts in Ghana. About 75.76%, 23%, and 1.3% of respondents held Master’s, Bachelor’s, and PhD degrees, respectively. Most respondents (58%) held the rank of district planning officer, followed by principal planning officer (27%) and assistant planning officer (15.6%).

Table 2. Regional Distribution of Respondents.

Source. Survey October 2022.

The two main disciplinary backgrounds of the respondents were development studies (37.38%) and planning (32.35%). The disciplinary backgrounds of respondents are shown in Table 3. The curricula of these two disciplines include courses or topics in monitoring and evaluation.

Table 3. Area of Qualification in the Highest Level of Education.

Source. Survey October 2022.

Approximately 80% of the respondents received monitoring training as part of their disciplinary training at the highest level of education, while nearly 91% received on-the-job training in monitoring. On average, respondents had 9.28 ± 4.280 years of monitoring experience, ranging from 1 to 22 years. On the currency of on-the-job training, an average of 3.24 ± 3.0421 years had elapsed since respondents’ last on-the-job training, with a range of 0 to 13 years. A significant proportion of respondents (about 81%) believed that monitoring was a core function they performed at the MMDA level. The survey results indicate that the respondents had a weak capacity to execute monitoring functions at the subnational level.

Status of Monitoring at Subnational Level

Two primary monitoring methods emerged: collectively as a committee (47.3%) and both individually and collectively as a committee (52.7%). Construction project monitoring (89.1%), activity monitoring (56.7%), output monitoring (48.6%), and compliance monitoring were the most common types of monitoring at the MMDAs (see Table 4 for details). Less than one-third of MMDAs conducted input, outcome, and impact monitoring.

Table 4. Kinds of Monitoring (n = 74).

Source. Survey October 2022.

NB: Multiple Response Questions.
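Because respondents could select more than one kind of monitoring, the percentages for this question are shares of respondents rather than shares of responses, and they can sum to more than 100%. The Python sketch below illustrates the tabulation on invented answers; the data and the function name `percent_of_respondents` are hypothetical.

```python
from collections import Counter

# Hypothetical multiple-response answers: each respondent may tick several kinds.
answers = [
    {"construction", "activity"},
    {"construction", "output"},
    {"construction", "activity", "compliance"},
    {"activity"},
]


def percent_of_respondents(answers):
    """Percentage of respondents who ticked each option (base = number of respondents)."""
    counts = Counter(kind for ticked in answers for kind in ticked)
    n = len(answers)
    return {kind: 100.0 * count / n for kind, count in counts.items()}


shares = percent_of_respondents(answers)
for kind, share in sorted(shares.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{kind}: {share:.1f}%")
```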

According to the survey, many respondents (75.6%) believed that their MMDAs had low motivation to undertake monitoring. Only 24.4% of respondents indicated that their MMDAs were motivated to undertake monitoring, while about 81.1% considered monitoring a core activity of MMDAs.

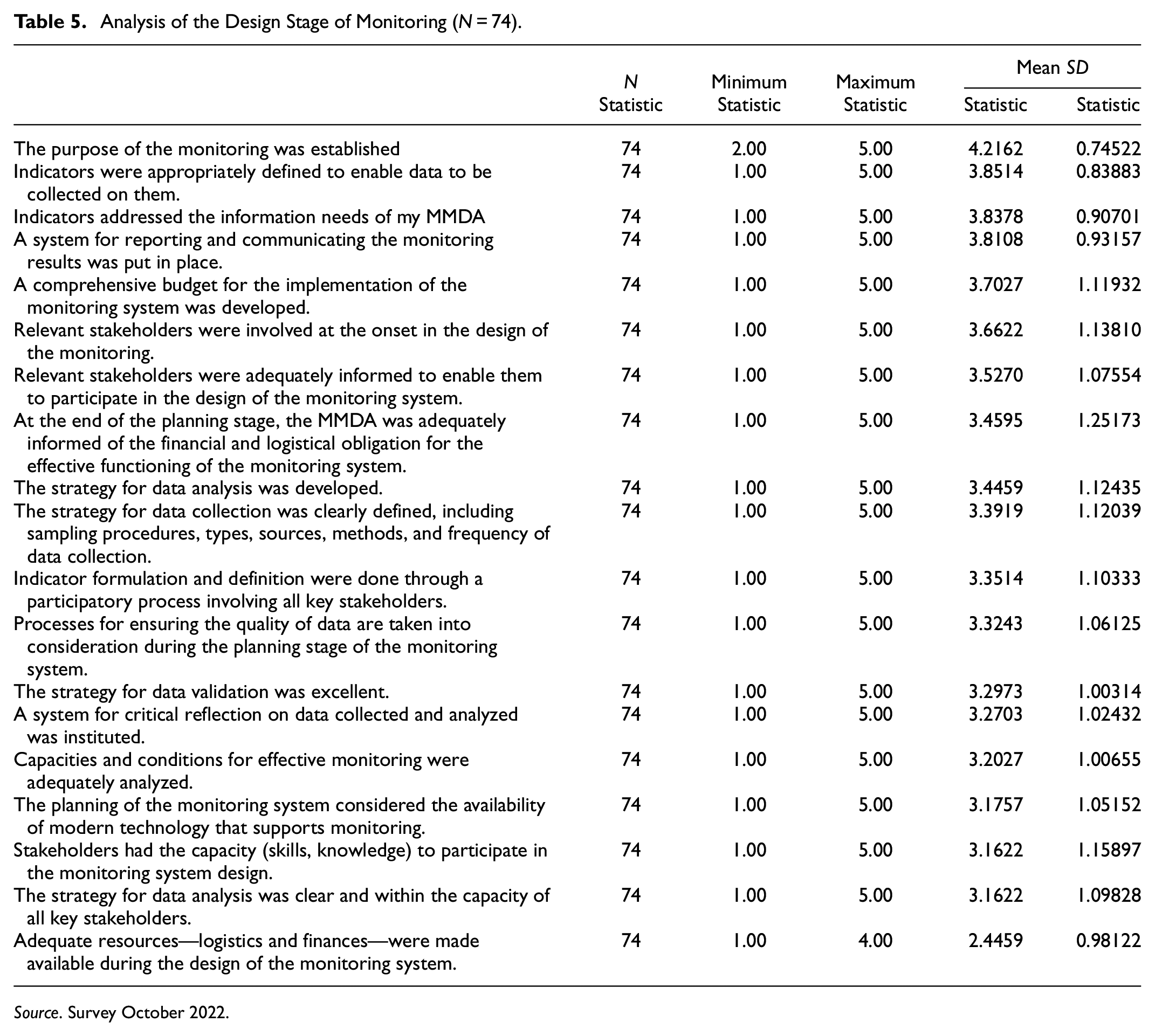

Analysis of the Design Stage of the Decentralized Subnational Monitoring System

Table 5 displays respondents’ views on the issues involved in the design phase of the subnational monitoring system. The majority of the design issues had mean values above 2.5, indicating that the quality of the design stage of the monitoring system is almost excellent. The design elements with high mean values included a clear definition of the purpose of the monitoring system (mean = 4.2162 ± 0.74522), appropriately defined indicators (mean = 3.8514 ± 0.83883), indicators that catered to the information needs of actors at the subnational level (mean = 3.8378 ± 0.90701), and the development of a plan for communication and reporting (mean = 3.8108 ± 0.93157). These high mean values suggest that the developers carefully considered these design issues during the monitoring system’s development. The only design issue that recorded a mean below 2.5 was the availability of adequate logistical and financial resources during the monitoring system design (mean = 2.4459 ± 0.98122).
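The item-level figures above are means and standard deviations of 5-point Likert scores, ranked and compared against the 2.5 cut-off used in this section. The Python sketch below shows that computation on invented scores. The use of the sample standard deviation is an assumption, since the text does not say which form was used, and the item labels and values are hypothetical.

```python
from statistics import mean, stdev

THRESHOLD = 2.5  # cut-off used in this section to separate stronger from weaker items

# Hypothetical Likert scores (5 = strongly agree, 1 = strongly disagree) for three items.
responses = {
    "Purpose clearly defined": [5, 4, 4, 5, 3, 4],
    "Indicators appropriately defined": [4, 4, 3, 5, 3, 4],
    "Adequate resources made available": [2, 3, 1, 2, 4, 2],
}


def summarize(scores):
    """Return (mean, sample standard deviation) for one questionnaire item."""
    return mean(scores), stdev(scores)


summary = {item: summarize(scores) for item, scores in responses.items()}

# Rank items from highest to lowest mean and flag them against the cut-off.
ranked = sorted(summary.items(), key=lambda kv: kv[1][0], reverse=True)
for item, (item_mean, item_sd) in ranked:
    flag = "above" if item_mean > THRESHOLD else "below"
    print(f"{item}: {item_mean:.2f} ± {item_sd:.2f} ({flag} {THRESHOLD})")
```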

Table 5. Analysis of the Design Stage of Monitoring (N = 74).

Source. Survey October 2022.

A linear regression shows that the number of years of experience of the planning officers positively predicts monitoring design quality (B = 0.086, p = .006): each additional year of monitoring experience is associated with a 0.086-unit increase in the monitoring design quality index, holding the other predictors constant. A longer time lag since on-the-job training negatively predicts monitoring design quality (B = −0.082, p = .027): each additional year since the last on-the-job training is associated with a 0.082-unit decrease in the index. Table 6 displays the results of the model summary, ANOVA, and coefficients.
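An unstandardized coefficient B gives the expected change in the dependent variable, in its own units, for a one-unit change in a predictor with the other predictors held constant. The Python sketch below applies the two coefficients reported above to hypothetical changes. Because the intercept and the remaining coefficients are not reproduced in this section, only changes in the index can be computed, not predicted levels; the function name is illustrative.

```python
# Unstandardized coefficients reported in this section.
B_EXPERIENCE = 0.086     # index units per additional year of monitoring experience
B_TRAINING_LAG = -0.082  # index units per additional year since last on-the-job training


def predicted_change(delta_experience_years=0.0, delta_training_lag_years=0.0):
    """Predicted change in the design quality index, other predictors held constant."""
    return (B_EXPERIENCE * delta_experience_years
            + B_TRAINING_LAG * delta_training_lag_years)


# Hypothetical scenarios, chosen only to illustrate the interpretation.
five_more_years = predicted_change(delta_experience_years=5)
three_year_gap = predicted_change(delta_training_lag_years=3)

print(f"Five more years of experience: {five_more_years:+.3f}")  # prints +0.430
print(f"Three more years since training: {three_year_gap:+.3f}")  # prints -0.246
```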

Table 6. Linear Regression Results on Predictors of the Quality of a Monitoring System Design.

Analysis of the Implementation Stage of the Subnational Monitoring System

Table 7 presents the issues considered while implementing the subnational monitoring system. Three practices ranked highest: the development of checklists to guide data collection (mean = 3.7703 ± 0.88437), all key actors responsibly playing their roles (mean = 3.0811 ± 0.93276), and timely backstopping support from the Regional Planning Coordinating Unit (RPCU) and the NDPC (mean = 3.0000 ± 0.97924). Adherence to the implementation schedule (mean = 2.9054 ± 1.03592) and access to finance and logistics (mean = 2.2027 ± 0.96486) were the lowest-ranked issues in the implementation of the system.

Analysis of Implementation (N = 74).

Source. Survey October 2022.

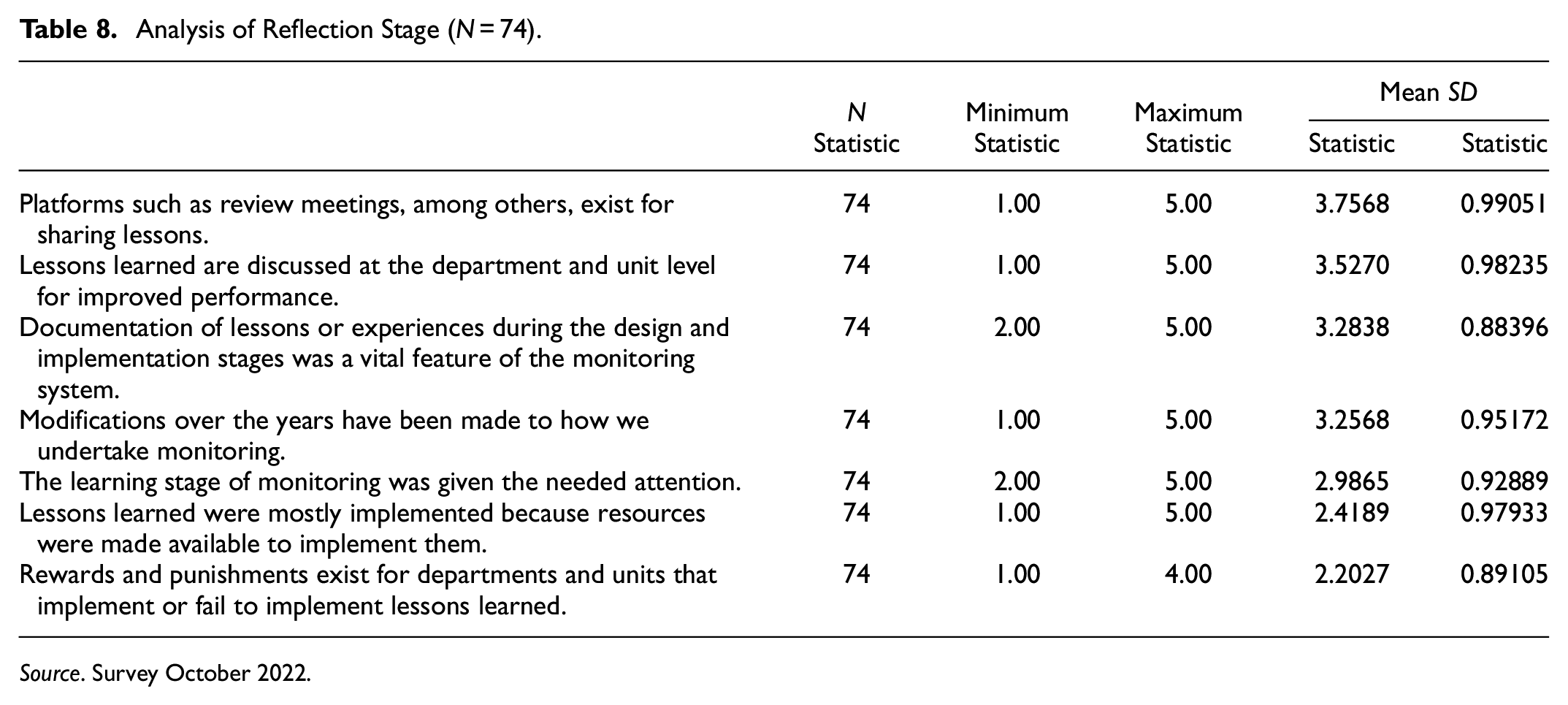

Analysis of the Reflection Stage of the Subnational Monitoring System

Table 8 indicates that respondents identified several issues undertaken to facilitate reflection and learning on data generated from monitoring. These issues include platforms such as review meetings, among others, for sharing of lessons (mean = 3.7568 ± 0.99051), discussions of lessons learned at department and unit level for improved performance (mean = 3.5270 ± 0.98235), documentation of lessons or experiences during the design and implementation stages as a vital feature of the monitoring system (mean = 3.2838 ± 0.88396), and modifications over the years to how monitoring is undertaken (mean = 3.2838 ± 0.95172). However, the implementation of lessons because resources were made available (mean = 2.4189 ± 0.97933) and rewards and punishments for departments and units that implemented or failed to implement lessons learned (mean = 2.2027 ± 0.89105) were rated as issues that were not strongly inherent in the monitoring system.

Analysis of Reflection Stage (N = 74).

Source. Survey October 2022.

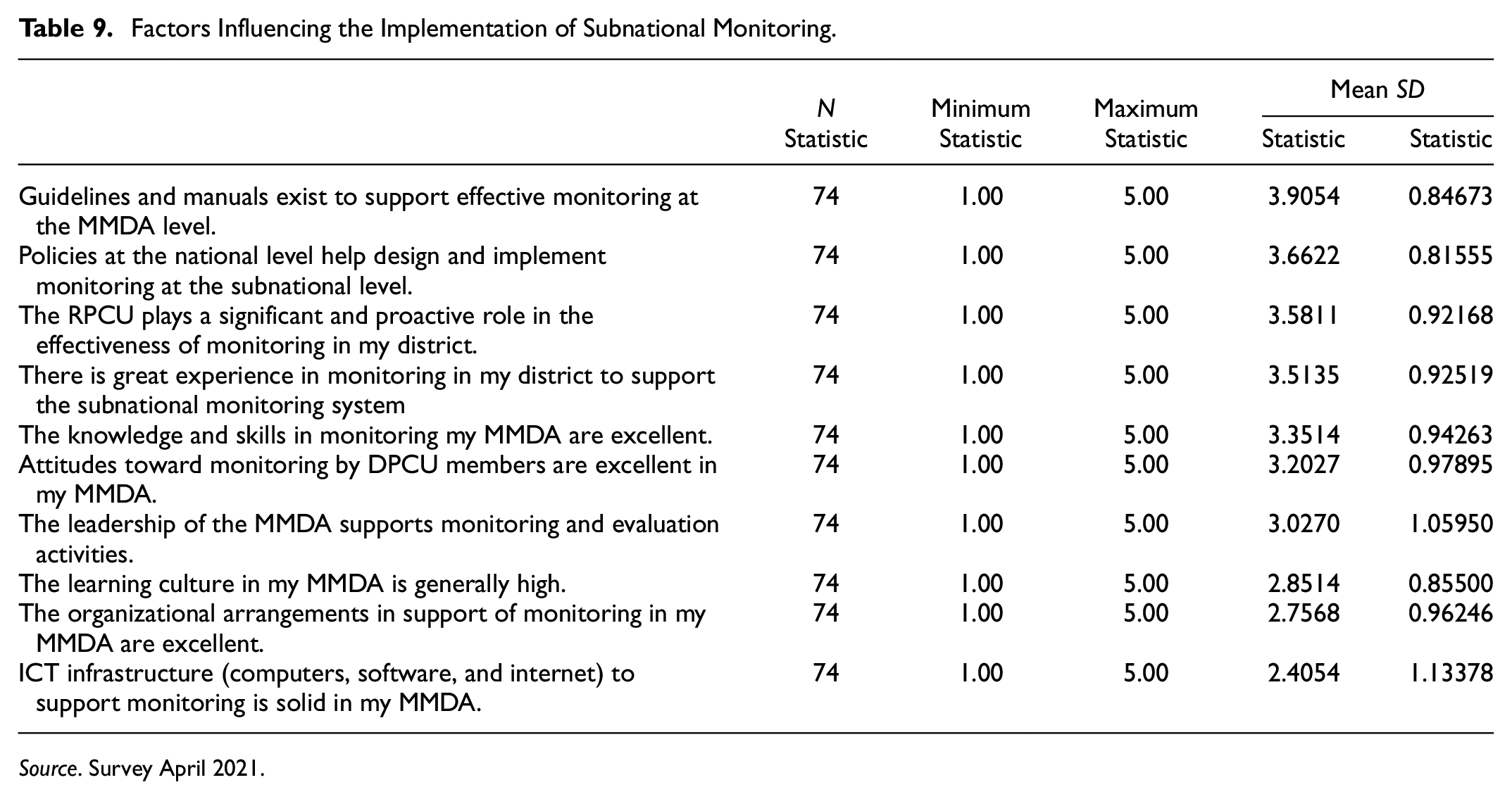

Policy, Organizational, and Individual Factors Affecting Subnational Monitoring

According to the evaluation results presented in Table 9, policy-level factors such as guidelines and manuals that support effective monitoring at MMDA level (mean = 3.9054 ± 0.84673) and policies at the national level that aid in the design and implementation of monitoring at the subnational level (mean = 3.6622 ± 0.81555) were highly ranked as enabling factors in the decentralized subnational monitoring system. The backstopping role of the intermediate level (RPCU) was ranked high (mean = 3.5811 ± 0.92168) as facilitating the monitoring system design and implementation. Experience, skills, attitudes in monitoring, and leadership support for monitoring had mean values above 3. However, logistical support in the form of ICT infrastructure (computers, software, internet) to support monitoring had a mean value below 2.5, indicating that it is a missing link in the subnational monitoring design and implementation system.

Factors Influencing the Implementation of Subnational Monitoring.

Source. Survey April 2021.

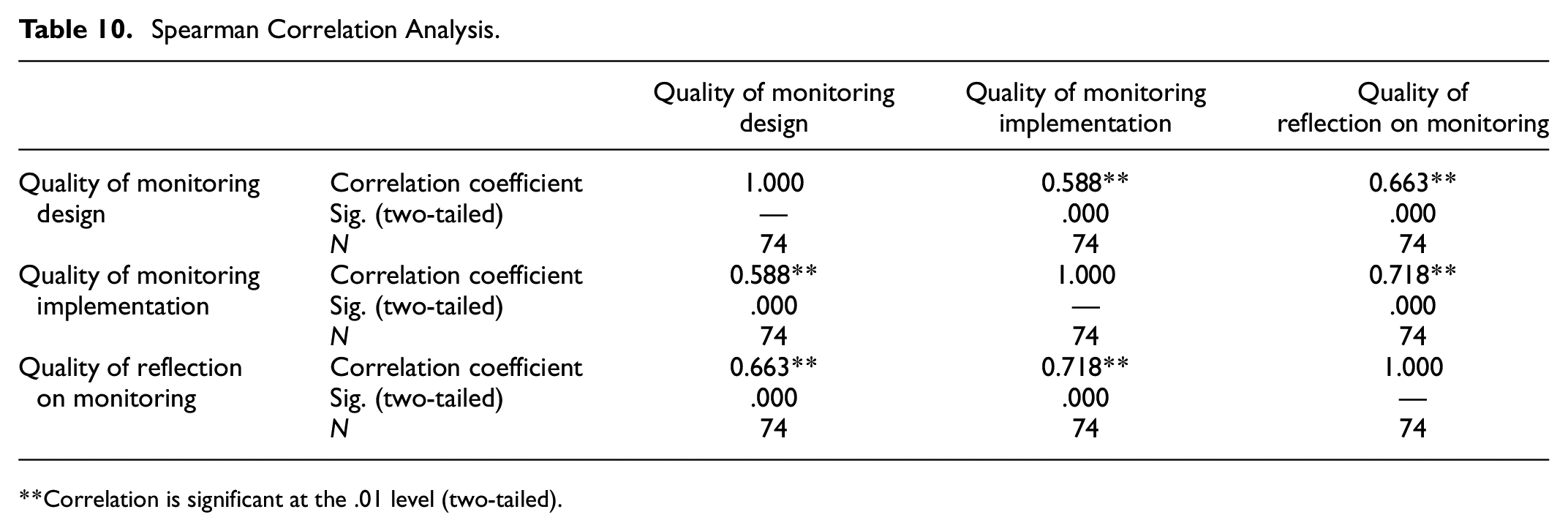

A correlation analysis of the quality of monitoring design, monitoring implementation, and reflection on monitoring (Table 10) shows positive correlations between the stages of the monitoring process.

Spearman Correlation Analysis.

Correlation is significant at the .01 level (two-tailed).
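For readers unfamiliar with the statistic, the following is a minimal, standard-library sketch of how a Spearman rank correlation between two stage scores can be computed: both variables are ranked (ties receive average ranks) and a Pearson correlation is taken on the ranks. The six score pairs are hypothetical values made up for illustration and are not the study's data. The function names are likewise assumptions.

```python
def average_ranks(values):
    """Rank values from 1 to n, giving tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        # Extend the block while the next value ties with the current one.
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        mean_rank = (start + end) / 2 + 1  # ranks are 1-based
        for k in range(start, end + 1):
            ranks[order[k]] = mean_rank
        start = end + 1
    return ranks


def pearson(x, y):
    """Pearson correlation; undefined (division by zero) if x or y is constant."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / (var_x * var_y) ** 0.5


def spearman_rho(x, y):
    """Spearman's rho: Pearson correlation computed on average ranks."""
    return pearson(average_ranks(x), average_ranks(y))


# Hypothetical respondent-level stage scores (NOT the study's data).
design_scores = [4.1, 3.8, 2.9, 4.5, 3.2, 3.6]
implementation_scores = [3.4, 3.1, 2.5, 3.9, 2.2, 3.0]

rho = spearman_rho(design_scores, implementation_scores)
print(round(rho, 3))
```

A rank-based coefficient is a natural choice for ordinal Likert-type ratings because it depends only on the ordering of responses and not on the spacing between scale points.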

Discussion

Monitoring System and the Project Life Cycle

The study found that the stages of the project life cycle as an action model used to guide the implementation of development actions (Nilsen, 2015) were inherent in the subnational monitoring system. Specific essential issues highlighted by Kusek and Rist (2004), Bamberger (2009), and Umhlaba Development Services (2017) were rated high as prevalent in the monitoring system. These include clearly defined and robust indicators; stakeholder involvement in planning, implementing, and evaluating the system; a comprehensive framework for data collection covering quantitative and qualitative data; backstopping support from NDPC and RPCU; the existence of manuals and guidelines for monitoring; the use of checklists in data collection; and the existence of review meetings and platforms. A conspicuously important issue that respondents rated as weak is logistics, technology, and budget support for the design, implementation, and learning stages of the system. The inadequate attention to resources for the monitoring system can potentially undermine the effectiveness of the implementation and learning stages. Correlation analysis also showed a high positive connection between the three stages of the monitoring process, suggesting a strong connection between the components of the monitoring system in line with the project life cycle.

The design stage of the monitoring system was adequate: According to Karani et al. (2014) and Kihuha (2018), monitoring planning is closely linked to its performance. A straightforward monitoring system design and framework gives focus and avoids ambiguity in data collection methods, indicators, and reporting requirements, allowing for the systematic tracking and evaluation of project progress. Precise planning and design, including a national policy framework for the monitoring system, have been identified as vital to its effectiveness by Goldman et al. (2018) and Mathis et al. (2001). The design stage of the monitoring system was deemed adequate by the respondents. The design stage is critical as it is the foundation for the decentralized subnational monitoring system. The observation that it was solid thus provides an anchor for the subnational monitoring architecture. Issues that attested to the adequacy of the design of the system included a clear definition of the purpose of the monitoring system; appropriately defined indicators; indicators that responded to the information needs of actors at the subnational level; a plan for communication and reporting; relevant stakeholders who were adequately informed and involved at the onset of the design; a clearly defined strategy for data collection, including sampling procedures and the types, sources, methods, and frequency of data collection; and an excellent strategy for data validation. A lapse in the system’s design was the nonavailability and inaccessibility of logistics and financial resources to enable the activities of the design stage to be carried out effectively.

Clearly defined indicators in monitoring systems are the basis for measuring progress and outcomes effectively (Lamhauge et al., 2013). A monitoring system utilizes well-defined indicators that are specific, measurable, achievable, relevant, and time-bound (SMART), allowing for effective tracking and evaluation of progress. Indicators provide the means to measure and quantify results achieved by projects or programs, and serve as tools for both program staff and partners to prioritize inputs and convey outcomes. Kihuha (2018) observed that stakeholder involvement enhances the performance of monitoring systems, while inadequate provision for resources, logistics, and skills at the design stage undermines the quality and reliability of data collection, analysis, and reporting. Involving relevant stakeholders in monitoring enhances ownership, transparency, accountability, and the use of data for adjustments to programs. Esmail et al. (2015) explored the consequences of excluding relevant stakeholders from M&E processes, including limited ownership, lack of contextual understanding, and reduced utilization of M&E results. Inadequate stakeholder involvement makes it challenging for stakeholders to understand and validate the reported results.

Clarity in data collection processes, including sampling and the deployment of diverse data collection methods such as surveys, interviews, and observations to obtain comprehensive and reliable information, is an attribute of a sound monitoring system. Noyes et al. (2019) discuss the limitations of relying on limited data sources and/or inadequate sample sizes, highlighting the potential biases and incomplete information that may arise. According to Noyes et al. (2019), researchers should incorporate qualitative methods to allow for a deeper exploration of context, individual experiences, and unforeseen outcomes to address the insufficiency of quantitative data collection. Nasambu (2016) asserts that more frequent data collection yields more data, enabling managers to track trends. Sridharan et al. (2016) found that irregular data collection hinders tracking progress and making informed decisions. Data verification, validation, and cleaning are processes to ensure the accuracy and reliability of collected data. Bamberger et al. (2010) highlighted that data inaccuracies, inconsistencies, and biases pose significant risks to monitoring data’s validity and usefulness.

The quality of the monitoring system design was positively associated with staff experience: the number of years of experience in monitoring was a positive predictor of design quality. Experienced monitoring staff possess a deep understanding that contributes to designing robust systems better suited to handle real-world challenges, as they can anticipate potential issues and incorporate preventive measures during the design phase of monitoring systems. A longer time lag in on-the-job training was a negative predictor of the quality of a monitoring system’s design. On-the-job training is critical in nurturing a culture of continuous improvement in monitoring systems.

Implementation of the Monitoring System

The study found that the development of checklists to guide data collection, the responsible performance of roles by all key actors, and the availability of backstopping support from RPCU and NDPC emerged as moderately ranked issues inherent in the implementation stage of the monitoring system. Adherence to the implementation schedule and access to finance and logistics were less prioritized issues in implementing the system. Overall, therefore, the implementation of the monitoring system was weak. Effective implementation is required to ensure that interventions can transition from plan through action to results (Suggett, 2011). Implementation is the system’s engine and needs to be functional for the system to work. Just as a car with a weak engine will be slow and ineffective in getting people to their destination on time and comfortably, weak implementation in monitoring will produce unappealing results that could undermine trust and confidence in monitoring systems. Management participation and support for monitoring were found by Kihuha (2018) to have a significant effect on the monitoring system’s performance. Njama (2015) and Yekani (2022) uncovered that weak organizational leadership support impacts monitoring negatively. Hutchinson (2018) also observed the critical role of budgetary support in monitoring effectiveness in Africa, while Nyamambi (2021) identified insufficient or no funds for monitoring as a significant setback to monitoring at the subnational level. Goldman et al. (2018) found that limited financial resources inhibit the effective conduct and scaling-up of monitoring and evaluation activities in Africa.

Ghana and Africa need to move away from the long-held position that they are very good at developing good policies, plans, and laws, among others, but are weak in translating them into action. This systemic thinking and approach account for the weak implementation of the monitoring system at the subnational level. Implementing policies, plans, and bylaws should be considered as one of the minimum conditions during the assessment of local authorities to give focus to the monitoring system’s implementation.

Reflection Stage of Monitoring

The results of the analysis revealed four moderately ranked issues prevalent in the reflection stage of the monitoring system: the existence of platforms such as review meetings for sharing lessons, discussion of lessons learned at department and unit levels for improved performance, documentation of lessons or experiences during the design and implementation stages, and modifications over the years to how monitoring is undertaken. However, the implementation of lessons learned because resources were available, and rewards and punishments for departments and units that implemented or failed to implement lessons, were weak or largely absent in the monitoring system.

Integrating learning as a fundamental monitoring system component guarantees the documentation and dissemination of lessons learned and best practices, resulting in programmatic improvements and the replication of lessons and best practices. Review meetings and discussions of lessons in groups provide a platform for reflection on monitoring data to inform decision-making on monitoring, planning, budgeting, and implementation. Such platforms enable further questions such as “how are we doing relative to our targets and why?” and “what did we achieve and what needs to be done?” to be explored. Critical reflection assists in learning and managing for impact. Goldman et al. (2018) found that various platforms, including discussion of evaluation findings in stakeholder workshops, presentation of conclusions and recommendations in policy briefs, and thematic workshops, enhance reflection and use of monitoring and evaluation results. Bouey et al. (2023) found that setting up a focus group to contextualize findings was critical to adaptive learning and using findings. According to Eggers (2002), critical reflection avoids the accumulation of errors that often results in project failure. Akanbang et al. (2016) observed review meetings as a standard monitoring feature at the subnational level but found that discussion of monitoring reports in groups was low. Such platforms, as argued by Akanbang et al. (2016), create opportunities for deep reflection on results, identification of problems, validation of data, cross-learning among stakeholders and individuals, and making data accessible for use. This study thus corroborates their findings on review meetings but departs from their findings on group discussion of findings. The weakness of punishments and rewards for implementing lessons affects the learning stage of the monitoring system. Given the low motivation for monitoring at the subnational level, there is a need for rewards and sanctions to encourage departments and individuals to take the learning of lessons seriously.

Inadequate funds to implement decisions hindered the effectiveness of the reflection stage. This shortfall tends to discourage stakeholders from investing time, energy, and other sacrifices in monitoring because it undermines the result of the process. To leverage the strength of the monitoring design and the growing demand for monitoring information at the subnational level, as discovered by Akanbang et al. (2016), policymakers at the subnational level need to show a keen interest in the work of the monitoring teams and meet some of their logistical needs to assure them of the importance of the work they do.

Characteristics of the Monitoring System

Monitoring as a Collective Activity

The study’s results demonstrate that monitoring was conducted as a collaborative activity. Collaboration in monitoring ensures data quality because data validation and quality assurance are enhanced (Akanbang, 2021; Chantler et al., 2014). Such a collective approach to monitoring is fundamental to dealing with data quality issues such as incomplete data and inaccurate reporting. Using data from multiple sources facilitated triangulation and improved the validity and quality of data (Chantler et al., 2014; Guijt, 2008; Mathis et al., 2001). For example, Hutchinson (2018) reports a case in which quantitative methods could not shed light on why women and girls did not use the newly built drinking water delivery points around or near their houses, necessitating the commissioning of a qualitative evaluation to complement the quantitative findings. Evaluation as a collective activity involving a wide range of stakeholders is common in the African context (Goldman et al., 2018; Wang & Hong, 2018). It also provides a learning opportunity for participants in the monitoring process (Akanbang et al., 2019; Chantler et al., 2014; Wang & Hong, 2018). However, it is associated with delays due to the cooperation needed from different actors before taking subsequent steps. Cooperation, coordination, and commitment are critical to monitoring as a collective action (Chantler et al., 2014; Rahmat, 2015).

Low Motivation for Monitoring by MMDAs

Akanbang and Bakyieriya (2020) and Akanbang (2021) conducted qualitative studies that found low motivation to be a vital issue affecting monitoring at the subnational level. Despite increased demand for monitoring information (Akanbang et al., 2016), funding and logistics for monitoring activities have not increased proportionally. Nyamambi (2021) suggests that effective monitoring requires strong leadership and commitment from all actors to foster a learning culture and utilization of results.

The Dominance of Construction Projects and Activity Monitoring

According to Goldman et al. (2018), previous studies have confirmed that construction and activity monitoring are the main types of monitoring that occur at the subnational level. Akanbang et al. (2016) observed that this type of monitoring is critical as it serves as the basis for making payments to contractors or service providers, hence its emphasis at this level. However, use and impact monitoring, critical to projects and activities achieving their intended objectives, is less undertaken at the subnational level, as recently observed by Akanbang (2021) and Sulemana et al. (2018). Akanbang et al. (2016) observed that the limited attention to use and impact monitoring results from limited funding for monitoring. Most projects financed by donors have arrangements for monitoring during the implementation stage. However, once that stage ends, monitoring ceases because the MMDAs do not make the requisite resources available to the monitoring teams to do their work.

Factors Affecting Implementation

Policy-level factors such as guidelines, manuals, and policies at the national level that aid in designing and implementing monitoring at the subnational level were highly ranked enabling factors in the decentralized subnational monitoring system. The backstopping role of RPCU (an intermediate actor) was key in facilitating the implementation of the monitoring system. Experience, skills, attitudes in monitoring, and leadership support at the individual and organizational levels positively impacted the monitoring system implementation. However, logistical support in the form of ICT infrastructure (computers, software, internet) to support monitoring was regarded as crucial but non-existent in supporting the system’s implementation. Contrary to Yekani (2022), in which key monitoring stakeholders were unaware of policies at the national level that support monitoring activities, policies and frameworks, including manuals, were highly ranked as factors that facilitated the implementation of the monitoring system.

This study confirms the findings of Agbenyo et al. (2021) that weak skills and knowledge are a significant hindrance to monitoring at the local level of governance. Kihuha (2018) highlights technical expertise, management participation, and support as requirements for the effectiveness of monitoring systems. Njama (2015) and Yekani (2022) uncovered that organizational leadership support for monitoring was weak and impacted monitoring negatively, as corroborated by this study.

Conclusion

Monitoring is an essential component of effective and accountable local governance. The design and implementation of monitoring systems have implications for their ability to contribute meaningfully to decentralization’s ability to achieve its anticipated objectives. The study investigated relevant issues concerning the subnational system’s design, implementation, and reflection stages to inform the policy, practice, and research in subnational monitoring in Africa. In tandem with the process models/theories of implementation science, the project life cycle model provided insight into the design and analysis of the study’s results. In line with the process model, the subnational monitoring system has the three main stages of the project life cycle: design/planning, implementation, and reflection/learning. Highly ranked issues of the design stage of the system included a clearly defined purpose (mean = 4.2162 ± 0.74522), appropriately defined indicators (mean = 3.8514 ± 0.83883), indicators that covered the information needs of actors (mean = 3.8378 ± 0.90701), and a well-developed plan for communication and reporting (mean = 3.8108 ± 0.93157). The availability of checklists to guide data collection (mean = 3.7703 ± 0.88437), actors responsibly playing their roles (mean = 3.0811 ± 0.93276), and timely backstopping support from RPCU and NDPC (mean = 3.0000 ± 0.97924) were highly ranked issues of the implementation of the system. On the reflection stage, the existence of review meetings for sharing lessons (mean = 3.7568 ± 0.99051), discussion of lessons at the department/unit level (mean = 3.5270 ± 0.98235), documentation of lessons/experiences during the design and implementation stages (mean = 3.2838 ± 0.88396), and modifications over the years to how monitoring is undertaken (mean = 3.2838 ± 0.95172) were issues ranked highly by respondents.
Policy-level factors such as guidelines and manuals that support effective monitoring (mean = 3.9054 ± 0.84673) and policies at the national level that aid the design and implementation of monitoring (mean = 3.6622 ± 0.81555) ranked highly as enabling factors in the decentralized subnational monitoring system. Logistical support in the form of ICT infrastructure (computers, software, internet) was a constraining factor to the monitoring system. The study concludes that the system for subnational monitoring in terms of design is excellent, but its implementation and reflection stages are weak. The study contributes to understanding the status, quality, and implementation factors of African subnational monitoring. It highlights the precise planning and design of subnational monitoring systems, including setting clear objectives, utilizing robust indicators, involving stakeholders, and using timely, multi-source data, as critical to effective subnational monitoring. Sufficient resources and capacity-building initiatives are necessary to support effective monitoring activities, including hiring experienced personnel, providing on-the-job training, committing financial resources and logistics, and backstopping support. Appropriate policy environments, including manuals and legislation, are critical to anchoring and sustaining subnational monitoring systems. Monitoring systems should prioritize learning, knowledge sharing, and documentation of lessons learned to facilitate programmatic improvements and replication. To prioritize monitoring and address the perennial problem of funding, which is a source of low motivation for monitoring at the subnational level, MMDAs should allocate at least 2.5% of the DACF to financing monitoring.

Footnotes

Acknowledgements

The author thanks the respondents of the study, the District Development Planning Officers of the Metropolitan, Municipal, and District Assemblies of Ghana, for spending their precious time and data to answer the questionnaire. The author also thanks Mr. Kwaku Korang for configuring the questionnaire for administration on Google Forms. Thanks also go to Prof. Millicent A. Akaateba for reviewing the questionnaire.

Author’s Contributions

The author designed the study, collected and analyzed the data, and wrote the study report.

Declaration of Conflicting Interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author received no financial support for the research, authorship, and/or publication of this article.

Ethical Approval

The study received ethical clearance from the Research Ethics Review Board of the Simon Diedong Dombo University of Business and Integrated Development Studies. The certificate number is UBIDS/RERB/2021/04.

Consent Details

The researcher indicated in the Google form that participation in the study was optional and that respondents could end their participation at any point while answering the questions.

Data Availability Statement

Data will be made available upon request.