Abstract

Although many studies have considered the effects of online reviews on tourists’ decisions, none have directly investigated how to leverage open data analyses to create early choice sets and facilitate destination planning. This paper illustrates how salient characteristics can be mined from the shared experiences embedded in review data and incorporated into a predictive model to build a travel counseling approach. The model is designed by first defining a prediction-based mechanism from online reviews and then generating a multinomial classification problem on all candidate destinations of interest. The model is implemented by applying Natural Language Processing (NLP) and Deep Learning (DL) technologies to review textual features. The model is validated using 75,315 reviews from TripAdvisor along with destinations from 257 U.S. national parks. Empirical results indicate a best classification accuracy of 67%, outperforming two previous approaches. Findings shed light on how to exploit past tourists’ experiences to generate early destination recommendations to identify items for choice sets and reduce tourists’ travel-planning effort. Theoretical and managerial implications regarding social media analytics are provided based on online review meta-data in touristic management.

Plain Language Summary

A destination recommendation system is developed in the brand awareness stage. A predictive framework is proposed using online reviews and deep learning. A many-to-one mapping is reverse built between reviews and their destinations. A generator is established to create items for an early choice set. Tourists’ knowledge is broadened and trip-planning effort is reduced.

Introduction

Social media (SM) and user-generated content (UGC) have transformed the tourism industry. They represent efficient information sources as tourists plan vacations (Pantano et al., 2017; Pantano & Di Pietro, 2013), especially when choosing a destination in the pre-trip phase (Pantano et al., 2017; Pantano & Di Pietro, 2013; Xiang et al., 2015). This information enhances potential tourists’ expectations by foreshadowing experiences and influencing decisions (Chen, 2019; Pan et al., 2021; Toral et al., 2018). Likewise, consumers’ exposure to online reviews benefits stakeholders by boosting individuals’ purchase intentions and likelihood of buying recommended products (Shu & Scott, 2014).

Selecting a destination is a major decision when planning a vacation (Fesenmaier et al., 2006). Yet vacationers are not omniscient; a person may know what kind of vacation they wish to experience without necessarily knowing where to go. Product search engines have access to myriad information; however, they often struggle to return accurate item details owing to search engine–specific marketing techniques or policies. A model that uses authentic experiential data in specific domains could raise recommendation accuracy. Some businesses, such as less popular scenic parks, might go overlooked even if the locations have comparable beauty or similar offerings to popular destinations. A counseling system based on destination characteristics and tourists’ experiences will keep business owners from compromising on price to attract visitors. Being introduced to less popular destinations can also reveal tourists’ blind spots (i.e., options they might not have otherwise entertained) to promote better-informed decisions. Such recommendations are thus expected to be useful for tourists and destination marketers alike.

Dense research on travel counseling since the early 21st century has sparked a trend of harnessing smart technologies (e.g., artificial intelligence [AI] and data mining) to investigate recommendation models. Suggestions about travel routes (i.e., how to travel) have received close attention (Zheng et al., 2017, 2020). Destination recommendations (i.e., where to travel) are less common. Few scholars have pondered how to help tourists choose places to visit. Efforts from Oh et al. (2016), Pantano et al. (2017), Keerthi and Lakshmi (2018), Sohrabi et al. (2020), and Li et al. (2022) are notable exceptions. However, this line of work features three pitfalls. First, apart from Keerthi and Lakshmi (2018), the other four studies did not draw a specific destination from a set of alternatives. Second, none of the five studies addressed multinomial classification problems with a large number of classes (e.g., 100 classes). Traditional approaches are of limited utility in big data analysis because they fail to provide time-sensitive solutions for a large class volume. Third, none of these studies returned informative results to potential tourists. A person is unlikely to be aware of all destination alternatives, even in their home country. It is hence important to offer tourists a range of options on where to go.

UGC may also serve as a trustworthy data source in prospective visitors’ initial decision making (Osei & Abenyin, 2016; Tham et al., 2013). Yet exactly how UGC data can generate destination items to facilitate tourists’ trip planning remains unclear. This knowledge gap motivated the present study. We aimed to discern interactions between textual data from UGC comments and corresponding destinations by reverse building a many-to-one mapping to provide destination predictions based on tourists’ expectations of an upcoming trip. Our work makes two substantial contributions to the touristic literature. We describe how to (1) give destination options to enhance tourists’ knowledge in travel planning and (2) apply prediction-based approaches to inform early destination recommendations via a perceived image (i.e., keywords from textual reviews). The remainder of the paper is structured as follows. Section 2 presents a brief overview of prior research on destination selection in itinerary planning and on models for providing destination recommendations. Section 3 discusses the details of the research framework and the dataset we used. Section 4 describes the empirical results and main findings. Finally, Section 5 discusses the findings and Section 6 presents conclusions.

Research Background

Itinerary Planning on Destination Selection

As a sub-decision of itinerary planning, destination selection has drawn intense academic interest over the past five decades. Most studies on the subject have involved behavioral and choice set approaches, which often draw on the theory of planned behavior (Ajzen, 1985) and choice set models (Um & Crompton, 1992), respectively (Kozak et al., 2017; Lam & Hsu, 2006). Behavioral approaches seek to delineate decision stages and identify related internal and external factors (Sirakaya & Woodside, 2005). The influential features underlying selection range from all-side determinants (Jo et al., 2019) to specific aspects (Cao et al., 2020; Karl et al., 2020; Neto et al., 2020; Pan et al., 2021; Pestana et al., 2020). More recently, researchers have explored the behavior of clusters of travelers during destination selection (Ghosh & Mukherjee, 2023). Personal knowledge has been deemed integral in determining the extent to which factors influence tourists’ information processing when choosing destinations. Travelers generally refer to internal and external information sources to obtain insight for travel planning (Fesenmaier et al., 2006). Search engines are becoming increasingly popular for this purpose (Fesenmaier et al., 2011).

The choice set model suggests that destination selection involves a series of choice sets and a funnel-like process (Um & Crompton, 1992). This process covers brand awareness and brand evaluation stages, each of which includes from one to several choice sets. Studies have addressed choice sets’ size and structure in each stage (Decrop, 2010; Hong et al., 2006; Kozak et al., 2017). Deliberate attention has been given to issues in the brand evaluation stage (Ghosh & Mukherjee, 2023; Qiu et al., 2018; Thai & Yuksel, 2017), when vacationers seek to evaluate a few alternatives and reduce the number of options (Kozak et al., 2017). Research related to the brand awareness stage is scarce.

Providing Destination Recommendations

Destination recommendations can be roughly divided into two categories (Hruschka & Mazanec, 1990). The first is based on “recommendation technology,” which is commonly used to address the tourist trip design problem (Vansteenwegen & Van Oudheusden, 2007) and is otherwise known as an expert system (Hruschka & Mazanec, 1990). Recommendation-based approaches to travel planning involve choosing points of interest (Stamatelatos et al., 2021) that suit tourists’ preferences by considering parameters (including individuals and groups) (Delic et al., 2018) and constraints on a daily basis. This method can generate on-site (real-time) or pre-trip propositions about either travel routes (itineraries) or tourism essentials (Zheng et al., 2017, 2020). Personalized route recommendations, personal navigation systems, and electronic tourist guides fall into this category. Both pull factors (e.g., destination attributes, otherwise known as brand image) and push factors (e.g., individual preferences such as duration and expenses) are usually taken into consideration in building recommendation-based systems.

The second category is based on “retrieval technology,” which typically involves searching for a particular keyword or phrase and then providing sophisticated search results (Fesenmaier et al., 2006). Some researchers have predicted tourists’ reactions (e.g., “like” vs. “dislike” and “recommend” vs. “do not recommend”) toward a destination (Pantano et al., 2017) or airline (Jain et al., 2022). Others have inferred tourists’ likelihood of being interested in vacation items in a candidate destination (Li et al., 2022) and have identified destination sub-regions based on individuals’ interests and travel backgrounds (Sohrabi et al., 2020). Still others have used review ratings and the months of visits to predict 65 destinations (Keerthi & Lakshmi, 2018).

When applied to a choice set model, recommendation-based approaches can help prospective tourists make final decisions about a late choice set in the brand evaluation stage (i.e., when personal preferences and situational constraints can be incorporated into the model) (Abbasi-Moud et al., 2021; Delic et al., 2018). With respect to early choice set counseling in the brand awareness stage, retrieval- or prediction-based approaches prevail. A possible cause is that pull factors rather than push factors largely shape early choice sets (Decrop, 2010; Hong et al., 2006; Sirakaya & Woodside, 2005). Pull factors are fairly constant; that is, different tourists may view a given destination relatively consistently, whereas one’s length of stay or budget can vary.

Summary of the Past Studies

Much effort has been devoted to the brand evaluation stage, whereas little is known about the brand awareness stage (Keerthi & Lakshmi, 2018; Mu, 2014), during which early choice sets contain destinations that tourists either recognize or potentially prefer. Pull factors dominate tourists’ decisions in this stage. Since UGC data reflect a destination’s pull factors and constitute its brand image (Toral et al., 2018), these data can be used to help build a prediction-based travel counseling system in the brand awareness stage. Keerthi and Lakshmi (2018) explored the potential of open data sources in offering destination predictions from an array of candidates, but their predictions were rooted in tourists’ preferences instead of destination attributes. Additionally, current models for helping tourists choose places to visit have adopted neither AI techniques (e.g., machine learning [ML]) nor multiple evaluation metrics. Such deficiencies hinder models’ generalizability. In the early period of travel planning, tourists may prefer to be given a set of destinations from which to pick one or two for further consideration. Such alternatives serve as constructs in the brand awareness stage when using a choice set model to explain destination selection. However, the literature is largely silent about how to generate a range of options to give informative results to tourists. This study illustrates how external information can alter travelers’ early choice sets (Fesenmaier et al., 2006) by considering ways (e.g., smarter search systems) (Fesenmaier et al., 2011) to aggregate such information to offer potential tourists new knowledge. A specialized search engine, or a predictive method grounded in domain-specific data, may also provide fresh insight into early destination recommendations.

Research Framework, Dataset, and Methods

Research Framework

Essentially, a destination and its corresponding textual reviews (i.e., conveying tourists’ destination evaluations) constitute a one-to-many mapping. These reviews reflect a destination’s pull factors and convey its brand image (Toral et al., 2018). In the ML field, elements such as destination attributes or travelers’ feelings embedded in reviews can be viewed as representations from raw data. ML seeks to automatically learn rules by looking at data. A related question thus arises: is it possible to reverse build a many-to-one mapping to learn useful and appropriate representations from reviews in order to recognize a destination? Destination-related attributes embedded in user-written reviews strongly influence the brand awareness stage of travel planning (Decrop, 2010; Hong et al., 2006; Sirakaya & Woodside, 2005). This many-to-one mapping can be applied to early destination recommendations.

This study introduces a prediction-based approach to address early destination recommendations. This tactic is usually applied in tourism demand forecasting, albeit for future projections rather than inferences. For our purposes, the shared experiences embedded in reviews are fed into a model to predict a destination from a large number of candidates. Since the attributes appearing frequently in destination reviews could be tied to multiple places, instead of singular output, a strategy similar to Top-5 accuracy (Kang et al., 2021) is introduced in this study when we run the model for testing. Details of the strategy can be found in Model Interpretation in the Findings section, where any predicted label with a probability greater than a predefined threshold will be reserved as output. Thus the approach can contribute to item generation for the choice set containing items of potential destinations.

We formally define the analysis of prediction-based destination recommendations from review content as a multinomial classification problem (with M classes in total, where M denotes the total number of destinations) in ML. The following five steps constitute our approach. Figure 1 presents our analytical framework. All methods were implemented using packages on the Python platform.

1) Data preprocessing: Data were carefully selected, cleaned, and tokenized to meet the input requirements of a neural network.

2) Feature extraction: A parts-of-speech (POS) word search and word vector representation in natural language processing (NLP) are introduced to identify and vectorize salient keywords from review content.

3) Classifier design: Several deep learning (DL) classifiers are employed to predict destinations. Each classifier corresponds to a different model.

4) Model training: The training input includes review features reflecting past visitors’ destination experiences; the output is an integer (ranging from 1 to M) indicating a destination.

5) Model test: Given a query representing an individual’s desired experiences during an upcoming trip, we calculate the query’s probability of belonging to a certain class for each classifier. The predicted destination by the classifier with the best performance ultimately presents the “best offer” for a tourist, including descriptive words to facilitate destination planning.
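The multinomial classification problem behind these steps can be written compactly. The formulation below is a sketch that assumes a softmax output layer, which is common for DL classifiers but is not stated explicitly in the text; the symbols x (the encoded keyword sequence of a review or query) and f_m (the score the network assigns to destination m) are introduced here for illustration only.

```latex
\[
  p(y = m \mid x) \;=\; \frac{\exp\bigl(f_m(x)\bigr)}{\sum_{k=1}^{M} \exp\bigl(f_k(x)\bigr)},
  \qquad m = 1, \dots, M,
\]
\[
  \hat{y} \;=\; \operatorname*{arg\,max}_{m \in \{1, \dots, M\}} \; p(y = m \mid x).
\]
```

Under this reading, step 4 fits the scores f_m on pairs of review features and destination labels, and step 5 evaluates p(y = m | x) for a new query x.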

Flow chart of the methodology.

Dataset

We assembled a destination dataset from the United States National Park Service. A total of 467 national park units were gathered from the organization’s official website (https://www.nps.gov/findapark/advanced-search.htm). These units cover the most popular travel destinations throughout the United States, including national parks, national monuments, and historic sites. However, not all units qualified for classification due to an inadequate number of reviews that did not provide sufficient training data for modeling. We could therefore only investigate a subset of all data (see Data Preprocessing section for details).

We collected review data from the most popular and largest travel-related review site, TripAdvisor, to address the destination prediction task in line with other studies (Chang et al., 2020; Ghose et al., 2012; Pantano et al., 2017; Phillips et al., 2015; Zhang et al., 2017). Data were gathered in mid-December 2021. Specifically, the URLs of destination review pages on TripAdvisor were obtained first. A Python-based program was next coded to collect necessary data. On each review page, all reviews written in English were crawled and served as the basis for subsequent analysis. Review data included each review’s textual content and the destination to which it referred. Figure 2 shows a typical review on TripAdvisor.

A review on TripAdvisor. (https://www.tripadvisor.com/Attraction_Review-g60708-d108188-Reviews-Abraham_Lincoln_Birthplace_National_Historical_Park-Hodgenville_Kentucky.html; accessed on 12 Aug. 2022).

Methodology

Data Preprocessing and Tokenization

An imbalanced training dataset can influence modeling performance and lead to poor classification (Guerreiro & Rita, 2020; Ma et al., 2018). Review records with an equal number N in each class (i.e., destination) were randomly selected to construct the final dataset. The larger the N, the higher the performance. However, a larger N may lead to fewer destinations being included in the final dataset due to an insufficient number of TripAdvisor reviews; that is, the number of reviews per destination varied widely. Including as many destinations as possible would produce the most compelling findings. As such, we set N to 300, as seen in prior studies (Cho et al., 2022; Kang et al., 2021). It would be less than ideal to require an equal number across samples. Thus, all destinations with at least 210 reviews (70% of N) were retained. This compromise led us to investigate over half of all available destinations (M = 257 of 467 in total). In the end, our database (i.e., final dataset) included 75,315 review records related to 257 destinations. We used Google Earth (https://earth.google.com/web/) and ArcGIS to automatically transform each destination into a pair of longitudinal and latitudinal coordinates to pin each destination on a map as illustrated in Figure 3.
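The selection rule described above can be sketched as follows. This is a minimal illustration rather than the authors' code: the function name, the `(destination_id, text)` input format, and the fixed random seed are assumptions, while N = 300 and the floor of 210 reviews come from the text.

```python
import random
from collections import defaultdict


def build_balanced_dataset(reviews, n_per_class=300, min_reviews=210, seed=0):
    """Return a list of (destination_id, text) pairs.

    reviews: iterable of (destination_id, text) pairs (assumed format).
    Destinations with fewer than `min_reviews` reviews are dropped; the
    others contribute at most `n_per_class` randomly chosen reviews.
    """
    by_destination = defaultdict(list)
    for destination_id, text in reviews:
        by_destination[destination_id].append(text)

    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    dataset = []
    for destination_id, texts in sorted(by_destination.items()):
        if len(texts) < min_reviews:
            continue  # too few reviews to train on
        sample_size = min(n_per_class, len(texts))
        for text in rng.sample(texts, sample_size):
            dataset.append((destination_id, text))
    return dataset
```

Because destinations with between 210 and 299 reviews are kept with all of their reviews, the resulting dataset is close to balanced but not perfectly so, which is consistent with a total below 257 × 300 records.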

Spatial analysis of 257 destinations (look-up table appears in Supplemental Materials as Table S1).

All review data in the final dataset were tokenized using the following steps: (1) all spaces were removed; (2) all punctuation marks and other non-letter symbols (e.g., “&,” “/”) were removed; (3) elements with consecutive duplicates were eliminated; and (4) capital letters were converted to lowercase to reduce redundancy.
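The four cleaning steps can be sketched with the Python standard library. The sketch makes two assumptions that the text does not spell out: step 1 is read as splitting on whitespace (so that no empty tokens remain), and lowercasing is applied before duplicate removal so that “Very very” counts as a consecutive duplicate. The function name is hypothetical.

```python
import re


def tokenize_review(text):
    """Turn raw review text into a list of lowercase word tokens."""
    # Step 2: replace every run of non-letter characters with a space.
    letters_only = re.sub(r"[^A-Za-z]+", " ", text)
    # Step 4 (lowercase) and step 1 (split() discards all whitespace).
    tokens = letters_only.lower().split()
    # Step 3: drop a token when it repeats the one immediately before it.
    deduplicated = []
    for token in tokens:
        if not deduplicated or deduplicated[-1] != token:
            deduplicated.append(token)
    return deduplicated
```

Note that this simple pattern also splits contractions (for example, “don't” becomes “don” and “t”); whether the original pipeline treated apostrophes differently is not stated.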

Feature Extraction: POS Word Search

We adopted a novel feature-extraction strategy to uncover the in-depth meaning embedded in reviews by examining cognitive and affective images. Cognitive image is characterized by nouns (Ghose et al., 2012; Guerreiro & Rita, 2020), whereas affective image is portrayed through adjectives and adverbs (Guerreiro & Rita, 2020). The choice of these three POS types is consistent with Xiang et al. (2009), who noted that such terms are common in queries. We used the Natural Language Toolkit and the TextBlob library in Python 3.7 (see https://textblob.readthedocs.io/en/dev/) to perform POS tagging when mining relevant keywords, as done in previous studies for different purposes (Deng & Li, 2018; Lee et al., 2021; Zhang et al., 2019; Zheng et al., 2021). Each review was ultimately split into a collection of single keywords. Because ML algorithms use tensors as their basic data structure, all input should be of the same length. We truncated each keyword list at 68 words (this number was chosen based on statistics from the sample data; see Descriptive Analysis in the Findings section). The list was padded with zeros after the last word if it contained fewer than 68 words.
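The filtering and padding logic can be sketched independently of the tagger. The example assumes that tagging has already produced `(word, tag)` pairs with Penn Treebank tags, which is the format returned by NLTK's `pos_tag` and TextBlob's `tags` property; the function names, the `vocabulary` mapping, and the choice to skip out-of-vocabulary words are illustrative assumptions.

```python
MAX_KEYWORDS = 68  # sequence length reported in the text
# Penn Treebank prefixes: NN* = nouns, JJ* = adjectives, RB* = adverbs.
KEPT_TAG_PREFIXES = ("NN", "JJ", "RB")


def extract_keywords(tagged_tokens):
    """Keep only nouns, adjectives, and adverbs from (word, tag) pairs."""
    return [word for word, tag in tagged_tokens
            if tag.startswith(KEPT_TAG_PREFIXES)]


def encode_and_pad(keywords, vocabulary, max_len=MAX_KEYWORDS):
    """Map keywords to integer ids, truncate, and right-pad with zeros.

    vocabulary: dict from word to a positive integer id (0 is reserved
    for padding). Unknown words are skipped, which is an assumption.
    """
    ids = [vocabulary[word] for word in keywords if word in vocabulary]
    ids = ids[:max_len]
    return ids + [0] * (max_len - len(ids))
```

For instance, the tagged sentence “the scenery is really beautiful” would keep “scenery”, “really”, and “beautiful”, and its encoded form would be three ids followed by 65 zeros.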

Feature Extraction: Word Vector Representation

When dealing with extremely large vocabularies, word vector representation (i.e., word embeddings) collapses information into far fewer dimensions. This technique enabled us to efficiently train the neural network (Chollet, 2018). Word embeddings can normally be retrieved in two ways: by learning from raw data (i.e., jointly with the main task of destination prediction) or by using pretrained word embeddings (i.e., by loading precomputed matrices into the model) (Chollet, 2018). Popular pre-trained word vectors are Global Vectors (GloVe; https://nlp.stanford.edu/projects/glove) and the Word2vec algorithm (https://code.google.com/archive/p/word2vec). We used both task-specific and pretrained embeddings (Word2vec here) to intuitively determine which method was more effective with our dataset. Consistent with earlier work (Chang et al., 2020; Jain et al., 2022; Liu et al., 2022), each distinct word in the corpus was mapped onto a 300-dimension vector to obtain a word-embedding representation.
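The difference between the two embedding strategies can be illustrated with a plain lookup table. In this sketch, which is not the authors' implementation, a task-specific table starts from small random values that a training loop would later update, whereas a pretrained table is filled from precomputed vectors; the function names, the initialization range, and the `pretrained` dictionary are assumptions, and only the dimension of 300 comes from the text.

```python
import random

EMBEDDING_DIM = 300  # vector size reported in the text


def init_embedding_table(vocab_size, dim=EMBEDDING_DIM, seed=0):
    """Create a (vocab_size + 1) x dim table; row 0 is the padding vector."""
    rng = random.Random(seed)
    table = [[0.0] * dim]  # id 0 = padding, kept at zero
    for _ in range(vocab_size):
        table.append([rng.uniform(-0.05, 0.05) for _ in range(dim)])
    return table


def load_pretrained(table, vocabulary, pretrained):
    """Overwrite rows for words that have a precomputed vector.

    vocabulary: dict word -> row id; pretrained: dict word -> vector.
    Words without a matching pretrained vector keep their random row.
    """
    for word, row_id in vocabulary.items():
        vector = pretrained.get(word)
        if vector is not None and len(vector) == len(table[row_id]):
            table[row_id] = list(vector)
    return table


def embed(ids, table):
    """Look up one vector per id, giving a len(ids) x dim matrix."""
    return [table[i] for i in ids]
```

Applied to a padded sequence of 68 ids, `embed` yields a 68 × 300 matrix, which is the shape a downstream LSTM, GRU, or TextCNN layer would consume.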

Classifier Building and Comparison

Considering that DL models (Hinton et al., 2006) have been shown to outperform many traditional models (Ma et al., 2018; Zheng et al., 2021), we used DL classifiers for destination prediction. The long short-term memory (LSTM), gated recurrent unit (GRU), and convolution neural network algorithms for text classification (TextCNN) were chosen as models for comparison to highlight marginal gains in destination prediction under different DL approaches. To further validate the efficacy of the proposed method, two previous text-classification approaches, namely, TT-Rec (Yin et al., 2021) and Travel Intention-based ML technique (Oh et al., 2016), were selected for comparison.

Model Evaluation

The final dataset was split into an 80–10–10 partition (Chollet, 2018), yielding 60,249 training records, 7,531 validation records, and 7,535 test records, respectively. The dataset was shuffled multiple times, and 10-fold cross-validation (Bengio & Grandvalet, 2004; Refaeilzadeh et al., 2009) was performed to minimize potential bias from the partitioning procedure. The reported results are the average performance across the 10 folds. Findings for the proposed model are reported using Precision, Recall, Accuracy, and F-measure (Powers, 2011), which are typical performance metrics for classification problems in tourism and hospitality forecasting (Chang et al., 2020; Kang et al., 2021; Li et al., 2021).
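For a multinomial problem, these four metrics can be computed per class and then averaged. The sketch below uses macro-averaging (an unweighted mean over classes), which is an assumption because the averaging scheme is not stated; the function name and the returned dictionary keys are likewise illustrative.

```python
def classification_report(y_true, y_pred):
    """Macro-averaged precision, recall, F-measure, and overall accuracy."""
    labels = sorted(set(y_true) | set(y_pred))
    pairs = list(zip(y_true, y_pred))
    precisions, recalls, f_measures = [], [], []
    for label in labels:
        tp = sum(1 for t, p in pairs if t == label and p == label)
        fp = sum(1 for t, p in pairs if t != label and p == label)
        fn = sum(1 for t, p in pairs if t == label and p != label)
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        denominator = precision + recall
        f_measure = 2 * precision * recall / denominator if denominator else 0.0
        precisions.append(precision)
        recalls.append(recall)
        f_measures.append(f_measure)
    n_classes = len(labels)
    return {
        "precision": sum(precisions) / n_classes,
        "recall": sum(recalls) / n_classes,
        "f_measure": sum(f_measures) / n_classes,
        "accuracy": sum(1 for t, p in pairs if t == p) / len(pairs),
    }
```

With nearly balanced classes, as in the final dataset, macro-averaged recall and accuracy tend to be close to each other, which may explain why the four reported scores are similar.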

As mentioned, the attributes uncovered in this study do not necessarily refer to distinctive features strongly associated with a particular destination. This scenario differs from that of unique city-level destination attributes adopted in prior work (Toral et al., 2018): previous research covered four cities, whereas we considered more than 200 parks. We further validated the proposed model’s efficacy using a less rigorous evaluation metric because discriminative values did not exist.

Findings

Descriptive Analysis

Table 1 presents the descriptive summary of our review data. Table 2 displays the descriptive attributes of review features within our data, indicating that the distribution of feature length (i.e., keywords) in our final dataset was similar to that of review length (excluding all possible punctuation). Table 2 also depicts why we disregarded overly long keywords during feature extraction, as the number of keywords in our training data was centralized to

Descriptive Summary of Final Dataset.

Note. The division of regions conformed to the United States Census Bureau; the division of types was summarized by the authors.

Distribution of Properties of Data Features in Final Dataset.

Model Interpretation: Predicting Destinations via Deep Learning

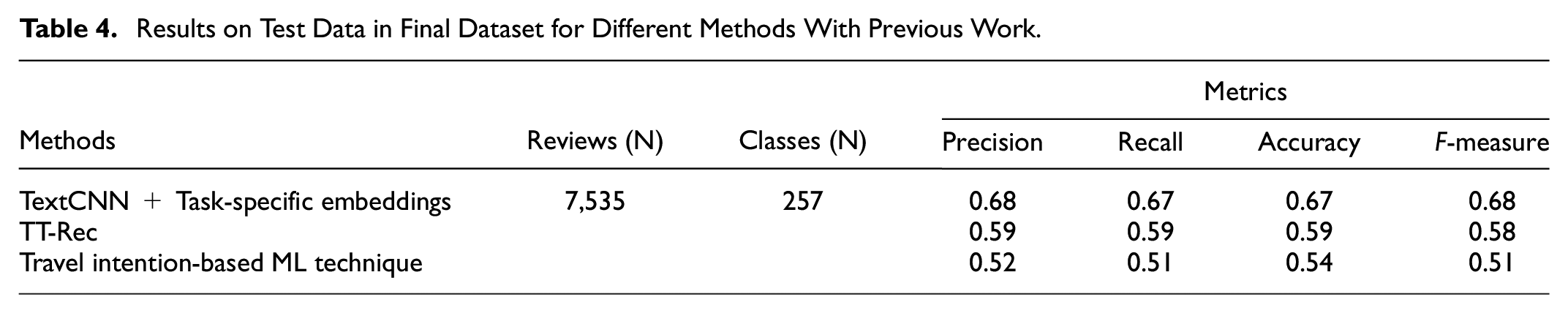

Table 3 reflects the performance of our experiment based on reviews from the test dataset. For all models, results with pretrained word embeddings were worse than those with task-specific embeddings. This phenomenon may have arisen because our training data were sufficient for learning truly powerful features (Chollet, 2018). The common features learned on a different vocabulary in other cases would have been difficult to repurpose in this study. In each word-embedding category, TextCNN exhibited the best performance among competitors. This approach’s strength in extracting non-sequenced features from our keyword lists might explain its superiority. Findings show that TextCNN with task-specific embeddings generated test scores (Precision, Recall, Accuracy, and F-measure) of around 63%. Notably, several binary classification cases in prior work reached an accuracy ranging from 58% to 79% (Chang et al., 2020; Guerreiro & Rita, 2020; Ma et al., 2018). The results in this study therefore seem acceptable when compared to these binary cases. One challenge was that discriminative attributes were not consistently available to distinguish all destinations, as mentioned in the Model Evaluation section. Another plausible explanation may be our large class volume. Table 4 compares the proposed model with previous work. The proposed model using TextCNN with task-specific embeddings outperforms both TT-Rec and the Travel Intention-based ML technique.

Results on Test Data With a Sample Size of 7,535 in Final Dataset for Different Models.

Results on Test Data in Final Dataset for Different Methods With Previous Work.

Model Interpretation: Multiple Options for Early Destination Recommendations

We did not solely aim to discern accuracy or precision from the absolute identity between a predicted label and target. We further validated the DL classifier’s efficacy by exploring how the model might help potential tourists create an initial consideration set. As discussed, it can be challenging to identify unique attributes that appear frequently in certain destination reviews over others. Accordingly, rather than relying on singular output (denoted as “basic matching”), we developed a list of destinations that provided comparably higher probabilities ranked in descending order (similar to other recommendation systems). Doing so enabled us to ensure robust identification in addition to providing users with multiple options, thereby generating items for the choice set. The early set size for tourists’ destination selection ranged from roughly 4 to 6 (Decrop, 2010; Kozak et al., 2017). The maximum size of the ranked list was set to 5 based on the mean level in prior research. That is, any label with a probability higher than 0.2 was selected as output in this study. Our research question about creating a generator for a choice set in destination selection based on passive information from the outside environment has thus been answered.
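The ranked-list rule can be sketched as a filter followed by a sort. The function name and the dictionary input are assumptions; the threshold of 0.2 and the cap of 5 come from the text. One consequence of a strict “higher than 0.2” comparison is worth noting: because class probabilities sum to 1, at most four labels can exceed 0.2 at once, so the cap of 5 acts only as a safeguard here. The list may also be empty when no class clears the threshold, and how that case is handled is not stated.

```python
def ranked_candidates(probabilities, threshold=0.2, max_size=5):
    """Return destination labels whose probability exceeds the threshold.

    probabilities: dict mapping destination label -> predicted probability.
    Labels are ordered from most to least probable and capped at max_size.
    """
    kept = [(label, p) for label, p in probabilities.items() if p > threshold]
    kept.sort(key=lambda item: item[1], reverse=True)
    return [label for label, _ in kept[:max_size]]


# Hypothetical model output for one query (labels are illustrative).
example = {238: 0.45, 2: 0.25, 93: 0.21, 68: 0.09}
print(ranked_candidates(example))
```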

Notably, the matching rule between the predicted and target destination can change. The changing rule was denoted as “advanced matching” in this study: if the target fell within the ranked list, then it was deemed a true positive. We then re-arranged our results accordingly. Gains from this compromise were noticeable, leading to an approximate 7% increase on average for any model (see Tables 3 and 5 for comparison; the corresponding indices in Table 5 are labeled “advanced”). This enhanced prediction performance dovetails well with our expectation and implies that advanced matching can strengthen the diversity and accuracy of destination prediction.
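The two matching rules differ only in how a hit is counted, as the following sketch shows. The function names are hypothetical, and the ranked lists are assumed to be ordered from most to least probable, as described above.

```python
def basic_match(target, ranked):
    """Hit only when the top-ranked label equals the target."""
    return bool(ranked) and ranked[0] == target


def advanced_match(target, ranked):
    """Hit when the target appears anywhere in the ranked list."""
    return target in ranked


def hit_rate(targets, ranked_lists, match):
    """Share of test queries counted as hits under the given rule."""
    hits = sum(1 for target, ranked in zip(targets, ranked_lists)
               if match(target, ranked))
    return hits / len(targets)
```

Every basic hit is also an advanced hit, so the advanced hit rate can never be lower than the basic one; the reported gain of roughly 7% reflects queries whose target was ranked second or lower in the list.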

Results on Test Data With a Sample Size of 7,535 in Final Dataset for Different Models (Advanced Matching).

Model Interpretation: Out-of-Sample Illustration

In this section, we describe certain out-of-sample examples to visually validate the impact of the proposed framework for the TextCNN model with task-specific embeddings. In addition to test data from TripAdvisor, we referred to other sources (e.g., reviews from Google Maps, Booking, Expedia, Travelocity, Orbitz and the authors’ own impressions) as out-of-sample data to ensure in-depth analysis and to mimic the destination-planning process. Results are listed in Table S2 in Supplemental Materials. Our proposed method made reasonably effective predictions for early destination recommendation–related tasks. For instance, in review #1, the word sequence (“beautiful scene” vs. “scenery is nice”) changed slightly, yet the DL model learned this similarity, resulting in a second-ranking hit on the target. In review #2, the reviewer mentioned “wonderful monument” and “beautifully”; the proposed system returned destination #68, as one review of destination #68 in the corpus contained similar terms (e.g., “great views of the memorial” and “beautiful”). Likewise, in review #3, the phrase “Great for bikers, walkers” was analogous to “good for walking, jogging” in the training data. In the case of review #4, both reviews mentioned “mountain,” “snow,” and “beautiful/excellent.” In review #5, the word “ferns” is the plural form of “fern,” and “wooden” has the same root as “woods.” Similarly, in review #9, the word “battle” was semantically similar to “war/battlefield,” and “knowledgeable” was close to “descriptive/detailed.” The terms “Lincoln, VC” and “falls, DC, trails” distinguished destinations #93 (review #6) and #238 (review #7), respectively. In review #8, the words “hiking” and “waterfall” in our out-of-sample data suggested that this content probably corresponded to target #2. In review #10, although the terms “courthouse” and “jail” did not exist in the corpus, the proposed model could capture another semantically close word (“historic”) via word embedding to support fuzzy queries. The words “commissary” and “gallows” confirmed the destination of target #29. In the word-embedding space, the geometric distance between two vectors is associated with their semantic distance (Chollet, 2018). Synonyms and different word forms are embedded into similar word-embedding vectors and result in closer points. The word embedding–based method can thus potentially identify the closest word, or terms with similar roots, in the corpus by providing positional reorganization. Therefore, the proposed prediction method has good generalization power.
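The link between geometric and semantic closeness can be illustrated with cosine similarity, one common way to compare embedding vectors. The three-dimensional vectors below are invented for illustration and are not taken from the trained model, which uses 300 dimensions; the function names are also hypothetical.

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between two vectors (1.0 = same direction)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0.0 or norm_v == 0.0:
        return 0.0  # a zero vector has no direction
    return dot / (norm_u * norm_v)


def nearest_word(query_vector, embeddings):
    """Return the corpus word whose vector is most similar to the query."""
    return max(embeddings,
               key=lambda word: cosine_similarity(query_vector, embeddings[word]))


# Toy vectors (hypothetical): related words point in similar directions.
toy_embeddings = {
    "war": [0.8, 0.2, 0.1],
    "waterfall": [0.0, 0.1, 0.9],
}
battle = [0.9, 0.1, 0.0]
print(nearest_word(battle, toy_embeddings))
```

In this toy space, “battle” lies much closer to “war” than to “waterfall”, mirroring how a query term absent from the training reviews can still be matched to a related term that is present.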

Reviews #4 and #7 in Table S2 demonstrate how the proposed model works in experience-sharing contexts and helps enhance tourists’ knowledge. While searching for information, a person may not know an exact destination name but could instead have vague expectations about an upcoming experience due to differing levels of destination awareness. The proposed model provides a way to record several descriptive words and then find a suitable option. For example, the input might be a set of desires/interests for an upcoming trip to a park, similar to the test samples. One could input a sentence such as “a nearby outdoor excursion close to DC. I would like views of a great fall with some trails.” Because this content mirrors that in review #7, our model returns the same prediction of destination #238. The proposed framework can hence serve as a fuzzy query via a specialized search engine with authentic experiential data in specific domains. The returned set is composed of one to five items representing different destinations, some of which the user might not have known prior to searching.

Discussion

Online reviews represent a promising research direction to facilitate tourists’ information searches, decisions, and knowledge exchange (Xiang et al., 2017). However, an understanding of itinerary planning in SM-related studies is scarce. We referred to publicly available data from TripAdvisor, whose large volume of reviews provides direct evidence of customers’ shared experiences. A prediction-focused framework for destination recommendation was proposed using different DL models based on these reviews. Although the accuracy achieved in this study is data-specific, the model presented herein can be adopted to recommend any product or service featuring textual reviews (e.g., eating, drinking, and accommodation). Findings offer tourism researchers and practitioners meaningful insights.

Theoretical Insights

Our research makes several theoretical contributions to the literature. First, this study represents a pioneering effort to extend SM analytics within touristic management. Current SM analytics in consumer-oriented research has mainly concentrated on how perceptions shape travelers’ decisions (Hu & Yang, 2020). This study enriches existing knowledge about travel planning by using SM data and a predictive model to guide prospective tourists’ early destination planning. This branch of research is a promising field that has only been partly explored to predict travelers’ responses to tourist attractions (Pantano et al., 2017) and to make destination predictions by use of review ratings (Keerthi & Lakshmi, 2018).

Second, this study adds to the growing body of destination selection research through an innovative topic: how to create a choice set generator. Most studies on destination choice sets in tourism have considered conceptual issues such as choice sets’ size and structure (Decrop, 2010; Hong et al., 2006; Kozak et al., 2017). Analytical work based on empirical data through which choice sets can be predictively created remains lacking. This paper bolsters available choice set models by using passive information from the outside environment to inform an early choice set generator.

Third, this study offers novel insight regarding how researchers can use customers’ post-trip evaluations to support pre-trip travel planning. Consumers are inclined to share their experiences after traveling (Choi et al., 2007). Past travelers’ commentary can be useful for future tourists, as post-trip assessments can inspire prospective visitors. Our work empirically substantiates the argument that consumers’ tourism-related decision making is a cyclical process linking pre-trip, on-site, and post-trip behavior (Icoz et al., 2018).

Fourth, while other studies have sought to unravel the destination selection process and illuminate tourists’ behavior via multinomial logit models (Hong et al., 2006; Juschten & Hössinger, 2021; Keshavarzian & Wu, 2021), a dynamic agent-based model (Alvarez & Brida, 2019), or hierarchical and model-based clustering (Ghosh & Mukherjee, 2023), this study delves into destination selection outcomes to guide tourists’ behavior. By creating items for an early choice set, tourists can be directed to destinations traceable through in-depth analysis. Developing a recommender system based on our findings may further improve subsequent performance in the brand evaluation stage.

Managerial Implications

Developing an accurate model to predict destinations based on online reviews will benefit consumers, business owners, and platform managers alike. First, the proposed prediction model can facilitate potential tourists’ search process and generate additional knowledge to help them reach their dream destination(s). Today’s customers make decisions in an environment with a plethora of open data. A key advantage of our model is that it compensates for customers’ tendency not to read every review about a destination when examining whether it is an ideal option. This type of guidance technology (Fesenmaier et al., 2006) can simplify tourists’ assembly of an early choice set and reduce their travel-planning effort. This predictive model could also broaden individuals’ cognitive capacity during the brand awareness stage of destination selection. A high level of destination awareness might enhance visit intention, as pointed out in prior work (Bigne et al., 2019).

Second, the proposed model enables destination marketing organizations (DMOs) to incorporate a range of functionalities beyond simply providing destination-related information. Essentials that link to travel experiences, accommodation, and transportation are main areas of concern in tourists’ destination planning. The proposed approach will allow DMOs to develop a smarter search system that embraces different facets (keywords from UGC data) to achieve the travel planner’s ultimate goal (Fesenmaier et al., 2011).

Third, business owners (e.g., those running less famous scenic parks) will benefit from this reverse mechanism among competitors: personnel will no longer need to worry about intense competition between destinations where, for example, tourists normally only consider the most famous destinations while ignoring less popular ones. If such a destination offers a thrilling experience which compels many tourists to post reviews on SM platforms, our predictive model can present this destination to prospective tourists for contemplation. Business owners will then no longer be forced to compete on price to be considered. The gap between more and less famous destinations would also narrow in the destination market.

Fourth, our model can be deployed on SM websites to help platform operators suggest travel destinations that appeal to customers’ tastes. In particular, online platform operators can provide information more economically by prioritizing destinations that satisfy customers’ queries. Applying our model to a given sample at a certain time and running it periodically could further promote query accuracy. By so doing, customers’ perceptions of different destinations can be identified automatically, enabling operators to fully harness the power of UGC data.

Conclusion, Limitations, and Future Work

Tourists’ decision-making process is influenced by their beliefs and behavioral norms as well as by others’ recommendations and prior experiences within the social environment (Pantano et al., 2017; Toral et al., 2018). This research serves as a pilot study addressing the impact of textual reviews, as an information source on social networks, on destination predictions that support travel planning. Another objective of this study was to expand the predictive analytics of choice sets to address the drawbacks of earlier conceptual work. Specifically, we endeavored to aggregate travelers’ destination-related feedback by transforming large amounts of open data into future destination propositions. We implemented a predictive approach that combines three techniques from text mining and neural networks (POS word search, word vector representation, and DL models) to incorporate past and shared travel experiences in review comments. This process generated recommendations for satisfactory travel destinations and created early choice sets for future tourists. The proposed destination prediction model was then validated via a case study using data from TripAdvisor, and its performance was compared with two text-classification approaches from previous studies. Bearing in mind that review text and the corresponding destination are not a perfect match (compared to the traditional classification problem where a clear boundary exists between classes), we presented an advanced matching rule for robust testing results. Findings revealed high predictive performance in terms of providing a user with multiple options.
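One way to evaluate a model that offers multiple options is a relaxed, top-k style matching rule. The sketch below is only an assumption about what such a rule could look like, since the exact rule is not restated in this section: a prediction counts as a match when the true destination appears among the first k ranked candidates. The function name and the toy data are hypothetical.

```python
def hit_rate_at_k(ranked_predictions, true_labels, k):
    """Share of samples whose true label appears in the first k ranked candidates."""
    hits = sum(
        1
        for ranked, truth in zip(ranked_predictions, true_labels)
        if truth in ranked[:k]
    )
    return hits / len(true_labels)

# Hypothetical ranked destination IDs for four reviews, best guess first.
toy_ranked = [
    [238, 12, 40],
    [29, 7, 3],
    [5, 238, 9],
    [77, 81, 2],
]
# Hypothetical true destination ID for each review.
toy_truth = [238, 7, 9, 100]

print(hit_rate_at_k(toy_ranked, toy_truth, k=1))
print(hit_rate_at_k(toy_ranked, toy_truth, k=3))
```

Under such a rule, measured performance can only stay the same or rise as k grows, which is consistent with evaluating a system whose purpose is to populate a choice set rather than to name one destination.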

Conceptually, applying DL to early destination recommendations is innovative because this technique has mainly been applied to tourism demand forecasting. Our analytical framework demonstrates sound experimental power in classification prediction. Theoretically, this research is novel because it develops a nontrivial recommendation system that can offer useful predictions on where to travel, a research area requiring deeper exploration (Li et al., 2018). What sets this study apart is our exploration of how to use open data to establish a choice set to facilitate travel planning. Methodologically, our main challenge involved identifying and aggregating the homogeneity that can be assigned to each destination from textual reviews and differentiating that destination from others. Another barrier concerns the large number of classes in our study; this obstacle renders traditional methods somewhat challenging to implement. A sound solution with traditional methods thus remains elusive, which called for powerful DL methods in our work. Overall, to the best of our knowledge, no scholars have yet investigated the following issues in touristic management: (1) identifying items for an early choice set to widen tourists’ knowledge in travel planning; and (2) applying prediction-based approaches for early destination recommendations by using textual reviews reflecting past tourists’ perceived image.

Our study unveils intriguing findings about early destination recommendations, and initial experiments using this approach seem promising. However, this study has limitations that point to avenues for future work. First, some variables were not considered. For instance, a recommendation or prediction system may need to be configured, designed, and practically implemented to address other aspects (Hu et al., 2017): season and day of the week, type of travel, tourist’s nationality, and length of time between the dates of travel and sampling. This information suggests the possibility of incorporating other features into the model. Second, due to data availability, destination prediction for different source markets was not conducted but can be performed in the future to explore itinerary planning further. We thus could not completely rule out the possibility that data might not be comparable due to cultural differences. Third, our overall research design was based on a specific case (i.e., TripAdvisor) using a small sample. Neither reviews from other SM channels (e.g., Hotels.com and Google Maps) nor diaries from travel websites (e.g., Expedia and Trip) were considered during training. Because studying a complete dataset can reduce sampling errors (Xiang et al., 2017), our DL model should be tested across other channels to demonstrate its robustness. Fourth, our approach rests on the assumption that review content reflects a customer’s experience in a destination. In reality, platforms contain a mix of authentic and manipulated reviews. Our proposed model appears fairly “immune” to manipulation: both the features and the input for actual tests were less sensitive to the corpus. Manipulated reviews are richer in adverbs, whereas authentic reviews generally contain more nouns and adjectives (Banerjee & Chua, 2014); as such, the features in this study were more authentic. Moreover, manipulated reviews tend to either enhance a destination’s image or tarnish its reputation. Users will likely search for positive words when looking for a destination in the early stage of travel planning. A combination of “negative” samples in the corpus had little effect on prediction accuracy. Despite resistance to reputation-damaging manipulation, the question of how to purify training samples to lessen image-bolstering effects deserves more investigation. Fifth, multi-source data (e.g., data from search engines and SM) will likely enrich researchers’ data sources and enhance predictive performance. It would thus be interesting to explore whether integrating other source data in model training yields better results. Sixth, because attributes appearing frequently in one destination review could be tied to multiple places, multi-label classification should be considered. Preprocessing the training sample can mitigate this challenge: all destinations should be run manually before assigning multiple labels to each review. Putting aside the required human labor, the homogeneity in reviews and the destination to which they belong would be impaired during data collection. The weak link between a review’s textual content and other possible destinations (labels) presents another issue. Additional preprocessing strategies need to be developed to lessen such bias, which will be part of our future work. Seventh, our sample lacked linguistic diversity: reviews were gathered from the TripAdvisor U.S. website, and all were in English. Language plays an important role in conveying information to readers (Salehan & Kim, 2016). Scholars could consider reviews in other languages to address how language affects predictive performance. Lastly, we did not differentiate between first-time and repeat visitors. These groups vary in their travel behavior, destination perceptions, and perceived value (Chen, 2019). Their UGC data should thus be handled separately to reduce possible bias. Despite the above limitations, we believe our study paves the way for additional research involving destination recommendations during pre-trip planning.

Supplemental Material

sj-docx-1-sgo-10.1177_21582440241246434 – Supplemental material for A Predictive Model Based on TripAdvisor Textual Reviews: Early Destination Recommendations for Travel Planning

Supplemental material, sj-docx-1-sgo-10.1177_21582440241246434 for A Predictive Model Based on TripAdvisor Textual Reviews: Early Destination Recommendations for Travel Planning by Yating Zhang, Hongbo Tan, Qi Jiao, Zhihao Lin, Zesen Fan, Dengming Xu, Zheng Xiang, Rob Law and Tianxiang Zheng in SAGE Open

Footnotes

Acknowledgements

The authors express their sincere appreciation to Jionghui Jiang of Shenzhen Tourism College in Jinan University for his technical assistance, and to Shaopeng Liu and Zhenlin Wu of Shenzhen Tourism College in Jinan University and Shiqi Liu of School of Business in Sun Yat-sen University for their efforts in data acquisition, manuscript drafting, and revision drafting, without which this research could not have proceeded to its present form.

Author Contributions

Methodology, Writing - Original Draft Preparation and Visualization: Yating Zhang; Programming—Comparative algorithms, Model Training and Model Test: Hongbo Tan; Literature Search, Data Curation and Formal Analysis: Qi Jiao; Programming—Proposed algorithm: Zhihao Lin; Data Acquisition and Data Preparation: Zesen Fan and Dengming Xu; Conceptualization and Discussion: Zheng Xiang and Tianxiang Zheng; Writing—Review & Editing: Rob Law; Supervision, Project Administration and Funding Acquisition: Tianxiang Zheng. All authors have read and agreed to the published version of the manuscript.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was funded by Jinan University Shenzhen Campus Funding Program under Grant Nos. JNSZQH2105 and JNSZQH2302, and National Natural Science Foundation of China under Grant No. 42001147.

Ethics Statement

No.

References
