Abstract

Faced the vast amount of information, choosing the appropriate materials is a prerequisite for effective self-directed learning. The recommendation algorithm is a kind of intelligent technology that can accurately locate the required information which the users care about most. However, many recommendation techniques experience can not be trained adequately in scenarios with small sample data and extremely sparse ratings. Moreover, DLRAs (Deep learning based Recommendation Algorithms) require high hardware support. The probabilistic graph (PG) can effectively represent the implicit complex relations among nodes, but it still has the problem of sparse data sensitivity. Therefore, we propose a Matrix-Factorization-based Probabilistic Graph Model for Recommendation Algorithm (MF-PGMRA): By matrix-factorizing the sparse rating matrix, the users and items are mapped to the user/item spaces, respectively; We employ the inner product to data-enhance and overcome the problems of sparse data and cold start; Then, we build Probabilistic Graph to construct the “user-item” latent spaces and estimate the probability distribution based on expectation maximization (EM), so as to predict the ratings; Finally, we built a library management system with the recommendation module to highlight the benefits of MF-PGMRA for students’ subject learning. According to a questionnaire, we confirmed that the students are satisfied with the system from four aspects of speed, accuracy, usability and convenience, which can confirm that the library management system based on MF-PGMRA can efficiently and accurately recommend suitable materials for students from the huge amount of learning materials to improve students’ self-directed learning efficiency.

Plain Language Summary

We designed an intelligent recommendation method for material selection of self-directed learning based on matrix factorization and probabilistic graph model, and built a library management system with the recommendation module with our method for practical application. In the future, we plan to build an improved PGM by introducing deep learning model to further mine implicit relations between users and source of scholarly retrieval for better self-directed learning.

Keywords

Introduction

Learners often face such a problem, how can they quickly get the knowledge they need? With the change in the knowledge form and traditional education concept, people pay more attention to the improvement of learning ability based on information acquisition, and learning how to learn gained ground. The development and change in information society make people more and more aware of the importance of knowledge updating, and lifelong learning ability is particularly important. The learners should have self-directed learning skills and these skills are related to lifelong learning (Tekkol & Demirel, 2018). Through self-directed learning, learners can improve their learning ability, build a higher learning enthusiasm, and meet individual independence and flexible learning needs through formal and informal ways. Self-directed learning has become a habit rather than a requirement already. Self-directed learning does not mean learning alone (Voskamp et al., 2022). From a long time ago, self-directed learning was considered as an active learning strategy implemented by formulating learning objectives and identifying teaching resources (Knowles, 1975). From the perspective of function, the close relationship between self-directed learning and lifelong learning determines that self-directed learning should first requires individuals to have the skills and abilities to learn on their own (Candy, 1990). In this process, according to Knowles, learners first face the challenge of identifying learning objectives and selecting appropriate learning materials (Knowles, 1975).

Nowadays, with the advent of information technology, knowledge becomes more fragmented and data-driven. In addition to technical and organizational guidance, the use of information analysis tools for learning significantly mediated the influence of learners’ online communication self-efficacy and computer self-efficacy (Sumuer, 2018). It can also improve the learners’ critical thinking, communication and collaboration skills (Ramamuruthy & Rao, 2015). The affordances of communication technologies can provide learners with the opportunity to enhance the self-directed learning process (Sumuer, 2018). Learners get great satisfaction in self-directed learning with the information analysis techniques. However, the self-directed learning also has some difficulties (Loyens et al., 2008), and the learners need to face the time-consuming task of searching for useful knowledge in a vast amount of information (Ribosa & Duran, 2022). The scattered and unsystematic resources are another problem in successfully complete self-directed learning (X. Liu & Dai, 2007). With the development of artificial intelligence, the intelligent recommendation system has become a necessary tool for learners (Shao et al., 2021; Wu et al., 2022). It can help the learners to obtain appropriate learning resources based on their preferences and learning goals (George et al., 2016; Joy & Pillai, 2022). It is very suitable for self-directed learning in the aspects of personal information collection, system guidance and personalized control (Buder & Schwind, 2012). At present, the function of the recommendation system mainly focuses on the precision and accuracy of recommended results (Verbert et al., 2012), but a high accuracy does not always correlate with the needs of learners (Erdt et al., 2015). Sometimes, even the learner with certain knowledge experience can not accurately describe the knowledge information which he needs. Although, some sources of scholarly retrievals, such as the use of Google Scholar, also lack advanced search capabilities and inability to apply quality control (Halevi et al., 2017). In fact, most learners are not equipped with the hardware support for deep learning, or built an additional overly complex operating system either. The development direction of an intelligent recommendation system is to identify the differences between learners intelligently and give the accurate recommendations of interactive evaluation according to the resources that learners are interested in (Tarus et al., 2017). In particular, the higher requirement of current recommendation systems is to meet the self-directed learning needs of learners even with less evaluation information.

Among existing recommendation technologies, the Collaborative Filtering-based Recommendation Algorithms (CFRAs) are widely used in e-commerce (Garanayak et al., 2019; Valcarce et al., 2019). But they don’t work well in sparse data and cold start scenarios. The Matrix Factorization-based Recommendation Algorithms (MFRAs) are another classic recommendation algorithm (Han et al., 2019; Mehta & Rana, 2017; Sommer et al., 2017). Matrix factorization (MF) can map the information of users and items into F-dimensional joint latent space to overcome the problems of sparse data and cold start (Qiu et al., 2018). However, MFRAs only consider the explicit relations between users and items, which can result in poor accuracy in complex data scenarios.

The deep-learning-based recommendation algorithms (DLRAs) have also obtained excellent results in many data scenarios (Dhelim et al., 2022). At present, the main idea is to add deep neural networks and related frameworks based on traditional machine learning methods, such as CF and MF, to combine feature extraction and fusion. However, in scenarios with small sample data and extremely sparse ratings, the existing DLRAs can not be sufficiently trained to obtain enough information and features. Moreover, the training needs a large amount of time and space costs, which requires a high level of hardware environments (S. Zhang et al., 2020).

The Probabilistic Graph Model (PGM) is an effective method employing the probability graph to process multiple variables (W. Liu et al., 2019). The PGM-based recommendation algorithms (PGMRAs) identify users and items according to their latent spaces and perform clustering analysis to improve recommendation accuracy. However, PGMRAs are also sensitive to sparse data due to information loss during training.

Based on the above analysis, we propose an MF-based PGM for recommendation algorithm (MF-PGMRA), making full use of the advantages of MF’s data enhancement and PGM’s implicit relation mining to perform recommendation tasks in scenarios with sparse data and cold start. And then, we embedded a recommendation module into the library management system, applied MF-PGMRA to the learning materials recommendation to help students to self-directed learn efficiently for evaluating the actual effects of each algorithm. The system enables students to carry out the intelligent recommendation and search function of learning materials without the need for special hardware, which helps solve the problem of a more accurate selection of learning materials under the condition of sparse data and creates conditions for further development of self-directed learning.

The main contributions of this paper include the following:

We design an intelligent recommendation method by combining MF with PGM based on Expectation Maximization (EM) (Miyahara et al., 2020) to predict the ratings and perform the recommendation tasks.

MF-PGMRA can solve the problems of sparse data and cold start, and carry out a fine-grained clustering analysis on users and items to capture their implicit relations.

We performed different experiments on four datasets with different characteristics and built a library management system with a recommendation module for practical application. The Experimental and satisfaction measure results highlight that MF-PGMRA outperforms the related methods and some representative DLRAs in the scenarios with small sample data and extremely sparse ratings.

Related Work

CFRAs and MFRAs

CFRA is one of the earliest representative methods. Its significant advantage is the simple implementation. However, CFRAs do not work well in sparse data and cold start scenarios. Subsequently, MF is widely used in recommendation applications, and MFRAs outperform CFRAs in sparse data and cold start scenarios. The core of MFRA is to map the users and items to their latent space respectively, and the vectors of users and items are used to predict the missing ratings. Although CFRA and MFRA belong to the matrix completion strategy in essence, MFRA considers user space and item space with data enhancement functions, and the ratings are determined by several latent factors: the original “user-item” rating matrix,

The crucial functions of MFRA are as follows:

Loss function:

Where

According to Equations 1 and 2, the loss function can be expressed:

Where

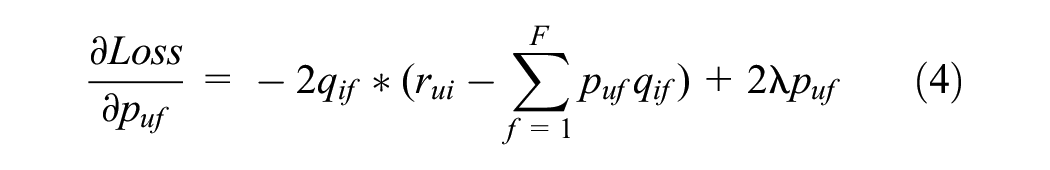

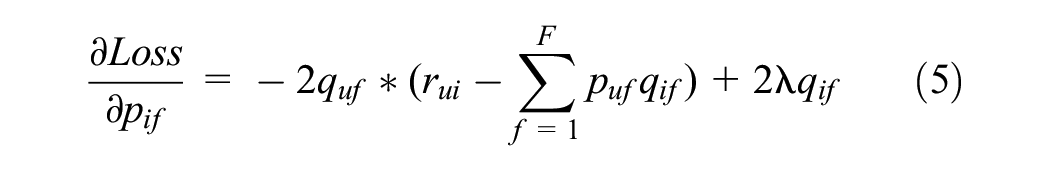

(2) Gradients:

By the partial derivatives of puf and qif, their gradients can be calculated:

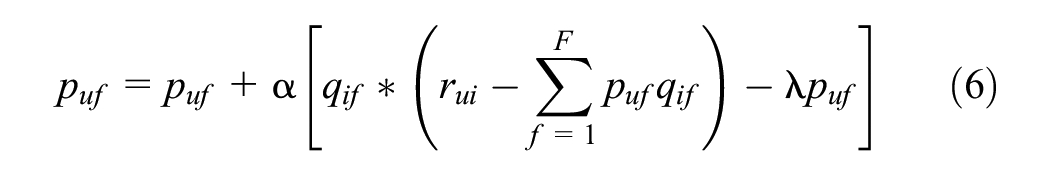

(3) The update of puf and qif

MFRA updates puf and qif iteratively along the gradient direction by the following formulas:

where α is the learning rate, and the prediction ratings are calculated by Equation 2.

Compared with CFRA, MFRA predicts ratings only by calculating the inner product. When new users and items are added, MFRA can factorize the new matrix to overcome the cold start problem with little time and space cost. The computational complexity of prediction does not depend on the number of users and items, and MFRA can overcome the sensitivity of sparse data. However, MFRA only considers the explicit relations between users and items. In complex scenarios, the performance needs to be further improved.

Probabilistic MF (PMF) and Probabilistic Graph Model (PGM)

PMF is an improved MF with Bayesian probability theory, has been well used for the recommendation tasks. Ma et al. (2008) proposes a factor analysis approach based on PMF to solve the data sparsity problem. J.Liu et al. (2013) presents two recommendation methods fusing social relations and item contents based on Bayesian PMF. The method in Hernando et al. (2016) is based on CF with Bayesian PMF. Bobadilla et al. (2018) proposes a Bayesian Non-negative MF (BNMF) method to improve the clustering results of CF with a pre-clustering. Cao et al. (2018) proposes a neighborhood-aware unified PMF recommendation model that fuses social tagging. R. Chen et al. (2018) proposes a hybrid recommendation approach using GMM and MF. C. Wang et al. (2018) proposes a Confidence-aware MF (CMF) to optimize the rating prediction accuracy and measure the prediction confidence simultaneously. However, PMF focuses on the visible variables (J. Chen, 2017) and fails to fully mine implicit relations.

PGM is a clustering model based on probabilistic graph, similar to the Gaussian Mixture Model (GMM) (Tao et al., 2020). There are m sub-distributions in PGM. It has been widely used for artificial intelligence, medical treatment and other fields. Meanwhile, PGM has also achieved good results in the recommended system (Q. Xiao, 2017). In recommendation scenarios, there are two latent spaces: user latent space and item latent space. The ratings are determined by the two latent spaces. The users with similar preferences can be grouped into one user-class, and several similar items can be grouped into one item-class. PGM can take into account these visible and latent variables by fine-grained clustering analysis to achieve better recommendations.

Deep Learning-Based Recommendation Algorithms (DLRAs)

Some representative deep-learning-based recommendation nalgorithms (DLRAs) have been published. H. Wang et al. (2015) proposes a CDL (Collaborative Deep Learning) recommendation method combing SDAE (Stack Denoising AutoEncoder) with Bayesian Network to obtain the rating matrix. W. Zhang et al. (2016) proposes the FNN (Factorization Machine supported Neural Network) and SNN (Sampling-based Neural Network), and combines three feature extraction methods, FM (Factorization Machine), RBM (Restricted Boltzmann Machine) and DAE (Denoising AutoEncoder), with DNN (deep neural network). FNN employs FM to transform the sparse features into dense features and reduce the dimension of feature space; SNN employs negative sampling based on RBM and DAE to accelerate the training speed. Shan et al. (2016) proposes the Deep Crossing model using the DNN framework to improve the training efficiency. Cheng et al. (2016) proposes the Wide & Deep model combing the Linear Regression (LR) with DNN to carry out feature combination and training. Qu et al. (2016) proposes a PNN (Product-based Neural Network), similar to FNN. It is based on the Product layer to train features. X. He and Chua (2017) proposes NFM (Neural Factorization Machine) combing the second-order linear features extracted by FM with the high-order nonlinear features extracted by Neural network. Guo et al. (2017) proposes the DeepFM combining FM and DNN, similar to the NFM. H. Wang et al. (2015) proposes the Deep & Cross Network (DCN). Through joint training of the Cross Network and DNN, DCN not only does DNN retain the ability to capture complex feature combination, but also has advantages in recommendation accuracy and memory usage. X. He et al. (2017) proposes a CFRA based on deep learning, NCF (Neural Collaborative Filtering), which improves traditional CFRAs and achieves better recommendation results. J. Xiao et al. (2017) proposes an AFM (Attentional Factorization Machine) based on FM, considering the different importance of each feature.

In all, the basic framework of DLRAs can be summarized as shown in Figure 1. However, in small sample data scenarios, the rating information is extremely sparse, these DLRAs are often unable to be fully trained, and the high time and space complexities of training are also often unmet.

Framework of DLRAs.

Method

Idea and Process

To take full advantages of the data enhancement function of MF to sparse data and cold start, and the implicit relations mining of PGM between uses and items, and to avoid the problems of the high computational cost of DLRAs and their insufficient training on the datasets with small sample data and extremely sparse ratings, we propose the Matrix-Factorization-based Probabilistic Graph Model for Recommendation Algorithm (MF-PGMRA). The basic idea is to factorize the rating matrix to address the sensibility of PGM to sparse matrix, and train PGM based on the Expectation Maximization (EM) to mine implicit relations between users and items and predict the unknown ratings. The predicted results and original data are merged as the ratings. The parameters are listed in Table 1. Among them, U, I, R, ZU, ZI are nodes in PGM. The parameters are P(ZU), P(ZI), P(U|ZU), P(I|ZI) and P(R|ZU, ZI). and PGM structure is shown in Figure 2.

Parameter List.

Probabilistic graph structure for users, items and ratings.

The joint probability distribution of all nodes in PGM can be expressed as Equation 8. According to Bayesian probability theory and the Probabilistic Graph structure shown in Figure 2, the joint probability of P(U, I, R) can be expressed as Equation 9:

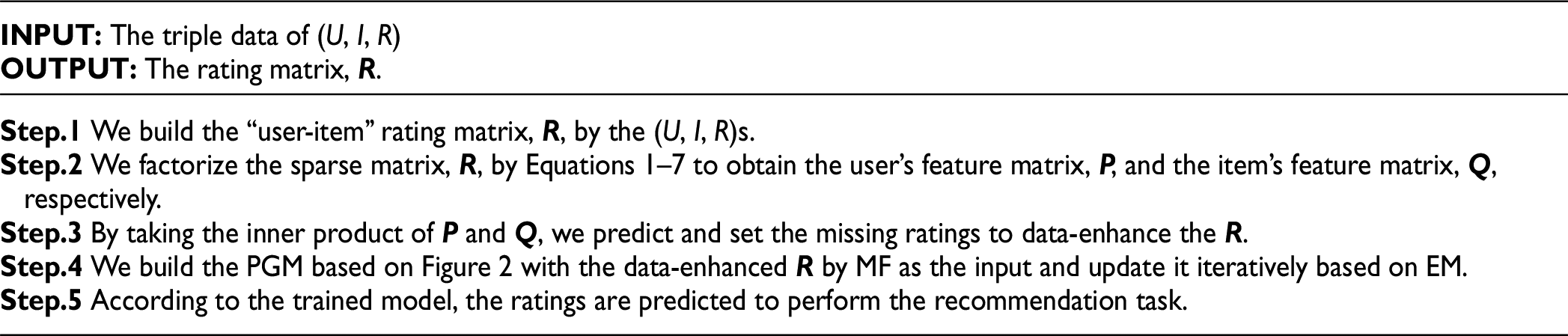

MF-PGMRA’s mainly processes are shown as follows:

Among them, The

(1) In Step.4, (U, I, R) is the observable variable in PGM, ZU and ZI are latent variables. EM is a data optimization algorithm that can be applied to the datasets containing latent variables or missing data. The optima of parameters, P(ZU), P(ZI), P(U|ZU), P(I|ZI) and P(R|ZU, ZI), can be updated iteratively by the maximum likelihood estimation. EM mainly includes two steps: Expectation and Maximization:

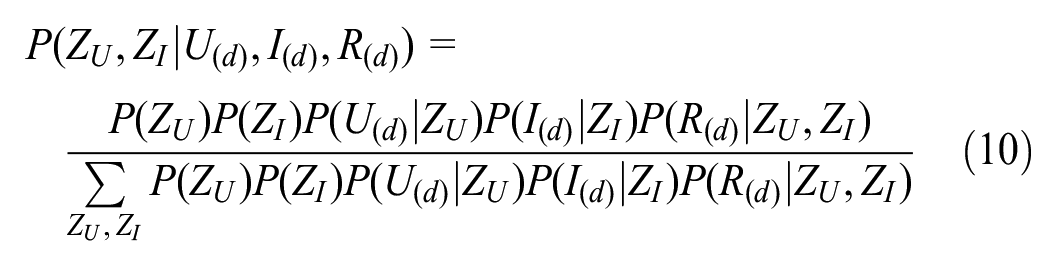

①E (Expectation): We can calculate the joint posterior probability of (ZU,ZI) by Equations 8 and 9:

It is the expectation of (ZU,ZI) on the training set.

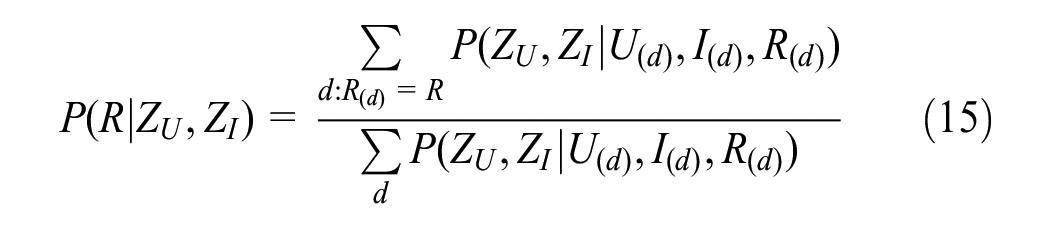

②M (Maximization): According to P(ZU, ZI|U(d), I(d), R(d)), P(ZU), P(ZI), P(U|ZU), P(I|ZI) and P(R|ZU, ZI) are updated by maximum likelihood estimation by the following formulas:

Among them, d:U(d) = U represents the triple data taking U(d) = U, d:I(d) = I represents the triple data taking I(d) = I, d:R(d) = R represents the triple data taking R(d) = R.

Then, these parameters in Equations 11–15 are updated iteratively by the EM operations and Equation 10 to get the joint distribution of (ZU,ZI).

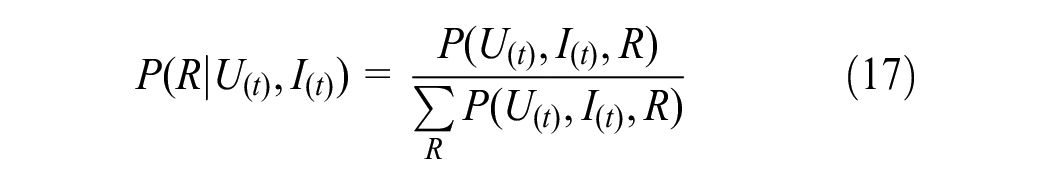

(2) In Step.5, the unknown ratings can be predicted by the trained P(ZU), P(ZI), P(U|ZU), P(I|ZI) and P(R|ZU, ZI). Given that U(t) and I(t) represent the target user and item respectively, the joint probability of (U(t), I(t), R) can be estimated:

According to Bayesian probability theory and Equation 16, the probability distribution of P(R|U(t), I(t)) can be estimated:

Therefore, the rating of U to I, RU(I), can be calculated by the weighted average of Equation 17:

Alternatively, RU(I), can be set to the maximum of P(R|U(t), I(t)):

Analysis

The core of PGM is to extract the user space and item space to identify each user and item. That is, each user and item has a representation on the corresponding latent space, and the representations can predict the ratings by the fine-grained clustering analysis based on PGM to boost the recommendation performance.

In the traditional Bayesian network, Each variable is observable, and the parameter estimation is carried out by maximum likelihood estimation or Bayesian estimation (J. Chen, 2017). However, MF-PGMRA focuses on the fine-grained clustering analysis based on PGM. The (ZU, ZI) are latent variables, and their probability distributions need to be estimated by a probability graph. And then, MF-PGMRA can predict the ratings by P(R|ZU,ZI).

PGM is sensitive to sparse data, but this problem can be overcome by the inner product of MF. MF predicts the ratings, and uses them to data-enhance the training set and as the input to PGM, which can effectively reduce the sensitivity of sparse data and the information loss of PGM training.

Considering the relations between users and items, MF-PGMRA is more rational than CFRA filling in missing ratings with default values (i.e., the average of ratings); Compared with the explicit relations between users and items in MFRA, MF-PGMRA can be more suitable for complex data scenarios by the implicit relation mining based on PGM between users and items.

Instance

Based on PGM, MF-PGMRA divides users and items into several classes, respectively. The core is to obtain the distribution of each class and predict the ratings. Therefore, we design an instance as shown in Figure 3. The training set is the sparse rating matrix used for training PGM (there are four samples in total).

Training: The parameters in Formula (10), P(ZU), P(ZI), P(U|ZU), P(I|ZI) and P(R|ZU, ZI), are initialized randomly. Their numbers are determined by the dimensions of

Predicting: According to the probability distribution of (ZU, ZI) obtained during the training stage, P(R|U(t)=u2, I(t)=i1) can be calculated according to Equations 16 and 17 to estimate the probability distribution of ratings and predict the ratings by Equation 18.

Instance design.

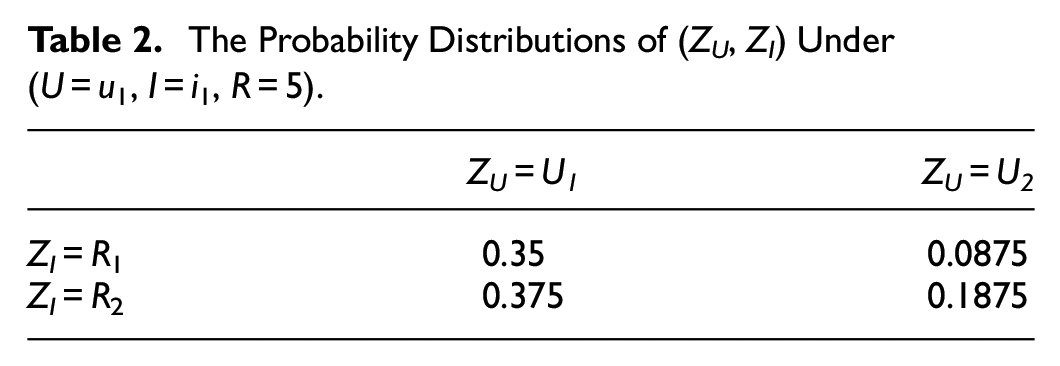

We design an instance shown in Figure 3 to illustrate our method. The rating range is “1~5.” Specifically, we consider the following scenario: There are three users, two items; the numbers of ZU and ZI are both 2; the training set consists of four triple data: (U = u1, I = i1, R = 5), (U = u2, I = i2, R = 3), (U = u3, I = i1, R = 2) and (U = u3, I = i2, R = 1). The parameter initialization is shown in Figure 4.

Parameter initialization.

Training Process

According to Equation 10 and the initial values, the posterior probability distribution of ZU and ZI can be calculated. For example, for (U = u1, I = i1, R = 5):

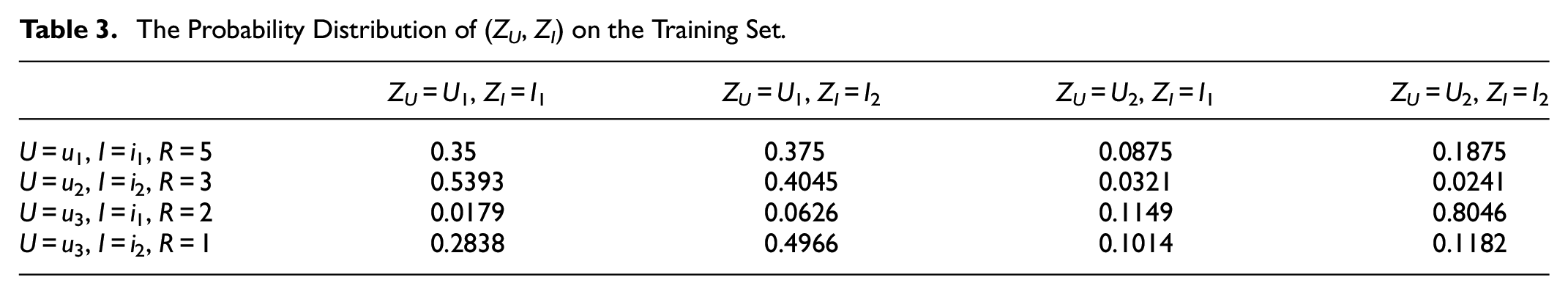

Similarly, the probability distributions of (ZU, ZI) under (U = u1, I = i1, R = 5) are shown in Table 2. Based on these values, the probability distribution of (ZU, ZI) on the training set in Figure 4 can be calculated as shown in Table 3.

The Probability Distributions of (ZU, ZI) Under (U = u 1, I = i 1, R = 5).

The Probability Distribution of (ZU, ZI) on the Training Set.

Then, the parameters, P(ZU), P(ZI), P(U|ZU), P(I|ZI) and P(R|ZU,ZI) can be updated iteratively according to Equations 11–15. The final probability distributions of these parameters are shown in Figure 5.

The optimal value of each parameter.

Prediction Processes

Given user, U = u2, and item, I = i1, we can calculate the probability distribution of P(ZU, ZI|U(t) = u2, I(t) = i1, R) according to Equation 16 and Figure 5, as shown in Table 4:

The Probability Distribution of P(ZU,ZI|U(t) = u2,I(t) = i1,R).

The data in Table 4 are put into Equations 16 and 17 to get the posterior probability distribution of

Then we can predict the rating of u2 to i1 is 5 by Equations 17 and 18.

Finally, we can predict all the unknown ratings.

Experiment

Experimental Setup

We selected four datasets for experiments. The statistical information is shown in Table 5. The training set and test set of each dataset were divided by cross-validation.

Movielens (Harper, 2015): It contains 1,000,209 records of 6,040 people rating 3,883 movies, and also includes some other information, such as film metadata information and user attributes. The rating matrix has a high sparsity, which is 95.74%.

Epinion (Boroujeni, 2013): It contains 139,738 records of 40,163 users rating 139,738 items. Each item is rated at least once. In addition, the dataset includes the trust relations among users, the data is more complex, and the sparsity of the rating matrix is very high, at 99.9882%.

Amazon Instant Videos (R. He & McAuley, 2016): It contains 583,933 records of 5,130 users rating 1,685 videos. The dataset also contains 37,126 comments, and its rating matrix is 93.24% sparsity.

Toys & Games (McAuley et al., 2015): It contains 915,446 records of 53,1,890 users rating 64,426 games. The rating matrix is extremely sparse, at 99.9973%.

Statistical Information of the Four Datasets.

Experimental Settings on the Four Datasets.

We adopt the Mean Absolute Error (MAE), Mean square error (MSE) and “Accuracy (ACC)” as the performance metrics. We designed different experiments to demonstrate the advantages of MF-PGMRA in different aspects. Specifically, the experiment process is as follows:

Comparative Experiment of PGMRA, CF-PGMRA and MF-PGMRA

To illustrate that MF can better solve the sparse data sensitivity and cold start problems than CF, we built the comparative experiment of PGMRA, CF-PGMRA and MF-PGMRA on Movielens and Epinion datasets. The experimental results are shown in Figures 6 and 7. The results of each method tend to converge on each dataset when the iteration is about 10 times.

On the Movielens dataset (sparsity: 95.74%), PGMRA performs poorly because of the information loss caused by the sparse matrix. The results of CF-PGMRA are close to that of MF-PGMRA because of its limited data enhancement and practical training based on PGM on a reasonable sparse rating dataset. MF-PGMRA obtains the best results because it has a better data enhancement ability based on MF and considers the latent relations between users and items to reduce the information loss in PGM training.

On the Epinion dataset (sparsity: 99.9882%), due to the sensitivity to the sparse matrix, PGMRA’s results are much less effective than those on Movielens dataset. On this extremely sparse rating dataset, CF’s data enhancement ability has little effect. Although CF-PGMRA combines PGM, its results are not much better than PGMRA. In contrast, MF-PGMRA’s results on this dataset are about the same as those on the Movielens dataset. This means that due to the advantages in overcoming the sensitivity of sparse matrix and cold start, and analyzing the latent relations between users and items, MF-PGMRA can achieve better results on different types of datasets.

Results of the three methods on the Movielens dataset.

Results of the three methods on the Epinion dataset.

Comparative Experiment of CFRA, MFRA, PGMRA, CF-PGMRA, and MF-PGMRA

To highlight that PGM can effectively mine the implicit relations between users and items on the basis of solving the sparse data sensitivity and cold start problems, we compared CFRA, MFRA, PGMRA, CF-PGMRA and MF-PGMRA on Movielens and Epinion datasets. MAE and MSE are used as performance metrics (since CFRA and MFRA are regression models for rating prediction, which cannot be classified, so we cannot calculate the ACC). Other settings and procedures are the same as above. The experimental results are shown in Table 7.

The results of PGMRA are inferior to those of CFRA and MFRA on the two datasets. After adding CF or MF, the effects of CF-PGMRA and MF-PGMRA are significantly boosted compared with CFRA and MFRA, which indicates that PGM can fully mine the implicit relations between users and items after solving the problems of sparse matrix sensitivity and cold start.

On the Epinion dataset (sparsity: 99.9882%, more complex, more samples), due to CF’s limited data enhancement ability on the extremely sparse data, CFRA’s performances are the worst. Although MF has better data enhancement ability, MFRA is only better than CFRA, because it only considers the explicit relations between users and items, which is not enough for data analysis in larger and more complex datasets. PGMRA has better results than CFRA and MFRA due to PGM’s advantages in clustering analysis based on implicit relations between users and items. Due to the better data enhancement of MF and the implicit relation mining of PGM, MF-PGMRA can achieve the best results in a more complex data background.

Results of the Five Methods on Movielens and Epinion Datasets.

The bold entries are the best results of the five methods.

Comparative Experiment of Representative DLRAs and MF-PGMRA

To verify MF-PGMRA can outperform DLRAs in the scenarios with small sample data and sparse ratings, we built the comparative experiment on the Amazon Instant Videos dataset with small sample data and the Toys&Games dataset with extremely sparse ratings. We use the MSE to evaluate the recommendation performance (since these DLRAs are regression models for rating prediction, which cannot be classified, so we cannot calculate the ACC. Moreover, MAE and MSE have similar curses, so we just analyze the results of MSE). The representative DLRAs are summarized as follows:

DeepCoNN (Deep Cooperative Neural Network) (Zheng et al., 2017): It is an end-to-end convolutional neural network to get the latent features of users and items.

HFT (Hidden Factors and hidden Topics) (McAuley & Leskovec, 2013): It is a deep-learning-based MF method. The comment information is used for model training, rating and prediction.

TransRev (Reviews as Translations) (Garcia-Duran et al., 2020): It learns the representations of users, items and comments. It is an improved DeepCoNN with attention mechanism.

AspeRa (Aspect-based Rating prediction) (Nikolenko et al., 2019): It estimates the ratings based on comment information and discovers the coherence of comments by using max-margin loss for joint training of item and user embeddings.

The parameters settings of these DLRAs are based on the settings in their References, respectively. The experimental results are shown in Table 8 and Figure 8.

On the Amazon Instant Videos dataset (sparsity: 93.24%), which is the smallest dataset of the four datasets (583,933 samples), the results of MF-PGMRA are better than those of DLRAs. Because DLRAs can not be fully trained on small sample datasets, while MF-PGMRA can get better prediction results by considering the data enhancement and implicit relations between users and items.

On the Toys&Games dataset (sparsity: 99.9882%), which has the highest sparsity of the four datasets, the results of MF-PGMRA are still better than those of DLRAs. The DLRAs are sensitive to the extremely sparse rating data. Moreover, the training costs of DLRAs are significantly higher than that of MF-PGMRA.

MSE Results of the Representative DLRAs and MF-PGMRA on the Two Datasets.

The bold entries are the best results of the five methods.

Results of MF-PGMRA on two datasets.

In summary, CFRA, MFRA, and PGMRA are not satisfactory in the scenarios with sparse rating matrix and complex application data. Based on the advantages of PGM in data implicit relation mining and data enhancement of MF, MF-PGMRA shows stable and ideal results on different datasets with different characteristics. Especially, on the datasets with small sample data and extremely sparse ratings, the results are better than those of DLRAs.

Algorithm Practices and Learner Satisfaction Measure

To verify the effectiveness of MF-PGMRA, we added a rating module to the library management system of XXXX in 2021, and collected 53,742 rating records of 2,000 students on 7,563 books or materials in the first half of 2022. The rating matrix is 93.24% sparsity. Then we used these rating records to train the related machine-learning-based algorithms, MFRA, PGMRA, MF-PGMRA, and the best DLRA, AspeRa, in Table 8. After the second half of this year’s semester started, we embedded each algorithm as a recommendation module into the library management system, tested it on 120 freshmen and asked them to take a satisfaction questionnaire, respectively. We employed four evaluation metrics, Speed, Accuracy, Usability and Convenience, each with a rating range of 0 to 10. The details of the questionnaire are shown in Table 9, and the average results are summarized in Figure 9.

The Details of Questionnaire.

Learner satisfaction measure results.

In terms of speed metrics, MF-PGMRA’s satisfaction is on par with MFRA and PGMRA, and better than AspeRa, the deep-learning-based representative. It is important to emphasize that MF-PGMRA has the best results in all other metrics. In particular, although AspeRa also outperforms MFRA and PGMRA in Accuracy, Usability and Convenience metrics, its speed is unsatisfactory, thus pulling down its satisfaction in terms of Usability and Convenience, compared to its Accuracy results. Overall, MF-PGMRA achieved the best performances which can be reflected in the ratings and comments of students on all aspects. The results also can verify that MF-PGMRA can meet the different needs of users for material recommendation and selection in self-directed learning.

With the help of the recommendation module, students can automatically get the learning materials from the library management system based on their name, major, grade and other information. It helps students to avoid blind selectivity when faced with a huge amount of learning materials and allows them to further filter learning materials based on keywords.

In this practice, we found that with the help of the recommendation module, students do prefer to use the library management system to access materials. Particularly, compared with the initial use of the system, the students use the recommendation system for longer, and they are willing to spend more time looking for learning materials through the recommendation system. After 2 weeks of testing and running the recommendation module, the average time students spend using the system has improved significantly, from less than3 min to over 8 min, indicating that the recommendation module can effectively promote students’ willingness to self-directed learning and develop continuous learning behaviors.

The above analysis supports that if accurate learning materials are provided to learners, they will not easy to give up self-directed learning due to too many learning resources or inefficient search. The recommendation system can promote students to carry out self-directed learning after class, and effectively extend the learning time and enhance the learning effectiveness. Through the study of e-materials with high ratings, students can grasp the related knowledge more comprehensively and determine further learning objectives more accurately.

Discussion

In today’s online and blended learning environments, learning management systems have become the center of support for teaching and learning (Pepple, 2022). Different from other fields, technology-enhanced learning systems require more consideration of the particularity of individual learners’ needs (Erdt et al., 2015). Many studies have shown that students have become accustomed to using various intelligent technologies to help self-directed learning. The recommendation system is an important tool to realize self-directed learning, which should not only achieve accuracy but also provide personalized service (Fazeli et al., 2018). When learners face the huge amount of knowledge but the lack of evaluation information, they often need more accurate personalized recommendations rather than information accumulation in a less demanding and more convenient hardware environment. The accurate recommendation of learning materials is a prerequisite for improving the effectiveness of self-directed learning (Geng et al., 2019). With the help of intelligent recommendation, the preparation for self-directed learning can be realized more accurately and effectively, to avoid reducing learners’ enthusiasm and motivation. The ability of students to obtain guidance will help improve the effectiveness of learning, and will actively improve the motivation to learn (An et al., 2022). Intelligent recommendation of learning materials is an ideal tool for students to overcome distraction and abandonment in the face of massive amounts of materials in self-directed learning (Krieger, 2015).

We analyze the shortcomings of traditional CFRA, MFRA and current DLRAs in the scenarios with sparse data and cold start, and propose the matrix-factorization-based probabilistic graph model for the recommendation algorithm (MF-PGMRA). Combined with MF’s data enhancement, it can take full advantage of the implicit relation mining of PGM between users and items on the basis of overcoming the problems of sparse ratings and cold start. The experimental results on four datasets with small sample data and sparse ratings show that MF-PGMRA outperforms the traditional CFRAs and MFRAs, and gets better performances than the representative DLRAs in the scenarios with small sample data and extremely sparse ratings.

Due to the advantages of MF-PGMRA, we believe that it can be used to recommend accurate learning materials for the preparation of self-directed learning. By constantly collecting students’ evaluations or ratings of learning materials, it can recommend suitable learning materials more and more accurately to help students, even in the weak environments of the library hardware. We discussed the establishment of a library management system to better realize the learning materials recommendation process for self-directed learning, and on the basis of ensuring the accuracy of recommendation results, we consider more the convenience of user operations and the weak dependence on the hardware environment. Learners can enjoy the self-directed learning service without using professional equipment. The practical process and the questionnaire results show that the learners can obtain better choices among the huge amount of information, and make more suitable choices to enrich their learning knowledge and make decisions on the basis of better user experience, which also can verify the recommendation module based on MF-PGMRA can better meet the learning needs of the students than the other baselines. Students gave high ratings and comments to the recommendation module based on MF-PGMRA, as it really helped them to get accurate learning materials efficiently, which also has been confirmed in the interviews with some students.

In the future, we plan to build an improved PGM by introducing a deep learning model to further mine implicit relations between users and sources of scholarly retrieval, and further improve the recommendation performance of the library management system for better self-directed learning. In the case of continuous data accumulation, detailed data analysis will be made for courses in different academics to provide more detailed technical support for students’ self-directed learning.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Philosophy and Social Science Foundation of Heilongjiang Province (20EDE377), and the Education Foundation of Heilongjiang Province (SJGSZD2021018).

Data Availability Statement

The data supporting the findings of this study are available within the article, or from the corresponding author, [Yingjin Cui].