Abstract

Cross-disciplinary research (CDR) is a necessary response to many current pressing problems, yet CDR practitioners face diverse research challenges. Communication challenges, including differing use of scientific terms among teammates, can limit a CDR team’s ability to collaborate effectively. To illustrate this, we examine the conceptual complexity and cross-disciplinary ambiguity of the term

Introduction

Cross-disciplinary research (CDR) confronts a number of institutional and structural impediments, including lack of opportunity and funding, methodological and philosophical incompatibilities, and differences in research time frames and spatial scales (Bracken & Oughton, 2006; Brewer, 1999; Eigenbrode et al., 2007; Golde & Gallagher, 1999; Khagram et al., 2010; Lele & Norgaard, 2005; Morse, Nielsen-Pincus, Force, & Wulfhorst, 2007; National Academy of Sciences [NAS], 2004). Among the significant challenges cross-disciplinary teams face are those associated with communication, such as formation of “high performing collaborative research teams” (Cheruvelil et al., 2014), localization of disparate disciplinary cultures (Crowley, Eigenbrode, O’Rourke, & Wulfhorst, 2010), and translation of specific words across different scientific vernaculars (Bracken & Oughton, 2006; Eigenbrode et al., 2007; Thompson, 2009).

Variation in technical terminology is a communication issue that consistently emerges across teams of disparate disciplinary composition, career maturity, and team objectives (Bracken & Oughton, 2006; Endter-Wada, Blahna, Krannich, & Brunson, 1998; Jeffrey, 2003; Thompson, 2009). There is some consensus that technical language is an area where cross-disciplinary literacy is critical (Marzano, Carss, & Bell, 2006; Morse et al., 2007; Thompson, 2009; Wear, 1999). This is especially evident in science and academia, where words can have more precise and constrained disciplinary meanings than they do in general language. For an example of this, consider the word

Semantic variation across technical vocabularies challenges successful CDR in a number of ways. Cross-disciplinary communication requires practitioners to (a) understand their own disciplinary perspective well enough to convey the meanings to others and (b) develop a shared understanding of commonly used terms (Jeffrey, 2003). The understanding one has of one’s own disciplinary perspective, though, will often be implicit or tacit, acquired over time based on one’s experiences with words (Nagy & Scott, 2000; Polanyi, 1966/2009). In the event that obstacles to communication arise, explicit understanding of this perspective that can be readily conveyed to one’s collaborators could be of great value. Mutual understanding of how other disciplines use their technical terms is needed to support the desired level of integration and build common ground across disciplines (McKibbon et al., 2010). Once achieved, this mutual understanding can enable collaborators to avoid unwittingly talking past one another because they assume that the terms they use are univocal. Appreciation for these disciplinary nuances allows for greater word consciousness, which promotes more effective word choice and word use (Graves, 2006).

In this article, we examine the conceptual complexity and cross-disciplinary ambiguity of technical terminology from the perspective of a central scientific technical term, “hypothesis.” In some disciplines, the term

Theme and Variation in the Hypothesis Concept

Few concepts are as central to scientific research as the concept

According to what we will call the “Standard View” of the scientific method, science begins with observation that leads to the formation and then the testing of hypotheses, followed by interpretation of the results (McErlean, 2000, p. 8). An important method of forming and testing hypotheses is the hypothetico-deductive method, in which a hypothesis is tested against an observational statement that it deductively implies (Hempel, 1966). The Standard View gives pride of place to hypotheses as the “tentative answers” (Hempel, 1966, p. 10) that would serve to explain the phenomena under investigation. Specifically, on this approach, hypotheses are statements of relationships between variables that are formed prior to derivation of the data and, if valid, suggest explanations for the effects under investigation. As such, they are different from both research questions, which pose but do not explain, and models, which are built after data have been derived (Krebs, 2000). Hypotheses in this view are regarded as necessary parts of the scientific process (Martin, 2000), without which “analysis and classification are blind” (Hempel, 1966, p. 13).

On the Standard View, hypotheses are “invented” to explain “observed facts” (Hempel, 1966, p. 15). They are “happy guesses” produced by creative applications of “inventive talent” (Whewell, 1984, p. 211). These suppositions play a key functional role in science, guiding scientific inquiry and constraining scientific experimentation. Historically, though, there has been concern about giving hypotheses such a formative influence over scientific inquiry; in fact, it is this that turned Newton against them, since hypotheses would “frame” the inquiry with an “unproven premise” (Glass & Hall, 2008, p. 379). Here is where Popper’s contribution to the Standard View is most keenly felt. By introducing the idea of

Although the Standard View dominates textbook introductions to the scientific method, the reality of scientific practice reveals wide variability in the

There is no single way to think about the nature of hypotheses or their role in science. Variability in this most basic of scientific research concepts suggests that different disciplines embed different epistemological perspectives, that is, different ways of looking at the nature of research and the business of producing new knowledge (Miller et al., 2008). The fact that conceptual variability manifests at such a foundational level suggests the need to communicate about the different epistemological perspectives in play in collaborative CDR. Failure to do this can leave these variations lying in wait, threatening to give rise to unreasonable disagreement or, perhaps worse, unreasonable agreement (e.g., Bracken & Oughton, 2006; Slatin, Galizzi, Melillo, & Mawn, 2004; Thompson, 2009). In short, revealing assumptions and misconceptions around fundamental terms such as “hypothesis” can create opportunities for CDR practitioners to recognize potential communication hurdles that could hinder team progress, development, and efficiency.

Method

The “Toolbox” approach described by Eigenbrode et al. (2007) is designed to create common ground through conceptual dialogue about fundamental, philosophically identified research assumptions. Specifically, the Toolbox approach focuses members of interdisciplinary research groups on epistemic and metaphysical dimensions of scientific research, structuring a dialogue among them about assumptions that frame and inform their research approaches. By limning research orientations in these fundamental terms, collaborators are afforded the opportunity to develop their disciplinary self-understanding as well as their mutual understanding of the various perspectives represented in the group.

These dialogues take place in workshops organized primarily for groups engaged in cross-disciplinary projects. Toolbox workshop groups have comprised ad hoc collections of researchers, groups of students, and functioning research teams (the majority). Most groups had already coalesced around or were about to begin work on a CDR question. The focus and composition of these groups have varied widely, addressing research questions ranging from public health to clean energy systems to evolutionary biology. Before the workshop, participants are asked to self-report their disciplinary background on their workshop sheets. Participants can record up to six self-identified disciplines in the demographic section of their Toolbox instruments. Most participants list several disciplines, typically variations on a more general discipline (e.g., microbiology, microbial evolution, and microbial ecology).

Participants in the workshops base their discussion around the Toolbox instrument, a structured set of prompts that draws out their views on issues concerning epistemic dimensions (viz., motivation, methodology, confirmation) and metaphysical dimensions (viz., reality, values, reductionism) of the research process. (The Toolbox instrument can be downloaded from www.sagepub.com/orourke, under Chapter 11 student resources.) Immediately prior to the dialogue, participants are provided with a paper copy of the instrument, asked to read the prompts and associated questions, and respond to the prompts by scoring a Likert-type scale and/or making notes about their impressions. In responding to the Toolbox prompts, participants are asked to be mindful of both their own practice and their disciplinary perspective(s); as a result of this prompting, both their individual scientific assumptions and a representation of the assumptions underpinning their discipline(s) emerge in the dialogue. The prompts express foundational claims about scientific research that support different and incompatible perspectives, and as a result motivate dialogue that can reveal unexpected disagreements and surprising similarities. This enables the group to develop an enhanced, negotiated, mutual understanding of their respective approaches to science that is conducive to more effective and efficient research communication.

The subsequent dialogue session typically lasts about 2 hr. Discussion can begin around any point and anywhere within the instrument, and is usually initiated by a participant who found a particular prompt intriguing. While the group dialogue is intended to unfold in an organic, participant-led fashion, each session is moderated by a facilitator who is part of the Toolbox research personnel. The facilitator’s role is to prompt participants to explore other areas of the Toolbox instrument if discussion becomes “bogged down,” or simply comes to a halt, without being intrusive or guiding the dialogue. Toolbox sessions are audio-recorded and subsequently transcribed. Each session also includes an observer drawn from the Toolbox project personnel, whose role is to take notes that ensure the accurate attribution of speaking turns to distinct participants and to capture non-verbal data.

Our research has been exploratory, and our aim has been to run workshops with as many different types of cross-disciplinary groups as possible. Initial recruitment focused on cross-disciplinary groups associated with the National Science Foundation’s Integrative Graduate Education and Research Traineeship (NSF-IGERT) projects; subsequent groups were recruited by targeted invitation or when they approached us about participating in a workshop.

For this article, we analyzed how 16 interdisciplinary groups used the term

Scientific research (applied or basic) must be hypothesis driven.

There are strict requirements for determining when empirical data confirm a tested hypothesis.

Transcripts of these dialogues were coded and analyzed in the qualitative software NVivo 8 by Toolbox researchers using narrative analysis that involved, first, developing an iterative coding scheme (Miles & Huberman, 1994), and second, using that scheme to reveal patterns related to the use of the term

Results

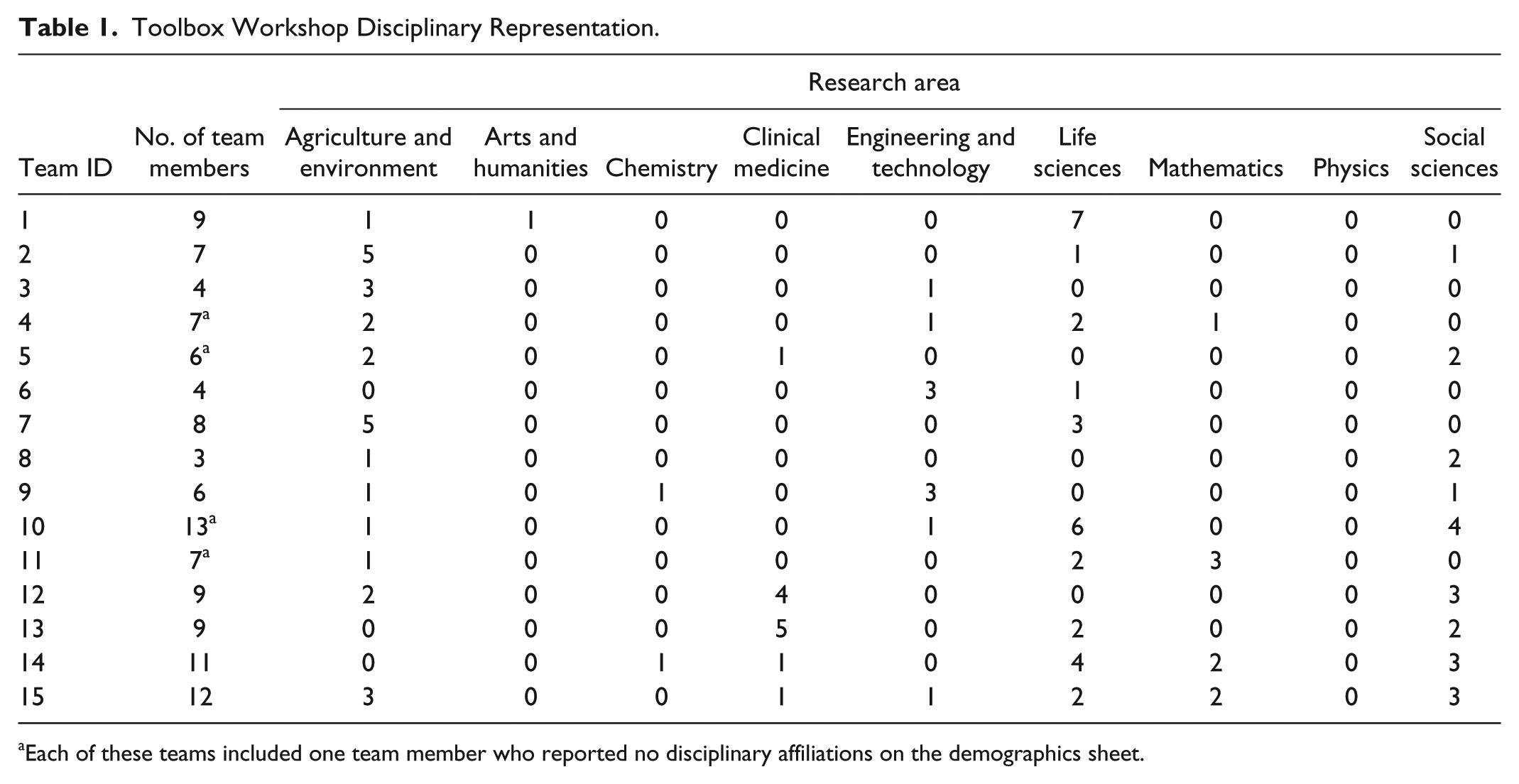

We analyzed dialogue about hypotheses from 16 Toolbox workshops, involving a total of 127 researchers (mean group size = 7.8) from multiple disciplines (Table 1). For clarity, we have characterized the participants based on the first of the several disciplines listed. We categorized these disciplines into research areas, after Morillo, Bordons, and Gomez (2001), to provide a simplified look at the makeup of participating interdisciplinary teams. These data are not analyzed further. For the purpose of this article, we focused our analysis on participant discussion of the meaning and use of “hypotheses” and its cognates in science. Three themes about hypotheses emerged across workshops. Here we arrange those themes conceptually, moving from basic discussion of what a hypothesis is to the critical question of whether hypotheses must be employed to perform science, and concluding with evaluation of when and how hypotheses are employed across and within disciplines.

Table 1. Toolbox Workshop Disciplinary Representation.

Each of these teams included one team member who reported no disciplinary affiliations on the demographics sheet.

Theme 1: Definitions of Hypotheses

Questions about how to define and employ the word

(1) [The problem] is that different definitions of “hypothesis” are in use. (Session 10, Ecology)

Some participants approached negotiating this problem by reflecting on the

(2) And so now I’ve been going back to my students, and they’ve been telling me they’ve done all these great things, and I said, well, what is your hypothesis? What did you think you were going to see? (Session 16, Computer Science)

(3) I teach that a hypothesis is our best guess to a question. (Session 10, Plant Pathology)

These perspectives align well with the closely associated conceptions of hypotheses as tentative answers (Hempel, 1966), happy guesses (Whewell, 1984), or alternative possible explanations (Martin, 2000). The second is also consistent with the idea that hypotheses can be distinct from and posterior to research questions. Such a clean distinction between hypotheses and questions is not universally maintained, though, as evinced by Comment 4:

(4) I view that hypotheses are directive questions . . . (Session 2, Geochemistry)

This statement (Comment 4) conforms to the idea presented in Hempel (1966) and Martin (2000) that hypotheses

(5) Without a hypothesis, even if it’s just something you think may happen, you need to know what evidence you need to take. You need to know what to look for and you don’t know what you’re looking for without a hypothesis of some sort. (Session 7, Zoology)

Two additional modes of guidance—identifying what is to be tested (Hempel, 1966; McErlean, 2000) and providing the foundation for experimental design—are expressed in Comment 6:

(6) I’m a big proponent of at least knowing what your question is. I’m a little less concerned about whether you frame it as a null [hypothesis] or not. But you ought to have some sort of hypothesis that you’re going to try to test or use to design your project. (Session 3, Natural Resources)

Of course, guidance need not always be positive, especially if it involves a “rigid” hypothesis:

(7) . . . where you might have a really “rigid” hypothesis . . . that might in fact constrain your thinking, constrain your creative juices . . . (Session 16, Computer Science)

Other Toolbox participants offered more narrowly defined conceptions of hypotheses. Common among our data are expressions of statistical conceptions. One participant stated,

(8) I think that a hypothesis has to do with using statistical tests to prove or disprove a bunch of numbers (Session 2, Marine Ecology),

while another offered,

(9) I’m really stuck in that biostatistics hypothesis box where a hypothesis has to be quantitatively proven or disproven. And maybe that, that’s just kind of the box I have around the word “hypothesis.” (Session 2, Geochemistry)

Not all participants endorsed the standard, statistical approach, however; for example, one conservation biologist noted,

(10) The big trend now is away from [using] the standard [null hypothesis]. Instead you have a list of ten, twenty hypotheses and you’re gathering evidence and seeing which of those fits rather than forcing null and alternative hypotheses. (Session 2, Ecology)

A second way of getting at the meaning of hypothesis involves locating it

(11) . . . and what we asked people to do . . . was not necessarily to come up with hypotheses but come up with questions, right, interesting questions, right? And that’s the start of a process that might lead to interesting hypotheses. . . . (Session 16, Philosophy)

In responding to this claim, one of the philosopher’s collaborators questioned whether research questions precede hypotheses:

(12) But to know even how to begin with an exploration of those questions, you have to have some idea in mind of what the answer could be or you won’t have any idea where to look. (Session 16, Computer Science)

This sentiment that hypotheses are guiding ideas about “what the answer could be” is widely shared, but others take the “idea in mind” that frames pursuit of research questions to be a vague sense that something scientifically interesting is afoot. As one social scientist explained to her teammates,

(13) I think of the value qualitative research is that it could generate hypotheses; I don’t know the degree to which that’s true within most of the disciplines represented here. Take a Margaret Mead classic case of understanding the situation in a very holistic way, and in doing that, calling out things that strike you or that seem curious or ought to be understood more, or could be considered. And in the describe-predict-control sequence of scientific research, then that description phase can [be] generating [a] hypothesis about that. (Session 10, Education)

In Comment 13, hypotheses are understood to be an

(14) The data we collect are empirical, because we’re out there collecting them according to some design. Then we test them statistically, and set criteria for determining whether we’re going to reject or accept the hypothesis. (Session 3, Civil Engineering)

(15) Every time you build a model, it is like you’re testing a hypothesis because you build models to collect data. (Session 2, Economics)

In Comment 14, as in Comment 5 above, the hypothesis is described as giving shape to the experimental design that yields data that determine the resulting attitude toward the hypothesis; Comment 15 attributes the same functional role to hypotheses in the context of model-based science. In both these cases, the role ascribed to hypotheses is consistent with the hypothetico-deductive method, described above (Hempel, 1966).

Emphasis on hypotheses understood extrinsically suggests that hypotheses are

Theme 2: Do You Need a Hypothesis to Do Science?

For many participants, hypotheses appear to be a critically important part of the scientific process. But this is by no means the consensus view among practicing scientists. One biologist highlighted this by recalling his experience as a graduate student:

(16) I’d like to know what people think about hypothesis driven. I guess it’s [statement] 6—scientific research, applied or basic, must be hypothesis driven. And one of the reasons I find that interesting is when I was in graduate school, that [is] exactly what my adviser, who I greatly respect, would have said. . . . One of the other people who was on my committee, who I also greatly respect, said that hypothesis driven research is way overrated. (Session 16, Biology)

The idea that scientific research must be hypothesis driven, or must at least make room for hypotheses, is expressed in Comments 5 and 6 above—hypotheses are critical design elements that identify both what is to be tested and what is to count as evidence for those tests. This attitude is also reflected in the following pair of quotes:

(17) Science . . . at some level is hypothesis driven. There’s something that’s testable [there]. If there’s nothing that’s testable, I’m not sure what you’re doing. (Session 2, Ecology)

(18) In natural science we are hypothesis driven. You can’t just go out there and mess around. (Session 11, Algorithmics)

Both of these reinforce the picture that scientific research requires systematic tests, and that these depend on hypotheses for their content and focus. In Comment 19, we find this view expressed in connection with graduate education:

(19) . . . if you don’t formulate a hypothesis, you tend to just drift with the wind. So if you’re training students, it’s good to be able to teach them how to formulate a specific hypothesis at some point. So for me it’s like uh, I think it was Emerson said about consistency, a foolish consistency is the hobgoblin of small minds. A foolish addiction to hypotheses is not productive, but you’ve got to have it sometimes. (Session 16, Microbial Ecology)

These three comments came from participants who consider themselves scientific researchers, but an essential connection between scientific research and hypotheses was also expressed by those who did not think of themselves in this way. For example, one engineer announced,

(20) I don’t really use hypotheses in my disciplines, but I don’t really consider myself a scientist either. I guess I had thought if you considered yourself a scientist that meant you used hypotheses . . . (Session 15, Engineering)

(21) . . . the first thing that came to my mind is wait, you know, I’m doing design. That’s not hypothesis driven. I mean that’s problem solving and, and has nothing to do with hypotheses basically, but then I went and said well wait a second. That’s not scientific research. The question says scientific research, applied or basic. It’s certainly scientific work, but I wouldn’t call it scientific research. It’s design. That’s why it has its own name. . . . (Session 16, Computer Science)

Both Comments 20 and 21 cast scientific research as research that involves hypotheses by definition. Others take hypotheses, understood as antecedent guides to scientific research, to be necessary but only as part of the

(22) It’s just that a lot of times what happens is that you do the research and you formulate the hypothesis after you have the results . . . A lot of really great new discoveries happen that way. You come up with something you did not expect, you did not anticipate. You have to write it up, you have to fill out the formula, and you write it up . . . misleadingly that way. (Session 16, Computer Science)

According to this view, hypotheses are retroactively crafted to bring scientific discovery in line with accepted scientific rhetoric, that is, with community expectations that research generates conclusions that are anticipated and not accidental. But so understood, this necessity is less a conceptual requirement of scientific research than a sociological one.

Other participants were less adamant that hypothesis testing per se is required to conduct science:

(23) Not all the research is based on experimentation. So [research] doesn’t necessarily have to be hypothesis-driven. (Session 7, Biology)

By distinguishing non-hypothetical research from

(24) In the social area, like subject studies, where you study just one subject’s life history, you don’t have a hypothesis. You are just describing that subject. (Session 7, Biology)

More broadly, there are areas of scientific inquiry that do not employ hypotheses, as reported in this comment from a social scientist:

(25) . . . the use of hypotheses in [social science] varies depending on the type of research you are conducting. Some social scientists use more traditional null and alternative hypotheses while others do not use hypotheses at all. (Session 14, Sociology)

These comments suggest that scientific research that does not involve hypotheses is distinct from and perhaps independent of hypothesis-driven research; however, scientific research is more complex and intertwined than that, as revealed in this exchange between a biologist and a computer scientist: (26)

Observational and qualitative studies can stand alone as rigorous contributions to scientific knowledge, but they can feed into experimental work when they are (27)

“Freeing stuff” is not research, from this perspective, even if it is necessary to “boil down” to a hypothesis. Conversations like (27) highlight the common view that regardless of whether or not a discipline is traditionally hypothesis-based, research must involve hypotheses from the start to be considered scientific.

Thus, understanding perceptions concerning hypotheses at the level of scientific practice is critical to revealing varied expectations about whether and when a CDR team is conducting research that can be properly classified as

Theme 3: How Do Disciplines Use Hypotheses?

As we noted above, emphasis on how hypotheses figure into the practice of disciplinary research is motivated by the two statements in the Toolbox, one focusing on hypotheses as drivers of scientific research and the other on what is involved in confirming hypotheses. Because participants are asked in part to be mindful of their disciplines in dialogue, they typically respond to these prompts by embedding the hypothesis concept into their disciplinary practice.

As we have seen in Comments 14 and 15, certain participants report that hypotheses are indispensable to conducting research in their disciplines. The engineer who produced Comment 14 went on to say that, whatever anyone might think, their discipline demanded that research products include testable hypotheses to meet publication standards:

(28) . . . so I was just thinking methodologically, if you don’t follow those protocols, you don’t get published in my [discipline’s] journals. (Session 3, Engineering)

Others express more ambivalence about how hypotheses are relevant to the practice of a particular discipline. In Comment 21, an engineer distinguished between scientific research and scientific work, reporting that the latter did not involve hypotheses. Another engineer describes the role of hypotheses in engineering this way:

(29) In engineering we don’t necessarily have a hypothesis. We see what happens under these conditions and we [develop] mathematical models. You might have some idea what might happen but it’s not as hypothesis driven. However, we’re always investigating a question of some kind. And it’s something we don’t know the answer to that question. I don’t really think of [my work] as hypothesis-based, per se, but more of a formal process. And maybe that’s what the difference is. And you’re expected to do that in [engineering]. (Session 3, Chemical Engineering)

Although Comment 29 does not explicitly claim that engineering counts as scientific research, the view expressed conforms with a conception of scientific research described by Glass and Hall (2008), namely, question-driven investigation that leads to the formation of models, which are then verified based on their ability to predict outcomes.

Engineering was not the only area described as operating without hypotheses. Another discipline mentioned by name was oceanography:

(30) A lot of times in oceanography, you can’t really have a hypothesis . . . because oceanography is about deploying instruments, seeing what those instruments say, and deploying more instruments. And it can be completely un-hypothesis driven. (Session 2, Marine Ecology)

While Comment 30 met with criticism in the workshop—in fact, Comment 17 was a response to this comment—it reflects a perspective according to which scientific research can consist in data collection that is not hypothesis driven.

Another discipline that has a complicated relationship with hypotheses, as we have seen, is social science. In one workshop, a biologist proclaimed,

(31) Social scientists don’t use hypotheses; they only use observations. (Session 13, Biology)

To this, a public health scientist responded,

(32) I am a social scientist and I use hypotheses all the time. There are misconceptions that all social scientists do qualitative research, but that is not true. The kind of research we do depends on the questions we are trying to answer and I often want to answer more quantitatively-based questions. (Session 13, Public Health)

The social scientist who reported a similarly complex relationship between social science and hypotheses in Comment 25 prefaced that remark by speaking to these misconceptions, saying,

(33) Many people on my team assumed I did not use hypotheses because I am a social scientist. (Session 14, Sociology)

Similarly, the engineer responsible for Comment 20, in learning more about the work of social scientists, continued,

(34) . . . I guess I did not realize that sociologists are scientists. So I had not really considered whether or not they might use hypotheses. It is very interesting for me to find out that [sociologists] sometimes do use hypotheses! (Session 15, Engineering)

This statement illustrates how members of CDR teams can have limited knowledge of other approaches to science. Perhaps one of the most critical aspects of team communication is how members of CDR teams apply their disciplinary expectations to the contributions made by their collaborators. A fundamental contribution of the Toolbox workshops is helping promote self- and mutual understanding regarding communication styles and philosophical differences between team members. The quotes woven throughout this section and the dialogue captured in Comments 33 and 34 provide just a few examples of workshop participants learning more about how and why other disciplines choose to use or not use hypotheses in their research.

Discussion

Terminological variation across disciplines is a well-known challenge for those who engage in CDR. However, although it has often been noted (e.g., Eigenbrode et al., 2007; Galison, 1997; Klein, 2000; Naiman, 1999; NAS, 2004; Schoenberger, 2001), the point has not often been explored in detail with respect to a particular term. Our attempt to do this highlights the need to avoid the assumption that one’s collaborators will agree with how fundamental concepts like

Our approach has been to collect comments and insights from scientists about the hypothesis concept and use those as the foundation for conceptual analysis. One might critique this approach by asking why we put so much stock in what scientists think about hypotheses in general, given that many scientists have not had much training in the relevant conceptual methods or philosophical literatures. If, as Glass and Hall (2008) asserted, “many scientists use the term ‘hypothesis’ when they mean ‘model’” (p. 378), why should we rely on their views in analyzing the hypothesis concept? There are two reasons. First, the conceptions expressed in these workshop dialogues are what guide these scientists as they conduct their research. They have ample practical experience in forming and testing hypotheses; furthermore, their intuitions and interactions with hypotheses are the ones that matter to scientific practice. Although some may be philosophically untutored, the evidence from the dialogues is that when given the opportunity, many are quite capable of articulating how they understand the hypothesis concept. Second, one of our goals is to highlight the fact that you cannot presume your collaborators will have the same understanding of this core concept as you work toward achieving project objectives. The differences between interpretations can be subtle and insidious, making it difficult to appreciate when they are there and when they are not. Failure to recognize that even fundamental concepts like hypothesis can be variously interpreted can lead to disagreements about project decisions concerning research direction and publication (Comments 28 and 29; see also Bracken & Oughton, 2006; Thompson, 2009; Wear, 1999). Thus, we are interested in what the scientists think because they are the ones who do the science and they are the ones who collaborate.

Turning to Theme 1 and the nature of hypotheses, it is worth repeating the two statements in the Toolbox instrument that have prompted the dialogue we sample above:

Scientific research (applied or basic) must be hypothesis driven.

There are strict requirements for determining when empirical data confirm a tested hypothesis.

These supply the conceptual frame within which the comments supplied above were made. The first of these presents hypotheses as what guide scientific research, whereas the second highlights the role that hypothesis testing plays in advancing scientific understanding. These aspects resonate with the historical, philosophical development of hypotheses as an element of the scientific method, and they are reflected in our data. For example, Comments 4 to 6 and 14 highlight the

What emerges from these comments is a picture of hypotheses as

As we have noted, Glass and Hall (2008) distinguished hypotheses from questions and models, and suggested that scientists are not always so careful. Our data would appear to bear them out, but may point to a different possibility. Glass and Hall’s view entails a relatively restrictive sense of the concept according to which a hypothesis is “an idea or postulate that must be phrased as a statement of fact, so that it can be subjected to falsification” (Glass & Hall, 2008, p. 378). But our data suggest that there is a range of hypothesis concepts that vary in terms of how restrictive they are. Comments 7 to 10 and 14 describe “rigid” hypotheses that meet specific criteria, rendering them subject to test and falsification, consistent with the view of Glass and Hall. By contrast, though, consider Comments 2 to 6 and 12; these characterize hypotheses not in terms of their status as statements of fact, but rather in terms of their potential to motivate and frame broader scientific inquiry. These comments describe less restrictive, more general versions of the hypothesis concept. One does not falsify questions, and hunches about what you might see are not precise enough to be subject to classical falsification. Interestingly, this difference could account for why so many participants in these dialogues are happy to think of their work as involving confirmation of hypotheses, pace Popper, Glass and Hall, and many other philosophers of science. If the more expansive version of the concept is in play, then establishing the legitimacy of the inquiry framed by such a hypothesis could well be taken to confirm the hypothesis in that framing role. Participants across workshops seemed sensitive to gradations in the hypothesis concept and how such variability reflected functionally different categories in research, for example, testable hypotheses versus general hypotheses. This variation in the concept could explain some of the disagreements expressed in Toolbox workshops as merely apparent, for example, the disagreement found in the exchange between Comments 11 and 12, where it appears that the restrictive concept informs Comment 11 whereas a more expansive concept informs Comment 12. Another point to note is that the variability did not necessarily follow preconceptions of “hard” and “soft” disciplines; for example, oceanography, a very quantitative physical science, was described by its practitioners as less hypothetico-deductive than its profuse instrumentation and precision might lead one to believe.

We turn now to the second theme and the question of whether hypotheses are necessary to science. The first point to make in this connection is that there is no one conception of

Although our results do not reveal unanimity across the disciplinary spectrum concerning the nature of science, upon analysis, they deliver a broad model of science. Beginning with the observation made in Comment 21, we can distinguish between scientific

Theme 3 concerns the disciplinary deployment of hypotheses, and it is here that we address the third goal mentioned at the top of this section, namely, the goal of evaluating communication and collaboration in CDR. In the workshops, there was ample discussion about how different disciplines did, or did not, employ hypotheses. Our results demonstrate that these discussions were important for better understanding the research approaches of fellow participants, and in some instances (e.g., Comment 21), perhaps better understanding one’s own discipline. It is important to emphasize that the goal of the dialogue is not to standardize the understanding of the hypothesis concept in a project, because differences about it are an important part of the conceptual diversity that recommends CDR as a mode of research in the first place; rather, the goal should be to discover those differences so as to enhance the collaborators’ collective ability to look at their common problem through each other’s eyes. Thus, the dialogues emphasize that one’s research approach, broadly embodied here under the hypothesis concept, is an important topic for discussion among CDR collaborators (Eigenbrode et al., 2007; Khagram et al., 2010; Miller et al., 2008).

These discussions also revealed erroneous preconceptions participants had about one another’s disciplines, often in debate about the use of hypotheses by a particular discipline. Of course, debate among scientists about the use of hypotheses in research is not new. Within ecology, for example, there is a history of adopting increasingly quantitative methods, at least in part to counter the notion that ecology was not a hard science (Simberloff, 1983). The fallout of this “physics envy” has been a trend away from inductive approaches in ecology and the dominance in the field’s flagship journals by “the scientific method” in the spirit of Platt (1964), despite recognition that there are numerous ways ecologists actually practice science (Forbes et al., 2004). One important and recurring example of these erroneous preconceptions in our workshops was the statement by natural and physical scientists that social scientists did not employ hypotheses at all (e.g., Comments 31 and 34). This evinces a lack of understanding about the social sciences as a whole, as reflected in reactions by social scientists (e.g., Comments 32 and 33), and about the use of complementary quantitative and qualitative approaches within social science disciplines. Given our data, it is tempting to ascribe this knowledge gap to persistent disciplinary chauvinism within the natural and physical sciences (Giri, 2002). Qualitative, observational approaches that do not employ statistically testable hypotheses certainly do look non-scientific to some quantitative scientists. However, it is worth noting that debates over research methodology continue within social sciences, as is suggested by Comment 25. In fact, many social science disciplines have struggled internally with methodological conflicts for quite some time. More than 70 years ago, for example, White (1943) argued that quantitative methodology, although philosophically attractive, could not answer many of the most intriguing questions in sociology, and that injudicious use of mathematical language to explore inherently non-mathematical concepts actually reduces understanding.

How researchers use hypotheses in discourse and practice is not merely of abstract or academic interest; it is critical to evaluating CDR efforts (Klein, 2006). Narrow understanding of scientific processes and variation in methodology across disciplines can derail developing careers (NAS, 2004). Basic conflicts in methodology can reduce success in interdisciplinary education programs (Morse et al., 2007); indeed, one of us participated in an NSF-IGERT project where the interdisciplinary component of the dissertation was nearly rejected because of its non-hypothesis-driven and non-statistical approach. Whether or not research is hypothesis driven can even constrain funding opportunities (NAS, 2004), an obstacle addressed by a participant during this study:

(35) Well, you see, in our case, with any kind of expensive research, you have to formulate some kind of hypothesis to get funding. (Session 1, Plant Science)

Beyond the immediate and personal, restrictive concepts and definitions limit science as a way of knowing and discovering, constraining exploration of both basic and applied problems (Gallopin, Funtowicz, O’Connor, & Ravetz, 2001; Pickett, Burch, & Grove, 1999). Miller et al. (2008) invoked “epistemological pluralism” as a guiding principle for CDR efforts. This principle requires disciplinary participants to recognize the multiple ways of investigating a CDR problem, and requires research plans to accommodate multiple ways of knowing (Khagram et al., 2010).

One way of cultivating epistemological pluralism and reconciling epistemological difference is through the use of dialogue methods (Bammer, 2013), an approach instantiated by the Toolbox workshops. Although not an essential exercise—CDR is practiced by teams that are epistemically synchronized without formal discussion of these differences—the data we have collected in these workshops suggest that conversation that explicitly focuses on primary epistemological concepts, such as the

Acknowledgements

The authors would like to thank the University of Idaho, the University of Alaska Anchorage, Michigan State University, and the Washington State Department of Agriculture for their support of this work. We also extend many thanks to our workshop participants for engaging in Toolbox sessions and providing us with the insight needed to complete this research. The authors are also grateful to Stephen Crowley, Sanford Eigenbrode, Troy Hall, Liela Rotschy, David Stone, and five anonymous reviewers for very helpful comments on early drafts of this paper.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

This work was supported in part by National Science Foundation Grant No. SES-0823058 and IGERT Grant No. 0114304.