Abstract

U.K. policy is to embed “knowledge transfer as a permanent core activity in universities.” In this article, I propose an agenda for analyzing and informing the evolving practices of communicating university research insight across institutions, and for analyzing critically the ways in which “research impact” is being demanded, represented, and guided in current policy discourse. As an example, I analyze the U.K. Economic and Social Research Council (ESRC) “Step-by-step guide to maximising impact,” using the concept of “recontextualization.” The analysis illustrates that the availability of relevant social science research does not ensure its use, even by the research funding council. It suggests that while a rationalist,

Keywords

“Maximizing Research Impact”: Discourse and Practice

A prominent issue for academic researchers across disciplines is the increasing demand to generate “impact” from their research beyond academic debate. The U.K. Government “Science and Innovation Investment Framework 2004-2014” states,

The Government’s aim for future policy is to create a funding regime that promotes and rewards high quality knowledge transfer, . . . and further

Whether this will actually increase the use of research in industry and society will ultimately depend on the detailed practices of that cross-institutional communication. If it is ill-informed, routinely mishandled, and/or embedded in underlying assumptions that separate knowledge from practice, or view practitioners merely as

There are well-established fields of research on knowledge transfer, research dissemination, diffusion of innovation, research utilization, and science communication. So it is useful to ask whether current policy and guidance are already sufficiently informed (and critiqued) by those fields of research, or whether there is still an important role for areas of social science with relevant insight and expertise, such as applied linguistics and social studies of science.

Relevant insight and expertise includes, for example, the analysis of networks of contingent elements within the development and the use of research claims (e.g., Latour, 1987), so challenging any simplistic causal expectation of “research impact.” It includes analysis of the cross-institutional communication of research (e.g., Roberts & Sarangi, 2003), and of the relations and positioning of research participants, potential users, and stakeholders within the processes of research (e.g., Cameron, Frazer, Harvey, Rampton, & Richardson, 1992/2006). It includes the analysis of institutional discourses and genres both as social practice and as a focus and mechanism of social and professional change (e.g., Fairclough, 1992b).

More broadly, social science offers expertise in juxtaposing theoretical perspectives, empirical evidence, and reflections on experience. For many social scientists, there is a fundamental belief in a need to put theoretical, “logical” and “common sense” accounts of the world up against empirical evidence, and vice versa, so that our understanding is neither

In fields across social science, researchers have usefully challenged rationalist and common sense accounts, including of communication (e.g., Ivanič et al., 2009; Roberts, 1997), the management of professional change (e.g., Tengblad, 2012), and the interactions between science and other spheres (e.g., Jasanoff, 2005). They use research evidence to critique and cumulatively inform theoretical and practical insight.

The intention of this article is to identify the urgent need for such research to analyze, inform, and potentially challenge (i) the evolving practices of communicating publicly funded research insight across institutions, and (ii) the ways in which this communicative work is being demanded, represented, and informed within current policy and guidance.

The urgency is not just because this communicative work is central to making university research available and useable. It is also because current representations and guidance are problematic in familiar ways, which have long been analyzed and critiqued. Left unchallenged, they may have serious consequences for the future integrity, value, and use of publicly funded research.

Specifically, I propose an agenda of three inter-related contributions:

In this article, I focus primarily on the first. As an example, I analyze the “Step-by-step guide to maximising impact” (SSG) offered by the U.K. Economic and Social Research Council (ESRC) within its online “Impact toolkit” for university researchers. With the ESRC as its author, this toolkit is arguably the most authoritative and significant guidance on generating research impact for U.K.-funded social science researchers. Analysis of this guidance is necessary, first to show the need for informed input and critique, and second to show just how fundamental (even basic) the social science insights need to be to challenge the current representations of this professional activity.

To support this analysis, I also briefly illustrate the value of (2) and (3). I include observations from the experience of a recent European Commission (EC) FP7-funded project to illustrate some of the intellectual challenges in practice. I also briefly refer to some concepts that inform my own practice, to illustrate the kinds of conceptual understanding that it is possible to offer. Together, they help support the core argument in the analysis, that the current ESRC guidance is not merely simple, but simplistic.

The title “Research impact unpacked?” is a dual question. First, it is about whether current guidance usefully

Method

“Unsuitable Terminology”: Constructing the Object of Interest

The term

The metaphor . . . is, at best, one of gathering and integrating evidence from research, condensing this into convergent knowledge, and neatly packaging this knowledge for transfer elsewhere. . . . In other words, knowledge parcels for grateful recipients. Such a view belies the inherent and, we would argue, largely insurmountable challenges of doing so for any but the most simple and incontrovertible of findings. Moreover, . . . the subtlety and complexity of research use in context further militate against simple models of “translate and transfer.” (Davies et al., 2008, p. 189)

In this way, they suggest that the term and its use misrepresent each element within this communicative practice: the knowledge or findings, the actors, the challenges, and the processes of communication. Partly in response to such critiques, the ESRC now emphasizes the term

Knowledge exchange (KE) is about opening a dialogue between researchers and research users so that they can share ideas, research evidence, experiences and skills. This can involve a range of activities; from seminars and workshops to placements and collaborative research. By creating this dialogue, research can more effectively influence policy and practice, thereby maximising its potential impact on the economy and wider society. (ESRC, n.d.-b: Knowledge Exchange)

A further common term is

Bourdieu and Wacquant (1992) argue that such terms, along with their associated literatures, policy documents, and practices, “pre-construct” the object of our interest (p. 229). They therefore have to be viewed as part of the policy discourse to be analyzed. Put simply, a research

Nevertheless, any analysis requires a term with which to refer to, and potentially re-conceptualize, the set of professional practices that are of interest. No term is neutral, so it needs to reflect our intellectual and practical interest.

Here I shall use “research communication” as a deliberately general term to describe the diverse set of communicative practices by which academic researchers engage with people in the domains of policy, professional practice, and public debate beyond academia, with the intention to develop and communicate significant insights from their research. The term has been used similarly, for example, by Scott (2000) within his scoping report for the European Environment Agency. In addition, the EC (2010) guide for researchers in socio-economic sciences and humanities is called “Communicating research . . . ”

The term

I deliberately use the same term to embrace both dialogue and produced artifacts (such as introductory leaflets, research articles and reports, online demonstrations, video documentary, broadcast interviews, and media publications). This helps to keep in view a core insight from Bakhtin (1986), that even such texts and artifacts are dialogic: They are constructed in anticipation of encountering a response.

Analyzing the Representation of Professional Practice in Discourse

As a framework to analyze the representation of research communication in policy and guidance, I draw on the concept of “recontextualization.” Originally from Bernstein (1990), it has been developed by van Leeuwen (2008) to analyze the representation of social and professional practices in discourse.

Echoing van Leeuwen (2008), Fairclough (1992b), and others, I am using the term

Bernstein’s (1990) sociological concept of recontextualization was prompted by observing what he described as the “overwhelming and staggering uniformity” of classroom activity and teaching practices across cultures and across the curriculum (p. 169). He argued that social and professional practices such as physics and woodwork (his examples) are recontextualized by a “pedagogic discourse” which “fundamentally transforms” them into classroom physics and woodwork. Physics is “delocated” from the original social relations and purposes of research and industry, and “relocated” within the social relations of a pedagogic discourse, with its own purposes, sequencing, and systems of evaluation.

Van Leeuwen (2008) usefully develops the concept beyond pedagogy, as an analytical framework with which to “analyse all texts for the way they draw on, and transform, social practices” (p. 5). He describes his work as starting from the view “that all discourses recontextualize social practices, and that all knowledge is, therefore, ultimately grounded in practice, however slender that link may seem at times” (van Leeuwen, 2008, p. vii). He uses the term “a socially constructed knowledge of some social practice” developed in specific social contexts. (van Leeuwen, 2008, p. 6)

By empirically analyzing Australian news reports and written guidance for parents on their child’s first day at school, van Leeuwen (2008) observes that discourses

not only represent what is going on, they also evaluate it, ascribe purposes to it, justify it, and so on, and in many texts these aspects of representation become far more important than the representation of the social practice itself. (p. 6)

Specifically, he argues that recontextualization can involve the substitution, deletion, rearrangement, re-legitimation, and re-evaluation of elements of the social practice. As these “transformations” can be achieved by diverse linguistic means, his inventory is “sociosemantic” rather than traditionally linguistic (van Leeuwen, 2008, p. 23; Halliday, 1978). He argues that in a “recontextualization chain,” such transformations “can happen over and over again, removing us further and further from the starting point” (van Leeuwen, 2008, p. 13).

Two important notes are necessary. First, recontextualization is not itself pejorative: Any representation involves recontextualization. Rather, it is an analytical concept with which to investigate and critically analyze representations of social and professional practice. Second, it is important not to reify a

Drawing Insight From Case Studies

In advocating case study analysis (agenda item 2, above), it is important to acknowledge that the ESRC offers a growing number of “impact case studies” online. However, these are primarily retrospective reports of successes, rather than analyses of the intellectual, practical, interpersonal, and organizational challenges within the communicative process. I suggest it is useful to draw a parallel with Latour’s (1987) classic methodological distinction between studying “ready made science” and “science in the making.” Rephrasing his core principle (p. 4), I would argue that

our entry into understanding research impact should be through the back door of

As decades of research in social studies of science and elsewhere have shown, issues and challenges can be most explicit at moments when the opportunities are uncertain, strategies are not yet decided, and success is still unclear. Arguably, these moments are as important for developing guidance as they are for research, because the challenges are often forgotten once they are resolved. Studies of

When proposing case studies, it is vital to be clear about what insight can be drawn from them. As many have argued in qualitative and case study research, a case cannot simply be generalized as truth or as a guide for future work. Instead, its value is to offer a situated and potentially “telling case” that contributes to building deeper conceptual understanding (discussed in Platt, 1988). It may enable us to refine or refute universal claims produced from

It is with that aim that I include brief observations from one recent inter-disciplinary project below. The intention is simply to show that the intellectual challenges of research communication in practice are of a different intellectual order from those assumed within the ESRC guidance. This contrast helps to

refute the general categorical assertions within the guidance,

foreground the ESRC assumptions and problematize the discourse,

illustrate why such guidance needs to be informed by social science.

The example project

As the U.K. partner for the EC FP7-funded ORCHESTRA project (2009-12: No. 226521), our collaborative focus was on generating understanding of recent research on computer-based (in silico) methods for assessing chemical toxicity. The project was timely because the 2007 EU REACH regulations demand that industry assess many thousands of existing chemicals in the coming years. This is predicted to cost billions and “consume” many millions of animals in traditional animal testing, despite EU policy to approve animal testing only “as a last resort.”

The project therefore set out to inform professional thinking among regulators, industry, and toxicologists about in silico methods as a means to reduce in vivo testing. One of many dissemination strategies was to conduct detailed interviews with researchers, regulators, potential industry users, and other stakeholders to investigate and analyze their priorities and concerns, and the issues around take-up. These were communicated in a video documentary (Pardoe, Cazzato, Golding, Benfenati, & Mays, 2011) as well as online (www.in-silico-methods.eu) and in open access articles (e.g., Benfenati et al., 2011).

As a final ORCHESTRA output, we reviewed the experience of the project to help inform science and technology researchers in future EC projects. An updatable e-guide (Pardoe & Mays, 2012) identifies some of the intellectual and practical challenges researchers face in making science and technology research engaging, accessible, and useable, while also retaining the scientific rigor and integrity of the publicly funded research. The project was an affirmation that even when research lies firmly within the natural sciences, social science insight can offer a vital contribution to understanding the challenges and potential pitfalls involved in wider communication, and to informing strategies.

The U.K. ESRC Guidance as a Focus for Analysis

Why Analyze Guidance From the U.K. ESRC?

The ESRC is “the UK’s largest organisation for funding research on economic and social issues,” and describes itself as “an international leader in the social sciences” (ESRC, n.d.-a). It financially supports more than 4,000 researchers and postgraduate students at any one time. Its “role is to”

promote and support . . . high-quality basic, strategic, and applied research . . . in the social sciences

advance knowledge and provide trained social scientists . . . , thereby contributing to the economic competitiveness of the United Kingdom, the effectiveness of public services and policy, and the quality of life

provide advice on, disseminate knowledge of, and promote public understanding of, the social sciences. (ESRC, n.d.-a)

With decades of social science research funded by it, the ESRC is in a unique position among the U.K. funding bodies to be able to do (in its guidance) exactly what it now demands from its researchers:

After many years of development and refinement, the

From U.K. “Global Economic Performance” to “Succinct Messages”

Consistent with its umbrella organization, the Research Councils UK (RCUK), the ESRC defines

fostering global economic performance, and specifically the economic competitiveness of the United Kingdom

increasing the effectiveness of public services and policy

enhancing quality of life, health and creative output. (ESRC, n.d.-b: What is impact?)

These societal and economic goals are articulated to explain and justify the U.K. “funding regime that . . . embeds knowledge transfer as a permanent core activity in universities” (HM Treasury, 2004, p. 76). The required shift in professional practice for university researchers is represented in terms of “the behaviour and attitudes that RCUK wishes to foster” (RCUK, n.d.).

At the level of implementation, the policy becomes a demand for each funded project to produce a “pathways to impact” plan, and to generate and demonstrate impact. The ESRC’s role includes evaluating impact plans and giving the advice analyzed below.

In this chain of recontextualization, the policy goals and the potential ways forward are progressively transformed or narrowed by decisions about how they can be achieved through specific demands and procedures. By viewing these policy statements in a sequence, from global economic priorities, to researcher “behavior and attitudes,” to calls for “succinct messages” (below), social scientists and discourse analysts can usefully recognize and question each successive narrowing.

While such analysis is not the focus of this article, such successive transformations and narrowing can be observed within single texts. The extract below, from a page within the impact toolkit titled “Why is KE important?”, consists of a series of categorical and potentially non-controversial statements. The policy and rhetorical action is achieved through making them sequential. As is often observed in critical discourse analysis (e.g., Fairclough, 1992b), the most ideological and problematic steps are those which are omitted, and which the reader has to presuppose to make sense of the text.

The UK has a strong science base, but performs less well in capitalising on new research to generate innovation. Effective KE is vital in ensuring research is translated into policy and practice. As a funding body, the ESRC spends over £211 million a year on research, training and knowledge exchange. We want to ensure that our funded research is not only of the highest quality, but also has a positive impact on society. KE is therefore fundamental to the way we work. (*) However good your research, there is little point in doing it if nobody knows about it. If your research is to make a difference to policy or practice it must be accessible to potential users and other interested parties. Thinking about who these might be and how to actively engage with them through the lifespan of your research will help you to:

Gain a better understanding of the needs of potential users, their expertise and their perspectives on your chosen topics

Inform and improve the quality and focus of your research

Gain valuable new skills

Increase the prospects of your research being applied. (ESRC, n.d.-b: Why is KE important?)

For example, the statement at (*) would appear to be utterly obvious in a profession so focused on peer review and publication. Yet juxtaposing it with the subsequent statements potentially redefines “nobody” as “nobody outside academia,” which has fundamental implications for research.

The extract ends by bringing the U.K. societal and economic goals right down to the personal “gains” and skills for “you” as a researcher. The role of the impact toolkit is then to guide social science researchers in how to achieve those societal, economic, and personal goals, by “ensuring research is translated into policy and practice.”

The ESRC’s Impact Toolkit and “Step-by-Step Guide to Maximising Impact” (SSG)

Our impact toolkit

The impact toolkit is the core of the ESRC’s written advice for its funded researchers. Launched in January 2011, it is described by the ESRC as “a practical tool” which “draws on best practice from investments” (ESRC, 2011).

It consists of more than a hundred separate web pages. Its

Within that toolkit, I analyze the “SSG” because it is one of the most explicit and practical guidance sections. Elsewhere in the toolkit, the researchers are directed to the SSG for “practical guidance on planning research impact.” It is a section in which the ESRC goes beyond merely reiterating demands and policy statements, to offer

The ESRC claims that the SSG addresses the readers’ need, both practically and intellectually. The introductory page “Developing a strategy” claims that “This part of the toolkit gives guidance on how to maximises impact” [ This takes you through

The page headings within the SSG identify the steps and approach. The introductory page (1) is followed by

2. Setting objectives
3. Developing messages
4. Targeting audiences
5. Choosing channels
6. Planning activities
7. Allocating resources
8. Measuring success. (ESRC, n.d.-b: Developing a strategy: Step-by-step guide)

Pages 2, 3, 4, and 5 are most specifically about communication, so they are my focus in the analysis and the frequency tables below. But first, it is worth simply quoting from pages 3 and 5 to offer a further sense of the conceptual understanding of communication offered by the SSG, and of the style of the toolkit as a whole:

(3) Developing messages

When drafting your key messages, avoid using overly complex statements . . . Remember that key audiences such as journalists and policymakers are overloaded with information and may not remember your messages if they are too complex. Ensure that the language you use is appropriate for the audience . . .

(5) Choosing channels

It is important to consider the most appropriate channels to reach your target audience . . . for example: . . . why an email bulletin rather than face-to-face contact?

Comment: The SSG as an Example of Informing Professional Practice

Across the SSG, and more widely in the impact toolkit, the guidance consists of simple and categorical statements of this kind. The simplicity and conceptual repetition (and the often circular linking of pages) make the SSG and wider toolkit far less comprehensive than they first appear.

It is perhaps necessary to remember that it is written for use by social science researchers. Even if the reader is not involved in research specifically related to communication or knowledge claims, they are nevertheless involved daily in complex communicative practices ranging from engaging students to managing teams and institutional politics, and in making subtle decisions about their communication strategies in meetings, emails, reports, and academic articles.

Many of the guidance statements above could be applied equally to those familiar communicative practices. If they were, they might be criticized for merely stating the obvious. In other words, such statements are

Reading such guidance can prompt us to ask the basic questions that we should ask of any text that aims to inform professional practice. Is it really informing the readers (here social scientists) of things they do not already know? Does it really address the challenges they face (in communicating their research to people in other contexts and professional worlds)? Does it offer the kind of conceptual understanding and practical detail they need to achieve it? Moreover, from all that we know from research and practice, is this really the most useful information that we are currently able to offer?

When involved in a research communication project, I raise such basic questions and concerns with the team, and suggest strategies for finding out the answers. I would raise very serious concern if a research team produced a guide for professionals that diverged so markedly from the discourse practices of those professionals, was so devoid of explicit research insight or evidence to support the guidance, and was so universal, directive, and categorical. I would be concerned about whether it could create a productive relationship with the user, whether it would inspire or actually deter use of the research, and whether the team had really investigated or understood the challenges and the varied contexts of use. Those questions seem highly relevant in this case.

I would argue that while the

Analysis

Van Leeuwen identifies several ways in which a social or professional practice may be recontextualized and so transformed by representations of it. To structure this analysis, I explore six potential transformations:

Relocating purposes

Rearrangements and (re)ordering of actions

Evaluations: the concepts offered to guide and review communication

Substitutions: the representations of policy makers, practitioners, and publics

Substitutions: the representations of communicative actions

Legitimating the guide and the demand to maximize impact

Relocating Purposes

Questions like “why are we doing this?” or “what for?” are as central to research communication as they are to any activity. Van Leeuwen (2008) observes that representations of practice in discourse can involve adding, articulating, and/or potentially transforming the purpose(s) of that practice, including the participants’ own sense of “what for” (p. 20).

A first observation of the purposes articulated within the SSG is that these are almost all

. . . Every strategy is different, but you can use these steps as a template to develop your own. Your strategy takes you from where you are now to where you want to be. (ESRC, n.d.-c)

In this way, the text simultaneously locates the purposes within ESRC procedures, yet also claims they are individual and come from “you.” This is a familiar feature in advertising, in which demands are both personalized and mitigated by invoking notions of freedom and choice (Fairclough, 1992b). It also echoes a pervasive and problematic discourse in education (critiqued in various fields including in Hyland, 2003; Mercer, 1995; Swales, 1990) in which individuals are required to generate

The guide presents “you” with the demand to be (already) clear about “your objectives,” but without any useful indication of how those objectives might be developed and clarified (see

Build awareness of the project among a defined audience

Secure the commitment of a defined group of stakeholders to the project aims

Influence specific policies or policy makers on key aspects

Encourage participation among researchers or partner bodies (ESRC, n.d.-b: Step-by-step guide: Setting objectives)

Arguably, these objectives are merely an extension of the policy demands rather than useful intentions informed by practice. They appear irrefutable because in each case it would be difficult to advocate the opposite. In van Leeuwen’s (2008) terms they are “moralized actions”: abstractions that merely “trigger intertextual references to the familiar discourses and values that underpin them” (p. 126). For the research team, each objective only begs the kind of practical (what, who, how, and why) questions that the team already face. They are about impacts, rather than about the people, ideas, and communicative processes that might one day achieve those impacts. They contrast markedly, for example, with the respectful, self-questioning, experience-based “tips” for engaging with policy makers offered by Goodwin (2013).

The SSG offers no conceptual basis, and no investigative strategies, from which readers can develop their own objectives, or review them. There is no link to case studies that could usefully show, for example, (a) that it is necessary to

Instead, the ESRC merely tells researchers to “make sure that you set SMART objectives”: “specific,” “measurable,” “achievable,” “relevant,” and “time-bound.” This is a framework available on many project management and business advice websites. Its representation as a checklist of demands, with these particular elaborations of each letter (cf. Jisc InfoNet, 2008), reinforces the ESRC demand that “impact must be demonstrable” (impact toolkit: what is impact?). This representation reflects a current managerial policy focus on “performance indicators”; it is an interesting shift from its original use. Morrison (2011) and others attribute SMART to Doran (1981), and observe that as an industry consultant, Doran made it explicit that “in certain situations it is not realistic to attempt quantification” (cf. “measurable”), and that it “can lose the benefit of a more abstract objective” (quoted in Morrison, 2011). Doran’s caution is vitally important in research communication, given the temptation to pursue measurable and achievable performance indicators in place of the less tangible and more challenging long-term societal impacts desired in U.K. policy.

In the FP7 ORCHESTRA project (see “Drawing Insight From Case Studies,” above), the team started by investigating the current policy commitments, professional practices, and concerns and priorities of policy makers and potential users. First, as in many projects, it became clear from interviews and discussions that knowing how to use the research in practice was only one small part of the challenge faced by practitioners. Merely “building awareness” and providing information from the research was not the route to professional uptake. Instead, the project needed to investigate and address wider issues, including current user experience, confidence and concerns, reliability in practice, and issues around the regulatory demands, debates, and acceptance.

Second, it became clear that to “influence policy makers” or “secure the commitment” of some users, as the ESRC suggests, would merely increase the concerns of others, and so position the project on one side of an unproductive debate. To retain the integrity and value of the research, we needed to critically inform the debate, rather than just join it. Social and historical studies of both science and education have shown that too many new methods and technologies have followed the same trajectory: overstated initially, and then discredited when they are misapplied, used too widely, or used with insufficient scrutiny. So in this case, instead of promoting use of the technology, our objective was to promote a critical understanding of it and of its limitations to inform a wise and appropriate use.

In other words, a credible, effective, and professionally responsible approach required a long-term view of impact, and required objectives and strategies directly counter to simply “maximizing impact” in the short term. In each way, the intellectual challenge of developing and clarifying objectives is of a different order from the simple self-determined process advocated by the ESRC guide.

Rearrangements and (Re)ordering of Actions

Questions like “where do we start?” “what do we do next?” and “where are we heading?” are likely to emerge during any unfamiliar communicative endeavor. Addressing them can be a potentially vital part of any guide. Yet van Leeuwen (2008) argues that in representations of practice, “elements of the social practice, insofar as they have a necessary order, may be rearranged” (p. 18). Representations can reverse action and reaction, as well as the chronological or rhetorical sequencing.

As its name suggests, the

The first stage of developing your strategy is to set out a clear statement of your objectives. This should link to your goals and how you will evaluate the success. (ESRC, n.d.-b: Step-by-step guide: Setting objectives)

The subsequent

Arguably, this functions as a “regulatory discourse” (Bernstein, 1990; Chouliaraki & Fairclough, 1999) as it constructs the social relations in such a way that the researcher appears as the main (or sole) actor, and so appears responsible for the success or failure of the impact. However, it also represents and potentially encourages a kind of arrogance that researchers and research policy would be wise to avoid if U.K. research is to engage other professionals.

By contrast, I would argue that it is only through investigations and dialogue that a researcher can develop a sense of (a) what the research might contribute to professional practice and debate, (b) what may be seen as significant by practitioners, and (c) what may be needed to make it useable in practice. As in the ORCHESTRA example (see previous section), a primary element in almost any research communication process will be to

Contrary to the guide, I regard it as a primary organizing principle for research communication, that

Case study observations of research communication practice can usefully help us to understand alternative, but no less rigorous, orderings. For example, in the ORCHESTRA project the dissemination actions and outputs had to be proposed by partners prior to the contract (as is usual in EC proposals) with no opportunity for face-to-face discussion. Within the later discussions, I realized that our agreed actions and outputs functioned as “boundary objects” (Leigh Star & Griesemer, 1989), with partners from different disciplines holding very different assumptions about what an output would be and what purpose it would serve. It was only when actually organizing the event or collaboratively writing the output that the different assumptions became apparent. At that stage, making good decisions required explicit and reasoned discussion about the function and form of each output: the leaflet, the workshop, the video, etc.

Such an ordering of actions is actually the opposite of the simple ordering assumed by the ESRC. Yet it has a clear rationale: Each medium and genre (“channel”) is effectively a “resource for making meanings” (Halliday, 1978, p. 192) or “semiotic resource” (van Leeuwen, 2005, p. 3) that brings possibilities for what can be communicated and to whom. Having proposed a leaflet or video, the team is able to imagine and discuss together what could be communicated and potentially achieved by it. In other words, the planned “output” or “channel” provides a frame for then collaboratively “developing messages,” and developing and refining the “objectives.” This reverse ordering does not displace the ESRC ordering; both may be evident and useful. But understanding it is vital to enable researchers to see the value and the potential for creativity and intellectual rigor within their own ordering. If researchers only have the ESRC model in mind as the goal, they may view their own collaborative process merely with frustration, as the result of cross-institutional misunderstanding and a failure to define their objectives and messages first.

Evaluations: The Concepts Offered to Guide and Review Communication

In the various literatures around research communication, there is an often-cited and valuable distinction between the “instrumental utilisation of research” and the “conceptual utilisation of research” (Caplan, Morrison, & Stambaugh, 1975, cited in Scott, 2000, pp. 5, 11; ESRC, 2009; Nutley, Walter, & Davies, 2007).

An often-quoted development of this point, from Weiss (1980), is that

Instrumental use is often restricted to relatively low-level decisions, where the stakes are small and users’ interest relatively unaffected. Conceptual use . . . can gradually bring about major shifts in awareness and reorientation of basic perspectives. (quoted in Scott, 2000, p. 11)

Davies et al. (2008) further emphasize the value of using research in conceptual ways:

on the ground, research and other forms of knowledge are often used in more subtle, indirect and conceptual ways: bringing about changes in knowledge and understanding, or shifts in perceptions, attitudes and beliefs, perhaps altering the ways in which policy-makers and practitioners think about what they do, how they do it, and why. (p. 189)

This conceptual/instrumental distinction is itself a good example of a concept that offers practical value. Once a researcher has heard it, it becomes a way to think about formulating and communicating research ideas, and about what conceptual understanding may be necessary for any

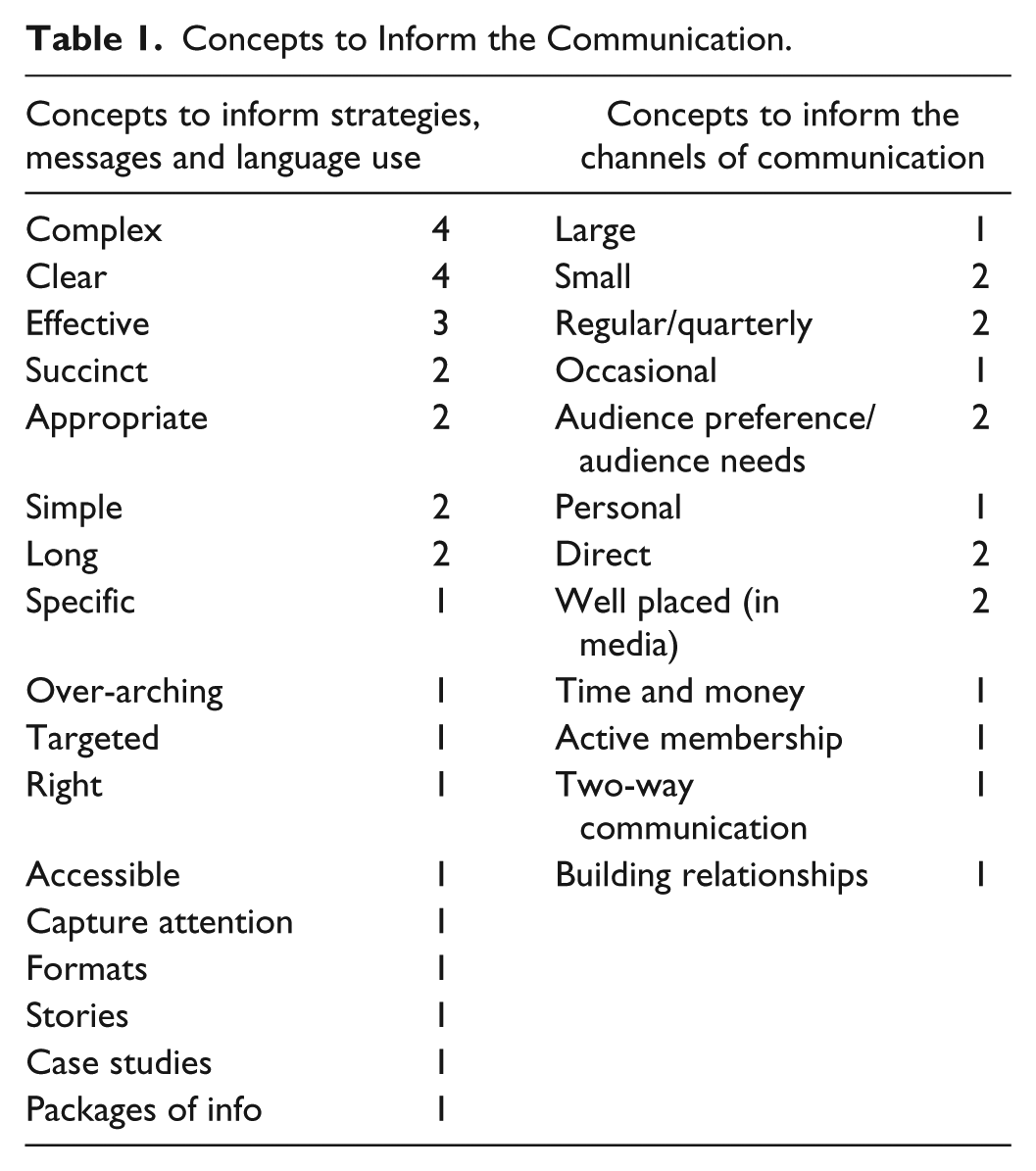

Given the diversity of U.K. social research, and the diversity of potential users, stakeholders, and contexts of use, it is legitimate to ask what conceptual understanding of communication is offered by the SSG. Table 1 therefore lists the concepts offered to researchers to inform and evaluate their “strategies,” “messages,” “language,” and “channels.”

Table 1. Concepts to Inform the Communication.

Van Leeuwen (2008) observes that representations of social and professional practices involve evaluations of what is “good,” “bad,” “useful,” or “interesting,” and why (pp. 18-21). In this case, the SSG reader already knows that “clear,” “effective,” and “accessible” are good, because the opposites are clearly not good. These are

In practice, to produce a “succinct” text for an unfamiliar readership is likely to involve investigating the professional expectations of the genre, and more specifically, what information can be assumed and what needs to be articulated for those readers. That kind of interactive investigation is vital if the research outputs are to have professional credibility, and not be dismissed as, say, abstract, patronizing, useless, or unreadable.

The concept of “appropriate” texts and language has been a focus of critique for many years (e.g., Fairclough, 1992a). What it actually

In this way, the guide appears to draw on a familiar and much-critiqued discourse of communication as “decontextualised skills” (Ivanič et al., 2009), as if one can engage in “appropriate” and “effective” communication guided simply by a universalized notion of “clarity,” without knowing or investigating the specialist genres and discourses of the professionals one wants to communicate with.

The SSG does offer one example of how to “ensure that the language you use is appropriate for the audience”:

For example, the Institute for Social and Economic Research published a report called

While the original press release may have been wise, its inclusion as a single example of “appropriate” language is highly problematic, especially without any concepts with which to review it. It is likely to reinforce the common notion that researchers need to adopt a more “tabloid” discourse to communicate beyond academia and generate impact. There are no warnings about the consequences.

I would argue that if publicly funded university research is to have impact, then it needs to retain the integrity and rigor that is its strength and core value. So any advice on making research engaging and accessible must, above all, include advice on monitoring whether the original research claims have shifted in the process. In her classic article, Fahnestock (1986) analyzed “the fate of scientific observations as they passed from original research reports intended for scientific peers into popular accounts aimed at a general audience” (p. 275). She identified significant transformations in the apparent focus of the research and the scientific claim (in the example above, from “atypical employment” to “kind of work,” and from “well being” to “bad for you”). She observed that scientific claims can become more certain within popularizations, as the theoretical perspectives, experimental constraints, and elements of context are put aside in favor of simply reporting a finding.

The ORCHESTRA project repeatedly demonstrated the fundamental sociolinguistic point that changing the language changes the meaning. To increase the “clarity,” “relevance,” and “accessibility” of research findings often involves requesting further conceptual clarity from the researchers. To ensure that every statement is

Substitutions: The Representation of Policy Makers, Practitioners, and Publics

Substitution is described by van Leeuwen (2008) as “the most fundamental transformation” (p. 17). Participants and actions can be particularized or generalized, aggregated or nominated in ways that can transform their apparent relations and identities.

In the SSG, the researcher is individualized and activated as “you” with responsibility for all actions. At the same time, the policy makers, industries, practitioners, and publics who may use the research (and/or benefit or lose by its use) are all aggregated and passivated. Of 58 references to other people, 29 represent them as an “audience.” Indeed, 65% of all references to other people define them solely in their relation to “your research” (Table 2, left column).

Table 2. Representations of Those To/With Whom the Researcher Communicates.

The right column shows the only groups identified specifically by profession. (An additional linked PDF page simply lists sector categories such as “Large business,” “SMEs,” “Senior Civil Servants,” and “Trade unions.”)

Of these, MPs and journalists are two professions that can create visibility at government level for ESRC-funded research, and so may influence policy. A new section appeared in the toolkit in early 2012 on “Taking the research to Westminster” and “Contact[ing] government organisations.” Yet a focus on them represents a potentially centralist, top-down view of innovation and professional change. It is also highly problematic in terms of achieving informed and wise impact for the U.K., since MPs and journalists are at least one step removed from the detail of most professional practice.

Taking U.K. university research directly to MPs and journalists risks bypassing vital peer review from experienced practitioners. From experience, I would argue that professionals in fields relevant to the use of the research are a vital source of insight—about whether and how the research could be useful in practice, what problems, concerns, and questions there will be, and what might need to be communicated for people to understand it. Practitioners are potentially the most able to trial it, challenge it, refine it, and initiate its use.

The SSG contains no reference to such dialogue with practitioners. There is nothing on what the researcher might gain or learn from them, or on the ways in which different “audiences” might use different aspects of the research in different ways. This omission in the SSG contrasts with the ESRC demand, for example, that

by considering impact from the outset, we expect you to

Explore who could potentially benefit from your work.
Look at how you can increase the chances of potential beneficiaries benefiting from your work. (ESRC, n.d.-b: What the ESRC expects)

These two

Substitutions: The Representation of Communicative Actions

The page headings of the SSG represent the communicative process as “setting objectives” to deliver “messages” to “audiences” through “channels.” In the detail, the communicative process is similarly represented as material actions:

Table 3. Actions by “You,” To/With/For Others.

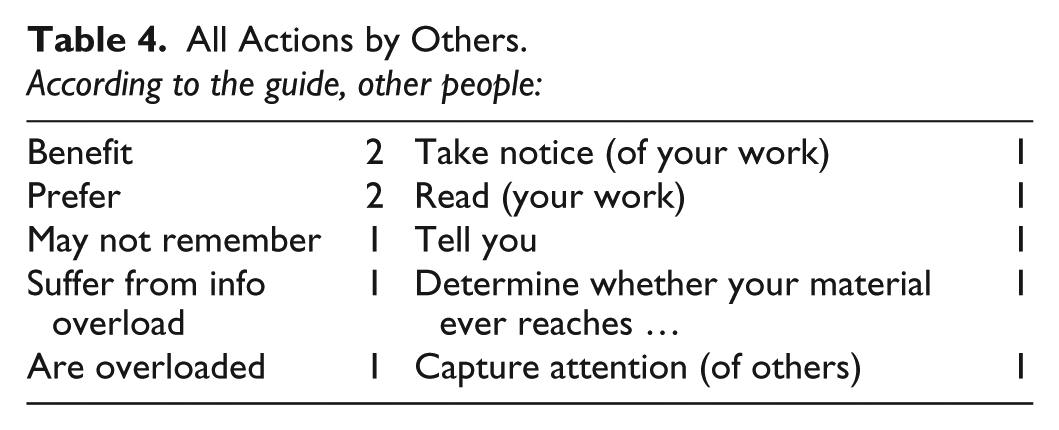

There are no interactive processes like “discuss” or “ask” or even “explain.” Communication is represented as a mechanical process of information management, or “shunting information” (Smith, 1985), rather than generating interaction and understanding. (The single reference to “consider” is the only finite process that explicitly represents a response to what the other people may say; “two-way communication” is mentioned once as a noun.)

Consistent with this, the actions also passivate others as recipients of activity or as being subjected to it. For example, “journalists are worth cultivating too.” The only actions (finite processes) for which other people are the agents are shown in Table 4, column 2, yet in each case their actions are reactions, and solely in relation to the research. (The activated process in the right column is associated only with MPs, MPs’ researchers, and CEOs’ assistants, discussed above.)

Table 4. All Actions by Others.

There are pages elsewhere in the toolkit that can at first appear to counter this passivation of others. For example, a page alongside the SSG, titled “How to maximise impact,” calls for “establishing networks and relationships with research users,” for “involving users at all stages of the research,” and for “well planned public engagement and knowledge exchange strategies” as “key factors that are vital.” This sounds like advocacy of dialogue. However, it remains consistent with the SSG: first, it gives all agency to “you,” the researcher, and second, it offers no indication of what you may learn from doing it.

The reality of any research communication process is that the potential users and other key “audiences” are likely to be already informed by extensive professional experience, and possibly by other research. They will have well-established institutional practices, professional debates, current controversies, and concerns, and they may have commitments to existing policies. All of that forms the context and the real challenge of research communication.

Yet there is nothing in the SSG to prepare you for this. It implicitly reproduces a view of research communication, and more broadly of science and society, that has been much critiqued (e.g., Irwin, 1995; Myers, 2003; Wynne, 2005), in which the professional and public worlds are unknowing, separate from the research world, and a blank slate waiting to receive the research.

Legitimating the Guide and the Demand to “Maximize Impact”

Finally, I turn to the issue of legitimation. Van Leeuwen (2008) argues that “texts not only represent social practices, they also explain and legitimate (or delegitimate, critique) them.” Texts explain (or assume) why a social or professional practice “must take place in the way that it does” (p. 20). In analyzing the SSG, I suggest it is useful to explore how it legitimates (a) itself and (b) the demand for every research project to “maximize impact.”

For the guide itself, one source of legitimation is the use of an all-knowing expert voice: The categorical statements appear to know the problems “you” will face, and how to address them. It is self-evidently in “your” interest to follow the advice:

An effective strategy needs to have . . . ; it is worth . . . ; it is useful to . . . ; it is important to . . .; In order to . . . you need to . . .

A further source of legitimacy is simply that the advice offered is so basic that, in van Leeuwen’s (2008) terms, it appears to be “common sense and in little need of legitimation” (p. 20).

Yet categorical claims and imperatives (like “avoid using . . . ,” “ensure that . . . ,” “find out . . . ,” and “don’t assume . . . ”) usually need a further source of legitimation. In this case, it is provided implicitly by the role and status of the institutional author, the ESRC. This author has the ultimate power of judging “your” funding applications. So their advice is an indication of what will get research proposals funded. Indeed, in procedural terms, it can be useful for the ESRC-funded researcher if the advice is singular and categorical.

It is therefore vital to recognize that this guide is

Perhaps the most interesting observation in terms of legitimacy is the ESRC’s departure from academic and scientific practice. Within research, a traditional source of legitimacy is to cite and build on what others have said and done before. In social science, legitimacy also involves offering empirical evidence of some kind. Those are the professional practices of the intended readers of this guide. As a research funder, the ESRC usually insists on such practices. Moreover, there is a huge amount of research available to inform this guide.

Yet the ESRC breaks with academic practice by offering no research insights or evidence. There are no social science references. Even in a policy document outside academia, we could expect some support and referencing. In this case it appears that the institutional power of the author, and the common sense nature of the advice, make research and evidence unnecessary.

The ESRC guide and toolkit thereby place the practice of research communication, and the ESRC’s process of guiding it,

An alternative approach could have been to make research communication intellectually attractive to researchers. The guidance could have concisely summarized at least some of the academic insights which point to the challenges of research communication and which reveal it as an intellectually interesting activity. The equivalent guide from the EC (2010) does so. The ESRC guide could have built on the traditions of participatory and action research in the social sciences, to inform a process of creating productive relationships with stakeholders and potential users. Yet the ESRC chose not to do so.

Finally, I turn to our second question of how the guide legitimates the policy demand for every project to “maximize impact.” This is the demand for impact generation to become “a permanent core activity in universities” (HM Treasury, 2004, p. 76). The answer would appear to lie precisely in the simplistic nature of the advice. The representation of research communication as a series of self-evident and easy tasks, needing only common sense strategies, makes impact

If the ESRC had drawn on the available literature and offered a fuller understanding of research communication and impact generation in practice, it would have risked pointing to the “largely insurmountable challenges” (Davies et al., 2008, p. 189). Instead, the ESRC discourse echoes the commercial discourse analyzed by Fairclough (e.g., 2002, p. 115), in which companies minimize the apparent task: You “just” do this.

As if anticipating this guide, van Leeuwen (2008) observes that “some texts are almost entirely about legitimation . . . and make only rudimentary reference to the social practices they legitimise” (p. 20).

Concluding Comments

In this article, I have suggested a three-point agenda for social science to inform the cross-institutional communication of research as a rapidly growing area of professional academic practice. I have illustrated the need for a fundamental critique of the current guidance discourse by analyzing the ESRC’s

Ironically, the guide provides a good example of why the uptake of research, and the connecting of research and practice, is not just about making research accessible or available. The guide is written by the U.K. organization whose function is to promote the use of social science research, and which has unique access to the body of research and to scholarly advice. It nevertheless chooses to put all this aside in favor of informing a U.K. shift in professional practice on the basis of a naively rationalist common sense discourse. It can even seem functional to do so.

A major challenge when communicating with policy makers and practitioners is precisely to make the case that research insight may be useful in practice. It often involves struggling with a dominant professional ideology that practice is simply common sense, and that it is better guided by the common sense of managers without reference to research. This is a particular challenge for social scientists, whose findings are not technologies or

So does the guide matter? As always, practitioners may realize the guidance is inadequate, and may draw on other insights and develop their understanding from reflective practice to achieve some success. That is very likely in the case of communicating research and generating impact. However, I suggest the inadequacy does matter in practice.

First, it matters within the culture of universities. Separating this vital activity from academic debate, and representing it as simple common sense, only serves to give it low status in relation to research. Focusing the purposes and actions on bureaucratic and procedural demands separates and undermines it further. There is an already pervasive assumption that research communication happens after the intellectual work of research, rather than constituting part of that work. This guide appears to confirm it. It therefore undervalues the intellectual work carried out by anyone who genuinely wants to engage with practitioners and policy makers to communicate their research. It is an example of how to undermine and

Second, it matters outside the university, in industry, policy, and public arenas. In each part of the analysis above, I have suggested that if the guide were actually followed, it would risk producing uninformed actions that lack credibility among the intended “audiences,” and risk undermining the integrity and rigor of the research. Moreover, the lack of status of the activity, the focus solely on the researchers’ own objectives, the lack of any recognition of the value of practitioner review, and the view of professionals simply as receiving “audiences” will not go unnoticed by those “audiences.” The focus on quick visibility with politicians and journalists, rather than engaging with the complexities of practice, can only undermine it further. In each way, the guidance can actually undermine the policy of maximizing the impact of social science research.

Third, it matters within the funding councils themselves and the wider U.K. policy, because it institutionalizes an attractive and reductive understanding of research communication among those who influence funding. The evident danger of institutionalizing such a simplistic view is that the ESRC reviewers of research proposals may not recognize the dangers and the sheer inadequacy of proposals that merely promise to write to MPs and “get the existing information to everyone for very little cost.” Consequently, they may reject as “over-evaluated” and too costly those proposals that recognize the challenges and that realistically cost the intellectual and practical work needed to distill the research in new ways, engage with practitioners, and investigate current practices, concerns, and debates. If so, such funding decisions will run directly counter to the goal of enabling social science research to contribute to society and the economy.

Author’s Note

Earlier versions of this analysis were presented at the ESRC conference “Bridging the Gap Between Research, Policy and Practice” (Pardoe, 2011a), and at the interdisciplinary conference on “Applied Linguistics and Professional Practice” (Pardoe, 2011b). I would like to thank Dr. Sherilyn MacGregor, the participants in the two conferences, and the anonymous peer reviewers for their helpful, critical, and encouraging comments.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research and/or authorship of this article: European Commission (FP7 project 226521, 2009-12).