Abstract

Background:

Depression and anxiety affect nearly 1 in 4 Canadians. Traditional patient education materials, such as handouts, are often lengthy and difficult to understand, leading to disengagement. Human-like artificial intelligence (AI) avatars offer a novel way to supplement education by delivering consistent, engaging video content that mimics human interaction and is easily accessible online.

Objective:

This pilot study aimed to develop a human-like, non-generative AI avatar educational video to support education on antidepressants for patients living with depression and anxiety. The secondary objective was to evaluate participants' perceptions of the tool across 3 domains: credibility, satisfaction, and understanding.

Methods:

The video was developed through 2 Plan-Do-Study-Act (PDSA) cycles, informed by prior research on patient-reported barriers and enablers to antidepressant use. After viewing the video, participants completed a survey assessing the 3 domains. Success was predefined as ≥60% of participants rating each domain ≥4 on a 5-point Likert scale. Open-ended feedback was summarized descriptively to help inform revisions.

Results:

Fifteen University Health Network (UHN) Patient Partners participated in PDSA Cycle 1, most with lived experience of depression or anxiety and high digital literacy. Success thresholds were achieved for credibility (75%) and satisfaction (67%) but not for understanding (50%). After revisions, 10 participants from the original group completed PDSA Cycle 2, where all domains exceeded thresholds (credibility 90%, satisfaction 85%, understanding 82%). Participants described the tool as trustworthy, clear, and engaging.

Conclusion:

This pilot study demonstrated that human-like, non-generative AI avatars can be an effective supplementary educational tool to deliver education on antidepressants for individuals with depression and anxiety. The tool demonstrated acceptability across credibility, satisfaction, and perceived understanding, highlighting its potential to enhance patient engagement and access to reliable information. As a scalable and adaptable format, avatar-based education may extend beyond mental health to other conditions, languages, and clinical settings. Future studies should examine its impact on knowledge retention, treatment adherence, and integration into clinical practice.

Keywords

Introduction

Mental health disorders are leading contributors to the global disease burden, with depression and anxiety ranked among the ten most disabling conditions worldwide. 1 More than 300 million people live with anxiety-related disorders and 280 million with depression, affecting over 7% of the global population. 2 These conditions impair daily functioning and quality of life, contributing to over $50 billion annually in healthcare expenditures and lost productivity. 3

Management of depression and anxiety commonly involves a combination of psychotherapy and pharmacotherapy. 4 While cognitive-behavioral therapy (CBT) and related interventions are effective, pharmacotherapy remains the cornerstone of treatment for moderate to severe symptoms. 4 Selective serotonin reuptake inhibitors (SSRIs) and serotonin-norepinephrine reuptake inhibitors (SNRIs) are widely prescribed; however, adherence to these medications remains suboptimal. 5 Approximately one-third of patients discontinue antidepressants within 3 months, and nearly 55% discontinue within 6 months. 5

Multiple patient-reported barriers to adherence have been identified, including fear of side effects, preference for non-pharmacological approaches, uncertainty about effectiveness, and stigma. 6 Conversely, facilitators include positive treatment experiences, structured routines, and trust in healthcare providers. 6 Patients have also emphasized the importance of reliable and personalized education available in multiple formats. 6 Key priorities for educational resources include information on the safety and effectiveness of antidepressants, a better understanding of depression, medication administration, healthcare experiences, and social influences. 6

Traditional education tools, such as printed medication handouts, are often lengthy, difficult to understand, non-specific, and easily misplaced. 7 Many patients instead turn to unverified online sources, with 23% reporting harm and 43% reporting distress from misinformation. 8 Video-based medication education has emerged as a promising alternative to improve health literacy and satisfaction. 9,10 However, conventional video production is time-consuming, costly, and difficult to revise as new evidence emerges. Artificial intelligence (AI) offers a scalable and efficient approach to deliver personalized, reliable, and engaging education. 11

Among AI applications, non-generative AI tools such as scripted avatars are particularly suited for healthcare because they ensure accuracy and consistency, whereas generative AI can create new content dynamically but risks introducing misinformation when used for patient education. 12 Human-like avatars, when designed to resemble healthcare providers in tone and appearance, may be perceived by patients as more trustworthy and relatable than cartoon characters. 13

Despite increasing interest in AI in healthcare, limited research has focused on supplemental patient education on antidepressant medications. To our knowledge, this pilot study is the first to develop and evaluate a human-like, non-generative AI avatar video designed to improve antidepressant education for patients living with depression and anxiety. The study also aimed to examine participants' perceptions of the avatar video as an educational tool across 3 domains: credibility, satisfaction, and understanding.

Methods

Study Design and Setting

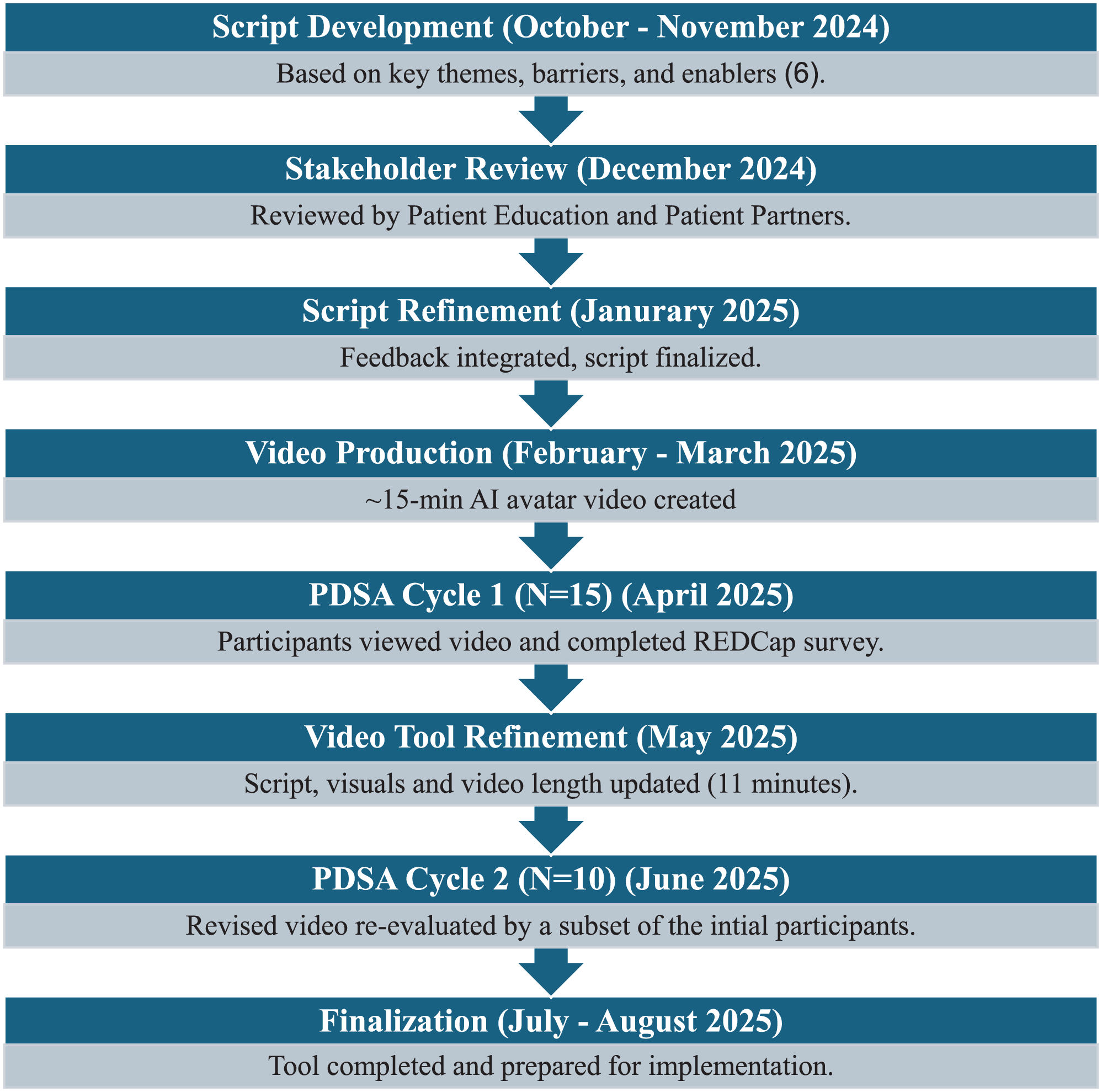

This pilot study used 2 Plan-Do-Study-Act (PDSA) cycles, conducted between February and August 2025, to develop and evaluate a human-like AI avatar educational video on depression and anxiety. The study was conducted at the University Health Network (UHN) and led by an interprofessional team from the Toronto Western Family Health Team (TW-FHT) in Toronto, Ontario. The overall study design is illustrated in Figure 1.

Figure 1. Development and evaluation design.

AI Avatar Video Development

The educational resource was a scripted, non-generative AI avatar video designed to deliver standardized and engaging education on the management of depression and anxiety, with a focus on antidepressants. The content included an overview of depression and anxiety as medical conditions, the role of antidepressants in treatment, commonly used classes of medications (e.g., SSRIs, SNRIs), expected benefits, potential side effects, strategies to manage adverse effects, and practical considerations such as how and when to take medications. The script also incorporated themes identified in prior qualitative work on patient-reported barriers and enablers to antidepressant adherence in primary care, such as uncertainty about effectiveness, concerns about side effects, stigma, and the importance of treatment routines and trust in providers. 6

The script was co-developed by our project team, which included pharmacists (AB, PM, DK, KL, YM, CP) and a family physician (CJ), with input from a research scientist (SL). To ensure clarity, inclusivity, and adherence to plain language standards, it was reviewed by a UHN Patient Education Specialist. Structured feedback was also solicited from a group of 5 UHN Patient Partners with a history of depression and/or anxiety, who were not participants in the PDSA cycles but were engaged specifically to review and edit the script. Feedback from this group was integrated into the script to optimize readability, inclusivity, and patient-centeredness prior to video production.

The finalized script (Supplemental Appendix A) was uploaded to a non-generative AI video platform that produces human-like avatars. Two avatars, modeled after pharmacists on the study team (AB, CP), were developed to deliver the content in a conversational and credible tone. The avatars were filmed in a clinical consultation room to reflect a real-world primary care setting. The initial video was approximately 15 min in duration; following revisions from PDSA Cycle 1, it was condensed to about 11 min. Post-production edits were completed using Adobe Premiere Pro, including adjustments to timing, captions, and sequencing. Animations and visual aids, developed with support from an interactive arts and multimedia student, were added to further enhance accessibility and engagement.

Participants

Fifteen individuals from the UHN Patient Partner Program participated in this study. This program is a structured initiative that engages people with lived experience to contribute to healthcare quality improvement (QI) across UHN. Volunteer members participate in a range of projects, and invitations to this study were disseminated by the Patient Partners Coordinator to the wider network, with follow-up communication provided to those interested and eligible. Eligibility criteria included being ≥18 years of age, able to speak English, having access to video-capable technology, and the ability to independently complete an electronic survey. While a personal history of depression or anxiety was preferred, it was not required. All participants provided electronic informed consent through the REDCap eConsent platform. 14 A total of 15 participants completed PDSA Cycle 1, of whom 10 also participated in PDSA Cycle 2.

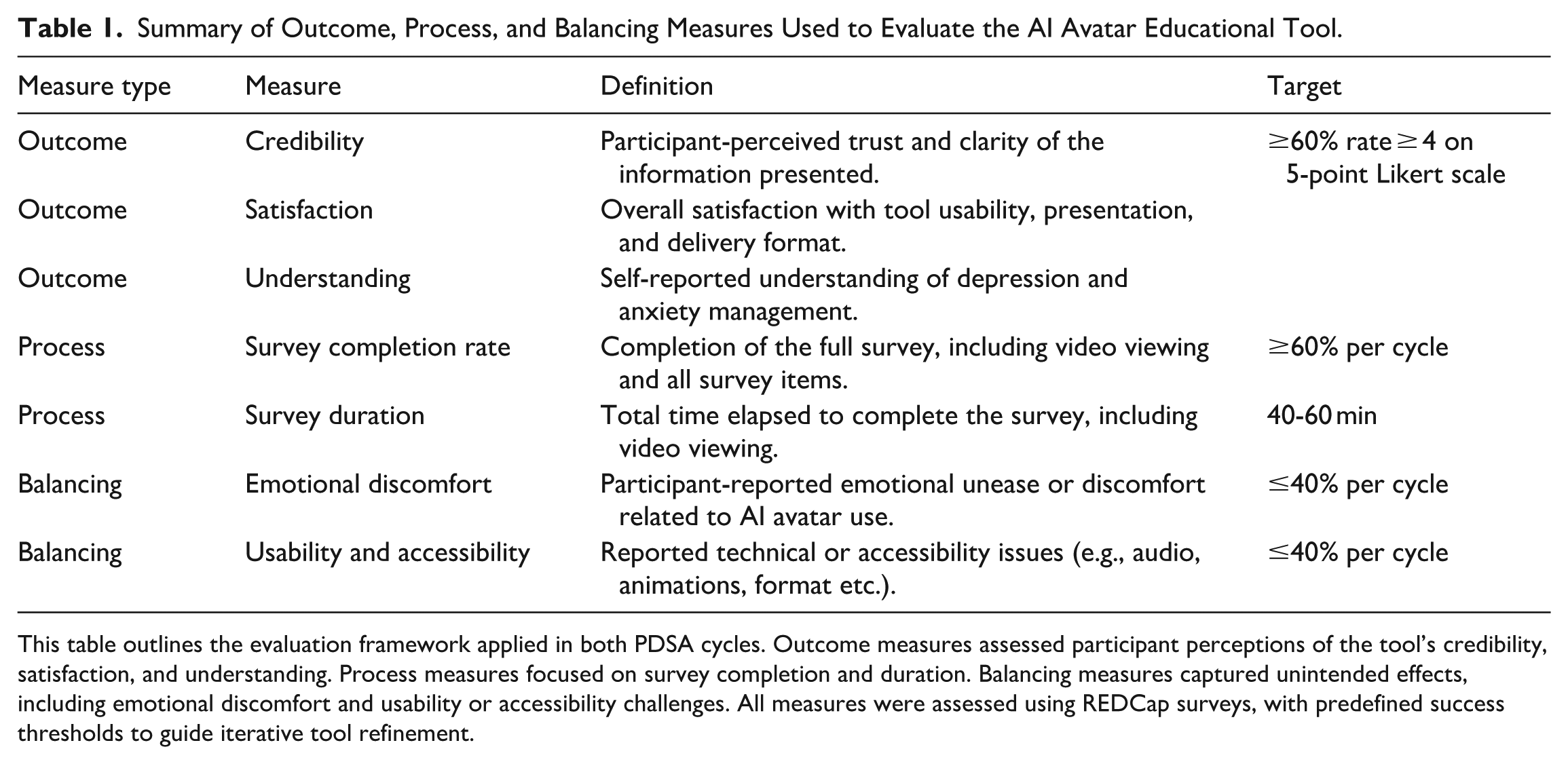

Evaluation Framework

This QI study used an evaluation framework aligned with QI reporting standards, assessing intervention performance across outcome, process, and balancing measures. The evaluation focused on 3 patient-reported domains: (1) credibility (confidence, trustworthiness, and clarity of the information presented), (2) satisfaction (ease of use and engagement), and (3) understanding (comprehension of the information provided by the AI avatars). These domains were developed collaboratively with a senior evaluator with expertise in QI design and informed by prior validated measures of digital health education tools. 13,15 These adapted measures were combined with domains identified in earlier work on patient-reported barriers and enablers to antidepressant adherence, ensuring that the evaluation reflected both theoretical grounding and patient-centered priorities. 6 These patient-reported domains formed the basis for all survey items and were assessed using a structured REDCap instrument. 16,17 A description of the survey content is provided in Supplemental Appendix B.

Table 1. Summary of Outcome, Process, and Balancing Measures Used to Evaluate the AI Avatar Educational Tool.

This table outlines the evaluation framework applied in both PDSA cycles. Outcome measures assessed participant perceptions of the tool’s credibility, satisfaction, and understanding. Process measures focused on survey completion and duration. Balancing measures captured unintended effects, including emotional discomfort and usability or accessibility challenges. All measures were assessed using REDCap surveys, with predefined success thresholds to guide iterative tool refinement.

Data Collection and Analysis

All data were collected using an 11-item REDCap survey that included both Likert-scale questions and open-text feedback. Identifying fields were excluded to ensure the dataset was de-identified prior to export into Microsoft Excel for structured analysis.

Quantitative data were analyzed descriptively, appropriate for the small sample size and QI design. For each Likert-scale item, frequencies, percentages, means, medians, standard deviations, and ranges were calculated. Items were grouped into 3 domains (credibility, satisfaction, and understanding) and domain-level composite scores were generated by averaging across relevant items. Results were reported separately for each PDSA cycle and descriptively compared across cycles. Demographic variables were summarized using frequencies and percentages.
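The descriptive analysis outlined above can be illustrated with a minimal Python sketch. The item names, the item-to-domain mapping, and the ratings below are hypothetical placeholders and are not the study data. The sketch also assumes that a domain composite is each participant's mean across that domain's items, which is one reasonable reading of "averaging across relevant items" rather than a confirmed description of the authors' workbook.

```python
from statistics import mean, median, stdev

# Hypothetical item-to-domain mapping (the real survey had 11 items).
DOMAINS = {
    "credibility": ["q1", "q2"],
    "understanding": ["q3", "q4"],
}

# Hypothetical 5-point Likert responses, one dict per participant.
responses = [
    {"q1": 5, "q2": 4, "q3": 3, "q4": 2},
    {"q1": 4, "q2": 4, "q3": 4, "q4": 3},
    {"q1": 3, "q2": 5, "q3": 5, "q4": 4},
]


def describe(values):
    """Frequencies, percentages, mean, median, SD, and range for a list of ratings."""
    n = len(values)
    freq = {score: values.count(score) for score in range(1, 6)}
    return {
        "n": n,
        "frequencies": freq,
        "percentages": {score: 100 * count / n for score, count in freq.items()},
        "mean": mean(values),
        "median": median(values),
        "sd": stdev(values) if n > 1 else 0.0,  # sample SD needs at least 2 values
        "range": (min(values), max(values)),
    }


def domain_composites(rows, items):
    """Assumed composite: each participant's mean rating across the domain's items."""
    return [mean(row[item] for item in items) for row in rows]


# Item-level statistics.
all_items = [item for items in DOMAINS.values() for item in items]
item_stats = {item: describe([row[item] for row in responses]) for item in all_items}

# Domain-level composite mean and SD.
composite_stats = {}
for domain, items in DOMAINS.items():
    scores = domain_composites(responses, items)
    composite_stats[domain] = {"mean": mean(scores), "sd": stdev(scores)}

for domain, stats in composite_stats.items():
    print(f"{domain}: mean = {stats['mean']:.2f}, SD = {stats['sd']:.2f}")
```

Averaging item means directly instead of participant-level composites gives the same domain mean when every participant answers every item, but a different SD, so the choice should be stated when results are reported.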

Open-text responses were analyzed using a descriptive summary approach. Two team members (AB, CP) independently reviewed all comments to identify recurring suggestions, issues, and areas for refinement, then compared findings and resolved discrepancies by discussion; a third reviewer was available if needed. Comments were grouped into 4 predefined categories aligned with participant priorities and evaluation domains: (1) trust in source and content, (2) avatar delivery and presentation, (3) emotional tone and engagement, and (4) format preferences and barriers.

Balancing measures, including emotional discomfort and usability challenges, were also assessed through survey feedback. These data were reviewed after each PDSA cycle to identify concerns with tone, clarity, or accessibility and to guide modifications. All feedback and decisions were tracked in a QI log. No inferential statistical testing or formal qualitative coding was performed, consistent with the exploratory nature of a pilot QI study. The analytic approach emphasized transparency, feasibility, and responsiveness to participant input.

Ethics Approval

This project was approved by the Quality Improvement Review Committee. As a quality improvement initiative, it was exempt from Research Ethics Board review and conducted in accordance with institutional policies for privacy, data security, and ethical QI practices.

Results

AI Avatar Tool Development

The primary outcome of this project was the development of a human-like AI avatar educational video on depression and anxiety management. The video features 2 digital pharmacist AI avatars (AB and CP) delivering content through human-like narration, with supportive visual elements to enhance clarity and engagement (Figure 2). The final video version included chapter segmentation, animations, closed captioning, simplified language, and inclusive graphics to improve accessibility and user experience (Figure 3).

Figure 2. AI avatar educational tool video interface.

Figure 3. Key design features of the AI avatar tool.

Participant Characteristics

Fifteen participants completed the first PDSA cycle, and of these, 10 completed the second cycle. The majority were aged 30 to 59 years, with over 70% holding a college or university degree. Most reported a history of depression and/or anxiety and had past or current experience using antidepressants (67%). Comfort with digital tools was high, with nearly all participants rating themselves as moderately or very comfortable using technology (Figure 4).

Key revisions to the AI avatar video between PDSA cycles.

Demographic characteristics are summarized in Table 2. All 10 participants in PDSA Cycle 2 were part of the original sample, and their demographic characteristics did not differ meaningfully from the overall group.

Table 2. Participant Demographics.

Summary of participant characteristics (N = 15), including age, education, mental health history, antidepressant use, and comfort with digital technology. These variables were collected to contextualize survey responses and explore trends in participant perceptions of the AI avatar tool. All 15 participants completed Cycle 1. Of these, 10 also participated in PDSA Cycle 2. No meaningful demographic differences were observed between PDSA cycles.

PDSA Cycle 1: Outcome Measures

In PDSA Cycle 1, the credibility (composite mean = 4.25, SD = 0.91) and satisfaction (composite mean = 3.77, SD = 1.38) domains met the predefined success threshold of ≥60%, with 75% and 67% of participants, respectively, rating the domain items as 4 or higher on a 5-point Likert scale. The understanding domain fell below the threshold (composite mean = 3.42, SD = 1.47), with only 50% of participants rating relevant items as 4 or higher. Within the understanding domain, participants rated improved understanding of depression and anxiety (mean = 3.67) higher than increased confidence in starting or continuing antidepressants (mean = 3.00). These results are presented in Table 3.

Table 3. Participant Ratings of the AI Avatar Educational Tool in PDSA Cycle 1.

Summary of survey responses (N = 15) evaluating credibility, satisfaction, and understanding of an AI avatar-delivered video on depression and anxiety. Ratings were based on a 5-point Likert scale. Domain composite scores, shown in bold, were calculated as the average of all items within each domain. The “Rated ≥ 4” column reflects the proportion of participants who rated each item as 4 or 5. A predefined success threshold of ≥60% was used to indicate acceptability.
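For readers who wish to reproduce the "Rated ≥ 4" check, the following Python sketch shows one way to compute it. The ratings are hypothetical and are not the study data. Pooling all item ratings within a domain is an assumption made for illustration; the source does not specify whether domain-level percentages were pooled across items or averaged from item-level percentages.

```python
CUTOFF = 4          # a rating of 4 or 5 counts as favorable
THRESHOLD = 0.60    # predefined success threshold from the study


def proportion_rated_at_least(ratings, cutoff=CUTOFF):
    """Share of ratings at or above the cutoff."""
    return sum(rating >= cutoff for rating in ratings) / len(ratings)


def meets_threshold(ratings, cutoff=CUTOFF, threshold=THRESHOLD):
    """True when the favorable share reaches the success threshold."""
    return proportion_rated_at_least(ratings, cutoff) >= threshold


# Hypothetical pooled ratings per domain (illustrative only).
domain_ratings = {
    "credibility": [5, 4, 4, 3, 5, 4, 2, 4],
    "understanding": [4, 3, 2, 5, 3, 4, 1, 5],
}

summary = {
    domain: (proportion_rated_at_least(ratings), meets_threshold(ratings))
    for domain, ratings in domain_ratings.items()
}

for domain, (share, met) in summary.items():
    status = "met" if met else "not met"
    print(f"{domain}: {share:.0%} rated >= {CUTOFF} (threshold {status})")
```

With these placeholder ratings, the credibility domain reaches the threshold and the understanding domain does not, mirroring the pattern, though not the data, reported for PDSA Cycle 1.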

Revisions Between PDSA Cycles

Open-text feedback after PDSA Cycle 1 was summarized and grouped according to the 4 key themes, with illustrative quotes presented in Table 4.

Table 4. Summary of Qualitative Open-Text Feedback after PDSA Cycle 1.

Participant comments from PDSA Cycle 1, organized by outcome domain and grouped into common content areas to guide iterative development.

PDSA Cycle 2: Outcome Measures

Outcome measures in PDSA Cycle 2 were evaluated using the same post-video survey as in Cycle 1. Table 5 presents a comparison of the percentage of participants who rated each domain with a score of 4 or higher on a 5-point Likert scale. All 3 domains exceeded the predefined 60% success threshold in PDSA Cycle 2.

Table 5. Comparison of Domain-Level Outcomes Between PDSA Cycles.

Each domain represents the average of Likert-scale responses to multiple survey items. Success was predefined as ≥ 60% of participants rating each item within a domain ≥ 4 out of 5. Full item-level results for PDSA Cycle 1 are presented in Table 3.

Participant Feedback Following PDSA Cycle 2

Qualitative feedback from PDSA Cycle 2 highlighted perceived improvements in the tool’s clarity, delivery, and inclusivity. Participants noted that the revised format enhanced usability, with 1 commenting,

Process and Balancing Measures (PDSA Cycles 1 & 2)

Process and balancing measures assessed survey feasibility, participant burden, and unintended effects. Table 6 summarizes completion rates, duration, emotional discomfort, and usability challenges across both PDSA cycles. Average survey duration exceeded the target of 40 to 60 min, taking over 80 min in both cycles. By Cycle 2, all other measures met predefined thresholds, with notable improvements in emotional discomfort and usability.

Table 6. Process and Balancing Measures Across PDSA Cycles.

Survey completion rate reflects the proportion of participants who completed the entire study protocol, including video viewing and survey responses. Survey duration refers to the average time from survey initiation to completion, including video viewing. Emotional discomfort includes any participant-reported unease or distress related to the AI avatar tool. Usability and accessibility challenges encompass reported technical issues, navigation difficulties, or audio-visual concerns. Predefined success targets were based on feasibility and acceptability thresholds for pilot interventions.
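The process measures defined in this note can be derived from survey timestamps, as in the Python sketch below. The session records are hypothetical and are not the study data, and the field names are illustrative rather than actual REDCap export fields. Only the 40 to 60 min duration target is taken from the study.

```python
from datetime import datetime

TARGET_MINUTES = (40, 60)  # duration target reported in the study

# Hypothetical session records (illustrative only).
sessions = [
    {"start": "2025-03-01T10:00", "end": "2025-03-01T11:25", "completed": True},
    {"start": "2025-03-02T14:00", "end": "2025-03-02T15:15", "completed": True},
    {"start": "2025-03-03T09:00", "end": None, "completed": False},
]


def duration_minutes(session):
    """Minutes from survey initiation to completion, including video viewing."""
    start = datetime.fromisoformat(session["start"])
    end = datetime.fromisoformat(session["end"])
    return (end - start).total_seconds() / 60


completed = [s for s in sessions if s["completed"]]

# Completion rate: share of participants who finished the full protocol.
completion_rate = len(completed) / len(sessions)

# Average duration is computed over completed sessions only.
mean_duration = sum(duration_minutes(s) for s in completed) / len(completed)
within_target = TARGET_MINUTES[0] <= mean_duration <= TARGET_MINUTES[1]

print(f"Completion rate: {completion_rate:.0%}")
print(f"Mean duration: {mean_duration:.0f} min (within target: {within_target})")
```

Restricting the duration average to completed sessions avoids mixing partial attempts into the estimate. Whether the study handled incomplete sessions this way is not stated in the source.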

Discussion

This quality improvement pilot study explored the development and evaluation of a non-generative AI avatar video designed to supplement patient education on depression and anxiety in a primary care setting. Across 2 PDSA cycles, participant feedback guided iterative refinements in delivery, format, and content. The final version demonstrated feasibility and acceptability, with measurable improvements across credibility, satisfaction, and perceived understanding, all of which met the predefined success thresholds. Our findings are consistent with a growing body of literature suggesting that AI-driven video tools may support patient engagement and perceived understanding as components of health education. In a previous study, human-like AI avatars delivering surgical education were perceived as more engaging and trustworthy than traditional chatbots, particularly when modeled after healthcare professionals in tone and appearance. 13 In the field of mental health education, AI tools like the eXtended-reality Artificial Intelligence Assistant (XAIA) have shown early promise for patient education. 18 However, prior literature suggests that trust, engagement, and perceived usefulness of digital mental health tools can vary across individuals, and that design features such as cartoon-like avatars may reduce engagement and relatability for some users. 18 Additionally, the generative nature of such tools may introduce the risk of misinformation and content inconsistency, which is especially problematic in sensitive areas like mental health education. 18 Other studies have used avatars for medication support or triage but have rarely focused specifically on antidepressant education, making our study one of the first to test this in practice. 19

Unlike generic online videos, the AI avatar resource developed in this study offers distinct advantages. Avatars can be rapidly generated, updated as medical information evolves, and adapted across multiple languages, providing a scalable format for diverse populations. They ensure consistent delivery across sessions, eliminate the need for repeated filming, and can be enriched with features such as interactive knowledge checks. Importantly, this tool was co-designed with Patient Partners and grounded in prior research on patient-reported barriers and enablers of antidepressant adherence, ensuring alignment with patient priorities such as clarity, empathy, and inclusivity. This study suggests that AI avatar-based education may support patients' perceived understanding of antidepressant treatment, which represents an important component of health literacy. Health literacy is a critical determinant of treatment engagement in depression and anxiety, yet many patients report confusion about benefits, risks, and treatment timelines. 20 Improved health literacy has been associated with greater treatment engagement and adherence; however, this study did not directly measure medication adherence, symptom change, or clinical outcomes. 20 Rather, the findings provide preliminary insight into patient perceptions of avatar-based education and support the rationale for future evaluation of such interventions using objective patient-reported and clinical outcome measures. The co-design process was integral to the tool’s development. Patient Partners shaped language, tone, and delivery style, ensuring the content was patient-centered. At the same time, participant feedback identified challenges such as emotional discomfort related to sensitive mental health content, usability considerations with digital technology, and survey length, highlighting important opportunities for further refinement of the tool.

This study has several limitations. The participant sample was relatively homogeneous with respect to language, education level, age, and digital literacy, which may have contributed to favorable evaluations and limits generalizability to more diverse populations. The small sample size and incomplete participation across cycles, with only two-thirds of participants completing both PDSA cycles, further constrain the generalizability of the findings. As the intervention relied on digital delivery, individuals with limited access to technology or lower digital literacy may also face barriers to engagement. Additionally, outcomes were based on self-reported measures rather than validated knowledge assessments, limiting conclusions regarding learning or behavior change. Self-reported data may be subject to response bias, and survey length may have contributed to participant fatigue. Qualitative feedback was collected through written survey comments rather than in-depth interviews, which may have restricted the depth and nuance of insights obtained. The survey also required more time than anticipated, often exceeding the intended 40–60 min. Future studies may consider shorter or modular survey formats to enhance feasibility and participant engagement.

Despite these constraints, this study demonstrates the feasibility of developing a low-resource, scalable AI avatar intervention for mental health education. The format is adaptable to other chronic conditions, could be offered in multiple languages, and can be integrated into clinical workflows through patient portals, QR codes, or after-visit summaries. Future work should evaluate the impact of avatar-based education on knowledge retention, antidepressant adherence, and symptom reduction, as well as service-level outcomes such as reduced follow-up visits or improved guideline-concordant prescribing. Comparative studies with traditional resources (e.g., pamphlets, static videos, real-time counseling) will help clarify relative effectiveness. Finally, as generative AI evolves, future tools may incorporate interactive dialogue, though safeguards will be essential to reduce the risk of AI hallucinations and misinformation.

Conclusion

This pilot study provides early evidence that non-generative, human-like AI avatars are an acceptable and credible modality for supplementary patient education on depression and anxiety. By demonstrating positive ratings across credibility, satisfaction, and perceived understanding, this study offers preliminary insight into patient perceptions of AI avatar-based education in a primary care setting. While this pilot study did not assess objective knowledge acquisition, medication adherence, or clinical outcomes using validated instruments, the findings support the feasibility of this approach and inform future evaluation. Future research should assess avatar-based education using knowledge assessments and objective outcomes, such as treatment adherence, to better understand its impact. With their scalability, adaptability to multiple languages, and potential for integration into clinical workflows, AI avatars represent a promising adjunct to traditional patient education across diverse conditions and care settings.

Supplemental Material

sj-docx-1-jpc-10.1177_21501319251413030 – Supplemental material for Development and Evaluation of an AI Avatar Educational Tool for Depression and Anxiety: A Qualitative Pilot Study

Supplemental material, sj-docx-1-jpc-10.1177_21501319251413030 for Development and Evaluation of an AI Avatar Educational Tool for Depression and Anxiety: A Qualitative Pilot Study by Adam Bleik, Patricia Marr, Shelly-Anne Li, Debbie Kwan, Catherine Ji, Kori Leblanc, Yuki Meng and Christine Papoushek in Journal of Primary Care & Community Health

Footnotes

Acknowledgements

We would like to thank Angela Lu (Graphics Design Student) for her contributions to the video design and animation. We also acknowledge Alicia Goorbarry (UHN Patient Partnerships Coordinator), Scott Christian (Senior Evaluator at Logical Outcomes) and Dr. Noah Crampton (Family Physician) for their support with participant coordination, quality improvement processes, resource development, and evaluation design.

ORCID iDs

Ethical Considerations

This project was approved by the University Health Network (UHN) Quality Improvement Review Committee (QIRC; ID# 25-1037) on February 27, 2025, and classified as a quality improvement initiative exempt from Research Ethics Board (REB) oversight under the Tri-Council Policy Statement V2.

Consent to Participate

All participants provided informed electronic consent through a secure REDCap platform prior to participation.

Author Contributions

All authors contributed to the conception and design of the study, data interpretation, and manuscript preparation. The primary author, Adam Bleik, led manuscript drafting, data analysis, and revisions. All authors reviewed and approved the final version of the manuscript submitted for peer review.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This project received financial support from The Honorable Charles & Anne R. Dubin Scholarship for Excellence in Family Practice at Toronto Western Hospital bestowed by the University Health Network Foundation and Department of Family and Community Medicine. The funder was not involved in the study design; collection, analysis, and interpretation of data; writing of the report; and in the decision to submit the article for publication.

Declaration of Conflicting Interests

The authors declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: Adam Bleik, project lead, is a co-founder of

Data Availability Statement

De-identified data supporting the findings of this study are available from the corresponding author upon reasonable request, in accordance with institutional guidelines and data governance policies.

Supplemental Material

Supplemental material for this article is available online.

References
