Abstract

Objective:

This conceptual paper explores the transition from apomediation to AImediation. Apomediation allows patients or users to seek and access health information independently, often through the internet and social networks, rather than relying exclusively on health professionals as intermediaries. The paper examines how generative artificial intelligence (GAI) reconfigures the dynamics of informational authority, access, and user autonomy in digital health environments, in light of the increasing use of generative AI tools in healthcare contexts.

Method:

This study examines how models of health information mediation have evolved, drawing on Eysenbach’s framework and on recent developments in large language models (LLMs). A new model is proposed to compare intermediation, apomediation, and AImediation.

Results:

AImediation emerges as a new paradigm in which patients or users interact directly with AI tools such as ChatGPT, Claude, Perplexity, or Gemini to access compiled multi-source health information. While this model retains the user autonomy characteristic of apomediation, it centralizes information flows and removes peer-based social layers. Key challenges include algorithmic opacity, prompt dependence, and the risk of misinformation due to hallucinations or biased outputs.

Conclusion:

AImediation redefines how individuals access and evaluate health information, requiring critical engagement from users and responsible development by technology providers. Further research is needed to determine how this model affects patient behavior, the roles of professionals, and the ethical use of AI in healthcare.

Introduction

Eighteen years ago, Eysenbach1 coined the term apomediation to refer to an ecosystem in which we no longer depend on traditional intermediaries to access health information, but on apomediaries: agents (people or tools) who “stand by” to guide and orient users toward reliable information. Apomediation is thus a collaborative and distributed approach to information guidance that replaces the central expert with a network of agents who accompany, filter, and validate information without becoming gatekeepers. Its relevance and credibility lie in maintaining trust and information quality in information-rich environments such as social networks.2-4

Although the concept has been controversial, because apomediaries can create content that is not verified, cross-checked, or of high quality, raising the risk of misinformation5 and even of false positives,6 it also has many supporters. They see apomediation as an opportunity to empower patients to find easily accessible health information that supports their decision-making, and to encourage health professionals to become content creators.7

Likewise, this type of user-generated content can help consolidate digital communities around a condition, ailment, or disease, which in turn allows support networks to form around the content.8,9 However, some studies warn that the growing prominence of content creators can displace the epistemic authority of health professionals,10 an effect that profoundly changes the healthcare paradigm.

As Eysenbach1 warned almost two decades ago, the debate on the disintermediation facilitated by ICTs, not only in healthcare but also in many other areas where the middleman has been cut out (travel agents, librarians, pharmacists, etc.), continues to rage, while “emancipated” consumers and users must take responsibility for assessing the quality and credibility of information. In the health field, the controversy centers on the health professional’s loss of symbolic power as the gatekeeper of information, a role that social network algorithms have gradually taken over by giving prominence to content that obtains greater external validation while hiding or banning false or misrepresented content.11

However, the emergence and popularization of generative artificial intelligence (GAI) tools such as ChatGPT, Gemini, Claude, and Perplexity is revolutionizing access to health content. These tools not only remove the intermediary but also displace the networking and peer-support communities that formed around comments and opinions on social networks such as YouTube or Facebook, in specialized forums, and in wikis. Instead, GAIs draw on all this indexed online content, compiling it from various sources and packaging it as answers to user queries.12 In this theoretical paper, the authors propose calling this new ecosystem “AImediation” and the tools that operate within it “AImediaries.”

Analyzing the shift from the apomediation model to the AImediation model is useful because it reveals the implications of a return to a more centralized, compiled, and less social model of information, in which a certain degree of multisource epistemic emancipation is nonetheless maintained (Table 1).

Table 1. Comparative Framework of Information Mediation Models in Health Contexts.

Source: Authors’ elaboration based on Eysenbach.1

Conceptual Framework

In health communication, the evolution of information mediation can be understood through three sequential paradigms: traditional intermediation, apomediation, and emerging AImediation. This conceptual framework traces shifts in informational authority and user autonomy across these models.

In the classical intermediation model, healthcare professionals are the central gatekeepers of knowledge. The advent of apomediation, a term coined by Eysenbach,1 replaced the sole expert with a distributed network of peer- or tool-based guides who “stand by” to orient users toward reliable content. Apomediation disperses informational authority across social networks and digital platforms, empowering patients to access and evaluate health information with greater independence than before.

Building on this progression, AImediation represents a new paradigm in which generative AI systems (eg, ChatGPT, Gemini, Perplexity, Claude, and similar large language models) directly mediate information exchange. AImediaries aggregate and synthesize multi-source health content in response to user queries, essentially centralizing information retrieval within an algorithm while preserving the user autonomy fostered by apomediation. By comparing intermediation, apomediation, and AImediation, this framework clarifies how generative AI reconfigures the balance of trust, guidance, and expertise in digital health communication.

The Black Box of the Search Algorithm

Unlike the traditional intermediation model, in which information comes from a healthcare professional, or the apomediation model, in which information is generated and filtered by other users, GAIs rely on search algorithms that retrieve multi-source, multilingual information online, ranked in part by its SEO positioning.13 This allows the GAI itself to filter information and to compare and contrast it, even against scientific evidence, by accessing specialized websites of medical and scientific societies, scientific journals, and medical forums, although it prioritizes some websites over others.

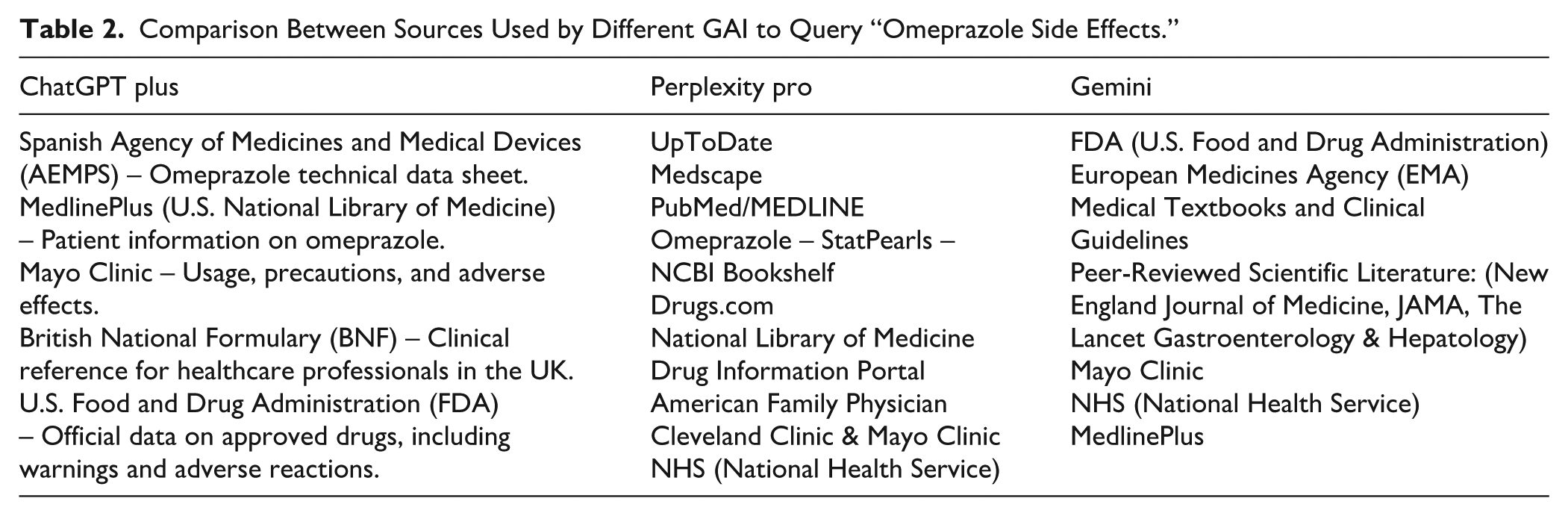

In fact, the same query performed twice, seconds apart, on the same interface may return answers compiled from different sources (see Table 2). Therefore, although GAIs usually cite the sources from which they extracted the information, these sources are selected and paraphrased differently each time because of the effect of “nonmonotonic reasoning”14 (see Figure 1).

Table 2. Comparison Between Sources Used by Different GAIs to Query “Omeprazole Side Effects.”

Figure 1. Different answers to the same query in ChatGPT Plus (top), Perplexity Pro (middle), and Gemini (bottom).

Autonomy and Less Dependence on the Intermediary Are Maintained

As with apomediation, in AImediation the user can make the query online and always retains the option to decide whether, when, and how to turn to the healthcare intermediary. With the multisource answers provided by GAIs, users are likely to perceive themselves as better informed about their symptoms or condition, and thus less likely to need an intermediary for answers, as they will have greater knowledge and self-efficacy. Of course, this is not without risks, such as self-medication.

Reducing dependence on intermediaries can also alleviate the workload of health professionals and shorten primary care waiting lists in health centers. In terms of consultation time, an informed patient may require less in-depth explanation of their ailment or condition, and even of supportive therapies such as physiotherapy.

Efficiency Lies in the Query

One of the biggest challenges in learning to use GAIs is writing a prompt or query that conveys the health condition clearly. In other words, users must know, or be able to describe, exactly what they are looking for so that the LLM yields the most appropriate result. It is therefore important that users can distinguish, for example, a headache from a migraine, and that they provide the most relevant data from their medical history each time they consult the GAI about a condition, since the GAI may not retain sensitive information in its memory of the user.

Thus, GAIs will not yield the same information for the prompt “I have a mild recurrent headache for the past 2 days, what can I do?” as for “I am a 60-year-old patient with type 2 diabetes and hypertension, I have a mild recurrent headache for the past 2 days, what can I do?” (see Figure 2). These new information search and filtering mechanisms therefore make it essential for users to develop skills not only in prompt formulation but also in discerning which clinical information is indispensable to provide the algorithm with all the data needed to obtain accurate information.
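To make this contrast concrete, the following minimal Python sketch (an illustration added here, not a tool used or evaluated in this study) shows how a user’s clinical context could be restated consistently in every query, assuming the GAI does not retain it between sessions. The `PatientContext` structure and the `build_prompt` and `describe_profile` helpers are hypothetical; the code only assembles the prompt text and does not call any GAI.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class PatientContext:
    """Hypothetical clinical details a user might restate in every GAI query."""
    age: Optional[int] = None
    conditions: List[str] = field(default_factory=list)
    medications: List[str] = field(default_factory=list)

    def is_empty(self) -> bool:
        return self.age is None and not self.conditions and not self.medications


def join_natural(items: List[str]) -> str:
    """Join items as 'a', 'a and b', or 'a, b, and c'."""
    if len(items) <= 2:
        return " and ".join(items)
    return ", ".join(items[:-1]) + ", and " + items[-1]


def describe_profile(ctx: PatientContext) -> str:
    """Render the structured context as a first-person clause for the prompt."""
    clause = f"I am a {ctx.age}-year-old patient" if ctx.age is not None else "I am a patient"
    if ctx.conditions:
        clause += " with " + join_natural(ctx.conditions)
    if ctx.medications:
        clause += ", taking " + join_natural(ctx.medications)
    return clause


def build_prompt(symptom: str, question: str, ctx: Optional[PatientContext] = None) -> str:
    """Assemble the query text only; no GAI is called here."""
    parts: List[str] = []
    if ctx is not None and not ctx.is_empty():
        parts.append(describe_profile(ctx))
    parts.append(symptom)
    parts.append(question)
    return ", ".join(parts)


if __name__ == "__main__":
    symptom = "I have a mild recurrent headache for the past 2 days"
    question = "what can I do?"
    # Bare prompt: the GAI receives no clinical context.
    print(build_prompt(symptom, question))
    # Contextualized prompt, mirroring the example discussed in the text.
    profile = PatientContext(age=60, conditions=["type 2 diabetes", "hypertension"])
    print(build_prompt(symptom, question, profile))
```

Running the script prints the two prompts from the example above. Keeping the clinical context as structured fields, rather than retyping it from memory, is one way a user could avoid omitting relevant history from one session to the next; which fields are clinically indispensable remains a judgment the user (or a professional) must make.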

Figure 2. Different responses to different prompts (Gemini); the left query shows response customization based on GAI memory.

Risks of Algorithmic Biases

No health information mediation model is infallible, and GAIs are no exception, at least for the time being. Algorithmic biases typically stem from multiple factors, including training data that are unbalanced or reflect cultural, linguistic, or socioeconomic gaps,15 and GAIs’ tendency to prioritize sources automatically by their SEO domain authority rather than by their scientific rigor or specialty relevance.

In addition, GAI “hallucinations” may appear in the answers, presenting erroneous, illogical, fabricated, or poor-quality information, or nonexistent contraindications16 (see Figure 3). Combined with the previous point, that a prompt may be incomplete or poorly formulated, this increases the likelihood that queries will yield ambiguous, generic, or biased answers. These challenges underscore the need for users to possess strong digital and health literacy.

Figure 3. Example of GAI hallucination with the search “Side effects of omeprazole.”

Unmitigated algorithmic biases in health AI can also disproportionately mislead or exclude certain populations, echoing concerns that biased training data may worsen health disparities.17 Therefore, ensuring transparency and fairness in AI-driven information curation is critical.

Discussion: The Role of Health and Digital Literacy in the Interpretation of AI Results

The effectiveness of the AImediation model depends not only on the technological capabilities of the generative models, but also – and crucially – on the level of digital and health literacy of the user, both to interpret and verify the emerging results, and to design the query prompt with all the necessary elements to retrieve a more accurate answer. In contrast to apomediation, where peer support can partially compensate for users’ educational shortcomings, interaction with a GAI requires greater interpretive autonomy and advanced skills in formulating clear and contextualized prompts.

Accordingly, emerging scholarship and the WHO call for AI literacy, the ability to understand, critically evaluate, and responsibly apply AI outputs, as an essential extension of digital health literacy.17 Without such literacy, users may misinterpret AI-generated information or trust it inappropriately.

Low digital literacy can lead to vague, imprecise, or biased questions, which in turn produce erroneous, incomplete, or potentially dangerous answers. Similarly, a literal or uncritical interpretation of AI output can create a false sense of knowledge, discouraging professional consultation or promoting self-medication without clinical validation. In contrast, users with more advanced skills can take advantage of the adaptive potential of these models to ask iterative questions, contrast information, and refine the quality of the answers received.

This phenomenon reveals a digital divide in the making, not only between those who do or do not have access to these technologies, but also between those who do or do not know how to adequately formulate their queries and critically analyze the answers generated by the AI. In this sense, digital literacy should be understood not only as a technical skill but also as a critical transversal competence, which should be promoted through public health education policies, continuous training for health professionals, and awareness campaigns aimed at the general public.

Health literacy is also necessary, understood as the ability of users to obtain, understand, evaluate, and apply health information to make informed decisions about their well-being. In the context of AImediation, this competence becomes even more valuable, since properly interpreting AI-generated results requires not only technological skills but also basic knowledge of physiology, symptomatology, treatments, and clinical evidence. Without this frame of reference, the risk of misinterpreting or overinterpreting content increases significantly, especially when the generated answers use technical or ambiguous language or lack explicit references.

Hence, any strategy that seeks to integrate AI into healthcare settings should be accompanied by robust health literacy programs capable of empowering citizens to discern between valid information, pseudoscience, and AI hallucinations, as well as to recognize when it is essential to resort to the professional judgment of the classic clinical intermediation model.

The transition to an AImediation ecosystem has substantial and practical implications. For patients, this represents an opportunity for informational empowerment, although it requires advanced digital health skills and critical capacity. For healthcare professionals, it opens up a new scenario where their role can be complemented by AI, especially in patient education and the validation of generated information.

Future research should explore empirical evaluations of how patients interpret AI-generated responses, their impact on clinical outcomes, and the development of standardized frameworks to integrate AImediaries into professional healthcare workflows.

Conclusions

In summary, the AImediation model marks a significant conceptual shift in health communication, redefining how individuals obtain, use, and trust health information. Whereas traditional intermediation vested informational authority in medical professionals and apomediation distributed that authority across peer networks, AImediation introduces an AI-driven mediator that compiles knowledge from diverse sources, on demand.

Key insights from this analysis highlight that AImediation preserves the direct-access autonomy of the apomediation era, albeit now exercised under algorithmic guidance. This raises a paradox: while patients are empowered to retrieve information independently, they may also come to over-rely on AI outputs, a known risk in automation that can undermine truly independent judgment.18 This reconfiguration of informational authority has profound implications for both patients and healthcare professionals.

For patients, AImediation offers unprecedented informational empowerment, as users can instantly access tailored, multi-sourced answers. However, it also demands higher levels of digital and health literacy to critically interpret AI-generated advice. Because AI systems sometimes provide plausible-sounding but incorrect answers, which users without strong AI literacy and critical appraisal skills may fail to detect, there have been calls for algorithmic transparency and validation frameworks in health settings. Users should therefore be encouraged to verify AI-provided information against trusted sources to avoid a false sense of security19 that could influence their health behaviors (such as self-diagnosis or treatment adherence) in ways that are not always beneficial to them.

The “paradox of autonomy”20 also arises in digital health when users’ freedom to access and select online health information ironically leads them to misinformation. While autonomy empowers individuals to make informed choices, the vast availability of low-quality or misleading content undermines this very freedom by polluting their decision environment. As a result, autonomy becomes paradoxical: in enabling user independence, digital platforms also expose users to information that can distort their understanding, eroding genuinely self-directed agency in health-related decisions.

For healthcare professionals, the rise of AImediaries challenges the traditional gatekeeping role and shifts their professional responsibilities. Practitioners may increasingly serve as interpreters, validators, and context providers for AI-derived information rather than as the sole source of facts. This evolving role could alleviate certain workload pressures (as routine informational queries are handled by AI) but also requires professionals to work with patients to verify the quality of AI-provided information and to address any misinformation or anxiety it may cause.

Looking ahead, careful attention must be paid to the ethical implementation of AImediation in healthcare and the promotion of AI literacy among all stakeholders. As generative AI becomes more ingrained in health information seeking, future research should explore strategies for integrating AImediaries in a manner that upholds transparency, mitigates biases, and ensures accountability in the information provided. Developing guidelines for algorithmic fairness and reliability is essential to ensure that AI tools complement clinical decision-making without undermining trust or exacerbating health disparities. Parallel to these efforts, there is a pressing need for educational initiatives to boost both patient and provider AI literacy, empowering users to formulate effective queries, interpret AI outputs with skepticism, and recognize when professional consultation is warranted. By pursuing these directions, researchers and practitioners can better understand the impact of AImediation on patient behavior and clinical practice and ensure that this new paradigm enhances health communication in an ethical, equitable, and evidence-based manner.

Footnotes

Authors’ Note

Luis M. Romero-Rodriguez is now affiliated with the University of Sharjah, Sharjah, UAE.

Ethical Considerations

No ethical approval is required.

Consent to Participate

This theoretical study does not involve people.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.