Abstract

Purpose

Existing research quality appraisal tools in the social sciences face significant disciplinary incompatibility and methodological limitations. This study aims to address these gaps by evaluating current tools and developing a versatile new checklist to standardize quality assessment across quantitative, qualitative, and mixed-methods studies.

Design/Approach/Methods

A systematic literature analysis of 24 mainstream appraisal tools assessed their disciplinary compatibility, methodological scope, and criteria design. A new checklist (i.e., Quantitative, Qualitative, and Mixed-Methods Studies [QQM] checklist) was developed using a utility-usability framework, integrating common indicators to ensure methodological inclusiveness for studies.

Findings

Analysis revealed that most tools are restricted to single methods, 67% focus on medical fields, and only two are discipline-specific for the social sciences. Problems with existing tools include ambiguous criteria, rigid binary scoring, and outdated frameworks. The QQM checklist addresses these gaps with eight universal indicators and method-specific criteria (4–6 for quantitative, 6 for qualitative), using a three-tiered scoring system (1–3 points).

Originality/Value

The QQM checklist offers a standardized solution for quality appraisal in social sciences, transcending disciplinary and methodological boundaries. Its design enables AI integration, advancing standardization for consistent, rigorous evaluations—critical for funding, publication, and policy decisions. This work contributes to improving research quality and transparency in the social sciences.

Introduction

Research quality evaluation has always been important for the advancement of science (Harden & Gough, 2012). It also plays an important part in high-stakes evaluations of researchers’ or institutes’ performance, such as grant funding decisions (Geuna & Martin, 2003). Within scientific publications, the proliferation of meta-analyses and systematic reviews calls for better judgments of the studies included in those syntheses (Harden & Gough, 2012; Heyvaert et al., 2013; Pluye & Hong, 2014). For instance, “garbage in, garbage out” has long been a concern for carelessly conducted meta-analyses, which may produce biased conclusions for the scientific community (Egger et al., 2001; Nelson et al., 2018; Slavin, 1986, 1995). As a result, attempts have been made to apply quality appraisal, typically conducted using checklists or tools with multiple quality indicators, to most scientific domains (Appelbaum et al., 2018; Michie et al., 2005).

To date, a number of appraisal tools have been developed to increase the rigor and standardization of the assessment process (Harrison et al., 2021; Hong et al., 2018b; Sirriyeh et al., 2012). However, most of them favor specific methods (e.g., either qualitative or quantitative). Comprehensive assessment tools for evaluating studies that use different methods are still lacking, particularly in the social sciences. Our literature review showed that, out of 24 comprehensive appraisal tools/checklists, only two were specifically designed for the social sciences: one focusing on Mexican Americans and the other on implementation science.

The lack of appropriate tools has led social science researchers to simply use tools that have been developed in other disciplines. However, research quality and evaluation are highly discipline-dependent (Collins et al., 2012; Fàbregues & Molina-Azorín, 2017). Social science research applies a wide variety of methods, from collecting and analyzing numerical data to using qualitative techniques to dissect complex social phenomena that defy simple quantification. As a result, generic tools may fail to capture the specificities of social science research, potentially overlooking important evaluative aspects and reducing the validity of the assessment (Koch & Harrington, 1998; Protogerou & Hagger, 2019). Additionally, some researchers use different tools to assess various study types separately in reviews or meta-analyses. This both increases the workload and compromises the objectivity of the evaluation.

In addition to the difficulties in finding suitable tools, existing tools have certain shortcomings. A major one is the lack of validated and consensus-based criteria. Some tools also suffer from incomplete or unclear evaluation criteria, lack of user guidance, and failure to update in line with academic advancements (Heyvaert et al., 2013; Hong et al., 2019). Therefore, what social science researchers need is a standardized and easy-to-use checklist to guide the research quality evaluation across various study designs.

To address this gap, the present study developed a research quality checklist that can be used for quantitative, qualitative, and mixed-methods studies. Building upon a previous version created by the authors for a scoping review (Salmela-Aro et al., 2021), the checklist includes eight generic indicators applicable to all study types, along with 4–6 tailored indicators for quantitative studies and 6 for qualitative studies. Suggestions for cut-off points are also provided to guide users in determining the eligibility of studies for further analysis. One purpose of this paper is to introduce the creation, initial validation, and application of the checklist. It is hoped that the checklist will improve systematic reviews, meta-syntheses, and evidence-based practice in the social sciences by enabling studies to be assessed with greater rigor and consistency. It can also serve as a tool for editors evaluating articles for publication, guiding research funding decisions, and educating university students on conducting high-quality research.

Current research appraisal tools

Owing to the growing popularity of systematic reviews and meta-analyses (Hong et al., 2018b; Sirriyeh et al., 2012), the number of appraisal tools has grown considerably in recent years. As necessary instruments for the systematic examination of study quality, appraisal tools formalize the quality appraisal process and help ensure that it is conducted in a systematic, transparent, and reproducible manner (Harden & Gough, 2012; Hong et al., 2018b). Appraisal tools are commonly divided into two categories according to the methods they target: (a) appraisal tools for specific research approaches and (b) comprehensive appraisal tools.

The former type of tool usually focuses on either quantitative or qualitative studies. In particular, appraisal tools for quantitative studies usually comprise criteria for cross-sectional and longitudinal surveys as well as experimental/trial methods; one example is the Prevalence Critical Appraisal Instrument (Munn et al., 2014). Appraisal tools for qualitative studies, on the other hand, generally concentrate on the evaluation of interviews, observations, field studies, and case studies, with the Critical Appraisal Skills Programme (CASP) qualitative checklist being one of the most commonly used (Long et al., 2020). Given their specific focus on quantitative or qualitative methods, these tools cannot be used effectively to assess the quality of mixed-methods studies. In addition, some appraisal tools were designed specifically for systematic reviews or meta-analyses rather than empirical studies, such as the Cochrane Handbook (Chandler et al., 2019) and the Preferred Reporting Items for Systematic Reviews and Meta-analyses (PRISMA) guidelines (Moher et al., 2010); they are therefore not included in the review.

The latter type of tool, for comprehensive appraisal, aims to evaluate quantitative, qualitative, and mixed-methods studies and categorize them according to a single evaluation scale. To achieve these aims, most appraisal frameworks take one of two approaches. The first approach is to develop a broad range of criteria for all sub-dimensions of quantitative and qualitative methods. One of the most widely used examples is the set of critical appraisal tools from the Joanna Briggs Institute (2024), with 14 different sub-scales for different methods. The second approach emphasizes the unique methodological features of mixed-methods studies by adding criteria such as “rationale for using a mixed method design, how the quantitative and qualitative components were integrated, and the added knowledge gained from the integration” (Fàbregues et al., 2021; Ivankova, 2014). The Mixed Methods Appraisal Tool (MMAT; Hong et al., 2018a) is a widely used example. In addition, some appraisal tools serve a particular discipline or cohort, including the “Quality Assessment Tool for Studies With Diverse Designs” (QATSDD; Sirriyeh et al., 2012) for medical/clinical studies, the ASSESS tool (A comprehenSive tool to Support rEporting and critical appraiSal Tool for Implementation Science; Ryan et al., 2022), and the Transformative, Mixed Methods Checklist for Psychological Research With Mexican Americans (TMMC-MA; Canales, 2013) for the cohort of Mexican Americans.

Prior studies have pointed out that comprehensive appraisal tools are few in number because most tools focus on a specific type of design or method (Harrison et al., 2021; Sirriyeh et al., 2012). In a systematic review of more than 500 appraisal tools, Hong et al. (2019) found that few of them include comprehensive criteria for quantitative, qualitative, and mixed methods. The shortage of comprehensive appraisal tools is particularly evident in the social sciences. Building upon Hong et al.'s (2019) review of appraisal tools from 2000 to 2018, we conducted a follow-up literature search covering the years 2019–2024 (see Appendix A for the search protocol). In total, we identified 64 relevant studies with 24 comprehensive tools/checklists. Among them, 43 studies (67.2%) were published in medical/clinical/nursing journals (25 of them in Social Sciences Citation Index [SSCI] Q1–Q2 journals), 15 studies (23.4%) in method-related journals (10 of them in SSCI Q1–Q2 journals, especially the Journal of Mixed Methods Research [JMMR]), and only five studies (7.8%) in education, psychology, or management journals (two of them in SSCI Q1–Q2 journals). Regarding the tools/checklists, 10 were of the comprehensive type for most disciplines (four of which were more targeted to medical/clinical/nursing), and 11 were designed specifically for the medical discipline. Only two tools were designed for the social sciences (one for implementation science and one for psychological studies in Mexican American populations). As discipline plays a critical role in shaping researchers’ thinking and decisions regarding appraisal quality (Fàbregues et al., 2019), the shortage of tools in the social sciences may restrict researchers’ ability to evaluate the quality of studies in this disciplinary field.

Moreover, the existing comprehensive appraisal tools are inconvenient to use for a number of reasons. In practice, they swing between “too few/simple criteria to guarantee the quality” and “too many/complex criteria to guarantee appraisal efficacy.” On the one hand, some tools attempt to reduce users’ analysis burden by including fewer items; however, this may leave the criteria unclear and incomplete (Heyvaert et al., 2013). Take the MMAT, a comparatively short appraisal tool, as an example. Experts have noted that some items combine several concepts without clear explanation, which makes them difficult to interpret and score (Hong et al., 2018b). On the other hand, other tools add more items and categories to develop a more comprehensive framework. This, however, may make the tools overlapping, complicated, time-consuming, and less user-friendly, especially for less experienced researchers (Heyvaert et al., 2013; O'Cathain, 2010).

We can see this difficulty play out in the two main approaches to comprehensive tool development mentioned above. Including a comprehensive range of criteria for all sub-dimensional methods is meaningful for users who focus on studies using a specific method, but it may be inconvenient and time-consuming for those reviewing studies with various methods. Similarly, including specific criteria for mixed-methods studies might benefit researchers who are interested in evaluating quality according to whether mixed methods are better than other types of study designs (Fàbregues et al., 2021; Ivankova, 2014). However, it may also make the tool more demanding and difficult to use, especially when mixed-methods studies are just one part of a systematic review and users are less experienced.

Furthermore, existing appraisal tools often fall short in their scoring methods and procedures. On the one hand, few tools provide simple and clear guidance (e.g., suggested cut-off points) that helps users quickly understand the scoring standards and reach a final assessment. This limitation undermines the efficacy and convenience of the tools for users (Heyvaert et al., 2013) and has therefore been criticized in several systematic review studies (e.g., Holl et al., 2016; McPherson et al., 2017; Orr et al., 2021). On the other hand, the criteria in most comprehensive appraisal tools are dichotomous (i.e., only “yes,” “no,” and “unknown”), without an intermediate score. A dichotomous response usually fails to distinguish between studies with strong and weak coverage of an issue (Sirriyeh et al., 2012). Given these limitations, an easy-to-use yet comprehensive appraisal tool with a clear scoring method is needed to help researchers conduct quality evaluations of studies with quantitative, qualitative, and mixed-methods designs, particularly in the social sciences.

Need for a brief but powerful comprehensive tool

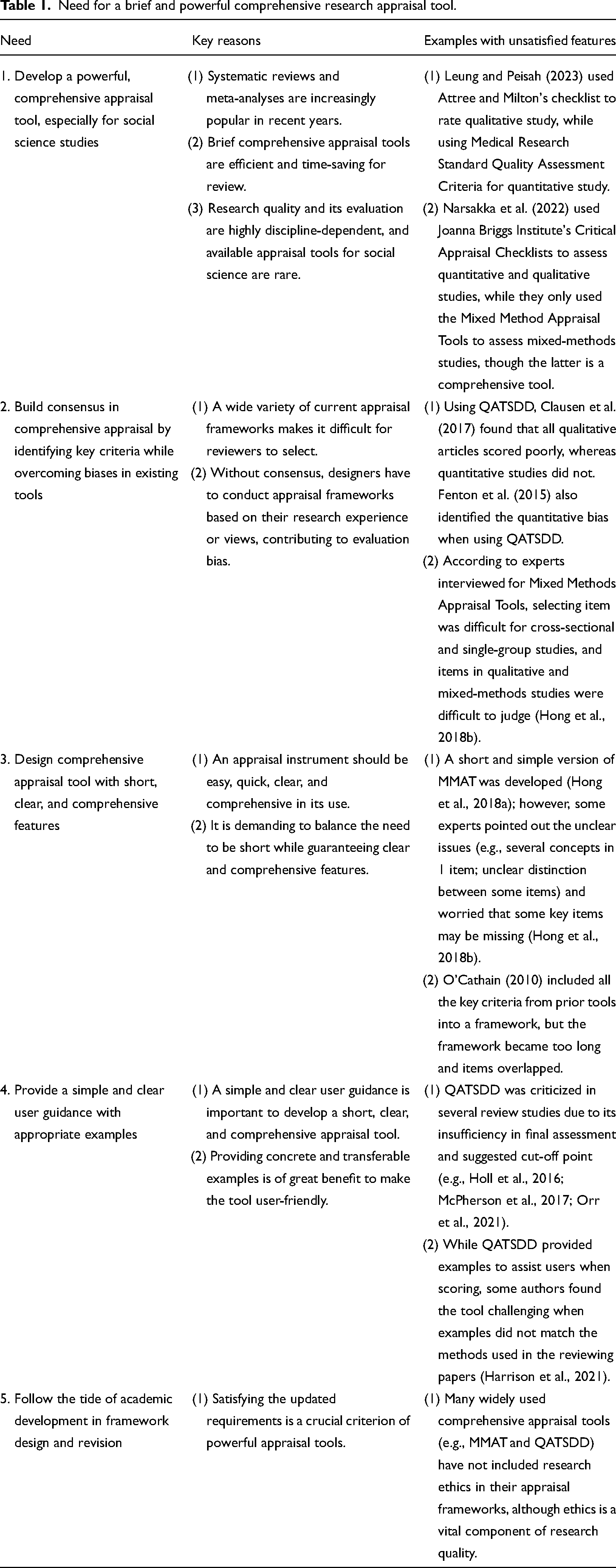

As systematic reviews and meta-analyses become increasingly common (Hong et al., 2018b; Sirriyeh et al., 2012), it is imperative to develop a brief comprehensive appraisal tool that saves researchers time and improves efficacy during literature selection (Harrison et al., 2021; Sirriyeh et al., 2012). Otherwise, researchers have to spend substantial time and effort applying different tools to a single corpus of literature to appraise different methods (e.g., Leung & Peisah, 2023; Narsakka et al., 2022). Developing a comprehensive all-purpose tool is most urgent in social science research, since research quality and its evaluation are highly discipline-dependent (Collins et al., 2012; Fàbregues & Molina-Azorín, 2017), and comprehensive appraisal tools for the social sciences are rare, as explained above. See Table 1 for the summarized needs.

Table 1. Need for a brief and powerful comprehensive research appraisal tool.

Developing a brief comprehensive appraisal tool would also help build consensus in quality appraisal and overcome the drawbacks that stem from the current lack of consensus. Specifically, the wide variety of appraisal frameworks makes it difficult for reviewers to choose the most appropriate one(s) for their study (Hong et al., 2018b). Moreover, without a consensus to guide criteria selection, tool designers have to construct their frameworks based on their own research experience or views (Fàbregues et al., 2019), and consequently, their tools tend to be biased toward the methods they are familiar with (e.g., quantitative bias in QATSDD; Clausen et al., 2017; Fenton et al., 2015). Given these drawbacks, there is an urgent need for a comprehensive appraisal tool that identifies the key and comparatively consensual criteria from existing tools while overcoming their biases.

To design such a powerful appraisal tool, the primary requirement is for it to be easy, quick, clear, and comprehensive in its use (Heyvaert et al., 2013). In other words, appraisal tools are highly appreciated when they are short, comprehensive, and clear (Hong et al., 2018b). Nonetheless, achieving this balance for comprehensive appraisal tools is demanding. For instance, O'Cathain (2010) attempted to develop a clear and comprehensive appraisal framework by including all the key criteria from prior tools, but the author found the framework became too long with a few overlapping criteria.

To be easy, quick, clear, and comprehensive, a tool also needs simple and clear user guidance, which is lacking in many current comprehensive appraisal tools, especially with respect to their scoring frameworks (Heyvaert et al., 2013). Specifically, providing concrete and transferable examples is one of the most crucial strategies for making user guidance simple and clear (Hong et al., 2018b), but many existing tools fail to provide them. For instance, users found the examples provided by QATSDD challenging to understand when they did not match the methods used in the papers under review (Harrison et al., 2021).

Finally, a powerful tool should also keep pace with academic developments so that it covers current requirements regarding research quality. For instance, in recent years, there has been an increasing emphasis on research ethics in studies; however, many widely used comprehensive appraisal tools (e.g., MMAT and QATSDD) do not include this as a criterion.

Theoretical framework guiding the development of the quantitative, qualitative, and mixed-methods studies [QQM] checklist

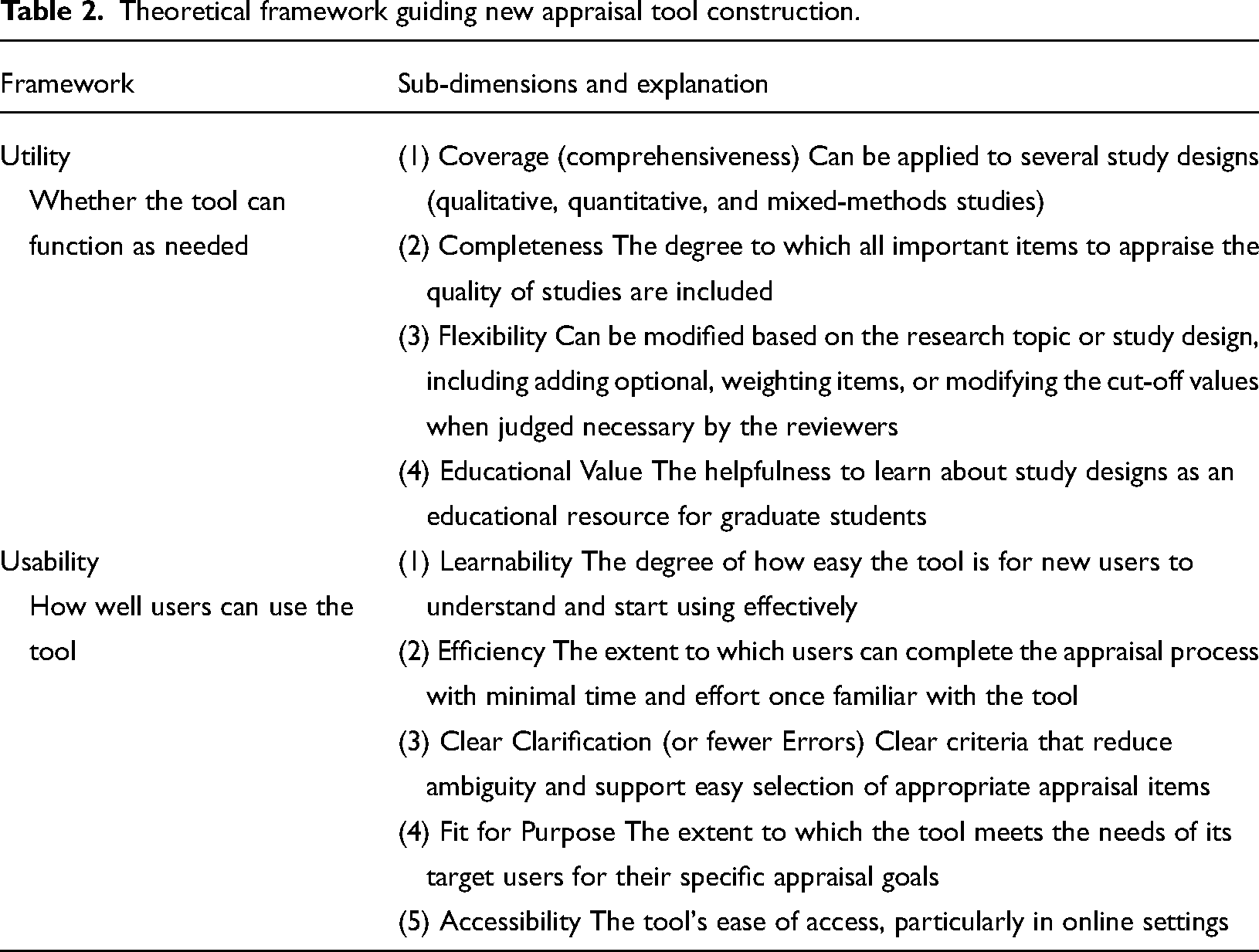

To design a brief, powerful, yet comprehensive research quality appraisal checklist, this study adopted a realistic stance for its theoretical framework (including utility and usability; Hong et al., 2018b). Specifically, the framework divided the “utility” and “usability” dimensions of the realistic stance into four and five sub-dimensions, respectively (see detailed information in Table 2).

Table 2. Theoretical framework guiding new appraisal tool construction.

Utility is evaluated by whether the tool can function as needed, with four sub-dimensions: Coverage, Completeness, Flexibility, and Educational Value. Coverage (or comprehensiveness) means the possibility to apply a tool to several study designs (i.e., qualitative, quantitative, and mixed-methods studies), while Completeness refers to the degree to which all important items and criteria are included. These two sub-facets are the most essential for all comprehensive appraisal tools. Furthermore, good utility also entails desirable flexibility and educational value, in which the tools can be modified based on the research topic or study design, and can be used as an educational resource for graduate students to learn about study designs.

Next, Usability is evaluated by how well users can use the tool, from the users’ perspective. It has five sub-dimensions: Learnability, Efficiency, Clear Clarification, Fit for Purpose, and Accessibility. Learnability and Efficiency usually interact with each other: a good tool should be easy to learn and should allow users to perform well once they have learned it. Clear Clarification requires that users have little difficulty understanding the criteria and selecting the appropriate items to appraise, and Fit for Purpose requires that the targeted users and related issues be identified, so that users can select an appropriate tool for their purpose. In addition, the tool should be easily accessible online (Accessibility).

QQM checklist—The quality appraisal checklist for quantitative, qualitative, and mixed-methods studies

Introduction of QQM Checklist

Guided by the aforementioned theoretical framework, the Quality Appraisal Checklist for Quantitative, Qualitative, and Mixed-Methods Studies (QQM Checklist; see Appendix B for the checklist) is designed to evaluate the quality of quantitative, qualitative, and mixed-methods studies in social science research. A prototype of the QQM Checklist was developed and used in a review of students’ engagement (Salmela-Aro et al., 2021), showing potential for use by social science researchers in various types of reviews. The full QQM Checklist comprises a total of 20 indicators categorized into three sections: (1) Study Procedures and Sample, (2) Additional Indicators for Quantitative Studies, and (3) Additional Indicators for Qualitative Studies. For mixed-methods studies, both quantitative and qualitative indicators should be considered.

The first section consists of eight indicators that apply to all study types. These indicators assess key aspects of research design, including the description of the study setting, participant recruitment processes, study administration, informed consent, ethical considerations, sample description, achieved sample, and justification of sample size. Clearly describing data collection, sampling strategies, and analysis procedures ensures auditability, allowing future researchers to examine and replicate the findings (Nowell et al., 2017). For example, inadequate sample size justification can lead to underpowered studies and increase the risk of Type II errors (Cohen, 1988). Additionally, poor reporting of recruitment and participant selection processes may introduce selection bias, threatening both internal and external validity (Shadish et al., 2002). Finally, high-quality research is now expected to be conducted ethically and transparently.

The second section applies to quantitative studies (including the quantitative aspect of mixed-methods research) and assesses the rigor of data handling and measurement. It includes four indicators: missing data reporting, missing data handling strategies, measurement validity and reliability, and descriptive statistics. Addressing missing data is critical because mishandling can introduce bias and compromise statistical power (Little & Rubin, 2019). Furthermore, ensuring measurement validity and reliability aligns with psychometric principles, as unreliable instruments reduce the generalizability and replicability of findings (DeVellis, 2017). For longitudinal and repeated-measures studies, two additional indicators assess participant attrition and repeated measures’ reliability (RepeatR). These indicators are included because high attrition rates can lead to systematic biases in longitudinal analyses (Gustavson et al., 2012), and poor reliability in repeated measures can undermine the consistency of findings over time (Schmidt & Hunter, 1996).

The third section applies to qualitative studies (including the qualitative aspect of mixed-methods research) and examines the methodological robustness of data collection and analysis. It includes six indicators evaluating the description and rationale of instrument content, the inclusion of instrument examples, the specification of data analysis methods, the provision of analytical examples, and researcher reflexivity. These indicators align with Lincoln and Guba's (1985) criteria for trustworthiness, which emphasize the importance of credibility, dependability, and confirmability in qualitative research. For instance, reflexivity enhances the credibility of the findings by helping researchers recognize their own biases, positionality, and power dynamics with the study participants.

Each indicator is rated on a three-point scale (1 = No, 2 = Partial, 3 = Yes), and the total score is converted into a percentage for comparisons across different study types. Our checklist also includes suggested cut-off points, categorizing studies as poor (≤50%), moderate (51%–79%), or high quality (≥80%). Such cut-off points are commonly used in systematic reviews to guide the inclusion or exclusion of studies based on methodological rigor (Higgins et al., 2024).
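The conversion described above can be written compactly. The expression below is a sketch that assumes, in line with the 1–3 rating scale, that the maximum attainable total is three points per applicable indicator; the symbols $s_i$ (score on indicator $i$), $n$ (number of applicable indicators), and $P$ (percentage score) are introduced here for illustration and do not appear in the checklist itself.

```latex
P = 100 \times \frac{\sum_{i=1}^{n} s_i}{3n}, \qquad s_i \in \{1, 2, 3\}
```

Under this reading, a study rated “No” on every indicator would still obtain $P \approx 33.3\%$, so the attainable range runs from about 33.3% to 100% rather than from 0% to 100%.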

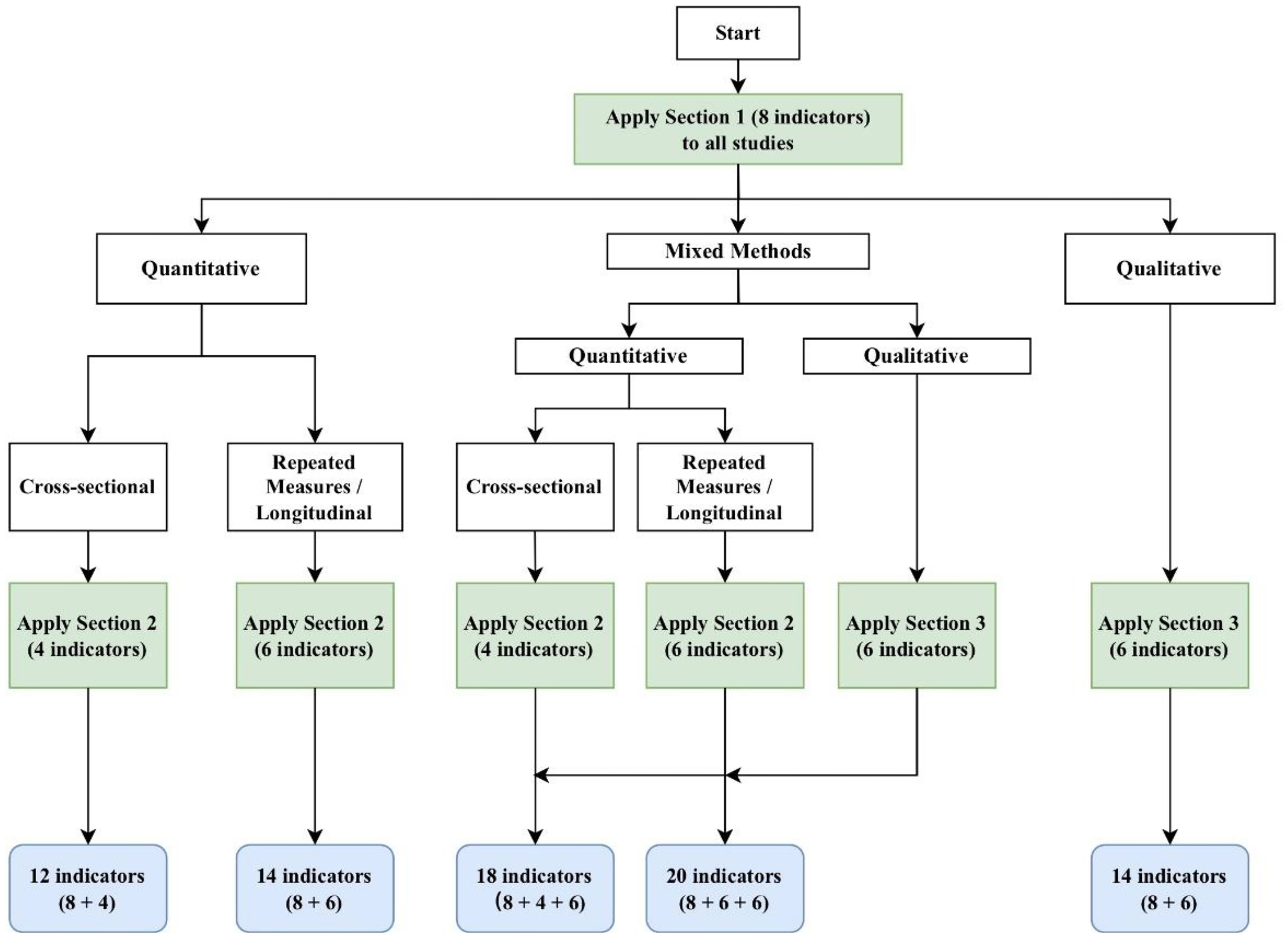

Applying our checklist involves three main steps. First, users should begin by reading the instructions in the checklist and determining the study type (qualitative, quantitative, or mixed methods). Second, users need to select and apply the appropriate sections based on the study type. A flowchart (Figure 1) is provided to guide section selection based on study type and design. For qualitative studies, users should apply the first section (“Study Procedures and Sample”) and the third section (“Additional Indicators for Qualitative Studies”). For quantitative studies, the first section (“Study Procedures and Sample”) and the second section (“Additional Indicators for Quantitative Studies”) should be applied, noting that the “Attrition” and “RepeatR” indicators in the second section only apply to studies with repeated measures or longitudinal data. Mixed-methods studies require the application of all three sections. Finally, scores from all applicable sections are summed and converted into a percentage score.

Figure 1. Flowchart for applying the QQM Checklist.

For example, a longitudinal quantitative study scoring 39 out of 42 points would have a score of 93%, indicating high quality. Notably, quality dimensions and cut-off points can be adjusted based on research objectives. For instance, all studies might be included in a scoping review, but stricter quality thresholds may be applied in a meta-analysis to ensure the validity and rigor of the synthesized findings. A further benefit of the QQM Checklist is its appropriateness for producing high-quality inter-rater reliability statistics because each item uses a three-point scale (enabling more refined judgements) and researchers can agree beforehand exactly which items should be scored.
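The three application steps and the worked example can be sketched in a few lines of Python. This is a minimal illustration rather than an official implementation: the function and constant names are hypothetical, the indicator counts are taken from the checklist description (8 generic, 4 quantitative plus 2 for longitudinal or repeated-measures designs, and 6 qualitative), and treating every percentage strictly between 50% and 80% as “moderate” is an assumption about how the suggested cut-offs handle non-integer values.

```python
# Indicator counts per section, taken from the checklist description.
GENERIC = 8
QUANT_BASE = 4
QUANT_LONGITUDINAL_EXTRA = 2  # the "Attrition" and "RepeatR" indicators
QUAL = 6
MAX_PER_INDICATOR = 3  # 1 = No, 2 = Partial, 3 = Yes


def applicable_indicators(study_type: str, longitudinal: bool = False) -> int:
    """Return how many indicators apply to a study (hypothetical helper)."""
    if study_type not in {"quantitative", "qualitative", "mixed"}:
        raise ValueError(f"unknown study type: {study_type!r}")
    count = GENERIC
    if study_type in {"quantitative", "mixed"}:
        count += QUANT_BASE + (QUANT_LONGITUDINAL_EXTRA if longitudinal else 0)
    if study_type in {"qualitative", "mixed"}:
        count += QUAL
    return count


def percentage_score(total: int, n_indicators: int) -> float:
    """Convert a summed score into a percentage of the maximum attainable score."""
    maximum = MAX_PER_INDICATOR * n_indicators
    if not n_indicators <= total <= maximum:
        raise ValueError("total must lie between n_indicators and 3 * n_indicators")
    return 100 * total / maximum


def quality_band(percentage: float) -> str:
    """Apply the suggested cut-offs (assumption: anything strictly between
    50% and 80% counts as moderate)."""
    if percentage <= 50:
        return "poor"
    if percentage >= 80:
        return "high"
    return "moderate"


# Worked example from the text: a longitudinal quantitative study scoring 39.
n = applicable_indicators("quantitative", longitudinal=True)  # 8 + 4 + 2 = 14
pct = percentage_score(39, n)                                 # 39 out of 42
print(n, round(pct), quality_band(pct))  # prints: 14 93 high
```

Keeping the cut-offs in a separate function makes it straightforward to substitute stricter thresholds, for instance when preparing a meta-analysis rather than a scoping review.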

QQM checklist validation

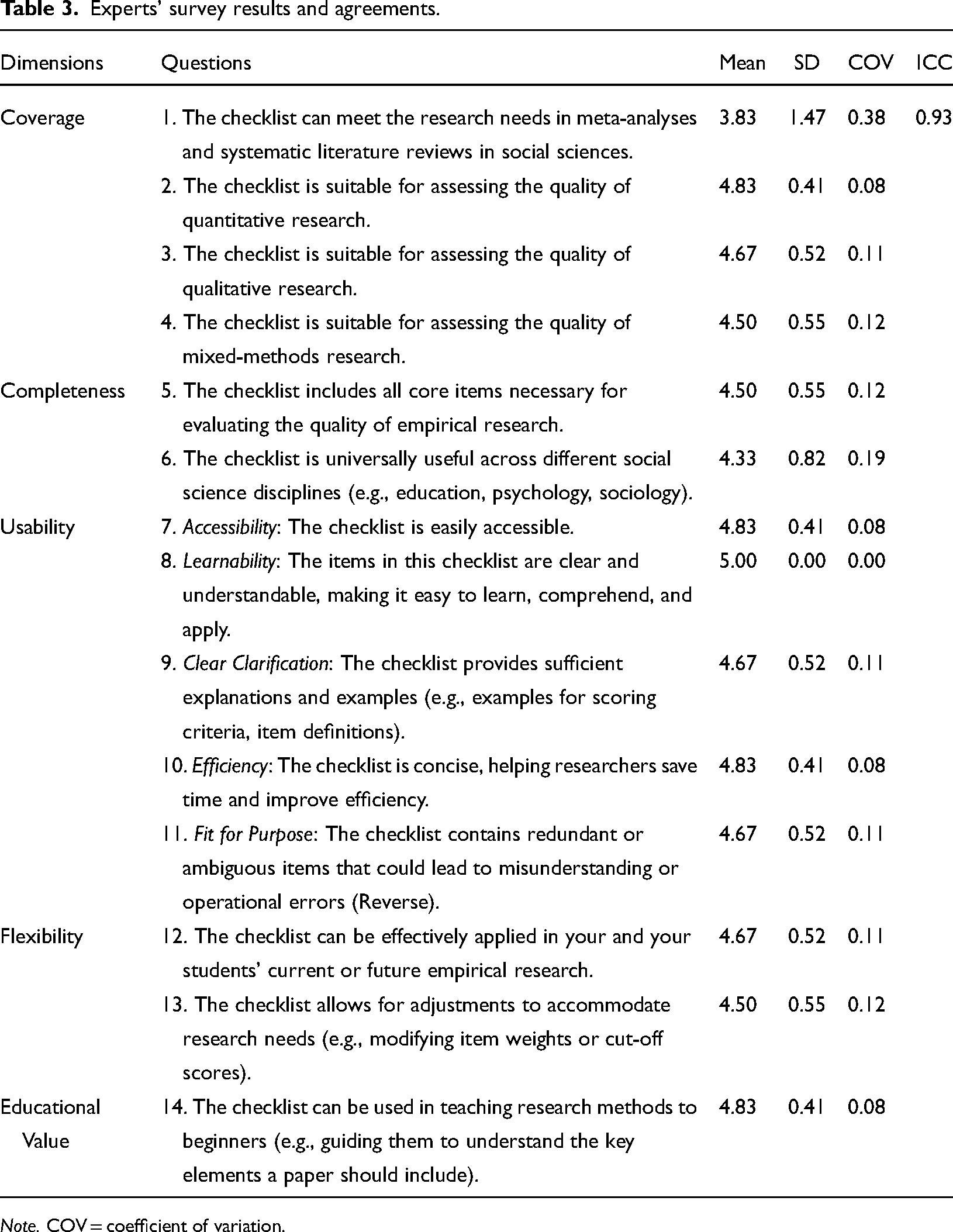

To validate the effectiveness of the QQM Checklist, we first surveyed six experts in social science subjects from China. All of them hold doctoral degrees (4 in education, 1 in psychology, and 1 in sociology) from English-speaking programs (1 in the USA, 1 in Hong Kong SAR, and 4 in Europe) and are therefore proficient in both English and Chinese. On average, they have five years of post-Ph.D. work experience. The survey covers all the dimensions of utility and usability with 14 items (see Table 3). The items were rated on a 5-point Likert-style scale from 1 (Strongly Disagree) to 5 (Strongly Agree). The results (Table 3) indicated good quality of the checklist, with high mean scores of 4.5–5.0, small standard deviations of 0.41–0.82, and small coefficients of variation (COV) of 0.08–0.19 for nearly all items (the exception being Item 1, with mean = 3.83, SD = 1.47, and COV = 0.38). Because the ratings are ordinal variables, the intraclass correlation coefficient (ICC) rather than Kappa was calculated to assess inter-rater agreement (Gisev et al., 2013). The ICC (0.93) indicated good inter-rater agreement, illustrating a high consensus among the experts.

Table 3. Experts’ survey results and agreements.
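The coefficient of variation reported for each survey item is the standard deviation divided by the mean. The short Python sketch below shows the calculation; the ratings are hypothetical and are not the study's data, and the use of the sample (n − 1) standard deviation is an assumption, since the text does not state which variant was used.

```python
from statistics import mean, stdev


def coefficient_of_variation(ratings: list[float]) -> float:
    """Sample standard deviation divided by the mean (assumed definition)."""
    return stdev(ratings) / mean(ratings)


# Hypothetical ratings from six experts on one 5-point item (not the study's data).
example_ratings = [5, 5, 4, 5, 4, 5]
print(round(mean(example_ratings), 2),
      round(stdev(example_ratings), 2),
      round(coefficient_of_variation(example_ratings), 2))

# Consistency check using the summary statistics reported for Item 1:
# SD = 1.47 and mean = 3.83 give a ratio that rounds to 0.38.
print(round(1.47 / 3.83, 2))
```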

Later, we invited two junior researchers (one master’s student and one junior doctoral student) to evaluate three studies using quantitative, qualitative, and mixed methods (the studies are listed in Supplementary Appendix C) and examined their inter-rater reliability. The Kappa values (quantitative = 1.00, qualitative = 0.84, and mixed methods = 0.79) indicated good inter-rater agreement, demonstrating that even junior researchers can have a good command of this checklist.
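For readers who wish to run a similar agreement check on their own ratings, the following Python sketch computes an unweighted Cohen's kappa for two raters. It is an illustration under stated assumptions: the text does not specify whether a weighted or unweighted Kappa was used, and the two rating vectors are hypothetical, not the ratings collected in this study.

```python
from collections import Counter


def cohens_kappa(rater_a: list[int], rater_b: list[int]) -> float:
    """Unweighted Cohen's kappa for two raters scoring the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must score the same non-empty set of items")
    n = len(rater_a)
    # Proportion of items on which the two raters gave the same score.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Agreement expected by chance, from each rater's marginal frequencies.
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)
    if expected == 1:
        # Both raters used one identical category throughout; kappa is undefined.
        raise ValueError("kappa is undefined when expected agreement is 1")
    return (observed - expected) / (1 - expected)


# Hypothetical scores (1 = No, 2 = Partial, 3 = Yes) on 14 indicators.
rater_1 = [3, 3, 2, 3, 1, 2, 3, 3, 2, 3, 3, 1, 2, 3]
rater_2 = [3, 3, 2, 3, 1, 2, 3, 2, 2, 3, 3, 1, 2, 3]
print(round(cohens_kappa(rater_1, rater_2), 2))
```

Because the three-point ratings are ordered, a weighted kappa that gives partial credit for adjacent scores would be a reasonable alternative to the unweighted version shown here.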

Unique features of the QQM checklist

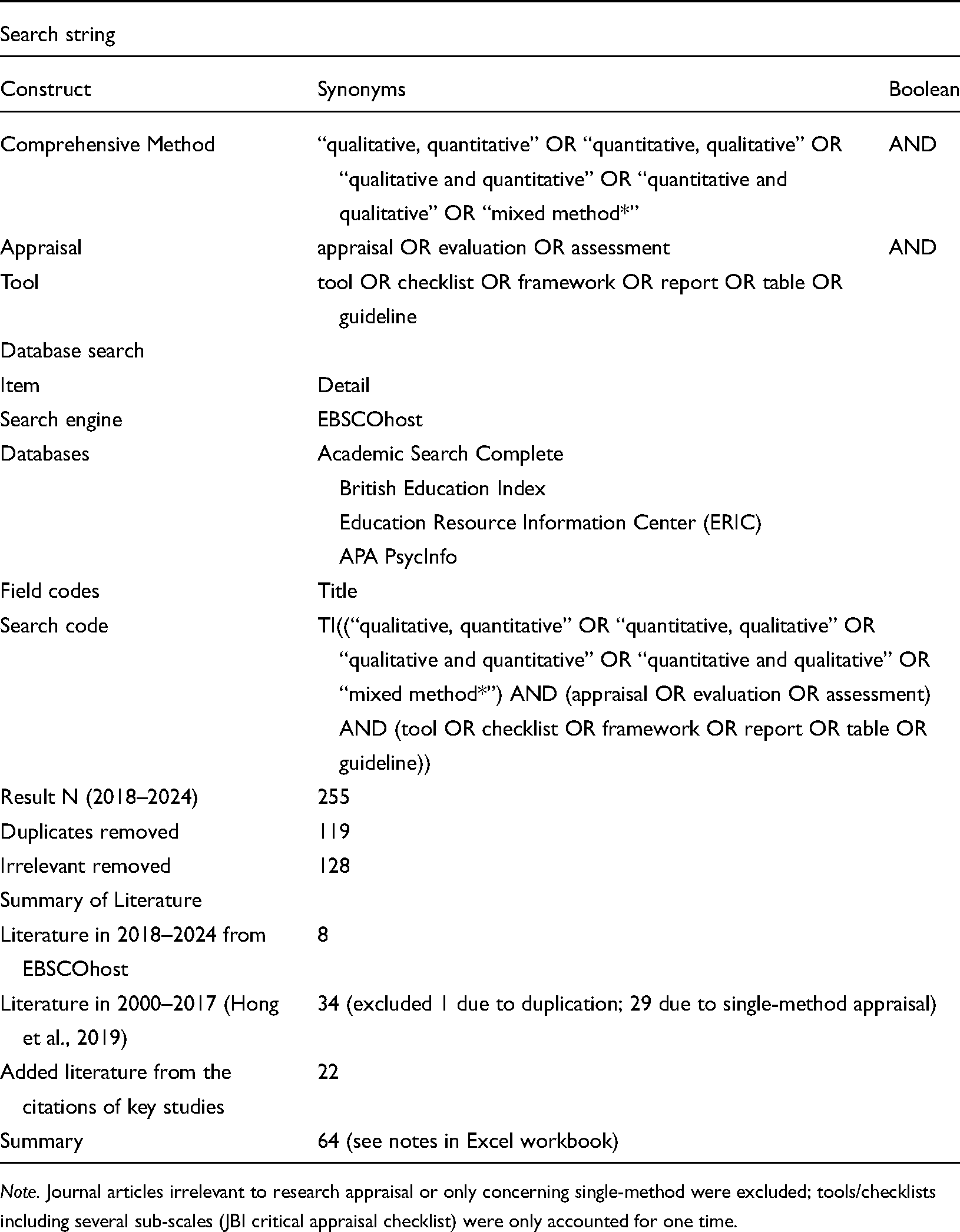

To show the unique contributions of the QQM Checklist, we conducted a comprehensive comparison between this checklist and two other widely used appraisal tools for empirical studies: the MMAT (Hong et al., 2018a, 2018b) and the Joanna Briggs Institute (JBI; 2024) tools. The detailed criteria for different methods in these three tools are listed in Table 4. In terms of similarities, the QQM Checklist not only adopts the basic criteria from these two tools (see the bold text in Table 4) but also aligns with the theoretical framework of Hong et al. (2018a, 2018b), the designers of the MMAT. Specifically, both the QQM Checklist and the MMAT are based on the concepts of Utility (quality of tool: Coverage, Completeness, Flexibility, and Educational Value) and Usability (user friendliness of tool: Learnability, Efficiency, Clear Clarification, Fit for Purpose, and Accessibility), which are discussed in the section “Theoretical framework guiding the development of the QQM Checklist.”

Table 4. Comparisons of QQM with MMAT and JBI.

Regarding key differences, the QQM Checklist fills gaps in existing tools by focusing mainly on “research quality” in order to screen out studies with less valid methods, procedures, and results, whereas the MMAT and JBI tools place more emphasis on “paper quality” (including writing quality). Therefore, the QQM Checklist comprises richer criteria for basic research principles (e.g., setting, recruitment, administration, consent, ethics, and sample), while excluding criteria related only to paper writing, such as the coherence of different sections and the effective integration of mixed methods. Consequently, studies with good research quality but comparatively lower writing quality would obtain a higher score on the QQM Checklist than on the other two tools, as their results and findings are still trustworthy and valuable for systematic reviews and meta-analyses. Additionally, the QQM Checklist integrates various empirical methods into the framework of quantitative, qualitative, and mixed methods, captures their key common principles, and provides a balanced number of criteria for them (i.e., six items in each sub-facet). This design is better tailored to social science studies, which have less complicated method profiles and give equal importance to different methods. In contrast, the other two tools provide detailed categories of methods (especially quantitative methods) and corresponding criteria to meet the requirements of medical research (e.g., 14 sub-scales in JBI).

Discussion

Throughout the literature, we found a shortage of quality evaluation tools suited to social science studies, especially tools that balance complexity, ease of use, and reliable judgments. Some tools are less user-friendly due to overlapping criteria, poor layout, and insufficient user guidance. For instance, the JBI and CASP tools applicable to multiple study designs provide a separate checklist for each design. However, there is considerable overlap between the general quality criteria for qualitative and quantitative research (Brannen, 1992). This design places unnecessary burdens on users, who need to switch between different sub-scales for the same criteria. To address this issue, our checklist consists of a unified section that applies to all research methods, along with two separate sections containing indicators tailored for quantitative and qualitative research, both of which apply to mixed-methods research. Each section clearly identifies the applicable research types and provides specific descriptions of each indicator, including how the generic indicators may differ in their application to quantitative, qualitative, and mixed-methods studies (e.g., justification for sample size in qualitative versus quantitative research). Additionally, unlike most tools that list indicators first and provide explanations in a separate section, our checklist organizes the indicators alongside their descriptions in a parallel structure. This makes it easier for users, especially beginners, to interpret and score, thereby reducing variability in scoring and enhancing the efficiency of the assessment.

To ensure reliable judgment, our checklist provides criteria and examples specific to the social science field. Applying tools designed for other disciplines to evaluate social science research can be problematic, as they often prioritize criteria specific to those fields. For instance, tools developed for clinical and medical research emphasize internal validity, generalizability, and experimental control, which may be less applicable to the social sciences, especially in qualitative research. A key issue is the quantitative bias inherent in some of these tools; for instance, small sample sizes are unnecessarily penalized in the “Quality Assessment Tool for Studies With Diverse Designs.” Given that social science research often deals with human behavior in complex social and cultural contexts, qualitative methods such as observational studies and ethnography that often use smaller sample sizes can provide valid and valuable evidence. However, the misalignment between qualitative methodologies and appraisal tools that focus on quantitative metrics can lead to underestimation or exclusion of qualified qualitative research (Carter & Little, 2007). To avoid this bias, in our checklist, criteria regarding sample size are based on the justification of participant numbers for the chosen analysis method, rather than on total sample size per se. In addition, the qualitative section prioritizes the credibility of the analytical process rather than factors such as replicability or statistical power. Another limitation of other tools is their emphasis on reporting quality over the appropriateness of research conduct. This can lead to misleading evaluations, as poor reporting does not necessarily indicate flaws in research design or execution. To address this, our checklist emphasizes methodological rigor by evaluating the integrity and transparency of the research process, including planning, data collection, and analysis.

Research ethics is also included in our checklist to protect participant rights, ensure academic integrity, and promote social responsibility. Many assessment tools (e.g., MMAT) prioritize core methodological criteria while ignoring ethical issues. This may lead to unassessed research misconduct and malpractice, which undermines the credibility of the research and causes negative social impacts. This problem is particularly acute in sensitive research settings, such as studies dealing with controversial topics or involving vulnerable populations that are often conducted in the social sciences. In light of this, our checklist emphasizes ethical requirements such as informed consent and obtaining ethical clearance from a mandated body.

To accurately reflect study quality, a scoring system is adopted whereby each indicator is evaluated as “no,” “partially,” or “yes,” receiving numerical scores of 1, 2, and 3, respectively. This scale addresses the limitations of the dichotomous scoring used in tools like MMAT, which fails to distinguish between studies with stronger and weaker coverage on a given issue, leading to an oversimplification of the assessment. For example, a study employing a complex statistical assessment may receive the same score as one relying solely on a face validity check. Additionally, dichotomous scoring may ignore partial compliance with criteria and exclude studies that have limitations on some criteria but are still of high quality, resulting in bias due to the absence of eligible studies. On the other hand, a scoring system with many rating categories may reduce inter-rater reliability, as observed in the use of QATSDD (Sirriyeh et al., 2012). To address this, our checklist employs a three-point scale and provides clear descriptions and transferable examples that indicate what constitutes full or partial evidence. This adds transparency and consistency to the scoring process and prevents reviewers from scoring based on personal interpretations. The overall score, summed from the totals of each applicable section, is converted into a percentage based on the number of applicable indicators. This allows for standardized comparisons and categorization of study quality. It also avoids the unfair scoring seen in tools like QATSDD, where mixed-methods studies may receive inflated scores simply because they are assessed by a wider range of metrics due to their dual qualitative and quantitative components, even if the individual quality of each is not superior to a well-conducted single-method study. Moreover, our checklist sets different scoring criteria for different study types (e.g., a range of 54 points for mixed-methods studies versus 42 points for qualitative studies), ensuring that studies are not unfairly scored based on the number of methods used.
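
The arithmetic described above can be sketched as follows. This is a minimal illustration rather than the authors' implementation. It assumes that each indicator is scored 1 (“no”), 2 (“partially”), or 3 (“yes”) and that the percentage is the summed score divided by the maximum attainable on the applicable indicators (3 × the number of indicators); the exact normalization is an assumption, and the function name and example ratings are hypothetical.

```python
# Assumed mapping from rating label to numerical score (1, 2, 3).
SCORES = {"no": 1, "partially": 2, "yes": 3}


def percentage_score(ratings):
    """Return the summed score as a percentage of the maximum attainable.

    `ratings` lists one label per applicable indicator. Normalizing by the
    maximum attainable score is an assumption made for this sketch.
    """
    if not ratings:
        raise ValueError("at least one indicator rating is required")
    total = sum(SCORES[r] for r in ratings)
    maximum = max(SCORES.values()) * len(ratings)
    return 100.0 * total / maximum


# Hypothetical qualitative study: 8 universal + 6 qualitative indicators.
qualitative = ["yes"] * 10 + ["partially"] * 3 + ["no"]

# Hypothetical mixed-methods study: 8 universal + 4 quantitative
# + 6 qualitative indicators.
mixed = ["yes"] * 12 + ["partially"] * 4 + ["no"] * 2

print(round(percentage_score(qualitative), 1))
print(round(percentage_score(mixed), 1))
```

Because each study is normalized by its own number of applicable indicators, a mixed-methods study does not gain an advantage merely from being rated on more indicators.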

Our checklist can be integrated with artificial intelligence (AI) tools to enhance usability and scoring consistency. Large language models (LLMs), for instance, can be used to assess studies based on checklist indicators and generate initial scores. These scores can then be compared with human ratings to evaluate inter-rater reliability and refine the scoring process. Recent work by Zhu et al. (2025) demonstrated the feasibility of such an approach in a systematic review and meta-analysis, where ChatGPT was used for screening and coding processes, achieving strong agreement with expert reviewers. Machine learning algorithms can also be used to identify which indicators most effectively distinguish study quality and adjust their weights accordingly. Additionally, AI-generated feedback could assist novice users in understanding the criteria and making more accurate judgments.
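
As an illustration of how AI-generated scores might be compared with human ratings, the sketch below computes unweighted Cohen's kappa for two sets of indicator scores. The choice of Cohen's kappa as the agreement statistic is an assumption, since no particular statistic is specified here, and the function name and both rating lists are hypothetical.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Unweighted Cohen's kappa for two equal-length lists of ratings."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("ratings must be non-empty and of equal length")
    n = len(rater_a)
    # Observed proportion of indicators on which the two raters agree.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Agreement expected by chance, from each rater's category frequencies.
    counts_a = Counter(rater_a)
    counts_b = Counter(rater_b)
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)
    if expected == 1.0:
        # Both raters used one identical category throughout.
        return 1.0
    return (observed - expected) / (1.0 - expected)


# Hypothetical scores (1, 2, or 3) on eight indicators for one study.
human = [3, 2, 3, 1, 2, 3, 3, 2]
model = [3, 2, 2, 1, 2, 3, 3, 3]

print(round(cohens_kappa(human, model), 3))
```

Since the three scores are ordered, a weighted kappa may be preferable in practice; the unweighted form is shown only because it is the simplest to verify by hand.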

The checklist can benefit both novice and experienced researchers in the social sciences conducting systematic reviews and meta-analyses, reviewers assessing manuscripts for potential publication, and readers critically appraising and synthesizing research findings. Its flexibility and efficiency allow users to spend more time on in-depth analysis, reflection, and generating new research questions. Additionally, funding agencies can use the QQM checklist as a baseline check to evaluate the methodological rigor of research proposals. Furthermore, educators can use it as a teaching aid to help graduate students grasp and apply key concepts and standards in research design and execution.

Further considerations in the use of the QQM checklist

It is worth noting that effective quality appraisal presupposes a clearly formulated review question. Systematic reviewers and meta-analysts using the checklist still need to make value judgments based on the study specifics and the review question to determine whether an article is relevant to the question (Petticrew & Roberts, 2008). In addition, even with scoring guidelines in place, researchers’ personal backgrounds and judgments may still influence the final score, leading to inconsistency in scoring. Thus, an iterative process is encouraged whereby reviewers score independently and then compare and discuss their scores to resolve discrepancies (Sirriyeh et al., 2012). Moreover, our choice of a three-point scoring system may not fully capture the nuanced features of some complex studies. Users may set stricter or looser cut-off points (e.g., a threshold of 90% as “Very high quality”) as long as they provide a reasonable rationale. Furthermore, although this paper outlines possible pathways for AI integration, no empirical implementation has yet been conducted. Future work will focus on the development, testing, and validation of such AI-assisted workflows to assess their feasibility, reliability, and acceptance among researchers. Finally, researchers from different cultures and regions are encouraged to apply the QQM checklist in their research and teaching practices and to provide us with feedback on the tool's applicability. This will help us revise and update the checklist in the future and further improve its usability internationally.

Supplemental Material

Supplemental material, sj-docx-1-roe-10.1177_20965311251371227 for Quality Appraisal Tools for Quantitative, Qualitative, and Mixed-Methods Studies: A Review and a Brief New Checklist by Xin Tang (唐鑫), Zixin Zeng (曾子欣), Haoyan Huang (黄浩岩) and Jennifer Symonds in ECNU Review of Education

Footnotes

Contributorship

Xin Tang was responsible for the conceptualization of the work, contributed to writing the original draft, and was involved in reviewing and editing the manuscript. Additionally, Xin Tang provided supervision and resources for the project. Zixin Zeng contributed to writing the original draft and was involved in reviewing and editing. Haoyan Huang contributed to writing the original draft and determined the methodology. Jennifer Symonds was responsible for the conceptualization of the work, contributed to reviewing and editing the manuscript, and provided resources.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Ethical statement

As a review study rather than an empirical study, this study did not survey human participants using measurement tools. Thus, it was exempt from standard IRB review. However, this study was conducted in accordance with the principles outlined in the Declaration of Helsinki. Six human experts were asked to evaluate the QQM checklist on the dimensions of utility and usability. Prior to their participation, all six expert reviewers were provided with a clear explanation of the study's purpose, the nature of their task (evaluating the research quality assessment tool), and the expected time commitment. Participation was voluntary, and reviewers were informed that they could withdraw at any time without consequence. Verbal agreement to participate was obtained from all experts before they were given access to the tool for review. All data collected were anonymized and have been handled with strict confidentiality.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The study was supported by the Shanghai Jiao Tong University and University College London Strategic Partner Collaborative Project (PI: Xin Tang; Co-PI: Jennifer Symonds).

Supplemental Material

Supplemental material for this article is available online.

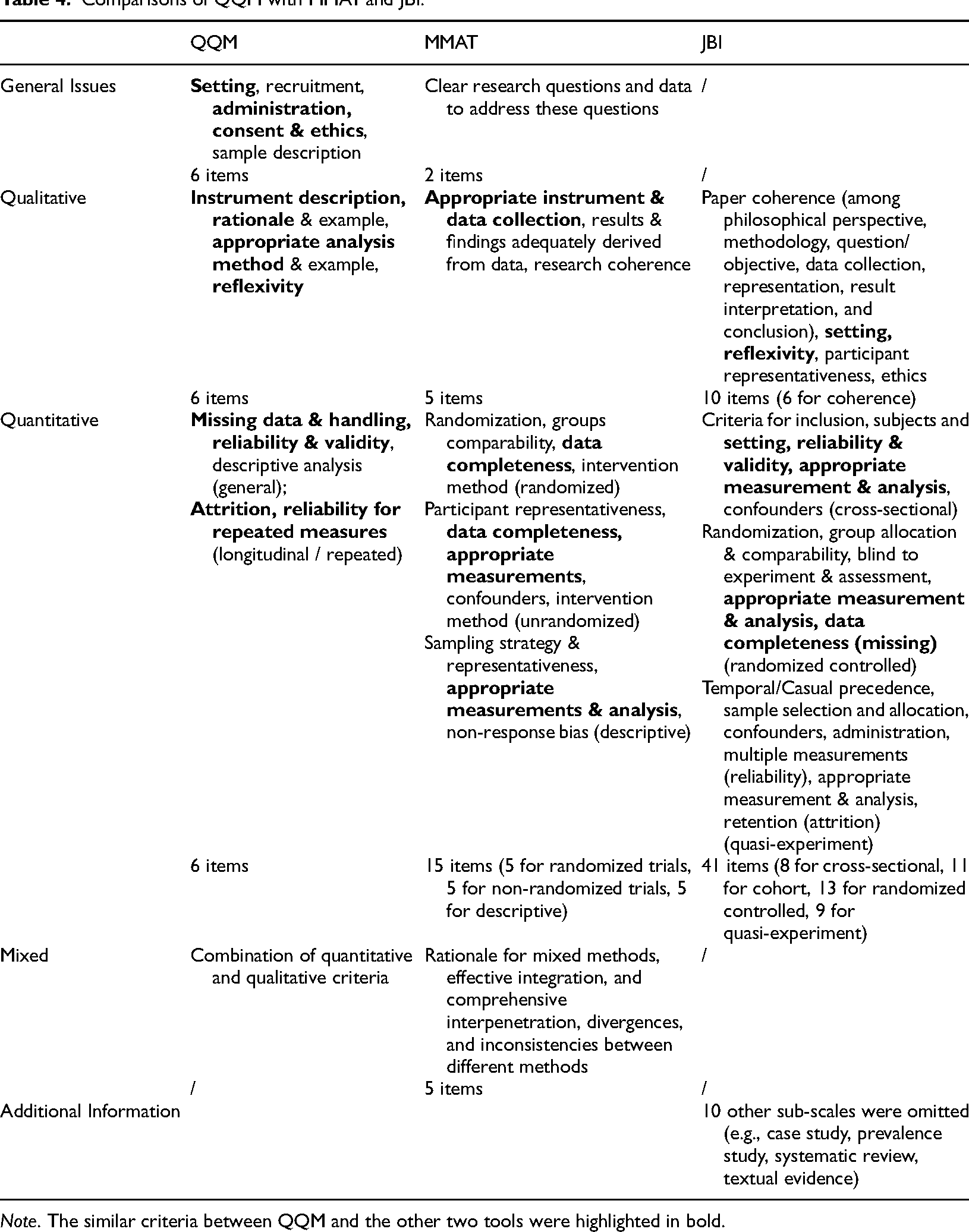

Appendix A. Procedure of systematic review

| Search string | | |
|---|---|---|
| Construct | Synonyms | Boolean |
| Comprehensive Method | “qualitative, quantitative” OR “quantitative, qualitative” OR “qualitative and quantitative” OR “quantitative and qualitative” OR “mixed method*” | AND |
| Appraisal | appraisal OR evaluation OR assessment | AND |
| Tool | tool OR checklist OR framework OR report OR table OR guideline | |
| Database search | | |
| Item | Detail | |
| Search engine | EBSCOhost | |
| Databases | Academic Search Complete | |
| Field codes | Title | |
| Search code | TI((“qualitative, quantitative” OR “quantitative, qualitative” OR “qualitative and quantitative” OR “quantitative and qualitative” OR “mixed method*”) AND (appraisal OR evaluation OR assessment) AND (tool OR checklist OR framework OR report OR table OR guideline)) | |
| Result N (2018–2024) | 255 | |
| Duplicates removed | 119 | |
| Irrelevant removed | 128 | |
| Summary of Literature | | |
| Literature in 2018–2024 from EBSCOhost | 8 | |
| Literature in 2000–2017 (Hong et al., 2019) | 34 (excluded 1 due to duplication; 29 due to single-method appraisal) | |
| Added literature from the citations of key studies | 22 | |
| Summary | 64 (see notes in Excel workbook) | |

Appendix B. Quality Appraisal Checklist for Quantitative, Qualitative, and Mixed-Methods Studies

Apply this section to quantitative, qualitative, and mixed-methods studies.

Apply this section to quantitative and mixed-methods studies. Please note that the attrition and repeated measures indicators are only relevant for longitudinal studies (do not fill these out for cross-sectional studies).

Researchers may wish to use a convenient score to determine eligibility for further data processing (e.g., only include studies scoring 50% or higher). It is up to you to decide whether to employ cut-off points. Cut-off points should match the purpose of your study (e.g., if you are doing a scoping review, you might not want to reject any studies based on quality, whereas if you are doing a meta-analysis, you might want to restrict the quantitative studies based on whether they report measurement validity statistics). We have included some suggestions for quality dimensions with two cut-off points, emphasizing that these are only suggestions and not rules that researchers must follow.
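
To show how two cut-off points partition percentage scores into three bands, a minimal sketch is given below. The threshold values (50 and 75) and the band labels are placeholders chosen for illustration only; they are not the suggested cut-offs of this checklist, and the function name is hypothetical. Users would substitute thresholds that match the purpose of their own review.

```python
def quality_band(percentage, lower=50.0, upper=75.0):
    """Assign a percentage score to one of three bands.

    `lower` and `upper` are placeholder cut-off points, not values
    recommended by the checklist.
    """
    if not 0.0 <= percentage <= 100.0:
        raise ValueError("percentage must lie between 0 and 100")
    if not lower < upper:
        raise ValueError("lower cut-off must be below upper cut-off")
    if percentage >= upper:
        return "higher"
    if percentage >= lower:
        return "moderate"
    return "lower"


# Hypothetical percentage scores for three studies.
for score in (88.1, 62.5, 40.0):
    print(score, quality_band(score))
```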
