Writing practice plays a crucial role in language learning. However, grading compositions requires considerable time and effort, and standards vary from teacher to teacher. An automated essay scoring system can therefore ease the burden teachers face in correcting compositions. Existing research on automated essay scoring is concentrated on English-language corpora, with 95 publications devoted to the subject, whereas only two or three publications address automated essay scoring for Chinese or other languages (Huawei & Aryadoust, 2023). Developing an automated essay scoring system for the Chinese context is therefore essential.

Project overview

The ELion Intelligent Chinese Composition Tutoring System (https://elion.ecnu.edu.cn/write/list.htm) is a joint effort between East China Normal University's Shanghai Institute of Artificial Intelligence for Education and Microsoft Research Asia. Based on the “Compulsory Education Chinese Curriculum Standards,” the system analyzes students’ compositions along four main dimensions: topic comprehension (keyword detection), content (composition length and quality of words and sentences), expression (fluency, logic, and grammatical errors), and handwriting. It also provides detailed comments on students’ word choice, sentence structure, and paragraph organization, offering a multidimensional, objective analysis of each composition. Teachers can review the system's preliminary assessments, provide additional feedback, write teacher messages, and set examples.

This program began in the spring of 2021 with a modest but specific goal: to reduce teachers’ workloads in grading Chinese compositions in Chinese primary and secondary schools. As of June 2024, a total of 251 primary and secondary schools had adopted the ELion Intelligent Chinese Composition Tutoring System. The system's reach has expanded from its initial base in the Jiangsu and Shanghai regions to various parts of the country. It has accumulated a user base of 15,240 students and 560 teachers from Grades 3 to 9, with nearly 50,000 compositions uploaded and more than 5,375 monthly active users. As its adoption has expanded, the ELion system has also received unanimous praise from frontline teachers. For example, a primary school teacher in Shanghai uses the system for essay review: by comparing AI-generated reviews with the students’ own reviews, the teacher encourages students to express their creativity based on the AI feedback, fostering interactive learning between students and AI in the classroom. The principal of a primary school also pointed out: “Previously, the application of AI in the classroom mainly focused on subjects like mathematics, which are more inclined toward science. There are not many mature AI applications in the Chinese language subject. The performance of ELion has liberated Chinese language teachers to some extent from the task of essay correction, playing an important role.”

The introductory paper (Zheng et al., 2023) provides an overview of the ELion Intelligent Chinese Composition Tutoring System. This brief report focuses on our exploration, planned and unplanned, of large language models (LLMs) in essay scoring and feedback, which divides naturally into three stages: BERT only, BERT-ChatGPT synergy for improved feedback generation, and BERT-ChatGPT synergy in an expanded framework for diversified and bold applications. The major underlying AI technology for such a system is natural language processing (NLP), and the current core architecture for NLP is Bidirectional Encoder Representations From Transformers (BERT). The key element here is the transformer, which is also an essential component of ChatGPT (Chat Generative Pre-Trained Transformer), now a hot topic in AI and education. Thus, when this project began, BERT, ChatGPT's cousin, was the clear choice for an automated essay scoring and evaluation system. The unfolding story of this system is full of surprises and echoes many current issues, particularly the use of (generative) LLMs in education. This brief report aims to share some key findings, along with a new working concept and its theoretical support.

Stage I: BERT only

The most critical technological element of the system is an algorithm that automatically assigns scores and assesses textual attributes of essays. ELion employs RoBERTa, a robust variant of BERT, together with several other AI techniques, most notably Optical Character Recognition (OCR) for Chinese children's handwriting, to evaluate students’ essays across a spectrum of feature levels.

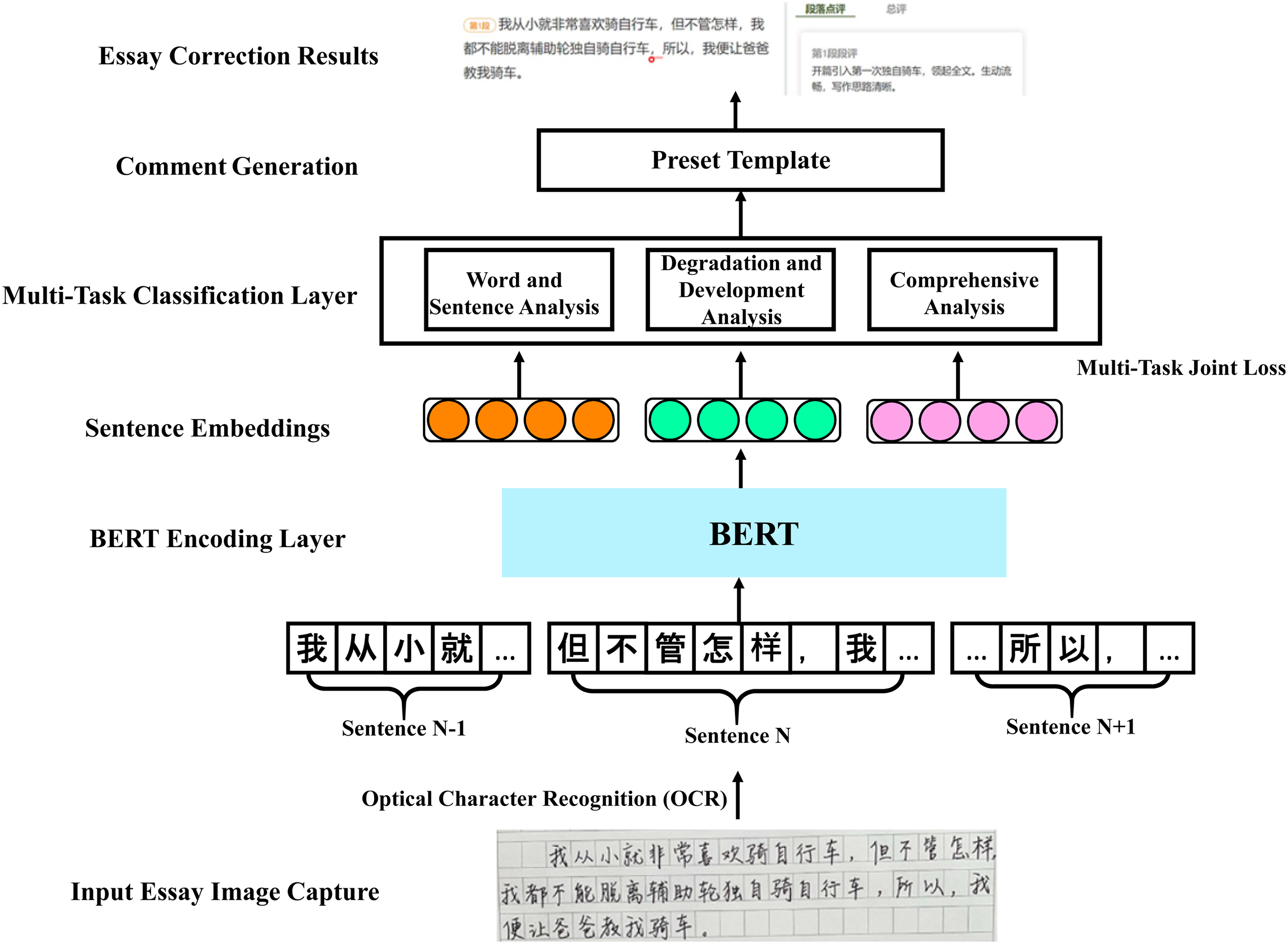

The operation of ELion's automated essay evaluation algorithm is illustrated in Figure 1. After students upload photographs of their essays, the algorithm converts the images into text using text recognition. It also generates basic descriptive statistics, including the number of words and paragraphs. The algorithm then analyzes the entire essay, including words, phrases, sentences, and paragraphs, using the RoBERTa model. In addition to typographical errors and inappropriate word usage, the system can assess language fluency, coherence, logical reasoning, rhetorical techniques, and more. Based on this analysis, the algorithm determines grades and provides essay correction information. Finally, the intelligent feedback generation technology produces customized revision suggestions and comments for the essay, based on the evaluation results from the preceding steps and predefined comment templates.

Figure 1. ELion's intelligent essay scoring algorithm with BERT only.
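The Stage I flow described above (text recognition, descriptive statistics, model-based analysis, scoring, and template-based comments) can be sketched as a simple pipeline. This is only an illustrative outline, not ELion's implementation: the `recognize` and `analyze` callables stand in for the OCR and RoBERTa components, and the names `EssayReport`, `descriptive_stats`, `evaluate_essay`, the 0-to-1 feature scale, the mean-based score, and the `threshold` value are all assumptions made for the example.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class EssayReport:
    """Container for the outputs of each pipeline step (hypothetical)."""
    text: str
    stats: Dict[str, int] = field(default_factory=dict)
    features: Dict[str, float] = field(default_factory=dict)
    score: float = 0.0
    comments: List[str] = field(default_factory=list)


def descriptive_stats(text: str) -> Dict[str, int]:
    """Count non-whitespace characters and non-empty paragraphs.

    Character count is used as a simple stand-in for word count,
    because Chinese text is not whitespace-delimited.
    """
    paragraphs = [p for p in text.splitlines() if p.strip()]
    characters = sum(1 for ch in text if not ch.isspace())
    return {"characters": characters, "paragraphs": len(paragraphs)}


def evaluate_essay(
    image: bytes,
    recognize: Callable[[bytes], str],            # stand-in for the OCR step
    analyze: Callable[[str], Dict[str, float]],   # stand-in for the RoBERTa step
    templates: Dict[str, str],                    # feature name -> comment template
    threshold: float = 0.6,                       # assumed cut-off for a comment
) -> EssayReport:
    # Step 1: image -> text.
    text = recognize(image)
    # Step 2: basic descriptive statistics.
    report = EssayReport(text=text, stats=descriptive_stats(text))
    # Step 3: feature-level analysis, each feature assumed to lie in [0, 1].
    report.features = analyze(text)
    # Step 4: overall score, here simply the mean feature value on a 0-100 scale.
    if report.features:
        mean = sum(report.features.values()) / len(report.features)
        report.score = round(100 * mean, 1)
    # Step 5: fill predefined templates for features below the threshold.
    for name, value in report.features.items():
        if value < threshold and name in templates:
            report.comments.append(templates[name].format(value=value))
    return report
```

Passing the OCR and analysis steps in as callables keeps the sketch independent of any particular model library; real components could be swapped in without changing the flow.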

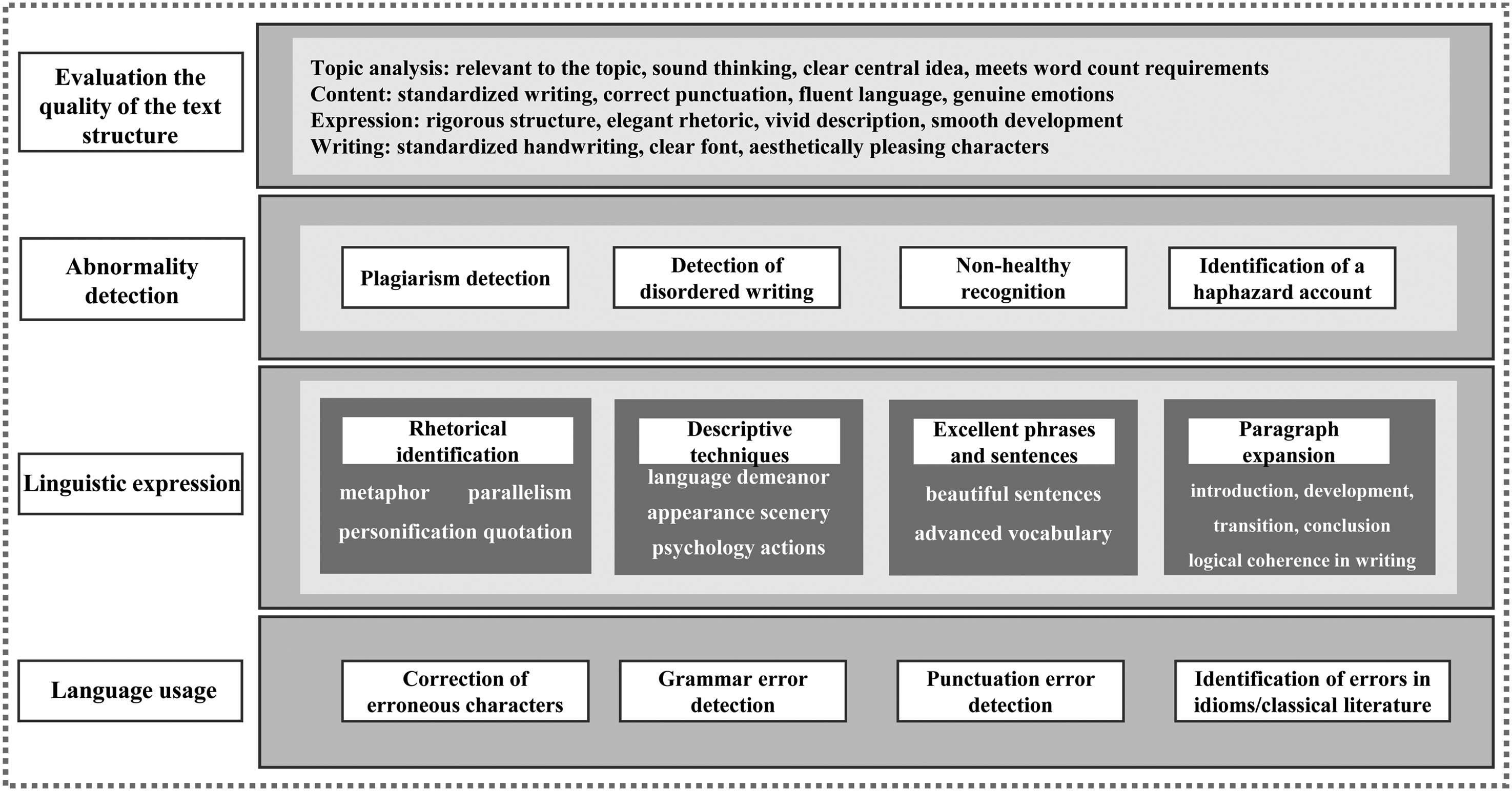

To meet the demands of the national curriculum and of educators for “deep” language feature analysis, ELion assembled a teaching and research team of 23 people, consisting of Chinese educators, educational researchers, evaluation specialists, and AI experts. The group was responsible for creating a composition evaluation framework suitable for Chinese primary and secondary schools. Following a comprehensive examination of established frameworks for evaluating Chinese composition, the group devised a four-tiered assessment system that progresses from superficial to profound (see Figure 2).

Figure 2. ELion's Chinese composition assessment framework.

The RoBERTa-based scoring and evaluation algorithm can assess Chinese compositions on the aforementioned qualities well, although its precision varies across qualities. However, generating feedback reports at scale that satisfy both teachers and students remained a significant obstacle at this stage.

In this iteration, ELion generated comments using the BERT model combined with predefined templates. The assessment result for each student is considered “accurate and individualized,” but the report has several shortcomings, primarily in its delivery style. First, it appears tedious and inflexible because many sentences in the template are pre-written. Second, these sentences may not be engaging or friendly, or may not be presented in a manner suited to the students’ grade levels and ages. Teachers are occasionally concerned that younger pupils may not be able to comprehend the report.

Another concern arose from the unexpected surge in ChatGPT's popularity: the perception that this technology is so powerful that it can supplant any existing method. At the very least, people are curious why the system is not “upgrading” to ChatGPT.

Stage II: BERT-ChatGPT synergy for improved feedback generation

The team initiated trials of ChatGPT for essay scoring in early December 2022, well before its widespread adoption in education. Several of the aforementioned concerns can be readily remedied by integrating ChatGPT exclusively in the feedback phase. Our initial report is precise in its evaluation yet lacks polish in its presentation. One straightforward solution is to restyle the report according to the patterns specified by the instructors. The most favored options are “easy to read for third or fourth graders,” “conversational in tone,” and “in a more humorous fashion.”

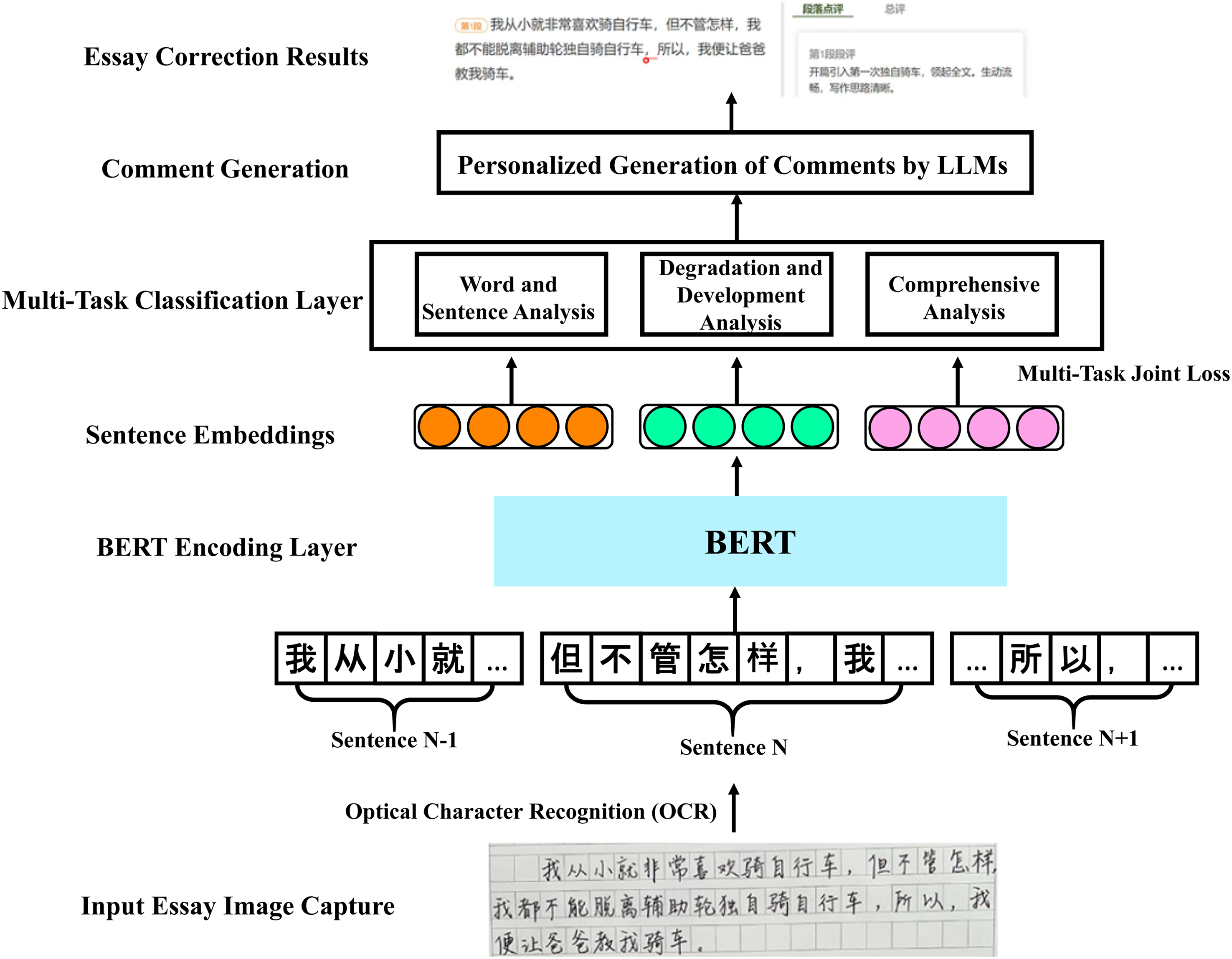

Thanks to the exceptional text generation capabilities of GPT technology, the feedback generated from templates can be effortlessly restyled. With carefully developed GPT prompts, the ELion system can generate feedback whose language is more suitable and better aligned with educators’ actual requirements. Teachers’ responses to our small-scale survey comparing the reports generated by the two methods attest to this. The operation of ELion's second-generation automated essay evaluation algorithm, which is built on BERT-ChatGPT synergy for improved feedback, is illustrated in Figure 3.

Figure 3. ELion intelligent essay scoring algorithm with BERT-ChatGPT synergy.
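As a rough illustration of the Stage II idea, the sketch below builds a restyling prompt that wraps template-generated feedback in one of the three styles teachers favored. The prompt wording, the `STYLE_INSTRUCTIONS` table, and the `build_restyle_prompt` function are hypothetical and are not ELion's actual prompts; the resulting string would still need to be sent to a generative model by whatever client the system uses.

```python
# Hypothetical style instructions, based on the three options teachers favored.
STYLE_INSTRUCTIONS = {
    "easy": "Rewrite the feedback so that a third or fourth grader can read it easily.",
    "conversational": "Rewrite the feedback in a conversational tone.",
    "humorous": "Rewrite the feedback in a more humorous fashion.",
}


def build_restyle_prompt(template_feedback: str, style: str) -> str:
    """Return a prompt asking a generative model to restyle existing feedback.

    The assessment itself is left untouched: the prompt asks the model to
    change only the wording, not the scores or the errors identified.
    """
    if style not in STYLE_INSTRUCTIONS:
        raise ValueError(f"unknown style: {style!r}")
    return "\n".join(
        [
            "You are helping a teacher give feedback on a student's Chinese composition.",
            STYLE_INSTRUCTIONS[style],
            "Keep every score and every identified error unchanged; change only the wording.",
            "Feedback to rewrite:",
            template_feedback,
        ]
    )
```

Restricting the generative model to rewording keeps the division of labor described in this stage: the BERT-based engine stays responsible for accuracy, and the generative model only for presentation.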

The second significant concern had long been anticipated: Is it imperative to replace the current BERT engine with a ChatGPT engine? Answering this requires a comprehensive evaluation of ChatGPT's efficacy in assessing Chinese compositions on the aforementioned dimensions. A series of initial investigations was undertaken to evaluate ChatGPT's performance in this kind of text assessment, driven by the concern that it might surpass the system on which we had spent 2 years of dedicated work. Although ChatGPT is highly innovative and powerful in content generation, as a general cognitive engine it may not be as capable as a purpose-built system, and it has a number of evident shortcomings in essay scoring and text evaluation. These initial investigations indicate that a custom-engineered system remains necessary and might not be swiftly supplanted by GPT technology. One initial investigation assessed ChatGPT's efficacy in TOEFL essay scoring (Xia et al., 2024). ChatGPT achieved satisfactory scoring results, marginally inferior to those of the meticulously designed system; especially noteworthy was a regression effect in its scores, potentially attributable to data limitations in ChatGPT.

Stage III: BERT-ChatGPT synergy in an expanded framework

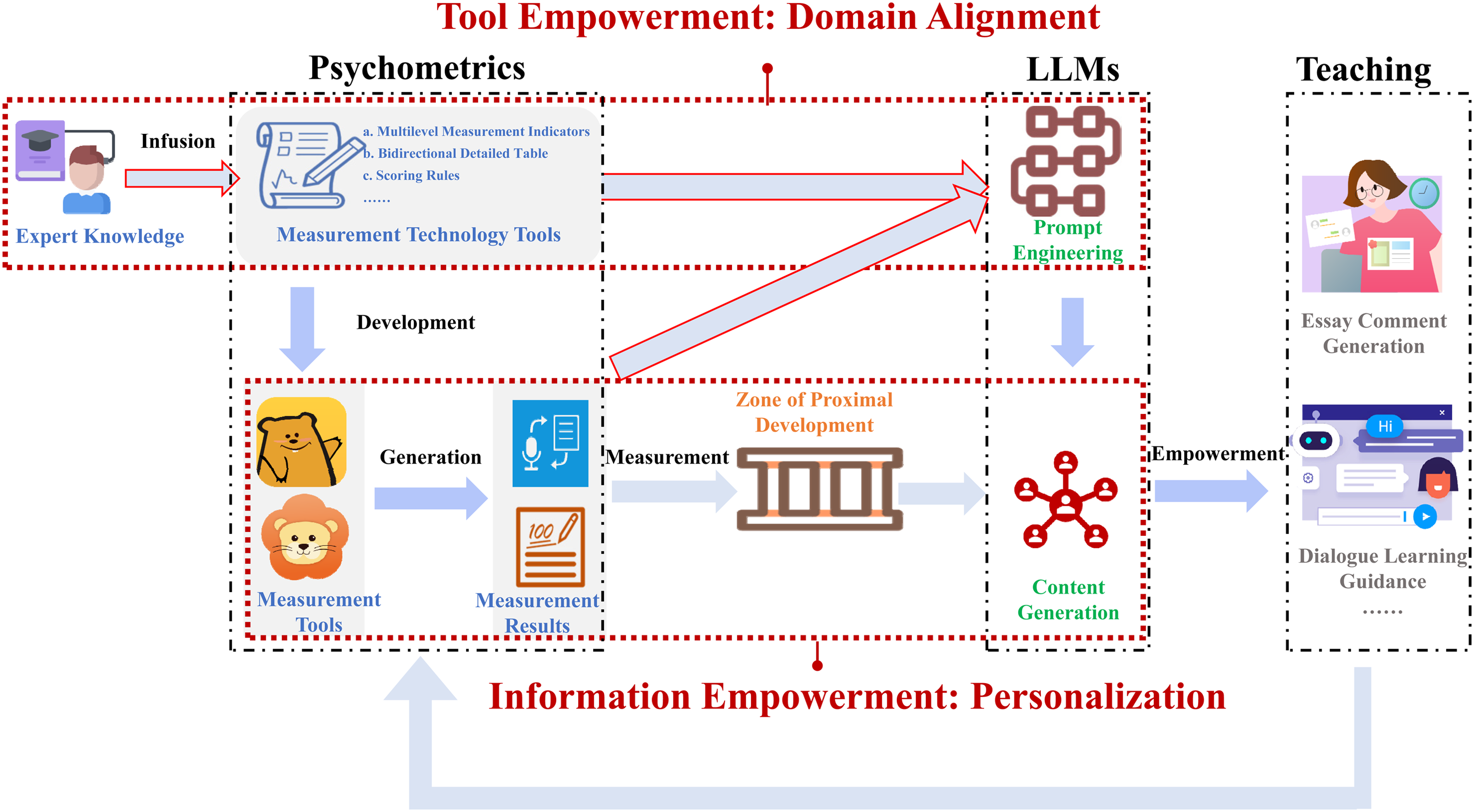

Additional research has been conducted to explore the potential advantages of LLMs in the fields of essay evaluation and education at large. In order to facilitate the continued advancement of the ELion essay system, a novel conceptual framework called Learning Copilot Enabled by Accurate Assessment and ChatGPT (LCEAAG) has been introduced. Using ChatGPT in conjunction with rigorous assessment within this framework could facilitate the development of a multitude of educational applications, such as interactive instruction, in addition to essay scoring and evaluation.

Even more consequential is the possibility that ChatGPT's explanations fail to satisfy the standards of educators and learners. Addressing this requires furnishing ChatGPT with meticulously crafted and extremely detailed instructions, a practice known as “prompt engineering.”

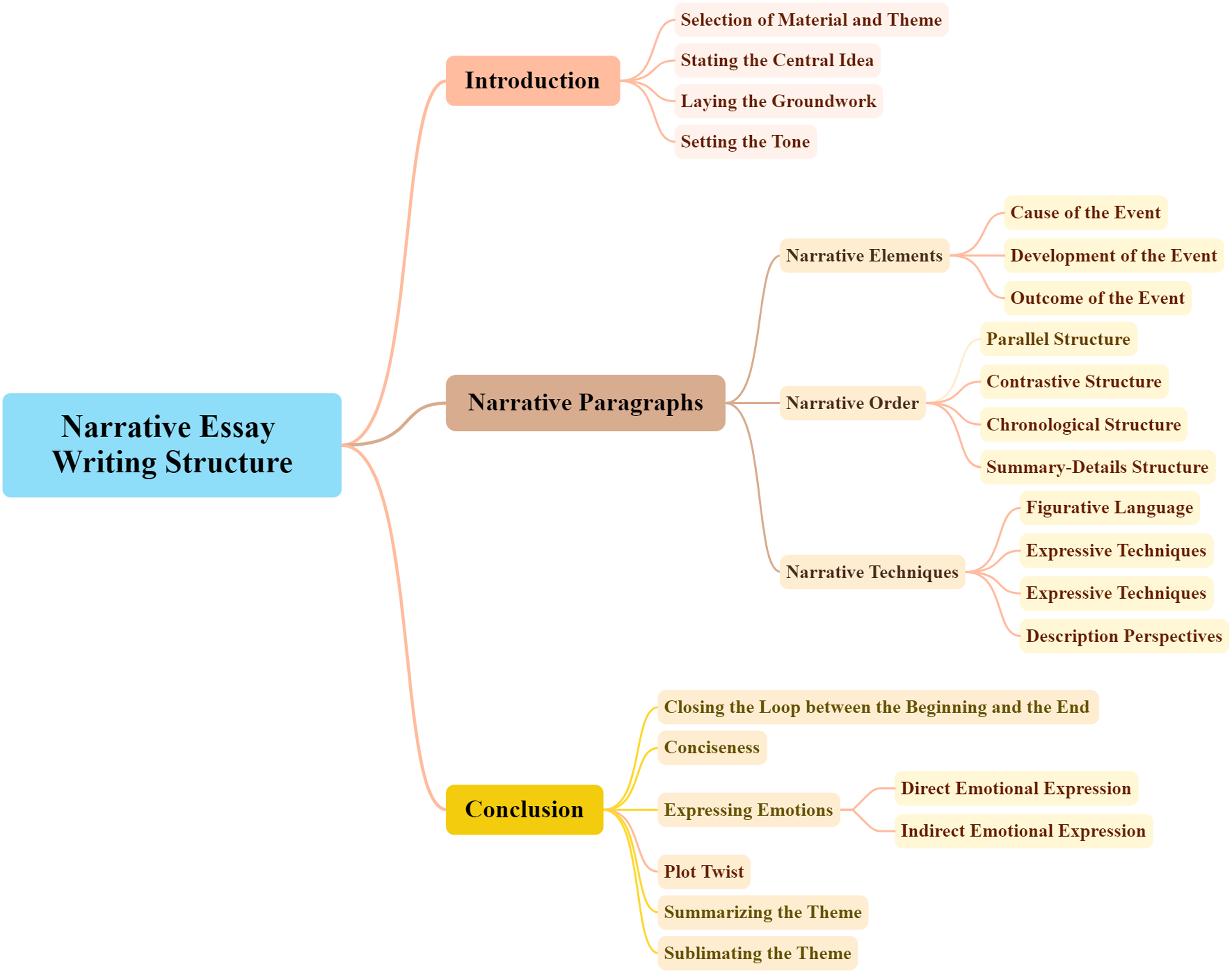

The ELion team proposed and tested an effective approach for carrying out prompt engineering in a methodical and quality-controlled fashion by leveraging established educational assessment tools, including scoring rubrics and the indicator framework used in psychological assessment. These assessment development tools commonly take the form of a hierarchical tree that lays out the different facets of the target domain or concept (the construct, as the term is used in psychology and education), ranging from more general to more specific and fine-grained dimensions. An example for narrative essay assessment is presented in Figure 4.

Figure 4. An assessment indicator framework for narrative essays.

A set of prompts can be generated from the indicator framework, conceivably one for every bottom-level indicator. This approach helps ensure that every essential aspect of the subject matter has been asked about and that no critical component has been neglected. In this way, the framework operates as a quality control mechanism that aligns the prompts with domain knowledge, a contribution labeled the “tool empowerment” of psychometric devices.
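The idea of deriving one prompt per bottom-level indicator can be sketched as a traversal of the hierarchical tree. The nested-dictionary representation, the function names `leaf_paths` and `prompts_from_framework`, and the prompt wording are assumptions for illustration; the indicators shown in the usage note are invented and do not reproduce the ELion framework.

```python
from typing import Any, Dict, Iterator, List, Tuple


def leaf_paths(
    tree: Dict[str, Any], prefix: Tuple[str, ...] = ()
) -> Iterator[Tuple[str, ...]]:
    """Yield the path from the root to every bottom-level indicator.

    The framework is modeled as nested dicts; an indicator with an
    empty dict as its value is treated as a leaf.
    """
    for name, children in tree.items():
        path = prefix + (name,)
        if children:
            yield from leaf_paths(children, path)
        else:
            yield path


def prompts_from_framework(tree: Dict[str, Any], genre: str) -> List[str]:
    """Build one assessment prompt per bottom-level indicator."""
    return [
        f"Evaluate the following aspect of this {genre}: {' > '.join(path)}. "
        "Quote evidence from the essay to support the evaluation."
        for path in leaf_paths(tree)
    ]
```

For example, a toy framework such as `{"Content": {"Theme": {}, "Detail": {}}, "Expression": {"Fluency": {}}}` yields three prompts, one for each leaf, so every bottom-level indicator is covered by construction.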

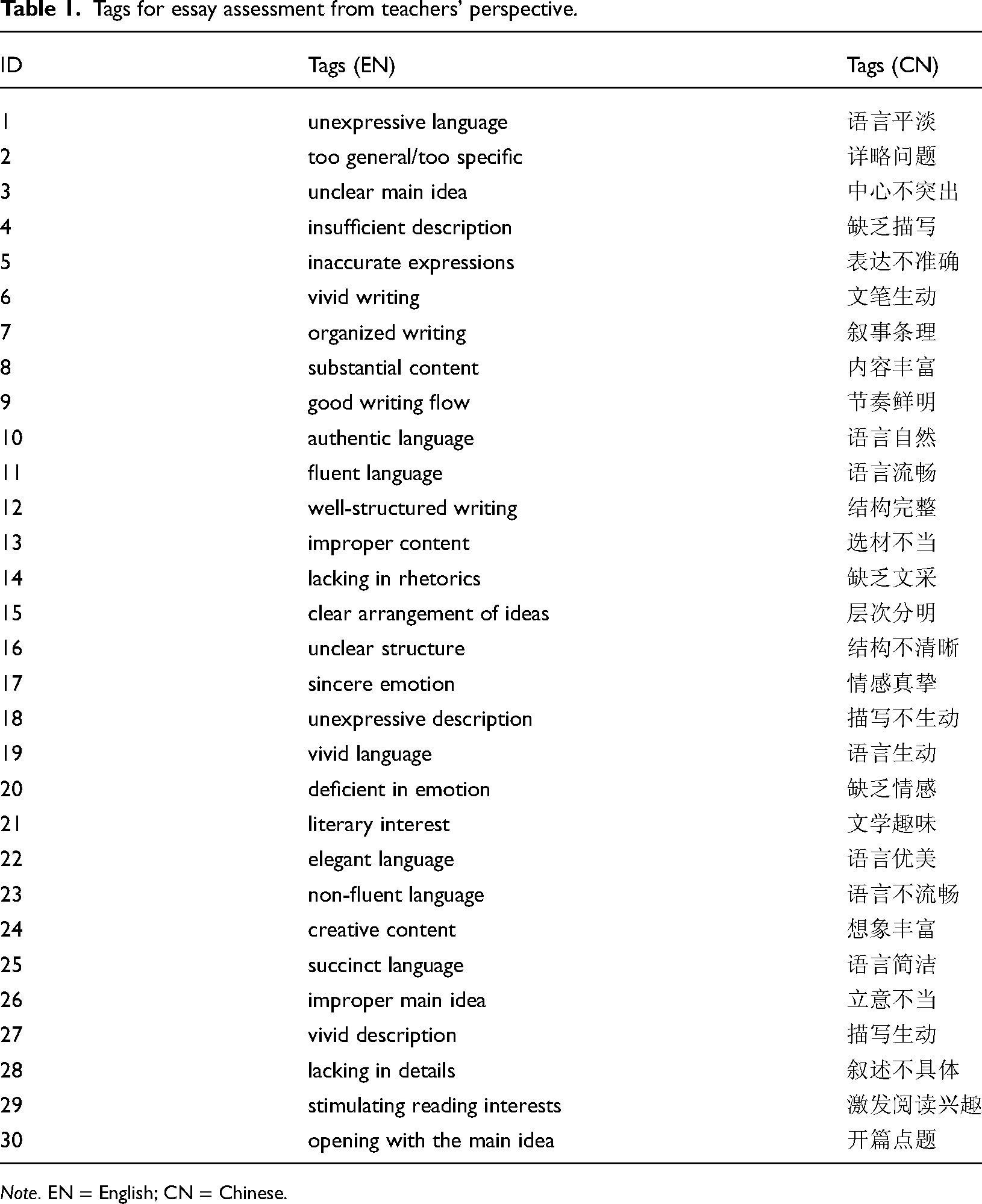

Additionally, information gathered from instructors can be used to generate a list of prompts as presented in Table 1 that can be cross-referenced with the framework to guarantee comprehensiveness. Through a straightforward survey of educators, we compiled this inventory to ascertain the matters that preoccupy them the most when assessing and grading the essays of their pupils. These points (or tags, as they are referred to in computer science) constitute the most exhaustive compilation of all aspects pertaining to writing feedback and learning that the majority of instructors found intriguing or pertinent.

Table 1. Tags for essay assessment from teachers’ perspective.

Note. EN = English; CN = Chinese.
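The cross-referencing of teacher tags against the indicator framework can be pictured as a two-way coverage check. The sketch below assumes that each teacher tag has been mapped by hand to one indicator; the function name `coverage_gaps` and the mapping format are hypothetical.

```python
from typing import Dict, Set, Tuple


def coverage_gaps(
    indicators: Set[str], tag_to_indicator: Dict[str, str]
) -> Tuple[Set[str], Set[str]]:
    """Compare framework indicators with teacher tags.

    Returns a pair:
    - indicators that no teacher tag refers to, and
    - teacher tags mapped to something that is not in the framework.
    """
    covered = set(tag_to_indicator.values())
    uncovered_indicators = indicators - covered
    unmatched_tags = {
        tag for tag, indicator in tag_to_indicator.items()
        if indicator not in indicators
    }
    return uncovered_indicators, unmatched_tags
```

An empty result in both directions would suggest that the framework and the teachers' concerns cover the same ground; anything left over points to an indicator or a tag worth reviewing.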

Under the LCEAAG framework, ChatGPT can perform two functions. First, it can conduct the text assessment by following the prompts generated from the assessment indicators. Second, it can engage in intelligent dialogue with a student by drawing on assessment data, either from an external assessment alone or from an external assessment combined with its own. The second function entails interactive instruction, in which the personalized assessment outcome further augments the guidance provided by the assessment concept framework. When combined with the assessment outcomes, a contribution labeled the “information empowerment” of the assessment result, this collection of prompts has the potential to enhance the accuracy of an interactive dialogue between ChatGPT and the students. The whole LCEAAG framework is presented in Figure 5.

Figure 5. The LCEAAG framework.
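A minimal sketch of the second function might combine the two kinds of input described above: indicator-derived prompts (the “tool empowerment”) and a student's assessment results (the “information empowerment”). The function name `build_tutoring_messages`, the wording of the instructions, and the role/content message layout (a format commonly used by chat-style models) are assumptions; no particular model API is implied.

```python
from typing import Dict, List


def build_tutoring_messages(
    indicator_prompts: List[str],
    assessment: Dict[str, str],
    student_question: str,
) -> List[Dict[str, str]]:
    """Assemble the context for one turn of an assessment-grounded dialogue."""
    # Guidance derived from the assessment indicator framework.
    guidance = "\n".join(f"- {prompt}" for prompt in indicator_prompts)
    # Personalized results from the external (accurate) assessment.
    results = "\n".join(f"- {name}: {value}" for name, value in assessment.items())
    system = (
        "You are a friendly writing tutor for primary and secondary school students.\n"
        "Discuss only the aspects listed below, which come from the assessment framework:\n"
        f"{guidance}\n"
        "Base every statement about the essay on these assessment results:\n"
        f"{results}"
    )
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": student_question},
    ]
```

Keeping the assessment results in the instructions, rather than asking the model to re-score the essay during the conversation, reflects the framework's reliance on an accurate external assessment.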

The idea of systematic prompt engineering in LCEAAG is inspired by Herbert Simon's (re)definition of “psychology as a science of the artificial.” Following the same line of thinking, we can argue that psychometrics can be redefined as “a science of the artificial.” Psychometrics becomes the interface among the human mind, the target domain, and the LLM. More specifically, the traditional technical devices of psychometrics serve as the interface, or bridge, between the target domain and the LLM. This interface's main job is to guide the LLM toward a pedagogically relevant and enriching conversation with students. The quality of this interactive tutoring is further ensured by the “accurate assessment,” while its conversational and amicable tone comes from ChatGPT's distinctive strengths. This approach can also be applied in a range of situations involving psychological and educational evaluation. We are experimenting, for instance, with interactive report feedback for a workplace-related personality assessment.

The investigation into integrating LLMs into this automated scoring and evaluation project has progressed through three distinct phases. The unanticipated appearance of ChatGPT disrupted the initial plan, with both positive and negative consequences; in this sense, the process itself has been generative. The primary insight gained thus far is that, even with LLMs, it may be necessary to combine a variety of technologies, both old and new, across various disciplines to attain satisfactory results in practice. Innovative technology cannot solve real-world problems on its own.

Takeaway message

This article describes how the ELion Intelligent Chinese Composition Tutoring System evolved in China to address the challenging and time-consuming demands of essay grading.

The ELion system's technical progress occurred in three stages: first, multidimensional assessment using only BERT; second, the addition of ChatGPT for enhanced feedback generation; and third, the implementation of the LCEAAG framework. The system combines psychometric tools with prompt engineering to enhance AI's applications in education.

Combining psychometrics with LLMs enables AI to be incorporated into education. Drawing on Herbert Simon's “the sciences of the artificial,” the study redefines psychometrics as a “science of the artificial,” making AI–student interactions pedagogically relevant and enriching.

The study underlines that no single technology can solve real-world problems. New technology and traditional methods must be combined to produce cross-domain educational solutions. Generative AI has both advantages and drawbacks, which underscores the need for customization and disciplinary adaptability in education technology.

Footnotes

Contributorship

Chanjin Zheng, the principal researcher, was responsible for the overall conceptualization and supervision of the project, provided key insights into the integration of educational theory with artificial intelligence technology, and contributed to the theoretical foundation of the LCEAAG framework. Wei Xia was mainly in charge of drafting the initial manuscript, organizing theoretical content, and creating illustrations. Shaoguang Mao was responsible for system development, ensuring the system's accuracy in evaluating Chinese compositions. Yan Xia coordinated the technical team and managed the deployment of the system in educational settings.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.