Abstract

An increasingly common challenge facing researchers is participants falsifying their identity or their experiences to participate in online research. Imposter participants pose a threat to the integrity of research data, requiring careful risk mitigation strategies. In this case study report, we describe four projects across two institutions with victim-survivors and perpetrators of domestic, family and sexual violence in which we encountered imposter participants. We describe the technical, manual and ethical strategies we implemented to safeguard the integrity of our research. While necessary, these strategies were resource-intensive, and impacted participant recruitment and the wellbeing of researchers. We recommend a range of strategies at the study design, organisational and global levels to better equip researchers with the tools to manage imposter participants and maintain the integrity of data collected in research.

Introduction

An increasingly common challenge facing researchers is that of ‘imposter participants’ in online studies. Imposter participants falsify an identity or their experiences to meet the eligibility criteria for a study (Ridge et al., 2023; Roehl and Harland, 2022). Several researchers have described their experiences of people falsifying details to participate in online studies (Owens, 2022; Ridge et al., 2023; Roehl and Harland, 2022). This type of participant poses a threat to the integrity of research data. Data integrity is foundational to the relationship between the research community and the public that looks to research for evidence that advances knowledge (National Academy of Sciences et al., 2009). End users or beneficiaries of research (e.g. community, industry, government, practitioners) must have confidence that findings from studies are trustworthy, complete and can be relied upon. However, the use of remote online research methods has introduced a greater likelihood of imposter participants (Owens, 2022; Ridge et al., 2023; Roehl and Harland, 2022).

Ethical considerations for online research methods

Many online platforms, such as the social media sites Facebook and X (formerly Twitter) and crowdsourcing services, were not originally designed for research purposes; the anonymous environment they provide, coupled with incentives for research participation, provides fertile ground for misrepresentation by participants (Hydock, 2018). There are benefits to using online methods to recruit participants, as they enable researchers to reach a wide audience cost-effectively, target specific cohorts according to the study topic, and recruit participants traditionally underrepresented in research (Bennetts et al., 2019; Thornton et al., 2016). Similarly, there are convenience and cost benefits to conducting studies online, including through online surveys for quantitative data or online conferencing software for qualitative interview or focus group data. In situations such as the COVID-19 pandemic, most research across the world had to be conducted online to adhere to physical restrictions; this had the added benefit of allowing research to continue rather than stop completely. There is no evidence that using online methods of data collection adversely impacts data richness or value (Archibald et al., 2019). Yet, as the popularity of using the internet and social media as a research tool has grown, so have the associated ethical issues. A study conducted with Australian researchers and ethics committee members in 2020 revealed there is a need for additional support, training and guidance to increase knowledge and confidence on social media ethics (Hokke et al., 2020). Some ethical guidelines are in place for online recruitment (National Health and Medical Research Council et al., 2023), but these have not kept pace with emerging ethical dilemmas, including the management of fraudulent research participants.

Guidance and codes relating to the integrity of research data have historically focused on the conduct of researchers (National Health and Medical Research Council et al., 2018). However, the shift to using online methods to recruit research participants now requires greater consideration of the trustworthiness of participants. Ethical guidelines in Australia and elsewhere recommend participants are reimbursed for the time and expenses involved in research (National Health and Medical Research Council et al., 2023). Honorariums are expected to be proportionate to the time required for participation so that participants do not feel undue pressure to participate (National Health and Medical Research Council et al., 2023). Yet, research reimbursement payments that are appropriate for participants’ time and expenses in high-income countries like Australia may be considered a significant amount to those whose financial circumstances are dire or those living in low- to middle-income countries. The COVID-19 pandemic accelerated the use of online research methods, the prevalence of imposter participants, and global cost of living pressures and rising poverty levels (Ridge et al., 2023). This may have led to people looking for new ways to supplement their income, and payment for study participation might motivate some to fraudulently participate in online research (Owens, 2022; Ridge et al., 2023).

Imposter participants in online survey studies

Imposter participants in online surveys may be humans or internet ‘bots’, defined as software that can perform automated functions (Bauermeister et al., 2015). Researchers have proposed various methods for preventing, detecting and responding to imposter survey participants (Griffin et al., 2022). Researchers suggest the need for intentional choices at the study design, recruitment and data cleaning stages, with none being sufficient on their own. These include raffle-based rather than individual participant incentives; a pre-eligibility screening survey; software solutions to detect bots or fraudulent participants; the inclusion of open-ended survey questions; and human checks for duplicate, inconsistent or dishonest responses (Griffin et al., 2022; Hydock, 2018; Salinas, 2023; Wang et al., 2023). Online survey studies that have encountered imposter participants have found that multiple strategies are required, whereby each step reduces the number of fraudulent responses, but labour-intensive manual checks are still necessary to be fully confident in the integrity of their data (Griffin et al., 2022; Wang et al., 2023).

The use of large databanks of online participants managed by commercial organisations (panels) is commonplace (Goodman and Paolacci, 2017). Participants complete multiple surveys and are paid for every survey completed, usually at a very low monetary reward per survey. This raises concerns about whether panel participants may lie or exaggerate to meet the criteria for a study or may progress through survey questions quickly with little attention to their answers. Some research has suggested that panel data can be appropriate for research when checks are included to ensure low-quality data is removed (Douglas et al., 2023; Porter et al., 2019). These include checks for attention, meaningful answers, following instructions, remembering previously presented information and working slowly enough to be able to read and respond to questions properly (Douglas et al., 2023). However, studies comparing panel data quality between online research platforms found significant differences in comprehension, attention and honesty depending on the platform used (Douglas et al., 2023; Peer et al., 2022). These mixed findings suggest panel data should be interpreted cautiously.

Imposter participants in online interview studies

Qualitative researchers are increasingly encountering imposter participants in online interview studies, both at the recruitment and interview stages (Owens, 2022; Ridge et al., 2023; Roehl and Harland, 2022). Researchers have documented their experiences and their strategies for preventing imposter participation and inclusion in the final data set (Ridge et al., 2023; Roehl and Harland, 2022). Strategies they have implemented at the recruitment stage include using targeted advertising, snowball sampling and not mentioning research incentive payments in research advertisements. Researchers have also suggested ways to verify the identity of people at the expression of interest stage, including requesting potential participants to email researchers from an ‘official’ email address, requesting they send a screenshot of an identification document, confirming their qualifications, asking them questions about the local area to help check their location and asking participants to turn their camera on for the first part of an online interview (Owens, 2022; Ridge et al., 2023; Roehl and Harland, 2022). Careful judgement is required from researchers so that these strategies do not deter genuine participants (Owens, 2022). At the interview and analysis stage, recommended strategies include taking a ‘cynic’, ‘sceptic’ or ‘seeker’ approach in determining whether participants were genuine (Roehl and Harland, 2022), and using the researcher’s expertise and experience in assessing the ‘plausibility’ of the participant’s narrative (Owens, 2022). However, there is a lack of evidence for what strategies are appropriate and effective in what circumstances, and researchers continue to deploy a range of strategies at an individual study level without clear ethical guidance.

Aim of this case study

In this emerging topic, no studies to our knowledge have examined the issues arising from imposter participant involvement in sensitive research related to experiences of domestic, family and sexual violence (DFSV). There are specific considerations when recruiting and conducting studies with victim-survivors and perpetrators of DFSV. For victim-survivors, privacy protection to avoid perpetrator awareness of involvement in any study is paramount to ensure they are safe from further harm, so identity verification of participants must be enacted safely and securely (Ellsberg et al., 2005). Similarly, perpetrators experience shame and stigma associated with their use of DFSV, thus verification steps may act as a further deterrent to recruitment (Boxall et al., 2023).

In this paper, we discuss four case studies of imposter participants in DFSV research we have undertaken across two institutions. First, we will briefly describe each study, the nature and scope of the imposter participant issues identified and what measures were taken to ensure the integrity of the data. Then we will discuss issues relating to data integrity, ethical dilemmas and researcher impact associated with managing imposter participants from these studies. Finally, we propose some approaches for managing imposter participants and fraudulent data in DFSV research.

Case studies of imposter participants in research

Project 1: Online surveys (2020–2022)

This study explored the support needs of Australian victim-survivors and perpetrators of intimate partner and sexual violence via separate online surveys using the web-based platform Qualtrics (2020). The recruitment strategy included advertising on social media platforms (Facebook, Instagram, X (formerly Twitter), LinkedIn, Gumtree, Locanto), with tailored advertising messages to target participants. In addition, a broad list of organisations that work with potential target participants were contacted to promote the study.

During data collection, we became aware that participants were answering the surveys multiple times on a very large scale within a 24-hour period, despite some standard measures in place through the Qualtrics platform to detect and remove false participants. We suspected fraudulence given the volume of sequential responses a few seconds apart in the early hours of the morning (Australian time). Further investigation confirmed our suspicions through several data checks: a disproportionate number of participants relative to the Australian population stated they were from an Aboriginal or Torres Strait Islander background; many survey responses were left blank; there was a pattern of people who gave the same open-ended responses to the question being asked; and there were multiple inconsistencies across responses. We surmised the incentive for these participants might have been to gain multiple gift vouchers.
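The pattern of sequential responses arriving a few seconds apart can be screened for programmatically. The following is a minimal sketch only, not the procedure used in the study: the function name, the 10-second gap and the minimum run length of five are illustrative assumptions that would need to be tuned to each survey.

```python
from datetime import datetime, timedelta

def find_bursts(timestamps, max_gap_seconds=10, min_run=5):
    """Return runs of at least `min_run` submissions in which each
    submission arrives within `max_gap_seconds` of the previous one.
    Both thresholds are illustrative assumptions."""
    bursts, run = [], []
    for ts in sorted(timestamps):
        if run and ts - run[-1] <= timedelta(seconds=max_gap_seconds):
            run.append(ts)          # still inside the current burst
        else:
            if len(run) >= min_run:
                bursts.append(run)  # close off a burst that was long enough
            run = [ts]              # start a new candidate run
    if len(run) >= min_run:
        bursts.append(run)
    return bursts

# Hypothetical data: six submissions four seconds apart, then two isolated ones.
base = datetime(2021, 3, 1, 3, 0, 0)
submissions = [base + timedelta(seconds=4 * i) for i in range(6)]
submissions += [base + timedelta(hours=5), base + timedelta(hours=9)]
bursts = find_bursts(submissions)
```

A burst identified this way is a prompt for closer manual review rather than proof of fraud, since genuine responses can also cluster shortly after an advertisement is posted.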

Our initial solution was multifactorial: we stopped sending gift vouchers to all participants; manually checked whether data appeared genuine; and sent gift vouchers only to participants who had genuinely completed the survey. As the fraudulent responses continued, we then amended our ethics application to cease giving gift vouchers, and instead offered participants the option to enter a draw to win an iPad. However, we continued to receive fraudulent responses. We then implemented significant changes in terms of how we engaged and screened participants. We no longer linked participants directly to the full survey through social media. Instead, from the landing page on our research centre’s website we directed them to a brief survey of eight screening questions that included demographic questions, questions about experiences of DFSV, a simple arithmetic question and contact details. Upon completion of these screening questions, we sent eligible participants an email with a unique link to the full survey that only worked once. We enabled additional fraud detection fields in Qualtrics for the full survey, including bot detection and other security features, to filter the data.
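The idea of a unique link that only works once can be illustrated with a short sketch. This is not how the survey platform implements the feature, which in practice would be provided by the survey software itself; the class name, the URL and the participant identifier below are hypothetical.

```python
import secrets

class SingleUseLinks:
    """Issue unguessable survey links that can each be redeemed once.
    Illustrative sketch; a real study would rely on the survey
    platform's own individual-link feature."""

    def __init__(self, base_url):
        self.base_url = base_url
        self._unused = {}  # token -> participant id, removed on first use

    def issue(self, participant_id):
        token = secrets.token_urlsafe(16)  # random, URL-safe token
        self._unused[token] = participant_id
        return f"{self.base_url}?token={token}"

    def redeem(self, token):
        """Return the participant id on first use, None afterwards."""
        return self._unused.pop(token, None)

links = SingleUseLinks("https://survey.example.org/full")  # hypothetical URL
url = links.issue("P001")
token = url.split("token=")[1]
first_attempt = links.redeem(token)   # succeeds
second_attempt = links.redeem(token)  # rejected: link already used
```

Because each token is tied to one screened participant, a link that is shared or replayed cannot generate additional survey entries.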

The survey completion rate with genuine responses progressed slowly and it was particularly challenging to recruit people who use DFSV. Therefore, we decided to use panel participants for recruitment, implementing similar measures as described above for the panel participants. Despite these measures, some fraudulent responses were still received, albeit at a lower rate. We dedicated a significant amount of time to examining data for possible non-genuine responses, and removed unreliable data based on criteria that we had established. Strategies included:

- examining metadata from Qualtrics survey responses for patterns or indications of fraudulent activities (e.g. survey start and end dates, duration, geographic location (longitude and latitude), repeat IP addresses).

- checking for clearly conflicting and implausible responses, and cross-referencing responses against others provided (e.g. a participant who selected heterosexual orientation but said they had a same-sex partner).

- observing for suspicious patterns across the dataset (e.g. vague and sometimes meaningless phrases repeated across multiple survey respondents, specific or similar patterns of email addresses and names across respondents).

- assessing the quality of qualitative or open-ended responses.
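Some of the checks listed above lend themselves to a first automated pass before manual review. The sketch below is illustrative only: the field names (`ip`, `duration_seconds`, `open_text`) are hypothetical rather than actual Qualtrics export columns, and the 120-second threshold is an assumption. It flags responses for human inspection and does not exclude anything automatically.

```python
from collections import Counter

def normalise(text):
    """Lower-case and collapse whitespace so near-identical answers match."""
    return " ".join(text.lower().split())

def flag_responses(rows, min_seconds=120):
    """Map response id -> list of reasons it warrants manual review.
    Field names and the duration threshold are illustrative assumptions."""
    ip_counts = Counter(r["ip"] for r in rows)
    text_counts = Counter(
        normalise(r["open_text"]) for r in rows if r["open_text"].strip()
    )
    flagged = {}
    for r in rows:
        reasons = []
        if ip_counts[r["ip"]] > 1:
            reasons.append("repeat IP address")
        if r["duration_seconds"] < min_seconds:
            reasons.append("implausibly short duration")
        text = normalise(r["open_text"])
        if not text:
            reasons.append("blank open-ended response")
        elif text_counts[text] > 1:
            reasons.append("open-ended response duplicated")
        if reasons:
            flagged[r["id"]] = reasons
    return flagged

# Hypothetical responses using documentation-reserved IP addresses.
rows = [
    {"id": "R1", "ip": "203.0.113.5", "duration_seconds": 45,
     "open_text": "Good support is important"},
    {"id": "R2", "ip": "203.0.113.5", "duration_seconds": 50,
     "open_text": "good support  is important "},
    {"id": "R3", "ip": "198.51.100.7", "duration_seconds": 900,
     "open_text": "I needed help finding a counsellor who understood."},
    {"id": "R4", "ip": "192.0.2.44", "duration_seconds": 600,
     "open_text": ""},
]
flagged = flag_responses(rows)
```

A shared IP address or a blank answer can have innocent explanations, such as a shared household network or a participant choosing not to answer, so flags of this kind support rather than replace the manual judgement described above.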

Using these methods, we felt confident that the final data set contained genuine participants.

Project 2: Randomised controlled trial (2022–2024)

We conducted a trial exploring how to assist men to seek help for abusive behaviour in their intimate relationships. The target population was men 18–50 years old living in Australia who had experienced relationship issues related to their behaviour in the previous 12 months. Participants were expected to complete a series of three online surveys, receiving gift vouchers of $30 at baseline, $40 at 3 months and $50 at 6 months. Our recruitment strategies were similar to those we employed in Project 1; however, because men who use DFSV are difficult to recruit, we took additional steps to promote the study through television and radio and a broad range of industries.

Having learned from our earlier experiences, we implemented verification processes from the outset. These required respondents to answer REDCap CAPTCHA questions and to provide an email address and a verifiable mobile phone number. We then sent a security code to the phone number to allow access to the first survey. Nevertheless, we detected fraudulent responses in the dataset as potential participants tried to enter the study multiple times with different email addresses. Consequently, we adjusted the recruitment process to include a more rigorous verification process. Changes included requesting respondents’ full name and residential address (excluding the house/unit number) only for the purpose of verifying those details against the publicly available Australian Electoral Roll, and asking them to use an institutional email address where possible. We also undertook over-the-phone verification and online checks for social media accounts. In REDCap, we collated all new responses under the label ‘participants with pending verification’ and respondents were able to progress to the full survey only after they had been manually checked and marked as verified. The new verification protocol worked well in deterring non-genuine participants. However, it significantly reduced uptake, possibly due to people’s concerns about providing personal details for verification purposes.

Project 3: Qualitative studies (2022–2023)

One interview study explored women’s experiences of psychological violence and their preferences for health support; the second interview study examined the shaming tactics partners used in the context of an abusive relationship. Both studies used social media to recruit participants through Facebook, LinkedIn and X (formerly Twitter). For both studies, we provided AU$40 gift vouchers as a token of appreciation for participants’ time (45 minutes–1 hour); however, the ‘shame’ study did not mention this in the advertisement. We directed interested participants to a Qualtrics expression of interest (EOI) form containing some simple eligibility questions and space to provide confidential contact details for follow-up (i.e. a safe email address that no one else had access to). Both forms contained a CAPTCHA verification question as a way of confirming respondents were human.

Within a few days of advertising, we received 242 EOIs for the psychological violence study, and 131 EOIs for the ‘shame’ study. We examined the data closely and determined most (80%–90%) were imposter participants because they used nonsensical names (e.g. ‘Stoyqgpr Martyvb’) and phone numbers that were not consistent with an Australian format. We removed data that were easily identifiable as fraudulent; however, for the ‘shame’ study, we remained unsure about several possible participants. There was a clear pattern across these participants: the use of old-fashioned female first and last names, and ‘Gmail’ addresses that exactly matched these names. We initially sent information about the study and a consent form to five of these potential participants, who all returned the consent form and requested a video interview. Their email responses were perfunctory and demonstrated a formal but limited mastery of the English language. We continued to feel uneasy about these participants; however, it was difficult to clearly identify specific reasons for these feelings of unease, thus we proceeded to arrange the interviews.
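Checking whether a phone number is consistent with an Australian format can be partly automated. The sketch below is an assumption-laden illustration rather than the check used in these studies: it accepts only standard ten-digit mobile (04) and geographic (02, 03, 07, 08) numbers, with or without the +61 country code, and ignores special-purpose ranges such as 13, 1300 and 1800 numbers.

```python
import re

# Either "+61" or a leading "0", then an area or mobile digit, then 8 digits.
_AU_PHONE = re.compile(r"^(?:\+61|0)[23478]\d{8}$")

def looks_australian(phone):
    """Return True if `phone` has the shape of a standard Australian
    mobile or landline number. Format check only (illustrative)."""
    cleaned = re.sub(r"[\s\-()]", "", phone)  # drop spaces, hyphens, brackets
    return bool(_AU_PHONE.match(cleaned))

checks = {
    "0412 345 678": looks_australian("0412 345 678"),
    "+61 412 345 678": looks_australian("+61 412 345 678"),
    "(02) 9123 4567": looks_australian("(02) 9123 4567"),
    "+1 202 555 0143": looks_australian("+1 202 555 0143"),
}
```

A check of this kind confirms only that a number has an Australian shape; it cannot establish that the number is in service or belongs to the respondent, so it complements rather than replaces the other screening steps.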

We held video interviews with these five participants. All but one elected to leave their camera off, the lighting and audio were poor and they had limited English. The descriptions of their experiences of abuse were vague and contained little depth (e.g. one participant said their partner ‘body-shamed’ them while another said their partner was ‘verbally disrespectful’, but neither was able to provide specific examples). At the end of the interviews, we asked demographic questions, and the answers contradicted the above: all said they spoke English as their first language and two said they were Aboriginal or Torres Strait Islander, which was implausible in the context of other details provided by these participants. Following a review by the research team, we decided to exclude these interviews from our analysis due to uncertainty about their authenticity. We then advised future participants who we suspected were imposters that we wished to conduct a short pre-screening telephone interview to further assess their eligibility for the study. These participants did not respond to the email.

The researchers felt conflicted and unsettled when conducting these interviews. For example, when participants described abuse, the interviewers responded with empathy despite feeling uncertain of the participant’s authenticity. The interviewers struggled with feelings of anger and frustration after realising that they needed to approach subsequent interviews with suspicion in case the participant was an imposter, which impacted their ability to empathically engage with genuine participants.

Project 4: Qualitative study (2023)

This project was an interview study conducted by a team at a separate university to map the victim-survivor journey of accessing family violence services in rural and remote locations. During the recruitment phase, posters were displayed at local family violence services, through which seven participants were recruited. The study was also promoted on one local Facebook page of a rural community group; the post advertised an AU$100 payment for participant time (1–2 hours). Following this, 15 participants contacted us within 2 days through a project email address. This appeared to be highly unusual as researchers in this field know the challenges associated with recruitment of victim-survivors, especially in a rural location. The email addresses were similar to those described in Project 3, with ‘old-fashioned’ English first names and surnames with a corresponding Gmail address.

To give participants the benefit of the doubt, especially in this field of research where we attempt not to doubt the veracity of a victim-survivor’s story/experience, we proceeded to organise online interviews with the first 10 participants. Confirmation came swiftly with no further questions asked about the nature of the study. They also agreed to any interview time allocated by the researcher. The first four participants were given the option of not turning on their camera. In response to the cultural identity questions, almost all of them identified as belonging to the Aboriginal or Torres Strait Islander community. This was unusual because it overrepresented the proportion of First Nations people in those areas. Participants described similar countries of origin (such as Canada), causing some doubt because we were not certain why so many Indigenous people had migrated from Canada and we had previously not come across such a demographic.

The researcher asked further general probing questions, such as about the weather, to which participants gave answers that were very different from the actual weather in that location. For subsequent participants (three more), additional verification steps were taken during the interview: for example, the researcher asked them to turn on their video camera at least for the first few minutes to introduce themselves, and also asked them for their postcode and to provide local information such as the name of their nearest hospital. It was clear that participants were in a different time zone and were unable to provide the local information requested. During one interview, the participant looked up at someone standing next to them and sought the answer from them. A male responded to them, and his voice was also captured on the audio recording. Participants appeared fearful when asked simple questions such as whether they were feeling cold in their light attire (due to it being winter in Australia).

Following such interview experiences, the research team contacted family violence services in the rural and remote locations and asked them about the authenticity of some of the details the participants had described, without identifying any participant. The services indicated that those experiences were highly unusual in their region. To preserve the integrity of our data, we decided to exclude all data collected after the promotion of the study on the community Facebook page. We also cancelled all other interviews scheduled with participants who had provided ‘suspicious’ Gmail addresses. We continued our recruitment of participants using other methods and avoided promotion on any Facebook pages.

Reporting to ethics committee

The researchers informed the institutional ethics committees of what had ensued during the course of these four studies. This served the dual purpose of keeping the committees informed and alerting other university researchers to the need for precautions when undertaking such research. The ethics committees advised that they had not previously encountered such a situation, and we had to institute a range of procedures, including omitting the payment amount from posters and altering recruitment processes, to ensure that the integrity of our data and project findings was maintained.

In summary, as DFSV researchers from two institutions, we have encountered imposter participants in several studies of different designs in recent years and we have employed a range of strategies to safeguard the integrity of data and study outcomes.

Discussion

This report of multi-institutional case studies provides four examples of challenges presented by imposter participants when conducting studies on DFSV. Our experiences suggest fraudulent research participation is widespread and requires consideration at the planning, ethics approval, recruitment and data cleaning/analysis stages to verify the authenticity of participants. We are confident that the range of technical, ethical and manual strategies we implemented dissuaded people from continuing to attempt to participate fraudulently, ensured that only genuine participants are represented in our studies, and protected the integrity of the research findings. However, these efforts significantly impacted recruitment, particularly in relation to perpetrators of DFSV. Many of our experiences with imposter participants are consistent with those from other studies (Bauermeister et al., 2015; Ridge et al., 2023; Roehl and Harland, 2022). Thus, we will only focus in more detail on considerations specific to DFSV research.

Managing imposter participants in research on DFSV

Researchers conducting studies on experiences of DFSV commonly take a trauma-, violence- and culturally-informed approach that seeks to mitigate the risks of victim-survivors being re-traumatised or distressed by their participation (Alessi and Kahn, 2023). In such research, verifying the identity of participants requires careful consideration. For legal or safety purposes, victim-survivors of DFSV may need to take extra steps to conceal their identity, thus making some of the recommended verification processes unworkable. For example, researchers who have undertaken qualitative studies about the experiences of health professionals have identified imposter participants, and have recommended asking participants (e.g. those purporting to be health professionals) to verify their identity by sending an email from a work email address, a link to a work website or a screenshot of a work identity card (Roehl and Harland, 2022). However, in our research, consistent with best practice guidelines for ethical research on DFSV, we encourage victim-survivors to create a safe email address that no one else has access to or could easily guess (Women’s Aid, 2020). Further, automatic location verification through some of the available technology is not ethically appropriate, given the need to safeguard the privacy and location of the victim-survivor participant (Salinas, 2023). There are also challenges in the use of additional steps to verify identity in our perpetrator study. Understandably, despite assurances of confidentiality and adherence to ethical standards, genuine participants may still be discouraged from engaging in such research. Therefore, balancing the application of validation strategies when recruiting from the wider community with potential participants’ concerns or fears remains a challenge that needs to be carefully navigated.

A strategy we found useful to deter imposter participation was taking the extra step of advising suspected imposter participants that we would be conducting a pre-screening telephone call (which may rule out overseas imposter participants, if overseas participants are not the focus of a study). Yet the strategy is not without risks, given that many victim-survivors have experienced disbelief, mistrust and minimisation of their experiences of DFSV from family, friends and services. Adding an extra layer of screening may be a deterrent for victim-survivors who wish to participate in research, so it needs to be phrased and handled sensitively so as not to convey any suggestion of disbelief of their experiences.

In considering how to prevent imposter participation, we discussed whether to continue reimbursing participants for their time and/or whether to promote the payments on project advertisements. Reimbursing participants for their time is consistent with national guidelines (National Academy of Sciences et al., 2009; National Health and Medical Research Council et al., 2023), and our payment amounts are in line with government guidelines for remunerating victim-survivors of family violence (Victorian Government, 2023). Further, one research team’s work is informed by a group of women with lived experience of DFSV. This lived experience group are firmly of the view that study participants should be remunerated, and that transparency should underpin all stages of a study, which means including remuneration details in study advertisements. However, previous research has found that paying for participation in research can be associated with deception by participants (Lynch et al., 2019; Surmiak, 2020), as we noted in our studies, and one ethics committee recommended the discontinuation of advertising remuneration. We continue to discuss how to balance fair payment and transparency with the need to minimise the inclusion of imposter participants.

Engaging in violence-related qualitative research requires significant ‘emotional labour’ on the part of the interviewer; it involves engaging authentically as they support the participant to share often distressing experiences (Moran and Asquith, 2020). In this process, researchers seek to minimise their own risk of secondary or vicarious trauma (Dickson-Swift, 2022). Imposter participants create unanticipated challenges for DFSV researchers. Researchers need the opportunity to discuss how to manage incongruent approaches when studies have suspected imposter participants, including how to balance the need to approach interviews with scepticism (Roehl and Harland, 2022), while also being prepared to offer empathy and sensitivity to participants. Researchers require support and an opportunity to safely reflect on the thoughts and feelings that arise during the research process (Clarke and Braun, 2021).

Another challenge for our research teams was whether we had any ‘duty of care’ requirement for the participants we suspected were imposters (Owens, 2022). We speculated about the motives and identities of the imposter participants and our consensus was that they may have been students or were not based in Australia and were participating for the financial incentive, which was a relatively small amount; they could have also been forced to take part in such research as some of their experiences were congruent with other ‘valid’ victim-survivor participants. We were never 100% sure that they were not genuine participants, leading to an uncomfortable feeling that we may be harming the participants by taking a sceptical approach during pre-screening or during the interview, or in not offering the degree of empathy that we would normally offer. Some of the questions we asked ourselves were: Are these vulnerable participants? Are they being coerced into participating by others? How organised are the people targeting research studies for financial gain? Do we have a responsibility to find out? Whose responsibility is it? Do we have a duty of care to provide participants with adequate information about resources that could support them? These questions raised uncomfortable concerns; our focus as researchers was to investigate the impacts and harms of violence, and it was alarming to think our research may be hijacked by organised criminal groups, knowing that many criminal groups are involved in trafficking vulnerable people into internet fraud (Jesperson et al., 2023).

General strategies for avoidance and detection of imposter participants

To minimise the risk of fraudulent respondents, we suggest researchers plan their recruitment approach carefully and, if using social media, consider advertising only in ‘closed’ online groups focused on a particular topic (where members are usually verified and content is only visible to those within the group), or on the pages of organisations likely to have access to eligible participants. The downside of these approaches is that they narrow the range and diversity of genuine participants. Our experiences suggest it is becoming increasingly difficult to use pre-screening to filter out imposter participants. Technical solutions for screening out international respondents are available for survey software (Burleigh et al., 2018); however, these have not made it into routine study design and may require expertise beyond researchers’ capabilities. The engagement of information technology departments to implement university-wide solutions is required so that each study team is not tackling this problem on its own. The final step of verification is that of ‘plausibility’ (Owens, 2022). With survey data, researchers need to apply manual checks over and above built-in ‘checks’, such as checking consistency across responses and reviewing responses to open-ended questions. Our experiences suggest these manual validation techniques should be applied regardless of the source of recruitment (panel or in-house). For interview studies, researchers must use their expertise and judgement in assessing whether a participant is genuine based on their communications prior to interview, the participant’s narrative and the accuracy of the demographic details they provide, while acknowledging that not all interviews will provide rich in-depth data. For participants from different countries, or when participants speak different languages, further culturally appropriate checks need to be built in to confirm the cultural identity and location of the participant.
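As an illustration only, the manual checks on survey data described above (consistency across responses and review of open-ended answers) could be partly supported by a simple script that flags responses for closer human review. The following is a minimal sketch and not the procedure used in our studies: the field names (`age`, `birth_year`, `duration_seconds`, `open_text`), the function `flag_responses` and the thresholds are all hypothetical assumptions, and would need to be adapted to the survey instrument and export format in use.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class SurveyResponse:
    # All field names are hypothetical; map them to your own survey export.
    respondent_id: str
    age: int                 # self-reported age in years
    birth_year: int          # self-reported year of birth
    duration_seconds: float  # time taken to complete the survey
    open_text: str           # answer to one open-ended question


def normalise(text: str) -> str:
    """Lower-case and collapse whitespace so near-identical answers match."""
    return " ".join(text.lower().split())


def flag_responses(responses, survey_year, min_duration=120.0, min_words=3):
    """Return {respondent_id: [reasons]} for responses needing manual review.

    The thresholds are illustrative assumptions, not validated cut-offs.
    """
    text_counts = Counter(normalise(r.open_text) for r in responses)
    flagged = {}
    for r in responses:
        reasons = []
        # Consistency check: reported age should agree with year of birth
        # (a one-year tolerance allows for birthdays not yet reached).
        if abs((survey_year - r.birth_year) - r.age) > 1:
            reasons.append("age_birth_year_mismatch")
        # Implausibly fast completion.
        if r.duration_seconds < min_duration:
            reasons.append("completed_too_quickly")
        # Open-ended answer is very short, or repeated by another respondent.
        text = normalise(r.open_text)
        if len(text.split()) < min_words:
            reasons.append("open_text_too_short")
        elif text_counts[text] > 1:
            reasons.append("duplicate_open_text")
        if reasons:
            flagged[r.respondent_id] = reasons
    return flagged
```

A flag produced by a script of this kind is a prompt for a researcher to read the full response, not a reason for automatic exclusion; genuine participants may also give brief answers or complete a survey quickly.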

More broadly, ethics committees should be alert to the issue of imposter participants. Ethics applications should be structured to include a protocol for verifying the authenticity of participants and managing imposter participants, particularly for studies where recruitment will be via social media or panels managed by commercial companies. This requires university investment in training and education for ethics committee members, as well as researchers (Hokke et al., 2020). There could also be random audits of studies to check the veracity of methodological procedures and any adverse outcomes. Policies at a national level (such as national codes for responsible research conduct) should also be updated to reflect issues and concerns related to imposter participants and provide guidance for risk mitigation. Currently in research policies and codes, data integrity guidance has focused on the conduct of researchers and not of participants (National Academy of Sciences et al., 2009; National Health and Medical Research Council et al., 2018). Guidelines need to be expanded to include the proactive management of nefarious research participants to safeguard the integrity of research data. This will help to maintain public trust in research findings and protect researchers from the potential emotional impacts of engagement with imposter participants. The onus would still be on researchers to demonstrate that their research is trustworthy, complete and can be relied upon.

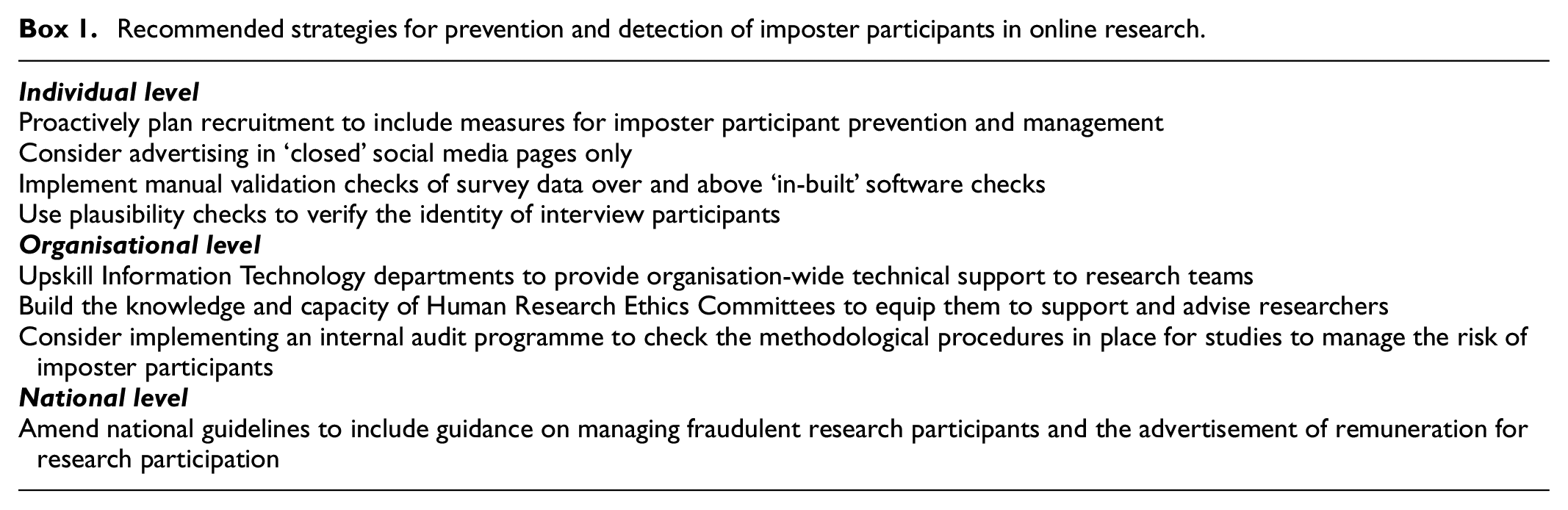

We summarise our recommended strategies in Box 1.

Box 1. Recommended strategies for prevention and detection of imposter participants in online research.

Conclusion

Imposter participants raise ethical issues in research and require careful attention to ensure final data sets only include genuine participants, safeguarding the integrity of research findings and implications. This has significant resource implications for research teams and can affect study recruitment and timelines for DFSV research. Additionally, their inclusion in trauma-informed qualitative studies may have emotional or psychological consequences for researchers. Guidelines and strategies at the individual, organisational and national level are required to prevent their inclusion in research, and to help researchers plan and proactively respond to their attempts to fraudulently participate for financial gain or other unknown reasons. Such steps will ensure greater data integrity and increased public confidence in research findings.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Ethical considerations

Ethical approval was given for the four studies discussed in this article. Three were approved by the University of Melbourne; one by Deakin University.

Consent to participate

Participants gave written consent (interviews) or electronic consent (surveys) to participate in the studies discussed in this article.

Data availability

Data sharing not applicable to this article as no datasets were generated or analysed during the current study.