Abstract

Contact information often needs to be collected from survey participants so that they can be re-contacted and invited to follow-up surveys. This article reports findings from two experiments on collecting respondents’ email addresses and sending invitations to a second survey. In the email collection experiment, more respondents provided their emails when the request stated that only one follow-up survey would be sent than when it emphasized the research purpose of the follow-up survey. However, among respondents who provided their emails, follow-up survey participation rates were similar regardless of the wording of the request. The invitation email subject line experiment shows that a generic request for opinions reduces follow-up survey participation compared with subject lines emphasizing the survey sponsor or the specialized nature of the survey.

Keywords

Introduction

There are many occasions when contact information needs to be collected from survey participants. Longitudinal studies, panel studies, and mixed-mode surveys often rely on the ability to re-contact initial participants at later dates or through different modes of contact. Pollsters, journalists, and market researchers may want to follow up individually with some respondents to get additional information or quotes that could not be obtained through a standard survey. Finally, modular surveys reduce respondent burden and improve response rates by breaking a long survey into multiple modules which are administered one at a time (Johnson et al., 2012; Kelly et al., 2013; Smith et al., 2012; West et al., 2015). In all of these examples, respondents’ email addresses, telephone numbers, or some other form of contact information must be collected in an initial survey.

For probability-based samples such as address-based sampling and random digit dial, contact information (such as address and telephone number) is acquired prior to the first contact, and therefore collecting such information in the first interview is often unnecessary. However, the expansion of non-probability sampling has posed a challenge to the survey research field. In recent years, an increasing amount of research effort has been spent comparing and examining probability versus non-probability surveys (see, for example, Chang and Krosnick, 2009; Malhotra and Krosnick, 2007; Pasek, 2016). Although probability-based surveys have their advantages, conducting a probability-based web survey can be quite challenging in practice; hence, most web surveys, especially market research surveys, are non-probability-based (Pasek et al., 2014).

Two common sample sources for non-probability web surveys are online access panels and river (intercept) sampling (Liu, 2016). Online panels recruit respondents by collecting their contact information—typically email addresses—and profile data. Panelists can then be re-contacted with future survey requests and targeted based on their profile information. In this case, longitudinal or modular surveys can lend themselves well to panels, because panelists have expressed an interest in taking surveys and because their contact information has already been collected. River sampling, however, is used to survey the respondents only once without attempting to recruit them into a panel. Given that, it is likely to be more difficult to conduct modular surveys among river samples. Respondent-driven sampling has also been tested as an alternative for recruiting panel members (Schonlau et al., 2014).

The focus of this study is on web surveys, and I present findings from two experiments regarding how best to collect emails from respondents and invite them to take a follow-up survey using a river sample.

How to collect the email and complete a follow-up interview?

Modular surveys face two challenges: (1) how to collect respondents’ emails for the follow-up survey and (2) how to invite respondents to take the follow-up survey. I conducted two experiments to test several ways of requesting emails and inviting respondents to take the follow-up survey. Previous research has shown that requesting an email address for a follow-up survey is similar to inviting a respondent to participate in a survey, in that many of the factors involved in collecting re-contact information are also related to survey nonresponse. The email request experiment tested three factors. The first is the number of follow-up surveys. Compared to recruiting survey participants into an online panel, for which the anticipated number of future surveys is unspecified, or into several future surveys, requesting email addresses for only one more survey is a much smaller request. Therefore, emphasizing this factor may lead more respondents to provide their email addresses. The second factor is the stated purpose of the follow-up survey. Previous studies have shown that different survey sponsors have different impacts on survey participation (Duncan, 1979; Groves et al., 2004; Groves and Peytcheva, 2008; Presser et al., 1992), and that government- and university-sponsored surveys tend to achieve higher response rates than those of for-profit organizations. Respondents are thought to infer the purpose of the surveys, and they are more likely to cooperate with research-related surveys than with commercial surveys. In this experiment, although the study was conducted by a for-profit company, the study itself is for research rather than commercial purposes. Making that explicit may increase the willingness of participants to provide their email addresses. The third factor is the topic of the follow-up survey. The experiment was embedded in a politics-related survey about the 2016 US presidential election in late 2015. Given that the presidential election is the highest-profile election in the United States, I expected that mentioning this topic would motivate participants to provide their email for the follow-up survey.

The issue of email subject lines for web survey invitations has also received a good amount of research attention. Several factors, such as personalization (Heerwegh, 2005; Porter and Whitcomb, 2003), the sender’s profile (Kaplowitz et al., 2012; Keusch, 2012), and the survey sponsor (Porter and Whitcomb, 2005) have all been examined for improving survey participation. In this study, I tested three variations of email subject lines for the follow-up survey invites. First, I tested the reason for email contact and sponsor. Similar to the Porter and Whitcomb (2005) study, one condition in the experiment clearly announced the purpose of the email (“SurveyMonkey research survey”). Since respondents had provided their email addresses for a research survey from the same organization, mentioning the organization’s name and the purpose (i.e. survey) may help the respondents connect the dots and improve their participation rate. Second, I looked at the selectivity of the opportunity. Porter and Whitcomb (2003) found improved email click rates and response rates when the selectivity of participation and deadline of response were mentioned in the email invite. They posit that selectivity increases the sense of scarcity of the opportunity and hence can elicit more survey participation. Third, I tested a clear request for opinions. Previous research tested different approaches to requesting opinion in survey invites (Trouteaud, 2004). This study also tested a similar subject line. However, instead of pleading for opinions or offering an opportunity for expressing opinions, the subject line in this experiment emphasized empowerment (“Your opinion counts!”).

Experiment 1

Data and experiment

Data

Experiment 1 was conducted using SurveyMonkey, an online survey platform. More than 3 million people in the United States take user-generated surveys (created by people with SurveyMonkey accounts) on SurveyMonkey every day. After each completed survey, the SurveyMonkey platform displays a thank-you page to respondents. This experiment took a random subset of the thank-you page traffic and displayed a survey invitation page. Respondents who chose to take this survey clicked on the page and were redirected into the survey. There was no control over who saw the page, which survey the respondents had taken previously, or who chose to click on the invite and take the survey. The sample is therefore, in essence, non-probability-based.

In total, 6654 respondents completed the survey. Due to a programming error, the treatment conditions for the first 810 respondents who completed the survey were not recorded, and these respondents were removed from the analysis. As a result, the effective sample size for this experiment was 5844. The survey was conducted between 30 September and 7 October 2015. The survey contained 30 questions, most about media usage. At the end of the survey, respondents were asked to provide their email addresses so that they could be contacted for future surveys. The respondent demographics are in Appendix 2.

Design of experiment

There were five experimental conditions for collecting email addresses from respondents who completed the first survey, and respondents were randomly assigned to one of the five conditions. The exact question wordings are as follows:

Condition 1 (Control): Thank you for participating in this survey. We would like to send you another short survey via email within the next week. If you’d like to participate, please provide us with your email in the box below. We will never give away your email to anyone else or use it for commercial purposes.

Condition 2 (Research purpose): Thanks for participating in this survey. We would like to send you another short survey via email within the next week. If you’d like to participate, please provide us with your email in the box below. Your email address will be used for survey research purposes only. We will never give away your email to anyone else and or use it for commercial purposes.

Condition 3 (Only one more re-contact): Thanks for participating in this survey. We would like to send you just one more short survey via email within the next week. If you’d like to participate, please provide us with your email in the box below. We will only contact you one more time for another survey. We will never give away your email to anyone else and or use it for commercial purposes.

Condition 4 (For election): Thanks for participating in this survey. We would like to send you another short survey about the 2016 election via email within the next week. If you’d like to participate, please provide us with your email in the box below. We will never give away your email to anyone else and or use it for commercial purposes.

Condition 5 (All inclusive): Thanks for participating in this survey. We would like to send you just one more short survey about the 2016 election via email within the next week. If you’d like to participate, please provide us with your email in the box below. Your email will be used for survey research purpose and you’ll only be contacted one more time for another survey. We will never give away your email to anyone else and or use it for commercial purposes.

Condition 1 was the control group. Condition 2 emphasized the research purpose of the study. Condition 3 emphasized that only one follow-up survey would be attempted. This is a clear distinction from recruiting participants into online panels, where the number of subsequent surveys is typically not specified. Condition 4 specifically announced the purpose of the subsequent survey to be about the US presidential election, since some of the questions in the follow-up survey are politics-related. Finally, Condition 5 contained all the elements of the previous conditions. A follow-up survey invite was sent out to the participants the day after they provided their email addresses. The second survey contained 12 questions, most of them about politics and elections.
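As an illustration only, the random assignment described above could be sketched as follows. The article does not describe how assignment was implemented on the platform, so the function name, the seed, and the use of simple (unblocked) randomization are assumptions. Simple randomization produces slightly unequal group sizes, which is at least consistent with the unequal condition totals reported in Appendix 2.

```python
import random
from collections import Counter

# Condition labels follow the text; everything else here is a hypothetical sketch.
CONDITIONS = {
    1: "Control",
    2: "Research purpose",
    3: "Only one more re-contact",
    4: "For election",
    5: "All inclusive",
}


def assign_condition(rng: random.Random) -> int:
    """Pick one of the five conditions with equal probability."""
    return rng.choice(list(CONDITIONS))


# A fixed seed is used only so that the sketch is reproducible.
rng = random.Random(2015)

# 5844 is the effective sample size of experiment 1 reported in the text.
assignments = [assign_condition(rng) for _ in range(5844)]
counts = Counter(assignments)

for condition in sorted(counts):
    print(condition, CONDITIONS[condition], counts[condition])
```

Each respondent is assigned independently, so no condition is guaranteed an exact one-fifth share of the sample.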

Results

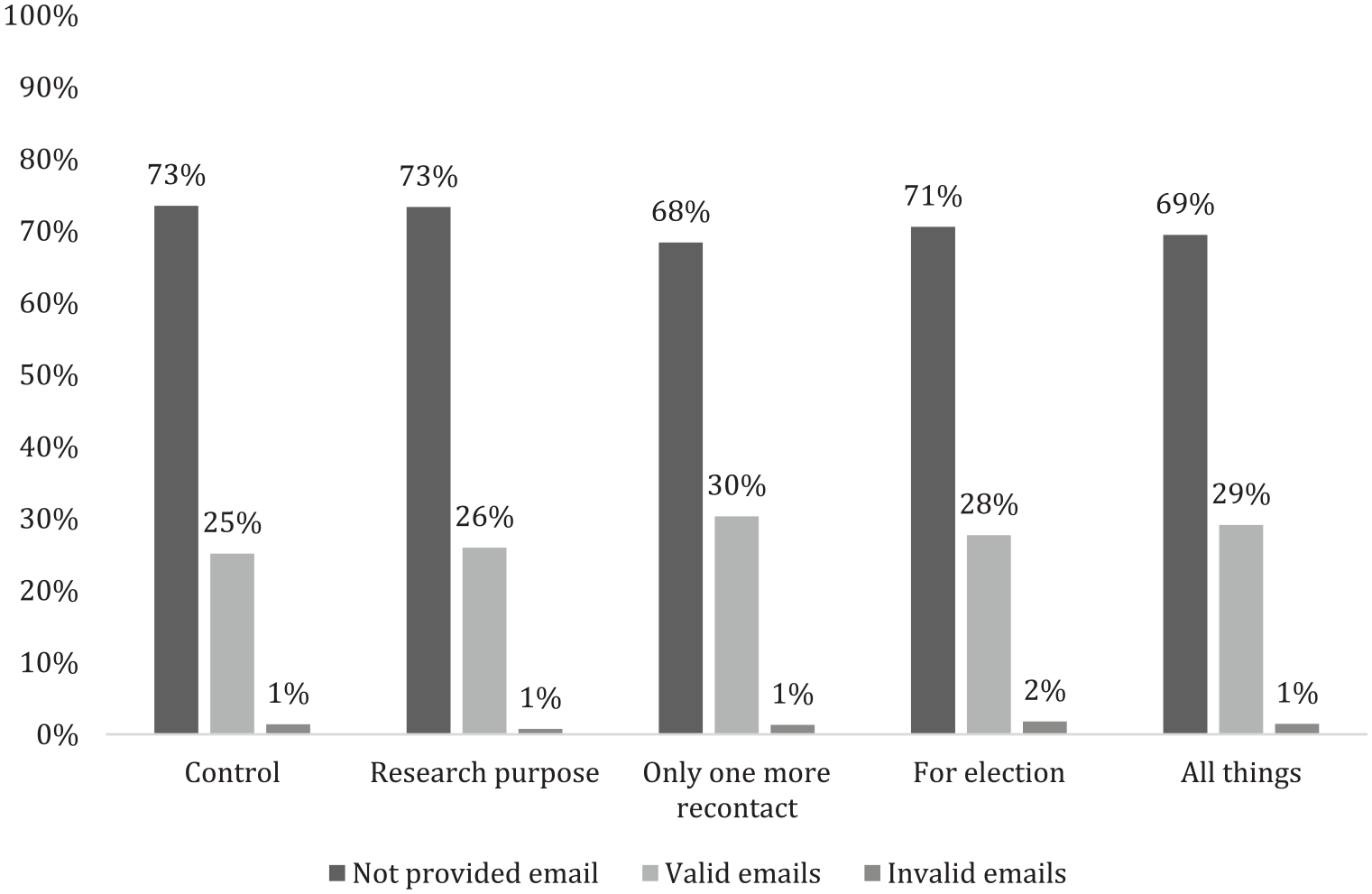

To evaluate the effectiveness of each condition, I first examined the responses to the email request in each treatment group. For each condition, I computed the percentages of respondents who provided valid email addresses, who did not provide email addresses, and who provided invalid email addresses. Invalid email addresses include those that bounced back or were otherwise undeliverable. Figure 1 shows that in all conditions, around 70% of the respondents did not provide their email address in answer to the request. The percentages of respondents not providing an email address were slightly lower in Condition 3, where only one more survey was emphasized, and Condition 5, where all elements were included in the text; slightly more respondents provided valid email addresses in these two conditions. The percentages of invalid email addresses are similar across all conditions. The differences across five conditions are all significant (

Figure 1. Response distributions to the email request by experimental conditions, experiment 1.

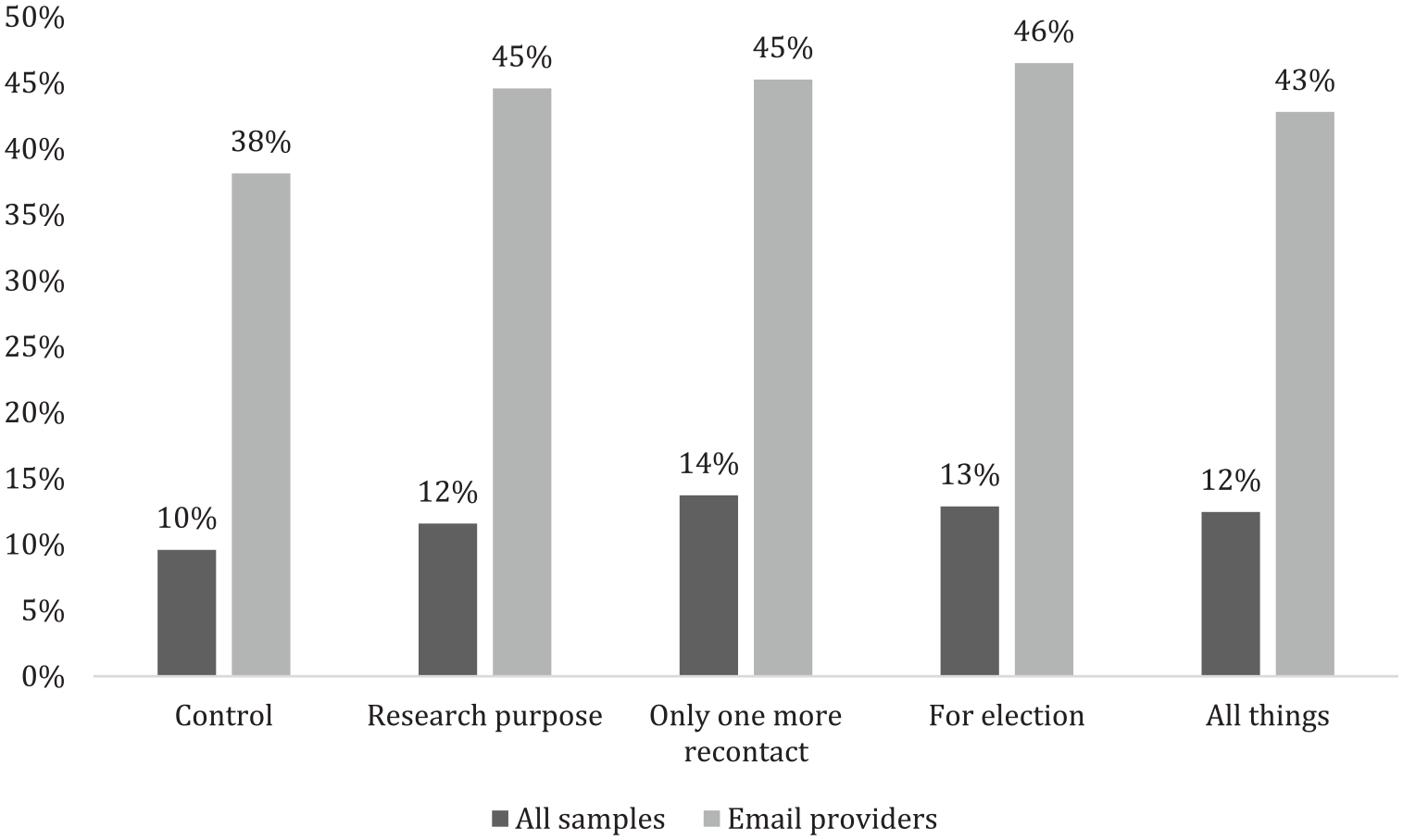

The day after the email address was collected in the first survey, a follow-up survey request was sent to the respondents. The completion rate for the follow-up survey is presented in Figure 2. The dark bars show the overall cumulative completion rate for the full sample, that is, the completion rate of the follow-up survey based on all respondents to the first survey, regardless of whether an email address was provided. Condition 3 (only one further contact) achieved the highest completion rate (14%), while Condition 1 (control) had the lowest (10%). The other three conditions had similar completion rates. The difference between Conditions 3 and 1 is significant (

Figure 2. Percentages of completed follow-up surveys by experimental conditions, experiment 1.
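The two completion rates discussed in this article differ only in their denominator. The sketch below makes that distinction explicit. The function and parameter names are illustrative, the counts are hypothetical and not taken from the study, and it assumes that the rate "among email providers" is computed over respondents who supplied an email address.

```python
def completion_rates(n_first_survey: int, n_email_providers: int,
                     n_followup_completes: int) -> tuple[float, float]:
    """Return (cumulative rate, rate among email providers) as proportions.

    cumulative      = follow-up completes / all first-survey respondents
    among providers = follow-up completes / respondents who gave an email
    """
    if n_first_survey <= 0:
        raise ValueError("n_first_survey must be positive")
    cumulative = n_followup_completes / n_first_survey
    # Guard against a condition in which nobody provided an email address.
    among_providers = (
        n_followup_completes / n_email_providers if n_email_providers else 0.0
    )
    return cumulative, among_providers


# Hypothetical counts for a single condition, NOT the study's data.
cumulative, among_providers = completion_rates(
    n_first_survey=1000, n_email_providers=300, n_followup_completes=120
)
print(f"cumulative: {cumulative:.1%}, among providers: {among_providers:.1%}")
# prints "cumulative: 12.0%, among providers: 40.0%"
```

Because the cumulative rate folds the email-provision step into the denominator, a wording can raise it simply by eliciting more email addresses, even when the rate among providers is unchanged.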

Experiment 2

Data and experiment

Data

The data source for experiment 2 was the same as experiment 1, that is, the SurveyMonkey thank-you page. However, the first survey that included the email request was different. This survey contained 19 questions in total, most of which centered around politics and the presidential election. Similar to experiment 1, the respondent’s email address was requested at the end of the survey. This experiment was implemented between 22 and 27 October 2015. The survey invitation page received a total of 142,576 unique views. Of these, 7832 clicked on the invite and were redirected to the survey and 4549 of them completed the survey. The respondent demographics are in Appendix 2.
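The recruitment funnel implied by these counts can be worked out directly. The sketch below uses only the three figures reported in the paragraph above; the variable names are illustrative.

```python
# Counts reported in the text for experiment 2.
views = 142_576      # unique views of the survey invitation page
clicks = 7_832       # viewers who clicked through to the survey
completes = 4_549    # clickers who completed the survey

click_rate = clicks / views                 # share of viewers who clicked
completion_given_click = completes / clicks  # share of clickers who completed
completion_per_view = completes / views      # share of viewers who completed

print(f"click rate: {click_rate:.1%}")                       # prints "click rate: 5.5%"
print(f"completion | click: {completion_given_click:.1%}")   # prints "completion | click: 58.1%"
print(f"completion per view: {completion_per_view:.1%}")     # prints "completion per view: 3.2%"
```

Roughly one in eighteen viewers clicked on the invitation, and a little under three in five of those who clicked went on to complete the survey.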

Design of experiment

Experiment 2 also had five conditions, and the wordings were almost identical to those in experiment 1, except that the final statement about not using email addresses for commercial purposes was removed from all conditions (see Appendix 1 for the exact wording). This change tests whether the possibility of commercial use would reduce respondents’ willingness to provide their email addresses and participate in the follow-up survey. All respondents were randomly assigned to one of the five conditions.

After collecting email addresses from respondents, I conducted a second experiment by varying the email subject line for the second survey email invitation. Specifically, there were three different email subject lines.

Condition 1: SurveyMonkey research survey.

Condition 2: Your opinion counts! Take a survey.

Condition 3: Specialized survey awaits.

The first condition emphasizes the survey sponsor. The second condition requests opinions, and the third condition emphasizes the selectivity of the survey. Respondents who provided an email address were randomly assigned to one of the three conditions. Only one email invite was sent to participants, without any reminders.

Results

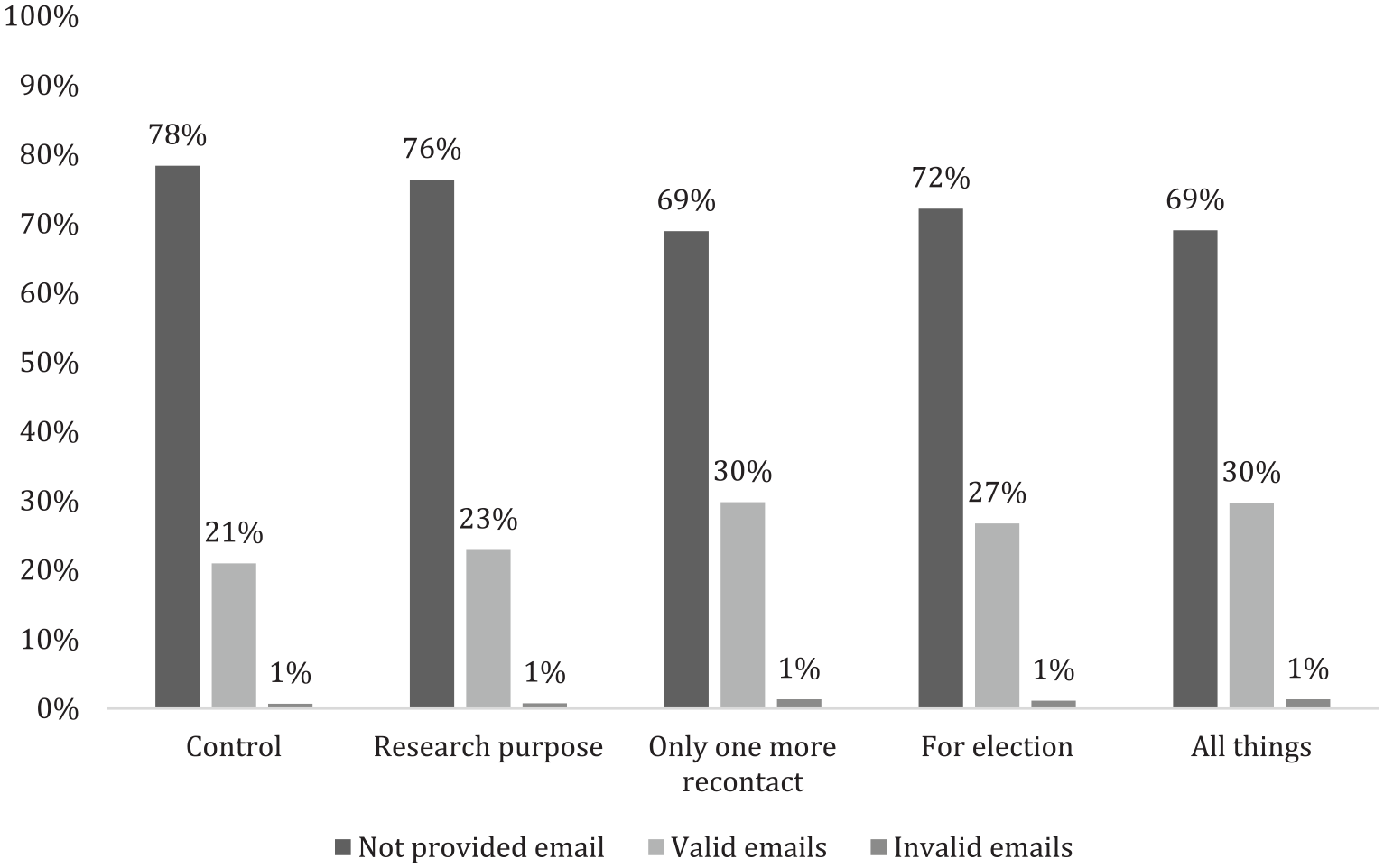

As in experiment 1, Condition 3 (only one further contact) and Condition 5 (only one further contact + research purpose + election) resulted in the highest cooperation rate (i.e. providing an email address) and the lowest item nonresponse rate in experiment 2 (Figure 3). Condition 1 (control) and Condition 2 (research purpose) elicited significantly fewer email addresses. The invalid email rates were almost identical across all conditions. The overall difference is significant (

Figure 3. Response distributions to the email request by experimental conditions, experiment 2.

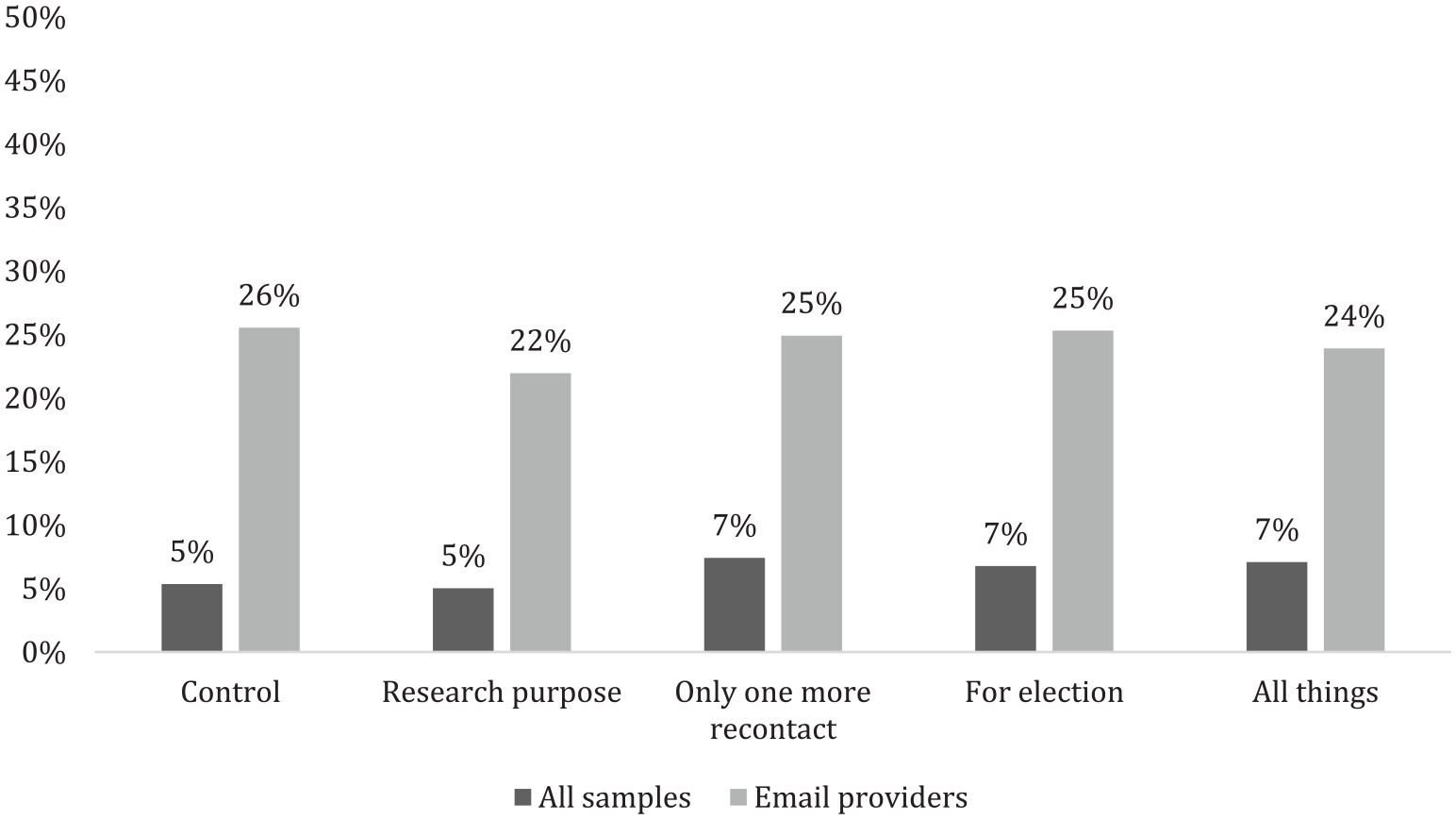

Moving on to the completion rate of the follow-up survey, as Figure 4 shows, overall completion rates tend to be lower than the completion rates for experiment 1 (see Figure 2 for completion rates for experiment 1). This is the case for both the cumulative completion rate and the completion rate only among email providers. The trend of the completion rates across all conditions, however, remains the same for experiment 2 as compared to experiment 1. More specifically, the cumulative completion rates (dark bars) are slightly higher in Conditions 3, 4, and 5 (about 7%) and lower in Conditions 1 and 2 (about 5%). The difference is not statistically significant (

Figure 4. Percentages of completed follow-up surveys by experimental conditions, experiment 2.

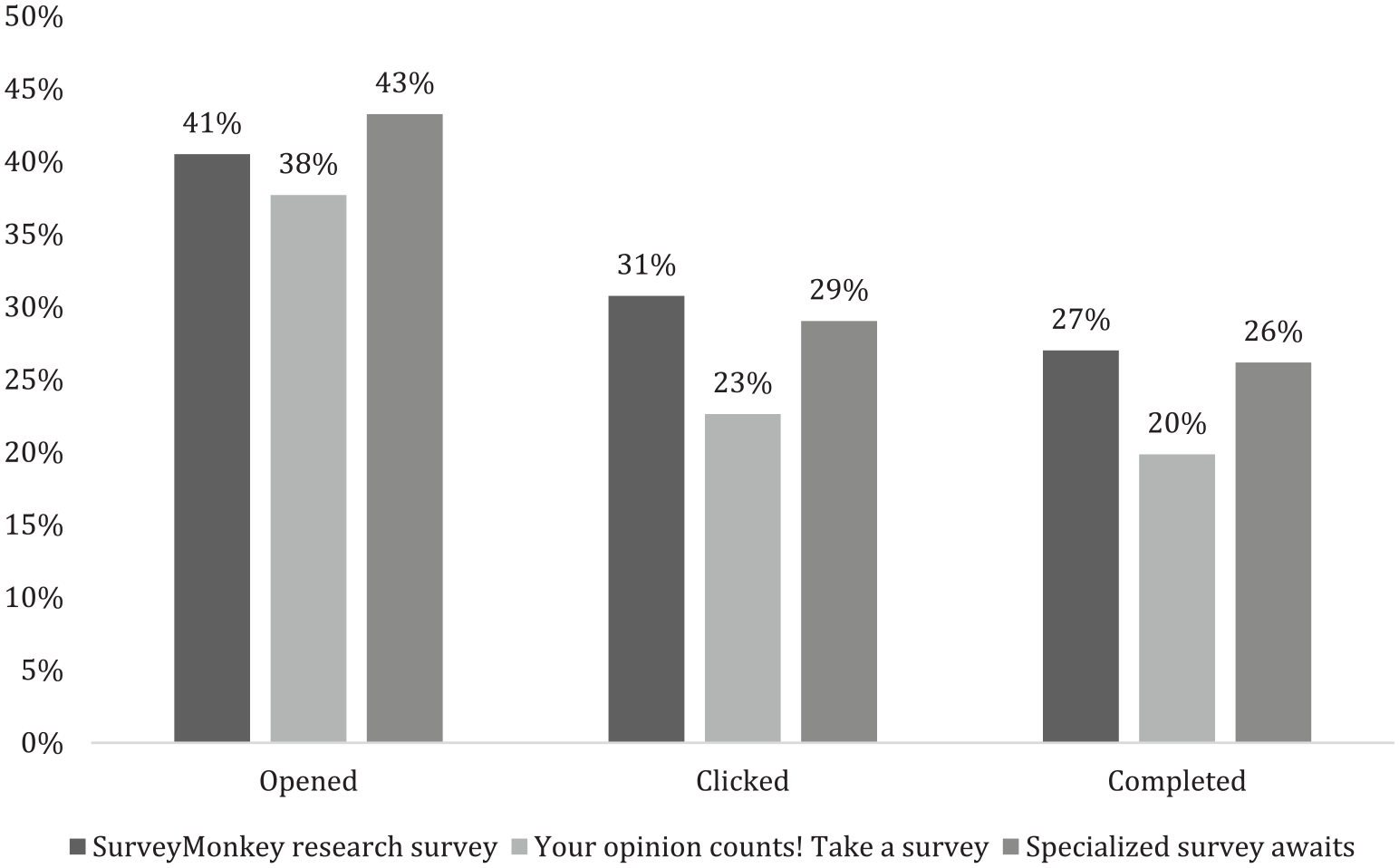

In addition to the experiment into requesting emails, I conducted a second experiment into subject lines of the email invitation for the follow-up survey. All respondents who provided their emails were randomly assigned to one of three subject lines. Figure 5 presents the email open rate, click rate (or begin survey rate), and the final completion rate by the email subject line conditions. Condition 2 (“Your opinion counts! Take a survey”) results in the lowest open, click, and completion rates among the three conditions. The other two conditions have somewhat higher and similar open, click, and completion rates. The open rate does not differ significantly across conditions (

Figure 5. Subject line experiment of the survey invitation email for the follow-up survey.

Finally, I tested whether there was an interaction effect between the email request wording experiment and the subject line experiment on open, click, and completion rates. Three-way chi-square tests show no significant effect for open rate (

Discussion

Previous research on the wording of survey solicitations and invitations has focused almost exclusively on the impact on immediate survey participation, that is, on whether different wordings affect participation in the current survey. Those studies identified effective techniques for cross-sectional surveys, usually with a probability-based sample. For address-based or random digit dial studies, the contact information is obtained ahead of the study, so going back to the same respondents does not require obtaining their contact details in the initial survey. For intercept or river-based non-probability web surveys, however, researchers do not have such information and have to rely on survey participants to provide it in the initial survey. Non-probability surveys have advantages on several dimensions, such as cost and efficiency (Liu, 2016). This study set out to examine ways to give intercept non-probability samples the capability of supporting more than one cross-sectional survey.

Specifically, this study experimentally examined the impact of five different question wordings used to request emails from survey participants for a follow-up survey, along with three different email invitation subject lines for the follow-up survey itself. The re-contact request experiments emphasized three aspects of the follow-up survey: survey purpose (i.e. research), survey frequency (i.e. only one more survey), and survey topic (i.e. election). Each experiment had one condition for each component and a fourth condition containing all three components. The control condition used a more generic wording without mentioning any of the three components. In both experiments, the conditions that emphasized only one follow-up survey elicited the most email addresses, regardless of whether this was the only component emphasized (Condition 3) or whether it was described along with the other components (Condition 5). The re-contact request experiment also had a carry-over effect on follow-up survey completes: the same two conditions that elicited the most emails also received the most final completes for the follow-up surveys. The email-providing rate and completion rate of Condition 4 (emphasizing election) were very close to those of Conditions 3 and 5. When comparing the completion rate by experimental condition among email providers only, however, no significant difference was found, suggesting that once respondents decided to provide their email addresses, their willingness to follow through with the request and participate in the follow-up survey was similar regardless of the email request wording. One interesting difference between experiments 1 and 2 is that the completion rates were lower for experiment 2. In experiment 2, the text about the survey’s noncommercial purpose was removed. In the real world of market research, although the emails collected will not be released or sold for commercial purposes, respondents are often invited to take commercial surveys. Without a guarantee as to the noncommercial use of emails, respondents became less willing to complete the follow-up survey.

Three email subject lines for the follow-up survey invitation were also tested in the second experiment. The three conditions emphasized the survey sponsor, a request for opinions, and the specialized nature of the survey opportunity, respectively. The open, click, and completion rates were compared across the conditions. While the survey sponsor and specialized survey conditions performed similarly on all three outcomes, the generic request-for-opinion condition performed consistently worse than the other two. This suggests that when inviting survey participation, it is wise to avoid generic language, which is uninformative and can lower the participation rate.

The findings of this research can be applied to longitudinal surveys or panel studies in a variety of fields, including sociology, political science, and psychology. The expansion of non-probability sampling provides researchers with new opportunities to conduct research more cheaply and quickly, but longitudinal studies with participants drawn from non-probability samples face a significant challenge in obtaining respondents’ re-contact information (e.g. email address). This study shows that it is feasible to collect email addresses from survey participants for a follow-up survey and that many participants who provide their emails are willing to participate in the second survey. This approach opens up opportunities for longitudinal or modular surveys even with non-probability samples for which no contact information is available until completion of the first interview. More specifically, when asking for contact information and inviting respondents to follow-up studies, researchers should provide context for the invitation rather than keeping it generic. Providing relevant content, such as the number of follow-up surveys and the survey topics, helps set expectations. When the language is too vague or generic, it gives respondents little to no information on what to expect and hence lowers their willingness to provide their contact information.

It is important to point out that since this study only asked for one more survey, it is uncertain whether this approach would be successful if respondents were asked to take more than one follow-up survey. This is a topic worth exploring in the future. In addition, collecting contact information may be necessary even for probability-based samples, as when following up a telephone survey with a web survey. Thus, the findings of this study may be useful beyond non-probability-based sample designs.

Footnotes

Appendix 1

Condition 1 (Control): Thank you for participating in this survey. We would like to send you another short survey via email within the next week. If you’d like to participate, please provide us with your email in the box below. We will never give away your email to anyone else.

Condition 2 (Research purpose): Thanks for participating in this survey. We would like to send you another short survey via email within the next week. If you’d like to participate, please provide us with your email in the box below. Your email address will be used for survey research purposes only. We will never give away your email to anyone else.

Condition 3 (Only one more re-contact): Thanks for participating in this survey. We would like to send you just one more short survey via email within the next week. If you’d like to participate, please provide us with your email in the box below. We will only contact you one more time for another survey. We will never give away your email to anyone else.

Condition 4 (For election): Thanks for participating in this survey. We would like to send you another short survey about the 2016 election via email within the next week. If you’d like to participate, please provide us with your email in the box below. We will never give away your email to anyone else.

Condition 5 (All things): Thanks for participating in this survey. We would like to send you just one more short survey about the 2016 election via email within the next week. If you’d like to participate, please provide us with your email in the box below. Your email will be used for survey research purpose and you’ll only be contacted one more time for another survey. We will never give away your email to anyone else.

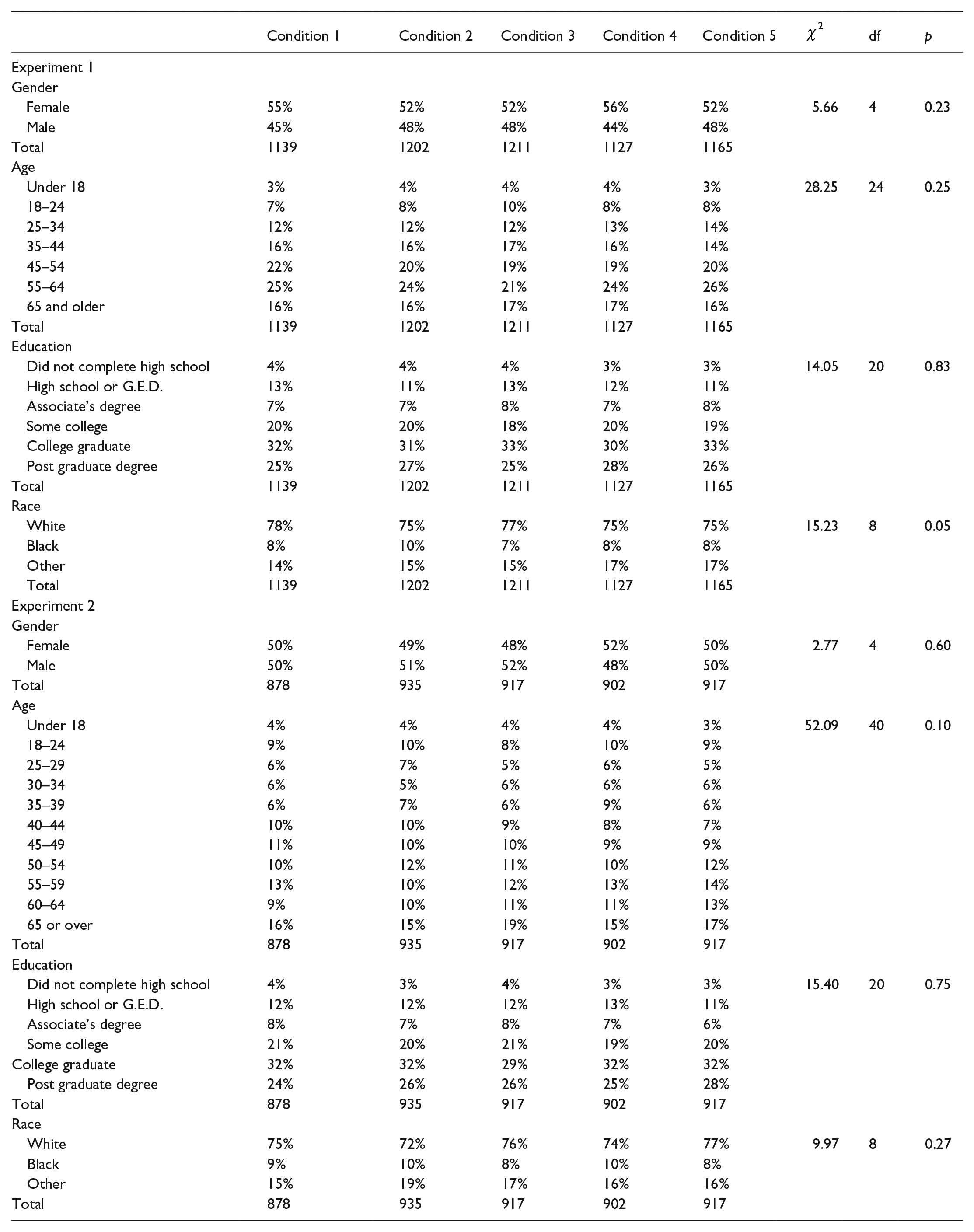

Appendix 2

| | Condition 1 | Condition 2 | Condition 3 | Condition 4 | Condition 5 | χ² | df | p |
|---|---|---|---|---|---|---|---|---|
| Experiment 1 | | | | | | | | |
| Gender | | | | | | | | |
| Female | 55% | 52% | 52% | 56% | 52% | 5.66 | 4 | 0.23 |
| Male | 45% | 48% | 48% | 44% | 48% | | | |
| Total | 1139 | 1202 | 1211 | 1127 | 1165 | | | |
| Age | | | | | | | | |
| Under 18 | 3% | 4% | 4% | 4% | 3% | 28.25 | 24 | 0.25 |
| 18–24 | 7% | 8% | 10% | 8% | 8% | | | |
| 25–34 | 12% | 12% | 12% | 13% | 14% | | | |
| 35–44 | 16% | 16% | 17% | 16% | 14% | | | |
| 45–54 | 22% | 20% | 19% | 19% | 20% | | | |
| 55–64 | 25% | 24% | 21% | 24% | 26% | | | |
| 65 and older | 16% | 16% | 17% | 17% | 16% | | | |
| Total | 1139 | 1202 | 1211 | 1127 | 1165 | | | |
| Education | | | | | | | | |
| Did not complete high school | 4% | 4% | 4% | 3% | 3% | 14.05 | 20 | 0.83 |
| High school or G.E.D. | 13% | 11% | 13% | 12% | 11% | | | |
| Associate’s degree | 7% | 7% | 8% | 7% | 8% | | | |
| Some college | 20% | 20% | 18% | 20% | 19% | | | |
| College graduate | 32% | 31% | 33% | 30% | 33% | | | |
| Post graduate degree | 25% | 27% | 25% | 28% | 26% | | | |
| Total | 1139 | 1202 | 1211 | 1127 | 1165 | | | |
| Race | | | | | | | | |
| White | 78% | 75% | 77% | 75% | 75% | 15.23 | 8 | 0.05 |
| Black | 8% | 10% | 7% | 8% | 8% | | | |
| Other | 14% | 15% | 15% | 17% | 17% | | | |
| Total | 1139 | 1202 | 1211 | 1127 | 1165 | | | |
| Experiment 2 | | | | | | | | |
| Gender | | | | | | | | |
| Female | 50% | 49% | 48% | 52% | 50% | 2.77 | 4 | 0.60 |
| Male | 50% | 51% | 52% | 48% | 50% | | | |
| Total | 878 | 935 | 917 | 902 | 917 | | | |
| Age | | | | | | | | |
| Under 18 | 4% | 4% | 4% | 4% | 3% | 52.09 | 40 | 0.10 |
| 18–24 | 9% | 10% | 8% | 10% | 9% | | | |
| 25–29 | 6% | 7% | 5% | 6% | 5% | | | |
| 30–34 | 6% | 5% | 6% | 6% | 6% | | | |
| 35–39 | 6% | 7% | 6% | 9% | 6% | | | |
| 40–44 | 10% | 10% | 9% | 8% | 7% | | | |
| 45–49 | 11% | 10% | 10% | 9% | 9% | | | |
| 50–54 | 10% | 12% | 11% | 10% | 12% | | | |
| 55–59 | 13% | 10% | 12% | 13% | 14% | | | |
| 60–64 | 9% | 10% | 11% | 11% | 13% | | | |
| 65 or over | 16% | 15% | 19% | 15% | 17% | | | |
| Total | 878 | 935 | 917 | 902 | 917 | | | |
| Education | | | | | | | | |
| Did not complete high school | 4% | 3% | 4% | 3% | 3% | 15.40 | 20 | 0.75 |
| High school or G.E.D. | 12% | 12% | 12% | 13% | 11% | | | |
| Associate’s degree | 8% | 7% | 8% | 7% | 6% | | | |
| Some college | 21% | 20% | 21% | 19% | 20% | | | |
| College graduate | 32% | 32% | 29% | 32% | 32% | | | |
| Post graduate degree | 24% | 26% | 26% | 25% | 28% | | | |
| Total | 878 | 935 | 917 | 902 | 917 | | | |
| Race | | | | | | | | |
| White | 75% | 72% | 76% | 74% | 77% | 9.97 | 8 | 0.27 |
| Black | 9% | 10% | 8% | 10% | 8% | | | |
| Other | 15% | 19% | 17% | 16% | 16% | | | |
| Total | 878 | 935 | 917 | 902 | 917 | | | |
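The balance checks in Appendix 2 report a test statistic, degrees of freedom, and a p-value for each demographic variable. Assuming the statistic is a Pearson chi-square test of independence between the demographic variable and the experimental condition (the article does not name the test used in this table), the degrees of freedom should equal (categories − 1) × (conditions − 1), which matches every df value in the table. The sketch below checks this and shows how the statistic is computed. The gender counts are approximations reconstructed from the rounded percentages and totals for experiment 1, so the result is not expected to reproduce the reported 5.66 exactly; the function name is illustrative.

```python
def chi_square(table: list[list[float]]) -> tuple[float, int]:
    """Pearson chi-square statistic and df for a rows x columns table of counts."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    n = sum(row_totals)
    stat = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            # Expected count under independence of row and column variables.
            expected = row_totals[i] * col_totals[j] / n
            stat += (observed - expected) ** 2 / expected
    df = (len(table) - 1) * (len(table[0]) - 1)
    return stat, df


# Degrees of freedom implied by the number of categories, with 5 conditions.
n_conditions = 5
categories = {"gender": 2, "age (exp. 1)": 7, "education": 6,
              "race": 3, "age (exp. 2)": 11}
implied_df = {name: (k - 1) * (n_conditions - 1) for name, k in categories.items()}
print(implied_df)

# Approximate gender counts for experiment 1, rebuilt from ROUNDED percentages;
# these are an assumption for illustration, not the study's raw data.
totals = [1139, 1202, 1211, 1127, 1165]
female_pct = [55, 52, 52, 56, 52]
female = [round(t * p / 100) for t, p in zip(totals, female_pct)]
male = [t - f for t, f in zip(totals, female)]

stat, df = chi_square([female, male])
print(f"approximate chi-square = {stat:.2f}, df = {df}")
```

A p-value would additionally require the chi-square distribution function, which is omitted here to keep the sketch dependency-free.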

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.