Abstract

This article offers an analysis of methodological disputes between various stakeholders in welfare provision. It addresses debates of validity, efficiency and purpose. It gathers data from two sources: a knowledge exchange event which brought together voluntary sector workers, outcome software providers and academics, and auto/biographical data from my long-term participation in a grass-roots community project seeking to tackle street homelessness and food poverty in the London Borough of Newham. It pays particular attention to the tensions inherent in measuring impact and presenting ‘softer’ outcomes. It highlights the innovative approaches adopted by those working in the third sector as they seek to comply with an often overwhelming and increasingly complex set of methodological demands from funders. This article includes a discussion of the positioning of researchers seeking to offer ‘accountable knowledge’ and the types of knowledge which arise in pursuing an approach best described as ‘theorised subjectivity’. I consider the tensions inherent in my own attempt to navigate towards being a ‘partial inbetweener’ or at least a ‘trusted outsider’ to homeless people within the project I volunteer with. To this end, discussions of the use of auto/biographical data, drawn from working as a community organiser, are included.

Introduction

I am an activist. My academic and activist interests are around how social change is effected. My scholarship in social change and addressing marginalisation is driven by a desire to bring academic rigour to inform activism. A number of years ago, I opted to move from a full to a part-time lecturing post so that I could commit more time to activism and community organisation. I am surprised to be writing a methodological paper. I have not previously read methodological papers, but I suspected they were prosaic; I suspected they were dry; I suspected they were not for activists, and I was wrong on this last point at any rate. I am writing this piece because methodology emerged as the substantive issue, and on several levels, in a knowledge exchange I helped to facilitate between community workers, academics and software providers for the third sector.

‘Making it Count’ began as a community engagement project at the University of Greenwich in June 2017. Initially, it brought together 11 workers and volunteers from seven small- to medium-sized community organisations. It was followed up by a series of interviews with some of those who took part, to extend and clarify the points made at the event. The delegates, who have been anonymised here, represented small charities running winter night-shelters; volunteer agencies; large national children’s charities; healthcare charities for particular diseases; lesbian, gay, bisexual and transgender (LGBT) inclusion associations; and broader community organising groups. The representatives, usually the chief executive officer (CEO) or equivalent position, met with four academics from the Applied Sociology Research Group at the University of Greenwich, a number of other academics in related fields and two delegates from software providers who produce online toolkits to help the third sector collect data (one of whom had to cancel at the last minute but sent a presentation). These were the market leader, ‘Outcome Star’, and the smaller ‘Soul Record’.

This article brings together themes and insights from this knowledge exchange event. These findings contribute to the analysis of methodological disputes between various stakeholders in welfare provision. This is, in turn, an interesting example of methodological debates around validity, efficiency and purpose. It includes a discussion of positioning of researchers and adopts an auto/biographical approach to do so.

The methodological approach

The knowledge exchange interrogated tensions arising in the process of requiring, collecting, systematising and presenting data. It asked, what can be made of the clash of methodologies between these groups? The tensions in measuring impact and presenting outcomes focussed on doing this for ‘softer’ outcomes, such as improvements in self-esteem and personal resilience in people accessing services. Those working with marginalised communities know that these changes are essential foundations upon which the ‘hard outcomes’ of, for example, a successful return to employment, accessing and maintaining accommodation, reductions in mental health crisis or levels of substance misuse can be built. How do we make these fundamental personal growth outcomes ‘count’? Four stakeholder communities were in mind during this exchange:

The recipients of services provided by grassroots community groups;

The volunteers and workers of these groups, especially those charged with collecting data and writing impact reports;

The software providers seeking to systematise data collection in the sector;

The funders, both statutory commissioning officers and grant-giving organisations.

Method is data. Some of the data collected at the knowledge exchange, and from my autobiographical reflection, is more readily appreciated as being such. There is, I suggest, another layer of data, both apparent and valuable, in both contexts. In common with the Making it Count participants, I hold some fairly deep emotions about the work we undertake and what is at stake in discussions of validity and evidence for impact. Emotion is an often overlooked set of data. However, Letherby (2015) suggests that ‘emotion is integral to (feminist) methodological processes not least because emotion is part of everyone’s life and emotional expression within the research process is often data in itself’. Feminist methodologies more readily appreciate this and are more willing to accept the risks and value the inclusion of emotion as valid data in an appropriate methodology.

Data were the crux of the matter in the ‘Making it Count’ roundtable event. How is, and how should, data be collected from communities who lack trust in bureaucracy, or where individuals have chaotic patterns of engagement with projects? The process of collecting data in this setting is extremely complicated in ways which became apparent during the exchange. Furthermore, many grassroots organisations rely on volunteers to do this work, and these people might themselves be suspicious of the process. Then, there are third sector software providers, many of whom have produced toolkits with much academic investment. Are these fit for purpose? Are these products well-known? Can volunteers use them? In addition, overshadowing the whole process are the funders. It was felt they still tended to want neat, quantitative measurements and empirical evidence when so much of what grassroots projects produce are ‘softer’ outcomes, hard-won progress, emotionally invested relationships which span many years and can be a whole lot more tenuous than the funding bodies might suppose. In this article, I pay attention to the clash of philosophies underlying the process of data collection and impact presentation. How is it possible to measure improvements in a person feeling less suicidal, having a sense of hope, feeling better able to contribute something to a group? Can such progress be made to ‘count’ in a commissioning environment beset with an austerity culture, circumvented by a discourse of blame and shame around the marginalised? Our methodology began with data collection undertaken as part of a knowledge exchange. It involved close listening to stories of the clash of methodologies, unpicking the wisdom of experienced community workers. What we heard were the problems of methodology in the real world. This involves making a distinction between methods and methodology. 
What is clear is that there are layers to the reflections to keep in mind: Reflection on the methods (tools for data gathering, e.g. questionnaires, interviews, conversational analysis), methodology (analysis of the methods used) relevant to each and any research endeavour are crucial if our work is to have any epistemological (the theory of knowledge) value. (Letherby, 2015: 50)

When analysing the data from ‘Making it Count’, I was in effect analysing both the new data being produced by the exchange event and considering the participants’ methods and methodologies in their own fields. This produced a complex reflection where both methods and methodologies were interrogated. A further dimension was that my own subjective position in this exchange would also provide a layer of data as I began to reflect on my own emotions and engagement as an activist, an academic and, in this instance, a researcher of my own community of activists. As noted above, in this exchange, I am positioned as part of the academic community and part of the grassroots project community. In part then, this methodological reflection is auto/biographical and ethnographic. Arguably, this is inevitable, as Letherby (2014) states, All research is an auto/biographical practice, an intellectual activity that involves a consideration of power, emotion and P/politics. (pp. 1–2)

Feminist methodological theory argues that this stance, from subjective involvement and interest, may be preferable since the explicit nature of the author’s involvement goes some way towards the production of ‘accountable’ knowledge (Cotterill and Letherby, 1993; Fine, 1994; Letherby, 2003, 2014). Furthermore, Letherby suggests an auto/biographical approach explicitly includes awareness of self/other relationships and encourages reflection on power relationships within research; power in methodological choices will be an important theme of this article. As such, I adopt a theorised subjectivity here (Letherby, 2003, 2014), requiring ‘the constant, critical interrogation of the author’s personhood – both intellectual and personal – within the production of the knowledge’. (Letherby, 2014: 6)

At least three areas of tension emerged from the knowledge exchange: the tension of status difference; the question of whether the methods and methodologies used to collect data are fit for purpose, and finally, the creative, subversive methodologies of community workers as they attempted to bridge the gaps between the requirements of funders and the delicate dance of a non-hierarchical, humane approach needed to maintain relationships with those using their services. The knowledge of community workers is seldom given due credence and yet, they were precisely the group holding together these tensions, with a wealth of experience in balancing the demands of funders with the sensitivity of service users. Their voices are rarely heard. ‘Making it Count’ sought to address this.

The tension of status differential

It is easy to proclaim a ‘Broken Britain’. It is harder to go about trying to pull together the fractured pieces in a community or individual’s life. The current discourse on poverty is one of ‘Saints and Scroungers’. The emergence of ‘poverty porn’ as popular entertainment presents poverty as ‘a problem of the other’ (Chauhan and Foster, 2014). At the same time, there is little investigative coverage of poverty in news stories which ‘use poverty to lend emphasis or to sensationalise and do little to further an understanding of poverty in the UK’ (McKendrick et al., 2008: 22).

It is perhaps through such stereotypical news coverage that the poor and welfare recipients have become one of the most unpopular groups in modern society (Chauhan and Foster, 2014: 4). Popular representations of homeless people, for example, are found to be dehumanising and homogenising. The image of homeless people as fundamentally different from others is reinforced through the use of the generic term ‘the homeless’. This designation demonstrates a lack of appreciation of the basic human character of individuals (Widdowfield, 2001: 51). Shildrick and Rucell (2015) argue that discourses of shame and stigma ‘have tended to explain poverty by referring to people’s moral failings, fecklessness or dependency cultures’. They further argue that media representations are not the only place where the poor are stereotyped. Institutions such as public or welfare delivery services have also been shown to be important in stigmatising and disadvantaging those experiencing poverty.

The effect of the stigmatisation of the poor was felt by the participants at the ‘Making it Count’ exchange. Some expressed that, as community workers, they were seen as ‘naïve’, or even ‘complicit’ in social problems. A culture which dehumanises the poor might well be imagined to cast those who champion the rights of the marginalised as, at best, ‘do-gooders’ and well-meaning amateurs or, at worst, as wasting their time on people who ‘only have themselves to blame’. Identifying with the marginalised positions some of the community workers as proximal to these othered communities. The discussion at ‘Making it Count’ unearthed a sense that those working at a grass-roots level hold subversive knowledge to counter populist ideas of poverty. Some participants expressed that they had an ‘insider knowledge’ about the reality of poverty. They were happy to share their insights and experiences.

The knowledge exchange asked, ‘what are the main issues you face in presenting the impact your project makes?’ In answering this, the most prevalent theme was that of navigating power differentials, the first of which was the power differential between their organisations and funding bodies who ‘hold the purse strings’. In exploring this further, many participants expressed a sense that the increasingly complex and detailed reporting of impacts made them feel that ‘they were not being trusted’. There was an overall desire for opportunities to collaborate with funders as to the way impact data could be submitted, one which gave credence to the knowledge and expertise they had accrued by working in service delivery.

A close examination of the data from the knowledge exchange suggests an underlying philosophical difference in how some of the community workers view their funders, one which is especially poignant for groups committed to a community organising model: Organising models propose building and confronting power as a possible antidote to social problems. (Christens and Inzeo, 2015: 429)

Some of the data gathered from ‘Making it Count’ suggested that a philosophy of ‘confronting power’ sits uneasily with joining the competitive market for statutory sector partnership funding. One such example came from a project which had been funded by a ‘payment for results’ agreement with the Department for Work and Pensions (DWP), delivered through a partnership with their local Job Centre. At the initial partnership consultation, the funding commissioner suggested that the project would be required to refer those who did not show up for their appointments back to the Job Centre, where such information could lead to ‘sanctioning actions’. The project leader recalled how she had been horrified: And when they do that, and their benefits end, they will be sent to me at the Food Bank. And I will have to help them appeal! . . . They [Job Centre] just don’t understand how we work at all.

Other respondents spoke of a struggle to ‘make them understand’. The language of ‘us and them’ points to tensions between projects seeking funding from institutions who might have been cast as complicit in existing oppressive power structures.

The community workers were also aware that they navigated a second tension arising from status differentials, one in which they might be seen as brokering power, over and against individuals using their services. In collecting data from their project users, they risked finding themselves cast as bureaucrats. They were at the nexus of complex relationships, between those in need of support services and those holding funding. This tension was especially prevalent in the context of collecting data. One participant recalled difficult conversations with a number of service users, which involved being told, I don’t want to fill in forms so you can get money for helping me.

Overwhelmingly, the ‘Making it Count’ participants had experienced reluctance from their service users to provide certain types of personal data, or any at all. This produced stress, in knowing that they, as service providers, held a certain degree of power, by which they could withdraw provision. This elicited an uncomfortable awareness of the power differential between themselves and the community members using their services. Many had adopted innovative methods to navigate this juncture, which will be explored later where I argue that they seem to adopt aspects of Goffman’s (1956) dramaturgy by ‘performing’ differently to various audiences.

I too have experienced the tension of desiring to work with marginalised people, to empower them as agents of their own lives, while also having an uneasy sense of working for, or worse still to, them. As I explained at the start of this article, I decided to move to part-time academic work to free up time to work in my own community, taking on various roles within local community groups. I began with no desire to produce academic outputs from this; I had in effect separated activism and academia. My ethical sensibilities supposed that, while knowledge would be gained from working closely with people pushed to the precipice of society, I must avoid becoming the ‘clip-board-holding investigator’. I reacted strongly to the idea that my work for grassroots organisations could be seen as a ploy to unearth nuggets for academic papers. I did indeed find, as many ‘Making it Count’ participants voiced, that some people who have experienced long-term homelessness and/or been part of custodial and welfare systems have a sense of being sitting ducks for bureaucrats with their endless forms and questions. They, quite rightly, resent the over-processing of personal facts and details by people who perceive them as a set of problems and failings.

In consideration of power imbalance between researcher and participant, Wolf (1996) argues that most feminist writing has focused on addressing the imbalance within the process and products of research, conceivably because to address the actual imbalance in social power between these people is a far greater task! When researching marginalised communities, Kamala Visweswaran (2003) argues that the key question to hold in mind is, ‘whether we can be accountable to people’s own struggles for self-representation and self-determination’ (p. 39). This is important. The capturing of grass-roots stories and attempts to hear the voices of silenced people within my own research, and that of many of the participants at the knowledge exchange, point to ways in which this question of ‘accountability’ can be addressed. The use of more participatory methods, especially as championed by feminist sociologists such as Stanley and Wise (1993), is part of a broader concern to research everyday life with ‘close’ and ‘sympathetic consideration’ (p. 2).

The fact that I am now writing academic papers based on my involvement in community organising, and particularly working with marginalised people, suggests that I have shifted somewhat. In my experience, hearing the stories of people who I have now known for many years has opened opportunities to listen at levels which were not possible earlier. It took time: from one side, to overcome a justifiable reticence to have their lives presented as source evidence for some greater scheme, and from mine, to appreciate that the people I worked with wanted their stories told and their wisdom and insights to be shared. This position held true and felt comfortable as long as I stayed focussed on participatory methods of data collection and took seriously the need to spend time in these communities without my ‘clipboard’. Time, building personal relationships, and being first and foremost a listener, opened the ways to bring activism and academic endeavours together. Reflecting on this, I doubt that the tensions between ‘insider’ and ‘outsider’ positioning, which have long been theorised in the social sciences, will ever be resolved. While some, like Arthur (2010), suggest this is a false dichotomy and that a researcher’s identity can shift between such positions, I am aware that this process takes place within a matrix of power. I can never be an ‘insider’ in the homeless communities I work with, simply because I am not homeless. At best I can be a partial ‘inbetweener’ or a trusted outsider. What is more, there is a certain fluidity to my identity position. I have been involved with these projects for 5 years and live in the same neighbourhood in which they are held. I have known some of the people in these projects for all, or many, of these years. At one level, when I am with the members of these projects, my perception is that I am accepted and trusted. The extent to which this is true is not really my call to make.
However, even from my own self-reflection, I feel my position shift as I sit down to the task of writing up notes and analysing data from this engagement. I have to distance myself to some extent and allow the data to speak to me. This shift suggests to me that there is a power imbalance inherent in ‘observing’ and ‘analysing’, which is different from that of being ‘among’. Successfully resolving the tension I feel in this relies on the extent to which I am genuinely, and persistently, interested in the lives of people I work with. In terms of academic outputs, this means the process is slow. I can live with that.

Tensions arising from the methodologies used – are they fit for purpose?

Feminist methodological research has long identified the relationship between epistemology and the process and products of methodology/particular methodological approaches (Letherby, 2003; Stanley and Wise, 2002). The ‘Making it Count’ knowledge exchange saw how this relationship produces clashes as various stakeholders hold different views as to what process is fit for purpose, both in the day-to-day gathering of data, and how it should be presented as a product. Underlying these differences is the fundamental variance in epistemology.

Data gathering methods were diverse among the third sector charities represented at the exchange: questionnaires, interviews, video capture of participants’ stories, conversational analysis and the use of toolkits to map a service user’s journey were all used. No charity used a single method. Partly, this was due to the differing demands of a variety or changing set of funders, which is an external pressure. It was also due to the inventive practices adopted by those collecting data, hence the consideration of innovative solutions in a following section of this article.

The most prevalent theme from the ‘Making it Count’ focus groups was of having to constantly adjust the methods of data collection and the sheer amount and time involved in this: Small funders are worse. We have worked with almost 80 contracts. Everyone wants something different. Whether they are putting in 8 grand or 800 grand they seem to want the same level of reporting.

Participants from very small scale grassroots organisations also faced a further challenge. Much of their data collection was carried out by volunteers, many of whom were explicitly drawn to their organisation’s activism. Volunteer reluctance to collect data or to spend time uploading it to computerised systems added to their problems with methods.

A separate issue with the expected timescales of impact was raised in the context of the reality of working with people facing complex and entrenched problems and the expectation of ‘hard outcomes’ within a set time frame. As one respondent reflected, He’s not addicted, just out of prison and homeless because no-one thought to teach him how to write a CV.

Such contributions from participants highlight a more fundamental issue, a dissonance between the types of outcomes which funders wanted to be measured, often ‘hard measures’, and the slower, more tenuous progress they identified in their service users. Such ‘softer outcomes’ were identified as not only essential in producing a successful degree of progress but in maintaining it: What is meaningful outcome information? Getting a job, getting accommodation. But what about the skills and resilience needed to make the change last? What about motivation to change behaviour? What about that?

A further issue raised was the ethical and practical difficulties of providing monetary values to impact: It’s actually impossible. There are so many variables but we have to say – our service costs this but saves this. It’s always that which you have to reduce everything to. How much does it cost? How much does it save? Nothing really about the person. What does it cost them not to have our help? How do you put a pound sign on someone not becoming HIV positive?

Ultimately, these tensions can be summed up as a differing notion of ‘what really counts’.

One participant at the knowledge exchange voiced a more fundamental tension inherent in the methodological process itself. Where is the starting point? The person’s obvious needs or their attributes and strengths? It is relevant here that this participant came from a community organisation project which held an asset-based approach, seeking to avoid a ‘top-down’ model of service delivery. This can be seen as at odds with the methodological assumptions of funders who focus on a ‘needs met’ model: What does this person have going for them? That’s where to start.

A related tension, which became apparent, was that some participants were committed to collaborative data collection processes, ones which were acceptable to their project users and were part of a shared conversation, integral to working with an individual, not an ‘add on’ to the interaction or, worse still, as one participant said, the ‘before-I-help-you form-filling approach’. A truly collaborative method of data collection is time-intensive. Preferring it, or insisting upon a certain method, may add to the sense of being at odds with funders’ presuppositions and time-efficient preferences. The epistemology shaping decisions on how and why to collect data voiced by the third sector participants can be seen to reflect the recent feminist approaches which insist research should mean something to those being studied and should lead to change (Letherby, 2015: 6).

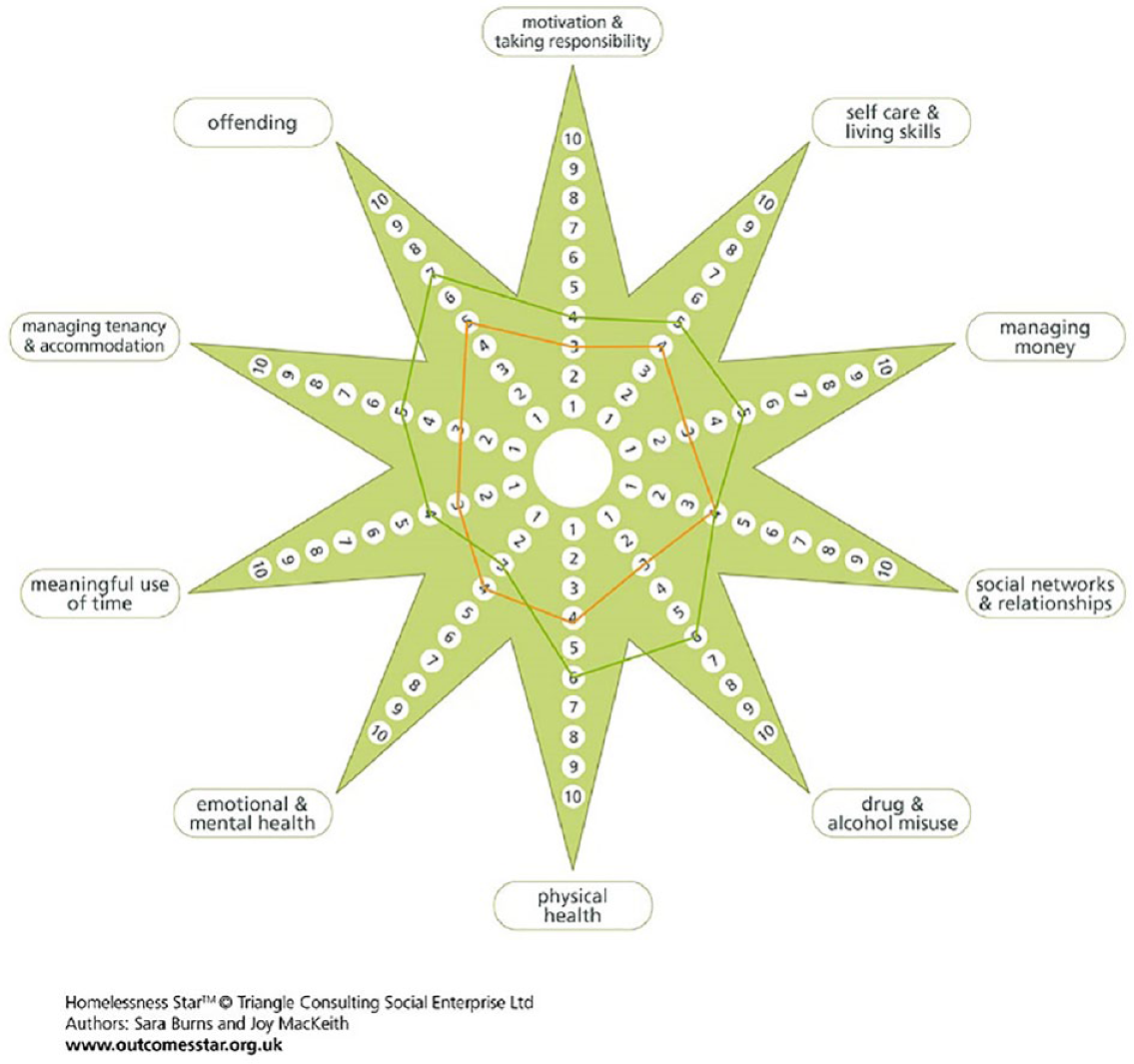

Such tensions in third sector methods and methodologies have been known for many years and have led to the production of a number of toolkits aiming to systematise collection and presentation of data. Two software providers were invited to the ‘Making It Count’ knowledge exchange: ‘Outcome Star’ (the market leader), and the smaller scale ‘Soul Record’. Both of these toolkits rely on a theory of change model, which participants in the exchange felt was fitting. Both measure a range of ‘soft outcomes’, such as increased confidence and problem-solving, and present progress in tangible ways, seeking to both measure and support change. They can also be used as diagnostic tools to help an individual identify changes they want to make in their life. As such, they are collaborative and adjustable. These tools make useful visual representations of an individual’s progress and, once data is entered, can cut the amount of time spent producing detailed reports. As part of the ‘Making it Count’ event, each provider was invited to send a delegate to explain their tool, address questions and hear comments about its use in practice from the third sector delegates. Due to unforeseen circumstances, only ‘Outcome Star’ could provide representation on the day, and so, inevitably, much of the feedback and data collected from the event addressed this one particular toolkit.

Outcome Star has become the market leader in software provision of its type and is now used by over a thousand organisations in the UK and overseas. In response to previous consultation with third sector providers, there are now 30 versions of the Star. It provides an online toolkit of questionnaires and action plans. Data are gathered from a series of interviews which take place in a one-to-one ‘key working context’ and should take up to 1 hour to complete. The software claims to have an ethos of ‘person-centred, strengths-based and co-production approaches’. ‘Making it Count’ participants who had used this toolkit were asked to give feedback on their experience. They commented that it shared their philosophy of progress in that the descriptors were not measures of the severity of a particular problem but measured a participant’s willingness to engage with a particular issue; to journey towards positive change. This was a welcome meeting of minds and went some way to resolve the issues outlined earlier in this article. Some had also found it to be useful in cutting report-writing time. It was seen to be a flexible tool in that it has a range of specific descriptors and has produced a range of models. There are ‘Stars’ for adult care, housing and homelessness, armed forces veterans and youth. It is inherently adaptable. And yet still the participants had devised further ways to adjust it, which are appraised in the following section of this article.

There was one overriding and concerning finding from the ‘Making it Count’ event: the number of small-scale organisations who had no idea that such toolkits had been created and were available for immediate use, or that other guides and consultative groups existed who might help them navigate the tensions inherent in measuring their impact, including the soft outcomes, to a range of audiences.

The initial scoping project for ‘Making it Count’ included an analysis of current provision of toolkits and consultancy services. The degree of partnership between academic institutions and third sector groups is impressive, both in terms of the number of active collaborations and the timescale of partnerships. Some have resulted in formal, long-standing collaborations with umbrella charities, such as the New Economics Foundation online toolkit ‘Prove and Improve’ produced with the National Council for Voluntary Organisations (NCVO) Charities Evaluation Service. Others publish guides to help third sector organisations respond to specific Governmental Acts, such as Housing Associations’ Charitable Trust’s (HACT) Guide (2014): Measuring the Social Impact of Community Investment, which was produced as a response to the Public Services (Social Value) Act 2012. Some larger scale charities have also been willing to share their resources with other organisations online, an example being the National Housing Federation’s guide to measuring social impact (n.d.). There is also a whole range of institutions who have identified enterprise opportunities in building third sector capacity to collect and present data, offering consultancy and a range of ‘masterclasses’. The scoping exercise identified a wealth of available resources, some of which were free or at low cost. What was striking was how few of these were known to the third sector participants. When asked why, the participants said that smaller groups were often unaware of the wider sector and sometimes focussed entirely on their own community. This meant that they lacked information about what might be helpful. Expertise at trustee level is hard to secure and so much of the networking, which might help groups find out about such provision, happens in the ‘spare time of over-stretched staff’: We have enough to do trying to stay afloat to go to training events.
I didn’t know someone had made this kind of thing (the Outcome Star). I should really have known about it.

It is another example of how the pressures on small-scale projects, in particular, are precluding a ‘work smarter, not harder’ model of delivery. Part of the arduous workload on staff in the third sector is, ironically, the increasing amount of time given to collect, analyse and report data.

It is not only the charity representatives at ‘Making it Count’ who are beset by the increasingly onerous nature of an outcomes-driven funding system. Little et al.’s (2015) report for the Dartington Social Research Unit identified that: Outcomes are part of a bigger way of thinking. In the last 30 years, the reform of public services has been based on a paradigm that has sharpened accountability, promoted the use of evidence, and encouraged social markets to flourish. All of these reforms have been in pursuit of better outcomes. (p. 62)

The pursuit of accountability in an increasingly hostile funding environment, rather than as primarily part of reflective praxis, drives the current focus on measuring outcomes. This will inevitably lead to ideas of acceptable evidence of these outcomes, and then to what Little et al. (2015) describe as ‘the third leg of the accountability stool’, social markets, which are ‘quasi-markets’ and ‘new public management’. The marketization of welfare is well documented. Many of the ‘Making it Count’ participants commented on the levels of competition within such a ‘quasi market’, where charities, social enterprises and private companies compete to provide services. According to Little et al. (2015), the changing context of welfare provision has separated out the ‘language of outcomes, evidence and social markets’ (p. 17). It is a new and evolving language game. And as such, it is always invested in maintaining power and exercising hegemony: Some speak it, some don’t. On the whole, those who do speak the language are furthest away from the day-to-day lives of people facing the greatest disadvantage – and are the best remunerated. Many are part of the industry that has sprung up to help public systems get things done. Universities and research organisations are more engaged in decisions that influence the allocation of public resources than they were three decades ago. (Little et al., 2015: 71)

Little et al.’s (2015) report describes a situation which concurs with the findings of the ‘Making it Count’ event: When the public sector wants to engage with civil society, it largely does so on its terms. It sets a target – an outcome or an output. It asks civil society organisations to bid for the contract. The voluntary sector is sucked into the system space and abides by system rules.

The result is that, as increasing parts of the voluntary sector have become providers of state-funded services, they are ‘sucked into’ the language games of producing prescribed outputs, talking about the ‘outcomes of the outcomes of the outcomes’ that make up many system conversations. (Little et al., 2015: 73)

Questioning whether methodologies are fit for purpose, therefore, revealed yet another axis within the matrix of power: those shaping the conversations around appropriate methodologies are possibly the least engaged with the communities relying on welfare provision. This presents a personal challenge for me: to stay connected and to use the privileged language codes in ways which promote the interests of those facing the most disadvantage, and of those who work alongside them, so as to diffuse their knowledge ‘upwards’.

Innovative solutions

The ‘Making it Count’ event unearthed many examples of how grassroots organisations had come up with innovative solutions in response to the tensions described above. Reflecting on these conversations brings to mind Goffman’s (1956) dramaturgy. The community workers and activists played to different audiences, presenting themselves differently to each, and they were doing it very well. They were playing out their roles in third sector provision to multiple audiences: at one level they presented their outputs to funders, stakeholders and trustee groups, but they spoke differently within their own teams, and again shaped methods to work well with people using their services. They had many ‘front stages’ and were aware of shifting performances and scripts. Perhaps ‘Making it Count’ provided an opportunity for some ‘back stage’ interaction? Participation in this event would be anonymised, and the funders and communities they were accountable to were not present. In this context, they may have felt more ready to share the innovations and practices which enabled them to navigate the tensions in methods, methodologies and power relations they had described.

Some of the participants had created their own data capture tools and devised bespoke questionnaires for each funding provider. Others were innovating with video capture so that their service users could ‘tell their own story’. Site visits and times when they could ‘walk people around’ to see what was happening on their project were seen as having most value. In all this, the organisations attending the event seemed more than ready to be accountable; they were simply struggling with the amount and conflicting nature of the data required and some of the presuppositions made about ‘what really counts’.

One example of how third sector representatives innovated with methodologies came from a participant who described her use of the ‘Outcome Star’. What was disclosed can be framed within Goffman’s dramaturgical theory.

To one audience, the CEO of her organisation, the participant said she would only express ‘positive things and all the good points of using it [the Outcome Star]’. She was aware that her organisation saw it as ‘the answer’ and that she needed to be ‘seen to be on the same page’. The Outcome Star draws a picture – a star – to illustrate progress along a series of desired criteria. It takes about 1 hour to review progress in what should be a private one-to-one conversation, and it has printed questionnaires to help facilitate this process. As progress is made, the shape within the star expands.

However, with those she supported, this participant shared that she greatly adapted the way she used the software. She preferred using pencil and paper to draw much rougher versions of the computerised picture and rarely used the printed questionnaires: Mostly I’m outside meeting up anyway. So I take a bit of paper and we might draw the new lines [on Outcome Star] as we think they are now but I don’t write while someone is talking. I listen. I look at them.

She also admitted she did not always enter the data on time in the software version and sometimes ‘concluded’ what progress had been made from a ‘general chat’ rather than asking about all the different criteria: It doesn’t feel natural. I don’t want to talk away to someone tapping away . . . like GPs now. That’s not listening.

This participant revealed the extent to which she practised Goffman’s (1956) ‘impression management’ with her boss, colleagues and clients. She innovated and knew which ‘script’ to present to which audience. Overall, this participant felt ‘it’s a good tool. It does work. But you need to be flexible’. This need for flexibility was greatest when she described the difficulties of using the software to write reports for funders, especially given the expected timescale for working with someone within the project. In discussing this she said, ‘The Star . . . it goes in and out’.

At any particular moment, a client she is working with might be seen to be making great progress: valuing sobriety, keeping appointments, complying with statutory agencies and ‘getting everything together’. They might have received a job offer. Stop the ‘Star’ there, and the client has ticked all the boxes of progress, and these can be captured as outcomes of positive impact for the organisation. The client may even be ‘removed from the books . . . job done!’ But fast-forward weeks or even days, and the job has not materialised, a set-back has led to the return of destructive behaviour patterns, and the client feels desperate, unmotivated and angry, perhaps not wanting to engage again. Capture ‘the Star’ at this point and it is evidence of failure. A few weeks of patience later, the client might be ready ‘to pick themselves up and have another go’. Others at the event joined in this conversation and agreed on what was coined ‘the oscillating Star’: But you would never present that story, the reality, to funders. You have to freeze frame when there is something good to say.

The ‘oscillating Star’ is a sad and poignant example of the language games which welfare providers must play and the innovations they must devise. They know that outcomes have been set which imply unrealistic time frames for lasting progress. Resilience takes time to build, many years more than the funding cycles of grants. Freeze-framing the Outcome Star at a pertinent moment is a necessary innovation when those setting the rules, as Little et al. (2015) suggest, are furthest removed from the everyday lives of those in most need of help. Participants are aware of this. They ‘play to’ different audiences.

‘Making it Count’ was a valuable knowledge exchange. It revealed the tensions and innovations of those attempting to report the impact of community organisations. It shone light onto the power plays evolving as the marketization of welfare gathers pace, within a cultural discourse which stigmatises the poor. It demonstrated the commitment and expertise of grassroots workers, and it collected knowledge to counter the current ‘top-down’ approach to policy making. It also put into words much of the struggle I have faced as an academic and activist: how difficult it is to navigate the ethics of researching people who have been greatly marginalised; to be an ‘inbetweener’. The layers of power, the complexity of assumptions and the tensions inherent in working within these systems demonstrate that, so very often, those setting success criteria really do not know what counts or how best to count it.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.