Abstract

It is common practice in survey questionnaires to include a general open and non-directive feedback question at the end, but the analysis of this type of data is rarely discussed in the methodological literature. While these open-ended comments can be useful, most researchers fail to report on this issue. The aim of this article is to illustrate and reflect upon the benefits and challenges of analyzing responses to open-ended feedback questions. The article describes the experiences of coding and analyzing data generated through a feedback question at the end of an international online survey with small-scale cannabis cultivators carried out by the Global Cannabis Cultivation Research Consortium. After describing the design and dataset of the web survey, the analytical approach and coding frame are presented. The analytical strategies chosen in this study illustrate the diversity and complexity of feedback comments which pose methodological challenges to researchers wishing to use them for data analyses. In this article, three types of feedback comments (political/policy comments, general comments of positive and negative appreciation, and methodological comments) are used to illustrate the difficulties and advantages of analyzing this type of data. The advantages of analyzing feedback comments are well known, but they seem to be rarely exploited. General feedback questions at the end of surveys are typically non-directive. If researchers want to use these data for research and analyses, they need a clear strategy. They ought to give enough thought to why they are including this type of question, and develop an analytical strategy at the design stage of the study.

Introduction

It is common for surveys to include open-ended questions, where respondents are asked to give their opinions on a subject in free-form text (O’Cathain and Thomas, 2004). Typically, respondents are asked non-directive questions such as “Any other comments?” or “Is there anything else you would like to say (or add)?” with a “free text” answer box for responses (Smyth et al., 2009).

In the methodological literature, open-ended comments from surveys have been described as a blessing and a curse for researchers (Dillman, 2007; Miller and Dumford, 2014; O’Cathain and Thomas, 2004). General open questions at the end of a survey may act as a safety net, helping researchers to identify issues not covered by closed questions even if the questionnaire was developed with considerable amounts of background research and piloting (Biemer et al., 2011). Respondents may want to give more details about issues than is typically allowed by closed questions. Piloting may have failed to uncover issues that affect a small number of people only, or that have emerged since the design of the questionnaire. Responses may be used to elaborate or corroborate answers to closed questions, offering reassurance to the researcher that the questionnaire is valid, or highlighting problems with particular questions (Biemer and Lyberg, 2003). Furthermore, the habitual “any other comments” question at the end of structured questionnaires has the potential to increase response rates (McColl et al., 2001).

Finally, the use of “any other comments” may help to redress the power balance between researchers and participants (O’Cathain and Thomas, 2004). Closed questions represent the researcher’s agenda, even if they have been developed through consultation with representatives of the study’s population and piloting. General open questions offer respondents an opportunity to voice their reactions and opinions, to ask for clarification about an issue, or to raise concerns about the research or about a particular policy.

Despite the insights that these types of questions may provide, the lack of clarity around the status of the responses may constitute a challenge for analysis. Researchers may consider responses to general open questions as quantitative data (Bankauskaite and Saarelma, 2003; Steckler et al., 1992), as largely text-based qualitative responses (Boulton et al., 1996), or as “quasi-qualitative data” (Murphy et al., 1998). General open questions have qualitative features, in that they allow respondents to write whatever they want in their own words with little structure imposed by the researcher (except for any limitations on the space provided for comments). However, a researcher attempting to qualitatively analyze the data from general open questions may struggle with a lack of context, and a lack of conceptual richness typical of qualitative data, because individual responses often consist of a few words or sentences (especially when the size of answer boxes is limited; Christian et al., 2007; Smyth et al., 2009). Furthermore, the closed questions that precede the open-ended question may impose constraints on the comments provided, as the closed questions may implicitly suggest and constrain what is considered the legitimate agenda for the qualitative responses (O’Cathain and Thomas, 2004), or may have created fatigue in respondents, thereby limiting their open-ended responses (Biemer and Lyberg, 2003). Such uncertainties are exacerbated when the status of a general open feedback question remains unclear at the design stage of a study.

When questionnaires are being prepared for analysis, the researcher faces the dilemma of whether to analyze and report the written responses to the final open question. Data input and analysis require considerable resources. One could argue that researchers should not ask open questions unless they are prepared to analyze the responses, as ignoring these data may be considered unethical. However, practical constraints (a lack of time or expertise to analyze this type of data) often contribute to the decision not to analyze these responses (O’Cathain and Thomas, 2004). This is particularly problematic when a general feedback question is designed to redress the power balance between researchers and participants by offering respondents an opportunity to voice opinions or concerns about the survey or about a particular issue.

In this article we draw on our own experiences with analyzing a general “any other comments” open question at the end of a structured questionnaire. The article utilizes data collected through a web survey carried out by the Global Cannabis Cultivation Research Consortium (GCCRC) in 13 countries (Australia, Austria, Belgium, Canada, Denmark, Finland, Germany, Israel, the Netherlands, New Zealand, Switzerland, the United States, and the United Kingdom) and seven languages (Danish, Dutch, English, Finnish, French, German, and Hebrew). The survey targeted small-scale domestic cannabis cultivators and mainly asked about experiences with and methods of growing cannabis (Barratt et al., 2012, 2015).

The final question in this survey (“Do you have any other comments or feedback about this project?”) yielded a significant number of (often lengthy) answers. As a research team, we were faced with the dilemmas described above. In this article, we describe and reflect upon our analytical approach and the benefits and challenges of analyzing feedback comments. We summarize the main topics arising from the feedback comments and present some of the lessons learned in terms of developing a strategic method for analyzing data from general open questions at the end of structured questionnaires. From a methodological perspective, the inclusion of a feedback question in a survey is not particularly innovative, but few researchers report on the analysis of feedback comments, and methodological papers focusing on this issue are relatively scarce. We hope our experiences and reflections may help other researchers to optimize the quality and utility of this type of data.

The Global Cannabis Cultivation Research Consortium web survey

General design

The methodology of the GCCRC web survey has been described in detail elsewhere (Barratt et al., 2012, 2015), so a brief overview will suffice here. Following successful online surveys into cannabis cultivation in Belgium (Decorte, 2010), Denmark, and Finland (Hakkarainen et al., 2011), the GCCRC developed a standardized online survey to allow for the collection of comparative data across participating countries: the International Cannabis Cultivation Questionnaire (ICCQ; Decorte et al., 2012; Decorte and Potter, 2015). The core ICCQ includes 35 questions across seven modules: experiences with growing cannabis; methods and scale of growing operations; reasons for growing; personal use of cannabis and other drugs; participation in cannabis and other drug markets; contact with the criminal justice system; involvement in other (non-drug related) illegal activities; and demographic characteristics. Some participating countries added additional items to address other research interests, for example, growing cannabis for medicinal purposes or career transitions and grower networks (see e.g. Hakkarainen et al., 2015; Lenton et al., 2015; Nguyen et al., 2015; Paoli et al., 2015). The ICCQ also includes items to test eligibility and recruitment source.

Recruitment: a mix of promotion strategies

The most important recruitment method was engagement with cannabis user or cannabis cultivation groups, usually through their websites and online forums. Facebook, news articles, and referral from friends were the other main sources of recruitment. The mix of promotion strategies varied from country to country (see Barratt et al., 2015 for details).

We have previously acknowledged the limitations of the Internet-based research methods used (Barratt and Lenton, 2015). However, online surveys have many advantages compared with face-to-face, postal, or telephone research, particularly when researching “hidden populations” such as drug users and drug dealers (Coomber, 2011; Kalogeraki, 2012; Miller and Sønderlund, 2010; Potter and Chatwin, 2011; Temple and Brown, 2011).

The GCCRC survey: a participatory approach

Populations involved in criminal activities can have good reasons to be secretive and suspicious of researchers. Criminologists and other social scientists researching active offenders usually acknowledge this problem and the concerns of their participant group. Building on previous studies on cannabis cultivation using online surveys (Decorte, 2010; Hakkarainen et al., 2011), we approached cannabis growers to inform the study, pilot test the questionnaire, and build legitimacy around the survey. “Participatory online research” methods (Barratt and Lenton, 2015; see also Potter and Chatwin, 2011; Temple and Brown, 2011) were used through online engagement and dialogue with cannabis users and growers as a part of the research process (for more details, see Barratt et al., 2012, 2015).

The online chats and discussions between the research team and the potential participants in different countries were fruitful piloting exercises that improved the questionnaire (and specific items). They also allowed the team to demonstrate its willingness to listen to and act on feedback from participant groups, and to show participants that their time and efforts in improving the survey were valued. This led to the inclusion of certain items in the questionnaire as well as the final open-ended question.

The process of participatory engagement is difficult to achieve when working with populations that must identify with a stigmatized activity in order to participate. Our conversations with cannabis growers revealed concerns about anonymity, the “real” goals of our study, and whether the study would be used to undermine cannabis cultivation and law reform. Many potential participants shared the view that they could not see a good reason to complete the survey as it could simply “fill in unknown gaps for authorities” and there was a tendency for cannabis growers to assume that we were not “on their side” or were even “against them.” In some cases, these assumptions were backed up by reference to previous negative experiences with researchers and journalists.

These piloting experiences indicated that just sending the usual “we want you to tell us your story” message would not be convincing enough for many potential participants. Instead, somewhat ironically, we would need to overcome their negative preconceptions of us by being clear that we did not have negative preconceptions of them: that we did not assume cannabis use is “bad,” or that all cannabis growers are “criminal.” As a strategy to increase recruitment and to show participants the potential benefits of engaging with research, we noted that the study provided an opportunity to challenge common stereotypes of growers. This was conveyed to prospective participants by including the following statement¹ in the ICCQ: “The general community typically has a very unrealistic view about people who grow cannabis. We want you to help set the record straight by completing this questionnaire.” It is likely that this helped make the study attractive, especially among growers with explicit views on cannabis policy. However, it could be argued that this particular style of encouraging potentially suspicious participants may have also influenced responses. It may, for example, have caused some participants to understate responses seen to fit with the “unrealistic views” of the general public, such as their criminal history, use of other drugs, or profits from selling cannabis. Clearly, there are tradeoffs between encouraging participation and influencing responses, especially when researching hidden populations or sensitive topics. How best to balance these concerns will vary from project to project and is something that researchers using similar approaches should think about carefully.

General sample characteristics

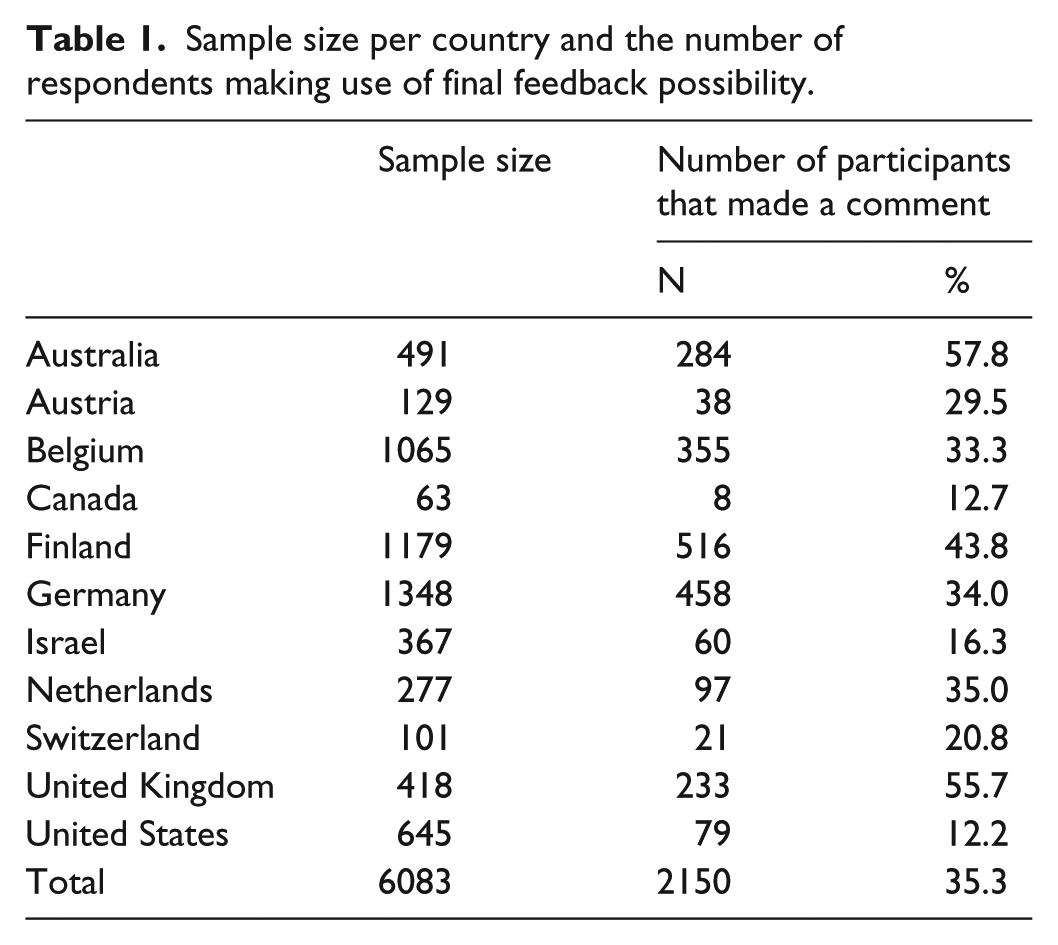

The findings presented here are based on data acquired from 11 of the 13 countries covered by the ICCQ surveys.² Not all respondents were included in the data presented here. Three rules were used to determine eligible respondents for analysis in this article (described by Potter et al., 2015), which left us with a final sample of 6083 cases. A total of 2150 of these participants (35.3% of the total sample) left a feedback comment at the end of the survey (see Table 1 for a breakdown of the final sample).

Table 1. Sample size per country and the number of respondents making use of the final feedback possibility.

Full discussion of the sample characteristics and the general findings of the survey can be found in Potter et al. (2015) and Wilkins et al. (2018). Given the focus of our study (domestic small-scale cannabis cultivation) and the method (an online survey), we expected (and acknowledged) a bias toward smaller scale growers who are less involved in drug markets and other types of crime. Those with greater criminal involvement would probably be less likely to respond to our survey as they are likely to have greater concerns about possible criminal justice repercussions resulting from reporting their activities (Potter et al., 2015).

Overall, there was a great deal of similarity across countries in terms of findings. A clear majority of small-scale cannabis cultivators are primarily motivated by reasons other than making money from cannabis supply and have minimal involvement in drug dealing or other criminal activities. These growers generally come from “normal” rather than “deviant” backgrounds. Some differences do exist between the different national samples suggesting that local factors (political, geographical, cultural, etc.) may have some influence on how small-scale cultivators operate, although differences in recruitment strategies may also account for some differences observed.

A general open and non-directive feedback question at the end

At the end of the questionnaire, we included our general open question “Do you have any other comments or feedback about this project?” The GCCRC research team did not have an a priori strategy to analyze the feedback comments at the design stage of the study. The general open question was clearly non-directive. We placed no restrictions on the length of answers, allowing respondents as much space as they wanted. Other than this, we made no particular efforts to encourage participants to answer the feedback question.

It has been noted that data generated from general open feedback questions may contribute very little extra data over and above what is gathered in the closed questions of the questionnaire (Thomas et al., 1996). To explore what contribution the feedback comments could make to our study overall, we performed a preliminary analysis that involved reading a sample of responses. We decided formal analysis of the feedback responses was desirable for three reasons: (1) the comments offered valuable additional insights related to the closed questions in the ICCQ; (2) a large number of participants took the time to write comments; and (3) many of the comments expressed strong emotions and personal views.

In the next section, we describe our analytical approach combining an open coding strategy and (quantitative and qualitative) content analysis. We present the coding frame we constructed, and discuss the challenge of translation. In section “Making sense of general feedback comments”, we illustrate some benefits and challenges of analyzing responses to open-ended feedback questions using some quantitative results and qualitative extracts from our study.

The analytical approach of the GCCRC: translation, analysis, and coding

The challenge of translation

Eleven national datasets were created. Different research teams used different survey software packages (LimeSurvey, Qualtrics, SurveyXact, SurveyGizmo, Survey Monkey, Webropol). Datasets from Anglophone countries were collected in English, while other surveys were translated into local languages (Danish, Dutch, Finnish, French, German, and Hebrew) by the research teams. The use of different survey packages and different languages necessitated a complex procedure to accurately stitch the master dataset together. When the aim is to create comparable datasets, one must also be sensitive to different cultural responses to survey procedures and translated items (Harkness, 2008; Mangen, 1999; Straus, 2009). We have described the data preparation procedures (coding, recoding, and data cleaning) we implemented and the issues we encountered elsewhere (Barratt et al., 2015).

A challenge in the data preparation was the translation of text-based responses back into English (the only language common to the whole research team) before merging the datasets. This was not only a problem for the nine questions in the ICCQ that offered a text response option for the “other” field. The translation of the responses to the general feedback question proved particularly challenging, as 2150 participants formulated comments totaling 128,214 words. The size of these comments ranged from one- or two-word responses to extensive comments (up to 789 words long) on a range of topics. Translation of the responses proved to be time-consuming.
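As a rough check on the scale of this material, the figures reported above can be combined in a few lines of Python. This is only an illustrative sketch: the variable names are invented for the example, and the mean comment length is derived here from the reported totals rather than taken from the survey dataset itself.

```python
# Figures reported in the article.
total_sample = 6083      # eligible respondents in the final sample
commenters = 2150        # respondents who left a feedback comment
total_words = 128_214    # total words across all feedback comments

# Share of the sample that commented, and mean comment length (derived values).
share_commenting = commenters / total_sample
mean_words_per_comment = total_words / commenters

print(f"Share commenting: {share_commenting:.1%}")
print(f"Mean words per comment: {mean_words_per_comment:.1f}")
```

A mean of this kind hides the wide spread noted above (from one or two words up to 789), so a median or a distribution of comment lengths would be more informative where the raw data are available.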

To overcome the problem of “conceptual equivalence” (Tsai et al., 2004) and to reduce the risk of misinterpretation and loss of a respondent’s intended meaning, translation of the feedback comments from the original language to English was done by the local research teams. These local teams were familiar with the local language or dialect(s) and could convey conceptual equivalence, and they were familiar with the intricacies of the national cannabis policy and the sociocultural characteristics of the participants in their own countries. The Anglophone researchers checked the quality of a subsample of translated transcripts, and if they suggested grammatical or linguistic corrections, these were again checked by the local research teams to ensure local meanings were captured as far as possible.

Recognizing patterns and being able to interpret the data accurately was a challenging part of the process and involved some discussion about the meaning of informant accounts. Our study involved researchers with (1) different methodological perspectives and disciplinary interests, (2) detailed understanding of the study context including the cultural characteristics of participants and the national and international cannabis policies in place, and (3) the ability to accurately convey meaning of data collected in a local language. We tried to make the process of seeing patterns in these qualitative data and drawing out meaning more thorough by involving the entire research team, taking advantage of their differing disciplinary, linguistic and cultural perspectives.

General analytical approach

We quickly realized the advantage of having a coding system in English; any refinement of the thematic framework could easily be discussed by the whole team, and this facilitated joint decision-making between bilingual and English-speaking researchers on the categorization of coded data. The thematic framework and codes were modified and added to as other important issues and viewpoints emerged. The result was a single database containing all coded feedback comments that could be viewed and accessed by the whole team.

The procedure we used to analyze the feedback comments can best be described as a cross between an inductive coding strategy and content analysis. Initially, using data from one country (Belgium), three coders independently coded the comments, taking each respondent’s comment as the unit of analysis and allowing multiple codes for each text unit. These codes were then organized into a coding frame, which was used in a quantitative content analysis (Silverman, 2006), permitting intercoder reliability to be calculated (see Berelson, 1952).

The initial coding frame which emerged from the inductive coding exercise by the Belgian team was then distributed to the research partners from other countries and an iterative process of coding, reliability assessment, and codebook modification was initiated. When the revised codebook was finalized, the entire set of responses for the complete sample was coded accordingly.

Reliability tests based on a subsample of 20% of cases confirmed a high level of consistency between coders, with Cronbach’s alphas ranging from 0.83 to 0.95. Finally, the codes were entered into the general dataset alongside the data from the closed questions.
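To make this kind of reliability check concrete, the following minimal sketch computes Cronbach’s alpha for a single binary code, treating each coder as an “item” and each comment as a case. The ratings are invented for illustration, the function name `cronbach_alpha` is hypothetical, and treating coders as items is an assumption: the text above does not specify how the reported alphas were computed.

```python
from statistics import variance

def cronbach_alpha(ratings):
    """Cronbach's alpha for a cases-by-raters table of numeric ratings.

    ratings: list of rows, one per comment; each row holds one value per coder.
    """
    k = len(ratings[0])                        # number of coders ("items")
    columns = list(zip(*ratings))              # one tuple of ratings per coder
    item_variances = sum(variance(col) for col in columns)
    total_variance = variance([sum(row) for row in ratings])
    return (k / (k - 1)) * (1 - item_variances / total_variance)

# Hypothetical data: did each of three coders apply the code "policy" (1) or not (0)?
ratings = [
    [1, 1, 1],
    [0, 0, 0],
    [1, 1, 0],
    [0, 0, 0],
    [1, 1, 1],
    [0, 0, 0],
    [1, 1, 1],
    [0, 1, 0],
]

alpha = cronbach_alpha(ratings)
print(f"alpha = {alpha:.2f}")
```

With multiple codes allowed per comment, one plausible design is to compute the coefficient separately for each code, which would yield a range of values across codes.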

Once the data file was prepared, descriptive statistics were used to calculate the number of respondents per country that made use of the feedback option; to compare those who gave feedback with those who did not; and to calculate the frequency distribution of all codes and categories (for the total sample and by country). The analysis presented in the following sections serves as an example of a very simple content analysis aimed at revealing the main themes in our respondents’ feedback comments and involving simple tabulations of instances of particular categories. We also use some quotes and extracts to illustrate the themes.

The coding frame

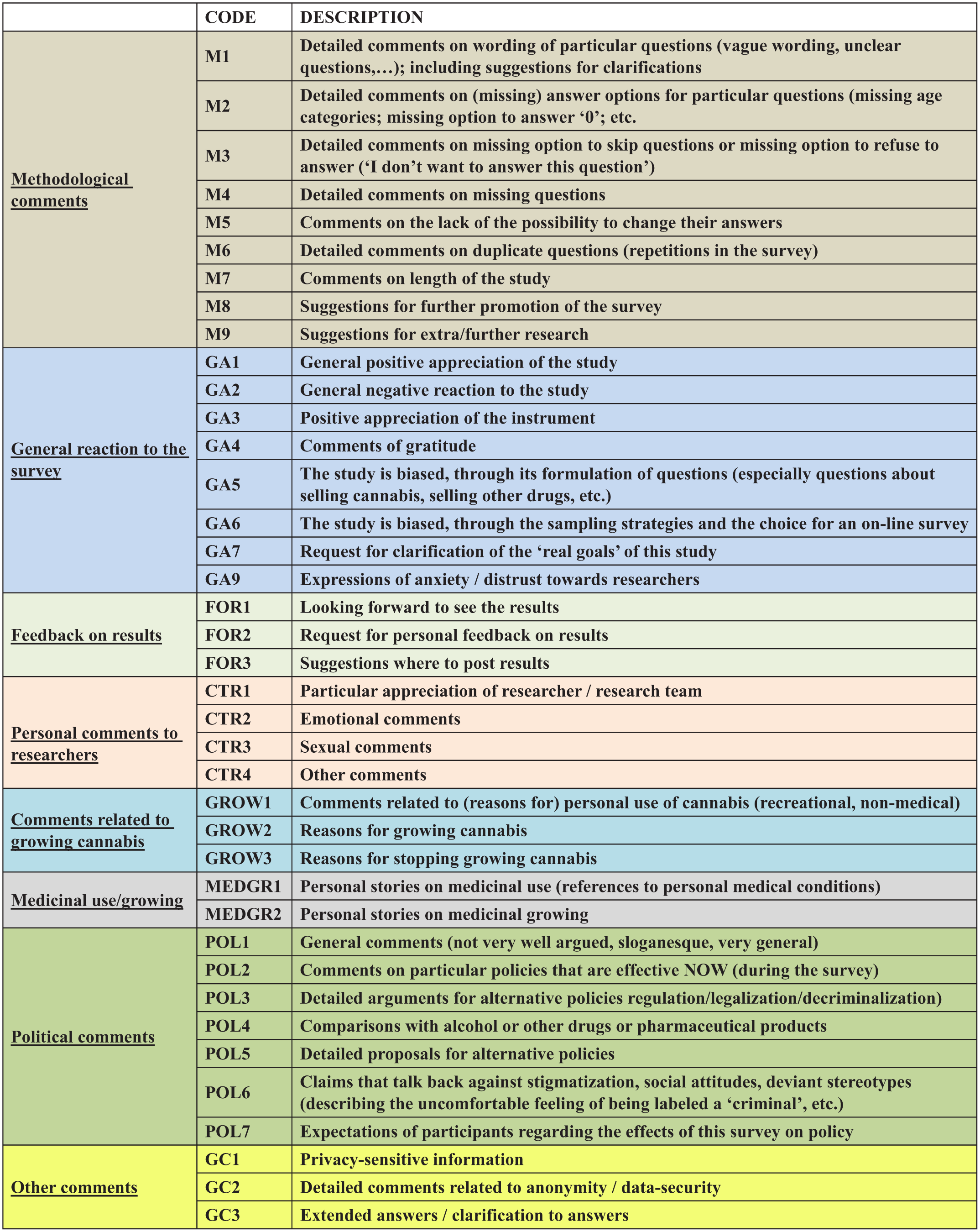

The analytical process described above led to a coding frame of eight categories of comments (see Figure 1):

Comments related to the methodology and survey design.

Comments that expressed general (positive and negative) reactions to the study.

Comments related to the availability of study results.

Personal comments addressed to individual researchers, including expressions of appreciation for the work of a particular researcher, sexual/sexist remarks, and emotional comments.

Comments related to respondents’ cannabis cultivation and cannabis use, including reasons for stopping growing cannabis (which most of the participating countries had not asked directly in the ICCQ).

Comments related to personal experiences of cannabis cultivation and use for medicinal purposes.

Comments related to cannabis policy or drug policy in general.

The final category included comments that could not be assigned to any of the other categories. This included privacy-sensitive information (such as names, personal e-mail addresses, or phone numbers which we had to delete from the database), detailed comments related to data security and anonymity (e.g. “I am using TOR”), and extended clarification of answers to closed ICCQ questions.

Figure 1. Final codebook.

Making sense of general feedback comments

Response and nonresponse to the open feedback question

Overall, 35.3% of the sample analyzed here left a feedback comment (see Table 1). There were notable differences between countries: in Australia and the United Kingdom, over 50% of the participants left a comment; in the United States, Canada, and Israel, the proportion was below 20%.

These differences in response rates may be attributable to factors similar to those that may have influenced the sample sizes recruited in each country. They may reflect differences in the mix of recruitment methods (see earlier) and efforts expended by different teams, as well as differing degrees to which teams established strong relationships between researchers and their respective cannabis cultivation communities. The differences may also reflect differing levels of distrust regarding cannabis issues (and levels of acceptance for cannabis) or research more generally (Barratt et al., 2015).

Differences in response rate to the feedback question may also be attributed to survey fatigue in participants, as some research teams added extra questions or modules to the ICCQ (see earlier). Another factor that may have affected these responses is the completion mode: completion via mobile devices (smartphones and tablets) may have required more time or effort from participants, compared with completion via laptops and desktop computers. Unfortunately, we were not able to test this proposition without access to comparative data on completion mode in every country.

Another explanation for the relatively high rate of feedback comments in Australia and the United Kingdom may have been the inclusion of an extra question on the growers’ attitude toward cannabis policy (see below). Although we were unable to test this proposition, it is possible that this question was seen by participants as signaling a willingness by the research team to hear their views on policy.

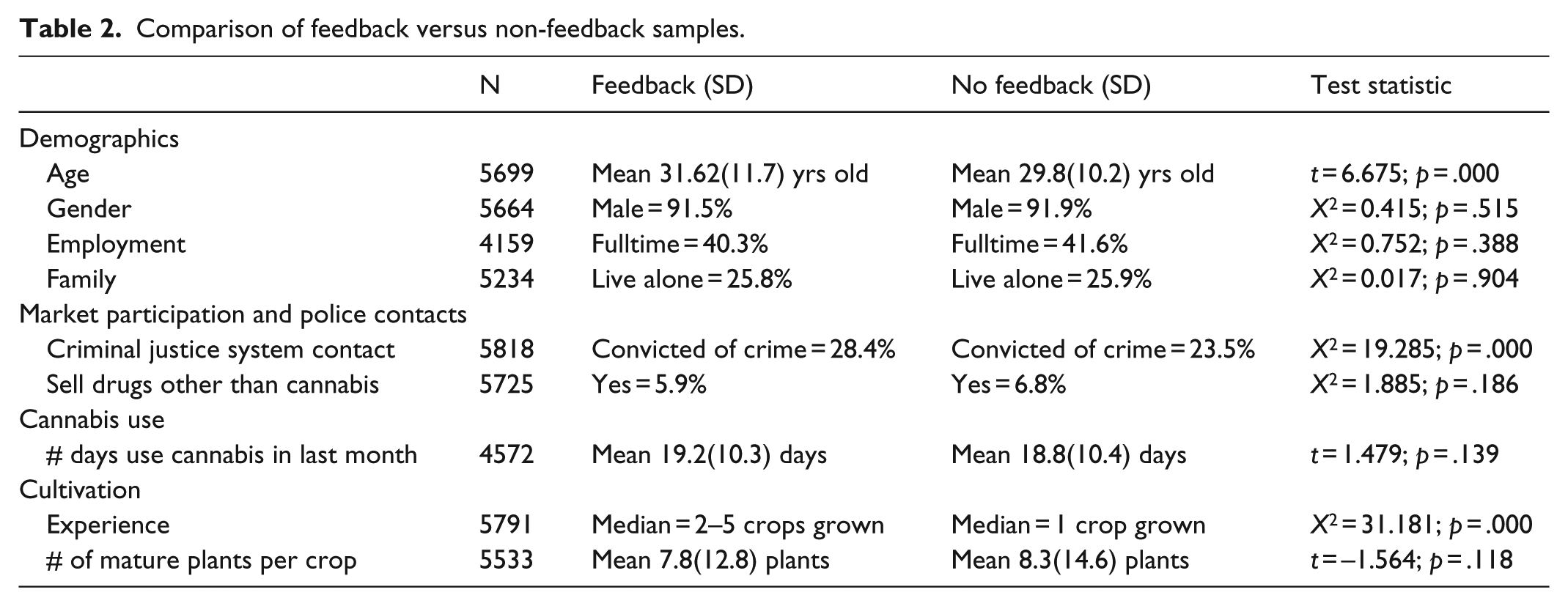

Table 2 shows a comparison of respondents who gave feedback with those who did not. The feedback group was significantly older, more likely to have been convicted of a crime, and more likely to be experienced growers. We conducted a binary logistic regression to analyze the relative impact of each of these variables, alongside country of residence. In the multivariate analysis, only age and country of residence remained significant predictors. While beyond the scope of this article, future research should explore potential predictors for commenting using a series of nested models.

Table 2. Comparison of feedback versus non-feedback samples.
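As an illustration of the kind of model mentioned above, the sketch below fits a one-predictor logistic regression (leaving a comment, regressed on age) by gradient ascent in plain Python. The data are invented, the helper `fit_logistic` is hypothetical, and the model is deliberately simpler than the multivariate analysis reported here, which also included conviction history, growing experience, and country of residence.

```python
import math

# Hypothetical data: respondent age and whether a feedback comment was left (1) or not (0).
ages = [19, 22, 25, 28, 31, 35, 40, 45, 52, 60]
commented = [0, 0, 0, 1, 0, 1, 0, 1, 1, 1]

def fit_logistic(xs, ys, lr=0.1, steps=5000):
    """Fit P(y=1) = sigmoid(b0 + b1*x) by batch gradient ascent on the log-likelihood."""
    b0, b1 = 0.0, 0.0
    n = len(xs)
    for _ in range(steps):
        g0 = g1 = 0.0
        for x, y in zip(xs, ys):
            p = 1.0 / (1.0 + math.exp(-(b0 + b1 * x)))
            g0 += y - p            # gradient with respect to the intercept
            g1 += (y - p) * x      # gradient with respect to the slope
        b0 += lr * g0 / n
        b1 += lr * g1 / n
    return b0, b1

# Center age and express it in decades so the slope is an effect per 10 years.
mean_age = sum(ages) / len(ages)
x_decades = [(a - mean_age) / 10 for a in ages]

b0, b1 = fit_logistic(x_decades, commented)
odds_ratio_per_decade = math.exp(b1)
print(f"Odds ratio per 10 years of age: {odds_ratio_per_decade:.2f}")
```

In practice a statistics package would be used, since it also reports standard errors and significance tests; the hand-written fit is shown only to keep the example self-contained.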

Categories of feedback

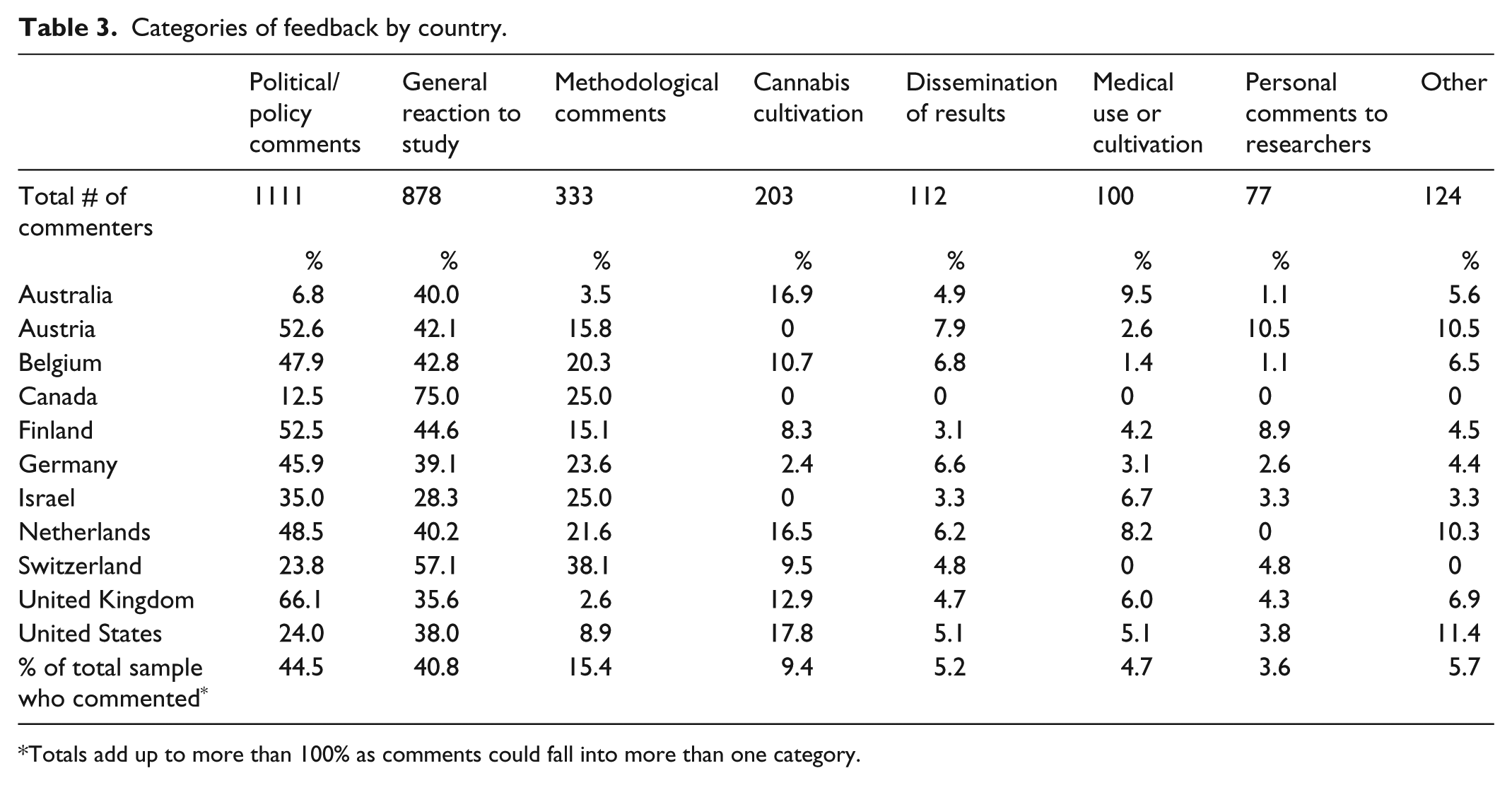

In Table 3, we present a quantitative overview of the eight categories of feedback comments per country. Despite some variation between different countries, three categories of comments are far more prevalent than the other categories: comments related to (cannabis) policy (44.5% of commenters), general appreciation of the study (40.8%), and methodological comments (15.4%).

Table 3. Categories of feedback by country.

Totals add up to more than 100% as comments could fall into more than one category.
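The reason the percentages exceed 100% in total can be seen in a small tabulation sketch. The coded comments below are invented and the category labels are shorthand chosen for the example; the point is only that each comment may carry several codes, so each comment can be counted under more than one category.

```python
from collections import Counter

# Hypothetical coded comments: each comment is a set of one or more category codes.
coded_comments = [
    {"policy", "appreciation"},
    {"policy"},
    {"methodology"},
    {"appreciation"},
    {"policy", "methodology"},
]

# Count how many comments carry each code, then express counts as a share of commenters.
counts = Counter(code for codes in coded_comments for code in codes)
n_commenters = len(coded_comments)
percentages = {code: 100 * count / n_commenters for code, count in counts.items()}
total_percentage = sum(percentages.values())

for code, pct in sorted(percentages.items()):
    print(f"{code}: {pct:.1f}%")
print(f"Sum of percentages: {total_percentage:.1f}%")
```

Because the denominator is the number of commenters rather than the number of codes applied, each percentage reads as “share of commenters who raised this topic,” which is how the figures in this section are reported.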

Comments falling into each of the other categories were given by less than 10% of those participants who commented (less than 3% of the total sample). In this article, we focus on the three most common types of comments made and use these to illustrate the benefits and challenges of analyzing and using feedback comments.

Political and policy-oriented comments

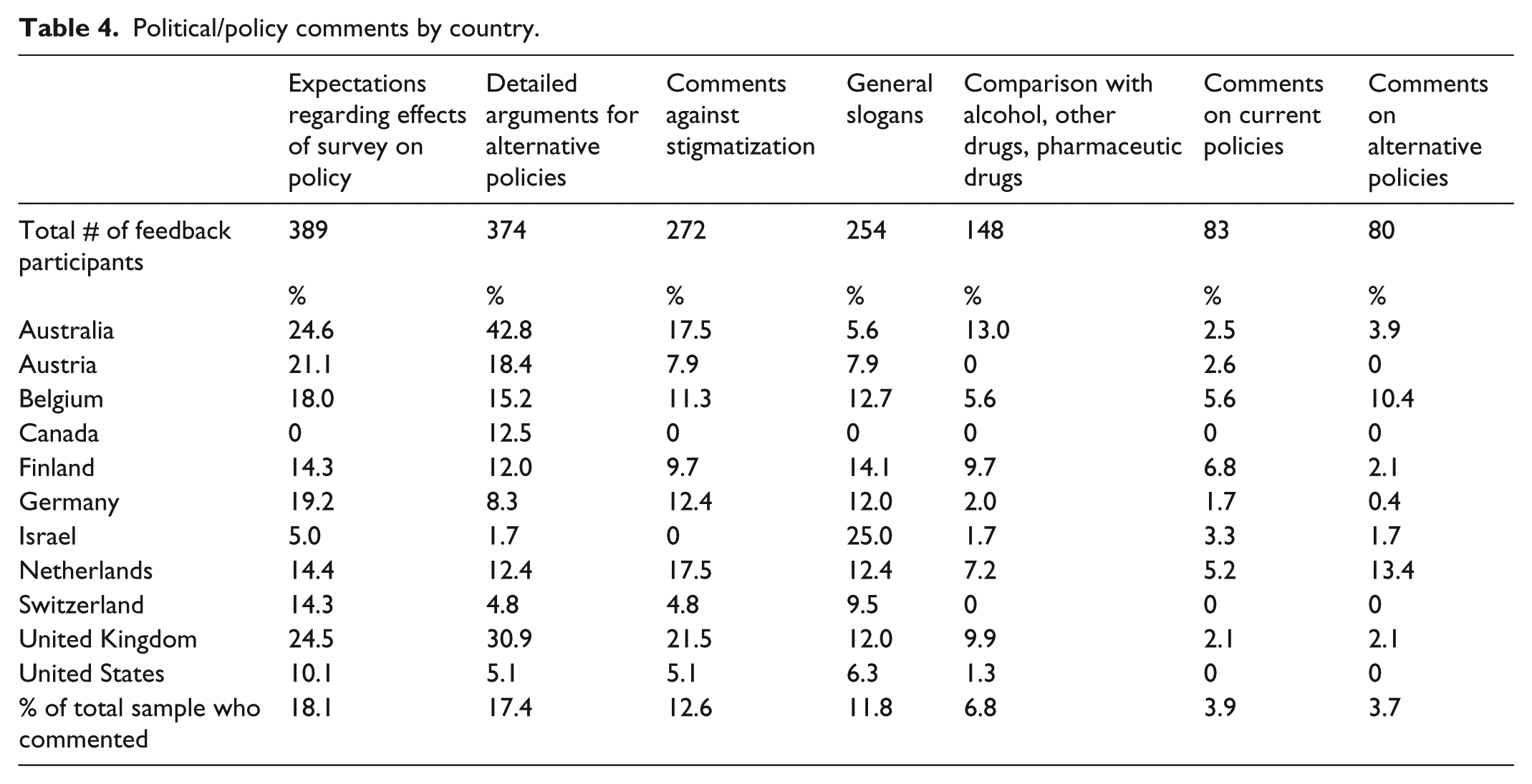

Inviting and analyzing feedback comments gives participants an opportunity to voice their concerns and opinions about a particular policy. Analysis of the feedback comments to the survey revealed that almost half of the participants who gave feedback (44.5% of the commenters) made some comment related to cannabis policy. In Table 4, we present these comments in more detail.

Table 4. Political/policy comments by country.

Many participants (n = 254; or 11.8% of those who commented) wrote very short comments (often slogans with exclamation marks) such as “Legalize!”, “Free the weed!”, or “Overgrow the government!”, which provided limited information beyond a general view on cannabis policy. But many other feedback comments did offer deeper insights into participants’ support of non-prohibitionist cannabis policies, why they preferred particular policy options, and what they did or (more often) did not like about current cannabis policy.

About one-sixth (17.4%; n = 374) of the participants who gave feedback comments wrote detailed arguments in favor of alternative cannabis policies, citing benefits such as better quality control and safer cannabis, weakening the black market and eliminating criminal organizations, creating legal economies and jobs, and so on.

Respondents also formulated (mostly critical) comments on specific ongoing policies (n = 83; 3.9% of those responding to the feedback question). These comments often related to the national drug policy or legislation in their country, or to particular initiatives by local policymakers. Another group of participants provided (more or less detailed) proposals for alternative cannabis regulations (n = 80; 3.7%), for example suggesting how many plants an individual should be allowed to grow or describing (their) “ideal” cannabis production facilities and distribution points.

Other participants (n = 148; 6.8%) made explicit statements about the harms associated with the use of alcohol, other illegal drugs, or pharmaceutical drugs in comparison with the (lesser) harmfulness of cannabis. An Australian participant wrote: “An adult should have the right to choose his own substance or medication, especially one that is less dangerous than others which are legal at the moment.” A Belgian respondent stated: “Cannabis has a stigma and because of this its users and cultivators are stigmatized as well. And yet alcohol (abuse) is much more harmful!”

A recurring theme here is “stigma” and terms hinting at “(de)stigmatization.” A total of 272 participants (12.6% of respondents answering the open feedback question) referred explicitly to the stereotypical depiction of cannabis users as a public danger or threat, as criminals or marginal people, and the depiction of cannabis growers as drug producers or dealers. These respondents attempted to resist stigmatization by stressing what they were not. For instance, they described themselves through adjectives such as (very) ordinary, honest, responsible, educated, well-integrated, reliable, non-violent, and not stupid. They pointed to the “normal” positions and roles they assume in society (e.g. as father, family person, employee, tax-payer, etc.) and the ordinary activities they undertake (taking care of the kids, attending school or university, meeting up with friends, etc.). They described their personal pattern of cannabis consumption in terms of moderate and non-problematic use, and used “we” to demonstrate affiliation with a larger group or community: “We are no criminals” and “There’s nothing wrong or abnormal with what we do.”

Our analysis revealed that many respondents (n = 389; 18.1%) had explicit hopes regarding the effects of the study on policy, which are probably connected to their motives for participating in the survey. Many respondents expressed the hope that “[…] questionnaires like this will help the promotion of a more humane drug policy […],” or lead to a more tolerant, transparent, or liberal cannabis policy. Many also expressed the hope that “[…] this research will finally destigmatize cultivation for personal use!” and that “[…] this study will help destroy the stereotypical image of cannabis users, or at least nuance it.” Another participant wrote: “I hope my voice will be heard.”

Reaction to the study

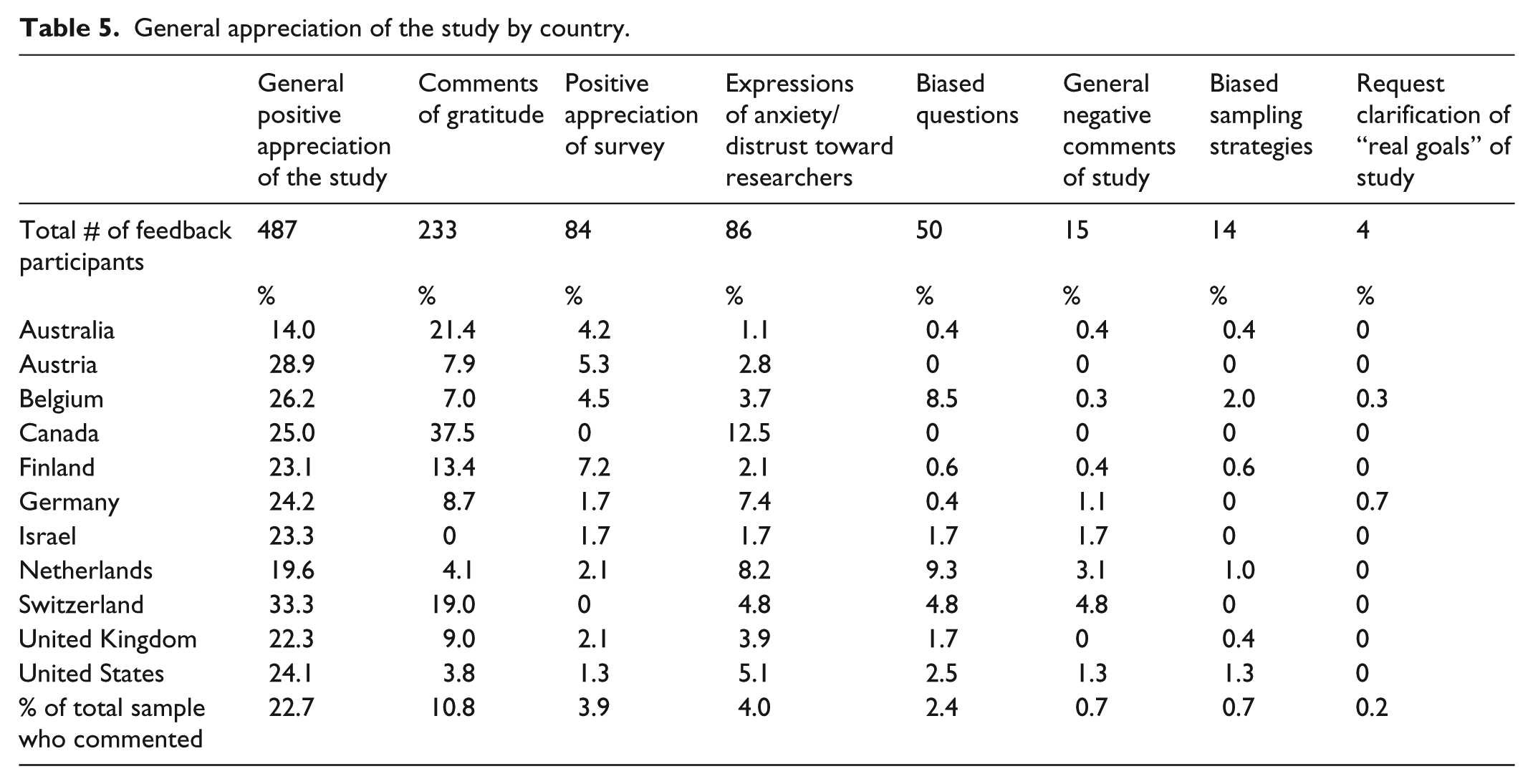

Methodological textbooks often suggest ending a questionnaire by inviting respondents to “ventilate” their feelings about the study and the questionnaire. In Table 5 we present in more detail the second category of feedback comments expressing a general reaction to the project. Almost one-quarter of the participants who made use of the feedback option (22.7%; n = 487) expressed their positive appreciation of the study in general terms. They called the survey a nice, fantastic, amazing, great initiative, or decent, interesting and relevant research. Participants enjoyed participating and congratulated the researchers for an excellent job. Another 233 participants (10.8% of those who commented) expressed feelings of gratitude: Thank you very much for taking the first step, or Thank you for showing interest in cannabis users.

General appreciation of the study by country.

Another 84 participants (3.9%) expressed appreciation for the research instrument (the ICCQ). They described the questionnaire as fairly complete, well-composed, very targeted and obviously well-prepared. One participant states: You clearly did your research when writing this survey. Great job!

The positive appreciation of the study and the research instrument, and even the expressions of gratitude, clearly showed how the study was perceived by participants, which should be an important topic of concern for researchers. The fact that many participants were happy that the researchers showed interest in them and that their voices were heard may be linked to the general “participatory” approach chosen in our study (as described above).

Negative comments: residual distrust and irritating questions

A total of 169 participants (7.8% of those who commented) provided some negative, critical, or skeptical comment (see Table 5). Analysis of the feedback comments in our survey showed that very few participants who commented had a generally negative view of the survey (n = 15; 0.7% of those who gave feedback). Some of these respondents raised concerns about the reliability of the study (I’ve filled in this research in all honesty, but the odds of this being the case for all participants are zero). Others raised (relevant) questions on the representativeness of the sample recruited: I fear that the respondents may not be representative for our population. I think that people that cultivate for personal/medical use, will be more likely to participate in this research (because of ideological reasons) than larger growers with criminal/financial motives […]. Another participant states: […] this will definitely lead to a distorted view, the REAL big boys will never fill in these types of surveys. Another participant commented that there are more reliable ways of getting information on the topic of cannabis cultivation, such as interviews and face-to-face contacts. Although the number of participants expressing a general negative view of the study is low (n = 15), we should recognize that such views might be shared by more respondents who did not choose to mention it.

Although we previously argued that our participatory approach likely helped to gain access and develop trust, some feedback comments show there is still a degree of residual distrust, even among participants who chose to participate in the study. In all, 4% of the participants who commented (n = 86) expressed some degree of anxiety or concern about the anonymity of the survey, for example, I hope this is in fact anonymous! or I hope you’re not the feds! Some expressed another fear: I sure hope this survey won’t lead to a stricter and stronger policy against cannabis use and cultivation. Four participants asked for clarification of the “real” goals of the study.

Analysis of the feedback comments showed that 50 participants (2.4% of those who commented) were upset by certain questions, particularly those related to selling cannabis, financial profits from cannabis cultivation and “collaborators” in growing activities. For example, some respondents were distrustful of questions about their grower networks. Although these questions asked respondents to identify the number of other growers they collaborate with, using pseudonyms, they were experienced as very intrusive: Don’t ask any names. Or, I find the previous page rather suspicious. The additional module on career transitions and grower networks (added to the core questionnaire by the teams in the United States, Canada and Belgium) triggered the following comment from a participant: […] At the end I wanted to reply to the 14 extra questions but I backed out because I had the impression that you were talking about my “collaborators” in terms of “criminal associations” (and profit as well, which does not apply to me at all). (Belgium)

Some participants indicated that the questions about income from cannabis growing and collaboration with others did not apply to their personal situation and therefore were irrelevant. They experienced these questions as misplaced, disturbing, or shocking; some accused the research team of being biased. In their view, these questions seemed to suggest that all cannabis growers are profit-oriented, selling cannabis (or other drugs), and that the people they collaborate with were “accomplices in criminal acts” (ignoring the fact that the results of those questions may also demonstrate that growers may usually not be involved in profit-making and crime).

The way the questions were written made it look like every cultivator is selling his harvest or working together with other people. (United Kingdom)

Although we repeatedly argued that our study provided an opportunity to challenge common stereotypes of growers such as assuming that all cannabis growers are a part of large criminal enterprises, motivated by large profits or associated with violent crime, and that participants could help to create a more realistic view of people who grow cannabis by completing this questionnaire, some participants were still very upset by the fact that we did ask questions about income from cultivation and collaboration with others.

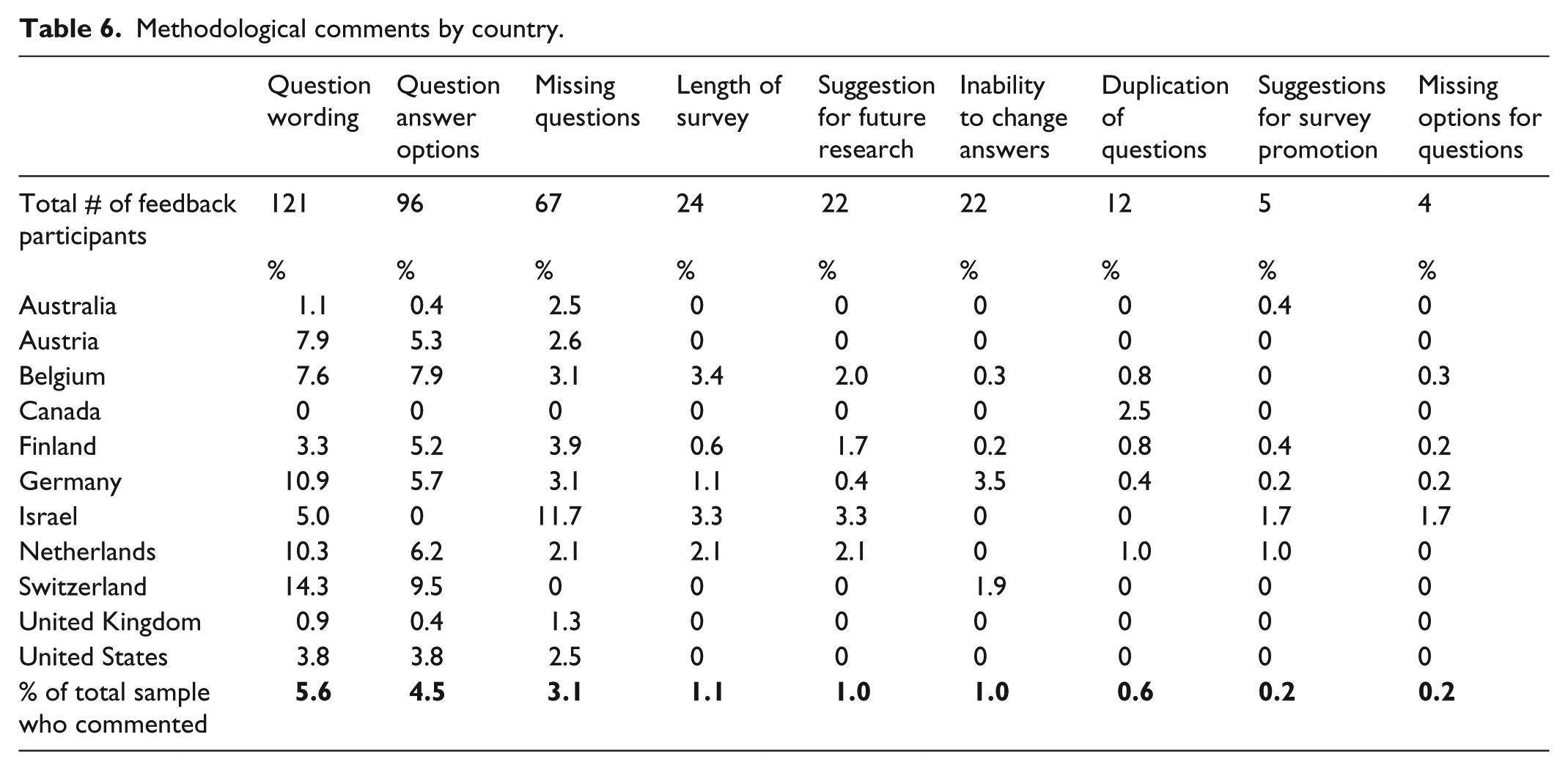

Methodological comments

A total of 333 participants (15.4% of those who commented) left methodological comments (Table 6). They formulated detailed comments on the wording of certain items or indicated which question they found difficult to interpret (n = 121; 5.6%). Some of them included suggestions on how to improve the wording or clarifications that ought to be added. Some participants complained that they could not skip some questions, could not tick the option “I do not want to answer this question,” or could not skip back to change some of their answers (n = 22). Some felt the questionnaire contained repetitive questions (n = 12). Others (n = 96) commented on missing answer options. One staff member of a Belgian cannabis social club commented: “Because I went to the police to tell them I am growing” is another way to get into contact with the police. I miss this option in the questions. Another participant complained: Indoor most people grow in a tent. This was not an option to answer.

Methodological comments by country.

Our broad category of methodological comments also included comments about the length of the survey (You should have warned about the large number of questions.) (n = 24), and suggestions by participants on how we could further promote the study (n = 5). Finally, 22 participants (1.0% of those who commented) identified issues that were not covered by the closed questions, such as (more) questions on cannabis use, on consumption methods (vaporizing, eating, etc.), and on knowledge about the cannabis plant (the active ingredients, the role of terpenes, etc.).
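The counts and percentages reported in this section (n and the share of respondents who commented) can be tabulated from a coded comment set along the following lines. This is a minimal sketch, assuming each comment may carry more than one code; the function name `code_shares`, the code labels, and the toy data are hypothetical and do not reproduce the study's coding frame or dataset.

```python
from collections import Counter


def code_shares(coded_comments):
    """Return {code: (n, percent of commenters)} for multi-coded comments.

    coded_comments: one collection of code labels per respondent who commented.
    """
    total = len(coded_comments)
    if total == 0:
        return {}
    counts = Counter()
    for codes in coded_comments:
        counts.update(set(codes))  # count each code at most once per comment
    return {code: (n, round(100 * n / total, 1)) for code, n in counts.items()}


# Hypothetical toy data: one set of codes per respondent who left a comment.
toy = [
    {"policy", "gratitude"},
    {"question_wording"},
    {"policy"},
    {"missing_answer_option", "policy"},
]

shares = code_shares(toy)
for code, (n, pct) in sorted(shares.items()):
    print(f"{code}: n = {n}; {pct}%")
```

Because a single comment can receive several codes, the percentages computed this way need not sum to 100.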

Discussion

A preliminary analysis of the feedback comments demonstrated that they provided valuable additional information to the closed questions. Full analysis of the feedback comments turned out to be complex for several reasons. Translation issues made the process of data input complicated, and the coding procedure required a considerable amount of time and energy. The analysis involved much discussion among the research team about the meaning of informant accounts. The coding was similar to the early stages of qualitative analysis, involving skills not normally associated with survey analysis, and involved several researchers with differing disciplinary, cultural, and linguistic backgrounds. However, the open-ended comments in our survey proved to be relevant and useful for several reasons.

The general open question at the end allowed respondents to “ventilate” their feelings about the study and the questionnaire. Positive appreciation of the study and the research instrument clearly indicated how our study was perceived by the participants, which was an important concern for the research team. Feedback comments can teach us a lot about the extent to which a targeted population approves or appreciates a study.

The fact that many participants were happy that their voices were heard may be linked to the general “participatory” approach chosen in our study. The methodological literature often suggests that completing open-ended response options requires a greater amount of time and mental effort than most closed-ended questions (Dillman, 2007), and that those with negative feelings about the study or the questionnaire are more likely to voice their opinions as comments, using them as a platform for their complaints (Miller and Dumford, 2014). This was not the case in our study. Despite the fatigue respondents may have experienced after completing a lengthy questionnaire, many respondents continued and made use of the (optional) feedback opportunity and expressed their appreciation and even gratitude. This might be an indication that the combination of offline and online dialogue with the target population was a successful strategy to establish legitimacy of the study, to gain access, and to develop trust with the targeted group of respondents, and solidified the support for the study. Our participatory approach may also have reduced power differences between researcher and participant (Bakardjieva and Feenberg, 2001). Allowing participants to give comments and express their appreciation via the open-ended question at the end is in line with the nature of the Internet as an open forum for exchanging ideas and made our respondents feel involved and more positive about cooperating.

Some of our respondents used the opportunity to voice concern about the study. Although the number of participants with a general negative appreciation of the study is low, and even though we previously argued that our participatory approach has helped to gain access and develop trust, some feedback comments illustrated a degree of residual distrust, even among participants that chose to participate in the study and completed all items.

Analysis of the feedback comments demonstrated that certain items were particularly likely to upset (some) participants: those related to selling cannabis, financial profits from cultivation, and “collaborators” in growing activities (see also Weisheit, 1991). Despite our participatory approach and our repeated efforts to clarify our research agenda, we were not able to eliminate suspicions among the targeted group. Although we have repeatedly argued that our study provided an opportunity to create a more realistic view of people who grow cannabis, some participants were still upset by the fact that we asked questions about income from cultivation and collaboration with others. Our carefully worded questions about income from cannabis growing and collaboration with others were misread and experienced as irrelevant or disturbing by a few respondents.

The explicit background of our study (i.e. to debunk myths and unrealistic views and provide a fuller picture of cannabis growing and cannabis growers) may have been an important incentive for participation, but the feedback comments helped us to identify the problems that some participants experienced with a few survey items. Again, even though only a small number of participants formulated these types of comments, we must recognize that they might be relevant for others who chose not to participate in the study, or who participated but did not mention such concerns in the feedback comments.

A general limitation of the analysis presented here is the fact that we do not know how many respondents may have declined to take part in the survey because of distrust toward the study or the research teams. We also do not know the proportion of participants that dropped out of the survey because of negative feelings around particular questions since the feedback question was located at the very end of the questionnaire.

Feedback comments not only reveal which questionnaire items provoke irritation or distrust among participants, they also help to identify (broader) issues that are not covered in the survey but seemed important to (some) participants. The feedback comments showed the importance of the perception of cannabis policy by our participants, and other topics such as knowledge about the cannabis plant, or methods of cannabis consumption. Feedback comments also helped identify missing answer options and minor technical flaws. When researchers are planning a follow-up survey with the same population, or planning another survey with similar target groups, the analysis of feedback comments like these helps to evaluate and improve the next survey. Before we launch a second sweep of our survey in 2019 (in a larger number of countries), we will use the participants’ feedback to revise the questionnaire regarding question wording, explanatory statements about some questions, and to decide which topics to drop and which new topics to insert.

Feedback comments offer participants an opportunity to voice their concerns and opinions about a particular policy, especially when the policy at stake is controversial or subject to emotional debates (cf. Scholtz and Zuell, 2012). The core ICCQ did not contain any closed questions about attitudes to cannabis policy, and only three countries (Australia, Denmark, and the United Kingdom) included one extra question on this topic. But almost half of the participants who gave feedback on the survey made some (often clearly articulated) comment related to cannabis policy. The comments did not only corroborate our earlier finding based on the closed question (added to the survey in three countries) that the study’s population is keen to express their views about appropriate cannabis policies (Lenton et al., 2015); they also offered a clearer understanding of what they do not like about current policies and the reasons for their particular preferences (see also Decorte et al., 2011).

The feedback comments made it clear that, when the reasoning behind the respondents’ preferences for a particular policy is of interest, the best way to learn about it is to pay attention to the respondent’s own words. Narrative answers about the basis on which they have preferences may be subject to some unreliability because of differences in respondents’ writing skills and styles, but they do offer researchers a more direct window into what people are thinking. If a researcher wants to understand why participants prefer Policy A to Policy B or what they do or do not like about the policy they are subjected to, there are many reasons to want to hear narrative answers in addition to the responses to standardized, fixed-response questions (Fowler, 1995). This can also be interpreted as an indication that many respondents feel we should have included more questions on their opinions and ideas about cannabis policy.

What is also important here is that our participants’ comments at the end of the survey seem to illustrate some of the complex ways in which the study’s population—illicit cannabis growers (and users)—attempt to challenge the stigma attached to them. The comments show how participants try to take ownership of their contribution to the study and they seem to tell us more about participants’ motives for participating in our study. They contain attempts to reject prevalent constructions on cannabis cultivation (and use) and try to “normalize” these practices (cf. Emerson, 1992). As such, they contribute to substantive findings and even theoretical perspectives. For example, the comments reveal forms of micropolitics relating to the management of the stigma associated with illicit drug use, as described in the literature (see Hathaway et al., 2011; Pennay and Measham, 2016; Pennay and Moore, 2010; Rødner Sznitman, 2008). Participants arguing that their cannabis use does not interfere with socially approved activities illustrate processes of “assimilative normalization.” They try to “pass as normal” while accepting “mainstream” representations of cannabis use (Hathaway et al., 2011; Rødner Sznitman, 2008; Sznitman and Taubman, 2016). Other comments sought to resist or redefine what is considered to be “normal” with respect to cannabis use, illustrating the theoretical concept of “transformational normalization” (cf. Pennay and Moore, 2010; Rødner Sznitman, 2008; Sznitman and Taubman, 2016) as respondents harnessed the open feedback question to their own transformational agenda, presumably in the hope that their voices will be heard through their contribution to the study.

Our experience of analyzing and coding this type of data underlines the importance of an explicit methodological strategy. Not only did the research consortium not allocate specific resources to merge these qualitative data into the general dataset, we also acknowledge that the GCCRC research team did not have an a priori strategy to generate in-depth analyses of the feedback comments at the design stage of the study. Our general and non-directive feedback question generated an immense variety of comments, which, together with the translation issue in our cross-national sample, made coding and analysis even more challenging. On the other hand, it clearly allowed our participants to take ownership of their contribution and to comment in whatever way they wanted. An alternative strategy might be to add an optional feedback module, with several questions focusing on different aspects, such as the general appreciation of the survey (with a rating scale), a text field for highlighting problems with (the wording of) particular questions, a question to identify issues not covered by the survey or identifying new issues (“safety net” question), and questions that offer an opportunity to voice opinions on the topic of the study. Coding and analysis of the responses will most likely prove to be easier, but adding more than one feedback question to a survey obviously requires more time and effort from participants who may already feel fatigue. Asking for feedback in a non-directive manner offers participants a better opportunity to “open up.”
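The alternative strategy of an optional feedback module could be specified at the design stage roughly as follows. This is an illustrative sketch only: the class `FeedbackItem`, the item keys, the prompts, and the 1 to 5 rating scale are assumptions made for the example and were not part of the ICCQ.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass(frozen=True)
class FeedbackItem:
    key: str                                  # hypothetical variable name
    prompt: str                               # hypothetical question wording
    kind: str                                 # "rating" or "text"
    scale: Optional[Tuple[int, int]] = None   # (min, max) for rating items
    required: bool = False                    # every item stays optional


FEEDBACK_MODULE = [
    FeedbackItem("appreciation", "How would you rate this survey overall?",
                 "rating", scale=(1, 5)),
    FeedbackItem("wording_problems",
                 "Were any questions unclear or difficult to answer?", "text"),
    FeedbackItem("safety_net",
                 "Is there anything important that we did not ask about?", "text"),
    FeedbackItem("topic_opinion",
                 "Is there anything you would like to say about the topic of this study?",
                 "text"),
]


def validate(module):
    """Check that keys are unique, rating items have a scale, and nothing is required."""
    keys = [item.key for item in module]
    if len(keys) != len(set(keys)):
        raise ValueError("duplicate item key")
    for item in module:
        if item.kind not in ("rating", "text"):
            raise ValueError(f"unknown item kind: {item.kind}")
        if item.kind == "rating" and item.scale is None:
            raise ValueError(f"rating item without scale: {item.key}")
        if item.required:
            raise ValueError(f"feedback items must be optional: {item.key}")
    return True


print(validate(FEEDBACK_MODULE))
```

Writing the module down in this form makes it possible to plan the coding of each text item separately before fieldwork begins, at the cost of a longer questionnaire.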

Conclusion

Including a general open and non-directive question at the end of a structured questionnaire is common, but the analysis of this type of data is rarely discussed in the available methodological literature and most researchers fail to report on this issue. Feedback comments can potentially do much more than corroborate the quantitative findings. Through their feedback on the study, participants can offer alternative readings of their practices to those provided by “mainstream” or dominant discourses, or they can challenge the (implicit or explicit) views and assumptions researchers have built into their questionnaire. As such, they can contribute to substantive findings and theoretical developments. Feedback comments can help to detect residual distrust, to identify questions that provoke negative feelings among some participants or seem to be misread or misunderstood, and they can help to identify issues that are not covered in a survey. As such, they can help to improve follow-up studies or enhance researchers’ expertise in designing questionnaires.

Simply including an open feedback question in a survey because it is usual practice, or using this type of question without giving much thought to why we are doing so, is potentially troublesome. Simply ignoring these data seems unethical, especially when researchers claim to be willing to listen to the concerns of the population under study and to act on feedback from participant groups. Taking feedback comments seriously may help to reduce power differences between researcher and participant. The process of analyzing and coding this type of data described in this article also underlines the importance of an explicit methodological strategy. Developing a strategy for analyzing the feedback comments at the design stage of the study will reduce the dilemma faced by researchers about whether and how to analyze these questions (O’Cathain and Thomas, 2004). It should help the researcher to allocate appropriate time and expertise to these data, and to produce an analysis robust enough for publication in peer-reviewed journals. The value of such questions, and the quality of the data and analysis, will be optimized if researchers allocate sufficient resources for coding and analysis and if they make more strategic use of these questions.

If the feedback question is designed to give a voice to participants then researchers should ensure that comments will be read, and that any concerns and queries expressed will be met with appropriate action by the research team.

Because of self-selection, respondents who choose to answer the general open question can be systematically different from respondents overall, for example by being more articulate or having a greater interest in the survey topic. It is important to analyze and report on the characteristics of those who leave comments so that potential bias can be considered. Even if only a small number of participants use the feedback option to “ventilate” negative feelings about the study or about certain questions, this can also help to explain why participants drop out after a few questions, or why other individuals chose not to participate at all.
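One simple way to report on the characteristics of those who leave comments is to compare commenters with non-commenters on a closed-question characteristic. The sketch below computes group shares and a Pearson chi-square statistic for a 2 × 2 table (without continuity correction). The counts and the characteristic are hypothetical and are not taken from the study; the helper names `share` and `chi_square_2x2` are introduced here for illustration.

```python
def share(part, whole):
    """Percentage of `part` in `whole`, rounded to one decimal."""
    return round(100 * part / whole, 1)


def chi_square_2x2(a, b, c, d):
    """Pearson chi-square statistic for the table [[a, b], [c, d]].

    Rows: commenters / non-commenters.
    Columns: characteristic present / absent.
    """
    n = a + b + c + d
    denom = (a + b) * (c + d) * (a + c) * (b + d)
    if denom == 0:
        raise ValueError("a row or column total is zero")
    return n * (a * d - b * c) ** 2 / denom


# Hypothetical counts, for illustration only.
commenters_with, commenters_without = 20, 10
others_with, others_without = 10, 20

pct_commenters = share(commenters_with, commenters_with + commenters_without)
pct_others = share(others_with, others_with + others_without)
stat = chi_square_2x2(commenters_with, commenters_without,
                      others_with, others_without)

print(f"commenters: {pct_commenters}%  non-commenters: {pct_others}%")
print(f"chi-square (df = 1): {stat:.2f}")
```

With one degree of freedom, a statistic above 3.841 corresponds to p < 0.05; for small expected cell counts an exact test would be more appropriate than this approximation.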

It is important to determine whether the general open question adds much to the closed questions in the questionnaire. A preliminary analysis, in which the responses are read to consider the contribution they make to the study overall, is advisable. If the comments merely corroborate or slightly elaborate upon the answers to closed questions, it still is good practice to report within publications that the responses to the general open question did not provide additional information to the closed questions. If formal analysis is undertaken, a description of the analytical strategy and the coding process should be provided.

Footnotes

Acknowledgements

We would like to thank the thousands of cannabis cultivators who completed our questionnaire. Our research would not be possible without your efforts. Thank you to all the people and groups that supported and promoted our research, including but not limited to: A-clinic Foundation, Bluelight.org, Cannabis Consumer organization “WeSmoke,” Cannabis Festival 420 Smoke Out, cannabismyter.dk, Chris Bovey, Deutscher Hanfverband, drugsforum.nl, eve-rave.ch, Finnish Cannabis Association, grasscity.com, grower.ch, hampepartiet.dk, Hamppu Forum, hanfburg.de, Hanfjournal, the Israeli Cannabis Magazine, jointjedraaien.nl, land-der-traeume.de, Nimbin Hemp Embassy, NORML-UK, Österreichischer Hanfverband, OZStoners.com, partyflock.nl, psychedelia.dk, rollitup.org, Royal Queen Seeds, shaman-australis.com, thctalk.com, Vereniging voor Opheffing Cannabisverbod (VOC), wietforum.nl, wiet-zaden.nl, and all the coffeeshops, growshops and headshops that helped us. The German team would like to thank Anton-Proksch-Institut, Dr. Alfred Uhl (Vienna, Austria), and infodrog.ch, Marcel Krebs (Bern, Switzerland), who gave consent to address Austrian and Swiss respondents and assisted their team with recruitment of Swiss and Austrians.


Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The project meetings of this study are funded by the Nordic Center for Welfare and Social Issues (NVC). S.L. and M.J.B. through their employment at The National Drug Research Institute at Curtin University and the National Drug and Alcohol Research Center at the University of New South Wales are supported by funding from the Australian Government under the Drug and Alcohol Program. M.J.B. is funded through a National Health & Medical Research Council Early Career Researcher Fellowship (APP1070140). The Belgian study was funded through the Belgian Science Policy Office, under the Federal Research Program on Drugs (Grant no. DR/00/063). The US/Canada study received funding from the College of Health and Human Services at California State University, Long Beach. The German study received refunding from a prior project funded by the Deutsche Forschungsgemeinschaft (DFG, German Research Association).