Abstract

Improvisation in music therapy is a highly complex and diverse form of creativity, offering a wide variety of musical information for music therapists to work with. To address this diversity in research and analysis, it is common to combine a wide range of interdisciplinary scientific approaches. Microanalysis methods in music therapy provide highly insightful results on a detailed musical level in musical improvisation but come at the cost of a time-consuming analysis procedure. The automation of these methods in machine learning environments and the use of the wealth of digitally obtainable musical information in clinical improvisations is highly promising for enabling the efficient use of microanalytic methods in clinical practice. In particular, assessment procedures – the systematic collection and analysis of client information to plan subsequent therapy sessions – can benefit greatly from a microanalytic insight into imitation patterns or entrainment processes as observable in musical instrument digital interface (MIDI) data. However, the automation of microanalytic methods poses a challenge in formalising analytical arguments while at the same time maintaining qualitative validity in a machine learning environment. This article provides an interdisciplinary theoretical framework for the microanalysis of musical data in clinical improvisation that is suitable for computational implementation, leading to the development of an automated analysis tool for further use in research and clinical practice. While a pilot application of the system presented in the article suggests general functionality, future challenges for the training of a supervised classification model have been identified that focus on the need for formalisation of microanalytic arguments and feature development to ensure qualitative validity.

Introduction

Music therapy is defined as a “reflexive process wherein the therapist helps the client to optimize the client's health, using various facets of music experience and the relationships formed through them as the impetus for change” and is specified as “the professional practice component of the discipline, which informs and is informed by theory and research” (Bruscia, 2014, p. 36). Beyond this mutual exchange with research, the effectiveness of music interventions for various psychological and physical conditions has been investigated in around 60 Cochrane reviews. In conducting music therapy, a wide variety of methods have been developed, including receptive forms of music therapy that focus on listening to music – such as the Guided Imagery and Music method developed by Helen Bonny (Bonny & Summer, 2002) – and active forms of music therapy, such as composition, performance, and clinical improvisation (Wheeler, 2015).

Music therapy improvisation, especially in dyadic or group settings, focuses on the intermusical relationships between client and therapist as they are formed through their musical interaction when improvising together (Bruscia, 1987). It has been reported to be a highly effective method for treating affective mental disorders, such as depression (Aalbers et al., 2017; Erkkilä, 2014; Erkkilä et al., 2011, 2019, 2021; Hartmann et al., 2023). A possible explanation for the efficacy of this intervention for major depression is commonly found in a psychodynamic background, where clinical improvisation is conceptualized as a symbolic/preconscious vehicle to bring unconscious experiences into consciousness (Bruscia, 1998; Erkkilä, 1997, 2004; Isenberg, 2015).

The musical result of these improvisations, if recorded, represents a highly complex and detailed collection of musical information that also tends to provide insight into the specifics of the underlying social interaction. Assessing and analyzing this musical information in improvisations is crucial for enhancing and securing the potential of clinical improvisation (Bonde, 2016).

In contrast to traditional music therapy analysis models, which include aural analysis and assessment of single sessions (Bruscia, 1987), more recent and elaborated methods, such as microanalysis, focus on the detailed development of improvisation sessions (Trondalen & Wosch, 2016; Wosch, 2021; Wosch & Erkkilä, 2016; Wosch & Wigram, 2007). This comes at the cost of a more time-consuming application process, leading to difficulties in implementing these methods in clinical practice and for more comprehensive research purposes beyond single case studies.

Addressing this imbalance, the methods of computational analysis and music information retrieval (MIR) – a multidisciplinary field of research that focuses on extracting and gathering information from musical data, such as musical instrument digital interface (MIDI) or .mp3 data (Downie, 2008) – aim for faster and higher-volume information processing. Although information systems are continuously implemented in music therapy practice (Amorós-Sanchez et al., 2024; Wosch & Magee, 2018), such as the Improvised Active Music Therapy Treatment (IAMT) methodology (Kogutek et al., 2019, 2021, 2023), Volk et al. identify the need for a closer connection between music therapy practice and computational functioning (Volk et al., 2023).

To close this gap, this article presents a theoretical model of a new analysis method as a combination of (1) an interaction model as a common framework, (2) a microanalysis method, which (3) is implemented in a computational background.

Methodology

The analysis approach presented in this article is designed to generate a dataset for the training of a supervised machine learning classification model (Joshi, 2023; Paluszek & Thomas, 2017) to develop a standalone, automatic analysis tool in subsequent stages. Therefore, the following analysis theory proposes procedures to (1) segment musical data of improvisations into single musical (inter)actions and (2) consistently annotate these single musical interactions alongside characterizing features into distinct classes.

Material

The data presented here are a concatenated dataset whose components were originally published by Erkkilä and colleagues from 2016 to 2020 (Erkkilä et al., 2019, 2021; Hartmann et al., 2023; Saarikallio et al., 2023). The data were provided by the University of Jyväskylä for a pilot study on the development of automated assessment of musical interaction in clinical improvisation.

The originally published studies investigated the efficacy of enhancing Integrative Improvisational Music Therapy (IIMT) (Erkkilä et al., 2021) – an improvisational method based on the interplay between dyadic improvisation on piano or djembe and verbalization of musical experiences – including slow-paced breathing or listening homework over the course of 12 biweekly sessions. All analyses presented here focus on mean velocity (loudness) as an example musical feature. The final machine learning model, however, will be trained to adapt to a selection of the features available in the Music Therapy Toolbox (MTTB), including velocity, mean pitch, note density, articulation, and tempo.

Theoretical Components

The analysis approach presented in this article consists of three analytical methods, each responsible for formalizing processes of music therapy improvisation toward automatic analysis.

The Social Systems Game Theory (SGT) by Tom R. Burns (Burns et al., 2018) serves as a theoretical framework for the modelling of social interaction in clinical improvisation. By providing a model for interaction and interaction processes, it also enables the identification of single musical actions.

The Autonomy Microanalysis (IAP-AM) by Thomas Wosch (Wosch, 2007) is an aural analysis and assessment tool and the core of the analysis procedure, providing the necessary annotation classes and interpretative background for further use in music therapy practice.

The Music Therapy Toolbox (MTTB) (Erkkilä, 2007), as a music information retrieval (MIR) and analysis tool, prepares the musical data for the subsequent segmentation and annotation, and is the computational basis for transforming Autonomy Microanalysis into a quantitative tool (Erkkilä & Wosch, 2018) (Figure 1).

Figure 1. Theoretical components.

Social Systems Game Theory (SGT)

Before introducing Social Systems Game Theory as the framework used, I give a brief introduction to the connection between “game,” “play,” and music therapy.

The subject of “playing” is connected with psychotherapy, musical improvisation, and also clinical improvisation, as it is conventionally defined by the relationship between structure (or rules) and freedom (Huizinga, 1956). Simultaneously, this dyad (structure and freedom) represents two focal points of scientific discourse, which can be illustrated along the conceptual separation of the terms “game” and “play.”

While “playing” focuses on the free aspect of action and is recognized in psychotherapeutic or pedagogical discourses (Buchheim et al., 1996; Lutz Hochreutener, 2009; Winnicott, 2005), “game” (or classical game theory) emphasizes the structure and rationality behind social interaction and is therefore studied in economics or mathematics (Golman, 2020; Matsumoto & Szidarovszky, 2016; Peters, 2015) to describe, analyze, and predict behavior in social interaction.

Discourses in music therapeutic improvisation occasionally acknowledge the aspect of “play” or “playfulness” (Bruscia, 2023; Erkkilä et al., 2011), either by interpreting the musical domain as a psychodynamically effective “potential space” (Winnicott, 2005) or “in-between world” (Weymann, 2019), or by embedding individual musical action in between the dualism of freedom and structure (Bruscia, 2023).

Classical game theory, however, appears to be incompatible with clinical improvisation, as it focuses on strategic interaction that is primarily motivated by maximizing individual gain, driven by strictly rational behavior, and rejects subjective and possibly irrational ways of behaving (Golman, 2020; Matsumoto & Szidarovszky, 2016; Peters, 2015).

Social Systems Game Theory (SGT), on the other hand, is a sociological development of game theory, developed by Tom R. Burns and Eva Roszkowska (Burns et al., 2017, 2018; Burns & Roszkowska, 2005a, 2005b). It rejects the notion of hyper-rationality and further emphasizes multidimensional action criteria, including emotional and expressive qualities (Burns & Roszkowska, 2005b), as well as a more dynamic model of social interaction that is suitable for modelling real-life situations. It therefore seems to be more appropriate for modelling social and musical interaction in music therapy improvisation. By illustrating decision-making processes and providing a model of interaction, the SGT functions as a theoretical framework to specify and structure the object of analysis (interaction).

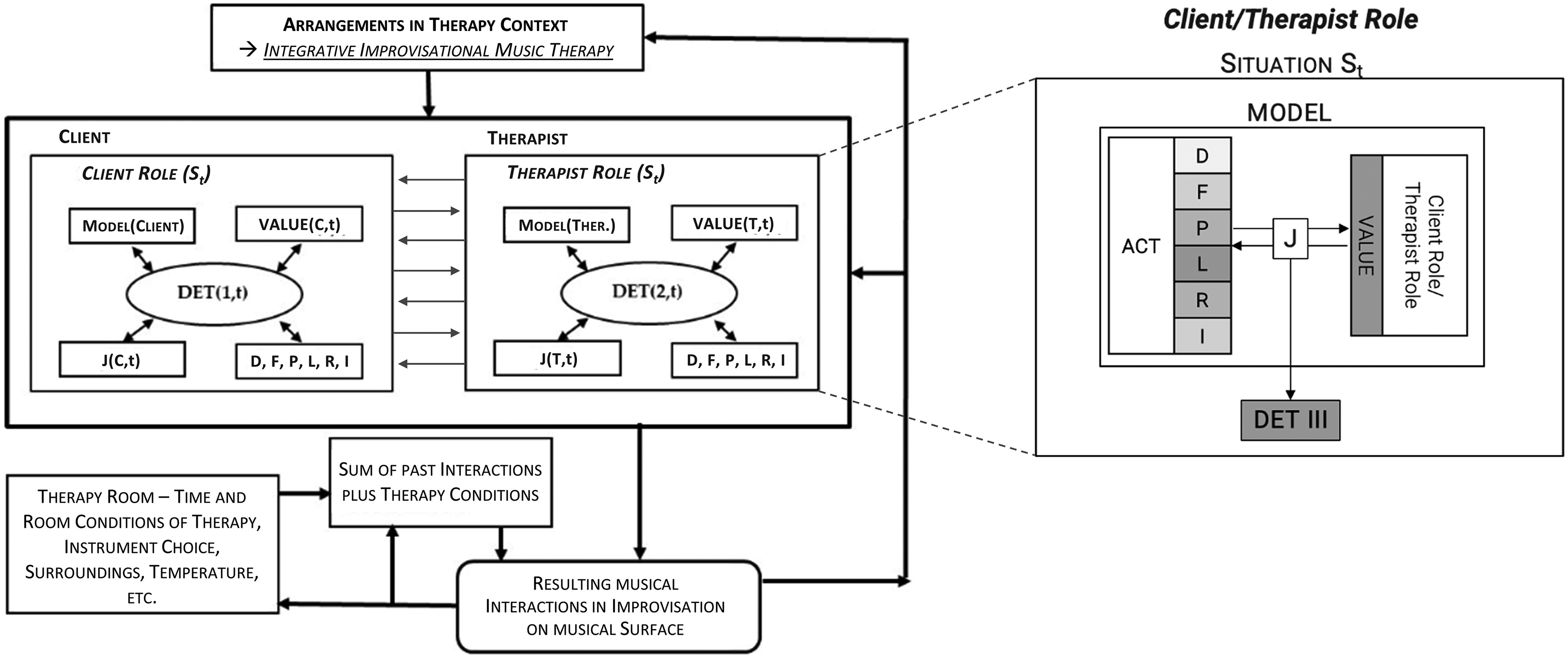

External factors of SGT include (a) physical ecosystem structures (such as time, space, and other material conditions), (b) specific interaction conditions, (c) previous interactions and outcomes, and (d) cultural/institutional arrangements (Burns et al., 2018). Mapped onto music therapy improvisation, these external factors might include (a) specifics of the music therapy room such as temperature or size, time of intervention, and instruments available; (b) time available, physical impairments, psychological and cognitive impairments; (c) previous improvisations from this or another session; and (d) arrangements for the improvisation, possibly determined by improvisation technique or method (Figure 2, left side). The internal factors of SGT are organized in the context of SOCIAL AGENTS, each of which inherits a specific (but nevertheless flexible and possibly changing) ROLE

Figure 2. Two-role model of interaction by Tom R. Burns, adapted to music therapy improvisation.

The SOCIAL AGENTS involved in the clinical improvisation can be categorized as client and therapist. Possible ROLES taken may depend on the arrangements made beforehand – the client may freely improvise as “client,” may be involved in role play, can be the initiator/idea-giver etc. The ROLE taken in the interaction situation inherits specific MODEL-, VALUE-, J-, and ACT-complexes appropriate to that ROLE. The MODEL complex contains (possibly incorrect or incomplete) information about the interaction conditions and assumptions about the behavior of the interaction partner. This may be information about the specifics of the client's interaction or knowledge about the method used by the therapist (Figure 2, left side).

The VALUE- and ACT

Figure 3. Interaction model of clinical improvisation.

A similar view of the multidimensionality of communication situations can be found in the concept of punctuation in event sequences in Watzlawick et al. (2017). Similarly, Bruscia introduces an iterative process crucial for clinical improvisation that involves imagining, evaluating, selecting, and trying out the musical option that is best suited to the current situation (Bruscia, 2023).

The structure of this interaction model is linked to both the following Autonomy Microanalysis and the Music Therapy Toolbox, acting in this context as a mediator and common framework for formalization purposes. It takes a central role in the segmentation process modelled further down (see “Segmentation”).

Improvisation Assessment Profile “Autonomy”

The Improvisation Assessment Profiles (IAPs) were developed by Kenneth E. Bruscia and first published in his book Improvisational Models of Music Therapy (Bruscia, 1987); they have been enriched with additional material in his most recent book on music therapy assessment (Bruscia, 2023). The IAPs are a standardized aural assessment tool for music therapy improvisations and require prior aural training as in other standardized psychological observational assessment tools. This article aims to transform this tool, which was originally based on complex hearing analysis, into an automated tool based on a machine learning model.

The IAPs comprise techniques for assessing whole or parts of clinical improvisations based on observation, analysis, and psychological interpretation. In total there are six different assessment profiles for analysis, each focusing on a different musical aspect: Integration, Variability, Tension, Congruence, Salience, and Autonomy. Each profile inherits five different gradients that are used to rate and classify the whole or sections of an improvisation. The rating is carried out along different musical dimensions, each focusing on a different parameter: Rhythmic, Tonal, Texture, Volume, Timbre, Physical, or Programmatic.

The Autonomy Profile, which is used to classify the “intermusical or interpersonal relationships” (Bruscia, 1987, p. 444) between client and therapist, is the basis of the Autonomy Microanalysis. It offers the following five gradients for rating: Dependent, Follower, Partner, Leader, Resister. Each of the gradients gives an indication about how likely the client is to take on the role of follower or leader – from Dependent (exclusively follower role) to Resister (exclusively leader role) – in a selected segment or the whole improvisation. It is also assumed that the client's behavior may differ between musical dimensions. This means, for example, that one may follow rhythmically while at the same time resisting in volume or leading in timbre.

When analyzing the Autonomy Profile of a client or an improvisation, the client's functioning in an interpersonal situation is assessed, including insights into the client's awareness of self and others as well as the need to maintain boundaries between self and others. If both awareness and personal boundaries are present, meaningful musical contact with another person can be established. Bruscia further distinguishes musically autonomous behavior as focused on “selfness” or “otherness,” where “selfness” is expressed by leadership behavior (Leader and Resister) and “otherness” by followership behavior (Dependent and Follower) (Bruscia, 1987).

Therefore, if the client shows a strong tendency to behave as a follower or leader, possible conclusions may relate back to issues of personal boundaries or awareness.

Autonomy Microanalysis

The Autonomy Microanalysis, as created and applied by Thomas Wosch (Wosch, 2002, 2007, 2017; Wosch & Erkkilä, 2016), is a development of the Improvisation Assessment Profile Autonomy, changing several key factors in the analysis process.

Instead of analyzing and classifying the whole improvisation, each transition in the interpersonal activity is continuously evaluated on the basis of a listening score of the improvisation. Afterwards, both the client and the therapist are analyzed and rated along Bruscia's Autonomy gradients for each transition in a table (Wosch, 2007) – Wosch introduced a sixth gradient, “Independent” (Erkkilä & Wosch, 2018). Instead of focusing on many different musical dimensions, the analysis is performed along the selection of three main dimensions: Rhythmical Basis, Melody, and Timbre (Wosch, 2002).

These developments represent a shift in perspective from Bruscia’s original theory. By continuously analyzing both the client and the therapist, the detailed interaction between the two improvisers can be traced and analyzed for a deeper understanding and possibly valuable information for the subsequent therapy sessions. The focus is therefore put on the single improvisation and the detailed interactions, whereas Bruscia's method of analysis tends to focus more on a comparative interpretation of multiple sessions.

An Autonomy Microanalysis of the first minute of the sample case can be seen in Figure 4. The analysis table consists of a top row that indicates the times of each transition perceived in interpersonal activity. Each time a transition is perceived, all three dimensions – Rhythmical Basis (tempo, meter or subdivisions), Melody (modality, tonality and melody), and Timbre (loudness, articulation, instrument choice) – are evaluated along the Microanalysis Autonomy gradients (Dependent, Follower, Partner, Leader, Resister, Independent) for both client (C) and therapist (T).

Figure 4. Autonomy microanalysis of the improvisation sample.
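The table layout described above can be sketched as a small data structure. The following is only a minimal illustration in Python, not the format used in the Autonomy Microanalysis itself: the names `GRADIENTS`, `DIMENSIONS`, and `add_transition` are hypothetical, and the example ratings are placeholders rather than values taken from Figure 4.

```python
# Gradients and dimensions as named in the text; the tuple is used for
# membership checks only and does not claim a position for "Independent".
GRADIENTS = ("Dependent", "Follower", "Partner", "Leader", "Resister", "Independent")
DIMENSIONS = ("Rhythmical Basis", "Melody", "Timbre")


def add_transition(table, time_s, ratings):
    """Store one perceived transition in the analysis table.

    table:   dict mapping transition time (seconds) to its ratings.
    ratings: dict mapping each dimension to a (client, therapist) gradient pair.
    """
    if set(ratings) != set(DIMENSIONS):
        raise ValueError("a transition needs a rating for all three dimensions")
    for dimension, pair in ratings.items():
        for gradient in pair:
            if gradient not in GRADIENTS:
                raise ValueError(f"unknown gradient in {dimension}: {gradient}")
    table[time_s] = dict(ratings)
    return table


# Placeholder ratings for illustration only (not taken from the sample case).
table = add_transition({}, 0, {
    "Rhythmical Basis": ("Partner", "Partner"),
    "Melody": ("Follower", "Leader"),
    "Timbre": ("Independent", "Partner"),
})
```

Keeping one (client, therapist) pair per dimension mirrors the C and T rows of the table and makes it easy to reject ratings outside the six gradients.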

Autonomy Microanalysis can generate highly insightful and detailed results, but it requires a substantial time commitment. This analysis method would therefore benefit greatly from computerization for effective and time-efficient analysis of intermusical interaction. In addition, computational analysis enables more detailed features and richer musical information to be integrated into the analysis process.

Music Therapy Toolbox

The Music Therapy Toolbox is a MATLAB-based music information retrieval (MIR) and analysis tool, specifically designed for the detailed analysis of clinical improvisation in music therapy (Erkkilä, 2007; Erkkilä & Wosch, 2018; Luck et al., 2006; Wosch, 2021; Wosch & Erkkilä, 2016; Wosch & Magee, 2018). The tool was originally developed at the University of Jyväskylä between 2003 and 2006 and is still under continuous development (Lartillot et al., 2008; Lartillot & Toiviainen, 2007).

To apply the MTTB, MIDI files of whole dyadic improvisations are imported, which are then analyzed according to various musical features. These features include temporal surface features (note density, average note duration, articulation), register-related features (mean pitch, standard deviation of pitch), a dynamic-related feature (mean velocity/loudness), a tonality-related feature (tonal clarity), a dissonance-related feature (sensory dissonance), pulse-related features (individual pulse clarity, individual tempo), and features that quantify the temporal client–therapist interaction (common pulse clarity, common tempo).

Both the MTTB and the IAP-AM therefore analyze the musical data split into different musical parameters. Although the musical dimensions (IAP-AM) and musical features (MTTB) can be matched, there is no direct connection between them.

Each of the analyzed musical features is converted into two different graphs, one for the client (green) and one for the therapist (black), showing the second-by-second evolution of the players’ musical features in relation to each other (Figure 5), providing a detailed and versatile basis for the integration of other analysis methods.

Figure 5. MTTB analysis of mean velocity of the improvisation sample.

Note. Mean Velocity (loudness) is calculated by the MTTB based on the MIDI velocity parameter, which describes the speed or force with which a note is played on a MIDI controller (in this case, the MIDI keyboard). High values represent playing forte, while low values represent playing piano (total values range from 0 to 127). For better visualization, the first two minutes of the sample improvisation (total duration of 4:24 min) have been extracted. The Mean Velocity values for this excerpt range from 22.75 to 79.65.
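To make the notion of a second-by-second mean velocity curve concrete, the following minimal Python sketch averages MIDI velocities per one-second window. It is an assumption-laden illustration and not the MTTB implementation, whose windowing is not described here: the input format (pairs of onset time and velocity), the non-overlapping one-second windows, and the function name `mean_velocity_per_second` are all hypothetical.

```python
import math
from collections import defaultdict


def mean_velocity_per_second(notes, duration_s):
    """Average MIDI velocity of all notes whose onset falls in each second.

    notes:      iterable of (onset_seconds, velocity) pairs (assumed format).
    duration_s: number of one-second windows to report.
    Returns a list of length duration_s; windows without onsets hold None.
    """
    buckets = defaultdict(list)
    for onset, velocity in notes:
        if not 0 <= velocity <= 127:
            raise ValueError(f"MIDI velocity out of range: {velocity}")
        second = math.floor(onset)
        if 0 <= second < duration_s:
            buckets[second].append(velocity)
    return [
        sum(buckets[s]) / len(buckets[s]) if s in buckets else None
        for s in range(duration_s)
    ]


# Two notes in the first second, none in the second, one in the third.
curve = mean_velocity_per_second([(0.1, 40), (0.9, 60), (2.5, 100)], 3)
```

Running the function once per player would yield the two curves (client and therapist) that the later segmentation and annotation steps operate on.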

Own Theoretical Model

In the theoretical model presented in this article, the main task is to integrate the Autonomy Microanalysis into the MTTB functionality, thus enabling data-driven processes and combining qualitative and quantitative functioning. However, to provide sufficient and appropriate data for the further development of a theory and the creation of a standalone tool in a supervised machine learning classification environment, consistent guidelines for the segmentation and annotation of single musical (inter)actions are needed. In the following, prototypes of said segmentation and annotation processes are presented.

Segmentation

The segmentation arguments of the Autonomy Microanalysis, namely transitions in interpersonal activity, are hardly translatable into computational means, as they are perceived alongside a highly complex systematic hearing analysis.

Therefore, the segmentation process of the Autonomy Microanalysis and the method itself need to be formalized. In this context the customized interaction model of the SGT is applied, which defines each transition in interpersonal activity as a transition between different musical situations, generated by an individual change in musical behavior. Each time an improviser changes his/her musical behavior, a new musical situation and thus a possible change in interpersonal activity is implemented.

One way of describing these changes in musical behavior is to focus on trends in individual musical development as observable in the MTTB. Two tendencies of change can be identified:

(1) the absolute change tendency: increasing [+], decreasing [−], or remaining at [0] a certain level of a musical feature such as volume, tempo, or density; and

(2) the relational change tendency, which further characterizes the absolute change tendency: moving toward the other improviser [affirmative] or away from him/her [contradictive].

Combined, these tendencies lead to six different classes (Figure 6).

Figure 6. Change tendencies in musical interaction.
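The six classes can be illustrated with a short Python sketch that combines both tendencies into labels such as `+A` or `-C` (written here with an ASCII minus sign). It is a hedged reading of the scheme, not the article's implementation. Two points are assumptions of this sketch: the `tolerance` below which both players count as playing together, and the handling of the [0] tendency, which is treated as affirmative when the players are together and as contradictive otherwise. The function name `change_tendency` is hypothetical.

```python
def change_tendency(own, other, slope, tolerance=1.0, eps=1e-9):
    """Label one player's motion with an absolute and a relational tendency.

    own, other: current feature values of the acting and the other player.
    slope:      the acting player's rate of change (feature units per second).
    tolerance:  gap below which the players count as together (assumed value).
    """
    if slope > eps:
        absolute = "+"
    elif slope < -eps:
        absolute = "-"
    else:
        absolute = "0"

    gap = other - own
    if abs(gap) <= tolerance:
        # Already together: staying is affirmative, any motion leads away.
        relational = "A" if absolute == "0" else "C"
    elif absolute == "0":
        # Apart and not moving: treated as contradictive (assumption).
        relational = "C"
    else:
        # Affirmative when the motion points toward the other player's value.
        toward = (gap > 0) == (slope > 0)
        relational = "A" if toward else "C"
    return absolute + relational


# A quieter player getting louder moves toward a louder partner; the
# louder player getting even louder moves away. Values are illustrative.
quieter = change_tendency(own=40.0, other=52.73, slope=2.5)
louder = change_tendency(own=52.73, other=40.0, slope=0.55)
```

Note that the relational tendency is judged from the acting player's own direction relative to the other player's current value, so a gap can shrink overall while one of the two motions is still contradictive.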

Applying this concept of segmentation, in which a segment boundary is indicated by any change in a player's absolute change tendency, is equivalent to identifying local minima and maxima – transitions from increasing [+] to decreasing [−] absolute change tendencies or vice versa ([−] to [+]) – for each player separately. The segmentation of the original data (Figure 5) along this mechanism can be seen in Figure 7, whose panels illustrate the segmentation for the client, the therapist, and both combined.

Figure 7. Segmentation prototype.
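The identification of local minima and maxima can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions and not the prototype's actual code: the curve is assumed to be a plain list of per-second feature values for one player, steps without change ([0]) are skipped and do not end a segment, and the function name `find_turning_points` is hypothetical.

```python
def find_turning_points(values):
    """Return (index, kind) for each local minimum or maximum of a curve.

    A turning point is reported at the last sample before the direction of
    change flips: rising to falling gives "max", falling to rising gives "min".
    """
    points = []
    previous_direction = 0  # +1 rising, -1 falling, 0 not yet known
    for i in range(1, len(values)):
        delta = values[i] - values[i - 1]
        direction = (delta > 0) - (delta < 0)
        if direction == 0:
            continue  # flat step: keep the previous direction
        if previous_direction and direction != previous_direction:
            kind = "max" if previous_direction > 0 else "min"
            points.append((i - 1, kind))
        previous_direction = direction
    return points


# Illustrative curve: falls, rises twice, falls, rises again.
turning_points = find_turning_points([50, 48, 49, 52, 51, 53])
```

Applying the function to the client's and the therapist's curves separately and merging the resulting indices would give the combined segmentation of both players.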

Annotation

The formalization of the interaction scheme as process-based interaction, as in SGT, indicates a further shift in perspective. Whereas the Autonomy Microanalysis annotates each improviser's ROLE (in SGT terms) in a given musical situation, the process-based segmentation proposed above focuses on the subsequent individual musical actions that lead to new musical situations with the indication of possibly new interpersonal relationships. Accordingly, the analysis gradients (Dependent to Resister) classify characteristic musical actions/reactions rather than classifying the general musical behavior, thus again shifting the object of analysis from ROLE annotation (as in the Improvisation Assessment Profile “Autonomy” or the Autonomy Microanalysis) to the identification of characteristic musical actions (ACT-complex) that may indicate behavior in a particular ROLE.

In summary, subsequent musical actions, labelled as “Follower-type,” “Leader-type,” “Resister-type,” etc., lead to new musical interaction situations which, in sum, may represent a perceivable ROLE of one of the improvisers in the form of one of Bruscia's gradients (Dependent, Follower, Partner, Leader, Resister).

The annotation of these musical (inter)actions still follows the classes of Bruscia's original Autonomy Profile, but with the additional requirement of characterizing these action types with features compatible with computerization. These features may emerge from explorative or experimental settings in computational environments but are primarily to be deduced from formalization processes of Autonomy Microanalysis to ensure qualitative validity. These links between complex human audio-assessed action types and digitally measured machine learning features form the basis for the training of a machine learning classification model.

Possible features for distinguishing interaction types, used in the following pilot analysis, include the relational change tendency value (i.e., the intensity of an improviser moving towards or away from the other improviser) as a general dimension, or the reaction time to the initial musical impulse of the other improviser, thus mapping the intensity and impulsiveness of action. A general assumption might therefore be that the more intense and the faster an action is, the more it tends to be categorized in an extreme gradient. An illustrative annotation of these features can be seen in Figure 8 and is explained briefly here:

Figure 8. Annotation sample.

To illustrate the segmentation and annotation process, an excerpt of Mean Velocity (second 91 to 101) has been extracted. The sample improvisation is segmented at every change of absolute change tendency. In this excerpt, a total of eight segments/(inter)actions were identified by the segmentation algorithm, consisting of five changes of the therapist (two maxima at seconds 94/96 and three minima at seconds 92/95/99) and three changes of the client (two maxima at seconds 95/97 and one minimum at second 96).

Second 92: Therapist and client start with a significant difference (Δ 12.73 meanv) in mean velocity. While the client acts affirmatively positively toward the therapist (+A, 2.5 meanv/s, [Follower-type]), the therapist changes his/her motion from a negative absolute change tendency to a positive absolute change tendency, thus acting contradictively (+C, 0.55 meanv/s, [Resister-type]).

Second 94: The therapist changes the way of playing 2 s after his/her initial motion into an affirmatively negative direction, therefore acting in a Follower-type motion as well (−A, −2.88 meanv/s, [Follower-type]).

Second 95: Both therapist and client change their way of playing. The client, having surpassed the therapist with the previous affirmatively positive motion, now reacts 1 s later in an affirmatively negative motion, engaging in a Partner-type motion (−A, −2.33 meanv/s, [Partner-type]) as both players are behaving affirmatively near to each other (Δ 2.33 meanv). The therapist also participates in the Partner-type motion by moving affirmatively positively toward the client (+A, 0.2 meanv/s, [Partner-type]).

Second 96: One second later, both players change their behavior in different directions while being almost identical in volume. While the therapist acts in a contradictively negative motion (−C, −2.80 meanv/s, [Partner/Leader-type]) and thus reduces his/her volume, the client increases the volume in a contradictively positive motion (+C, 4.14 meanv/s, [Partner/Leader-type]). Depending on the interpretation, both motions could be annotated as Leader-type (as introducing new musical ideas/directions) or Partner-type (as moving in close proximity to each other).

Second 97: Shortly after (1 s), the client changes from increasing volume to decreasing volume, behaving affirmatively negatively toward the therapist (−A, −1.51 meanv/s, [Follower-type]), and therefore engages in a Follower-type motion.

Second 99: After some delay (2 s), the therapist again moves toward the client by becoming louder, behaving affirmatively positively (+A, 0.92 meanv/s, [Follower-type]).

In this excerpt it can be seen that the client tends to engage mainly in Follower-type behavior, indicating that he or she might lean toward “otherness” by focusing predominantly on the other person's musical behavior. Depending on the other improvisations and possibly previous sessions, this prevalence of Follower-type motions may be the norm or an exception to the general behavior, therefore possibly indicating a relevant change in the client's behavior. Either way, if the behavior is being identified on a larger scale or as a meaningful moment, it can be reflected upon verbally as part of the Integrative Improvisational Music Therapy methodology. Similar interpretations and argumentations of clinical relevance in objectivist approaches can be found in Erkkilä & Wosch (2018) and Wosch & Erkkilä (2016).

This type of segmentation and annotation process was carried out for the entire improvisation, resulting in a dataset consisting of single musical (inter)actions, where each musical motion was characterized by distance to the other player, movement intensity (absolute and relational), reaction time, and the final ROLE-type annotation.
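One possible shape for a single row of such a dataset, together with a reaction time computation, is sketched below in Python. The field names, the class `InteractionRecord`, and the function `reaction_time` are hypothetical. The definition of reaction time as the time since the other player's most recent change is an assumption of this sketch that happens to reproduce the delays described for the excerpt; values not stated in the text are left as `None` rather than invented.

```python
from bisect import bisect_left
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass
class InteractionRecord:
    """One segmented musical (inter)action; unknown values stay None."""
    time_s: float
    player: str                                   # "client" or "therapist"
    tendency: str                                 # e.g. "+A" or "-C"
    annotation: str                               # e.g. "Follower-type"
    distance: Optional[float] = None              # gap to the other player
    absolute_intensity: Optional[float] = None    # own rate of change
    relational_intensity: Optional[float] = None
    reaction_time_s: Optional[float] = None


def reaction_time(change_time: float, other_changes: Sequence[float]) -> Optional[float]:
    """Seconds since the other player's latest change before change_time.

    other_changes must be sorted in ascending order; returns None if the
    other player has not changed direction yet.
    """
    i = bisect_left(other_changes, change_time)
    if i == 0:
        return None
    return change_time - other_changes[i - 1]


# Change times as described for the excerpt (seconds 91 to 101).
therapist_changes = [92, 94, 95, 96, 99]
client_changes = [95, 96, 97]

# The therapist's motion at second 99, as annotated in the excerpt.
record = InteractionRecord(
    time_s=99,
    player="therapist",
    tendency="+A",
    annotation="Follower-type",
    absolute_intensity=0.92,
    reaction_time_s=reaction_time(99, client_changes),
)
```

Keeping the annotation as one field next to the numeric features gives each row the form of a labelled training example for a supervised classifier.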

Results

The application of these analysis methods in a pilot setting (rule-based segmentation and a manual annotation of the resulting segments according to the guidelines of classical Autonomy Microanalysis) to the whole sample improvisation yields the results shown in Figure 9 and Figure 10.

Figure 9. Distribution of manually annotated (inter)action types (absolute counts and percentages) of the sample improvisation.

Figure 10. Distribution of (inter)action types along relational change tendency and reaction time for mean velocity (loudness) of the sample improvisation.

Figure 9 shows the distribution of the manually annotated interaction types for the whole improvisation as well as the individual distributions for the therapist and the client. The annotation results show a strong tendency of both players to emphasize Follower-, Resister-, and Partner-type actions, leading to the conclusion that both were able to participate meaningfully in the improvisation without one player being prevalent as a leader or follower. Instead, both players shared control of the improvisation (Follower/Partner-type actions) and responded affirmatively to each other (Follower-type actions), but also maintained individual boundaries (Resister-type actions). The higher number of segments analyzed for the client indicates more changes in individual behavior, which can be interpreted as a need to adapt more often than the therapist, possibly hinting toward uncertainty and thus emphasizing otherness. The excerpt analyzed above (Figure 8) illustrates this behavior in more detail, as both players show a general tendency to follow each other, interrupted only by the leading efforts of both players at second 96, which are resolved when the client follows and adapts to the volume of the therapist.
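A distribution of this kind, with absolute counts and percentages per player, can be computed with a few lines of Python. The sketch below is illustrative only: it uses the annotations of the ten-second excerpt described for Figure 8, not the full dataset behind Figure 9, and the function name `type_distribution` is hypothetical.

```python
from collections import Counter


def type_distribution(labels):
    """Map each (inter)action type to (count, percentage of all labels)."""
    counts = Counter(labels)
    total = sum(counts.values())
    return {label: (n, 100.0 * n / total) for label, n in counts.items()}


# Annotations from the excerpt (seconds 91 to 101), in order of occurrence.
client_labels = [
    "Follower-type", "Partner-type", "Partner/Leader-type", "Follower-type",
]
therapist_labels = [
    "Resister-type", "Follower-type", "Partner-type",
    "Partner/Leader-type", "Follower-type",
]

client_distribution = type_distribution(client_labels)
therapist_distribution = type_distribution(therapist_labels)
```

For the excerpt, half of the client's annotated actions are Follower-type, which matches the reading of the excerpt given earlier; the proportions for the whole improvisation are those reported in Figure 9.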

It should be kept in mind that this annotation has been conducted intuitively and therefore does not represent the formalized annotation process of the Autonomy Microanalysis that is currently under development.

Figure 10 illustrates a possible characterization of the musical interaction types along the relational change tendency value and the reaction time, both of which were proposed above as possible characterizing features for machine learning recognition. The results shown in Figure 10 indicate that while the relational change tendency value could be a major argument for distinguishing between basic affirmative (Dependent, Follower) and contradictive (Leader, Resister) interaction types, reaction time does not seem to clearly distinguish between, for example, Leader- and Resister-type behavior for the annotation used in this pilot. This offers insights in two different ways: (1) The differentiation of Leader- and Resister-type (inter)actions based on graph annotation does not intuitively include reaction time in this example. Resisting motions of the client and therapist (for mean velocity in this improvisation) might therefore typically be understood as more controlled, non-impulsive actions. (2) Reaction time needs to be evaluated as a predictor of interaction type classification. If reaction time is assumed from Autonomy Microanalysis to be a predictor for Resister-type or Dependent-type behavior, then subsequent annotations (along the annotation guideline in development) must be conducted with a greater focus on this feature.
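Whether a single feature separates the annotated types can be checked by summarizing it per type. The following Python sketch does this for the magnitude of the change rates reported in the excerpt of Figure 8. It is a simple descriptive summary, not the analysis behind Figure 10; the function name `mean_intensity_by_type` is hypothetical, and with so few actions the resulting numbers are purely illustrative.

```python
from collections import defaultdict
from statistics import mean


def mean_intensity_by_type(actions):
    """Average absolute change rate (meanv/s) per annotated (inter)action type.

    actions: iterable of (annotation, signed_rate) pairs.
    """
    grouped = defaultdict(list)
    for label, rate in actions:
        grouped[label].append(abs(rate))
    return {label: mean(rates) for label, rates in grouped.items()}


# Annotations and rates as given for the excerpt (seconds 91 to 101).
excerpt = [
    ("Follower-type", 2.5), ("Resister-type", 0.55),
    ("Follower-type", -2.88), ("Partner-type", -2.33),
    ("Partner-type", 0.2), ("Partner/Leader-type", -2.80),
    ("Partner/Leader-type", 4.14), ("Follower-type", -1.51),
    ("Follower-type", 0.92),
]

summary = mean_intensity_by_type(excerpt)
```

The same grouping could be applied to reaction time in order to inspect whether it differs between Leader- and Resister-type actions once a larger annotated dataset is available.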

Discussion

In the next steps, this method requires intensive work to carefully integrate the Autonomy Microanalysis into the computational functioning of the Music Therapy Toolbox, which involves detailed formalization and synchronization work in the new paradigm of process-based musical interaction to design comprehensive and detailed annotation guidelines. This integration will take place in MATLAB to connect the analysis system directly to the functionality of the MTTB, which is programmed in MATLAB, without the need to convert it to another programming language. Although open-source programming languages such as R or Python would contribute to wider applicability, the direct connection to the MTTB and the focus on numerical computation for feature calculation argue in favor of MATLAB. Further, it is planned to develop a standalone version of the analysis tool to make it available independently.

In addition, one of the main future challenges is to deduce computable machine learning features relevant to interaction type recognition, alongside the creation of annotation guidelines, both of which are mandatory for the training of a precise machine learning classification model. This challenge is mainly due to the nature of the Improvisation Assessment Profile “Autonomy” gradients (Dependent, Follower, Partner, Leader and Resister). Since they were originally intended to be applied to larger improvisation excerpts (the whole or a section of an improvisation) and are applied on the basis of a complex listening analysis, there is no precise second-by-second description of how players behave when they act as Dependent, Follower, etc.

Therefore, the annotation guidelines needed to annotate single musical (inter)actions will be created as a formalization of the original gradient descriptions (as ROLEs in the SGT), complemented by an in-depth analysis of manually applied Autonomy Microanalysis annotations to specify the precise arguments used in manual role annotation.

With these implementations in place, further work can be undertaken toward a fully automated analysis system based on machine learning algorithms to provide a detailed and efficient microanalytic analysis tool for further use in research and clinical practice – from detailed analysis of single situations to statistical evaluation of the evolution of musical interaction behavior over the course of several improvisations.

Conclusion

The aim of this article was to combine different theoretical components (Social Systems Game Theory, Autonomy Microanalysis and the Music Therapy Toolbox) to develop a comprehensive analytical approach that can produce a suitable dataset for machine learning purposes to develop a tool applicable in music therapy assessment. While the theories could be successfully mapped to each other for the purpose of segmenting musical data into single musical (inter)actions and labelling these actions with the classes of Autonomy Microanalysis, central areas of further work were identified that are needed to ensure qualitative validity and effective machine learning processes. These include the development of comprehensive annotation guidelines and the deduction of concrete machine learning features for the characterization of musical actions, which will be pursued in the coming months.

Footnotes

Acknowledgments

Special thanks go to Jaakko Erkkilä (Centre of Excellence in Music, Mind, Body, and Brain (CoEMMBB), University of Jyväskylä) for the close collaboration regarding the material used.

Special thanks go to the other members and cooperation partners of the HIGH-M project: Thomas Wosch (Technical University of Applied Sciences Würzburg-Schweinfurt), Sebastian Trump and Martin Ullrich (Nuremberg University of Music), Olivier Lartillot and Anna-Maria Christodolou (RITMO Centre for Interdisciplinary Studies in Rhythm, Time and Motion), and Jörg Fachner and Clemens Maidhof (Cambridge Institute for Music Therapy Research (CIMTR)).

Action Editor

Jörg Fachner, Anglia Ruskin University, Cambridge Institute for Music Therapy Research

Peer Review

Ivan Moriá Borges, Federal University of Minas Gerais, Music Therapy.

Demian Kogutek, Wilfrid Laurier University, Faculty of Music.

Consent to Participate

Written informed consent was obtained from all participants (see “Ethical considerations”).

Consent for Publication

Written informed consent was obtained from all participants (see “Ethical considerations”).

Declaration of Conflicting Interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Ethical Approval

This research did not require ethics committee or IRB approval. This research did not involve the use of personal data, fieldwork, or experiments involving human or animal participants, or work with children, vulnerable individuals, or clinical populations. The MIDI data used in this article is a product of an ethically approved study (Erkkilä et al., 2021), including analysis of MIDI data, and has been anonymized for further use so that participants cannot be recognized.

Ethical Considerations

The processed data presented in this article are based on a material transfer agreement of the 18th of December 2023 between the University of Jyväskylä and the Technical University of Applied Sciences Würzburg-Schweinfurt. The original study was approved by the ethical board of Central Finland health care district, 07/09/2017, ref.: 17 U/2017. Jaakko Erkkilä of University of Jyväskylä was informed and gave feedback on the use of the material.

Funding

The author disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Hightech Agenda Bavaria [grant number HTA THWS Förderlinie A HIGH-M]; and the Bayerisches Wissenschaftsforum [grant number 4020304BayWISSVobig]. Publication was supported by the publication fund of the Technical University of Applied Sciences Würzburg-Schweinfurt.