Abstract

Sound categorization is automatic, yet very little is known about how this process works. Physical sound sources such as musical instruments generate sounds that carry timbral information about two mechanical components: The excitation sets the resonator into vibration, and the resonator acts as a filter that amplifies, suppresses, and radiates sound components. Given that excitation–resonator interactions are quite limited in the physical world, Modalys, a digital, physically inspired modeling platform, was used to simulate the combinations of three excitations (bowing, blowing, striking) and three resonators (string, air column, plate). This formed nine types of interactions, which are either typical (e.g., struck string) or atypical (e.g., blown plate). In two separate categorization tasks, participants chose either the excitation or the resonator they thought produced each interaction. For the typical interactions, participants accurately categorized both excitations and resonators. Atypical interactions were assimilated to typical ones: Listeners identified either the correct excitation or the correct resonator, but not both. Hierarchical clustering revealed that interactions were perceived differently depending on the categorization task. These findings suggest that unfamiliar sound sources are interpreted as conforming to familiar sound sources for which mental models exist. These studies consequently exemplify the role of timbre in sound source recognition.

Introduction

Humans encounter many different sounds, from voices to musical instruments, on a daily basis. The ability to recognize sound sources is automatic, yet it would be impossible without the timbres those sources emit. Timbre has been defined as the “attribute of auditory sensation which enables a listener to judge that two nonidentical sounds, similarly presented and having the same loudness and pitch, are disimilar [sic]” by the American National Standards Institute (ANSI S1.1-1994, p. 34). This definition tells us very little about timbre and is misleading, because it describes timbre only in terms of what it is not rather than what it is. McAdams (2013) explained that timbre perception has two characteristics. The first is that timbre carries a plethora of auditory attributes that either vary continuously over the duration of a sound (e.g., brightness, attack sharpness, nasality) or are categorical (i.e., characteristic of a specific sound). The second characteristic describes timbre as the driving force of sound source identification, such that sounding objects can be classified into categories. Accordingly, timbre bears perceptually useful information about the mechanical components of sound sources (Giordano & McAdams, 2010).

A large body of literature has investigated how timbre perception is associated with the acoustical features of a sound wave (Caclin et al., 2005; Grey & Gordon, 1978; Lakatos, 2000; McAdams et al., 1995). These acoustical features, however, do not arise spontaneously: They originate from sound sources, which have mechanical components. Less empirical attention has been given to the dependence of timbre perception on the mechanical components of acoustic musical instruments (Giordano & McAdams, 2010). The two mechanical components of interest to the current study are the excitation mechanism and resonant structure, which interact to create sound. We are particularly interested in whether listeners can recognize excitations and resonators independently of one another, or if the perception of one is dependent on the other.

Musical Instrument Identification

Listeners have the tendency to assign labels to everyday sounds, including those produced by musical instruments. Several studies have tested the recognition of musical instrument tones using identification tasks (Berger, 1964; Elliott, 1975; McAdams et al., 2023; Saldanha & Corso, 1964). Some instruments are more easily identifiable than others. For example, Saldanha and Corso (1964) found that listeners were best at identifying the clarinet, oboe, and flute, whereas identification performance was worst for the cello and bassoon. With respect to wind instruments, Berger (1964) found that listeners more easily identified the oboe, clarinet, cornet, and tenor saxophone than the flute, trumpet, alto saxophone, bassoon, French horn, and baritone horn. Musical instrument identification also depends on register: Nonmusicians judged tones separated in pitch by more than an octave as originating from different instruments even when they were played by the same instrument (Handel & Erickson, 2001, 2004; McAdams et al., 2023). Additionally, studies have reported that certain portions of sounds are important for instrument identification. Saldanha and Corso (1964) found that identification performance was best for tones that retained their onset and sustain portions, whereas the presence of the release portion contributed very little to identification performance. They also found that the presence of vibrato in the sustain portion improved identification even when the onset portion was removed from the sounds. Although Elliott (1975) similarly found that onsets were important for musical instrument identification, participants were also worse at identification when the release portion of recorded sounds was removed, suggesting that the release portion also communicates acoustically important information for identification.
Identification performance was much better for recordings that did not have any portions removed (i.e., unaltered recordings), except for the cello, which was commonly mistaken for the violin.

The experimental tasks of the above studies generally required participants to listen to isolated tones and identify them from a list of musical instrument names (except for Handel & Erickson, 2001, who asked participants whether a sequence of tones separated in pitch was played by the same instrument or by different ones). Rarely, however, do experimental tasks require participants to identify musical instrument tones based on the mechanical components that produced them. Giordano and McAdams (2010) attempted to connect instrument identification performance to the mechanical components of sound sources in their review of previous identification studies. The general pattern they found was that identification of individual instruments was better than chance, and confusions were often made between instruments of the same family. Participants very rarely confused instruments from different families. These authors suggested that larger differences in the mechanical components of sound sources might predict greater identification accuracy.

Testing the recognition of the mechanical components of sound sources is more common in studies involving impacted materials, in which objects made of various materials are set into vibration by some kind of action. Several studies have tested the identification of the materials of simulated plates and bars when they are struck (Giordano & McAdams, 2006; Lutfi & Oh, 1997; McAdams et al., 2004, 2010). Less attention, however, has been given to other excitatory actions, such as dropping, bouncing, bowing, and blowing. These studies are nonetheless important to consider, given that actions correspond to excitation mechanisms and materials are an aspect of resonant structures. They also provide insight into how listeners recover one mechanical component independently of the other.

Identification of Impacted Materials

Although the primary focus of the current research is on musical tones, most previous studies have focused on the recognition of the materials of impacted objects (i.e., mostly nonmusical sounds, except McAdams et al., 2004, who used synthesized xylophone bars) (Aramaki et al., 2009; Giordano & McAdams, 2006; Klatzky et al., 2000; Lakatos et al., 1997; Lutfi & Oh, 1997; McAdams et al., 2010). Fewer studies have examined the identification of different actions, such as bouncing (Warren & Verbrugge, 1984), or the role of actions in material identification (Hjortkjær & McAdams, 2016; Lemaitre & Heller, 2012).

Listeners are generally good at differentiating between the materials of impacted objects across gross categories such as metal–glass and plexiglass–wood, even across different object sizes (Giordano & McAdams, 2006). However, materials within the same gross category are more often confused (Lemaitre & Heller, 2012). McAdams et al. (2010) examined material distinctions within the gross category of metal–glass using dissimilarity ratings and a categorization task. The researchers simulated impacted plates that varied in two material properties: wave velocity (related to elasticity and mass density) and damping (thermoelastic and viscoelastic). Because the damping was interpolated between the two damping models, the plates represented a continuum of materials between aluminum and glass. Dissimilarity ratings depended on information corresponding to both wave velocity and damping, but categorization performance depended on damping alone. Density and elasticity change the modal frequencies, but so does object size, so modal frequencies are not a reliable cue to material per se. Listeners thus based their judgments on the acoustical features that were relevant to the perceptual task (McAdams et al., 2010). Research on how sound-producing actions affect timbre perception is less common, even though listeners are likely more sensitive to actions than to the materials or objects involved in sound production (Lemaitre & Heller, 2012; McAdams, 2019). One exception is a study by Warren and Verbrugge (1984), which found that listeners could accurately distinguish between breaking and bouncing glass. Another is a study demonstrating that listeners could distinguish between different speeds of rolling balls, although judgments of speed depended on the size of the ball (Houben et al., 2004).

More recently, research has focused on sounds that are produced by combining different actions and materials. In Lemaitre and Heller's (2012) study, four different actions (scraping, rolling, hitting, and bouncing) were applied to cylinders made of four different materials (wood, plastic, metal, and glass). In one experiment, listeners rated sounds based on how well they conveyed different materials and actions. The ratings supported previous findings demonstrating material confusion within gross categories of wood–plastic or metal–glass. However, listeners consistently rated the resemblance of the actions correctly regardless of gross category membership (i.e., sustained versus discrete actions). The second experiment was an identification task, which also measured reaction times. Action identification was more accurate and faster than material identification, likely because the acoustic components determining the type of excitation are in the attack portion of the sound, whereas determining the material might require participants to listen for acoustical cues such as damping, which occur much later in the sound. These findings demonstrate the informational value of the mechanical components of sound sources and a greater sensitivity to actions than materials.

Hjortkjær and McAdams (2016) employed a stimulus design similar to that of Lemaitre and Heller (2012). The stimuli combined three actions (drop, rattle, and strike) with plates made of three materials (wood, metal, and glass). Multidimensional scaling revealed that dissimilarity ratings of the stimuli were explained by two dimensions. One dimension separated wood from metal and glass and was correlated with changes in spectral centroid (i.e., spectral center of gravity). The second dimension separated the three actions and was correlated with variability in temporal centroid (i.e., center of gravity of the energy distribution across time). Consistent with Lemaitre and Heller's (2012) findings, material identification was better between than within gross categories (i.e., wood versus metal–glass), and distinctions among actions were clear regardless of gross action category membership (i.e., single versus multiple impacts). However, confusions were made for materials with similar spectral centroids and actions with similar temporal centroids. Hjortkjær and McAdams’s (2016) findings highlight the influence of sound source mechanics on acoustical properties, which in turn contribute to the timbres of generated sounds.

Interaction of Excitations and Resonators in Musical Tones

Much like impacted materials, musical instruments involve interactions between two closely related macroscopic mechanical components: the excitation mechanism and the resonant structure. For example, a clarinet consists of an interaction between blowing (excitation) and an air column (resonator). An interaction is therefore the coupling of a resonator (e.g., string, air column, plate) to a controllable energy source (e.g., bowing with a frictional bow, blowing a vibrating reed, striking with a hammer) (Fletcher, 1999). Interactions that produce sustained sounds are nonlinear (McIntyre et al., 1983), meaning that the output does not change in proportion to the input (Fletcher, 1999). Struck strings have been described as nearly linear given that only the initial hammer contact is considered nonlinear, and the coupling of struck plates has been described as involving no nonlinearity (Fletcher, 1999). Excitation–resonator interactions of musical instruments are very specific: For example, air columns can be blown but not bowed. This was demonstrated in a recent study that used Modalys (Dudas, 2014), a physically inspired modeling synthesizer, to simulate combinations of three types of excitations (bowing, blowing, striking) and three types of resonators (string, air column, plate), forming nine classes of excitation–resonator interactions (see Huynh et al., 2024, for a detailed explanation of the synthesis design). Four of these interactions were typical of acoustic musical instruments (bowed string, blown air column, struck string, struck plate). These interactions are deemed typical because they are frequently encountered. Typical interactions are also mechanically plausible: They are physically modeled after acoustic musical instruments, and listeners can conceptualize how they produce sound. The remaining five interactions were atypical: bowed air column, bowed plate, blown string, blown plate, and struck air column.
Atypical interactions can also be described by their familiarity and mechanical plausibility. Bowed plates and struck air columns might be more familiar to musicians than to nonmusicians, given musicians’ exposure to extended techniques (e.g., a bowed xylophone bar or a slap tongue on the clarinet or saxophone). Musicians might therefore be more likely than nonmusicians to judge the bowed plate and struck air column as mechanically plausible. However, as synthesized in Modalys, all five atypical interactions are physically impossible. Modalys synthesizes the bowed plate such that the bow passes through the plate to excite it, whereas a xylophone bar is bowed at its edge. A slap tongue is normally applied at the mouthpiece of a clarinet or saxophone, but Modalys applies the striking excitation somewhere along the length of an air column that is not connected to a mouthpiece. The other three atypical interactions (bowed air column, blown string, and blown plate) are even less likely to be considered familiar and mechanically plausible.

To test the efficacy of the synthesis design employed by Huynh et al. (2024), their listeners rated the extent to which exemplars of the nine interactions resembled the three excitations (Experiment 1) or resonators (Experiment 2) in separate experiments. For the stimuli produced by typical interactions, listeners assigned higher resemblance ratings for both the excitations and resonators that actually produced them. However, listeners rated the atypical interactions as resembling either the correct excitation

Mental Models of Musical Sound Sources

McAdams and Goodchild (2017) argued that listeners form mental models of sound sources, even when their timbral characteristics vary with changes in pitch, dynamics, and other parameters. A mental model is an internal representation of how a system (such as a musical instrument) behaves in the world. Mental models are closely tied to Smalley's (1997) concept of source bonding: As sounds are heard, listeners cannot help but attempt to recover the events and sources that produced them and to organize them into some kind of mental framework. Smalley (1997) extends the concept to explain that sounds are considered to be related if they seemingly share similar origins. Sounds produced by similar events and sources thus shape and refine the mental model of a particular sound source. Of course, mental models of musical instruments can be acquired and shaped differently depending on one's musical background. Musicians interact with their instruments daily, allowing them to understand the techniques and restrictions of sound production. Nonmusicians can generally understand how a musical instrument is played through passive exposure, but they may not understand the specific techniques or restrictions of sound production as well. Researchers have found that listeners categorize musical sound sources very quickly (Agus et al., 2012), which is consistent with the existence of mental models of musical sound sources. It is important to note that the current study explores excitation–resonator interactions outside their typical contexts. Listeners have likely developed mental models for typically combined excitations and resonators through frequent exposure. However, the atypical interactions may be too complex for listeners to form new mental models for them, as the findings of Huynh et al. (2024) suggest.

The Current Study

Previous studies have highlighted the role of sound source mechanics in timbre perception of mostly nonmusical sounds (Aramaki et al., 2009; Giordano & McAdams, 2006; Hjortkjær & McAdams, 2016; Klatzky et al., 2000; Lemaitre & Heller, 2012; Lutfi & Oh, 1997; McAdams et al., 2004, 2010; Warren & Verbrugge, 1984). Huynh et al. (2024) examined how listeners perceived sounds produced by typical and atypical excitation–resonator interactions that were synthesized with physically inspired modeling: Listeners used a continuous scale to rate the extent to which the interactions resembled the excitations and resonators that did or did not produce them. A categorization task on the same stimulus set as Huynh et al. (2024), however, might examine more directly whether and how the atypical interactions fit into listeners’ mental models of sound sources. We also examine the role of musical experience, if any, in categorization accuracy. Given that mental models of sound sources can be shaped by a variety of musical experiences that are not limited to the number of years of formal musical training, we used a self-report inventory, the Goldsmiths Musical Sophistication Index (Gold-MSI), to assess individual differences in listeners’ musical backgrounds (Müllensiefen et al., 2014; see the Participants section for more information). Gold-MSI scores were added to the analyses as covariates to examine whether musical experience confers an advantage in correctly categorizing the excitations and/or resonators used to create the interactions.

Four hypotheses are proposed for the current study. First, striking and the string will be the easiest excitation and resonator to identify, respectively. Striking is the only impulsive excitation in the current stimulus set, and impulsive excitations are known to be easily distinguished from sustained ones (Giordano & McAdams, 2010; Lemaitre & Heller, 2012). The string is the only resonator involved in two typical interactions, because strings are both struck and bowed in acoustic instruments. The air column and plate are each associated with only one typical interaction, which should make them harder to identify across all interactions. The second hypothesis proposes that excitations and resonators might not be processed independently of one another in the way that actions and materials are for impacted materials. Mechanical plausibility and familiarity through exposure play a large role in the perception of natural sound-producing events. The sounds produced by impacted materials are mechanically plausible, meaning listeners can easily interpret how they were produced without knowing the exact actions and materials involved. Given that the atypical interactions in the current study are physically impossible, their sound production will be ambiguous to most listeners. This leads to the third hypothesis: The perception of atypical excitation–resonator interactions will conform to existing mental models of sound sources, with atypical interactions being assimilated to typical ones. This prediction would complement the findings of Huynh et al. (2024) by using a categorization task rather than a resemblance rating task. For example, the bowed air column and blown plate might be categorized as being produced by blowing an air column, since these atypical interactions were previously associated with higher blowing and air column resemblance ratings.
Furthermore, assimilation to typical interactions would indicate that listeners seek invariant features shared by an ambiguous sound source and one for which a mental model exists. Finally, the fourth hypothesis is that atypical interactions will be categorized and perceived differently depending on whether listeners’ attention is directed to the excitations or to the resonators. For example, the bowed plate might assimilate to mental models of either (1) the bowed string when sounds are categorized based on their excitations or (2) the struck plate when categorization is based on resonators. Because it is difficult for listeners to conceptualize how the atypical interactions produce sound, they might instead interpret them as something known or familiar. This interpretation, however, will depend on where their attention is directed.

Thus, this paper aims to investigate how sounds produced by atypical excitation–resonator interactions are incorporated into mental models of sound sources by comparing their perception to that of the typical interactions. The current study tests whether listeners can detect the invariant features among the different excitations or resonators regardless of the complementary mechanical component they are combined with, or whether listeners’ interpretations of the stimuli conform to what they are already familiar with. The experiment involves a two-part categorization task. Participants listened to each sound in the stimulus set and categorized it based on its excitation in one part and its resonator in the other. Responses were analyzed in terms of categorization accuracy. Listeners’ confusions were analyzed to infer which typical interactions the atypical ones generally conform to and whether the types of confusions depend on whether excitations or resonators are being categorized.
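As a minimal sketch of how categorization accuracy and confusions can be tabulated for one block, assume that responses are stored as one chosen category label per trial. The function names are hypothetical, the toy labels below are invented for illustration and are not data from this study, and the sketch leaves out the covariate analysis with Gold-MSI scores.

```python
def confusion_matrix(true_labels, responses, categories):
    """Count trials per (actual category, chosen category) pair.

    Rows index the category that actually produced the sound;
    columns index the category the listener chose.
    """
    if len(true_labels) != len(responses):
        raise ValueError("expected exactly one response per trial")
    index = {c: i for i, c in enumerate(categories)}
    matrix = [[0] * len(categories) for _ in categories]
    for actual, chosen in zip(true_labels, responses):
        matrix[index[actual]][index[chosen]] += 1
    return matrix


def proportion_correct(matrix):
    """Diagonal counts (correct categorizations) divided by all trials."""
    total = sum(sum(row) for row in matrix)
    correct = sum(matrix[i][i] for i in range(len(matrix)))
    return correct / total


# Toy excitation-block example (invented labels, four trials only).
EXCITATIONS = ["bowing", "blowing", "striking"]
actual = ["bowing", "blowing", "striking", "blowing"]
chosen = ["bowing", "bowing", "striking", "blowing"]
m = confusion_matrix(actual, chosen, EXCITATIONS)
```

Off-diagonal cells hold the confusions. Building the matrix separately for each interaction type would show, for instance, which category an atypical interaction is most often assigned to.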

Method

Participants

Forty-seven participants (35 females, 11 males, 1 self-identified as “other”) were recruited from either a mailing list or web-based advertisement certified by McGill University. They reported having normal hearing, which was confirmed by a pure-tone audiometric test with octave-spaced frequencies from 125 to 8,000 Hz at a hearing threshold of 20 dB HL relative to a standardized hearing threshold (International Organization for Standardization, 2004; Martin & Champlin, 2000). They had an average age of 22.8 years (

Apparatus

Listeners completed the audiometric test and main experiment in an IAC model 120act-3 double-walled audiometric booth (IAC Acoustics, Bronx, NY). The experiment ran on a Mac Pro computer running OS X (Apple Computer, Inc., Cupertino, CA). The stimuli were presented over Sennheiser HD280 Pro headphones (Sennheiser Electronic GmbH, Wedemark, Germany) and were amplified through a Grace Design m904 monitor (Grace Digital Audio, San Diego, CA). The physical levels of the sounds were measured by coupling the headphones to a Brüel & Kjær Type 4153 Artificial Ear connected to a Type 2205 sound-level meter with A-weighting (Brüel & Kjær, Nærum, Denmark). The sounds varied in level from 57.8 to 72.8 dB SPL. The experimental task was programmed in the PsiExp computer environment (Smith, 1995).

Stimuli

Modalys, a digital, physically inspired modeling synthesizer, was developed by the Musical Acoustics Team at the Institut de Recherche et Coordination Acoustique/Musique (IRCAM) in Paris, France. Modalys has the advantage of simulating different excitations and resonators without the resulting sounds necessarily being perceived as an existing musical instrument (Dudas, 2014; Eckel et al., 1995). It uses modal synthesis to isolate the parameters of an excitation and of a resonator and models the acoustical outcome of their interaction. Nine classes of interactions between three excitations (bowing, blowing, striking) and three resonators (string, air column, plate) were simulated. Four of these interactions are considered typical because they can be produced in the physical world: bowed string (BoSg), blown air column (BlAc), struck string (SkSg), and struck plate (SkPl). The remaining five interactions, namely bowed air column (BoAc), bowed plate (BoPl), blown string (BlSg), blown plate (BlPl), and struck air column (SkAc), either cannot be physically produced and are mechanically implausible (BoAc, BlPl, BlSg) or are rarely encountered by listeners (BoPl, SkAc), so they are considered atypical interactions. Given that the atypical interactions are rarely or never encountered in everyday music listening, it was difficult to anticipate how they would sound. Moreover, physically inspired modeling of atypical interactions is quite uncommon (for notable exceptions, see Böttcher et al., 2007, for musical sounds and Conan et al., 2014, for continuously excited objects). Consequently, an exhaustive approach was implemented: 400 versions of each excitation–resonator interaction type were synthesized so that a variety of timbres could be chosen for each interaction type. The stimulus design is described in detail in Huynh et al. (2024); a summary is given here.
To maintain the experimental control necessary for the experiments, the physical parameters pertaining to each resonator and the temporal envelope of each excitation were kept as consistent as possible. For example, the same string was used for each excitation applied to it, and the same temporal envelopes for bowing were applied to each resonator. Given that Modalys provides users with default examples of typical interactions upon installation, those of the bowed string, blown air column, and struck plate were used first. The goal was to isolate the parameters of each excitation from those of each resonator in their typical interactions before simulating them in atypical contexts. In general, Modalys estimates the resonator properties by computing the modes that would be present during vibration. Excitation properties were estimated by solving a time equation that predicts the temporal evolution corresponding to its movement.
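Modal synthesis represents a resonator as a set of vibration modes, each with its own frequency, amplitude, and damping. The sketch below illustrates only the idea for a freely decaying resonator, summing exponentially decaying sinusoids. It is not Modalys's implementation, which also solves the time-domain coupling between the excitation and the resonator. The function name, the 44.1-kHz sample rate, and the example mode values are assumptions chosen for illustration.

```python
import numpy as np


def modal_response(freqs_hz, amps, decay_s, duration_s=2.0, sr=44100):
    """Free vibration of a resonator as a sum of decaying modes.

    freqs_hz: modal frequencies in Hz
    amps:     initial amplitude of each mode
    decay_s:  time constant of each mode in seconds (amplitude falls to 1/e)
    """
    t = np.arange(int(duration_s * sr)) / sr
    y = np.zeros_like(t)
    for f, a, tau in zip(freqs_hz, amps, decay_s):
        y += a * np.exp(-t / tau) * np.sin(2 * np.pi * f * t)
    return y


# Illustrative values only: three harmonic modes at 155, 310, and 465 Hz,
# with higher modes weaker and shorter-lived.
y = modal_response([155.0, 310.0, 465.0], [1.0, 0.5, 0.33], [0.8, 0.5, 0.3])
```

Changing the per-mode decay times is one way that damping differences, such as those separating the simulated materials discussed earlier, appear in the synthesized signal.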

The resonators were simulated with three different models, so the sounds they produce should differ in their harmonic content. The string was a thin wire fixed at its ends and was expected to produce sounds with modes at integer multiples of the fundamental frequency. The air column was cylindrical, open at one end, and closed at the other, so its modes should lie primarily at odd-integer multiples of the fundamental frequency. The plate was rectangular, rigid, made of metal, and fixed at its edges. When excited impulsively, the plate should produce primarily inharmonic content. However, if a sustained excitation was applied to it, all the modes of the plate would initially be activated; then, the fundamental frequency would correspond to one of the modes, and the other modes would be almost harmonically related to it.
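The expected mode patterns can be illustrated with textbook formulas for idealized resonators. This is a sketch under stated assumptions, not the models Modalys actually computes. The plate is assumed here to have simply supported edges because that case has a closed form; the plate in the study is described as fixed at its edges, which has no simple closed form, although its modes are also inharmonic. All function names are hypothetical.

```python
def string_modes(f0, n_modes):
    """Ideal string fixed at both ends: integer multiples of f0."""
    return [n * f0 for n in range(1, n_modes + 1)]


def closed_open_tube_modes(f0, n_modes):
    """Ideal cylinder closed at one end and open at the other: odd multiples of f0."""
    return [(2 * n - 1) * f0 for n in range(1, n_modes + 1)]


def plate_modes(f11, lx, ly, max_index):
    """Thin rectangular plate with simply supported edges (assumed, for a closed form).

    f_mn is proportional to (m / lx)**2 + (n / ly)**2; the result is scaled
    so that the lowest mode (m = n = 1) equals f11.
    """
    ref = (1 / lx) ** 2 + (1 / ly) ** 2
    freqs = [
        f11 * ((m / lx) ** 2 + (n / ly) ** 2) / ref
        for m in range(1, max_index + 1)
        for n in range(1, max_index + 1)
    ]
    return sorted(freqs)


f0 = 155.0
print("string:", string_modes(f0, 4))
print("tube:  ", closed_open_tube_modes(f0, 4))
print("plate ratios:", [round(f / f0, 2) for f in plate_modes(f0, 1.0, 1.5, 2)])
```

For a plate with a 1:1.5 aspect ratio, the ratios to the lowest mode include values such as 1.92 and 3.08 that fall between harmonics, which is what "inharmonic" means here.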

The bowing excitation was primarily made up of two temporal envelopes; one controlled the bow speed and the other controlled the bow pressure. Variations in these two parameters are known to produce considerable changes in timbre: Loudness is mainly controlled by the bow speed, and brightness is mainly controlled by the bow pressure (Halmrast et al., 2010). Minor adjustments were made to these temporal envelopes during their interaction with the string to make the bowing sound as realistic as possible. Then, the bowing excitation could be applied to the air column and plate (having been isolated from their interactions with blowing and striking, respectively). Twenty values of the maximum bow speed were combined with 20 values of the maximum bow pressure. This formed 400 versions for each of the BoSg, BoAc, and BoPl interactions.
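The exhaustive design crosses 20 values of one control parameter with 20 values of another, giving 400 parameter sets. The sketch below builds such a grid and scales a fixed envelope shape to each maximum value, so the temporal envelope stays the same across resonators while only its peak changes. The parameter ranges, units, and envelope breakpoints are hypothetical, because the actual values are not given in this section, and the sketch does not use the Modalys control interface.

```python
import itertools

import numpy as np


def parameter_grid(range_a, range_b, steps=20):
    """All combinations of `steps` evenly spaced values of two parameters."""
    a_values = np.linspace(range_a[0], range_a[1], steps)
    b_values = np.linspace(range_b[0], range_b[1], steps)
    return list(itertools.product(a_values, b_values))


def scaled_envelope(breakpoints_s, shape, peak, duration_s=2.0, sr=1000):
    """Piecewise-linear control envelope rescaled so that its maximum equals `peak`."""
    t = np.arange(int(duration_s * sr)) / sr
    env = np.interp(t, breakpoints_s, shape)
    return peak * env / env.max()


# Hypothetical ranges; the study's actual ranges are not reported here.
MAX_BOW_SPEED = (0.05, 1.0)      # assumed units: m/s
MAX_BOW_PRESSURE = (0.1, 2.0)    # assumed units: N

grid = parameter_grid(MAX_BOW_SPEED, MAX_BOW_PRESSURE)
speed_max, pressure_max = grid[0]
# Same envelope shape for every resonator; only the peak value changes.
bow_speed = scaled_envelope([0.0, 0.2, 1.5, 1.8], [0.0, 1.0, 1.0, 0.0], speed_max)
bow_pressure = scaled_envelope([0.0, 0.1, 1.5, 1.8], [0.0, 1.0, 1.0, 0.0], pressure_max)
```

The same helper applies to blowing (maximum breath pressure crossed with maximum embouchure pressure), again yielding 400 parameter sets per interaction.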

Blowing through a model of a clarinet mouthpiece with a single reed was controlled by two temporal envelopes for the breath pressure and embouchure pressure. The embouchure pressure describes the control of the height of the initial reed opening. Varying breath pressure and embouchure pressure is known to change the resulting timbre, such that a higher breath pressure and a smaller reed opening generate brighter sounds (Coyle et al., 2015). Adjustments to the temporal envelopes of the breath pressure and embouchure pressure were initially made when they were applied to an air column. Once a realistic blowing sound was achieved, the two temporal envelopes were then applied to the string and plate. Twenty values for both the maximum breath pressure and maximum embouchure pressure were combined with one another, generating 400 versions for each of the BlAc, BlSg, and BlPl interactions.

The striking excitation was involved in two typical interactions. When applied to the string and plate, the temporal envelope of the hammer force was adjusted to generate a realistic sound. Then, the same temporal envelope was applied to the air column. Twenty values of the maximum hammer force were combined with 20 values corresponding to the position at which the sound output was recorded along the length of the string or air column. Consequently, 400 versions of each of the SkSg and SkAc interactions were synthesized. For SkPl, Modalys normalizes the output of the sounds, so changing the hammer force did not generate considerable changes in the resulting timbre. Instead, 20 values of both the horizontal and vertical coordinates at which the sound output was recorded, emphasizing different vibration modes, were combined in the initial synthesis of SkPl, forming 400 versions of this interaction.
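Varying the recording position changes the timbre because each mode has a spatial shape. For an ideal string fixed at both ends, mode n has the shape sin(nπx/L), so an output point at position x picks up that mode in proportion to |sin(nπx/L)|. The sketch below uses this textbook relation as an assumption about how the recorded output depends on position; it does not reproduce Modalys's internals, and the evenly spaced layout of the 20 positions is hypothetical.

```python
import math


def string_mode_weights(x_rel, n_modes):
    """Relative pickup weight of modes 1..n_modes of an ideal fixed-fixed string
    observed at relative position x_rel (0 < x_rel < 1) along its length."""
    return [abs(math.sin(n * math.pi * x_rel)) for n in range(1, n_modes + 1)]


# Twenty evenly spaced output positions, excluding the fixed ends (assumed layout).
positions = [(i + 1) / 21 for i in range(20)]

for x in (positions[0], 0.5):
    weights = string_mode_weights(x, 6)
    print(f"x = {x:.3f} L:", [round(w, 2) for w in weights])
```

At the midpoint, every even-numbered mode vanishes, whereas a position near the end weights higher modes relatively more. For a simply supported rectangular plate, the analogous weight would be |sin(mπx/Lx) sin(nπy/Ly)|, which is why varying both coordinates of the SkPl output point emphasizes different modes.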

In the final stimulus set, three of the 400 versions were selected as exemplars for each interaction. These 27 exemplars were chosen in the previous study by Huynh et al. (2024), and the same exemplars are used in the current study. Selecting the three exemplars first involved noting which of the 400 versions of each interaction produced an audible output. This generated a space of perceptible sounds for the physically inspired model of each interaction (see the Supplementary Materials of Huynh et al., 2024, for plots of the perceptible sounds of each interaction). The authors then noted the degree to which each perceptible sound resembled the excitation and resonator that produced it. After listening to all the sounds reported to resemble the excitation and resonator of each interaction, they informally chose three exemplars that conveyed the variability of timbres within that interaction. The lowest vibration mode of each stimulus was 155 Hz, which corresponds to a pitch of E-flat 3. The stimuli were modified in Matlab (MathWorks, Natick, MA) to have a duration of 2 s. Silence was added to the ends of stimuli shorter than 2 s. Stimuli longer than 2 s were trimmed because the excitation had already been terminated by its temporal envelopes and the sound no longer conveyed any new acoustic information beyond that duration. Additionally, to prevent an abrupt cutoff, a 50-ms exponential fade-out was applied to each stimulus. Spectrograms of one exemplar of each interaction are shown in Figure 1.

Figure 1. Spectrograms for one exemplar of each interaction type, organized by the excitations (in rows) and resonators (in columns) that produced them.

Procedure

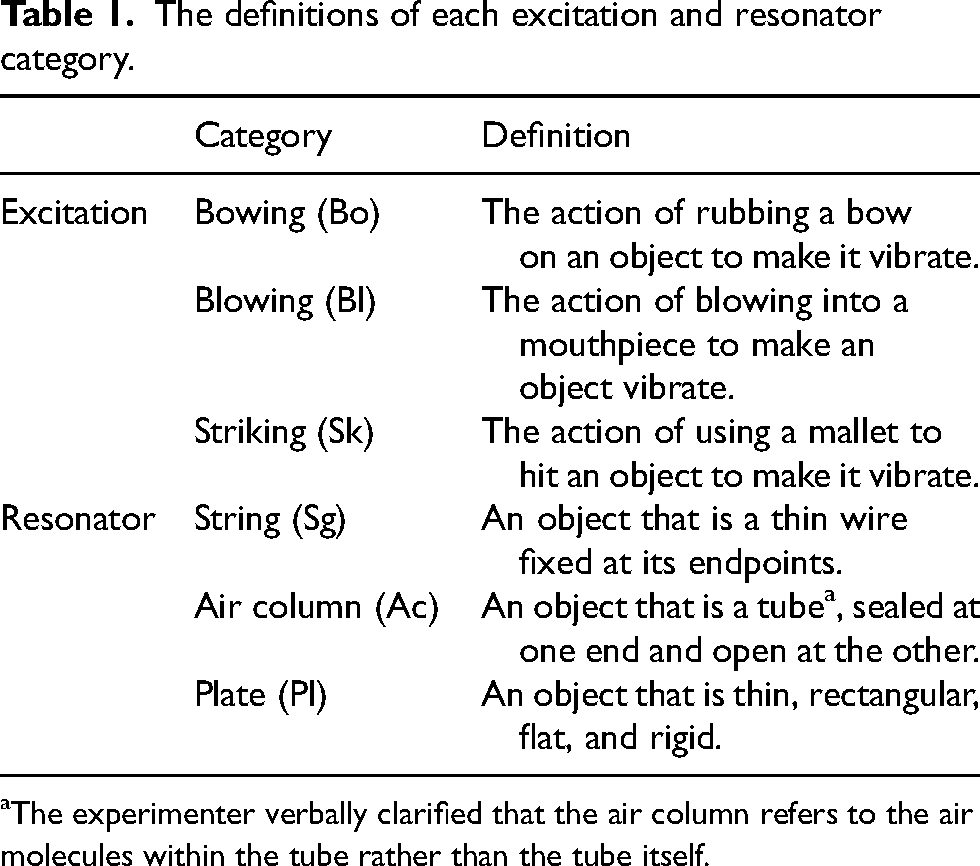

Prior to beginning the experiment, the listeners were given written definitions of each excitation and resonator type (Table 1). Excitations were defined as types of actions performed to set a resonator into vibration. Resonators were defined as types of objects that vibrate and radiate sound components. After receiving written and verbal instructions for the main experiment, the participants completed two practice blocks, one for excitation categorization and one for resonator categorization. The practice blocks used three stimuli produced by typical interactions: BoSg, BlAc, and SkPl. They were synthesized to have a lowest vibration mode of 220 Hz, corresponding to a pitch of A3. These interactions were chosen because, together, they covered each of the three excitations and each of the three resonators exactly once. Each practice block comprised three trials, one for each excitation or resonator category. The practice blocks were completed with the experimenter present so that the participants could ask questions about the instructions or interface before beginning the main experiment. The practice blocks followed the same format and paradigm as the experimental blocks.

Table 1. The definitions of each excitation and resonator category.

The experimenter verbally clarified that the air column refers to the air molecules within the tube rather than the tube itself.

At the beginning of the experimental blocks, the participants listened to the full range of stimuli to get a sense of the variability of the excitations and resonators producing the sounds. The sounds were presented with an inter-onset interval (IOI) of 2 s in a pseudo-random order such that two successive sounds were never produced by the same excitation–resonator interaction (e.g., a BoSg was never followed by another BoSg). The experiment was divided into two blocks: One concerned the categorization of the excitation of each stimulus, and the other concerned the categorization of its resonator. The order of the two blocks was counterbalanced across participants. Each block comprised 27 trials (one per stimulus), giving a total of 54 trials in the entire experiment. In each trial, the participants heard a stimulus only once. Three boxes on the screen displayed the names of the three excitation or resonator categories, depending on the block. The positions of the three boxes were randomized across participants but remained constant over trials for each participant. The participants were instructed to click the box corresponding to the excitation or resonator they thought produced the sound. The order of stimulus presentation within each block was pseudo-randomized such that two stimuli produced by the same interaction were not presented successively. Once the participants were satisfied with their choice, they proceeded to the following trial.
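The ordering constraints can be sketched as follows, assuming simple rejection sampling: the list is reshuffled until no two neighbors share an interaction. The text does not say how the pseudo-random orders were actually generated. `session_plan`, which alternates block order by participant number, is a hypothetical counterbalancing scheme.

```python
import random

EXCITATIONS = ["Bo", "Bl", "Sk"]
RESONATORS = ["Sg", "Ac", "Pl"]
# 9 interactions x 3 exemplars = 27 stimuli, labelled e.g. ("BoSg", 1).
STIMULI = [(e + r, k) for e in EXCITATIONS for r in RESONATORS for k in (1, 2, 3)]


def constrained_order(stimuli, rng, max_tries=10_000):
    """Reshuffle until no two successive stimuli share an interaction (rejection sampling)."""
    order = list(stimuli)
    for _ in range(max_tries):
        rng.shuffle(order)
        if all(a[0] != b[0] for a, b in zip(order, order[1:])):
            return order
    raise RuntimeError("no valid order found")


def session_plan(participant_id, seed=0):
    """Hypothetical per-participant plan: block order, fixed box layout, and trial orders."""
    rng = random.Random(seed + participant_id)
    blocks = ["excitation", "resonator"]
    if participant_id % 2:  # counterbalance block order across participants
        blocks.reverse()
    # Box positions are drawn once per participant and reused on every trial of that block.
    layout = {"excitation": rng.sample(EXCITATIONS, 3),
              "resonator": rng.sample(RESONATORS, 3)}
    return {b: {"boxes": layout[b], "trials": constrained_order(STIMULI, rng)} for b in blocks}
```

With 27 stimuli in nine groups of three, a random shuffle satisfies the constraint often enough that rejection sampling terminates quickly.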

Analyses

The correct responses for excitation categorization and for resonator categorization were analyzed in two separate binomial logistic regressions using generalized linear mixed effects modeling. We chose a generalized linear mixed model (GLMM; i.e., a multilevel model) because the data contained non-independent observations: Each participant made a categorization response for every stimulus. Moreover, multilevel modeling can account for random effects introduced by within-participant predictors, such as the excitation and resonator categories of the current stimulus set. The participants’ musical training scores from the Gold-MSI were included as a between-participants predictor because each listener contributed a single score that did not vary across trials. The formalization of the regression models used for the correct response of excitation categorization and of resonator categorization is explained in the Appendix.

Two regression models were computed, one with the correct response of excitation categorization and the other with the correct response of resonator categorization as the dependent variable, using the lmerTest package in R (Kuznetsova et al., 2017). To find the maximal random effects structure justified by the correct categorization responses, we followed the approach proposed by Barr et al. (2013). That is, if the model with all random effects resulted in a singular fit, the random slope that either had a very low variance (i.e., close to 0) or was highly correlated with another random slope was removed. Random slopes that contributed to the singular fit were removed one by one until the model no longer had a singular fit. According to Schielzeth and Forstmeier (2009), this technique guards against the inflated Type I error rates to which random-intercept-only mixed effects models are prone. Consequently, the random slopes for each of the resonator types were dropped from the regression model for the correct response of excitation categorization. For the regression model of the correct response of resonator categorization, no random slopes were removed because their inclusion did not result in a singular fit. (See Equations 8 and 9 of the Appendix for the formalization of the regression models for excitation categorization and resonator categorization, respectively.) Selected fixed effects of the two regression models, accounting for random effects, are reported in the Results section to examine how the excitations and resonators of the stimulus set and the degree of musical training influence the accuracy of excitation and resonator categorization.
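The pruning rule can be sketched as a small decision function. This illustrates the logic only; it is not the authors' R code. The tolerances `var_tol` and `corr_tol` are hypothetical. `prune_random_slopes` assumes a user-supplied `fit` callback that refits the model and returns whether the fit is singular, together with the estimated slope variances and correlations.

```python
def choose_slope_to_drop(variances, correlations, var_tol=1e-4, corr_tol=0.99):
    """Pick one random slope to remove from a singular fit, or None if no rule applies.

    variances: {slope_name: estimated variance}
    correlations: {(slope_a, slope_b): estimated correlation}
    The tolerances are illustrative assumptions, not values from the study.
    """
    # Rule 1: a slope whose variance is essentially zero is removed first.
    near_zero = [s for s, v in variances.items() if v < var_tol]
    if near_zero:
        return min(near_zero, key=variances.get)
    # Rule 2: otherwise drop the lower-variance member of the most highly correlated pair.
    (a, b), r = max(correlations.items(), key=lambda kv: abs(kv[1]),
                    default=((None, None), 0.0))
    if abs(r) >= corr_tol:
        return a if variances[a] <= variances[b] else b
    return None


def prune_random_slopes(fit, slopes):
    """Refit, removing one slope at a time, until `fit` no longer reports a singular fit.

    `fit(slopes)` is a hypothetical callback returning (is_singular, variances, correlations).
    """
    slopes = list(slopes)
    while True:
        singular, variances, correlations = fit(slopes)
        if not singular:
            return slopes
        drop = choose_slope_to_drop(variances, correlations)
        if drop is None:
            raise RuntimeError("singular fit with no obvious slope to drop")
        slopes.remove(drop)
```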

Results

Categorization Accuracy

The current study examined how well the participants categorized the excitations and resonators of the nine types of interactions without knowing how they were produced. Figure 2 shows the mean percent correct of excitation and resonator categorization for each interaction type. The participants were best at identifying striking regardless of the resonator on which it acted. This was not surprising because striking was the only impulsive excitation, whereas there were two sustained excitations. The accuracy of bowing and blowing categorization depended on the resonator of the interaction. The listeners were generally better at identifying the string than the air column or plate. The string was more versatile, being involved in two typical interactions in the stimulus set (i.e., bowed and struck), whereas the air column and plate were each involved in only one. A general pattern of excitation and resonator categorization emerged among the atypical interactions: The participants were better at categorizing either the excitation or the resonator but not both. They more often identified the correct excitations than the correct resonators for the struck air column (SkAc) and blown plate (BlPl). In contrast, the resonators of the bowed air column (BoAc), bowed plate (BoPl), and blown string (BlSg) were more often correctly categorized than their excitations.

Figure 2. Mean percent correct of categorizing the excitations and resonators of each interaction type. Error bars represent one standard error of the mean. Bo = bowing, Bl = blowing, Sk = striking, Sg = string, Ac = air column, Pl = plate.
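Each participant heard three exemplars per interaction. One natural way to obtain the plotted means and error bars is therefore to average within each participant first, then compute the mean and standard error across participants. The sketch below assumes that aggregation order and a simple (participant, interaction, correct) trial format; the text specifies neither.

```python
import statistics
from collections import defaultdict


def percent_correct_by_interaction(trials):
    """Mean percent correct and SEM across participants for each interaction.

    trials: iterable of (participant, interaction, is_correct) tuples, one per trial.
    Each participant's score for an interaction is first averaged over its exemplars.
    """
    hits = defaultdict(list)  # (interaction, participant) -> [0/1 per exemplar]
    for participant, interaction, correct in trials:
        hits[(interaction, participant)].append(int(correct))

    scores = defaultdict(list)  # interaction -> [percent correct of each participant]
    for (interaction, _), h in hits.items():
        scores[interaction].append(100.0 * sum(h) / len(h))

    summary = {}
    for interaction, values in scores.items():
        mean = statistics.mean(values)
        # SEM = sample standard deviation / sqrt(number of participants).
        sem = statistics.stdev(values) / len(values) ** 0.5 if len(values) > 1 else float("nan")
        summary[interaction] = (mean, sem)
    return summary
```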

As described in the Analyses section, we computed two separate binomial logistic regressions, with the correct response of excitation categorization and the correct response of resonator categorization as dependent variables, using generalized linear mixed effects modeling. The excitation types, resonator types, their interaction, and the participants’ Gold-MSI musical training scores were included as predictors in both regression models.

For the correct response of excitation categorization, the effect of musical training scores was not statistically significant,

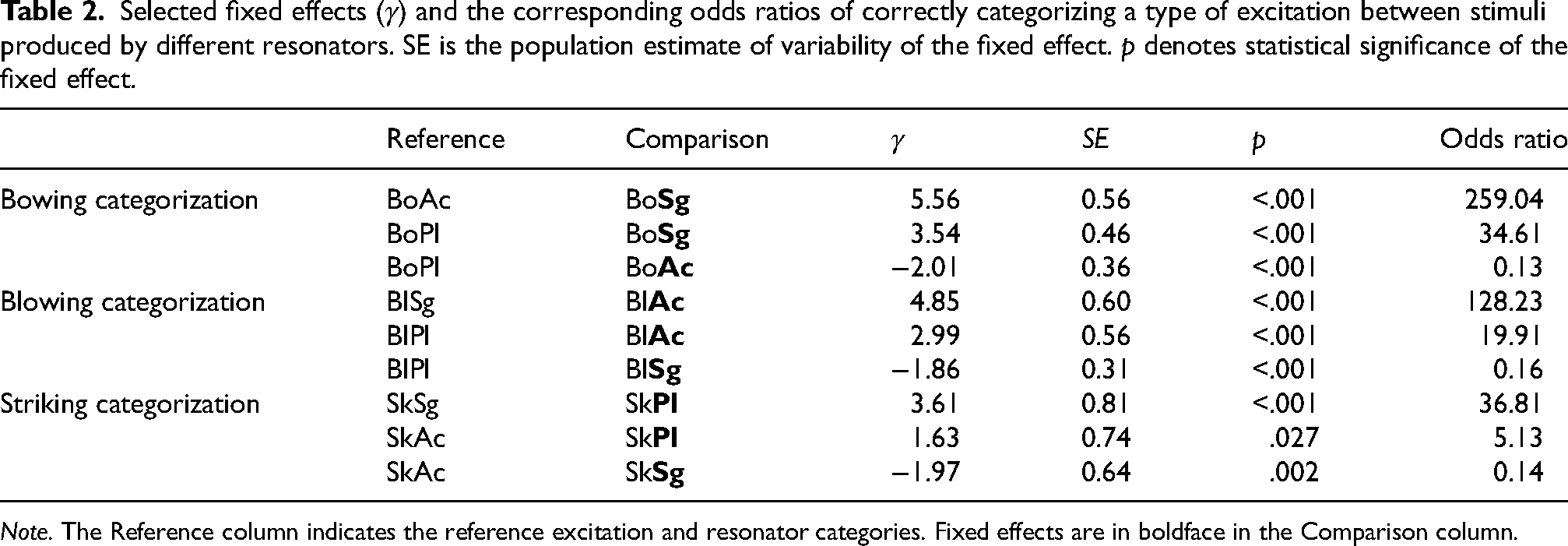

Selected fixed effects of excitation categorization correct responses are reported in Table 2. By re-running the same excitation categorization regression model (described in the Appendix) and changing the reference excitation and resonator categories, we computed the log odds ratios (i.e.,

Selected fixed effects (

Unlike excitation categorization accuracy, the correct response of resonator categorization was significantly influenced by listeners’ Gold-MSI musical training scores,

Selected fixed effects of the different types of excitations on the correct categorization of the resonators, controlling for musical training scores, are shown in Table 3. These selected effects were obtained by running the same regression model multiple times and changing the reference excitation and resonator categories. The odds ratios generally illustrate that the odds of correctly categorizing the resonator were greater when the sounds were produced by typical interactions than by atypical ones. For example, the odds of correctly categorizing the string were greater when it was bowed or struck than when it was blown. The regression coefficient for the fixed effect of bowing (Bo) relative to SkSg was non-significant, meaning that there were no differences in the odds of accurately choosing the string (Sg) between BoSg and SkSg. As mentioned, these are both typical interactions, so resonator categorization was very accurate for them (Figure 2). For air column (Ac) categorization, the odds of correct categorization were Bl > Bo > Sk. As expected, the air column was categorized most accurately when it was blown because this is a typical interaction. But the air column was also identified quite accurately when it was bowed (Figure 2). Among the sounds produced by the plate (Pl), the odds of its correct categorization from greatest to smallest were Sk > Bo > Bl. These patterns of results support the hypothesis that resonator categorization would be more accurate for typical than atypical interactions.
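Releveling works because, under treatment coding, a coefficient is a difference in log odds relative to the reference cell. The odds ratio between any two levels can therefore be recovered by changing the reference.

The sketch below illustrates this bookkeeping for a single categorical factor with made-up accuracies. It ignores the random effects, the musical training covariate, and the excitation × resonator interaction of the actual models.

```python
import math


def logit(p):
    """Log odds of probability p."""
    return math.log(p / (1.0 - p))


def treatment_coefficients(cell_probs, reference):
    """Log odds ratios of each level against `reference` (treatment coding, one factor).

    With a single categorical predictor, the fitted coefficient for level k equals
    logit(p_k) - logit(p_reference); releveling only changes which cell is subtracted.
    """
    base = logit(cell_probs[reference])
    return {k: logit(p) - base for k, p in cell_probs.items() if k != reference}


# Hypothetical plate-categorization accuracies by excitation; NOT the study's data.
plate_acc = {"Sk": 0.85, "Bo": 0.70, "Bl": 0.30}

vs_sk = treatment_coefficients(plate_acc, reference="Sk")
vs_bo = treatment_coefficients(plate_acc, reference="Bo")

# Bl vs. Bo from the releveled model equals the difference of two Sk-referenced coefficients.
assert math.isclose(vs_bo["Bl"], vs_sk["Bl"] - vs_sk["Bo"])
print({k: round(math.exp(b), 3) for k, b in vs_sk.items()})  # odds ratios relative to Sk
```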

Selected fixed effects (

In Tables 2 and 3, some of the odds ratios comparing stimuli produced by atypical interactions reflect large differences in how accurately the participants categorized their excitations and resonators. Furthermore, the mean percent correct scores in Figure 2 reveal that, for the atypical interactions, the participants were more accurate at categorizing either the excitation or the resonator but not both. How well they categorized a given mechanical component also varied across interactions. For example, even though resonator categorization was better than excitation categorization for BoAc, BlSg, and BoPl, the participants were much better at identifying the air column in BoAc sounds than the string in BlSg sounds or the plate in BoPl sounds. To further dissect categorization performance, confusion data were analyzed to examine which excitations or resonators of the atypical interactions were mistaken for one another. The percent correct scores and generalized linear mixed effects models alone could not reveal this.

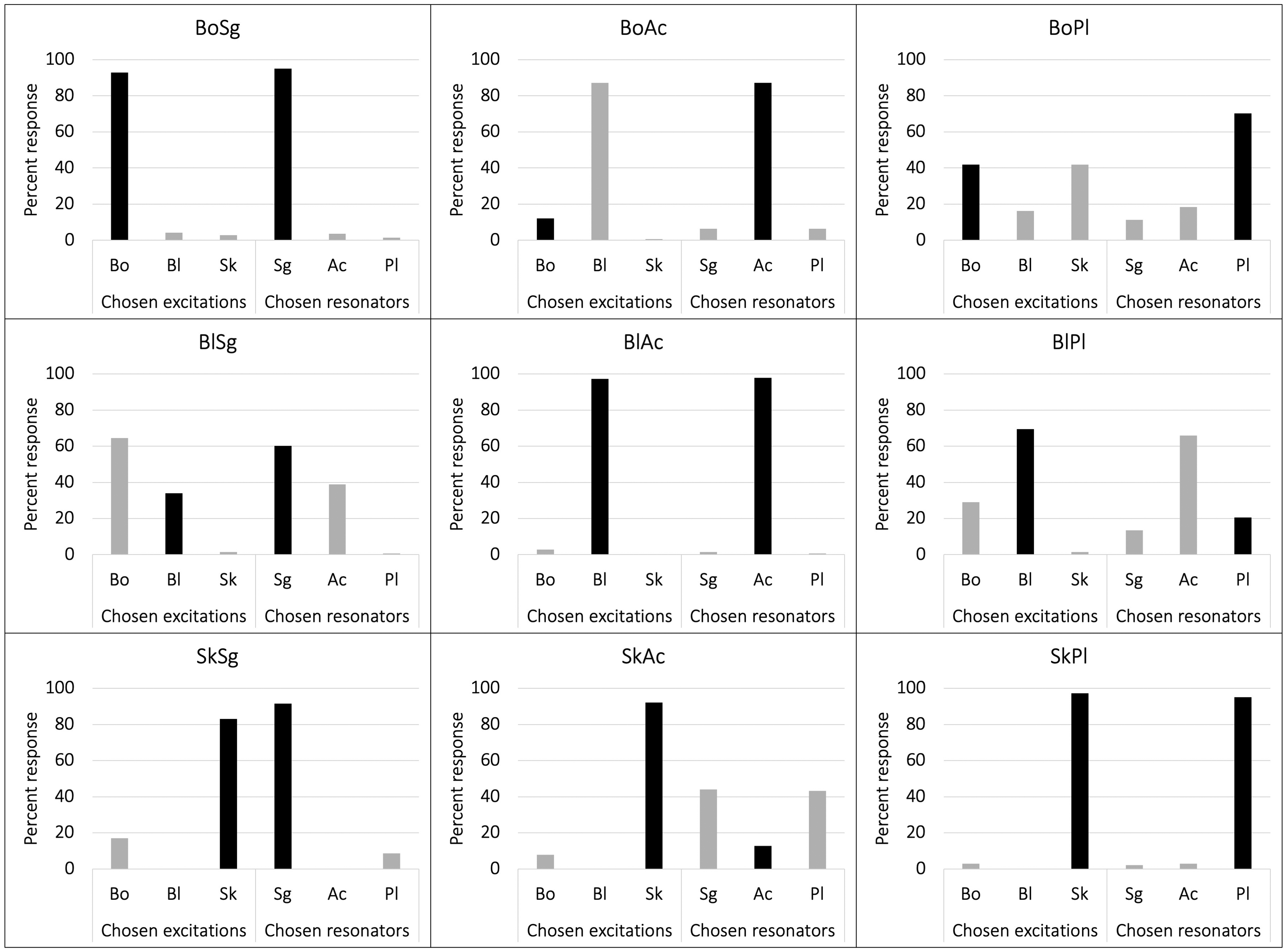

Confusion Analyses

The previous section examined whether the participants categorized the excitations and resonators of each interaction correctly. For the atypical interactions, the participants achieved higher percent correct scores for either the excitation or the resonator. If, for example, they were better at identifying the excitation, of interest to the current study is why they performed worse at resonator categorization. Did they mistake the resonator that produced the sound for a different one? If so, which one? And what does this confusion imply about the mental models for novel or unfamiliar sound sources? The same questions apply to the excitations that were confused. The mean percent response of choosing each excitation and resonator category for each interaction across all the participants (regardless of Gold-MSI musical training scores) is shown in Figure 3. The nine interactions are shown in separate graphs, and the excitation and resonator categories are labeled on the horizontal axis. The height of each bar reflects how often the corresponding category was chosen. Black and gray bars indicate correct and incorrect responses, respectively.
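The confusion data behind Figure 3 form a per-interaction table of response percentages. The sketch below assumes responses pooled over participants. It also assumes interaction labels built from the two-letter codes used in the text, with the first pair naming the excitation and the second the resonator.

```python
from collections import Counter, defaultdict


def percent_responses(trials, categories):
    """Percentage of times each category was chosen for each interaction.

    trials: iterable of (interaction, chosen_category) pairs pooled over participants.
    Returns {interaction: {category: percent}}; each row sums to 100.
    """
    counts = defaultdict(Counter)
    for interaction, choice in trials:
        counts[interaction][choice] += 1
    table = {}
    for interaction, c in counts.items():
        n = sum(c.values())
        table[interaction] = {cat: 100.0 * c[cat] / n for cat in categories}
    return table


def correct_category(interaction, task):
    """Ground truth per task: first two letters = excitation, last two = resonator."""
    return interaction[:2] if task == "excitation" else interaction[2:]
```

The entry returned by `correct_category` corresponds to the black bar in each graph, and the remaining entries correspond to the gray bars.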

Figure 3. Percent response of choosing each excitation and resonator category (horizontal axis) for each of the nine interaction types (separate graphs). Black bars represent correct categorization (i.e., percent correct scores), and gray bars represent incorrect responses.

For the typical interactions (i.e., BoSg, BlAc, SkSg, and SkPl), the participants most often chose the excitation and resonator that were actually involved in the interactions. This aligns with the hypothesis that listeners would not confuse the mechanical components of these interactions with others. The participants were more likely to say that BoAc and BlPl were produced by the blowing excitation and the air column resonator, suggesting that they assimilated these atypical interactions to BlAc. This was confirmed by two hierarchical cluster analyses, one for excitation categorization (Figure 4a) and another for resonator categorization (Figure 4b), with between-groups linkage as the clustering method. Musical training scores could not be added as a covariate in this type of analysis, so the interpretation of these findings is generalized across all the participants. As seen in Figure 4, BlAc, BoAc, and BlPl clustered together regardless of whether the participants were categorizing the excitations or resonators. One possible explanation lies in the modal frequencies of the resonators. The cylindrical air column should have modes at odd harmonic ratios with respect to the lowest vibration mode (i.e., the fundamental frequency). This pattern might have been detectable for BoAc, explaining its assimilation to BlAc. As for BlPl, when a plate is driven by a sustained excitation such as blowing, the resulting vibrations lock into a periodic pattern after the initial excitation of all modes. There will be a fundamental frequency that likely corresponds to the frequency of one of the modes, and the other modes of the plate will then be nearly harmonically aligned to that fundamental frequency. BlPl was therefore perhaps not categorized as a plate because listeners expect a plate to produce inharmonic rather than harmonic content.
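The acoustic explanation above can be made concrete with textbook idealizations of the three resonators:

- an ideal string, with modes at all integer multiples of the lowest mode;
- a cylinder closed at one end, with odd multiples only;
- a thin, simply supported rectangular plate, with inharmonic modes.

These models are assumptions for illustration. The boundary conditions and geometry of the Modalys objects are not described here, and real strings are slightly inharmonic.

```python
def string_modes(f1, n):
    """Ideal string: modes at integer multiples of the lowest mode."""
    return [k * f1 for k in range(1, n + 1)]


def closed_cylinder_modes(f1, n):
    """Ideal cylinder closed at one end: odd-integer multiples only (1, 3, 5, ...)."""
    return [(2 * k - 1) * f1 for k in range(1, n + 1)]


def plate_modes(f11, n, aspect=1.0):
    """Lowest n modes of a thin, simply supported rectangular plate, scaled so f(1,1) = f11.

    f_mk is proportional to (m/a)^2 + (k/b)^2; multiplying by a^2 gives m^2 + (aspect*k)^2
    with aspect = a/b. Searching m, k <= n is adequate for aspect ratios near 1.
    """
    def shape(m, k):
        return m ** 2 + (aspect * k) ** 2

    ref = shape(1, 1)
    freqs = sorted(f11 * shape(m, k) / ref for m in range(1, n + 1) for k in range(1, n + 1))
    return freqs[:n]
```

With a 155 Hz lowest mode, the string gives 155, 310, 465, ... Hz and the closed cylinder gives 155, 465, 775, ... Hz. A square plate gives ratios of 1, 2.5, 2.5, 4, and 5, which do not form a harmonic series.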

Figure 4. Dendrograms showing the clustering of the nine interactions based on (a) excitation categorization and (b) resonator categorization.
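Between-groups linkage (also called average linkage or UPGMA) merges, at each step, the two clusters with the smallest mean pairwise distance between their members. The dependency-free sketch below assumes Euclidean distances between response profiles; the text does not state which distance measure was used.

```python
def average_linkage(profiles):
    """Agglomerative clustering with between-groups (average) linkage.

    profiles: {label: vector}, e.g. each interaction's percent-response profile.
    Returns the merge history as a list of (cluster_a, cluster_b, distance) tuples.
    """
    def dist(u, v):  # Euclidean distance between two profiles (an assumption)
        return sum((a - b) ** 2 for a, b in zip(u, v)) ** 0.5

    labels = list(profiles)
    d = {(a, b): dist(profiles[a], profiles[b]) for a in labels for b in labels if a != b}
    clusters = [frozenset([lab]) for lab in labels]
    history = []
    while len(clusters) > 1:
        best = None
        for i in range(len(clusters)):
            for j in range(i + 1, len(clusters)):
                ci, cj = clusters[i], clusters[j]
                # Mean of all pairwise distances between members of the two clusters.
                avg = sum(d[(a, b)] for a in ci for b in cj) / (len(ci) * len(cj))
                if best is None or avg < best[2]:
                    best = (ci, cj, avg)
        ci, cj, avg = best
        clusters = [c for c in clusters if c not in (ci, cj)] + [ci | cj]
        history.append((ci, cj, avg))
    return history


def cut(history, labels, k):
    """Replay the merges until k clusters remain."""
    clusters = [frozenset([lab]) for lab in labels]
    for ci, cj, _ in history:
        if len(clusters) <= k:
            break
        clusters = [c for c in clusters if c not in (ci, cj)] + [ci | cj]
    return set(clusters)
```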

The remaining atypical interactions were also assimilated to typical ones, but the typical interaction to which each was assimilated depended on the categorization task. Looking only at excitation categorization in Figure 3, there was a clear assimilation of SkAc to SkSg and SkPl. The hierarchical cluster analysis based on excitation categorization (Figure 4a) further confirmed this: Struck sounds clustered together and were easily distinguished from sustained sounds. The participants chose bowing more often than blowing for BlSg (Figure 3). Thus, not only did they mistake blowing for bowing, but their perception of BlSg was also assimilated to BoSg when they were instructed to focus on the excitations. As for BoPl, the participants were undecided between bowing and striking. This confusion may arise because the modes of the plate decay slowly, which might have made it difficult for the listeners to differentiate between a sustained and an impulsive excitation. The dendrogram for excitation categorization in Figure 4a, however, grouped BoSg, BoPl, and BlSg into one cluster, suggesting that the latter two interactions were assimilated to the former.

Focusing on resonator categorization in Figure 3, the listeners sometimes mistook BlSg for the air column. However, they chose the string more often, producing a response pattern resembling those of BoSg and SkSg. The dendrogram generated from the hierarchical cluster analysis (Figure 4b) supports the assimilation of BlSg to BoSg and SkSg based on resonator categorization. Although the participants were divided when deciding whether BoPl was bowed or struck, they were more certain that it was produced by a plate (see Figure 3). Lastly, SkAc was categorized as a string or a plate almost equally often but was rarely categorized as an air column. The dendrogram in Figure 4b, however, revealed that BoPl and SkAc clustered with SkPl. These results suggest that there were more confusions between the air column and the plate, whereas the string was easily discernible from them.

Discussion

In two categorization tasks, participants chose either the excitation or resonator that they thought produced each of the sounds in the stimulus set. Each sound was produced by one of nine excitation–resonator interactions. Four interactions were typical: bowed string (BoSg), blown air column (BlAc), struck string (SkSg), and struck plate (SkPl). The remaining five interactions were atypical: bowed air column (BoAc), bowed plate (BoPl), blown string (BlSg), blown plate (BlPl), and struck air column (SkAc). For the most part, the results of the current study converged with previous experiments by Huynh et al. (2024). They found that listeners assigned the highest resemblance ratings to the excitations and resonators that actually produced the typical interactions, but the resemblance ratings for atypical interactions reflected some confusions. Likewise, in the current experiment, listeners were most accurate at identifying the excitations and resonators of the typical interactions, whereas categorization performance for the atypical interactions demonstrated listeners’ confusions. The confusions depended on whether excitations or resonators were being categorized.

To examine whether the participants’ musical backgrounds influenced the accuracy of categorizing the excitations and resonators, musical training scores from the Gold-MSI (Müllensiefen et al., 2014) were added as a covariate in the analyses. Musical training scores did not affect excitation categorization accuracy but did affect resonator categorization accuracy. For excitation categorization, the participants were extremely good at distinguishing striking from the two sustained excitations, whereas confusions between bowing and blowing appeared to be quite consistent across the participants regardless of musical training scores. Resonator categorization, on the other hand, might require some knowledge about the differences in the harmonic content of sounds produced by different resonators. Those with higher musical training scores might have acquired this knowledge through increased exposure to musical instruments. Consequently, these individuals might be better at distinguishing the harmonic content of the string from the primarily odd-harmonic content of the air column and the variable harmonic content of the plate (e.g., inharmonic when struck, and harmonically locked when sustained). It is important to note, however, that the musical training scores would have to increase by nearly 30% for the odds of correctly categorizing the resonator to increase by a factor of 1.91. Given that the musical training scores of the participants spanned from 8 to 49, this would require quite a large increase in scores (nearly 12 points). We consider this to be quite a small effect size and postulate that, regardless of musical training, listeners made similar types of confusions between the resonators of the atypical interactions.
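The size of the musical training effect can be checked with a little arithmetic. The sketch below makes three assumptions:

- "30%" refers to the observed range of scores (8 to 49).
- The score enters the model linearly on the log-odds scale.
- Baseline accuracy is 60%, a hypothetical value chosen for illustration.

The per-point coefficient is back-solved from the quoted figures rather than taken from the model output.

```python
import math

# Figures quoted in the text: odds x1.91 for a ~30% increase over the observed score range.
SCORE_MIN, SCORE_MAX = 8, 49
odds_ratio = 1.91
delta = 0.30 * (SCORE_MAX - SCORE_MIN)   # about 12.3 points

# Assumption: the odds ratio for any change D in score is exp(beta * D);
# beta is back-solved from the quoted figures, not a reported estimate.
beta = math.log(odds_ratio) / delta
per_point_or = math.exp(beta)             # about 1.05 per additional point


def shifted_probability(p0, odds_multiplier):
    """Probability after multiplying the odds of an event with baseline probability p0."""
    odds = p0 / (1 - p0) * odds_multiplier
    return odds / (1 + odds)


# Hypothetical 60% baseline (not from the study): multiplying its odds by 1.91 gives about 74%.
print(round(delta, 1), round(per_point_or, 3), round(shifted_probability(0.60, odds_ratio), 3))
```

Under these assumptions, each additional point multiplies the odds by only about 1.05, which is consistent with the text's view that the effect is small.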
Although the mental models of musical instruments might be more refined and shaped by exposure in listeners with more musical training, it could be argued that musical training does not give participants much of an advantage in categorizing the mechanical components of interactions that they cannot conceptualize. The discussion of the remaining findings is therefore generalized across all participants irrespective of their degree of musical training.

In line with the first hypothesis, striking and the string were the most accurately categorized excitation and resonator, respectively. This was mostly reflected in previous experiments as well: All struck sounds had the highest striking resemblance ratings and, apart from BlSg, the string sounds had higher string resemblance ratings (Huynh et al., 2024). In the current study, listeners categorized BlSg as a string more often than as an air column. BoSg and SkSg were most often categorized as a string. As mentioned, these were the two typical interactions involving the string, which increased the chances of its correct identification. Striking was by far the most correctly categorized excitation because it was the only impulsive one, whereas there were two sustained excitations.

The two sustained excitations, bowing and blowing, were simulated to have quite different excitation durations (as seen in the top two rows of spectrograms in Figure 1). This difference in excitation duration should have allowed the participants to distinguish bowing from blowing. Instead, the two were often confused with one another, especially when they were involved in atypical interactions. A possible explanation for the confusion is that the physical models of these two excitation methods have been considered analogous (Ollivier et al., 2004). Two significant parameters of the bowing excitation are the pressure of the bow on the string and the speed of the bow. These parameters are analogous to the embouchure pressure on the mouthpiece (i.e., the control of the height of the initial reed opening) and the breath pressure of the blowing excitation (Ollivier et al., 2004). Another explanation for the confusion between bowing and blowing concerns how the listeners assimilated them to the mental models of typical interactions. For BoAc and BlPl, the listeners more decisively categorized the excitation as blowing than as either of the other excitations. This finding seemed to be predicted by the resemblance ratings of previous experiments, which revealed that BoAc and BlPl sounded more like blowing than bowing (Huynh et al., 2024). The listeners of the current study were also somewhat more decisive in saying that BlSg sounds were produced by bowing rather than by blowing. This result was not entirely predicted by the previous experiment, in which listeners rated BlSg as resembling bowing and blowing almost equally (Huynh et al., 2024). Had these atypical interactions been categorized as bowed half of the time and as blown the other half, the confusion would most likely have been due primarily to the potential interchangeability of bowing and blowing as mechanical processes.
However, because the listeners were more decisive in choosing one excitation over the other, these findings suggest that an assimilation is primarily at play, whereas the potential interchangeability between bowing and blowing plays a secondary role. The role of assimilation is more obvious because the atypical interactions that bowing and blowing were involved in conformed to interactions that are familiar and perceived as mechanically plausible.

Furthermore, for BoPl sounds, bowing was often confused with striking rather than with blowing. In the previous study by Huynh et al. (2024), the listeners indicated that BoPl sounded more like striking than bowing. This did not fully predict excitation categorization in the current study, as the listeners categorized BoPl as bowed and as struck equally often. Given that this interaction comprised a sustained excitation, it is interesting that the listeners perceived it as struck so often. In terms of resonator categorization, BoPl was most often categorized as a plate. This seemed to be predicted by Huynh et al.'s (2024) finding that resemblance ratings for BoPl were higher for the plate than for the air column or string. As discussed below, the perception of BoPl seemed to assimilate to different typical interactions depending on the categorization task.

The explanation of assimilation also supports the second, third, and fourth hypotheses of the current study. The second hypothesis states that an excitation mechanism cannot be teased apart or perceived independently from the resonator it typically interacts with, and vice versa. The current findings imply that listeners perceive typical interactions even when they are listening to atypical ones. In acoustic musical instruments, the perception of an excitation is very much tied to the resonator to which it is applied. Sound production in musical instruments is restrictive. Even with the many extended techniques one could perform on a stringed instrument, for example, no one thinks to blow a string, and even less so to excite it with a reed. The breath would not exert enough force on the string to set it into vibration, so it would produce little sound. Because of this mechanical implausibility, this interaction, like the other atypical interactions, is difficult to conceptualize. This implication also coincides with Smalley's (1997) theory that, with respect to electroacoustic music, listeners might have difficulty making the connection between sound-generating components and the perception of the sound. Smalley's theory applies to the digitally simulated atypical interactions of the current study, given that the participants evidently could not intuitively associate what they heard with the physical gesture or sounding body involved in sound production. However, the connection between perception and sound generation is obvious for typical interactions. Being able to visualize the interaction in acoustic musical instruments (e.g., by watching a performer play their instrument) enhances this connection (Smalley, 1997). Accordingly, it was likely more intuitive for the participants to assimilate the atypical interactions to something that is easier to conceptualize, which also supports the third hypothesis.

In the third hypothesis, the assimilation of atypical to typical interactions was predicted. This was partly discussed in the case of bowing and blowing confusions, but SkAc is also a notable example. In the current study, SkAc was categorized as the string and as the plate almost equally often, and it had previously been rated as resembling them equally (Huynh et al., 2024). This suggests that the listeners assimilated it to SkSg or SkPl. In other words, the listeners denied that SkAc was produced by the air column and conformed the atypical interaction to two typical ones. Another clear example was the assimilation of BoAc and BlPl to BlAc. Interestingly, BoAc and BlPl were assimilated through different mechanical components: The excitation of the former was assimilated to blowing, and the resonator of the latter was assimilated to an air column. These assimilations were nevertheless consistent across excitation and resonator categorization. The same cannot be said for the remaining atypical interactions. This aligns with the fourth hypothesis that the type of categorization task would change how the interactions were perceived and, consequently, how they were assimilated. The hierarchical cluster analyses demonstrated different patterns of clustering depending on the categorization task, with the exception of the BlAc-conforming cluster that included BlAc, BoAc, and BlPl. For excitation categorization, there was a striking cluster featuring all struck sounds and a BoSg-conforming cluster with BoSg, BoPl, and BlSg. In contrast, for resonator categorization, there was a string cluster (SkSg, BoSg, BlSg) and a SkPl-conforming cluster comprising SkPl, BoPl, and SkAc. Therefore, assimilations of the atypical interactions to typical ones differed across categorization tasks.
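The overlap between the two cluster solutions can be quantified with the Rand index. This is the proportion of item pairs that both partitions treat alike, either grouped together in both or separated in both. The sketch uses the three clusters per task named above. The cut height of the dendrograms is not reported, so treating each solution as exactly three clusters is an assumption.

```python
from itertools import combinations

# Three-cluster solutions as listed in the text for each categorization task.
EXCITATION_CLUSTERS = [{"BlAc", "BoAc", "BlPl"}, {"SkSg", "SkPl", "SkAc"}, {"BoSg", "BoPl", "BlSg"}]
RESONATOR_CLUSTERS = [{"BlAc", "BoAc", "BlPl"}, {"SkSg", "BoSg", "BlSg"}, {"SkPl", "BoPl", "SkAc"}]


def membership(clusters):
    """Map each item to the index of the cluster that contains it."""
    return {item: i for i, c in enumerate(clusters) for item in c}


def rand_index(p, q):
    """Share of item pairs that both partitions treat the same way (together or apart)."""
    mp, mq = membership(p), membership(q)
    pairs = list(combinations(sorted(mp), 2))
    agree = sum((mp[a] == mp[b]) == (mq[a] == mq[b]) for a, b in pairs)
    return agree / len(pairs)


shared = [c for c in EXCITATION_CLUSTERS if c in RESONATOR_CLUSTERS]
print(shared)  # only the BlAc-conforming cluster is common to both tasks
print(rand_index(EXCITATION_CLUSTERS, RESONATOR_CLUSTERS))
```

With these memberships, 28 of the 36 pairs agree, giving a Rand index of 7/9.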

The assimilations observed in the current experiment were likely formed based on unsupervised learning. It is considered unsupervised because the assimilations were learned through repeated exposure and the detection of co-occurring features (Harnad, 2017) between the unfamiliar, atypical interactions and the familiar, typical ones. This was context-dependent, as the listeners sought different co-occurring features depending on whether categorization was based on the excitations or resonators of the stimuli. A possible approach to test the improvement of both types of categorizations and the reduction of assimilations would be a supervised learning task involving trial and error and corrective feedback (Harnad, 2017). This would train listeners to detect the features that are common among sounds produced by the same excitations or resonators, rather than the features shared between atypical interactions and the typical ones to which they conformed. Consequently, it would be of interest in future work to examine the role of supervised learning in the categorization of the atypical interactions.

One question these findings have yet to answer concerns the efficacy of Modalys as a physically inspired modeling synthesizer. With the atypical interactions, we could not anticipate how they would sound prior to their synthesis. Even after their synthesis, we could not conclude whether the different excitations and resonators were accurately conveyed by Modalys because the atypical interactions are physically impossible. It is important to note that BoPl and SkAc can be considered mechanically plausible, as they are representative of extended techniques such as bowed xylophone bars and slap tongue on a clarinet or saxophone, respectively. However, the way these two interactions were synthesized in Modalys does not reflect these extended techniques. Instead, the bow passes through the plate to simulate BoPl, and for SkAc, a hammer strikes the air molecules within the air column at a position along its length rather than at a mouthpiece. So, these two atypical interactions, along with the other three, are not physically possible. Moreover, each excitation–resonator interaction was simulated with very controlled approaches, with manipulations applied to only two parameters of each interaction. A performer does not manipulate only two parameters at a time when playing single notes on their instrument. Nevertheless, McAdams and Goodchild (2017) have noted that changing just one parameter, such as the embouchure configuration of a clarinet, can alter the timbre of produced sounds. Additionally, the parameters manipulated in the current stimulus set were among those commonly manipulated in the physical modeling of acoustic musical instruments: bow speed and pressure (Halmrast et al., 2010); breath pressure and embouchure pressure (Dalmont et al., 2005); and a variety of options for percussive sounds (Halmrast et al., 2010).
Manipulating other types of parameters would be of interest to test the efficacy of Modalys in the synthesis of typical and atypical interactions.

Conclusion

The current study showed that excitation and resonator categorization of nine types of excitation–resonator interactions depended on familiarity with the interactions and their perceived mechanical plausibility. Musical training appeared to be irrelevant for excitation categorization but had a significant, albeit small, effect on resonator categorization accuracy. Contrary to previous studies (Hjortkjær & McAdams, 2016; Lemaitre & Heller, 2012), excitation categorization was not any better than resonator categorization. However, unlike in those studies, the excitations used here sometimes interacted with resonators atypically. Therefore, the accuracy of excitation and resonator categorization depended on the typicality of the interactions. The listeners interpreted the atypical interactions as conforming to interactions for which they already have mental models. It would be interesting to see how these findings relate to dissimilarity ratings of the same set of sounds and whether atypical interactions can be learned. Successful learning of the atypical interactions would provide insight into whether and how new mental models are formed for them. Taken together, the current study reveals how timbre perception provides listeners with information about sound source mechanics and how novel and unfamiliar sound sources conform to existing mental models of typical sound sources. These studies highlight the complex role of timbre in a process that is essential to human behavior: identifying the source of a sound.

Supplemental Material

Supplemental material, sj-zip-1-mns-10.1177_20592043241285667 for Categorization of Typical and Atypical Combinations of Excitations and Resonators of Musical Instruments: Assimilation of the Unusual to the Familiar by Erica Ying Huynh and Stephen McAdams in Music & Science

Footnotes

Acknowledgements

We would like to thank Bennett K. Smith for programming the experiment and Marcel Montrey for their guidance in the statistical analyses.

Action Editor

Asterios Zacharakis, School of Music Studies, Aristotle University of Thessaloniki.

Peer Review

One anonymous reviewer and Trevor Agus, SARC, School of Arts, English and Languages, Queen's University Belfast.

Author Contributions

EYH and SMc researched the literature and conceived the study. EYH designed the study, recruited the participants, and collected and analyzed the data. SMc provided guidance across all steps and secured the ethical approval. EYH wrote the first draft of the manuscript. Both authors reviewed and edited the manuscript and approved the final version.

Ethics Approval

The Research Ethics Board II of McGill University has reviewed this study for compliance with ethical standards (REB File No. 162-0817). Participants provided written informed consent prior to the study.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by grants from the Canadian Natural Sciences and Engineering Research Council (NSERC) awarded to SMc (RGPIN-2020-00420). EYH was supported by the Doctoral Postgraduate Scholarships funding program of NSERC (Grant Number 700414).

Data Availability

All stimuli, anonymized datasets, and R scripts are available as supplementary material in the online version of this article.

Notes

Appendix

References
