Abstract

Recent studies suggest that rhythmic syncopation is a relevant predictor for groove. In order to validate these claims, a reliable measure of rhythmic syncopation is required. This article investigates whether a particular notation-based model for estimating syncopation in Western popular music drum patterns adequately predicts perceived syncopation. A listening experiment was carried out with 25 professional musicians. Six popular music drum patterns were presented to the participants in all 15 pairwise combinations, and for each pair the participants chose the pattern that was more syncopated (a win for that pattern and a loss for the other). Perceived syncopation was defined as the proportion of wins for each stimulus. The experiment showed that the model works well in general, but that it overemphasises the weight of syncopes on weak metric positions. This exaggerates the syncopation value of one particular drum pattern and generally leads to inflated syncopation values in the upper syncopation range. In consequence, the fit between the model and perceived syncopation was poor, even when flexible logarithmic functions

Syncopation (from the Greek συγκοπή, “sudden loss of strength”, Liddell, Scott, Jones, & McKenzie, 1996, p. 1666) is a rhythmic phenomenon in metered music that occurs when a weak metric position is accentuated by a note onset, but no onset happens on the subsequent strong metric position (Fitch & Rosenfeld, 2007; Longuet-Higgins & Lee, 1984; Temperley, 1999). Syncopation may trigger a moment of surprise in a listener; it has been interpreted as a “class of violations of temporal expectation” (Huron, 2008, p. 297) and as a conflict between rhythm and meter (Kühn, 1982; London, 2004, p. 86; Randel, 1999, p. 652). Temperley (2010) defined syncopation from a probabilistic point of view as a rhythmic pattern that is “low in probability given the prevailing meter” (p. 371). Syncopation has been understood as a source of rhythmic complexity in music, along with cross-rhythm, polyrhythm, and offbeatness (Fitch & Rosenfeld, 2007; Pfleiderer, 2006, pp. 145–150).

Syncopation has recently been discussed as a relevant factor for creating groove in listeners. In music psychology, groove is understood as a pleasurable “urge to move in response to music” (Janata, Tomic, & Haberman, 2012, p. 54). The groove phenomenon as inception of entrainment (Clayton, 2012; Clayton, Will, & Sager, 2005) or sensori-motor synchronisation (Repp, 2005; Repp & Su, 2013) in human beings is one of the most fundamental effects of music (Madison, 2001).

To date, the influence of syncopation on groove has only partly been clarified. One study found that musicians introduced more syncopation into their music when they intended to increase groove in listeners (Madison & Sioros, 2014). Others reported that a moderate degree of syncopation was best for groove, while low and high degrees had a negative impact on groove (Sioros, Miron, Davies, Gouyon, & Madison, 2014; Witek, Clarke, Wallentin, Kringelbach, & Vuust, 2014). Another study found that participants synchronised their body movement more readily with low- and medium-syncopation music than with highly syncopated music (Witek et al., 2017). Finally, a recent study on popular music drum patterns reported that syncopation is positively associated with groove in expert music listeners, but no effect was measured for non-musicians (Senn, Kilchenmann, Bechtold, & Hoesl, 2018).

In order to assess the effect of syncopation on groove, a measure is required that reliably predicts the strength of listeners’ experience of syncopation. Several concepts of syncopation and methods for measuring its intensity have been described in the past (Arom, 1991; Gómez, Melvin, & Toussaint, 2005; Toussaint, 2002).

Longuet-Higgins and Lee (1984) proposed a method for quantifying syncopation which has been widely discussed (Essens, 1995; Fitch & Rosenfeld, 2007; Gómez, Thul, & Toussaint, 2007; Sioros, Davies, & Guedes, 2018; Witek et al., 2014). This model (the so-called LHL model) offers an operational definition of syncopation on the basis of notated music. It presents a method to assign a weight to each occurrence of syncopation in a rhythm, and it expresses the overall syncopation of a rhythm as the sum of these weights. Fitch and Rosenfeld (2007) found that the LHL method predicts listeners’ subjective experience of syncopation quite successfully.

LHL was conceived to model syncopation in monophonic music only. But most real-world musical situations involve several voices forming a complex, multi-layered rhythmic pattern. Witek et al. (2014) (revised version: Witek, Clarke, Wallentin, Kringelbach, & Vuust, 2015) expanded on the LHL model in order to accommodate two rhythmic layers, namely the bass drum and the snare drum voices of a Western popular music drum set pattern. These two voices are arguably the most important sources of syncopation in this kind of drum pattern. The hi-hat voice as the third essential layer of popular music drumming (Baur, 2002; Fleet & Winter, 2014; Stewart, 2000; Tamlyn, 1998) most of the time provides a background of regular pulsation against which the syncopated rhythms of bass drum and snare drum take place.

A model like the one presented by Witek et al. (2014, Index of syncopation, see supporting information in Witek et al., 2015) is of great use for the study of popular music drum patterns and groove, if it can be shown that the model predictions agree with listeners’ subjective impressions of syncopation intensity.

This study presents a formal implementation of a model that largely reproduces Witek et al.’s method, but takes a few liberties (see subsection A formal model description, below). It further offers a validation of the implemented model and aims to achieve three goals:

The study establishes a ground truth on perceived syncopation of six reconstructed popular music drum patterns in a listening experiment.

It investigates the fit between the predictions of the implemented model and the experimental results.

If necessary, it proposes changes to the model in order to improve its fit with the ground truth.

Listening experiment: Empirical ground truth on perceived syncopation

Stimuli

Six popular music drum patterns of eight bars’ duration were chosen as a basis for the experiment’s stimuli (Table 1) from a corpus of 250 popular music drum patterns (Lucerne Groove Research Library, http://www.grooveresearch.ch) that had been collected by the authors and their colleagues.

Table 1. Stimuli and Perceived syncopation with 95% confidence intervals (see also Figure 3).

The six patterns are typical representatives of Western popular music drumming in rock, funk, or pop style. On the original recordings, the patterns were played by some of the most renowned drummers in popular music. The passages were selected such that the drummer plays a regular, at least partly repetitive, pattern. No drum solos were chosen. The patterns have the same instrumentation as the stimuli investigated by Witek et al. (2014): in the selected passages, the drummers use the hi-hat, bass drum, and snare drum only. Also in accordance with Witek et al., the six patterns feature an eighth-note-based pulse on the hi-hat. We chose the patterns to show considerable variation in terms of snare and bass drum syncopation (see Table 3 below), ranging from the rhythmically simple “archetypical rock beat” (Tamlyn, 1998, p. 12) of “Billie Jean” (Leon Chancler/Michael Jackson) to the highly complex patterns of “I got the feelin’” (Clyde Stubblefield/James Brown) and “Whole lotta love” (John Bonham/Led Zeppelin). “Kashmir” (John Bonham/Led Zeppelin) was chosen as a wildcard: it obtained a medium syncopation value according to the implemented model, which (to us, the authors) appeared to overestimate the perceived level of syncopation.

The first author, a professional jazz drummer, transcribed the patterns by ear from the original recordings. The original hi-hat voice was replaced by a regular, monotonic sequence of eighth notes without syncopation, in line with the stimuli used by Witek et al. (2014). The six resulting drum patterns can be studied in Figures 1 and 2.

Figure 1. Transcriptions of “Billie Jean”, “Kashmir”, and “My father’s eyes” snare drum and bass drum patterns. Regular eighth-note sequences replace the original hi-hat voice.

Figure 2. Transcriptions of “Hyperpower”, “Whole lotta love”, and “I got the feelin’” snare drum and bass drum patterns. Regular eighth-note sequences replace the original hi-hat voice.

The drum patterns were reconstructed in Avid ProTools (version 12.1) using audio samples from the Toontrack Superior Drummer (version 2.4.4) Custom & Vintage Library. One drum kit with a relatively neutral sound was chosen for the reconstruction of all six patterns, and a small amount of reverberation was added to the sound. This created the illusion that all drum patterns were played on the same drum kit in the same room.

Participants

Participants for the listening experiment were recruited within the first author’s personal and professional network. All participants were required to have completed a Bachelor of Arts in Music degree; most of them held a Master of Arts in Music degree. This ensured that participants were familiar with the concept of syncopation, theoretically and practically. A total of 25 participants (7 female, 17 male, age 28 ± 3.8 years) fulfilled this condition and took the test, which was carried out in German. Three people indicated they were non-native German speakers, but they claimed to have a good understanding of the language.

Setup and procedure

The listening experiment was carried out in several quiet environments at the Lucerne School of Music or at private indoor locations, supervised by the first author. Music examples were played from an Apple laptop computer (running Mac OS, version 10.11.6) using iTunes (version 12.7.2.60) for music playback. Participants brought their own headphones to the experiment; all participants used earbuds. Participants gave spoken feedback, which the researcher noted in an Excel for Mac (version 14.7.7) spreadsheet. Participants did not see the computer screen during the experiment.

Participants gave informed consent, and answered questions about their person. They listened to a test stimulus (similar to the experimental stimuli), and playback loudness was adjusted to a comfortably loud level. Playback loudness remained the same throughout the experiment. Participants were informed of their task, which consisted of listening to two drum patterns in each trial and of deciding which of the two was “more syncopated” (German: “synkopierter”) than the other. A test trial with two test stimuli was carried out, and participants had the opportunity to ask questions if they were not sure about their task. If participants asked about the meaning of “more syncopated”, they were advised to trust their instincts.

All 15 pairwise combinations of the six experimental stimuli were presented to the participants in a randomised order. The presentation order of the two stimuli within each trial was also randomly determined. For each pair, participants decided which of the two stimuli they perceived as “more syncopated”. Hence every trial was a Bernoulli experiment, and the outcome variable of the experiment is a count of wins (“more syncopated”) and losses (“less syncopated”) for each of the stimuli (Table 1).

The method of pairwise comparisons was the main reason that only six stimuli were used in the experiment. With six stimuli, each participant needed to carry out 15 comparisons. The number of comparisons grows quadratically with the number of stimuli (k stimuli require k(k − 1)/2 comparisons, so a seventh stimulus would have raised the total to 21), and we judged that carrying out more than 15 comparisons would exhaust participants’ patience.
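The trial structure described above (every unordered pair once, random trial order, random order within each trial) can be sketched in a few lines of Python. This is an illustrative sketch only, not the procedure actually used in the experiment; the function name `make_trials`, the `seed` parameter, and the single-letter stimulus labels are assumptions introduced here.

```python
import random
from itertools import combinations


def make_trials(stimuli, seed=None):
    """Build a randomised list of pairwise trials (hypothetical helper).

    Every unordered pair of stimuli appears exactly once; the order of the
    trials and the order of the two stimuli within a trial are shuffled.
    """
    rng = random.Random(seed)
    trials = [list(pair) for pair in combinations(stimuli, 2)]
    rng.shuffle(trials)      # randomise the order of the trials
    for pair in trials:
        rng.shuffle(pair)    # randomise the order within each trial
    return trials


# Placeholder labels standing in for the six drum patterns.
trials = make_trials(["A", "B", "C", "D", "E", "F"], seed=1)
print(len(trials))  # 15
```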

Results and discussion

Perceived syncopation (Sp) is defined to be the true population proportion of wins for each of the stimuli. This parameter is estimated for each stimulus by its maximum likelihood estimator, the sample proportion of wins.
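As a worked illustration of this estimator: in the complete design each stimulus takes part in five comparisons per participant, so with 25 participants it is judged 125 times, assuming no missing responses. The sketch below computes the sample proportion for the 21 wins reported for “Billie Jean” in the discussion of the results. The function name and its default arguments are assumptions made for this example.

```python
def perceived_syncopation(wins, participants=25, n_stimuli=6):
    """Sample proportion of wins for one stimulus (hypothetical helper).

    Assumes a complete design without missing responses: each stimulus is
    compared with each of the other stimuli once per participant.
    """
    comparisons = participants * (n_stimuli - 1)
    return wins / comparisons


# 21 wins out of 125 comparisons.
sp_billie_jean = perceived_syncopation(21)
print(round(sp_billie_jean, 3))  # 0.168
```

Because every comparison produces exactly one win, the six proportions sum to 3 under this design, so their mean is 0.5.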

The experiment has a paired comparisons design with complete repetitions (Bramley, 2007; David, 1988; Wilkinson, 1957). Inference is based on the log-likelihood ratio (Casella & Berger, 2002; Kent, 1982; Wilks, 1938). The overall significance level is set to α = 0.05.

Estimates of Perceived syncopation (Sp) for each stimulus can be studied in Figure 3. We carried out an omnibus significance test against the null hypothesis that all stimuli had equal Perceived syncopation. The test offers very strong evidence that Perceived syncopation differs across the six stimuli (

Figure 3. Perceived syncopation (Sp) of the six stimuli. Error bars represent 95% confidence intervals for the estimate Sp (for data, see Table 1).

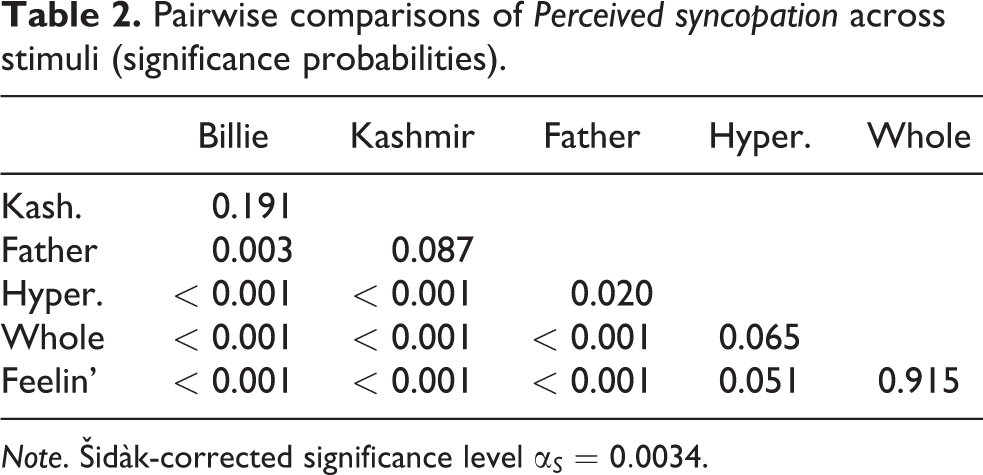

Table 2. Pairwise comparisons of Perceived syncopation across stimuli (significance probabilities).

Note. Šidák-corrected significance level

Figure 3 shows that the stimuli are quite evenly spread across the range of Perceived syncopation. The ordering of the stimuli agrees with most of our expectations. However, we were expecting that the “Whole lotta love” pattern would be perceived to be more syncopated than the drum pattern of “I got the feelin’”. Judging from the participants’ responses, the listeners were undecided as to which of the two they found to be more syncopated. Also, we were surprised to find that the “Billie Jean” pattern (the “archetypical rock beat”) obtained 21 wins (Table 1). We expected this most generic of popular music patterns to never win against any of the other patterns in the sample.

The stimuli differed considerably in terms of Tempo (see Figures 1 and 2). The slowest pattern, “Kashmir”, had a tempo of 82 bpm, whereas the fastest pattern, “I got the feelin’”, moved at 126 bpm. However, Tempo and Perceived syncopation were not significantly correlated (r = 0.115, p = 0.892), and hence we ruled out Tempo as a potentially confounding variable.

Modelling rhythmic syncopation in popular music drum patterns

The syncopation model by Witek et al. (2014)

Witek et al. (2014) (revised in Witek et al., 2015) presented a method to estimate the quantity of syncopation in the snare drum and bass drum voices of popular music drum patterns. They developed their method in a supporting text annexed to the main article. The general idea and the main steps of their method to quantify syncopation (which is based on the notated drum pattern and inherits many properties from the monophonic LHL model of Longuet-Higgins and Lee, 1984) can be described as follows:

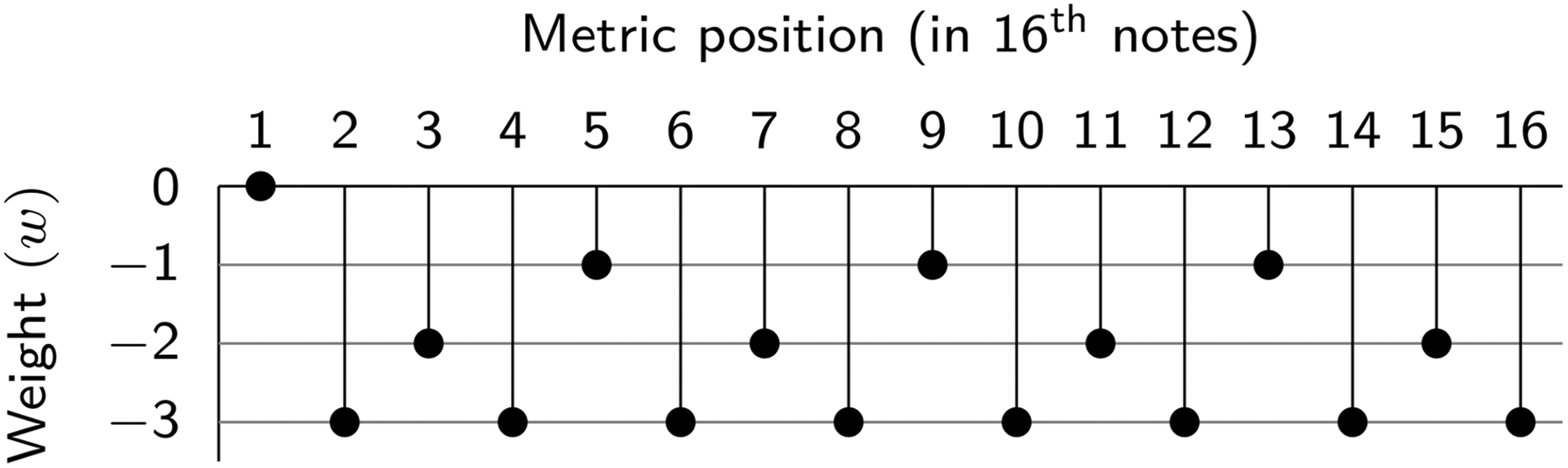

Assign a metric weight to each metric position in a bar. Onbeat positions have a higher metric weight than offbeat positions. Metric weights for the 4/4 common time bar with the smallest subdivisions on the 16th-note level are shown in Figure 4. Note that Witek et al. (2014) slightly depart from the principle that each lower metric level (and hence the next lower metric weight) is reached by strict binary subdivisions: the third quarter-note beat of a bar has the same weight as the second and the fourth beat (for comparison, see Lerdahl & Jackendoff, 1983, p. 23; London, 2004, p. 41; Temperley, 2010, p. 357; Toussaint, 2013, p. 69).

Determine whether syncopation happens at a certain position. Syncopation occurs when, in one rhythmic layer (either the snare drum or the bass drum), a note precedes a rest, and the rest falls on a metric position with higher or equal weight compared to the note. We call the preceding note the syncopator and the position of the rest the syncope (this terminology is newly introduced here). Examples of syncopation can be studied in Figure 5. In case (A), the bass drum note on the fourth 16th note (syncopator) has weight

Establish the metric and instrumental weights of the syncopation. The metric weight of the syncopation is calculated as the difference between the metric weights of the syncope and the syncopator. In case (A), the bass drum note on the fourth 16th note (syncopator) has weight

Add up all metric and instrumental weights across the pattern. Syncopation (A) in Figure 5 has a syncopation value of

Figure 4. Weight (w) per metric position for a common time bar according to Witek et al. (2014). The downbeat (1) weighs w = 0. For the remaining quarter notes (5, 9, 13) w = −1, offbeat eighth notes w = −2, and 16th notes w = −3.

Figure 5. Examples of syncopation. (A) and (C) bass drum syncopation; (D) snare drum syncopation; (B) two-stream syncopation with snare drum syncopator.
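The metric weights for one common time bar, as listed in the caption of Figure 4, can be generated programmatically. The following Python sketch is an illustration only; the function name is an assumption, and positions are numbered 1 to 16 as in the figure.

```python
def metric_weights_common_time():
    """Metric weights for the sixteen 16th-note positions of one 4/4 bar."""
    weights = []
    for pos in range(1, 17):       # 1-based metric positions
        if pos == 1:
            weights.append(0)      # downbeat
        elif pos % 4 == 1:
            weights.append(-1)     # remaining quarter notes (5, 9, 13)
        elif pos % 2 == 1:
            weights.append(-2)     # offbeat eighth notes
        else:
            weights.append(-3)     # 16th-note positions
    return weights


print(metric_weights_common_time())
# [0, -3, -2, -3, -1, -3, -2, -3, -1, -3, -2, -3, -1, -3, -2, -3]
```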

Two side notes seem to be appropriate at this point: first, the Witek et al. model with the specification of metrical weights as shown in Figure 4 applies to 4/4 common time drum patterns only. Other meters (such as 12/8 or 3/4) are not explicitly considered. Second, the drum patterns investigated in this study only show syncopation on the tactus (quarter-note beats) and subtactus levels. Syncopation on higher levels (hyperbeats), as described by some theories on “metrical dissonance” (e.g., Krebs, 1987, 1999), appears to be irrelevant in this context.

A formal model description

In explaining their method, Witek et al. (2015) use little mathematical notation. In this section, we restate the core of their method in a more formal way. This allows us to program algorithms for calculating syncopation values and for more easily modifying the model parameters, if necessary.

Our implementation takes a few liberties in order to simplify the model presented originally in Witek et al. (2014) and Witek et al. (2015). This concerns the following aspects:

In Witek et al.’s (2015) model (Index of syncopation, p. 1), the metric weight of the syncope must be either greater than or equal to the weight of the syncopator for syncopation to happen. In our implementation, the metric weight of the syncope must be strictly greater than the weight of the syncopator. This choice leads to improved model fit, and it avoids overestimation of syncopation in simple patterns such as “Billie Jean”.

Witek et al. (2015) (Index of syncopation, p. 2) describe a rare situation, for which they conclude that the pattern of the hi-hat modifies the weight of a “two-stream” syncopation. We do not observe this configuration in our stimuli, so we omit it in the model.

Given these discrepancies, our model is not a strict implementation of the method proposed by Witek et al. (2015), and the two methods can yield different Model syncopation values for the same pattern. Nevertheless, our implementation builds largely on Witek et al.’s work, and we hope it captures the essential ideas of their method.

The modelling is carried out in four steps:

We determine the position of the potential syncopator for each potential syncope in the pattern.

We evaluate whether syncopation actually takes place between a potential syncopator and a potential syncope.

We attribute a weight to the syncopation.

We sum the weights of each occurrence of syncopation and evaluate the overall model syncopation of a pattern.

These four steps are elaborated in the paragraphs below.

Step 1

The drum pattern presented in Figure 5 (

We assume that all positions in the pattern, except for the first (because it is preceded by none), are potential syncopes. In the first step, we determine which of the metric positions that precede a potential syncope is the candidate syncopator that might trigger syncopation on the syncope. In order to express the rules for the candidate syncopator mathematically, we start by defining the δ function as a tool to create logical switches. It will prove to be useful throughout the entire modelling effort. Let u and v be two real numbers, then

The rule to find the candidate syncopator is given by the ϕ function in Equation 2. It takes three arguments:

and i, which is the metric position of the potential syncope (i > 1,

The ϕ function (Equation 2) looks complicated at first glance, but it does a conceptually simple thing: for each potential syncope on position i in the pattern vector of one instrument, ϕ returns the candidate syncopator. This is the last position preceding the potential syncope:

that is marked by a note onset, while all subsequent metric positions up to the syncope are silent, and

that has a greater metric weight than all subsequent positions up to the syncope.

The first condition implements the fact that only the last note preceding a syncope can be a candidate syncopator. The second condition makes sure that ϕ returns the candidate syncopator of the syncope on position i, rather than that of a syncope on an earlier position. If none of the positions up to i − 1 qualifies as a candidate syncopator, ϕ returns position i − 1 by default.
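The verbal description of ϕ can be turned into a short Python sketch. This is one reading of the two conditions and the default rule stated above, not a transcription of Equation 2; the function name, the 0/1 onset list, and the 1-based position interface are assumptions made for this illustration.

```python
def candidate_syncopator(onsets, weights, i):
    """Candidate syncopator for a potential syncope at position i (1-based).

    onsets  -- list of 0/1 values, one per metric position of one instrument
    weights -- metric weight of every position (same length as onsets)
    """
    if i < 2 or i > len(onsets):
        raise ValueError("i must satisfy 1 < i <= number of positions")
    for j in range(i - 1, 0, -1):          # walk backwards from i - 1
        if onsets[j - 1]:
            # j is the last onset before i, so positions j+1 .. i-1 are silent.
            between = weights[j:i - 1]     # weights of positions j+1 .. i-1
            if all(weights[j - 1] > w for w in between):
                return j
            break                          # last onset does not qualify
    return i - 1                           # default candidate


# One common time bar of metric weights (see Figure 4).
BAR = [0, -3, -2, -3, -1, -3, -2, -3, -1, -3, -2, -3, -1, -3, -2, -3]
bass = [0] * 16
bass[3] = 1                                # onset on position 4
print(candidate_syncopator(bass, BAR, 5))  # 4
```

In this reading, an isolated onset on position 3 or on position 1 yields the candidates i − 2 and i − 4 for a potential syncope on position 5, whereas an isolated onset on position 2 falls back to the default i − 1.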

The ϕ function works for any kind of meter (respectively, for any kind of metric weight vector). For the common time 4/4 bar with 16th-note subdivisions, Witek et al. (2015) specify four different metric weights (

In the common time metric environment, the output of the ϕ function reduces to the following rules:

Metric position i − 2 is the candidate syncopator, if the instrument

Metric position i − 4 is the candidate syncopator, if there is an onset on

Position i − 1 is the default candidate syncopator, otherwise (regardless of the values of

Step 2

Now that the candidate syncopes and their respective candidate syncopators have been determined, we will evaluate in a second step whether syncopation actually takes place in a specific situation. Syncopation occurs when the syncopator is marked by an onset and has a lower metric weight than the syncope, which is not marked by an onset. (Note that this rule differs from the one used by both Longuet-Higgins & Lee, 1984, and Witek et al., 2015, according to which syncopation happens when the metric weight of the syncopator is less than or equal to the syncope’s metric weight.)

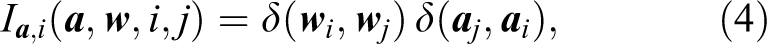

The definition can be formalised as follows: Let i be the metric position of the syncope in instrument

where the δ function has the meaning defined in Equation 3. The value of
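The syncopation rule of Step 2 can be sketched as a small Python predicate. It follows the verbal rule given above (onset on the syncopator, no onset on the syncope, strictly lower metric weight on the syncopator); the function name and the list-based representation are assumptions of this illustration, not the notation of the original equation.

```python
def syncopation_switch(onsets, weights, j, i):
    """Return 1 if syncopator j and syncope i (1-based) form a syncopation."""
    syncopator_has_onset = onsets[j - 1] == 1
    syncope_is_silent = onsets[i - 1] == 0
    # Strict inequality: equal weights do not count as syncopation here.
    syncope_is_stronger = weights[j - 1] < weights[i - 1]
    return int(syncopator_has_onset and syncope_is_silent and syncope_is_stronger)


# One common time bar of metric weights (see Figure 4).
BAR = [0, -3, -2, -3, -1, -3, -2, -3, -1, -3, -2, -3, -1, -3, -2, -3]
bass = [0] * 16
bass[3] = 1                                 # onset on position 4, rest on 5
print(syncopation_switch(bass, BAR, 4, 5))  # 1
```

With the strict inequality, a note on beat two (position 5) followed by a rest on beat three (position 9) does not count as syncopation, because both positions carry the weight −1.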

Step 3

The third step is to assign a specific syncopation weight to each occurrence of syncopation. According to the Witek et al. (2015) model, the syncopation weight depends on the difference between the metric weights of the syncope and the syncopator (metric syncopation weight), on the instrument in which the syncopation happens (instrumental syncopation weight), and on whether the other instrument has an onset on the syncope or not (“two-stream” syncopation).

For the snare drum, the model proposes the following procedure to calculate the weight of a syncopation, W

This weight adds up several summands:

The difference between the metric weights of the syncope

For the snare drum, an instrumental weight of 1 is added.

Another weight of 4 is added in the two-stream syncopation case, when neither the snare drum nor the bass drum has an onset on the syncope (this last condition is implemented by the

For syncopation on the bass drum at position i, the model proposes the following weights:

Based on a perceptual argument, Witek et al. (2014) consider syncopation on the bass drum to have a greater impact on perception than syncopation on the snare drum. The instrumental weight is 2 for syncopation on the bass drum (compared to 1 for the snare drum). A weight of 3 is added in the case of two-stream syncopation. As a consequence, two-stream syncopations triggered by syncopators in either the snare drum or the bass drum have the same instrumental weight, namely 5.

Witek et al. (2015) do not seem to discuss the situation when a two-stream syncopation is simultaneously triggered by syncopators in both the snare drum and the bass drum. Several occurrences of this situation can be observed in the transcriptions of Figure 2: for example, the syncope on the last quarter note of bar 1 in “Whole lotta love” is initiated by syncopators in both the snare drum and the bass drum.

In our implementation of the model, we treat this situation as a two-stream syncopation triggered by the syncopator on the bass drum only. In order to discount the snare drum syncopation in this specific case, we use an inverted variant of the syncopation switch function.
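The weighting rules of Step 3 can be summarised in a short Python sketch. It assumes that the syncopation has already passed the check of Step 2, and it reads the discounting rule as setting the snare drum contribution to zero when the bass drum triggers the same two-stream syncopation. That reading, the function name, and the argument names are assumptions of this illustration; the original equations are not reproduced here.

```python
SNARE, BASS = "snare", "bass"


def syncopation_weight(instrument, w_syncope, w_syncopator,
                       other_onset_on_syncope, bass_also_syncopates=False):
    """Weight of one syncopation that already passed the Step 2 check."""
    metric = w_syncope - w_syncopator        # metric syncopation weight
    two_stream = not other_onset_on_syncope  # both voices rest on the syncope
    if instrument == BASS:
        return metric + 2 + (3 if two_stream else 0)
    if instrument == SNARE:
        if two_stream and bass_also_syncopates:
            return 0                         # counted once, via the bass drum
        return metric + 1 + (4 if two_stream else 0)
    raise ValueError("instrument must be SNARE or BASS")


# A 16th-note syncopator (w = -3) before a silent quarter-note beat (w = -1):
print(syncopation_weight(BASS, -1, -3, other_onset_on_syncope=False))   # 7
print(syncopation_weight(SNARE, -1, -3, other_onset_on_syncope=True))   # 3
```

Summing these weights over all positions and both instruments then gives the aggregate described in Step 4.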

Step 4

Finally, as a fourth step, we aggregate all components defined in Equations 4, 5, 6, and 7. Model syncopation (Sm) in the snare drum and bass drum voices of a popular music drum pattern with n metric positions is calculated as follows:

where n is the number of metric positions in the pattern,

Total Model syncopation (Sm) is the sum of the syncopation contributions from the bass drum and snare drum across all n metric positions (except for the very first metric position at i = 1, which can never be a syncope). We implemented the model as a script in R. The Model syncopation (Sm) values of the six experimental stimuli can be inspected in Table 3.

Table 3. Model fit: Logarithmic link function.

Note. Sp, Perceived Syncopation; Sm, Model Syncopation;

Fitting the model to the data

The model presented in the sections above quantifies syncopation on the basis of the notated drum pattern. In this section we investigate how reliably our implementation based on this model predicts the Perceived syncopation ground truth we established in the listening experiment. We consider the model to be successful if it is possible to find a link function that predicts Perceived syncopation from Model syncopation with adequate fit. This link function must be monotonically increasing (as Model syncopation increases, Perceived syncopation should also increase across the whole domain of the function).

The link function does not necessarily have to be linear; any kind of increasing one-to-one function can prove to be useful. Also, we expect the link function to go through the origin: when there is no syncopation in the notation of a pattern (

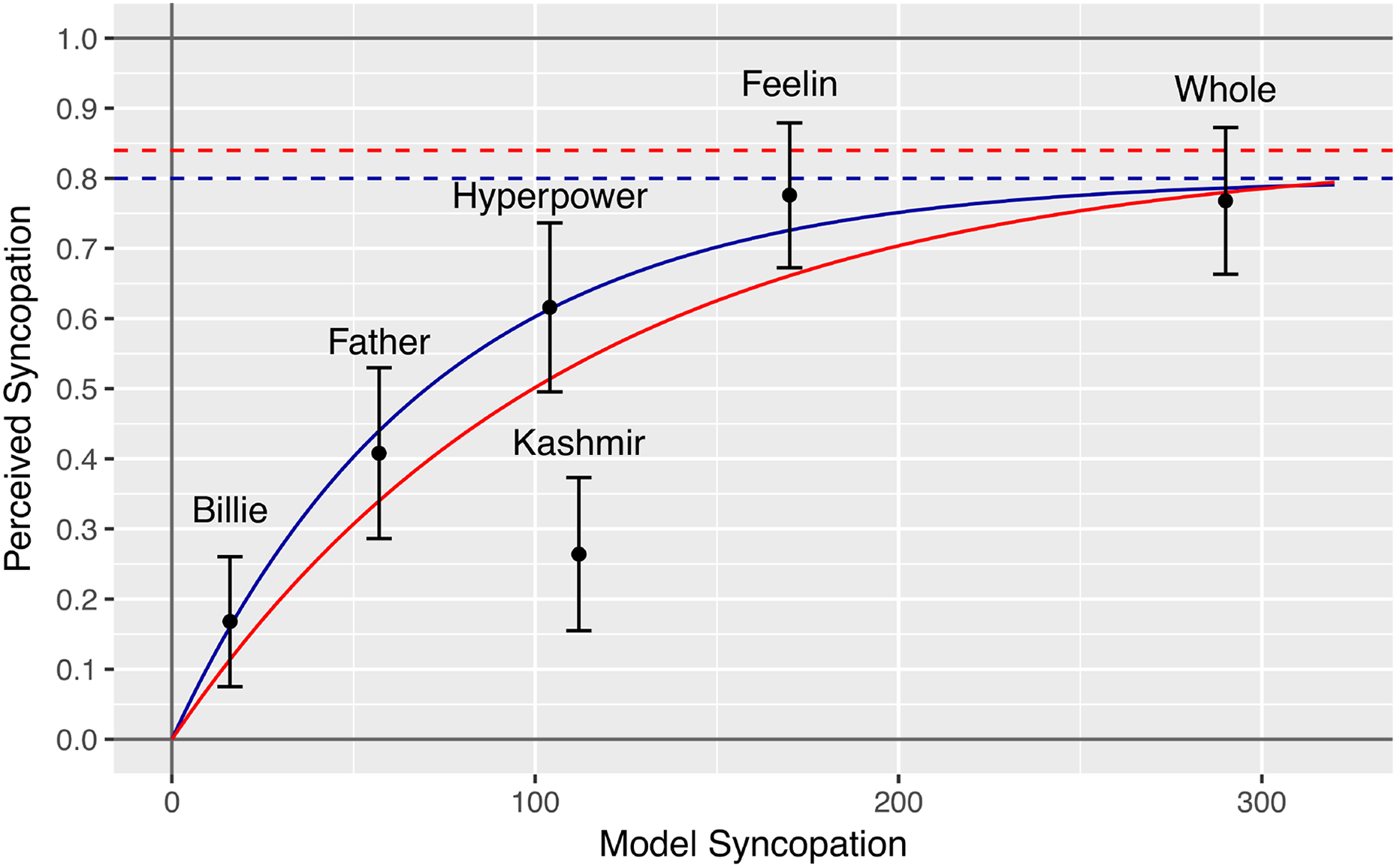

The relationship between the experimental data and the model can be studied in Figure 6. It plots Perceived syncopation (Sp) estimates for all six stimuli (including their 95% CI) against Model syncopation (Sm). The fitted values can be studied in Table 3.

Figure 6. Logarithmic link function: Perceived syncopation (Sp) as a function of Model syncopation (Sm). The red curve is the best fit logarithmic function with all stimuli. The blue curve is the best fit function when “Kashmir” is omitted.

A log-based function might be a promising candidate for the link function: the relationship between Perceived syncopation (Sp) and Model syncopation (Sm), as seen in Figure 6, appears to flatten for higher values. In perception, the physical intensity of a stimulus and the perceivers’ sensitivity to changes in intensity are often logarithmically related: in auditory perception, for example, perceived loudness is modelled as a logarithmic function of acoustical sound pressure (dB scale). The Weber–Fechner law is a generalisation of these frequently observed logarithmic relationships (MacKay, 1963; Masin, Zudini, & Antonelli, 2009).

The blue and red curves in Figure 6 are log-based link functions of Model syncopation (Sm) that return Estimated perceived syncopation (

where q > 0 and k > 0 are scale and shape parameters, respectively. Parameters q and k are chosen such that the sum of the squared deviations between Perceived syncopation (Sp) and the fitted value on the curve,

The red curve in Figure 6 is the line of best fit using all six data points. This curve fits the data poorly (

The blue curve is the line of best fit when “Kashmir” is excluded, and only the five data points of the other stimuli are used. It is defined by

with q = 0.28 and k = 0.066. This function fits the data (without “Kashmir”) reasonably well (

One theoretically problematic aspect is that the image space of flog is the interval (0, ∞), whereas Sp is bounded within (0, 1). This, however, seems to be quite irrelevant in practice: flog exceeds the interval (0, 1) when

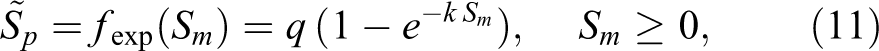

Another potential functional link between Sm and

where

The fit of the exponential approach functions can be studied in Figure 7 and Table 4. Again, the red curve shows that, if all stimuli are included, the fit is poor (

and it has a very good fit with the data (

Figure 7. Exponential approach link function: Perceived syncopation (Sp) as a function of Model syncopation (Sm). The red curve is the best fit exponential approach function with all stimuli. The blue curve is the best fit function when “Kashmir” is omitted. Dashed lines are the approach limits.

Table 4. Model fit: Exponential approach link function.

Note. Sp, Perceived Syncopation; Sm, Model Syncopation;

In summary, our implementation of the syncopation model, based on the modelling work of Witek et al. (2015), solidly predicts how listeners perceived the degree of syncopation in four of the six stimuli used in the experiment. But the model overestimates the syncopation level of “Kashmir”, and it also seems to overstate the syncopation level of “Whole lotta love”. The model returns a large numerical difference between the Model syncopation values of “I got the feelin’” and “Whole lotta love”, but participants did not perceive such a difference.

The flexible logarithmic or exponential link functions approximate the distortion in the higher syncopation range fairly well, but the “Kashmir” pattern seems to be misplaced, regardless of the choice of link function.

Revising the model

In the remainder of this study, we modify the model in order to improve its fit with the experimental data. Particularly, we intend to avoid the misalignment of “Kashmir” and the exaggeration of differences in the upper syncopation range.

The study of the “Kashmir” pattern (Figure 1) shows that the greatest contribution to this stimulus’ Model syncopation (Sm) comes from the 16th notes in the bass drum just after beats one and three. These notes act as syncopators to the rests on the subsequent eighth-note positions. The weight of these syncopes is increased by the fact that the snare drum has a simultaneous rest, so the constellation is treated as a two-stream syncopation by the model.

Yet, participants do not seem to perceive the “Kashmir” pattern as particularly syncopated. Potentially, listeners heard the 16th notes as ornaments to the beats, rather than as syncopes. This effect might be increased by dynamics: the echoing 16ths in the “Kashmir” pattern are always softer than the note on the downbeat. Thus, we can describe the “Kashmir” pattern as an “archetypical rock beat” (Tamlyn, 1998, p. 12) like “Billie Jean” with a kind of “echo” on the bass drum beats. The model’s overestimation of syncopation in the “Kashmir” pattern seems to be linked to the metric weights and to the emphasis on two-stream syncopation as an important factor.

The distortion in the upper syncopation range can also be traced back to the fact that, in the “Whole lotta love” pattern, many syncopations happen on the eighth-note syncope level in both the bass drum and the snare drum. It is the sheer number of these syncopated events on a low metric level that inflates the Sm syncopation estimate for this pattern.

The “I got the feelin’” pattern, conversely, shows fewer syncopated events altogether, but a large proportion of them are two-stream syncopations and take place on high metric levels. Potentially, the distortion in the upper syncopation range above Sm = 150 can be avoided by adapting metric weights and the weight of two-stream syncopation.

We will use the mathematical model description presented above as a template to reformulate the syncopation model. Many components such as the ϕ function (Equation 2), the δ function (Equation 3), and the syncopation switch function

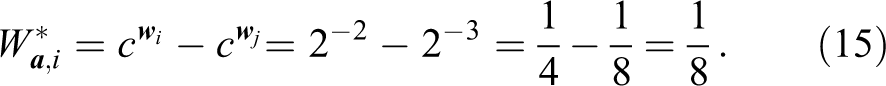

Let the revised metric weight W* of a syncope at metric position i in instrument

where

The parameter c regulates the relative weight between metric positions. Recall that

The revised weight of the downbeat is always

whereas a syncope on an offbeat eighth note, triggered by a syncopator one 16th note ahead only has weight

Hence, large c will reduce the weights of the syncopes on the offbeat eighth notes of both “Kashmir” and “Whole lotta love”.

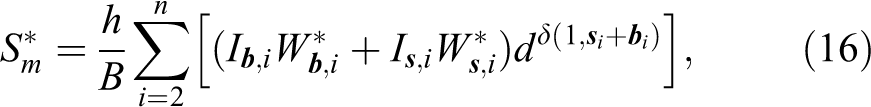

With the described amendment to the calculation of syncopation weight W*, the revised Model syncopation (

where B is the number of beats in the pattern. By dividing the estimate by B we make it independent of pattern length, hence

To summarise, two unknowns need to be estimated in order to optimise the model: the parameters c and d.

Fitting the revised model to the data

A numerical optimisation procedure was implemented in R to minimise the root mean square error between the link function (fitted separately for each evaluated combination of c and d) and the experimental data. A linear function was chosen as the target link function between Perceived and Model syncopation. We also required the linear link function to pass through the origin, so that a Model syncopation of zero corresponds to a Perceived syncopation of zero.

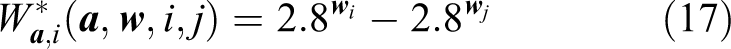
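The fitting criterion described in this section can be sketched in a few lines. The original optimisation was implemented in R; the Python sketch below is only an illustration, and the names `fit_through_origin` and `grid_search`, the callable `model`, and the candidate grids are assumptions introduced here, not the authors' code. For a line through the origin, the least-squares slope is Σxy / Σx². The outer search refits this link function for every candidate pair (c, d) and keeps the pair with the smallest root mean square error.

```python
import math
from itertools import product
from typing import Callable, Optional, Sequence, Tuple


def fit_through_origin(x: Sequence[float], y: Sequence[float]) -> Tuple[float, float]:
    """Fit y = a * x (no intercept) by least squares; return (a, rmse)."""
    sxx = sum(xi * xi for xi in x)
    if sxx == 0:
        raise ValueError("x must contain at least one non-zero value")
    a = sum(xi * yi for xi, yi in zip(x, y)) / sxx
    rmse = math.sqrt(sum((yi - a * xi) ** 2 for xi, yi in zip(x, y)) / len(x))
    return a, rmse


def grid_search(
    model: Callable[[float, float], Sequence[float]],
    perceived: Sequence[float],
    c_grid: Sequence[float],
    d_grid: Sequence[float],
) -> Optional[Tuple[float, float, float, float]]:
    """Return (c, d, slope, rmse) for the grid point with the smallest RMSE.

    `model(c, d)` is a hypothetical placeholder: it must return one model
    syncopation value per stimulus. The revised model's actual formula is
    not reproduced in this sketch.
    """
    best = None
    for c, d in product(c_grid, d_grid):
        predicted = model(c, d)
        slope, rmse = fit_through_origin(predicted, perceived)  # refit link per (c, d)
        if best is None or rmse < best[3]:
            best = (c, d, slope, rmse)
    return best
```

Once the best pair is found, multiplying the model output by the fitted slope yields a rescaled model whose link function has slope 1, which is one way to obtain a scaling factor of the kind denoted h in this article.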

The root mean square error of the model was at a minimum when c = 2.8 and d = 1.6. Hence the revised model is given by

and

In the case of this study’s stimuli with eight bars, there are 32 quarter beats per stimulus, hence B = 32, and the number of metric positions in each pattern is n = 129 (the downbeat of the ninth bar is the last position in each pattern, see Figures 1 and 2).
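The two counts follow directly from the grid resolution, and the effect of dividing by B can be checked with a short sketch. The helper names and the example weights are hypothetical; the sketch assumes 4/4 time on a 16th-note grid and covers only the normalisation step, not the full revised model.

```python
from typing import Sequence, Tuple


def grid_dimensions(bars: int, beats_per_bar: int = 4, positions_per_beat: int = 4) -> Tuple[int, int]:
    """Return (B, n): the number of beats and the number of metric positions.

    The downbeat of the bar that follows the pattern is counted as the
    final metric position, hence the "+ 1".
    """
    beats = bars * beats_per_bar
    positions = beats * positions_per_beat + 1
    return beats, positions


def normalised_sum(weights: Sequence[float], beats: int) -> float:
    """Sum of (hypothetical) syncope weights divided by the number of beats."""
    return sum(weights) / beats
```

For eight bars, `grid_dimensions(8)` gives B = 32 and n = 129. Repeating a pattern doubles both the summed weights and B, so the value returned by `normalised_sum` stays the same, which is the sense in which the estimate is independent of pattern length.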

The result of the revision and optimisation can be studied in Figure 8 and Table 5. The six data points follow a linear regression line quite closely. Every fitted value

Perceived syncopation (Sp) as a function of the optimised Model syncopation (

Model fit: Optimised model.

Note. Sp, perceived syncopation;

The scaling factor h = 1.32 was chosen such that the slope of the linear link function becomes 1. As a consequence of this scaling, the revised Model syncopation (

The revision and optimisation improved two aspects of the original model with respect to this study’s six stimuli:

The misplacement of the “Kashmir” pattern has been fixed. In the revised model, this pattern is well aligned with the other stimuli.

Also, the original model predicted that the “Whole lotta love” pattern was much more syncopated than the “I got the feelin’” pattern, but the participants of the experiment did not perceive this difference. The revised model does not show this distortion.

Thanks to the equivalence between the revised Model syncopation (

The accuracy and general applicability of this estimate are difficult to assess at this moment. The estimate rests on data from a small experiment with only six drum patterns, and these patterns were not randomly chosen from the population of Western popular music drum patterns. Rather, they were selected to represent a wide range from weak to strong syncopation. Another choice of stimuli might result in a very different rule for

A practical drawback of the revised model is that computations do not rely on integers only, as the original Witek et al. (2015) model did. Hence it is unwieldy to calculate

Conclusions

This study presented the implementation of a model for quantifying syncopation in popular music drum patterns, which was based on a method by Witek et al. (2014) (revised version: Witek et al., 2015). The implemented model was then empirically validated. Comparing the model predictions with the data from the listening experiment indicated that the model predicted Perceived syncopation quite well in general. However, our findings also showed that the model overemphasised some aspects of a rhythmic pattern linked to syncopation on weak metric positions, which led to inconsistencies between Perceived and Model syncopation.

We revised the model and improved its fit with the empirical data by numerical optimisation. We found model parameters such that the model expresses Perceived syncopation of the six stimuli as a linear function of Model syncopation.

For the time being, the revised model seems to be our best guess at estimating syncopation in popular music drum patterns, even though many questions remain unanswered. For example, the contribution of the hi-hat to syncopation has been assumed to be irrelevant in this study, yet there is no evidence so far that the contribution of the hi-hat is indeed negligible.

The model is based on a small-scale listening experiment with only six stimuli. A future experiment to validate and further improve the model should include more stimuli in order to better represent the diversity of popular music drum patterns and to generate more stable results. Also, the model should be expanded to include other instruments of the drum set and time signatures other than 4/4.

The complete paired comparison design becomes impractical as the number of stimuli increases (each participant needs to perform “n choose 2” comparisons). Designs with incomplete repetitions might help to solve this problem (Bramley, 2007; Silverstein & Farrell, 2001; Wilkinson, 1957), as might methods in which participants rank the stimuli according to perceived syncopation (Bramley, 2005; Kendall & Gibbons, 1990).
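To make this growth concrete: a complete design with n stimuli requires n(n − 1)/2 comparisons per participant. A minimal sketch, with a function name chosen here purely for illustration:

```python
import math


def comparisons_per_participant(n_stimuli: int) -> int:
    """Number of distinct pairs in a complete paired comparison design."""
    return math.comb(n_stimuli, 2)
```

Six stimuli, as in this study, give 15 comparisons; 12 stimuli would give 66 and 24 stimuli would give 276.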

In this study we assumed, in line with Longuet-Higgins and Lee (1984) and Witek et al. (2014), that syncopation can be quantified by summing up the weights of all syncopated events in a pattern. This hypothetical additive property of syncopation is somewhat questionable: syncopation is based on the notion of meter. The more syncopated events we introduce into a pattern, the less listeners will be aware of the meter, and the perceived syncopation effect will be weakened. Another problem with syncopation is that listeners with little musical expertise are unlikely to know the concept of syncopation (this was the main reason we recruited only musicians for the listening experiment).

It might be beneficial to replace the concept of syncopation by the concept of rhythmic complexity in the future, as suggested by Fitch and Rosenfeld (2007). Rhythmic complexity might be modelled using a predictive coding approach (Clark, 2013; Vuust, Dietz, Witek, & Kringelbach, 2018; Vuust & Witek, 2014). Increasing rhythmic complexity is associated with reducing listeners’ sense of, and orientation within, the meter. Potentially, complexity can more successfully be projected on a quantitative dimension than syncopation. More rhythmic complexity is likely to be associated with more metric confusion in the listener. Hence, a highly complicated rhythm may be perceived as complex, but it may not be perceived as syncopated, because the listener’s sense of the meter is too confused to distinguish between weak and strong metric positions.

Another potentially valuable metric to explore is beat strength, or, alternatively, perceptual beat salience: listeners with any degree of musical expertise will be able to answer the question whether they recognise the beat more easily in one or in another stimulus. Perceptual beat salience can be expected to be a reverse measure of syncopation or rhythmic complexity.

Supplemental Material

Supplemental Material, syncopation_model_180702, for “Modelling perceived syncopation in popular music drum patterns: A preliminary study” by Florian Hoesl and Olivier Senn in Music & Science

Footnotes

Acknowledgement

The authors would like to thank Lorenz Kilchenmann and Toni Bechtold for their support preparing the stimuli.

Contributorship

Designed the study: FH, OS. Prepared the stimuli: FH. Carried out the listening experiment: FH. Analysed the data: OS. Created the mathematical models: OS. Wrote the paper: OS, FH.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was partly funded by the Swiss National Science Foundation (grant No. 100016 162504 to Olivier Senn).

Peer review

Justin London, Carleton College, Department of Music.

David Meredith, Aalborg University, Department of Architecture, Design and Media Technology.

Brian Bemman, Aalborg University, Department of Architecture, Design and Media Technology.

Supplemental Material

Supplemental material for this article is available online.

References
