Abstract

Assessment of students’ clinical performance and reasoning raises critical questions of whether learning outcomes have been reached and whether the aims of a course/education programme have been fulfilled. The aim of this study was to compare two assessment instruments used in clinical education. A cross-sectional, comparative design was used. Nursing students and supervisors from five universities and university colleges in Sweden were included in the study; 435 students and 54 supervisors participated. Data were collected with study-specific questionnaires targeted at the two groups, nursing students and supervisors, and were analysed using cross-tabulation and chi-square tests in WinSTAT. Students perceived supervisors using the instrument Assessment of Clinical Education (AClEd) to be more aware of what to assess, and they experienced more support from the ‘AClEd supervisors’ than from the supervisors using the second instrument, the Assessment form for Clinical education (AssCe). Furthermore, the AClEd assessment was perceived to be fairer than the AssCe assessment. The criterion-referenced assessment instrument AClEd was perceived, by both nursing students and supervisors, to give a clearer view of the learning outcomes and to allow a fair and comprehensive assessment.

Introduction

During nursing education, vocational training is a core activity. In Sweden, education for registered nurses consists of about 60% theoretical education and 40% clinical practice. An important part of vocational education and training is to integrate theory and practice and to be able to assess the ability of students to understand and apply knowledge. 1 Criterion-referenced assessment facilitates a fairer and more reliable assessment regime than norm-referenced assessment: students are measured against identified standards of achievement rather than being ranked against each other. 2

Swedish nursing education

Since the Higher Education Reform in 1977, Swedish nursing education has changed from an experience-based vocational training programme into a research-based education programme. During this period of 40 years, the theoretical part of the education programme has changed several times, but the clinical part of the training has not been developed to the same extent. Even though the curriculum today is outcome based,3,4 the assessment of students in clinical education is, to a great extent, still based on the traditional image of the nurse’s professional function. 5

Background

Assessment of students’ clinical performance and reasoning raises critical questions of whether learning outcomes have been reached and whether the aims of a course/education programme have been fulfilled. Newly graduated nurses are expected to be prepared and ready to provide a safe, evidence-based nursing practice in varied settings and complex situations. 6 One key aspect of clinical competency is clinical training. 7 A central part of clinical training is integration of theory and practice and assessment of the student’s ability to understand and apply knowledge.1,8–10 Assessment is important, particularly in clinical practice, to ensure that those who become registered nurses can provide safe and competent nursing care.

Implementing outcome-based models can be difficult in terms of assessing students’ knowledge, skills and attitudes in a valid and reliable way 11 and research has identified problems regarding the quality of the assessment of students’ clinical competence.12,13 To improve the assessment of clinical skills, we developed an instrument to evaluate learning outcomes during clinical practice. This article presents a comparison between a generic instrument and this course-specific, criterion-based instrument.

The conceptualization of examinations is complex and within the context of clinical education it can be described as follows: ‘tests of clinical competence, which allow decisions to be made about medical qualification and fitness to practise, must be designed with respect to key issues including blueprinting, validity, reliability, and standard setting, as well as clarity about their formative or summative function’.14(p.945) Formative and summative assessment are usually distinguished by their function and purpose: the former supports learning, whereas the latter primarily serves grading or measurement. 15

Formative assessment is directed towards giving feedback on activities and directing students’ attention to their strengths, weaknesses and potential knowledge gaps, allowing the student the opportunity to analyse his or her own learning. 16 At the universities where the authors work, formative assessment in clinical education is performed through a dialogue in which the teacher and the student discuss the student’s strengths and weaknesses, the objectives the student wants to achieve and how to achieve them. Good two-way communication is central to promoting mutual understanding. 17 Summative assessment, on the other hand, is a final examination of student performance.14,18,19 It may be a clinical examination in which the student demonstrates his/her skills by, for example, performing practical procedures such as venous blood sampling, blood pressure measurement and the handling of central venous lines, as well as other nursing procedures.

Clinical assessment requires close cooperation between clinical partners and academia to enhance the clinical experiences of students, the professional development of preceptors, and the clinical credibility of academics. 20 It is customary for a university teacher to assess theoretical knowledge while a supervisor at the clinic assesses practical knowledge. When two persons with different experiences and perspectives perform the assessments, the student may not recognize the close interdependence of theoretical and practical education.

At Karolinska Institutet, Stockholm, Sweden, two of the authors developed a criterion-based assessment form, Assessment of Clinical Education (AClEd), in 2007; it was first applied in 2008. 21 During the development of AClEd, it became apparent that earlier forms of assessment were perceived to be unfair. 21 Students and supervisors found inconsistencies in what should be assessed and on what grounds, an observation that has also been made elsewhere.5,22 Others have shown that assessment can be open to the subjective bias of the assessor, which may influence assessment quality. 23

The grading criteria should help supervisors and teachers determine what knowledge and skill level the student should reach during each specific course. When AClEd was developed in 2007, it was possible to make a limited comparison between AClEd and the assessment instrument formerly in use, the Assessment form in Clinical education (AssCe), 24 as both instruments were used simultaneously in a small pilot study. The comparison was inconclusive but was nevertheless important for the development of the AClEd instrument. Students reported that AClEd offered fairer grounds for assessment, as the criteria were clearly adjusted to the specific learning outcomes of the courses. The aim of this study was to describe and compare students’ and supervisors’ perceptions of fairness when using the two assessment instruments in clinical placement: the norm-referenced Assessment form in Clinical education (AssCe) and the criterion-referenced Assessment of Clinical Education (AClEd).

Method

Study design

The present study was designed to compare two different assessment instruments used in clinical settings in nursing education: a generic instrument and a course-specific, criterion-based instrument. A cross-sectional, comparative design was used. Nursing students and supervisors from five universities and university colleges in Sweden were included in the study. In total, 435 students participated; of these, 277 had been assessed using AssCe and 158 using AClEd. Fifty-four supervisors responded; 23 had assessed students using AssCe and 31 had used AClEd. Data were collected with study-specific questionnaires targeted at the two groups, nursing students and supervisors.

The assessment instruments

The Assessment form in Clinical education (AssCe) 24 is the most common assessment instrument used today in Swedish vocational training for nursing students. This instrument is defined as a norm-referenced 25 instrument and has served above all as a basis for discussing the student’s development of nursing skills while training to become a nurse. The AssCe instrument has recently been updated and validated for nursing students.26,27

The other assessment instrument, Assessment of Clinical Education (AClEd), 21 is currently used in at least five of the 25 universities across Sweden and is regarded as a criterion-referenced assessment. 28 It contains a specific section, i.e. one column, in which the course-specific learning outcomes for the course being assessed are listed. 29 In addition, a grading column gives criteria describing how, and to what extent, the student is expected to meet each learning outcome to reach a given grade (fail or pass).

The assessment instrument is used on two occasions during the clinical course: halfway through the clinical placement, when a formative assessment is held, and as close to the last day of the clinical course as possible, when a final summative assessment is carried out. On these occasions, the student, the supervising nurse and commonly a representative from the university (lecturer, senior lecturer or clinical lecturer) are present. The supervisor conveys information and recommends a grade of pass or fail for each learning outcome. Apart from the assessment with AClEd, other examinations take place as well. For example, the course includes clinical and theoretical examinations, which, combined with the AClEd assessment, provide the final grade for the course.

Data collection

Data were collected using two study-specific questionnaires, one developed for students and one for supervisors. The questionnaires were similar, but the questions were tailored to each target group. First drafts of the questionnaires were made by the authors. To ensure face validity, students, nurses, supervisors, clinical lecturers and university teacher colleagues were given the opportunity to study and comment on the questionnaire before it was distributed. The questionnaire was revised according to their comments. The final questionnaire comprised eight statements to which the respondents replied on a three-point Likert scale (‘I totally agree’, ‘I partly agree’, ‘I do not agree’). The statements concerned learning outcomes, opportunities to take part in practical training towards those outcomes, and the perceived fairness of the assessment. Examples of statements for students include: ‘I have knowledge of which learning outcomes I have been assessed on’ and ‘My supervisor knew which learning outcome I was going to be assessed on’. Examples of statements for supervisors include: ‘I have knowledge of what learning outcomes the student has been assessed on’ and ‘The student knows on which learning outcomes he/she has been assessed on’. All statements for students are presented in Table 3 and all statements for supervisors in Table 4 later in the article.

The questionnaires were distributed to students and nursing supervisors at five Swedish universities and university colleges, two of which used AssCe and three of which used AClEd. The questionnaires were distributed as close to the final assessment of each course as possible. Students received the questionnaire in person from one of the three authors during the last part of a class held in close connection to a clinical placement. All included universities had agreed to this procedure. The students were informed of the purpose of the study and that they did not need to participate. No personal information other than study term, type of placement and university was collected. The supervisors received their questionnaires by post or email and returned them the same way.

Statistical analyses

The students responded on a three-point Likert scale (1 = I totally agree; 2 = I partly agree; 3 = I do not agree). Since the frequency of the third answer was low, we decided to dichotomize the response scale by combining answers 2 and 3. In this way we obtained a binary, nominal variable: 1 = Yes (I totally agree) and 2 = No (I partly agree, I do not agree). This choice was also supported by the free-text comments from students and nursing supervisors, which to a great extent qualified alternative 2 (‘I partly agree’) with remarks such as ‘In my opinion’ or ‘I don’t know what others think’.
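As an illustration of the recoding described above, the following Python sketch collapses the three-point scale into the Yes/No variable and counts the answers per instrument, which gives the 2 × 2 cross-tabulation that the chi-square tests operate on. The study itself used WinSTAT; the function names (`dichotomize`, `cross_tabulate`) and the sample responses are hypothetical and are not study data.

```python
from collections import Counter

# Likert codes as used in the questionnaire:
# 1 = I totally agree, 2 = I partly agree, 3 = I do not agree
YES, NO = 1, 2


def dichotomize(likert_code: int) -> int:
    """Collapse the three-point scale: 1 -> Yes (1); 2 or 3 -> No (2)."""
    if likert_code == 1:
        return YES
    if likert_code in (2, 3):
        return NO
    raise ValueError(f"unexpected Likert code: {likert_code}")


def cross_tabulate(responses):
    """Count (instrument, dichotomized answer) pairs for a 2 x 2 table.

    `responses` is an iterable of (instrument, likert_code) tuples,
    e.g. ("AssCe", 2). Returns a Counter keyed by (instrument, YES/NO).
    """
    return Counter((instrument, dichotomize(code)) for instrument, code in responses)


# Hypothetical responses, for illustration only (not study data).
sample = [("AssCe", 1), ("AssCe", 2), ("AssCe", 3),
          ("AClEd", 1), ("AClEd", 1), ("AClEd", 2)]
table = cross_tabulate(sample)
print(table[("AssCe", YES)], table[("AssCe", NO)])  # 1 2
print(table[("AClEd", YES)], table[("AClEd", NO)])  # 2 1
```

Answers 2 and 3 both map to No, so a respondent who only partly agrees is counted together with one who disagrees, exactly as in the dichotomization above.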

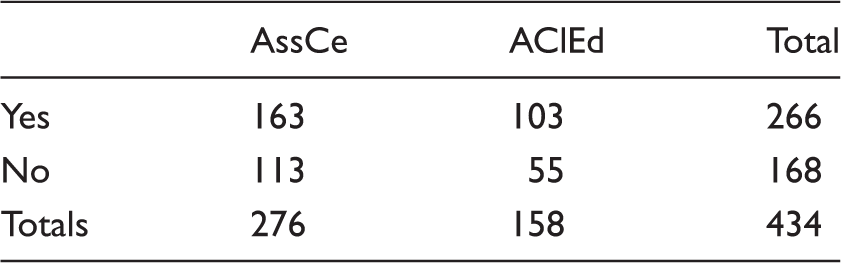

Cross-tabulation of students’ answers to a statement (in this example Statement 1) ‘I have knowledge of the learning outcome in this course’.

AssCe: Assessment form for Clinical education; AClEd: Assessment of Clinical Education.

Chi-square = 1.592; p = 0.207; not significant.

In this example, the expected number of ‘yes’ responses among the AssCe participants is $E = (276/434) \times 266 = 169.16$. The chi-square contribution of this cell is therefore $(O - E)^2/E = (163 - 169.16)^2/169.16 = 0.224$. The sum of the contributions of all four cells yields the total chi-square, in this case 1.592. The number of degrees of freedom is $(\text{rows} - 1)(\text{columns} - 1) = (2 - 1)(2 - 1) = 1$. The p-value of 0.207 is the probability of obtaining a chi-square at least this large if the two variables (instrument and response) were unrelated, i.e. if the observed difference were due to random fluctuation alone rather than to a true dependency.
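The worked example for Statement 1 can be checked with a short Python sketch. It assumes that the cross-tabulation is a 2 × 2 table with the instruments as rows and Yes/No as columns, and it reconstructs the four cell counts from the totals quoted in the text (276 AssCe respondents, 163 of whom answered ‘yes’; 434 respondents and 266 ‘yes’ answers in total). The derived AClEd row total of 158 agrees with the number of students assessed using AClEd. The sketch uses Pearson’s chi-square without continuity correction, which reproduces the reported value of 1.592; the helper `chi_square_2x2` is hypothetical and is not the WinSTAT procedure used in the study.

```python
import math


def chi_square_2x2(a: int, b: int, c: int, d: int) -> tuple[float, float]:
    """Pearson chi-square (no continuity correction) for the 2 x 2 table
        [[a, b],
         [c, d]]
    with rows = instrument and columns = Yes/No. Returns (chi2, p), df = 1.
    Hypothetical helper, not the WinSTAT routine used in the study.
    """
    n = a + b + c + d
    row_totals = (a + b, c + d)
    col_totals = (a + c, b + d)
    observed = ((a, b), (c, d))
    chi2 = 0.0
    for i in range(2):
        for j in range(2):
            # Expected count under independence, e.g. 276 * 266 / 434.
            expected = row_totals[i] * col_totals[j] / n
            chi2 += (observed[i][j] - expected) ** 2 / expected
    # With 1 degree of freedom, the upper-tail probability of the
    # chi-square distribution equals erfc(sqrt(chi2 / 2)).
    p = math.erfc(math.sqrt(chi2 / 2))
    return chi2, p


# Cells reconstructed from the totals quoted in the text.
assce_yes = 163
assce_no = 276 - assce_yes          # 113
acled_yes = 266 - assce_yes         # 103
acled_no = (434 - 276) - acled_yes  # 55
chi2, p = chi_square_2x2(assce_yes, assce_no, acled_yes, acled_no)
print(f"chi2 = {chi2:.3f}, p = {p:.3f}")  # chi2 = 1.592, p = 0.207
```

For one degree of freedom, the chi-square tail probability equals the two-sided tail of a standard normal variable at the square root of the chi-square value, which is why `math.erfc` is sufficient here and no statistics library is needed.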

Ethical considerations

All students and supervisors were given both written and verbal information about the study and were informed that participation was voluntary and that they could refrain from participation at any time without consequences. The questionnaires were responded to anonymously and no participants could be identified. The study was approved by the head of each institution at the participating universities/sites. Ethical review was not necessary according to the Swedish Ethical Review Act (2003:615). 30

Consent for publication

Not applicable.

Results

Participants

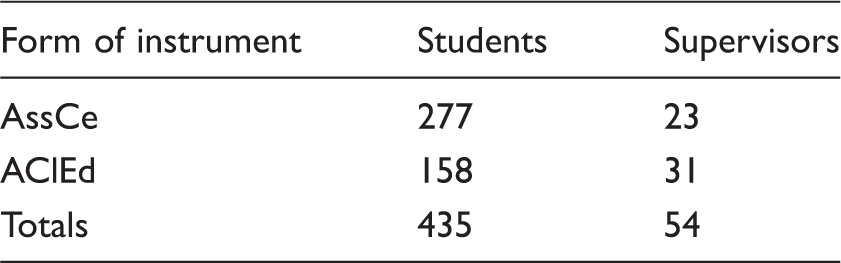

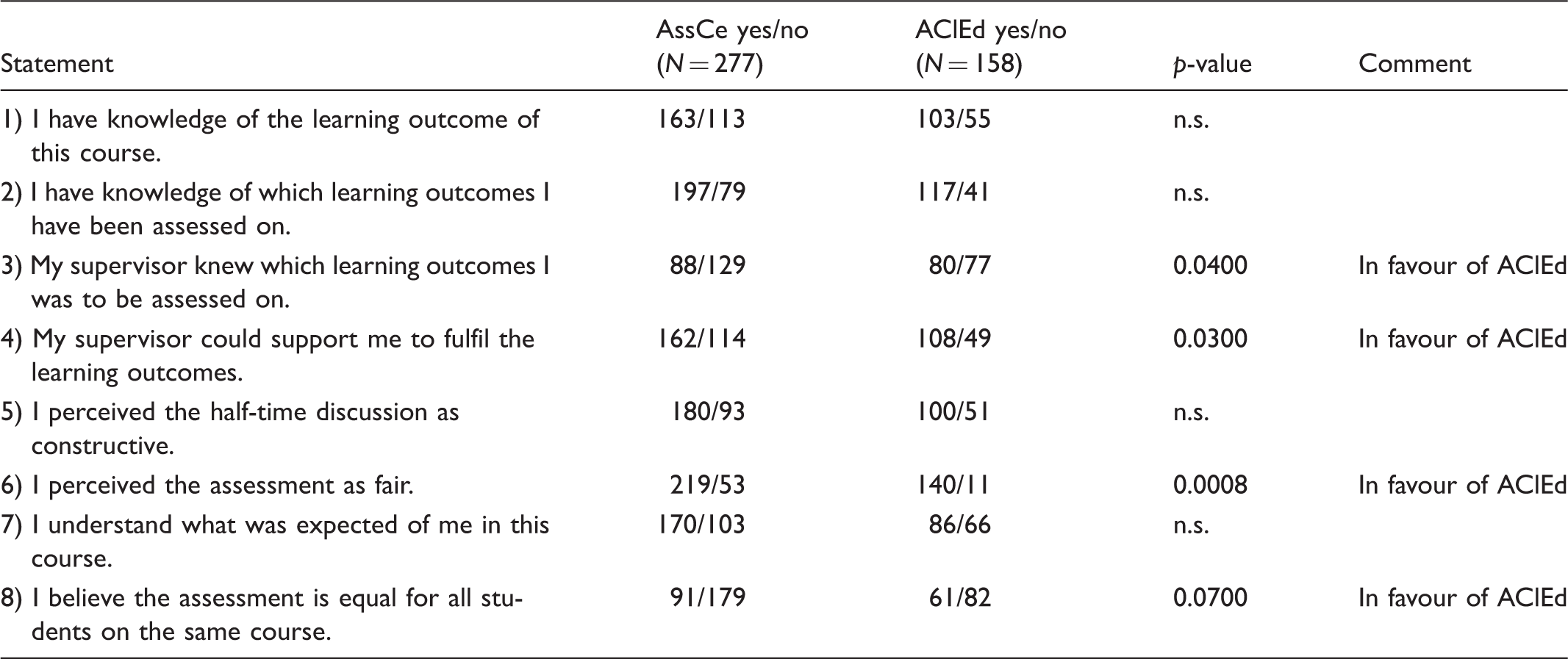

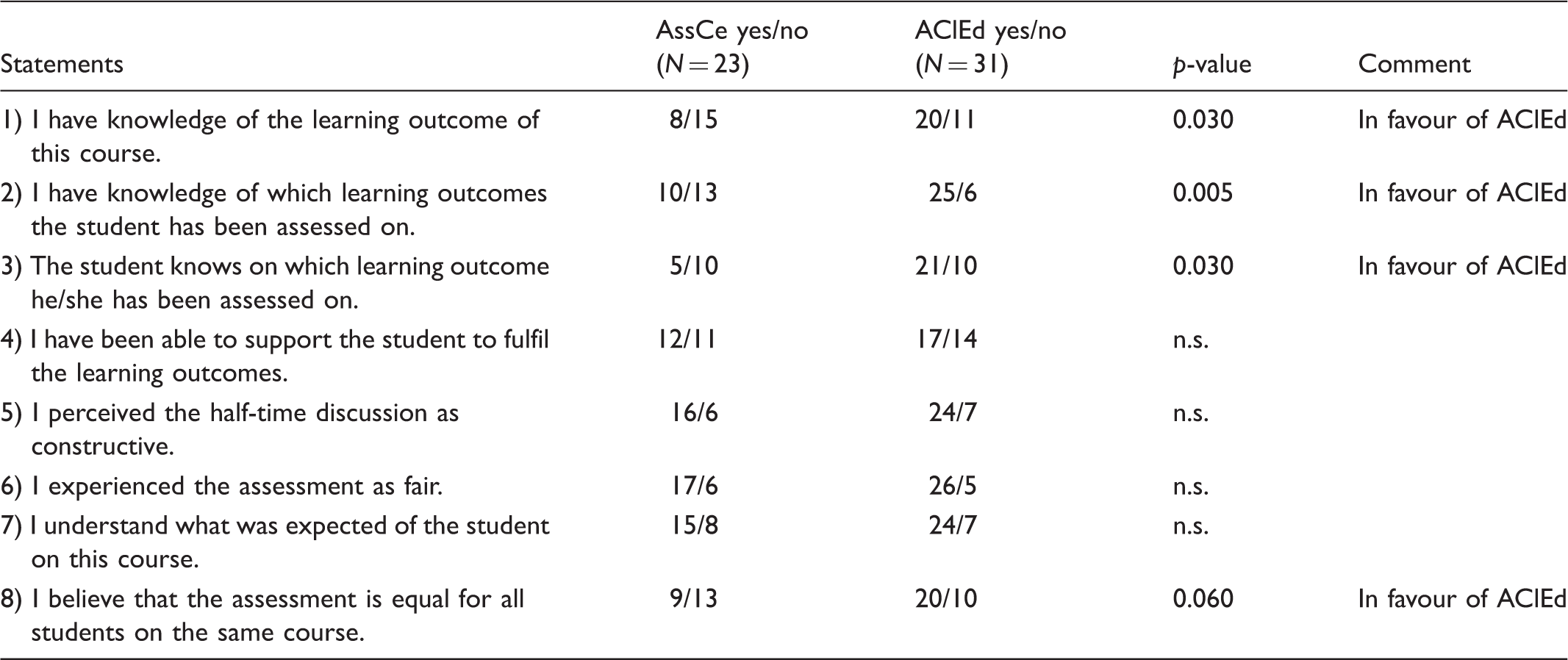

Number of participants in the study.

AssCe: Assessment form for Clinical education; AClEd: Assessment of Clinical Education.

Students’ results, all questions. Total N = 435.

AssCe: Assessment form for Clinical education; AClEd: Assessment of Clinical Education.

Supervisors’ results, all questions. Total N = 54.

AssCe: Assessment form for Clinical education; AClEd: Assessment of Clinical Education.

The responses (yes/no) to the eight statements from students and supervisors are presented in Tables 3 and 4, respectively, together with two-sided p-values, i.e. the probability of obtaining chi-square values at least as large as those observed if the instrument and the response were unrelated.

There were no statistically significant differences between the two assessment instruments in how the students perceived their knowledge of the learning outcomes of the course they were taking. The students knew which learning outcomes the supervisors were going to assess. Students assessed using AClEd perceived their supervisors to be more aware of the learning outcomes to be assessed (p = 0.04). Students also felt more support from the ‘AClEd supervisors’ than from the supervisors assessing with AssCe (p = 0.03). The AClEd assessment was seen as fairer than the AssCe assessment (p = 0.0008).

Comments provided by students on the questionnaire indicate that students were more negative towards AssCe than towards AClEd. Comments included ‘AssCe was a complicated assessment instrument’ (#ID346, AssCe), ‘Different supervisors interpret AssCe differently’ (#ID248, AssCe) and ‘Everyone thinks differently about AssCe, you hear many different interpretations’ (#ID214, AClEd). On the other hand, AClEd was perceived to be clear, concise and easy to understand; for example, students provided comments such as ‘Thanks to AClEd I really have control’ (#ID68, AClEd) and ‘Concrete and clear learning outcomes’ (#ID112, AClEd).

For each of the eight questions, the results showed either no difference or a significant difference in favour of AClEd, which suggests that AClEd is perceived to give a clearer view of the learning outcomes and the possibility of a fair and understandable assessment. Supervisors provided comments on AssCe such as ‘The learning outcomes are vague’ (#ID10, AssCe), ‘Difficult to interpret AssCe’ (#ID15, AssCe) and ‘More concrete learning outcomes are needed’ (#ID9, AssCe), whereas comments on AClEd included ‘Good concrete learning goals’ (#ID22, AClEd) and ‘Everything is in AClEd’ (#ID23, AClEd).

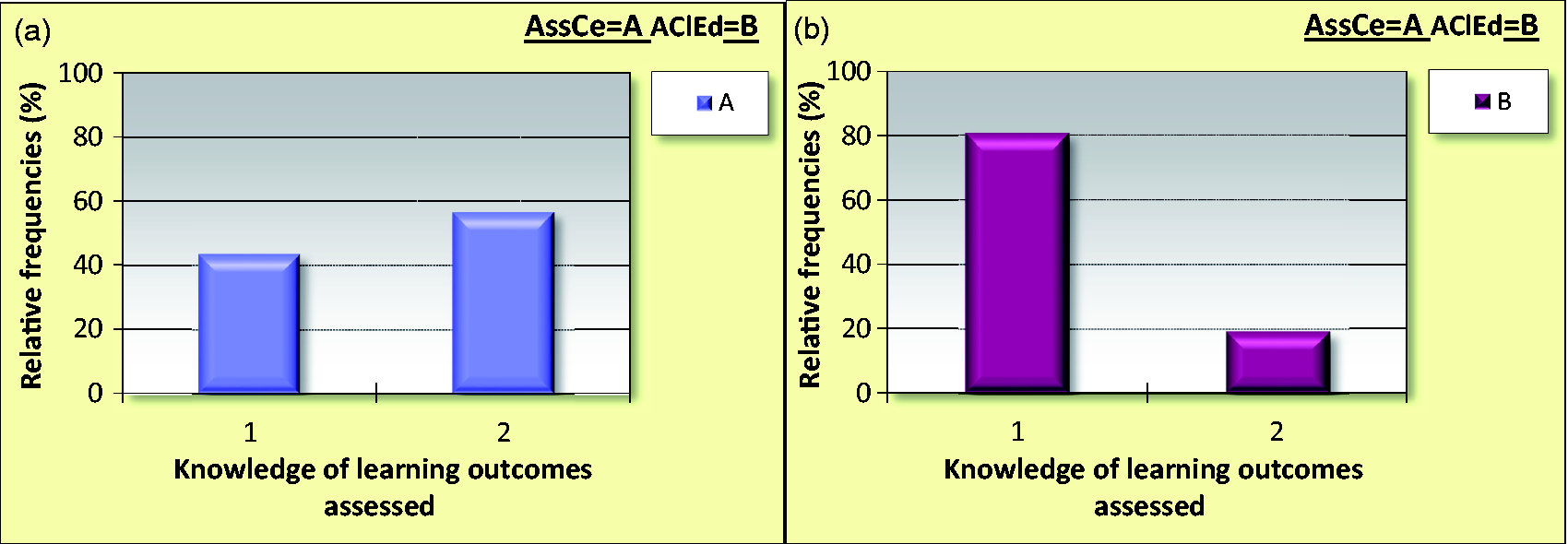

Figure 1 shows responses to the question ‘I have knowledge of which learning outcomes the student has been assessed on’. Panel A shows responses from supervisors who assessed students using AssCe, divided into 1 = Yes and 2 = No. Panel B shows responses from supervisors who assessed students using AClEd, divided into 1 = Yes and 2 = No.

Supervisors’ responses to the question: ‘I have knowledge of which learning outcomes the student has been assessed on’.

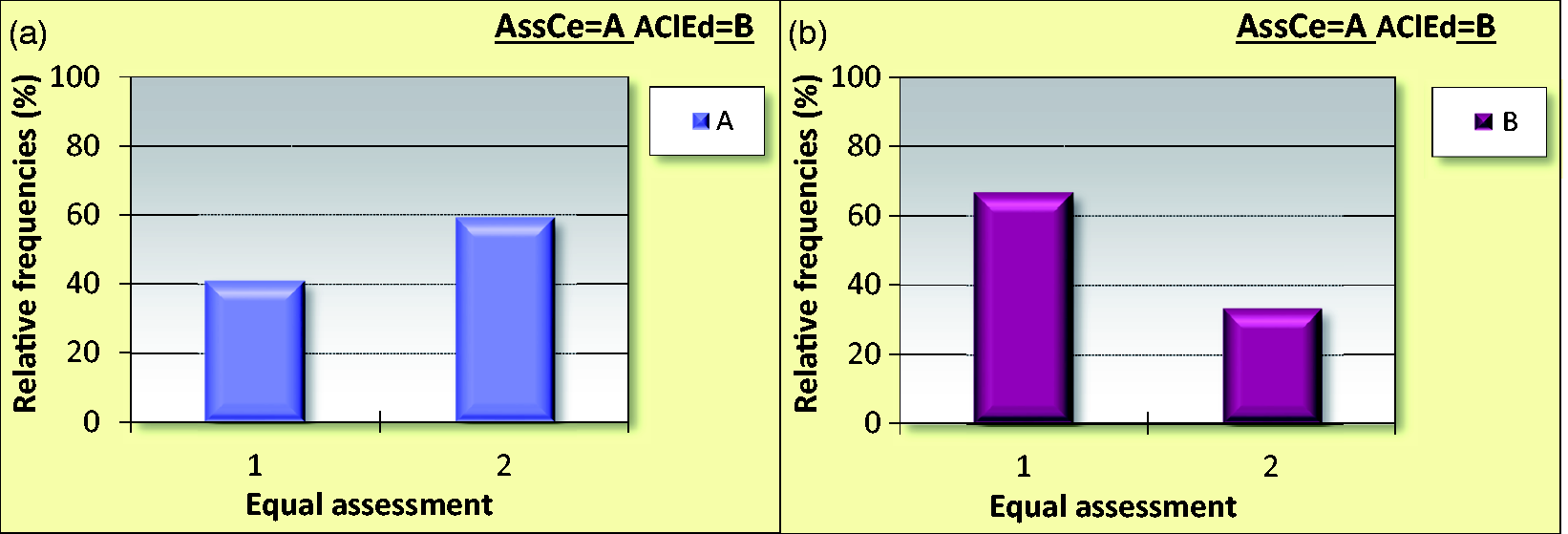

Figure 2 presents the supervisors’ responses to the question ‘I believe that the assessment is equal for all students on the same course’. Panel A shows responses from supervisors who assessed students using AssCe, and Panel B shows responses from supervisors who assessed students using AClEd, divided into 1 = Yes and 2 = No.

Supervisors’ responses to the question: ‘I believe that the assessment is equal for all students on the same course’.

Supervisors and students agreed on statements #3 and #8. When those involved in the assessment knew the learning outcomes, the discussion and its focus became clear, which was seen as important for ensuring a secure, uniform and fair assessment.

Discussion

The results showed that students in both groups were aware of the learning outcomes for the specific course they were taking, on which they were to be assessed in clinical practice. However, students assessed with the AClEd instrument perceived their supervisors’ assessment to be fairer and less biased than did students whose supervisors used the AssCe instrument.

The results suggest that when the learning outcomes are easy to access and are known, the assessment is perceived to be fair and unbiased. As suggested by Price, 29 it is important to share standards of what knowledge or performance students need to acquire. These standards can be shared at different times in the course, before or after. By making these standards explicit for students throughout the course and by discussing them with both students and supervisors, the standards become known to all parties involved in education. Learning outcomes, teaching and examination need to be aligned to increase transparency around assessment and to facilitate students’ learning. 15 The supervisors in our study who used AClEd for assessment were more aware of the learning outcomes than those who used AssCe.

Biggs’ model 15 was used in designing the nursing programme, in determining the progression in the programme, the levels within the courses and the learning outcomes, and in developing the criterion-based assessment form, AClEd. This study contributes to the evaluation of AClEd, which was developed in 2007 by the first author, Ulfvarson, and Hägg. 21

The students in our study who were assessed using AClEd perceived the assessment to be fairer and more equal for all students than assessment with AssCe. In a recent study 31 the assessment carried out by nurse educators was suggested to be unclear, which is problematic because students have a right to be assessed equally and without bias during their education. Previous research has shown inadequacies in assessments. 13 The goal of a criterion-referenced instrument is to obtain a description of the specific knowledge and skills each student should demonstrate. 28

The present study was set up to compare a generic instrument and a criterion-based instrument. We wanted to test the hypothesis that students assessed using a criterion-based instrument are more familiar with the learning outcomes and perceive the assessment as fairer. In the assessment process, consistency and reliability are important concepts. 14 The study has limitations. The Swedish setting and the study-specific questionnaire limit generalizability; however, criterion-based assessment is relevant in many settings and at all universities that provide clinical education. Another important limitation is that the authors of this study are also the developers of one of the instruments, AClEd. This may have biased the interpretation of the results; we have acknowledged this and tested and retested the statistical analyses presented here. We have followed the CODEX rules and guidelines for research.

Written grading criteria help educators to form more consistent assessments. Conversely, a lack of criteria may increase the risk of arbitrariness. In AClEd the criteria are clearly written for each learning outcome, and students in the present study experienced AClEd as providing a clearer view of the learning outcomes.

Apart from contributing to a safer assessment, criteria can also be used to facilitate students’ learning. 32 In the assessment of students, feedback from teachers has been shown to improve students’ results. 33 However, students do not always understand the feedback given. To achieve good results, the teacher needs to give individual feedback to the students based on the criteria. 25

It has been argued that assessment criteria such as ‘evidence of critical reasoning’ accompanied by standards articulated in such terms as ‘shows an imaginative approach’ or ‘disorganized and rambling’ are open to a variety of interpretations. 2 Different teachers may interpret the criteria differently. In addition, criteria can be made so precise that they restrict the student’s performance. It is therefore important to create a situation in which the student can show his/her knowledge, and in which the teacher, the supervisor and the student have a dialogue that gives the student plenty of space.

Conclusion

The criterion-referenced assessment instrument AClEd was perceived by both nursing students and clinical supervisors to give a greater understanding of the learning outcomes and to allow a fair, unbiased and understandable assessment. Furthermore, students perceived the assessment with AClEd to be more equal for all students on the same course. Clinical supervisors reported that students who were better prepared and had a better understanding of the actual learning outcomes were more focused on and committed to reaching the course goals.

Footnotes

Funding

This research received no specific grant from any funding agency in the public, commercial, or not-for-profit sectors.

Conflict of interest

The authors declare that there is no conflict of interest.