Abstract

The Patient Preferences for Patient Participation tool (The 4Ps) was developed to aid clinical dialogue and to help patients to 1) depict, 2) prioritise, and 3) evaluate patient participation, using 12 pre-set items repeated in the three sections. An earlier qualitative evaluation of The 4Ps showed promising results. The present study is a psychometric evaluation of The 4Ps in patients with chronic heart or lung disease (n = 108) in primary and outpatient care. Internal scale validity was evaluated using Rasch analysis, and two-week test–retest reliability of the three sections was evaluated using kappa/weighted kappa and a prevalence- and bias-adjusted kappa. The 4Ps tool was found to have reasonable validity and varied reliability. Proposed amendments are the rephrasing of two items and modification of the rating scale in Section 2. The 4Ps is suggested for use to increase general knowledge of patient participation, but further studies are needed with regard to its implementation.


Background

Patient participation has been declared by the World Health Organization to be a key issue for good care. 1 In Sweden, healthcare professionals are required to provide conditions for patient participation,2,3 and recent legislation further emphasises the patient’s right to participation, involvement and influence over their own care. 3 However, different stakeholders apply different perspectives on the concept of ‘patient participation’, 4 and when the patients’ preferences for participation are not recognised, the healthcare staff’s assumptions 5 and/or the culture of the healthcare facility6,7 have been found to drive the planning and provision of care. Thus, to fulfil the intentions of the legislation, it is important to give the individual patient opportunities to voice his or her preferences for participation.

There are some instruments available that retrospectively evaluate patients’ experiences of participation, involvement and influence over their own care.8–13 However, a prospective approach can be beneficial for the individual patient, as well as helping healthcare staff implement patient participation in practice: a tool that allows the patient to share his or her preferences for participation, combined with the opportunity to evaluate patient participation, can aid the dialogue and provide a basis for individualised care plans. The Patient Preferences for Patient Participation tool (The 4Ps) 14 was developed in 2009 and 2010 based on these requirements, allowing the individual patient to depict, prioritise, and later evaluate his or her participation in three separate sections. Thus, The 4Ps is a clinical tool, intended for patients with planned or expected recurrent contacts with care, for phrasing and sharing preferences for their participation with health professionals, thereby offering the professionals an opportunity to understand and provide for patient participation from the patient’s perspective. Further, The 4Ps offers the patient an opportunity to evaluate his or her experience of patient participation. In a dialogue between health professionals and the patient, the latter’s experience can be considered, and whether and to what extent the experiences match the preferences for patient participation can be evaluated. A potential mismatch can be jointly examined, as the basis for a mutual dialogue on what lessons can be learned by both the patient and the health professional/s. Given that The 4Ps tool allows comparisons between prioritisations and evaluations at the individual patient and/or group level, the tool has the potential to aid both clinical practice and research with regard to patient participation and person-centred care. 15

Previously, The 4Ps tool has been tested with regard to content validity, response process, and acceptability in a qualitative study with content and construct experts: scientists engaged in patient participation research, and patients with chronic heart failure (CHF) or chronic obstructive pulmonary disease (COPD). 14 The findings showed all items to be relevant and to correspond to the concept of patient participation. Further, the tool was considered to have an appealing scope, structure and format. Yet, minor amendments were recommended and additional evaluation was necessary; 16 thus, the purpose of this study was to further evaluate aspects of the reliability and validity of The 4Ps in patients with chronic heart or lung disease.

Methods

Design

The psychometric properties of The 4Ps tool were investigated by evaluating internal scale validity and test–retest reliability. For study purposes, the potential for using The 4Ps for a dialogue between the patient and health professionals about preferences for and the experience of participation was excluded, and data were collected for the psychometric evaluation only. Ethical approval was provided by the Regional Ethical Review Board in Uppsala, Sweden (number 2011/032).

The 4Ps tool

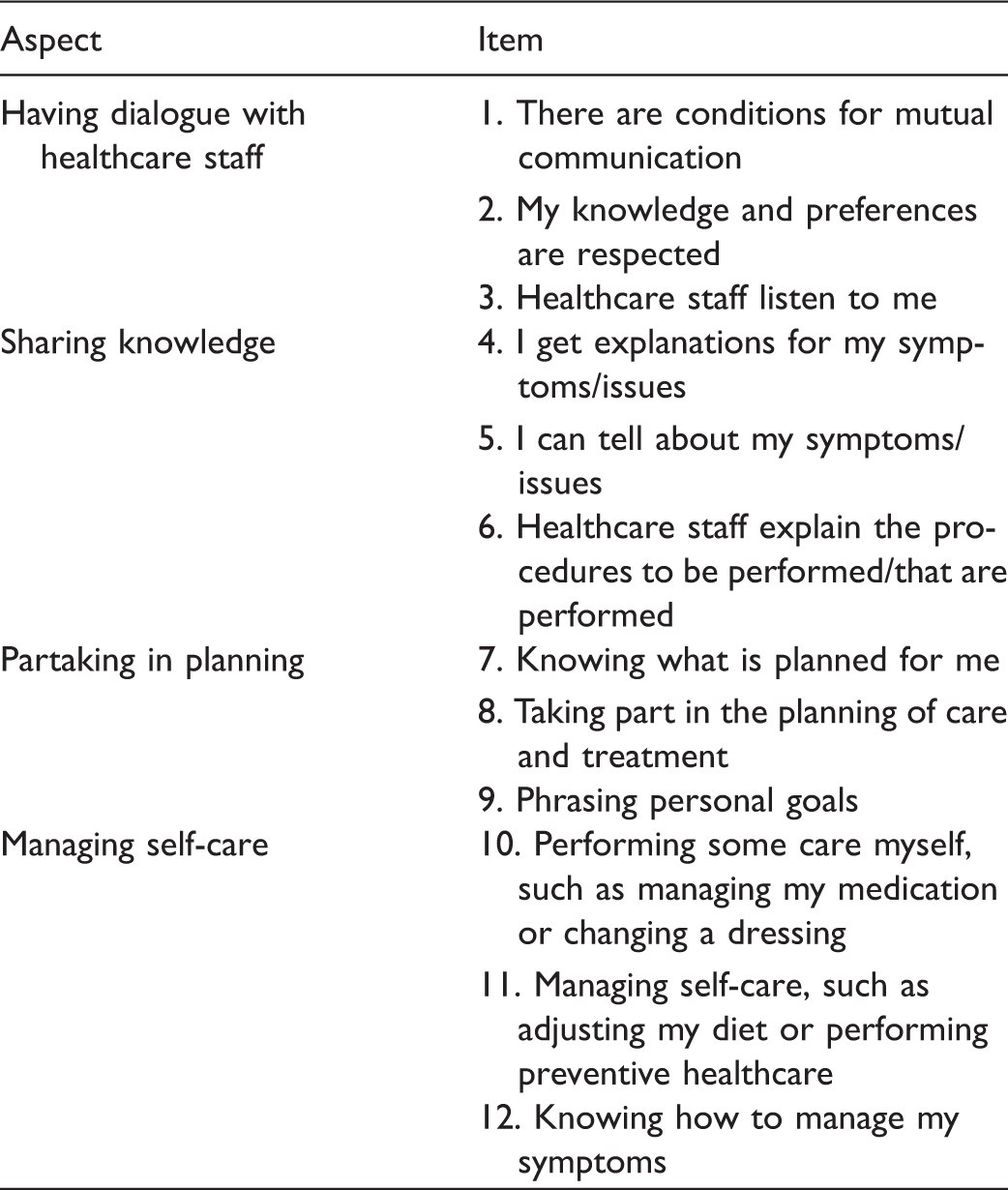

The Patient Preferences for Patient Participation tool (The 4Ps), aspects and items.

Sample

The study used a strategic sample. Individuals with CHF or COPD were recruited through primary healthcare centres and outpatient CHF clinics in both urban and rural areas in a typical Swedish region. Inclusion criteria were: the ability to respond to a questionnaire in Swedish, and having a minimum of two planned healthcare contacts within the next 12 months. For the study, 50 patients diagnosed with COPD and 60 patients diagnosed with CHF were recruited. Data were collected from October 2011 to August 2013.

Procedure

Registered nurses (RNs) at each unit were engaged to identify and include patients. For each patient fulfilling the inclusion criteria, the RN sent written information about the study prior to a planned outpatient visit or provided the written information during a visit. During the scheduled visit, each patient was given a brief oral recap of the information along with a question about their willingness to participate. Patients agreeing to take part gave their written consent and then completed Sections 1 and 2 of The 4Ps tool individually, placed the completed tool in a prepaid envelope, and mailed it to the research team. Two weeks after the first completion of Sections 1 and 2, a retest of Sections 1 and 2 was sent to the patient. Section 3 was sent to the patient once the date of the second of the two additional planned healthcare contacts had passed. In addition, a retest for Section 3 was sent to the patient within two weeks after the first completion of Section 3. For each section of the tool and the retests, reminders were sent after two and four weeks, respectively.

Statistical analysis

Responses in Section 1 were managed as dichotomous data in the statistical analysis, that is, ticked items were treated as ‘yes’ and non-ticked items as ‘no’.

Internal scale validity

To evaluate internal scale validity, and to determine two aspects of reliability, the Rasch measurement model was used to analyse the test data of each section in The 4Ps tool. From the family of Rasch models, the dichotomous model was chosen for Section 1. For Sections 2 and 3, which have four-category scales, the polytomous Rating Scale Model was chosen because all items were designed to share the same rating scale. 17 In a Rasch analysis, persons and items are hierarchically ordered along a common measurement line. Raw scores are converted by a logarithmic transformation into linear measures, and the common unit of measurement for persons and items is the ‘log-odds probability unit’ (logit). 17
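As a rough illustration of the two Rasch models named above, the sketch below computes response probabilities from a person measure and an item difficulty, both in logits. It is a minimal sketch assuming the standard textbook parameterisation of the dichotomous model and of Andrich’s Rating Scale Model; the function names and example values are hypothetical and are not taken from the study or from Winsteps, which estimates the measures themselves.

```python
from __future__ import annotations

import math


def rasch_dichotomous_p(theta: float, b: float) -> float:
    """P(X = 1) under the dichotomous Rasch model.

    theta: person measure (logits); b: item difficulty (logits).
    """
    return 1.0 / (1.0 + math.exp(-(theta - b)))


def rating_scale_probs(theta: float, b: float, taus: list[float]) -> list[float]:
    """Category probabilities under the Rating Scale Model.

    taus: thresholds shared by every item (3 thresholds -> 4 categories).
    Returns P(X = 0), ..., P(X = len(taus)).
    """
    # Exponent for category x is the sum over k <= x of (theta - b - tau_k);
    # category 0 has exponent 0.
    exponents = [0.0]
    for tau in taus:
        exponents.append(exponents[-1] + (theta - b - tau))
    top = max(exponents)  # subtract the maximum for numerical stability
    weights = [math.exp(e - top) for e in exponents]
    total = sum(weights)
    return [w / total for w in weights]


def logit(p: float) -> float:
    """Log-odds of a probability p in (0, 1)."""
    return math.log(p / (1.0 - p))


# Hypothetical values: a person 1 logit above an item's difficulty.
p = rasch_dichotomous_p(theta=1.0, b=0.0)
print(round(p, 3), round(logit(p), 3))
print([round(x, 3) for x in rating_scale_probs(0.0, 0.0, [-1.0, 0.0, 1.0])])
```

The `logit` helper shows why the unit is called a log-odds unit: applied to the model probability it returns exactly the person-minus-item difference.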

The functioning of the four-category rating scales of Sections 2 and 3 was scrutinised (in Section 1, the items are dichotomous and thus do not form a rating scale). The criteria for optimal rating scale functioning were a minimum of 10 responses in each scale step, an outfit mean square (MnSq) of < 2 for each category and threshold, and monotonic advances in scale step and threshold measures. 18 Item goodness of fit to the Rasch model was investigated; acceptable item fit to the construct was set to an infit MnSq of residuals between 0.6 and 1.4 (expected value 1.0) in combination with a standardised z-statistic within the range −2.0 to +2.0. 19 The weighted infit statistics were chosen because they are less affected than the unweighted outfit statistics by extreme responses from persons whose measure is far from the item measure. 17 Person fit was investigated using the same criteria as for items.20,21
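The fit and rating-scale criteria above can be expressed as a short check. This is a minimal sketch for dichotomous items only, assuming person measures and item difficulty have already been estimated (in the study, by Winsteps); the standardised ZSTD value is taken as an input rather than computed, and all function names are hypothetical.

```python
from __future__ import annotations

import math


def item_fit_mnsq(responses: list[int], thetas: list[float], b: float) -> tuple[float, float]:
    """Infit and outfit mean squares for one dichotomous item.

    responses: observed 0/1 answers; thetas: person measures (logits);
    b: item difficulty (logits).
    """
    sq_resid, variances = [], []
    for x, theta in zip(responses, thetas):
        p = 1.0 / (1.0 + math.exp(-(theta - b)))  # expected score
        sq_resid.append((x - p) ** 2)
        variances.append(p * (1.0 - p))  # model variance (information)
    # Outfit: plain mean of squared standardised residuals, so an unexpected
    # answer from a person far from the item weighs heavily.
    outfit = sum(r / v for r, v in zip(sq_resid, variances)) / len(sq_resid)
    # Infit: squared residuals weighted by their variance, so persons
    # located near the item count most.
    infit = sum(sq_resid) / sum(variances)
    return infit, outfit


def acceptable_fit(infit_mnsq: float, infit_zstd: float) -> bool:
    """Study criterion: 0.6 <= infit MnSq <= 1.4 and -2.0 <= ZSTD <= +2.0."""
    return 0.6 <= infit_mnsq <= 1.4 and -2.0 <= infit_zstd <= 2.0


def _increasing(values: list[float]) -> bool:
    return all(a < b for a, b in zip(values, values[1:]))


def rating_scale_ok(counts: list[int], category_measures: list[float],
                    thresholds: list[float], category_outfits: list[float]) -> bool:
    """At least 10 responses per category, outfit MnSq < 2, and
    monotonically advancing category and threshold measures."""
    return (all(c >= 10 for c in counts)
            and all(o < 2.0 for o in category_outfits)
            and _increasing(category_measures)
            and _increasing(thresholds))
```

Comparing infit and outfit for the same item shows the design choice described above: a single surprising answer from a well-off-target person inflates outfit far more than infit.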

Item and person reliability coefficients were calculated. 17 The item reliability coefficient reflects the extent to which the item hierarchy would be replicated in another set of respondents. The person reliability coefficient indicates how replicable the person ordering would be if this group of persons were given a parallel set of items; it is analogous to the traditional coefficient alpha. 17 Benchmarks were set at 0.91–0.94 as very good, 0.81–0.90 as good, 0.67–0.80 as fair and < 0.67 as poor. 22 Targeting, i.e. the extent to which item and person measure levels match, was investigated by comparing the mean logit measures for items and persons against a benchmark difference of < 0.5 logits, and by visual inspection of the person-item maps of Sections 2 and 3 (the sections with four-category scales). 17
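The reliability and targeting benchmarks above can be sketched as follows. It assumes the usual Rasch separation reliability, i.e. (observed variance minus mean squared standard error) divided by observed variance; whether a given program uses population or sample variance is an assumption here, as is the handling of values above 0.94, which the stated benchmarks do not cover. Function names are hypothetical.

```python
from __future__ import annotations

import statistics


def rasch_reliability(measures: list[float], std_errors: list[float]) -> float:
    """Separation reliability: share of observed variance that is not error."""
    observed_var = statistics.pvariance(measures)  # assumption: population variance
    error_var = statistics.fmean(se ** 2 for se in std_errors)
    return (observed_var - error_var) / observed_var


def classify_reliability(r: float) -> str:
    """Benchmarks as stated in the Methods (reference 22)."""
    if r > 0.94:
        return "above the stated benchmark range"  # not covered by the text
    if r >= 0.91:
        return "very good"
    if r >= 0.81:
        return "good"
    if r >= 0.67:
        return "fair"
    return "poor"


def well_targeted(mean_item: float, mean_person: float, limit: float = 0.5) -> bool:
    """Targeting criterion: mean person and mean item differ by < 0.5 logits."""
    return abs(mean_person - mean_item) < limit
```

With the coefficients reported in the Findings, `classify_reliability(0.93)` gives "very good", `classify_reliability(0.65)` gives "poor" and `classify_reliability(0.80)` gives "fair", matching the labels used there.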

Test–retest reliability

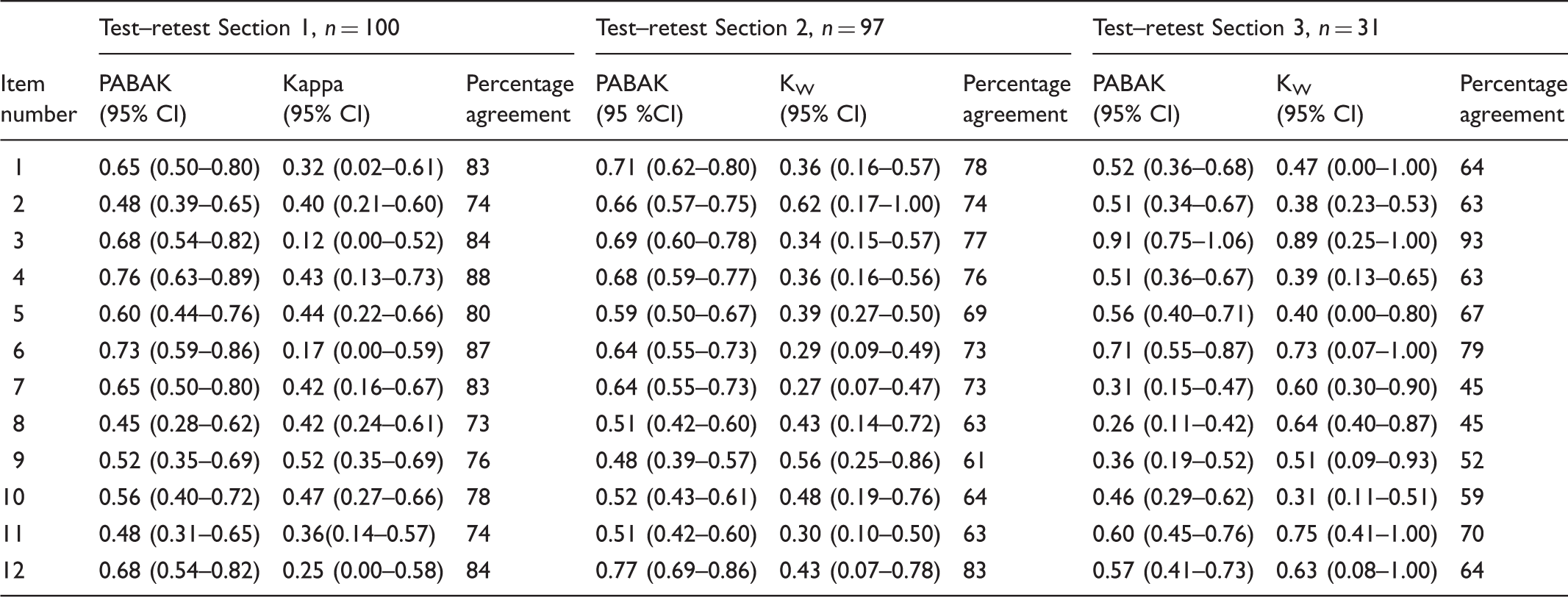

For test–retest reliability, intraclass correlation coefficients (ICC) were calculated on the person measures. 23 Persons who answered fewer than six items in either test or retest were excluded from this analysis. The person measure for each person and section was obtained from the Rasch analysis in logits and then transformed to the more easily interpreted ‘4Ps units’, ranging from 0 to 100 for each subscale. 17 The ICC (agreement), based on one-way analysis of variance (ANOVA), was calculated as the reliability coefficient for each section. 23 An ICC of 0.70 was considered sufficient. 24 To evaluate test–retest reliability at item level, the kappa coefficient, which assesses agreement beyond chance, 25 was calculated for each item in each section. For Sections 2 and 3, with four-category scales, the quadratic-weighted kappa coefficient 26 was calculated. Because skewed response proportions can give a low kappa despite high agreement, a prevalence- and bias-adjusted kappa (PABAK) 27 was also calculated. As benchmarks for all kappa calculations, 28 a coefficient of 0.81–1.00 was considered almost perfect, 0.61–0.80 substantial agreement, 0.41–0.60 moderate agreement, 0.21–0.40 fair agreement, and 0.00–0.20 slight agreement. 29 For descriptive purposes, the percentage agreement between test and retest, which includes agreement due to chance, was also calculated.
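The two person-level steps described above, rescaling logit measures to a 0–100 range and computing a one-way ICC over test and retest, might look like this. The linear rescaling and its logit bounds (`lo`, `hi`) are assumptions standing in for whatever anchoring was used to define the ‘4Ps units’; the ICC is the single-measure, one-way random-effects form, ICC(1,1), with two occasions. Confidence intervals are not computed, and the function names are hypothetical.

```python
from __future__ import annotations

import statistics


def to_4ps_units(logit_measure: float, lo: float, hi: float) -> float:
    """Linearly map a logit measure onto 0-100; lo and hi are the
    (hypothetical) logit values taken to correspond to 0 and 100."""
    return 100.0 * (logit_measure - lo) / (hi - lo)


def icc_oneway(pairs: list[tuple[float, float]]) -> float:
    """ICC(1,1) from a one-way random-effects ANOVA with k = 2 occasions."""
    n, k = len(pairs), 2
    person_means = [statistics.fmean(p) for p in pairs]
    grand_mean = statistics.fmean(person_means)
    # Between-person mean square.
    msb = k * sum((m - grand_mean) ** 2 for m in person_means) / (n - 1)
    # Within-person mean square (test-retest disagreement).
    msw = sum((x - m) ** 2 for p, m in zip(pairs, person_means) for x in p) / (n * (k - 1))
    return (msb - msw) / (msb + (k - 1) * msw)


# Hypothetical (test, retest) scores in 4Ps units for four persons.
print(round(icc_oneway([(10, 12), (20, 18), (30, 31), (40, 41)]), 3))
```

A one-way model treats test and retest as interchangeable measurements of the same person, so any systematic shift between occasions counts as disagreement, which fits the ‘agreement’ wording in the text.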

A 95% confidence interval (CI) was used when applicable. Rasch analyses were conducted with the Winsteps® Rasch measurement computer program (Version 3.75.1) (Copyright 2009, John M. Linacre). The SPSS version 21 software was used to generate descriptive statistics and to perform ICC calculations. Kappa and weighted kappa were calculated with the online software VassarStats. 30 The 95% CIs for weighted kappa in Sections 2 and 3 were calculated manually in the software program STATA release 11. The PABAK was calculated using the online Pabak-OS calculator. 31
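The item-level agreement statistics were obtained with VassarStats, STATA and the Pabak-OS calculator. As a cross-check of what those coefficients mean, here is a minimal sketch of Cohen’s kappa, quadratic-weighted kappa and PABAK for responses coded 0 to k−1, plus the benchmarks listed above. The k-category PABAK formula (k·po − 1)/(k − 1) is a common generalisation of the binary 2·po − 1; whether the calculator used in the study applies it to four-category items is an assumption, and the function names are hypothetical.

```python
from __future__ import annotations


def _joint_props(test: list[int], retest: list[int], k: int):
    """Joint proportion table plus row (test) and column (retest) margins."""
    n = len(test)
    table = [[0.0] * k for _ in range(k)]
    for a, b in zip(test, retest):
        table[a][b] += 1.0 / n
    rows = [sum(table[i]) for i in range(k)]
    cols = [sum(table[i][j] for i in range(k)) for j in range(k)]
    return table, rows, cols


def cohen_kappa(test: list[int], retest: list[int], k: int) -> float:
    table, rows, cols = _joint_props(test, retest, k)
    po = sum(table[i][i] for i in range(k))        # observed agreement
    pe = sum(rows[i] * cols[i] for i in range(k))  # agreement expected by chance
    return (po - pe) / (1.0 - pe)  # undefined if pe == 1 (all in one category)


def quadratic_weighted_kappa(test: list[int], retest: list[int], k: int) -> float:
    table, rows, cols = _joint_props(test, retest, k)
    # Disagreement weight grows with the squared distance between categories.
    w = [[(i - j) ** 2 / (k - 1) ** 2 for j in range(k)] for i in range(k)]
    observed = sum(w[i][j] * table[i][j] for i in range(k) for j in range(k))
    expected = sum(w[i][j] * rows[i] * cols[j] for i in range(k) for j in range(k))
    return 1.0 - observed / expected  # undefined if expected == 0


def pabak(test: list[int], retest: list[int], k: int) -> float:
    po = sum(a == b for a, b in zip(test, retest)) / len(test)
    return (k * po - 1.0) / (k - 1)


def agreement_label(coef: float) -> str:
    """Benchmarks as listed in the Methods."""
    if coef > 0.80:
        return "almost perfect"
    if coef > 0.60:
        return "substantial"
    if coef > 0.40:
        return "moderate"
    if coef > 0.20:
        return "fair"
    if coef >= 0.0:
        return "slight"
    return "below chance"  # negative values are not covered by the benchmarks
```

The quadratic weights mean that a one-step shift (e.g. ‘important’ to ‘very important’) costs far less than a jump across the whole scale, which suits ordered four-category items.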

Findings

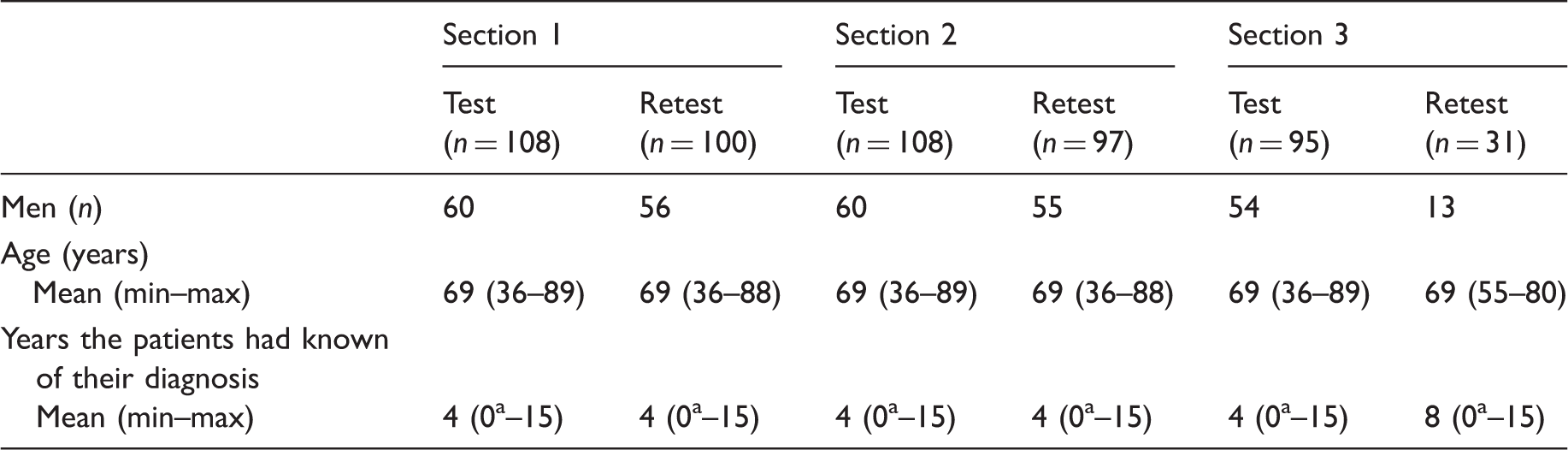

Demographic information of patients completing the test and retest of the different sections of The Patient Preferences for Patient Participation (4Ps) tool.

Recently diagnosed.

Internal scale validity

The rating scale analysis performed for Sections 2 and 3 showed that the threshold and category measures increased with each scale step, the outfit MnSq was < 2, and the observed counts were > 10 for all response alternatives. Thus, all criteria for rating scale functioning were fulfilled. The full range of the rating scale was used more in Section 3 than in Section 2: 82% of the ratings in Section 3 fell in the two most positive alternatives, compared with 94% in Section 2.

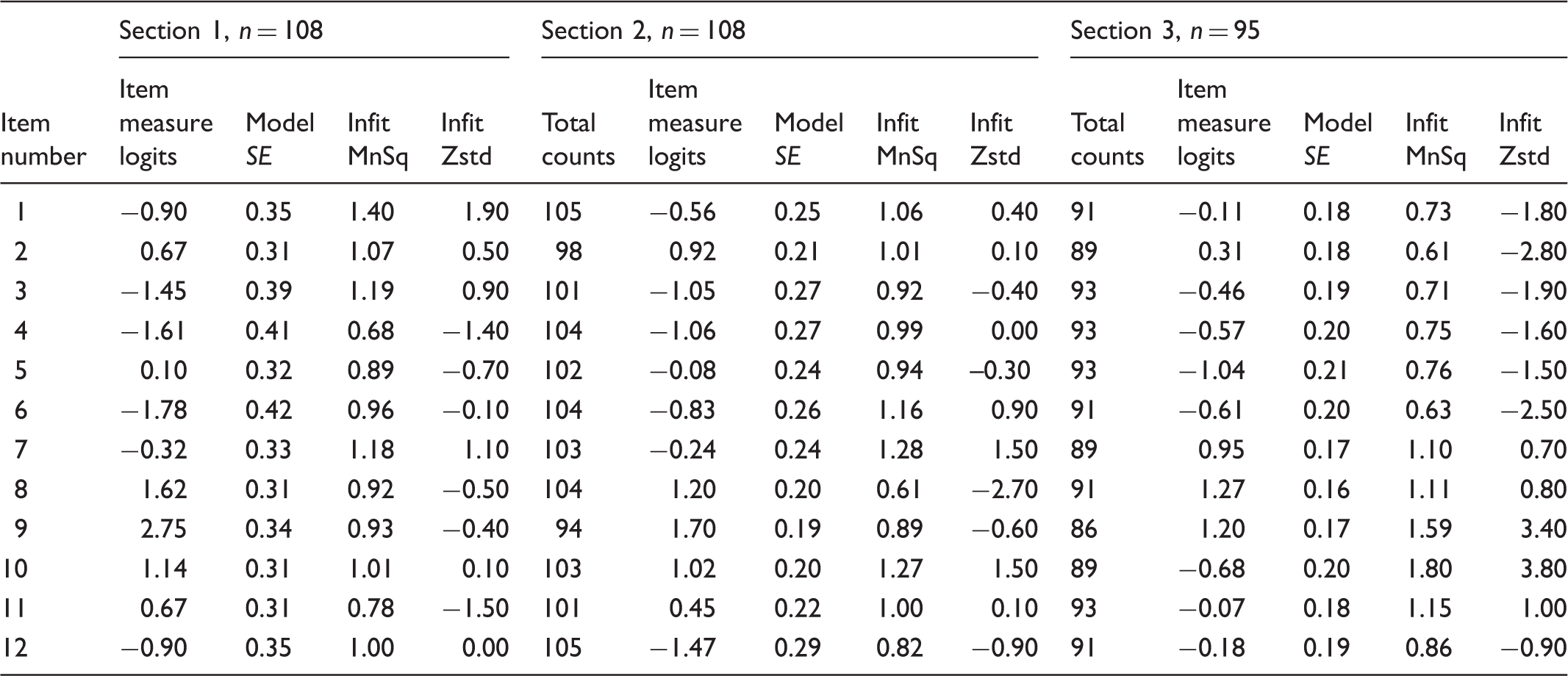

Item measure and goodness-of-fit statistics of The Patient Preferences for Patient Participation (4Ps) tool.

SE: Standard Error; Infit MnSq: Infit mean square. Weighted mean of the information-weighted standardised residuals; Infit Zstd: Infit z-score standardised. The infit MnSq value standardised to a t distribution, estimating the statistical significance of the infit misfit.

The item reliability of Section 1 was 0.93 (very good), and the person reliability was 0.65 (poor). In Section 2 the item reliability was 0.94, which is very good, and the person reliability was 0.69, which is fair. For Section 3, the item and the person reliability were 0.93 (very good) and 0.80 (fair), respectively.

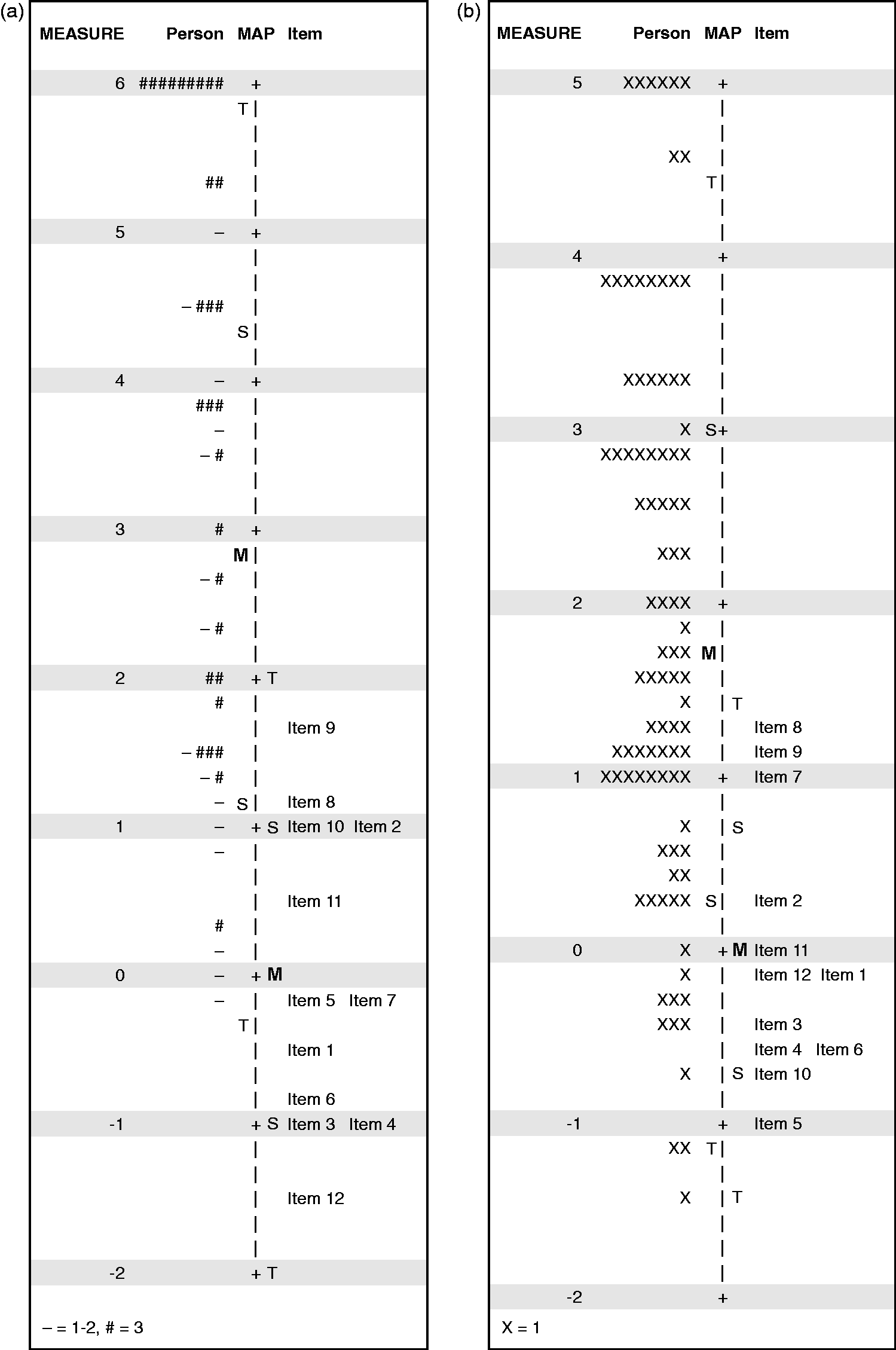

Visual inspection of the person-item maps of Sections 2 and 3 showed a ceiling effect in both sections, more pronounced in Section 2. The difference between the mean item measure and the mean person measure was 3.64 logits in Section 2 and 1.92 logits in Section 3, considerably higher than the criterion of < 0.5 logits (Figure 1). The observed ceiling effects in the tests of Sections 1, 2 and 3 were 36% (n = 39), 19% (n = 20) and 5% (n = 5), respectively.
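The ceiling effect reported above is the share of respondents obtaining the highest possible raw score on a section. A minimal sketch with hypothetical scores: for Section 1, with 12 dichotomous items, the maximum raw score would be 12, whereas the maximum for Sections 2 and 3 depends on how the four categories are coded, which is not stated here. The function name is hypothetical.

```python
from __future__ import annotations


def ceiling_effect(raw_scores: list[int], max_score: int) -> tuple[int, float]:
    """Number and percentage of respondents at the maximum possible raw score."""
    n_at_max = sum(1 for s in raw_scores if s == max_score)
    return n_at_max, 100.0 * n_at_max / len(raw_scores)


# Hypothetical Section 1 raw scores (12 dichotomous items, maximum 12).
print(ceiling_effect([12, 12, 11, 5, 9, 12, 10, 8], max_score=12))
```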

Variable map. Targeting of Sections 2 (1a) and 3 (1b) of The Patient Preferences for Patient Participation (4Ps) tool item measures (to the right in the figures) to The 4Ps tool level of the sample (to the left in the figures).

Test–retest reliability

The reliability coefficient (ICC) was 0.56 (CI = 0.39–0.70) for Section 1 and 0.56 (CI = 0.40–0.70) for Section 2; neither reached the criterion of 0.70 set for sufficient reliability. Section 3 was found to be stable, with an ICC of 0.82 (CI = 0.65–0.91).

Test–retest reliability results in an item level of The Patient Preferences for Patient Participation (4Ps) tool.

PABAK: Prevalence- and bias-adjusted kappa; KW: Quadratic-weighted kappa coefficient; CI: Confidence intervals. For items 1, 3, 4, 6, 7 and 11 for KW in Section 2, and for items 2 and 10 for KW in Section 3 confidence intervals were calculated manually using the standard error calculated in the software program STATA.

Discussion

The psychometric evaluation of The 4Ps performed in this study showed some encouraging results and pointed out areas where the tool can be improved, along with important implications for its use in clinical practice and research.

The Rasch analysis illuminated how the participants used The 4Ps. The most obvious result was the skewed distribution of responses seen in the rating scale and targeting analyses. That many of the items were given high priority and high evaluations is a positive aspect of the instrument, in the sense that it indicates that all items are relevant to patient participation. 28 The downside is a weaker sensitivity to change, because a true change in the patients’ preferences or evaluations may not be detected. 24 This ceiling effect was most prominent in the dichotomised Section 1, where the patients were instructed to tick the items that they defined as participation. Since all items describe patient participation in general terms, 14 a ceiling effect was expected there and is not a problem. In Section 2, with a ceiling effect of 19%, providing a response alternative with a value higher than ‘very important’ might help distinguish which item or items are a priority to the patient in terms of participation. The responses to Section 3 were more diverse, and the slight ceiling effect there is unavoidable, since the response alternative ‘completely’ cannot be given a higher value. The item fit evaluation showed that all items in Sections 1 and 2, and all but two in Section 3, fit the Rasch model; thus, The 4Ps appears to measure a unidimensional construct. 17 The misfit of items 9 and 10 in Section 3 can probably be attributed to unclear phrasing in item 9 and, in item 10, to specific examples of self-care that do not correspond to living with CHF or COPD. This would be consistent with the findings of the earlier qualitative validation study. 14 We suggest rephrasing item 9 to emphasise that this aspect of patient participation connotes an interaction with healthcare professionals, in line with Swedish healthcare legislation, 3 and removing the examples of self-care presented in item 10. Further, the Rasch analysis showed very good item reliability and poorer person reliability; the poorer person reliability is most likely due to the ceiling effects discussed above, for which potential solutions have been suggested.

The test–retest reliability of Sections 1 and 2, as indicated by the ICCs, did not reach the set criterion. One reason for the low ICCs may be that only the upper half of the 0–100 scale was used in Sections 1 and 2; because the ICC is a function of both within- and between-person variance, a small between-person variance tends to deflate the ICC. Nevertheless, the poor agreement between test and retest in Sections 1 and 2 is also apparent for particular items: at item level, the PABAK and kappa coefficients ranged from moderate to substantial agreement. When interpreting the test–retest results, the purposes of the different sections of The 4Ps tool should be considered. Section 1 is designed merely to guide the patient into the subject of participation, and in Section 2 the patients are asked to prioritise the importance of the items to patient participation. Although frequently used in healthcare policy documents,32,33 ‘patient participation’ is an abstract concept, and lay people may not have reflected upon its meaning. Consequently, the first test session might have prompted further reflection on participation and the role of the patient, which might have affected the retest of Sections 1 and 2. 28 Furthermore, patients’ preferences might vary, and individuals might change their minds about which aspects of the concept are most prominent at a particular point in time. 34 These results suggest that patient preferences for participation may vary over time; thus, in clinical practice, patients’ preferences for participation need to be addressed recurrently. Together with the prominent ceiling effect in Section 2, this may explain the modest test–retest reliability of Sections 1 and 2, at both item level and person-measure level.
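The argument that a restricted between-person range deflates the ICC can be checked with hypothetical numbers. In both data sets below, every person’s test and retest scores differ by exactly 4 units, so the within-person variance is identical; only the spread of person means differs. The ICC here is a one-way random-effects ICC(1,1), and the data are invented for illustration, not taken from the study.

```python
from __future__ import annotations

import statistics


def icc_oneway(pairs: list[tuple[float, float]]) -> float:
    """ICC(1,1) from a one-way random-effects ANOVA with k = 2 occasions."""
    n, k = len(pairs), 2
    person_means = [statistics.fmean(p) for p in pairs]
    grand_mean = statistics.fmean(person_means)
    msb = k * sum((m - grand_mean) ** 2 for m in person_means) / (n - 1)
    msw = sum((x - m) ** 2 for p, m in zip(pairs, person_means) for x in p) / (n * (k - 1))
    return (msb - msw) / (msb + (k - 1) * msw)


# Hypothetical 0-100 scores: same +/-2 test-retest disagreement for everyone.
wide = [(8, 12), (32, 28), (48, 52), (72, 68), (88, 92)]     # means 10..90
narrow = [(62, 66), (69, 65), (68, 72), (75, 71), (74, 78)]  # means 64..76

print(round(icc_oneway(wide), 3), round(icc_oneway(narrow), 3))
# prints 0.992 0.698
```

The measurement error is the same in both cases; the ICC drops only because the persons in the narrow set are harder to tell apart, which is the situation when responses cluster in the upper half of the scale.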

The purpose of Section 3, on the other hand, is to evaluate patient participation, and for this purpose high test–retest reliability is essential. In Section 3, test–retest reliability was more stable when based on person measures, whereas it varied between items. One potential reason for the less favourable item-level results may be that patients could have had additional healthcare contacts during the 14-day test–retest period. In such cases, the patients’ evaluations of participation would not concern the same healthcare interactions, potentially affecting the retest. Further, what was evaluated was, in essence, the individual’s recall of his or her experience of participation in healthcare contacts. Apparently, the recall of verbal contact with healthcare staff was more stable (items 3 and 6), while the memory of other aspects was less stable. The lowest test–retest reliability was found in the aspect Partaking in planning. Possibly, the low reliability was due to the patients having no, or limited, experience of planning to relate to, as reported previously in the qualitative validation study. 14 Despite low stability for some items, we find no reason to remove or modify items in the aspect Partaking in planning, since planning of care is a well-defined aspect of patient participation, recognised in both research and policies.14,35 Instead, these results need to be taken into consideration when using The 4Ps for evaluation purposes. The more stable result obtained from person measures illustrates the advantage of using person measures for research purposes; in this case, they appear to even out the differences between items. Moreover, in a test–retest evaluation, the interval between test and retest has to be short enough that the measured phenomenon is unlikely to have changed. 28 In this study, the two-week interval, with the additional option to respond to reminders after four or six weeks, might have been too long. Most other instruments measuring patient participation have not been evaluated for test–retest reliability.8,11–13 The exception is one patient-participation instrument that demonstrated acceptable test–retest reliability, with ICCs ranging from 0.59 to 0.93 at item level. 10 However, that instrument evaluated patients’ participation in emergency departments on one specific occasion only, whereas Section 3 in The 4Ps evaluates participation in ongoing care for patients with a chronic condition. Considering the differences in healthcare processes and settings, as well as the different reasons for interacting with healthcare services, we suggest that a tool reflecting ongoing care, such as The 4Ps, will not be as stable as a tool evaluating one specific healthcare event.

The clinical implication of this study is that the patient’s evaluation in Section 3 may be useful for recurrent dialogues between the patient and healthcare professionals. Further, provided that the reliability of Section 2 is improved, the patient’s prioritisation (Section 2) of participation can be compared with the patient’s evaluation (Section 3), again in a dialogue between the patient and the healthcare professional. The patient’s prioritisation in Section 2 could also be compared with previous prioritisations. This process would help healthcare professionals better understand patient participation, considering both patients’ preferences and patients’ experiences. Thus, it could provide a means for understanding and implementing patient participation policies.3,33 In clinical practice, the comparisons could presumably be performed item by item at an individual or group level. In research, we propose that The 4Ps be applied for evaluation purposes: with the suggested amendments to Section 2, the correspondence between Sections 2 and 3 can be determined, i.e. taking both patients’ preferences and their experience of patient participation into account. Further, Section 2 can be used to evaluate whether patients change their prioritisations over time or after an intervention.

Legislation and policies have a special significance in healthcare, a sector based on knowledge and evidence. 3 However, policies can be based on standards and culture, and can be multifaceted and abstract; yet health professionals and organisations have to interpret and implement them in practice. Despite increasing awareness of the individual’s right to autonomy in diverse interactions with society, there is still a need to implement means that facilitate the patient’s right to participate in healthcare. We argue that innovations such as The 4Ps, which offer opportunities for the patient and staff to interact, may serve patient participation from a patient perspective. 36 However, further studies are needed with regard to a favourable implementation of The 4Ps in clinical practice.

Methodological considerations

The 4Ps tool was developed for clinical use, to provide a means for patients to share their preferences for participation with healthcare professionals. In addition, the responses can be used in research to increase knowledge of patient participation in subgroups of patients. 16 The psychometric evaluation was performed on two levels: an item-level evaluation, relevant primarily to clinical application, and an evaluation based on person measures, intended exclusively for scientific purposes. A suitable statistic for evaluating agreement in ordinal data, in this study at the item level, is kappa. However, kappa is sensitive to how answers are distributed across the response options: when answers are concentrated in a few options, the agreement expected by chance is high, which lowers the coefficient. In the case of The 4Ps, a higher frequency of answers at the upper end of the rating scale is to be expected. Such skewed responses can yield a low kappa coefficient despite high agreement, a phenomenon called ‘the kappa paradox’, which makes the kappa coefficients difficult to interpret and complicates conclusions about reliability. 37 Therefore, to provide a more comprehensive understanding of agreement, we calculated PABAK, which compensates for the kappa paradox.27,38 The sample in the retest of Section 3 was considerably smaller than planned, which widened the CIs of the reliability coefficients. 28 The low response rate was not mainly due to patients not wanting to answer the retest, but rather to an error that meant only a few patients were given the opportunity to respond.
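The kappa paradox can be illustrated with a hypothetical 2×2 test–retest table for a single dichotomous item. The two tables below have the same observed agreement (92%), but in the first almost all answers are ‘yes’ on both occasions. The counts are invented, and PABAK is computed with the binary formula 2·po − 1; the function name is hypothetical.

```python
from __future__ import annotations


def kappa_and_pabak(a: int, b: int, c: int, d: int) -> tuple[float, float, float]:
    """2x2 test-retest table: a = yes/yes, b = yes/no, c = no/yes, d = no/no.

    Returns observed agreement, Cohen's kappa and binary PABAK.
    """
    n = a + b + c + d
    po = (a + d) / n
    p_test_yes = (a + b) / n
    p_retest_yes = (a + c) / n
    # Chance agreement from the margins; high when nearly everyone says 'yes'.
    pe = p_test_yes * p_retest_yes + (1 - p_test_yes) * (1 - p_retest_yes)
    kappa = (po - pe) / (1 - pe)
    return po, kappa, 2 * po - 1


for counts in [(90, 4, 4, 2), (46, 4, 4, 46)]:  # skewed vs balanced
    po, kappa, pb = kappa_and_pabak(*counts)
    print(f"agreement={po:.2f} kappa={kappa:.2f} PABAK={pb:.2f}")
# prints:
# agreement=0.92 kappa=0.29 PABAK=0.84
# agreement=0.92 kappa=0.84 PABAK=0.84
```

Kappa and PABAK coincide when the answers are balanced; only under a skewed distribution, as expected for The 4Ps, do they diverge, which is why both were reported.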

Conclusion

The 4Ps tool provides a means to increase the understanding of patient participation in clinical practice, providing a structure for the individual patient to become more involved in his or her care. As such, it is a potential aid in implementing policies on patient participation. Furthermore, The 4Ps can be applied to increase general knowledge of patient participation. When psychometrically evaluated, The 4Ps tool was found to have reasonable validity, and varied reliability. Based on the findings, we suggest the rating scale in Section 2 be modified to improve sensitivity and reliability, as well as rephrasing of two items to improve goodness of fit. Following amendments, The 4Ps tool can be suggested for recurrent use in dialogues in everyday healthcare, as a basis for care planning together with patients subject to chronic heart or lung disease in primary and/or outpatient healthcare, and for scientific purposes in similar target groups.

Footnotes

Author note

Please direct any correspondence regarding The 4Ps tool to: Ann Catrine Eldh, Faculty of Medicine and Health Sciences, Department of Nursing, Linköping University, S581 83 Linköping, Sweden. Email: ann.catrine.eldh@liu.se