Abstract

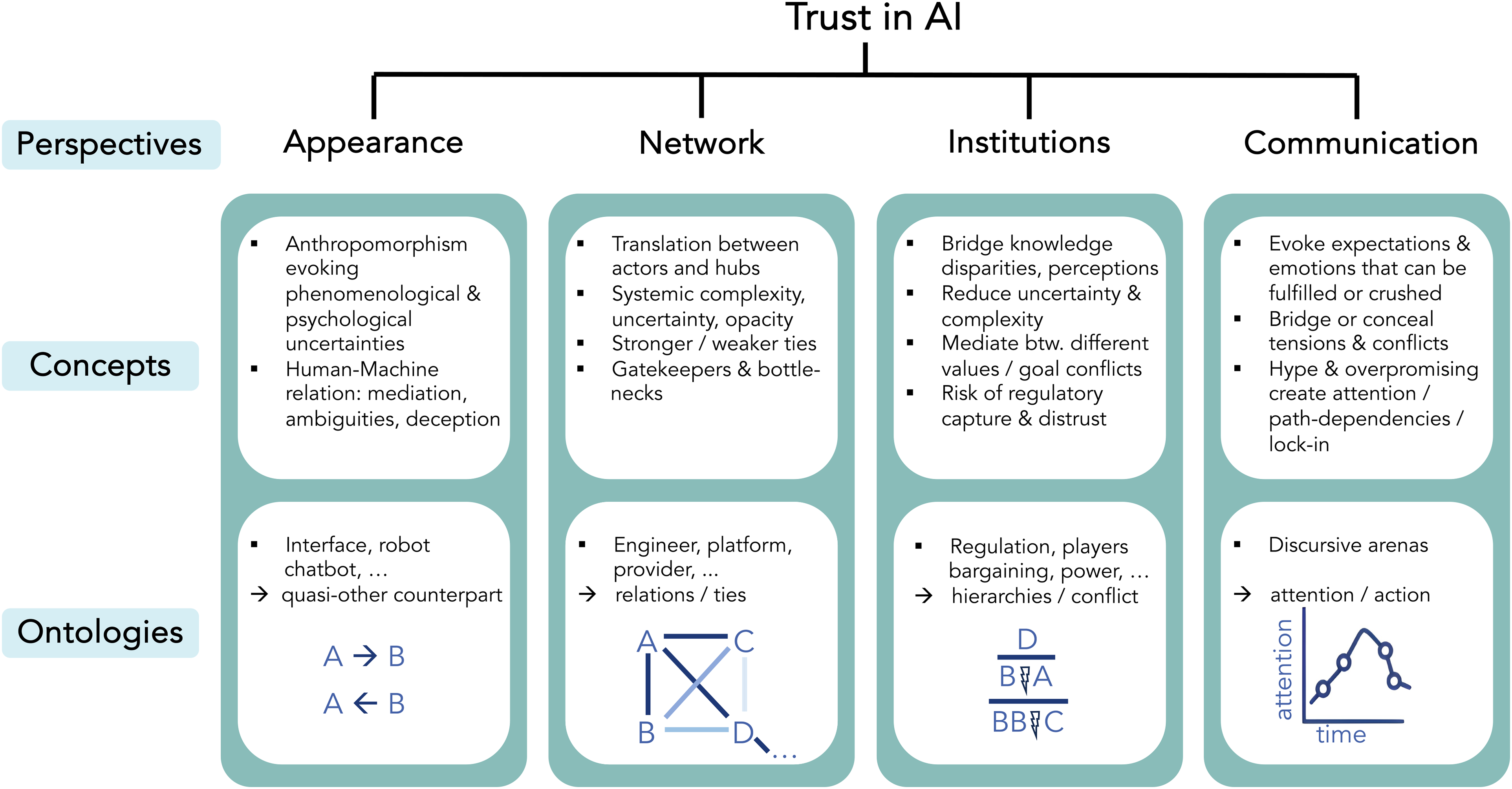

Trustworthy artificial intelligence (TAI) is trending high on the political agenda. However, what is actually implied when talking about TAI, and why it is so difficult to achieve, remains insufficiently understood by both academic discourse and current AI policy frameworks. This paper offers an analytical scheme with four different dimensions that constitute TAI: a) A user perspective of AI as a quasi-other; b) AI's embedding in a network of actors from programmers to platform gatekeepers; c) The regulatory role of governance in bridging trust insecurities and deciding on AI value trade-offs; and d) The role of narratives and rhetoric in mediating AI and its conflictual governance processes. It is through the analytical scheme that overlooked aspects and missed regulatory demands around TAI are revealed and can be tackled. Conceptually, this work is situated in disciplinary transgression, dictated by the complexity of the phenomenon of TAI. The paper borrows from multiple inspirations such as phenomenology to reveal AI as a quasi-other we (dis-)trust; Science & Technology Studies (STS) to deconstruct AI's social and rhetorical embedding; as well as political science for pinpointing hegemonial conflicts within regulatory bargaining.

Keywords

Introduction

Trustworthy artificial intelligence (TAI) is trending high on the political agenda. The advancement of artificial intelligence (AI) technology has been endowed with massive investments and great hopes by governments around the world to solve pressing problems in our societies. However, past incidents related to AI have provoked attention and outcry in media and led to hesitation to continue down the path of AI enthusiasm unquestioningly. AI can be misused to manipulate political opinion with deep fakes (van Huijstee et al., 2021). The COMPAS recidivism risk assessment tool used in the US judiciary paradigmatically shows how incidents of bias and discrimination in data processing can aggravate racism and inequality in criminal prosecution (Angwin et al., 2016). Or, while crucial infrastructure becomes ever more automated with AI, issues of safety, robustness and network vulnerability arise from failing systems (McMillan and Varga, 2022). These are only indicative examples that show some salient problems with AI systems.

Such publicly discussed incidents pose a great threat to building and maintaining trust in AI systems and in the institutions that provide these systems and protect users. Faced with these individual and systemic impacts of AI on our societies, regulators are on the spot to carefully weigh the potentials and risks and develop effective policy. As a result, nation states have addressed, in strategy and position papers, the urgency of developing policies that respond to users’ ethical concerns while harvesting the economic and efficiency benefits of AI (Radu, 2021). However, while there is a growing emphasis on the trust dimension in AI governance in these papers, the pairing of trust and AI is far from intuitive. It invokes first and foremost an unorthodox relationship: it marries a widely technically employed term, AI, with a social one, trust. How to bridge the technical and the social domain is neither obvious nor straightforward to answer (see section Pairing trust and AI – a conceptual challenge).

Why should policy makers and researchers care about trust in the governance of a multifaceted technology like AI? First, to understand the general value of trust for technology governance, it is helpful to recognise that distrust in particular can be very costly for society (Hardin, 2002; Warren, 1999). In general, trust relationships are characterised by a state of uncertainty and risk (Luhmann, 1988; Misztal, 1996). If users had perfect knowledge and control over their technological environment, notions of trust in technology would be redundant. People are willing to give up control if they can be sure that their peers will not act against their interests (Coleman, 1986). Put simply, if one trusts and gives up control, one can save and/or redirect resources. Distrust not only does the opposite, it can be lastingly damaging as it ruins reputations and leads to a great loss of social and economic capital (North, 1990). When distrust spreads and becomes endemic, everyone infected loses. AI scandals illustrate this phenomenon. In the worst cases, users feel betrayed, AI applications are rejected, providers are boycotted, money is burned, and governments’ regulatory capacity is questioned. But this also implies that distrust is not always negative. Citizens signalling distrust can also represent a healthy watchdog mechanism for checks and balances, for example by flagging misplaced or badly executed AI systems, regulatory capture or empty rhetoric 1 .

Second, and very concretely, TAI plays a pivotal role in the current regulatory debate, as it spearheads regulatory frameworks such as the European AI Act (AIA). Unfortunately, the regulatory approach to trust so far has been rather vague and confusing, lacking definitions and a deeper understanding of trust (section Trustworthy AI in the current landscape: From ethical values to regulatory frameworks).

Third, the current ethical and regulatory debate on TAI is very much fixated on a technical understanding of AI (section Opening technical AI to social dimensions of trust) and its debugging of harmful effects, such as providing computational methods in inspecting models and providing interpretability (see discussion by Páez, 2019; Zednik, 2019; and von Eschenbach, 2021), or de-biasing, discussing trade-offs between algorithmic efficiency and different variations of fairness (Kleinberg et al., 2016; Wong, 2020). This technical debate has its merit, but it lost track of the actual social preconditions that tie trust and AI together.

Therefore, this paper responds to current governance initiatives and ethical discussions that invoke trust as an important variable in AI regulation. To be clear from the start: The main aim of this paper is not to assess whether AI is trustworthy or not, but to give an account of the dimensions that need to be considered in order to be able to assess it. Hence, first of all, this paper takes a step back and revisits the concepts of trust and AI. Given the contested relationship between the two phenomena, what are the epistemic dimensions that tie trust and AI together? To answer this research question, I propose and apply an analytical scheme based on four pillars: a) AI as a quasi-other; b) AI's embedding in a network of actors from programmers to platform gatekeepers; c) the regulatory role of governance in bridging trust insecurities and deciding on AI value trade-offs; and d) the role of narratives and rhetoric in mediating AI and its conflictual governance processes. It is through this systematization that overlooked aspects and missed regulatory demands around TAI are revealed and can be addressed (see Concluding remarks).

This work can be understood as a follow-up on comprehensive systematization works on trust in information and communication technologies (ICT), such as in e-commerce (McKnight et al., 2002), in information systems (Söllner et al., 2016), or in broader readings of technology (Botsman, 2017). However, the complexity of the AI phenomenon requires analytical and disciplinary approaches different from those targeting ICT systems. Therefore, this work borrows from multiple academic viewpoints and concepts. Among others, I refer to phenomenology in order to reveal AI as a quasi-other that we (dis)trust; Science & Technology Studies (STS) to deconstruct the social and rhetorical embedding of AI; and political science to identify hegemonic conflicts in regulatory bargaining. This admittedly wide approach is less a scholarly preference than a consequence of the complexity of the AI phenomenon itself. With disciplinary blinkers, one would miss the constitutive bridging pillars that connect trust with AI. In my approach, I adhere to the agenda of critical algorithmic studies, which is “essentially, founded in a disciplinary transgression” (Seaver, 2017: 2).

Trustworthy AI in the current landscape: From ethical values to regulatory frameworks

Ethical principles

Recently, a rich landscape of TAI work has emerged in both academic debate and governance proposals. The publication of ethical guidelines has reached a scale that is hard to keep track of 2 . High-level principles are published by political bodies and by big tech companies that aim to ensure a socially desirable implementation of AI, linking ethical values to notions of trustworthiness (EU High-Level Expert Group on AI, 2019; European Commission, 2020a; OECD, 2019). The most dominant approach towards TAI is embedded in the field of ethics. Here, trust is operationalised as a resulting phenomenon that emerges from following a checklist of ethical requirements that need to be ‘handled’ or ‘taken care of’. In this strikingly instrumental understanding of trust, ethicists list values such as transparency, privacy, accountability, fairness or robustness as fundamental requirements. Kaur et al. (2023) and Reinhardt (2022) undertake great efforts in assembling all the literature of TAI that unites behind each of these single ethical values (see also Simion and Kelp, 2023).

The ethical discourse, even when condensed, 3 is descriptively rich but at the same time abundant and abstract, lacking clarity and consensus. Lists of axiomatic AI principles from the public and private sector levitate over the contested reality of society. It is implied that ethical values can be analytically ‘isolated’, thereby failing to point to the ambivalences and tensions arising between the values (Mittelstadt, 2019). Furthermore, the overall difficulty of, and reluctance towards, operationalizing normative principles and rights into quantitative and measurable scores for governance, while isolating them from their social surrounding and context (Hoffmann, 2019), has led some to bluntly conclude that the discourse of AI ethics is essentially “useless” (Munn, 2022). In a rather poetic remark, Reinhardt (2022) observes that the academic field of trust and AI has turned into “an intellectual land of plenty, a mythological or fictional place where everything is available at any time without conflicts” (741). In conclusion, ethical values may give guidance for better understanding the risks associated with AI, but little can be deduced from the ethical discourse for better understanding the phenomenon of TAI.

Governing frameworks

This ethical discourse is flanked by the crafting of a global governance regime around AI. So far, this regime consists of an overlapping ensemble of private standards, normative principle-setting, concrete standardization efforts, as well as the creation of new legal frameworks that shall extend or replace existing (inter-)national legislation (Veale et al., 2023). Supranational bodies such as the OECD (2019) recommended some guiding (albeit again vague) principles for TAI which it would like to see promoted and implemented, taken up by the United Nations which published a more detailed interim Report on “Governing AI for Humanity” in late 2023.

Of all global players, the EU has unquestionably been most proactive in coming up with a coercive and unified framework for establishing TAI 4 . The AIA passed the European Parliament in March 2024 and will come into force by 2026 (European Commission, 2024). The European Commission had initiated the negotiation process in 2019 to develop a distinct European approach to “Excellence and Trust in Artificial Intelligence” (European Commission, 2020b). In the same year, the High-Level Expert Group on AI (HLEG) set the normative foundations for the EU's understanding of TAI, forwarding some ethical principles derived from the EU fundamental rights framework (High-Level Expert Group, 2019). The 2020 European Commission White Paper, embedded in a public consultation process, similarly stressed: “As digital technology becomes an ever more central part of every aspect of people's lives, people should be able to trust it. Trustworthiness is also a prerequisite for its uptake” (European Commission, 2020b: 1), following up with a bold proposal of an “ecosystem of trust”.

This AI EU ecosystem of trust builds on three pillars (5): “1. it should be lawful, complying with all applicable laws and regulations; 2. it should be ethical, ensuring adherence to ethical principles and values; and 3. it should be robust, both from a technical and social perspective, since, even with good intentions, AI systems can cause unintentional harm.”

The notion of trust enters the picture with the classification of high-risk AI systems. They are handled through a self- and third-party conformity assessment (AIA, Article 43). Such assessment builds on the 2020 ‘self-assessment list for Trustworthy Artificial Intelligence’ (ALTAI), which can be understood as a technical and ethical check list (European Commission, 2020a). Whether this self-assessment or third-party assessment will be enforced in a rigorous and effective way remains disputable, given the general contestability and interpretative vagueness of ethical values and the questionable willingness of profit-seeking companies to constrain themselves with higher conformity obligations. Here, users will simply have to trust providers and third parties. Interestingly, trust is featured in the AIA as a European selling point rather than actually being defined. “The Act portrays this declaration of conformity with EU standards as a chief marker of ‘trustworthiness’” (Paul, 2023: 12). Thus, it is the entire EU conformity system that is branded as trustworthy, without any explanation of what is essentially meant by trust in the context of AI. It is striking that the entire EU regulatory framework lacks a single definition of trust. As a result, the presentation of TAI in EU documents appears slightly circular. In a nutshell: The EU AI regulation is trustworthy because AI is addressed by the EU. The term TAI lacks semantic quality. As will be shown, this is problematic because regulation risks missing core dimensions of trust that are important for the governance of AI.

Pairing trust and AI – a conceptual challenge

Towards a sincere understanding of trust

Before delving into the different dimensions of trust in AI (section Trust dimensions in AI), the following section clarifies what to actually look for. Trust is not an axiomatic ethical value as the current ethical debate on AI might suggest. To refer to the introductory remarks, trust is a phenomenon that emerges in the social interaction of individuals and collectives characterised by risk and uncertainty. Conceptual and analytical debates on trust focus on the different reasons for entering into trust relationships and on the characteristics of the trust-giver, the trust-taker, and their relationship. Here, trust is generally understood as a social attitude, a normative, mostly emotional expectation towards an entity x and its performance (Hardin, 2002; McLeod, 2021). Trustworthiness, in turn, is a quality or characteristic of entity x and its performance that provides sufficient reason to justify the attitude of trust (Nickel, 2013). The commonly used analytical scheme to analyse trustworthiness is a three-place relationship: “B is trustworthy for person A with regard to the performance of x” (Nickel et al., 2010: 431). Applying this analytical scheme to the technological domain is neither intuitive nor unproblematic. The dominant approach to trustworthy technology relates to the factor of functionality, which is understood as reliability in performance. “Reliability is a characteristic of an item, expressed by the probability that the item will perform its required function under given conditions for a stated time interval” (Nickel et al., 2010: 433). It should be noted that the connotation of reliability is heavily influenced by an engineering and rational choice perspective that links the performance of technology to the risk of failure, for example, the risk of infrastructure collapsing.

However, many scholars argue that reducing trust to the notion of reliability does not do justice to the true nature of trust, raising the question of whether one should use the concept of trust at all in the context of technology. They link trust to a richer notion that requires some motivation, an approach also known as ‘motive-based’ theories of trust. These scholars argue that trust must include motives of goodwill and notions of betrayal, thus emphasising emotional involvement (Baier, 1986; Jones, 1996). Others argue that there must be a moral dimension present, such as moral integrity or a person bound by a moral obligation, in order to speak of trust relationships (McLeod, 2002; Nickel, 2007). These broader conceptions of trust defend trust as an inherently interpersonal phenomenon. Trust is conceptualised as a feature unique to humans, who are capable of emotions, agency and moral intentions, rather than as a phenomenon between objects or technology. Accordingly, some thinkers are rather reserved about the pairing of trust and technology. Jones writes: “Trusting is not an attitude that we can adopt toward machinery. I can rely on my computer not to destroy important documents or on my old car to get me from A to B, but my old car is reliable rather than trustworthy. One can only trust things that have wills (…)” (Jones, 1996: 14; see also Ryan 2020 on AI). These reservations about simply transferring interpersonal trust to human-machine trust are instructive for the TAI debate. If one wants to pair trust and AI, one needs to look for features that characterise human-machine relationships beyond reliability.

Opening technical AI to social dimensions of trust

Finding these realms of sociality and uncertainty requires a broader understanding of AI. There is a plethora of definitions of AI coming from academia, corporations, tech gurus and policy papers. Certain features of AI are favoured in certain disciplines, reflecting the diversity of existing AI applications and research. This abundance of discourse has unfortunately led to much confusion around the term in both policy (Folberth et al., 2022) and public discourse (Natale and Ballatore, 2020) (see also Promoting trustworthy AI through narratives: mediating meaning & attention).

From a technical perspective, AI applications aim to perform some ideal action or reasoning associated with mimicking human tasks and thinking (Krafft et al., 2020). Due to recent technical developments in data processing capabilities and the implementation of statistical learning theory, machine learning (ML) has become the state of the art in AI applications, alongside logic and knowledge-based approaches (Russell and Norvig, 2022). ML relies on great access to data to make robust predictions and to correct performance errors in iterative computational sequences. The technical focus of AI is also dominant in policy papers. For example, the AIA, Art. 3, defines AI as a “machine-based system designed to operate with varying levels of autonomy and that may exhibit adaptiveness after deployment and that (…) can influence physical or virtual environments”.

Surprisingly, social environments are not part of the AIA AI definition, and that is problematic if one wants to understand the role of trust in the picture. While technical definitions may suggest delimitation and clarity, they fall short of a larger notion when it comes to encompassing AI's relationship to trust. They fail to capture the distinct phenomena that AI applications produce, which arise not so much from algorithmic performativity as from the meaning that is ascribed to it. I argue that AI is not only embedded in the social but is constituted by it. The way AI is perceived and approached by users, embraced by institutions, praised by tech gurus, and talked about in the media points to a constant and complex dynamic between the actual technological developments and the potentials, fears and futures that are associated with it. It is exactly this constant tension between fact and fiction, hype and reality, scandal and breakthrough which renders AI so performative as a social phenomenon. I follow a reading that builds on an understanding of AI as situated and relational (Suchman, 2023; Suchman and Weber, 2016; Mackenzie, 2015), reworked and understood by different users and enmeshed in constellations of power. AI is hardly perceived and approached as a clearly articulated, delimited, and external ‘thing’, ‘model’ or ‘tool’ as some technical definitions suggest. Also, in their daily interaction users actually never see code, databases or backends of AI applications. Rather than approaching AI as a self-standing entity that can be generalised (‘AI is x’), in this reading AI is woven and negotiated in the everyday realities of users and society, with its applications mediating human relationships, producing intimacies, social orders and knowledge authorities. It is exactly in this dynamic sphere that I will place the analysis of the following sections, as it is here that one can locate the constitutive bridging pillars that tie trust and AI together.

The upcoming scheme (see Table 1) should be understood as an offer to policymakers and researchers when they invoke trust relationships with AI, doing justice to the complexity and fragility of the phenomenon. Building trust is challenging, but also rewarding. As outlined in the introduction, respecting the role that (dis)trust plays in the acceptance and rejection of technology is central to designing successful policies.

Trust dimensions in AI

Four different trust dimensions that constitute TAI. Visualizing the metastructure of the upcoming analysis. 6

Phenomenological appearance: trusting AI as a quasi-other

From its very beginnings - the foundation of modern AI in the 1950s - AI has been associated with the phenomenon of anthropomorphism: the attribution of human characteristics to objects, behaviours or features - in this case, machines (Salles et al., 2020). In 1966, the computer scientist Joseph Weizenbaum fed his chatbot ELIZA with the DOCTOR script, imitating a Rogerian psychotherapist. ELIZA was a very rudimentary chatbot, programmed to simply rephrase patients’ answers as questions (Güzeldere and Franchi, 1995). Weizenbaum was struck when he observed that his chatbot elicited very emotional and intimate responses from his test subjects. What has since become known as the ‘ELIZA effect’ is a powerful demonstration of how humans can project emotions onto machines. The experiment shows that it is not so much the human-like capabilities of algorithmic decision-making programs that trigger anthropomorphism (since ELIZA was a very simple software), but their combination with the vast field of human imagination. It is this combination of suggestive human characteristics of a machine with the power of human imagination that enables the emotional attachment to AI, whether it be social robots, assistive interfaces, or recent large-language-model chatbots like ‘ChatGPT’ or ‘Gemini’.

Recent academic discourses such as postphenomenology or robot ethics have elaborated new epistemologies for technological mediation. They develop new concepts of human-machine interaction (Latour, 1994) and technology embodiment (Ihde, 2009; Suchman, 2007); or discuss whether robots appearing in our social world should be understood as moral agents with rights (Loh, 2019; Wallach and Allen, 2008). Without entering into the discussion of whether it is legitimate or helpful to call AI systems moral agents with wills, it is an empirical fact that they increasingly appear human and interact with us as “quasi-others” (Coeckelbergh, 2012: 75). The recent use of AI in the field of personal assistance technologies based on natural language processing, such as Apple's ‘Siri’ or Amazon's ‘Alexa’ (Silva de Barcelos et al., 2020), social robotics applied in the fields of care, elderly and sex services (Scheutz and Arnold, 2016; Sheridan, 2020), or the use of user interfaces at work (Bader and Kaiser, 2019) are very indicative in this regard.

The phenomenological perspective makes clear that AI systems, even if they only simulate human characteristics such as motivations, morals and emotions, can raise expectations of trust. When people interact intimately with AI systems, they enter into fragile social bonds and expose themselves to emotional attachments. In doing so, they are confronted with a core characteristic of trust: the loss of control. When I show intimate emotions, I make myself vulnerable as I develop expectations that can trigger feelings of validation, resentment or even betrayal. For the motive-based theorists of trust mentioned above, this phenomenological perspective may be frustrating because it refers only to projections and simulations of social beings, but this does not make it any less attractive to many human interactants. Undoubtedly, societies are only at the beginning of an increasing conflation of the real and the virtual, as AI applications are implemented in all kinds of social spheres.

AI as a quasi-other appears not only in social robotics or interfaces, but also with synthetically generated content. The flooding of the internet with deep fakes or factually false content generated by large language models has become a major concern in politics. Here, the blurring is deliberate and systematically aimed at disinformation and manipulation of users and the public, threatening the free formation of opinion and the personal integrity of individuals (Chesney and Citron, 2019; van Huijstee et al., 2021). The weaponisation of suspicion and distrust has already sparked an attempted military coup in Gabon in January 2019, where a (quite rudimentary) deep fake video of Gabon's President Ali Bongo appearing numb and motionless went viral amid public speculation about his health condition (Washington Post, 2020).

In conclusion, this section stresses that AI as an intersubjective quasi-other is a pivotal analytical dimension for understanding the relationship between trust and AI. In the face of AI challenging and blurring reality, regulators are on the spot to intervene. So far, the EU AIA imposes transparency duties on the producer of synthetic content, requiring it to be labelled (Art. 52 III). Synthetically produced content will soon increase in quantity and quality, and producers will become harder to identify or will deliberately remain anonymous. Who will be responsible for identifying and proving what is fake or real in the digital world - and will it even be technically possible to distinguish between these states in the future? What content can users trust, and what must they distrust? Current regulatory frameworks fail to address this gap. While the EU's Digital Services Act (DSA) (European Commission, 2022b) prescribes a “notice and take down action” procedure for digital platforms (Art. 14, 14 III, 19), it comes with a caveat. Platforms are not obliged to actively monitor any content and are exempt from liability for the distribution of illegal content as long as they are not aware of it. They wait for users to flag illegal and offensive content. This, of course, externalises corporate accountability and leaves considerable room for loopholes.

What the current TAI governance discussion is missing completely, though, is a reflection on where to draw the line on the role(s) AI should take as quasi-others in very intimate spheres of society such as care, child education or sexuality. It is here that trust relationships are most fragile and people are most exposed and vulnerable. Individuals are already revealing their most intimate selves to AI applications and to much more rudimentary algorithmic systems (see ELIZA). The intrusion of AI into intimate spheres radically puts society's emotional and moral worldviews up for negotiation, as humans are lured out of their taken-for-granted anthropocentric comfort zones. Which boundaries between humans and AI are still legitimate and to be trusted, and which even need to be maintained? So far, policymakers have provided little guidance on these questions, and societies are navigating rather blindly into an increasing blurring of the analogue and the digital, the authentic and the fake.

Trust the network. AI's social embedding and platformization

The relationship between AI and trust is not only demarcated by an intersubjective and apparent quasi-other. Many factors in a muted and hidden structural background play a key role in trust, embedding an AI application in a network of relationships between different actors. These include, among others, company leaders, designers, engineers, clickworkers, policy makers, users, and non-users. This extends the network of trust beyond the technological application. Von Eschenbach (2021) notes: “Trust with respect to technology (…) can only be understood in reference to the system as a whole, and each agent's trustworthiness will be judged relative to the differences in roles, interests, and expertise” (1619). The EU HLEG also stresses the importance of a systemic trust account: “Trustworthy AI (…) concerns not only the trustworthiness of the AI system itself, but requires a holistic and systemic approach, encompassing the trustworthiness of all actors and processes that are part of the system's socio-technical context throughout its entire life cycle” (2019: 5). In effect, the notion of trust is extended from AI as an application to a web of different actors involved in the chain of building and delivering a trustworthy AI system.

In addition to the concealed social and technical background processes inherent to the respective AI system, AI applications are embedded in different use contexts and domains. Today, societies are beginning to implement AI in all fields, whether it is work, health, entertainment, military or administration. AI systems act as sorting systems that decide who to hire or not (Laurim et al., 2021), mediate users’ access to information through recommender systems on platforms (Gorwa et al., 2020), and increasingly decide who to kill and who to let live in combat warfare (Abraham, 2024; Asaro, 2012). It is crucial to emphasise that AI systems are not just a technology one uses, but are themselves a governance tool in public policy to establish, manage and enforce social orders. This pervasive form of government by algorithm, which Danaher (2016) terms ‘algocracy’ and Rouvroy and Berns (2013) refer to as ‘algorithmic governmentality’, shows a trend towards AI supporting or even replacing police, military, legislative and administrative action. Another trend in the embedding of AI is the dominance of social media platforms and marketplaces. There is a growing centralisation around commercial platforms that act as powerful providers, gatekeepers and bottlenecks for AI applications and services. Commercial platforms use AI technology to evaluate, sort and recommend information flows and users. In doing so, platforms pervasively reshape communication relationships and behaviour (Gillespie, 2010; Nitzberg and Zysman, 2022; Srnicek, 2017). Through this central position, platforms reconfigure human-AI situatedness (Suchman, 2007), enforcing new modes of interaction, values, spatial and temporal experiences (e.g., intimacy, ubiquity, acceleration). In terms of trust, the use of AI in society, governance and platforms represents an important embedding that needs to be accounted for conceptually.

With AI taking on key tasks in the operation and management of platforms, platforms themselves are also theorised as trust mediators (Bodó, 2021b). These virtual meeting places become sites of trust production by matching buyers and sellers or potential sex partners, or by bridging transactional uncertainties between customers. Undoubtedly, trust can be built here by platforms moderated by AI - but in turn, as Bodó (ibid.) puts it, it is crucial “to inquire whether we can trust technology to produce trust” (2680).

As shown in this section, trust in AI extends from the obvious and apparent AI application to a network of actors and ties. Moreover, AI must also be understood as a governance tool for managing social orders, playing a central role in public policy and in the platformisation of widely used digital services. But how can users verify whether this network of relationships embedding AI is trustworthy? They cannot see or understand all the consequences of the specific technical and political choices made by all actors in the design of AI systems. Nor do they have the skills, let alone the information, to grasp whether AI systems are functioning properly and with integrity (for example, by not producing biased results or spreading misinformation). In essence, policymakers must consider that users are being presented with an AI end product that remains completely closed and opaque in its design process, its operating mechanisms, and its underlying normative choices.

It seems intuitive that the much-hailed ethical principles of transparency and autonomy are an essential pillar of a TAI standard, at least to counter this myriad of complexity and opacity. However, much recent empirical research shows that evidence is complicated and not as intuitive as ethical guidelines might suggest (Felzmann et al., 2019). In a German study, König et al. (2022) show that in interaction with personal AI assistants users “do value explainable AI, i.e., high transparency of the AI assistants, [while] this feature barely offsets even a monthly price of 1.99 Euros as compared to no costs” (8). Moreover, Waldman and Martin (2022) show that AI transparency alone does not suffice to judge public policy decisions based on algorithms as legitimate, “countering arguments for greater transparency as a governance solution” (12). They suggest that a human in the decision-making loop is crucial for sensitive areas like policing or judiciary where it is perceived that human capacities and skills crucially matter, which is also supported by Lee (2018). But then again to the contrary, Krügel et al. (2022) show that human oversight does not counter user overconfidence in corrupted algorithms, transforming humans in the loop without digital literacy into “zombies in the loop” (1). While scholarship needs to further explore which arrangements of transparency and human oversight matter in AI contexts, it is already clear that it is not enough to disclose all the different actors and factors that make up the web of trust around an AI application. Realistically, policy makers need to consider that users cannot monitor this myriad and assess the trustworthiness of all actors. To provide TAI, it is essential that users can rely on institutional governance frameworks that establish, maintain and guarantee a trustworthy web of actors. Regulators and their governance role are central to bridging uncertainties. 
It is within their mandate and competence to implement a regulatory framework that creates systems of trust assurance.

Trust the AI regulatory framework. Governance ensnared between AI interest mediation and value trade-offs

The sociological and institutional literature on trust has long recognised that trust relationships rely on higher-order arrangements that bridge contexts of social uncertainty and knowledge gaps (Misztal, 1996; North, 1990; Sztompka, 1998; Zucker, 1985). The complexity of managing different actors influencing TAI demonstrates both the importance and the challenge for public administrations dealing with AI. To date, AI governance modalities make use of both principle-based top-down regulation and market-based self-regulation, using a variety of cooperation and competition logics to govern AI. While the global AI governance landscape is still scattered and evolving, more coercive regulatory regimes have recently come into being, most notably at the EU level with the AIA, DSA and Digital Markets Act (DMA) (European Commission, 2022a).

Before delving into policy details, it is important to take a step back and adopt the perspective of public administrations trying to establish trustworthiness for their AI regulatory frameworks and bridge the uncertainty faced by AI users. Their challenge is to manage and balance the different imperatives present in society. These include industry interests in a deregulatory capitalist agenda and the administrations' own internal security and geopolitical interests, as well as users’ concerns about AI and its alignment with existing legal norms and constitutional frameworks. All these imperatives follow different logics and engage with different narratives in the process of AI regulation, making it difficult to co-construct a common understanding of AI, let alone a consensus for appropriate policymaking (König et al., 2021). Recent special issues on the governance of AI (see Büthe et al., 2022; Taeihagh, 2021) have attempted to structure a still young field and aim to find a common language. Here I follow Büthe et al. (2022) in arguing that “laws, regulations and other measures to govern AI (…) do not so much reflect inherent characteristics or objective truths about the technology, but reflect political actors’ perceptions given those actors’ predisposition” (1722).

Instead of talking about different actors in the policy process, however, it is more appropriate to conceptualise the AI policy process as a bargaining field of conflicting players trying to maximise their stakes. This shift in perspective helps to understand the phenomenon of trust and distrust in AI arising from governance frameworks. It is manifested in decisions about value trade-offs that seem inevitable in AI regulation. Politics is caught in a mediating tension, as it has to accommodate different narratives and imperatives of interests that contradict each other in the policy process. The motif of an ensnared state facing a regulatory dilemma has long been propagated by conflict state theorists such as Offe (1972) or Alford and Friedland (1985), and is also present in the hegemony theory of Laclau (1996) and Mouffe (2013). Recent scholarship has aimed to reintroduce agonistic paradigms into technopolitics, mostly in opposition to a perceived dominant deliberative reading of politics in technology assessment (see discussion by Delvenne and Parotte, 2019; Schröder, 2019). From an agonistic political perspective, administrations are pressured to consider different narratives and political interests - without taking sides - in order to be perceived as legitimate, trustworthy and acting with integrity. Favouring one societal imperative concerning AI (allowing ubiquitous access to user data to support the rise of AI startups) may neglect the concerns of another player (users’ concerns about privacy and data autonomy) and undermine the trustworthiness of the administration. In this context, Sztompka (1998) paradoxically speaks of the need for an “institutionalized distrust” (1). After all, it is not surprising that conflicting opinions and interests clash around AI. On the positive side, this clash can also be read as a constitutive and vital element of democratic political culture.
As Bodó (2021a) writes: “This competition for the autonomous powers of the state (…) requires the development of complex networks of institutional distrust, which reflect both the distrust among different societal groups with radically divergent and competing interests, and the very real possibility that any of these groups may overtake any of the bodies of the state” (12).

Recent reports by the ‘Corporate Europe Observatory’, ‘Transparency International’ and ‘Euractiv’ show how Big Tech, corporate think tanks, and trade and business associations are active in blocking and watering down AI regulation in Brussels. Big Tech, largely dominated by US firms, has “spen[t] over € 97 million annually lobbying the EU institutions (…) ahead of pharma, fossil fuels, finance, or chemicals” (Bank et al., 2021: 6). In 2023 industry lobbyists had by far the most meetings with the EU Commission on the AIA, featuring 86% of all closed-door meetings (73 out of 98 meetings), and were most active in agenda and standard setting (Corporate Europe Observatory, 2023; Kergueno et al., 2021). For the AIA “tech companies have reduced safety obligations, sidelined human rights and anti-discrimination concerns” (Schyns, 2023: 3). Leaked documents strikingly show how companies try to pressure policy makers for a deregulatory agenda by staging narratives like “Big tech is ‘irreplaceable’ when it comes to problem solving”, “we’re just defending SMEs and consumers”, “Europe wins the tech race against China, or it falls back into the Stone Age” (Bank et al., 2021: 27). In the final round of discussions on the AIA, these lobbying efforts were directed against the designation of general-purpose AI as a ‘high risk’ category in the AIA, with industry fearing that strict conformity assessments would overburden companies and stifle innovation. European startups like ‘Mistral’ and ‘Aleph Alpha’ teamed up with US Big Tech companies and, using direct ties to political executives in France or Germany, derailed the policy-making process in its final stretch. Industry managed to water down the binding fundamental rights assessment proposed by the European Parliament on general-purpose AI into mere transparency rules (Corporate Europe Observatory, 2023; Hartmann, 2023).

Reports that show such a disproportionate favouring of industry interests can be a blow to public perceptions of AI. If users feel (and truly, a feeling may suffice) that regulation is being framed in such a way that AI regulatory trade-offs favour powerful interests but lack democratic integrity, they may be reluctant to trust it. Problematically, distrust can become diffuse and endemic - and then persistently damaging - when the contacts between policy and an interest group become too close and increasingly indistinguishable. Lobbying and partisan agenda-setting take place behind the scenes. Unable to identify and address those responsible, some publics quickly direct their sentiments of distrust towards diffuse upper hierarchies such as ‘the system’, ‘the powerful elites’, ‘those Eurocrats in Brussels’. The revolving door phenomenon can certainly fuel this perception. This is undoubtedly the case with AI at the EU regulatory level, as “three quarters of all Google and Meta's EU lobbyists have formerly worked for a governmental body at the EU or member state level” (Schyns, 2023: 7). In general, interest trade-offs are not necessarily problematic if regulator communication is transparent and honest. How value trade-offs are communicated and accommodated is an essential feature of managing expectations, hopes and fears around AI. This draws attention to the discursive dimension of AI, which leads to the final analytical dimension that pairs trust with AI.

Promoting trustworthy AI through narratives: mediating meaning & attention

Trust in AI is strongly mediated by its discursive framing, which creates meaning about what to expect from AI, the promises and fears it embodies, and the problems it is supposed to solve. Hence, the societal role which AI shall fulfil is not innate in technical details but is socially constructed and harnessed. Science and technology need the social narrative to justify themselves as valid, legitimate, needed, and strived for. As will be argued, TAI narratives have a dual societal function: they create acceptance and topicality and attract investments around AI, while at the same time silencing and bridging value conflicts and contradictions as assessed in the previous section.

AI is a technology that is very rich from a narrative standpoint. The extensive discursive embedding of AI with human concepts such as ‘thinking’, ‘autonomy’ and ‘intelligence’ shapes perceptions of AI in both public and expert domains. Since its beginning, AI has raised expectations and dreams of exuberant achievements, constantly entertaining the thought of outperforming the human (Campolo and Crawford, 2020; Dandurand et al., 2022; Natale and Ballatore, 2020). These narratives are often embedded in the binary of hopes and fears, or redemption or doom, most concretely embodied in fictional narratives around AI (Cave and Dihal, 2019). But the fictional quickly conflates into the real, with AI myths being echoed in public arenas shaping overall AI sense making (Crépel et al., 2021). Framed perceptions of AI raise expectations that may be frustrated if promises are not kept, negatively influencing perceptions of both the trust-giver (the communicator of promises, such as providers or regulators) and the then demystified AI systems. The often-exaggerated image that conveys the potential and danger of AI is critical for the realm of trust, as trust relationships are built on emotional expectations. When users are confronted with a discrepancy between exaggerated promises and the actual reality, this can lead to feelings of dishonesty, disappointment and even betrayal.

Given this context, empirical work shows how nation-states and supranational institutions have actively positioned themselves in the AI arena. Administrations portray themselves in an ‘AI race’ (Cave and ÓhÉigeartaigh, 2018), employing deterministic rhetoric of an ‘inevitable’ societal path towards AI. This future trajectory is fuelled by rhetorics of TINA (there is no alternative), politically surrendering to the logics of international economic competition. Likewise, the fact that societies are constantly shaken by the exhausting reality of crises transforms AI’s role from a technology into that of a saviour, nourishing the epic tale of redeeming society from its current structural problems, such as the urban mobility crisis, social inequalities, or climate change. This solutionist aura (Morozov, 2013) that surrounds AI in the political and cultural realm reifies it as given and needed – thereby defining the toolkit to combat socially deeply rooted problems. With the race to AI portrayed as inevitable, a race to AI regulation (Smuha, 2021) is also evoked, pressuring governments to come up with effective regulatory frameworks. However, selling smart AI-based solutions while ignoring deep-rooted social problems can be a pitfall for TAI. The sociology of expectations and STS warn about the risk of such tech-ubiquity leading to path dependencies and lock-ins (Borup et al., 2006; van Lente and Rip, 1998). Managed public expectations of AI can easily turn into demands on governments. As I have argued elsewhere in deconstructing the consistency of national AI imaginaries (Bareis and Katzenbach, 2022): “Once governments proclaim bold promises, they are on the spot to deliver and perform their capabilities” (874). The praise of technology talk becomes performative and can increase the pressure not to disappoint users. Stakeholders are playing with the trust of AI users if raised expectations are not met and promises prove empty – or scandals shatter the previously hailed AI solutions.

In general, not only AI but also TAI has become a buzzword in politics. As outlined in the previous section, the EU has framed its entire regulatory framework with the emblem of TAI. While TAI remains completely under-defined, it functions as an empty signifier that has its political function. By deploying the TAI frame, the EU Commission can rhetorically accommodate stakeholders and their conflicting interests and unify a contested field of actors in a seemingly harmonised and consensual regulatory framework. From the outset, “AI industry can read ‘trustworthiness’ as a call for robustness, while ethicists and legal experts can simultaneously imagine that the document puts forward the agenda of making AI development more ethical and lawful” (Stamboliev and Christiaens, 2024: 6). Thus, TAI functions as a unifier to bridge different interests, but this comes with a significant caveat: the carving out of what TAI actually entails. This semantic emptiness may even be cherished and promoted by political actors, but the term then lacks any substance and meaningful content. Worse, if these empty signifiers are revealed as a strategy to obscure power structures in regulatory processes, the blow to TAI and AI governance bodies can be substantial.

Concluding remarks

The term trustworthy AI (TAI) has recently been widely employed in the context of AI regulation and in ethical debates around AI. This paper aims to structure and advance the debate, doing justice to a complex socio-political phenomenon that has suffered from being reduced to a semantically carved-out buzzword. This paper argues that the actual requirements for linking trust and AI are demanding – but also rewarding. Rather than following the dominant path in AI research of linking trust to ethical principles such as fairness, transparency, or privacy, or to technical properties such as robustness, efficiency, or accuracy, I hope to have shown that the phenomenon of TAI (while certainly being influenced by these) mobilises larger epistemic and social dimensions. Any technical approach to de-biasing, auditing, or making AI more transparent has its merits, but ultimately falls short of capturing and doing justice to the variously situated realms that constitute TAI. These include a) AI as an intersubjective relationship, with trust being negotiated through AI as a quasi-other; b) the embedding of AI in a network of actors from programmers to platform gatekeepers; c) the regulatory role of governance in bridging trust uncertainties and deciding on AI value trade-offs; and d) the role of narratives and rhetoric in mediating AI and conflictual AI governance processes (see overview Table 1). Admittedly, the analytical scheme is a heuristic and therefore necessarily abstract. I have elaborated each dimension separately with regard to AI in this paper, but in reality, they easily conflate. Some work more in the foreground with interfaces and materialities, others are enmeshed and implicit in power-relations and hierarchies, or framed by conversations about AI Hollywood blockbusters or technical policy results.
However, for policy makers and researchers, the analytical scheme has its merit as it structures a scattered debate, points to regulatory requirements and brings clarity for further research trajectories.

From the regulatory perspective, first, one must state that there are clear policy gaps in the European regulatory acts like the AIA, DSA and DMA (other international proposals are still in the making). These gaps concern a questionable self-assessment and third-party risk assessment approach, as well as insufficient accountability duties for the identification and labelling of AI-generated synthetic content on platforms and search engines. With synthetically generated content flooding the internet, there is an increasing societal disorientation as to what extent the blurring of the authentic and factual with the fake and false is socially and politically acceptable. This especially concerns AI applications in fields where users are most vulnerable such as care, education or sexuality.

Second, recent scholarship around internet regulation has theorised governance as an open-ended reflexive coordination in a complex network of social ties, “ordering processes from the bottom-up rather than proceeding from regulatory structures” (Hofmann et al., 2017: 1413). This actor-network inspired governance perspective serves well to bring all actors who are involved in AI production and distribution to the foreground, but understates the very nature of power and political bargaining between these actors. Hofmann et al. state that governance, here understood as coordination, “becomes reflexive when ordinary interactions break down or become problematic” (ibid.: 1414). This implied deliberative take on governing a complex network would misconceive the nature of hierarchical politics, though. Rather than leaning on a reflexive notion of politics, I have put forward an agonistic picture of AI governance, depicting strivings for hegemony and agenda setting between players in deciding upon value trade-offs. This perspective serves to understand the political dimension in installing trust or provoking distrust in AI, tackling issues of regulatory capture or revolving doors. These phenomena, of course, are not limited to AI but also emerge alongside other regulations. Not surprisingly, though, they are especially prevalent when Big Tech is aiming to make big money.

Third, I indicated that the carving out of TAI may not only be the consequence of a scattered debate but may also reflect a political strategy. I have highlighted the role of discourses and narratives for trust in AI, managing expectations through playing around with hopes and fears. It is revealing that transparency, integrity and honesty have such a low standing in political processes. The fact that the implementation of AI involves value trade-offs is not the fault of policymakers - but the euphemised way in which it is presented, not to mention the unbalanced and hidden lobbying that is allowed to take place, certainly is. Every trade-off with AI has its benefits and perils for society, and these can and should be fully and transparently articulated – and publicly discussed. This would actually relieve politicians of much of the pressure to sugar-coat bad deals and spare them from manoeuvring themselves into rhetorical traps they then struggle to escape. Clarifying the stakes, the actors and their interests is in itself a transparency value that could substantially (re)build trust in political processes and, consequently, in their regulatory objects – in this case, AI.

By disentangling the relationship between trust and AI, this scholarship situates itself within the agenda of critical policy studies (Paul, 2022) and critical algorithm studies (Seaver, 2017). To successfully (dis-)integrate AI for the benefit of all, an understanding of how algorithmic phenomena shape, maintain and challenge society and its order is a pivotal precondition. This understanding calls for disciplinary transgression where needed to disclose how the technical is inscribed, mediated and practised in the social.

Acknowledgements

I would like to thank Christian Katzenbach and his colloquium, Julia Valeska Schröder and Clemens Ackerl for their helpful comments on earlier drafts of this paper. I am also grateful to three anonymous reviewers and Rocco Bellanova, who did not lose trust in this interdisciplinary endeavor in various rounds of review.

Declaration of conflicting interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author received no financial support for the research, authorship, and/or publication of this article.