Abstract

This article proposes and tests a reproducible framework for a computational method to measure social media-based deliberative discourse by analyzing commentary surrounding the Canadian convoy protests of COVID-19 vaccine mandates and restrictions. Employing a combination of analytic calculations, alongside tools such as Google Perspective and Linguistic Inquiry and Word Count (LIWC), this article assesses the quality of online deliberative discourse using established measures of deliberation including the variables rationality, interactivity, equality, and civility. We propose computational approaches to measuring these variables, and work toward validating our approach by observing correlations between these variables and an established computational measure of online deliberation, cognitive complexity. This computational approach is tested using Twitter and Reddit commentary related to the convoy protests that took place in Ottawa, Canada, during February 2022, which influenced the emergence of similar protests around the world. In addition to testing our proposed online deliberative discourse measurement framework, this case study provides insight into the deliberative characteristics of the Twitter and Reddit social media platforms.

Digital spaces, such as social media platforms and message boards, have transformed public discourse, replacing traditional physical spaces for debate with virtual spaces for deliberative discourse (Esau et al., 2017; Gonzalez-Bailon et al., 2010; Kelly et al., 2005; Schäfer et al., 2022). These platforms allow for broad discussions on critical social issues, such as racial injustice, climate change, and public health (Gaytan Camarillo et al., 2021; Graham et al., 2021; Hristova & Howard, 2021; Islam et al., 2020). However, they also enable the spread of misinformation, disinformation, and potentially defamatory speech, further complicated by biases in platform algorithms and policies (Bone, 2021; Hilary & Dumebi, 2021; Matamoros-Fernández, 2017). Research presented here focuses on Twitter and Reddit, platforms previously scrutinized for enabling extremist content and racist discourse (Brown et al., 2018; Criss et al., 2021; Gaudette et al., 2021; Guo & Liu, 2022; Rieger et al., 2021; Torregrosa et al., 2020; Uyheng et al., 2022).

Conventional discourse analysis often relies on manual coding, a time-consuming and sometimes biased process (Camaj, 2021; Gaur & Kumar, 2018; Hsieh & Shannon, 2005; Mackieson et al., 2019; Oz et al., 2018). To overcome these limitations, we propose a computational approach to analyze and assess deliberative quality in social media spaces. This methodology is tested in a case study of social media discourse produced during convoy protests in Ottawa in early 2022 in response to COVID-19 vaccine mandates and restrictions.

While recognizing the challenges posed by automated actors (“bots”) in spreading misinformation and their potential to skew results, we argue that our work contributes useful insights into the deliberative characteristics of discourse on Twitter and Reddit. It also offers an understanding of how these platforms’ features and community-driven moderation shape discourse, contributing to existing work on computational deliberative discourse analysis (Gold et al., 2015; Jaidka et al., 2019).

Social media-based online deliberation and deliberative theory

In addition to the examination of traditional notions of deliberation and deliberative democracy, deliberative theory provides useful insights into how individuals engage in deliberation on social media platforms (Bächtiger & Hangartner, 2010; Chambers, 2003; Neblo, 2020). Deliberation refers to the exchange of facts, viewpoints, ideas, and feelings among individuals. Deliberation is essential for democratic functioning in representative democracies, along with information provision and voting as major political practices (Albrecht, 2006). The interplay between effective political deliberation and equitable social institutions is an enduring concern of scholarship on deliberation (Dewey, 1927).

In Gutmann’s (2004) perspective, deliberative democracies must be characterized by the provision of reasons for decisions, accessibility to all citizens, the generation of binding decisions, and a dynamic, changeable nature. However, Habermas (1989) argues that public debate is increasingly affected by private capital-driven interests, a claim further developed by Alexander’s (2006) conception of a “civil sphere” promoting critical thought, democratic fluidity, and solidarity. Importantly, the civil sphere encompasses not only formal governing bodies but also political, societal, and economic issues affecting large-scale institutions intended to serve the collective public.

Rationality stands as a central element of effective deliberative discourse. Benhabib (1996) contends that in a deliberative setting, practical rationality rests upon a free and open discussion on matters of mutual concern. Such deliberations attain their rationality by rendering information accessible, allowing expressions of arguments, and leading to publicly challengeable conclusions (Benhabib, 1996).

Discussions about social media platforms’ efficacy as deliberative spaces are abundant in academic literature (Rauchfleisch & Kovic, 2016). Social media platforms such as Twitter and Reddit can be regarded as a public sphere, offering a venue for circulating information, ideas, debates, and forming political will (Dahlgren, 2005, p. 148). These platforms, despite being private entities, offer substantial room for deliberation. However, this “formation of political will” can lead to polarization if interest groups merely perpetuate their own ideas. According to Kruse et al. (2018), an effective public sphere necessitates unlimited information access, equality in participation, and a deliberative environment free of political and economic influence. Their study reveals that platform users often engage with politically similar individuals, and some users refrain from communicative action on the platforms because of fears of harassment and surveillance.

A consensus definition of deliberation is not yet available. Stromer-Galley (2007, p. 3) characterizes deliberation as a process in which groups, often ordinary citizens, engage in reasoned opinion expression on social or political issues, aiming to identify and evaluate solutions to a common problem. Friess and Eilders (2015) propose a unified definition of deliberation based on four premises: legitimacy through political discourse, adherence to rules for effectiveness, an expectation of beneficial outcomes, and the need for an inclusive public sphere. Later, Friess (2018) adds a fifth premise suggesting that deliberation should embody a communicative process of democracy, not solely focused on liberal, economic, or voting-centric approaches. Janssen and Kies (2005) explore the potential of online platforms for robust and meaningful civic discussions, underscoring the importance of diverse opinions in democratic discourse and of online public communication aimed at reaching mutual understanding.

Friess and Eilders (2015) elucidate various design affordances, including synchronicity, anonymity, and moderation, that influence the conditions of online deliberations observable on platforms such as Twitter and Reddit. The synchronicity of communication is regarded as a crucial deliberative feature, with live, synchronous online discussions potentially negatively affecting deliberative quality (Friess & Eilders, 2015). Despite not being as synchronous as a live web chat, Twitter and Reddit enable some aspects of synchronous conversation through thread-based discussions.

Anonymity is another affordance affecting online deliberation, with mixed opinions on its impact on deliberative quality (Friess & Eilders, 2015). Reddit’s semi-anonymous nature allows candid discussions on sensitive topics, as demonstrated by Bagroy et al.’s (2017) study of college students discussing mental health issues, and Sengupta’s (2019) examination of sensitive discussions among graduate students. These observations suggest that Reddit is a safe space for open discussions without fear of consequences (Sengupta, 2019). Del Valle et al. (2020) further conceptualize Reddit as an informal learning environment. As for Twitter, although its previously available verification system did provide some identity confirmation, this was mostly limited to notable individuals (Perez, 2021).

Moderation is considered to positively influence deliberative quality (Friess & Eilders, 2015). This view is supported by Richter’s (2021) examination of Reddit’s subreddit-based rules and site-wide “Reddiquette,” which enforce decorum and foster public deliberation. Similarly, Straub-Cook’s (2018) findings on Reddit and Jakob’s (2020) findings on Twitter illustrate how users share links to substantiate deliberative claims. Crowd-sourced upvoting and downvoting on Reddit enforce civility and help moderate both substantive and irrelevant commentary (Straub-Cook, 2018). Friess and Eilders (2015) also recognize the importance of active moderation in effective online deliberative discourse, evident in Reddit’s crowd-sourced moderation and reliance on community moderators. This division of labor contributes to a well-regulated deliberative environment.

Another factor found to influence deliberative quality is the length of a social media post and its potential correlation with civility or incivility. Shorter text limits, like those on Twitter, were associated with greater civility but fewer deliberative attributes, while platforms allowing longer messages, such as Facebook, exhibited greater deliberation but also increased incivility (G. M. Chen, 2017; Oz et al., 2018). These findings have significant implications for deliberative discussions, such as those on climate change, where post length can impact the quality of deliberation (Treen et al., 2022).

Despite Twitter’s relative limitations for deliberative discussions compared with other social networks, the platform’s practice of sharing links fulfills a deliberative function (Jakob, 2020). Link sharing permits users to convey more information than the tweet text limit allows, serving both informational and argumentative purposes (Jakob, 2020). It stands as a form of justification, a common feature in online deliberative discussions, further enriching the discourse.

Finally, Friess and Eilders (2015) emphasize the role of well-informed, rational, and sourced information sharing in the online deliberative process. Both Twitter and Reddit facilitate effective information sharing through threaded conversations, allowing back-and-forth dialog and the ability to share external resources via links. This supports the sharing of well-substantiated information, thereby serving a deliberative purpose.

Measuring online deliberative discourse

As social media fora have increased in importance for deliberative discourse, researchers have sought to measure deliberative quality on these platforms using a variety of methods and measures (Balcells & Padró-Solanet, 2020; Camaj, 2021; Esau et al., 2017; Fournier-Tombs & Di Marzo Serugendo, 2020; Halpern & Gibbs, 2013; Jaidka et al., 2019; Jakob, 2020; Oz et al., 2018; Stroud et al., 2015; Ziegele et al., 2020).

Most research on measuring deliberative discourse has employed manual coding-based methods (Black et al., 2011; Friess, 2018; Stromer-Galley, 2007). These methods have advanced understanding because human coders recognize the nuance of human speech more easily than computational methods do, but challenges related to coder subjectivity and the analysis of large amounts of data remain (Fournier-Tombs & Di Marzo Serugendo, 2020). Yet as Beauchamp (2019) outlines, the cost of employing hand coding techniques and the potential for introducing bias suggest that computational approaches to measuring deliberation are called for. These computational approaches typically rely on natural language processing, artificial intelligence-based approaches, visual analytics, network analysis, and other linguistic techniques (Beauchamp, 2019; Fournier-Tombs & Di Marzo Serugendo, 2020; Gold et al., 2015).

In deliberation research, measuring deliberative discourse relies on certain indicators. Friess (2018) outlines a collection of measures commonly used to gauge the quality of online deliberation, including rationality, interactivity, equality, civility, constructiveness, and common good orientation.

Cognitive complexity (CC) is a further measure that is used to analyze the quality of online deliberation (Brundidge et al., 2014; Moore et al., 2021). While not a perfect measure of deliberative quality, CC has been used as a proxy measure to analyze the quality of argumentation in online discussions (Moore et al., 2021).

Our research builds on previous work that measures deliberative discourse using computational methods. Inspired by the work of Friess and Eilders (2015), we operationalize the measurement of rationality, interactivity, equality, and civility as variables for online deliberative discourse. To expand on previous automation efforts, we also include a variable of CC in our analysis. We briefly explore these variables in the following sections.

Rationality

Friess and Eilders (2015) outline that rationality is a crucial element of effective deliberation and serves as a measure of whether online conversations are backed up with factual argumentation. Providing evidence to support a claim is a key requirement for rational deliberation (Stromer-Galley, 2007). Providing external sources of information helps online deliberation participants validate and verify justification, particularly of opposing views (Rowe, 2015). Justification is also cited as a key element of deliberative quality by Jaidka et al. (2019). They outline that justification entails conversation participants sharing supporting material that justifies their statements, such as data, links, or facts. Similarly, when manually coding Facebook comments, Stroud et al. (2015) measure commentary for “provision of evidence,” looking for the sharing of links or other information that supports author claims.

Rational arguments should also be clearly defensible using observable empirical evidence or be rooted in a shared understanding of normative behavior (Stromer-Galley, 2007). A further measure of rationality is whether an argument is on-topic and coherent (Friess & Eilders, 2015).

Interactivity

Interactivity is often measured by analyzing the back-and-forth conversations between individuals (Friess & Eilders, 2015). Deliberation should entail a back-and-forth exchange of ideas, both listening and talking (Friess & Eilders, 2015). In their study of Twitter exchanges, Jaidka et al. (2019) outline that interactive deliberation should entail three elements: reciprocity, empathy, and respect. Quality interactive discussions should feature “positive comments that are sensitive or empathetic to others’ viewpoints and respectful towards other discussants” (Jaidka et al., 2019, p. 352).

In their study of online deliberation, Camaj (2021) develops an interactivity index for interactive comments. Citing work from Walther and Jang (2012), comments were classified as either reactive to a source article or comment, or interactive if they were replies to other conversation participants (Camaj, 2021). While not specifically using the term interactivity, Balcells and Padró-Solanet (2020) measure the deliberative quality of online political discussions by analyzing the depth of Twitter conversation trees. Depth is used as a measure that indicates an engaged and reciprocal exchange of ideas (Balcells & Padró-Solanet, 2020).

Equality

The notion of equality in a deliberative discussion focuses on how inclusive or accessible a conversation is (Friess & Eilders, 2015). All participants in an online debate should have an equal opportunity to contribute and take part in a discussion. However, it is challenging to formulate an empirical way to measure equality (Stromer-Galley, 2007). Equality could be measured by the percentage of conversation space an individual takes up, or it could be measured by the number of participants in a discussion (Stromer-Galley, 2007). Another consideration is the quality of participant contributions. Stromer-Galley (2007) outlines that someone could speak frequently as part of a debate but add little of substance to the conversation. In later work, Friess (2018) outlines that online deliberative equality should be measured by analyzing the distribution of user comments or the “share of voice” (p. 165).

Civility

Civility is also cited as an important characteristic of quality online deliberation (Friess & Eilders, 2015). Moreover, a consistent sense of civility within deliberative communication is “pivotal for fostering critical engagement” when divergent viewpoints about a topic exist (Brokensha & Conradie, 2017, p. 330). Toxicity is a commonly cited measure of uncivil online behavior (Majó-Vázquez et al., 2020). While no unified definition of toxicity has emerged from academic research, a common theme evident in prevailing scholarship outlines that toxic behavior involves expressed disrespect toward others (Kim et al., 2021). In their study of online comments, Kim et al. (2021) define toxicity as, “expressing disrespect for someone using insulting language, profanity, or name-calling; by engaging in personal attacks; and/or by employing racist, sexist, and xenophobic terms” (p. 3).

CC

CC is a potential measure of online deliberation quality. An established psychological concept, CC has been used in a variety of subject domains to study both speech and text, including online commentary, political debate, and legal proceedings (Brundidge et al., 2014; Moore et al., 2021; Owens & Wedeking, 2011; Suiter et al., 2021; Wyss et al., 2015).

Used as a proxy to measure deliberative quality both in offline and online speech, Wyss et al. (2015) outline that CC “measures the degree to which an individual perceives, distinguishes and integrates topical dimensions” (p. 637). High CC scores indicate that a person is willing to accept conflicting viewpoints and “represents an important marker of the epistemic quality of debate; by the same token, it also implies a willingness of actors to integrate and accommodate other viewpoints and strive for agreement” (Wyss et al., 2015, p. 637). Moore et al. (2021) outline that CC can capture the argumentative dimension of online deliberation and show “how individuals’ thought processes are constituted rather than about what actors actually say about a problem, for example, how logical an argument is” (p. 52). CC measures two broad dimensions of argumentation, differentiation and integration (Suiter et al., 2021). Differentiation measures the number of viewpoints considered by a speaker, while integration outlines the depth of a speaker’s understanding of an issue (Suiter et al., 2021).

Research questions

To better understand how researchers can computationally analyze social media-based online deliberative discourse, with the goal of developing a method that reveals insight on the deliberative affordances of the social media platforms specific to our case study, our research addresses the following questions:

This research proposes a reproducible framework to analyze online deliberative discourse using solely computational methods and proposes a model with discursive variables to measure online deliberative discourse. This framework is tested using a case study, which reveals insight into how online deliberative discourse changes as an issue shifts in public prominence and illustrates how deliberation differs between social media platforms. This research, and the open-source ancillary material that supports it, works to encourage other researchers to test and refine our method using other sources of online deliberative discourse.

Methodology

We provide a case study in which we test our proposed method of analyzing online deliberative discourse using social commentary related to the 2022 Canadian convoy protests. These protests focused on COVID-19 vaccine mandates and restrictions, which became a nation-wide issue triggering wide-reaching news media attention and deliberation online. The Canadian convoy protests were picked up widely by international news media and inspired similar protests in other countries such as the United States, United Kingdom, New Zealand, and Austria (John & Friend, 2022; McKeen et al., 2022; Press, 2022).

The social media commentary surrounding the Canadian-based convoy protests constitutes an ideal case study for testing a computational approach to measuring online deliberative discourse as it represents a polarizing debate with clearly defined groups both for and against an issue or concern (Huang et al., 2022; Roy & Gandsman, 2023). Our focus on social media discourse surrounding the Canadian-based convoy protests ensured our data sets remained a relatively manageable size and simplified data collection, while effectively providing a blueprint for other researchers to study deliberation surrounding polarizing political issues. As outlined by Gillies et al. (2023), the convoy protests represent an example of a growing international movement toward digitally organized grassroots protest movements with a real-world component, such as France’s Yellow Vest and Hong Kong’s Umbrella movements. We hope that our work will inspire other researchers to further adapt and develop our approach for studying similar events.

Although we focused our analysis on the demonstrations that culminated in Ottawa, Canada, discourse from other parts of the world is nonetheless present within our data set due to the global nature of social media platforms. To encompass the periods both before and after these demonstrations, we focused on social media threads that were started between January 1, 2022, and March 31, 2022. Our data set, in the case of both Twitter and Reddit, contains tweets and Reddit comments posted after this period, but no thread was started outside of this period.

In the case of Twitter, we searched the platform for discussions related to the convoy protests using the search query: (“FreedomConvoy2022” OR “CanadaConvoy” OR “OttawaConvoy” OR “OttawaOccupation” OR “TruckersForFreedom” OR “ConvoyToOttawa” OR “FreedomConvoyCanada” OR “Freedom Convoy” OR “(Ottawa) (Convoy)”). These search terms were observed to be the dominant hashtags and terms used for discussion related to the convoy demonstrations during our study period. These terms were adopted by Twitter users who were both for and against the convoy demonstrations.

For Reddit, we analyzed content from five subreddits: r/ottawa, r/onguardforthee, r/canada, r/CanadaPolitics, and r/freedomconvoy. These subreddits were searched using the search terms “Freedom Convoy,” “Ottawa Convoy,” “Convoy,” “Ottawa Occupation,” and “Ottawa Truckers.” These were the dominant terms observed in discussions related to the convoy demonstrations. The subreddits were selected in an effort to represent a cross-section of discussions related to the convoy locally in Ottawa, nationally across Canada, and internationally. This collection of subreddits contained conversation threads both supportive of and against the convoy demonstrations.

Our analysis focused on Twitter threads that had at least two replies, showing back-and-forth discussion related to the convoy protests. The parent tweet of these threads was found using the above search query. All subsequent replies to these identified tweets were then gathered. Focusing on Twitter conversation threads, rather than all tweets that contained our keyword terms, provided a better snapshot of how online users were talking about convoy protests, and allowed us to compare threaded Reddit commentary more closely to our Twitter data set. Similarly, our Reddit data set featured threads with at least two replies. Our final analyzed data set included 75,516 Twitter threads composed of 2,812,707 tweets and 529,335 Reddit comments from 2,806 separate Reddit posts.

To analyze the quality of online deliberative discourse across these data sets, our research developed computational methods to measure the deliberative variables of (a) rationality, (b) interactivity, (c) equality, (d) civility, and (e) CC:

(a) Rationality: An important aspect of a rational deliberative discussion is the sourcing of arguments, so to measure the rationality of our content, we analyzed whether social media commentary contained a link to outside sources (Jakob, 2020; Stromer-Galley, 2007). We calculated the number of links per post for each social media thread. As an additional measure of rationality, we employed LIWC2015’s measure of analytic thinking. While the algorithms used to create the measure have not been released, LIWC2015’s analytic thinking variable is measured on a scale of 0 to 100, where high numbers indicate formal and logical thinking, while low numbers indicate informal and narrative thinking (Pennebaker, Booth, et al., 2015). We propose this measure as a proxy for the rational deliberative requirement of empirical argumentation.
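As an illustration only, the links-per-post calculation could be sketched in Python as follows. The URL pattern, the function name `links_per_post`, and the sample thread are assumptions made for this example and are not taken from the study's repository; the study may detect links differently (for example, from platform metadata).

```python
import re

# Simple URL pattern; an assumption for illustration, not the study's own rule.
URL_PATTERN = re.compile(r"https?://\S+")


def links_per_post(posts):
    """Return the mean number of links per post for one thread."""
    if not posts:
        return 0.0
    total_links = sum(len(URL_PATTERN.findall(text)) for text in posts)
    return total_links / len(posts)


# Hypothetical four-post thread containing three links in total.
thread = [
    "Source: https://example.com/report",
    "I disagree with that.",
    "See https://example.com/a and https://example.com/b",
    "Thanks for sharing.",
]
print(links_per_post(thread))  # 3 links over 4 posts
```

A thread-level value like this can then be averaged across all threads on a platform to obtain a platform-level mean.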

(b) Interactivity: Interactive deliberative content features significant back-and-forth discussion (Friess & Eilders, 2015). We calculated the number of replies per conversation participant in our Twitter and Reddit threads to represent interactivity.
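A minimal sketch of a replies-per-participant calculation is shown below. It assumes that a thread is available as a list of reply author identifiers and that only repliers are counted as participants; whether the original poster is included is not specified in the text, so this choice, like the function name, is hypothetical.

```python
def replies_per_participant(reply_authors):
    """Replies in a thread divided by the number of distinct reply authors."""
    if not reply_authors:
        return 0.0
    return len(reply_authors) / len(set(reply_authors))


# Hypothetical thread: six replies written by three distinct users.
authors = ["ana", "ben", "ana", "cy", "ben", "ana"]
print(replies_per_participant(authors))  # 6 replies / 3 participants
```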

(c) Equality: Equality of user participation in our social media threads was calculated using two measures to determine if online conversations are dominated by a small number of individuals: skewness and the Gini coefficient. If an online conversation featured equality of participation, this should be reflected in a normal distribution of posts for each individual, and these measures can indicate when a distribution is not normal, thus demonstrating participation inequality.

Skewness measures the asymmetry of a distribution, with a value of 0 representing a symmetric distribution such as the normal distribution. In the case of our social media data, a large positive skewness score would indicate a small set of individuals dominating a conversation. Skewness was calculated using the SciPy Python library (The SciPy Project, n.d.; Virtanen et al., 2020), and full details of the formula used are available on the SciPy website.
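For readers who want to see the calculation spelled out, the sketch below computes the biased Fisher-Pearson skewness coefficient (third central moment divided by the second central moment raised to the power 1.5) in plain Python. To our understanding this matches what `scipy.stats.skew` returns with its default settings, but the exact options used in the study are not stated here, so this should be read as an assumption. Returning 0.0 for a thread in which every participant posted equally is also a choice made for this example.

```python
from collections import Counter


def skewness(values):
    """Biased Fisher-Pearson skewness: m3 / m2**1.5 using central moments."""
    n = len(values)
    mean = sum(values) / n
    m2 = sum((v - mean) ** 2 for v in values) / n
    m3 = sum((v - mean) ** 3 for v in values) / n
    if m2 == 0:
        return 0.0  # identical counts; treated as symmetric in this sketch
    return m3 / m2 ** 1.5


# Hypothetical thread: one user posts ten times, four users post once each.
authors = ["u1"] * 10 + ["u2", "u3", "u4", "u5"]
posts_per_user = list(Counter(authors).values())
print(skewness(posts_per_user))  # positive: a few users dominate
```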

While developed as a measure of income inequality, the Gini coefficient has also been used to measure the distribution of any items among individuals, including online forum participation inequality (van Mierlo et al., 2016). Again, using participant comment volume, we calculated the Gini coefficient for each social media thread in our data set. Gini coefficient values closer to 0 represent more equal participation, while a value of 1 indicates a thread dominated by one individual. As described in greater detail by van Mierlo et al. (2016), the Gini coefficient is calculated by plotting a Lorenz curve of values, computing the area under this curve, then normalizing this value. In its traditional use in economics, a Lorenz curve features the cumulative percentage of income in an economy on the y-axis and the cumulative percentage of the population on the x-axis (van Mierlo et al., 2016). Our method takes the same approach using comment volume values rather than income. The Python script used in this article to calculate the Gini coefficient and skewness measures is available in our GitHub repository (https://github.com/stuartduncan416/onlineDelib).
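The Lorenz-curve calculation described above can be illustrated with the following sketch, which uses a standard closed-form expression over sorted values. It is not the script from the repository; the function name and the handling of empty or all-zero input are assumptions made for this example.

```python
def gini(counts):
    """Gini coefficient of non-negative counts, from the sorted values.

    Equivalent to 1 - 2 * (area under the Lorenz curve) when the curve is
    built from the sorted counts and integrated with trapezoids.
    """
    values = sorted(counts)
    n = len(values)
    total = sum(values)
    if n == 0 or total == 0:
        return 0.0  # no participation to distribute; a choice for this sketch
    weighted = sum((rank + 1) * v for rank, v in enumerate(values))
    return (2 * weighted) / (n * total) - (n + 1) / n


print(gini([5, 5, 5, 5]))   # equal participation
print(gini([0, 0, 0, 10]))  # one of four users wrote every comment
```

Note that, with this uncorrected form, a thread of n participants dominated entirely by one of them yields (n - 1) / n rather than exactly 1, so the upper bound is only approached in large threads. Whether the study applies a small-sample correction is not stated.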

(d) Civility: To analyze the civility of our social media threads, we employed the Google Perspective API to calculate the toxicity of our social media commentary. This platform defines toxicity as “a rude, disrespectful, or unreasonable comment that is likely to make one leave a discussion” (Hosseini et al., 2017, p. 2). We calculated a toxicity value using Perspective for each individual tweet and Reddit comment in our data set, and then averaged these scores for each Twitter and Reddit thread.
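The per-comment scoring and thread-level averaging might be sketched as below. The request body and response path follow the publicly documented shape of the Perspective API's `comments:analyze` method, but the helper names, the mocked response, and the (thread_id, score) input format are assumptions for illustration; no network call is made here, and API keys, rate limits, and error handling are omitted.

```python
import json
from collections import defaultdict


def build_request(text):
    """Request body asking Perspective for the TOXICITY attribute."""
    return {
        "comment": {"text": text},
        "requestedAttributes": {"TOXICITY": {}},
    }


def toxicity_from_response(response):
    """Extract the summary toxicity score (0 to 1) from a response dict."""
    return response["attributeScores"]["TOXICITY"]["summaryScore"]["value"]


def mean_toxicity_by_thread(scored_comments):
    """Average per-comment scores within each thread.

    scored_comments: iterable of (thread_id, toxicity_score) pairs.
    """
    by_thread = defaultdict(list)
    for thread_id, score in scored_comments:
        by_thread[thread_id].append(score)
    return {tid: sum(s) / len(s) for tid, s in by_thread.items()}


# Mocked response standing in for a real API reply (hypothetical value).
mock_response = {
    "attributeScores": {"TOXICITY": {"summaryScore": {"value": 0.82}}}
}
print(json.dumps(build_request("example comment")))
print(toxicity_from_response(mock_response))
print(mean_toxicity_by_thread([("t1", 0.2), ("t1", 0.4), ("t2", 0.9)]))
```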

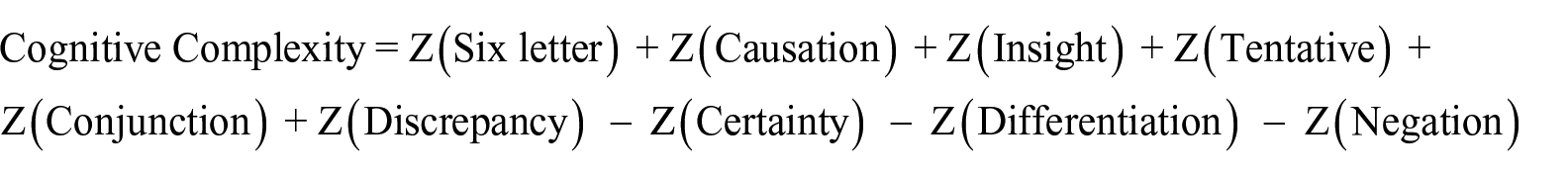

(e) CC: Using the LIWC2015 software to determine the linguistic characteristics of our data set, we analyzed the CC of our Twitter and Reddit threads. Inspired by a formula first proposed by Owens and Wedeking (2011) and adapted for use in LIWC2015 by Suiter et al. (2021), we calculated the CC of our Reddit and Twitter comments using the following formula.

Wyss et al. (2015) outline that the LIWC variable (Six letter) represents the percentage of words with more than six letters, (Causation) represents the percentage of words about causal mechanisms such as “because” or “affect,” (Insight) represents the percentage of words about generating insight such as “believe” or “complex,” (Discrepancy) is the percentage of words indicating discrepancies such as “should” or “would,” (Certainty) is the percentage of words representing certainty such as “absolutely” or “inevitable,” and (Negation) represents the percentage of negations in a text. Suiter et al.’s (2021) adapted LIWC2015-based CC formula also includes the variables (Conjunction), which represents the percentage of conjunction words in the text such as “and” and “but,” and (Differentiation), which represents the percentage of differentiation words such as “hasn’t” or “else.”

More details on the rationale for the selection of these variables are provided by Brundidge et al. (2014), Owens and Wedeking (2011), Suiter et al. (2021), and Wyss et al. (2015), but in brief, the use of causation words indicates a willingness to consider the cause and effect of ideas. The use of six letter words is a common measure of linguistic complexity. The use of tentative words measures how hesitant a person is about an idea. The use of conjunction words indicates a willingness to integrate other perspectives. Insight words indicate a more in-depth understanding of a subject. Discrepancy words indicate whether an individual identifies inconsistencies. Increased levels of six letter, tentative, conjunction, insight, and discrepancy words result in higher levels of CC. Certainty words indicate how confident a person is about something. Negation words indicate whether an individual acknowledges the opposite of something. Differentiation words indicate how distinctive one sees their ideas. Increased use of certainty, negation, and differentiation words results in lower levels of CC.

Following the approach of Moore et al. (2021), the Z-standardized score for each LIWC value was used in the calculation. Moore et al. (2021) outline the significance of the results calculated from this formula: “High levels of CC are, thus, indicative of an individual’s cognitions being embedded, organized, and categorized within a dense intellectual system. At the other end of the scale, the CC score diminishes to the extent that the cognitions are narrow, superficial, and fragmented” (p. 52).

Using the above formula and the LIWC values, CC was computed using a custom Python script which is available in the GitHub repository for this project. We then observed how changes in our measures of civility, rationality, interactivity, and equality affect our measures of CC as a rough test of the validity of our computational approach to calculating these values. We hypothesize that as our measures of civility, rationality, interactivity, and equality increase, we should see corresponding increases in CC.
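A hedged sketch of a Z-standardized CC calculation consistent with the description above is given below; it is not the script from the repository. The variable labels mirror LIWC2015 output column names as we understand them. Six letter, tentative, conjunction, insight, and discrepancy words add to the score and certainty, negation, and differentiation words subtract from it, as stated in the text. Treating causation as a positive term follows Owens and Wedeking's (2011) original formula and is an assumption here, as is the use of the population standard deviation for standardization.

```python
from statistics import mean, pstdev

# Assumed LIWC2015 column labels; the sign of "cause" is an assumption.
POSITIVE = ["Sixltr", "cause", "insight", "discrep", "tentat", "conj"]
NEGATIVE = ["certain", "negate", "differ"]


def z_scores(values):
    """Standardize values to mean 0 and (population) standard deviation 1."""
    mu = mean(values)
    sigma = pstdev(values)
    if sigma == 0:
        return [0.0 for _ in values]
    return [(v - mu) / sigma for v in values]


def cognitive_complexity(rows):
    """rows: list of dicts of LIWC percentages, one dict per text.

    Returns one CC score per row: the sum of positive z-scores minus the
    sum of negative z-scores.
    """
    z = {name: z_scores([row[name] for row in rows])
         for name in POSITIVE + NEGATIVE}
    scores = []
    for i in range(len(rows)):
        plus = sum(z[name][i] for name in POSITIVE)
        minus = sum(z[name][i] for name in NEGATIVE)
        scores.append(plus - minus)
    return scores


# Two hypothetical texts with made-up LIWC percentages.
text_a = {"Sixltr": 25, "cause": 3, "insight": 4, "discrep": 2, "tentat": 3,
          "conj": 7, "certain": 1, "negate": 1, "differ": 2}
text_b = {"Sixltr": 15, "cause": 1, "insight": 2, "discrep": 1, "tentat": 1,
          "conj": 5, "certain": 3, "negate": 3, "differ": 4}
print(cognitive_complexity([text_a, text_b]))
```

Because each variable is standardized across the whole set of texts, a CC score from this sketch is only meaningful relative to the other texts standardized with it.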

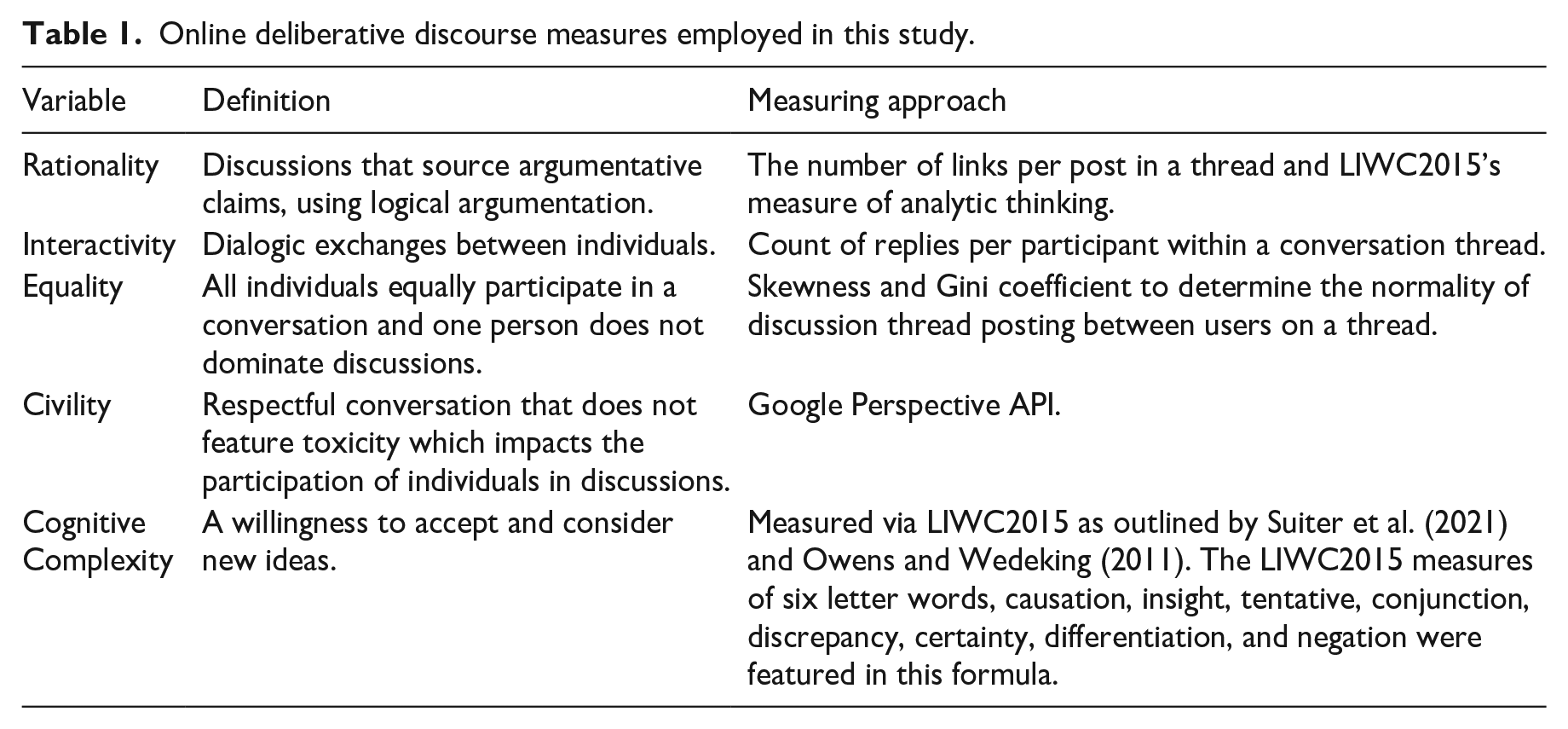

To summarize, in an effort to develop a computational framework to analyze online deliberative discourse, we propose that constructive online deliberative discourse should feature a high percentage of social media content with sourced ideas and analytic thinking (rationality), a significant number of individuals participating in the discussion (interactivity), a balanced discussion without one individual dominating that deliberation (equality), respectful conversation devoid of toxic commentary (civility), and argumentation in which individuals are open to opposing viewpoints (CC). The mean of these variables was calculated across both our Reddit and Twitter data sets at a thread level. The measures employed in the study are summarized in Table 1.

Table 1. Online deliberative discourse measures employed in this study.

To better understand whether general traffic volumes surrounding a specific topic could have an impact on online deliberative discourse, we also observed changes in our measures of toxicity and CC daily to determine whether changes in topic post volume impacted these results.

Results

To determine the viability of our proposed online deliberative discourse methodology, we tested our approach on a case study of social media discussions related to convoy protests surrounding COVID-19 vaccine mandates and restrictions both in Canada and internationally. While demonstrations and discussion post volume related to the protests culminated in February 2022, our period of study focused on threads which were started between January 1, 2022, and March 31, 2022 to better understand deliberation both leading up to and following the protests. We analyzed social media commentary both at a thread level and at an aggregate level for each of the Reddit and Twitter platforms.

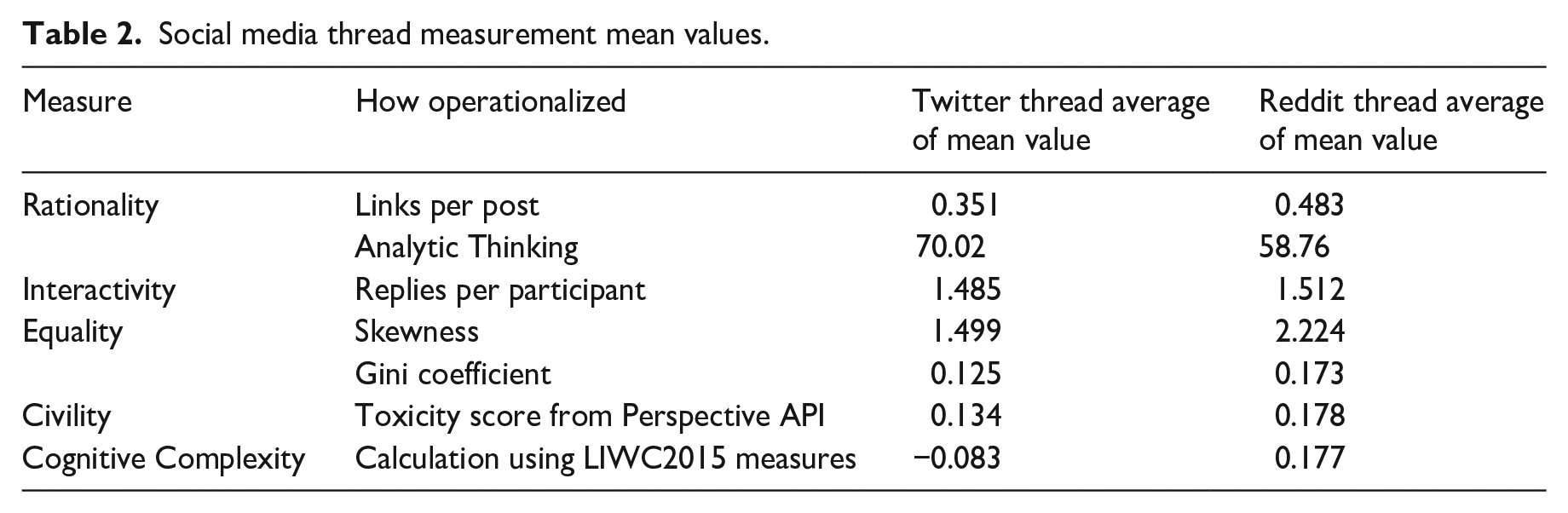

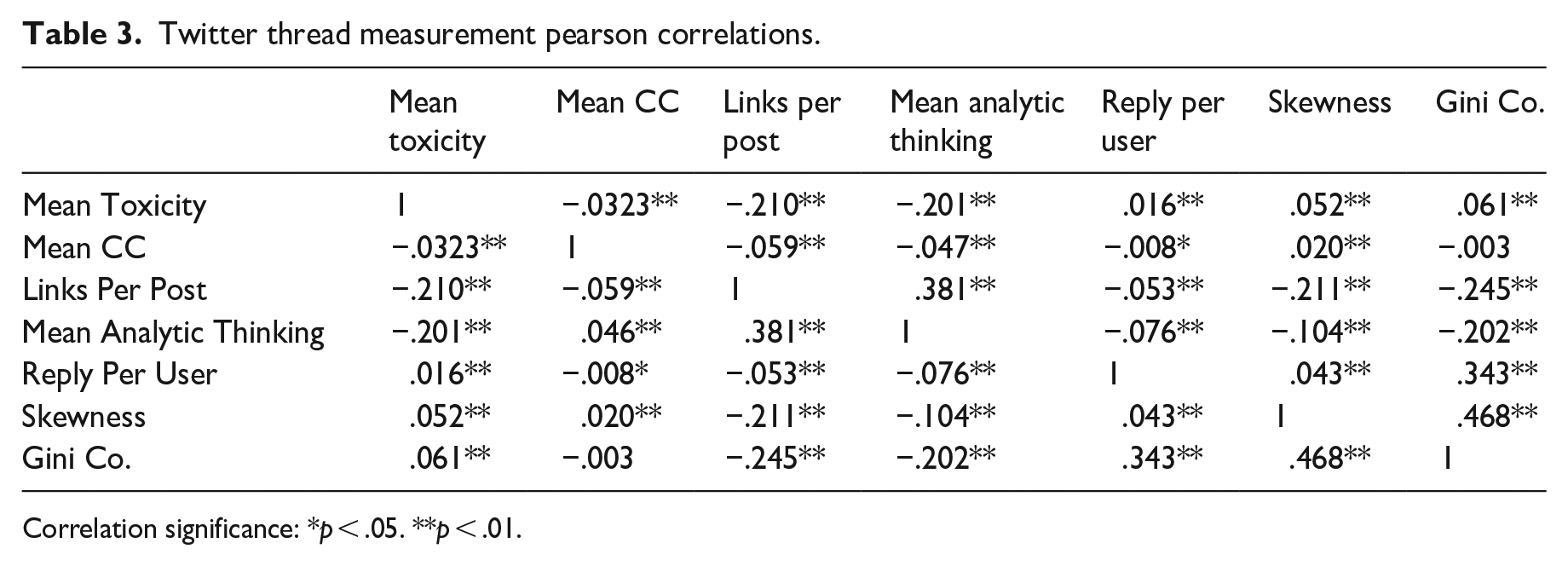

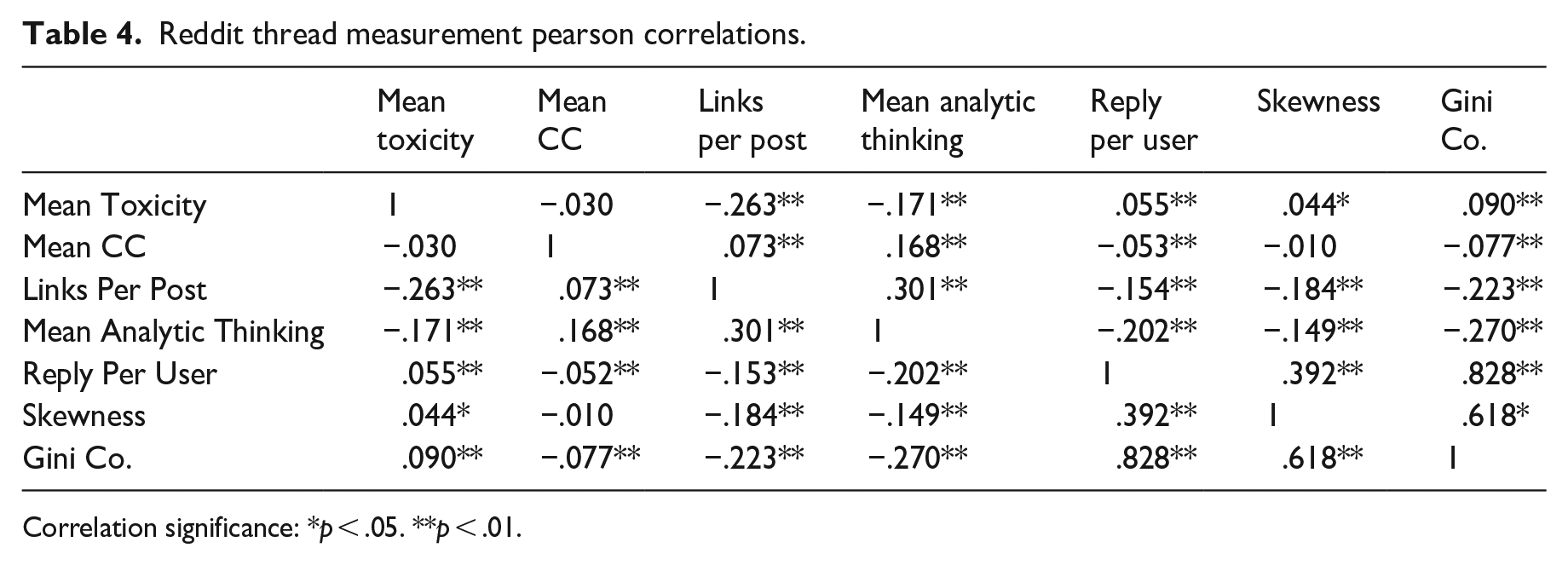

Rationality

We measure rationality via the number of links per post on each social media platform. Table 2 shows there are on average more links per post on Reddit (0.483) than on Twitter (0.351). Twitter threads have a mean LIWC2015 analytic thinking score of 70.02, while the corresponding score for Reddit is 58.76. As seen in Tables 3 and 4, on both Twitter and Reddit there is a negative Pearson correlation between links per post and mean toxicity, suggesting that as the number of links in a thread increases, toxicity declines. A positive Pearson correlation is observed between LIWC2015’s mean measures of analytic thinking and links per post, both on Twitter (0.381) and Reddit (0.301) threads. Conversely, a negative correlation between analytic thinking and replies per user is observed both on Twitter (−0.076) and Reddit (−0.202).
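The links-per-post measure can be sketched as follows. This is a minimal illustration, not the project's code: the regular expression used to detect URLs and the function name `links_per_post` are assumptions, and a production pipeline might instead rely on link metadata returned by each platform.

```python
import re

# Assumed URL pattern: anything starting with http:// or https:// up to whitespace.
URL_PATTERN = re.compile(r"https?://\S+")


def links_per_post(posts):
    """Return the mean number of links per post for one thread.

    `posts` is a list of post texts belonging to the same thread.
    """
    if not posts:
        return 0.0
    total_links = sum(len(URL_PATTERN.findall(text)) for text in posts)
    return total_links / len(posts)


# Hypothetical thread of three posts containing three links in total.
thread = [
    "See https://example.org/report and http://example.com/data",
    "I disagree with that reading.",
    "Source: https://example.net/article",
]
ratio = links_per_post(thread)
```

A count of this kind captures only the presence of links, not whether they are relevant or reputable.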

Table 2. Social media thread measurement mean values.

Table 3. Twitter thread measurement Pearson correlations.

Correlation significance: *p < .05. **p < .01.

Table 4. Reddit thread measurement Pearson correlations.

Correlation significance: *p < .05. **p < .01.

Interactivity

There are marginal observed differences between the number of replies per discussion thread participant on each platform, with Reddit featuring slightly more replies (1.512) than Twitter (1.485). On both Twitter and Reddit, we observe negative Pearson correlations between links per post and both of our equality measures (skewness and the Gini coefficient), which may indicate that as conversations are dominated by a smaller number of people, the number of links per post declines.
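The replies-per-participant figure reported above can be illustrated with a short Python sketch. The function name and input shape are hypothetical, and whether the thread's opening post counts as a reply is not specified here, so the sketch simply counts whatever entries it is given.

```python
def replies_per_participant(reply_authors):
    """Return the mean number of replies per distinct participant in a thread.

    `reply_authors` holds one author identifier per reply in the thread.
    """
    if not reply_authors:
        return 0.0
    return len(reply_authors) / len(set(reply_authors))


# Hypothetical thread: four replies written by three distinct users.
authors = ["user_a", "user_b", "user_a", "user_c"]
interactivity = replies_per_participant(authors)
```

A value close to 1 means most participants replied only once, while larger values indicate repeated back-and-forth by the same users.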

Equality

When measuring discussion equality, Reddit features higher skewness and Gini coefficient scores. Reddit has a skewness score of 2.224 compared with a score of 1.499 for Twitter. The Gini coefficient score over our data set’s Reddit threads is 0.173, compared with 0.125 on Twitter. These results show that a smaller number of people dominate discussion on the Reddit platform.
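To make the two equality measures concrete, the sketch below computes a Gini coefficient and a skewness value from the number of posts each participant contributed to a thread. The specific estimators are assumptions: the Gini coefficient uses the standard sorted-rank formula, and skewness uses the population (Fisher-Pearson) moment coefficient. The study's own script may use different variants.

```python
from statistics import mean


def gini(counts):
    """Gini coefficient of per-user post counts (0 = perfectly equal)."""
    xs = sorted(counts)
    n, total = len(xs), sum(xs)
    if n == 0 or total == 0:
        return 0.0
    # Sorted-rank formula: G = 2 * sum(i * x_i) / (n * sum(x)) - (n + 1) / n
    weighted = sum(rank * x for rank, x in enumerate(xs, start=1))
    return 2 * weighted / (n * total) - (n + 1) / n


def skewness(counts):
    """Population skewness (third standardized moment) of per-user post counts."""
    n = len(counts)
    if n == 0:
        return 0.0
    mu = mean(counts)
    m2 = sum((x - mu) ** 2 for x in counts) / n
    m3 = sum((x - mu) ** 3 for x in counts) / n
    return 0.0 if m2 == 0 else m3 / m2 ** 1.5


# Hypothetical thread: three users post once, one user posts five times.
posts_per_user = [1, 1, 1, 5]
thread_gini = gini(posts_per_user)
thread_skew = skewness(posts_per_user)
```

Both values rise as a few participants account for a larger share of a thread's posts, which is why higher scores are read as lower equality.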

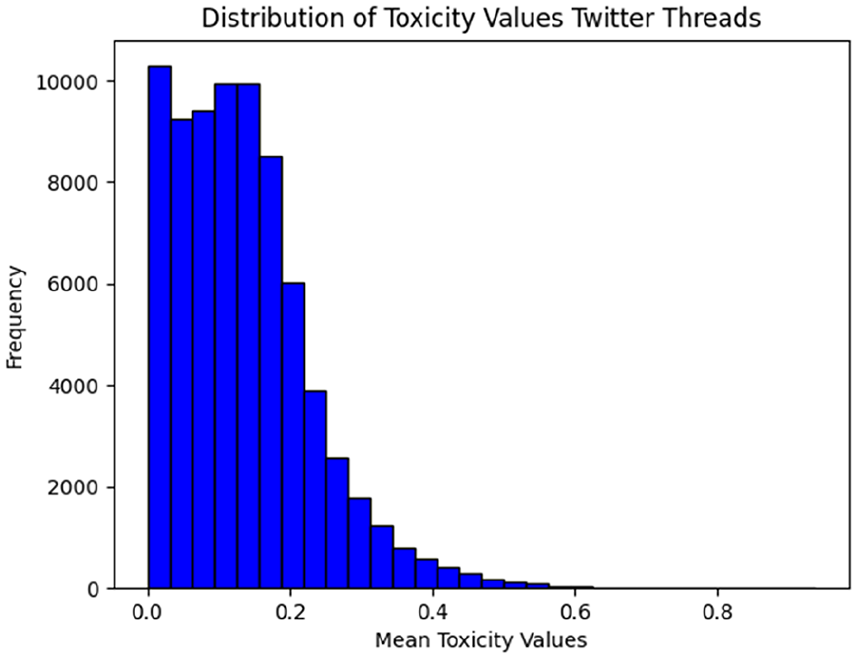

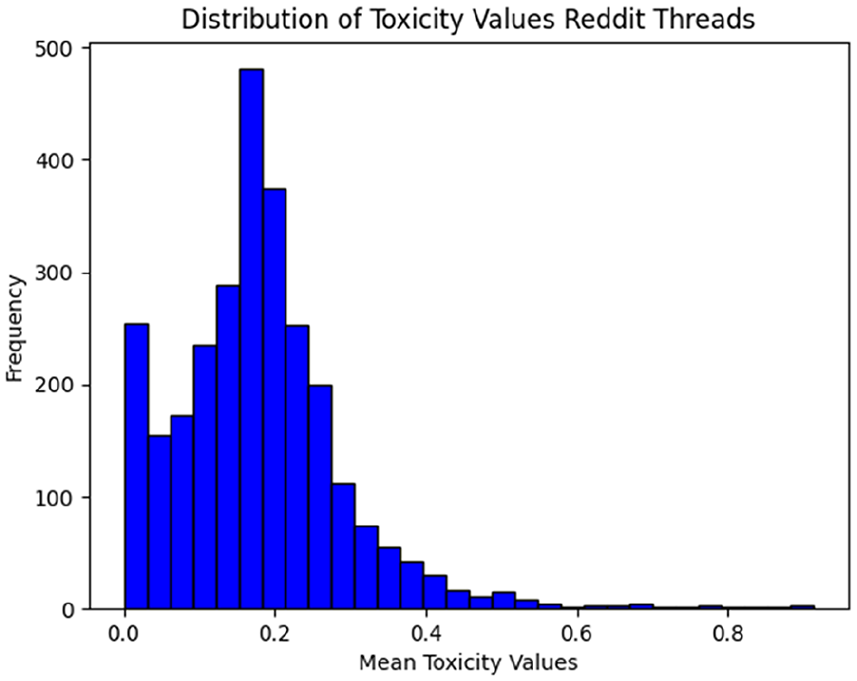

Toxicity

Toxicity scores are slightly higher on Reddit, with a value of 0.178 compared with Twitter’s score of 0.134, but neither platform features significant amounts of uncivil discussion. The distribution of mean toxicity values across Twitter and Reddit is shown in Figures 1 and 2. Average word count per post differs between the platforms, with Twitter averaging 19.5 words per post and Reddit averaging 44.4 words per post. Observing interrelations between words per post and our proposed variables, on Twitter we detect a Pearson correlation of 0.247 between words per post and mean toxicity within our conversation threads. This correlation is not observed on Reddit, where an increase in average word count is associated with a decrease in toxicity (Pearson correlation of −0.21). We also observe that the average word count is positively correlated with mean CC on Reddit, with a Pearson value of 0.154. To better understand how overall post volume on a specific subject affects notions of online deliberative discourse, we also calculated daily changes in mean toxicity and CC over our test period.

Figure 1. Distribution of mean toxicity values across Twitter threads.

Figure 2. Distribution of mean toxicity values across Reddit threads.

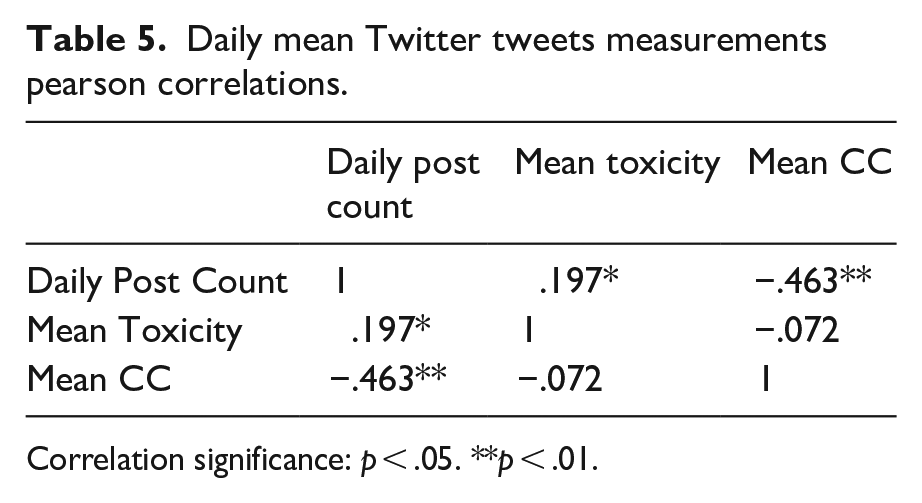

As seen in Table 5, on Twitter there is a positive Pearson correlation (0.197) between the number of posts in a day and the average toxicity of all tweets related to the convoy protests, suggesting that as post volume increases, so does post toxicity.
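The daily comparison described above can be sketched in Python as follows. This is an illustrative outline under stated assumptions: posts are represented as hypothetical `(day, toxicity)` pairs, and the Pearson coefficient is computed directly from its definition rather than with the statistical package used in the study.

```python
from collections import defaultdict
from math import sqrt
from statistics import mean


def pearson(xs, ys):
    """Pearson correlation coefficient; assumes neither series is constant."""
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = sqrt(sum((x - mx) ** 2 for x in xs))
    sy = sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)


def daily_volume_vs_toxicity(posts):
    """Correlate the number of posts per day with that day's mean toxicity.

    `posts` is an iterable of (day, toxicity_score) pairs.
    """
    by_day = defaultdict(list)
    for day, toxicity in posts:
        by_day[day].append(toxicity)
    days = sorted(by_day)
    volumes = [len(by_day[d]) for d in days]
    mean_toxicity = [mean(by_day[d]) for d in days]
    return pearson(volumes, mean_toxicity)


# Hypothetical data: busier days also have higher mean toxicity.
sample = [
    ("2022-02-01", 0.1),
    ("2022-02-02", 0.2), ("2022-02-02", 0.2),
    ("2022-02-03", 0.3), ("2022-02-03", 0.3), ("2022-02-03", 0.3),
]
r = daily_volume_vs_toxicity(sample)
```

The same grouping step could be reused for daily mean CC by swapping the toxicity score for a CC score in each pair.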

CC

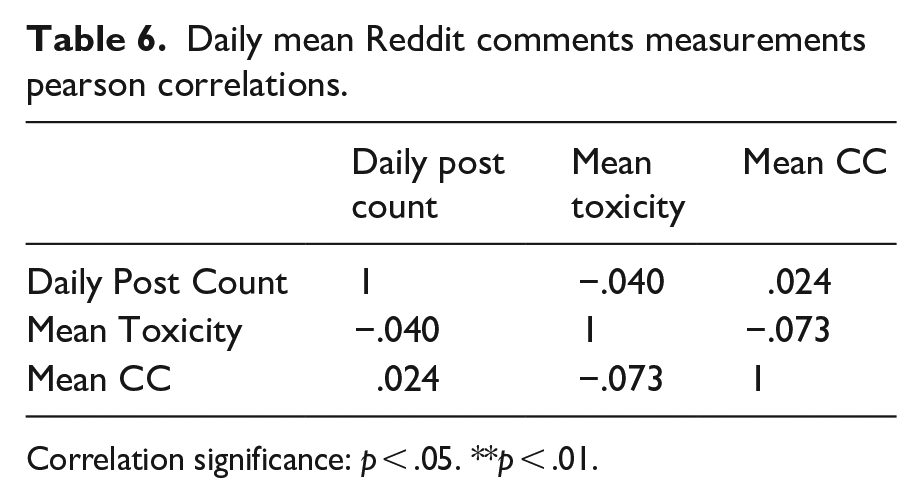

Reddit discussion threads have a higher average CC score of 0.177 compared with Twitter’s average of −0.083. On Twitter, we observe a negative correlation between daily post count and daily average CC (Table 5), indicating that as daily post count increases, CC declines. On Reddit, as shown in Table 6, the same pattern is not seen, and the correlations are not significant.

Table 5. Daily mean Twitter tweet measurement Pearson correlations.

Correlation significance: *p < .05. **p < .01.

Table 6. Daily mean Reddit comment measurement Pearson correlations.

Correlation significance: *p < .05. **p < .01.

Observations of correlations between CC and our measures of civility, rationality, interactivity, and equality are shown in Tables 3 and 4. As measures of toxicity increase, indicating a decrease in civility, we see a negative correlation with CC on Twitter (−0.0323) and Reddit (−0.030). Regarding our measures of rationality, we observe a negative correlation (−0.059) on Twitter between CC and links per post, and a positive correlation on Reddit (0.073). Our second measure of rationality, the LIWC2015 measure of analytic thinking, shows a positive correlation with CC on both Twitter (0.381) and Reddit (0.301). Our measure of interactivity, replies per post, displays little correlation with CC on Twitter (−0.008) and a negative correlation on Reddit (−0.052). Examining correlations between CC and our measures of equality, there is a positive correlation between skewness and CC on Twitter (0.020) and a non-significant correlation on Reddit. Our second measure of equality, the Gini coefficient, shows a non-significant correlation with CC on Twitter and a negative correlation on Reddit (−0.077).

Discussion

Social media platforms are deliberative spaces (Esau et al., 2017; Gonzalez-Bailon et al., 2010; Kelly et al., 2005; Kruse et al., 2018). Therefore, measuring the quality of that deliberation can provide important insight into how individuals discuss significant social issues online.

Our research examines the feasibility of using a specific set of computational analytic and linguistic calculations to determine notions of online deliberative discourse on social media platforms. To test the suitability of our methodological approach and of the variables extracted from our literature review, we analyzed social media discussions related to the convoy protests surrounding COVID-19 vaccine mandates and restrictions. These protests culminated in demonstrations in Ottawa, Canada, in February 2022 and spurred similar, smaller demonstrations across Canada and internationally.

Our case study features over 3 million separate pieces of Twitter and Reddit commentary. Analyzing discussions at this scale would be virtually impossible with manual coding methods alone. Sampling techniques could narrow this commentary down to a size that might be more suitable for manual coding, but at the cost of losing important elements of discussions of socially significant issues. Furthermore, manual coding methods potentially introduce subjectivity and bias into research results (Gaur & Kumar, 2018; Hsieh & Shannon, 2005; Mackieson et al., 2019).

While computational methods of measuring online deliberative discourse, particularly at a larger scale, can entail the use of less human labor, the approach is not without its challenges. The development and testing of our computational approach highlighted some drawbacks of such an approach.

A significant challenge with acquiring any large data set is ensuring the development of queries that result in a comprehensive and accurate representation of discussions on a specific topic. With over 3 million pieces of content to be analyzed, from thousands of social media threads, meaningful cleaning and validation of our data for topic relevance was virtually impossible, and our data set is bound to feature some content unrelated to the COVID-19 protests. Manual processing of smaller data sets could ensure topic relevance in a way that our computational approach, as it currently stands, cannot.

As social science researchers with intermediate programming skills, we often found ourselves reliant on pre-developed tools, such as Google Perspective or LIWC2015, for elements of our analysis. While there is significant use of both tools within academic research (Hosseini et al., 2017; Mittos et al., 2019; Moore et al., 2021; Suiter et al., 2021), we were still constrained by the limitations and functionalities of these tools. For example, toxicity scores were acquired via Google Perspective’s web-based API, which featured quotas on the amount of content we could analyze within a certain time period. These quotas lengthened the time required to process our data sets, and complex multi-threading Python programming techniques had to be used to lower projected analysis time for our data set from over a month to a few days.
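The quota-constrained, multi-threaded scoring described above can be sketched as a thread pool whose workers share a rate limiter. This is an illustrative pattern, not the project's implementation: `score_fn` is a hypothetical placeholder for a function that would send one piece of text to the toxicity service and return its score, and the `per_second` and `workers` values are assumptions rather than actual Perspective quotas.

```python
import threading
import time
from concurrent.futures import ThreadPoolExecutor


class RateLimiter:
    """Hand out evenly spaced time slots so calls stay under a per-second quota."""

    def __init__(self, per_second):
        self._interval = 1.0 / per_second
        self._lock = threading.Lock()
        self._next_slot = time.monotonic()

    def wait(self):
        # Reserve the next free slot under the lock, then sleep outside it
        # so other threads can reserve their own slots in the meantime.
        with self._lock:
            now = time.monotonic()
            slot = max(self._next_slot, now)
            self._next_slot = slot + self._interval
        time.sleep(max(0.0, slot - now))


def score_all(texts, score_fn, per_second=10, workers=8):
    """Apply `score_fn` to every text concurrently while respecting the quota.

    Results are returned in the same order as `texts`.
    """
    limiter = RateLimiter(per_second)

    def task(text):
        limiter.wait()
        return score_fn(text)

    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(task, texts))


# Stand-in scorer for demonstration only; a real one would call the API.
demo_scores = score_all(["a", "bb", "ccc"], len, per_second=1000, workers=3)
```

Threads help here because each request spends most of its time waiting on the network, so several requests can be in flight at once while the limiter keeps the overall request rate within the quota.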

Our computational approach to online deliberative discourse analysis is also bound to miss some of the nuance of human speech that could indicate quality deliberative discourse, features that manual coders could observe. The sharing of links has been shown to serve a deliberative purpose in online spaces (Jakob, 2020), and we operationalized the measurement of rationality in our data set by calculating the number of links present per post in a thread, but our computational approach has no way to measure the quality of those links. Are the links on topic? Do these links support the argument a conversation participant is presenting? Do the links come from reputable sources? These are all questions that our current computational approach does not answer, but which could be answered via human coding.

Addressing RQ1, the correlations we observed between CC and our approaches to computationally measuring civility, rationality, interactivity, and equality reveal that there remains room to refine our approaches. With the exception of civility (as measured through toxicity) and rationality (as measured through LIWC2015’s analytic thinking scores), our hypothesized correlations between CC and these measures across platforms were not observed. Despite these challenges, this research demonstrates potential methods for computational analysis of online deliberative discourse. With further development of our specifically identified variables, our approaches to measuring rationality, interactivity, equality, civility, and CC could serve as a viable set of tools to analyze the quality of online discourse. CC has been presented as an effective tool to measure deliberative quality (Brundidge et al., 2014; Moore et al., 2021). A significant contribution of this article is to illustrate how one can use a linguistically calculated value like CC alongside more common analytically based values to provide a fuller overview of the deliberative characteristics of an online conversation. Our hope is that by developing a reproducible method of analyzing deliberative discourse, these measures will be further refined and validated by other researchers on other data sets.

Addressing notions of deliberative affordances of social media platforms, our work also reveals deliberative differences between the Twitter and Reddit platforms addressing RQ2. While the deliberative aspects of Twitter have been well explored (Balcells & Padró-Solanet, 2020; Brubaker et al., 2021; Jaidka et al., 2019; Jakob, 2020; Oz et al., 2018), our work brings attention to the deliberative characteristics of the Reddit social media platform.

Our findings corroborate the work of G. M. Chen (2017) and Oz et al. (2018), that the affordance of allowing longer post lengths affects notions of online deliberation, as we observed higher levels of CC within our Reddit data set, which featured on average longer post lengths than Twitter. While longer posts were associated with higher levels of toxicity on Twitter, we did not observe the same phenomenon on Reddit, where toxicity levels declined as post length increased. We also found that on both Twitter and Reddit, sharing of links within a thread was associated with less overall toxicity, supporting elements of Jakob’s (2020) analysis that link sharing serves an important deliberative role.

This work also provides further insight into the impact that larger platform discourse can have on deliberative elements, and how this impact differs between Twitter and Reddit. Our analysis shows that on Twitter the number of daily posts and the level of toxicity in a day are significantly correlated, suggesting that as the number of posts increases, incivility also increases. We also observe that as posts on a topic increase, CC declines. At least with Twitter, an increase in activity on a socially significant topic appears to have a negative effect on these key elements of online deliberative discourse. These same correlations did not exist on Reddit, which could be an indication that overall platform activity on a topic has less of an impact there. We hypothesize, as a worthy topic of further research, that Reddit’s decentralized subreddit system is less affected by overall platform activity than Twitter’s more centralized feed. Reddit on average also shows higher levels of CC, which could indicate that Reddit users are more open to new ideas and are less entrenched in their value systems than Twitter users.

While our study provides insight into development of computational methods of online deliberative discourse analysis, our approach is not without its limitations. To further confirm our findings on the deliberative impact of link sharing and post count, and the overall deliberative differences between Twitter and Reddit, our methodology should be tested on other socially significant topics. The study of other topics on the platforms would help to better understand whether our findings are specific to discussions on COVID-19 vaccines and restrictions, or if they are more broadly applicable to socially significant issues on social media platforms. Having five separate deliberative features also makes it challenging to compare different topics with one another, and there could be value in developing a composite measure that would encapsulate these features into one deliberative score.

Conclusion

This work provides insight into the deliberative characteristics of social media platforms and outlines a potential set of measures that can automate the measurement of deliberative quality. With further development and validation, the measures and our methodological approach can serve as a valuable tool for researchers to explore the deliberative characteristics of social media discourse on a large scale. Building on the research presented in this article, future work could further validate our current approach via more rigorous statistical analysis and by applying our methodological choices to additional case studies of social media discussions surrounding complex social issues.

Employing linguistic-based analyses such as CC alongside analytic-based approaches to measuring rationality, interactivity, and equality, together with a machine-learning-derived analysis of toxicity, illustrates how various computational approaches can be combined to analyze large data sets.

Our research has also confirmed that social media platforms are not a monolith, and that the affordances of individual platforms affect how individuals deliberate within them. We observe clear deliberative distinctions between Twitter and Reddit in our work, differences that are ripe for further study.

As our case study of the 2022 convoy protests has shown, deliberative characteristics of social media platforms can reveal themselves when we embrace methods that can analyze social media commentary surrounding socially significant issues.

Footnotes

Authors’ note

Frauke Zeller is now affiliated to University of Edinburgh, UK.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Social Sciences and Humanities Research Council.