Abstract

In light of concerns over the spread of so-called “fake news” on social media, organizations and policymakers have increasingly sought to identify tools that can be used to stem the dissemination of misinformation and disinformation. Some evidence suggests that brief media literacy interventions might serve as an important means of helping social media users discern between “real” and “fake” news headlines. However, empirical research indicates that these effects tend to be relatively modest in magnitude. To that end, this study explored the degree to which epistemic self-efficacy beliefs may be able to positively “boost” media literacy interventions. Specifically, we used a series of 2 × 2 experiments to test the contention that the combinatory effects of epistemic self-efficacy and media literacy interventions will better equip users with the resources necessary to discern between disinformation and objectively produced news content. The results failed to indicate the presence of combinatory effects. We did, however, find initial evidence that epistemic self-efficacy beliefs may be importantly associated with the ability to properly classify both fake and mainstream news content.

Since the 2016 US presidential election, terms such as “fake news” and “disinformation” have become staples in the lexicon of Americans across the political ideology continuum. While a societal problem all around the globe, the so-called infodemic’s deleterious impact is perhaps most acutely felt in the United States, where “Americans rate it as a larger problem than racism, climate change, or terrorism” (Graham, 2019). Former President Barack Obama called fake news a fundamental threat to democracy, while media experts believe “bogus news stories appearing online and on social media had an even greater reach in the final months of the presidential campaign than articles by mainstream news organizations” (Higgins et al., 2016). In fact, in discourse published after the election of 2016, mainstream journalists, more than any other factor, blamed fake news for upending the polls and earning Donald Trump the presidency (McDevitt & Ferrucci, 2018). This sentiment makes sense, anecdotally, since “from Pope Francis endorsing then republican presidential candidate Donald Trump, to a woman arrested for defecating on her boss’ desk after she won the lottery, fake news stories have engaged—and fooled—millions of readers” (Tandoc, Lim, & Ling, 2018, p. 137).

It should come as no revelation, then, that fake news, a term “as a meaningful descriptor (that) is fraught with issues,” often invokes and summarizes broader societal feelings of dissatisfaction (Hopp et al., 2020a, p. 376). Such dissatisfaction stems from and is aimed at any number of targets, including, but perhaps not limited to, the so-called mainstream news media, elected officials, media personalities, and reality itself. In light of the ambiguity and political frames that surround and constitute colloquial, applied, and formal understandings of fake news, it should be little surprise that efforts to date to combat mis- and disinformation have yielded inconsistent results (Span, 2020). For example, in an effort to maintain their recent historical status as arbiters of truth and facts, mainstream journalistic entities have (often in partnership with third parties such as platform companies and literacy organizations that often use regular citizens) sought to deploy fact-checks as a means of correcting reader misperceptions (Graves, 2016). However, such efforts have yielded both limited and, in some cases, mixed results (e.g., Dias & Sippitt, 2020). For journalists, fact-checking may be the only logical defense against fake news since “journalists have little ability to proactively fight fake news” (Vargo et al., 2018, p. 2029). Organizations on the periphery of journalism, ones staffed by nonjournalists, have by contrast seemingly made media literacy endeavors a priority in the struggle surrounding misinformation (Span, 2020). Initial evidence suggests that brief media literacy interventions might serve as an important means of helping social media users discern between “real” and “fake” news headlines (Guess et al., 2020). However, these effects are relatively modest and have also introduced a “small but measurable negative effect on the perceived accuracy of mainstream news stories” (Guess et al., 2020, p. 15542).

In light of the varied complexities surrounding the suppression and refutation of the spread of fake news, this study is informed by several considerations. First, mainstream journalistic organizations are limited in their ability to combat fake news. They are frequently mistrusted and often lack epistemic standing with the groups most likely to share incorrect political and social information (e.g., Hopp et al., 2020b). Second, issue-by-issue refutation of factually incorrect information (e.g., fact-checking) is inefficient and cannot feasibly address all instances of mis- and disinformation. Third, and relatedly, extant research suggests that brief media literacy-based interventions may present a

Literature review

Fake news

So-called “fake news” is not a novel concept; both citizens and scholars have discussed it for more than a century (Waisbord, 2018). People have labeled the phenomenon with different names, but the concept of misinformation or disinformation is a fundamental resident of the information ecosystem. Determining a clear definition for fake news is difficult because, over time, scholars have described the concept in numerous manners (Hopp & Ferrucci, 2020). For example, work prior to 2016 often conflates fake news with satire such as the television program

The overwhelming majority of disinformation is disseminated and then amplified through social media platforms (Fourney et al., 2017; Hopp et al., 2020b). While that fact remains undisputed, more recent work illustrates that those spreading fake news through social media tend to principally be people who self-identify as ideologically extreme and lack trust in the mainstream media (Ferrucci et al., 2020). Yet, while fake news is disseminated outside of the news media, once published, its effects can be seen in the news. Research empirically illustrates that fake news can have an agenda-setting effect on certain types of media organizations, particularly ideologically focused ones: “as partisan media adopted fake news agendas, other media began to resist” them (Vargo et al., 2018, p. 2044). This agenda-setting effect is especially important because social media platforms place very few restrictions on posting and make “it possible for an individual to rapidly share misleading information with large populations, without the overheads associated with traditional broadcast media such as newsprint or television” (Fourney et al., 2017, p. 1). While mainstream media still holds authority over the mainstream public sphere, where much of the public receives its information, the prolific adoption of social media platforms and an increasingly vibrant, ideologically extreme countermedia ecosystem provide the proverbial oxygen for this type of misinformation to reach significant numbers of like-minded audience members (Hopp & Ferrucci, 2020; Carlson, 2017). Journalists agree with this sentiment, as they perceive fake news as a problem precipitated by a combination of social media platforms, audience ignorance, and the current political environment (Tandoc et al., 2019). In fact, journalists, along with journalism studies and political communication scholars, almost unanimously agree that fake news is not something that journalism itself can remedy. But the problem continues to erode the public’s trust in journalism as an institution (Ferrucci, 2017).

When determining whether a piece of information is fake news, the public relies on a myriad of informational assessment strategies and heuristic behaviors. These strategies are influenced by the constellation of ideological beliefs and media consumption habits that together shape an individual’s relationship with the surrounding information ecosystem (Tandoc, Ling, et al., 2018). Specifically, “political allegiances can affect how people process misinformation and fact checks” (Dias & Sippitt, 2020, p. 611). Studies also show that fact-checking can have a short-term effect on people, but, essentially, finding out one piece of information is incorrect does not have more than a tenuous connection to future instances (e.g., Dias & Sippitt, 2020). Therefore, many news organizations and nonprofits on the periphery of journalism have set up their own fact-checking initiatives as a form of fighting the spread of disinformation (Graves, 2016). The other unearthed difficulty surrounding journalism or peripheral institutions acting as a fact-checker is that “fact-checking websites are not aggressively refuting certain media. Indeed, due largely to resource-driven constraints, early fact-checking conventions were to correct a claim and move on” (Vargo et al., 2018, p. 2044). This is why many organizations have turned to combining fact-checking activities with media literacy initiatives (Haigh et al., 2018; Span, 2020).

Media literacy

The concept of media literacy has proved difficult for communication scholars to define and measure (Livingstone, 2004). When defining media literacy, scholars typically include various subsets of literacy, such as information literacy, digital literacy, critical literacy, and news literacy; together, these subsets make up media literacy, which describes the ability to access, interpret, analyze, and evaluate media across forms (Hobbs, 2008). To accomplish these goals, many have argued for an understanding of the conditions under which particular pieces of information are created, in that “audiences can be better equipped to access, evaluate, analyze, and create news media products if they have a more complete understanding of the conditions in which (they were) produced” (Ashley et al., 2013, p. 7). To understand those conditions, a citizen must be able to distinguish the information designed to be disseminated (Livingstone, 2004). Although media literacy has long been difficult to define and measure, research illustrates that citizens gain numerous long-term benefits from becoming media literate (Mason et al., 2018). And, perhaps more importantly, news media literacy can only have positive effects on democracy (Ashley, 2019).

Scholarly work shows that gains in media literacy can improve writing, critical thinking, and comprehension (Ashley et al., 2013). This work often occurs across disciplines as fields such as communication, political science, psychology, mass communication, information science, sociology, rhetoric, and others have all published significant research in the area (Vraga et al., 2021). If citizens are provided with tools for attaining media literacy, scholars argue that this will “empower people with the knowledge, skills, and motivation to navigate ever-changing media environments” (Vraga et al., 2021, p. 2).

For obvious reasons, then, a significant body of work has hypothesized that media literacy interventions may have a positive effect in slowing the spread of fake news (e.g., Chan, 2022; Guess et al., 2020; Hameleers, 2022; Moore & Hancock, 2022; Vraga et al., 2022). For example, scholars found that adults with higher levels of media literacy believed conspiracy theories less frequently, even if those conspiracy theories aligned with their ideologies (Craft et al., 2017). This is especially important because other work contends that the strength of ideology significantly impacts the amount of political expression on platforms (Ferrucci et al., 2020). Therefore, media literacy could prevent the ideologically extreme and older individuals, the groups most likely to spread and believe fake news, from doing so (Span, 2020). When testing media literacy’s effect on fake news, Jones-Jang et al. (2021) found that a media literacy intervention improved the ability of participants to detect fake news, and others also contend that “relatively short, scalable interventions could be effective in fighting misinformation around the world” (Guess et al., 2020, p. 15537).

Despite the potential of media literacy to help ameliorate the negative individual and society-wide effects of belief in mis-and-disinformation, the magnitude of the relationship between media literacy and informational classification outcomes has been modest in nature. For instance, in Guess et al. (2020), the authors used an experimental approach to test the effects of a news media literacy intervention on the perceived accuracy of false news headlines. The results suggested that exposure to the literacy intervention was, indeed, associated with a decrease in belief in false news headlines; however, these decreases in perceived accuracy amounted to less than 0.2 points on a 4-point scale (where higher scores were associated with higher levels of belief in false information). Likewise, Moore and Hancock (2022) experimentally exposed a group of older adults to the

Taken as a whole, these studies (and, other similar ones, such as Adjin-Tettey, 2022 and Jones-Jang et al., 2021) indicate that media and related information literacies may have persistent but

Self-efficacy

Self-efficacy describes, generally, a person’s self-perceived ability to complete a specific task; it has been shown to have a significant impact on both a person’s motivation and capability to complete the task (Bandura, 1986). More specifically, the higher someone’s self-efficacy around a specific task, the more likely they are to accomplish it (Bandura, 1982). Several factors explain the relationship between self-efficacy and information evaluation outcomes. Self-efficacy stimulates deep cognitive processing of information, and perhaps especially so in situations marked by uncertainty, ambiguity, and challenging environmental demands (Bandura, 1994). Self-efficacy also functions on a motivational level such that self-efficacious individuals are likely to form and pursue specified goals and, therein, demonstrate enhanced resilience (Bandura, 1982). Finally, self-efficacy has important implications for emotional regulation such that those high in the construct are unlikely to assign negative emotions (e.g., anxiety, fear) to target behaviors (Bandura, 1989) and, therefore, are more likely to engage with challenging obstacles in a persistent manner (Bandura, 1994).

Prior work has engaged specifically with the concept of epistemic self-efficacy, which can be broadly construed as self-perceptions related to the factual adjudication of encountered truth claims (e.g., Pingree, 2011), particularly in informational contexts characterized by multiple and contradictory positions. Recent work has shown that confidence in informational assessment capabilities is positively associated with mis- and disinformation diagnosis. Hopp (2022), for instance, found that high levels of confidence in one’s ability to spot online fake news were robustly associated with the ability to accurately classify both fake news and mainstream news articles on Facebook. Specifically, this work indicated that those with heightened epistemic self-efficacy perceptions were significantly (1) more likely to engage in informational credibility sorting tasks and (2) better able to discern between mainstream news and disinformation. Loy et al. (2020) found that epistemic self-efficacy on the topic of climate change positively supported the selection of and exposure to accurate information on climate change. In another study concerning the identification of fake news about corporate brands, Chen and Cheng (2020) found that higher levels of epistemic self-efficacy, combined with trust in news media, led to higher levels of diagnosing misinformation about, in this particular case, Dasani water.

In light of both general theorization around self-efficacy’s ability to motivate, regulate, and support behavioral outcomes and domain-specific theorization on the critical utility of epistemic self-efficacy’s ability to aid the accurate adjudication of factual claims, this study suggests that epistemic self-efficacy might positively amplify the previously observed relationship between media literacy interventions and the ability to properly diagnose online misinformation. In other words, when information evaluators have heightened feelings of epistemic self-efficacy, we predict that they may

In addition to Hypothesis 1, this study was also interested in the relationship between fake news-related self-efficacy beliefs, media literacy levels, and the ability to assess mainstream news content. Notably, in Guess et al.’s (2020) study on the relationship between media literacy and online information classification, the authors found that while literacy-based interventions diminish people’s belief in fake news content, “increased skepticism of false news headlines may come at the expense of decreased belief in mainstream news headlines—the media literacy intervention [used in the study] reduced the perceived accuracy of these headlines in both the US and India online surveys” (p. 15541).

Given our foregoing contentions that self-efficacy beliefs related to informational accuracy assessment can motivate enhanced attention to information attributes and credibility cues, we wondered if the combination of heightened media literacy and heightened information-relevant self-efficacy levels might not together result in an enhancement of the ability to discern between fake and mainstream news.

To empirically assess the foregoing, two experimental studies were conducted. By using multiple discrete studies, we were able to assess the posited hypothesis (and the underlying interventions) across contextual domains (here, general political information and COVID-related information). In addition, the use of multiple experiments allowed for the possibility of internal replication of any observed statistical effects. These studies are discussed in complete detail below.

Experiment 1

Experiment 1 procedure and materials

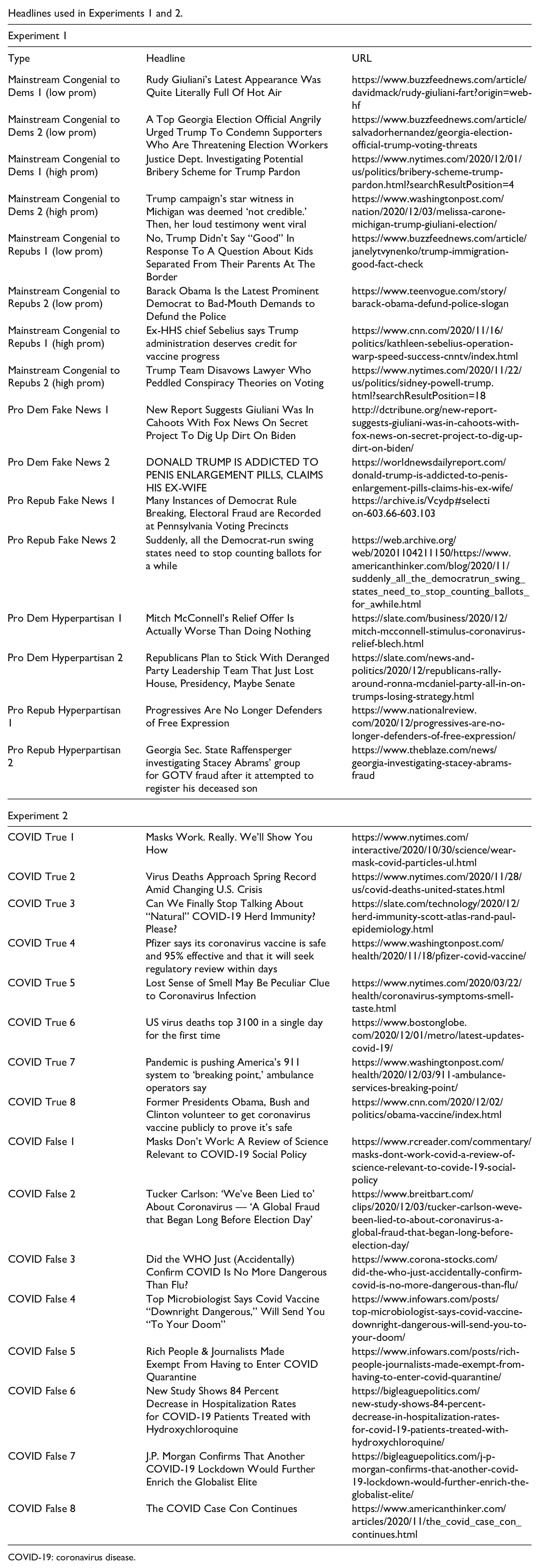

This study employed a 2 × 2 randomized factorial experiment. For the first set of conditions, respondents were randomly exposed to a brief epistemic self-efficacy intervention (those in the control condition were not asked to evaluate any text). Development of the intervention was guided by the following concerns: First, digital and social media interventions need to be brief. Research has shown that social media users tend to engage with individual content items briefly (e.g., Vraga et al., 2016). As such, our intervention was quite short (130 words) and meant to be read in around 30 s (research indicates that typical adult reading speeds are just under 250 words per minute; Brysbaert, 2019). Second, given that social media platforms are used by a wide array of people in various life stages, the intervention needed to be readily understandable. At the same time, online mis- and disinformation is a complex issue, and the trivialized presentation of the issue may be unappealing to some users or trigger third-person effects. To that end, we designed our intervention to be comprehensible by most American adults (Flesch-Kincaid grade level reading score = 13.1). Third, in terms of substantive self-efficacy attributes, the intervention was designed to provide positive verbal persuasion and physiological feedback/management and to stimulate feelings of vicarious experience. These factors are, according to Bandura (1982, 1986, 1989), important sources of self-efficacy. Because the intervention was specifically designed to be brief, it did not directly involve text or tasks designed to stimulate mastery experience; instead, our expectation was that initial feelings of self-efficacy would, over time, stimulate mastery experiences, therefore further enhancing feelings of fake news-related self-efficacy. Fourth, prior work on self-efficacy (e.g., Bandura, 1986) clearly indicates that efficacy levels are domain- and behavior-specific. As such, in this case, we focused specifically and narrowly on the epistemic issue of fake news on social media (see Note 1). In the second portion of the experiment, participants were randomly exposed to a brief media literacy intervention taken directly from Guess et al. (2020). Those in the nonexperimental media literacy condition did not evaluate any text. After viewing the manipulations, participants were then asked to assess and evaluate the credibility of 16 informational headlines (see below). The 16 headlines evaluated by participants were shown in random order to prevent ordering effects. Because we were predominantly interested in the assessment of the fake and mainstream news headlines, the hyperpartisan headlines were used as a distracting element in the present experiment. At the end of the questionnaire document, participants reviewed a disclosure statement that identified the fake news headlines and subsequently provided corrective information. All processes were approved by our institutional review board prior to study deployment.
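The procedure above can be illustrated with a minimal sketch. It assumes simple (unblocked) independent randomization of the two factors and a fresh per-participant shuffle of the 16 headlines; the text does not report the exact randomization mechanism, so the function names, the seed, and the headline identifiers are hypothetical. The last helper reproduces the reading-time arithmetic (130 words at 250 words per minute).

```python
import random


def assign_condition(rng):
    """Independently randomize each factor (1 = saw the intervention).

    Assumption: simple randomization; the study may have used another scheme.
    """
    return {
        "self_efficacy": rng.randint(0, 1),
        "media_literacy": rng.randint(0, 1),
    }


def headline_order(headline_ids, rng):
    """Return a new random ordering of the headlines for one participant."""
    order = list(headline_ids)
    rng.shuffle(order)
    return order


def reading_seconds(word_count, words_per_minute):
    """Expected reading time in seconds at a constant reading speed."""
    return word_count / words_per_minute * 60


rng = random.Random(2020)  # hypothetical seed, used only for reproducibility
participant = assign_condition(rng)
order = headline_order(range(1, 17), rng)  # hypothetical headline IDs 1..16
seconds = reading_seconds(130, 250)  # roughly half a minute
```

Randomizing the two factors independently yields the four cells of the 2 × 2 design, and shuffling per participant spreads any position effects evenly across headlines.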

Experiment 1 sample

The sample was recruited from Amazon’s Mechanical Turk population. To qualify for the study, participants needed to self-report being 18 or older, a US citizen, and a current user of Facebook. The CloudResearch platform (https://www.cloudresearch.com/) was employed to help facilitate data quality. Specifically, CloudResearch allowed for the blocking of low-quality participants (i.e., participants who were previously observed providing inattentive responses or exhibiting bot-like response patterns) and of participants who had participated in prior, similar research projects designed by the researchers, the assessment of demographic consistency factors (i.e., does the participant consistently provide the same demographic profile information?), and the calculation of completion and bounce rates (here, 87% and 6%, respectively). Participants were compensated $0.85 for their participation (median completion time = 410 s), a compensation rate well above the median compensation rates observed on the platform (e.g., Hara et al., 2017). A total of 1011 responses were collected. Data were gathered on December 20 and 21, 2020. Removing those who “sped” through the questionnaire document (in this case, those who completed the questionnaire in less than 150 s) and those who provided incomplete data on the variables of interest resulted in an analytic sample size of 969. In all, 25.8% of the sample did not see either the media literacy or self-efficacy intervention, 22.7% saw only the media literacy intervention, 25.4% saw only the epistemic self-efficacy intervention, and 26.1% saw both the media literacy and epistemic self-efficacy interventions.
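The two exclusion rules described above (completion in under 150 s, or missing data on a variable of interest) can be sketched as a simple filter, followed by the share of respondents in each experimental cell. The field names and the toy records are hypothetical; only the 150 s threshold comes from the text.

```python
MIN_SECONDS = 150  # speeding threshold reported in the text


def clean_responses(responses, required_fields):
    """Drop speeders and rows missing any variable of interest."""
    kept = []
    for row in responses:
        if row["duration_s"] < MIN_SECONDS:
            continue  # completed the questionnaire too quickly
        if any(row.get(field) is None for field in required_fields):
            continue  # incomplete data on a variable of interest
        kept.append(row)
    return kept


def condition_shares(rows):
    """Percentage of rows in each (self_efficacy, media_literacy) cell."""
    counts = {}
    for row in rows:
        cell = (row["self_efficacy"], row["media_literacy"])
        counts[cell] = counts.get(cell, 0) + 1
    return {cell: 100 * n / len(rows) for cell, n in counts.items()}


# Hypothetical toy records, not study data.
raw = [
    {"duration_s": 410, "self_efficacy": 1, "media_literacy": 0, "fake_accuracy": 1.5},
    {"duration_s": 120, "self_efficacy": 0, "media_literacy": 0, "fake_accuracy": 2.0},
    {"duration_s": 300, "self_efficacy": 0, "media_literacy": 1, "fake_accuracy": None},
    {"duration_s": 365, "self_efficacy": 0, "media_literacy": 1, "fake_accuracy": 1.8},
]
clean = clean_responses(raw, ["fake_accuracy"])
shares = condition_shares(clean)
```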

Experiment 1 measures

Information accuracy assessments

Consistent with Chan (2022), Guess et al. (2020), Hopp (2022), Moore and Hancock (2022), and Van Duyn and Collier (2019), we asked participants to assess a series of informational headlines. Each message contained a readily digestible claim and sought to mimic the types of information that users are typically exposed to when using social and digital media platforms. The advantage of the headline approach is that it allows researchers to make sense of informational classification behaviors across a variety of different claims and contexts. Participants were shown four mainstream news article headlines congenial to Democrats (two from high-prominence news sources and two from low-prominence news sources), four mainstream news article headlines congenial to Republicans (again, two from high-prominence news sources and two from low-prominence news sources), two hyperpartisan news headlines congenial to Democrats, two hyperpartisan news headlines congenial to Republicans, two fake news article headlines congenial to Democrats, and two fake news article headlines congenial to Republicans. Granular operational definitions of

Fake news self-efficacy

Fake news self-efficacy was measured for the purposes of assessing the extent to which the epistemic self-efficacy intervention was capable of producing identifiable self-efficacy gains in the primary epistemic domain of interest to this study. In other words, fake news self-efficacy is a localized factor that theory suggests should be produced by more general epistemic self-efficacy interventions (e.g., Hopp, 2022). Three items, all taken from Hopp (2022), were used to create a self-reported measure of fake news self-efficacy:

Descriptive variables

Participants were asked to provide their age in years (M = 38.85, SD = 12.90), their biological sex (56.2% female), their race (70.0% white), their average annual income (1 = less than $25,000 – 9 = more than $200,001; median = “between $50,001 and $75,000 annually,” M = 3.22, SD = 1.80), their highest level of educational attainment (1 = did not complete high school – 6 = postgraduate degree; median = “4-year degree,” M = 4.34, SD = 1.27), and their degree of political interest (1–7, with high scores indicating higher levels of political interest; median = 5, M = 4.69, SD = 1.61). We also assessed political knowledge by asking respondents to answer closed-ended questions pertaining to the length of a Senate term, the number of senators from each state, and the name of the current US secretary of state. These items were coded for correctness (0 = correct answer not provided, 1 = correct answer provided) and collapsed into a single additive measure (M = 2.05, SD = 1.00). A number of political affiliation factors were assessed, including political ideology (1 = very liberal, 11 = very conservative; median = 5, M = 5.33, SD = 3.09), political party identification (46.6% Democrat, 26.0% Republican, 27.3% independent/other parties), and 2020 vote choice (27.3% voted for Donald Trump). Finally, a number of media consumption variables were assessed, including news consumption frequency (1 = read the news very infrequently, 7 = read the news very frequently; median = 4, M = 4.02, SD = 1.96), Facebook usage intensity (“How frequently do you use Facebook?,” “How frequently do you post content using your Facebook account?,” and “Would you be upset if you could no longer access your Facebook account?”; all items on seven-point scales where higher values represented higher levels of usage/attachment; M = 4.16, SD = 1.60, α = .79), and the use of social media to read about political issues (1 = very infrequently, 7 = very frequently; median = 4, M = 4.19, SD = 1.82).
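Two of the scoring steps described above lend themselves to a brief sketch: the additive political knowledge index (the sum of items coded 0/1) and the internal-consistency coefficient (Cronbach's α) reported for the multi-item Facebook usage intensity scale. The item responses below are hypothetical and are not study data; the α formula is the standard one based on item variances and the variance of the summed scale.

```python
from statistics import variance


def knowledge_score(item_correct):
    """Additive index: the number of items coded 1 (correct answer provided)."""
    return sum(item_correct)


def cronbach_alpha(rows):
    """Cronbach's alpha; `rows` holds one list of item scores per respondent."""
    k = len(rows[0])
    item_vars = [variance([row[i] for row in rows]) for i in range(k)]
    total_var = variance([sum(row) for row in rows])
    return k / (k - 1) * (1 - sum(item_vars) / total_var)


# Hypothetical responses to three seven-point items from five respondents.
facebook_items = [
    [7, 6, 7],
    [2, 1, 3],
    [4, 4, 5],
    [5, 6, 4],
    [1, 2, 2],
]
alpha = cronbach_alpha(facebook_items)
score = knowledge_score([1, 0, 1])  # two of three items answered correctly
```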

Experiment 1 results

An ordinary least-squares (OLS) regression model was used to assess Hypothesis 1. This model regressed the fake news accuracy perception variable on the variables describing the epistemic self-efficacy intervention, the media literacy intervention, and the interaction term composed of the two interventions. Here, we observed a statistically significant parameter estimate for the epistemic self-efficacy intervention (

Next, to address Research Question 1, an OLS model assessing the relationship between the experimental treatments and the mainstream news article headline accuracy ratings was estimated. We failed to find significant associations between the criterion variable and any of the experimental treatment variables (epistemic self-efficacy intervention:
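The model specification used in this section, an outcome regressed on two treatment indicators and their product, can be sketched as follows. This is a minimal illustration under stated assumptions: the treatments are dummy coded (0 = control, 1 = intervention), which the text does not confirm; the data are hypothetical; and standard errors and significance tests are omitted. The helper names are invented for the example.

```python
def solve(a, b):
    """Solve the linear system a @ x = b by Gauss-Jordan elimination."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        # Partial pivoting: move the largest remaining entry onto the diagonal.
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                m[r] = [x - factor * y for x, y in zip(m[r], m[col])]
    return [m[i][n] / m[i][i] for i in range(n)]


def ols_interaction(efficacy, literacy, y):
    """Fit y = b0 + b1*efficacy + b2*literacy + b3*(efficacy*literacy)."""
    design = [[1.0, e, l, e * l] for e, l in zip(efficacy, literacy)]
    xtx = [[sum(r[i] * r[j] for r in design) for j in range(4)] for i in range(4)]
    xty = [sum(r[i] * yi for r, yi in zip(design, y)) for i in range(4)]
    return solve(xtx, xty)  # normal equations: (X'X) b = X'y


# Hypothetical ratings on the 1-4 accuracy scale, two respondents per cell.
efficacy = [0, 0, 1, 1, 0, 0, 1, 1]
literacy = [0, 1, 0, 1, 0, 1, 0, 1]
ratings = [2.0, 1.8, 1.6, 1.5, 1.8, 1.6, 1.4, 1.3]
b0, b1, b2, b3 = ols_interaction(efficacy, literacy, ratings)
```

With dummy coding in a 2 × 2 design, `b0` is the mean of the double-control cell, `b1` and `b2` are the simple effects of each intervention when the other is absent, and `b3` is the difference-in-differences that a combinatory ("boost") hypothesis is about.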

Experiment 1 follow-up analyses

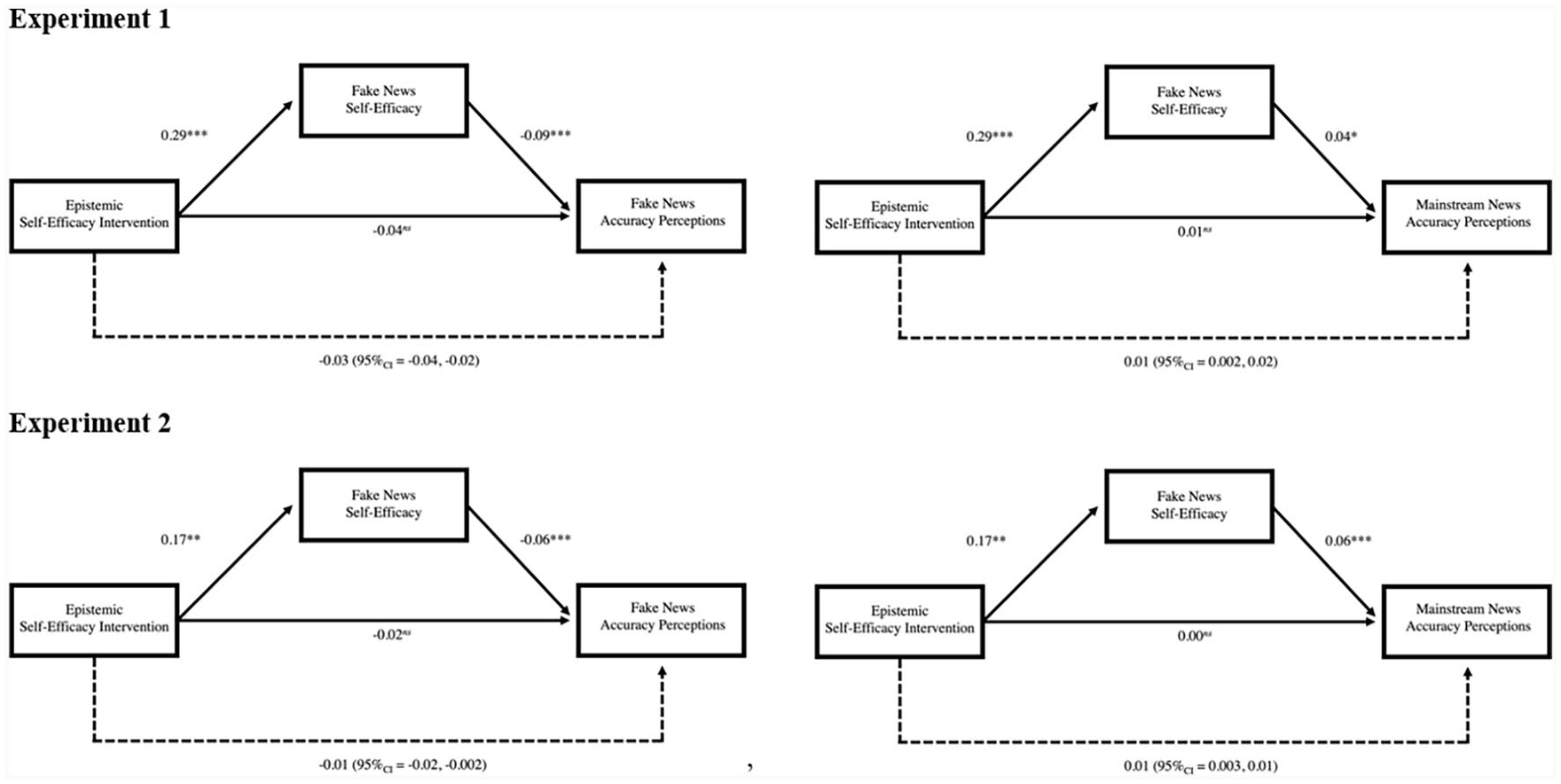

Notably, we observed statistically significant associations between the measured fake news self-efficacy variable and accuracy assessments for both the fake news (

A second mediation analysis that specified the mainstream news headline assessment variable as the response variable was estimated. This model contained the same covariates as discussed above. The first portion of this analysis was identical to the results discussed in the paragraph above. The second model component (

Graphical representation of mediation models tested in Experiments 1 and 2.
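The logic of such a mediation model (intervention → measured fake news self-efficacy → accuracy assessment) can be sketched with the product-of-coefficients approach. This is a simplified illustration, not the estimation procedure reported here: it omits the covariates included in the study's models, it does not compute confidence intervals for the indirect effect, and the data and function names are hypothetical.

```python
from statistics import mean


def cov(u, v):
    """Sample covariance of two equal-length sequences."""
    mu, mv = mean(u), mean(v)
    return sum((a - mu) * (b - mv) for a, b in zip(u, v)) / (len(u) - 1)


def mediation_paths(x, m, y):
    """Return (a, b, c_prime, indirect) for x -> m -> y, without covariates.

    a: effect of x on m; b: effect of m on y holding x constant;
    c_prime: direct effect of x on y holding m constant; indirect: a * b.
    """
    a = cov(x, m) / cov(x, x)
    denom = cov(x, x) * cov(m, m) - cov(x, m) ** 2
    b = (cov(m, y) * cov(x, x) - cov(x, m) * cov(x, y)) / denom
    c_prime = (cov(x, y) * cov(m, m) - cov(x, m) * cov(m, y)) / denom
    return a, b, c_prime, a * b


# Hypothetical data: x = intervention (0/1), m = self-efficacy (1-7),
# y = perceived accuracy of fake news headlines (1-4).
x = [0, 0, 0, 1, 1, 1]
m = [4.0, 5.0, 4.5, 5.5, 6.5, 6.0]
y = [2.4, 2.0, 2.2, 1.8, 1.4, 1.6]
a, b, c_prime, indirect = mediation_paths(x, m, y)
```

In this toy data set the intervention changes the outcome only through the mediator, so the direct path is zero and the total effect equals the indirect effect `a * b`.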

Experiment 2

Experiment 2 procedure and materials

The same procedure and experimental manipulations employed in Experiment 1 were employed in Experiment 2.

Experiment 2 sample

The sample was again recruited from the population of Amazon Mechanical Turk users. Data collection was facilitated via the CloudResearch platform, and respondents were compensated $0.85 for their participation (median completion time = 364.0 s; completion rate = 85%, bounce rate = 8%). All data quality controls employed in Experiment 1 were also employed in Experiment 2. Data were collected in the period between January 3 and January 4, 2021. Those who participated in Experiment 1 were prohibited from participating in Experiment 2. A total of 998 responses were initially acquired; after applying the same data cleaning procedures described in Experiment 1, the resultant analytic

Experiment 2 measures

Information accuracy assessments

Participants reviewed 16 article headlines. Of these headlines, 8 were from mainstream sources, and 8 were from fake news sources. All headlines dealt with the COVID-19 pandemic, which was ongoing at the time of data collection. The headline selection procedure was again similar to prior studies on fake news (e.g., Chan, 2022; Guess et al., 2020; Hopp, 2022; Moore & Hancock, 2022; Van Duyn & Collier, 2019). The selected headlines were spread and discussed by Facebook users in the time period immediately preceding the study. The fake news headlines contained claims or themes that had been refuted by one or more major third-party fact-checking sites and were associated with websites known to regularly publish false or misleading political information. Each headline was presented in a Facebook-style format (see Appendix 2). For each headline, respondents were asked to indicate how accurate the news item was (1 = very inaccurate, 2 = somewhat inaccurate, 3 = somewhat accurate, 4 = very accurate). These assessments were subsequently used to form composite measures describing mainstream news headline accuracy perceptions (M = 3.29, SD = 0.52) and fake news headline accuracy perceptions (M = 1.62, SD = 0.56).
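The composite measures described above can be sketched as per-participant averages of the 1–4 accuracy ratings, computed separately for the 8 mainstream and 8 fake headlines. Averaging is an assumption (the text says only that composites were formed, although the reported means fall on the 1–4 scale), and the headline identifiers and ratings are hypothetical.

```python
from statistics import mean


def composite_scores(ratings, headline_type):
    """Average the 1-4 accuracy ratings separately by headline type."""
    by_type = {"mainstream": [], "fake": []}
    for headline_id, rating in ratings.items():
        by_type[headline_type[headline_id]].append(rating)
    return {kind: mean(values) for kind, values in by_type.items()}


# Hypothetical coding: headlines 1-8 are mainstream, 9-16 are fake.
headline_type = {i: ("mainstream" if i <= 8 else "fake") for i in range(1, 17)}

# Hypothetical ratings from a single participant.
ratings = dict(zip(range(1, 17), [4, 3, 3, 4, 3, 4, 3, 3, 1, 2, 1, 2, 2, 1, 2, 1]))
scores = composite_scores(ratings, headline_type)
```

Higher mainstream composites and lower fake composites both indicate more accurate classification, which is why the two outcomes are analyzed separately.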

Fake news self-efficacy

The fake news self-efficacy scale used in Experiment 1 was again used in Experiment 2 (M = 5.66, SD = 0.94, α = .95).

Descriptive variables

Participants were again asked to provide their age in years (M = 39.47, SD = 13.59), their biological sex (54.6% female), their race (77.2% white), their average annual income (median = “between $50,001 and $75,000 annually,” M = 3.46, SD = 1.99), their highest level of educational attainment (median = “4-year degree,” M = 4.45, SD = 1.27), their interest in politics (median = 5, M = 4.70, SD = 1.65), their level of conservatism (median = 5, M = 5.15, SD = 3.03), their political party ID (47.9% Democrat, 27.1% Republican, 25.0% independent/other parties), who they voted for in the 2020 presidential election (27.1% voted for Donald Trump), their political knowledge levels (M = 2.10, SD = 1.00), their news consumption frequency (median = 4, M = 4.02, SD = 1.99), their Facebook usage intensity (M = 4.01, SD = 1.52, α = .75), and the frequency with which they used social media to read about politics (median = 4, M = 4.20, SD = 1.82). Questionnaire items and response categories were the same as those employed in Experiment 1, with the exception of the political knowledge questionnaire, which included an additional item that asked the participant to name the current Speaker of the House of Representatives.

Experiment 2 results

As a first step, we explored the effect of the experimental treatments on accuracy assessments of the fake news headlines (Hypothesis 1). In this model (

Experiment 2 follow-up analyses

As in Study 1, we next conducted two mediation analyses to assess the relationship between the information credibility self-efficacy motivation, measured fake news self-efficacy levels, and informational accuracy assessment outcomes. These analyses employed a set of control variables identical to those used in Experiment 1. For the model predicting the fake news accuracy assessment variable, the first model portion (

In the model predicting news accuracy assessments, the second portion of the analysis (

Discussion

The core hypothesis motivating this project was that self-efficacy beliefs pertaining to an individual’s ability to make informational credibility decisions should amplify the previously observed relationship between media literacy and the ability to spot online disinformation. Across two experimental studies, this hypothesis was not supported by the data. Indeed, we found scant evidence that the media literacy intervention had any influence over the ability to properly classify either fake news or mainstream news articles. We did, however, find tentative evidence that self-efficacy beliefs relating to the ability to spot low-quality/factually inaccurate news information on social media are associated with the ability to (1) classify fake news as inaccurate and (2) classify mainstream news content as accurate. The implications of these findings are discussed in the section below.

Implications for fake news identification on social media

Although prior work has investigated the degree to which media literacy interventions can ameliorate the belief in and spread of fake news on social media platforms such as Facebook (e.g., Guess et al., 2020), this study is one of the first attempts to explore the relationship between information-relevant self-efficacy levels and the belief in false, incorrect, misleading, and/or hyperpartisan information online. In contrast to prior findings (e.g., Guess et al., 2020), we did not find any evidence that the delivery of a media literacy intervention diminished people’s belief in either fake or mainstream news headlines. We did, however, find some initial evidence that self-efficacy levels pertaining directly to fake news identification may play a potentially important role in limiting belief in low-quality information. In contrast to some prior work, the relational directionality of our findings was encouraging. For example, research on media literacy interventions has shown that they may increase skepticism toward all forms of online information. Notably, in both studies, our data indicated that fake news-related self-efficacy levels were associated with diminished belief in the accuracy of fake news headlines and enhanced belief in the accuracy of mainstream news headlines.

For platforms and organizations interested in suppressing the spread of so-called fake news on social media, it may, then, be valuable to pursue the development of efficacy-building interventions. Encouraging users to draw upon internal resources for information evaluation has the potential to stimulate long-term gains as they relate to the amelioration of incorrect political beliefs. Specifically, given the idea that mastery experiences encourage both the maintenance and growth of self-efficacy (e.g., Bandura, 1982, 1986, 1989), there exists the possibility that initial efforts to stimulate self-efficacy levels may encourage a sort of self-sustaining feedback loop wherein initial efficacy levels are gradually enhanced over time. That being said, the epistemic self-efficacy intervention employed in this study can undoubtedly be improved upon. Generally speaking, it did not appear to stimulate a strong enough effect to, on its own, influence classification outcomes. Instead, our intervention was supportive of the development of a more specific form of fake news self-efficacy. In other words, the intervention appeared to contribute to the development of localized feelings of fake news self-efficacy, which, in turn, seemed to support the ability to accurately parse a series of fake and mainstream news headlines.

To that end, it may be important for future research to explore individual-level factors that are predictive of/associated with fake news self-efficacy. In that regard, the work presented here provides some initial clues. For instance, in both Studies 1 and 2, we found negative and statistically significant relationships between the measured fake news self-efficacy variable and age (see Appendix 3); this finding may, potentially, explain some of the age-related effects observed relative to fake news use and dissemination (e.g., Guess et al., 2019). Conversely, in both studies, we found positive relationships between fake news self-efficacy and both political interest and news consumption, suggesting that political information surveillance may support the ongoing development and reinforcement of self-efficacy. These findings, taken together, suggest that older people and those who infrequently engage with political information may be especially ripe self-efficacy intervention targets.

Theoretically, although they run against our initial assumptions, these findings make sense. Prior work examining the spread of fake news found that, for example, disseminators have high levels of political knowledge (Ferrucci et al., 2020); it is therefore plausible that a lack of media literacy is not a cause of belief in fake news. Rather, homogeneity in social media networks and a lack of trust in institutions may be more likely to lead people to believe this form of misinformation (Ferrucci et al., 2020). More specifically, for media literacy interventions to have a meaningful effect in this area, participants would need to be willing to believe that organizations and institutions they presumably distrust are spreading quality information. This presumption seems dubious at best. Guess et al. (2020) did find some evidence for media literacy’s positive effects, but the sample for that study was so large that even a small effect could be expected to reach statistical significance. Therefore, platforms and organizations aiming to stop the spread of fake news should focus more attention on self-efficacy.
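The point above about large samples can be illustrated with a brief sketch. It assumes a simple two-group comparison with equal group sizes and unit variance, and the effect size and sample sizes are hypothetical values chosen purely for illustration; none of them are taken from Guess et al. (2020) or from the present experiments.

```python
import math


def two_sample_z(d: float, n_per_group: int) -> float:
    """Approximate z statistic for a standardized mean difference d
    between two equal-sized groups with unit variance."""
    return d * math.sqrt(n_per_group / 2)


def two_sided_p(z: float) -> float:
    """Two-sided p-value under the standard normal distribution."""
    return math.erfc(abs(z) / math.sqrt(2))


# Hypothetical small effect, held constant while the sample grows
d = 0.10
for n in (100, 500, 2500):
    z = two_sample_z(d, n)
    print(f"n per group = {n:5d}  z = {z:.2f}  p = {two_sided_p(z):.4f}")
```

Under these assumptions, the same small standardized difference falls short of conventional significance thresholds in the smaller samples and passes them in the largest one, which is why effect sizes, and not only p-values, matter when comparing findings across studies.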

Future research should build on this study’s results, combined with prior work surrounding epistemic and social media self-efficacy, to examine individual-level attributes. A better understanding of how these characteristics and demographics impact self-efficacy can inform future self-efficacy interventions. Upcoming work should also test differing self-efficacy interventions to better understand how to maximize positive outcomes. Finally, future work could also explore how to position this intervention for the best effect.

As previously noted, while our self-efficacy intervention could be improved upon considerably, the results found are rather encouraging. It would not take much effort at all, for example, for a platform such as Facebook to expose all users, when they first log on to the site, to a self-efficacy intervention, one that admits misinformation is prevalent on the site and provides users with the confidence to identify it. It is worth mentioning, again, that this study found that fake news-related self-efficacy levels were associated with diminished belief in the accuracy of fake news headlines and enhanced belief in the accuracy of mainstream news headlines. It is possible, then, that even people with distrust in mainstream news, once emboldened to be aware of the potential for fake news and have the confidence to spot it, may then put aside their mistrust in mainstream news due to their desire to classify fake news. In short, it would be relatively easy for platforms to add a self-efficacy intervention that is unobtrusive to the user experience, something that could have potentially significant, positive effects on democracy.

Finally, we note that we did not, in contrast to a number of other studies, observe statistically significant effects between the media literacy intervention and fake news classification outcomes. Having said that, we did observe small decreases in the perceived accuracy of fake news in a manner consistent with other work (see Appendix 4). In Experiment 1, for instance, those who saw the media literacy intervention posted lower (albeit statistically negligible) fake news headline accuracy scores than those who did not see the media literacy intervention. The effect size for the bivariate association between the two variables was

The current project has some limitations. First, while indisputably theoretically different, a self-efficacy intervention could be considered a form of priming. Future work in this area could lean into priming literature more thoroughly and, perhaps, combined with our other measures, have even more success. Second, prior research has consistently shown that Amazon Mechanical Turk is a valid tool for conducting experimental work (e.g., Berinsky et al., 2012; Horton et al., 2011; Kees et al., 2017; Paolacci et al., 2010; Thomas & Clifford, 2017) that yields results similar to those obtained from national samples (Coppock, 2019; Kees et al., 2017). Nonetheless, the demographic and psychographic characteristics of Amazon Mechanical Turk workers differ from the population as a whole (e.g., Hargittai & Shaw, 2020), and, as such, our results should be confirmed using a nationally representative sample. Third, as mentioned above, the self-efficacy intervention used in this project should be refined and improved upon. Fourth, our study focused specifically on Facebook, which serves as a key conduit for fake news. Given that some research has shown slight differences in fake news-related behaviors across platforms (e.g., Ferrucci et al., 2020), it may be important for future research to specifically address the ways that fake news-related self-efficacy beliefs differentially develop and manifest across platforms.

Footnotes

Appendix 1

Appendix 2

Headlines used in Experiments 1 and 2.

| Type | Headline | URL |
| --- | --- | --- |
| Experiment 1 | | |
| Mainstream Congenial to Dems 1 (low prom) | Rudy Giuliani’s Latest Appearance Was Quite Literally Full Of Hot Air | https://www.buzzfeednews.com/article/davidmack/rudy-giuliani-fart?origin=web-hf |
| Mainstream Congenial to Dems 2 (low prom) | A Top Georgia Election Official Angrily Urged Trump To Condemn Supporters Who Are Threatening Election Workers | https://www.buzzfeednews.com/article/salvadorhernandez/georgia-election-official-trump-voting-threats |
| Mainstream Congenial to Dems 1 (high prom) | Justice Dept. Investigating Potential Bribery Scheme for Trump Pardon | https://www.nytimes.com/2020/12/01/us/politics/bribery-scheme-trump-pardon.html?searchResultPosition=4 |
| Mainstream Congenial to Dems 2 (high prom) | Trump campaign’s star witness in Michigan was deemed ‘not credible.’ Then, her loud testimony went viral | https://www.washingtonpost.com/nation/2020/12/03/melissa-carone-michigan-trump-giuliani-election/ |
| Mainstream Congenial to Repubs 1 (low prom) | No, Trump Didn’t Say “Good” In Response To A Question About Kids Separated From Their Parents At The Border | https://www.buzzfeednews.com/article/janelytvynenko/trump-immigration-good-fact-check |
| Mainstream Congenial to Repubs 2 (low prom) | Barack Obama Is the Latest Prominent Democrat to Bad-Mouth Demands to Defund the Police | https://www.teenvogue.com/story/barack-obama-defund-police-slogan |
| Mainstream Congenial to Repubs 1 (high prom) | Ex-HHS chief Sebelius says Trump administration deserves credit for vaccine progress | https://www.cnn.com/2020/11/16/politics/kathleen-sebelius-operation-warp-speed-success-cnntv/index.html |
| Mainstream Congenial to Repubs 2 (high prom) | Trump Team Disavows Lawyer Who Peddled Conspiracy Theories on Voting | https://www.nytimes.com/2020/11/22/us/politics/sidney-powell-trump.html?searchResultPosition=18 |
| Pro Dem Fake News 1 | New Report Suggests Giuliani Was In Cahoots With Fox News On Secret Project To Dig Up Dirt On Biden | http://dctribune.org/new-report-suggests-giuliani-was-in-cahoots-with-fox-news-on-secret-project-to-dig-up-dirt-on-biden/ |
| Pro Dem Fake News 2 | DONALD TRUMP IS ADDICTED TO PENIS ENLARGEMENT PILLS, CLAIMS HIS EX-WIFE | https://worldnewsdailyreport.com/donald-trump-is-addicted-to-penis-enlargement-pills-claims-his-ex-wife/ |
| Pro Repub Fake News 1 | Many Instances of Democrat Rule Breaking, Electoral Fraud are Recorded at Pennsylvania Voting Precincts | https://archive.is/Vcydp#selection-603.66-603.103 |
| Pro Repub Fake News 2 | Suddenly, all the Democrat-run swing states need to stop counting ballots for a while | https://web.archive.org/web/20201104211150/https://www.americanthinker.com/blog/2020/11/suddenly_all_the_democratrun_swing_states_need_to_stop_counting_ballots_for_awhile.html |
| Pro Dem Hyperpartisan 1 | Mitch McConnell’s Relief Offer Is Actually Worse Than Doing Nothing | https://slate.com/business/2020/12/mitch-mcconnell-stimulus-coronavirus-relief-blech.html |
| Pro Dem Hyperpartisan 2 | Republicans Plan to Stick With Deranged Party Leadership Team That Just Lost House, Presidency, Maybe Senate | https://slate.com/news-and-politics/2020/12/republicans-rally-around-ronna-mcdaniel-party-all-in-on-trumps-losing-strategy.html |
| Pro Repub Hyperpartisan 1 | Progressives Are No Longer Defenders of Free Expression | https://www.nationalreview.com/2020/12/progressives-are-no-longer-defenders-of-free-expression/ |
| Pro Repub Hyperpartisan 2 | Georgia Sec. State Raffensperger investigating Stacey Abrams’ group for GOTV fraud after it attempted to register his deceased son | https://www.theblaze.com/news/georgia-investigating-stacey-abrams-fraud |
| Experiment 2 | | |
| COVID True 1 | Masks Work. Really. We’ll Show You How | https://www.nytimes.com/interactive/2020/10/30/science/wear-mask-covid-particles-ul.html |
| COVID True 2 | Virus Deaths Approach Spring Record Amid Changing U.S. Crisis | https://www.nytimes.com/2020/11/28/us/covid-deaths-united-states.html |
| COVID True 3 | Can We Finally Stop Talking About “Natural” COVID-19 Herd Immunity? Please? | https://slate.com/technology/2020/12/herd-immunity-scott-atlas-rand-paul-epidemiology.html |
| COVID True 4 | Pfizer says its coronavirus vaccine is safe and 95% effective and that it will seek regulatory review within days | https://www.washingtonpost.com/health/2020/11/18/pfizer-covid-vaccine/ |
| COVID True 5 | Lost Sense of Smell May Be Peculiar Clue to Coronavirus Infection | https://www.nytimes.com/2020/03/22/health/coronavirus-symptoms-smell-taste.html |
| COVID True 6 | US virus deaths top 3100 in a single day for the first time | https://www.bostonglobe.com/2020/12/01/metro/latest-updates-covid-19/ |
| COVID True 7 | Pandemic is pushing America’s 911 system to ‘breaking point,’ ambulance operators say | https://www.washingtonpost.com/health/2020/12/03/911-ambulance-services-breaking-point/ |
| COVID True 8 | Former Presidents Obama, Bush and Clinton volunteer to get coronavirus vaccine publicly to prove it’s safe | https://www.cnn.com/2020/12/02/politics/obama-vaccine/index.html |
| COVID False 1 | Masks Don’t Work: A Review of Science Relevant to COVID-19 Social Policy | https://www.rcreader.com/commentary/masks-dont-work-covid-a-review-of-science-relevant-to-covide-19-social-policy |
| COVID False 2 | Tucker Carlson: ‘We’ve Been Lied to’ About Coronavirus — ‘A Global Fraud that Began Long Before Election Day’ | https://www.breitbart.com/clips/2020/12/03/tucker-carlson-weve-been-lied-to-about-coronavirus-a-global-fraud-that-began-long-before-election-day/ |
| COVID False 3 | Did the WHO Just (Accidentally) Confirm COVID Is No More Dangerous Than Flu? | https://www.corona-stocks.com/did-the-who-just-accidentally-confirm-covid-is-no-more-dangerous-than-flu/ |
| COVID False 4 | Top Microbiologist Says Covid Vaccine “Downright Dangerous,” Will Send You “To Your Doom” | https://www.infowars.com/posts/top-microbiologist-says-covid-vaccine-downright-dangerous-will-send-you-to-your-doom/ |
| COVID False 5 | Rich People & Journalists Made Exempt From Having to Enter COVID Quarantine | https://www.infowars.com/posts/rich-people-journalists-made-exempt-from-having-to-enter-covid-quarantine/ |
| COVID False 6 | New Study Shows 84 Percent Decrease in Hospitalization Rates for COVID-19 Patients Treated with Hydroxychloroquine | https://bigleaguepolitics.com/new-study-shows-84-percent-decrease-in-hospitalization-rates-for-covid-19-patients-treated-with-hydroxychloroquine/ |
| COVID False 7 | J.P. Morgan Confirms That Another COVID-19 Lockdown Would Further Enrich the Globalist Elite | https://bigleaguepolitics.com/j-p-morgan-confirms-that-another-covid-19-lockdown-would-further-enrich-the-globalist-elite/ |
| COVID False 8 | The COVID Case Con Continues | https://www.americanthinker.com/articles/2020/11/the_covid_case_con_continues.html |

COVID-19: coronavirus disease.

Appendix 3

Appendix 4

Authors’ note

The authors have agreed to this submission, and this article is not currently being considered for publication by any other print or electronic journal. The authors are listed alphabetically as each contributed equally to this manuscript.