Abstract

Misinformation about the novel coronavirus (COVID-19) is a pressing societal challenge. Across two studies, one preregistered (n1 = 1771 and n2 = 1777), we assess the efficacy of two ‘prebunking’ interventions aimed at improving people’s ability to spot manipulation techniques commonly used in COVID-19 misinformation across three different languages (English, French and German). We find that Go Viral!, a novel five-minute browser game, (a) increases the perceived manipulativeness of misinformation about COVID-19, (b) improves people’s attitudinal certainty (confidence) in their ability to spot misinformation and (c) reduces self-reported willingness to share misinformation with others. The first two effects remain significant for at least one week after gameplay. We also find that reading real-world infographics from UNESCO improves people’s ability and confidence in spotting COVID-19 misinformation (albeit with descriptively smaller effect sizes than the game). Limitations and implications for fake news interventions are discussed.

This article is part of the special theme on Studying the COVID-19 Infodemic at Scale. A full list of all articles in this special theme is available at: https://journals.sagepub.com/page/bds/collections/studyinginfodemicatscale

Introduction

The COVID-19 pandemic, caused by the SARS-CoV-2 virus, is a pressing global health crisis, the mitigation of which relies in part on non-pharmaceutical interventions that leverage insights from the social and behavioural sciences (Van Bavel et al., 2020; Van der Linden et al., 2020). Misinformation about the disease has spread widely on social media, ranging from fake ‘remedies and cures’, such as eating garlic or injecting bleach, to elaborate conspiracy theories about the cause of COVID-19 (BBC News, 2020). In response, the World Health Organization (WHO) has warned of an ‘infodemic’ (Zarocostas, 2020), with some claiming that the prevalence of misleading information around the virus might be ‘the most contagious thing about it’ (Kucharski, 2020). Susceptibility to misinformation about COVID-19 is associated with a variety of negative outcomes (Enders et al., 2020; Roozenbeek et al., 2020b), such as reduced willingness to comply with evidence-based health regulations (Imhoff and Lamberty, 2020), support for violence (Jolley and Paterson, 2020) and lower vaccine uptake intentions around the world (Loomba et al., 2021; Roozenbeek et al., 2020b).

To add to the problem, information about COVID-19 is not always easily classified as either true or false. Because scientific understanding of the virus is continuously developing, information about it can fall anywhere between unverified and disproven, which makes it difficult to classify clearly what counts as ‘misinformation’ (Vraga and Bode, 2020). Nevertheless, in a Pew survey, almost half of the sample (48%) reported having been exposed to falsehoods about the virus, the majority of whom claimed to see misleading information on a daily basis (Schaeffer, 2020). Another study found that 85% of U.S. participants believed more than one false or misleading statement about COVID-19 (Miller, 2020). Frequent exposure to misinformation is particularly dangerous because repetition increases reliance on false information (Fazio et al., 2015). As evidence-based interventions remain scarce (Agley et al., 2020; Pennycook et al., 2020), it is critical to explore how the spread of misinformation around COVID-19 may be mitigated.

Theoretical background: Prebunking and inoculation theory

Preemptively debunking (‘prebunking’) misinformation is regarded as a promising way to build attitudinal resistance against misinformation. Prebunking is a key component of inoculation theory, often regarded as the ‘grandfather theory of persuasion’ (Eagly and Chaiken, 1993: 561). Psychological inoculation is based on a biological analogy with the immunisation process (McGuire, 1964). Just as exposure to a weakened dose of a pathogen triggers the production of protective antibodies, inoculation theory posits that exposure to a weakened persuasive argument elicits motivation to equip oneself with protective arguments against it (McGuire and Papageorgis, 1961). Both processes thus rely on the assumption that exposure to a weakened threat triggers an immunity-bolstering response. In the psychological inoculation literature, the inoculation process commonly consists of two elements: (1) a forewarning, which elicits threat and motivation to defend one’s attitudes, and (2) a pre-emptive refutation (or ‘prebunk’) of the persuasive arguments (Compton, 2013; Compton and Pfau, 2005). Research has demonstrated the robustness and efficacy of psychological inoculations in conferring resistance across a multitude of topics (for reviews and meta-analyses, see Banas and Rains, 2010; Compton et al., 2021; Lewandowsky and van der Linden, 2021).

Yet, the inoculation analogy is meant to be ‘more instructive than prescriptive’, and several important open questions about the boundary conditions of inoculation theory remain (Compton, 2013: 233). Specifically, recent inoculation research has seen three key innovations. First, researchers have begun to distinguish between prophylactic and therapeutic inoculation approaches (Compton, 2019). Inoculation can be fully preemptive (prophylactic) when people have not yet been exposed to misinformation. In contrast, therapeutic inoculation treatments – like therapeutic medical vaccines – are administered to those who have already had some potential prior exposure to misinformation as they can still boost immunity and limit further spread among the ‘already afflicted’ (Compton, 2019; Wood et al., 2012). In practice, this distinction matters little as people are inoculated regardless of prior exposure, but theoretically it helps to elucidate the conditions under which inoculation can be effective (Compton, 2019; Compton et al., 2021; van der Linden and Roozenbeek, 2020).

Second, traditional psychological inoculations often target specific false or misleading arguments, for example about climate change (Cook et al., 2017; van der Linden et al., 2017b) or vaccinations (Jolley and Douglas, 2017). This issue-based approach makes it difficult to scale inoculation interventions. To help scale the approach further, recent work has shifted away from inoculating against individual arguments towards conferring resistance against the techniques that underlie many instances of misinformation (Cook et al., 2017; Roozenbeek and van der Linden, 2018; van der Linden et al., 2021).

Third, inoculation research has recently explored the benefits of ‘active’ versus ‘passive’ inoculations (Roozenbeek and van der Linden, 2018). Traditional inoculation research has predominantly required participants to passively read a short text as part of the inoculation treatment (Banas and Rains, 2010; Compton, 2013). More recent work has produced interventions that simulate social media environments in which people are prompted to make decisions proactively, thus generating their own ‘mental antibodies’. An example of such an active inoculation intervention is the award-winning ‘fake news’ game Bad News (Basol et al., 2020; Maertens et al., 2020; Roozenbeek and van der Linden, 2019; Roozenbeek et al., 2020a), in which players build psychological resistance against six common misinformation techniques. Yet, little is currently known about the differences between active and passive inoculation treatments (Banas and Rains, 2010; Compton et al., 2021), their persistence over time (Maertens et al., 2020) and their effect on attitudinal certainty (Basol et al., 2020).

In addition, several other important gaps in our understanding of inoculation theory remain. Relatively little attention has been paid to how psychological inoculations affect how likely people are to share misinformation (but see Roozenbeek and van der Linden, 2020). Reducing the spread of misinformation and sharing the ‘vaccine’ are important elements in achieving psychological ‘herd immunity’ (Compton and Pfau, 2009; van der Linden et al., 2017a), particularly within the context of the COVID-19 pandemic. Furthermore, how inoculation interventions affect attitudinal certainty remains an open question. Research suggests that the more certain individuals are of their attitudes, the more likely these attitudes are to guide behaviour (Rucker and Petty, 2004), resist persuasion (Tormala and Petty, 2002) and persist over time (Tormala, 2016). In the context of misinformation, it is therefore important to explore not only whether inoculation interventions reduce susceptibility to misinformation, but also to what extent they make people more confident in their ability to spot it and less likely to share it with others.

The present research

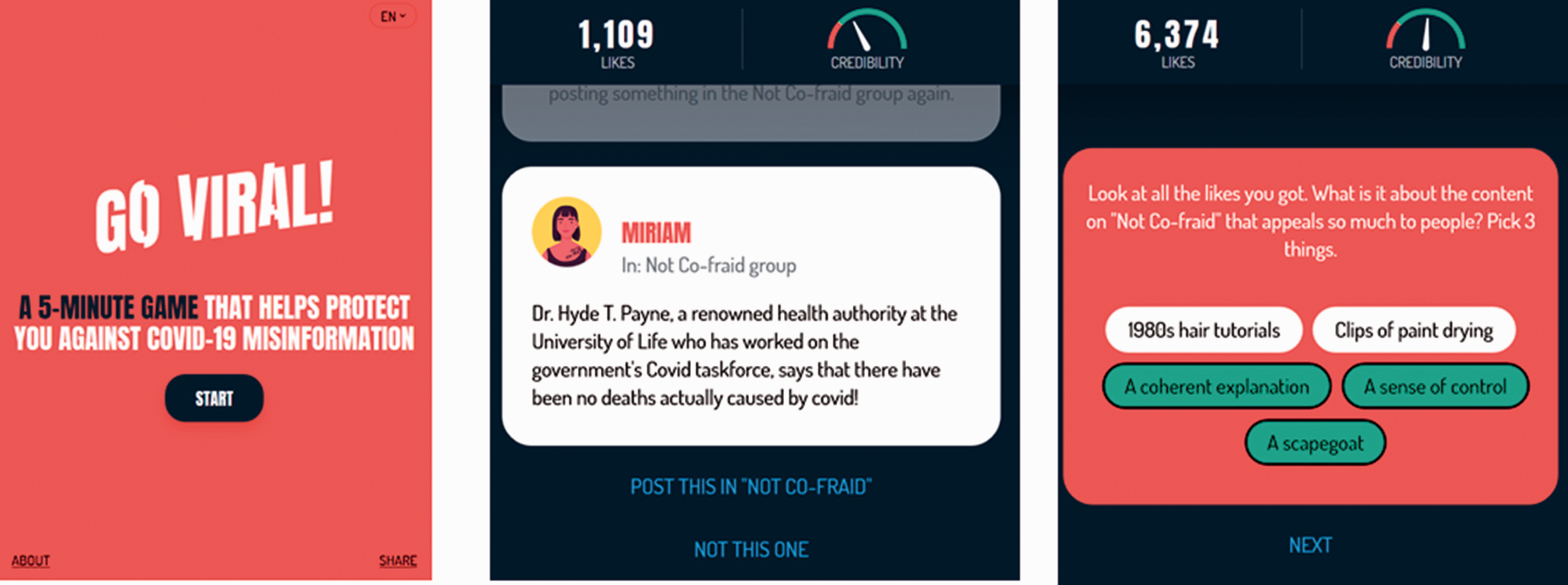

In this study, we address these gaps in the inoculation literature within the context of COVID-19 misinformation. To do so, we test, side by side, two technique-based prebunking interventions aimed at improving people’s ability to spot misinformation about COVID-19. The first intervention is Go Viral!, a novel and freely available five-minute choice-based browser game similar in design to other ‘fake news’ games such as Bad News (Roozenbeek and van der Linden, 2019) and Harmony Square (Roozenbeek and van der Linden, 2020). We created Go Viral! (www.goviralgame.com) in collaboration with the UK Cabinet Office and DROG, with support from the WHO and the United Nations’ Verified Campaign, to expose three manipulation techniques commonly used in COVID-19 misinformation: fearmongering, using fake experts, and spreading conspiracy theories (World Health Organization, 2020b; Zarocostas, 2020). Go Viral! is available in three languages (English, French, and German), is listed by the WHO as an anti-misinformation resource 1 and has been played approximately 300,000 times since its launch in October 2020. Go Viral! functions as an active inoculation against future manipulation attempts by pre-emptively warning people, exposing them to weakened doses of COVID-19 misinformation and letting them generate their own psychological ‘antibodies’ (van der Linden et al., 2020).

In the game, players start out by browsing their (fictitious) social media feed and are slowly lured into an echo chamber where misinformation and outrage-evoking content about COVID-19 are common (these scenarios are aimed at eliciting threat and motivation). Across three scenarios, players are encouraged to gain ‘likes’ and ‘credibility points’ while learning about three common manipulation techniques. In the first scenario, ‘The Fearmongerer’, players create a social media post by using emotionally evocative language and watch it go viral. The use of moral-emotional language is known to enhance the virality of social media content (Acerbi, 2019; Berriche and Altay, 2020; Brady et al., 2017). They are then invited to join Not Co-Fraid, a group of online ‘truth tellers’. In the second scenario, ‘My Imaginary Expert’, players start sharing content in the group as Not Co-Fraid’s latest member. Their low credibility, however, prompts them to back up their claims by using fake experts, such as Dr Hyde T. Paine from the ‘University of Life’. By giving Not Co-Fraid group members the illusion that their content is endorsed by experts, players gain popularity, and are eventually asked to become a Not Co-Fraid moderator. This scenario relies on impersonation and the fake expert technique, both of which are commonly used in online misinformation (Cook et al., 2017; Roozenbeek and van der Linden, 2019). In the final scenario, ‘Master of Puppets’, players create their own COVID-19 conspiracy theory. They first pick a target (e.g. a large NGO, the government or one Bob from New York), accuse it of shady practices and connect the dots, resulting in nationwide protests. Conspiracy theories have featured heavily around COVID-19 (van der Linden et al., 2020) and have been linked to violent intentions (Jolley and Paterson, 2020) and reduced willingness to comply with health guidelines (Roozenbeek et al., 2020b). Figure 1 shows the Go Viral! landing page and game environment.

Go Viral! landing page (left) and game environment (middle and right).

The second prebunking intervention consists of a series of infographics about COVID-19 misinformation. As part of its #ThinkBeforeSharing prebunking campaign, UNESCO, with input from inoculation researchers, created a social media package of images that explain how COVID-19 misinformation is created and spreads (UNESCO, 2020). Figure 2 shows several examples.

UNESCO infographics.

In this study, we leverage the public availability of both interventions to test a number of key hypotheses about prebunking and inoculation theory as a way to reduce susceptibility to misinformation. First, this study advances the literature by testing prebunking interventions in the context of COVID-19 misinformation. Second, to date, no published research has assessed different types of anti-misinformation inoculation or prebunking interventions alongside each other. Crucially, some findings suggest that so-called ‘active’ inoculation (e.g. in the form of a game) confers attitudinal resistance more effectively than ‘passive’ inoculation (i.e. through reading; see Banas and Rains, 2010; McGuire and Papageorgis, 1961; Roozenbeek and van der Linden, 2018). This study is the first to address this question in the context of misinformation. Third, this study is one of the first to explore how prebunking interventions affect self-reported measures of behaviour; in this case, people’s willingness to share misinformation with others (Roozenbeek and van der Linden, 2020). Fourth, we build on the existing literature on attitudinal certainty by exploring how such interventions affect people’s confidence in their ability to spot misinformation. Fifth, following recommendations to maximise the generalisability of intervention effects (O’Keefe, 2015), we also make use of the availability of both Go Viral! and the UNESCO infographics in English, French and German to assess the effectiveness of prebunking interventions in different cultural and linguistic settings (Roozenbeek et al., 2020). Finally, because resistance-to-persuasion effects decay over time (Maertens et al., 2020), we evaluate the longer-term effectiveness of both interventions in a one-week follow-up.

We address the above questions in two high-powered and large-sample studies. Our Open Science Framework (OSF) page contains all the necessary information needed to replicate our findings and methods, including our datasets, Qualtrics surveys, the full list of items (social media posts), preregistrations, supplementary tables, figures and analyses, and our analysis and visualisation scripts: https://osf.io/mbqwj/. Both studies were approved by the Cambridge Psychology Research Ethics Committee (PRE.2020.035).

Study 1

Method

In Study 1, we implemented a voluntary pre–post survey within the Go Viral! game, following the within-subject paradigm developed by Roozenbeek and van der Linden (2019), which is relatively unaffected by testing effects (Roozenbeek et al., 2020a). At the start of the game, players were invited to participate in a scientific study. Consenting participants were shown three misinformation and three real news social media posts (in the form of Tweets) relating to COVID-19, and asked to rate the manipulativeness of each post on a 1–7 Likert scale (1 being ‘not at all’ and 7 being ‘very’, following Saleh et al., 2021). After completing the game, players were asked to participate in the second part of the study. Those who agreed again rated the manipulativeness of the same social media posts that they had seen in the pre-test, and then answered a series of demographic questions: age group, gender, education, political ideology (1 being ‘very left-wing’ and 7 being ‘very right-wing’) and geographic region. Participants received no financial compensation.

The three misinformation posts each make use of a manipulation technique players learn about in the game (using moral-emotional language, using fake experts and conspiratorial reasoning), and were taken from fact-checking websites such as FullFact and the WHO’s COVID-19 Mythbusters page (World Health Organization, 2020a). The three real posts are Tweets about COVID-19, taken from the Twitter accounts of reputable news sources (BBC News, AP, Reuters). To avoid potential source confounds, all source information (for real and fake posts) was blacked out so that assessments were restricted to wording and language use. All social media posts used in this study can be found in the ‘items’ folder on our OSF page: https://osf.io/mbqwj/. Figure 3 shows the survey in the in-game environment.

In-game survey screenshots: start of the survey (left), consent form (middle) and a social media post (right).

This study design allows us to test the following hypotheses about the effectiveness of Go Viral! as a way to improve people’s ability to spot misinformation about COVID-19:

In addition, we test the following null hypothesis:

Sample

Between 27 October and 26 November 2020, a total of 2634 complete pre–post survey responses were collected within the Go Viral! game environment, out of 14,755 people who completed the game in this time period (a response rate of 17.9%). As per our ethics approval, we excluded 863 underage participants, leaving a total sample of N = 1771. Of these, 52.9% identified as male (43.0% female, 1.8% other, 2.3% prefer not to say), 53.6% indicated being between 18 and 34 years of age, and 36.3% reported having a university bachelor’s degree. Our sample also skewed politically left (M = 3.07, SD = 1.24). Finally, most study participants were from Europe (59.3%) and North America (22.7%). See Table S1 for the full sample composition.
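The response rate and final sample size above follow directly from the reported counts. The short Python sketch below reproduces that arithmetic; the variable names are ours and purely illustrative.

```python
# Reproduce the Study 1 sample arithmetic from the counts reported in the text.
completed_game = 14_755      # people who completed Go Viral! between 27 Oct and 26 Nov 2020
complete_responses = 2_634   # complete pre-post survey responses
excluded_underage = 863      # participants excluded as underage (ethics approval)

response_rate = complete_responses / completed_game
final_n = complete_responses - excluded_underage

print(f"response rate: {response_rate:.1%}")  # 2634 / 14755
print(f"final sample:  N = {final_n}")
```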

Results

To test hypothesis

To test hypothesis

For real news, we find no significant difference in overall pre–post manipulativeness scores (Mrealnews,pre = 2.63, Mrealnews,post = 2.66, Mdiff = 0.03, 95% CI (–0.0180 to 0.0782), t(1770) = 1.23, p = 0.22, d = 0.03, 95% CI (–0.0174 to 0.0758)). Furthermore, we find no significant pre–post differences for two of the three real news items (both ps > 0.13), and a small but significant increase in the perceived manipulativeness of one real item (Masia,pre = 2.83, Masia,post = 2.91, Mdiff = 0.09, 95% CI (0.0192–0.157), t(1770) = 2.51, p = 0.012, d = 0.06, 95% CI (0.013–0.106)). A Bayesian paired samples t-test for the averaged real news items gives a Bayes factor of BF10 = 0.057 (error % = 0.046), indicating strong support for the null hypothesis
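The within-subject comparisons above rest on a paired-samples t-test. As a minimal sketch, assuming (as is common, though this section does not say so) that the reported d is Cohen's d_z, i.e. the mean pre–post difference divided by the standard deviation of the differences, the statistics can be computed from raw pre and post scores as follows. The function name `paired_t_and_dz` and the toy data are hypothetical.

```python
import math
import statistics


def paired_t_and_dz(pre, post):
    """Paired-samples t statistic, its degrees of freedom, and Cohen's d_z.

    d_z = mean(post - pre) / sd(post - pre); t = d_z * sqrt(n); df = n - 1.
    """
    if len(pre) != len(post) or len(pre) < 2:
        raise ValueError("pre and post must be equal-length with at least 2 scores")
    diffs = [after - before for before, after in zip(pre, post)]
    n = len(diffs)
    sd_diff = statistics.stdev(diffs)  # sample SD (n - 1 denominator)
    d_z = statistics.mean(diffs) / sd_diff
    t = d_z * math.sqrt(n)
    return t, n - 1, d_z


# Toy example: five participants' averaged manipulativeness ratings (1-7).
pre_scores = [2.0, 3.0, 4.0, 3.0, 2.0]
post_scores = [3.0, 3.0, 5.0, 4.0, 2.0]
t_stat, df, d_z = paired_t_and_dz(pre_scores, post_scores)
print(f"t({df}) = {t_stat:.2f}, d_z = {d_z:.2f}")
```

Because t = d_z·√n under this convention, reported values can be cross-checked: d = 0.03 with N = 1771 implies t ≈ 0.03 × √1771 ≈ 1.26, close to the reported 1.23 once the rounding of d is taken into account, with N − 1 = 1770 degrees of freedom.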

Bar graph of the perceived manipulativeness of fake news (left) and real news (right), averaged and per individual item. Error bars show 95% confidence intervals.

Finally, to check for covariate effects, we conducted a linear regression with the pre–post difference in veracity discernment as the dependent variable, and gender, age group, education level, political ideology and being from Europe (the largest single geographic region of origin in our sample) as covariates. We find no significant effects (all ps > 0.082), except for political ideology (p = 0.006): identifying as left-wing is associated with a larger pre–post improvement in veracity discernment than identifying as right-wing. See Table S3.
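The dependent variable in this regression is a change score. This section does not spell out how veracity discernment is computed, so the sketch below assumes one common operationalisation: a participant's mean manipulativeness rating for misinformation minus their mean rating for real news, with the change score defined as post-test minus pre-test discernment. The class and function names are hypothetical.

```python
import statistics
from dataclasses import dataclass


@dataclass
class Ratings:
    """One participant's manipulativeness ratings (1-7) at a single time point."""
    fake: list  # ratings of misinformation posts
    real: list  # ratings of real news posts


def discernment(r: Ratings) -> float:
    # Assumed definition: positive when misinformation is rated as more
    # manipulative than real news.
    return statistics.mean(r.fake) - statistics.mean(r.real)


def discernment_change(pre: Ratings, post: Ratings) -> float:
    # Change score (post minus pre); positive values indicate improved discernment.
    return discernment(post) - discernment(pre)


pre = Ratings(fake=[4, 5, 3], real=[3, 2, 4])
post = Ratings(fake=[6, 6, 5], real=[3, 3, 3])
print(round(discernment_change(pre, post), 3))
```

Each participant's change score would then serve as the outcome in the covariate regression; the regression itself is not reproduced here.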

Discussion

In a large-sample in-game survey experiment, we showed that people who play Go Viral!, irrespective of their demographic background (aside from political ideology), found misinformation about COVID-19 significantly more manipulative after playing than before, whereas their assessment of real news did not change in a meaningful sense. The effect sizes are in line with previous studies that have used similar designs (Roozenbeek and van der Linden, 2019), and are particularly encouraging considering these are within-subjects effects. Although this study allowed us to leverage the popularity of Go Viral! to collect survey responses ‘in the wild’, it does not include a comparison with other interventions aimed at reducing susceptibility to COVID-19 misinformation. Furthermore, the absence of a randomised control group allows for limited causal inference, and we only ran the survey in one language (English). Finally, to avoid overburdening game players, we only included a total of six items and one outcome measure (manipulativeness). We address these issues in Study 2.

Study 2

Method

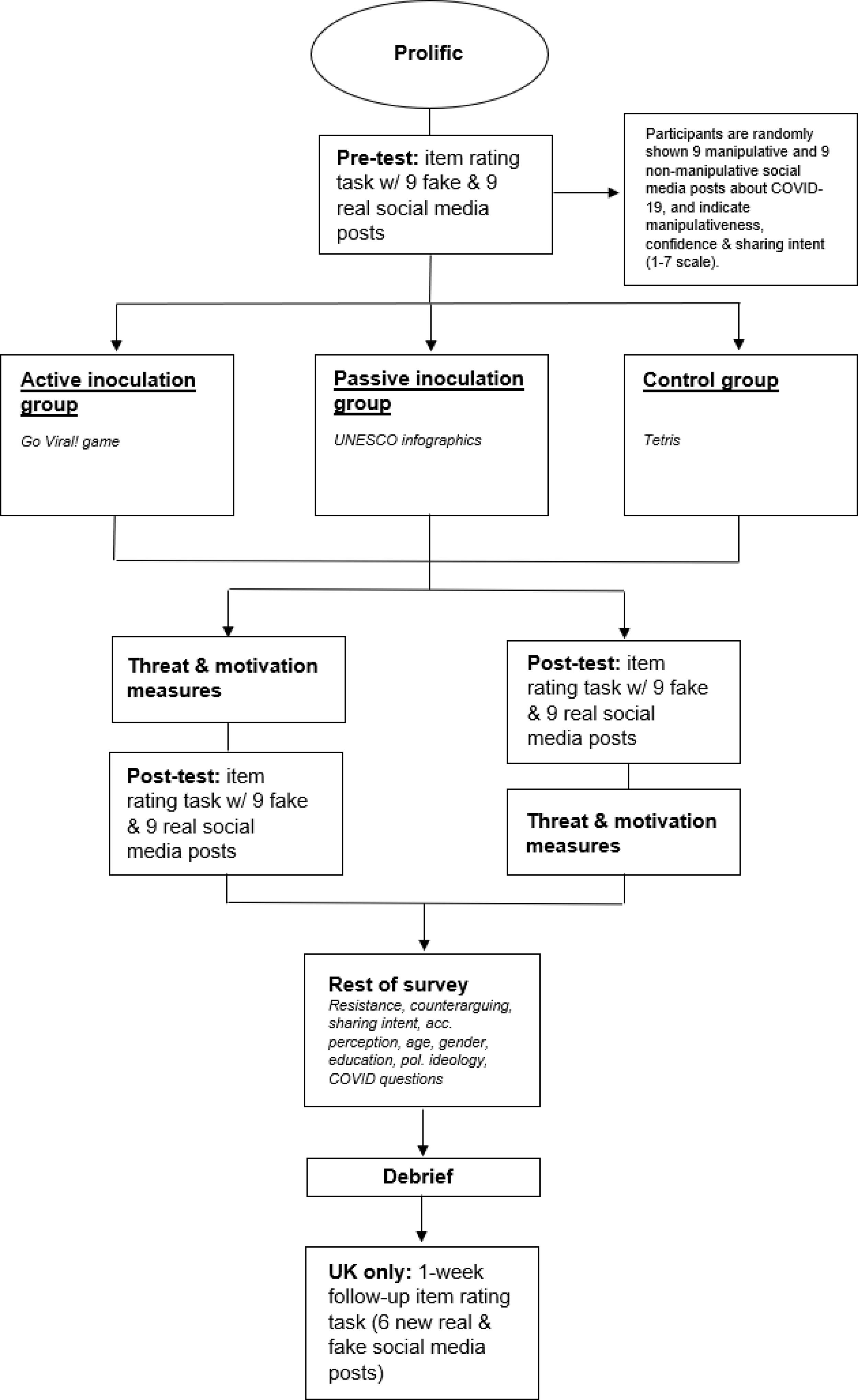

We conducted a preregistered randomised controlled trial on Prolific Academic with three conditions (an active condition, a ‘passive’ Infographics condition, and a control condition), across three languages: English (using a national sample of the United Kingdom), French and German. 4

The active (inoculation) condition involved playing Go Viral! The Infographics condition involved reading through the UNESCO infographics. 5 The control condition involved attentively playing Tetris for a mandatory minimum of five minutes, approximately the same amount of time it takes to complete Go Viral! We chose Tetris for several reasons: (1) it has been used as a control condition in previous studies on inoculation games (Basol et al., 2020; Maertens et al., 2020; Roozenbeek and van der Linden, 2020); (2) it is in the public domain; and (3) it is a simple game with a flat learning curve.

To begin with, participants performed an item rating task in which they were shown 18 social media posts about COVID-19 in random order (in the form of tweets, in English, French or German): nine real news posts (not containing misinformation) and nine misinformation posts (three per manipulation technique: fearmongering, fake experts and conspiracy). Six of these 18 items were the same as those used in Study 1; the other 12 were selected using the same procedure as described in Study 1. 6 As in Study 1, all source information was blacked out to avoid source confounds (Roozenbeek and van der Linden, 2020). We included three main preregistered outcome measures. For each post, participants rated the following statements on a 1–7 scale (1 being ‘strongly disagree’ and 7 being ‘strongly agree’): (1) this post is manipulative (Saleh et al., 2021); (2) I am confident in my assessment of this post’s manipulativeness (attitudinal certainty; Basol et al., 2020); (3) I would share this post with people in my network (Roozenbeek and van der Linden, 2020). See Figure 5.

Examples of a manipulative (left) and real (right) social media post from the item rating task (Study 2).

After completing this item rating task, participants were randomly assigned (1:1:1) to one of the two treatment conditions (active inoculation or Infographics) or the control condition. Both treatment conditions were followed by manipulation checks to ensure that participants paid sufficient attention. As preregistered, low-effort respondents were excluded and resampled: participants who gave exactly the same answer for all 18 social media posts in the pre-intervention item rating task, and participants who failed one (in the Infographics condition) or two (in the Go Viral! condition) attention checks. 7 Next, participants completed two tasks in random order 8 : (1) a set of questions about perceived motivational and apprehensive threat, adapted to the context of misinformation about COVID-19 (Miller et al., 2013; Richards and Banas, 2018) 9 ; and (2) the same item rating task that participants completed in the pre-test (i.e. the post-test). After these two tasks, participants answered a series of questions: the ‘vigilance’ measure from the Reuters Digital News Report (a measure of the extent to which people are concerned about the accuracy and source reliability of the news that they consume, with responses ranging from ‘never’ to ‘frequently’ on a four-point scale; see Newman et al., 2020); perceived resistance against misinformation (1–7; see Ivanov et al., 2012); motivation to counter-argue against misinformation about COVID-19 (1–7; see Ivanov et al., 2017); willingness to share the game (Tetris/Go Viral!) or the UNESCO infographics on social media (1–7) and in real life (1–7); whether they have had COVID-19 (yes/no/unsure/prefer not to say; see Dryhurst et al., 2020); how worried they are about COVID-19 (1–7; see Dryhurst et al., 2020); and whether they would get vaccinated against COVID-19 if a vaccine became available (yes/no; see Roozenbeek et al., 2020b). 
Finally, participants were asked several standard demographic questions: birth year, gender, education, and political ideology (1 being ‘very left-wing’ and 7 being ‘very right-wing’).
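The preregistered exclusion criteria and the 1:1:1 allocation can be expressed as small predicates. The sketch below is one literal reading of the rules described above: a participant is flagged if every pre-test rating is identical, or if they failed the one Infographics attention check or both Go Viral! attention checks. The permuted-block randomisation scheme and all function names are our own assumptions; the text states only that allocation was 1:1:1.

```python
import random

CONDITIONS = ("Go Viral!", "Infographics", "control")

# Failed attention checks that trigger exclusion, per condition
# (the control condition had no attention checks).
FAIL_THRESHOLD = {"Infographics": 1, "Go Viral!": 2}


def is_straightliner(pre_ratings):
    """True if a participant gave exactly the same answer to every pre-test rating."""
    return len(set(pre_ratings)) == 1


def is_excluded(condition, pre_ratings, failed_checks):
    """Apply the low-effort and attention-check exclusion rules."""
    threshold = FAIL_THRESHOLD.get(condition)
    failed_attention = threshold is not None and failed_checks >= threshold
    return is_straightliner(pre_ratings) or failed_attention


def block_randomise(n_participants, seed=None):
    """Hypothetical 1:1:1 permuted-block allocation (block size 3)."""
    rng = random.Random(seed)
    allocation = []
    while len(allocation) < n_participants:
        block = list(CONDITIONS)
        rng.shuffle(block)  # each block contains every condition exactly once
        allocation.extend(block)
    return allocation[:n_participants]


print(block_randomise(6, seed=42))
# 18 posts x 3 ratings, all identical: flagged as a straightliner.
print(is_excluded("Go Viral!", [4] * 54, failed_checks=0))  # True
```

Permuted blocks keep group sizes balanced at every multiple of three participants; simple random assignment would also be consistent with a 1:1:1 target ratio, only with more variable group sizes.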

A week later, UK participants who completed the initial study were reinvited to partake in a follow-up, 10 in which they completed the same item rating task (with manipulativeness, confidence and willingness to share as outcome measures) for 12 new, previously unseen social media posts (6 real and 6 misinformation, i.e. 2 misinformation posts per technique learned in Go Viral!). The flowchart in Figure 6 shows the study’s design schematically.

Study 2 design flowchart.

For Study 2, we tested the following hypotheses (all preregistered except

Finally, based on our preregistered exploratory analyses on threat, we hypothesise that:

Sample

Participants were recruited via Prolific Academic (Peer et al., 2017). We first conducted a pilot study (n = 231) as a pre-test to validate our item sets. 11 Next, we ran the full study in three different languages: one with a national sample of the UK (in English), one in French and one in German. 12 Participants were paid GBP 1.75 for their participation; UK participants who took part in the follow-up study were paid an additional GBP 0.25. Participants in the Infographics and Go Viral! conditions were subjected to one (for Infographics) or two (for Go Viral!) attention checks. As per our preregistration, low-effort participants were excluded from the analysis. An a priori power analysis using G*Power with an effect size of d = 0.40, 95% power, three groups and three measurements (pre – post – follow-up) yields a required sample size of n = 261 per language to detect a main effect. However, because the effect size is expected to be smaller for the confidence and sharing measures (Roozenbeek and van der Linden, 2020), and in line with our preregistration, we aimed to recruit 900 participants for each language. Unfortunately, because an unexpectedly large number of participants failed to provide the correct game completion password, the final sample consists of n = 710 valid participants for the UK study, n = 610 for the French study and n = 457 for the German study, for a total of N = 1777. In total, 606 out of 701 UK participants took part in the one-week follow-up study (86% retention). See Table S4 for the full sample composition by country.

Results

We first present the (preregistered) analyses for our three main outcome measures included in the social media posts item rating task (manipulativeness, confidence, and sharing) separately, for both the misinformation and real items, focusing primarily on the difference for each outcome measure before and after the intervention between conditions (i.e. hypotheses

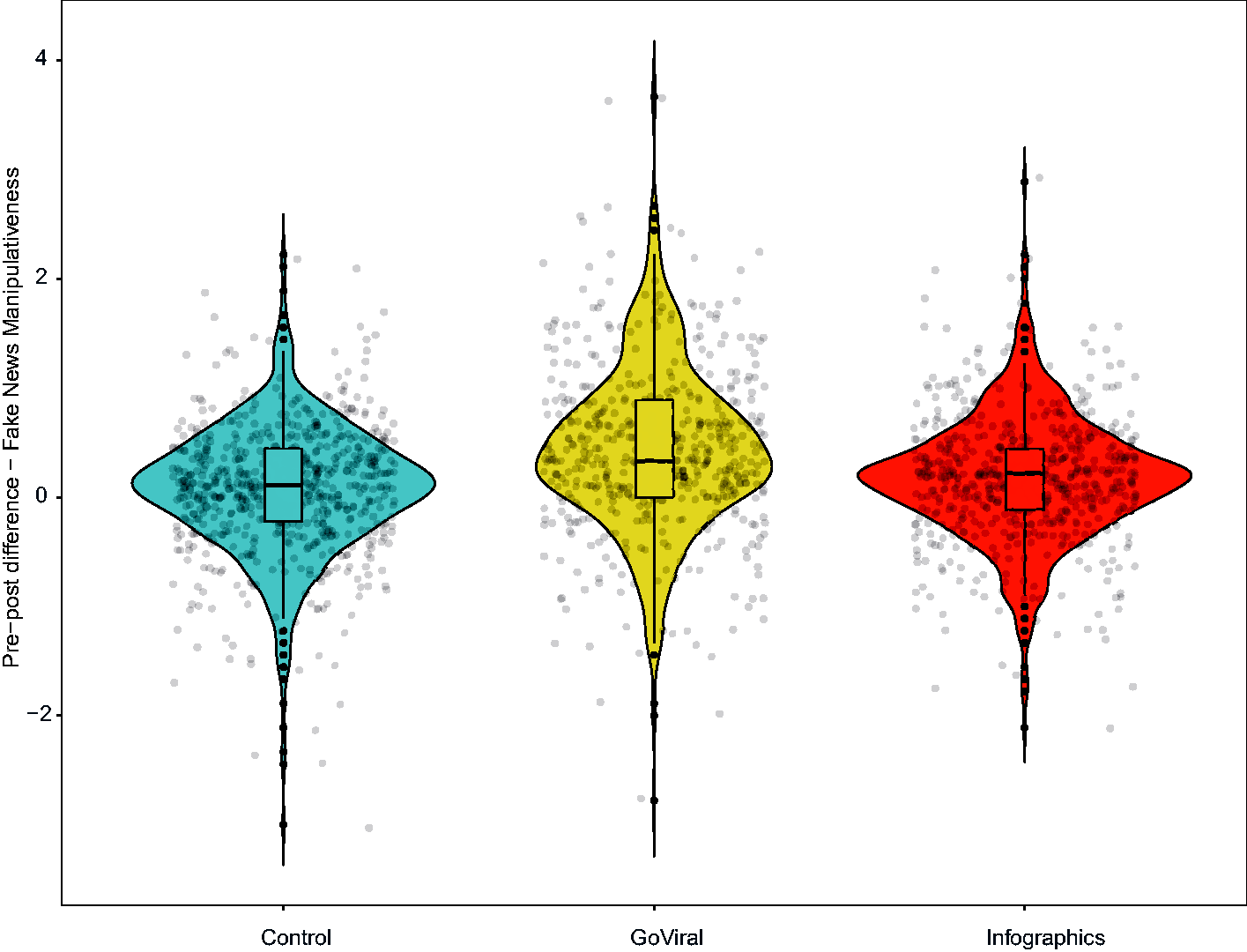

Manipulativeness

For the pooled sample, a one-way between-subjects ANOVA shows a significant effect of condition (control, Go Viral!, Infographics) on the pre–post difference in the perceived manipulativeness of misinformation about COVID-19 (F(2,1774) = 51.69, p < 0.001, η² = 0.055). 14 A Tukey HSD post-hoc comparison shows that the pre–post difference in perceived manipulativeness was significantly larger in the Go Viral! condition than in the control condition (M = 0.45 vs M = 0.08, Mdiff = 0.37, 95% CI (0.28–0.46), ptukey < 0.001, d = 0.56) and the Infographics condition (M = 0.45 vs M = 0.18, Mdiff = 0.27, 95% CI (0.18–0.36), ptukey < 0.001, d = 0.41). We find a smaller effect in the same direction for the Infographics condition compared to the control condition (M = 0.18 vs M = 0.08, Mdiff = 0.10, 95% CI (0.02–0.19), ptukey = 0.015, d = 0.17), indicating that both playing the Go Viral! game and reading through the UNESCO infographics significantly increase the perceived manipulativeness of COVID-19 misinformation. These results are similar (and significant) in all three countries; see Table S6 for a full overview as well as item-level statistics. Figure 7 shows these results in a violin plot.
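The omnibus tests in this section are one-way between-subjects ANOVAs on pre–post difference scores. A minimal sketch of that computation follows, together with the identity η² = F·df_between / (F·df_between + df_within), which holds for one-way designs and lets readers check reported effect sizes against the F statistics. The pooled-SD Cohen's d shown here is one common convention and may differ from the variant used by the authors' software; the function names are illustrative.

```python
import math
import statistics


def one_way_anova(groups):
    """F statistic, degrees of freedom and eta-squared for independent groups."""
    k = len(groups)
    n_total = sum(len(g) for g in groups)
    grand_mean = sum(sum(g) for g in groups) / n_total
    means = [statistics.mean(g) for g in groups]
    ss_between = sum(len(g) * (m - grand_mean) ** 2 for g, m in zip(groups, means))
    ss_within = sum(sum((x - m) ** 2 for x in g) for g, m in zip(groups, means))
    df_between, df_within = k - 1, n_total - k
    f = (ss_between / df_between) / (ss_within / df_within)
    eta_sq = ss_between / (ss_between + ss_within)
    return f, df_between, df_within, eta_sq


def eta_sq_from_f(f, df_between, df_within):
    """Recover eta-squared from a reported one-way F test."""
    return f * df_between / (f * df_between + df_within)


def cohens_d(a, b):
    """Standardised mean difference using the pooled standard deviation."""
    na, nb = len(a), len(b)
    pooled_var = (
        (na - 1) * statistics.variance(a) + (nb - 1) * statistics.variance(b)
    ) / (na + nb - 2)
    return (statistics.mean(a) - statistics.mean(b)) / math.sqrt(pooled_var)


print(round(eta_sq_from_f(51.69, 2, 1774), 3))  # 0.055 (misinformation)
print(round(eta_sq_from_f(42.73, 2, 1774), 3))  # 0.046 (real news)
```

Applied to the reported F statistics, the identity reproduces the η² values given for misinformation and real news manipulativeness.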

Violin plot with jitter of post-pre manipulativeness scores of fake news posts (all countries combined).

For real news, we also find a significant effect of condition on the pre–post difference in perceived manipulativeness (F(2,1774) = 42.73, p < 0.001, η² = 0.046). A Tukey post-hoc comparison shows that the pre–post increase in the perceived manipulativeness of real news is significantly larger in the Go Viral! condition than in both the control condition (M = 0.51 vs M = 0.15, Mdiff = 0.36, 95% CI (0.25–0.46), ptukey < 0.001, d = 0.45) and the Infographics condition (M = 0.51 vs M = 0.14, Mdiff = 0.37, 95% CI (0.26–0.48), ptukey < 0.001, d = 0.45). However, the Infographics condition does not differ significantly from the control condition (M = 0.14 vs M = 0.15, Mdiff = 0.01, 95% CI (–0.01 to 0.11), ptukey = 0.950, d = 0.02). These results are similar across countries; see Table S6. We thus find partial support for hypothesis

To test hypothesis

For real news, while a mixed-design (repeated-measures) ANOVA shows a significant time × condition interaction for the perceived manipulativeness of real news (F(4,1206) = 4.62, p = 0.001, η² = 0.003), there is no significant difference between conditions in real news manipulativeness at the one-week follow-up (F(2,603) = 2.04, p = 0.131, η² = 0.007), indicating that participants across conditions rated real news as equally manipulative in the follow-up study. In addition, a (non-preregistered) between-subjects ANCOVA with pre-test real news manipulativeness as the covariate and follow-up real news manipulativeness as the dependent variable shows no significant difference between conditions (F(2,602) = 2.35, p = 0.10, η² = 0.005). We thus find support for hypothesis
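The (non-preregistered) ANCOVA compares conditions on follow-up scores while adjusting for pre-test scores. One way to obtain its F statistic is a nested-model comparison: fit follow-up ~ pre-test, then follow-up ~ pre-test + condition dummies, and test the reduction in residual sum of squares, F = [(SSE_reduced − SSE_full)/df1] / [SSE_full/df2]. The sketch below does this with plain ordinary least squares (normal equations) on synthetic data. It illustrates the technique under these assumptions and is not the authors' analysis script; all names and data are hypothetical.

```python
def solve_linear(a, b):
    """Solve a x = b for a small square system (Gauss-Jordan, partial pivoting)."""
    n = len(b)
    m = [list(row) + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                for c in range(col, n + 1):
                    m[r][c] -= factor * m[col][c]
    return [m[i][n] / m[i][i] for i in range(n)]


def residual_ss(rows, y):
    """Residual sum of squares of an OLS fit via the normal equations X'X b = X'y."""
    p = len(rows[0])
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(p)] for i in range(p)]
    xty = [sum(r[i] * yi for r, yi in zip(rows, y)) for i in range(p)]
    beta = solve_linear(xtx, xty)
    return sum((yi - sum(b * x for b, x in zip(beta, r))) ** 2 for r, yi in zip(rows, y))


def ancova_f(pretest, followup, condition, levels):
    """F test for condition adjusted for pre-test; levels[0] is the reference group."""
    dummies = [[1.0 if c == lvl else 0.0 for lvl in levels[1:]] for c in condition]
    reduced = [[1.0, x] for x in pretest]                    # intercept + covariate
    full = [[1.0, x] + d for x, d in zip(pretest, dummies)]  # + condition dummies
    sse_reduced = residual_ss(reduced, followup)
    sse_full = residual_ss(full, followup)
    df1 = len(levels) - 1
    df2 = len(followup) - len(full[0])  # n minus number of model parameters
    f = ((sse_reduced - sse_full) / df1) / (sse_full / df2)
    return f, df1, df2


# Synthetic example with a built-in condition effect (hypothetical numbers).
levels = ["control", "Infographics", "Go Viral!"]
shift = {"control": 0.0, "Infographics": 0.5, "Go Viral!": 1.0}
condition = [levels[i % 3] for i in range(30)]
pretest = [1.0 + (i * 7 % 13) / 2 for i in range(30)]
followup = [
    0.8 * x + shift[c] + ((i * 5 % 7) - 3) / 10  # deterministic "noise" in [-0.3, 0.3]
    for i, (x, c) in enumerate(zip(pretest, condition))
]
f_stat, df1, df2 = ancova_f(pretest, followup, condition, levels)
print(f"F({df1},{df2}) = {f_stat:.2f}")
```

With three conditions and 606 follow-up participants, this parameterisation (intercept, covariate and two dummies) gives df = (2, 606 − 4) = (2, 602).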

Bar graphs of perceived manipulativeness of fake news and real news (UK only), by condition, for the pre-test (T1), post-test (T2) and 1-week follow-up (T3).

Confidence

For the confidence measure, a between-subjects ANOVA on the pre–post difference in confidence scores for misinformation is significant (F(2,1774) = 29.47, p < 0.001, η² = 0.032), in that participants in the Go Viral! condition are significantly more confident after the intervention in their assessment of misinformation than the control group (M = 0.34 vs M = 0.05, Mdiff = 0.29, 95% CI (0.20–0.38), ptukey < 0.001, d = 0.44) and the Infographics condition (M = 0.34 vs M = 0.14, Mdiff = 0.20, 95% CI (0.11–0.29), ptukey < 0.001, d = 0.29). 15 In addition, participants in the Infographics condition are also significantly more confident in their assessment of misinformation manipulativeness than the control group (M = 0.14 vs M = 0.05, Mdiff = 0.09, 95% CI (0.002–0.18), ptukey = 0.043, d = 0.15). These results are similar (and significant) in all three countries, see Table S8. 16

For real news, a between-subjects ANOVA shows no significant difference between conditions for the pre–post difference in confidence scores (F(2,1774) = 1.58, p = 0.206, η² = 0.002). This finding is similar in all three countries; see Table S8. We thus find support for hypothesis

To test hypothesis

Sharing

For the sharing measure, a between-subjects ANOVA on the pre–post difference in willingness to share misinformation with others is significant (F(2,1774) = 4.00, p = 0.019, η² = 0.004): participants in the Go Viral! condition show a significantly larger decrease in their willingness to share misinformation after the intervention than the control group (M = –0.18 vs M = –0.07, Mdiff = 0.11, 95% CI (0.014–0.19), ptukey = 0.019, d = 0.15). 17 However, we find no significant difference between the Infographics condition and either the control group (ptukey = 0.12) or the Go Viral! condition (ptukey = 0.71). These effects are directionally similar but not significant in each individual country (see Table S10).

For real news, we find no significant pre–post difference for the sharing measure between conditions (F(2,1774) = 0.28, p = 0.75, η² = 0.0003), with similar results across countries (see Table S10). We thus find partial support for hypothesis

To test hypothesis

Sharing the intervention with others

To test hypothesis

Threat

An ANOVA with traditional threat as the dependent variable and condition (Go Viral!, Infographics, control) and threat/post-test order as the independent variables showed that the overall model was not significant (F(5,1771) = 1.84, p = 0.35). In contrast, an ANOVA with motivational threat as the dependent variable and the same independent variables showed that the overall model was marginally significant (F(5,1771) = 2.19, p = 0.053). This effect was driven primarily by experimental condition (F(2,1771) = 4.06, p = 0.017, partial η² = 0.005). Specifically, Tukey HSD post-hoc comparisons showed that participants in the Go Viral! condition reported higher motivational threat than participants in the Infographics condition (Mgoviral = 5.59 vs Minfographics = 5.43, Mdiff = 0.16, ptukey = 0.028, 95% CI (0.013–0.31), d = 0.15) and the control condition (Mgoviral = 5.59 vs Mcontrol = 5.44, Mdiff = 0.15, ptukey = 0.039, 95% CI (0.006–0.29), d = 0.14). There was no significant difference between the control and Infographics conditions (ptukey = 0.98). These results support our exploratory hypothesis
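Each d reported here is a standardised mean difference. Assuming it uses the pooled standard deviation of the two groups being compared (the text does not say which variant of d was used), the reported Mdiff = 0.16 and d = 0.15 for Go Viral! versus Infographics imply a pooled SD of roughly 0.16 / 0.15 ≈ 1.07 scale points, within rounding. A minimal Python sketch of the pooled-SD formula, run on hypothetical toy scores:

```python
from math import sqrt
from statistics import mean, variance


def cohens_d(group_a, group_b):
    """Cohen's d for two independent groups, standardised by the pooled SD."""
    n_a, n_b = len(group_a), len(group_b)
    # Pooled variance: each sample variance (n - 1 denominator) weighted by its df
    pooled_var = ((n_a - 1) * variance(group_a) + (n_b - 1) * variance(group_b)) / (n_a + n_b - 2)
    return (mean(group_a) - mean(group_b)) / sqrt(pooled_var)


# Hypothetical motivational-threat scores (toy data, not study data)
goviral = [5, 6, 7]
control = [4, 5, 6]
print(f"d = {cohens_d(goviral, control):.2f}")
```

Here both toy groups have an SD of 1 and means one point apart, so d = 1.00; swapping the arguments flips the sign.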

Discussion

We find that both prebunking interventions significantly increase the perceived manipulativeness of misinformation about COVID-19 compared to a control group. This result is in line with Study 1 and holds in a randomised controlled setting across three different languages. Go Viral! participants rated misinformation about COVID-19 as significantly more manipulative one week after the intervention, and were also significantly more confident in their judgments and experienced more motivational threat to defend their attitudes. With regard to real news, and unlike in Study 1, the results of Study 2 are ambiguous: playing Go Viral! increases the perceived manipulativeness of real news immediately after the intervention (similar to findings by Guess et al., 2020), whereas reading the infographics does not. However, unlike the effect on misinformation, this scepticism of real news among Go Viral! participants dissipates entirely after one week: in the follow-up, real news is rated as equally manipulative across conditions. We found no differences between conditions for real news on the confidence and sharing measures.

General discussion

Across two large-sample studies using different research designs, we find strong support for the hypothesis that both active and passive prebunking interventions increase people’s ability to spot misinformation about COVID-19 in social media content. Additionally, in line with previous studies (Basol et al., 2020; Saleh et al., 2021), we find that prebunking interventions increase people’s confidence in their ability to spot misinformation. Crucially, this increase is in the right direction: people became more confident in their ability only when they correctly rated misinformation as manipulative. For Go Viral! players, these two effects remain significant for at least one week after gameplay, even when participants are presented with previously unseen misinformation about COVID-19, indicating robust evidence of a high degree of retention of the inoculation effect (Maertens et al., 2020). These results also speak to the relative benefit of active over passive inoculation, especially in delaying decay over time. We also note that, at least descriptively, the active intervention yielded larger effect sizes for manipulativeness and confidence assessments than the passive intervention (d = 0.56 vs d = 0.17 for misinformation manipulativeness, and d = 0.44 vs d = 0.15 for confidence). Finally, people were significantly more willing to share the Go Viral! game with others in their social media network than the infographics, which points towards a further potential benefit of active over passive prebunking interventions.

With respect to people’s willingness to share social media content about COVID-19, we find that playing Go Viral! significantly reduces willingness to share misinformation about the virus (in line with Roozenbeek and van der Linden, 2020). However, this finding is not significant at the country level (although it is directionally similar). Furthermore, this effect was no longer significant after one week, and we find no difference in sharing willingness for the UNESCO infographics. The null result for the #ThinkBeforeSharing infographics is therefore inconsistent with recent research showing that getting people to pause and think can help reduce sharing of false news online (Fazio, 2020). It is possible that floor effects are at play (i.e. participants had relatively low willingness to share both misinformation and real news even in the pre-test). Another possibility is that the sample sizes for the individual countries were not large enough to detect a significant effect; for example, a post-hoc power analysis for the sharing measure with d = 0.15, α = 0.05 and n = 710 (the size of the UK sample) returns an achieved power of 0.41. Larger samples may therefore be needed to find consistent effects of misinformation interventions on sharing intentions.
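The post-hoc power figure can be approximated as follows. As a hypothetical reconstruction (the text does not state the design or software used), we assume a fixed-effects one-way ANOVA with three conditions, N = 710 and Cohen's f = d / 2 = 0.075, the usual two-group conversion. To stay within the Python standard library, the sketch replaces the noncentral F with its large-error-df limit: a noncentral chi-square with df = k − 1 and noncentrality λ = f²N, evaluated as a Poisson mixture of central chi-square tail probabilities. The closed-form tail used here only covers even df, which includes the three-group case (df = 2). All function names are illustrative.

```python
from math import exp


def poisson_weights(mu, terms):
    """Poisson(mu) probabilities for j = 0 .. terms - 1."""
    w = exp(-mu)
    out = []
    for j in range(terms):
        if j > 0:
            w *= mu / j
        out.append(w)
    return out


def chi2_sf_even(x, df):
    """P(X > x) for a central chi-square with an even number of degrees of freedom."""
    if df % 2:
        raise ValueError("this closed form only covers even df")
    half = x / 2
    term, total = 1.0, 1.0
    for i in range(1, df // 2):
        term *= half / i
        total += term
    return exp(-half) * total


def chi2_isf_even(alpha, df, lo=0.0, hi=1000.0):
    """Critical value c with P(X > c) = alpha, found by bisection."""
    for _ in range(200):
        mid = (lo + hi) / 2
        if chi2_sf_even(mid, df) > alpha:
            lo = mid  # tail still too heavy: critical value lies further right
        else:
            hi = mid
    return (lo + hi) / 2


def anova_power_approx(d, n_total, k_groups, alpha=0.05, terms=200):
    """Approximate power of a fixed-effects one-way ANOVA.

    Assumes f = d / 2 and a large error df, so the noncentral F is replaced by
    a noncentral chi-square with df = k_groups - 1 and lambda = f^2 * n_total.
    """
    df1 = k_groups - 1
    lam = (d / 2) ** 2 * n_total
    crit = chi2_isf_even(alpha, df1)
    # Noncentral chi-square tail = Poisson(lam / 2) mixture of central tails with df1 + 2j
    weights = poisson_weights(lam / 2, terms)
    return sum(w * chi2_sf_even(crit, df1 + 2 * j) for j, w in enumerate(weights))


power = anova_power_approx(d=0.15, n_total=710, k_groups=3)
print(f"approximate power: {power:.2f}")
```

Under these assumptions the approximation lands close to the reported 0.41. An exact noncentral F calculation should differ only marginally at this error df.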

This study also adds to the ongoing debate about the extent to which anti-misinformation interventions influence people’s assessment of real news (Guess et al., 2020; Pennycook et al., 2020; Roozenbeek et al., 2020a). Our findings are somewhat ambiguous: while in Study 1 we find that playing Go Viral! does not meaningfully affect people’s assessment of real news, Study 2 suggests that Go Viral! players find real news about COVID-19 significantly more manipulative immediately after gameplay. Curiously, this effect is observed even for the items that were used in both studies. At the same time, confidence assessments and sharing intentions for real news are not affected by prebunking interventions (unlike those for misinformation), and any heightened scepticism of real news dissipates entirely one week after playing (unlike for misinformation). These results should be seen in the context of the decline in trust in news in recent years (Newman et al., 2020). Indeed, a recent cross-cultural study found that internet users’ navigation of social media was based on a ‘generalised scepticism’ (Fletcher and Nielsen, 2018). Overall, our findings suggest that while prebunking interventions may (sometimes) influence people’s assessment of real news (see also Guess et al., 2020), the presence and size of this effect vary substantially across studies and designs and, in the absence of established psychometric scales, could be due to item effects rather than genuine underlying scepticism (Roozenbeek et al., 2020a). We encourage further research on the implications of heightened scepticism of real news versus misinformation for truth discernment. Moreover, fact-checking is not without risk either (in some cases it can backfire; see Ecker et al., 2020; Krause et al., 2020), which raises the question, as with medical treatments, of whether the benefits of any (anti-misinformation) intervention outweigh its potential side-effects.

Furthermore, inoculation theory has long regarded threat as integral in conferring attitudinal resistance, arguing that ‘inoculation would be impossible without threat’ (Compton and Pfau, 2005: 100–101). This observation is interesting because McGuire never explicitly measured threat himself, and a meta-analysis showed no significant relationship between threat and resistance, urging inoculation researchers to take a closer look at the role of threat (Banas and Rains, 2010). More recent scholarship suggests that ‘motivational threat’ – or threat in the form of motivation to defend oneself against persuasive attacks – is conceptually more consistent with inoculation than traditionally apprehensive threat (Banas and Richards, 2017; Richards and Banas, 2018). Our findings add to this debate by demonstrating promising effects of motivational threat (but not traditional threat) for the Go Viral! condition on the perceived manipulativeness of misinformation. Go Viral! is therefore a promising step towards the development of interventions that motivate people to engage in attitudinal resistance without inadvertently heightening anxiety around the imminent attack or vulnerability of one’s attitudes (Richards and Banas, 2018).

Like any study, ours has several limitations. First, our data are self-reported, and we were unable to assess how playing Go Viral! or reading the UNESCO infographics affects real-world behaviour. Second, we were only able to conduct a one-week follow-up in the UK. Third, only configural or weak invariance across the three language versions of the survey was established for the manipulativeness, confidence and sharing measures in Study 2 (for both the real news and the misinformation items; see Tables S24–S28). However, the alpha levels for each construct were acceptable at both the pooled and the individual country level (0.72–0.92; see Table S5). Moreover, failing to reach a given level of invariance need not prevent analysis, provided researchers report this limitation, as we do here (Putnick and Bornstein, 2016). Fourth, our study may not have had enough power to detect differences between conditions on the sharing measure, although judgments were made within a simulated social media setting (enhancing ecological validity). Fifth, in selecting the treatment comparisons, we favoured real-world generalisability over maximising internal validity; future research may therefore want to adopt a passive control that more closely matches the active condition on key parameters of interest.
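The alpha values cited in these limitations are internal-consistency coefficients. Assuming they are Cronbach's alpha (the most common choice; the text does not name the coefficient), a construct's alpha can be computed from item-level scores as α = k / (k − 1) × (1 − Σ item variances / variance of total scores), where k is the number of items. A minimal Python sketch on hypothetical toy responses:

```python
from statistics import variance


def cronbach_alpha(items):
    """Cronbach's alpha; `items` holds one list of scores per item, same respondents in the same order."""
    k = len(items)
    # Each respondent's total score across the k items
    totals = [sum(scores) for scores in zip(*items)]
    item_variance_sum = sum(variance(item) for item in items)
    return k / (k - 1) * (1 - item_variance_sum / variance(totals))


# Hypothetical ratings: 3 items answered by 4 respondents (toy data, not study data)
items = [
    [1, 2, 3, 4],
    [2, 2, 3, 4],
    [1, 3, 3, 4],
]
print(f"alpha = {cronbach_alpha(items):.3f}")
```

For these toy items the exact value is 129/136 ≈ 0.949; three identical items would give an alpha of 1.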

Conclusion

Across two large-sample studies, we provide strong cross-cultural evidence for the effectiveness of two short and easily scalable prebunking interventions to reduce susceptibility to misinformation about COVID-19. Go Viral!, a five-minute free-to-play browser game, positively impacts people’s ability to identify misinformation about the virus for at least one week after playing, and significantly reduces intentions to share misinformation with others. We argue that prebunking constitutes a crucial step in the mitigation of misinformation about the pandemic. Finally, as the success of COVID-19 vaccination programmes worldwide depends in part on minimising the amount of unreliable information that surrounds them, our findings add to the emerging insight that interventions informed by behavioural science are a crucial tool to help mitigate the spread of misinformation.

Supplemental Material

sj-pdf-1-bsd-10.1177_20539517211013868 - Supplemental material for Towards psychological herd immunity: Cross-cultural evidence for two prebunking interventions against COVID-19 misinformation

Supplemental material, sj-pdf-1-bsd-10.1177_20539517211013868 for Towards psychological herd immunity: Cross-cultural evidence for two prebunking interventions against COVID-19 misinformation by Melisa Basol, Jon Roozenbeek, Manon Berriche, Fatih Uenal, William P. McClanahan and Sander van der Linden in Big Data & Society

Footnotes

Acknowledgements

We wish to thank the Cabinet Office of the United Kingdom for funding the Go Viral! game and the World Health Organization and the United Nations for their support during and after the launch. We are also grateful to DROG and Gusmanson Design for their key role in the design, launch and hosting of the game, and to UNESCO (and specifically Isabel Tamoj) for translating the misinformation infographics into German.

Author contributions

Melisa Basol and Jon Roozenbeek were primarily and equally responsible for the study design, data collection and analysis, visualisations and write-up, under the supervision of Sander van der Linden and with assistance from William P. McClanahan, Fatih Uenal and Manon Berriche. Manon Berriche and Fatih Uenal were responsible for the French and German translations of the Go Viral! game, respectively. William P. McClanahan was responsible for the invariance testing. All authors contributed to editing the manuscript.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

We are grateful for financial support from the University of Cambridge's ESRC IAA COVID-19 Rapid Response Fund and the United Kingdom's Cabinet Office.
