Abstract

Simultaneous localization and mapping technology is commonly used within mobile devices and household appliances: it allows our vacuum robot to navigate the living room and enables us to view augmented reality content on our smartphones. By examining simultaneous localization and mapping–based devices and contrasting them with more traditional forms of cartographic practice, we argue that simultaneous localization and mapping technology not only opens up interior spaces for geographic examination but also calls into question the categorical difference between inside and outside itself. Because simultaneous localization and mapping constructs the surrounding space mathematically in the very moment of its detection, it exhibits a radical situativeness that is freed from the constraints of a stabilized, external database. We propose that this situativeness, which is also inscribed into the resulting highly mobile and fluid visualizations, is the defining feature of a new kind of geomedia that simultaneously establish a vertical and a horizontal geography.

Introduction: What is simultaneous localization and mapping?

Before we can define simultaneous localization and mapping (SLAM) technology by way of the genus proximum et differentia specifica, that is, in contrast to other historic and contemporary modes of cartographic production, we will start out by giving a cursory overview of SLAM as a socio-technical challenge, its history, and the different fields of application in which it is commonly used. Subsequently, we will outline the common theoretical principle underpinning the different solutions toward the SLAM problem and illustrate it against the backdrop of the long-established practice of nautical celestial navigation. In the following sections, we will discuss SLAM in the wider context of the accumulation of geographical knowledge, its relationship to established geographic networks, and—by drawing on the example of spatially aware interfaces—the inherent cooperative properties of the SLAM platform. We will conclude the analysis with a reflection on indoor navigational practices and the way in which the deployment of machine-readable elements and SLAM-based actors increasingly calls into question the difference between the cartographic modes of the interior and the exterior.

The term SLAM and the interchangeably used term CML (concurrent mapping and localization) describe the central problem (and the process by which it can be solved) that any autonomous robotic agent faces within an unknown environment, be it a domestic vacuum robot trying to work out the most efficient route to clean the living room, 1 a disaster relief robot trying to establish a wireless network in disaster areas, 2 or a spacecraft during a complicated docking maneuver: 3 in order to be able to carry out any meaningful navigational task, the robotic agent first has to map the surrounding space and localize itself within the mapped space. The core problem, which was initially framed during the 1986 IEEE Robotics and Automation Conference held in San Francisco by a group of robotics and artificial intelligence (AI) researchers around Peter Cheeseman, Jim Crowley, and Hugh Durrant-Whyte (see Durrant-Whyte & Bailey, 2006; Smith & Cheeseman, 1986; Smith, Self, & Cheeseman, 1990), has essentially stayed the same, although many different implementations of SLAM, some more robust than others, have been found in the meantime. 4 In its simplest form, the SLAM problem boils down to this: Traditionally, the acts of localization and mapping each rely on dependable data, usually provided as the outcome of the other process. 5 The reason for this is simple: If the measurements of the surroundings are correct, but the information about the location of the point from which the measurements were taken is inaccurate, the resulting map will not represent the surroundings adequately, but will be shifted in accordance with said inaccuracy. Conversely, a faulty map (i.e. a map that is generated based on inaccurate measurements) cannot be used to localize oneself within the surroundings, as the measurements of visible landmarks will not correspond to their representations within the map.
As all sensory data used by technical devices are prone to inaccuracy, this creates a non-trivial dilemma, the solution to which we will discuss in the following sections.

We see SLAM devices as complex socio-technological stacks (Bratton, 2016; Straube, 2016) comprising hardware and software layers, as well as the practices of production and use associated with them. Our analysis, therefore, has to tie together both the technical background and the highly situated processes unfolding in situ and in actu. Note that we will only talk about the details of the sensory make-up of the respective devices when this is required for understanding SLAM, both within the narrower context of concrete navigational practices and in the broader historical context of geomedia.

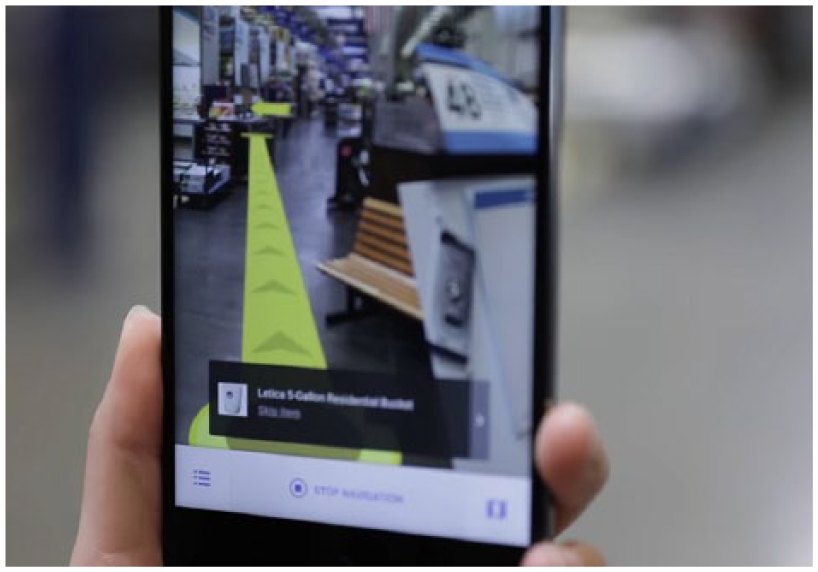

Apart from the autonomous robotic actors mentioned above, there is another class of non-human agents whose functionality is contingent on knowing their own position relative to their own past positions and to the positions of other objects within their sensory reach: augmented reality (AR) 6 and virtual reality (VR) 7 interfaces (see Figure 1) that aim for seamless integration of digital objects into the user’s field of view 8 rely on the tracking of the interface, as well as of certain elements of the physical world (especially surfaces), in order to keep the position of the digital objects shown within the display consistent with the movement of the users (see Normand, Servières, & Moreau, 2012). Even though the technical means by which these navigational tasks are accomplished oftentimes differ from the approach used in truly autonomous SLAM, we argue that it is justified to conceptualize both technologies in a similar fashion. Both employ sensory equipment and movement in order to go beyond the flattened imagery of horizontal geography, and both do so in a highly situative manner. Thus, one of the goals of the following analysis will be to compare autonomous SLAM with interfaces reliant on tracking themselves and their surroundings. By doing so, we hope to gain insight into the concepts underpinning both technologies and their specific practices of space- and map-making.

Figure 1. Indoor navigation with Google Tango.

SLAM in the context of traditional navigational practices

To illustrate the general principle of reference point–based navigation (our genus proximum) and in preparation for the following comparative analyses between different forms of navigation and accumulation of geographic knowledge, we begin this section by outlining a common navigational practice of naval cartographers during the 18th century. As Latour (1987) emphasizes, cartography at the time relied on celestial navigation, a practice which in turn required a multitude of different media acting together: first, a marine chronometer provided the current time, and then the angle between the horizon and multiple celestial bodies had to be obtained by means of a sextant. These pieces of data could then be used to look up the possible positions of the ship and, therefore, the origin of the cartographer’s measurements in a nautical almanac. 9 Of course, the position obtained in such a way 10
subsequently had to be plotted within a map and placed in relation to any landmarks the cartographer wanted to map as well. This example not only emphasizes the complexity of the traditional navigational process and the lengths navigators and cartographers had to go to in order to provide a stable origin of their measurements, it also serves to highlight some of the problems that both naval navigators and autonomous robotic agents share. When no positional data could be obtained through observation of external reference points like stars or beacons, ships had to rely on their on-board sensory equipment, so to speak, by using a practice called dead reckoning: The current position of the vessel could be estimated from the knowledge of the ship’s speed (provided by the chip log), its directional changes (provided by a compass), and the last known position. Errors within this estimation were cumulative—as the accuracy of subsequent steps depended on the precision of the previous steps—and grew with the distance traveled. Small angular deviations early in the process could result in vast differences between the estimated and actual end point. The robotic agent trying to navigate its surroundings essentially faces the same problem, but with vastly different consequences. The noise of its measurements, that is, the measurement errors, is also statistically dependent (Thrun, 2002). The robot will interpret a new measurement’s data in the light of its past observations; errors accumulate and grow in magnitude over time. While the naval navigator could correct the estimated course that had already been traveled based on the new measurements of the ship’s position once an external point of reference became observable again, autonomous robots in indoor environments do not have this kind of luxury. The landmarks they can reference are only landmarks their own sensory equipment has found and identified in their immediate surroundings. As Smith et al. (1990) emphasize:

In situations utilizing inexpensive mobile robots, perhaps the only way to obtain sufficient accuracy is to combine the (uncertain) information from many sensors. However, a difficulty in combining uncertain spatial information arises because it often occurs in the form of uncertain relative information. (p. 2)
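
The cumulative nature of dead-reckoning errors described before the quotation can be made concrete with a small numerical sketch. It is an illustration under stated assumptions, not a model of any historical or robotic system: the function names `dead_reckon` and `drift`, the flat two-dimensional plane, and the constant heading bias of two degrees are all hypothetical choices made for this example.

```python
import math

def dead_reckon(start, legs, heading_bias_deg=0.0):
    """Estimate a position from a last known position plus (heading, distance) legs.

    heading_bias_deg is a hypothetical constant compass error added to every leg.
    """
    x, y = start
    for heading_deg, distance in legs:
        theta = math.radians(heading_deg + heading_bias_deg)
        x += distance * math.cos(theta)
        y += distance * math.sin(theta)
    return x, y

def drift(n_legs, leg_length=10.0, heading_bias_deg=2.0):
    """Distance between the true and the estimated end point after n straight legs."""
    legs = [(0.0, leg_length)] * n_legs
    true_x, true_y = dead_reckon((0.0, 0.0), legs)
    est_x, est_y = dead_reckon((0.0, 0.0), legs, heading_bias_deg)
    return math.hypot(est_x - true_x, est_y - true_y)

if __name__ == "__main__":
    for n in (1, 10, 100):
        print(n, "legs:", round(drift(n), 2), "units off")
```

With a constant bias, the gap between estimated and actual position grows in proportion to the distance traveled, which is the simplest case of the accumulation discussed above; random, statistically dependent noise would behave less regularly but would not shrink on its own.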

This problem is aggravated by the fact that most cases of the use of SLAM demand that the robot moves around during the process of mapping. 11 This means that it has to, at least partially, rely on its own form of dead reckoning, achieved by the tracking of its movement commands or its odometry, which is the entirety of the data generated by the movement sensors, from the get-go. The robot’s odometric sensors suffer from measurement noise in much the same way as the sensors scanning the surroundings do. As this noise is also statistically dependent, there seems to be an impasse: How can the robot complete the task of producing a map and localizing itself within its surroundings, when neither the information about its own path through the world nor its sensory data about the world can be taken for granted? How can it even begin the process, when each first step it could take is equally precarious? The inability to determine whether the map or the estimation of the robot’s pose 12
should come first leads to an infinite regress akin to Plutarch’s classical dilemma, the “chicken-and-egg” problem: As outlined above, creating the map necessitates knowledge of the pose, which in turn relies on the map, and so on, a circle that continues ad infinitum. The option of simply ignoring the uncertainty and continuing the navigational process with the noisy measurements is not viable either, as the cumulative errors would soon lead to huge discrepancies between the SLAM agent’s model of the world and the actual state of its surroundings. As Thrun (2002) succinctly puts it, “[…] both the robot localization and the map are uncertain, and by focusing just on one the other introduces systematic noise” (p. 4). It is therefore impossible to estimate one of the two using the uncertain information about the other one, without further increasing the uncertainty. Both problems have to be solved at the same time, simultaneously, while taking the uncertainty of the robot’s sensory measurements (and the effects this uncertainty has on all future measurements) into account. It follows that the resulting model of the world cannot be deterministic (it cannot be computed with certainty from an initial state), but has to be probabilistic: For every given step of the process, there is a given probability that the measured state, in which both the map and the pose of the robot are compounded, is correct. This probability is conditional. It depends on the question of whether the previous steps, their sensory information about the robot’s movement, and the landmarks it measured are correct or not. While this recursive method 13
allows for on-the-fly corrections of both the robot’s pose and the map, it also gives rise to certain problems that human navigators never had to face. We will outline two of them briefly: First, there is the correspondence problem or data association problem (see Thrun, 2002). Since the robot cannot use absolute points of reference and all its information is contingent on potentially inaccurate measurements, it becomes difficult to tell whether measurements taken at different points in time correspond to the same physical object. Second, the robot has no way of differentiating between static, persistent objects that need to be registered within the map and mobile objects that are not part of the surroundings. Changing the environment during the process of mapping can therefore drastically hamper the robot’s ability to produce an accurate map:

The dynamism of robot environments creates a big challenge, since it adds yet another way in which seemingly inconsistent sensor measurements can be explained. To see, imagine a robot facing a closed door that previously was modeled as open. Such an observation may be explained by two hypotheses, namely that the door status changed, or that the robot is not where it believes to be. (Thrun, 2002, p. 3)
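
The ambiguity in Thrun's door example can be sketched as a conditional probability calculation over the two competing hypotheses. This is a minimal illustration of Bayes' rule, not the estimator used by any particular SLAM system; the function name `posterior`, the hypothesis labels, and all numerical values are assumptions invented for this example.

```python
def posterior(priors, likelihoods):
    """Apply Bayes' rule to a discrete set of mutually exclusive hypotheses."""
    unnormalized = {h: priors[h] * likelihoods[h] for h in priors}
    total = sum(unnormalized.values())
    return {h: p / total for h, p in unnormalized.items()}

# Hypothetical belief before the observation: the robot considers a pose error
# more likely than a change in the environment.
priors = {"door_changed": 0.3, "pose_wrong": 0.7}

# Hypothetical probability of sensing a closed door under each hypothesis.
likelihoods = {"door_changed": 0.9, "pose_wrong": 0.6}

belief = posterior(priors, likelihoods)

if __name__ == "__main__":
    for hypothesis, probability in belief.items():
        print(hypothesis, round(probability, 3))
```

Under these assumed numbers neither explanation is ruled out by the single observation, which is why the measurement has to be weighed against the whole history of earlier estimates rather than interpreted in isolation.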

These examples emphasize that SLAM cannot fully be understood in analogy to established forms of navigation as used by human actors—the specifics of the robot’s sensory make-up have to be considered.

Mapping and the accumulation of geographical knowledge

After this theoretical run-down of the general problem, its potential solutions, and the practical difficulties associated with them, we will give a brief overview of different practices of the accumulation of geographic knowledge, from the seafaring cartographers described by Bruno Latour to the more recent practices of geobrowsing and social navigation, in order to highlight the similarities and differences between these approaches to maps, on the one hand, and SLAM, on the other hand.

When Bruno Latour (1987), in his seminal work Science in Action, describes the process of charting an unknown territory as an incrementally growing chain of consecutive expeditions, he, at the same time, characterizes the way in which the maps that are already available are used in the context of said expeditions. To do so, Latour draws upon the example of French explorer and geographer La Pérouse, who was sent on a cartographic expedition around the world in 1785. One of the missions La Pérouse had to carry out was to verify whether Sakhalin, an island off the northern coast of Japan, was indeed an island or just a peninsula. Latour describes the explorer as being at the mercy of both the terrain and the Chinese who inhabit the island, as he arrives at the unfamiliar shore; while he can only devise his further proceedings after he has seen the coast for the first time and met the native inhabitants, his cartographic work allows subsequent visitors to the same region to get an idea of the surroundings, before their ships have even left port. On the assumption that La Pérouse’s descriptions reached a satisfying level of accuracy, it follows that their position will be much stronger than that of the initial explorer himself, as his work puts them in a position to plan their interactions with both the terrain and the inhabitants weeks and months in advance. When an expedition prepared in this way arrives at a pre-charted destination, it is not the first time that its members are seeing the terrain that was previously described. The initial contact was made before the journey, at the map table or in the library (Latour, 1987). Consequently, such a voyage cannot be considered as an isolated event. It has to be understood as the n + 1th link in a long chain, where n is the tally of preceding exploratory enterprises, the experiences of which the current explorers can draw on. 
As Latour explains, by taking the example of Alan Shepard, the simulator-trained pilot for the first Mercury space flight, the actual journey can assume the form of a mere matching of already acquired information so long as the chain of prior knowledge accumulation is sufficiently long 14 (Latour, 1987). While these circular processes of knowledge accumulation, described by Latour, also exhibit the recursive properties characteristic of SLAM devices, there exists a pivotal difference between the two procedures. Both practices of charting the surroundings consider the previous state of the agent’s world knowledge, 15 but the SLAM device cannot simply accumulate more sensory data, in the hope that its knowledge of the world will get more precise over time. As we outlined above, the sensory noise forbids that. Instead of piling on more and more accurate information, it permanently has to re-evaluate both the current state of its knowledge and all the recursive steps used for its calculation.

Latour draws on La Pérouse’s case to exemplify his concept of immutable mobiles (Latour, 1986), flat inscriptions that are needed to keep information in a stable form while transporting it from the periphery, the frontier of exploration, so to speak, back to the centers of calculation, where the information is accumulated by combining it with other inscriptions. With the advent of digital cartographic practices and geomedia, this assumed immutability of maps gets called into question (Abend, 2018): Highly mobile participatory and cooperative forms of map-making emerge, and digital mapping interfaces allow the immutable map to become editable in the form of mutable images (Lammes, 2017). While these developments often depend on a base map that is kept relatively stable (e.g. by the platform provider), or a pre-existing geographical network that guarantees that the collaboratively generated, highly localized geographical information can be integrated into a bigger map by means of absolute coordinates (like those of the Global Positioning System (GPS)), SLAM has to contend with the absence of these immutable fail-safes. None of the geographical information used in the context of SLAM is immutable; every state of knowledge has to be permanently re-evaluated.

At the same time, the technical agents’ capability for self-determined movement goes well beyond the passive state of “being transported,” described by Latour’s concept of mobility. Consequently, we instead propose to employ the notion of motility, used in drone research to refer to the drone’s potential of self-sustained movement (Bender, 2018; McCosker, 2015). The information contained within an autonomous SLAM device’s databases could therefore rightfully be described as the antithesis of the immutable mobile: the highly mutable motile, whose informational content is never fixed but always modified by a continuous flow of sensor data 16 generated by the device’s self-induced movement.

Satellite networks and external databases

To flesh out the specific relationship between SLAM-based platforms and more recently established geographical networks, the following paragraphs will engage with the examples of the GPS and social navigation apps, such as Waze. This comparative approach also allows us to relate SLAM to recent geographical discourses, especially to questions of verticality and the production of space.

Just as in the case of Shepard’s space flight described above, every trip with a GPS-based navigational system that correlates positional data with a sufficiently large amount of accumulated geographical information (a map) likewise takes the form of aligning the actual trip with the pre-existing information. As long as there is no discrepancy between the state of the route, as it is inscribed in the available map, and its actual state in front of the car, this process of matching can proceed smoothly and without friction. However, the knowledge of this state, as it is presented by the map, is substantially more ephemeral than the position of an island or the shape of a coast; construction zones, accidents, climatic conditions, and so on warrant constant updates to the map in order to keep it functional in a navigational sense. Whereas the challenge during La Pérouse’s time consisted in conducting discrete, oftentimes dangerous expeditions in order to supply the centers of calculation with the inscriptions necessary to painstakingly extend the boundaries of the geographical network outward, in more recent times, a major shift of objectives has taken place: as the cartographic networks encompass the entire globe, 17 their scale has reached a momentary maximum, which in turn leads to the focus being shifted from their further expansion to their preservation and actualization. While La Pérouse tried to find out what a certain part of earth looked like, in order to record the answer on his map, contemporary navigational practices seem to be more concerned with the question of whether that part still matches the description found in an already existing map. To stay within Latour’s descriptive model, recent navigational practices require the constant updating of maps; we live in an age of permanent expeditions into familiar terrain. 18

In a way, SLAM-based platforms fit perfectly into this logic of constant updates and re-evaluations, as they short the circuit of consecutive expeditions and the resultant knowledge accumulation by performing the movement, localization, and mapping, not only at the same time but also a myriad of times within a small time frame. We have seen that these operations do not usually rely on external reference points or established geographical networks. Contrary to network-based approaches, which always presuppose one or more perspectives other than the agent’s own (the auctorial view of a map, the multi-perspectivity of several satellites), SLAM is therefore highly subjective, in that it is only based on the—admittedly multi-sensory—perspective of one entity. In the context of navigational map use (e.g. car navigation systems), the traditional logocentric map view has already given way to an egocentric cartographic mode (Thielmann, 2007), in which “[…] the viewer always remains in the center of the data space that dynamically orients and reorients itself around this center in every moment of movement” (Abend, 2018, p. 102). However, it is only with the advent of SLAM that this egocentric mode comes to form the basis of map creation—a sentiment that is perfectly encapsulated in the fact that the robot usually posits its own position as the origin of the mathematical coordinate space in which the surroundings are then modeled during the mapping process.

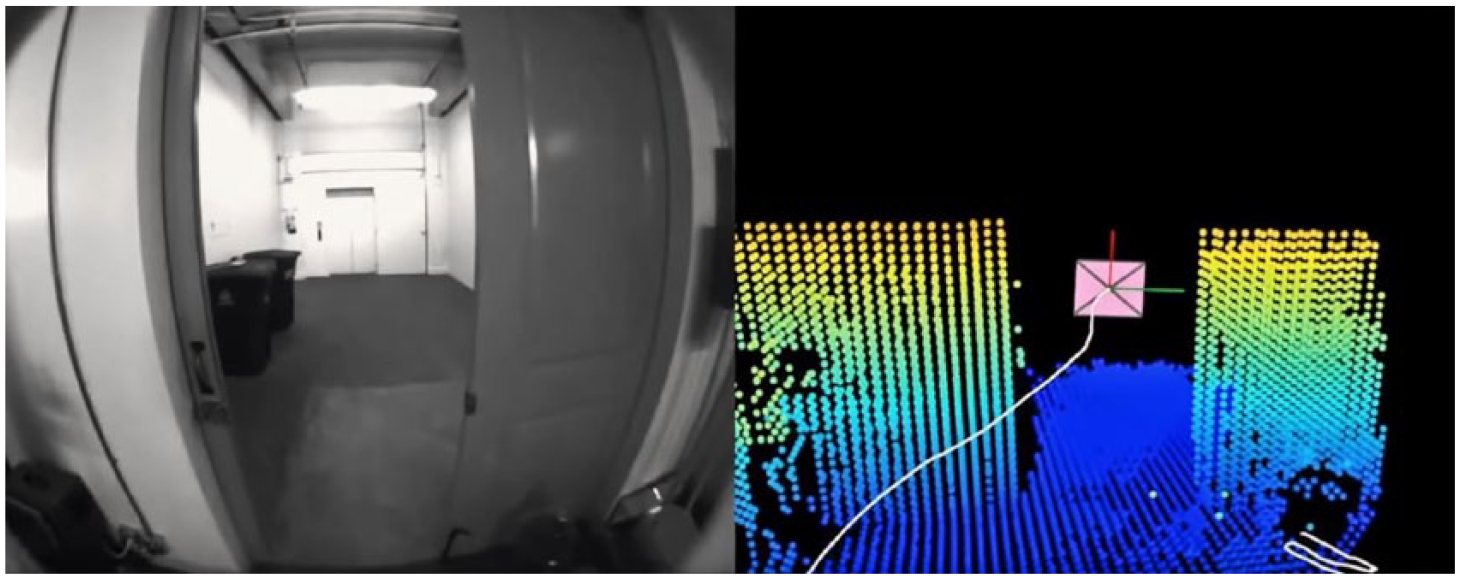

GPS necessarily reduces the entirety of space to a singular coordinate on Earth’s surface and therefore provides a highly abstract, geometric form of localization that needs to be paired with a pre-existing map in order to facilitate actual navigation. By contrast, technologies that sample their surroundings in depth, like the time-of-flight cameras and LIDAR (Light Detection And Ranging) devices used for SLAM, provide a multiplicity of distance measurements, resulting in a point cloud, and subsequently a three-dimensional (3D) model, describing the surroundings in which the user can navigate and with which the user can interact, without having to refer to external databases.
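
How a set of distance measurements becomes a point cloud centered on the device itself can be sketched as follows. The example is deliberately reduced to a planar scan, whereas the devices discussed here work in three dimensions; the function name `scan_to_points`, its parameters, and the sample readings are hypothetical and do not describe the interface of any actual sensor.

```python
import math

def scan_to_points(ranges, start_angle_deg, step_deg):
    """Convert a planar range scan into (x, y) points in the sensor's own frame.

    The sensor sits at the origin (0, 0): no external coordinate system or
    pre-existing map is needed. A range of None marks a beam with no return.
    """
    points = []
    for i, distance in enumerate(ranges):
        if distance is None:
            continue
        angle = math.radians(start_angle_deg + i * step_deg)
        points.append((distance * math.cos(angle), distance * math.sin(angle)))
    return points

if __name__ == "__main__":
    # Hypothetical scan: three beams, 90 degrees apart, the middle one without a return.
    for x, y in scan_to_points([1.0, None, 2.0], start_angle_deg=0.0, step_deg=90.0):
        print(round(x, 3), round(y, 3))
```

Every point is expressed relative to the measuring device, which mirrors the egocentric mode of map creation described in the preceding paragraphs.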

In the light of these findings, Stephen Graham’s (2016) call to bring geographic debates into line “with the proliferating verticalities of our world, a highly mobile and uneven world of often disorientating vertical views, mobilities and structures which can only be understood in volumetric rather than two-dimensional, planar ways” (p. 13), almost reads like a call for wide-scale use of SLAM-based mapping within the field of geography. We argue that, by virtue of the highly mobile, egocentric, and 3D cartographic mode it provides, SLAM can help to overcome the “flat” tradition now seen as a hindrance to the geographical understanding of vertically structured urban spaces (Dodge, 2018; Graham, 2016; Hewitt & Graham, 2015). As we will see in the following sections, SLAM can contribute to urban vertical geography in more than one way: Besides the volumetric measurement and representation of the surroundings, SLAM devices—autonomously or in cooperation with human actors—are not limited to surveying the outside. They can be deployed indoors across multiple floors above and below ground, creating the possibility for new avenues of research concerning indoor mobilities and issues of equality and power formerly deemed inaccessible.

Cooperative mapping

To provide a backdrop for discussing the role of networks and cooperation in the context of SLAM, we will turn to the example of social navigation applications such as Waze, which allow drivers to annotate the map seen by other users in the same area, enabling them to modify their route before they themselves are impeded by obstacles such as accidents, traffic jams, or traffic policemen. Each of these drivers’ trips again becomes the n + 1th journey within the time frame in which the obstacle appeared, the nth one being the journey of the user who initially registered the obstacle. Social navigation apps therefore mark the present end point of an ongoing acceleration (and therefore a shortening) of the circles of accumulation by means of ever-longer chains of operations involving numerous human and non-human actors. 19 As these apps filter the different bits of information according to their perceived relevance for the user (this relevance usually being quantified as the distance between the user’s vehicle and the location from which the information initially was sent), the information distributed in this way is not only temporally transient but also highly localized. The benefit of controlling a territory, that is, mastering one’s own way through traffic, is not the outcome of a singular observation that has to be as precise as possible, but of the sustained, distributed production of data that are accumulated on a central platform.
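
The distance-based relevance filtering mentioned above can be illustrated with a short sketch. It is not a description of how Waze or any other app actually works: the function name `nearby_reports`, the report fields, and the flat kilometer grid are assumptions chosen only to show the principle that a report matters to a user in proportion to its proximity.

```python
import math

def nearby_reports(user_pos, reports, radius_km):
    """Return the reports within radius_km of the user, nearest first.

    Positions are hypothetical planar coordinates in kilometers.
    """
    def distance(report):
        return math.hypot(report["x_km"] - user_pos[0], report["y_km"] - user_pos[1])

    return sorted((r for r in reports if distance(r) <= radius_km), key=distance)

if __name__ == "__main__":
    sample = [
        {"kind": "accident", "x_km": 3.0, "y_km": 4.0},
        {"kind": "traffic jam", "x_km": 0.5, "y_km": 0.0},
        {"kind": "road works", "x_km": 30.0, "y_km": 0.0},
    ]
    for report in nearby_reports((0.0, 0.0), sample, radius_km=10.0):
        print(report["kind"])
```

The same report is therefore visible to one driver and invisible to another, which is what makes the distributed information highly localized as well as transient.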

According to Latour (2005), the network metaphor at the heart of actor-network theory (ANT) relies on three properties: (1) a physically traceable point-to-point connection which (2) leaves empty most of what is not connected and which (3) is “not made for free, […] requires effort […]” (p. 132). It is plain to see that social navigation exhibits these properties: The users are connected via a traceable signal, spaces without users/cartographers are not updated on the map, and an effort is required to keep the maps current. While pure SLAM does not rely on distributed data collection, we nevertheless argue that it has to be understood in terms of a network as outlined above. Let us take a look at the properties: As the robot moves, it creates a connection between two points of measurement, traceable both within the physical surroundings 20 and within the generated map. Spaces not visited/measured remain empty. The robot exerts an effort—in terms of movement and mathematically—to form the network and keep it stable. By looking at it that way, we notice that the network stretches out both spatially and temporally—the links are formed between different points of measurement which correspond to the robot’s position at different points in time. So does the robot produce the map on its own? Certainly, at any given point in time, the robot is alone in its endeavor, but over the course of the whole process, it is forced to enlist the help of all its past instances of measurement to even have a chance at creating a map of the surroundings.

Following Schüttpelz and Meyer (2017, p. 158), who define cooperation as the mutual production of shared goals, means, and procedures, it could be argued that a SLAM device is indeed cooperating with itself. This counter-intuitive reasoning stems from the fact that the old measurements are never discarded and continue to influence the future calculations even after the robot has moved a considerable distance. The decision where to travel next (the goal) and the map used to navigate there (the means) are created by the robot by maintaining a steady dialogue with its past selves incorporated within the measurement data, so to speak. This observation leads us to two distinct forms of cooperation. While social navigation relies on a multitude of actors surveying the environment at (approximately) the same time, SLAM takes an inverse approach: One actor surveys the environment multiple times in short succession, thereby creating different mathematical instances of itself 21 which it can consult later. Notably, social navigation apps remain contingent on some kind of external reference (a rudimentary base map, GPS coordinates) to facilitate cooperation. In contrast, it is the cooperative action itself that produces the map in the case of SLAM.

Apart from this baseline of cooperation on a purely technological level, there are indeed cases of cooperative practices that involve multiple actors within the wider context of SLAM. On the one hand, cooperation between non-human actors is possible. Thus, the task of mapping can be split, allowing for quick surveys of larger areas and—due to the multiple perspectives from which the physical surroundings are scanned—a higher level of precision (Waniek, Biedermann, & Conradt, 2015).

On the other hand, some technological actors inherently assume the presence of a human actor handling the device. As this is the case with all AR and VR stacks, these have to be considered to be cooperative 22 in a twofold way. On the hardware and software layer, the interface cooperates with data generated during the earlier steps of the process in much the same way outlined above in order to calculate its own pose and trajectory. But in contrast to the case of autonomous SLAM, the tasks of wayfinding and navigating the surroundings are solved cooperatively between human and non-human actors. As both actors are located within immediate proximity of each other and act (and are acted upon) simultaneously, playing AR games, placing virtual furniture, or simply creating a 3D model of an object or room via AR interfaces can be understood as a cooperative mapping exercise. By employing SLAM (at least that is the technological promise), everybody can become their own 3D cartographer (see Figure 2), without having to rely on external databases or infrastructures. In turn, the digital model or “digital world” produced by this process becomes a platform and infrastructure, in order to facilitate further interaction within it. In the case of hand-held devices, this model is created cooperatively and procedurally in the instant in which the physical surroundings are sequentially paced out and experienced. Hand-held SLAM devices are therefore a direct successor to earlier forms of geomedia used to trace and map the environment while sensing it in a physical way, that is, with one’s own body (Thielmann, Schulz, & Lommel, 2018). In this way, the 3D models resulting from cooperative mapping processes, like the one described above, resemble trajectories, as were theorized by Torsten Hägerstrand (1982, p. 323) in the context of his time geography.
As the human actor moves the SLAM device around, their own movement is inscribed into the resulting map, just like the path on which an autonomous robot moves through an unknown environment shapes the probabilistic calculations of the spatial model it generates. Here, one is reminded of Latour’s notion, that “a network is not made of nylon thread, words or any durable substance but is the trace left behind by some moving agent” (Latour, 2005, p. 132), be it the trace of a robot’s earlier locations and the subsequently generated data or the trace of a human and non-human actor moving in unison to map their surroundings. Movement through an environment, understood as a sequence of highly localized situations that are automatically measured by the sensor devices, hence not only influences the subsequent measurements but also enables further action within said environment. Consequently, the socio-technical space produced by the SLAM technology can be described as a particular kind of Code/Space (Kitchin & Dodge, 2005, 2011) in which these highly localized situations—or individuations—are transduced not by the mere existence of code but by the distinct ways in which the code, the hardware within which it is embedded, and the human actors move and are moved through space.

Figure 2. Camera image (left) and 3D point cloud generated by the infrared time-of-flight-based Google Tango platform (right).

It is important to note that even though the human actors—their bodies and sensory apparatuses—move through the environment together with the technical device, the model representing the spatial data that are gathered during the use of the AR interface does not depend on the human actor’s perceptions, only on the sensory make-up of the interface and the chosen mode of visualization. Consequently, the resulting models are concerned with human perception only insofar as they are presented as perspectival renderings, ready to be interpreted by the human user. While the data gathering and processing behind autonomous SLAM seems to follow the trend of “operative images” and maps made not to be interpreted and acted upon by humans but by machines (Andrejevic, 2019; Farocki, 2004; Paglen, 2014), the case of cooperative AR/VR mapping runs contrary to that narrative. Here, the human actor’s movement through space and their perception of both the surroundings and the section of the map that has already been generated play a vital role, as they change the way in which the further transduction of space—and thus the mapping process—unfolds.

Outdoor navigation, indoor navigation

Through the satellite networks encircling the planet, the cartographic and navigational networks seem to have reached their maximum expansion. The incorporation of innumerable mobile devices ensures the active (conducted by human actors) or passive (conducted by non-human actors) updating of the respective section of the map. Despite this massive proliferation of the networks, there are still areas in which navigation using the historical and distributed practices mentioned in this section is not only difficult, but turns out to be downright impossible. As we will show in the following section, it is precisely these white spots on the map with which the SLAM problem is concerned.

Since the advent of GPS-based navigation technology, the outside, traditionally painted as “the unknown” and therefore home to all kinds of cartographic activity, seems to have become entirely navigable as far as physical space is concerned. The development of a consistent geographic model of the entire Earth, started by Ptolemy’s “Geographia” and Eratosthenes’ “On the Measurement of the Earth” and massively driven forward during the age of exploration, seems to have reached its logical conclusion with the advent of Google Earth (on the formal level of the map) and the various GPS-based navigational systems that predate it (on the level of navigational practice). Meanwhile, interior spaces have eluded the grasp, and the interest, of our massively upscaled technological systems and have only become the focal point of a new wave of research in the last 30 years, one that is focused on phenomena such as the integration of sensory equipment into everyday objects (Internet of Things, Ubiquitous Computing) and the proliferation of interfaces, autonomous robots, and drones, the performance of which is entirely dependent on spatial data.

Has the man-made interior, traditionally connected with notions of familiarity and intimacy, remained recalcitrant to the various efforts of mapping and the establishment of generalized navigational practices? Has the interior become the true outside, in the sense that it is the only place that lies beyond the reach of the global geographic networks? On the one hand, it can be argued that there was simply no need to develop practices for charting interior spaces. After all, every building based on an architectural blueprint basically comes with its own map. The map of the interior which is yet to be built actually predates the territory, so to speak. On the other hand, generalized navigational practices were indeed formed; they just do not primarily rely on maps, but on other types of indexation (e.g., floor and room numbers). Such a system of indices (or codes) is used to identify a specific physical space by a process of differentiation, that is, by assigning each room a unique name or identification code (see Dodge & Kitchin, 2005). While these labels can, in turn, be used to correlate more abstract intentions (“Meet Mr. Jones”) with a concrete physical space (“Mr. Jones’ Office, Floor 3, Room 12”), they do not provide information on an exact path to be followed, only the destination. It is inherently assumed that the person seeking the information will find the way on their own and that they know the system according to which the floors and rooms are designated.

This kind of pragmatic indoor navigation rests on prior knowledge of general building structure and on the (arrogant) notion that most buildings are not complex enough to get lost in anyway. It not only turns out to be entirely unsuitable for the needs of autonomous robotic agents and position-based interfaces but can also be seen as one reason for the lack of geographic engagement with the inside of the stacked vertical geographies characteristic of the contemporary urban landscape.

To accommodate non-human actors, two developments have been taking place: one approach aims to change the physical surroundings in order to make them more machine-readable or, to be more precise, to add a machine-readable layer to the environment; the other focuses on developing a hardware and software stack that draws on different kinds of sensor data in order to generate a 3D model of the surroundings. The second approach also comes into play when the autonomous robotic agent has to operate in an environment in which augmentation with a machine-readable layer is not feasible, for instance in hazardous environments such as the sites of catastrophic events, the deep sea, and outer space. In the following section, we will briefly describe the first approach, its technical background, and cases of common use as an intermediary stage. This serves to contrast it with the second approach, which will lead us back to the problem of SLAM and the radical change it brings to the fields of geography and geomedia in particular.

Indoor localization, machine-readability

Punch cards and the piano roll, both inventions of the late 19th century, are among the earliest examples of machine-readable media. They were bound to a specific place in the sense that they required certain localized apparatuses to encode and decode their contents, but carried no geographical information themselves. It was not until the invention of the barcode (patented in 1952), and its widespread use in commerce and logistics in the 1970s, that machine-readable information, and thus the indexation it provided, was used to track the movement of objects through space. This tracking was (and still is) indirect, essentially reliant on the same system of checkpoints, inventory lists, and accounting practices used by tradesmen for thousands of years: By checking that an item has passed a certain point (entrance to the warehouse, check-out register, etc.), one can infer the current whereabouts of said item until it passes another checkpoint. This principle still holds true for current radio-frequency identification (RFID)-based systems used for indoor tracking. In a Latourian sense, the whole network of accounting software, barcode printers and scanners, and the people operating them has to be considered. This then allows us to understand how the barcode enables the spatial tracking of inventory over time, while the barcode alone does not show any particular spatial properties—it is only actualized as a geomedium as a part of the chain of operations outlined above.
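The indirect, checkpoint-based inference described above can be illustrated with a minimal sketch. It assumes a simple log of scan events; the `Scan` record, the `last_known_checkpoint` function, the barcode value, and the checkpoint names are all hypothetical and do not stand for any particular inventory system.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Scan:
    """One scan event: an item's code was read at a checkpoint at a given time."""
    item_code: str   # value encoded in the barcode (hypothetical example data)
    checkpoint: str  # name of the fixed passage point where the scan happened
    timestamp: int   # arbitrary time units


def last_known_checkpoint(scans: List[Scan], item_code: str, at_time: int) -> Optional[str]:
    """Infer an item's whereabouts as the most recent checkpoint it passed.

    The barcode carries no spatial information; the location is inferred
    purely from the log of earlier scans.
    """
    relevant = [s for s in scans if s.item_code == item_code and s.timestamp <= at_time]
    if not relevant:
        return None  # the item has not yet passed any checkpoint
    return max(relevant, key=lambda s: s.timestamp).checkpoint


scans = [
    Scan("4006381333931", "warehouse entrance", 100),
    Scan("4006381333931", "check-out register", 250),
]
print(last_known_checkpoint(scans, "4006381333931", at_time=200))
```

Between two scans the item's position is only known to lie somewhere "after" the last checkpoint, which is why the spatial information resides in the log and the surrounding practices and not in the code itself.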

The Bluetooth-based beacon technology that is also used to make space (and especially the smartphone-using human actors within it) machine-readable is likewise embedded in such a network. However, it relies on a different principle of operation, more akin to that of sonar or radar technology. At set intervals, the beacon automatically messages Bluetooth devices that are located within its range and have the respective app installed, informing them about its identification number as well as its signal strength. The application then translates the signal strength into an approximation of the distance between smartphone and beacon and, if multiple beacons are available, determines the position of the smartphone in relation to the beacons by trilateration (commonly, if loosely, called triangulation). While the barcoded items had to be indexed in advance and it is only afterward that their physical movement could be inferred by matching the codes against an external database, in the case of the beacon system, the physical distance is calculated in situ from the strength of the received Bluetooth signal. The signal strength–based localization systems (other than the Bluetooth-based beacon system, there are also wireless LAN (WLAN)-based ones and, of course, GSM localization for mobile phones) are therefore reliant not on a pre-existing index of locations but on the laws of physics that govern the relation between a signal’s strength and the distance it has traveled, that is, on a distinctly spatial relation. It must be noted that neither the beacon nor the mobile device knows anything about the space they both are located in, apart from their position in relation to each other. Thus, one could characterize the process described above as the relative localization of actors that share a network but are located in uncharted territory.
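To make the signal strength–based principle more concrete, the following sketch shows one common way such a calculation can be set up. It is an illustration under stated assumptions, not the procedure of any specific beacon product: it assumes the log-distance path-loss model, a reference signal strength of -59 dBm at 1 m, a path-loss exponent of 2 (free space), and exactly three beacons in a flat, two-dimensional layout. The function names are hypothetical.

```python
import math
from typing import Sequence, Tuple

Point = Tuple[float, float]


def rssi_to_distance(rssi_dbm: float,
                     rssi_at_1m_dbm: float = -59.0,   # assumed calibration value
                     path_loss_exponent: float = 2.0  # assumed: free-space propagation
                     ) -> float:
    """Estimate distance in meters from received signal strength
    using the log-distance path-loss model."""
    return 10 ** ((rssi_at_1m_dbm - rssi_dbm) / (10 * path_loss_exponent))


def trilaterate(beacons: Sequence[Point], distances: Sequence[float]) -> Point:
    """Locate a receiver relative to three beacons from three distances.

    Subtracting the first circle equation from the other two yields a
    linear 2x2 system, which is solved here with Cramer's rule.
    """
    (x1, y1), (x2, y2), (x3, y3) = beacons
    d1, d2, d3 = distances
    a11, a12 = 2 * (x2 - x1), 2 * (y2 - y1)
    a21, a22 = 2 * (x3 - x1), 2 * (y3 - y1)
    b1 = d1**2 - d2**2 + x2**2 - x1**2 + y2**2 - y1**2
    b2 = d1**2 - d3**2 + x3**2 - x1**2 + y3**2 - y1**2
    det = a11 * a22 - a12 * a21
    if abs(det) < 1e-12:
        raise ValueError("beacons are collinear; position is ambiguous")
    return ((b1 * a22 - a12 * b2) / det, (a11 * b2 - a21 * b1) / det)


# Beacon coordinates are expressed only relative to each other, in meters.
beacons = [(0.0, 0.0), (4.0, 0.0), (0.0, 4.0)]
distances = [math.sqrt(5.0), math.sqrt(13.0), math.sqrt(5.0)]
position = trilaterate(beacons, distances)
```

Note that the coordinates are meaningful only within the frame spanned by the beacons themselves: the result locates the receiver relative to the other actors in the network, not within a map of the surrounding space. In practice, measured signal strength is noisy, so real systems typically smooth the readings and fit a position to more than three beacons.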

SLAM-type devices go one step further: The model of the world they create is also based on measurements, but they do not limit their engagement to other technical actors within the same network (WLAN, Bluetooth, etc.). Their sensory devices are aimed at directly measuring the physical properties of the surroundings, be it by capturing them with common RGB (red, green, and blue) cameras or via more sophisticated sensor technology, such as infrared time-of-flight cameras, LIDAR, or ultrasound scanners. As we have outlined above, one of the major problems of this kind of sensor-based surveying is the lack of identifiable external points of reference. In the context of indoor navigation, especially in the case of AR and VR applications, numerous workarounds have been devised in order to provide the technical agents with these kinds of reference points. Besides the signal strength–based systems described above, several approaches make use of the barcode’s spiritual successor, the QR code. These codes, placed around a room, can provide fixed landmarks that the robot or interface can optically track. They subsequently allow the rest of the space to be measured in relation to said landmarks (see Rostkowska & Skrzypczynski, 2015). This assemblage is best described as a hybrid system that is reliant on embedding information in the environment prior to the robotic agent’s navigational endeavors. While earlier code-based systems of localization had the code (through the objects it adhered to) move through certain static passage points to facilitate the scanning, and thereby the tracking, of said objects, this relationship is reversed in the case of codes-as-landmarks. To become a landmark, the code has to remain immobile in one place, while the system performing the scan is highly mobile and does the measurement “on the run.”
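The reversal described above, in which a fixed code anchors a moving observer, can be sketched geometrically. The following is a simplified two-dimensional illustration and not the method of the cited study: it assumes that the landmark's position and orientation in the room are known in advance and that the device can measure the landmark's position and orientation relative to itself. All names and values are hypothetical.

```python
import math
from typing import Tuple

Pose = Tuple[float, float, float]  # x, y in meters; heading in radians
Point = Tuple[float, float]


def rotate(point: Point, angle: float) -> Point:
    """Rotate a 2D point counterclockwise by the given angle."""
    x, y = point
    c, s = math.cos(angle), math.sin(angle)
    return (c * x - s * y, s * x + c * y)


def device_pose_from_landmark(landmark_in_room: Pose, landmark_in_device: Pose) -> Pose:
    """Recover the device's pose in the room from one observed landmark.

    landmark_in_room:   known, pre-surveyed pose of the fixed code (assumption)
    landmark_in_device: the same landmark as measured in the device's own frame
    """
    lx, ly, l_theta = landmark_in_room
    rx, ry, r_theta = landmark_in_device
    heading = l_theta - r_theta            # device heading in the room frame
    ox, oy = rotate((rx, ry), heading)     # offset to the landmark, room frame
    return (lx - ox, ly - oy, heading)


def to_room_frame(device_pose: Pose, point_in_device: Point) -> Point:
    """Express a point measured by the device in room coordinates."""
    dx, dy, heading = device_pose
    px, py = rotate(point_in_device, heading)
    return (dx + px, dy + py)


# A code fixed at (2, 4) in the room, seen 3 m straight ahead of the device.
pose = device_pose_from_landmark((2.0, 4.0, math.pi), (3.0, 0.0, math.pi / 2))
# Any further measurement can now be placed in relation to the landmark.
wall_point = to_room_frame(pose, (1.0, 0.0))
```

Once the device has located itself against the immobile code, every subsequent sensor reading can be carried over into the same room-based frame, which is the sense in which the rest of the space is measured in relation to the landmark.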

Notably, hybrid systems like the QR-based method outlined above come in all shapes and sizes. While the QR code passively waits to be scanned, VR interfaces often employ base stations which actively emit a steady signal, against which the head-mounted displays, as well as hand-held devices, can measure their positions with high accuracy. These stations, which have to be mounted at the edge of the designated space used by the interface, unfold a highly localized, situated geographical network, inside which micro-navigation becomes possible.

The different navigational practices deployed by human and robotic actors in interior spaces, as outlined above, demonstrate that the current age of the exploration of the inside is not facilitated by the extension of established cartographic networks to the interior. Rather, it is the hybridization of technical devices that can act (semi-)autonomously while being dependent on highly localized networks provided by sensory media that makes the inside–outside difference seem more and more like a continuous spectrum instead of the absolute boundary it has traditionally been for navigational devices, networks, and practices. In the future, the wide-scale deployment of devices that rely purely on SLAM and shun any networks and external reference points would not only denote the dissolution of the indoor/outdoor categorization but also open up the indoors to a whole new mode of geographic inquiry, allowing extensive mapping of interior spaces and the ways human and non-human actors move through and interact with them.

Conclusion

We have demonstrated here that the product of a successfully implemented SLAM platform is a map of the surroundings that is generated autonomously, ad hoc and in situ, while, simultaneously, the position of the mapping agent is located within said map. The whole chain of subsequent expeditions and processes of knowledge accumulation that took La Pérouse and his fellow seafarers of the age of exploration many years to accomplish (if they ever returned) is now condensed into the runtime of SLAM devices that operate probabilistically and constantly refer back to their own past steps and the uncertain estimations associated with them.

This ever re-calculating nature of the mapping process together with the principal independence from human actors, GPS navigation, and pre-stabilized maps makes autonomous SLAM devices the archetypical mutable motiles that nevertheless remain combinable with—but do not rely on—other forms of geomedia. As these mutable motiles depend on their ability to move in order to chart the highly localized situations they find themselves in, the situativeness they exhibit is rooted not only in their independence from established networks but also in their motility. SLAM devices can therefore be likened to Tim Ingold’s (2009) inhabitants, who

[…] know as they go, as they journey through the world along a path of travel. Far from being ancillary to the point-to-point collection of data to be passed up for subsequent processing into knowledge, movement is itself the inhabitant’s way of knowing. (p. 41)

As our engagement with the phenomena of social navigation and AR/VR showed, SLAM devices are able to act as cooperative geomedia platforms, cooperating with both prior instances of their own mathematical modeling and other non-human and human actors, whose traces or trajectories are embedded in the resulting maps.

Our cursory foray into the history of inventory keeping and indoor navigation revealed that the border between interior and exterior in the physical, architectural sense has historically often correlated with being outside or inside of established geographical networks. Thus, by gaining independence from said networks, SLAM not only has the potential to fill the cartographic blank spots associated with the interior but, in the process, also destabilizes the boundary between interior and exterior itself. SLAM therefore advances the verticality or three-dimensionality of cartography in a twofold way: On the one hand, the ability to operate indoors enables inquiries into the plasticity and verticality of stacked spaces, vertical mobilities, and indoor navigational practices; on the other hand, the egocentric, 3D cartographic mode employed by hand-held SLAM devices inherently transcends the established “flat” modes of representation by giving a volumetric account of the surroundings.

Acknowledgements

This article was produced within the context of project A03 (Navigation in Online/Offline Spaces) of the DFG Collaborative Research Center 1187 Media of Cooperation.