Abstract

Harmful and illegal content on social media is widespread, but what should be taken down is widely disputed, creating ongoing challenges for resolving the tension between free speech and user safety. User reporting is a key mechanism for addressing such content, yet little is known about who reports, what motivates them, and how they compare to the general population. We study these questions using two datasets: (1) a unique survey with individuals verified to have previously reported potentially illegal content to a third-party organization in Germany and (2) a quota-based sample approximating the German population. We show that individuals who have previously reported potentially illegal content via a third-party reporting service represent a distinct, civically engaged subset of users. They tend to be older, more often men than women, highly educated, highly politically active, and markedly left-leaning. They are not politically representative of the German population and take a distinctly different position when balancing free speech and protection from harm, putting more emphasis on protecting from harm. Reporting users’ motivations appear primarily civic-minded rather than reactive, especially among those who do it frequently and those intervening on behalf of others. These insights highlight reporting as a form of digital civic participation and offer perspectives relevant for understanding political engagement online, platform governance, user agency, and trust and safety regulation.

Introduction

Harmful content remains widespread on social media; however, what content should be removed is disputed, posing challenges for resolving the tension between free speech and user safety. To combat harmful – and sometimes illegal – content, platforms have traditionally relied not only on automated detection and human moderators but also on user flagging and reporting (Singhal et al., 2023). User-centered moderation, one of the earliest forms of moderation on social media (York, 2022), has increasingly moved to the forefront – epitomized by X’s (Twitter’s) 2021 Birdwatch program, later rebranded on X as Community Notes. The shift accelerated in January 2025, when Meta’s CEO Mark Zuckerberg announced that the company would end U.S. fact-checking and rely increasingly on users reporting content before Meta takes action (Isaac & Schleifer, 2025). Coupled with Meta’s termination of third-party fact-checking – reversing years of prioritizing automation (Meta, 2020) – these moves underscore a renewed reliance on users and place greater responsibility on them.

User reporting seems democratic in nature, but limited flagging options and opaque enforcement often leave users frustrated and doubtful that their reports matter (Crawford & Gillespie, 2016; Jhaver & Zhang, 2023). Many users abandon reports when categories are unclear or outcomes uncertain, reinforcing perceptions of arbitrariness (Blackwell et al., 2018; DiFranzo et al., 2018). In response to these limitations, some countries have established independent third-party reporting portals that allow citizens to report abusive or potentially illegal content outside of platform environments.

Reporting has also drawn significant criticism at times. For example, platform mechanisms are criticized for legitimizing takedowns based on single reports that may not reflect majority views (Crawford & Gillespie, 2016), fueling debates over unequal treatment and uneven moderation across groups (Kemp & Ekins, 2021). Users can also exploit reporting for strategic flagging to silence opponents (Crawford & Gillespie, 2016). These dynamics reveal a key tension: reporting can safeguard users but also risks being weaponized in ways that undermine free speech and democratic discourse.

Despite the central role reporting plays in platform accountability – and the costs and uncertainties tied to enforcement – we still know remarkably little about users who report content. Evidence from Germany suggests that just over half of politically active individuals have reported content directly to a platform (Koch et al., 2025), compared with 34% of the general population, while only a small minority – around 5% – report content via third-party organizations (Brennauer et al., 2024). Yet it remains unclear who these reporters are, how they differ from the broader public, and what motivates them to take action.

A key obstacle to answering these questions is the lack of reliable ground truth. Platforms do not systematically disclose data on reporting behavior, making it difficult to assess who reports content, how frequently, or under what conditions. As a result, existing research relies heavily on self-reported survey measures or platform-specific case studies, which limit our ability to characterize reporters and to link reporting behavior to broader patterns of political engagement, attitudes, and trust.

To address these gaps, we adopt an alternative empirical strategy that focuses on reporting through a third-party portal. We draw on two complementary surveys: a novel dataset of verified individuals (

Studying reporting via

Our findings reveal that reporting is performed by a distinct and civically engaged subset of the public. Reporting users tend to be older, more often men than women, and highly educated, and – relative to the general population – include a substantially overrepresented share of non-binary and “other” respondents. They are also highly politically active, more left-leaning, value protection from the harm speech may cause, and differ from the general population in their attitudes, digital behaviors, and motivations. While some respondents cite personal experiences with hate speech as their main motivation for reporting content in general, the majority report being motivated by a sense of civic responsibility or concern for the integrity of online spaces, especially among frequent reporters or when harm affects others. The primary motivation for turning to an independent reporting portal such as

Content Moderation and User Reporting

Platforms employ a range of content moderation mechanisms to address harmful and illegal content. While much of this content is removed through algorithmic detection, moderation also involves human review – either in combination with automated systems or independently (Crawford & Gillespie, 2016; Singhal et al., 2023). In addition, platforms rely on users to report or “flag” content they believe violates platform policies or national laws. To facilitate this, they generally provide reporting tools and publish guidelines to help users identify violative content. However, these guidelines are often excessively long and complex, especially on very large platforms, making them difficult to understand, while some platforms, usually smaller ones, provide no guidelines at all (Nahrgang et al., 2025).

After years of emphasis on and investment in automated content moderation, user reporting moved back to center stage in January 2025, when Mark Zuckerberg announced that Meta would end its partnership with third-party fact-checkers in the United States and, for lower-severity violations, would begin to rely more on “someone reporting an issue before [Meta] takes action,” framing this shift as a return to free expression (Meta, 2025). While the European context differs, owing to stricter legal frameworks such as the DSA, user reporting remains a central pillar of enforcement. Its importance stems from the limitations of algorithmic and human moderation, which are prone to error and can fail to detect harmful or illegal content. 1 In such cases, reporting becomes a crucial fallback mechanism, with platforms often relying on user reporting to enforce their rules, improve algorithmic training, and inform human review processes (Crawford & Gillespie, 2016). Yet this reliance is contested: reporting systems are criticized for being reactive rather than preventive (Crawford & Gillespie, 2016; Douek, 2022), for being vulnerable to coordinated “strategic” flagging, and for being far more complex than a simple binary user decision, as they constitute an “interaction between users, platforms, humans, and algorithms, as well as broader political and regulatory forces” (Crawford & Gillespie, 2016, p. 411).

The European Union’s (EU) DSA, adopted in 2022, is the EU’s new regulatory framework for online platforms. It sets out obligations for risk management, transparency, and accountability in content moderation and pivots from strict takedown mandates to transparency duties scaled by platform size (European Union, 2022). Crucially, transparency here concerns not just published rules but their implementation through moderation mechanisms such as user reporting. However, as Busch notes, the DSA “abstains from the difficult task of drawing a line between legal and illegal content” (Busch, 2022, p. 53), leaving much discretion to private actors in defining such speech. To address this, the DSA created “Trusted Flaggers” – recognized organizations whose reports are prioritized (European Union, 2022).

If reporting is viewed as a form of user agency within content moderation, its value for safeguarding public discourse hinges on whether – and how – people use these mechanisms. What motivates users to intervene and who chooses to report are therefore central questions: do reporters mirror the public, or are they skewed by attitudes and demographics? Answering this is essential to assess whether reporting strengthens democratic participation and accountability online or risks amplifying existing biases and inequalities.

Reporting, Participation, and Online Behavior

A useful starting point is to examine how users respond to potentially illegal and harmful content online. On large platforms, low identifiability and diffusion of responsibility reduce the perceived obligation and social pressure to act – people expect others to intervene or view the issue as outside their remit (Aleksandric et al., 2022; Blackwell et al., 2018; Obermaier et al., 2016). Such reluctance, and the sense that harmful or illegal content is inevitable, signals both normalization of harm and resignation about platforms’ capacity or will to enforce rules (Theocharis et al., 2025).

Possible user responses to potentially illegal and harmful content span a spectrum: low-engagement actions such as unfollowing, muting, or blocking; reporting of content or accounts (for Community Guidelines violations or alleged illegality); and counterspeech, either publicly or via private messages. Compared with reporting, counterspeech typically carries higher costs because it requires direct engagement – often in public, exposing users to potential confrontation or backlash. Nonetheless, empirical work documents modest but reliable effects of empathy-based appeals, especially when individuals connect their own experiences as targets to their behavior toward outgroups (Gennaro et al., 2025; Hangartner et al., 2021), and shows that identity-based counterspeech can significantly reduce online hate (Munger, 2017; Siegel & Badaan, 2020). Related evidence indicates that empathy increases public intervention against cyberbullying; however, the effects are more pronounced when interpersonal similarity is low (Wang, 2021).

Intervention hinges on perceived threat, moral judgment, and identity-linked norms. Moral outrage, often triggered by perceived victimhood, both fuels virality by increasing sharing and motivates corrective action (Gray & Kubin, 2024). Users are more likely to intervene when they believe enforcement is transparent and fair (Shim & Jhaver, 2024); among young German adults, personal responsibility and empathy raise direct and indirect intervention (Obermaier, 2024; Wang, 2021). Non-intervention commonly reflects limited knowledge of reporting, uncertainty about illegality, low interest, or doubts about impact (Böswald et al., 2025; Doseva et al., 2024).

Design choices further shape intervention. Making moderators who flagged content visible can deter bystanders and suppress peer enforcement (Bhandari et al., 2021), whereas exposure to bystander interventions and other norm-conforming responses can spur action (Blackwell et al., 2018). Users favor low-cost options like reporting over higher-cost counterspeech (Hansen et al., 2024); if reporting is ambiguous or opaque, perceived costs rise, and bystanding persists. Beyond psychology and platform design, reporting is political, moral, and normative; unlike visible engagement or counterspeech, it is private, platform-directed, and procedurally mediated (Crawford & Gillespie, 2016; Suzor, 2019).

Finally, political identity and moral priorities shape both action and inaction. Partisans often agree on which hate speech is most censorable but misperceive the other side’s priorities, fueling conflict over moderation (Solomon et al., 2024). Debates over content moderation actions mirror these divides, with both left and right alleging bias (Appel et al., 2023; Corduneanu-Huci & Hamilton, 2022; Kemp & Ekins, 2021; Vogels et al., 2020). Left-leaning users generally advocate stricter platform rules, while others reject content moderation as censorship or hold back when the statements align with their identity or target lower-priority outgroups (Kozyreva et al., 2023; Munzert et al., 2025; Pradel et al., 2024; Rasmussen, 2022). Thus, intervention hinges on whether users expect their action to matter, at what personal and social cost, and in light of the norms and identities that make some speech, and some targets, feel more deserving of intervention than others.

Understanding who remains a bystander versus who reports helps illuminate how citizens engage, assume responsibility, and exercise agency online. Reporting operates as delegated governance, flagging content against platform norms, democratic values, and law (Gillespie, 2018). Framed as civic responsibility and personal agency, it is constrained by opaque processes, limited feedback, and strategic misuse, exposing tensions between safety and free expression (Theocharis et al., 2025). As a core moderation mechanism, reporting can both enable meaningful user participation and safeguard speech through due-process safeguards (clear standards, transparency, accessible notice-and-appeal). Realizing this potential requires knowing who reports and why. We therefore ask: Who reports content, how do reporters differ from the general population, and what motivates them to report?

Data Collection

To identify who reports potentially illegal content and why, we use a unique dataset of confirmed reporters to the German third-party portal

Following public announcements on the “Trusted Flaggers,” targeted attacks against the reporting portal occurred during our study, and the link to the survey became one target of these attacks. To safeguard data integrity and only include true reporters, we applied predefined exclusion rules (A2, Supplementary Material, hereinafter SM), removing 156 inauthentic responses and yielding a final sample of

To contextualize reporting users relative to the general population, we draw on a quota-based survey of the German population aged 18 and over (

While quotas and post-stratification weights were used to approximate the German population, the sample is not fully representative. It includes only respondents identifying as male or female, covers an age range of 18 to 69, and slightly underrepresents individuals with high levels of education; these deviations reflect adjustments made at the survey provider’s request after the relevant quota could not be fully filled.
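The weighting logic mentioned above can be illustrated with a minimal sketch. It assumes a single stratification variable and uses made-up population shares and responses; the function names, cells, and numbers are hypothetical and do not reproduce the weighting scheme actually applied to the survey.

```python
from collections import Counter

def poststratification_weights(sample_cells, population_shares):
    """Weight each respondent by (population share / sample share) of their cell."""
    counts = Counter(sample_cells)
    n = len(sample_cells)
    return [population_shares[cell] / (counts[cell] / n) for cell in sample_cells]

def weighted_share(values, weights):
    """Weighted proportion of respondents whose value is True."""
    return sum(w for v, w in zip(values, weights) if v) / sum(weights)

# Hypothetical toy sample: 2 younger and 6 older respondents.
cells = ["18-39"] * 2 + ["40-69"] * 6
# Hypothetical population shares for the same two cells (not census figures).
population = {"18-39": 0.4, "40-69": 0.6}
# Hypothetical yes/no answers, e.g. "has reported content to a platform".
answers = [True, True, True, False, False, False, False, False]

weights = poststratification_weights(cells, population)
unweighted = sum(answers) / len(answers)
weighted = weighted_share(answers, weights)
```

In this toy case the younger cell is underrepresented in the sample, so its respondents receive weights above 1 and the weighted share moves toward their answers.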

We first profile reporting users – their sociodemographics, social media experiences, and civic–political engagement, among others. We then compare them to the German survey and German census data to assess whether they form a distinct subgroup. Finally, we analyze motivations to report, including subgroup differences, and open-text answers to the question of what motivated respondents to report to

Measurement

Sociodemographic Measures

Our main points of comparison are sociodemographic characteristics, classic determinants of political participation (Verba & Nie, 1987). To analyze the differences between reporting users and the general population, we examine relative differences in respondents’ age, gender, and education. 6

Reporting Behavior

We measure reporting users’ reporting behavior by looking at the shares of previous reporting behavior to

Political Measures

We include political behaviors and attitudes relevant to civic engagement and reporting: political participation, trust in institutions and media, ideology, satisfaction with democracy, political interest, and experiences with harmful content that may shape preferences and actions (Vogels et al., 2020). 7 We further examine attitudes toward freedom of speech, central for how users engage with platform content, and views on content moderation and engagement with its mechanisms (Howard, 2019; Theocharis & De Moor, 2021; Theocharis et al., 2025).

Motivation to Report

Prior work links online intervention to civic-mindedness and personal experience, among other motives (Brennauer et al., 2024; Delmas, 2018; Gennaro et al., 2025; Koch et al., 2025; Porten-Cheé et al., 2020). For respondents’ motivations to report in general, we offered: “Concern for the online community,” “Personal experience with hate speech,” “Advocacy for a specific cause,” “My civic duty,” “It is a bad way to treat people,” and “Other/I’m not sure/Prefer not to say.” 8 We then recoded selections into four categories: Prosocial Motives (“Concern for the online community,” “It is a bad way to treat people”), Personal Experiences (“Personal experience with hate speech”), Civic Motivation (“Advocacy for a specific cause,” “My civic duty”), and Other.
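The recoding step described above can be sketched as a simple lookup. The mapping follows the categories listed in the text; the function names are hypothetical, and the sketch assumes one selected option per respondent, which the text does not specify.

```python
from collections import Counter

# Response options quoted in the text, mapped to the recoded categories.
RECODE = {
    "Concern for the online community": "Prosocial Motives",
    "It is a bad way to treat people": "Prosocial Motives",
    "Personal experience with hate speech": "Personal Experiences",
    "Advocacy for a specific cause": "Civic Motivation",
    "My civic duty": "Civic Motivation",
}

def recode_motivation(response):
    """Return the recoded category; unlisted answers fall into 'Other'."""
    return RECODE.get(response.strip(), "Other")

def category_shares(responses):
    """Relative frequency of each recoded category among the responses."""
    counts = Counter(recode_motivation(r) for r in responses)
    total = sum(counts.values())
    return {category: count / total for category, count in counts.items()}

# Hypothetical answers for illustration only; not data from the study.
example = [
    "My civic duty",
    "Advocacy for a specific cause",
    "Concern for the online community",
    "Prefer not to say",
]
shares = category_shares(example)
```

Routing every unlisted answer to "Other" keeps the recode total, so each response lands in exactly one of the four categories.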

To gain a more detailed view of respondents’ motivations for reporting to

Results

Given the limited existing research on reporting users, our analytical strategy is descriptive and correlational. We characterize reporting users’ political attitudes, behaviors, and motivations in comparison to the German public, using an inductive approach to identify empirical patterns that may inform future theory-building and causal research. We begin by descriptively characterizing reporting users and their reporting behavior in comparison to the German population.

Who Are the Reporting Users and What Do They Report?

Sociodemographic Profiles

Figure 1 compares the demographic profiles of reporting users, the German census, and the broader quota-based survey conducted in Germany. The majority of confirmed reporters fall within the 40 to 59 age brackets, with an average age of 47 years. This average closely matches the German survey for the same age range (46 years) and is comparable to the overall German population average (45 years). The majority of reporting users are male (54%), while 39% are female and 7% identify as “Other” – a category that includes the options “Other,” “Transgender,” “Non-binary,” and “Prefer not to say.” While men are the majority within the reporting users group, the most pronounced gender difference concerns the higher prevalence of non-binary or other gender identities among reporting users.

Bar plots with error bars showing the relative frequencies of demographic characteristics across the two samples, benchmarked against official census data for the German population. The German comparison sample was fielded using predefined age quotas (with a top-coded upper age category) and weighted to approximate the general population within these categories.

Reporting users are highly educated: 45% hold a high level of education (at least a bachelor’s degree), and 51% fall into the mid-level category (see Table A1, SM). Compared to the general population, those who report content to

Users’ Reporting Behavior

We examine reporting frequency, where content was encountered, its type, and target-relatedness. Most respondents reported to

Political Attitudes and Behavior

Although there is limited evidence on individuals who report content to social media platforms, existing research suggests that politically active individuals tend to report more frequently than the general public (Brennauer et al., 2024; Koch et al., 2025). It is therefore reasonable to expect that people in our sample also exhibit higher levels of political engagement than the broader population. 10 Consistent with previous work, this is what we find (Figure 2).

Bar plot with error bars showing, for each sample, the percentage of respondents who reported having done each activity during the last 12 months (response options: “Yes” or “No”). The percentages for the German survey are weighted to approximate the general population.

Reporting users are markedly more politically active than the German population. As Figure 2 shows, 81% have posted political opinions and 84% have commented on political posts; 72% follow politicians or organizations on social media. Furthermore, 84% have signed a petition, 43% volunteered for a community project, and 50% attended a protest. These gaps underscore that

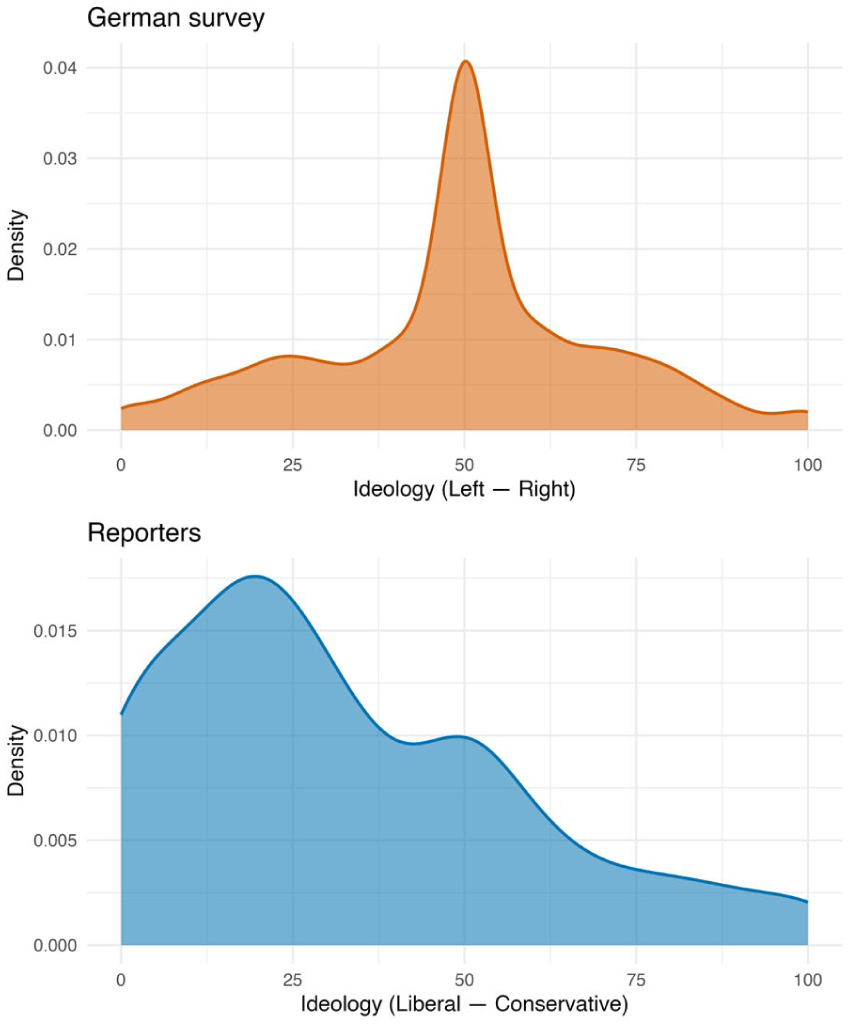

Notably, reporting users are overwhelmingly left-leaning compared to the German population (Figure 3). Their ideological distribution is sharply concentrated at the left or liberal end of the spectrum, whereas the German reference sample is more centrally distributed. The magnitude and direction of this divergence point to a substantively meaningful ideological imbalance among reporting users, complementing their high levels of political engagement.

Density curves of ideology measured on a scale from 0 = “Left/Liberal” to 100 = “Right/Conservative.” The German scale uses “Left/Right”; the reporters survey uses “Liberal/Conservative.” The German survey is weighted to approximate the general population; reporters are unweighted.

Given their high political engagement, one might expect reporting users to also be more trusting of democratic institutions. Interestingly, our sample of reporting users displays levels of institutional trust similar to those of the general population (Figure A2, SM), although reporting users trust the police less and the national parliament more. We see a sharper contrast in media trust: reporters are less trusting of traditional media and show the lowest trust in social media platforms. At the same time, they report higher satisfaction with democracy and very high political interest. Overall, reporting users emerge as civically and politically engaged, ideologically skewed to the left, digitally active, and more critical of platforms as institutional actors.

Experiences Online

We examine whether reporters’ behavior reflects harsher online experiences. Compared to the German public, reporting users report substantially more negative experiences (Figure A8, SM A5.4) – both witnessing and suffering attacks based on opinions or identity, as well as engaging in self-censorship due to feared reactions. They are also more likely to have had posts flagged, labeled, or removed, indicating greater exposure to moderation. An additional item fielded only to reporting users reveals that majorities report being called offensive names and encountering intolerance (both 76%), with over half reporting experiences of physical threats (Figure A10a, SM). As discussed in the SM, these elevated exposure rates reflect the highly selected nature of the reporting user sample rather than the prevalence in the general population. Finally, experiences of online hostility are patterned by gender, with male reporting users reporting higher exposure across most experience categories. Respondents identifying as “Other” also report exposure across several categories, though estimates are based on a smaller group (Figure A9, SM). Overall, reporting users face a markedly harsher online environment, which plausibly feeds into their intervention behavior. 11

Freedom of Speech Values

Do reporting users, given their harsher online experiences, prioritize protection from harm over free speech? Prior harm and moderation can push attitudes either toward stronger protection or, conversely, toward heightened concern for expression. We find that reporting users tilt toward protection: on a 0–100 scale (0 = “strongly prefer freedom of speech,” 100 = “strongly prefer protection from harm”), reporting users score higher than the German public, which leans toward unrestricted expression (Figure A11, SM). This pattern holds across items: reporters are more supportive of limiting harmful speech and misinformation, while the general population places relatively more weight on protecting expression, even when offensive or misleading (Figure A12, SM). In short, reporting users endorse free speech as a democratic value but set clearer limits when speech causes harm, consistent with norms likely motivating their proactive reporting.

Attention Checks

We examined differences between respondents who passed and failed the attention check. Despite age and gender differences in attention-check performance (Figure A18, SM), substantive outcomes are similar across groups (Figure A19, SM); as discussed in the SM, attention checks likely capture variation in digital literacy rather than inattentiveness per se (Guess & Munger, 2023; Munger et al., 2021) (SM A5.7).

Taken together, our findings reveal that, in comparison to the general public, users who reported content to

What Motivates Users to Report Potentially Illegal Content?

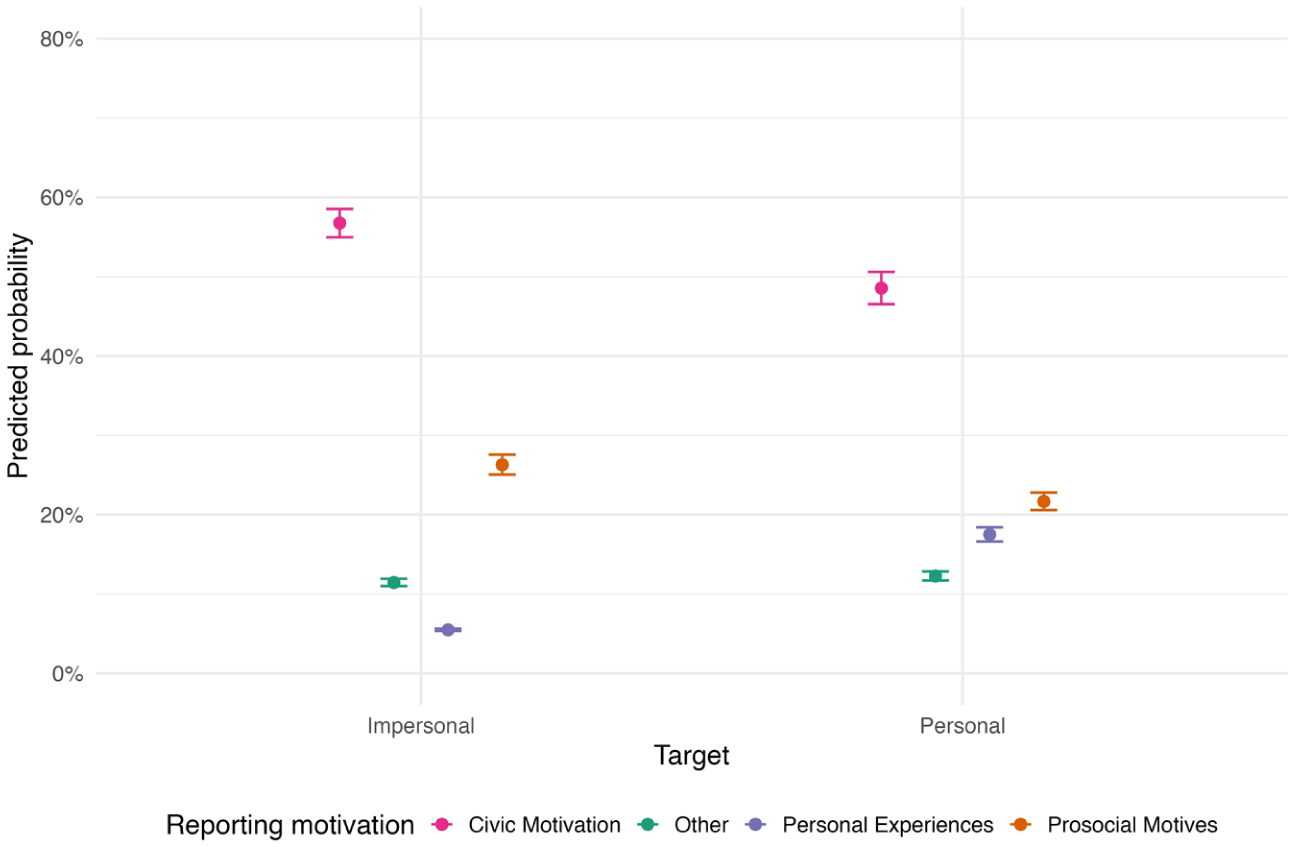

To examine heterogeneity in reporting motivations, we classify respondents’ stated reasons for reporting into distinct outcome categories. Given the unordered categorical structure of this variable, we estimate multinomial logistic regression models. Predictors include demographics (age, gender, education), attitudes (democratic satisfaction, free expression), contextual factors (target, experience index), and reporting behaviors (social media reporting and submission volume). 12 We report model-based predicted probabilities with 95% confidence intervals, holding other covariates constant, and visualize these estimates to summarize associations.
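As a rough illustration of how predicted probabilities follow from a multinomial logit, the sketch below applies the softmax formula with “Other” as the reference category, whose coefficients are fixed at zero. The coefficients, the single covariate, and all function names are hypothetical and chosen only for illustration; they are not estimates from the models reported here.

```python
import math

# Outcome categories from the text; "Other" serves as the reference category.
CATEGORIES = ["Other", "Prosocial Motives", "Personal Experiences", "Civic Motivation"]

def predicted_probabilities(x, coefs):
    """Return P(category | x) under a multinomial logit.

    x     -- dict of covariate values, including "intercept": 1.0
    coefs -- dict mapping non-reference categories to coefficient dicts
    """
    # Linear predictor per category; the reference category has eta = 0.
    etas = {}
    for category in CATEGORIES:
        beta = coefs.get(category, {})
        etas[category] = sum(beta.get(name, 0.0) * value for name, value in x.items())
    # Subtract the maximum before exponentiating for numerical stability.
    top = max(etas.values())
    exps = {category: math.exp(eta - top) for category, eta in etas.items()}
    total = sum(exps.values())
    return {category: value / total for category, value in exps.items()}

# Hypothetical coefficients for illustration only; NOT estimates from the study.
HYPOTHETICAL_COEFS = {
    "Prosocial Motives": {"intercept": 0.8, "experience_index": -0.1},
    "Personal Experiences": {"intercept": -0.5, "experience_index": 0.6},
    "Civic Motivation": {"intercept": 1.2, "experience_index": -0.3},
}

def profile(experience_index):
    """Covariate profile with other covariates omitted (i.e., held fixed)."""
    return {"intercept": 1.0, "experience_index": experience_index}

low = predicted_probabilities(profile(0.0), HYPOTHETICAL_COEFS)
high = predicted_probabilities(profile(3.0), HYPOTHETICAL_COEFS)
```

Comparing `low` and `high` shows how varying one covariate while holding the rest fixed shifts probability mass between categories; in practice the coefficients and confidence intervals would come from a fitted model rather than being set by hand.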

General Motivation to Report

Respondents answered two motivation questions: (i) why they report in general and (ii) why they report to

Bar plot with percentages of motivations to report content in general. Respondents were asked “What motivated you to report the content in general?”

The fact that reporting users see reporting as their civic duty is consistent with respondents’ reported flagging behavior. Most reporting users indicate that they have reported posts or comments multiple times, routinely engage in moderation actions, and regularly block or mute other users or accounts (Figure A7, SM). They are both highly exposed and responsive, actively managing their feeds as civic–protective engagement. In addition, a plurality reports content targeting groups they do not belong to (39%) rather than personal victimhood (18%) (Figure A6, SM), signaling solidarity over self-defense. Third-party reporting is thus not merely reactive but proactive and value-driven.

Controlling for covariates, motives vary modestly by demographics. Civic motivation is common regardless of gender, with men only slightly more likely than women to cite prosocial reasons (Figure A16, SM). By age, civic motivation is as high as 60% among the youngest, but only 43% among the oldest (Figure A13, SM); prosocial motives are stable across age, while personal experience and “other” reasons rise slightly with age. Education does not appear to be strongly correlated with motivation, although lower-educated respondents more often cite personal experience and less often prosocial reasons (Figure A17, SM).

We expected an index of negative social media experiences to predict personal experience motives. Respondents in our reporting user survey report a high frequency of negative experiences online. In total, 76% have been called offensive names, 76% faced intolerance/discrimination, 53% were purposefully embarrassed, and 52% were physically threatened (Figure A10a, SM). As negative experiences increase, personal victimization motives rise while civic motivations for reporting fall, from roughly 61% to just under 40%, suggesting exposure to negative experiences shifts motivations from civic-mindedness toward self-related concerns (Figure A14, SM).

Finally, we examine how reporting motivations vary with both the target of the reported content and users’ reporting frequency, measured as the number of submissions to

Predicted probabilities of each motivation to report, by the target of the reported content, based on multinomial models with “Other” as the reference category.

Descriptively, most respondents reported content only once via

Motivation to Report to REspect!

We measure motivation to report to

Both approaches converge (Figure 6): a primary driver is frustration with weak enforcement on major platforms – especially Facebook and X – where respondents perceive rule or even law-violating content as persisting without consequences. A second, complementary theme is trust in

Bar chart with the absolute and relative frequencies of LDA-derived topics from open-ended responses on reporting motivations. Respondents were asked, “Even though there is a reporting mechanism on the website where you came across the content, why did you report the content on the

Beyond these pragmatic concerns, civic values and democratic responsibility also emerge. Respondents specifically mention that, for example, “

Discussion and Conclusion

Content moderation has traditionally relied on algorithmic detection and human review, with user reports serving as a crucial backstop for content that slips through. User-centered moderation has recently regained attention following Meta’s decision to relax certain moderation rules and end its U.S. fact-checking program – a move widely seen as accommodating the new U.S. administration (Isaac & Schleifer, 2025). Despite the growing importance of user reporting, there is still limited research on who reports potentially illegal content – whether to platforms or third-party organizations – and what motivates them.

This article set out to fill this gap by providing empirical evidence on who reports content, how reporting users differ from the general population, and what their key motivations for reporting are. We address these questions with two complementary surveys, comprising a novel and unique dataset with individuals verified to have previously reported content to a third-party organization in Germany and a quota-based sample designed to approximate the general population in Germany.

Reporting users tend to be older, more often men than women, and highly educated; compared to the general population, they are substantially more politically engaged, markedly left-leaning, and more digitally active. Rather than reflecting a broad cross-section of society, reporting is more common among individuals who view it as a civic duty, raising questions about representation, equity, and the effectiveness of moderation systems that rely on user participation. Respondents’ dissatisfaction with the transparency, perceived effectiveness, and enforcement of platform-based moderation further helps explain why some turn to third-party organizations for support.

Our study uniquely surveys reporting users, offering novel insights into their motivations and behavior. A key limitation is that the opt-in design, while well-suited to reach this population, likely overrepresents highly motivated and civically engaged individuals, as reflected in our results. To contextualize self-selection, we draw on monthly reporting data and observe a relatively high response rate. Some reporting may be strategic or aimed at gaming the system; while we cannot rule this out, it warrants future research. Ideological orientation was captured using different self-placement items across samples, limiting comparability across groups.

Despite its largely descriptive focus, the study makes a theoretical contribution by conceptualizing user reporting as a form of digital civic participation within broader debates on political behavior and platform governance. By identifying the social, attitudinal, and behavioral profiles of reporting users, we extend research on new and digitally enabled forms of political participation (Theocharis, 2015; Theocharis & De Moor, 2021). Our findings suggest that reporting to

While respondents were identified via a third-party reporting organization, they were asked about motivations for reporting both in general and to that organization specifically. Findings should be interpreted with caution: they reflect users who actively engage in reporting, were recruited through a third-party service, and opted into the survey, and they may not generalize to all users or moderation contexts. The study informs debates on platform governance, user agency, and regulatory approaches to trust and safety, while also contributing to research on online political participation and the design of policies aimed at holding both platforms and perpetrators accountable.

By highlighting potential shortcomings in content moderation mechanisms and their effects on users, our analysis suggests that, in the context studied here, reliance on user reporting as a central pillar of moderation may disproportionately amplify the voices of a narrow, civically engaged group. This raises concerns about representational bias and potential blind spots in the identification of harm. To promote fairness, accountability, and effectiveness, platforms and regulators should consider how to broaden participation in reporting, and in content moderation more generally, by making these mechanisms more accessible, transparent, and trusted across diverse user groups. Recognizing reporting as a form of digital civic engagement also opens new avenues for empowering users as active stewards of online communities.

Supplemental Material

Supplemental material, sj-docx-1-sms-10.1177_20563051261437497 for Bystanders and Reporters: Who Acts Against Illegal Online Content? by Friederike Quint, Yannis Theocharis, Spyros Kosmidis and Margaret E. Roberts in Social Media + Society

Footnotes

Acknowledgements

The authors thank the editor and anonymous reviewers for their valuable feedback. The authors are grateful to Jan Zilinsky, Jesper Sommer Rasmussen, and Giuliano Formisano for helpful discussions and feedback and to Arjun Premkumar and Sebastián Aguilar for excellent research assistance. The authors also thank participants at the 2025 European Political Science Association (EPSA) Conference, the American Political Science Association (APSA) Annual Meeting, and other workshops for helpful comments, and REspect! for their continued collaboration.

Ethical Considerations

Both studies were reviewed and received ethical approval. The survey of confirmed reporters was approved by the Ethics Committee of the Technical University of Munich (Ethikkommission der Technischen Universität München, approval number: 2024-38_1-NM-BA). The survey of German respondents was approved by the ethics board of the University of Oxford (approval number: SSH/DPIR_C1A_24_006) as part of a larger 10-country study.

Consent to Participate

All participants gave informed consent and were warned that some questions could be sensitive or distressing. Participation was voluntary, and respondents could skip any question or withdraw at any time without penalty. We provided contact details for queries and for the respective institutions’ complaints procedures.

Consent for Publication

Not applicable.

Author Contributions

The lead author developed the study; led the research design, data collection, and analysis; and drafted the manuscript. The co-authors contributed to study design and theory; measurement; provision of the collection environment; statistical modeling and robustness checks; and manuscript revision. All authors approved the final version.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article. The survey on reporting users was funded by the Council of Europe’s impact study and coordinated by the Justice for Prosperity Foundation, in collaboration with

Declaration of Conflicting Interests

The other authors declare no conflicts of interest.

Data Availability Statement

Given the sensitivity of the underlying data, the dataset from the survey of reporting users will not be publicly released. Replication materials will be shared only upon reasonable request from university-affiliated researchers whose stated purpose is to reproduce this study. Data from the second survey, which approximates the German population, can be shared upon request for replication purposes.

Supplemental Material

Supplemental material for this article is available online.