Abstract

Online hate speech is a pervasive form of digital aggression that shapes engagement and communication in online communities. Prior studies have seldom differentiated exposure pathways within the same context, limiting understanding of how distinct experiences shape user behavior. This study distinguishes between indirect exposure, where users witness hate speech directed at others, and direct victimization, where users personally receive it. Using large-scale user behavioral data from a major Chinese social platform, we analyze how these experiences affect content production, social interaction, and antisocial expression. The findings show that witnessing and receiving hate speech trigger distinct and lasting behavioral patterns, with the intensity of hate speech further moderating these effects. User tenure also differentiates these dynamics: new users show a higher propensity for hate expression, while long-term users display stronger persistence in behavioral adjustments. These results extend Stress and Coping Theory to the domain of online hate speech, emphasizing exposure mode as a key factor in stress appraisal and offering insights for community governance.

Introduction

As social media and online communities continue to expand, the proliferation of harmful content, particularly hate speech, has become an urgent concern in the digital communication landscape. Its widespread circulation disrupts normative discourse and presents complex challenges for users and platform governance. Hate speech, defined as any form of communication that incites hatred, discrimination, or violence against individuals or groups based on characteristics such as race, ethnicity, religion, or gender, not only damages the social fabric of online communities but also has broader societal implications (United Nations, 2025). Recent studies suggest that approximately 33% of Internet users have encountered online harassment or hate speech, with a particularly high incidence among adolescents. In the United States, 64% of adolescents aged 13–17 report exposure to hate speech (Rideout & Robb, 2018). These statistics underscore the urgency of addressing hate speech, particularly within online platforms that allow users to interact and share content.

Hate speech can have a profoundly negative impact on individuals, leading to stress, anxiety, and depression, while also eroding the overall sense of safety and well-being in online spaces (Kowalski et al., 2014). For communities, the presence of hate speech can diminish engagement, reduce trust among users, and disrupt the healthy development of these virtual environments (Cheng et al., 2017). On a broader scale, hate speech exacerbates social divisions, disseminates misinformation, and undermines public opinion (Allcott & Gentzkow, 2017). Given its pervasive and damaging effects, understanding how hate speech influences user behavior and community dynamics has become a critical area of research.

Empirical evidence indicates that exposure to hateful content can induce negative affective states, including anxiety, stress, and diminished perceptions of safety, which may subsequently alter users’ participation and engagement patterns in online settings (Keum & Miller, 2018; Müller & Schwarz, 2021; Soral et al., 2018). For instance, Ziegele et al. (2018) and Rossini (2022) found that, in news comment sections, exposure to hate speech reduces users’ likelihood of providing positive feedback. Cheng et al. (2017) documented that witnessing hate speech in online news discussion communities suppresses constructive opinion exchange and fosters antisocial behaviors such as hostile comments, personal attacks, and trolling. Although prior research has enhanced our understanding of how hate speech influences four common forms of community user engagement, namely content production, opinion exchange, positive affective feedback, and antisocial behavior, these studies have typically examined each form separately and in different community contexts, rather than analyzing them together within a single online community. Furthermore, in real-world online environments, individuals encounter hate speech in two distinct ways: some witness it as observers, while others receive it directly. According to stress and coping theory (Carver et al., 1989; Krohne, 2001; Lazarus & Folkman, 1984; Skinner et al., 2003), receiving hate speech constitutes a more intense stressor than merely witnessing it and is therefore expected to elicit stronger affective responses, defensive behaviors, and potentially more severe psychological consequences. To obtain reliable conclusions regarding the impact of hate speech on user behavior, it is thus necessary to examine simultaneously how hate speech affects different forms of user engagement through distinct exposure pathways within the same community context.

To address this need, we collaborated with Zano, a popular social media platform in China, to collect engagement data from 86,863 users over a period of 48 weeks. By employing a fixed-effects regression model, we controlled for unobserved user heterogeneity and temporal trends and found that witnessing and receiving hate speech exert opposite effects on certain forms of user engagement, with these effects further moderated by the intensity of hate speech. We also examined the persistence of these effects and found that although receiving high-intensity hate speech, as a strong stressor, provokes more intense reactions from users, its influence tends to be more short-lived, and new users are generally more capable of dissipating these effects compared to long-term users. This study offers practical guidance for community managers in developing strategies to mitigate the negative impact of hate speech on user engagement. Theoretically, it contributes to the literature by extending Stress and Coping Theory to the context of online hate speech, emphasizing that the mode of exposure is a critical factor in the stress appraisal stage and triggers distinct coping frameworks.

Exposure Pathways of Hate Speech

Hate speech in online communities has become a growing concern for platform governance and academic inquiry. Understanding how users perceive and react to hate speech is essential for designing strategies that mitigate its harm while safeguarding free expression. Extensive scholarship has documented the detrimental consequences of hate speech on its targets, ranging from psychological harm (Maitra & McGowan, 2012; Nielsen, 2002) and emotional distress to incitement of real-world violence (Fyfe, 2017; Müller & Schwarz, 2021). Victims often experience offense, violation of dignity, fear, and erosion of autonomy, while prolonged exposure may foster social alienation, radicalization, and the normalization of extreme ideologies (Cohen-Almagor, 2015; Hawdon et al., 2014, 2017; Lee & Leets, 2002).

Despite these advances, prior research often conceptualizes hate speech exposure as a uniform experience, overlooking the distinct perceptual pathways through which users encounter hostile content (Kansok-Dusche et al., 2023; Soral et al., 2018). Indirect and direct exposures are likely to engage different psychological processes. Indirect exposure may elicit empathic responses, strengthen social identity (Hogg, 2016), or provoke collective outrage, thereby motivating expressive or defensive behaviors. By contrast, direct exposure constitutes a more personally salient stressor, triggering stress and coping mechanisms that are centered on the self and may lead to emotional withdrawal, retaliatory actions, or disengagement from the community. Recognizing the differentiated psychological mechanisms activated by each pathway, we empirically examine whether these modes of exposure produce distinct behavioral responses. Accordingly, we propose the following hypothesis:

Intensity of Hate Speech

While the mode of exposure shapes how individuals engage with hate speech, the intensity of the encountered hate speech further modulates its psychological and behavioral impact. Research by Saleem et al. (2017) indicates that the intensity of hate speech is positively correlated with its rate of dissemination and the range of its impact. High-intensity hate speech attracts more user attention and emotional responses, leading to broader dissemination on social media platforms. This phenomenon partially explains why certain extreme statements can rapidly spread online, triggering large-scale societal reactions. From the perspective of Stress and Coping Theory, hate speech can be understood as a social and psychological stressor that elicits appraisal and coping processes. Higher intensity of hate speech amplifies perceived threat, increasing cognitive and emotional load, which in turn shapes whether users engage in problem-focused strategies, such as counter-speech and norm enforcement, or emotion-focused strategies, such as withdrawal, avoidance, or retaliatory behavior. In this way, the severity of the stressor not only influences immediate behavioral responses but also affects the long-term adaptation of individuals within online communities.

To examine how the effects of hate speech intensity differ across exposure pathways, we first graded users’ hate speech posts using a framework that differentiates them by intensity. Specifically, we follow the approach proposed by Arce-García et al. (2025) and use the intensity classification system outlined in the labeling manual by De Lucas Vicente et al. (2022), which categorizes hate speech into six levels based on linguistic features and contextual cues, ranging from implicit negativity to overt incitement. In the first two intensity levels, no overtly aggressive language is used; instead, negative emotions are expressed implicitly, or facts are cited to stigmatize individuals or groups. The last four levels explicitly convey negative impressions or use language that constitutes hate speech against individuals or groups, and may even call for violent actions against them. Because high-intensity hate speech is relatively rare, we then collapsed the six levels into two broader categories based on how explicitly negative meaning is conveyed: the first two levels were combined as low-intensity, and the remaining four levels were categorized as high-intensity. Building on this classification, we examine the influence of hate speech intensity on user behavior through the lens of Stress and Coping Theory, treating higher-intensity hate speech as a stronger stressor that shapes users’ coping responses, and we propose the following hypotheses:

User Differences in Online Communities

Beyond the nature and intensity of hate speech exposure, users’ tenure also shapes their behavioral patterns in online communities. According to Stress and Coping Theory, individuals’ appraisal of stressors and their coping strategies are influenced by their personal resources, prior experience, and familiarity with the environment. In this context, user tenure reflects accumulated experience, social integration, and normative understanding. New users, who are still adapting to the community, often have weaker social ties and limited familiarity with prevailing norms, making them more vulnerable to negative content and more prone to maladaptive behaviors. Long-term users, by contrast, tend to develop adaptive coping mechanisms through repeated interactions and stronger identification with the community, which enable them to regulate their behavior and maintain more stable patterns over time.

Building on this perspective, we focus on two tenure-related dynamics in user behavior: Propensity and Persistence. Propensity refers to users’ general tendency to engage in hate speech, reflecting baseline differences in behavioral inclination between new and long-term users. This initial behavioral tendency may shape how users respond when exposed to hate speech and set the stage for subsequent adjustments. Persistence captures the temporal stability of behavioral changes following exposure, indicating how long users maintain such adjustments over time. By considering both dimensions together, we can examine not only which users are more likely to engage in hate speech initially but also how these behaviors evolve and stabilize across weeks.

Based on this conceptual link, we propose two related hypotheses:

Behavioral Responses as Coping Strategies

Grounded in stress and coping theory, this study conceptualizes user behavior in online communities as observable coping responses to psychological and social stressors in digitally mediated contexts. Prior research shows that individuals rarely respond to stressors in a single, uniform way. Instead, coping responses are commonly distinguished along well-established dimensions, such as problem-focused versus emotion-focused coping and adaptive versus maladaptive strategies. These coping strategies differ in their orientation, emotional demands, and social consequences (Carver et al., 1989; Krohne, 2001; Lazarus & Folkman, 1984; Skinner et al., 2003). When stressors arise in social and communicative settings, coping is expressed not only through internal cognitive or emotional processes but also through observable patterns of participation, interaction, and expression. Research on online communities therefore suggests that responses to social tension and conflict are better captured through distinct forms of engagement rather than through a single aggregate measure of activity (Preece & Shneiderman, 2009; Rafaeli & Sudweeks, 1997).

Building on this literature, we conceptualize behavioral responses to hate speech by integrating stress and coping theory with established frameworks of online participation and social interaction. Prior studies of online communities consistently distinguish among content creation, interactive communication, and low-cost affiliative feedback as core modes of participation (Burke et al., 2011; Preece & Shneiderman, 2009; Rafaeli & Sudweeks, 1997). In parallel, research on online aggression and norm violation identifies antisocial expression as a distinct and theoretically meaningful response to social strain and perceived threat (Cheng et al., 2017; Suler, 2004). Drawing on stress and coping theory, which distinguishes between constructive and maladaptive coping orientations, and on prior research identifying core modes of participation in online communities, we operationalize users’ coping responses through four observable behavioral dimensions: content production, opinion exchange, positive affective feedback, and antisocial behavior.

Along these dimensions, content production represents an active and problem-focused coping orientation. Through producing original posts, users respond to stressors by articulating personal viewpoints, sharing experiences, or initiating public discourse. In online communities, this behavior entails relatively high effort and high visibility, as it requires users to take an explicit stance and engage directly with potentially contentious environments. Opinion exchange reflects a different adaptive coping pathway that is more interactional and cognitively oriented. By commenting within existing conversations, users participate in dialogue and deliberation, facilitating collective sense-making and norm negotiation, particularly under conditions of social tension or uncertainty (Kent & Taylor, 2002). Compared to content production, opinion exchange typically involves lower initiation costs while remaining publicly visible and socially embedded.

Positive affective feedback constitutes another adaptive coping response, but one that is primarily emotion-focused and low in effort. Through liking or similar affiliative actions, users can express alignment, support, or encouragement without direct confrontation or extended interaction. Prior research suggests that such low-cost affiliative signals play an important role in emotional regulation and social bonding, allowing users to cope with stress while minimizing personal risk and exposure (Burke et al., 2010; Kraut & Resnick, 2012). In contrast, antisocial behavior represents a maladaptive coping pathway. In this case, stress, frustration, or perceived threat is externalized through aggressive or norm-violating expression, such as hate speech. Research on online aggression shows that such behavior often arises under conditions of perceived social strain and serves as an expressive, though disruptive, outlet for unresolved stress (Cheng et al., 2017; Suler, 2004).

Taken together, these four behavioral dimensions represent theoretically distinct coping pathways through which users may respond to hate speech in online communities. Importantly, stress and coping theory suggests that these responses are not functionally equivalent and may exhibit divergent patterns under similar conditions. This multidimensional behavioral framework therefore motivates the examination of content production, opinion exchange, positive affective feedback, and antisocial behavior, allowing for a more nuanced understanding of how users navigate and adapt to hostile digital environments.

Materials and Methods

Dataset

In this study, we utilize data from a popular online community platform widely adopted among Chinese college students. We briefly present the interface and functionalities of the platform in Figure 1. Its structure shares similarities with platforms such as Fizz and Reddit. Users on the platform frequently engage in information seeking and idea sharing, but instances of hate speech often emerge amid exchanges of divergent opinions. We used a dataset officially provided by the platform’s development team, which spans 48 weeks starting from February 2022 and includes activity from 86,863 users. It comprises user-generated content, anonymized user information, and interaction records such as likes and user reports.

Figure 1. The main page of the Zano platform.

The dataset comprises 289,411 posts, 1,453,959 comments, and 2,043,940 likes. Given the large volume of user-generated content, manually annotating all posts for hate speech and its intensity is impractical. Instead, we leverage an advanced large language model (LLM) for automated annotation. In recent years, LLMs have been increasingly applied to various natural language processing (NLP) tasks. Following the approach of Kholodna et al. (2024) in using LLMs for text annotation, we employed ChatGPT to label hate speech and classify its intensity across millions of text entries in our collected dataset. Following established literature (Fortuna & Nunes, 2018; Gagliardone et al., 2015), we define hate speech as language that expresses hatred, incites violence, or promotes discrimination against individuals or groups based on attributes such as race, gender, religion, or sexual identity. Our annotation guidelines are further informed by platform policies and prior hate speech datasets (Mathew et al., 2021; Vidgen & Derczynski, 2020).

We use the ChatGPT API (version 4.1) to annotate the dataset, with the temperature parameter, which controls the randomness of the model’s output, set to 0.3 (the default value is 1.0, with higher values producing more diverse outputs). The prompt design follows the labeling guidelines proposed by De Lucas Vicente et al. (2022), and the full prompt is provided in the supplementary materials. To evaluate the reliability of ChatGPT’s annotations, we compared its outputs with human-labeled data on a sample of 1,000 instances, each consisting of a piece of replied-to content and a user comment. Human annotators were instructed to assess each instance on two criteria: whether the content constituted hate speech, and the intensity of hostility expressed in the message. In cases where the surface text was ambiguous, annotators were required to rely on contextual cues to inform their judgment. To ensure consistency and accuracy, each instance was independently labeled by three trained annotators, who followed the same hate speech intensity labeling guidelines used for ChatGPT. We adopted a majority-vote strategy to determine the final label for each case, first deciding whether a sample constituted hate speech and then, if applicable, voting on its intensity level. When the annotated intensity levels were completely inconsistent, the annotators discussed their reasoning and re-annotated the instance until a consensus was reached. The annotations yielded a Fleiss’ Kappa of 0.8301 and a Krippendorff’s Alpha of 0.8302, indicating a high level of inter-annotator agreement. Disagreements among human annotators occurred primarily at the level of fine-grained intensity classification rather than in the identification of hate speech itself. Moreover, inconsistencies in assigned intensity levels were generally minor, with few cases differing by more than one level, indicating that the overall annotation outcomes were largely consistent.
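The majority-vote step and the Fleiss' Kappa agreement statistic described above can be illustrated with a minimal sketch. The function names, the label strings, and the toy ratings are assumptions made for illustration only; they are not the annotation pipeline used in the study.

```python
from collections import Counter


def majority_label(labels):
    """Return the label chosen by a strict majority of annotators, or None.

    None signals that no majority exists (e.g. three annotators, three
    different intensity levels), i.e. the case that would go to discussion.
    """
    label, count = Counter(labels).most_common(1)[0]
    return label if count > len(labels) / 2 else None


def fleiss_kappa(ratings, categories):
    """Fleiss' kappa for items that are each rated by the same number of raters.

    ratings: list of per-item label lists, e.g. [["hate", "hate", "none"], ...]
    categories: list of all possible labels.
    """
    n_items = len(ratings)
    n_raters = len(ratings[0])
    # counts[i][j]: number of raters who assigned item i to category j
    counts = [[Counter(item)[c] for c in categories] for item in ratings]
    # Observed agreement for each item, then averaged over items
    p_items = [
        (sum(n * n for n in row) - n_raters) / (n_raters * (n_raters - 1))
        for row in counts
    ]
    p_bar = sum(p_items) / n_items
    # Expected agreement from the overall category proportions
    p_cats = [
        sum(row[j] for row in counts) / (n_items * n_raters)
        for j in range(len(categories))
    ]
    p_exp = sum(p * p for p in p_cats)
    return (p_bar - p_exp) / (1 - p_exp)


# Hypothetical toy ratings from three annotators on four instances
toy_ratings = [
    ["hate", "hate", "hate"],
    ["none", "none", "none"],
    ["hate", "hate", "none"],
    ["none", "none", "none"],
]
toy_kappa = fleiss_kappa(toy_ratings, ["hate", "none"])
```

In this sketch an instance without a strict majority returns `None`, which corresponds to the situation in which annotators discuss and re-annotate until they reach consensus.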

When evaluating ChatGPT’s labeling performance, the results show that ChatGPT achieved an annotation accuracy of 90.25%, suggesting a substantial level of agreement with human judgments. Given this strong alignment, we proceeded to use ChatGPT to annotate the full dataset, comprising over one million text entries. Finally, we organized the dataset into a user-week panel format, tracking each user’s activity across up to 48 weeks. For each user-week, we calculated the number of posts made and received, comments made and received, likes given and received, reports sent and received, hate speech sent and received, the number of hate speech instances witnessed, the intensity and proportion of both witnessed and received hate speech, as well as the user’s tenure, measured by the number of weeks since joining the community. To facilitate the analysis of persistent effects, we also computed cumulative sums of each variable for each user across all weeks. We report the descriptive statistics of the main variables in Table 1.
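As a rough illustration of the user-week panel described above, the sketch below turns raw event records into weekly counts and running cumulative sums. The event layout `(user_id, week, variable)`, the variable names, and the choice to emit a row for every week of every user are assumptions made for illustration; tenure and the intensity variables are omitted for brevity.

```python
from collections import defaultdict


def build_user_week_panel(events, n_weeks, variables):
    """Aggregate events into a user-week panel with cumulative sums.

    events: iterable of (user_id, week, variable) tuples, week in 1..n_weeks.
    variables: names of the activity measures to track.
    Returns {(user_id, week): {var: weekly count, "cum_" + var: running total}}.
    """
    weekly = defaultdict(int)
    users = set()
    for user, week, var in events:
        weekly[(user, week, var)] += 1
        users.add(user)

    panel = {}
    for user in sorted(users):
        running = {var: 0 for var in variables}
        for week in range(1, n_weeks + 1):
            row = {}
            for var in variables:
                count = weekly[(user, week, var)]
                running[var] += count
                row[var] = count                  # activity within this week
                row["cum_" + var] = running[var]  # activity up to and including this week
            panel[(user, week)] = row
    return panel


# Hypothetical toy events
toy_events = [
    ("u1", 1, "posts_made"),
    ("u1", 1, "posts_made"),
    ("u1", 3, "posts_made"),
    ("u2", 2, "hate_received"),
]
toy_panel = build_user_week_panel(toy_events, 3, ["posts_made", "hate_received"])
```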

Table 1. Descriptive Statistics.

Method

Guided by the theoretical framework outlined in the Introduction, we operationalize users’ behavioral responses using four observable activity measures commonly available in online communities: content production, opinion exchange, positive affective feedback, and antisocial behavior. These measures capture distinct forms of user activity within the community.

Content production is measured as the number of original posts authored by a user, capturing users’ initiation of new discussion threads and original content contributions. Opinion exchange is measured by the number of comments a user posts in response to others’ content, reflecting participation in ongoing conversations rather than the initiation of new topics.

Positive affective feedback is operationalized as the number of likes a user gives to posts or comments authored by others, representing low-effort engagement behaviors that signal agreement or support. Antisocial behavior is measured by the production of hate speech within the community, identified at the level of individual user contributions.

This multidimensional behavioral typology enables a comprehensive and theory-driven examination of how users navigate hate speech in online settings, capturing both constructive and destructive forms of participation.

To test H1, which examines the impact of hate speech exposure on user behavior in online communities, we employ a fixed-effects regression model, a method widely used in economics and management research. This approach controls for unobserved heterogeneity by absorbing all time-invariant individual characteristics and all time-specific effects common to users, such as weekly platform-wide fluctuations. Because our dataset is structured as a user-week panel, this method is especially appropriate: it leverages within-user changes over time while controlling for external temporal influences. Consequently, fixed-effects regression strengthens causal inference by reducing bias from omitted variables that do not vary over time or do not vary across users. The regression model is specified as follows:
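Written out from the variable definitions that accompany it, the specification plausibly takes the form below. This is a reconstruction: the coefficient labels β1 through β6 and the ordering of the terms are assumptions, while the variable names, the intercept, the two sets of fixed effects, and the error term are those named in the text.

```latex
\begin{aligned}
Y_{it} = {} & \alpha
  + \beta_1\,\mathrm{WitnessHateSpeech}_{it}
  + \beta_2\,\mathrm{ReceiveHateSpeech}_{it}
  + \beta_3\,\mathrm{ReceiveNeutralMessages}_{it} \\
& + \beta_4\,\mathrm{ReceiveLike}_{it}
  + \beta_5\,\mathrm{ReceiveReport}_{it}
  + \beta_6\,\log(\mathrm{TenureWeeks}_{it})
  + \gamma_t + \gamma_i + \epsilon_{it}
\end{aligned}
```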

where Y_it represents the dependent variable for user i in week t, which may be content production, opinion exchange, positive affective feedback, or antisocial behavior; WitnessHateSpeech_it denotes the cumulative number of hate speech messages that user i has been exposed to by observing others being targeted in week t; ReceiveHateSpeech_it represents the cumulative amount of hate speech received by user i in week t; ReceiveNeutralMessages_it indicates the cumulative amount of non-hate speech received by user i in week t; ReceiveLike_it refers to the cumulative number of likes received by user i in week t; ReceiveReport_it represents the cumulative number of reports that user i received from other users in week t; Log(TenureWeeks_it) is the natural logarithm of the total number of weeks user i has been a member of the community up to week t.

We operationalize witnessing hate speech as the number of posts containing hate speech in which the user has participated through non-hate comments. This captures how frequently users are exposed to hate speech within the discussions they take part in. We operationalize receiving hate speech as the number of hate speech comments directly targeting a user, either in the comments under their posts or in replies to their comments on others’ posts.
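The two operationalizations can be sketched in code as follows. The record layout (`author`, `post_id`, `target`, `is_hate`) is hypothetical, and the week dimension is left out for brevity. One further assumption is labeled in the code: a thread counts as witnessed only if it contains hate speech that was neither written by nor aimed at the user, in line with the definition of indirect exposure as hate speech directed at others.

```python
from collections import defaultdict


def exposure_counts(comments):
    """Count witnessed and received hate speech per user.

    comments: list of dicts with keys
      author  - user who wrote the comment
      post_id - thread the comment belongs to
      target  - user whose post or comment is being replied to
      is_hate - True if the comment was labeled as hate speech
    Returns (witnessed, received), two dicts keyed by user.
    """
    received = defaultdict(int)
    hate_by_post = defaultdict(list)   # post_id -> [(author, target)] of hate comments
    participants = defaultdict(set)    # post_id -> users who posted non-hate comments

    for c in comments:
        if c["is_hate"]:
            received[c["target"]] += 1
            hate_by_post[c["post_id"]].append((c["author"], c["target"]))
        else:
            participants[c["post_id"]].add(c["author"])

    witnessed = defaultdict(int)
    for post_id, hate in hate_by_post.items():
        for user in participants[post_id]:
            # Assumption: only hate speech by and at *other* users counts as witnessed.
            if any(author != user and target != user for author, target in hate):
                witnessed[user] += 1
    return dict(witnessed), dict(received)


# Hypothetical toy thread: "a" attacks "b"; "c" and "b" reply without hate speech
toy_comments = [
    {"author": "a", "post_id": 1, "target": "b", "is_hate": True},
    {"author": "c", "post_id": 1, "target": "b", "is_hate": False},
    {"author": "b", "post_id": 1, "target": "a", "is_hate": False},
    {"author": "d", "post_id": 2, "target": "c", "is_hate": False},
]
toy_witnessed, toy_received = exposure_counts(toy_comments)
```

Here user `c` witnesses one hateful thread, user `b` receives one hate comment, and user `d`, whose thread contains no hate speech, is counted in neither measure.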

In the regression model, α is the intercept term, while γ_t and γ_i denote time (weekly) fixed effects and individual user fixed effects, respectively. These fixed effects control for unobserved heterogeneity at the individual and temporal levels, thereby mitigating potential omitted variable bias. ϵ_it is the error term; we report robust standard errors to account for heteroskedasticity and autocorrelation.
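To illustrate how user and week fixed effects remove additive user-specific and week-specific components, the sketch below applies the two-way within transformation to a single regressor on a balanced toy panel. It is a simplified illustration and not the estimation code used in the study: the function names and data are hypothetical, the real model has several regressors, and an unbalanced panel would normally call for a dedicated panel-regression package.

```python
def two_way_within(panel):
    """Double-demean a balanced panel {(user, week): value}.

    Subtracts the user mean and the week mean and adds back the grand mean,
    which removes any additive user effect and any additive week effect.
    """
    users = sorted({u for u, _ in panel})
    weeks = sorted({t for _, t in panel})
    grand = sum(panel.values()) / len(panel)
    user_mean = {u: sum(panel[(u, t)] for t in weeks) / len(weeks) for u in users}
    week_mean = {t: sum(panel[(u, t)] for u in users) / len(users) for t in weeks}
    return {
        (u, t): panel[(u, t)] - user_mean[u] - week_mean[t] + grand
        for (u, t) in panel
    }


def fe_slope(x, y):
    """Two-way fixed-effects slope for one regressor on a balanced panel."""
    xd, yd = two_way_within(x), two_way_within(y)
    sxx = sum(v * v for v in xd.values())
    sxy = sum(xd[k] * yd[k] for k in xd)
    return sxy / sxx


# Hypothetical toy data: y = 2 * x + user effect + week effect
toy_x_rows = {"a": [1, 2, 4], "b": [0, 3, 1], "c": [2, 2, 5]}
user_effect = {"a": 10.0, "b": -3.0, "c": 5.0}
week_effect = {1: 0.5, 2: -1.0, 3: 2.0}
toy_x = {(u, t): float(vals[t - 1]) for u, vals in toy_x_rows.items() for t in (1, 2, 3)}
toy_y = {(u, t): 2.0 * toy_x[(u, t)] + user_effect[u] + week_effect[t] for (u, t) in toy_x}
toy_slope = fe_slope(toy_x, toy_y)
```

Because the user and week effects are purely additive in this toy example, the estimated slope recovers the coefficient of 2 regardless of how large those effects are.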

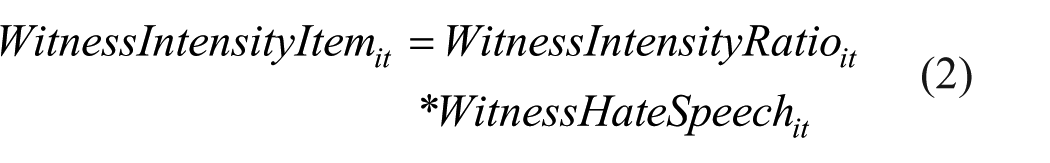

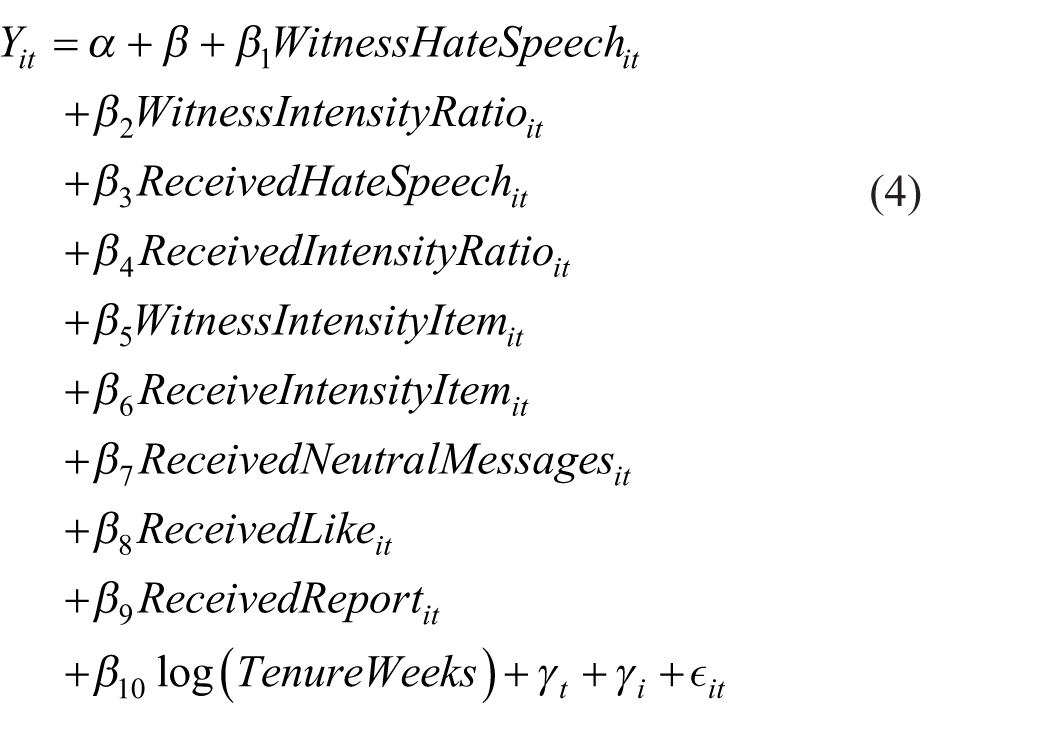

For H2, given the panel structure of our data, the raw intensity values of individual hate speech items are difficult to use directly in the analysis. Therefore, we introduce two new variables, WitnessIntensityRatio_it and ReceiveIntensityRatio_it, representing the proportion of high-intensity hate speech that user i witnesses or receives at time t, respectively. These variables enable us to assess the impact of high-intensity versus low-intensity hate speech, allowing for a more fine-grained analysis of behavioral responses based on the severity of exposure. To further assess the moderating effect of hate speech intensity, we construct the interaction terms WitnessIntensityItem_it and ReceiveIntensityItem_it, each defined as the product of the quantity of hate speech witnessed or received and the corresponding intensity ratio.
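As an illustration, the intensity ratio and the interaction term for one user-week could be computed as in the sketch below. Several details are assumptions: intensity levels are coded as integers 1 to 6, levels 1 and 2 form the low-intensity group and levels 3 to 6 the high-intensity group (following the grouping described earlier), the ratio is set to 0 when no hate speech was encountered, and quantity is measured over the same window as the ratio.

```python
def intensity_group(level):
    """Map a six-level intensity label (1-6) to the binary grouping."""
    if level not in range(1, 7):
        raise ValueError(f"intensity level must be between 1 and 6, got {level}")
    # Levels 1-2: implicit negativity or stigmatizing use of facts -> "low"
    # Levels 3-6: explicit hostility, up to calls for violence -> "high"
    return "low" if level <= 2 else "high"


def high_intensity_ratio(levels):
    """Share of encountered hate speech items that are high-intensity.

    levels: intensity levels of the items witnessed (or received) in a user-week.
    Returns 0.0 when the list is empty (an assumption of this sketch).
    """
    if not levels:
        return 0.0
    n_high = sum(intensity_group(level) == "high" for level in levels)
    return n_high / len(levels)


def intensity_item(levels):
    """Interaction term: quantity of hate speech times the high-intensity ratio."""
    return len(levels) * high_intensity_ratio(levels)


# Hypothetical user-week: four witnessed items, two of them high-intensity
toy_levels = [1, 2, 3, 6]
toy_ratio = high_intensity_ratio(toy_levels)
toy_item = intensity_item(toy_levels)
```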

The final regression model is specified as follows:
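A plausible form of the interaction terms and the extended specification, reconstructed from the variable names and from the description of the interaction term as quantity × intensity, is given below. The coefficient labels are assumptions. Whether the intensity ratios also enter as stand-alone regressors is not stated, so they are omitted here.

```latex
\begin{aligned}
\mathrm{WitnessIntensityItem}_{it} &= \mathrm{WitnessHateSpeech}_{it} \times \mathrm{WitnessIntensityRatio}_{it} \\
\mathrm{ReceiveIntensityItem}_{it} &= \mathrm{ReceiveHateSpeech}_{it} \times \mathrm{ReceiveIntensityRatio}_{it} \\
Y_{it} &= \alpha
  + \beta_1\,\mathrm{WitnessHateSpeech}_{it}
  + \beta_2\,\mathrm{ReceiveHateSpeech}_{it}
  + \beta_3\,\mathrm{WitnessIntensityItem}_{it}
  + \beta_4\,\mathrm{ReceiveIntensityItem}_{it} \\
&\quad + \beta_5\,\mathrm{ReceiveNeutralMessages}_{it}
  + \beta_6\,\mathrm{ReceiveLike}_{it}
  + \beta_7\,\mathrm{ReceiveReport}_{it}
  + \beta_8\,\log(\mathrm{TenureWeeks}_{it})
  + \gamma_t + \gamma_i + \epsilon_{it}
\end{aligned}
```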

For H3, we construct dependent variables for each user at multiple future time points to examine the lasting impact of hate speech exposure on user behavior. Following the approach of Zeng et al. (2023), we use Model (4) to assess the significance of the interaction terms across these future periods. The duration of the influence is determined by the time interval over which the effect remains statistically significant before becoming nonsignificant, providing a measure of how long hate speech continues to affect user behavior.
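The duration rule described above can be sketched as a small helper that counts how many consecutive future periods remain significant before the first nonsignificant one. The function name, the input layout, and the 0.05 threshold are assumptions made for illustration; the significance level used for this purpose is not stated in the text.

```python
def persistence_duration(p_values, alpha=0.05):
    """Number of consecutive future periods in which an effect stays significant.

    p_values: p-values of the coefficient of interest, ordered by horizon
              (first entry = outcome one period ahead, and so on).
    alpha: significance threshold (hypothetical default).
    Counting stops at the first nonsignificant period, so later periods that
    happen to be significant again are not counted.
    """
    duration = 0
    for p in p_values:
        if p >= alpha:
            break
        duration += 1
    return duration


# Hypothetical p-values for outcomes measured one to four weeks ahead
toy_duration = persistence_duration([0.001, 0.02, 0.20, 0.01])
```

In the toy example the effect is significant for the first two horizons and nonsignificant at the third, so the measured duration is two periods even though the fourth p-value is again small.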

For H4a, to explore behavioral differences between new and long-term users in response to perceived hate speech, we categorize users into two groups based on the median duration of community membership: new and long-term users. This classification is appropriate because users join the community on a daily basis, resulting in a relatively uniform distribution of user tenure. Since the distribution is not heavily skewed, using the median as a cutoff point avoids potential issues associated with long-tail distributions. To examine differences in hate speech expression across these two groups, we first visualize the distribution of hate speech production among new and long-term users. We then perform a chi-square test to statistically assess whether the difference in hate speech expression between the two groups is significant.
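A minimal sketch of the median split and the chi-square test follows. It assumes a 2 × 2 layout (tenure group by whether a user produced hate speech), assigns users exactly at the median to the new-user group, and uses the Pearson statistic without continuity correction. These choices, the function names, and the toy counts are illustrative assumptions rather than details reported in the study.

```python
import math
from statistics import median


def median_split(tenure_by_user):
    """Label each user as 'new' or 'long_term' relative to the median tenure."""
    cutoff = median(tenure_by_user.values())
    return {
        user: ("new" if weeks <= cutoff else "long_term")
        for user, weeks in tenure_by_user.items()
    }


def chi_square_2x2(a, b, c, d):
    """Pearson chi-square test for the 2 x 2 table [[a, b], [c, d]].

    Rows: tenure groups; columns: produced hate speech vs did not.
    Returns (statistic, p_value) with one degree of freedom.
    """
    n = a + b + c + d
    stat = n * (a * d - b * c) ** 2 / ((a + b) * (c + d) * (a + c) * (b + d))
    # Survival function of the chi-square distribution with 1 degree of freedom
    p_value = math.erfc(math.sqrt(stat / 2))
    return stat, p_value


# Hypothetical counts: 30 of 100 new users and 10 of 100 long-term users posted hate speech
toy_groups = median_split({"a": 1, "b": 5, "c": 9, "d": 20})
toy_stat, toy_p = chi_square_2x2(30, 70, 10, 90)
```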

For H4b, we conduct separate analyses for new and long-term users using the same methodology as in H3. By comparing the results across the two groups, we aim to identify potential differences in the persistence of their behavioral responses to hate speech exposure.

Results

Impact of Two Exposure Pathways of Hate Speech on User Behavior

The results of the regression analysis are shown in Table 2. Witnessing hate speech is positively associated with opinion exchange (β = .1470, p < .01), positive affective feedback (β = .3085, p < .01), and antisocial behavior (β = .0268, p < .01), although it slightly suppresses content production (β = −.0125, p < .01). These findings suggest that witnessing hate speech does not silence users but instead provokes stronger emotional and expressive responses and more active engagement in discussions. The simultaneous increase in opinion exchange and antisocial behavior suggests that while users may engage in discussions out of empathy for the victim, they may also express hate speech themselves.

Table 2. Regression Results: Witnessing Versus Receiving.

Robust standard errors in parentheses: *p < .1, **p < .05, ***p < .01.

In contrast, receiving hate speech, that is, being its direct target, shows a distinct behavioral profile. It is significantly negatively associated with content production (β = −.2064, p < .01), opinion exchange (β = −.1353, p < .01), and positive affective feedback (β = −.8419, p < .01), suggesting a silencing or withdrawal effect. However, it is strongly and positively associated with antisocial behavior (β = .3929, p < .01), implying that victimized users may retaliate or react with hostility. Taken together, the results show that the two exposure pathways exert effects in the same direction on content production and antisocial behavior, but have opposing impacts on opinion exchange and positive affective feedback, partially supporting H1.

Impact of Hate Speech Intensity on User Behavior

We found significant differences in users’ behavioral responses depending on the intensity of their exposure to hate speech and whether they witnessed or directly received such speech. Table 3 presents the main regression results.

Table 3. The Effect of Different Intensities of Hate Speech.

Robust standard errors in parentheses: *p < .1, **p < .05, ***p < .01.

For users who merely witnessed hate speech, the main effects show that such exposure is associated with a slight decrease in content production (β = –.0290, p < .01), alongside increases in opinion exchange (β = .4250, p < .01), positive affective feedback (β = .4708, p < .01), and antisocial behavior (β = .0763, p < .01). This suggests that, in general, witnessing hate speech tends to elicit more participatory behaviors, such as commenting and emotional reactions, while slightly reducing the inclination to initiate new posts.

However, the interaction effects between witnessing and high-intensity hate speech present an opposite trend across all four behavioral outcomes. Specifically, high-intensity hate speech witnessed by users leads to a significant increase in content production (β = .1260, p < .01), while simultaneously causing sharp decreases in opinion exchange (β = –1.8410, p < .01), positive affective feedback (β = –1.6338, p < .01), and antisocial behavior (β = –.3263, p < .01). When combining the main and interaction effects to evaluate the net impact of witnessing high-intensity hate speech, the overall direction of the behavioral responses is reversed relative to witnessing hate speech in general. For example, the negative main effect of witnessing on content production (β = –.0290, p < .01) is overturned by the positive interaction effect (β = .1260, p < .01), resulting in a net increase. Similarly, the positive main effects on opinion exchange, positive affective feedback, and antisocial behavior are each more than offset by the negative interaction terms, yielding net decreases in these dimensions. These reversals underscore the critical moderating role of hate speech intensity: what might appear as engagement under lower-intensity exposure turns into withdrawal or disengagement when the hostility becomes extreme.
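The net effects discussed above can be checked by summing each main coefficient with its interaction coefficient. The sketch below does this for the witnessing pathway using the coefficients reported in the text. It assumes that the interaction term is quantity × high-intensity ratio, so that the marginal effect of one additional witnessed item is the main coefficient plus the interaction coefficient times the ratio; the helper names and the break-even calculation are illustrative additions.

```python
def net_effect(main, interaction, ratio=1.0):
    """Marginal effect of one more unit of exposure at a given high-intensity share.

    ratio=1.0 corresponds to exposure that is entirely high-intensity.
    """
    return main + interaction * ratio


def break_even_ratio(main, interaction):
    """High-intensity share at which the marginal effect changes sign.

    Returns None if there is no sign change for ratios between 0 and 1.
    """
    if interaction == 0:
        return None
    ratio = -main / interaction
    return ratio if 0.0 <= ratio <= 1.0 else None


# (main effect, interaction effect) for witnessing, as reported in the text
WITNESS_COEFS = {
    "content production": (-0.0290, 0.1260),
    "opinion exchange": (0.4250, -1.8410),
    "positive affective feedback": (0.4708, -1.6338),
    "antisocial behavior": (0.0763, -0.3263),
}

# Net effect when all witnessed hate speech is high-intensity
NET_AT_FULL_INTENSITY = {
    outcome: round(net_effect(main, inter), 4)
    for outcome, (main, inter) in WITNESS_COEFS.items()
}
```

Under these assumptions the sums are 0.0970 for content production, −1.4160 for opinion exchange, −1.1630 for positive affective feedback, and −0.2500 for antisocial behavior, matching the sign reversals described in the text. The same helpers apply unchanged to the coefficients for received hate speech.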

In contrast, users who receive hate speech directly respond in more polarized ways, depending on the intensity. The main effects of receiving hate speech are strongly negative for content production (β = –.2134, p < .01), opinion exchange (β = –.8838, p < .01), and positive affective feedback (β = –1.6653, p < .01), with a modest positive effect on antisocial behavior (β = .1579, p < .01). These baseline results suggest that low-intensity hate speech discourages engagement and expressive behavior among victims. However, the interaction terms for high-intensity received hate speech show uniformly positive effects: content production (β = .1117, p < .01), opinion exchange (β = 1.6685, p < .01), positive affective feedback (β = 1.6614, p < .01), and antisocial behavior (β = .5030, p < .01). Summing the main-effect and interaction coefficients shows that high-intensity direct hate speech reverses the suppressive effect of low-intensity hate speech on opinion exchange (net β = .7847) and almost entirely offsets it for positive affective feedback (net β = –.0039), while content production remains suppressed, though by roughly half as much (net β = –.1017). Relative to low-intensity victimization, victims thus engage more across every dimension, including content creation, opinion exchange, and positive affective feedback, and they retaliate more through antisocial behavior such as posting hate speech themselves (net β = .6609). These results suggest that high-intensity hate speech serves as a behavioral catalyst, prompting expressive and often confrontational responses from users who are directly affected.

These findings support H2 by demonstrating that user reactions to hate speech are significantly moderated by its perceived intensity. Moreover, the moderation effects differ markedly between passive witnessing and direct receiving, suggesting distinct psychological and behavioral mechanisms depending on users’ exposure type.
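The net effects discussed in this subsection come from adding each main-effect coefficient to its high-intensity interaction coefficient. The following is a minimal sketch of that arithmetic, using only the coefficients quoted in the text. It assumes the interaction enters as a simple 0/1 high-intensity indicator; if the interaction term is a continuous product, the net effect would scale with its value instead. The names `COEFS`, `net_effects`, and `NET` are illustrative and not part of the original analysis.

```python
# Coefficients as quoted in the text: (main effect, high-intensity interaction).
COEFS = {
    "witnessing": {
        "content_production": (-0.0290, 0.1260),
        "opinion_exchange": (0.4250, -1.8410),
        "positive_affective_feedback": (0.4708, -1.6338),
        "antisocial_behavior": (0.0763, -0.3263),
    },
    "receiving": {
        "content_production": (-0.2134, 0.1117),
        "opinion_exchange": (-0.8838, 1.6685),
        "positive_affective_feedback": (-1.6653, 1.6614),
        "antisocial_behavior": (0.1579, 0.5030),
    },
}


def net_effects(coefs):
    """Sum main and interaction coefficients for each pathway and outcome.

    Assumption: the interaction is switched on by a 0/1 high-intensity
    indicator, so the net high-intensity effect is simply main + interaction.
    """
    return {
        pathway: {
            outcome: round(main + interaction, 4)
            for outcome, (main, interaction) in outcomes.items()
        }
        for pathway, outcomes in coefs.items()
    }


NET = net_effects(COEFS)

if __name__ == "__main__":
    for pathway, outcomes in NET.items():
        for outcome, value in outcomes.items():
            print(f"{pathway:<11}{outcome:<29}{value:+.4f}")
```

Comparing the sign of each net value with the sign of its main effect shows, outcome by outcome, whether high intensity reverses the baseline response or only dampens it.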

Impact of Exposure Pathway and Hate Speech Intensity on Behavioral Persistence

Table 4 presents the persistence of significant behavioral changes after users perceive hate speech, revealing the main effects of exposure type (witnessing vs. receiving) and the moderating role of exposure intensity (as measured by the interaction term: quantity × intensity).

Duration of Significant Change in User Behavior After Perceiving Hate Speech (In Weeks).

A key pattern emerges: witnessing hate speech leads to robust and sustained changes across all four behavioral domains—content production, opinion exchange, positive affective feedback, and antisocial behavior—with effects persisting beyond 12 weeks regardless of intensity level. This suggests that even low-intensity exposure, when merely observed, is sufficient to trigger long-term shifts in user behavior, and increasing the intensity (via the interaction term) does not substantially alter the persistence of these effects. In short, witnessing aggression—no matter how severe—consistently reshapes user engagement patterns over an extended period.

In contrast, direct victimization (receiving hate speech) presents a more complex and dynamic pattern. The main effects demonstrate sustained behavioral change across most dimensions, with content production, opinion exchange, and positive affective feedback showing persistence beyond 12 weeks. However, antisocial behavior exhibits a relatively shorter duration, with significant effects tapering off around the seventh week, suggesting partial behavioral recovery. The moderating role of exposure intensity further refines this picture. In content production and opinion exchange, the influence of the interaction term fades earlier than the main effects, indicating that intensity-driven differences are short-lived and largely concentrated in the initial response period. Conversely, in antisocial behavior, the interaction effect lasts slightly longer than the main effect, hinting that higher exposure intensity may delay behavioral recovery or exacerbate negative responses. These results highlight that while direct aggression elicits intensity-sensitive reactions, the temporal dynamics of such modulation vary significantly across behavioral domains.

Taken together, the results offer partial support for H3. There is a clear asymmetry between indirect and direct exposure pathways: witnessing hate speech produces uniformly long-lasting effects that are insensitive to intensity, while receiving hate speech is associated with more variable and intensity-sensitive outcomes. This highlights the importance of distinguishing not only how users encounter hate speech, but also how much and how severe that exposure is, especially in cases of personal victimization.
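One way to read the durations reported in this subsection is as the number of consecutive post-exposure weeks in which an effect remains statistically significant. The sketch below illustrates that reading only; the text does not state the exact rule used, so the 0.05 threshold, the 12-week horizon handling, the function names, and the example p-values are all assumptions made for illustration.

```python
def persistence_weeks(weekly_p_values, alpha=0.05):
    """Count consecutive weeks, starting from week 1, with p < alpha.

    Assumption: an effect "persists" until the first week in which it is
    no longer significant at the chosen alpha level.
    """
    weeks = 0
    for p in weekly_p_values:
        if p >= alpha:
            break
        weeks += 1
    return weeks


def format_duration(weeks, horizon=12):
    """Report durations that reach the observation horizon as '>horizon'.

    Assumption: an effect still significant in the last observed week is
    censored, so only a lower bound on its duration is known.
    """
    return f">{horizon}" if weeks >= horizon else str(weeks)


if __name__ == "__main__":
    # Hypothetical weekly p-values for a single outcome (not real data).
    example = [0.001, 0.004, 0.010, 0.020, 0.030, 0.041, 0.048, 0.120, 0.300]
    print(format_duration(persistence_weeks(example)))
```

Under this reading, an effect that loses significance in week 8 is reported as lasting seven weeks, while an effect that is significant in every observed week is reported as exceeding the 12-week window.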

Comparing New and Long-Term Users: Propensity and Persistence in Hate Speech Responses

Figure 2 illustrates the overlapping distributions of hate comment ratios between new and long-term users. The vertical axis represents the proportion of hate comments within each user’s total comments, while the width of each violin indicates the relative user density at that level. For both groups, the ratios are primarily concentrated between 0 and 0.2, indicating that most users seldom engage in hate expression. However, when the proportion exceeds 0.2, the distribution for new users becomes visibly broader and overlays that of long-term users, suggesting that new users constitute a larger share among those with higher hate speech ratios. This pattern implies that new users are relatively more inclined to produce a greater proportion of hate comments compared to long-term users. A chi-square test confirms that this difference is statistically significant (χ² = 163.770, p < .001), supporting H4a that new users have a higher propensity to engage in hate speech than long-term users.

Overlapping distributions of hate comment ratios among new and long-term users.
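The tenure comparison above relies on a chi-square test. The text does not describe how the underlying contingency table was built, so the following is only a sketch of a Pearson chi-square test of independence on a 2 × 2 table of user tenure by hate comment ratio (at or below 0.2 versus above 0.2). The counts are invented for illustration and will not reproduce the reported statistic; the 0.2 cutoff and all names are assumptions. No continuity correction is applied.

```python
import math


def chi_square_statistic(table):
    """Pearson chi-square statistic and degrees of freedom for an r x c table."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    n = sum(row_totals)
    stat = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            expected = row_totals[i] * col_totals[j] / n
            stat += (observed - expected) ** 2 / expected
    dof = (len(row_totals) - 1) * (len(col_totals) - 1)
    return stat, dof


def p_value_one_dof(stat):
    """Upper-tail p-value for a chi-square statistic with exactly 1 degree of freedom."""
    return math.erfc(math.sqrt(stat / 2.0))


# Hypothetical counts (NOT the study's data).
# Rows: new users, long-term users. Columns: ratio <= 0.2, ratio > 0.2.
HYPOTHETICAL_COUNTS = [
    [80, 20],
    [90, 10],
]

STAT, DOF = chi_square_statistic(HYPOTHETICAL_COUNTS)
P_VALUE = p_value_one_dof(STAT)

if __name__ == "__main__":
    print(f"chi2 = {STAT:.3f}, dof = {DOF}, p = {P_VALUE:.4f}")
```

The closed-form p-value holds only for one degree of freedom; a larger table would need a general chi-square survival function from a statistics library.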

Having established that new users are more prone to engage in hate expression, we next examine whether these tenure-based differences also manifest in the persistence of behavioral changes following exposure to hate speech. As shown in Table 5, long-term users tend to recover more swiftly than new users from direct hate speech in terms of antisocial behavior. Specifically, while new users exhibit sustained increases in antisocial expression for over 12 weeks following direct victimization, long-term users return to baseline levels by the third week. This pattern suggests that accumulated community experience may foster stronger behavioral regulation or more effective coping strategies when faced with interpersonal hostility.

Duration of Significant Change in User Behavior After Perceiving Hate Speech. a

The value before “/” indicates the duration of impact (in weeks) for new users, and the value after “/” corresponds to that for long-term users.

At the same time, long-term users appear more vulnerable to the prolonged effects of high-intensity hate speech. In both cases of witnessing and receiving hate speech, the interaction effects that reflect exposure intensity persist longer for long-term users than for new users. For example, witnessing high-intensity hate speech influences content production for more than 12 weeks among long-term users, whereas the effect dissipates by the seventh week for new users. A similar contrast is observed in opinion exchange and positive affective feedback: new users display minimal or short-lived changes under intense exposure, while long-term users exhibit more prolonged behavioral shifts, particularly in emotional domains. This heightened responsiveness may stem from deeper emotional engagement with the community or greater sensitivity developed over time.

Together, these findings reveal a dual pattern. While long-term users are more resilient in avoiding retaliatory antisocial behavior, they are also more susceptible to the lasting influence of intense hostility across other behavioral dimensions. This partially supports H4b. Specifically, although general exposure to hate speech produces similar effects across user types, clear differences emerge when users are directly targeted and when the intensity of exposure is considered.

Discussion

Given the complex and often incomplete understanding of hate speech’s behavioral consequences in prior literature, which has seldom simultaneously considered the two exposure pathways of witnessing and receiving within a single community context, this study applies Stress and Coping Theory to examine how users respond to hate speech. Hate speech is conceptualized as a social and psychological stressor, with witnessing and directly receiving hate speech representing distinct modes of exposure that elicit different appraisals of threat. Users’ behavioral reactions, influenced by factors such as the intensity of hate speech and their tenure in the community, are treated as coping processes that mediate these stressors. By integrating hate speech exposure mode, intensity, and user tenure into a unified framework, our findings provide a nuanced understanding of how individuals cope with, and adapt to, toxic digital environments over time.

First, users respond differently depending on how they encounter hate speech. Witnessing hate speech is associated with increased levels of opinion exchange, positive affective feedback, and antisocial behavior, while having only a minimal suppressive effect on content production. These patterns suggest that indirect exposure discourages users from initiating new discussions but stimulates expressive and reactive engagement within existing conversations, potentially driven by empathic outrage, collective norm enforcement, or defensive mobilization. In contrast, being directly targeted by hate speech sharply reduces content production, opinion exchange, and positive affective feedback, while significantly increasing antisocial behavior. From a theoretical perspective, these findings illustrate how different exposure pathways shape the primary and secondary appraisal of stressors (Carver et al., 1989; Krohne, 2001; Lazarus & Folkman, 1984; Skinner et al., 2003). Research on first-person and third-person effects further clarifies this distinction by showing that individuals systematically differ in how they perceive the severity, personal relevance, and responsibility associated with harmful content depending on whether they are personally targeted or merely observe others being targeted (Jhaver & Zhang, 2023). Specifically, when hate speech is witnessed rather than received, it acts as a third-person stressor. In this context, users typically appraise the incident as a threat to community norms or targeted groups, rather than to themselves. This appraisal triggers outwardly oriented coping, such as expressing support through likes or stepping in to maintain community order. By contrast, direct exposure functions as a first-person stressor, appraised as an immediate, personal threat to one’s identity and safety. This shifts coping toward self-protection, often leading to withdrawal in the form of reduced content generation and opinion exchange, or retaliatory antisocial behavior. Together, these findings extend Stress and Coping Theory by demonstrating that exposure mode is a critical determinant of stress appraisal in digital environments, shaping both the perceived severity of hate speech and the selection of coping strategies, and underscoring the importance of distinguishing between collective and personal stress appraisals when predicting behavioral responses to online hostility.

Second, the intensity of hate speech critically shapes user behavior, interacting with exposure pathways in distinct ways. High-intensity hate speech witnessed by users tends to suppress expressive behaviors such as opinion exchange and positive affective feedback, while slightly increasing content production, suggesting that extreme community-level stressors prompt withdrawal and disengagement to mitigate perceived threat. In contrast, users who directly receive high-intensity hate speech exhibit more polarized responses, increasing both expressive behaviors (content production, opinion exchange, positive affective feedback) and antisocial actions, indicating that severe personal stressors can act as behavioral catalysts, provoking reactive and confrontational coping (Hogg, 2016; Matamoros-Fernández & Farkas, 2021). These patterns extend Stress and Coping Theory to online community contexts, highlighting that the severity and type of stressor jointly shape adaptive and maladaptive coping strategies. Specifically, indirect exposure to extreme hostility may trigger avoidance and norm-protective withdrawal, whereas direct exposure to severe hate speech mobilizes self-focused coping that manifests in both constructive and retaliatory behaviors, emphasizing the need to consider both exposure pathway and intensity when evaluating the behavioral consequences of hate speech.

Third, our findings reveal that the temporal persistence of behavioral responses to hate speech varies according to exposure type and intensity. Witnessing hate speech, regardless of intensity, functions as a chronic, community-level stressor, eliciting sustained behavioral changes across content production, opinion exchange, positive affective feedback, and antisocial behavior. This prolonged effect reflects the cumulative cognitive and emotional load imposed by repeated exposure, gradually shaping community norms and eroding trust and civility. In contrast, direct victimization represents an acute, personal stressor, producing more transient behavioral responses. Low-intensity direct hate speech can still induce lingering effects due to rumination and unresolved psychological tension, whereas high-intensity direct hate speech often provokes sharp but short-lived spikes in behavior, consistent with rapid, stress-driven coping strategies. These patterns illustrate that chronic, ambient stressors and acute, personal stressors are processed differently, highlighting the role of exposure type and intensity in determining both immediate and long-term adaptation.

Finally, our analyses highlight meaningful heterogeneity in behavioral responses based on user tenure, encompassing both the propensity to engage in hate speech and the persistence of subsequent behavioral changes. Consistent with H4a, new users demonstrate a higher propensity to post hate comments, suggesting that limited familiarity with community norms and weaker social ties contribute to a greater baseline inclination toward antisocial behavior. Building on this, the analysis for H4b shows that the temporal dynamics of these responses differ across tenure groups. Both new and long-term users exhibit sustained changes after witnessing hate speech, indicating that chronic exposure functions as a persistent stressor with lasting implications for community norms and engagement. However, responses to direct victimization diverge between the two groups: long-term users initially display stronger antisocial reactions but recover more rapidly, reflecting more developed coping strategies and greater familiarity with community norms. Under high-intensity hate speech, long-term users exhibit more enduring effects than newcomers, likely because their stronger sense of community ownership renders severe violations more personally salient. Together, these findings demonstrate that user tenure shapes both the initial behavioral tendency (propensity) and the temporal stability of responses (persistence), extending Stress and Coping Theory to account for individual differences in processing and adapting to hostile online interactions.

In summary, our findings demonstrate that hate speech, whether witnessed or directly received, alters user behavior through distinct psychological and social mechanisms. By examining exposure pathways, intensity, and user tenure, this study extends Stress and Coping Theory to the context of online communities, showing how different exposure pathways and hate speech intensity, together with users’ tenure, influence coping responses and adaptation patterns. From a practical perspective, these insights inform platform governance by emphasizing that content moderation should address not only isolated incidents but also the cumulative and ambient stress imposed by repeated exposure. Interventions such as proactive norm signaling, reinforcement of positive community behaviors, and tools that facilitate adaptive coping can help platforms cultivate resilient and inclusive online environments that mitigate the long-term harms of hate speech.

Conclusion

This study provides important insights into the effects of hate speech on online community behavior. By differentiating exposure pathways, intensity, and user tenure within a unified community context, we extend Stress and Coping Theory to the domain of online hate speech, highlighting that the mode of exposure constitutes a critical factor in the stress appraisal stage and shapes distinct coping frameworks. Our findings show that the effects of hate speech on user behavior vary across the four experiments: exposure pathway influences the type of engagement, intensity of hate speech modulates the magnitude of responses, high-intensity exposure affects the duration of behavioral changes, and user tenure shapes how individuals appraise and cope with hostility. Together, these results highlight the nuanced ways in which users adapt to toxic online environments.

These results have two main implications. Theoretically, they advance our understanding of the cognitive and social processes through which individuals respond to online hate speech stressors, showing how different coping strategies emerge depending on the directness and severity of the threat. Practically, our findings provide actionable guidance for platform designers and policymakers seeking to mitigate the harms of hate speech. Platforms should develop targeted support features and reporting tools for users who directly receive hate speech, while implementing content moderation strategies that account for intensity by prioritizing the rapid removal of high-intensity content, which exerts more severe effects. In addition, while community managers often focus on attracting and retaining newcomers, our study highlights the need to also devote sustained attention to long-term users, as the behavioral consequences they experience tend to persist for longer periods.

This study has several limitations. First, although ChatGPT demonstrates relatively high overall accuracy in annotating hate speech, its performance remains imperfect. In particular, large language models have inherent limitations in capturing complex discourse phenomena such as irony, double meanings, contextual ambiguity, and implicit cultural references (Kocoń et al., 2023; Shen et al., 2023). These limitations are further compounded by cultural specificity. Because the data were drawn from an online community in China, certain hate speech expressions reflect culturally embedded linguistic practices that are not always well recognized by ChatGPT. As a result, the model occasionally misclassifies the intensity levels of hate speech that rely on discourse conventions or contextual cues unique to the Chinese context, which may affect the precision of fine-grained intensity classification. Second, the analysis primarily focuses on individual-level behavior and does not address broader, macro-level dynamics, such as how hate speech influences community-wide interactions or collective discourse patterns. Finally, this study does not incorporate additional user attributes, such as gender or ethnicity, which may also influence how individuals experience and respond to hate speech. In future work, we will extend our analysis to the community level, examining how hate speech influences overall participation dynamics and collective norms. We will also conduct cross-cultural investigations to assess the universality of our findings across different sociocultural contexts.

Supplemental Material

Supplemental material, sj-pdf-1-sms-10.1177_20563051261433500 for Coping With Digital Hostility: How Witnessing and Receiving Hate Speech Elicit Divergent Responses by Dong Jing, Xu Gao, Jianwei Liu and Kee-Hung Lai in Social Media + Society

Footnotes

Acknowledgements

We are grateful to the college student community platform for providing the necessary data for this study. In addition, we appreciate the constructive comments from the anonymous reviewers and editorial team, which significantly improved the quality of this manuscript.

Ethical Considerations

All data analyzed in this study were fully anonymized. The research did not involve any interaction with human participants. Therefore, in accordance with standard institutional guidelines, ethical approval was not required for this study.

Consent to Participate

As this study did not involve interaction with human participants and all data were fully anonymized, informed consent was not required in accordance with standard institutional guidelines.

Author contributions

D.J.: Conceptualization, Formal analysis, Writing – review & editing.

X.G.: Data curation, Validation, Writing – original draft.

J.L.: Methodology, Investigation.

K.L.: Supervision, Resources, Validation.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by The Hong Kong Polytechnic University (grant number P0045777) and the National Natural Science Foundation of China (grant number 72571045, awarded to J.L.).

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data availability statement

The data used in this study are proprietary operational data provided by a commercial company (Zano) and cannot be shared publicly. Researchers who wish to access the data for academic purposes may contact the corresponding author. Subject to approval from the Zano operations team, partial access to the data may be granted.

Supplemental material

Supplemental material for this article is available online.