Abstract

This study examines the relationship between misinformation and political intolerance during the 2022 Brazilian Election. Using a three-wave survey, we show that citizens who believe false claims about electoral fraud become more intolerant toward political opponents over time. Beliefs in electoral misinformation consistently predicted increases in intolerance over the course of the election. We find an indirect effect of using messaging apps for news on intolerance, mediated by beliefs in electoral misinformation, suggesting that citizens who rely on messaging apps for news are not only more susceptible to believing misinformation but also more exposed to its detrimental effects on democracy. These findings highlight electoral misinformation as a key driver of intolerant attitudes in polarized democracies, operating not only by eroding trust in institutions but also by undermining citizens’ commitment to democratic norms.

Democracies around the world have been challenged by political division and struck by the electoral appeal of political candidates adopting extreme rhetoric toward minorities and disadvantaged groups. Digital media are seen as catalyzers of these dynamics due to their contribution to the spread of misinformation and their use by far-right groups (Bailard et al., 2024). As social media has become an integral part of citizens’ news diet (Newman et al., 2025), politicians have become skilled in using these platforms to advance strategic goals, often resorting to outrageous rhetoric to influence public debate and bypass traditional media gatekeeping and conventional party structures (Bennett & Livingston, 2025). In response, scholars have sought to understand the role of an increasingly complex digital media environment in explaining democratic disruption.

Despite mixed evidence on the relationship between social media and polarization (Wojcieszak et al., 2023), ideologically extreme citizens are more vulnerable to online harms, such as believing in misinformation and disinformation and being in echo chambers (Rossini et al., 2023; Valenzuela et al., 2019). Indeed, recent episodes of political violence—for example, insurrections to override election results in the United States (2021) and Brazil (2023)—have likely been motivated by falsehoods spreading online (Hendrix, 2023; Sheerin, 2022), although empirical evidence is limited. In Brazil, social media and messaging apps were used to orchestrate the attacks on the Presidential Palace, Congress, and Supreme Court on January 8, 2023 (Nicas, 2023), and investigations have revealed that these platforms were used instrumentally by former president Jair Bolsonaro and his surrogates to spread disinformation about electoral fraud (Ionova, 2025).

While there is evidence that illiberal politicians weaponize social media against democracy, little is known about the downstream effects on attitudes related to political extremity and intolerance. Against this backdrop, our study investigates the relationship between using social media and messaging apps for news, beliefs in electoral misinformation, and political intolerance. Understanding whether beliefs in electoral misinformation foster intolerance is essential to grasp how misinformation and disinformation undermining electoral integrity can harm democracy beyond merely leaving citizens “confused.”

We study these relationships leveraging a three-wave panel study during the contentious 2022 Presidential Elections in Brazil, focusing on the role of electoral misinformation in driving political intolerance and on how citizens’ digital news diets can help explain these relationships. The combination of high reliance on digital platforms in the population and heightened concerns with online misinformation makes this a theoretically relevant case for investigating these relationships. Social media and mobile messaging are the primary news sources for most Brazilians (Newman et al., 2025), raising concerns about exposure to low-quality information and vulnerability to online falsehoods. In addition, Brazil’s increasing polarization in the past decade (McCoy, 2024) has created a fertile ground for political elites to weaponize misinformation (Rossini et al., 2023). Hence, citizens who use social media and messaging applications to get information about the elections may not only be more vulnerable to electoral misinformation but also, we argue, become intolerant toward the opposition as a result.

We find that belief in electoral misinformation is consistently correlated with higher levels of intolerance across waves, suggesting that those who believed such misinformation became more intolerant over the course of the election. Mediation analysis using structural equation modeling indicates that using messaging apps for news indirectly increased intolerance after the election by strengthening misinformation beliefs. These findings suggest that electoral misinformation is a key driver of political intolerance during contested electoral contexts, with democratic consequences that go well beyond confusing citizens.

Digital Media and Political Intolerance

Political tolerance—citizens’ willingness to extend civil rights and liberties to disliked political groups, including those with highly disagreeable views—is a cornerstone for democratic stability (Gibson, 1992; Sullivan et al., 1981). As such, scholars have investigated the antecedents of intolerant attitudes, finding that perceptions of threat (Sullivan et al., 1981) and negative emotions, such as hatred, anger, and fear (Gibson et al., 2020), are linked to higher intolerance, while higher education and political knowledge are associated with lower intolerance (Sullivan et al., 1981).

As scholars examine the links between extreme partisanship and political hostility (Kalmoe & Mason, 2022), understanding the role of social media is crucial. Yet few studies have addressed the relationship between media use, misinformation, and political intolerance in democratic contexts. This gap is striking, considering both the global reliance on social media (Newman et al., 2025) and evidence of online misinformation fueling political violence in India, Brazil, the United States, and the United Kingdom (Banaji et al., 2019; Ozawa, Lukito, et al., 2023; Rosati, 2020; Sheerin, 2022).

The limited research on digital media use and political intolerance has focused mainly on hybrid or authoritarian regimes. Using a cross-sectional survey, Samet et al. (2024) examined Myanmar and expected social media to increase intolerance by exposing users to inflammatory content against minorities. Contrary to this expectation, the study found that Facebook use was positively associated with religious and racial tolerance, likely due to confounding variables such as demographic discrepancies between social media users and nonusers (Samet et al., 2024), given the country’s limited internet penetration (Thida et al., 2025). In Pakistan, Masood et al. (2024) similarly reported a positive relationship between consuming political information on social media and higher tolerance toward minorities, arguing that social media can expose users to pro-minority content. A study in Hong Kong treated political tolerance as a mediator and found that more tolerant respondents displayed higher levels of network heterogeneity on social media (Xia & Shen, 2023). Like the other studies, however, its cross-sectional design does not allow for establishing the direction of the effects.

Among the rare studies using causal approaches, a deactivation experiment in Bosnia and Herzegovina found that participants who avoided using Facebook for 1 week reported lower regard for ethnic out-groups than those who remained active, underscoring the potential role of social media in promoting exposure to out-group content, potentially increasing levels of tolerance toward such groups (Asimovic et al., 2021). While these findings are insightful, they refer to social media use in general, not specific informational uses (e.g., for news) or contact with misinformation. As a result, they do not take into account that the different interests and motivations underlying social media use are crucial to understanding its effects on political attitudes (Matthes et al., 2023).

The literature examined so far has key limitations, warranting further investigation. First, scholars have focused on authoritarian or hybrid regimes (Freedom House, 2020), with relatively low levels of internet penetration, meaning that the hypothesized effects of social media cannot be disentangled from inequalities in internet access. Second, previous studies examined general social media use rather than informational uses or beliefs in online misinformation, which have been linked to detrimental attitudinal effects (Ognyanova et al., 2020; Valenzuela et al., 2019). Our study addresses these gaps by examining how electoral misinformation, informational uses of social media, and messaging applications more broadly may shape political intolerance in a contentious election period.

The Role of Misinformation

Concerns about misinformation and disinformation have been at the forefront of research in political science and communication due to their detrimental impact on citizens’ knowledge of public affairs (Flynn et al., 2017). Scholars have examined the spread of political misinformation during elections and major events, such as Brexit and the Coronavirus pandemic, highlighting factors that make citizens more vulnerable to believing falsehoods (Altay et al., 2023; Neyazi et al., 2021). Recently, the focus is shifting to the “second order” effects of misinformation on political attitudes and behaviors, such as institutional trust (Ognyanova et al., 2020; Rossini et al., 2023) or support for violence (Badrinathan et al., 2025).

Scholars and journalists have highlighted the potential for online misinformation to fuel offline violence (Banaji et al., 2019; Nicas, 2023), with antidemocratic protests attributed partly to social media as both a mobilization tool and a vehicle for spreading falsehoods. The January 6, 2021, US insurrection is attributed to Donald Trump’s use of social media to delegitimize election results (Sheerin, 2022), with evidence that the attack was organized via Telegram and Facebook (Bailard et al., 2024). Similarly, on January 8, 2023, the violent invasion of Brazil’s Congress, Supreme Court, and Presidential Palace was coordinated through social media and messaging apps (Ionova, 2025) and fueled by falsehoods undermining electoral integrity (Nicas, 2023).

Literature on democratic backsliding refers to disinformation as a weapon used by illiberal incumbents to undermine support for institutions that should otherwise hold them accountable (Bermeo, 2016; Cella et al., 2025). This has been a well-documented strategy in recent democratic elections (Hendrix, 2023; Ozawa, Woolley, et al., 2023; Rosati, 2020). Political misinformation can be an effective weapon because of its intrinsic relationship with ideology, often explained by motivated reasoning (Berlinski et al., 2023; Flynn et al., 2017). This matters because the consequences of misinformation go beyond confusing citizens about facts, affecting a series of political attitudes, such as increasing feelings of political inefficacy, alienation, and cynicism (Balmas, 2014), and lowering trust in the news media and political institutions (Mont’Alverne et al., 2022).

While no studies have examined the relationship between misinformation and political intolerance, the relationship is likely cyclical: divisive contexts heighten threat perceptions, increasing the spread and persuasiveness of misinformation, which further amplifies threat perceptions and increases intolerance. Existing research suggests that misinformation increases perceptions of threat (Calvillo et al., 2020), which correlate with political intolerance (Enders et al., 2021; Stoeckel & Ceka, 2023). Contexts of elevated conflict influence these dynamics, as heightened outgroup threat perceptions increase the persuasiveness of misinformation (Mazepus et al., 2023). If that is the case, we expect beliefs in misinformation to increase political intolerance, particularly in a highly divisive electoral context.

We focus on electoral misinformation, which undermines trust in the system and its institutions (Norris et al., 2020), perceptions of electoral integrity, and support for democracy (Berlinski et al., 2023; Mauk & Grömping, 2024). Electoral misinformation can decrease institutional trust (Rossini et al., 2023), which is associated with threat perceptions (Schlipphak, 2024). This matters because when citizens perceive disliked groups as threats to their values or to society more generally, they become intolerant toward them and willing to override democratic norms (Stoeckel & Ceka, 2023). While the pathways between misinformation and intolerance have not been explored, there is evidence that conspiratorial thinking, which correlates with vulnerability to electoral misinformation (Edelson et al., 2017), increases intolerance by inflating perceptions of threats from disliked groups (Stoeckel & Ceka, 2023).

Importantly, research highlights a symbiotic relationship between partisanship and misinformation, whereby politically extreme citizens are more vulnerable to ideologically congruent falsehoods (Garrett et al., 2016; Mazepus et al., 2023). When elites trash-talk democracy (e.g., undermining confidence in elections and attacking the courts and the press), they elicit anti-democratic attitudes both among supporters and the opposition (Cella et al., 2025) and undermine perceptions of electoral integrity (Norris, 2024). In recent elections, social media has proven to be a powerful tool for political elites to spread falsehoods attacking their opponents, the media, and democratic institutions (Ozawa, Woolley, et al., 2023; Rosati, 2020). Consequently, it is possible that beliefs in electoral falsehoods have fueled intolerant political attitudes. Based on the theory and evidence discussed so far, we hypothesize that electoral misinformation may have democratically relevant but hitherto unexplored downstream effects on political intolerance.

Informational Pathways to Intolerance: Direct or Indirect Effects?

Understanding the role of social media and messaging applications in influencing political attitudes is a challenging task, as users approach these platforms with different purposes and motivations (Matthes et al., 2023). However, these platforms have become a major pathway to news globally (Newman et al., 2025), accompanied by heightened concerns about the spread of, and beliefs in, misinformation and disinformation (Altay et al., 2023), as well as polarization and echo chambers more broadly (Wojcieszak et al., 2023).

Despite widespread concerns, previous studies have found null effects of exposure to ideologically congruent content on polarization (Wojcieszak et al., 2023), including in Brazil (Mont’Alverne et al., 2025), and mixed evidence that social media use fosters beliefs in misinformation (Theocharis et al., 2023; Valenzuela et al., 2022). Rather than reinforcing “echo chambers,” social media users often encounter heterogeneous information sources (Barnidge, 2020; Fletcher et al., 2023). Accordingly, studies reporting a negative association between social media use and intolerance (Asimovic et al., 2021; Masood et al., 2024; Samet et al., 2024) attribute their findings to exposure to diverse viewpoints. On these grounds, we formulate the following hypothesis:

Messaging applications are more ambivalent platforms because there is less clarity about information circulating on them, given their encrypted nature and varied contexts. While news organizations can have a formal presence, most users encounter information through everyday conversations – in individual chats or with groups of family, friends, and acquaintances (Mont’Alverne et al., 2022). Qualitative evidence in Spain suggests that WhatsApp users typically encounter news via personal contacts, which makes information feel more relevant than news encountered on social media (Masip et al., 2021). Yet, messaging apps may expose participants to more like-minded information via strong ties (Kalogeropoulos & Rossini, 2023), and large political discussion groups on WhatsApp are dominated by partisan misinformation content, reducing exposure to diverse perspectives (Chauchard & Garimella, 2022; Recuero et al., 2021). Scholars have also documented the role of WhatsApp as a propaganda tool during Bolsonaro’s presidency (Ozawa, Woolley, et al., 2023), demonizing political opponents and potentially fostering hostility toward them. However, no research has considered how these dynamics may affect political attitudes, leading us to propose a research question:

Informational uses of social media and messaging apps may also affect political intolerance indirectly due to the prevalence of misinformation on these platforms. This theoretical pathway relies on the assumption that informational uses of these platforms influence exposure to and belief in political falsehoods (Guess & Lyons, 2020; Valenzuela et al., 2019). People who use messaging apps more frequently with homogeneous networks tend to be more accepting of misinformation (Gill & Rojas, 2020)—and also avoid challenging it (Chadwick et al., 2023). Others highlighted that messaging applications may expose people to like-minded content via political discussion groups (Recuero et al., 2021), potentially increasing the likelihood of users holding misinformed beliefs (Kalogeropoulos & Rossini, 2023). To shed light on these indirect relationships, we investigate whether believing in misinformation mediates the relationships between informational uses of social media and messaging apps and political intolerance.

Methods

This study is based on an original three-wave survey of a nationally representative sample of internet users in Brazil (N = 1600 in Wave 1, N = 1328 in Wave 2, and N = 1034 in Wave 3). Data collection was fielded by Ipec Inteligência, a leading Brazilian research company, using quotas for age, gender, social class, and region to match the sample to the population (see Supplemental Appendix B for sample characteristics). Data collection for Waves 1 and 2 took place shortly after the first and second rounds of voting for the 2022 Presidential Election (W1: October 5–19, W2: November 8–23). A third wave of data collection took place from December 8 to 21, 2022, as anti-democratic protests by Bolsonaro supporters, calling for a military intervention to overturn the election results, were happening around the country. The study was reviewed and approved by the [suppressed for review] Ethics Committee.

Measures

Dependent Variable: Political Tolerance

We measured political intolerance using a variation of the least-liked approach (based on the General Social Survey). Participants were first asked: “Speaking of different groups, can you indicate how much you like or dislike the following groups?” (abortion advocates, people who defend the military dictatorship, communists, evangelicals, “petistas,” and “bolsonaristas”) using a scale of 1 to 10. Then, we asked four questions about extending civil rights (to vote, protest, work in the civil service, and speak publicly) to the groups they disliked the most (i.e., rated 3 or lower), with answers on a five-point scale from totally disapprove to totally approve. Finally, we created a measure of Political Tolerance by averaging responses to these four questions related to political groups on the left (petistas, communists) and right (bolsonaristas, dictatorship advocates) across the three waves (Mw1 = 3.62, SDw1 = 1.34, Mw2 = 3.68, SDw2 = 1.3, Mw3 = 3.54, SDw3 = 1.32).
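The scoring rule described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the survey's actual processing code: the group labels, the dictionary layout, the 1 to 5 numeric coding of the approval items, and the choice to pool all items across a respondent's disliked political groups are hypothetical.

```python
from typing import Dict, List, Optional

# Assumption: labels for the four political groups used in the tolerance measure.
POLITICAL_GROUPS = {"petistas", "communists", "bolsonaristas", "dictatorship advocates"}
DISLIKE_CUTOFF = 3  # groups rated 3 or lower on the 1-10 scale count as most disliked


def tolerance_score(likes: Dict[str, int],
                    rights: Dict[str, List[int]]) -> Optional[float]:
    """Average the civil-rights items (assumed coded 1-5) over disliked political groups.

    Returns None when the respondent dislikes none of the political groups.
    """
    targets = [g for g, rating in likes.items()
               if g in POLITICAL_GROUPS and rating <= DISLIKE_CUTOFF]
    # Pool the four items (vote, protest, civil service, speak) for each target group.
    items = [value for g in targets for value in rights.get(g, [])]
    if not items:
        return None
    return sum(items) / len(items)


# Hypothetical respondent: dislikes petistas and communists; evangelicals are
# also rated low but are not among the political groups in the measure.
likes = {"petistas": 2, "communists": 1, "bolsonaristas": 9, "evangelicals": 3}
rights = {"petistas": [4, 5, 3, 4], "communists": [2, 3, 2, 1]}
print(tolerance_score(likes, rights))
```

Higher values indicate greater willingness to extend rights to disliked groups, which matches the direction of the Political Tolerance measure.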

Independent Variables

Electoral Misinformation

To measure beliefs in electoral misinformation, we created a list of eight false statements related to the elections and fact-checked by news agencies (see Supplemental Appendix C). We asked participants whether they had previously encountered each statement (in a binary yes/no question) and then whether they thought it was definitely true, probably true, probably false, definitely false, or don’t know. False statements incorrectly classified as “definitely” or “probably” true were coded as one. Correct answers and “don’t know” answers were coded as zero. The compound measure ranged from 0 to 8, and 46% of respondents scored zero, meaning they did not hold any misinformed beliefs (Mw2 = 1.58, SDw2 = 2.02). We note that this measure was only included in Wave 2 due to ethical concerns of inadvertently disinforming participants before voting. Participants were debriefed at the end of the survey.
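The coding rule for the belief index can be illustrated with a short sketch. The response labels below are assumed English renderings of the answer options, and the function name is hypothetical; only the rule itself (true ratings count as one, everything else as zero) comes from the description above.

```python
from typing import Iterable

# Assumption: lower-cased English labels for the five answer options.
BELIEF_RESPONSES = {"definitely true", "probably true"}
VALID_RESPONSES = BELIEF_RESPONSES | {"probably false", "definitely false", "don't know"}


def misinformation_score(responses: Iterable[str]) -> int:
    """Count false statements rated 'definitely true' or 'probably true'.

    Correct answers and 'don't know' contribute zero, so eight statements
    give a score between 0 and 8.
    """
    score = 0
    for response in responses:
        label = response.strip().lower()
        if label not in VALID_RESPONSES:
            raise ValueError(f"unexpected response: {response!r}")
        if label in BELIEF_RESPONSES:
            score += 1
    return score


# Hypothetical respondent rating the eight statements.
example = [
    "definitely true", "probably false", "don't know", "probably true",
    "definitely false", "definitely false", "probably true", "don't know",
]
print(misinformation_score(example))
```

Treating “don’t know” as zero means the index captures affirmative belief in falsehoods rather than uncertainty.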

The frequency of informational social media/messaging use was measured for each platform. We asked: “How often have you used the following channels to follow the news?” on a four-point scale: (1) never, (2) a few times a month, (3) a few times a week, and (4) every day or almost every day for Social Media (Mw1 = 2.66, SDw1 = 1.11, Mw2 = 2.61, SDw2 = 1.07, Mw3 = 2.35, SDw3 = 1.08) and Messaging Applications (Mw1 = 2.42, SDw1 = 1.21, Mw2 = 2.38, SDw2 = 1.19, Mw3 = 2.13, SDw3 = 1.13).

Control Variables

Our models control for engagement with disagreement on social media, given the theoretical expectation that more exposure to and engagement with heterogeneous political views could affect political tolerance, an expectation based on interpersonal communication studies (Mutz, 2006) that is often applied to online spaces (Barnidge, 2020). Participants were asked, “Thinking about the last thirty days, how often have you talked to people who have very different opinions about the elections than you on [WhatsApp, Facebook, Instagram, YouTube, Twitter, Telegram and YouTube]?” The answers ranged from 1 (“Never”) to 5 (“Several times a day”). We combined these platform-specific measures on a 1 to 5 scale for Waves 1 and 2 (α = .91, Mw1 = 2.36, SDw1 = 1.27, Mw2 = 2.29, SDw2 = 1.28).

We further control for political interest (“In general, how much would you say you are interested in politics?”, from “1” = not at all to “4” = very interested, Mw1 = 2.74, SDw1 = 1.06), ideological placement (measured on a 10-point left to right-wing scale, Mw1 = 5.92, SDw1 = 2.98), age (continuous), gender (binarized for analysis), and education.

Data analysis

We employed two autoregressive ordinary least squares (OLS) models to test H1 and H2 and to answer RQ1, estimating political intolerance in Waves 2 and 3 while controlling for prior levels of intolerance. This modeling choice enables us to examine whether the correlates of political intolerance are associated with changes over time, that is, 1 and 2 months after Wave 1 was fielded. We relied on autoregressive models instead of fixed effects because our key independent variable, belief in electoral misinformation, was only asked in Wave 2 to avoid misinforming citizens before the vote. By controlling for prior intolerance, we can more directly examine time variation in our dependent variable, which brings us closer to causal evidence than would be possible with purely cross-sectional data (Markus, 1979). However, we are aware of the inherent limitations of observational data in establishing causality, and we refrain from making causal claims about our findings.
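To make the logic of a lagged-dependent-variable model concrete, the sketch below fits an OLS regression of a Wave 2 outcome on its Wave 1 value and a misinformation score. It is an illustration on simulated data, not a reproduction of the study's models: the `ols` helper, the variable names, and every simulated coefficient are assumptions chosen for the example.

```python
import random
from typing import List, Sequence


def ols(X: Sequence[Sequence[float]], y: Sequence[float]) -> List[float]:
    """Return OLS coefficients (intercept first) by solving the normal equations."""
    rows = [[1.0, *r] for r in X]
    k = len(rows[0])
    # Augmented matrix [X'X | X'y].
    A = [[sum(r[i] * r[j] for r in rows) for j in range(k)]
         + [sum(r[i] * yi for r, yi in zip(rows, y))] for i in range(k)]
    # Gauss-Jordan elimination with partial pivoting.
    for c in range(k):
        p = max(range(c, k), key=lambda r: abs(A[r][c]))
        A[c], A[p] = A[p], A[c]
        pivot = A[c][c]
        A[c] = [v / pivot for v in A[c]]
        for r in range(k):
            if r != c:
                f = A[r][c]
                A[r] = [vr - f * vc for vr, vc in zip(A[r], A[c])]
    return [A[i][k] for i in range(k)]


# Simulated panel (all values are invented for illustration).
random.seed(0)
n = 500
tol_w1 = [random.gauss(3.6, 1.3) for _ in range(n)]
misinfo_w2 = [float(random.randint(0, 8)) for _ in range(n)]
tol_w2 = [1.0 + 0.6 * t - 0.1 * m + random.gauss(0, 0.5)
          for t, m in zip(tol_w1, misinfo_w2)]

# Regress Wave 2 tolerance on Wave 1 tolerance and misinformation beliefs.
coefs = ols(list(zip(tol_w1, misinfo_w2)), tol_w2)
print("intercept, lagged tolerance, misinformation:", coefs)
```

Because the Wave 1 outcome is held constant, the misinformation coefficient describes differences in Wave 2 tolerance among respondents with the same starting level, which is what motivates reading it as change over time.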

To measure the mediating role of misinformation beliefs on the relationship between social media and messaging app news use and political tolerance (H3), we ran two structural equation models (SEM) using the lavaan package in R (Rosseel, 2012) that employs bootstrapping (95% confidence intervals and 5000 bootstrapped samples). The SEM models also included a lagged-dependent variable of political tolerance as a control.
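The idea behind a bootstrapped indirect effect can be sketched in a few lines. Note the substitution: instead of the structural equation model fitted in lavaan, this example uses two OLS regressions and a percentile bootstrap, which is a simplification. The data are simulated, and all names, coefficients, and the reduced number of resamples are assumptions made to keep the example fast.

```python
import random
from typing import List, Sequence, Tuple


def ols(X: Sequence[Sequence[float]], y: Sequence[float]) -> List[float]:
    """Return OLS coefficients (intercept first) via the normal equations."""
    rows = [[1.0, *r] for r in X]
    k = len(rows[0])
    A = [[sum(r[i] * r[j] for r in rows) for j in range(k)]
         + [sum(r[i] * yi for r, yi in zip(rows, y))] for i in range(k)]
    for c in range(k):  # Gauss-Jordan elimination with partial pivoting
        p = max(range(c, k), key=lambda r: abs(A[r][c]))
        A[c], A[p] = A[p], A[c]
        pivot = A[c][c]
        A[c] = [v / pivot for v in A[c]]
        for r in range(k):
            if r != c:
                f = A[r][c]
                A[r] = [vr - f * vc for vr, vc in zip(A[r], A[c])]
    return [A[i][k] for i in range(k)]


def indirect_effect(x, m, y, y_lag) -> float:
    """Product of path a (X -> M) and path b (M -> Y), both controlling lagged Y."""
    a = ols(list(zip(x, y_lag)), m)[1]
    b = ols(list(zip(m, x, y_lag)), y)[1]
    return a * b


def bootstrap_ci(x, m, y, y_lag, n_boot: int = 5000,
                 alpha: float = 0.05, seed: int = 1) -> Tuple[float, float]:
    """Percentile bootstrap confidence interval for the indirect effect."""
    rng = random.Random(seed)
    n = len(x)
    estimates = []
    for _ in range(n_boot):
        idx = [rng.randrange(n) for _ in range(n)]  # resample respondents with replacement
        estimates.append(indirect_effect([x[i] for i in idx], [m[i] for i in idx],
                                         [y[i] for i in idx], [y_lag[i] for i in idx]))
    estimates.sort()
    return (estimates[int((alpha / 2) * n_boot)],
            estimates[int((1 - alpha / 2) * n_boot) - 1])


# Simulated data (invented values): messaging use raises misinformation beliefs,
# which lower later tolerance; there is no direct path from x to y.
random.seed(42)
n = 400
x = [float(random.randint(1, 4)) for _ in range(n)]        # messaging app news use, W1
y_lag = [random.gauss(3.6, 1.3) for _ in range(n)]         # tolerance, W1
m = [0.5 * xi + random.gauss(0, 1) for xi in x]            # misinformation beliefs, W2
y = [0.6 * yl - 0.2 * mi + random.gauss(0, 0.5) for yl, mi in zip(y_lag, m)]

estimate = indirect_effect(x, m, y, y_lag)
low, high = bootstrap_ci(x, m, y, y_lag, n_boot=300)  # 300 resamples only to keep the demo quick
print(estimate, (low, high))
```

An interval that excludes zero is read as evidence of an indirect effect even when the direct path is not significant, which is the pattern the simulated data are built to show.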

Results

Figure 1 presents the plotted coefficients of two autoregressive regression models predicting levels of political intolerance in Waves 2 and 3. In the first model, predicting changes in political intolerance between Waves 1 and 2, we used Wave 2 measures of our independent variables. In the second model, where the dependent variable was political intolerance in Wave 3, we used Wave 3 measures of social media and messaging app news use, and Wave 2 measures of belief in misinformation and political disagreement, which were not asked in Wave 3. In both models, we used Wave 1 measures of stable control characteristics (age, gender, education, ideology, political interest).
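As a reading aid, the two specifications can be summarized in equation form. The symbols are illustrative shorthand introduced here, not notation from the models' full output: Tol is political tolerance, Misinfo is belief in electoral misinformation, SM and MA are social media and messaging app news use, Disagree is engagement with disagreement, and Z collects the Wave 1 controls. Coefficients are estimated separately in each model, hence the primes in M2.

```latex
\begin{aligned}
\text{M1:}\quad \mathrm{Tol}_{i,2} &= \beta_0 + \beta_1 \mathrm{Tol}_{i,1} + \beta_2 \mathrm{Misinfo}_{i,2}
  + \beta_3 \mathrm{SM}_{i,2} + \beta_4 \mathrm{MA}_{i,2} + \beta_5 \mathrm{Disagree}_{i,2}
  + \boldsymbol{\gamma}^{\top} \mathbf{Z}_{i,1} + \varepsilon_{i,2} \\
\text{M2:}\quad \mathrm{Tol}_{i,3} &= \beta'_0 + \beta'_1 \mathrm{Tol}_{i,1} + \beta'_2 \mathrm{Misinfo}_{i,2}
  + \beta'_3 \mathrm{SM}_{i,3} + \beta'_4 \mathrm{MA}_{i,3} + \beta'_5 \mathrm{Disagree}_{i,2}
  + \boldsymbol{\gamma}'^{\top} \mathbf{Z}_{i,1} + \varepsilon_{i,3}
\end{aligned}
```

In both equations the Wave 1 tolerance term is the autoregressive control, so the remaining coefficients describe associations with tolerance net of its baseline level.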

Standardized coefficients of autoregressive regressions predicting political tolerance in W2 (M1) and W3 (M2). N = 1133 on M1 and 840 on M2. The table with the full output can be found in the Supplemental Appendix, including a cross-sectional model (M0). Figure depicting regression model plots for Models 1 and 2, measuring changes in political tolerance in Waves 2 and 3.

We find a negative relationship between beliefs in electoral misinformation and political tolerance (β = −.09, p < .001 in model 1 and β = −.10, p < .01 in model 2), providing clear support for H1. In both models, higher levels of belief in electoral misinformation were associated with greater political intolerance, even after controlling for individuals’ pre-existing levels of tolerance (W1). In other words, these results confirm that electoral misinformation is significantly linked to increases in political intolerance.

Testing our second hypothesis, we find that the frequency of social media news use had no significant relationship with political tolerance in Wave 2, but was marginally significant in Wave 3, meaning that people using social media for news became more tolerant over time, compared to their baseline levels of tolerance at the beginning of the election. Messaging app news use was not significantly associated with political tolerance in either model (RQ1).

Before examining the mediation hypotheses, we note that frequency of disagreement on online platforms was associated with decreases in political tolerance during the election period (β = −.07, p < .001), but the effect vanishes when looking at changes in measures of political tolerance between Waves 1 and 3 (β = −.03, p > .05). Although this relationship is not central to our key theoretical concerns, these findings contrast with theoretical expectations from research on the effects of disagreement in interpersonal settings (Mutz, 2006) and from a deliberative democracy standpoint (Gutmann & Thompson, 1996).

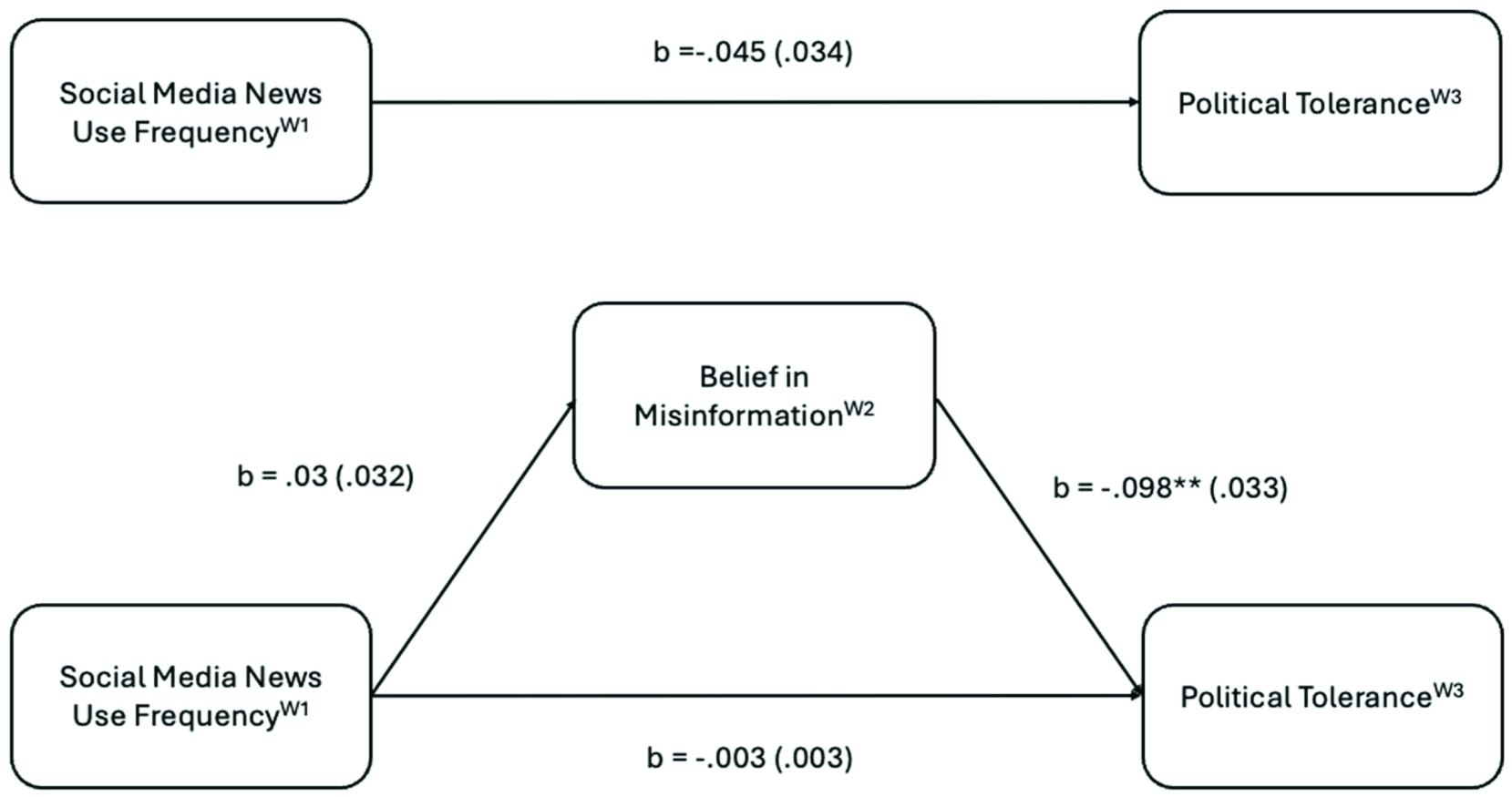

To test our mediation hypotheses (H3a and H3b), we fitted two SEM models to test how (a) social media and (b) messaging app news use frequency in Wave 1 affect belief in electoral misinformation in Wave 2, which in turn affects levels of political tolerance in Wave 3. Our tests were conservative, since we controlled for levels of political tolerance in Wave 1, belief in placebo misinformation, which has been shown to predict belief in misinformation (Rossini et al., 2023), and other relevant controls (see note to Figure 2). Across the mediation models, we evaluate the structural model fit following prior SEM literature (Awang, 2014): we aim for widely adopted thresholds for the models’ comparative fit index (CFI > .90), root mean square error of approximation (RMSEA < .08), and Tucker–Lewis Index (TLI > .90). The first SEM model does not confirm a mediation, leading to a rejection of H3a: higher levels of social media news use did not directly or indirectly influence political tolerance through belief in misinformation (see Figure 2; β = −.045, p > .05 for the direct effect and β = −.003, p > .05 for the indirect effect).

Autoregressive SEM testing results for Social Media News Use Frequency W1 and Misinformation W2 on Political Tolerance W3. Figure demonstrating the mediation pathways between W1 Social Media News Frequency, W2 Beliefs in Misinformation, and W3 Political Tolerance.

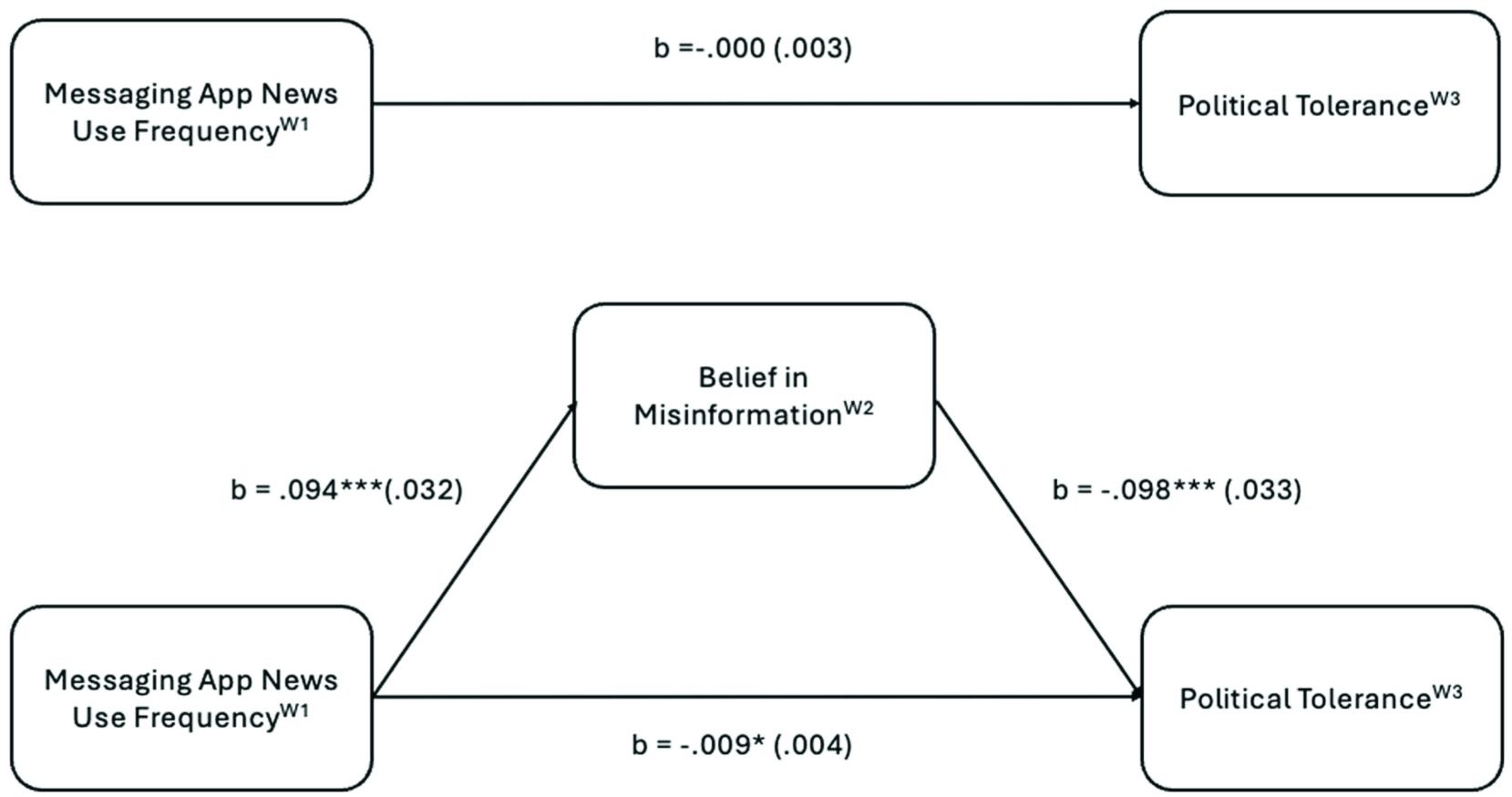

Our second SEM model suggests that higher levels of messaging app use for news led to higher beliefs in electoral misinformation, which in turn led to lower levels of political tolerance (see Figure 3; β = −.009, p < .05), confirming H3b. However, we find no significant direct effect. A direct effect was included as a condition in Baron and Kenny’s requirements for mediation analysis (Baron & Kenny, 1986), but researchers have criticized the necessity of this requirement (Preacher & Hayes, 2004; Shrout & Bolger, 2002). One of the key advantages of modeling mediation patterns is that an association between a dependent and an independent variable could be stronger when a mediator is considered; therefore, “it seems unwise to defer considering mediation until the bivariate association between X and Y is established” (Shrout & Bolger, 2002, p. 429). Furthermore, our model fit metrics reveal good RMSEA and CFI metrics and a TLI that is only slightly below the recommended threshold of .90 (.895), suggesting that the patterns we include capture meaningful variation in the data.
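The fit criteria applied to the SEM models (CFI > .90, RMSEA < .08, TLI > .90) can be expressed as a simple check. In the sketch below, only the TLI of .895 is taken from the text; the CFI and RMSEA values are hypothetical placeholders, since the exact figures are not reported here, and the function name is an assumption.

```python
from typing import Dict

# Thresholds as stated in the text: (direction, cutoff).
THRESHOLDS = {"cfi": (">", 0.90), "tli": (">", 0.90), "rmsea": ("<", 0.08)}


def check_fit(indices: Dict[str, float]) -> Dict[str, bool]:
    """Report, for each fit index, whether it meets its conventional threshold."""
    result = {}
    for name, (direction, cutoff) in THRESHOLDS.items():
        value = indices[name]
        result[name] = value > cutoff if direction == ">" else value < cutoff
    return result


# TLI = .895 is from the text; the CFI and RMSEA values are hypothetical.
fit = check_fit({"cfi": 0.95, "tli": 0.895, "rmsea": 0.05})
print(fit)
```

A check like this makes explicit that a model can satisfy two of the three criteria while narrowly missing the third.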

Autoregressive SEM testing results for Messaging App News Use Frequency W1 and Misinformation W2 on Political Tolerance W3. Figure demonstrating the mediation pathways between W1 Messaging App News Frequency, W2 Beliefs in Misinformation, and W3 Political Tolerance.

Discussion

Scholarly and societal concerns with polarization and episodes of disinformation-fueled violence in recent electoral cycles often point to social media as the culprit behind the rise of anti-democratic behaviors among the electorate, including offline violence (Bailard et al., 2024). Our study makes a distinctive contribution to this literature by investigating the relationship between electoral misinformation and political intolerance, and examining the pathways through which social media and messaging applications may indirectly fuel democratically problematic political attitudes. Our findings point to a persistent relationship between holding misinformed beliefs about election fraud and intolerance, opening new avenues for future research addressing how misinformation can damage democracies’ social fabric beyond affective polarization (Jenke, 2024). While prior research highlights partisans’ vulnerability to believing in political misinformation (Garrett et al., 2016; Rossini et al., 2023), which can in turn depress institutional trust (Boulianne & Humprecht, 2023; Ognyanova et al., 2020), our findings point to a more problematic dynamic, with electoral misinformation influencing political intolerance over the course of a contested electoral period marked by several episodes of political violence.

While more research is needed to establish the mechanisms underlying this relationship, our research is the first to establish a correlational pathway between misinformation and intolerance, providing empirical evidence that rampant electoral misinformation can have a detrimental effect on crucial political attitudes. Considering the violent aftermath of the 2022 elections in Brazil, it is possible that electoral misinformation—which demonizes the opposition, attacks institutions, and undermines electoral trust (Berlinski et al., 2023)—offers a cognitive shortcut for voters who are inclined to engage in antidemocratic behaviors. This is particularly worrisome when political elites weaponize disinformation against opponents and allude to violence as a solution to political problems (Ozawa, Woolley, et al., 2023).

While our findings are correlational, prior work on the relationship between conspiratorial thinking and intolerance suggests a potential mechanism driving these effects: increased threat perception. Falsehoods about electoral integrity may fuel a sentiment that the system is “rigged,” increasing perceptions that the opposition and the political system itself pose a substantial threat, fostering intolerant sentiment against groups one fears may be taking advantage of unfair elections (Berlinski et al., 2023). Given the prevalence of electoral misinformation in contemporary elections and the weaponization of disinformation by political elites via digital media, further research is urgently needed to unveil the causal mechanisms driving these effects.

Another important contribution of our study lies in shedding light on the role of informational uses of social media and messaging apps. In contrast to cross-sectional evidence that social media use may increase political tolerance (Asimovic et al., 2021; Masood et al., 2024; Samet et al., 2024), we find only a weak association between using social media for news and increases in political tolerance in Wave 3. The difference between our findings and studies focused on authoritarian and mixed regimes may lie in our focus on informational uses. As a meta-analysis suggests, informational uses of social media yield more positive outcomes in authoritarian and mixed regimes than in democracies, where other uses (e.g., networking) may be more consequential (Boulianne, 2019).

Our study reveals a hitherto unexplored indirect pathway whereby exposure to news on messaging apps leads to increased beliefs in electoral misinformation, fostering intolerance. These findings point to a troubling dynamic, where informational uses of messaging apps increase people’s vulnerability to falsehoods because credibility in these contexts is contingent on personal relationships rather than news sources (Masip et al., 2021). This matters because of the rising centrality of these applications: in Brazil, they are more popular than social media for news (Newman et al., 2025), and have been systematically weaponized by political elites adopting nondemocratic tactics (Ozawa, Woolley, et al., 2023). Due to inherent platform affordances, information circulating on WhatsApp is often disconnected from news sources, shared without further context, and in the form of images or short videos, which makes it cumbersome for users to verify information they receive from peers (Masip et al., 2021). While these trends may be particularly problematic in Brazil, given the reliance on these apps, the move toward messaging applications as information sources is a global trend, requiring further examination of their potential consequences.

Finally, we found that engagement with disagreement was negatively associated with tolerance during the election cycle, that is, between Waves 1 and 2. It might be that, contrary to earlier studies of interpersonal communication (Mutz, 2006), online disagreement is more ambivalent in divisive contexts, potentially reinforcing people’s perceptions of political polarization (Padró-Solanet & Balcells, 2022). This hypothesis, if confirmed, poses significant challenges for efforts to counter intolerance, as interaction with counter-attitudinal information in some contexts may backfire. However, this relationship was no longer significant in the Wave 3 model, suggesting these negative effects may wear off once a polarized election is over.

Our study has limitations. First, our longitudinal design covers a relatively short period, which may be insufficient to fully capture how deep-rooted attitudes like intolerance evolve beyond contested election periods, or how they may be related to types of misinformation other than electoral fraud. Second, self-reported measures of news use must be carefully interpreted, as they may not be fully congruent with behavior. Finally, our findings reflect the context of one country and one election cycle and may not replicate in other contexts. While our findings need to be carefully interpreted when studying other countries, our study demonstrates that electoral misinformation poses real threats to democratic stability, providing a helpful framework to interpret democratic decay and backsliding in contexts of heightened political division (Berlinski et al., 2023; Edelson et al., 2017; Norris et al., 2020).

Conclusion

Our study contributes to understanding the role of electoral misinformation in undermining democratic attitudes, showing that beliefs in electoral misinformation can critically influence political intolerance over time, eroding a fundamental cornerstone of democratic societies. Importantly, our findings suggest that the detrimental effects of electoral misinformation may last beyond the campaign period and reveal how specific pathways to news, such as messaging apps, can indirectly influence intolerance by reinforcing misinformation beliefs.

The risks of electoral misinformation go beyond leaving citizens confused or uninformed: it has the potential to increase political intolerance and undermine democratic harmony, including after elections. The combination of attention-driven algorithms on digital media, low trust in institutions, and political animosity creates a fertile environment for electoral falsehoods to thrive, with clear detrimental consequences for political tolerance. Hence, interventions to address misinformation need to go beyond factually debunking inaccurate information to consider the potentially nefarious effects of misinformation on intolerance and other key democratic attitudes not covered in this study. By the same token, misinformation research should broaden its focus beyond explaining and countering the prevalence of false information to assessing its indirect effects on key political attitudes and behaviors.

Addressing these issues is urgent because, in the context of highly divisive elections, the presence of misinformed but highly interested and mobilized citizens may lead to offline political disruption and violence (Bailard et al., 2024), particularly when illiberal elites weaponize social media to heighten threat perceptions toward political opponents, framing them as existential enemies against whom no rules apply. In Brazil, for example, preexisting cleavages such as antipetismo (Davis & Straubhaar, 2020) created fertile ground for political intolerance, and misinformation served to reinforce and intensify these sentiments. In this sense, misinformation can be understood not as the origin but as an amplifier, helping to cultivate the seeds of hate already present in the political landscape. More broadly, these dynamics underscore the need for electoral legislation and regulation to take seriously how political elites’ efforts to undermine electoral integrity can destabilize democratic systems and help fuel the wave of political violence witnessed in recent elections worldwide.

Supplemental Material

Supplemental material, sj-docx-1-sms-10.1177_20563051261419393 for Amplifying Division: Electoral Misinformation and Political Intolerance in Brazil by Patrícia Rossini, Antonis Kalogeropoulos and Camila Mont’Alverne in Social Media + Society

Footnotes

Ethical considerations

This study was approved by the Ethics Committee in the College of Social Sciences at the University of Glasgow [400220013/2022].

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Fieldwork for this project was supported by the British Academy under SG2122\21120, and by a Google unrestricted gift (Google Gifts 2022).

Data availability statement

Data for replication can be made available by the authors upon request.

Supplemental material

Supplemental material for this article is available online.
