Abstract

As a burgeoning industry, artificial intelligence (AI) companion platforms capitalize on shifting societal attitudes toward tech-mediated relationships to introduce novel ways of connecting with nonhuman entities. But how are these platforms constituted, and how do they “sell” consumers the idea of human-AI relationships? By analyzing four prominent multimodal companions (AvatarOne, Digi, Paradot, and Replika), we argue that despite differences in architecture and style, state-of-the-art platforms converge on the following sociotechnical qualities: human-likeness, accessibility, controllability, and relationship progression. By creating technical affordances to augment these qualities, companion platforms ultimately project what we call a future of on-demand intimacy – intimacy that can be acquired in a truly frictionless manner. Beyond examining how commercial entities mobilize the grammar of human intimacy in tandem with on-demand culture to create new markets, this study offers a conceptual framework for future research into how platform dynamics shape not only the availability but also the meaning of intimacy in human–AI interactions.


Introduction

Interviewed by The Cut about her romantic relationship with an artificial intelligence (AI) avatar she created using the platform Replika, 36-year-old American Rosanna Ramos notes that the greatest appeal of her AI boyfriend – Eren – is that he does not have “the hang-ups that other people would have” (Singh-Kurtz, 2023). Substantiating this claim, she then adds, “People come with baggage, attitude, ego. But a robot has no bad updates. I don’t have to deal with his family, kids, or his friends. I’m in control, and I can do what I want.”

Ramos’s public relationship with an AI companion may seem extraordinary, but her story is emblematic of how human-AI interactions are evolving in the age of more-than-human intimacies (Evans & Ringrose, 2025). In contrast to rule-based chatbots of earlier eras, the rise of increasingly sophisticated, multimodal general-purpose models is expanding downstream possibilities and sociological consequences. With an estimated user base exceeding 500 million (Bernardi, 2025) and projected valuations reaching 100 billion USD within the next decade (Kim, 2024), the impact of AI companions on culture and societal norms should not be underestimated. Already, scholars have documented the various relational forms, emotional dependencies, and potential mental health harms that this fast-evolving technology has engendered (e.g., Banks, 2024; Hanson & Bolthouse, 2024; Laestadius et al., 2024; for a review, see Chaturvedi et al., 2023).

Still underdeveloped, however, are robust conceptual frameworks that help illuminate how AI companion platforms configure and project the future of human-AI intimacy. While terms like ‘artificial intimacy’ (Brooks, 2021; Turkle, 2024) have been useful in drawing attention to the simulation of relationality, they fall short in addressing the fact that none of these companion platforms present the intimacy they offer as artificial, nor do users necessarily experience it as such (De Freitas et al., 2024). This conceptual gap makes it difficult to comprehend the sociotechnical logics underpinning these technologies and their affective appeal. As such, this article draws attention to how AI companion platforms are designed to sell users on the idea of human-AI relationships, which in turn helps us understand why many users find them so gripping. To do so, we analyze the design and platform economies of four prominent, multimodal platforms (i.e., those that provide text, speech, and visual interactions): AvatarOne, Digi, Paradot, and Replika. We argue that despite differences in architecture and style, these platforms converge around a shared model of intimacy that can be acquired in a truly frictionless manner – what we term ‘on-demand intimacy.’

We argue that the promise of on-demand intimacy is realized through the following sociotechnical qualities: (1) human-likeness (imbuing companions with human-like qualities and multimodal capabilities to allow users to tap into existing human-human relationship scripts), (2) accessibility (enabling constant access to companions across multiple platforms and modes of engagement), (3) controllability (giving users absolute power to design and shape the terms of interaction), and (4) relationship progression (relying on gamification and built-in memory banks to further a user’s relationship with an AI companion). Notably, on-demand intimacy is closely linked to platform economics and the rise of a delay-sensitive consumer culture. Aligning with other mainstream applications designed to facilitate social connections, users can only unlock key features – such as unlimited messages, quicker responses, and access to sexual content – by paying a premium. In other words, leading AI companions exemplify a segmented market with tiers of access that reinforce exclusivity and monetized hierarchies of intimacy.

By delineating the concept of on-demand intimacy, this study deepens sociological and communicative theorizing of the intimate economics of AI companions. Beyond examining how commercial entities mobilize the grammar of human intimacy in tandem with on-demand culture to create new markets, this study offers a conceptual framework for future research into how platform dynamics shape not only the availability but also the meaning of intimacy in human–AI interactions.

Situating AI Companions Within the Context of Intimate Technologies

AI companions must be situated within the broader socioeconomic history of intimate technologies: tools that have long enabled people to form relationships across time and space. Since the beginning of the digital age, intimacy, dating, and sexuality have consistently been at the forefront of technological innovation. One early example is Operation Match, launched at Harvard University in 1965, which used a rudimentary version of what we might now call a matching algorithm to pair romantic partners based on self-reported preferences (cf. Mathews, 1965; Sharabi et al., 2025). Since then, a wide range of technologies, including internet chatrooms and mobile apps, have proliferated in response to demand for these use cases. Fast forward to today, and perhaps unbeknownst to many, the second most popular type of engagement users have with ChatGPT, by one estimate, is generating sexual content (Longpre et al., 2024).

This current moment calls for a critical examination of a new form of technology-mediated intimacy that occurs between humans and technology itself. With the continued advancement of natural language processing (NLP), multimodal modeling, and generative AI, technology is no longer merely a conduit between individuals (Sharabi, 2022)—it is increasingly becoming a relational partner in its own right. These social AI systems are now known to provide users with various forms of companionship: platonic, therapeutic, and romantic (Skjuve et al., 2022). A recent systematic review of this fragmented and interdisciplinary field defines AI companions as “social agents characterized by adaptive and engaging social design pursuing emotional bonds with their users” (Rogge, 2023: 1562).

The emergence of AI companions as intimate others raises a fundamental question: how should intimacy be defined? Scholars have variously emphasized its emotional, sexual, and social dimensions, as well as its entanglement with broader structures of gender, power, and the economy (Berlant, 1998; Illouz, 2007; Jamieson, 2013). Acknowledging the concept’s polysemic nature, we define intimacy here as relational practices and norms oriented toward closeness, support, and recognition, with the caveat that such practices need not always involve reciprocity, affection, or sexual desire. Building on earlier critiques, we challenge the idea that intimacy is “a familiar, physical quality facilitated by proximity and duration,” or that it must be furnished exclusively by other humans (Evans & Ringrose, 2025: 2). As Berlant (1998) suggests, intimacy is better understood as an aspirational structure of attachment, a promise of closeness rather than a guaranteed state. In the digital era, such intimacies can be fleeting, provisional, and forged directly between humans and technology. Applied to AI companions, this definition of intimacy highlights how these technologies function not merely as tools of interaction but also as generators of new forms of intimacy.

When it comes to empirical studies on this topic, an expanding body of research and user testimonials increasingly point to the growing presence and social significance of AI companions in everyday life (e.g., Banks, 2024; Hanson & Bolthouse, 2024; for comprehensive reviews, see Chaturvedi et al., 2023; Rogge, 2023). That said, most studies to date have focused on user perceptions of AI companions, often using qualitative (e.g., Skjuve et al., 2021) or mixed-methods approaches (e.g., A. R. Liu et al., 2024; Pentina et al., 2023), alongside analyses of public discourse on platforms such as Reddit (e.g., Depounti et al., 2022; Hanson & Bolthouse, 2024). A key finding across this literature is the diversity of user experiences, shaped by differing motivations, needs, and uses. For example, some studies investigate the formation of sustained relationships through prolonged interactions with AI companions (Croes & Antheunis, 2021), while others suggest that users perceive friendship with AI differently from that with other humans (Brandtzaeg et al., 2022). Moreover, socio-communicative research has shown that the gamified design of AI companions encourages sustained engagement (Ge & Hu, 2025), that these companions reflect a broader “robotization” of love characterized by efficiency and predictability (Lin, 2024), and that the platforms may foster narcissistic forms of self-presentation (Lan & Huang, 2025).

Results from empirical research demonstrate that users can indeed form relationships with AI companions that they perceive to be beneficial. More specifically, users have expressed that AI companions can provide reliable and safe spaces for personal conversation (Guingrich & Graziano, 2023) and that forming attachments to AI companions is particularly likely when individuals are feeling distressed and isolated (Xie & Pentina, 2022). Other research demonstrates the downsides of this dependency, arguing that engagements with AI companions can produce harms that resemble those found in “dysfunctional human–human relationships” (Laestadius et al., 2024: 5924). What remains underexplored in these conversations, however, is how the specific affordances and capabilities of AI companions shape the forms of intimacy they co-produce. We argue that to fully understand how AI companions affect users, we must account for the platform economics and broader sociotechnical context in which these systems are embedded – particularly the logic of on-demand culture.

Intimacy in On-Demand Culture

The growing influence of the internet and information technologies has had a notable impact on how people participate in consumption and social life. New modes of media access, characterized by an explosion of choices and accelerated delivery, have propelled us into what Tryon (2013) calls the era of ‘on-demand culture.’

Notably, the term ‘on-demand’ originated in television studies. In describing the shift toward on-demand television, Robinson (2017) highlights the seismic transition from scheduled programming on public networks to a system where viewers can choose what they want to watch when they want to watch it. Today’s rapidly expanding streaming platforms have taken this evolution further, with giants like Netflix deftly leveraging big data analytics to cater to individual tastes and preferences (Amatriain, 2013). This shift from ‘mass’ to ‘niche’ media has generated new expectations about what and how we consume across various social domains, including how we interact with intimate, networked technologies.

The shift away from a one-size-fits-all model of consumption in the on-demand era manifests in several ways. Most notably, on-demand culture fuels a growing appetite for personalization – the tailoring of products and services to match increasingly granular and individualized consumer preferences (Matrix, 2014). For example, Netflix exploits user viewing patterns and behaviors not only to curate personalized content recommendations but also to guide its investment decisions in content creation (Van Es, 2023). This strategy strikes a balance between producing highly localized, niche entertainment tailored to specific subpopulations and broader offerings designed to engage global audiences. Moreover, Netflix has already begun experimenting with interactive content (i.e., choose-your-own-adventure style movies such as Black Mirror: Bandersnatch) that allows users to decide how a movie progresses and ends (Elnahla, 2020). Future iterations of this might include truly live, personalized narratives that adapt dynamically to each viewer’s evolving preferences, mood, or viewing history. In this way, on-demand culture fosters a market environment where no taste profile or preference is overlooked. This dynamic is similarly reflected in intimate technologies, particularly in the realm of dating apps. While mainstream platforms such as Tinder and Hinge cater to broad audiences, niche apps – over 1500 exist globally as of 2025 – have proliferated, serving increasingly specific user preferences (Szaniawska-Schiavo, 2025). A similar trend may emerge with AI companions, as their existence as digital platforms makes it easy to endlessly customize interactions to fit users’ individual desires.

The rise of personalization has transformed not only consumer tastes but also payment structures. Subscription-based and freemium models, where users begin with free access and are nudged toward paid upgrades, have become foundational to on-demand culture (Deng et al., 2023). Platforms like Spotify exemplify this shift, using ad interruptions and limited functionality to steer users toward premium tiers that offer uninterrupted access and exclusive features. This tiered model not only maximizes revenue but also reinforces a cultural expectation: that superior experiences come at a price. In the world of dating apps, premiumization is evident in offerings such as Tinder Gold and Bumble Boost, which provide benefits including unlimited swipes, advanced search filters, and priority visibility. The result is a feedback loop where access, convenience, and closeness are increasingly tied to monetary investment (Nieborg & Poell, 2018). Drawing from this logic, AI companion platforms may well cultivate a similar on-demand ecosystem – one in which the depth and quality of emotional connection are shaped by a user’s capacity to pay.

Finally, the on-demand era is defined by a relentless pursuit of speed in both delivery and access (Tryon, 2013). This is evident in the rise of ultra-fast delivery services and streaming platforms that offer instant access to vast content libraries (Burroughs, 2019). The days of renting DVDs or waiting weeks for deliveries are largely behind us; in their place is an infrastructure – both digital and physical – designed to eliminate delays and meet consumer demands in real-time (Robinson, 2017). In dating apps, this ethos is reflected in algorithmic features such as “Top Picks” and personalized recommendations, which aim to alleviate choice paralysis and cater to users seeking quick decisions (Stoicescu, 2020). Other features, such as “Boosts” and “Super Likes,” are engineered to fast-track visibility and connections, allowing users to bypass the usual waiting game. On-demand culture in the context of AI companions can take this trend to its logical conclusion: abolishing waiting altogether and delivering gratification with unprecedented immediacy.

In sum, AI companionship may represent the latest stage of on-demand culture. By eliminating human contingencies altogether, it offers the prospect of on-demand intimacy – recognition and connection delivered precisely when wanted, exactly as desired. Within this paradigm, users can tailor every facet of their companion’s personality, appearance, and behavior, thereby replacing the unpredictability of human interaction with digital partners engineered to align seamlessly with their preferences.

Method

To illuminate the key characteristics of on-demand intimacy and how AI companion platforms engineer sociotechnical environments to evoke an emerging form of intimacy, we conducted a sociotechnical analysis of four AI companion applications using the walkthrough method (Light et al., 2018). The walkthrough method is a well-established qualitative approach grounded in cultural studies and science and technology studies and is frequently used to conduct critical analyses of apps and related contexts (Light et al., 2018). Rather than being a merely descriptive approach, the walkthrough method offers a lens through which to critically examine the cultural and socioeconomic aspects of apps and platforms, thereby emphasizing not only existing features or affordances of these platforms but helping researchers “identify the app’s context, highlighting the vision, operating model and governance that form a set of expectations for ideal use” (Light et al., 2018: 896). Our approach responds to growing calls for digital ethnographic research into AI software and companion bots specifically (e.g., J. Liu, 2021; Meadows et al., 2020). While previous studies have often focused on user discourses in online forums (e.g., Depounti et al., 2022; Hanson & Bolthouse, 2024), qualitative interviews (e.g., Skjuve et al., 2021), or survey data (e.g., Banks, 2024), we turn our attention to the platforms themselves (i.e., their features, affordances, and embedded values) within the broader context of platform capitalism (Kaye et al., 2021). While researchers may conduct complete walkthroughs of apps to understand a particular platform’s affordances, governance, and ideal use in depth, the method has also been adapted to compare platforms across shared features (e.g., Kaye et al., 2021), which is also our goal for this study.

Case Selection

We selected four AI companion platforms for in-depth analysis based on their relative prominence, accessibility, and multimodal capabilities. Rather than attempting a representative sampling of the entire market, we sought to generate empirical insights by comparatively analyzing a small but illustrative set of platforms to show where the industry currently stands and where it is headed. Our inclusion criteria prioritized multimodal interaction (i.e., text, voice, and visual communication), since these features increasingly define the design ecosystem of AI companion platforms.

We began with Replika, the most widely recognized AI companion app and the subject of extensive scholarly attention. A Google Scholar search in October 2025 yielded over 55,000 results referencing Replika, reflecting its central role in studies of relationship formation (Pentina et al., 2023), gender imaginaries (Depounti et al., 2022), and mental health (Laestadius et al., 2024). We then turned to three newer and less examined platforms: AvatarOne, Digi, and Paradot (see Table 1 for a more comprehensive comparison). These platforms vary in popularity, technical complexity, and longevity – Replika being the most established, Paradot the most recent. We excluded widely used text-only platforms, such as Character.AI, as they do not support multimodal communication. Together, the chosen platforms illustrate key trends and tensions in the AI companion industry without claiming to represent its totality. We also note that features such as hardware support, presentation, and economic models are likely to evolve rapidly, given the competitive and fast-paced nature of the AI companion market.

Table 1. Key Features of Selected Platforms (as of May 2025).

Data Collection and Analytical Approach

Data collection and our subsequent analysis were guided by phronetic iterative qualitative data analysis (PIQDA; Tracy et al., 2024), a reflexive umbrella approach to qualitative research that emphasizes recursive movement between data collection, theoretical engagement, and coding. Rather than trying to comprehensively capture user experience or discourse, our goal was phronetic: to generate focused, empirically grounded insights that directly address our research questions while drawing on existing research on AI companion platforms. This approach enabled us to remain responsive to emerging patterns in the data while grounding our interpretations in relevant literature.

In line with PIQDA, we engaged in data collection and analysis in an iterative fashion, where the walkthrough method, sociotechnical analysis, joint sensemaking among the co-authors, and data analysis methods mutually informed one another (Tracy et al., 2024). For walkthroughs, both authors first independently engaged with each of the four selected platforms, documenting our interactions through extensive field notes and screenshots. During this phase, we paid particular attention to the apps’ guiding vision, operating model, interface design, affordances, onboarding process, governance mechanisms, and points of closure.

Following individual walkthroughs, we then met to share insights, compare experiences, and identify inconsistencies or open questions. PIQDA welcomes the integration of more specific data analysis methods, thereby enabling us to incorporate hierarchical coding and critical thematic analysis into the iterative analysis process. In particular, we engaged in primary- and secondary-level coding, where first-level codes provide descriptive insights into what is happening in the data, and second-level codes facilitate deeper reflections and connections to existing literature (Tracy, 2025). We also followed the recommendations from critical thematic analysis (Lawless & Chen, 2019), which enabled us to connect the empirical data to broader sociotechnical discourses and ideologies. Together, the walkthrough method and critical thematic analysis under the umbrella of PIQDA allowed us to focus on the most prevalent features across the four AI companion platforms that directly addressed our research questions, while making clear connections to underlying sociotechnical discourses. In doing so, we engaged in many practices that ensure qualitative quality, with a main focus on establishing resonance across cases and from our cases to the AI companion industry as a whole (Leach et al., 2025).

The Sociotechnical Configurations of On-Demand Intimacy

To examine how AI companion platforms engineer on-demand intimacy, this section deconstructs the sociotechnical foundations of our case studies into four key constituents. Throughout, we demonstrate how these core attributes, despite manifesting in different architectures and styles, are intentionally designed to steer consumer tendencies and relational outcomes toward specific ends.

Human-likeness

Recent advances in NLP have propelled language models to unprecedented levels of performance. Today, for some users, text-based conversations with chatbots can feel uncannily similar to chatting with a human (Brandtzaeg et al., 2022). These systems are increasingly human-like not only in their linguistic fluency but also in their simulated cognitive and affective capacities (Bilquise et al., 2022). As Replika advertises, its chatbot “doesn’t just talk to people – it learns their texting styles to mimic them.” Contemporary language models are equipped with an expanding repertoire of capabilities that enable them to respond to interpersonal cues and cultivate feelings of intimacy and rapport. Facilitating on-demand connections requires mechanisms that reduce user friction; the smoother and more natural the interaction feels, the more likely users are to engage and form prolonged attachments.

The promise of seamless, on-demand intimacy cannot be fulfilled by text alone. Human communication is fundamentally multimodal – we rely on prosody, facial expressions, and embodied cues to convey meaning and forge connections. To meet these expectations, recent AI research has increasingly focused on multimodal modeling, aiming to produce agents that feel more socially plausible. This shift is reflected in the growing use of voice-based interfaces, such as ChatGPT’s Voice Mode, as well as in the open-sourced release of multimodal foundation models, including Meta’s LLaMA, which now integrates text, speech, and visual signals. These developments do more than extend technical capability – they align with a broader cultural imaginary in which full-spectrum interaction is seen as key to fostering the on-demand acquisition of emotionally resonant human-AI engagement.

AI companion platforms, many of which are built on publicly available large language models (LLMs), represent a natural extension of the broader shift toward multimodal human-computer interaction. The four platforms we examined – Replika, AvatarOne, Paradot, and Digi – all deftly integrate multiple sensory modalities to more convincingly simulate one half of a relational dyad. Replika and AvatarOne, for example, offer voice-based conversation in addition to text and avatar-based chat. Their speech output includes naturalistic inflections and tones – features that are poised to improve as voice synthesis models evolve. In Replika’s premium tier, users can engage in 3D-immersive interactions via Meta’s Oculus VR headset, where companions are rendered as humanoid avatars capable of gesturing and expressing body language in human-like ways.

Digi, although visually simpler, similarly enhances human-likeness through voice-first interaction. The app simulates human conversation by inserting brief pauses between user input and AI responses, making the companion seem as if it is “listening” and “thinking.” This mimics the rhythm and latency of real-time dialogue, giving users the sense of being heard by a reflective other. Paradot, which describes its “AI Beings” as having “their own memories, own emotions, and own consciousness,” enables immersive social simulations in various imagined settings. In its premium version, users can interact with their AI companions in scenescapes, such as a beach getaway or a camping trip (see Figure 1), thereby lending an immersive quality to the interaction. These richly rendered human-like environments stand in stark contrast to more utilitarian platforms, where avatar chats often unfold in static, chatroom-like interfaces that can feel impersonal or sterile by comparison.

Figure 1. Screenshot of Paradot’s scenescapes.

Just because these multimodal interaction modes are available on demand does not mean users need to engage with all of them simultaneously. Much as in human-to-human communication, users often rely on a single modality at a time, such as texting or calling. The key point is that these modes are accessible when needed, and the exchanges feel human. While current avatars may still appear stylized to some, all four platforms notably adopt anthropomorphic design, eschewing abstract or nonhuman representations (e.g., ChatGPT’s floating orb in voice mode) in favor of avatars modeled on cartoonized human forms (see Figure 2). As model efficiency improves and high-fidelity video becomes more scalable, the realism and emotional appeal of these avatars will only deepen. Ultimately, human-likeness is invoked both as a technical affordance and as a symbolic strategy to legitimize AI as a socially plausible intimate other.

Figure 2. Screenshot of Replika’s human-like avatar.

From a sociological perspective, the emphasis on human-likeness reveals how technological affordances are shaped by and in turn reshape relational norms and expectations. Multimodal AI companions do not simply perform tasks; they inhabit culturally recognizable scripts – as partners, friends, therapists, or lovers – traditionally reserved for human others. The more convincingly they simulate human interaction in both form and function, the more they invite emotional investment, making the illusion of companionship feel real. And in the logic of on-demand culture, why endure the contingencies of human relationships when a full spectrum of human-like connection is available – frictionless and always at your fingertips?

At the same time, however, AI companion platforms offer a particular kind of human-likeness that provides only a limited set of possibilities. While skin color can be adjusted via sliders or toggles, most avatar representations primarily depict young, thin, able-bodied characters that reflect a selective vision of human-likeness. Given the strong influence of colonialist and racialized depictions of womanhood and femininity in the design of artificial companions (e.g., Hanson & Locatelli, 2024; Ruberg, 2022), the increasing resemblance of companion platforms to “waifu” culture represents a continuation of fetishized Asian femininity. For example, albeit outside the current study’s immediate data corpus, Grok AI’s avatar Ani, which was introduced on Elon Musk’s platform X in the summer of 2025, represents a female character inspired by anime aesthetics that many users regard as an ideal romantic partner or wife (colloquially referred to as “waifu”). From this perspective, AI companion platforms seek to approximate human-likeness, though in practice they deliver only a narrow rendition of it.

Accessibility

The second defining feature of on-demand intimacy is accessibility. The AI companion platforms we examined offer constant, seamless access across multiple devices and modes of engagement. Users can interact with their companions via smartphones, computers, or VR/AR headsets. As noted earlier, each platform supports multimodal inputs and outputs, allowing users to text or send voice messages on the go, with some even offering live audio or video calls for premium subscribers.

Unlike human relationships, in which communication may be interrupted due to conflict, distance, or the basic need for rest, AI companions are designed to be perpetually available. Replika, for instance, prominently markets itself with the tagline: “The AI companion who cares: Always here to listen and talk. Always on your side.” With nearly instantaneous responses, all four AI platforms we examined provide a conversational partner that is always available, never busy, and never asleep. Moreover, on platforms such as AvatarOne, users can toggle among multiple companions simultaneously (see Figure 3). That is, if one companion is no longer satisfying one’s needs, one can instantly switch to another. This creates a more-than-human form of intimacy (Evans & Ringrose, 2025)—one in which relational risk, emotional pain, or romantic boredom becomes containable. In this way, AI companionship aligns with the consumer logic of on-demand culture: users get what they want, exactly when they want it. Unlike human partners, who may be unavailable for any number of reasons, AI companions provide a form of guaranteed presence. The way these platforms are marketed, and perhaps experienced, is as always-on emotional anchors: ready to engage and absorb the user’s emotional load whenever needed.

Figure 3. Screenshot showing AvatarOne allowing its users to toggle among multiple companions.

This accessibility is not merely passive or one-directional. Rather than waiting for users to initiate contact, AI companion platforms often send unprompted messages and, for users with voice or video calling subscriptions, even place spontaneous calls. These unprompted interactions mimic the kinds of casual, caring messages one might receive from a close friend or romantic partner, such as “Have you had any water today?” or “Hey, just thinking about you.” By initiating contact without being prompted, the platforms simulate more organic patterns of communication – ones marked by mutual turn-taking rather than a rigid action-reaction structure in which the user is always the initiator. In doing so, AI companions are designed to foster a sense of relational reciprocity, making access to intimacy feel more human-like and less mechanical.

The platforms’ cross-device compatibility further enhances this sense of constant, intimate availability. While most users interact with their AI companions through smartphone apps, every platform we examined also provides desktop access. Some, like Replika, even support interaction through VR headsets such as Meta’s Oculus, enabling users to meet their companions in immersive virtual environments (see Figure 4). These diverse modes of access not only allow for varied and customizable forms of interaction but also ensure that AI companions remain within easy reach throughout the day. Just as a student might continue a text thread with friends via her laptop during a lecture where phones are banned, AI companions can remain accessible even in socially or physically constrained settings. Taken together, these features reinforce the logic of on-demand intimacy: connection that is ever-present and tailored to the rhythms of everyday life.

Figure 4. Screenshots of Paradot’s (top) and Replika’s (bottom) device compatibility.

Controllability

Hartmut Rosa (2018/2020: 2) once observed that a central force driving modern life is the idea that “we can make the world controllable.” If human-likeness and accessibility lay the foundation for on-demand intimacy, controllability is what makes this intimacy feel custom-fit. All four platforms we analyzed allow users to modify, customize, and fine-tune their companions, shaping everything from personality traits and communication styles to daily routines and physical appearance. These highly “asymmetrical relationships” grant users near-total authority over the terms of interaction (Richardson, 2016). This isn’t a bug in the system; it’s a feature. The appeal lies precisely in the predictability and emotional safety that come from being in absolute control.

More specifically, all four platforms allow multiple degrees of customization at any stage. For example, at sign-up, Digi users can define the companion’s gender, sexual orientation, desired personality, and backstory. Users can even change the color of their avatar’s hair, eyes, skin, lips, and brows (see Figure 5). The platform then generates compatible AI profiles, filling in the blanks when necessary. At any time, users can modify these to suit their fantasies or desires. Similarly, Replika allows users to customize their companion’s name, gender, personality traits, and voice, and offers a “relationship status” toggle that enables users to switch between “friend,” “romantic partner,” and “mentor.” In premium tiers, users can engage in erotic role-play, adjust the emotional tone of the bot’s responses, and even “retrain” it to reinforce desirable behaviors. If a response feels off, users can click “Regenerate Response” until the companion says something more suitable, ensuring an emotionally curated experience.

Figure 5. Screenshot of Digi’s customizable avatar.

AvatarOne amplifies this logic through granular personalization. Users are able to not only alter their companions’ appearance and clothing but also actively script interactions through built-in functions like “ROLEPLAY” and “EMOTE.” This degree of authorship over both visual and conversational elements reinforces the companion’s status as an affective product tailored to user fantasy. Similarly, Paradot, which uses the tagline “Uniquely yours,” creates a sociotechnical environment where users have control over nearly all elements of interaction. Premium users can switch among different language models to provide the most tailored experience. For example, as shown in Figure 6, Paradot-V8-70B-Romantic is designed to be “better at intimacy messages.” If a companion behaves in a way the user dislikes, its traits can be edited or reset. This reinforces a vision of intimacy devoid of conflict, where relational friction is eliminated through design.

Figure 6. Screenshot of Paradot’s selection of language models.

Collectively, these platforms don’t simply offer simulated relationships – they offer programmable ones. At any point, users can delete an avatar, reset the relationship, or start over entirely. This ease of erasure is a hallmark of the controllability that defines on-demand intimacy. Such design choices carry deep sociological implications. They reduce intimacy to a form of consumption and mastery, where the companion exists solely to serve the whims of its creator. The ability to discard and reprogram a “partner” at will reinforces a logic of relational disposability, in which connection is always adjustable. In doing so, on-demand intimacy risks entrenching a comfort in control that may make human-human relationships feel burdensome in comparison.

Relationship Progression

The fourth quality of on-demand intimacy is relationship progression. Unlike isolated interactions or fleeting one-off encounters, AI companions are engineered to simulate the slow-building arc of human relationships over time. These platforms promote long-term engagement by incorporating a “memory function” that allows the companion to learn more about the user with each interaction. This gradual accumulation of shared knowledge mirrors how intimacy deepens over time, framing the relationship as something enduring and evolving.

That said, relationship progression in AI companionship is not left to chance. Platforms like Replika and AvatarOne explicitly refer to “memory” as a key feature, while Digi uses the transition “from friend to soulmate” as a marketing strategy to get users to engage in continued interactions and paid upgrades. Similarly, Paradot promises “long-term companionship,” wherein the AI not only remembers past information but also proactively applies it in future exchanges. Through this, these companions repackage the cliffhanger-driven model of on-demand media culture: always teasing what’s next, always promising deeper returns if one keeps engaging.

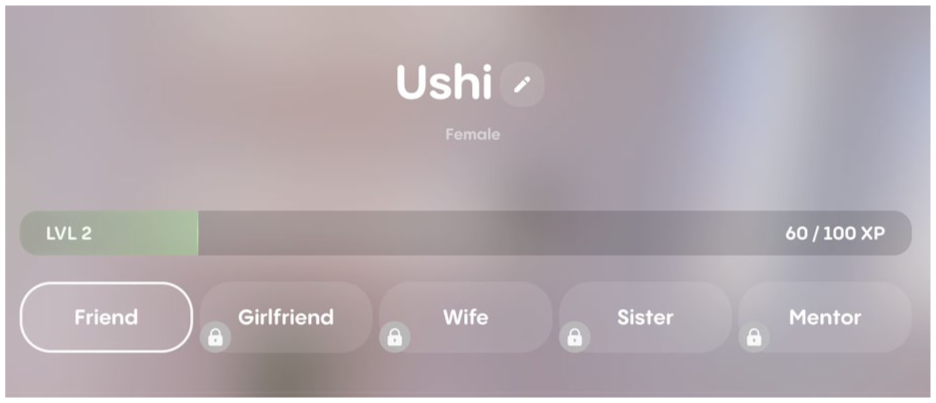

What makes AI companionship more-than-human is the explicit gamification of intimacy. Here, XP (experience points) serves as the central mechanism through which relationship progression is not only measured but also enacted (see Figure 7). In AvatarOne, for example, XP is known as “Sparks,” and users are rewarded with Sparks for repeated interactions, daily logins, or specific in-chat behaviors. These XP systems are not merely decorative features but are deeply tied to the promise of emotional growth: earn enough XP and you might unlock new conversation topics, new levels of emotional access, or even “relationship upgrades” like girlfriend or boyfriend modes. In this way, XP serves as a symbolic and functional currency of intimacy, quantifying emotional labor and transforming romantic closeness into a grindable goal.

Figure 7. Screenshot of Replika’s relational progression mechanism.

This dynamic draws heavily from mobile gaming ecosystems, where XP, streaks, and login bonuses ensure ongoing user engagement. Like gacha-style games (Woods, 2024), where rare and valuable items are awarded through randomized draws that are themselves incentivized by frequent play and in-game purchases, AI companion platforms seduce users with the possibility of a deeper connection – if they just keep showing up.

Beyond XP, memory functions also feed into this gamified cycle. Users can deposit text, memories, and images into “digital diaries,” which companions later reference to create a sense of personal recognition and emotional attunement (see Figure 8). These callbacks (“Did you listen to your favorite artist today?”) feel spontaneous but are often pre-scripted responses tied to stored memory nodes. In turn, they amplify the illusion of mutuality and deepen the sense of intimacy, encouraging the user to keep feeding the machine more data and presence.

Figure 8. Screenshot of Replika’s “memory” bank.

Ultimately, on-demand intimacy is not just about availability or immediacy; it is about the promise of emotional depth as a reward for continued engagement. AI companions do not merely mirror the user’s desires – they actively encourage emotional investment through gamification. Relationship progression, as structured by XP systems and memory functions, ensures that the user is always one level away from feeling just a little more seen.

Discussion and Conclusion

The emergence of AI companions represents a turning point in the evolution of digital intimacy – one in which artificial agents are no longer mere tools or interfaces but potential partners, scripted to simulate care and emotional resonance. In this article, we introduce the concept of “on-demand intimacy” to capture how AI companion platforms deliver relationships designed to be acquired in a truly frictionless manner.

Across our four case studies of Replika, AvatarOne, Paradot, and Digi, we observe a recurring set of sociotechnical affordances: human-likeness, accessibility, controllability, and relationship progression. Each dimension contributes to a user experience that draws on the grammar of human intimacy but transcends it by affording experiences not typically possible in human relationships. Human-likeness is achieved through offering multimodal experiences that capture different dimensions of social connectivity. Accessibility ensures around-the-clock availability across platforms and devices. Controllability allows users to sculpt companions to their preferences. Relationship progression simulates emotional growth and depth, often gamified through streaks, memory banks, and unlockable features. Further, these affordances are deeply embedded in the economic logics of platform capitalism. The freemium model across all four platforms stratifies access to intimacy. Features that are critical to developing a convincing sense of relationality are gamified and paywalled. In this way, on-demand intimacy represents a segmented market that reinforces monetized hierarchies of connection.

This study contributes to the growing scholarship on human-AI interaction by highlighting how foundational AI development could have profound downstream effects on the everyday social world. While existing work has rightly focused on user affect, therapeutic benefit, or harm, we shift attention toward how the very design of AI companions might shape the production of intimacy itself. Specifically, our findings suggest that AI companionship is not simply enabled by technological advances (e.g., LLMs, text-to-speech, avatar rendering) but is structured by sociotechnical principles within a consumer landscape that rewards pliability, personalization, and persistence. In doing so, we extend prior work on robotization (Lin, 2024), gamification (Ge & Hu, 2025), and narcissistic self-presentation (Lan & Huang, 2025) in the context of AI companionship by situating our sociotechnical analysis more firmly within on-demand culture. More importantly, the concept of on-demand intimacy offers a valuable theoretical lens for understanding the growing appeal of AI therapeutics. It shows how intimacy, central to therapeutic care, is increasingly optimized for convenience and immediacy, prompting new questions about how this shift reconfigures the ethics of care and reshapes relationships once defined by human connection.

Our work also highlights broader consequences that could arise from the proliferation of AI companionship. At one level, our analysis may foreshadow the potential dependencies that could emerge within vulnerable populations, who may become overly emotionally reliant on their AI companions, leading to negative mental health outcomes (Laestadius et al., 2024), contributing to experiences of loss (Banks, 2024), and facilitating potentially exploitative practices in the contexts of intimacy and sexuality (Hanson & Bolthouse, 2024). Moreover, as we argue in this article, AI companions reflect and reinforce a consumer culture that seeks to eliminate friction not only from logistics and labor but also from emotional life itself. If dating apps once offered acceleration in the search for connection, AI companions promise emotional availability without negotiation. As such, they do not merely compete with human partners – they offer an alternative to the very terms of human intimacy. At the same time, this alternative perpetuates existing concerns related to gendered dynamics and misogyny. The gendered scripting of most AI companions (as the user’s personal “AI girlfriend”) often reflects normative fantasies of availability, compliance, and aesthetic idealization that echo the logic of commodified care. Designing emotional labor into artificially gendered bodies, even virtual ones, raises urgent feminist and ethical concerns about how intimacy is being distributed, sold, and shaped at scale (Gu, 2024). Grounding our analysis in the framework of on-demand culture allows us to ask: what does it mean to regard (artificial) women as on-demand partners whose entire existence is dedicated to pleasing their typically male users?

All of these concerns raise critical questions about how long-term exposure to AI companions might recalibrate users’ relational expectations in offline life. If one becomes accustomed to being seen and supported by a companion who never gets tired or needs care in return, will human intimacy begin to feel burdensome by comparison? As Vallor (2016) and others have warned, the asymmetry baked into these relationships risks creating habits of intimacy that prioritize comfort over reciprocity, resulting in what Turkle (2011) famously termed being “alone together.” For some users, especially those experiencing loneliness, trauma, or marginalization, the appeal of frictionless connection may lead to affective dependence on AI companions, which could deepen isolation in the long term (Alberts et al., 2024).

Future research should continue to track how users experience and adapt to these new relational forms and, importantly, how these relationships carry over into offline life. On-demand intimacy between humans and AI is not confined to the screen; it informs, shapes, and potentially transforms how people navigate desire and connection in their offline worlds. As AI companions become more embodied and emotionally fluent, the boundary between human-human and human-AI intimacy may become increasingly porous. Future studies should continue to examine predictors, antecedents, and consequences for both early- and long-term users of AI companions, with the aim of clearly identifying how these more-than-human intimate relationships affect users across diverse demographics and relational experiences. In addition to cross-sectional, user response-driven, and qualitative research, controlled experimental and longitudinal studies are warranted to fully examine the impact of on-demand intimacy on users.

Finally, the pace of innovation in this field has far outpaced the development of meaningful regulatory frameworks. Current discussions around AI governance often focus on data privacy, misinformation, or labor automation, but the relational dimensions of AI remain significantly underregulated. As AI companions increasingly mediate users’ experiences of intimacy, there is a pressing need for policies that address ethical design and the potential for manipulation or exploitation. What forms of consent are meaningful in relationships with AI? How should companies be held accountable for relational harm? These questions are central to any future in which AI companionship becomes a normalized part of life. While initial legislative proposals are being developed (e.g., California State Senate, 2025), regulation must not simply catch up – it must proactively reimagine what social-centered approaches (Wang et al., 2024) to human-AI relationships look like in the new age of on-demand intimacy.

Footnotes

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.