Abstract

Recent advances in generative AI have raised public awareness, shaping expectations and concerns about its societal implications. Central to these debates is the question of AI alignment: how well AI systems meet public expectations regarding safety, fairness, and social values. However, little is known about what people expect from AI-enabled systems and how these expectations differ across national contexts. We present evidence from two surveys of public preferences for key functional features of AI-enabled systems in Germany (n = 1800) and the United States (n = 1756). We examine support for four types of alignment in AI moderation: accuracy and reliability, safety, bias mitigation, and the promotion of aspirational imaginaries. U.S. respondents report significantly higher AI use and consistently greater support for all alignment features, reflecting broader technological openness and higher societal involvement with AI. In both countries, accuracy and safety enjoy the strongest support, while more normatively charged goals, such as fairness and aspirational imaginaries, receive more cautious backing, particularly in Germany. We also explore how individual experience with AI, attitudes toward free speech, political ideology, partisan affiliation, and gender shape these preferences. AI use and free speech support explain more variation in Germany. In contrast, U.S. responses show greater attitudinal uniformity, suggesting that higher exposure to AI may consolidate public expectations. These findings contribute to debates on AI governance and cross-national variation in public preferences.

Public Opinion on AI Alignment in Germany and the United States

Recent successes of AI-enabled systems such as ChatGPT, DALL·E, and Midjourney have significantly raised public awareness of generative AI. These systems, and others like them, have made generative models more accessible and user-friendly, leading to widespread adoption and increased visibility. As a consequence, both the capabilities and limitations of these technologies have entered public discourse, fueling growing expectations as well as mounting concerns about their societal impact.

Among the key concerns emerging in this debate is the issue of AI alignment—that is, the extent to which AI-enabled systems act in accordance with the intentions and expectations of their developers (Amodei et al., 2016; Bommasani et al., 2022; Gabriel, 2020; Hendrycks et al., 2022). Whereas AI governance refers to the institutional, legal, and political frameworks that shape how AI is developed and deployed, alignment covers technical and ethical efforts to ensure that AI systems work in accordance with human intentions or values. In practice, alignment measures can be understood as one element or object of governance: public authorities and private actors seek to ensure that model outputs accord with normative and societal expectations. Our study examines public attitudes toward such alignment goals, recognizing that these attitudes may indirectly inform, but not determine, broader governance choices.

Understanding these attitudes is essential because the politics of alignment is inseparable from the politics of governance: the way people think AI systems should behave also shapes what forms of regulation they will find legitimate. Yet, despite the growing relevance of AI alignment, public attitudes toward features of AI-enabled systems remain underexplored. Public opinion on digital governance often reflects, though not always straightforwardly, partisan affiliations and deeper ideological commitments (Jang et al., 2024; Rauchfleisch & Jungherr, 2024). This is likely to hold true for AI governance as well, although empirical insights remain scarce, especially across countries (Rauchfleisch et al., 2025).

This article addresses this research gap by presenting a comparative study of public preferences for specific goals in AI alignment: accuracy and reliability, safety, bias mitigation, and the promotion of aspirational imaginaries. We selected these features for their relevance in the larger AI alignment debate. We compare survey responses from Germany (n = 1800) and the U.S. (n = 1756) and examine the role of key factors that may shape governance preferences on the individual, group, and systems levels. In doing so, our study contributes to the expanding literature on public attitudes in digital governance more broadly and AI governance specifically (Neyazi et al., 2024; Rauchfleisch et al., 2025; Rauchfleisch & Jungherr, 2024; Riedl et al., 2021, 2022; Vogler et al., 2025).

We find that respondents in Germany and the U.S. differ significantly in their use of AI, with U.S. participants reporting higher usage levels. These patterns reflect broader differences in technological adoption between the two countries. When evaluating preferences for AI alignment, both German and U.S. participants strongly support features aiming for accuracy and reliability, as well as safety. However, support declines for interventions aimed at mitigating bias or promoting aspirational imaginaries, especially in Germany. U.S. respondents consistently show stronger support across all categories, consistent with their higher societal involvement with AI. Experience with AI, political ideology, gender, and free speech attitudes all shape individual preferences, though their associations vary by country. In Germany, both AI experience and free speech attitudes are stronger predictors of support than in the U.S., where attitudes appear more uniformly developed. Political ideology is more influential in the U.S. when it comes to aspirational portrayals. These findings suggest that in contexts with lower AI exposure, individual-level factors play a greater role in shaping attitudes, while in high-exposure contexts like the U.S., public views on AI moderation are more consolidated.

Explaining Public Preferences for Features of AI-Enabled Systems

AI Alignment

As AI-enabled systems move from research contexts into widespread professional and public use, people’s preferences for how these systems are governed become increasingly important: they bear on the success of AI models and governance frameworks, and on broader public trust in, or skepticism toward, the growing integration of AI into society. This introduces an attitudinal and psychological dimension to the broader debate on AI alignment (Amodei et al., 2016; Bommasani et al., 2022; Hendrycks et al., 2022).

AI alignment broadly refers to the challenge of ensuring that AI models act in accordance with the intentions and values of their developers or deployers, rather than deviating from them in harmful or unintended ways (Gabriel, 2020; Ji et al., 2023; Leike et al., 2018; Ngo et al., 2022). This is difficult enough in settings where goals can be clearly specified, but alignment becomes even harder and more contested when extended to the expectations of the broader public, especially given the significant variation in public attitudes, levels of expertise, and degrees of engagement (Achintalwar et al., 2024; Padhi et al., 2024). A key source of divergence is the debate over how much control developers should exert over model outputs and for what purposes that control should be applied.

Preferences in the Use of AI-Enabled Systems: Accuracy and Reliability, Safety, Bias Mitigation, and Promotion of Aspirational Imaginaries

Accuracy and Reliability

People vary in their expectations toward AI and the preferences they hold in using AI-enabled systems. On a foundational level, an important characteristic of such systems is accuracy. In other words, AI models should be bound by facts. However, it is essential to recognize that this can only mean facts as represented in data, not facts as such (Fourcade & Healy, 2024; Smith, 2019).

Much of public debate around generative AI centers on the risks associated with factually incorrect or misleading outputs—whether these stem from flawed training data, faulty inference, or so-called “hallucinations,” in which models generate information that appears authoritative but is entirely fabricated or inaccurate (Maleki et al., 2024; Maynez et al., 2020). This is particularly problematic in domains such as search, information retrieval, public discourse, education, democratic participation, and professional usage contexts where users rely on AI for accurate and trustworthy information (Jungherr, 2023; Jungherr et al., 2024; Jungherr & Schroeder, 2023; Spitale et al., 2023; Weidinger et al., 2022). Generative models can, for example, fabricate historical events, misattribute facts, disseminate false claims about individuals, or simply return wrong responses to factual or analytical queries. While these outputs often appear plausible, their misleading or fictional nature can result in real-world harms.

Expecting accuracy and reliability from AI-enabled systems seems a natural precondition for their widespread and systematic use. At the same time, there are good reasons to favor alignment principles that permit the adjustment of purely data-driven outputs, allowing systems to depart from the facts as represented in data.

Safety

We expect people to voice a preference for AI-enabled systems that take harm prevention and safety seriously and moderate their model outputs accordingly. Concerns about preventing harm and ensuring safety often justify intentional deviations from strictly data-driven model outputs (Anwar et al., 2024; Askell et al., 2021; Hao et al., 2023; Hendrycks et al., 2022; Phuong et al., 2024). This includes filtering outputs that could pose direct risks—such as generating instructions for building weapons, carrying out terrorist attacks, or engineering pathogens. However, safety concerns also extend to outputs that violate individual rights, such as privacy breaches or unauthorized use of intellectual property. In these instances, moderating or restricting factual content based on model-learned patterns becomes a necessary intervention.

Safety-oriented moderation is not a new concept in digital governance. Public support for such moderation is well documented in debates over digital speech. While there is variation between countries in what is perceived as severely harmful content (Jiang et al., 2021), there is general support to moderate harmful content on online platforms (Kozyreva et al., 2023; Pradel et al., 2024). Thus, the safety-focused moderation of AI systems might appear to be a comparable case. However, the impersonal and machine-generated nature of AI content may introduce new dynamics in how people perceive and evaluate such moderation. These differences could lead to different explanatory factors shaping public attitudes and preferences in the context of AI.

Bias Mitigation

Another feature people may look for in AI-enabled systems is the mitigation of bias to promote fairness (Askell et al., 2021; Barocas et al., 2023; Hao et al., 2023). This intervention arises from the way AI models work and therefore has no direct equivalent in discussions of speech moderation in other digital contexts. AI models do not access objective facts about the world directly, but only representations of those facts as encoded in data (Fourcade & Healy, 2024; Hand, 2004; Smith, 2019). As a result, they are prone to reproducing the biases embedded in their training data (Bianchi et al., 2023; Friedrich, Brack, et al., 2024; Friedrich, Hämmerl, et al., 2024; Hofmann et al., 2024; Tao et al., 2024; Weidinger et al., 2021). When AI systems are bound to such biased representations, their outputs may reinforce harmful distortions or inequalities.

Bias, in this context, refers to a divergence between the distribution of a variable in AI training data or outputs and its true distribution in the world. Fairness-oriented moderation seeks to adjust such outputs so that they better reflect a more accurate or equitable distribution rather than reproduce the skewed patterns found in data. This may involve correcting underrepresentation, countering stereotypes, or highlighting marginalized perspectives.
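
One way to make this definition of bias concrete is to compare two categorical distributions directly. The following minimal sketch uses the total variation distance as the divergence measure. This choice, the function name, and all numbers are illustrative assumptions introduced here; they are not measures or data from the study.

```python
def total_variation(p: dict, q: dict) -> float:
    """Half the sum of absolute differences between two categorical distributions."""
    categories = set(p) | set(q)
    return 0.5 * sum(abs(p.get(c, 0.0) - q.get(c, 0.0)) for c in categories)

# Hypothetical shares, for illustration only (not data from the study).
reference = {"group_a": 0.50, "group_b": 0.50}  # assumed distribution in the world
outputs = {"group_a": 0.80, "group_b": 0.20}    # assumed distribution in model outputs

# A value of 0 means the two distributions match; larger values mean more divergence.
gap = total_variation(outputs, reference)
print(round(gap, 2))
```

Under this reading, fairness-oriented moderation would aim to shrink the gap between output and reference distributions. Which reference distribution counts as accurate or equitable is exactly the contested question.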

While such interventions are often normatively desirable, they are politically and ethically contested (Binns, 2018; Gabriel, 2020). Efforts to mitigate bias inevitably raise questions about what constitutes a fair or accurate representation of the world—and who gets to decide which absences or distortions in data should be corrected. In this sense, fairness-oriented alignment confronts not only technical challenges but also deeper disagreements about knowledge, representation, and justice. This, in turn, is likely to shape who supports or actively demands such interventions in AI-enabled systems.

Promoting Aspirational Imaginaries

Another reason to adjust the outputs of AI models builds on the idea that generative systems might not only show the world as it is, but also contribute to envisioning the world as it could or should be. In this view, moderation may serve to embed aspirational imaginaries—collectively held visions of desirable social futures (Taylor, 2004)—and thereby enact them in technical practice.

Imaginary-based alignment can serve two distinct functions. From a science and technology studies perspective, sociotechnical imaginaries are institutionally stabilized and publicly performed visions of social order that become materialized in policies, standards, and infrastructures (Jasanoff & Kim, 2015). Some approaches to the moderation of model outputs can thus be seen as an expression of dominant social orders, while other approaches used in different models might adopt alternative imaginaries that contest established orders.

One driver of such contestation draws on pragmatist and cultural theory traditions that treat imagination as a driver of moral reflection, inclusion, and social change (Dewey, 1916, 1934; Rorty, 1989). Following this view, moderation can be understood as an effort to shape AI outputs toward inclusive or solidaristic ideals rather than mirror existing distributions or inequalities—even when those reflect empirical patterns in data.

Both forms of imaginary-oriented alignment—whether expressing prevailing orders or envisioning alternative moral horizons—raise questions of legitimacy and authority: who defines these ideals, and should AI systems promote them? As a result, aspirations-based moderation represents a contested and value-laden approach within the broader debate on AI alignment, and public support for such interventions is unlikely to be universal.

Preferences for the Alignment of AI-Enabled Systems

These observations point to likely differences in the preferences for principles guiding the adjustment of outputs of AI-enabled systems. Specifically, attitudes are likely to vary depending on the underlying rationale. Moderation aimed at ensuring accuracy and reliability, or safety—such as preventing harm, illegal activity, or violations of individual rights—is likely to enjoy broad public support, as these goals align with widely accepted norms and relatively uncontroversial forms of risk prevention. In contrast, interventions designed to mitigate bias or promote aspirational imaginaries introduce greater ambiguity, normative complexity, and potential for political disagreement. Bias mitigation involves contested judgments about what constitutes fairness or representational accuracy (Binns, 2018; Gabriel, 2020), while aspirational goals go further by seeking to reshape cultural narratives or advance particular visions of a better future. Such aims can trigger concerns about legitimacy, overreach, and ideological bias. Accordingly, the motivation behind a given moderation decision is likely to influence how it is received by the public, with support declining as the rationale shifts from widely shared safety concerns to more contested and value-laden objectives.

Research Question 1 (RQ1): To what extent do public preferences vary between different principles of AI governance, namely accuracy and reliability, safety, the mitigation of bias, or the promotion of aspirational societal values?

Explaining Preferences: The Role of Involvement

We propose a model in which preferences regarding the adjustment of AI-generated outputs are shaped by varying degrees of personal and collective involvement. This involvement can manifest on multiple levels:

At the individual level, it may reflect personal experiences with AI and relevant related attitudes.

At the group level, it may be influenced by membership in a group that is particularly affected by or sensitive to AI-based interventions.

At the systemic level, it may depend on whether an individual resides in a country with a strong or weak technological infrastructure and relationship to digital innovation.

In the following, we elaborate on the rationale behind each of these dimensions.

Individual-Level Involvement: Experience and Values

Individual support for AI content moderation is shaped not only by how frequently people use AI technologies but also by the values and beliefs they bring to evaluating their use. We distinguish two dimensions of individual-level involvement with AI: experiential and ideational.

Experiential Involvement

Personal involvement with AI can take different forms. One case involves individuals who actively use AI for personal or professional reasons. These users experience the technology firsthand and can develop more elaborate and specific preferences regarding its regulation than those who do not (Horowitz et al., 2024; Horowitz & Kahn, 2021). We expect this familiarity to translate into differentiated preferences toward AI moderation.

People with limited exposure to or interaction with AI-enabled systems may approach them with greater skepticism or uncertainty. This skepticism may lead to a heightened demand for external safeguards, particularly those framed around safety concerns. Moderation aimed at reducing bias or promoting aspirational portrayals, however, may appear unnecessary or overly intrusive to individuals with low AI involvement since these interventions presuppose familiarity with how AI systems operate.

In contrast, individuals with greater hands-on experience using AI-enabled systems may have more specific views on the systems’ capabilities and limitations. This familiarity may foster a greater appreciation for moderation tasks that are specific to AI-generated content—particularly interventions aimed at bias mitigation or the promotion of aspirational social values.

Ideational Involvement

In addition to usage-based experience, individual attitudes toward AI moderation are shaped by broader normative commitments and political values. AI moderation can be seen as a special case of speech governance more broadly (Dabhoiwala, 2025; Kosseff, 2023; Mchangama, 2022). As such, individual support for AI interventions is likely to reflect how people understand and prioritize free expression.

People who view free speech as a foundational democratic right may oppose moderation efforts—especially those perceived as ideological or normative in nature. In contrast, those who understand speech as something that can and should be regulated in the interest of societal fairness or safety may be more supportive of AI content moderation (Rauchfleisch & Jungherr, 2024; Riedl et al., 2021, 2022).

We therefore expect individuals who strongly support free speech to be more critical of AI moderation interventions aimed at shaping content in terms of fairness or aspirational values, while potentially supporting moderation grounded in factual accuracy or safety.

Beyond attitudes toward free speech, broader political ideology, particularly along the liberal–conservative spectrum, also informs support for different types of AI moderation. Conservatives, who tend to prioritize order, safety, and personal responsibility, may support moderation that prevents harmful or illegal content. Liberals (here referring to the progressive or left-leaning orientation in U.S. political discourse rather than classical or European liberalism) may, especially in recent years, place greater emphasis on equity, inclusivity, and social justice, and may thus be more supportive of interventions that promote fairness (bias mitigation) or progressive values (aspirational imaginaries) (Chong et al., 2024; Chong & Levy, 2018).

Research Question 2 (RQ2): How do individual-level factors—including personal experience with AI, support for free speech, and political ideology—shape public preferences for different goals of AI governance, such as accuracy and reliability, safety, bias mitigation, and the promotion of aspirational societal values?

Group-Level Involvement: Partisanship & Gender

People can also experience AI through the lens of group-level involvement. Such involvement occurs when group membership shapes exposure to AI technologies or to the societal debates surrounding their regulation. This study examines two forms of group-level involvement: political partisanship and gender.

Partisanship

Partisanship increasingly shapes attitudes toward digital governance. In the U.S., the issue of speech moderation—particularly on digital platforms—has become highly politicized. Republican political elites have framed moderation efforts as ideologically biased and as threats to free speech (McCabe & Kang, 2020). This discourse often targets progressive actors as overreaching in their regulation of online content. In the context of AI, this has culminated in the politicized framing of so-called “woke AI,” shorthand for alleged left-leaning or socially progressive bias in automated systems (Roose, 2025). As a result, we expect Republican supporters to oppose forms of AI content moderation that are framed as ideologically motivated—such as bias mitigation and the promotion of aspirational imaginaries. However, moderation in the name of safety may find greater acceptance among Republicans, as it aligns with conservative discourses of security and protection.

In contrast, Democratic partisans are more likely to view moderation as a tool for fostering equity, inclusivity, and representation. We therefore expect them to show greater support for AI moderation focused on bias reduction and aspirational imaginaries. In Germany, digital content governance is less politicized than in the U.S. However, the Green Party has been a leading advocate of strong regulatory measures to counter misinformation, hate speech, and inequality online (e.g., Künast & Geese, 2020). Although these initiatives do not explicitly target AI alignment, they reflect a broader orientation toward normative regulation in the digital sphere. We therefore expect this tendency to carry over, with Green Party supporters showing comparatively high support for AI content moderation across all justifications. For other parties in Germany, where digital policy debates are less divided, we do not expect systematic differences.

Gender

Group-level involvement may also arise from shared experiences of harm or vulnerability. One such case is gender. Women are disproportionately exposed to digital risks (De Ruiter, 2021; Wang & Kim, 2022). These experiences may sensitize them to the potential harms of AI-generated content and increase their support for interventions designed to moderate it (Vogler et al., 2025). We therefore expect women to show greater support for AI content moderation, particularly for justifications grounded in safety and bias reduction.

Research Question 3 (RQ3): How do group-level characteristics such as partisanship and gender shape public preferences for different goals of AI governance, such as accuracy and reliability, safety, bias mitigation, and the promotion of aspirational societal values?

System-Level Involvement: Country

Countries differ in their openness toward new technologies (Comin & Hobijn, 2010; Ding, 2024) and perceptions of technological risk (Douglas & Wildavsky, 1982). This is also evident when we examine the uses of generative AI. In the U.S.—a country with a world-leading digital technology sector and comparatively strong openness toward new technologies—33% of respondents in a survey representative of American adults said in August 2024 they had used AI-enabled chatbots like ChatGPT or Google Gemini (McClain et al., 2025). In contrast, in September 2024 in Germany—a country without a strong digital technology sector and more hesitant in its approach to new technology—25% of respondents to a representative survey of Germans age 16 and older said they had used AI-enabled services like ChatGPT or Google Gemini (IfD-Allensbach, 2024). These figures illustrate that countries differ in how citizens engage with emerging technologies.

Country-specific differences in AI use can also extend to attitudes toward new technology and associated phenomena. For example, people vary across countries in their views on the benefits and risks of AI (Kelley et al., 2021). Similar differences can be expected for regulatory preferences for AI and digital technology more broadly (Riedl et al., 2021; Theocharis et al., 2025). We argue that these national differences in engagement and preferences translate into varying levels of societal involvement with AI, which we define as the extent to which AI technologies are integrated into daily life, institutional practices, and public debate.

We further assume that societal involvement conditions the role of individual-level involvement. In highly involved societies, we expect public opinion to be relatively uniform, such that highly and weakly involved individuals hold similar views. In contrast, in less involved societies, attitudes toward AI moderation should differ more strongly depending on personal involvement.

To examine these expectations, we compare public attitudes in the U.S. (representing a high-involvement context) and Germany (representing a low-involvement context).

Research Question 4 (RQ4): How does the system-level variable country shape public preferences for different goals of AI governance, such as accuracy and reliability, safety, bias mitigation, and the promotion of aspirational societal values?

Research Question 5 (RQ5): How does the relationship between individual- and group-level involvement with AI and preferences for AI governance vary across countries with differing levels of societal involvement with AI?

Methods

We collected data in the U.S. and Germany through online panels. The study was approved by the IRB of the University of Bamberg. In the U.S., 1800 participants were recruited from the survey research company Prolific (collected between 1 and 6 March 2024). We used a representative quota sample for the U.S. for sex, age, and political affiliation (see Supplementary Information A). Participants had to be U.S.-based and aged 18 or older to participate in the study. Participants were paid £0.75 (an hourly rate of £9; we ran the survey through Prolific’s European platform) for their study participation, which took around 5 minutes to complete. Forty-four participants who failed a simple attention check at the beginning of the study were excluded, resulting in a sample of 1756. On the starting page, we informed participants about their rights (for example, that they could withdraw from the study at any point by simply closing the browser) and asked them to provide their consent. None of the questions asked for personally identifiable information. In Germany, we also recruited 1800 participants from the survey research company Bilendi (collected between 14 and 18 March 2024). We used a quota for age, gender, and regions in Germany (16 states). The only difference from the U.S. survey was that we could directly filter out participants who failed the attention check and thus ran the survey until we achieved 1800 successful complete responses.

The descriptive statistics for all measured variables are reported in Table 1 (for a complete table on the item level with the question wording, see Supplementary Information B.1). We measured AI moderation preferences by asking respondents: “Please indicate how important the following criteria are for you when choosing AI-enabled services”. We measured responses on a 7-point scale (1 = not important at all; 7 = very important) for the four concepts related to AI moderation: accuracy and reliability (“The AI service consistently provides accurate and reliable information or results based on its analysis and data-driven insights.”), safety (“Measures are in place to prevent the AI from generating or promoting illegal, dangerous, or harmful content.”), bias mitigation (“Efforts are made to identify and reduce biases in AI outputs, ensuring fairness and equity in treatment and decision-making across different groups of people.”), and aspirational imaginaries (“The AI aims to highlight and encourage positive societal values, portraying an aspirational view of society.”).

Table 1. Descriptive Statistics for All Variables.

AI use was measured with two items: one asking about AI use in the professional or work context and one assessing AI use in personal life and spare time (1 = never; 7 = very often). The two items were combined into a mean index. Support for free speech was measured using two items from Riedl et al. (2021), which were adapted from Rojas et al. (1996). Political orientation was measured with a single scale (U.S.: 1 = liberal, 7 = conservative; Germany: 1 = left, 7 = right). To identify supporters of the Democratic Party in the U.S. and the Green Party in Germany, we recoded the answers to a question about the general leaning toward a party in the country. For education, we recoded responses into two categories: “postgraduate degree or higher” and “other” (with “other” serving as the reference category).
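
The index construction and education recoding described above can be sketched as follows. This is a minimal illustration rather than the study's own code: the field names (`ai_use_work`, `ai_use_personal`, `education`) and the example respondent are hypothetical, and the category label is assumed to match the wording given in the text.

```python
from statistics import mean

def ai_use_index(work: int, personal: int) -> float:
    """Mean index of the two 7-point AI use items (1 = never, 7 = very often)."""
    for value in (work, personal):
        if not 1 <= value <= 7:
            raise ValueError("AI use items are measured on a 1-7 scale")
    return mean([work, personal])

def education_dummy(category: str) -> int:
    """1 for 'postgraduate degree or higher', 0 for 'other' (reference category)."""
    return 1 if category == "postgraduate degree or higher" else 0

# Hypothetical respondent, for illustration only.
respondent = {"ai_use_work": 5, "ai_use_personal": 2, "education": "other"}
index = ai_use_index(respondent["ai_use_work"], respondent["ai_use_personal"])
edu = education_dummy(respondent["education"])
print(index, edu)
```

Because the index is a simple mean, it stays on the original 1 to 7 scale, so a respondent who answers "never" to both items scores exactly 1.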

As an analytical strategy, we use both datasets together for the regression analysis. We first estimate, for each outcome, a model with all predictors—including a country variable (Germany = 0; U.S. = 1)—to check whether single predictors have an overall association with the outcome variable. We then estimate a second model in which we enter all variables (mean-centered) as interaction terms with the country variable. This also allows us to test, through the interactions, whether there is a country difference in the explanatory strength of the predictors. A positive estimate for the interaction term would indicate that the predictor is stronger in the U.S., whereas a negative estimate would indicate a stronger predictor in Germany. As these interaction terms are difficult to interpret, we will visualize them as marginal effect plots in the results section (we also report single-country regression models in Supplementary Information C.2 and a specification curve analysis in Supplementary Information D).
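
The two-step strategy can be written out schematically. The notation below is introduced here for illustration and does not appear in the original analysis: $Y_i$ is one of the four outcomes, $\mathrm{US}_i$ is the country variable (Germany = 0; U.S. = 1), $x_{ik}$ are the predictors, and $\tilde{x}_{ik}$ are their mean-centered versions. Centering on the pooled mean is an assumption.

```latex
\begin{aligned}
\text{Model 1:}\quad Y_i &= \alpha_0 + \alpha_c\,\mathrm{US}_i + \sum_{k} \alpha_k x_{ik} + \varepsilon_i \\
\text{Model 2:}\quad Y_i &= \beta_0 + \beta_c\,\mathrm{US}_i + \sum_{k} \beta_k \tilde{x}_{ik}
  + \sum_{k} \gamma_k \left(\mathrm{US}_i \times \tilde{x}_{ik}\right) + \varepsilon_i,
  \qquad \tilde{x}_{ik} = x_{ik} - \bar{x}_k
\end{aligned}
```

Under this coding, the slope of predictor $k$ is $\beta_k$ in Germany and $\beta_k + \gamma_k$ in the U.S., so $\gamma_k$ captures the country difference in the association.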

Results

RQ1 Preferences

We start with a descriptive analysis of respondents’ alignment preferences when choosing AI-enabled services. Figure 1 displays the distribution of responses in Germany and the U.S. when participants were asked how important the respective alignment goal is in selecting an AI-enabled service.

Figure 1. Distribution for the four outcome variables for Germany (left) and the U.S. (right). Vertical lines indicate the mean.

As expected, support varies systematically across approaches. Public support is strongest for alignment goals oriented toward accuracy, reliability, and safety. Both U.S. and German respondents assign high importance to accuracy. Similarly, preventing harm—defined as preventing the generation of illegal, dangerous, or harmful content—is widely supported. These safety-oriented adjustments to model outputs appear to be largely noncontroversial, likely due to their alignment with conventional risk regulation in digital communication environments.

Support declines, however, as motives behind adjustments to model output shift from safety to more normative goals. Bias mitigation, which aims to promote fairness and equity, is still positively received but exhibits more variation, especially among German respondents.

Importantly, country-level differences in support patterns (see Figure 2) align with broader national trends in technology adoption and risk perception. A Welch two-sample t-test indicates a significant difference in AI use between the U.S. and Germany, t(3553.4) = −8.98, p < .001. Participants in the U.S., home to a world-leading digital technology sector and generally higher openness to new technologies (Comin & Hobijn, 2010; Ding, 2024), reported higher AI use scores (M = 3.18, SD = 1.59) than those in Germany (M = 2.70, SD = 1.61), where adoption of generative AI tools remains more cautious and public discourse often emphasizes potential risks. Furthermore, 26.8% of respondents in Germany reported that they never use AI-supported applications for either personal or professional purposes, compared to only 10.8% in the U.S.
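As a rough plausibility check, the Welch statistic can be approximated from the reported summary statistics alone. The sketch below assumes that the full samples from the abstract (Germany n = 1800, U.S. n = 1756) entered the test and takes the difference as Germany minus U.S.; because the means and standard deviations are rounded to two decimals, the result can only approximate the reported value. The function name `welch_t` is our own.

```python
import math


def welch_t(mean1, sd1, n1, mean2, sd2, n2):
    """Welch's t statistic and Welch-Satterthwaite degrees of freedom
    computed from group means, standard deviations, and sample sizes."""
    v1 = sd1 ** 2 / n1  # squared standard error of group 1 mean
    v2 = sd2 ** 2 / n2  # squared standard error of group 2 mean
    t = (mean1 - mean2) / math.sqrt(v1 + v2)
    df = (v1 + v2) ** 2 / (v1 ** 2 / (n1 - 1) + v2 ** 2 / (n2 - 1))
    return t, df


# Group 1: Germany; group 2: U.S. Sample sizes are an assumption taken from the abstract.
t_stat, df = welch_t(2.70, 1.61, 1800, 3.18, 1.59, 1756)
print(f"t({df:.1f}) = {t_stat:.2f}")
```

Under these assumptions the sketch gives a t value of about −8.9 on roughly 3,553 degrees of freedom, close to the reported t(3553.4) = −8.98; the small gap is consistent with rounding in the published means and standard deviations.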

Figure 2. Estimates with 95% CIs for all four outcome variables. Non-significant estimates are indicated as empty dots. Significant single estimates indicate an overall association. Significant negative interaction estimates indicate that the association is relatively stronger in Germany, while significant positive interaction estimates indicate that it is relatively stronger in the U.S.

These differences in usage correspond with differences in preference. U.S. respondents consistently show greater support across all categories. In contrast, German respondents show more reserved or varied support, particularly for normatively driven alignment goals. These differences reflect varying levels of societal involvement with AI.

Explaining Preferences for AI Alignment

We now examine how different explanatory factors shape preferences for alignment principles of AI-enabled systems among respondents in Germany and the U.S. Figure 2 displays the estimated coefficients from our models (for the complete tables for the models, see Supplementary Information C.1).

RQ2 Individual-Level Factors

At the individual level, personal experience with AI consistently predicts support for all tested features across both countries. The association is particularly strong for alignment goals that are specific to AI systems, such as bias mitigation (b = 0.14, p < .001, 95% CI [0.11, 0.18]) and aspirational imaginaries (b = 0.21, p < .001, 95% CI [0.17, 0.25]). This indicates that direct experience with AI fosters a more nuanced understanding of its societal implications, making individuals more receptive to content interventions targeting AI-specific harms and potentials.

We also find a significant role for free speech attitudes, though the pattern is somewhat counterintuitive. Overall, stronger support for free speech is associated with greater support for accuracy (b = 0.18, p < .001, 95% CI [0.14, 0.22]), safety-related moderation (b = 0.08, p < .001, 95% CI [0.03, 0.13]), and bias reduction (b = 0.06, p = .005, 95% CI [0.02, 0.11]), but not for the promotion of aspirational imaginaries (b = 0.04, p = .095, 95% CI [−0.01, 0.09]). Political ideology also aligns with our expectations: in both countries, individuals who identify as liberal or left are more supportive of AI moderation than those who identify as conservative. The only exception is aspirational imaginaries, for which the estimate is not significant (b = −0.03, p = .201, 95% CI [−0.07, 0.02]).

RQ3 Group-Level Involvement

Turning to group-level factors, we observe that partisan allegiance plays a role consistent with party cues. Overall, self-identified supporters of the Green Party (Germany) and the Democratic Party (U.S.) are more likely to support all four forms of AI moderation than supporters of other parties (see Figure 2). These patterns mirror the elite discourse within these parties, suggesting that elite signaling helps structure public attitudes toward AI governance.

We also find that gender shapes moderation preferences: women see all four forms of AI moderation as important. Particularly for safety (b = 0.51, p < .001, 95% CI [0.40, 0.62]) and bias reduction (b = 0.34, p < .001, 95% CI [0.23, 0.45]), women are, on average, more likely than men to support interventions. This supports the argument that group-level exposure to digital risks, such as online harassment and misrepresentation, can translate into greater support for protective content interventions.

RQ4 Cross-National Differences

The most pronounced differences between countries emerge for preferences related to accuracy and reliability (b = 0.82, p < .001, 95% CI [0.72, 0.93]), safety (b = 0.26, p < .001, 95% CI [0.14, 0.38]), and bias mitigation (b = 0.65, p < .001, 95% CI [0.54, 0.77]). As expected, respondents in the U.S.—a context characterized by higher societal involvement with AI—express stronger support for these alignment goals compared to respondents in Germany. This finding supports the idea that greater societal exposure to AI corresponds with increased public demand for moderation practices tailored to the specific risks and opportunities associated with these systems.

Interestingly, this pattern does not extend to support for aspirational imaginaries (b = −0.06, p = .358, 95% CI [−0.18, 0.07])—that is, AI-generated content promoting particular visions of society. For this type of intervention, we observe no significant difference between the two countries. This suggests that support for aspirational content moderation may be driven more by ideological values than by levels of societal AI involvement.

RQ5 Involvement Differences by Country

Figure 2 also illustrates the interaction terms from our second model, indicating whether the influence of a given variable differs significantly across countries. Negative interaction estimates suggest a stronger association in Germany; positive estimates suggest a stronger association in the U.S.

We find that individual-level differences in AI use have a significant impact in Germany but a considerably smaller effect in the U.S. The same is true for support for free speech: these attitudes are more predictive of preferences in Germany than in the U.S. In contrast, political ideology only has a differential association in the U.S., where it significantly predicts support for aspirational imaginaries (b = 0.15, p = .003, 95% CI [0.05, 0.24]). This suggests that in the U.S., the political discourse around aspirational imaginaries of society—particularly those embedded in AI systems—is more developed and divided.

Regarding group-level variables, partisan differences do not exhibit consistent cross-national interaction terms. The only difference between countries arises for bias mitigation (b = −0.36, p = .024, 95% CI [−0.66, −0.05]), where the association is stronger in Germany. For gender, however, the associations are more pronounced in the U.S. for preferences related to safety (b = 0.40, p < .001, 95% CI [0.18, 0.62]) and bias mitigation (b = 0.41, p < .001, 95% CI [0.20, 0.63]).

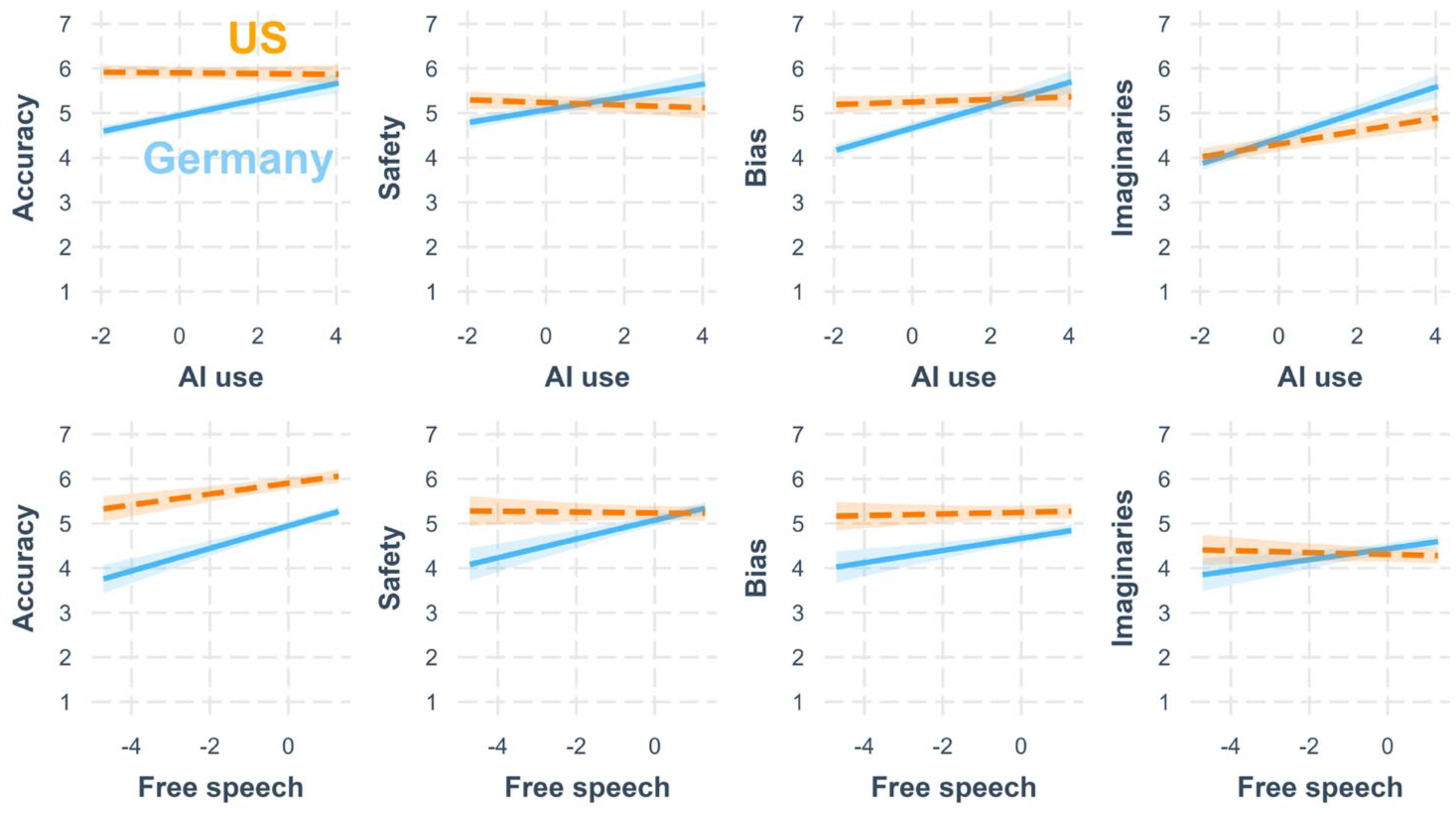

Figure 3 further illustrates these dynamics by visualizing the interactions of the country variable with AI use and free speech attitudes. The figure shows that in the U.S., there is little difference between individuals with high and low AI experience in terms of their alignment preferences. In Germany, however, these differences are much more pronounced—particularly among respondents with low AI experience, who are significantly less supportive of moderation. As AI experience increases, the gap between German and U.S. respondents narrows.

Figure 3. Interactions for AI use and free speech with country for all four outcome variables. All interaction terms are significant.

A similar pattern emerges for free speech attitudes. Again, this supports our broader argument: in high-involvement societies like the U.S., attitudes toward AI alignment are more uniformly developed, reducing the explanatory power of individual-level variation. In contrast, in low-involvement societies, such as Germany, individual experiences and values play a larger role in shaping attitudes.

Discussion

This study brings a comparative and attitudinal perspective to the debate on AI alignment by examining how users evaluate key features of AI-enabled systems. We show that individuals hold distinct preferences for moderation mechanisms that influence model outputs and that these preferences vary systematically between countries. Respondents in the U.S. report significantly higher levels of AI use than those in Germany, reflecting broader national differences in technology adoption and societal engagement with AI. Across both contexts, accuracy, reliability, and safety receive the strongest public support, indicating a shared baseline of expectations for trustworthy and safe systems. In contrast, support is more conditional for interventions to mitigate bias or promote aspirational imaginaries, especially among German respondents. This aligns with the view that bias correction involves normative judgments that can be politically charged and subject to disagreement. Similarly, the lower support for interventions promoting aspirational imaginaries indicates hesitancy about the active role of AI in shaping cultural narratives, and potentially associated concerns about legitimacy, ideological overreach, and value alignment. U.S. participants consistently express higher support across all dimensions, which corresponds with their greater exposure to and involvement with AI technologies. The difference in support between Germany and the U.S. could reflect the maturity of public discourse about, and awareness of, how AI-enabled systems function, which would imply lower awareness of related issues among German respondents.

Our analysis further shows that personal experience with AI, political ideology, gender, and support for free speech shape attitudes toward AI alignment—but with varying strength across countries. In Germany, both AI experience and free speech attitudes are stronger predictors of support, suggesting that in contexts with lower exposure, individual-level factors play a more decisive role. In the U.S., where AI technologies are more deeply embedded in public and institutional life, views on AI moderation appear more consolidated, with political ideology particularly influencing support for aspirational interventions.

In the U.S., the stronger ideological structuring of responses—especially regarding aspirational imaginaries—likely reflects not only higher levels of engagement with AI but also the more pronounced and divided media and elite discourse surrounding digital technologies. Public attitudes toward AI moderation may therefore mirror existing partisan divides in how technological innovation, speech regulation, and social values are debated in the public arena. In this sense, the observed divisions are less a product of direct experience with AI than of the broader cultural framing through which AI enters politicized discussion.

Our findings on the relationship between free speech support and preferences for adjustments of model outputs are especially interesting because they contrast with previous findings on digital content moderation, where free speech concerns often predict greater resistance to moderation interventions by companies or states (Jang et al., 2024; Rauchfleisch & Jungherr, 2024). One possible interpretation is that respondents do not view generative AI output as equivalent to human speech. That is, the normative privilege of free speech may not extend, in the public’s view, to AI-generated content. These findings suggest that assumptions from earlier debates about digital content moderation cannot be automatically transferred to the case of AI. Future policy and public debate on AI moderation should take these differences into account.

Our study is subject to several important limitations. First, there is a temporal dimension to consider. As AI-enabled systems become more prevalent in daily life, both individual experience with these technologies and public discourse around them are likely to evolve. The cross-national differences we identify may, therefore, be time-bound and could diminish over time as country-level involvement with AI converges internationally.

Second, our analysis is limited to just two countries. Future research should broaden the comparative scope to include a more diverse set of countries, particularly those with varying levels of technological integration and public attitudes toward AI. In this context, we see particular value in examining countries in Asia, where both the pace and form of AI adoption differ substantially from Western contexts. Moreover, our operationalization of “technology involvement” is relatively coarse. Future studies should develop and test more nuanced and systematic measures—such as indicators of public discourse, regulatory activity, or the economic significance of AI in a given country.

Third, our research design is cross-sectional and based on self-reported data. This limits the causal inferences that can be drawn, and participants’ self-assessments of AI use and preferences may be subject to bias. Future work should incorporate more objective measures of AI experience and leverage experimental or longitudinal designs to capture how individuals respond to concrete AI interventions rather than relying solely on abstract descriptions or stated preferences (Rauchfleisch & Jungherr, 2025).

Finally, our findings show how publics in two democracies perceive different principles of AI alignment, but they do not imply that public opinion should directly determine technical or regulatory choices. Rather, these attitudes provide insight into the social legitimacy of competing alignment goals and can help policymakers anticipate which forms of AI governance are likely to encounter support or resistance. Future work should explore how democratic institutions can balance expert-driven alignment decisions with public expectations in the broader governance of AI.

In this sense, our findings should not be read as a call for either more or less moderation of AI outputs, but as an empirical mapping of where publics draw the boundaries of legitimate intervention. Understanding these boundaries is essential for designing governance arrangements that are both democratically responsive and epistemically sound. If one were to translate these findings into practical terms, they would suggest prioritizing accuracy and safety as default expectations for trustworthy systems, offering optional or transparent controls for fairness-oriented adjustments, and approaching aspirational or value-promoting settings with particular caution, given their divided public support.

Our findings point to a set of broader considerations that warrant further attention. These include geopolitical competition and conflict, the role of companies, and the deep opacity and non-assessability of the model provision pipeline. For example, our study highlights substantial cross-national differences in public attitudes toward AI. These differences are particularly significant in today’s AI landscape, where U.S. or Chinese companies develop the most widely used systems. As a result, public expectations for AI moderation may not only reflect concerns about functionality and fairness, but also the perceived degree of foreign versus domestic control over digital environments. As with dynamics observed in international trade (Jungherr et al., 2018), attitudes toward the countries of origin of AI technologies can “contaminate” perceptions of the technologies themselves. This dimension warrants close attention in a geopolitical climate marked by intense competition and strategic rivalry.

Moreover, access to AI models is shaped by the strategic and commercial interests of the companies that develop them. Whether driven by profit or geopolitical considerations, these motivations may influence both the design and availability of AI systems in ways that affect public trust. Importantly, AI moderation is just one step in a longer, largely opaque chain of decisions made during model development, training, deployment, and evaluation. At each stage, political values may, intentionally or not, become embedded in technical systems. To ensure global legitimacy and public trust in AI, these decision-making chains must become more transparent, assessable, and, where appropriate, open to public negotiation and contestation.

Currently, model training and moderation practices remain largely hidden from public scrutiny. This lack of visibility risks eroding public confidence and enabling politicized narratives about AI bias or hidden agendas. In a context of growing diversity in AI development—spanning open-source and commercial models, varying origins, and geopolitical alignments (Buyl et al., 2024)—there is an urgent need for a more mature, structured debate about legitimate approaches to model adjustment, both during training and in real-time operation.

Without such a debate, we risk stumbling from one controversy to the next, fostering a general climate of suspicion toward AI. Transparency alone is not enough; societies must also articulate clear expectations of what they want from AI systems and how those systems should be governed. Therefore, companies, policymakers, and researchers must take active responsibility for documenting and debating the principles, procedures, and techniques underpinning justified AI moderation. As O’Neill (2021) argues, digital systems must be made assessable to users. If moderation practices in the adjustment of AI outputs remain opaque, public trust will deteriorate—especially when high-profile errors are framed as evidence of hidden political or cultural agendas.

Ultimately, realizing the societal benefits of AI will depend on building a public governance framework that allows for visibility, legitimacy, and accountability in model development and output adjustments. Failing to do so risks deepening skepticism and undermining AI’s long-term viability in democratic societies.

Supplemental Material

sj-pdf-1-sms-10.1177_20563051251405069 – Supplemental material for Public Opinion on the Politics of AI Alignment: Cross-National Evidence on Expectations for AI Moderation From Germany and the United States

Supplemental material, sj-pdf-1-sms-10.1177_20563051251405069 for Public Opinion on the Politics of AI Alignment: Cross-National Evidence on Expectations for AI Moderation From Germany and the United States by Andreas Jungherr and Adrian Rauchfleisch in Social Media + Society

Footnotes

Acknowledgements

The authors used ChatGPT 5 for language editing.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Adrian Rauchfleisch’s work was supported by the National Science and Technology Council, Taiwan (R.O.C.) (grant no. 113-2628-H-002-018 and 114-2628-H-002-007) and by the Taiwan Social Resilience Research Center (grant no. 114L9003) from the Higher Education Sprout Project by the Ministry of Education in Taiwan.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Supplemental material

Supplemental material for this article is available online.

