Abstract

Covid-19 deepened the need for digital-based support for people experiencing mental ill-health. Discussion platforms have long filled gaps in health service provision and access, offering peer-based support usually maintained by a mix of professional and volunteer peer moderators. Even on dedicated support platforms, however, mental health content poses difficulties for human and machine moderation. While automated systems are considered essential for maintaining safety, research is lagging in understanding how human and machine moderation interact when addressing mental health content. Working with three digital mental health services, we examine the interaction between human and automated moderation of discussion platforms, contrasting ‘reactive’ and ‘adaptive’ moderation practices. Presenting ways forward for improving digital mental health services, we argue that an integrated ‘adaptive logic of care’ can help manage the interaction between human and machine moderators as they address a tacit ‘risk matrix’ when dealing with sensitive mental health content.

Keywords

Introduction

The Covid-19 pandemic deepened an already prevalent global need for digital-based health support (Sorkin et al., 2021). Health discussion and support platforms have long filled gaps in mental health service provision, offering accessible support usually maintained by small teams of professional and volunteer peer moderators and managers, sometimes with input from clinicians (McCosker, 2018). However, mental health content poses deep challenges to moderation practices and policies that need to ensure safety while making peer-based support possible outside of clinical settings. These challenges have also troubled dominant social media platforms like Facebook, Instagram and TikTok (Gerrard, 2020).

There is a growing consensus that automated or algorithmic moderation systems are essential to effective and safe online community (Gorwa et al., 2020). However, current systems are not always up to the task, especially when faced with complex, ambiguous or what is referred to as ‘borderline’ content – content that may fall one side or the other of community standards (Gillespie, 2018, 2022; Heldt, 2020). Furthermore, much of the current research on moderation practices focuses on the future utility of automated moderation and risks in the way algorithms manage, or fail to manage, safe, supportive and inclusive online environments (Gorwa et al., 2020). There are insights to be gained from how moderation is managed on platforms dedicated to peer-based mental health support. The field of content moderation has much to learn from these organisations about how to accommodate and manage mental health content. Additionally, there is work to be done in reconciling the risk-targeting of automated systems and the learned expertise of human moderators aiming to scale online mental health support. This article addresses a gap in understanding how human and machine moderators interact to balance risk and support in dealing with sensitive mental health content, to help inform practices in both dedicated non-profit mental health peer-support communities and large commercial social media platforms.

In this article, we focus on understanding the interplay between automated and human moderation practices (Gorwa et al., 2020; Ruckenstein and Turunen, 2020) through a research collaboration with three Australian mental health service providers (SANE Australia, Beyond Blue and ReachOut). When exploring best practice, we saw synergies, negotiations and some tensions between human and automated moderation in balancing risks against benefits. These tensions and synergies can be understood in relation to what Brian Christian (2020) refers to as the ‘human–machine alignment problem’, where the values, goals and intentions accompanying human and machine decision-making often misalign or are at odds. Specifically, this article contributes to recent research aiming to extend moderators’ professional capabilities and suggests ways to improve the alignment of automated decision systems with the goals, expertise and learned experience of moderators and organisations’ health and wellbeing imperatives building on existing research (Jhaver et al., 2019; Ruckenstein and Turunen, 2020).

In complex scenarios like the discussion of mental health and illness, the (mis)alignment of human and machine expertise matters greatly. Science and technology studies (STS) research has shown that automated systems are not constructed in isolation, but instead co-produced via ‘social practices, identities, norms, conventions, discourses, instruments and institutions’ (Jasanoff, 2004: 3). Identifying sites of co-production in automated moderation can expose how its features are entangled, helping to move beyond narratives of technological determinism that focus only on what those technologies do and how they shape social situations (Govia, 2020). We show that automated moderation systems are entwined with the judgements and goals of experienced human moderators who can enact more ‘adaptive’ moderation practices.

There are opportunities for enhancing the way moderation teams and automated systems work together to mitigate the growing human–machine value alignment problem in the reliance on algorithmic moderation. Given the gap in understanding how this might work in moderating complex material such as mental health content, and contributing to the field of content moderation and digital mental health service implementation, we ask the following research question: How do moderators and managers balance support and resilience-oriented goals and intentions against the ‘programmed risks’ embedded in automated moderation systems in mental health discussion content? Answering this research question will help to guide practical improvement in interactions between human–machine moderation.

Our analysis shows that automated moderation foregrounds a reactive logic tied to risk and safety in the management of mental health help-seeking and support. Human moderation teams, by contrast, excel in deploying an adaptive ‘logic of care’ (Mol, 2008; Ruckenstein and Turunen, 2020). There is nonetheless room to improve the way these roles interact and collaborate in achieving effective platform moderation. We argue that automated systems are circumscribed in their ability to oversee the ‘risk’ and ‘safety’ concerns raised by mental health content, and are often limited to a reactive logic of risk-response. They can, however, be improved by embedding an adaptive logic of care that leverages lived experience and expertise and is more attuned to individuals’ needs and the nuances of mental health issues, while still operating at scale.

The following section elaborates on the human–machine alignment problem and draws together existing research to examine the difficulties facing human versus automated content moderation practices in the context of mental health. We then draw attention to the specific issues that mental health and illness raise for those undertaking the work of community moderation, whether for the purpose of commercial content moderation or non-profit and volunteer peer moderation. The Analysis and Discussion sections focus on how that alignment and integration takes place and the implications for improving human–machine moderation through what we refer to as an adaptive logic of care.

Mental health content moderation and the human–machine alignment problem

Social media platforms tend to treat mental health content as problematic, using terms like ‘borderline’ or ‘low quality’ to describe content that may not quite breach standards but raises concerns (Heldt, 2020). Concerns mostly target the potential to trigger trauma, self-harm or suicide (Gillespie, 2018). Hence for commercial platforms, there has been a strong focus on controlling and banning accounts or images of self-harm, including disordered eating and suicidal ideation (Gillespie, 2018). With mental health, the lines are often blurred between expressions of distress and distressing content. When expressions of mental health and ill-health are moderated on Facebook, for example, they are either blocked through techniques like hashtag bans (McCosker and Gerrard, 2021) or attract ‘thin self-regulation’ measures such as automated notifications and connections to national support organisations (Medzini, 2022). The varied and often ineffectual responses underline the difficult position commercial platforms are in when dealing with mental health content.

Mental health content tends to challenge moderation practices and tools because it involves balancing potential harms against the known benefits of giving voice to people experiencing mental ill-health and those seeking and receiving social support outside of often stretched health services (McCosker, 2018; Gerrard, 2020; Hendry, 2020; Naslund et al., 2016). As mental health services become ‘platformed’ (Van Dijck and Poell, 2016)—exploring techniques for operating digitally—it is important to consider the values, goals and practices they bring to digital care. While commercial platforms have consulted mental health expertise to help improve approaches and policies, there is much to learn from digital mental health service providers in providing online support (Roland et al., 2020). Content moderators and platform managers need guidance in how to address challenging mental health content, especially as those roles continue towards professionalisation. Priority, however, has been given to political interference, misinformation, harassment and hate speech and other forms of more explicit abuse with solutions at scale still seemingly a long way off (Suzor, 2019; Gillespie, 2020).

Critical accounts of ‘commercial content moderation’ (Roberts, 2019) note the challenges for platforms in establishing the right balance of intervention and free expression. Grimmelmann (2015) refers to the long history of content moderation on Internet forums and defines it as the set of ‘governance mechanisms that structure participation in a community to facilitate cooperation and prevent abuse’ (p. 6). A more nuanced definition involves:

the detection of, assessment of, and interventions taken on content or behaviour deemed unacceptable by platforms or other information intermediaries, including the rules they impose, the human labour and technologies required, and the institutional mechanisms of adjudication, enforcement, and appeal that support it (Gillespie et al., 2020).

This growing body of research emphasises the challenges in the often-invisible labour and emotional toll of content moderation (Roberts, 2019), the political economy of platform policies and governance (Grimmelmann, 2015) and the socio-technical processes and practices that entwine human and machine value systems in the work of moderation (Gorwa et al., 2020; Jhaver et al., 2019; Ruckenstein and Turunen, 2020). Gillespie et al. (2020) suggest expanding content moderation research to improve the professional field of content moderation. This goal can feel at odds with a growing emphasis on automation.

Automated moderation systems, sometimes referred to as ‘algorithmic moderation’ (Gorwa et al., 2020), are built on a range of artificial intelligence (AI) or machine learning and natural language processing techniques and have been considered solutions to issues of scale and the challenges in decision-making posed by ‘problematic’ content. These systems are often criticised as being in a constant state of co-production (Govia, 2020), an ‘imperfect solution’ (Gillespie, 2018: 97), but essential to managing the safety of platform users at all scales. Approaches to the field of ‘integrity’—a term championed by platforms for its more virtuous connotations (see Halevy et al., 2022)—tend towards designing technical solutions to unwanted platform activity. Gorwa et al. (2020) argue that while it fills this role of accommodating platform scale, algorithmic moderation can ‘decrease decisional transparency’ and obscure or decrease accountability for the values and politics that are built into those systems (p. 3). Transparency is of particular concern when platforms use algorithmic ‘reduction’ of the visibility of certain types of content it deems problematic but not enough to ban (Gillespie, 2022). In addition, there may be limits to technical solutions when they ignore the lessons, insights and learning offered by human moderators, or are static rather than dynamic and adaptable in decision-making.

The core issue here has been described by Brian Christian as an ‘alignment problem’ facing machine learning and automated decision-making. While automated systems continue to improve in their ability to perform certain tasks and make decisions autonomously, the question of alignment is about ensuring that those systems do what we want and need them to do. Christian (2020) considers whether it is possible for such systems to ‘capture our norms and values, understand what we mean or intend, and above all what we want’ (p. 8). It is worth noting that his arguments and cases do not always consider the diversity of intentions and needs behind the ‘we’ and the ‘our’. Nonetheless, many current systems are at odds with human intentions, mainly because it is difficult to embed values and intentions within those automated systems, particularly when people are not always clear about what needs to be considered when making autonomous decisions. These are not merely technical issues to be resolved through better machine learning models or datasets.

In the case of automated moderation of mental health-related content, the issue is also how to enhance the way moderation teams act with and in relation to the risk-orientation embedded in automated moderation systems. This is also of concern in fields such as human–computer interaction, where human involvement in AI systems design is seen as essential to ‘ensure that the insights being derived are practical, and the systems built are meaningful and relatable to those who need them’ (Inkpen et al., 2019: 3).

Overall, then, the conflict between trigger risk and support needs makes it difficult for moderation practices and tools to handle mental health content effectively. Current research suggests the need to expand the understanding of the labour and practices involved in mental health moderation, including the use and integration of automated systems. We explore possibilities for achieving this by adopting an ‘adaptive logic of care’, an approach we elaborate below and examine through our data analysis.

Conceptual approach: an adaptive logic of care in content moderation

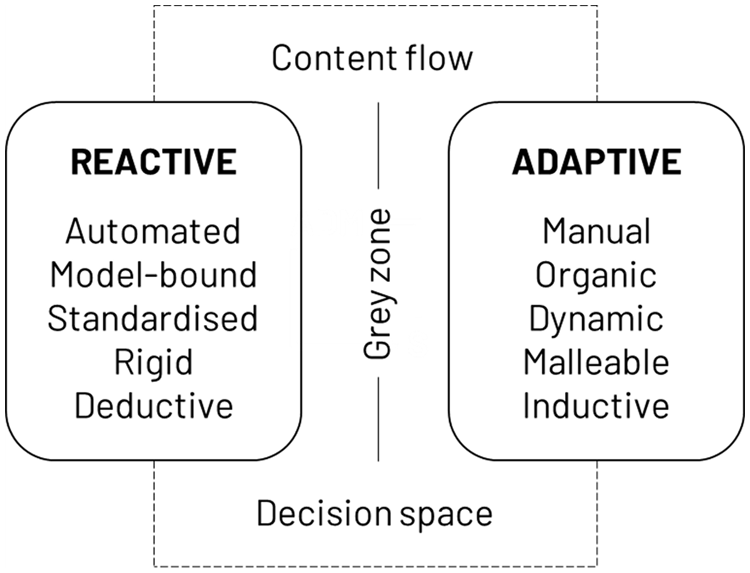

Ruckenstein and Turunen (2020) draw on the contrasting ‘logic of choice’ and ‘logic of care’ as conceptualised by Mol (2008) to explore the way content moderation professionals perceive and perform their moderation practices. Describing the ambiguity that moderators have to attend to in their work, Ruckenstein and Turunen (2020) argue that a logic of care ‘is an ongoing process, aimed at maintaining and improving the overall platform culture’, dealing not only with illicit and offensive material, spam, advertising, soliciting, but ‘all kinds of “mess” and “disorder” in the platforms’, including content out of place or containing private information and otherwise threatening safety (pp. 1031–1032). The idea of a logic of care underpinning moderation practices acknowledges the socio-technical entanglements and the relational component of the work of co-producing moderation. It recognises that moderation goes beyond binary decision-making in determining the value or risk of content and interactions. However, there is a perceived disconnect between that work and the increasingly automated processes that are predicated on a different logic of control and reactive choice (Ruckenstein and Turunen, 2020: 1038). We extend these ideas to consider reactive and adaptive logics in the decision space of content moderation (Figure 1).

Figure 1. Mental health content flow within a reactive and adaptive decision space.

The goal of ‘rehumanising’ discussion platforms, as Ruckenstein and Turunen (2020) put it, requires commitment to a logic of care that does not always mesh with the reactive logic of algorithmic decision systems. While human moderation can be very ‘machine-like’ (Carmi, 2019: 450), when the goals of a particular platform are predicated on supporting wellbeing and allowing space for sensitive discussion of topics, there must be capacity for adaptation and adjustment. However, more work is needed to establish how to ‘encode’ a logic of care into the human–machine moderation assemblage.

Mental health services providing platformed support have recourse to a range of care-oriented goals that distinguish them from commercial platforms. Their aims are to support ‘resilience’, ‘recovery’, ‘self-efficacy’ and similar strength-based individual and community approaches to addressing ongoing mental ill-health outside of clinical settings. These goals set the groundwork for what we refer to in this article as an adaptive logic of care in moderation practices and discussion platform management. Resilience is broadly understood across several disciplines as an individual and collective capability to adapt in the face of adversity, trauma, tragedy, threats or even significant sources of stress. Resilient individuals and communities can draw on personal and collective psychosocial resources to manage and adapt to everyday challenges exacerbated by mental ill-health (Berkes and Ross, 2013). As part of a strengths-based logic of care, resilience factors present in peer-support forums involve learning and access to new knowledge, belonging and social capital through access to trusted and supportive networks, resources for improving self-efficacy and adaptive capacity (Kang et al., 2022; Steiner et al., 2023). Questions remain as to how to foster these resources within discussion platforms and support groups, and how they are realised across the wider digital mental health ecosystem through the work that professional and peer moderators do to stimulate cycles of sharing, support, feedback and learning (McCosker, 2017a; McCosker and Hartup, 2018).

Research context

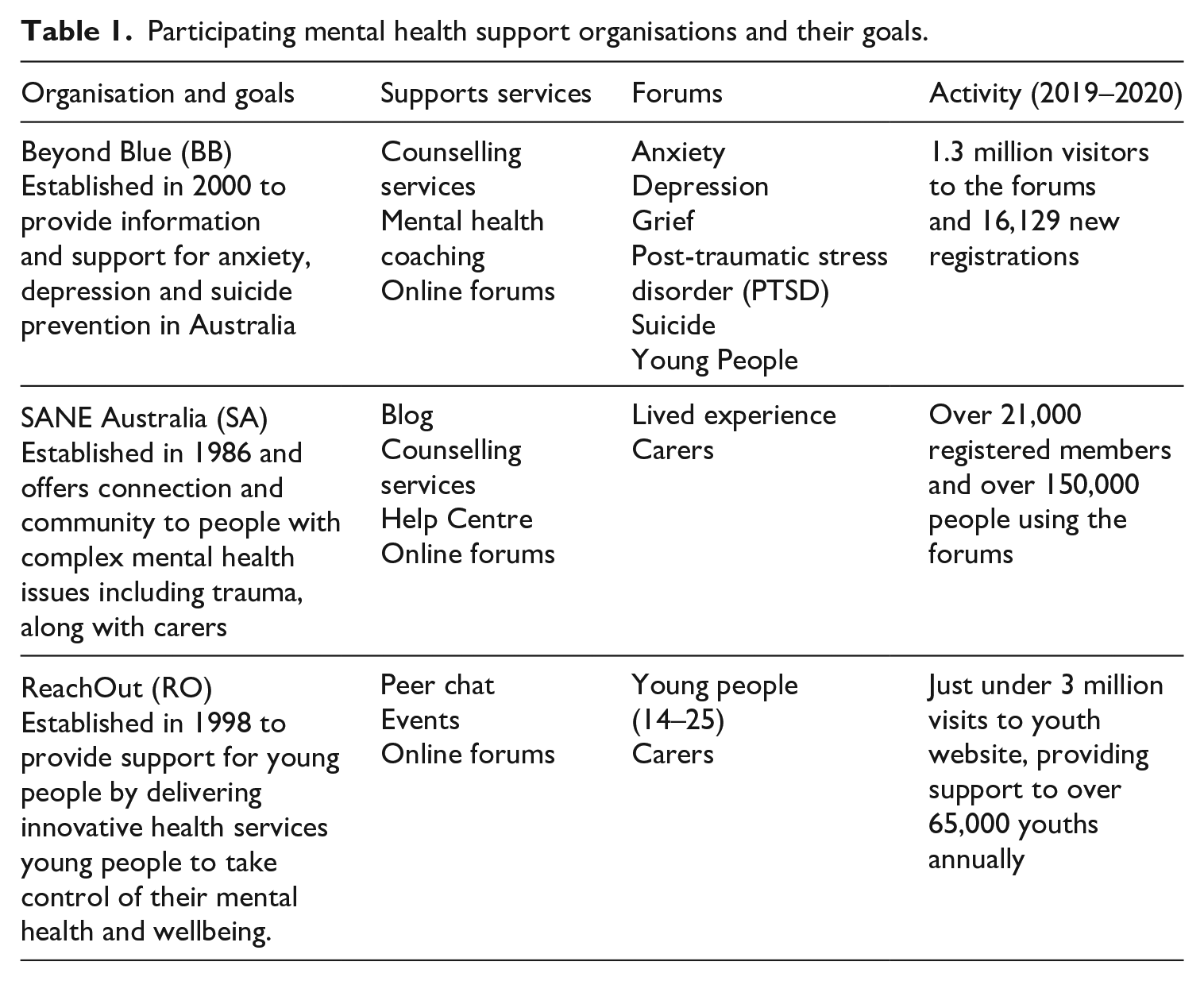

The three non-profit organisations featured here operate a range of face-to-face and digital mental health support services. Their integrated service framework includes online forums, self-directed community-based support, phone line services, one-to-one peer work and counselling, and arts and social groups (Table 1). Each grew out of a need to provide additional community-based mental health support outside of the tertiary and primary care systems, and each has been funded by a mix of charitable donations and government funding. Beyond Blue focuses on depression, anxiety and suicide prevention, SANE on complex and intersecting mental health issues, and ReachOut on mental health support for young people. ReachOut was founded through a community fundraising campaign hosted by the youth radio station Triple J and restricts membership of its forums to young people between 14 and 25 years old, with a separate forum for carers. Despite these differences, the three organisations often work together, and at the time of writing, SANE and ReachOut were in the process of collaborating on shared support forum infrastructure and moderation.

Table 1. Participating mental health support organisations and their goals.

There are varying moderation and management roles across these organisations, and substantial movement of personnel between the different organisations or in similar roles supporting other healthcare organisations. We also note variation in the availability and use of clinical support staff, paid moderators, community managers, use of outsourced overnight moderation services, appointment of volunteer moderators or related roles named variously as community guides and champions.

While the organisations continue to use the term ‘support forums’, these can be more accurately defined as discussion platforms, as they have undergone gradual shifts in technological architecture and now all run on the Khoros platform (khoros.com), which allows for new features. ReachOut was an early adopter of machine learning as a support for platform moderation through a bespoke automated ‘triage’ system, and this system was subsequently installed at SANE. This differs from the flatter, keyword-based risk filtering used by Beyond Blue. Where the latter sees moderators intervene as soon as risk is flagged through filtering, the triage system differentiates three levels of risk and continues to learn. Moderators are also involved in adjusting its parameters and inputs, continually training the system to better support their moderation practices.
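The contrast between the two approaches can be illustrated with a minimal sketch. It is not a description of either organisation's actual system, whose internals are not documented here. The keyword list, the per-term weights (a simple stand-in for a learned model), the thresholds and all names such as `keyword_flag`, `Triage` and `moderator_feedback` are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass
from enum import Enum

# Hypothetical keyword list; the services' real lists are not described in the text.
RISK_KEYWORDS = {"hopeless", "self-harm", "suicide"}


class TriageLevel(Enum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


def keyword_flag(post: str) -> bool:
    """Flat filter: any keyword match flags the post for moderator attention."""
    text = post.lower()
    return any(keyword in text for keyword in RISK_KEYWORDS)


@dataclass
class Triage:
    """Three-level triage using per-term weights as a stand-in for a learned model."""
    weights: dict[str, float]   # hypothetical term weights
    medium_at: float = 1.0      # assumed threshold for MEDIUM
    high_at: float = 2.0        # assumed threshold for HIGH

    def score(self, post: str) -> float:
        text = post.lower()
        return sum(w for term, w in self.weights.items() if term in text)

    def level(self, post: str) -> TriageLevel:
        s = self.score(post)
        if s >= self.high_at:
            return TriageLevel.HIGH
        if s >= self.medium_at:
            return TriageLevel.MEDIUM
        return TriageLevel.LOW

    def moderator_feedback(self, term: str, delta: float) -> None:
        """A moderator raises or lowers a term's weight after reviewing a decision."""
        self.weights[term] = max(0.0, self.weights.get(term, 0.0) + delta)


post = "I felt hopeless last year but I am doing better now"
triage = Triage(weights={"hopeless": 1.0, "suicide": 2.0})
flagged = keyword_flag(post)          # the flat filter flags on the single word
before = triage.level(post)           # graded response under the initial weights
triage.moderator_feedback("hopeless", -0.5)
after = triage.level(post)            # graded response after moderator adjustment
```

In this sketch the flat filter treats every match identically, whereas the graded system returns one of three levels and can be shifted by moderator input. That feedback loop is the feature the adaptive practices discussed in this article depend on.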

Methods

In Australia, non-profit organisations that host mental health forums prohibit research without the forum managers’ consent and oversight, and require direct consent when research is conducted with forum members or moderators (Kamstra et al., 2022). The three non-profit organisations are partners on an Australian Research Council funded project and were instrumental in establishing consent for the study, recruiting the appropriate participants from their organisation, and communicating the research with their community members, in conjunction with Swinburne University ethics committee approval. This approval covered data collection through interviews and workshops, with participant consent sign-off.

We conducted in-depth interviews in 2020 with seven forum community moderators and managers across three partner organisations to better understand the programme logic underpinning the forums and to explore their moderation practices. An online workshop was then held in 2022 with four forum community moderators and managers across the three partner organisations (two from ReachOut and one each from SANE and Beyond Blue) to follow up and expand the research, with two new staff filling old positions (total n = 9 informants). These participants represent the core moderator and community management teams for the three organisations, responsible for coordinating larger groups of paid and peer moderators. Hence, we refer to the study participants as informants with regard to their specialised roles, knowledge and experience in the research context, and in their role as conduits and ethical guides for the research (Field-Springer, 2017).

Interviews and workshops covered a wide range of issues relating to the purpose and goals of the discussion platforms, their place within an integrated mental health support service ecosystem, and how they were moderated. The workshop was designed to be more informal to encourage dialogue between the organisations, allowing for open and free flowing conversation where ideas could be shared in an online space (Ørngreen and Levinsen, 2017). The workshop explored questions concerning the role the discussion forums play for each service provider, how moderation works to achieve their goals and challenges and areas for improvement. As a cross-organisational discussion, the workshop allowed deeper reflection on how moderation practices took place, the logic and goals of those practices, and both shared and contrasting experiences.

Document analysis also played a part in triangulating the account of moderation, albeit with the focus more on moderator systems and practices. We refer to the community guidelines published on each organisation’s website and made available to new platform members, and these supported our understanding of the ‘social facts’ (Bowen, 2009), or ‘stable orientations’ (Gillespie, 2018: 72) around which moderation takes place. Analysis of the combined data set involved a first-pass open coding process to establish central and outlying themes, with two researchers iterating codes and ensuring inter-coder agreement. Our findings and discussion focus on the part of the discussions that homed in on the relationship between human and machine moderation practices. We took an abductive approach (Timmermans and Tavory, 2012), building on the logic of care theory described above and accounting for the alignment problem noted in the literature. The themes arrived at emphasise those aspects of how our informants understood the human–machine interactions as part of their broader efforts to manage their discussion platforms as safe and helpful spaces.

Findings

Automated moderation was positioned generally as an essential ‘first line of defence’ tool or aid in a wider set of moderation practices and processes. Platform managers explained the value of the automated systems in relation to the weighing of risk against community guidelines and other less explicit reference points for making moderation decisions. The systems worked well when they took care of actions that needed to be done but not necessarily by people. Automation is understood to ‘assist with prioritising risk, flagging guideline breaches, collating data, and supporting the community to manage response times’ (Erika, RO, interview). However, our informants insisted that some tasks, like responding directly to individuals or conducting risk assessments and referrals, should be done by people with experience and expertise.

It became clear that human moderators, managers or peer workers and the automated systems that assisted their moderation efforts, weighed decisions against a complex and essentially tacit ‘risk matrix’. This sense of weighing risk when deciding about intervention comes from an underlying sense of care (explained to us as a ‘duty of care’) and distinguishes the moderating teams’ evolving expertise built on experience over time. As we explain further below, the risk matrix is both rigid—algorithmic, rule-bound through stated guidelines—and fluid—an intuitive or adjusted judgement or response to content—and these responses shape moderation actions in ways that can be both reactive and adaptive.

Goals, intentions and guidelines

Using common Internet and social media metaphors and reference points, informants refer to the discussion platforms, sometimes in direct contrast with Facebook and other platforms, as a ‘safe space where people can write about what they’re going through and receive really important support from people that understand them’ (Marcia, BB, interview). They contrast this with what one community manager described as ‘the cluster mess of using Facebook, using TikTok, using any of these other social media platforms’ built on ‘profit making beyond anything else’ (Guido, BB, workshop). The discussion platforms serve as a way of decreasing isolation, ‘providing that immediate relief from their emotional load’ (Mirjam, RO, interview), and recouping a more functional sense of ‘community’ and ‘belonging’ (Marcia, BB, interview). As we have shown elsewhere, the sense of belonging promoted here is foundational to the aim of enabling social capital and self-efficacy (Kang et al., 2022; Steiner et al., 2023). The goal is that this ‘creates this well of advice and support and wraps around them’ in ways that differ from ‘bricks and mortar standalone appointment type systems’ (Pimm, BB, interview).

A tacit set of resilience factors informs the kinds of behaviours and content that forum managers and moderators want to see and subsequently co-produce, with users, on discussion platforms. Enabling members to ‘learn from others’ and build ‘self-efficacy’ is seen as a pre-cursor to developing ‘adaptability’, or ‘learning to develop the skills to adapt independently moving forward’ (Piia, SA, interview). By increasing their users’ ‘self-advocacy’, ‘their knowledge and their readiness to take a more intensive step’, moderators see discussion platforms as enablers of resilience and recovery (Mirjam, RO, interview). By helping to develop young people’s self-efficacy, the ReachOut platform builds:

the language and the confidence to actually make changes in their life or make lifestyle changes; and, also, the support from peers to pick themselves up and keep going when things go, don’t go to plan or when they’re finding things tough. (Mirjam, RO, interview)

These goals reflect a logic of care (Mol, 2008; Ruckenstein and Turunen, 2020), and tie in with a set of frameworks or programme logics that guide the organisations’ work and are linked to government and philanthropic funding and reporting.

While each service has processes in place to manage a duty of care, particularly around suicidal ideation and what they refer to broadly as ‘high-risk posting behaviour’, as one community manager puts it: ‘we do make it very clear that the forum [discussion platforms] is not a crisis service, and it’s not entirely appropriate for the people that do need immediate crisis [support]’ (Piia, SA, interview). When they talked of their ‘duty of care’, this was weighed against the goal of self-organisation or, as one community manager put it, ‘creating a peer-to-peer space where actually, everyone else is doing the talking, and we’re not having to do a lot of the heavy lifting’ (Guido, BB, workshop).

The overarching values, intentions and goals are in some ways reflected in the codified (Gillespie, 2018: 46) set of guidelines or community standards that are available to discussion platform users and referred to by moderators when communicating about decisions to remove content. Facebook, like other dominant platforms, embeds commercial interests, foregrounding authenticity, privacy, safety and dignity, and focuses moderation efforts on violence and criminal behaviour, safety (including suicide and self-injury), ‘obscenity’, integrity or authenticity, and intellectual property. By contrast, the mental health discussion platforms ask users to remain supportive, respectful, empowering, safe and friendly, noting the kind of material likely to be removed and the responses that can be expected for problematic posts. ReachOut includes advice on ‘how to talk about self-harm or suicide’, and requests ‘trigger warnings for any topics that may be distressing for other members by adding “TW” to the title of your post’. 1 SANE foregrounds safety, respect and anonymity, defining safety as a concern for the poster and others: ‘although we cannot account for every person’s trigger and trauma response, the guidelines above aim protect the community against common triggers for people with a lived experience of complex mental health issues and trauma’. 2

Driven more by a logic of care, these community guidelines embed certain values into moderation. Having guidelines is about the importance and ‘value of having a set expectation about how things work, especially from a duty of care perspective of a certain post needs to be removed or edited’ (Brandy, RO, workshop). However, as we show later, responses are continuously negotiated through adaptive moderation practices and real-time judgements about individuals’ and the community’s needs.

Moderation routines and adaptive practice

Given the strengths-based and community-building high-level goals referred to above, it was notable that moderators and managers focus their daily processes on addressing ‘risky’ content identified by the automated triage systems or by outsourced overnight professional moderation teams. Indeed, the triage systems contribute to structuring the routine tasks moderators and managers must attend to, as reported in a study by Jhaver et al. (2019: 23). However, the influence moves in both directions, and we found decision-making to be collective and distributed.

A community manager from Beyond Blue notes the importance of the regular handover processes to the evening team and then back again in the morning. Typically, this would involve identifying individuals who are showing distress in the way they are posting and monitoring and acting to address concerns:

The focus is really on users that we can see who are needing a close eye active management. We’ve got the ability to auto hold all of their posts so that every post from that user gets reviewed, just to help us kind of slow down the pace of a really distressed person who is kind of scatter-gunning, in the way they’re trying to communicate (Guido, BB, workshop).

They would then hold a ‘community of practice session’ each day, trying to ‘work through those people obviously having a difficult time and how we can support them’. This process aims to assess ‘what will be the point where we have to escalate them and stop them from posting full stop, because they’re just not in a well place, and [decide that] it’s not a space that’s working for them’ (Guido, BB, workshop). Others describe the regular check-ins as ‘reflective practice’. In the case of SANE, this involves both collaboration and space for reflection:

Ensuring that they have time together to reflect on the various ways that we do peer-work here . . . Every fortnight we have a look at the high-risk cases that are going on and review what plans we have in place to manage those look at the data or the trends. (Kara, SA, interview)

The emphasis on collective reflexive practice highlights the daily labour of the moderation team in addressing the indeterminacy of the content and behaviours on the forums, with and alongside automated moderation processes. Moderation is not just a matter of calculating risk in relation to the mental health and wellbeing of discussion platform users. Or rather, the goals and intentions of moderation do not end with ‘high-risk’ cases. Decisions are constantly made based on ongoing exchanges.

One of the criticisms of the automated moderation system was that it ‘can be black and white, and unable to factor in conflicting information or later edits made to posts’ (Erika, RO, interview). Moderation strategy can also change over time and involve more subtle decisions to which complex algorithms are difficult to adapt. One community manager relays an issue of common concern, relating more to the ‘quality’ of content:

we had a lot of members that were writing these long-term social posts, weren’t using the forums in the way that they’re designed to be used. It was a lot more social posting, and they generated a lot of conversation, like, one-word discussions, sort of silly banter, that was just not appropriate for our forums. And so, we sort of modified our guidelines to remove those discussions. (Marcia, BB, interview)

These posts would not be identified as a risk through the automated moderation system, but they ran up against the Guidelines as inappropriate for the overall goals and intentions of the forum.

There are similar areas of uncertainty across the three organisations but also differences, particularly in whether they allow ‘personal threads’ and ‘venting’ (deep self-expression and working through of trauma or experiences, rather than discussion and interaction). Kara, who moved from a position at ReachOut to SANE, points to differences in policy, where ReachOut doesn’t allow ‘diary threads’ and would ‘discourage people from going back to a thread that would become just their place to vent, time and time again’; whereas with the older cohort at SANE ‘people could have their spaces, and I think that’s a moderation decision’ (Kara, SA, workshop). The community manager at Beyond Blue explained that, before the pandemic and the 2020 black summer wildfires across large parts of South-East Australia, they aimed to keep conversations ‘constructive’ and focused on mental health issues. After those events, she recognised the need to relay anxieties and vent as a response to global events: ‘so we had to adapt how we moderated the forums’ (Marcia, BB, interview). Differences in decision-making around issues such as these are determined collectively within each organisation, and wider policy changes are only made after deliberation. The different approaches reflect established practices and a tradition of decisions made at each organisation. Kara, as noted above, was able to comment on these policy differences, and could help shape changes due to her experience across the two platforms.

Whether responding to automatically flagged content or working through the grey areas of community policy, moderators are drawing on experience and knowledge that allows them to accommodate users in a more ad hoc and adaptive fashion. One community manager talked about how their team’s responses to flagged content are based on training and intuition, while referring to established responses through a ‘reference guide’:

All of the team have some sort of social work or kind of frontline support work experience, so they’ve come with some you know assumed experience that. But then we also have a look at the reference guide in terms of how to how to respond like what are we looking for, or what a young people looking for when we’re responding. (Li, RO, interview)

Judgements are made based on that training and experiential expertise. This might involve considering ‘protective factors, and then what is that person going to need, like what’s actually going to be most beneficial for them’ (Li, RO, interview). Informants noted the need to cultivate a sense of ownership and responsibility (Kara, SA, workshop), and the importance of ‘empathic facilitation’ (Guido, BB, workshop). Moderation practices, then, are distributed across a range of different professional or peer-worker and voluntary moderator roles, routines and actions. But to what extent can these roles and activities be automated, aligned with or integrated into an automated moderation system?

Automating an elusive risk matrix

It is not easy to pin down the role that automation plays in applying community guidelines and policies. Informants described a loose division of decision-making, with the automated systems tending to work in an initial hierarchical and reactive mode to help classify, sort and direct attention. Here we step through core issues raised regarding scale, the allocation of risk and human moderator responses.

As with commercial platforms, moderation practices at our partner organisations are layered. With the ReachOut system, an automation layer built on a supervised machine learning classifier is trained on ‘data from the community’, as a community manager puts it, to rank messages as green, amber, red or crisis depending on the urgency of attention required (for a technical account see Calvo et al., 2017; Milne et al., 2019). Structured and conceptualised like a hospital emergency ‘triage system’, the tool addresses content scale by augmenting human moderation. In one platform manager’s words, ‘it allocates risk ratings for every post that enters the community so the team can intervene with a risk assessment’ (Erika, RO, interview). A member of the SANE team estimates that around ‘60% of posts get the green tick by the triage platform’, leaving about 40% flagged for different levels of review depending on a ‘risk calculation’. She notes that this ‘really helps when you’re trying to moderate 2000 or 3000 posts a week’ (Kara, SA, workshop).
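To make the triage logic described above more concrete, the following is a minimal illustrative sketch in Python. It is not the ReachOut or SANE tool: the names `Risk`, `Post`, `review_queue` and `green_share` are hypothetical, and the risk labels are supplied by hand rather than produced by a trained classifier. It shows only the general idea of passing ‘green’ posts without review and ordering the remainder by urgency for human moderators.

```python
from dataclasses import dataclass
from enum import IntEnum


class Risk(IntEnum):
    """Hypothetical urgency levels, echoing the green/amber/red/crisis ranking."""
    GREEN = 0
    AMBER = 1
    RED = 2
    CRISIS = 3


@dataclass
class Post:
    post_id: int
    text: str
    risk: Risk  # assumed to be assigned upstream by an automated classifier


def review_queue(posts):
    """Return flagged posts, most urgent first; green posts are not queued."""
    flagged = [p for p in posts if p.risk > Risk.GREEN]
    # sorted() is stable, so posts with equal risk keep their arrival order.
    return sorted(flagged, key=lambda p: p.risk, reverse=True)


def green_share(posts):
    """Proportion of posts that pass without human review."""
    return sum(p.risk == Risk.GREEN for p in posts) / len(posts)


# Hand-labelled example data (an assumption for illustration only).
posts = [
    Post(1, "Thanks everyone for the support", Risk.GREEN),
    Post(2, "Having a rough week", Risk.AMBER),
    Post(3, "I don't feel safe tonight", Risk.CRISIS),
    Post(4, "Welcome to the forum!", Risk.GREEN),
    Post(5, "Good luck with your appointment", Risk.GREEN),
]

queue = review_queue(posts)
```

In a sketch like this, the queue only directs attention. The decision about whether to respond, escalate or leave a post alone remains with the human moderator, which is the point our informants stress.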

Making decisions about risk and the required action, then, is distributed. One informant hesitated around this distribution: ‘A lot of it is, some of it is inbuilt into the platform’.

We use a platform called Khoros [with a] software add-on that will triage posts. Those [posts] that . . . present with some form of risk will be flagged with us to prioritise or assess—us as in the moderator. The moderator will then engage with that software and follow the prompts to determine whether any sort of follow up or any actions need to be taken to support that member. (Piia, SA, interview)

The slight hesitation about the balance points to the emergent or ‘tricky’ lines between automated and human decision-making in moderation. The differing roles are given a hierarchy—the moderator will ‘follow the prompts’, suggesting a temporal staging, but with the moderator ‘stepping in’ to decide whether to escalate, respond or otherwise act.

This differs in some ways from the Beyond Blue process, where the system and moderators weigh keywords or content against a ‘risk matrix’:

We’re just there to step in when we need to. And the way we do that is by assessing it against a risk matrix. We have a bespoke moderation dashboard that really, really aids us with our moderation. It prioritises work for us by risk, and then we have, you know, guidelines in place that assists us in decision making. (Marcia, BB, interview)

She explains that after making its calculations, the system ‘will bring a particular post with particular levels of risk to the attention of a moderator’. Decision-making wavers between system and human moderators as well as clinical experts, who can bring their expertise to the process. However, there is also a clear sense that there are limitations in the way each of the automated systems can respond.

The systems do not work well with ‘ambiguity or ambivalence’ (Erika, RO, interview), and the RO triage system is ‘difficult to modify quickly or easily in response to evolving demands or needs’ (Erika, RO, interview). Similarly, what ‘counts’ as ‘high-risk’ and ‘lower-risk’ is not always clear-cut. A member of the RO team emphasises that:

Our moderators are also assessing and managing risk. So we’re not escalating it, it stays at that moderator level. But I think that the boundaries in moderating are really important. And I think that it’s tricky. It’s tricky to find that balance. (Erika, RO, workshop)

While automated moderation systems here are understood to ‘support the team’s ongoing interventions’, they ‘aren’t perfect and do require regular human intervention’ (Erika, RO, interview). Ultimately, it is the degree of adaptability that is seen as countering the reactive actions of automated moderation systems and adaptability is central to what human moderators offer in managing the platforms. Put another way, much of the work of platform managers and moderators is checking to make sure flagged content is a risk needing intervention. With the machine learning models (RO and SA), adjustments can be made so that the system continues to learn in line with the moderator team’s values and goals. This oversight labour can be understood as part of the co-productive relationship between human and automated moderation processes, but also signals the potential for misalignment. As one community manager puts it, there is no substitute for the expertise that comes from lived experience. Peer moderators bring to the process ‘a different form of expertise—one that’s informed by lived experience’ and enact ‘mutuality, reciprocity, hope and empathy’, understood as the exclusive domain of ‘human-to-human connection’ (Erika, RO, interview).

Discussion: towards adaptive moderation and abductive feedback loops

Current research and scholarship on content moderation has sought to understand the social and political tensions that are affecting dominant commercial platforms such as Facebook and Twitter. Part of that work examines the crucial role of content moderators as ‘custodians’, managing user-generated content in relation to often contested community standards and content rules (Gillespie, 2018; Klonick, 2017; Roberts, 2019). Our findings in this study contribute to the more expansive goal for content moderation research sought by Gillespie et al. (2020) by building on the professional expertise of non-profit moderators managing complex discussion content dealing with mental health and illness. Such work can enrich the social functioning of platforms and help to build collective best practices to foster supportive spaces for mental health help-seeking. While Gorwa et al. (2020) see a shift away from ‘traditional practices of community moderation’ towards commercial and algorithmic moderation, our findings point overwhelmingly to the need for collaboration and strategic interaction between these alternatives and greater recognition of the human labour and expertise needed to ensure algorithmic outputs are useful. This aligns with the growing professionalism and expertise in online community management (McCosker, 2017b; Bossio et al., 2020).

Our research question asked how moderators and managers balance their resilience-oriented goals for the platform against the ‘programmed risks’ of automated moderation. We saw that consideration of higher-risk content among our partner organisations is both a collaborative and reflexive process, with differences across the three organisations in large part based on the differing goals, strategy and traditions for managing risk and care in relation to their unique userbase. Decision-making is distributed as the automated system flags content and human moderators weigh responses against an opaque and dynamic ‘risk matrix’. We saw this decision space as a mix of both rigid and fluid experience-based templates or schemas for intervention, to some degree assessed against guidelines, but also through the lens of professional and lived experience.

The automated assignment of content as risky, while far from perfect, is also understood as helpful to the overarching goals of managing digital mental health services and providing a ‘duty of care’. This is a different kind of co-production to what Govia (2020) observed within programmers’ AI production methods. Rather, this is about how human moderators work with the AI and adjust its parameters of operation. While the flux in decision-making may suggest that automated moderation is an ‘imperfect solution’ (Gillespie, 2018: 97), we argue that it is an important part of an adaptive human–machine system that should be fostered. Decision negotiations constantly happen across and between human and automated processes through routines and reflexive practice, or adjustments to system parameters. These are opportunities for ‘alignment’ in line with Christian’s (2020) arguments about the way AI struggles to perform in ways that meet the goals and values of the human contexts in which it operates. While moderators were clear about the need for automation, to maintain a logic of care they combined this with experience, intuition and learning, making constant adjustments to rigid lines of decision-making often in response to emergent issues or individual needs. We saw this, for example, in deliberation about allowing ‘venting’, particularly in times of natural disaster or extended COVID lockdowns. Adjustments can be made in this vein to the parameters of the automated system to incorporate future such events.

For Ruckenstein and Turunen (2020), a logic of care is an ideal mode that can be drawn on to re-humanise discussion platforms and overcome the growing reliance on rule-based machine enforcement. However, in our analysis, we see in the work of specialist mental health moderators and community managers a more continuous interaction between automated triage systems and human moderation practices, reacting to content daily but with many positive adaptive attributes that shape discussion activity as they work to maintain the platforms as spaces of care. Effective moderation is not just about rehumanising algorithmic systems, but also making them adaptive while augmenting human moderation through fine-tuned reactive systems. This was evident in the daily collaborative deliberation over difficult cases drawing on a range of experiences, knowledge and learned expertise in making decisions. This matches, we argue, ‘abductive’ modes of decision-making, something that so far eludes machine learning according to Larson (2021). Abductive reasoning happens between the rule and the new experience, drawing on knowledge, expertise, existing theories or strategic goals. It is based on conjecture and lived experience, like our informants’ intuitive and adaptive responses to the more ambiguous content, or activity inviting ‘mutuality, reciprocity, hope and empathy’ (Erika, RO, interview). Therefore, effective design and use of automated moderation systems in complex contexts such as mental health discussion requires ‘complementary human-centered insights that go beyond aggregated assessments and inferences to ones that factor in individuals’ differences, demands, values, expectations, and preferences’ (Inkpen et al., 2019: 3).

These practices can be distilled to help improve human–machine moderation interaction in ways that enable resilience through an adaptive logic of care. Effective moderation balances and assigns roles to reactive and adaptive decision types, as we set out in Figure 1. The dynamic depicted in Figure 1 helps to make explicit the interaction between adaptive and reactive practices in moderation scenarios, noting a set of benefits and payoffs when addressing uncertainty or ‘grey zone’ decisions and responses. This is not a binary allocation between automated reaction and human adaptive judgement; it is not that reactive moderation is negative or restrictive while adaptive moderation is positive and more effective. Rather, both modes of decision-making are required as an interactive loop. While improving mental health moderation is work that can be done within specific organisational and platform contexts, we emphasise three core steps to guide such work: (1) create moderation conditions and processes that can directly and explicitly integrate publicly stated organisational goals and guidelines; (2) ensure moderation routines, including both the interactions among professional or volunteer peer moderators and the parameters of automated systems, allow for reflexivity and adaptability; and (3) systematise adaptability as both a human and machinic logic of care to generate constant co-produced improvement (or alignment) in mental health moderation.

As the size and complexity of moderation grows, it is not simply brute force that is needed through ‘better’ or more accurate automated systems (Gillespie, 2018). Integrated and adaptive moderation, rather, draws on and adjusts to circumstances based on moderators’ lived experience and expertise aligned with dynamic goals and guidelines. There are implications for mental health moderation on large commercial platforms where, counter to recent moves to reduce staff working in safety and integrity, moderation teams and practices are needed that can integrate mental health expertise and lived experience to better adapt and adjust algorithmic systems in alignment with positive mental health interactions and outcomes.

Conclusion

In this article, we have argued that an integrated ‘adaptive logic of care’ can help manage the interaction between human and machine moderators as they address a tacit ‘risk matrix’ when dealing with sensitive mental health content. Automated moderation systems react to certain inputs via a set of coded values attributed to the intentions behind different kinds of content. They tend to apply a trained model to identify and remove or flag content deemed inappropriate (Gorwa et al., 2020; Jhaver et al., 2019). As Gorwa et al. (2020) note, automated moderation systems are not all built or used in the same way, and have ‘varying affordances, and thus differing policy impact’ (p. 2). When ‘integrity’ and content moderation systems are trained primarily to find and restrict content designated as risky and harmful, they provide an incomplete response to mental health interaction and content.

Our findings have wider implications for the work of content moderators and platform management. The separation of roles in the moderation of mental health content is not straightforward. Both the automated and human moderators need to weigh risks against strengths or what we have referred to as resilience factors in an adaptive logic of care. Embedding reflexive and adaptive practices into the design and maintenance of automated systems might also enable more sustainable and effective moderation at scale that involves both human and machine processes but improves on both. This will continue to involve developing the expertise of both human and machine moderators, as part of platform ecosystems that are goal oriented. One limitation of our study is its focus on moderator teams and systems, although we know that user practices and norms also play an important role in these environments. Further research should explore these interactions, particularly how they affect the way human and automated moderation operate.

Footnotes

Acknowledgements

The authors wish to thank Sherridan Emery who contributed to data collection through interviews and workshops associated with this project. From our partner organisations we thank Bianca Kahl and Erin Neville (ReachOut), Sophie Potter (SANE) and Brent Patching and Madeline Starling (Beyond Blue).

Authors’ note

Peter Kamstra is now affiliated to the University of Melbourne.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship and/or publication of this article: This research was supported by funding from the Australian Research Council (grant nos. DP200100419 and CE200100005).