Abstract

Social media platforms are crucial for political and social engagement, where identity profoundly shapes public opinion. While existing research explores social media and identity, the rise of sophisticated bots complicates this relationship; bots programmed to mimic human identity groups may influence discourse and sway opinion on critical issues like elections and climate change. Addressing a gap in theoretical work, this article proposes the concept of instrumental bot identities, explaining how bots can be strategically designed to leverage and co-opt social identity processes for enhanced impact. By developing a theoretical framework, I outline the characteristics (e.g. relevance, familiarity, anonymity) and interaction patterns (e.g. influencing ingroup/outgroup dynamics) that could make specific identities instrumental for bot campaigns. Using the case of fossil fuel workers, a politically salient group in energy transition discussions, the article illustrates how bots could simulate classed and gendered characteristics to reinforce stereotypes, shape group norms, and ultimately influence public attitudes toward decarbonization policies. This framework highlights that bot effectiveness may stem from exploiting identity-motivated reasoning, offering crucial insights for understanding and mitigating online manipulation in polarized contexts.

Introduction

Identity is a central organizing element of social life. It helps establish social positioning as we construct and narrate ourselves and others into social contexts (Campion, 2019). As such, identities have an outsized effect on public alignment on key issues (Mason, 2015). Social media has rapidly become a central space for politically and socially consequential interactions. However, the neoliberal, libertarian ecosystem created by corporatized social media platforms profoundly shapes these interactions, impacting our identities and how we navigate them (Bastos, 2021). Though research on social media and identity has grown significantly in recent years, it has yet to robustly acknowledge a seriously complicating factor: not all actors on social media are real people.

Since the 2016 American presidential election, there has been growing awareness that bots on social media platforms can sway public opinion on major issues (Allcott & Gentzkow, 2017; Bastos & Mercea, 2019; Bessi & Ferrara, 2016; Ferrara, 2017; Keller & Klinger, 2019; King et al., 2020; U.S. Senate Intelligence Committee, 2018), exacerbate political polarization (Howard, 2020), and disrupt the social trust necessary for a healthy democracy (Bradshaw & Howard, 2017; Kreps & Kriner, 2023). This phenomenon, termed “computational propaganda,” involves the strategic use of algorithms, automation, and big data to manipulate public opinion (Woolley & Howard, 2016, 2018). Because of these effects on polarization and trust, computational propaganda has become a central issue in understanding contemporary online discourse.

Across the computational social sciences and computer sciences, significant efforts have been made to detect social bots and gauge the relative impact of various bot campaigns (e.g. Besel et al., 2018; Dorri et al., 2018; Grimme et al., 2018). Though considerable progress has been made toward these ends, efforts have been complicated by ongoing improvements in bot sophistication and the capabilities of bots to convincingly mimic real people (Cresci et al., 2019; Grimme et al., 2017; Yan et al., 2021). This presents challenges for detecting bots (Echeverría et al., 2018; Gorwa & Guilbeault, 2020; Iliou et al., 2019; Martini et al., 2021).

It is not just the convincing performance of a real person that may make bots so effective at shaping social issues. Instead, our identity processes subject us to complex, motivated reasonings that could shape how effective a given bot campaign will be on various social groups (Yan et al., 2021). Social bots also have a “Self” (Gehl & Bakardjieva, 2017) or identity that they are programmed to perform. This identity is instrumental, theoretically chosen by the programmer based on how they are meant to resonate with various social groups (Latah, 2019). It is therefore imperative that we understand what makes an instrumental bot identity, and how these instrumental identities might be leveraged to engage in our identity processes, if we want to better understand the effectiveness of bots at driving discourses on key issues.

A growing body of evidence demonstrates that bot campaigns are increasingly sophisticated in their use of identity. For instance, the Russian Internet Research Agency (IRA) has been shown to have created a range of fake social media personas, such as “Blacktivist” and “LGBTUnited,” which were designed to impersonate and infiltrate activist communities (Friedberg & Donovan, 2019). These accounts adopted the linguistic styles, cultural references, and grievances of these groups to build trust and sow discord. Similarly, researchers have documented the use of bots with distinct, human-like personalities and political views to engage with and influence unsuspecting users ( 2024). These examples underscore the real-world applicability of the theoretical framework developed in this article and demonstrate that the strategic creation and deployment of bot identities is a tangible and significant threat to the integrity of online discourse.

The use of bot campaigns could catastrophically hinder meaningful social change, particularly on wicked problems like climate change, where powerful actors have a documented history of shaping politics to their benefit. Climate change is a valuable case for thinking through bot identities for several reasons. First, the efforts of vested interests to mislead the public about the scientific certainty of climate change have been well documented (Oreskes & Conway, 2010; Carroll, 2021). After all, climate action and the energy transition challenge fossil capital and threaten the hegemony of those who benefit from fossil fuel–based energy systems (Malm, 2016). Protecting this hegemony through bot campaigns would be in alignment with past initiatives. Second, there are established petro-populist efforts to associate the energy transition with an elite agenda that harms the average citizen to drive support for the fossil fuel industry (Gunster et al., 2021b; for example, see Turner, 2012). Because bots can effectively engage in identity processes and shape discourse on key issues, they present a powerful tool for digital astroturfing (Kreps & Kriner, 2023; Woolley, 2023), and it would be a logical step for digital astroturfing through bot networks to be used to spread this messaging further. Theorizing what kinds of identities bots are programmed to perform can be critical to understanding digital astroturfing campaigns across political issues.

This article explores how bots on social media platforms could intervene in identity processes to sway public opinion on key issues. As I will demonstrate, instrumental bot identities are a useful yet underutilized lens through which to examine the efficacy of bots across different social groups. I argue that bots are deliberately designed to mimic identity groups relevant to key political and social issues, amplifying their impact on public discourse. The theoretical framework establishes the characteristics of an instrumental bot identity and outlines how bots could intervene in the identity processes of different social groups. I demonstrate my argument using the example of fossil fuel workers—a politically salient identity group in discourses on the energy transition. By performing the politicized identity of fossil fuel workers, bots are well-positioned to influence public opinion on decarbonization by engaging real people’s identity processes.

Literature Review

Social Bots

Many novel bots have been designed and distributed over the last decade; however, the terminology used to describe them and their properties is inconsistent (Lebeuf et al., 2019). “Bot” has been used to refer to everything from simple scripts that automate background tasks to complex entities that interact with humans and iteratively adapt to activities that humans and systems do while mimicking human behavior and intelligence (Lebeuf et al., 2019; Monaco & Woolley, 2022). There are three key dimensions to consider when describing a bot: the properties of the environment in which the bot was created and operates, the intrinsic properties of the bot itself, and how the bot interacts with its environment (Lebeuf et al., 2019). My focus will be on social bots, which “automatically produce content and interact with humans on social media” (Ferrara et al., 2016, p. 96). Social bots have unique design and operational aspects that enhance their capacity to shape online narratives (Ciesla, 2024).

Though social bots are programmed for a wide range of purposes, they generally have one of four aims: (1) to change the actions of an individual or group, (2) to change the perceptions of an individual or group, (3) to hide the malicious activity of an individual or group (e.g. cyberbullying), and (4) to spread malware (Van der Walt & Eloff, 2018). Bots range in their ability to successfully pursue these intents, and a large part of their effectiveness rests in how convincingly bots mimic real people and avoid detection by people and platforms (Latah, 2020).

To successfully integrate into human social networks, social bots engage in three distinctive behavioral patterns (Hegelich & Janetzko, 2016). The first is mimicry, where they replicate what a real human user’s account might look like. This mimicry can take many different forms based on the identity that the social bot is attempting to perform. The second behavioral marker is window dressing, where bots attempt to appeal to target human users by promoting popular topics and using hashtags. The third behavior is reverberation, where bots retweet or share content from other accounts to engage human users and integrate into their social networks. As I will argue, these behavioral patterns derive their efficacy from how they engage with human identity processes. Therefore, to understand the potential impact of social bots, it is important first to understand what identity is and how managing our identities leaves us vulnerable to bot campaigns.

Identity

Identity is a central organizing element of social life. We use identity to construct meanings, classify groups and individuals, and narrate ourselves into existing social networks and cultural contexts (Brekhus, 2020). The social groups in which we hold membership greatly influence our identity (Bourdieu, 1987; Tajfel & Turner, 1986). Group membership grants a sense of belonging, security, and self-esteem, maintained by the ascription to a common set of values and beliefs (Burke & Stets, 2009). Our commitment to our ingroups is matched with a distancing or “Othering” of outgroup members (Islam, 2014; Tajfel & Turner, 1986). We tend toward a positivity bias for our ingroups and an exaggerated understanding of differences between our ingroups and outgroups. Some group memberships are ascribed, such as race or gender, while others are voluntarily entered, like religion or sports (Carter & Marony, 2021).

The relevance or salience of our different group memberships is situational. Individuals manage multiple competing identities that draw varying degrees of social value and cultural attention from others that change over time and across contexts (Stryker, 2008). For example, being a bandwagon sports team fan may only be salient when you attend a game against your hometown team’s rival. Likewise, you may not particularly care that your co-worker is a devoted fan of your hometown team’s rival until they tease you about beating your team in the finals, raising the salience of your membership as a fan, and invoking defensiveness.

Information and its framing play an important role in identity formation and maintenance. People use the information available to them to understand the world, their place within it, and construct practical, cognitive strategies to cope with it (Swidler, 1986). We are much less likely to challenge the accuracy of information shared by those we perceive as part of our ingroup (Jun et al., 2017). We are equally likely to reject information that conflicts with our pre-existing perceptions of the world and our identities, demonstrating a preference for information that validates our ingroup identities even if that information may not be factually correct (Harel et al., 2020).

Identity negotiation is instrumental. In social situations, individuals strive to confirm the meanings of or verify their identities to experience positive emotions (Stets & Burke, 2014). As political contexts change, what is relevant and advantageous also changes. Cues from the emerging context can raise the salience of a particular social identity (Turner et al., 1994) or “activate” the identity (Carter, 2013, p. 206). Individuals may politicize and activate—or be forced to politicize—more than one identity (Duncan & Stewart, 2007); however, the degree to which an individual will link political events with their identities—and thus shift their group allegiances based on those events—depends on the personal commitment an individual has to the implicated identity (Stryker, 2008). Some groups develop a greater politicized collective identity, whereby “group members (self) consciously engage in a power struggle on behalf of their group” (Simon & Klandermans, 2001, p. 324).

Those identity groups centered in discourses on key social issues are politically salient identities and instrumental to the narratives formed around contentious issues. The conscious manipulation of how politically salient identities are represented (the “motivated representations”) is directed by those with the power and financial means to generate and disseminate cultural texts. Over time, these motivated representations turn elite beliefs and values into common-sense perceptions (Seiler & Seiler, 2004, p. 173). These motivated representations are dynamic, as politically salient identity groups engage in “politics of recognition” to contest how they are represented (Taylor, 1992), forcing a response. The iterative process of negotiating these identities plays a symbolic and critical role in social struggles. It is at these sites of identity navigation that I argue bots intervene.

Social Media, Identity, and Bots

Literature on social media and identity generally treats social networking sites as a digital space in which conventional identity processes occur (Pooley, 2014). Specific web-based qualitative approaches like netnographies, which situate “the study of communities and cultures emerging through electronic networks,” are a notable exception (de Valck et al., 2009, p. 197). Literature on social media and identity overwhelmingly aligns with Goffman’s theory of performativity and considers how the affordances of different social media platforms shape how we intentionally express ourselves (e.g. Davidson & Joinson, 2021; Goehring, 2019). This approach focuses on how people form expectations regarding potential audiences who may view their account and adapt their communication accordingly (French & Bazarova, 2017). For example, when creating an account, users purposefully choose the elements or phrases they want to represent themselves with, engaging in intentional moments of identity reflexivity (e.g. Brubaker & Cooper, 2000).

While moments of deliberate identity performance have been a significant focus, these moments of reflexivity, beyond profile curation, are a narrow representation of how identity is invoked through social media. Instead, most social media platforms facilitate fast-paced, emotionally affective interactions that keep users on sites longer and thus generate more advertising revenue (van Dijck et al., 2018). Platform algorithms reinforce ingroups, artificially prioritizing sensationalist and emotional content in individuals’ feeds, forming echo chambers, triggering identity responses, and facilitating emotionally charged engagements (Bastos, 2021). However, it is not just platform algorithms that drive platform polarization: social amplification by users also shapes the visibility of polarizing content (González-Bailón et al., 2023). In some cases, algorithmic effects may even be relatively modest compared with an individual’s social curation (González-Bailón et al., 2023). This social curation helps us verify our identities to experience positive emotions (Stets & Burke, 2014). We are therefore much more likely to engage with other accounts and content that affirms our identities, regardless of whether they are a real human account or if the information they share is accurate (Harel et al., 2020). For example, research by Guess et al. (2020) on the 2016 election revealed that the consumption of fake news websites was not widespread. Instead, these sites represented a small fraction of the average person’s information intake. They were mainly frequented by a small proportion of the public, indicating a stronger inclination toward misinformation that confirmed the pre-existing views of a select group.

The ability of bots to widely distribute fake news or perform instrumental identities could make them highly effective at intervening in our identity processes and swaying public opinion on key issues. Political bots are a type of social bot that impersonates political actors and interacts with human users, aiming to influence public opinion (Yan et al., 2021). Political bots are primarily created to promote a specific party or candidate, creating fake backing and popularity through digital astroturfing (see Kovic et al., 2018). The presence of political bots is familiar to many in Western democracies, having been linked to the MacronLeaks misinformation campaign in the 2017 French presidential election (Ferrara, 2017), hyperpartisan news leading up to the Brexit referendum (Bastos & Mercea, 2019), and discourses surrounding the 2016 US Presidential election (Bessi & Ferrara, 2016; Senate Intelligence Committee, 2020). Though the extent to which political bots influence broader public opinion is still subject to debate, it is clear that political bots can be highly effective tools for spreading misinformation, fostering polarization (Woolley, 2023), and eroding social trust (Kreps & Kriner, 2023).

Apart from chatbots, most bot accounts do not operate individually. Rather, like any social group, bots’ power rests in their numbers and ability to coordinate across networks. Through the coordinated efforts of “botnets,” bots can influence social discourses faster, at a larger scale, and at a lower cost than real, human-operated accounts (Latah, 2020; Yan et al., 2021). Advances in generative AI enhance the effectiveness of these bot networks by improving the unique identity mimicry of each bot (Goldstein et al., 2023), making bot astroturfing campaigns a looming threat to democracy (Kreps & Kriner, 2023; Woolley, 2023). Recent developments have allowed large language models to generate text that is almost as persuasive to US audiences as genuine foreign covert propaganda (Goldstein et al., 2024), a reality that Western democracies are ill-prepared to deal with. The intuitive heuristics that people use to try to identify AI-generated content are inadequate for the task, leaving us vulnerable to novel, automated methods of deception, fraud, and identity theft (Jakesch et al., 2023).

Despite the threat bots may pose to our social and political systems, approaches to detecting bots still lag behind bot development (Iliou et al., 2019). Modern spam bots are becoming harder to identify as they effectively simulate genuine human accounts by using fabricated metadata, such as GPS spoofing (Cresci et al., 2019). Social bots can engage in context-aware attacks where they mimic a legitimate user’s behavior, network structure, or interests to appear legitimate (Gürsun et al., 2018). Our ability to quantitatively pinpoint the full extent of bot influence on our social and political systems is thus hampered (Daume et al., 2023).

As unnerving as it may be to recognize that bot detection lags behind bot development, it is important to note that even as better knowledge about the presence of bots becomes available, it is unlikely to change how most people engage with them. The motivated reasoning that guides our identity processes interferes with our ability (and willingness) to critically discern which users are human and which are bots (Yan et al., 2021). Individuals use the same social rules when interacting with computers as they would when interacting with people (Kim & Sundar, 2012), often ignoring the cues that signal the non-human nature of a computer (Nass & Moon, 2000). Because we are motivated to verify our identities (Carter & Marony, 2021), we are motivated to believe those users who affirm our identities are real or, at the very least, we tend to ignore the inconvenient fact that they may not be. Likewise, misinformation will not necessarily change a reader’s mind (Kreps et al., 2022), but how that misinformation aligns with their identity will overwhelmingly determine its effectiveness. In an experiment, Bail et al. (2018) found that, despite being informed that they were interacting with bots designed to mimic partisan personas, users carried these experiences into their lived interactions, becoming less trusting of members of the opposing political party. In other words, interacting with political bots engages us in identity verification regardless of whether we know they are bots. This suggests that simply focusing efforts on the technical challenge of detecting bots fails to address the broader social mechanisms that shape our engagements with bots.

A Theoretical Framework for Instrumental Bot Identities

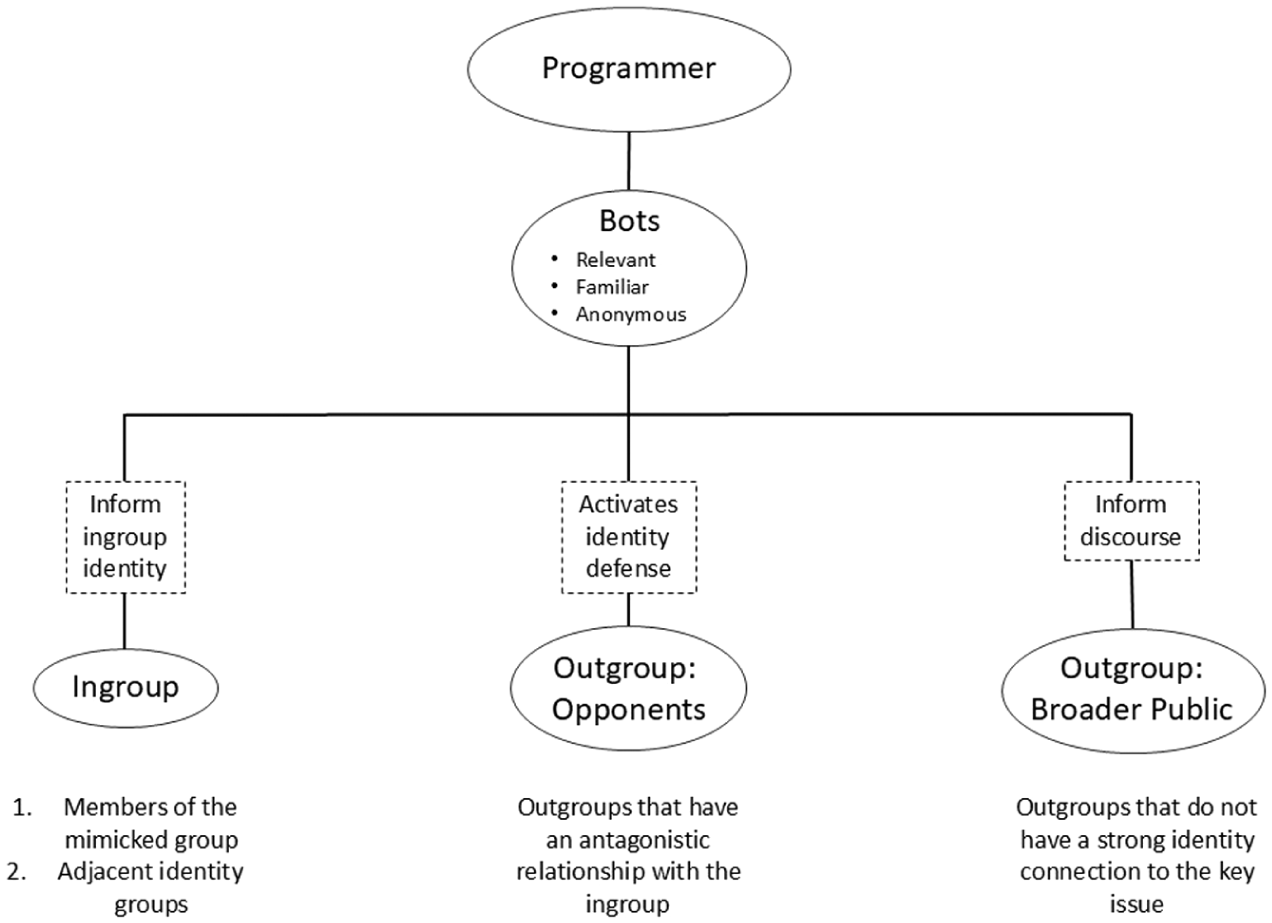

In the following section, I offer a framework (Figure 1) for how bot accounts programmed by motivated political actors might intervene in identity processes. As I will outline below, three main characteristics of a politically salient identity make it more instrumental for shaping discourses on a key issue over other identities. I then clarify the main avenues through which social bots intervene in identity processes: programmers, ingroup/allies, outgroup/opponents, and outgroup/broader public.

Figure 1. Theoretical framework for an instrumental bot identity.

The framework is not designed to identify sites where we can say with absolute certainty that bots are intervening; instead, it pinpoints spaces of particularly emotive identity processes, drawing attention to those identity interactions most readily exploited by bot campaigns. The framework is a response to the call from Daume et al. (2023) for theoretical approaches that explore the likely influence of bot accounts. I use the example of fossil fuel workers in the energy transition context to demonstrate how specific identities are more instrumental in influencing key issues and, therefore, are more likely to be impersonated by bots. In the next section, I briefly introduce fossil fuel workers and the energy transition debate, outlining why it is a valuable case study, before moving on to the details of the theoretical framework for instrumental bot identities.

Fossil Fuel Workers and the Energy Transition

Few misinformation campaigns have been as effective as those launched by fossil fuel corporations to sow uncertainty about climate change. Though the trends of a warming world have been known within the scientific community for decades, broader public knowledge of the issue and political willpower to take action were delayed by the intentional actions of fossil fuel companies like ExxonMobil (López, 2022; Oreskes & Conway, 2011). The industry has since ceased its outright denial of climate science; however, it continues to pursue several avenues that hinder transformative energy system changes (Benegal & Scruggs, 2024; Slevin et al., 2025). It is not just fossil fuel companies that do so; it is a complex network of individuals and institutions whose identities and material success are entangled in the ongoing hegemony of fossil capitalism (Daggett, 2018; Malm, 2016).

The tactics used by these fossil fuel–invested actors have evolved over the years in response to increased scrutiny of the environmental and social impacts of the industry. Social media has proven an effective vehicle for disseminating climate disinformation (Daume et al., 2023; McKie, 2021; Treen et al., 2020). Calls circulate through social media networks to encourage industry employees and sympathizers to advocate more for the industry (Gunster et al., 2021b; for example, see Turner, 2012; Wood, 2018). These petro-populist narratives situate the energy transition as a threat to extractivism and the communities economically dependent on it, thus positioning fossil capitalism as emergent from the democratic will of a social movement rather than something orchestrated by powerful vested interests (Malm, 2016). Understanding how the identities of communities and workers are exploited to secure this support (or the appearance of it) is a primary focus of environmental social sciences (Bell et al., 2020; Daggett, 2018; Eaton & Enoch, 2021).

Fossil fuel workers are central to maintaining the validity of petro-populism (Gunster et al., 2021a), making them a politically salient identity group within climate politics. They represent a key workforce for the transition from fossil fuels and are a central component of the capitalist system that relies on cheap fossil fuels. Their work also embodies industrial and frontier masculinity characteristics, which hold symbolic capital, particularly for the political right (Daggett, 2018; Vowles & Hultman, 2021). Like other natural resource occupations that align with a rural, frontier masculinity, fossil fuels are deeply entangled with identities and ways of life (Filteau, 2014). The deep immersion of fossil fuels in Western economies has facilitated not only direct material benefits to the predominantly male financial and occupational sectors associated with fossil fuels but also spawned lifestyles and identities that are themselves highly gendered and racialized (Krange et al., 2021; Moreno-Soldevila, 2022).

Special interest groups like fossil fuel workers with highly embodied cultural capital can be especially useful for mobilization by powerful actors, as their unconsciously acquired yet obvious skillsets or mannerisms are likely to be seen as legitimate competence instead of a form of capital (Bourdieu, 1987). Within Western culture, masculine identities, particularly those associated with whiteness, tend to be heavily laden with this symbolic cultural capital (Hultman & Pulé, 2018; Miller, 2014), carrying a significant amount of influence over reproducing systems of power because of their intangibility and unmarked status. This is likely one reason why petro-populist advocates have turned their attention to elevating workers as a politically salient group that supports the industry.

With respect to the political salience of fossil fuel workers, the self-identities of workers are of less consequence than how they perceive themselves as belonging to a group, the ways the wider public perceives workers, and how these perceptions uphold broader cultural and social narratives about the possibility of the energy transition (Letourneau et al., 2023). In other words, when it comes to the instrumental power of politically salient identities like fossil fuel workers, it is not about what most individuals from that identity group believe. Instead, it is about how the rest of society perceives them (Taylor, 1992). It is within these broader societal narratives that there are ample opportunities for misrepresentation by powerful or influential actors to sway public opinion on key issues like decarbonization. As the next section will outline, bots offer a powerful avenue for fossil fuel–vested interests to dispense “motivated representations” (hook, 1997) of politically salient identity groups like workers to continue driving petro-populist narratives.

To develop the theoretical framework, I work with the assumption that bots have been programmed by actors who seek to defend fossil fuel hegemony and resist the energy transition. This assumption is in line with a substantial body of literature that addresses the misinformation (Carroll, 2021; Oreskes & Conway, 2011), astroturfing campaigns (Dunlap, 2022), and political manipulation tactics (Goldberg et al., 2020) used by fossil fuel companies and industry supporters to defend the sector. However, it is important to note that, given the accessibility of bot programming, bots can be programmed to spread messaging in favor of an energy transition, though little scholarship has demonstrated social movement groups using explicit deception to further their goals.

Characteristics of an Instrumental Bot Identity

While bots can be programmed to mimic any desired identity, I argue that specific characteristics make some identity groups more politically instrumental to mimic than others. I will begin by defining these characteristics below and then demonstrate how they vary based on the identity process involved.

Relevance

The first characteristic is the relevance of the identity to the targeted discourse. A key factor when we assess the validity of the information is our subjective belief in the authority or positionality of the speaker on that topic (Callison, 2014). In the energy transition context, fossil fuel workers are relevant because they will be directly impacted by a transition away from fossil fuels (Letourneau et al., 2023). Their loyalty to the industry or support for transitioning is an important source of validation for those who seek to defend fossil fuel energies and those who wish to see an energy transition occur (e.g. Cha, 2020; Gazmararian, 2024). Those who seek to maintain fossil hegemony are more likely to draw on the need to defend workers’ current jobs in the fossil fuel industry, supporting messaging that implies workers are not interested in transitioning. Those supporting an energy transition may focus instead on emphasizing why workers want to transition to jobs outside the fossil fuel sector. For example, they may emphasize issues with safety or the lack of job security in an industry notorious for boom/bust cycles (Vachon, 2021). In either case, the identities of workers and whether or not they support an energy transition are important, contested elements of transition discourse.

The salience of fossil fuel workers to the issue of labor and the energy transition is evident. By contrast, there are other discourses around climate change to which fossil fuel workers would be less relevant. For example, if bot programmers wanted to promote messaging that implied electric vehicles are less reliable than gas-powered vehicles, fossil fuel worker identities would be less instrumental in spreading this message. Instead, groups like vehicle mechanics would be more relevant in sharing that message, as they would be seen as having credibility and expertise in vehicle maintenance. Therefore, the impact of a politically salient identity group can be determined by a range of factors like the relevance of perspective, as it is for fossil fuel workers and the energy transition, or professional expertise, as it is for mechanics and electric vehicle reliability.

The relevance of an instrumental bot identity is established in the social discourse. Bots do not need to justify the relevance of fossil fuel workers in the energy transition discourse because their significance is already widely acknowledged. Because bots can influence discourses over time, they could feasibly shift the relevance of specific identities to key issues. They might do so by sharing messaging that prioritizes a new identity group in a key issue discourse or by minimizing the relevance of currently relevant identities. Therefore, even though the relevance of an identity group to key issues is important for its instrumentality, botnets can produce new relevant identities over time.

Familiarity

The second characteristic of an instrumental bot identity is the familiarity of cultural stereotypes or narratives about the target identity group within the broader public. People rely on dynamic stereotypes and cultural narratives to help them categorize and form assumptions about other identity groups (Bastos, 2021). Though this unconscious bias informs most of our social interactions, the effects are magnified on social media platforms where identity presentation is constrained by platform design (Harel et al., 2020). As a result of these constraints, our expectations for identity performance on social media are reduced: we do not scrutinize digital identities to the same extent we would a physical person (Yan et al., 2021). Because we rely heavily on stereotypes about other groups to fill in these digital identities and have become accustomed to incomplete information presented about other users, bots that are performing an identity group with strong stereotype associations do not have to do as much to convincingly mimic the target identity group as they would for a more obscure identity. The more familiar an identity group is within the broader cultural discourse and the more consistent the stereotypes around that group are, the less work bots must do to establish themselves as part of that group.

Fossil fuel worker identities are situated in a range of recognizable cultural narratives that could help flesh out the profiles of bot accounts. The connection between workers and petro-masculinities or frontier masculinities provides ample cultural material that human users will draw on when engaging with bots. Of course, the framing of these cultural materials will differ based on the identity of the human user interacting with the bot account. I will elaborate on these framings in further detail in the next section, but what is important to establish here is that there is a shared understanding of characteristics about fossil fuel workers that are familiar across the broader public discourse. The meanings of these characteristics and how they are framed depend on the human observer’s identity and the relationship of that identity to human fossil fuel workers.

Anonymity

The final characteristic of an instrumental bot identity is anonymity. Unmarked identities, those that are taken for granted as socially generic and universalized (Brekhus, 2020), are less likely to be scrutinized by human users. Fossil fuel workers are generally not expected to have the same level of traceability as other identity groups (see also Ma et al., 2016). This primes fossil fuel workers to be mimicked by bot accounts without suspicion.

In contrast, other identity groups have much higher expectations for traceability. It would be much more difficult for a bot to mimic a skilled professional, such as a lawyer, because this vocation would be expected to have a verifiable digital fingerprint elsewhere online. For working-class identities like fossil fuel workers, social expectations are such that no one would necessarily be surprised if, for example, Bill, a fossil fuel worker from rural West Virginia, does not appear to have a digital presence other than his Facebook page. Identities with these reduced expectations for traceability are less likely to be subjected to scrutiny from human users and are therefore easier for bots to mimic successfully.

Bot Interactions in Identity Processes

Programmers

The identities of those who program bots are implicated in the chain reaction of identity effects. Their perceptions of the politically salient identities they program the bots to mimic catalyze the identity processes described below. Despite how effective bots can be, programmers are not omnipotent; they are flawed humans like any others. Their programming will undoubtedly have flaws, reflecting the gaps in their knowledge. Therefore, while their bots may be programmed to mimic certain identity groups or interact with users in particular ways, the effectiveness of bots is limited by the skill set and knowledge of the programmer. The increasing access to generative AI has significant implications for reducing programmer limitations due to a lack of cultural familiarity (Jakesch et al., 2023).

Ingroup Identity Processes

Our social identities generate ingroup and outgroup dynamics between ourselves and others (Carter & Marony, 2021). We seek to defend the groups we are a part of from outsiders while engaging in an ongoing negotiation with our fellow group members around the group’s norms (Bourdieu, 1987). These ingroup identity processes help us navigate the salience of a given identity among our many group memberships. They also serve an instrumental function in facilitating intergroup competition and social change (Scheepers et al., 2006). By mimicking members of a target identity group, bots can influence group norms, changing how group members perceive themselves and other group members (Hagen et al., 2022). The ingroup identity processes in which bots participate apply to two main groups. The first is human users from the mimicked identity group.

Social bots use mimicry to perform their identity (Hegelich & Janetzko, 2016). They draw on norms from the target identity group to convincingly mimic it (Phillips et al., 2024). The identity performances of bots are often reductive, building off common stereotypes, and are unlikely to capture the full diversity of individuals who are part of the identity group. This is particularly true for the growing number of bots drawing from large datasets to inform their performance (Hundt et al., 2022). Even design choices such as the gender of bot identities are an important factor, as this changes how they are perceived by human users (McDonnell & Baxter, 2019).

The stereotype-informed identity performed by bots signals a specific ingroup identity to human users. Bots tend to entrench pre-existing views in human users from the same ingroup (Tanprasert et al., 2024). This is partly why they have successfully disseminated conspiracy theories like those surrounding the COVID-19 pandemic (Unlu et al., 2024) or elections (Vincent & Gismondi, 2021). Concerning fossil fuel workers, this could involve amplifying concerns over the threat posed by decarbonization policies to their ongoing employment. Bots may disseminate emotionally affective content to unite workers to support the fossil fuel industry against the energy transition. For example, bot accounts can sow mistrust among human workers about the policies being presented to reduce the impact on workers from transitions, such as the Sustainable Jobs Plan in Canada or the Inflation Reduction Act in the United States. Employed in large numbers, bots can create the illusion that other fossil fuel workers overwhelmingly lean a certain way politically, pressuring real fossil fuel workers to acquiesce to the alignment of the perceived group.

Ingroup identity processes involving bots are not exclusive to the niche group they are mimicking. The messaging shared by political bots will resonate with other identity groups that are closely related to the mimicked identity. This may include broader ingroups of which the mimicked identity is a part (van Dommelen et al., 2015). With the example of fossil fuel workers, a broader identity group could be fossil fuel supporters. Like fossil fuel workers, the identities of fossil fuel supporters are verified by the ongoing hegemony of fossil fuels and the lifestyles they support (Gunster et al., 2021b), motivating supporters to defend the industry—and themselves by extension.

Outgroup Identity Processes

Our commitment to our ingroups is matched with a distancing or “Othering” of outgroup members (Islam, 2014; Tajfel & Turner, 1986). As such, messaging that verifies some groups’ identities is likely to trigger identity responses in outgroups (Shenton, 2020). We define our identities based on cues from outgroups just as much as we do cues from our ingroups. Establishing who is not a part of our ingroup is just as important as determining who is, particularly when understanding our identities and place in social hierarchies. Information about outgroups can help us form a more precise boundary of our ingroups. Likewise, arguments between different identity groups result in the mutual definition of either group, engaging in what Shenton (2020) calls social media poetics.

We are motivated to process identity-threatening information defensively (Thürmer & McCrea, 2018). Therefore, we engage in various identity-defensive behaviors based on how much we feel threatened by information (Harel et al., 2020). These behaviors range from ingroup identity verification, where we turn to other ingroup members to reaffirm our identity (Greve et al., 2022), to outright hostile actions against outgroups (Thürmer & McCrea, 2018). The investment or reaction we have to the messaging of outgroups varies. Some outgroups have a closer relationship to our ingroups, resulting in a greater investment in or reaction to the identity processes of some outgroups than others (Islam, 2014).

While any group that we are not part of is, by process of elimination, an outgroup, it is important to distinguish outgroups that invoke a significant identity response from those that only have a marginal or imperceptible effect on ingroup identity. This is especially important for understanding the nuanced identity interactions of political bots. Therefore, I differentiate between two subcategories of outgroups that bots engage with, opponents and the public, to demonstrate that the political effect of bots is shaped by the relationship one has to the instrumental identity.

Opponents

Opponents are outgroups that have an antagonistic relationship with another group. Typically, opponents’ values or motivations are directly at odds with those of the ingroup. The inherent contradiction between the two groups entangles them in an intimate process of mutual definition, whereby their conflict shapes the norms and meanings of each identity group (Shenton, 2020). Instrumental bot identities are likely to trigger various degrees of identity defense in opponents.

One identity group’s narratives about another tend to be stereotypes (Judd et al., 1991). In the same way we seek out information that helps with our own identity verification, we are partial to information that supports our overgeneralizations about outgroups (Diamond, 2020). The same characteristics that make the bot successful at performing its instrumental identity may reinforce opponents’ stereotypes about that identity group, to the detriment of social change.

For example, individuals who strongly support the energy transition may be susceptible to information shared by instrumental bot accounts that is anti-transition or pro-fossil fuels. Anti-transition messaging could be seen as a threat to their values. It could also reinforce negative beliefs that transition supporters may have about fossil fuel workers, such as the belief that workers do not care about the environment or that workers could never be allies in transition efforts. The validation of these beliefs could increase the antagonism that transition supporters feel and express toward fossil fuel workers in the real world. This increased antagonism from transition supporters may signal to workers that the energy transition movement is hostile to the struggles workers are experiencing, exacerbating the threat that workers see the energy transition posing.

This example demonstrates that bots’ influence on opponents is not limited to these outgroups but fosters resounding impacts on numerous identity groups through the intergroup interactions they initiate (Hogg, 2013). While these far-reaching impacts may be entirely unintentional, in other instances, bots may be programmed with the express purpose of stoking frustration among outgroups, as we often see with troll bots (Addawood et al., 2019).

Public

Even though we live in a time of increasing political polarization, we have varying attachments to issues based on our unique identities (Burke & Stets, 2009). As a result, some issues will spark a stronger identity response in us than others. I refer to the public as those individuals who have yet to form a strong identity connection to the key issue bots may attempt to intervene in. Unlike outgroup opponents like energy transition advocates, the average citizen approaches the issue with greater neutrality and less personal investment. This is not to say that the public has no identity response to the key issue, but to highlight that the effect is markedly different from that which opponents experience.

The primary impact of politically salient identities on the public is that they inform broader discourses on the issue. How the public perceives the central actors in the energy transition shapes how they think about the transition. It is worth noting that this is not a homogeneous interpretation. The eventual conclusions drawn by members of the public about politically salient identities are filtered through the identities of the observers, subject to complicated identity processes of meaning-making. For example, if people feel sympathy for workers who stand to lose their jobs, this may foster uncertainty about the tradeoffs of the energy transition. Among individuals who highly value labor rights, this uncertainty could translate into support for just transition policies that ensure workers are adequately supported (Gazmararian, 2024). Alternatively, among more conservative individuals, this uncertainty could lead to dismissing the energy transition as bureaucratic overreach, causing unnecessary impacts to communities and the economy (Cha & Pastor, 2022).

Whether an individual feels sympathy for workers or a different emotion entirely depends on the representations of fossil fuel workers to which they are exposed. Because bot programs are constructed from stereotypes readily available in cultural narratives in the first place, bots can exacerbate political polarization (Howard, 2020; Veale & Cook, 2018; Woolley, 2023) and further entrench cultural stereotypes about target groups like fossil fuel workers. The identities of individual workers shape a broader understanding of fossil fuel workers as a group, and it is from these perceptions and stereotypes that the public, in part, draws its conclusions about the energy transition.

Conclusion

There is little question that bots pose a significant risk to contemporary social discourses. Better understanding why bots are so successful in their manipulation of issues is key to developing appropriate solutions. In this article, I have argued that bots are programmed to perform politically salient identities. I have used the example of fossil fuel workers and their salience to the energy transition to demonstrate how these identity processes could facilitate bots engaging in a politics of recognition with political and social consequences. The theoretical framework establishes the three characteristics of an instrumental bot identity: relevance, familiarity, and anonymity. It also outlines the various types of identity interactions between bots and humans on platforms: programmers, ingroup/allies, outgroup/opponents, and outgroup/broader public.

Clarifying the identity processes in which bots intervene is necessary to understand their effectiveness in swaying discourses on key issues. A focus on identity recognizes that the sophistication or ability of a bot to mimic a real person convincingly is not the only factor determining its efficacy. While bot sophistication certainly helps bots build trust with users and avoid detection from platform administrators, the reality is that we employ identity-motivated reasoning in our assessment of bots that shapes their effectiveness. We strive to confirm the meanings of or verify our identities to experience positive emotions (Stets & Burke, 2014). We rely on the information available to us to do so (Swidler, 1986). We are generally less inclined to question the validity of information shared by individuals we consider part of our ingroup (Jun et al., 2017). Likewise, we tend to dismiss information that contradicts our pre-existing beliefs and perceptions, favoring information that affirms our ingroup identities, even when it may not be entirely accurate (Harel et al., 2020). This identity-motivated reasoning plays a significant role in our willingness to identify bots, with partisan information impairing our capacity to critically discern whether the other user, who is helping us verify our identity, is, in fact, a real person (Yan et al., 2021). By approaching the issue of bot misinformation through an identity lens, we acknowledge that more or better information about the prevalence of bots online will not necessarily change how people ultimately engage with them.

In the age of misinformation, the issue is not that people will wrongly believe anything they are told; instead, they will be unlikely to believe information if it undermines their identities. With access to a wealth of information, why would we engage with information that challenges our identity and perspective of the world when we can find a host of actors (real or not) to support the illusion that the world is precisely as we want to see it? The framework developed in this article recognizes that not all people uniformly internalize the misinformation proliferated by bots on social media. Some misinformation resonates with certain identity groups more than others and has a range of politically intended and unintended consequences. Therefore, the identity bots are programmed to mimic is a strategic decision, informed by the desired outcomes of the bot campaign. A better understanding of what makes an instrumental bot identity is important for understanding the uneven impacts that bot campaigns have on different identity groups.

When identity groups feel threatened, their responses are guided by group norms, which are themselves contested and subject to change (Hornsey, 2005; Posner, 2017). In some cases, bots could encourage a politicized collective identity, wherein group members actively engage in a power struggle on behalf of their group (Simon & Klandermans, 2001). By mimicking politically salient identities, bots could shape group norms over time, influencing how members perceive threats and opportunities related to key issues like the energy transition.

This article has explored how the well-documented legacy of petro-populist messaging could be continued by bots programmed to mimic fossil fuel workers. The case illustrates how bots could perform classed, gendered, and politicized identities to influence perceptions of decarbonization. Through their alignment with a politically salient identity group, the impact of bots may be increased, allowing them to sway public opinion via the emotional and cognitive processes of identity-based group affiliation. Bots that mimic fossil fuel workers could reinforce a sense of solidarity among real fossil fuel workers, amplifying fears about job loss and uncertainty. These bots may promote narratives that challenge decarbonization policies or stoke distrust in just transition programs, further entrenching the idea that fossil fuel workers are inherently opposed to environmental movements. This manipulation can have broader consequences, as it influences workers and shapes how the public and policymakers view fossil fuel labor, reinforcing stereotypes that make it more challenging to secure the social license for energy transition policies that benefit all workers.

In light of the increasing sophistication of bots and the critical challenges we face as a society, from climate change to political polarization, we must advance our understanding of how bots manipulate identities. Doing so will enhance our ability to protect public discourse and ensure that identity remains a tool for genuine self-expression rather than manipulation. As bots continue to evolve, so must our approaches to identifying and mitigating their influence on individual identity formation and collective social processes. Researchers and policymakers should prioritize methods that capture the complex interactions between bots and identity groups, considering how bots shape discourse through identity-motivated reasoning. Future research should also explore how bots affect the long-term development of group norms and identities, especially in politically polarizing contexts like the energy transition.

Footnotes

Ethical considerations

Ethics approval was not required for this research.

Consent to participate

Not applicable.

Consent for publication

Not applicable.

Funding

The author received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data availability statement

Not applicable.