Abstract

Social media’s transition into algorithmic content recommendations, accelerated by TikTok’s entry into the ecosystem, has reshaped platforms’ consumptive curation affordances, reducing users’ ability to curate their feeds directly. While previous research has explored user experiences with TikTok’s algorithmic recommendations, there has been limited attention to how its interface shapes these interactions. This article interrogates the role of TikTok’s interface design in shaping these new consumptive curation affordances. Drawing on Davis’s concept of consumptive curation – users’ selective engagement with vast pools of content – and literature on social media affordances and mediation theory, I present consumptive curation affordances as relational: shaped by the interplay between platforms’ technological design, user practices and social arrangements. TikTok’s interface is central in this interplay, mediating consumptive curation practices with algorithmic recommendations through several affordance mechanisms. I analyse TikTok’s interface through a walkthrough method, organised according to the algorithmic experience framework, where I operationalise the concepts of friction levels and affordance mechanisms. Findings reveal the dominant role of the For You Page, where TikTok strongly encourages users toward passive consumptive curation – watch, scroll, repeat – while refusing to provide enough transparency about how interactions curate recommendations and discouraging users from disabling data collection. As a result, TikTok’s interface discourages users from strategising consumptive curation practices, demanding reliance on opaque algorithmic recommendations. This study offers a theoretical foundation for understanding how interface design influences consumptive curation affordances. Grounded in a relational view of affordances, future studies can explore how socially situated users strategise interactions with TikTok’s algorithmic environment.

Introduction

Back in 2007, a younger Mark Zuckerberg stood in front of an audience at the Web 2.0 Summit in San Francisco, expressing his vision for the future of Facebook:

We’re talking about the set of connections that everyone has in real life [. . .] All we’re trying to do is take those connections and map it out. Once we have an accurate model, we can help people to share their information more effectively. But it’s going to take 30 years – or at least tens of years – before this becomes a really mature platform. (Johnson, 2007)

Some 15 years later, social media looks very different. It can still be generally understood as ‘digital internet technologies that facilitate communication and collaboration by users’ (Burgess et al., 2017, p. 3). However, these technologies are also structured as online platforms, programmable digital architectures geared toward the systematic collection, algorithmic processing, circulation and monetisation of user data (van Dijck et al., 2018, p. 4). Beyond merely connecting people, social media platforms have developed specific business models that shape their technological design and, in turn, influence how users interact with each other and curate the content they consume – that is, how users engage in consumptive curation, understood as ‘an active practice of looking and engagement through which networked individuals navigate pools of data in discriminating ways’ (Davis, 2017, p. 773).

Early social media platforms – like Facebook or Twitter – often gave users agency to build networks that populated their feeds with chronologically ordered content. As their user base grew, social media platforms faced the problem of organising content and enhancing the discoverability of content and people. Platforms started leveraging continuous advances in artificial intelligence (AI) – mostly those in data-driven machine learning (Bauer, 2021) – to implement content recommendations in their main feeds. This meant that people’s feeds were no longer ordered by recency alone. Users’ behavioural data was used to personalise feeds, presenting relevant content first (Twitter, 2016), even content created outside their immediate network (Kincaid, 2011). In the process, platforms started placing targeted ads on people’s feeds (Constine, 2011). Thus, social media feeds became algorithmically curated; in other words, they incorporated algorithmic recommendations as part of their functioning.

Most social media platforms have followed this trend. Platforms leverage this model to increase user engagement and maintain their business logic, based on analysing user behaviour to target advertising (Zuboff, 2019).

In this new era of recommendation media (Mignano, 2022), social media recommendation algorithms are sometimes perceived as powerfully all-seeing (Cotter et al., 2022) and objective (Gillespie, 2014, p. 179). The pervasiveness of recommender systems and their captivating capabilities (Seaver, 2019) make it difficult for people to avoid using them for their daily consumptive curation. In less than 20 years, we have transitioned from a social media model where users could directly choose content sources to one heavily based on their inferred algorithmic identities (Cheney-Lippold, 2011).

Algorithmic content distribution and the technological architecture of the platforms that embody it shape how users are allowed to curate and consume content (Davis, 2017) – that is, the consumptive curation affordances of a platform. On the one hand, this can be positive for users, creating new avenues to discover content. For instance, TikTok’s CEO has repeatedly stated that the platform allows every creator to be seen: even if they do not have a large follower base, the algorithm will connect them with interested people (Ted Talks, 2023). On the other hand, recommendation media does not necessarily serve users’ best interests. Some might disagree with platforms’ engagement-driven computational advertising model (Pierson, 2021), while others might simply find that their feeds do not always provide the best recommendations (Davis, 2022, p. 52), or at least not the content they would have chosen to consume by other means.

Engagement-driven algorithmic logic is not a magic approach that successfully serves users with the content they truly desire to consume, nor is it the only possible social media model –

First, engagement is not a universal, immutable concept. Platforms decide what counts as engagement (Gillespie, 2014) – for example, which interaction features are available to users and how much each interaction influences future recommendations. Platforms also decide on the role algorithmic recommendations play in the overall interface and how it is understood by the platform – for example, TikTok’s main feed shows content from anyone on the platform, Tumblr’s feed still relies on content from people users follow, Instagram’s feed offers a mix of both, and so on.

Second, optimising for engagement brings an inherent set of problems. A growing number of studies point out that it can lead users to become addicted (Qin et al., 2022) and suffer detrimental effects on their mental health (Sala et al., 2024). Furthermore, social media dynamically influence how users’ algorithmic identity is constructed by recommending content based on previous interactions with already personalised content (Milano et al., 2020). Such reinforcing loops are behind previously described phenomena such as filter bubbles or echo chambers, which can potentially lead users into radicalisation rabbit holes (Keith, 2021) or towards self-harm content (Amnesty International, 2023), among others.

Third, social media platforms often do not properly explain their algorithmic logic. Users often do not know how algorithmic recommendations work (Gillespie, 2014), or even about their existence (Eslami et al., 2015), so it is difficult for them to strategise their consumptive curation accordingly.

Despite these problems, in the recommendation media era, driving engagement up ultimately benefits advertising-driven revenue models, which is enough for the companies that own such platforms. Among all of them, perhaps because it was created more recently, TikTok stands out as a prime example – rather than merely integrating algorithmic recommendations as a supporting feature, it has embraced them as the core of its offering from the very beginning. TikTok’s algorithmic feed is frequently discussed within its user community (Cotter et al., 2022). References to the platform’s recommendation capabilities are common and hold a significant place in the discourse within (Schellewald, 2022) and surrounding the platform (Hern, 2022). The company strategically incorporates references to its algorithm in its public communications, such as the app’s description in the Google Play Store (Figure 1).

Figure 1. TikTok application description on the Google Play Store.

Accordingly, TikTok’s interface puts algorithmic recommendations at the forefront, showcasing how core they are to the platform’s offering (Narayanan, 2022). Indeed, TikTok revolves heavily around an algorithmically curated feed, which recommends content to users from any possible profile in the app, in the form of a never-ending stream of full-screen vertical videos or pictures. This content consumption proposal has been repeatedly pointed out as addictive for users (Evitts, 2022; Qin et al., 2022).

In fact, TikTok’s journey has been one of fast growth. After a few years of existence, it is already considered a very large online platform – more than 45 million monthly active users in the European Union – by the European Commission (2023). The platform is especially popular among young people, with 38% of 18- to 24-year-olds reporting that they use it weekly (Newman et al., 2023, p. 12). TikTok’s achievements are impacting how other social media platforms approach their services, with many implementing TikTok-like feeds (Murray, 2023).

TikTok’s rapid growth has drawn increasing regulatory scrutiny. In February 2024, the European Commission (2024) opened formal proceedings to assess whether the company might be breaching the Digital Services Act (DSA) (Regulation 2022/2065, 2022) – a European Union regulation that, among other aspects, requires TikTok to assess and mitigate systemic risks stemming from its algorithmic systems. Moreover, in October 2024, internal TikTok communications were leaked, showing that the company is aware that its algorithmic recommendations pose a risk to teenagers (Allyn, 2024).

Motivated by the described issues that the transition into recommendation media poses to people’s control over their consumptive curation – and TikTok’s relevance in this process – in this article I explore how consumptive curation affordances are shaped on TikTok. More specifically, I interrogate the role of TikTok’s interface design in shaping such affordances.

This is an underexplored area of study, especially on TikTok. Although previous research has explored how users relate to TikTok’s algorithmic recommendations (Cotter et al., 2022; Siles et al., 2022; Simpson et al., 2022), minimal attention has been given to the role of TikTok’s interface in shaping how users consume content (Bhandari & Bimo, 2022; Scharlach & Hallinan, 2023).

Theoretically, this article expands existing discussions on affordances as relational, highlighting social media platforms’ interface role in shaping consumptive curation through varying levels of friction and transparency. Empirically, by applying the walkthrough method to TikTok, this study reveals how the platform’s design strongly encourages passive consumptive curation while limiting users’ ability to strategise their interactions. This dual contribution is reflected in the structure of the article, which unfolds in two parts: first addressing the theoretical discussion before applying it to an analysis of TikTok’s interface.

In the first part of this article, I approach the topic from a broader theoretical perspective – discussing social media platforms in general. I bring together literature on affordances and mediation theory to discuss the relational nature of consumptive curation affordances, which are collectively shaped by the interplay between social media technology, user practices and social arrangements. I also examine the mediator role of social media interface design, as it establishes the environment in which consumptive curation practices take place. Understanding affordances as relational implies that designers’ intentions and social conditions shape a platform’s interface, which in turn shapes users’ consumptive curation practices within specific social contexts. Studying a platform’s interface reveals how user interactions with algorithmic recommendations are guided, as well as the underlying values that steer this process.

In the second part of this article, I apply this theoretical lens through a walkthrough method (Light et al., 2018) where I critically analyse how TikTok’s interface design attempts to shape consumptive curation affordances. I organise my analysis according to Alvarado and Waern’s (2018) five algorithmic experience (AX) areas and apply different theoretical concepts I introduce in the first part – such as friction levels (5Rights Foundation, 2021) and Davis’s (2020) conceptualisation of affordance mechanisms. This analysis provides a foundation for understanding the relational nature of TikTok’s consumptive curation affordances.

Consumptive content curation, mediation process and affordances

In this section, I situate social media platforms’ consumptive curation affordances within a broader context, emphasising that they do not emerge solely from the interaction between individual users and technology, but are deeply embedded in a social dimension.

According to Davis (2017), consumptive curation is a practice of both necessity and motivation. People desire control over the information they consume and increasingly need it when confronted with vast amounts of data.

While platforms’ technological design significantly shapes how users navigate and filter information – for example, by allowing them to follow accounts or by recommending content based on previous interactions – consumptive curation practices are not solely a product of user-technology interactions. They are inherently contingent upon other users’ decisions to make, upload and share content – what Davis (2017, p. 772) calls productive curation. Taking it one step further, we can think about different types of actors that influence consumptive curation practices. Social media platforms have been described as assemblages, socio-technical systems where multiple and constantly evolving actors – including non-human ones such as the software itself – influence communication processes (Bucher, 2018). The current social media landscape is, for instance, shaped by the companies that own these platforms and the government institutions that establish the regulatory framework.

Indeed, users interact with social media platforms ‘amid conditions of design-based control and infrastructural pressures’ (Savolainen & Ruckenstein, 2022, p. 13) – in other words, influenced by the technical design of the platforms themselves, and by a broader socio-economic context where users and platforms operate.

Lievrouw’s (2014, p. 45) mediation framework brings all these elements – and their interrelationships – together. Social media can be understood as constituted by three major components: (1) technological artifacts, (2) people that engage in communicative practices and (3) social arrangements that surround them. These three components have an ongoing, articulated and mutually determining relationship, where each shapes the others in a mediation process.

Adopting a mediation lens helps to describe how social media technology, consumptive curation practices and social arrangements influence one another, thereby all playing a role in shaping social media consumptive curation affordances. Examples of these mutually determining relationships are endless. Facebook incentivises certain user interactions by increasing their visibility in friends’ feeds – such as through notifications of ‘X likes Y’s photo’ (Bucher, 2012). The old favourite button on Twitter – currently known as X – not only afforded bookmarking content but was employed in 25 different ways by users and even revamped into a popularity tool (Bucher & Helmond, 2017). In terms of social arrangements, legislative efforts such as the Digital Services Act (DSA) (Regulation 2022/2065, 2022) regulate some of the algorithmic control features social media must offer to users – such as the possibility to turn off algorithmic recommendations – and impose algorithmic transparency obligations that can help users understand how algorithmic recommendations work. Furthermore, the economic goals of social media owners shape the technological design of the platform’s feeds – algorithmic recommendations drive up engagement and therefore advertising revenue. The list goes on.

Consumptive curation practices are therefore in a constant triple articulation with social media’s technological design and social arrangements. Trying to understand this articulation inevitably leads us to the concept of affordances. Originally developed in ecological psychology (Gibson, 2015), affordances referred to the possibilities of action that animals perceived when interacting with their environment. The term was popularised after its adoption in design and Human-Computer Interaction studies (Norman, 1990), where it lost the relational framing to be conceived as properties of objects that designers could implement to make people perceive certain action possibilities. From here, many other fields adopted affordances as a term. Media and communication studies have widely used affordances to refer to how communication technologies – such as social media platforms – enable or constrain certain practices (Bucher & Helmond, 2017, p. 239).

As Bucher and Helmond (2017) accurately point out, media and communication scholars have often operationalised affordances as simply technological properties – closer to how Norman defined them. When thinking about consumptive curation affordances in this way, it may be tempting just to note and list a platform’s various features and how they are presented to users. If a platform offers, for example, the option to mute a certain hashtag, it feels intuitive to argue that it affords users the possibility to hide certain types of content.

However, in a mediated environment such as social media, affordances cannot be merely equated to technological features. They are not only a functional property of social media platforms, embedded and fixed. They are also relational – a link between actors and objects as Gibson argued – and learned by socially situated people (Lievrouw, 2014). Some users may be unable to find the feature that allows them to select which hashtags to mute. Others may not have a good grasp of what a hashtag is because they have never used other platforms. For those people, the platform does not afford muting a hashtag – a consumptive curation practice – in the same way. Many scholars (Bucher & Helmond, 2017; Davis, 2020; Davis & Chouinard, 2016; Nagy & Neff, 2015; Ratto et al., 2021; Scharlach & Hallinan, 2023) – among others – advocate for this conceptualisation of affordances, closer to Gibson than to Norman.

Understood this way, affordances as a concept sit in the middle of the triply articulated mediation process. The concept recognises how social media’s technological properties shape user practices – for example, by creating networked publics (boyd, 2010) that impact communicative dynamics (Van Raemdonck & Pierson, 2022) – and also how user practices shape technology in turn. This is not only because users might reconfigure the technology to meet their needs (boyd, 2010, p. 55), but also because users afford something back to social media platforms (Bucher & Helmond, 2017, p. 250). An algorithmic feed’s core function – serving recommendations – is facilitated by continuously learning and predicting from data created through user practices. Finally, affordances encompass the socially situated nature of human-technology relations. Users’ ability to perceive and employ social media’s technological features varies among individuals, due to differences in perception or skill levels and the impact of cultural norms and institutional factors on different population groups – that is, what Davis (2020) calls conditions of affordance. As Lievrouw (2014) states, users learn about affordances affected by ‘patterns of relations and institutional formations that create and regulate social knowledge and power’ (p. 48). These varying conditions

Social media interface design and consumptive curation affordances

Understanding affordances as relational does not mean designers and engineers cannot embed certain properties in the technology to attempt to shape user practices. Recently, technology and culture journalist Kyle Chayka argued in the New Yorker about his reasons for uninstalling Spotify: Yet it has become aggravatingly difficult to find what I want to listen to. [. . .] the Music tab is filled with rows of playlists, autoplay ‘radio’ stations, and algorithmically generated mixes. The only option for browsing full albums is a small item in the lesser Library column [. . .] With the upgrade,

Chayka’s complaints showcase the triply articulated relationship that social media technology, curation practices and social arrangements have with each other. Spotify is pushing for algorithmic music recommendations, probably in pursuit of greater user engagement with the app. This change has a ripple effect on the way artists create music – for example, releasing individual songs becomes more efficient than releasing whole albums – and on how users consume it – by listening to algorithmically recommended songs, instead of exploring in other more direct ways. Eventually, if too many users leave the platform, Spotify might be forced to reevaluate.

More concretely, Chayka is complaining about changes in Spotify’s software interface, and the power they have over his experience on the platform – and his perceived sense of agency (Sundar, 2008; Sundar et al., 2015). Most certainly, in a social media paradigm where users constantly negotiate with engagement-driven algorithms over their content recommendations (Sundar, 2020), the interface plays a major mediator role by establishing the environment in which consumptive curation takes place.

Designers and engineers shape this technological environment through their decisions, trying to influence user practices – that is, establishing intended uses of the interface. The concept of script – widely used in Science and Technology Studies – was developed by Akrich (1992) and Latour (1992) to refer to how technological artifacts prescribe human actions. Verbeek (2006) argues designers’ intentions do not necessarily translate into fixed properties of artifacts, but are shaped by the relationships between humans and technology. Certainly, interfaces are not static places where users and technology meet, but dynamic and relational (Ratto et al., 2021) – more fitting with a mediation view of social media affordances. A social media platform’s interface does not shape all users’ behaviour equally, but it applies ‘varying levels of pressure on socially situated subjects’ (Davis, 2020, p. 8).

It is, however, worth studying how designers’ intentions emerge in a platform’s interface. They give key insights into mediation processes: how the interface attempts to guide users’ consumptive curation practices and how it is shaped by such practices and social arrangements. Importantly, design decisions do not always stem from a grand, hidden scheme to nudge users towards monetisation, nor from regulatory impositions. Rather, they can be a result of how software design is conceived. Design methods sometimes employ a reductive view of user, interface and affordances (Ratto et al., 2021). For instance, the use of fictional personas – representing common user archetypes – inadvertently simplifies the socio-cultural context and diverse needs of real users and inherently reflects the biases of the designers creating them. These drawbacks are difficult to minimise – although not impossible. Even the best designers, with good intentions and enough resources, have to decide on value trade-offs inherent to any recommender system deployment (Stray et al., 2022) – therefore establishing a value hierarchy of what matters and what does not. Engineering design not only prescribes action, but it is also an inherently moral activity (Verbeek, 2006, p. 368).

Companies employ not-so-obvious software design strategies that aim at nudging users. These strategies formulate affordance mechanisms (Davis, 2020) – that is, ways in which technology requests, demands, encourages, discourages, refuses and allows certain practices. For example, designers implement different levels of friction in software interfaces, establishing a baseline on how much users need to click, tap or think to perform certain actions (5Rights Foundation, 2021, p. 27). Although these tactics do not guide every user in the same way (Stefanija & Pierson, 2023), they aim to act aggregately over the population (Slota et al., 2021, p. 11), steering some consumptive curation practices. Indeed, visual elements on an interface – features – say and suggest things to users, even if what exactly is not set once and for all (Bucher & Helmond, 2017, p. 234) and is subject to conditions of affordance (Davis, 2020, p. 11) – that is, variability across subjects and circumstances.

Design strategies can try to guide people into consumptive curation practices – and other behaviours – that they do not agree with. In the Spotify example, the latest version encouraged Chayka to consume algorithmic recommendations, thereby discouraging direct music selection. Such strategies are known as deceptive patterns (Brignull et al., 2023). Design strategies can also aim to do the opposite. For instance, the interface of a social media platform can be designed to improve user experiences with algorithmic recommendations (Alvarado & Waern, 2018).

Critically inspecting a software interface offers us a peek into the values designers attempt to inscribe on a social media platform. The extent to which such values are embodied in the platform is contingent upon the social context in which users interact with it (Nissenbaum, 2001). Nonetheless, this inscription attempt provides significant insights into how users are guided to interact with algorithmic recommendations for consumptive curation purposes.

For that purpose, I have chosen Alvarado and Waern’s (2018) algorithmic experience (AX) framework. It is ‘an analytic tool for approaching a user centered perspective on algorithms, how users perceive them and how to design better experiences with them’ (p. 1). Alvarado and Waern (2018) created this framework with specific design values in mind, suggesting a platform’s interface can be ‘deliberately designed to foreground algorithmic behaviour and increase user awareness of algorithmic influence’ (p. 7).

The five areas of design recommendations the AX framework provides – see Table 1 – can be conceived as the different ways a social media platform’s interface can potentially shape users’ perceptions and interactions with algorithmic recommendations – and, therefore, the consumptive curation affordances of the platform.

Table 1. Algorithmic Experience Areas (Alvarado & Waern, 2018).

In the following sections, I will employ the AX framework areas to guide my inspection of TikTok’s interface. By operationalising the concepts of friction and affordance mechanisms, my analysis provides a theoretical foundation on how TikTok’s interface mediates users’ consumptive curation practices with algorithmic recommendations, making some thrive and others diminish. According to the presented relational and mediational view of affordances, this analysis advances existing TikTok scholarship by resurfacing the importance of technology design – concretely the interface – in shaping – but not setting in stone – user consumptive practices on the platform.

Methodology

The walkthrough method

My inspection of TikTok is based on the walkthrough method (Light et al., 2018). Adapted from design methodologies, this widely used method takes the researcher through the process of critically analysing an app’s interface. The goal is to identify embedded values in the app features and imagine, in turn, how these features attempt to reinforce certain values in users.

It involves the researcher critically analysing an app’s environment of expected use, or how its provider anticipates the app ‘will be received [vision], generate profit or other forms of benefit [operating model] and regulate user activity [governance]’ (Light et al., 2018, p. 883). The method’s central data-gathering procedure ‘involves the researcher engaging with the app interface, working through screens, tapping buttons and exploring menus’, while assuming a user’s position (Light et al., 2018, p. 891).

The walkthrough method is theoretically grounded in mediation theory – how users’ practices, technology and social arrangements shape each other – and in a relational view of affordances – understanding they are contingent on the context of use. It recognises that identifying certain affordance mechanisms present in a software interface is not the same as observing how users – in their social context – interact with such interface. However, the walkthrough method’s goal is to build a foundational understanding of a platform’s interface design mechanisms that can later be used as ‘a foundation for further user-centred research that can identify how users resist these arrangements and appropriate app technology for their own purposes’ (Light et al., 2018, p. 881).

In that sense, the main limitation of the walkthrough method is that it is influenced by the researcher’s assumptions and social circumstances. My analysis of TikTok’s affordance mechanisms is influenced by my social position as a European academic researcher with a background in computer science and media and communication studies, among other aspects.

Furthermore, social media platforms’ interfaces tend to change continuously due to A/B testing procedures. Nonetheless, in this article, I am not establishing a static atemporal assessment of how TikTok’s interface is configured, but interrogating and critically analysing the general consumptive curation that TikTok proposes to users, which is much more stable over time. My goal is to use these findings as a foundation for upcoming user-centred studies about consumptive curation practices on TikTok.

Applying the walkthrough method to study TikTok

As a framework to organise my critical analysis, I employ the AX framework (Alvarado & Waern, 2018). AX’s five areas serve as a guideline for the different ways in which TikTok’s interface attempts to shape consumptive curation affordances on the platform.

During my interface analysis, I employ Davis’s affordance mechanisms 6 – request, demand, encourage, discourage, refuse or allow – to discuss how I perceive that certain design choices try to steer consumptive curation practices on the platform. I also make use of the concept of friction to discuss how easy the interface makes it to use algorithmic control features.

Regarding algorithmic awareness, I have purposely excluded from my analysis documents such as terms of service and privacy agreements. I have considered these texts a convoluted way to explain how algorithmic recommendations work, which discourages users from reading – as declared by a high percentage of them (Fowler, 2022).

In terms of algorithmic user control, I have expanded the list of examples provided in the AX framework – such as disabling personalised recommendations or giving explicit negative feedback – to include a wider variety of options. Recognising how challenging it is to determine precisely which of the available features influence algorithmic recommendations on TikTok, I have decided to map out: (1) features that TikTok highlights when explaining its algorithmic logic, inviting users to engage with them – see algorithmic awareness section – and (2) features that are traditionally understood as ways to curate algorithmic content recommendations on social media – such as liking or following. Furthermore, to narrow down my analysis, and since most TikTok users do not upload content to the platform (Statista, 2023), I excluded those features related to new content production – even though they might also affect content recommendations.

The walkthrough was performed over 2 months (16 October 2023 to 16 December 2023) from Seville, Spain. The device used was a Redmi Note Pro 9, OS version MIUI 14 (14.0.3.0.SJZMIXM). The TikTok app version was v31.7.4(2023107040). I created a fresh Gmail account and used it to create a new ‘fresh’ TikTok account, with the minimum necessary information. I performed the walkthrough using both this fresh account and my personal account – which I had been using for 3 years at that point. My goal was to obtain a better view of the app features from the signing-up stage to several years of use and to minimise the impact of A/B testing differences on my analysis.

Findings

The importance of algorithmic recommendations in TikTok’s interface

TikTok’s interface welcomes users with a full-screen vertical video or image slideshow, part of an endless feed, the For You page (FYP) – whose name openly points out the feed is personalised, reinforcing the idea that content recommendations are uniquely curated to match your interests.

During my inspection of TikTok’s interface, the dominance of the FYP felt total. In contrast with other social media apps, TikTok is designed to be fully usable without ever needing to leave the main feed. The moment you sign in, the FYP starts showing you content – even before you follow any accounts or like any posts (Figure 2). Consuming one piece of content at a time also eliminates the need to tap to enlarge media or to switch to another screen to comment.

Figure 2. TikTok’s For You page.

While the app offers different feeds – such as Following, Friends, or Live – and alternative ways to find content – such as a Search page – the interface encourages most interactions to occur seamlessly within the FYP. Furthermore, TikTok refuses to let any of these alternative feeds be set as the default, making sure that the FYP welcomes you every time you open the app.

The only way to change the FYP is to turn off algorithmic recommendations. This option makes TikTok default to a Popular feed instead of the FYP. However, a small pop-up appears every time the app is opened in this mode, reminding users for a brief second that personalisation is off, as if TikTok refused to completely let go of the FYP. That is probably the case. TikTok has a legal obligation to offer a main feed option not based on profiling – DSA Article 38 – but the regulation does not specify what this alternative must look like. The platform thus poses a forced dichotomy to users between its version of algorithmic personalisation and generic popular content, not allowing any default option in between.

Beyond feed selection, TikTok also encourages users to consume algorithmic content through search recommendations. The app places search pop-ups on some posts and sometimes transforms users’ comments into clickable search queries. While these suggested searches often seem contextually related to the content, TikTok never explains how they are generated while encouraging users to follow a path of related videos.

AX AREA 1: algorithmic awareness

TikTok’s interface offers multiple references to its algorithmic logic through a combination of pop-ups (Figure 3) and in-app posts (Figure 4). Table 2 lists the different instances in which this information was encountered during the walkthrough. Most of the posts are available on TikTok’s Help Center – through the app’s settings. Some of them are also linked from other sections of the app, usually to provide context about a particular feature.

Figure 3. Example of pop-up referring to TikTok’s algorithmic logic.

Figure 4. Example of post referring to TikTok’s algorithmic logic.

Table 2. TikTok’s Algorithmic Awareness Posts.

Throughout the various posts examined, TikTok consistently presents a vague narrative regarding the inner workings of its algorithmic recommendations and how users can influence them – even including some outdated information (Figure 5). TikTok explains that video information, device settings and account preferences play a role. The app also encourages active engagement with interface features as the best way to curate content (posts 3, 4, 5, 6, 7 – Table 2). Some examples are offered, such as liking, commenting, or tapping not interested, among others. However, this information is general and not comprehensive. Apart from the provided examples, other interactions must also play a role in curating algorithmic recommendations, yet TikTok never specifies which. Furthermore, it never explains how much each interaction matters towards future content recommendations, stating only that the weighting of interactions may vary over time (post 7).

Figure 5. Example of outdated information on a TikTok post (post 4).

On several occasions, TikTok emphasises the importance of content diversity as a design value driving its algorithmic recommendations (posts 4, 7). According to the app, users may encounter videos or images that don’t appear to be relevant to their expressed interests, nor popular. During the walkthrough, I encountered some videos that appeared to fall into this category. However, discerning whether they were diversity attempts or a result of my previous interactions with the app was challenging. On a platform where the weighting of interactions in curating content recommendations is not transparent, diversity can potentially function as a scapegoat that justifies bad recommendations.

Notably, TikTok seems to make a greater effort to explain how ads are personalised (post 2). The app elaborates on how both on and off-TikTok activity can be used to personalise ads and the different ways in which users can manage their ad profiling (see algorithmic profiling section).

With regard to the delimitation of algorithmic spaces – an example of algorithmic awareness (Alvarado & Waern, 2018, p. 7) – the app mainly points to the FYP as the place where algorithmic recommendations happen (posts 3, 4, 5, 7). There are some instances (posts 1, 6, 8) where other interface sections are pointed out as well, such as the Following and Friends feeds – which do not follow chronological order – and the Search tab.

AX AREA 2: algorithmic user control

Table 3 shows TikTok’s algorithmic user control features classified by friction levels – how much interaction with the interface is needed to use them – using the FYP as a starting point, since it is the place to which the app constantly makes users return. If a feature is available in different places in the app, it has been classified only once, at the level of least friction.

Table 3. TikTok’s Algorithmic User Control Features.

Features not present in ads.

Features not present in Following feed.

Features not present in Friends feed.
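The classification rule behind Table 3 (each feature listed once, at its level of least friction) can be illustrated with a minimal sketch. The `Observation` record, the numeric friction scores and the example entries are hypothetical and serve only to show the rule; they are not data from the walkthrough.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Observation:
    """One sighting of a control feature during a walkthrough (hypothetical record)."""
    feature: str   # name of the feature, e.g. "like"
    location: str  # where in the interface it was found
    friction: int  # assumed score: interactions needed, starting from the FYP


def classify_by_least_friction(observations):
    """Keep a single entry per feature, at its lowest observed friction level."""
    best = {}
    for obs in observations:
        current = best.get(obs.feature)
        if current is None or obs.friction < current.friction:
            best[obs.feature] = obs
    return best


# Illustrative entries only: locations and scores are invented for the example.
observations = [
    Observation("like", "FYP button", 1),
    Observation("not interested", "share menu", 2),
    Observation("not interested", "long-press menu", 1),
    Observation("disable personalised feed", "settings menus", 4),
]

table = classify_by_least_friction(observations)
for feature, obs in sorted(table.items(), key=lambda item: item[1].friction):
    print(f"{feature}: friction level {obs.friction} ({obs.location})")
```

A feature observed in several places therefore appears only once, which keeps the table focused on the easiest route the interface offers to each control.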

Even though TikTok’s interface offers a high number of possible interactions to curate content recommendations, it especially encourages users to perform two actions: (1) watching the current content, which plays automatically unless stopped and so demands to be watched, until the user (2) scrolls to the next piece. Not only is it entirely feasible to consume content on TikTok without ever leaving the FYP, but it is also feasible to do so without performing any other interactions. TikTok’s interface establishes passive consumption – requiring minimum engagement – as the lowest-friction way to consume content.

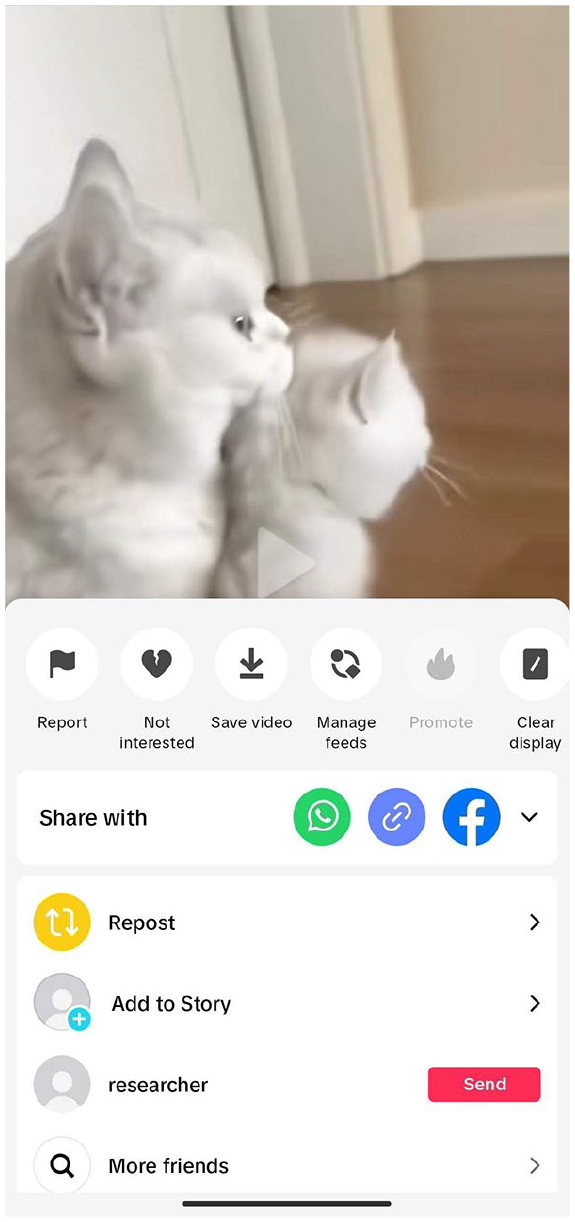

TikTok’s interface strongly encourages liking content, making it one of the easiest interactions to perform – either by double-tapping the screen or with the like button. The interface encourages interactions that signal a preference for the content, such as follow, comment, share and save – which allows bookmarking content under chosen categories. These features are easily identifiable in the FYP, and all show a count to communicate how many other users interacted in the same way (Figure 6).

Figure 6. TikTok’s algorithmic control features with the least friction.

In contrast, interactions that signal the recommendation was not well received are discouraged (Figure 7) – such as expressing not interested, reporting or disabling the personalised feed. Some are placed in obscure places – such as a menu that appears after pressing the share button. Furthermore, most features that allow deactivating tracking sources and personalisation features are strongly discouraged, demanding that users navigate through settings menus to use them.

Figure 7. More of TikTok’s algorithmic control features.

The vast number of available features makes it difficult to strategise how to use them to curate algorithmic recommendations. The fact that only some of them are easily accessible on the interface raises the question of whether TikTok is merely creating an illusion of choice, where certain interactions are possible but do not form part of the consumptive flow state that the platform proposes: watch, scroll and repeat.

Adapting interactions to control algorithmic recommendations is also difficult because of the complex nature of such interactions. Features such as likes or comments can be performed (1) as social interactions – where the aim is to communicate with other users, (2) as low-friction interactions to shape algorithmic recommendations (Figure 8) and (3) for a multitude of other goals – for example, a like can be an easy way to save content to watch later, since it can be accessed from the profile section.

Figure 8. Example of the comment feature used to shape algorithmic recommendations.

Even when the intention is to use these features to shape recommendations, the effect they have is not clear. For instance, when a user taps not interested, it can be interpreted as indicating disinterest in the content being shown, the creator who uploaded it, or both. TikTok’s interface refuses to explain how the interaction is measured.

TikTok’s interface does not offer any way to fix these problems, such as offering different features for different goals or allowing users to specify what they mean by certain interactions.

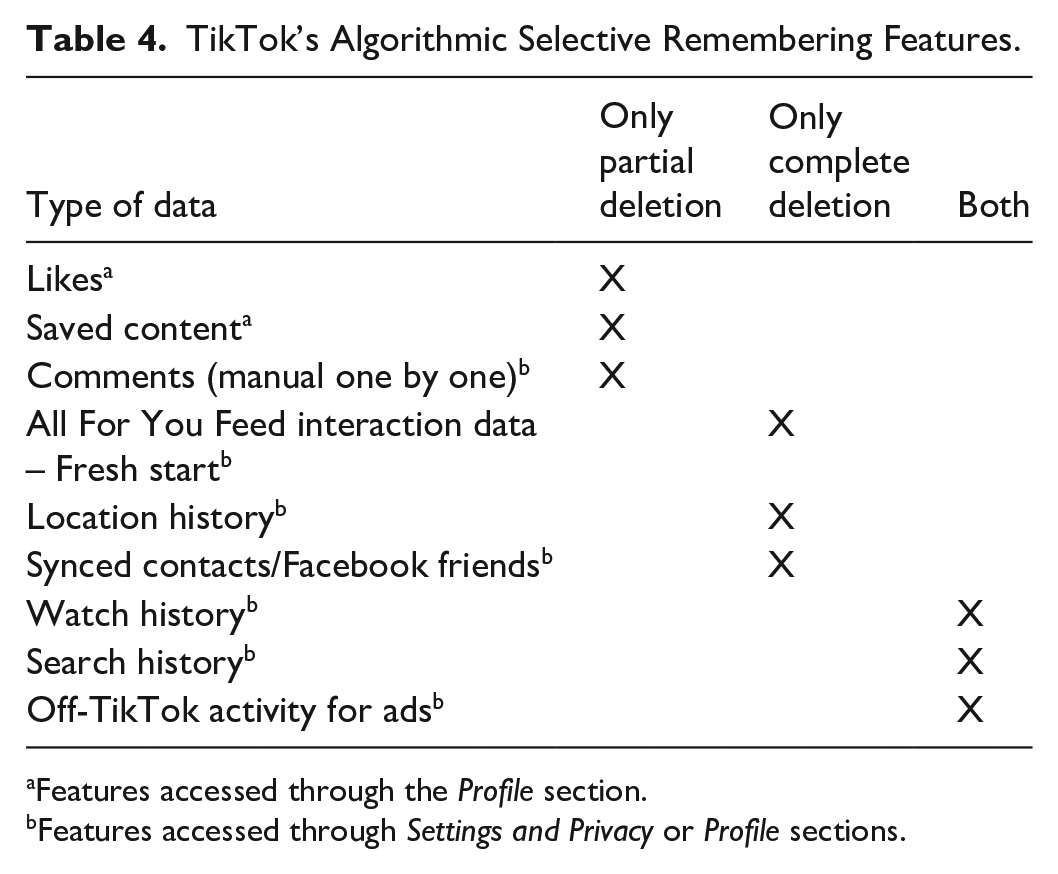

AX AREA 3: algorithmic selective remembering

TikTok’s interface allows users to delete a variety of data about previous interactions they had on and off the app, as well as data gathered through tracking sources – such as location history or synced contacts – see Table 4. During the walkthrough, these features were found scattered across different parts of the interface, throughout the profile page and settings menus. Not all of them can be deleted in the same manner, with TikTok refusing partial or complete deletion of different types of information.

Table 4. TikTok’s Algorithmic Selective Remembering Features.

Features accessed through the Profile section.

Features accessed through Settings and Privacy or Profile sections.

AX AREA 4: algorithmic profiling transparency

TikTok’s interface sometimes explicitly states why some content reached your feed. For instance, it shows if the content has been posted or reposted by an account you follow. It also labels ads. Even though these pieces of information do not explain algorithmic recommendations per se, they can help users adapt consumptive curation practices accordingly.

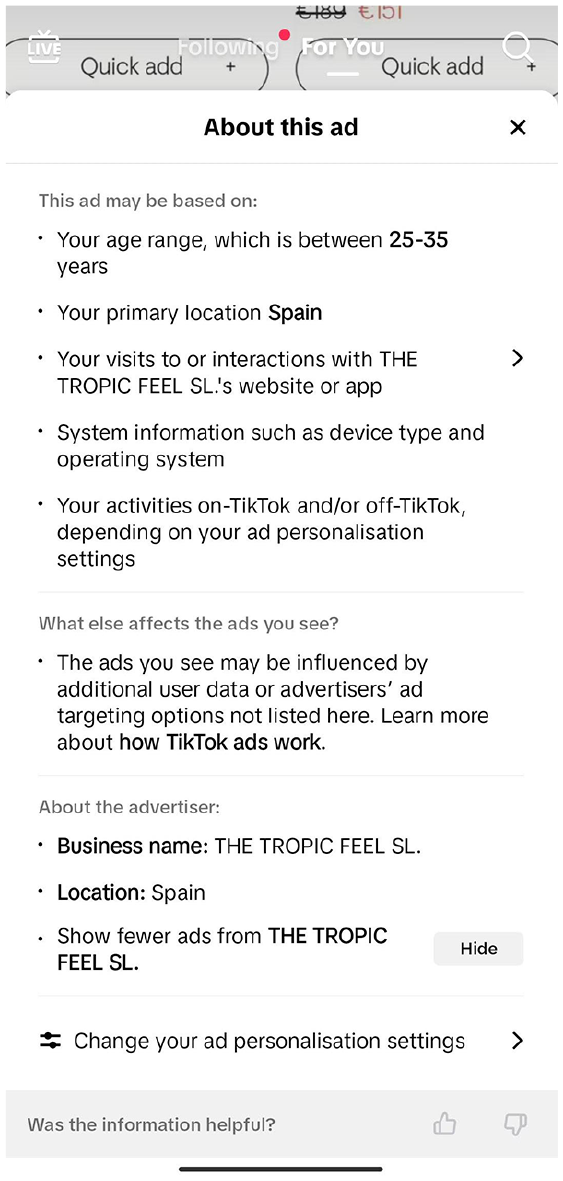

Regarding explaining algorithmic recommendations, TikTok treats normal content and ads differently (Table 5). Although TikTok offers a vague, high-level explanation of why both types of content are recommended, the ‘About this ad’ feature (Figure 9) provides more detail. Compared to the ‘Why you’re seeing this video’ feature (Figure 10), it provides more reasons, additional links with further information and easier access to settings.

Table 5. TikTok’s Algorithmic Profiling Transparency Explanations.

Figure 9. TikTok’s explanation of ad recommendation.

Figure 10. TikTok’s explanation of normal content recommendation.

These explanations do not clarify why a specific piece of content was recommended. Rather, they offer a glimpse into how the algorithm functions and highlight some of the data that may influence its recommendations. Explaining algorithmic recommendations is challenging. It has been pointed out that translating hidden AI model variables into concrete, understandable terms in human language is difficult (Fazi, 2020). However, TikTok could do better to portray some of the inferred profiling categories for normal content recommendation, as it does with ads (Figure 11). For the latter, TikTok allows users to review the inferences it has made about them and discloses which off-TikTok interactions with advertisers are used to personalise ads.

Figure 11. TikTok’s inferred ads profiling categories.

AX AREA 5: algorithmic profiling management

As shown in Table 6, TikTok only allows users to review inferred profiling categories for ads (Figure 11), and only after a certain time of using the platform. Each category can be activated or deactivated, but no new categories can be added. TikTok’s interface explicitly indicates that, regardless of user corrections, deactivating the categories does not stop the platform from using other information to personalise ads.

Table 6. TikTok’s Algorithmic Profiling Management Features.

Discussion

To begin with, TikTok’s interface makes an obvious and substantial effort to encourage users to consume algorithmic recommendations on the FYP (see section ‘The importance of algorithmic recommendations in TikTok’s interface’). Theoretically, users could use the app without ever having to leave this feed, endlessly being fed with new content recommendations. To a lesser but also relevant extent, TikTok encourages users to follow recommended searches, which also send users into algorithmic ‘micro’ feeds of recommended videos that can quickly deviate from the original one. TikTok refuses to explain how these searches are recommended.

The stark contrast between TikTok’s strong push for users to consume algorithmic recommendations and its lack of transparency (see sections ‘AX AREA 1: algorithmic awareness’ and ‘AX AREA 4: algorithmic profiling transparency’) about how these recommendations work makes it difficult for users to strategise their consumptive curation practices accordingly. While TikTok acknowledges the FYP is algorithmically curated, it offers brief, high-level explanations that barely scratch the surface of how user interactions curate future recommendations and why specific content appears. TikTok also refuses to explain how each interaction weighs into future content recommendations. The platform points to the value of diversity as a guiding principle, yet it is difficult to understand when this value is put into practice and when the platform is just serving bad recommendations. TikTok’s interface discourages users from accessing this information in the first place, demanding that users navigate through numerous settings and Help Center pages or hiding it behind menus that appeared only when I held the screen or tapped the sharing button – an unintuitive location for such information.

Alongside its strong push for algorithmic recommendations and the lack of transparency, TikTok shapes consumptive curation affordances through varying levels of friction applied to different interface features (see section ‘AX AREA 2: algorithmic user control’). As with any other app, TikTok designers work with limited on-screen space to place different features. Usability principles (Nielsen, 1994) followed by designers also lead them to create minimalist interfaces, where users are not distracted from the primary use case. By examining which features are persistently available in the interface – and therefore occupy limited and valuable on-screen real estate – we can unveil designers’ intentions to nudge users.

While TikTok provides various algorithmic control features, only a few feel intuitive and easily accessible. Concretely, the interface encourages interactions that signal a preference for the recommended content, strongly favouring the like action. However, it does not allow users to specify their intent when engaging with features, nor does it clarify how these interactions curate future algorithmic recommendations.

When it comes to controlling the data TikTok ‘remembers’ or has access to (see section ‘AX AREA 3: algorithmic selective remembering’), the app discourages users from consistently managing and deleting their previous interactions or turning off data sources. TikTok seems partially aware that there might be situations where users find their content recommendations no longer accurate and need to reset the algorithmic profiling to a blank state – offering the option to perform a fresh start. Still, TikTok prioritises this broad reset over allowing users more granular control over stored data – control that could allow for more precise consumptive curation adjustments. Instead, the app consistently demands heavy reliance on the promises of non-transparent algorithmic recommendations, discouraging a clear, centralised and consistent way to delete previously stored information. This showcases how TikTok’s interface design is shaped by the platform’s business model, which seems to benefit from a ‘the more data the better’ approach.

Along the same lines, TikTok refuses to let users consult their algorithmic profiling for normal content recommendations (see section ‘AX AREA 5: algorithmic profiling management’) – which also discourages successful strategising of consumptive curation – but allows it for ad recommendations. This is a recurring trend observed in the walkthrough, where TikTok provided more detailed explanations for how ads are recommended than for regular content recommendations. The reasons behind not offering this functionality might be technical difficulties in determining inferred categories, but TikTok’s interface does not even offer a centralised place to tweak explicit variables that affect algorithmic recommendations, such as location and content language.

Conclusion

Social media’s transition into algorithmic content recommendations, accelerated by TikTok, has broad and deep implications, posing a series of individual and systemic risks to society. This shift into recommendation media has fundamentally changed how platforms allow people to consume and curate online content – that is, their consumptive curation affordances. Understanding this phenomenon is perhaps one of the most crucial challenges of our time.

Drawing from mediation theory (Lievrouw, 2014), I have proposed that our approach should consider social media consumptive curation affordances as relational, co-shaped by the technology, users’ practices and social arrangements. While people may attempt to curate their feeds based on personal preferences, skills and social circumstances (Davis, 2020), they must also strategise and interact with interface features to do so. The interface establishes an environment in which consumptive curation occurs. By designing the interface in a particular way – making some features and information more or less accessible – social media platforms exert some degree of control over affordance mechanisms (Davis, 2020) and therefore over the consumptive curation affordances of the platform.

In this article, I have critically analysed how – and why – TikTok’s interface design makes certain consumptive curation practices easier to perform than others. Bringing together the concept of friction levels (5Rights Foundation, 2021), Davis’s (2020) conceptualisation of affordance mechanisms and Alvarado and Waern’s (2018) algorithmic experience framework, my analysis revealed that, above anything else, TikTok strongly encourages a passive and limited consumptive curation, requiring minimum engagement from users to keep algorithmic recommendations flowing endlessly. At the same time, TikTok refuses to give more information that would allow users to strategise other types of consumptive curation practices.

A key strength of this study lies in its focused examination of TikTok’s interface – analysing it as the foundational environment upon which consumptive curation takes place. While this approach – based on the walkthrough method (Light et al., 2018) – has inherent limitations, as it is influenced by my own personal and social context as a researcher, it serves as essential groundwork for future research. Following the relational understanding of affordances, these empirical findings set the foundation for user-centred studies on how people enact consumptive curation practices influenced by the environment TikTok has set up. Engaging with real users will serve to deepen our understanding of how strategies such as friction levels influence consumptive curation practices, and how users’ personal and social contexts play a role.

Although the empirical findings apply directly to TikTok, the theoretical framing and methodological approach developed here can be repurposed to critically question how platforms employ their interfaces to shape consumptive curation affordances in the broader social media landscape. In the case of TikTok, its guiding mantra for users seems to be clear: watch, scroll, repeat.

Acknowledgements

I would like to express my gratitude to my supervisors, Professors Trisha Meyer, Emilia Gómez Gutiérrez and Jo Pierson, as well as to my PhD committee member, Professor Leah Lievrouw, for their invaluable feedback and insightful comments on this article.

Funding

The author disclosed receipt of the following financial support for the research, authorship and/or publication of this article: This work was realised with the collaboration of the European Commission Joint Research Centre under the Collaborative Doctoral Partnership Agreement No 36104.

Declaration of conflicting interests

The author declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article.