Abstract

Data-driven political campaigning strategies often remain a black box for citizens; however, educational interventions can enhance their understanding, conscious evaluations, and skills. We term this combination digital campaign competence (DCC). We conducted a pre-registered online experiment in Austria (N = 553) using a 2 × 2 between-subjects design plus a control condition to compare intervention formats (reading a voter guide vs. playing a campaign game) and content framing (emphasizing the risks vs. benefits of data-driven campaigning). Results show no significant effect of framing on DCC. However, the formats differ, with the non-interactive voter guide proving the most effective. Contrary to our expectations, the voter guide emphasizing the risks of data-driven political campaigning enhanced conceptual understanding, influenced evaluative perceptions, and aided the development of skills to detect highly targeted ads. We argue that innovative interventions do not always guarantee success in enhancing competencies.

Introduction

Citizens play a dual role in data-driven campaigns (hereafter DDC): they are both the targets of political ads and their enablers, because their data fuel data-driven political advertising. Depending on their resources, parties can access and analyze voter data, generate insights into their target audiences, and evaluate and optimize their campaign interventions (Dommett, Barclay, & Gibson, 2024; Dommett, Kefford, & Kruschinski, 2024). These data can serve a positive purpose by delivering tailored content to citizens, but they can also be misused to manipulate or neglect certain groups of citizens (Zuiderveen Borgesius et al., 2018, p. 92). Parties and candidates worldwide allocate substantial election funds to targeted advertising on Meta platforms. These expenditures range from $700,000 in Austria’s 2022 presidential election to multi-million-dollar budgets in Germany and the Netherlands in the 2021 elections, and parties and candidates in the United States spent $400 million in 2020 (Votta et al., 2024). These investments highlight the significance of data for parties and large platforms, and they underline the importance of citizens recognizing the value of their data in political campaigns.

The European Commission’s Digital Services Act underscores the need to empower citizens to navigate data-driven advertising landscapes (Lomas, 2021). Yet educating citizens about data-driven advertising has received limited scholarly attention, and studies that integrate citizens’ evaluations of and behavioral responses to such advertising are even scarcer (Ham, 2017). To address this gap, our study investigates the impact of interventions on citizens’ understanding, evaluation, and skills in dealing with data-driven political campaigns, which together we call digital campaign competence (hereafter DCC). Building on inoculation theory (McGuire, 1961) and framing theory (Entman, 1993), we argue that two central aspects of an intervention must be considered, namely its gamification and the framing of its message, to comprehensively understand citizens’ competence in dealing with DDC, including their understanding, evaluations, and skills (Friestad & Wright, 1994).

More specifically, while it is unclear how and to what extent citizens’ competence can be increased, previous research has shown that more interactive educational interventions are helpful tools for increasing awareness of misinformation (Roozenbeek & van der Linden, 2019) and behavioral advertising (Lorenz-Spreen et al., 2021). In addition, giving a story a specific narrative through framing can affect how people perceive and respond to information, as shown, for example, with task framing in citizen-science games (Tang & Prestopnik, 2019) or with “loss” and “gain” frames in environmental behavior (Ahn et al., 2015). This is especially relevant because people perceive data-driven advertising as more harmful than beneficial, but highlighting its advantages can help reduce citizens’ resistance to its use (Ham, 2017). While negative implications of data-driven tactics, such as the uneven distribution of information, are detrimental to democracy (Bayer, 2020; Zuiderveen Borgesius et al., 2018), positive aspects such as receiving more relevant information should not be overlooked either (Dommett et al., 2022). For example, targeted ads might inform less politically interested people about politicians’ standpoints during election campaigns. Rather than examining these two aspects of interventions (i.e., interactivity and framing) in isolation, this study investigates how they affect citizens both individually and in combination.

We conducted a pre-registered survey experiment (N = 553) on the effects of educational interventions on DCC in the Austrian context. This research contributes to the communication literature in three ways. First, we compare the effectiveness of cognitive training techniques with different levels of interactivity by expanding the use of highly interactive gamified education approaches to the data-driven political advertising sphere. Second, we look at different framings of DDC techniques by highlighting either the benefits or the risks for individuals, society, or the political party. Third, we investigate to what extent all three components (i.e., understanding, evaluation, skills) of DCC are affected by an intervention, instead of only focusing on one aspect of this multifaceted competency.

Boosting Campaign Competences

Given that today’s campaigns lie at the intersection of technology and politics, regulatory bodies and social media architecture can and should limit unwanted influences on citizens (Kozyreva et al., 2020). Our focus, however, lies on enhancing citizens’ competence to navigate DDC. According to previous literature on defining competence (Delamare Le Deist & Winterton, 2005), three components are essential: understanding, evaluation, and the skills needed to respond effectively. First, citizens need a comprehensive understanding of several key factors related to DDC. This includes awareness of sponsored content and its persuasive influence (Boerman et al., 2018), the economic model behind data-driven personalized advertising (hereafter DDPA; Kruschinski & Haller, 2017), and the regulations surrounding DDPA (Dobber et al., 2019), as well as online privacy literacy (Masur, 2020). Based on this understanding, citizens can evaluate the appropriateness of data-driven tactics, form their (dis)liking of and skepticism toward them (Boerman et al., 2018; Rozendaal et al., 2011), and assess their personal relevance. Finally, citizens need the skills to react effectively. This includes correctly identifying content as targeted, critically reflecting on its intent (Boerman et al., 2014), and using privacy-enhancing techniques or technologies (Ireland, 2021; Kaaniche et al., 2020). The goal of campaign competence is to enable citizens to benefit from DDC while minimizing unintended negative consequences. This can involve engaging more with relevant content to receive more of it, or limiting the personal data shared online to reduce exposure to targeted political ads. Boosting competence means helping people develop their cognitive and behavioral abilities so that they can make informed and beneficial choices.

Interventions in the field of fake news have proven effective in equipping people with the knowledge and skills to identify and resist misinformation (Basol et al., 2020; Roozenbeek & van der Linden, 2019). Similarly, enhancing people’s familiarity with persuasive advertising strategies can improve their ability to recognize targeted advertisements: Lorenz-Spreen et al. (2021) found that reflecting on one’s personality increases the accuracy of identifying personality-targeted ads. Theoretically, these studies are grounded in inoculation theory, which applies a biological analogy to information processing: exposure to weak doses of a virus stimulates antibody production and confers resistance against future infections (McGuire, 1961). Applied to communication science, pre-emptive explanations of commonly used fake news strategies can reduce their influence when people later encounter them. Likewise, if people reflect on their personality traits (e.g., introversion or extroversion), they can better identify behavioral advertising that exploits this information.

Having established the importance of enhancing competences through interventions, the next crucial step is to explore the most effective approaches to achieve this goal.

Gamification and Framing Strategies

There are various approaches to increasing awareness of persuasive online architectures and potentially manipulative spaces, and to fostering individuals’ motivation to engage with them effectively (Hertwig & Grüne-Yanoff, 2017). In the following, we examine two aspects of educational interventions, their format and their content, as potential avenues for boosting competences.

Boosting Competences Through Gamification

Highly interactive educational formats, such as games, are increasingly recognized as more effective tools for learning and informing citizens than traditional methods that only provide information (de Freitas, 2018, p. 80). Gamification in education refers to applying game design elements in non-game contexts. Game-based learning approaches are said to increase motivation to engage with a topic by drawing on basic gamification techniques such as competitive elements and rewards for players (Al-Azawi et al., 2016). As a consequence, they can lead to behavioral changes in players because new skills and cognitions are trained (Al-Azawi et al., 2016; de Freitas, 2018). One example is the “Bad News Game,” which improved competences in an entertaining way (Basol et al., 2020; Roozenbeek & van der Linden, 2019). Playing as fake news creators on Twitter, players learn about six common misinformation techniques through choice-based elements and rewards. The replication study by Basol et al. (2020) demonstrated that active educational interventions can successfully instill reliability assessments and help people spot fake news items. While interactivity on its own does not cause better learning, it is associated with more attention and motivation to engage with a topic (Moreno & Mayer, 2007). Nevertheless, these authors also observe that, unlike non-interactive environments, gamified formats could induce cognitive overload because they demand more attention and processing. However, the interactivity of games might also give individuals a sense of control, thereby aiding cognitive processing (Chung & Zhao, 2004).

While gamified interventions are inherently interactive, non-gamified interventions may or may not incorporate interaction. In non-interactive, non-gamified educational formats (e.g., narrated animations, text on websites), the learners’ actions do not affect how information is presented (Moreno & Mayer, 2007, p. 310), yet such formats can still foster critical thinking (Basol et al., 2020). In contrast to the game, this study uses a non-gamified “voter guide” that resembles a standard website, is supplemented with videos, and prompts participants to reflect actively. Basol et al. (2020) highlight the importance of distinguishing between interactive and non-interactive formats when examining learning outcomes, rather than concentrating solely on gamification. Green et al. (2022) found that interactive skill development was more effective in enhancing resistance to persuasion than a non-interactive method (i.e., solely providing information). In addition, the study by Lorenz-Spreen et al. (2021) underscores the importance of active learning in the targeted advertising context. The authors compared passive inoculation (simply providing information about personality dimensions) with active inoculation (asking participants to reflect on their own personality). Merely informing participants was insufficient; prompting reflection on personality dimensions was necessary for accurately detecting targeted ads. Reflection helps people integrate new information and leads to better learning (Moreno & Mayer, 2007). In this study, we prompt participants reading the voter guide to reflect on how their data can be used by forming implementation intentions (Gollwitzer, 1993), which link a specific situation (e.g., “The next time I see an online post of a political party”) with an intention (e.g., “I can check whether the post is sponsored or not”).

To summarize, (inter)active educational interventions were found to decrease the perceived reliability of misinformation in both a gamified version (Roozenbeek & van der Linden, 2019) and a non-gamified version (Green et al., 2022). Prompting active reflection is crucial for detecting targeted ads, whereas merely raising awareness is not sufficient (Lorenz-Spreen et al., 2021). In response to the call from Roozenbeek and van der Linden (2019) to explore the boundaries of inoculation theory beyond game environments, and given the limited knowledge about more- and less-active inoculation in the context of data-driven political advertising, we aim to contribute to the literature by comparing a gamified educational intervention with a non-gamified educational intervention that also requires participants’ active reflection. We anticipate that both intervention formats will result in learning, with the gamified intervention yielding greater outcomes:

Hypothesis 1 (H1). Playing a game about data-driven political advertising (vs. reading a voter guide) leads to an increase in DCC.

Boosting Competences Through Risk and Benefit Frames

Next, we investigate how the framing of content affects the way people are informed. Frames define problems and solutions and provide the basis for moral judgments (Entman, 1993). Information can be framed in positive or negative terms, such as gains and losses or benefits and risks, thereby influencing citizens’ opinions and behaviors.

Framing information about DDC could shape citizens’ understanding, evaluation, and willingness to develop or apply skills to respond to it (e.g., use more or less privacy-enhancing technology [PET]).

Given the data-exploitation scandals surrounding data-driven political advertising (e.g., Cambridge Analytica; Hu, 2020), media, governments, and scholars alike have often discussed DDC with regard to its risks for individuals and democracy (Bennett & Lyon, 2019; Dommett et al., 2022). Commonly named threats concern citizens (e.g., exclusion of voter groups), political parties (e.g., more power for commercial entities), or public opinion (e.g., lack of transparency regarding political standpoints; Zuiderveen Borgesius et al., 2018). Less often discussed are the potential benefits of targeted advertising (Dommett et al., 2022), such as relevant content for citizens, efficient campaign spending for parties, and the engagement of politically disinterested citizens who tend to bypass traditional media but can be reached through social media (Zuiderveen Borgesius et al., 2018). Dommett et al. (2022) found that citizens’ acceptance of targeting practices varies with the type of data used, suggesting that the rejection of DDC is not uniform. Citizens assess social exchanges (e.g., personal data for political information) in terms of costs and rewards (see social exchange theory; Emerson, 1976). The focus on risks in public discussions of DDC (e.g., Dommett et al., 2022) might skew these perceptions. In addition, negatively framed information affects citizens more than positive information because of a negativity bias (e.g., Rozin & Royzman, 2001): negative information demands greater cognitive processing (Fiske, 1980). People tend to take protective actions when they perceive risks to outweigh potential benefits (Ham, 2017). While Kirmani and Campbell (2004) suggest that acceptance of persuasive strategies depends on perceived benefits, Ham (2017) found in the context of online behavioral advertising that perceived risks are more strongly related to behaviors.
This means that even if people accept inherently persuasive data-driven advertising strategies, perceived benefits might not necessarily lead to behavioral changes. Hence, we expect that:

Hypothesis 2 (H2). Exposure to a risk-framed (vs. benefit-framed) educational intervention about data-driven political advertising leads to an increase in DCC.

As mentioned before, educational interventions incorporating gamification or interactive components are effective in helping people acquire new information and skills across various contexts. In addition, content that emphasizes risks tends to grab attention and prompt behavioral changes more effectively than content that emphasizes benefits. Thus, combining gamified educational interventions with an emphasis on potential risks seems most promising for enhancing awareness of DDC, encouraging conscious evaluations of information, and ultimately driving behavioral changes or adaptations. While research on the combined impact of interactivity levels and gain/loss message framing exists for environmental behavior (Ahn et al., 2015), such insights are lacking in the context of DDC. We therefore propose the following research question:

RQ. To what extent does the mode of an educational intervention about data-driven political advertising (reading a voter guide vs. playing a game) and the framing (risk-framed vs. benefit-framed) of an educational intervention interact and affect citizens’ DCC?

Method

Experimental Design

We conducted a pre-registered 2 × 2 between-subjects experiment with a control group. The experimental groups received interventions, while the control group only completed the questionnaire. The first factor was the mode of the educational intervention, which differed in its level of interactivity: participants either played a game or read a voter guide. Both formats covered four commonly discussed DDC topics: (1) data collection and surveillance, (2) financial resources and data sharing with Google and Meta, (3) data-driven tactics (A/B testing, geo-targeting, search engine optimization), and (4) persuasion tactics, such as political parties positioning themselves as single-issue parties. To ensure ecological validity, we collaborated with the NGO Tactical Tech and adapted their original content and design for the stimulus materials (see Appendix). In the gamified intervention, which lasted approximately 26 minutes, players role-played as campaign managers for a fictional party. It included interactive features, let players choose which content came next, provided feedback on their choices, and rewarded them with badges after they completed each topic. The voter guide required less engagement from participants, as it resembled a standard website with two short videos. The guide encouraged active reflection but lacked feedback and gamified elements; it lasted approximately 30 minutes.

The second factor was the framing of the intervention, which emphasized either the benefits or the risks of the DDC topics covered. For example, the risk-frame condition highlighted the threat of excluding people from information when location-based data are used in political campaigns, while the benefit-frame condition emphasized that location-based data keep individuals from being overwhelmed by excessive information. The conditions presented the same information but framed it differently, with risks displayed via a red risk meter and benefits via a green benefit meter.

Sample

An a priori power analysis in R was conducted based on the mean differences expected for the main effect from previous research in similar contexts (Lorenz-Spreen et al., 2021; Roozenbeek & van der Linden, 2019). It suggested that at least 300 participants were needed to detect the expected mean differences for the main effect with a power of 1.00 (α = .05). To test for interaction effects, we aimed for 500 participants. The study was reviewed by the Ethics Review Board at the University of Amsterdam (filed as FMG-501_2022). In March 2023, the polling company Dynata collected the sample (N = 553) using quota sampling based on the distribution of age and gender in Austria: age (M = 44.6, SD = 16.1), gender (51.2% female). Education levels were diverse, with 42.5% having higher education, 29.7% middle levels of education, and 27.8% lower levels of education. To ensure the data’s validity and reliability, non-completes, duplicates, and individuals who failed the attention check after the second attempt were excluded from the analysis. Data and code are available on OSF (https://osf.io/ahj9t). The analyses reported here include outliers; analyses excluding outliers are reported in Appendix C.
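The power analysis was run in R, and the expected effect sizes are not reported in this section. As a rough illustration of the logic only, the Python sketch below computes the per-group sample size for a two-sided comparison of two means with the normal approximation n = 2(z_{1−α/2} + z_{power})² / d². The Cohen's d values are hypothetical placeholders rather than the study's inputs, and the two-group formula is a simplification that ignores the five-condition design.

```python
import math
from statistics import NormalDist

def n_per_group(d: float, alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate participants per group for a two-sided, two-sample mean comparison.

    Normal approximation: n = 2 * (z_{1 - alpha/2} + z_{power})^2 / d^2,
    where d is the standardized mean difference (Cohen's d).
    """
    if not 0 < power < 1:
        # z_{power} is infinite at power = 1, so the formula needs power < 1
        raise ValueError("power must lie strictly between 0 and 1")
    z = NormalDist()  # standard normal distribution
    z_alpha = z.inv_cdf(1 - alpha / 2)
    z_power = z.inv_cdf(power)
    return math.ceil(2 * (z_alpha + z_power) ** 2 / d ** 2)

# Hypothetical effect sizes for illustration; not the study's expected differences.
for d in (0.3, 0.5):
    print(f"d = {d}: {n_per_group(d)} participants per group")
```

Smaller assumed effects drive the required sample up quadratically, which is why interaction tests typically call for more participants than main effects.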

Procedure

In the online experiment, we first obtained participants’ informed consent and measured sociodemographics and other possible predictors (see pre-registration: https://osf.io/9rfma). We then randomly allocated participants to either the control group or one of the four experimental conditions. Next, participants completed a post-treatment survey measuring the dependent variables, followed by a comprehension check. At the end of the questionnaire, we administered manipulation checks for the mode and framing of the intervention. During debriefing, we referred all participants, including the control group, to Tactical Tech’s Data Detox Kit to ensure equal access to the educational benefits of participating.

The randomization was successful, as the five conditions did not differ significantly in age, F(4, 548) = 1.11, p = .349; gender, χ2(4, N = 553) = 1.86, p = .762; educational level, χ2(20, N = 553) = 19.47, p = .491; use of ad blockers, χ2(8, N = 553) = 5.53, p = .806; political interest, F(4, 548) = 0.27, p = .90; or need for cognition, F(4, 548) = 0.46, p = .763.
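The χ2 statistics above compare category frequencies across the five conditions. A minimal Python sketch of the Pearson statistic for an r × c contingency table follows; the counts in the usage lines are invented for illustration. With five conditions as rows and two gender categories as columns, df = (5 − 1)(2 − 1) = 4, which matches the degrees of freedom reported for gender. The p-value would then come from the upper tail of the χ2 distribution (e.g., `scipy.stats.chi2.sf(stat, df)` if SciPy is available).

```python
def chi_square_independence(table):
    """Pearson chi-square statistic and df for an r x c table of observed counts.

    Rows could be experimental conditions and columns the categories of a
    background variable; every row and column total must be positive.
    """
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    n = sum(row_totals)
    stat = 0.0
    for row, row_total in zip(table, row_totals):
        for observed, col_total in zip(row, col_totals):
            expected = row_total * col_total / n  # count expected under independence
            stat += (observed - expected) ** 2 / expected
    df = (len(table) - 1) * (len(table[0]) - 1)
    return stat, df

# Invented counts: five conditions (rows) by two gender categories (columns).
toy_counts = [[20, 22], [18, 21], [19, 19], [21, 20], [22, 18]]
print(chi_square_independence(toy_counts))
```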

Measures

Unless stated otherwise, items were measured on a 7-point Likert-type scale (1 = lowest level, 7 = highest level). The English translation of all items is available on OSF (https://osf.io/9rfma). Multiple items relating to understanding, evaluation, and skills in the context of DDC were examined to allow for a nuanced assessment of the multifaceted concept of DCC.

Dependent Variables

Understanding

A scale composed of eight true statements measured people’s self-reported conceptual understanding of DDPA; higher scores indicate greater understanding of data-driven political campaigning (Cronbach’s α = .79, M = 4.77, SD = 1.32). The statements referred to the persuasive intent, the political source, the persuasive tactics, the economic model, data collection and surveillance practices by political parties and online service providers, as well as data-protection laws and technical knowledge related to data privacy (based on Boerman et al., 2018; General Data Protection Regulation [GDPR], n.d.; Macintyre & Cladis, n.d.; Masur, 2020; Rozendaal et al., 2016). For example, to assess understanding of the political source of DDC, participants considered the following statement: “Political parties pay social media and search engines like Google to show campaign ads to certain groups of people but not to others.”
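For readers less familiar with the reliability coefficient reported for this scale, Cronbach’s α compares the summed item variances with the variance of the total score: α = k/(k − 1) · (1 − Σσ²ᵢ / σ²ₜ). The Python sketch below implements this textbook formula with the standard library; the toy response matrix is hypothetical, not study data.

```python
from statistics import variance

def cronbach_alpha(responses):
    """Cronbach's alpha; `responses` holds one list of k item scores per respondent."""
    k = len(responses[0])
    if k < 2:
        raise ValueError("alpha needs at least two items")
    items = list(zip(*responses))  # one tuple of scores per item
    item_variance_sum = sum(variance(item) for item in items)
    total_variance = variance([sum(person) for person in responses])
    return k / (k - 1) * (1 - item_variance_sum / total_variance)

# Hypothetical 1-7 ratings from four respondents on three items (not study data).
toy = [[5, 6, 5], [3, 3, 4], [6, 7, 6], [2, 3, 2]]
print(round(cronbach_alpha(toy), 2))
```

Because α depends only on the ratio of item variances to total-score variance, using sample or population variances gives the same result as long as the choice is consistent.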

Evaluation

Evaluative perceptions of data-driven targeting with election ads were assessed with semantic differentials for skepticism, appropriateness, and liking, based on Boerman et al. (2018); we additionally measured relevance. Skepticism (trustworthy–untrustworthy; honest–dishonest; M = 5.21, SD = 1.44, Spearman-Brown’s r = .86) and appropriateness (problematic–unproblematic; unacceptable–acceptable; M = 3.10, SD = 1.58, Spearman-Brown’s r = .823) were each assessed with two items. Liking (disliking–liking; M = 3.12, SD = 1.63) and relevance (irrelevant–relevant; M = 4.60, SD = 1.60) were each measured with a single item.

Skills

Participants were shown a fictitious person’s social media profile and then asked to spot and react to data-driven ads. First, we assessed participants’ perceptions of the degree of targeting of five posts/ads received by the fictitious person. Four were sponsored ads, and one was a regular, non-sponsored post. Three ads were highly targeted toward the fictitious person (Cronbach’s α = .59, M = 5.59, SD = 1.21). Confirmatory factor analysis indicated good model fit, χ2(6) = 161.736, p < .001, TLI (Tucker-Lewis Index) = 1.00, CFI (Comparative Fit Index) = 1.00, RMSEA (Root Mean Square Error of Approximation) = .00, SRMR (Standardized Root Mean Square Residual) = .00. One ad and one post were not tailored toward the fictitious person (Spearman-Brown’s r = .53, M = 4.50, SD = 1.66). Higher values indicate that participants judged the degree of targeting (i.e., highly targeted or not targeted) correctly.

Another skill measured was the ability to identify targeting criteria. Two of the ads/posts (one highly targeted, one not targeted) were shown to participants again, and participants were asked to judge how (un)likely it was that various targeting criteria had been used to distribute them. Participants considered seven criteria: age, gender, location, hobbies, interests, job, and relationship status. For each criterion, participants assessed whether it was likely or unlikely to have been used for targeting. In total, participants could score 14 points (i.e., seven points per ad/post; M = 9.27, SD = 2.80).
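The 0–14 score can be read as one point per correct likely/unlikely judgment, seven criteria for each of the two ads. The sketch below assumes a hypothetical answer key: the correct key is defined by the stimulus materials and is not reproduced in this section, so the ad labels and key values are placeholders.

```python
CRITERIA = ("age", "gender", "location", "hobbies", "interests", "job", "relationship status")

# Hypothetical answer key: True = criterion likely used, False = unlikely.
# Placeholder values only; the study's actual key is not given in this section.
ANSWER_KEY = {
    "targeted_ad": {c: True for c in CRITERIA},
    "untargeted_post": {c: False for c in CRITERIA},
}

def score_targeting_criteria(judgments: dict, key: dict = ANSWER_KEY) -> int:
    """One point per criterion judged in line with the key; maximum = 7 * number of ads."""
    return sum(
        judgments[ad][criterion] == expected
        for ad, per_ad in key.items()
        for criterion, expected in per_ad.items()
    )

# A respondent who answers "likely" for every criterion on both ads.
everything_likely = {ad: {c: True for c in CRITERIA} for ad in ANSWER_KEY}
print(score_targeting_criteria(everything_likely))  # → 7 under this placeholder key
```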

Two items, adapted to the data-driven advertising context, were used to assess critical processing (Boerman et al., 2014). Participants were asked to indicate to what extent they were critical about influential ad tactics when they reviewed the ads shown earlier (M = 4.53, SD = 1.55, Spearman-Brown’s r = .75).

Intent to use PET was assessed with four items. Participants were asked to what extent they would use do-not-track browsers, use privacy settings, restrict personal information online, and obfuscate personal information online (Cronbach’s α = .72, M = 4.39, SD = 1.65; Data Detox Kit, n.d.; Ireland, 2021; Kaaniche et al., 2020). Confirmatory factor analysis indicated good model fit, χ2(8) = 15.951, p < .001, TLI = 0.90, CFI = 0.97, RMSEA = .11, SRMR = .03. To mitigate potential bias from their current privacy practices, we instructed participants to choose “very likely” if they already used the technique or technology.

To measure the use of privacy-enhancing technologies and techniques (M = 5.15, SD = 1.56), participants were asked if they wanted to take immediate control over their digital privacy and security. Only those who responded with “likely” or “very likely” were informed that they would receive access to the Data Detox Kit developed by Tactical Tech at the end of the study.

Pilot Test and Manipulation Checks

A pilot study (N = 102) confirmed the validity and credibility of the independent variables (mode and framing of the educational intervention). For the manipulation check in the main study, we grouped the experimental conditions by mode (i.e., game or guide) and framing (i.e., risk or benefit). Analysis of variance (ANOVA) tests and Tukey post hoc tests confirmed that the manipulations were successful (see Appendix A).

Results

Format: Game vs. Voter Guide vs. Control

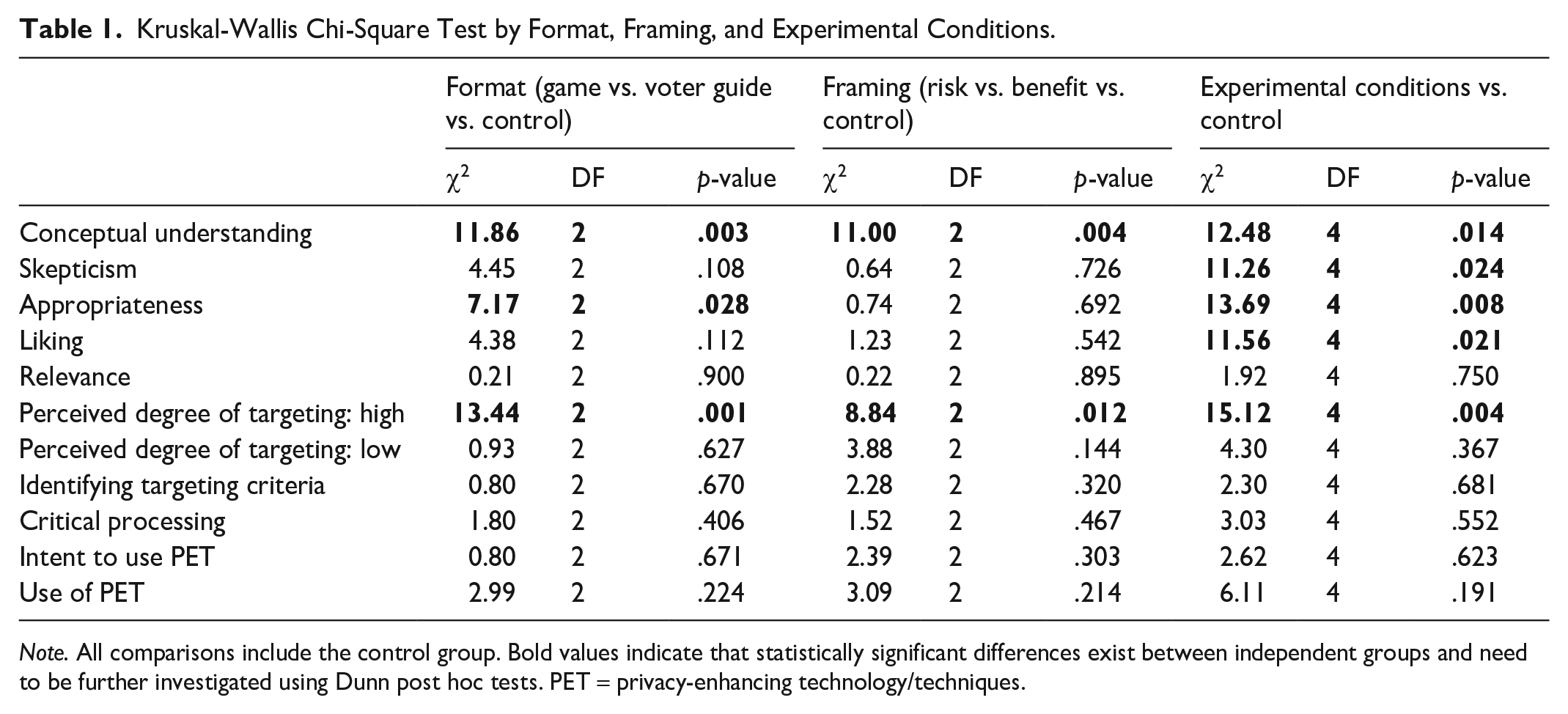

To test the first hypothesis, we examined whether game-based educational interventions are more successful in instilling DCC among citizens than the voter-guide conditions. To do this, we pooled the gamified conditions and the voter-guide conditions, each regardless of framing. First, we performed a Kruskal–Wallis χ2 test (see Table 1), which indicated significant group differences for conceptual understanding, appropriateness perceptions, and the detection of highly targeted ads. Subsequently, we performed Dunn post hoc tests with Bonferroni corrections to determine which formats differ significantly from one another.

Table 1. Kruskal–Wallis Chi-Square Test by Format, Framing, and Experimental Conditions.

Note. All comparisons include the control group. Bold values indicate that statistically significant differences exist between independent groups and need to be further investigated using Dunn post hoc tests. PET = privacy-enhancing technology/techniques.
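Because the Kruskal–Wallis test compares groups on pooled ranks rather than raw scores, a short sketch may clarify what it computes. The Python code below is a generic illustration rather than the analysis code on OSF: it computes the H statistic with the usual correction for tied ranks (ties are common with 7-point scales). H is referred to a χ2 distribution with k − 1 degrees of freedom; `scipy.stats.kruskal` returns the same statistic together with a p-value.

```python
from collections import Counter
from itertools import chain

def average_ranks(values):
    """1-based ranks of `values`; tied values share the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # extend j over the run of tied values starting at sorted position i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return ranks

def kruskal_wallis_h(*groups):
    """Tie-corrected Kruskal-Wallis H and its degrees of freedom (k - 1)."""
    pooled = list(chain.from_iterable(groups))
    n = len(pooled)
    ranks = average_ranks(pooled)
    rank_term, start = 0.0, 0
    for g in groups:
        rank_sum = sum(ranks[start:start + len(g)])
        rank_term += rank_sum ** 2 / len(g)
        start += len(g)
    h = 12 / (n * (n + 1)) * rank_term - 3 * (n + 1)
    tie_sum = sum(t ** 3 - t for t in Counter(pooled).values())
    h /= 1 - tie_sum / (n ** 3 - n)  # undefined if every score is identical
    return h, len(groups) - 1

print(kruskal_wallis_h([1, 2, 3], [4, 5, 6], [7, 8, 9]))  # H ≈ 7.2 with df = 2
```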

Compared with the control group, both the game conditions (z = 2.39, p = .017, p.adj = .050, r = .13) and the voter-guide conditions (z = 3.43, p < .001, p.adj = .002, r = .18) increased participants’ conceptual understanding of data-driven political advertising. The game and voter-guide conditions did not differ significantly in conceptual understanding of DDPA. We do, however, find a significant difference between the game and voter-guide conditions in perceptions of the appropriateness of DDC: participants in the voter-guide conditions considered DDC strategies less appropriate than participants in the game conditions (z = −2.62, p = .009, p.adj = .026, r = −.13). Furthermore, participants in the voter-guide conditions performed significantly better than the control group in detecting highly targeted ads (z = 3.59, p < .001, p.adj < .001, r = .19), but not better than participants in the game conditions. Contrary to H1, the game interventions did not prove to be the most effective format. The voter-guide conditions reduced perceived appropriateness compared with the game-based interventions and enhanced understanding and the ability to identify highly targeted ads compared with the control group.
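The Dunn comparisons can be sketched in the same spirit: each pair of groups is compared on its mean pooled rank, the two-sided p-value is multiplied by the number of pairwise comparisons m = k(k − 1)/2 (Bonferroni; 3 pairs for three groups, 10 for five, assuming all pairs are corrected), and an effect size r = z/√(n₁ + n₂) is attached. This is a generic Python sketch rather than the analysis code on OSF, and the r convention is an assumption, although it reproduces reported values with the group sizes in the note to Table 2 (e.g., 3.38/√(122 + 128) ≈ .21 for the voter-guide risk condition vs. control). Group labels and scores below are placeholders.

```python
import math
from collections import Counter
from itertools import chain, combinations
from statistics import NormalDist

def average_ranks(values):
    """1-based ranks of `values`; tied values share the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return ranks

def dunn_bonferroni(groups):
    """Pairwise Dunn z-tests on pooled ranks with Bonferroni-adjusted p-values.

    `groups` maps a label to a list of scores. Returns one tuple per pair:
    (label_a, label_b, z, p, p_adj, r), where z > 0 means label_a ranks higher.
    """
    labels = list(groups)
    pooled = list(chain.from_iterable(groups[g] for g in labels))
    n_total = len(pooled)
    ranks = average_ranks(pooled)

    mean_rank, size, start = {}, {}, 0
    for g in labels:
        size[g] = len(groups[g])
        mean_rank[g] = sum(ranks[start:start + size[g]]) / size[g]
        start += size[g]

    # Variance term of Dunn's test, corrected for tied ranks.
    tie_term = sum(t ** 3 - t for t in Counter(pooled).values()) / (12 * (n_total - 1))
    base = n_total * (n_total + 1) / 12 - tie_term

    pairs = list(combinations(labels, 2))
    m = len(pairs)  # k(k - 1) / 2 comparisons for the Bonferroni correction
    results = []
    for a, b in pairs:
        se = math.sqrt(base * (1 / size[a] + 1 / size[b]))
        z = (mean_rank[a] - mean_rank[b]) / se
        p = 2 * (1 - NormalDist().cdf(abs(z)))
        results.append((a, b, z, p, min(1.0, p * m), z / math.sqrt(size[a] + size[b])))
    return results

# Toy 1-7 scores for three hypothetical groups (not study data).
toy = {"control": [3, 4, 4, 5, 3, 4], "guide": [5, 6, 5, 6, 7, 5], "game": [4, 5, 5, 4, 6, 5]}
for a, b, z, p, p_adj, r in dunn_bonferroni(toy):
    print(f"{a} vs {b}: z = {z:.2f}, p.adj = {p_adj:.3f}, r = {r:.2f}")
```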

Framing: Risk vs. Benefit vs. Control

To test the second hypothesis, we examined whether risk-framed educational interventions are more effective in increasing DCC among citizens than benefit-framed conditions. To do this, we pooled the risk-framed conditions and the benefit-framed conditions, each regardless of format. The Kruskal–Wallis test indicates significant group differences in conceptual understanding and in the perceived degree of targeting of highly targeted ads. Post hoc tests show that both benefit framing (z = 2.66, p = .008, p.adj = .023, r = −.15) and risk framing (z = 3.21, p < .001, p.adj < .01, r = .17) increase conceptual understanding compared with the control group. Risk-framed and benefit-framed educational interventions do not differ in how much they increase citizens’ understanding of DDC. Likewise, both benefit-framed (z = 2.54, p = .011, p.adj = .033, r = .14) and risk-framed educational interventions (z = 2.79, p = .005, p.adj = .016, r = .15) improve the detection of highly targeted ads compared with the control group. H2 is not supported. We conclude that the framing of educational interventions does not seem to have a significant impact. Instead, our results suggest that providing the information itself is crucial, irrespective of how it is framed.

Interaction Between Formats and Frames

Finally, we examine the interaction of educational intervention formats and framings on citizens’ DCC. The Kruskal–Wallis test shows significant group differences in conceptual understanding, skepticism, appropriateness, liking, and detection of highly targeted ads (Table 1). Dunn post hoc tests (Table 2) show that the risk-framed voter-guide condition is the most effective, albeit not consistently across outcomes. In terms of understanding, the voter-guide risk condition increases understanding of data-driven political campaigning compared with the control condition (z = 3.38, p < .001, p.adj = .007, r = .21); no other group differences are significant. Participants in the voter-guide risk condition exhibit higher levels of skepticism (z = 3.23, p < .001, p.adj = .012, r = .22) and lower levels of perceived appropriateness (z = −3.60, p < .001, p.adj = .003, r = −.24), and they like DDPA significantly less (z = −3.19, p < .001, p.adj = .014, r = −.22) than participants in the gamified risk condition. Interestingly, skepticism toward and liking of DDPA were significantly affected only when we considered the interaction between format and framing. Finally, participants in the voter-guide risk condition are significantly better at detecting highly targeted ads than the control condition (z = 3.62, p < .001, p.adj = .003, r = .23). Based on our findings, a non-gamified, more educational format, specifically a voter guide that emphasizes the risks of data-driven political campaigning techniques, appears to be a highly effective approach for enhancing understanding of data-driven political advertising, influencing evaluations, and developing the skills to detect highly targeted ads.

Table 2. Significant Mean Differences Based on Dunn Post Hoc Tests With Bonferroni Corrections.

Note. Per row (i.e., dependent variable), only two conditions differ significantly from one another. Measures range from 1 to 7, except identifying targeting criteria (0–14). N(control) = 128, N(Game-Risk) = 98, N(Game-Benefit) = 99, N(VG-Risk) = 122, N(VG-Benefit) = 106.

***p adjusted < .001, **p adjusted < .01, *p adjusted < .05.

Discussion and Conclusion

Citizens are increasingly exposed to data-driven content online, from health advice to entertainment and political information, all determined to a large extent by their personal data. While this can provide relevant information and help them make informed decisions, it also risks excluding certain citizens from content, potentially impacting online discourse. In particular, being excluded from political information can limit the diversity of viewpoints they encounter, threatening the principles of a healthy democracy. The purpose of this study was to investigate how to support citizens in enhancing their campaign competence by recognizing, evaluating, and responding to data-driven techniques used in political campaigns. This multifaceted competence is crucial because a lack of it may prevent citizens from fully benefiting from DDC and leave them vulnerable to unconscious informational bias and data exploitation. To our knowledge, this study is one of the first to use an online experimental setup to examine how educational interventions can foster the competencies needed in a data-driven political context.

Our first key finding indicates that reinventing the wheel might not be necessary when it comes to boosting DCC: the "voter guide," a non-gamified educational format, outperformed both the interactive gamified condition and the control condition, especially when the text highlighted the risks associated with data-driven political campaigning techniques. For example, only participants who read the risk-framed voter guide showed a significantly higher understanding of data-driven political advertising and were significantly better at identifying highly targeted advertisements than the control group. The other experimental interventions also yielded higher understanding and ad recognition than the control group, but these mean differences were not significant. Based on recent studies using inoculation theory, we expected that both the gamified intervention (e.g., Roozenbeek & van der Linden, 2019) and the voter guide that encourages active reflection (e.g., Lorenz-Spreen et al., 2021) would produce learning outcomes, with the game outperforming the guide. Because direct comparisons between gamified and non-gamified interventions that contain active reflection elements were lacking, we derived the expectation that our gamified intervention would outperform traditional learning formats from the broader literature (Clark et al., 2016; de Freitas, 2018). However, including active reflection in the form of implementation intentions (Gollwitzer, 1993) in the voter guide might blur the lines, making it a less typical example of a traditional format. In addition, incorporating narratives in the gamified intervention may shift attention away from the learning content and introduce alternative goals for players (Clark et al., 2016, p. 112). Even though we included only a few gamified elements, the gamified intervention differed from the voter guide in its narrative of being a campaign manager for a political party, which the voter guide lacked for reasons of ecological validity.

Second, at first glance, the gamified interventions' ability to immerse players and maintain their interest through rewards (Al-Azawi et al., 2016) might have diminished the seriousness of the topic and thus affected participants' attitudes toward DDC. Participants who played the game highlighting the risks of DDC for individuals and democracy were notably less skeptical than those who read the risk-framed voter guide about scenarios such as political parties gathering data and subsequently excluding specific voter groups based on demographics, behaviors, or interests. In addition, the perceived appropriateness of data collection and use, as well as "liking" these practices, were significantly higher in the risk-framed game condition than in the risk-framed, non-interactive voter-guide condition. These findings are consistent with a meta-analysis on narrative persuasion showing that narratives, a common feature of games, elicit less resistance than non-narratives (Ratcliff & Sun, 2020). This might explain the more positive evaluations in the game-based intervention compared to the information-only intervention. On closer inspection, however, skepticism levels were already quite high across all groups (M = 5.20, SD = 1.44), including the control condition. The gamified condition may have highlighted the positive aspects of DDC, thus lowering skepticism in these groups. In other words, participants may have been more inclined to acknowledge the benefits of receiving tailored content that keeps them informed about the topics that interest them most. While moderate skepticism is healthy for scrutinizing ads (Koslow, 2000), excessive skepticism can lead to undue distrust and ad avoidance (Baek & Morimoto, 2012). In data-driven political advertising, too much skepticism could therefore spill over to the source of the advertisement (e.g., the political party), similar to findings on misinformation, where disproportionate skepticism affects credibility perceptions of reliable news sources (van der Meer et al., 2023). Indeed, informing citizens about the implications of data-driven practices without inducing a sense of resignation is a delicate balancing act (Sander, 2020). Failing to strike this balance could result in privacy cynicism, that is, feelings of hopelessness and frustration about widespread data collection and use (Choi et al., 2018).

Third, we found no significant differences between risk- and benefit-framed content on any subcomponent of DCC. However, compared with the control group, both framings led to greater understanding and skills among participants. When format is taken into account, the risk-framed voter guide improved understanding and skills relative to the control group, whereas the benefit-framed guide did not. This is consistent with Ham's (2017, p. 652) finding that perceived risks drive behavioral change more than perceived benefits. Our study extends this finding by demonstrating that it holds for a non-gamified, information-only intervention in the form of a voter guide. The game, by contrast, may not have conveyed enough "risk" relative to the voter guide to have an effect. Given citizens' general aversion to online political advertising (Turow et al., 2012) and their relatively indifferent stance on individual data collection (Kruikemeier et al., 2020), positively framed information about DDC may have faced inherent challenges. In this study, "liking" scores were notably low, falling below the scale's midpoint across all conditions. Therefore, given the greater impact of negative information due to the negativity bias (Rozin & Royzman, 2001), the positive information participants received might not have carried enough weight to shift attitudes toward DDC. In essence, whether positive or negative information is presented, a negative overall perception of DDC persists.

Interestingly, significant differences in "liking" of DDC were observed only between the two risk-framed conditions (game risk, guide risk). Participants may have enjoyed playing the game, which could have colored their views. This interpretation is further supported by the fact that, despite these differences in expressed liking of data-driven strategies, participants' perception of the actual relevance of these strategies did not differ significantly across conditions. Alternatively, some individuals may approve of excluding specific groups from political information and may not regard this as a heightened risk facilitated by DDC. Future research could explore the role of political leanings, which we did not investigate.

Limitations and Future Research

While the current study makes several contributions, it also has limitations that point to areas for future research. First, participants in the game conditions adopted a first-person perspective, as is common in games, to learn about the data sources and uses available to political campaigns. In contrast, the guide did not convey a specific perspective to the reader, in line with the style of typical informational websites. These design choices prioritized ecological validity. Adopting a specific viewpoint might have influenced participants' evaluations of DDC techniques, but it is less likely to have affected their understanding of DDC or their acquisition of related skills. Future studies could systematically vary the perspectives participants adopt, at a more fine-grained level, to rule out this possibility.

Second, because of their length, both the game and the voter guide likely required considerable cognitive effort. While we cannot determine which condition was more mentally taxing, gamified formats may induce cognitive overload because they demand more attention and processing than non-interactive environments (Moreno & Mayer, 2007). At the same time, combining text with visuals enhances learning compared with text-only formats (Mayer, 2009). There were no group differences in need for cognition, indicating that participants across conditions enjoyed challenging tasks to a similar degree (Petty & Cacioppo, 1982). Nevertheless, because there is no "one-size-fits-all" solution for enhancing competencies (Kozyreva et al., 2020, p. 130), future research should explore which interventions different individuals find most appealing, potentially through qualitative interviews.

Third, we do not know how long the effects of our intervention last. Participants in the game conditions might retain the content better because they actively practiced data-collection strategies, whereas the guide condition focused on reflection tasks. However, lasting changes in understanding and behavior ideally require regular practice over a longer period (Bandura, 1977; Hertwig & Grüne-Yanoff, 2017). Future studies could examine how long the effects of educational interventions on conceptual understanding, evaluative perceptions, and skills persist.

Finally, to conceptualize DCC, this study aligns with the European Commission’s digital competences framework (EU Digital Education, 2020), comprising understanding, evaluations, and skills. However, our measures require further conceptual exploration and testing, especially as some skills may become outdated as technology evolves.

Despite these limitations, our study demonstrated that educational interventions can boost competence by helping citizens learn about, evaluate, and recognize data-driven political ads. Given that data shape many aspects of our online environments, similar educational interventions could be effective in other areas where digital competence is crucial for making informed decisions. Structural interventions by regulatory authorities and social media platforms, along with educational initiatives, are essential for supporting citizens in the digital age and maintaining a healthy democracy.


Acknowledgements

The authors would like to thank the Tactical Tech collective.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The project DATADRIVEN is financially supported by the NORFACE Joint Research Program on Democratic Governance in a Turbulent Age and co-funded by ESRC, FWF, NWO, and the European Commission through Horizon 2020 under grant agreement no. 822166. This research was funded in whole or in part by the Austrian Science Fund (FWF) 10.55776/I4818.