Abstract

Social media platforms are too often understood as monoliths with clear priorities. Instead, we analyze them as complex organizations torn between starkly different justifications of their missions. Focusing on the case of Meta, we inductively analyze the company’s public materials and identify three evaluative logics that shape the platform’s decisions: an engagement logic, a public debate logic, and a wellbeing logic. There are clear trade-offs between these logics, which often result in internal conflicts between teams and departments in charge of these different priorities. We examine recent examples showing how Meta rotates between logics in its decision-making, though the goal of engagement dominates in internal negotiations. We outline how this framework can be applied to other social media platforms such as TikTok, Reddit, and X. We discuss the ramifications of our findings for the study of online harms, exclusion, and extraction.

Introduction

As social media companies face increasing public scrutiny, it has become difficult to pinpoint how, exactly, they justify their priorities. Take the case of Meta and Facebook: “Facebook was built to bring people closer together,” proclaims a blog post from the former head of Facebook’s News Feed (Mosseri, 2018). “Meta Is Advancing Democracy,” announces another company blog post, despite evidence that its first goal, bringing friends closer together, can have harmful effects on democratic discourse (Potts, 2023). “Making Emotional Health a Priority,” explains a third public post (Meta, 2021b), against a backdrop of concerns about bullying, peer comparison, and racist and sexist harassment (Wells et al., 2021).

This revolving set of justifications reflects the muddled infrastructures that platforms create. As socio-technical systems, social media companies are subject to shifts in norms and decisions as developers, users, and policies co-evolve (Gillespie, 2018). Yet even as social media companies tweak their algorithms, change their content moderation policies, develop fact-checking partnerships, send mental-health–related notifications, and create transparency centers, their responses are criticized as piecemeal, incomplete, and inefficient (Ananny, 2018; Hao, 2021; Marwick, 2021; Napoli & Caplan, 2017; Sharp & Gerrard, 2022; Sobieraj, 2020). In this article, we suggest that these priority paradoxes and incomplete implementations come into focus if we analyze the struggles and conflicts that characterize how social media companies justify their mission. We understand social media companies as complex organizational entities with internal tensions, different priorities, and distinct teams and departments in charge of these goals.

Specifically, we ask: how do different systems of values compete and conflict at social media companies? We focus on the public material of Meta (which owns Facebook, Instagram, Threads, and WhatsApp) and inductively identify three distinct ways in which the company justifies its policies. First, we highlight the dominance of an engagement logic based on the optimization of users’ signals, which maximizes online advertising revenues and serves the platform’s broader commercial goals. Second, we identify a public debate logic, with an emphasis on verified information, rational-critical discourse, and pro-democratic outcomes. Third, we delineate a wellbeing logic centered on promoting and repairing users’ psychological and physical health. We then outline the applicability of this framework to other social media platforms.

These different justifications are often in direct conflict with one another in the daily internal operations of social media companies. Drawing on the documents made public by Frances Haugen in the 2021 Facebook leaks, we find that public debate and wellbeing typically remain subordinate to engagement during key decision points. We argue that the visible presence of the public debate and wellbeing logics is what enables social media top executives to publicly save face and continue doing “business as usual,” even when their products are consistently criticized because they amplify racialized, gendered, and other forms of injustice. We conclude by discussing the ramifications of these findings for the study of online inequality and extraction.

From Values to Competing Logics: Making Sense of Social Media Platforms

Broadly considered, social media platforms are a set of websites and applications characterized by users’ ability to create individual accounts, post content on their accounts, and share content with lists of contacts, both public and private (boyd & Ellison, 2007). While most social media companies have long described themselves as neutral actors, scholars have highlighted the political, economic, and cultural values embedded in their design and policies. Overall, however, social media companies are too often perceived as monoliths without internal complexity. Drawing on organizational sociology, we outline the benefits of examining the role of internal conflicts, fractures, and tensions in how social media companies justify their priorities.

Platforms and Their Values

Over the past 20 years, social media platforms have repeatedly sought to position themselves as neutral intermediaries. As Gillespie (2010) first pointed out, the metaphor of the platform itself downplays these companies’ role, presenting them as “just hosting” digital content and conveniently suggesting a “hands-off neutrality” (p. 358). Social media platforms insisted early on that they were not media companies, largely because media companies are obliged to police various types of speech and have historically experienced more intensive government oversight (Helft, 2008; Napoli & Caplan, 2017). To date, U.S. law has upheld this framing: Instead of grouping platforms with newspapers or broadcasters, legislators and courts have treated platforms as information conduits comparable to telecom companies and post offices. Under Section 230 of the Communications Decency Act, enacted as part of the 1996 Telecommunications Act, social media platforms are largely protected from legal liability for the third-party speech they host, curate, or distribute.

Against this statutory neutrality, scholars have pointed out that companies such as Meta and Google are in fact media companies (Napoli & Caplan, 2017). Platforms constantly tinker with the flow of online information: They determine “how profiles and interactions are structured; how social exchanges are preserved; how access is priced or paid for; and how information is organized” (Gillespie, 2018, p. 22). For instance, when Instagram allowed users to edit photos through filters, this directly shaped content-production strategies (Leaver et al., 2020). Humans and algorithms also fulfill editorial functions on social media platforms through content moderation (Klonick, 2018; Roberts, 2021).

What are the values underlying the interventions and policies of social media platforms? Scholars have examined this question by exploring how platform design and content moderation shape the experience of marginalized users (Benjamin, 2019; Brock, 2020; Bucher, 2021; Chander & Krishnamurthy, 2018; Noble, 2018; van Dijck et al., 2018; van Dijck & Poell, 2013). Brock (2020) notes how the alleged neutrality of platforms relies on the enactment of “color-blind” norms that position Whiteness as the default internet identity, while Noble (2018) highlights the racist and sexist bias of search engine algorithms. Scholars also find that platforms’ commitment to neutrality often amplifies the spread of misogynistic online content. For Lewis (2018), platforms’ “attempts at objectivity are being exploited by users” (p. 44). Massanari (2017) adds: “remaining ‘neutral’ in these cases valorizes the rights of the majority while often trampling over the rights of others” (p. 339). These studies remind us that when platforms seek to remain “neutral” and “objective” in contested political spheres, they are in fact making political choices.

To explain these choices and the values of social media platforms, scholars have mobilized two complementary arguments. First, they emphasize the lack of diversity within the platforms’ labor force. As Gillespie (2018) writes, “the full-time employees of most social media platforms are overwhelmingly white, overwhelmingly male, overwhelmingly educated, overwhelmingly liberal or libertarian, and overwhelmingly technological in skill and worldview” (p. 12). This sociodemographic base is often invoked to explain why platforms’ executives and engineers keep searching for “neutrality” and “objectivity,” even when the harms associated with adopting such a “view from above” or “god trick” are well documented (Haraway, 1988; Hill Collins, 2000). The background and values of executives and engineers, together with the project-based internal structure of most large technology companies, further thwart the efforts of “ethical entrepreneurs”—often women and people of color—seeking to change the status quo (Ali et al., 2023).

Second, scholars highlight the economic incentives of platforms to understand their policy and design choices. For Couldry and Mejias (2019a, 2019b), social media platforms perform “data colonialism” through their collection, mining, and selling of user data. In this view, Facebook is a “principal actor in data colonialism” because it is one of the “corporations involved in capturing everyday social acts and translating them into quantifiable data which is analyzed and used for the generation of profit” (Couldry & Mejias, 2019b, p. 340). Thus, social behaviors and interactions that previously existed outside of marketplaces are now perceived as “raw materials” appropriated to extract and create revenue by selling behavioral data to advertisers (Zuboff, 2019). This data mining business model prompts platforms to boost user engagement. As a result, social media companies actively draw users to the platform and keep them there through personalized algorithmic targeting. 1

From rewarding sexist content on algorithmic feeds to monetizing user data through behavioral targeting, social media platforms are always in the process of shaping user interactions in ways that reveal their underlying economic, political, and social values. To date, scholarship examining these values has primarily focused either on the macro, societal level (showing how social media platforms encode broad racist, misogynistic, and capitalistic values in their design) or on the micro, individual level (examining the negative individual experiences of marginalized users on social media). To explain these values, scholars have turned to two complementary arguments, one at the micro level (technology companies’ lack of diversity) and one at the macro level (the economic incentives of platforms).

Competing Logics

Our intervention is to look at the meso, organizational level and move beyond unitary analyses of social media platforms as monoliths driven by a single mission. Instead, we understand social media companies as complex entities with internal tensions and fractures; different priorities represented by various employees, projects, and departments; and distinct ways of justifying these goals. Specifically, we ask: how do different systems of values compete and conflict in how social media companies justify their priorities?

To examine these tensions, we turn to organizational sociology and the sociology of evaluation (Lamont, 2012). In organizational sociology, multiple studies have analyzed how different norms, rules, and values compete within existing fields and organizations. Scholars developed the concept of “institutional logics” to describe these broad systems of values (Friedland & Alford, 1991). Thornton and colleagues (2012) define institutional logics as the “socially constructed, historical patterns of material practices, assumptions, values, beliefs, and rules by which individuals produce and reproduce their material subsistence, organize time and space, and provide meaning to their social reality” (p. 51). Conflicts between logics, and the replacement of one logic by another, have been studied across sites. For instance, Thornton and Ocasio (1999) document how U.S. academic publishing switched in the 1970s from a primarily editorial orientation focused on prestige and personal imprints to a market logic focused on high profit margins and market share.

Yet institutional logics also have been criticized as deterministic and unitary. As Fligstein and McAdam (2012) noted, “The use of the term ‘institutional logics’ tends to imply way too much consensus in the field about what is going on and why and way too little concern over actors’ positions” (p. 11). A distinct approach emerged with the sociology of evaluation, which examines “how an entity attains a certain type of worth” (Lamont, 2012, p. 205). In this view, evaluative practices are always deeply contested processes. For instance, Boltanski and Thévenot (2006) delineate six broad “orders of worth”—inspired, domestic, civic, opinion, market, and industrial—that people use when they try to justify their understanding of a situation. They explicitly focus on the coexistence of different orders and the conflicts that emerge between them; they study controversies where people struggle over different understandings of the same context.

Drawing on the sociology of evaluation, scholars have examined the negotiations and evaluative practices through which people and groups struggle to define “what counts” (Beckert & Aspers, 2011; McPherson & Sauder, 2013). For instance, Stark (2009) offered the concept of “heterarchy” to describe organizations where different orders of worth coexist and where accountability is distributed. Similarly, Christin (2020) argues that workers and organizations are torn between distinct “modes of evaluation,” which she defines as “all the cognitive, discursive, and practical operations by which people categorize and hierarchize ideas, objects, and practices” (p. 71). In the web newsrooms she studied, journalists moved back and forth between editorial and click-based definitions of their work. Here, to analyze social media companies, we build on these studies of the internal contradictions, struggles, and negotiations that shape how organizations justify their missions.

Methods and Data

In this article, we focus on the “discursive work of justification” (Gibson et al., 2023) of social media platforms, with a specific emphasis on the public material produced by Meta, and to a lesser extent by TikTok, Reddit, and X (formerly known as Twitter).

Meta (previously Facebook) is a U.S. technology conglomerate that owns Facebook, Instagram, Threads, and WhatsApp, among other services. Facebook launched in 2004 and became accessible to anyone with an email address in 2006. The company filed for an Initial Public Offering (IPO) in 2012 and acquired Instagram the same year. As of 2024, Facebook is the most used social media platform worldwide, with 3 billion monthly active users (Dixon, 2024).

Meta has been at the center of many controversies: Edward Snowden’s 2013 revelations about the PRISM program, the backlash against the “emotional contagion” experiment in 2014, the contested role of Facebook during the 2016 presidential campaign, the Cambridge Analytica scandal in 2018, CEO Mark Zuckerberg’s testimony to Congress in 2018 (and again in 2024), and the revelations of whistleblower Frances Haugen about internal dynamics at Facebook and Instagram in 2021 (Silverman, 2016; Wells et al., 2021). The company responded to these scandals by adjusting its algorithms and content-moderation guidelines. In the process, its top executives often explained and justified their decisions, providing valuable public material within a broader context of corporate secrecy.

Meta is undoubtedly the most studied social media platform (Bucher, 2021; Frenkel & Kang, 2021; Frier, 2020; Vaidhyanathan, 2018), probably due to its age, size, and controversial role. This sizable coverage, together with the controversies surrounding Meta, makes it a relevant case to see how the evaluative logics framework helps to make sense of the company’s justifications over time. Thus, we analyzed all of Meta’s publicly available materials from 2010 to the present about its policies, algorithms, experiments, and content moderation guidelines, together with the existing literature on the platform. We combed through Meta’s blog posts, high-profile as well as less-visible media interviews with employees, the company’s 2012 IPO Securities and Exchange Commission (SEC) registration statement, technical research papers published by scientists working for Meta or with Meta’s data, internal research leaked to the media, and secondary analyses.

Building on Bucher’s analysis of Facebook’s “tensions and transitions” (Bucher, 2021, pp. 2–32) and related work on the multiple metaphors inherent to platforms’ slippery public presentation (Gibson et al., 2023; Hoffmann et al., 2018; Seaver, 2017), we paid close attention to the contrasts, changes, and switches in the discourses and justifications offered by Meta’s executives and employees. We also examined the negotiations and tensions that publicly emerged between different teams, departments, and top executives.

Despite its size and impact, Meta is only one of the many social media companies operating in contemporary societies—and it may well be idiosyncratic (Bucher, 2021). Thus, we turned to other social media platforms to find out if similar repertoires and tensions emerged. We reviewed the public material and executive statements provided by TikTok, Reddit, and X (formerly known as Twitter), repeating the process we had developed to analyze Meta.

Mapping the Competing Logics of Social Media Platforms: Meta and Beyond

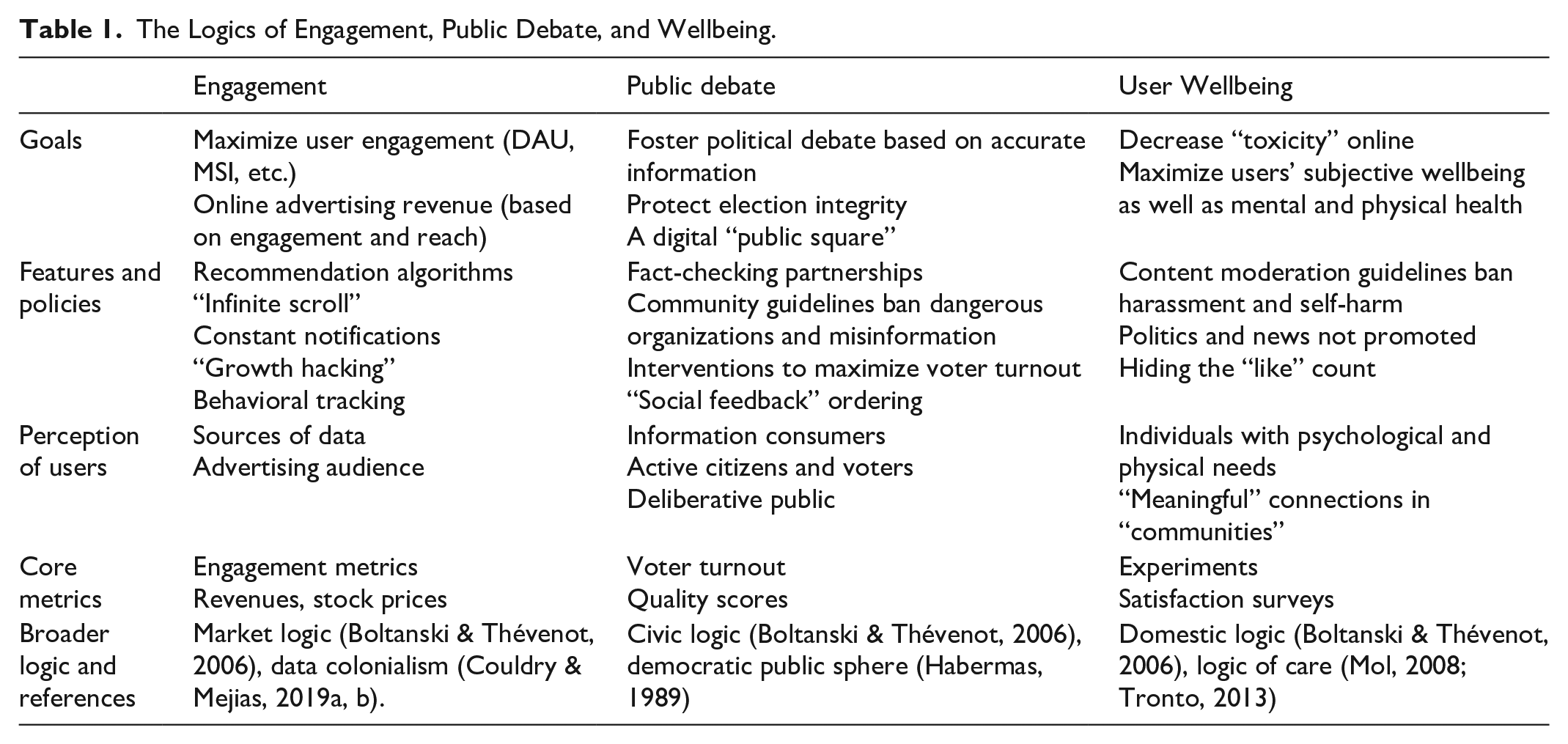

Based on this two-staged examination, we identified three public definitions of social media companies’ mission: a definition based on engagement, a definition based on public debate, and a definition based on user wellbeing (see Table 1). In the rest of this article, we call these distinct priorities “logics” that offer somewhat consistent blueprints for evaluation, action, and justification. Yet we also acknowledge that the use of the term “logic” tends to reify dynamics that are always contentious, multiple, and temporary. Our overview of these logics is ideal-typical, in the sense that these are simplified constructs offered for the purpose of analytical clarity; the reality of their implementation is messier. We first outline what these distinct logics look like in the case of Meta, before briefly turning to TikTok, Reddit, and X.

The Logics of Engagement, Public Debate, and Wellbeing.

Engagement

The first priority emerging from Meta’s public material is all about user engagement. When presenting itself to potential advertisers, the company’s commercial page states: “Your customers are here. Find them with Meta Ads.” The page further offers to “drive engagement” and “optimize for link clicks” by inviting brands to “show ads to people likely to be interested in your business and get more messages, video views or post engagement” (Meta, 2024).

This rhetoric of engagement is Meta’s financial backbone. As early as 2012, in the company’s IPO SEC filing document, an entire section entitled “How We Create Value for Advertisers and Marketers” (p. 75) emphasized the centrality of user engagement as a key value proposition (Ebersman, 2012). After noting that “Facebook offers the ability to reach a vast consumer audience of over 800 million Monthly Active Users (MAUs) with a single advertising purchase” (p. 75), the report turned to the unique strength of the platform, namely its system of “social ads,” where brands could opt to display their ads with “social context” (additional text showing which friends have “liked” or otherwise interacted with the brand). Facebook’s SEC statement repeatedly highlighted the role of engagement among “fans” (a term frequently used in the statement) in terms of advertising reach:

We believe that the shift to a more social web creates new opportunities for businesses to engage with interested customers. Most of our ad products offer new and innovative ways for our advertisers to interact with our users, such as ads that include polls, encourage comments, and invite users to an event [. . .]. We believe that Page owners can use Facebook ads and sponsored stories to increase awareness of and engagement with their Pages. (Ebersman, 2012, p. 77, emphasis added)

Over the years, Meta developed a range of metrics to measure user engagement. These include the number of MAUs and Daily Active Users (DAUs), Meaningful Social Interactions (MSI), and more granular metrics. To optimize these engagement metrics, Facebook has implemented a range of technical features. A significant innovation in this logic is the adoption of the “infinite scroll,” a design dating from 2006 in which the feed never ends and users can keep browsing indefinitely. Aza Raskin, a Silicon Valley designer widely credited with the invention of the infinite scroll, explained in 2018 (Andersson, 2018; see also Cohen, 2021):

It’s as if they [Facebook and other social media platforms] are taking behavioral cocaine and just sprinkling it all over your interface and that’s the thing that keeps you like coming back and back and back [. . .]. Behind every screen on your phone, there are generally like literally a thousand engineers that have worked on this thing to try to make it maximally addicting [. . .]. If you don’t give your brain time to catch up with your impulses, you just keep scrolling.

Meta also adopted other strategies to increase engagement. For instance, since its early days, Facebook has been hiring “growth hackers”: employees tasked with designing and testing approaches to build a larger user-base, ultimately growing overall engagement (Fidelman, 2013). The company also developed a range of technical nudges, including recommendation algorithms that primarily draw on users’ previous engagement signals (Meta, 2019); notifications to bring users back to the platform when there is activity on their posts (Meta, 2023); and the widespread deployment of “likes” despite internal research that found such “likes” made it hard for users to log off.

In official comments, Facebook denied ever seeking to make the platform addictive to users. In 2018, a representative stated that the platform was designed to “bring people closer to their friends, family, and the things they care about [. . .]. At no stage does wanting something to be addictive factor into that process” (Andersson, 2018). Yet former employees regularly contradicted this statement. For example, in 2017, former Facebook president Sean Parker stated that Facebook would send “a little dopamine hit every once in a while [. . .] in the forms of ‘likes’ and comments. The goal was to keep users glued to the hive, chasing those hits while leaving a stream of raw materials in their wake” (cited in Zuboff, 2019, p. 451). Quotes by executives further reveal a wide acceptance of the priority of engagement at Meta (Rhodes & Orlowski, 2020).

This logic of engagement is obviously predicated on Meta’s reliance on online advertising, but also on a broader quest to maximize shareholder and investor value. Not only do platforms have to retain engaged users for advertising purposes, they also need growing metrics to attract funding. As Raskin, the infinite scroll designer, put it (Andersson, 2018): “To get the next round of funding, to get your stock price up, the amount of time that people spend on your app has to go up [. . .]. So, when you put that much pressure on that one number, you’re going to start trying to invent new ways of getting people to stay hooked.”

Returning to the sociology of evaluation, this focus on engagement echoes what Boltanski and Thévenot define as a broader market logic, organized around values of investor capitalism, efficient transactions, and share prices (Boltanski & Thévenot, 2006; Thornton et al., 2012). But it also differs from this traditional commercial ideology in its emphasis on user participation, metrics, and data, which resonates with Couldry and Mejias’ (2019a, 2019b) analysis of “data colonialism”: This monetization of “the social” is the business model that social media platforms have successfully enabled and commercialized over the past 20 years, with Meta leading the pack (see also Turow, 2011; Zuboff, 2019).

Perhaps unsurprisingly, this engagement-driven language is not highly publicized on Meta’s public-facing webpages: It is primarily directed at potential investors, brands, and customers, and otherwise surfaces mainly in criticism from former employees. The next two logics, in contrast, are front and center in Meta’s public presentation of itself.

Public Debate

The second priority found in Meta’s public material centers on public debate—how to improve its quality online and its connection to the democratic process. In Facebook’s early years, Zuckerberg frequently mentioned the importance of “free expression” on and through the platform (Bucher, 2021, p. 162). In recent years, executives have switched the emphasis to the term “public debate”—perhaps to avoid some of the connotations of free speech absolutism. For instance, in a blog post, Meta’s President of Global Affairs Nick Clegg wrote about the company’s responsibility to protect “public debate” and explicitly linked the exchanges taking place on the platforms (“messy” but “positive”) to people’s democratic attitudes (Clegg, 2023).

Across Meta’s public material, three points emerge. First, Meta repeatedly anchored its discussion of online public debate to the question of misinformation and disinformation. Following the 2016 U.S. election cycle (Silverman, 2016), Meta ramped up its partnerships with non-partisan organizations such as Snopes and the International Fact-Checking Network to identify misinformation on Facebook and Instagram (Meta, 2022a, 2023b). Meta also sought to ban other types of problematic content, including violent content and hate speech; inauthentic content (manipulated by political actors); content produced by state-controlled media in non-democratic countries; and content interfering with democratic election processes (Facebook, 2023).

Second, Meta embraced a paternalistic responsibility to “protect” the integrity of the democratic electoral process, establishing a direct connection between information seeking on their platforms and people’s political behavior. Many programs were developed for the 2020 U.S. presidential election. For instance, in 2019, Meta executives issued the following statement: “We have a responsibility to stop abuse and election interference on our platform. That’s why we’ve made significant investments since 2016 to better identify new threats, close vulnerabilities and reduce the spread of viral misinformation and fake accounts” (Rosen, 2019). Meta also developed interventions to nudge users to vote through its “Voting Information Center” on Facebook and Instagram, which provided detailed information about how to vote and showed posts from verified election officials. Banners displayed countdowns to election day, options to share the countdown with friends, reminders about voting procedures, and calls to sign up as a poll worker in one’s state (Gleit et al., 2020). In an op-ed, Zuckerberg (2020) reiterated the responsibilities of the company:

People want accountability, and in a democracy, the ultimate way we do that is through voting. With so much of our discourse taking place online, I believe platforms like Facebook can play a positive role in this election by helping Americans use their voice where it matters most—by voting. We’re announcing on Wednesday the largest voting information campaign in American history. Our goal is to help 4 million people register to vote. As we take on this effort, I want to outline our civic responsibilities.

Of course, Zuckerberg’s statement about the “positive role” of Facebook on democracy should be taken with a grain of salt. For instance, many experts have criticized the efficacy of Meta’s fact-checking initiatives (Ananny, 2018) and the problematic impact of Meta’s policies in non-democratic countries (Amnesty International, 2022).

More broadly, Meta tackled the question of the “quality” of public debate on its platforms. This comes through in several publications about “discussion quality” in the “digital public square” (Facebook, 2017). For instance, a 2017 study using Meta data analyzed how the ordering of comments on a Facebook post may affect the “quality” of comments—defined as “in-depth, interesting, engaging statement or question that is worth reading, and adds to the comment conversation in an exceptional or noteworthy way” (Berry & Taylor, 2017, p. 1373).

Across these interventions, online users are not described in terms of their spending power or time engaged on the platform, but rather as citizens and voters who seek information online and whose exchanges, attitudes, and behaviors need to be protected for the democratic polity to flourish. Interestingly, on paper at least, these statements seem to be in line with the role that van Dijck et al. (2018) envision for Western European governments, rather than supporting the image of platforms as neoliberal or libertarian organizations (Couldry & Mejias, 2019a, 2019b; Zuboff, 2019). Meta executives emphasize their state-like responsibilities, adopting a protective tone to monitor the political issues that their own platforms contributed to.

Meta’s emphasis on the importance of public debate in turn echoes what Boltanski and Thévenot (2006) analyze as the civic order of worth, as well as earlier theories of the public sphere (Habermas, 1989), where private people come together to discuss public concerns. In Habermas’ view, the public sphere that emerged in eighteenth-century Europe was characterized by “rational-critical” discourse supposedly decoupled from private interests (see Fraser, 1990, for a feminist critique). Here, Meta executives praise the role of social media, admittedly owned and operated on an aggressive for-profit basis, as a continuation of the coffee houses that Habermas romanticized.

User Wellbeing

Instead of economic performance or democracy writ large, the third priority turns to the individual as it focuses on fostering and supporting user “wellbeing.” If Meta’s public material on democratic debate adopted a paternalistic style, its statements on wellbeing take on a decidedly more caring and maternal tone. In this vein, Meta executives regularly claim to “protect” their “community” and decrease the “toxic” aspects of social media to ensure the “health” and “happiness” of the platform’s users, all terms laden with multiple and often fuzzy meanings (Gibson et al., 2023).

In his 2012 founder’s letter to prospective investors, included in the company’s SEC registration statement, Zuckerberg emphasized the “social mission” of Facebook: “We hope to strengthen how people relate to each other. Even if our mission sounds big, it starts small—with the relationship between two people [. . .]. Relationships are how we discover new ideas, understand our world and ultimately derive long-term happiness” (Ebersman, 2012, p. 67). In this statement, Zuckerberg equates digital connections with strong relationships, happiness, discovery, and openness, all made possible through the platform. This vision determined the labeling of contacts as “friends” on Facebook, a label that has no technical basis, as Bucher (2021, p. 102) reminds us.

It also shaped pronouncements by Facebook executives over the years. For instance, in 2018, Mosseri, then Head of News Feed, described Facebook as a tool to “bring people closer together and build relationships.” He was echoing a public post in which Zuckerberg stated that Facebook had been built to “put friends and family at the core of the experience” to “improve our wellbeing and happiness” (Mosseri, 2018). As of 2024, the focus on community wellbeing is still central to the company, as exemplified in the opening sentence of Meta’s Community Standards: “Every day, people use Facebook to share their experiences, connect with friends and family, and build communities” (Meta, n.d.).

Over time, however, the equation of digital connections, community, and happiness became harder to take for granted (Nowland et al., 2018; Sheldon et al., 2011). Users voiced their dissatisfaction with the superficiality of many online “friendships,” reporting negative emotions when seeing their friends’ posts (Aalbers et al., 2019; Shaw et al., 2015). They criticized the oppressive social and beauty standards promoted on social media (Appel et al., 2016). They reported “wasting time” and being “bored” (Gelles-Watnick, 2022). Many of these reactions came up in surveys about Facebook: As early as 2010, user satisfaction was described as “abysmal” (Reuters, 2010).

Facebook ran multiple studies to understand why people felt bad when using the platform. Researchers analyzed the patterns of “emotional contagion” and “social comparison” on Facebook (Kramer et al., 2014); how users defined “meaningful interactions” (Litt et al., 2020); how negative beliefs about Facebook affected users’ perception of time spent on the platform (Ernala et al., 2022); and why users saw Facebook as “problematic” (Cheng et al., 2019). Zuckerberg referred to this body of research in 2018:

Recently we’ve gotten feedback from our community that public content—posts from businesses, brands and media—is crowding out the personal moments that lead us to connect more with each other. [. . .] We feel a responsibility to make sure our services aren’t just fun to use, but also good for people’s wellbeing. [. . .] The research shows that when we use social media to connect with people we care about, it can be good for our wellbeing. We can feel more connected and less lonely, and that correlates with long term measures of happiness and health. (Zuckerberg, 2018)

To address these issues, Zuckerberg announced a change to the News Feed algorithm to prioritize posts by “friends, family, and groups” over content posted by businesses and news organizations. The change was meant to improve the “wellbeing” of the Facebook “community.” In addition to redesigning the News Feed, Meta soon rolled out several high-profile features on Instagram. These included a “You’re All Caught Up” message in the Instagram Feed (thus putting an end to infinite scrolling; Instagram, 2018), the option to hide the public count of “likes” (Instagram, 2021; Meta, 2021a), and the option to set up reminders to “Take a Break.”

Not all of these interventions, however, measurably improved users’ psychological or physical outcomes. For instance, “Project Daisy,” the pilot program that tested the effects of hiding the public count of “likes” on Instagram, did not make teenagers’ experiences of the platform more positive. Yet Facebook rolled out the change to all users anyway, in part to demonstrate publicly that it took user wellbeing seriously. In an internal discussion leaked to the media in 2021, Meta executives wrote: “A Daisy launch would be received by press and parents as a strong positive indication that Instagram cares about its users” (Wells et al., 2021).

Meta’s public emphasis on user wellbeing endures. In 2023, Mosseri, head of Instagram and Threads (a platform designed to compete with X/Twitter), announced that politics and news would not be algorithmically promoted on the new platform. Drawing again on a well-established trope, he invoked the “amazing communities” of users, along with the psychological toll and “negativity” associated with political content, to justify the decision:

Politics and hard news are inevitably going to show up on Threads—they have on Instagram as well to some extent—but we’re not going to do anything to encourage those verticals. [. . .] Politics and hard news are important, I don’t want to imply otherwise. But my take is, from a platform’s perspective, any incremental engagement or revenue they might drive is not at all worth the scrutiny, negativity (let’s be honest), or integrity risks that come along with them. There are more than enough amazing communities—sports, music, fashion, beauty, entertainment, etc.—to make a vibrant platform without needing to get into politics or hard news (Mosseri, 2023).

Throughout these statements, Meta executives adopted what Boltanski and Thévenot call a domestic order of worth, likening social media users to dependents with a range of emotional and physical needs to be addressed and cared for (Boltanski & Thévenot, 2006; Bucher, 2021, p. 210). Taking a step back, this logic of wellbeing bears some similarities with what feminist theorists have analyzed as a “logic of care” (Mol, 2008; Tronto, 2013), in the sense that Meta articulates a responsibility for nurturing, sustaining, and repairing the relationships and wellbeing of a community of participants. Of course, these statements appear somewhat incongruous given the psychological toll that women and minority groups experience on Facebook and Instagram (Marwick, 2021; Sharp & Gerrard, 2022; Sobieraj, 2020). And indeed, Meta’s efforts toward community wellbeing repeatedly fall short of their stated goals. As we saw with Project Daisy, the public perception of Facebook as “caring” often seemed more important than the actual efficacy of its interventions.

Beyond Meta: Logics Across Platforms

So far, our analysis has focused on the case of Meta: We showed how the logics of engagement, public debate, and user wellbeing inductively emerged from Meta executives’ public material, statements, and interviews. To strengthen and expand our argument, we reviewed the public material and executive statements produced by TikTok, Reddit, and X (formerly known as Twitter). We find evidence suggesting that similar discourses operate at these distinct platforms.

In TikTok’s public material, the focus on engagement manifests itself primarily through repeated mentions of “discovery,” “creativity,” and “dialogue,” along with explicit references to how “fun” the platform is. For instance, the “TikTok for Business” page invites brands to “supercharge [their] TikTok strategy with an Always Engaged approach” (TikTok, n.d., emphasis added), stating that “Engagement on TikTok looks different. Here, it’s beyond just likes and shares—it’s this two-way dialogue between brands and audiences that allows brands to grow on TikTok. TikTok’s unique ecosystem of entertainment [. . .] is built on endless discovery, diverse community, and an inclusive culture” (TikTok, n.d.). TikTok also states that it takes community wellbeing seriously: “We care deeply about the well-being of our community members and want to be a source of happiness, enrichment, and belonging” (TikTok, 2023b), adding that “we do not allow content that may put young people at risk of exploitation, or psychological, physical, or developmental harm” (TikTok, 2023c). The public debate angle is less visible, which might be due to TikTok’s ownership structure (its parent company, ByteDance, is a Chinese internet company that also operates Douyin and Toutiao in China). Yet it comes through when the company states that “In a global community, it is natural for people to have different opinions, but we seek to operate on a shared set of facts and reality” (TikTok, 2023a). In its statement on election integrity, TikTok further discusses the importance of “the informed exchange of civic ideas in a way that fosters productive dialogue” (TikTok, 2023a), which clearly echoes the civic order of worth and the Habermasian public sphere framework.

On Reddit, we find a similar emphasis on engagement, public debate, and wellbeing, but with interesting nuances. For instance, Reddit’s early public material often blends engagement and public debate discourse. Take this 2012 description of the platform on its Frequently Asked Questions (FAQ) page (cited in Gilbert, 2013):

Reddit is a source for what’s new and popular on the web. Users like you provide all of the content and decide, through voting, what’s good and what’s junk. Links that receive community approval bubble up towards #1, so the front page is constantly in motion and (hopefully) filled with fresh, interesting links.

Reddit’s “upvote” system was designed to help users identify and make visible good ideas and discussions, yet it has also been found to boost extreme and far-right content, as well as manosphere subreddits (Gaudette et al., 2021; Krendel, 2023). As of 2023, the focus on engagement is clear on the company’s business pages, which emphasize Reddit’s “performance analytics” and its dashboards to “keep a pulse on overall organic engagement” for brands and businesses (Reddit, 2024). The public debate logic remains evident in Reddit’s goal of empowering its users to have diverse conversations while preserving disagreement. For instance, one of the platform’s stated values is to “Keep Reddit Real”: “We don’t understand or agree with everything on Reddit (we’re a vast and diverse group of people, too), and we don’t try to conform Reddit to what we or other people think it should be. We do, though, try to create a space that is as real, complex, and wonderful as the world itself” (spez, 2022). Reddit’s take on community wellbeing echoes the language of its competitors: The company’s public material states that its “mission is to bring community, belonging, and empowerment to everyone in the world, and we do that by keeping Reddit safe, healthy, and real” (Reddit, 2023). Yet the company also focuses explicitly on authenticity and vulnerability as key values on the platform. As Steve Huffman, Reddit’s co-founder and CEO, notes: “More than any other place on the internet, Reddit is a home for authentic conversation [. . .]. There’s a lot of stuff on the site that you’d only ever say in therapy, or A.A., or never at all” (Isaac, 2023).

Finally, X (formerly Twitter) also features the engagement, wellbeing, and public debate priorities. Since its acquisition by Elon Musk, X has become a fervent defender of a specific version of the public debate discourse—one that centers on free speech absolutism under the tagline of promoting “the exchange of information” (X, 2024b). For instance, a recent company blog post invited users to “Stand with X to protect free speech,” complaining about recent “attacks from activist groups [. . .] who seek to undermine freedom of expression on our platform” (X, 2024d; X Safety, 2023). Interestingly, X explicitly outlines the tension and trade-offs between their free speech values and their commercial bottom line, stating that “X will protect the public’s right to free expression. We will not allow agenda-driven activists, or even our own profits, to deter our vision.” At the same time, the company seeks to reassure potential advertisers about the centrality of engagement as a goal and set of metrics guiding X’s strategy (X, 2024c). Perhaps unsurprisingly, discourse on wellbeing is currently less visible on the platform’s public pages—but it still exists. For instance, X’s “Abuse and Harassment” statement explains that: “We recognize that if anyone, regardless of background, experiences harassment on X, it can jeopardize their ability to express themselves and cause harm [. . .]. We prohibit behavior and content that harasses, shames, or degrades others. In addition to posing risks to people’s safety, these types of behavior may also lead to physical and emotional hardship for those affected” (X, 2024a). Here the language of harm and health clearly echoes the ideals of the wellbeing logic—even though the reality of how these policies are implemented might differ significantly from their public presentation.

Together, these elements indicate the presence of the logics and repertoires of engagement, wellbeing, and public debate at TikTok, Reddit, and X. This overview in turn surfaces interesting differences. For instance, X under Musk’s ownership seems to prioritize public debate more than any other platform, while TikTok is more vocal about how much it cares about people’s wellbeing.

Discussion

How can we make sense of the revolving priorities of social media platforms and their muddled implementations? In this article, we adopted a meso-level approach and analyzed the different evaluative logics emerging from social media companies’ public material. Focusing on the case of Meta, we inductively identified three distinct priorities: engagement, public debate, and user wellbeing. We outlined the discourses and policies sustaining these different goals and examined how they surfaced at other social media platforms. In this section, we turn to the struggles and tensions that emerge in the daily operations of social media companies, and what this means for the study of online inequality and extraction.

Internal Fractures

Until now, we have examined the logics of engagement, public debate, and wellbeing as if they operated in separate worlds. Of course, this does not reflect the day-to-day operations of social media companies, which are characterized by ongoing negotiations, conflicts, and trade-offs between people and teams working on projects aligned with these different goals. Because of the secrecy and non-disclosure agreements that social media companies put in place, we know little about these internal struggles. Yet publicly available material suggests that, most of the time, engagement comes out on top in these negotiations.

Consider the disconnect between the following three events. First vignette: Zuckerberg, commenting on the 2018 Facebook News Feed algorithm change to promote content by friends and family instead of public pages, explained:

Now, I want to be clear: by making these changes, I expect the time people spend on Facebook and some measures of engagement will go down. But I also expect the time you do spend on Facebook will be more valuable. And if we do the right thing, I believe that will be good for our community and our business over the long term too (Zuckerberg, 2018).

Zuckerberg clearly acknowledged the tension between what we analyze as the logics of engagement (which he refers to as time spent on Facebook and other “measures of engagement”) and user wellbeing. He framed this tension in distinct temporal terms, presenting a short-term setback in engagement and profitability as an investment in the platform’s long-term success. If we took this statement at face value, we would expect Meta executives to consistently prioritize user wellbeing over other goals.

Second vignette: fast forward to late 2020. Employees inside Facebook realized that their users viewed many of the most viral posts on the platform as “bad for the world.” In response, the employees built a machine learning classifier to downrank such posts, only to have the effort shelved by executives because it reduced engagement metrics. A post published on Facebook’s internal network, leaked to the New York Times, explained: “The results were good except that it led to a decrease in sessions [the number of times users opened Facebook], which motivated us to try a different approach” (Roose et al., 2020). The message was clear: prioritize wellbeing and public debate goals only insofar as they do not meaningfully cut into engagement.

Third vignette: the 2021 Facebook documents leaked by Frances Haugen revealed multiple cases where the initiatives and recommendations of Facebook’s Civic Integrity Team were canceled or overruled by Zuckerberg and other top executives, largely because the proposed interventions decreased engagement metrics. Chief among these was the ubiquitous “MSI” (“meaningful social interactions”), a metric that appears on almost every page of the Haugen files and that served as a North Star indicator for most teams. As one document states about a proposed intervention to protect public discourse, “Mark [Zuckerberg] doesn’t think we could go broad. [. . .] We wouldn’t launch if there was a material trade-off with MSI.” (Brandom et al., 2021; Dwoskin et al., 2021).

The gap between Zuckerberg’s public declarations, which are almost always about Meta’s moral guidance and ethical responsibilities, and the reality of how internal decisions are made is jarring. Facebook’s Civic Integrity Team was clearly tasked with improving and protecting public debate on the platform, while Instagram’s Wellbeing team saw its mission as protecting vulnerable users. Yet in both cases, top management blocked or stymied these teams’ initiatives because they threatened engagement metrics.

These high-level cases give a small preview of the kinds of internal conflicts and negotiations that take place within Meta and other social media companies. They provide a much-needed counterpoint to the companies’ public declarations about their commitment to protecting democratic integrity and user wellbeing. Based on these examples, it seems that the goals of public debate and wellbeing sit uneasily alongside the surveillance and algorithmic apparatus that social media platforms have built over the past decades to boost user engagement and sell advertising placements.

Gendered, Racialized, and Extractive Decoupling

Given this dominance of engagement as a North Star goal, why do social media companies even bother hiring the internal teams and putting together the public pages dedicated to improving public debate and user wellbeing?

Our findings evoke what organizational scholars call “decoupling” or “loose coupling,” a process that describes how organizations develop rituals and ceremonial formal structures to gain legitimacy in response to external pressures from civil society and regulators (DiMaggio & Powell, 1983; Friedland & Alford, 1991; Meyer & Rowan, 1977). Because much of the external pressure coming from the institutionalized environment is rife with contradictions and ambiguities, organizations tend to “decouple” their public presentation from their internal processes. Decoupling also allows for-profit companies to protect their economic bottom line while maintaining legitimacy. According to neo-institutionalist scholarship, decoupling is more likely in fields and industries where there is some uncertainty about what counts as organizational success (Meyer & Rowan, 1977, p. 354).

In this view, the public debate and wellbeing discourses can be analyzed as “ceremonial props” or smokescreens through which social media companies maintain their public standing and reputation, while their actual guiding logic—engagement—goes unchecked, fostering misinformation and disinformation, hate speech, harassment, and bullying that target vulnerable users. For instance, the public debate priority gained ground after the 2016 U.S. presidential election, during which Facebook was widely criticized for enabling viral misinformation and foreign interference. The wellbeing discourse emerged more gradually in the second half of the 2010s, yet it also maps onto internal company awareness about the growing dissatisfaction of users—especially teenagers—interacting with Facebook and Instagram. Thus, both the public debate and wellbeing discourses can be analyzed as reactive, in the sense that Meta executives sought to anticipate and respond to backlash about their negative impact on democracy and users’ mental health.

One could further argue that the visible presence of public debate and wellbeing discourses and teams is what enables social media companies to save face, receive funding, and continue doing “business as usual,” even when their products have been shown to harm and delegitimate users, reproducing and amplifying racial and gender discrimination (Benjamin, 2019; Noble, 2018; Sobieraj, 2020). This in turn is exactly what Ray (2019, p. 42) analyzes as “racialized decoupling,” in which organizations “decouple formal commitments to equity, access, and inclusion from policies and practices that reinforce, or at least do not challenge, existing racial hierarchies.”

It might also serve what Couldry and Mejias (2019b, p. 341) describe as “the purpose of ‘social’ media platforms [. . .] to encourage ever more of our activities and inner thoughts to occur on platforms.” The social interactions related to user wellbeing and public debate that occur on platforms constitute a new type of raw material. In this view, wellbeing and public debate content could be part of “the audacious yet largely disguised corporate attempt to incorporate all of life [. . .] into an expanded process for the generation of surplus value” (p. 343, original emphasis).

At the same time, the mere presence of these alternative goals of public debate and community wellbeing may alter internal dynamics within social media companies. As we argued, technology companies are not monoliths: They comprise multiple layers, teams, and categories of actors with different, and often competing, intentions and values (Ali et al., 2023; Bucher, 2021). The existence of goals and discourses legitimating the protection of public debate and user wellbeing within social media companies itself creates frictions and openings. Managers tasked with leading the public debate and wellbeing initiatives often hired employees with distinct qualifications, sociodemographic backgrounds, and political convictions. While this is not in itself a guarantee of social change, it may, under the right conditions, transform how social media companies address the harms they create.

Conclusion

In recent years, social media platforms have voiced paradoxical priorities and executed half measures in response to issues of polarization, misinformation, abuse, harassment, and mental health. To make sense of these inconsistencies, we argue that social media workers and departments are torn between competing evaluative logics and fractured discourses about the company’s mission. Focusing on Meta’s public material, we identify three key priorities developed by the company to describe and justify its responsibilities: engagement, public debate, and user wellbeing. We find that these distinct evaluative logics are present at other social media platforms—TikTok, Reddit, and X—and that they are often in conflict. Given the centrality of commercial incentives for social media platforms, we note that the public debate and wellbeing logics remain largely subordinate to engagement goals in the companies’ day-to-day functioning. This hierarchy contradicts Meta’s public declarations but is understood by employees, observers, and regulators, as evidenced by frequent backlashes against the company’s inconsistent, decoupled, and contradictory policies. We discuss how this decoupling between the official discourses of social media companies and what we know of their internal decision-making process allows them to continue doing “business as usual” in the face of massive public critique.

Our analysis also raises additional questions. First, we still know little about the day-to-day functioning of social media companies, largely because of their intense corporate secrecy. We need more in-depth studies of how they make mundane decisions about their products, policies, and personnel. Concretely, this means that scholars need to be given access to internal company processes, not just to deidentified social media data. Second, we need to pay closer attention to how questions of racial and gender justice intersect with social media companies’ stated priorities of improving user wellbeing and the quality of public debate, both of which are, in principle, meant to improve equity and care across social media platforms but may fail to accomplish these goals. Third, we might consider what social media platforms would look like if they were designed at the outset not to optimize for engagement, but instead to meet the ideals of public debate and user wellbeing. In the meantime, our analysis of social media companies’ public discourses surfaces the complex set of revolving priorities that they claim to uphold, leaving us with the messy work of understanding how these values compete and conflict.

Acknowledgements

This article would not have been possible without the discussions and feedback of the Social Media AI group, including Tatsunori Hashimoto, Michelle Lam, Nathaniel Persily, Tiziano Piccardi, Martin Saveski, Johan Ugander, and Samidh Chakrabarti. We also thank David Stark for his feedback, as well as Rachel Bergmann and Tyler Leeds for fantastic research assistance.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: a Hoffman-Yee Research Grant from the Stanford Institute for Human-Centered Artificial Intelligence (HAI).