Abstract

Dark patterns are user interface (UI) strategies deliberately designed to influence users to perform actions or make choices that benefit online service providers. This mixed methods study examines dark patterns employed by social networking sites (SNSs) with the intent to deter users from disabling accounts. We recorded our attempts to disable experimental accounts in 25 SNSs drawn from Alexa’s 2020 Top Sites list. As a result of our systematic content analysis of the recordings, we identified major types of dark patterns (Complete Obstruction, Temporary Obstruction, Obfuscation, Inducements to Reconsider, and Consequences) and unified them into a conceptual model, based on the differences and similarities within nuanced subtypes in the user account disabling context. The Dark Pattern Typology presented at the 12th International Conference on Social Media and Society is further illustrated in this work. We document the distribution of the subtypes in our sample SNSs, exemplifying dark UI design choices. All of the sites used at least one type of dark pattern. Our findings provide empirical evidence for these pervasive—yet rarely discussed—strategies in the social media industry. Users who wish to discontinue using SNSs—to protect their privacy, break an addiction, and/or improve their general well-being—may find it difficult or nearly impossible to do so. Dark patterns, as common UI design strategies, require further research to determine whether particularly manipulative and user-disempowering varieties may warrant more stringent social media industry regulation.

Introduction

Over the past 13 years, several movements have urged people to quit social media. Privacy concerns prompted critics to declare 31 May 2010 “Quit Facebook Day” (boyd & Hargittai, 2010). After Facebook’s emotional contagion experiments were publicly revealed in 2014, the 99 Days of Freedom project challenged users to avoid using the site and any Facebook-linked product or service for 99 days (Baumer et al., 2015). Then, in 2018, the Cambridge Analytica scandal incited a #DeleteFacebook campaign on Twitter (Brown, 2020). Most recently, concerns over potential Twitter policy changes—including paid verification and a more relaxed approach to content moderation—caused #TwitterMigration to trend on the platform and drove various public figures to announce their intentions to leave (Bossio, 2022; Maass, 2022; Metz, 2022).

These efforts, centered on specific events that occurred on Facebook and Twitter, have been accompanied by intensifying criticism of social media as a whole. Popular media, such as Jaron Lanier’s (2018) book Ten Arguments for Deleting Your Social Media Accounts Right Now and the 2020 Netflix documentary The Social Dilemma (Orlowski, 2020), have highlighted social media’s corrosive effect on users’ privacy and mental health. In a similar vein, critics have advised users to periodically disconnect from the internet and digital technologies, as exemplified by Cal Newport’s (2019) guide to “digital minimalism” and Sherry Turkle’s (2015) appeals to “reclaim conversation” by embracing technology-free, face-to-face interactions with family, friends, and colleagues.

Research has confirmed that, under certain conditions, social media may indeed have a detrimental impact on people’s well-being (Delellis et al., 2022). For example, one study established that increased passive social media use (i.e., “lurking”) was positively associated with depression (Escobar-Viera et al., 2018), while another found that greater social networking site (SNS) use was, in general, “significantly associated with elevated depression symptoms” (Cunningham et al., 2021, p. 249). In fall 2021, leaked internal documents from Instagram revealed that use of the platform could be especially harmful to the mental health of teenaged girls (Wells et al., 2021). Not surprisingly, people often cite concerns over reduced well-being as a motivating factor in their decision to give up social media. Drawing on Satchell and Dourish’s (2009) typology of non-use in human–computer interaction (HCI), Grandhi et al. (2019) found evidence for four varieties of social media non-use: active resistance, disenchantment, disenfranchisement, and disinterest. Active resistance, in particular, is related to the perception that social media use erodes personal well-being, either due to fears of compromised privacy and security, or by wasting time that could be invested in more meaningful, value-adding activities (Grandhi et al., 2019). The motivations identified by Grandhi et al. (2019) align closely with findings from an earlier study on Facebook non-use practices and experiences (Baumer et al., 2013). Facebook users reported disengaging from the platform for reasons that included loss of privacy, concerns over data use and misuse, banality, reduced productivity, addiction, and social, professional, or institutional pressures to leave (Baumer et al., 2013).

While it is not strictly necessary to disable a social media account to discontinue using it, there are several reasons for a user to take this step. First, the disabling process makes reversion difficult. Disabling an account usually involves the deletion of the user’s personal information, such as posts and contacts; as a result, if the user decides to return to the site, they will need to start over with a new account. Second, disabling an account allays privacy concerns by removing the user’s personal information from public view. Assuming that the personal information is not retained by the service provider, disabling an account also minimizes the risk that the information will be leaked in a data breach.

Yet, even when users have clear motivations to disable their social media accounts, many find it difficult to do so. A user may hesitate to leave a platform if it provides access to social contacts or serves as a log-in for other online accounts (Brown, 2020). Moreover, if a user does attempt to disable their account, they may struggle to complete the process through the site’s user interface (UI; Baumer et al., 2015; Schaffner et al., 2022). In some cases, the difficulty may be deliberate, as online service providers profit from user data and activity, and are therefore incentivized to retain active users (Schaffner et al., 2022). Hidden account disabling options have instigated the creation of services such as JustDelete.Me, which offers a directory of links to account deletion pages for various websites.

Deliberate attempts to deter user account disabling violate the core principles of usable UI design (Nielsen, 2020) and threaten to keep users active on social media against their intentions and best interests. SNSs deploy various UI strategies to complicate the account disabling process, and while some of these strategies may be familiar to users, others are more covert. Although prior work has recognized that online service providers may deliberately deter users from disabling their accounts (Bösch et al., 2016; Gray et al., 2018), research identifying the specific UI strategies employed for this purpose is limited (Runge et al., 2023; Schaffner et al., 2022). To address this research gap, our study examines the dark patterns implemented by SNSs to frustrate users’ attempts to disable their accounts, as well as the tactics that encourage reversion once an account has been disabled. Dark patterns (Brignull, 2010) are UI strategies deliberately designed to influence users to perform actions or make choices that benefit online service providers.

In this study, we recorded our attempts to disable experimental accounts in 25 SNSs drawn from Alexa’s 2020 Top Sites list. We content analyzed the recordings and identified major types of dark patterns (Complete Obstruction, Temporary Obstruction, Obfuscation, Inducements to Reconsider, and Consequences) and unified them into a conceptual model, based on the differences and similarities within nuanced subtypes. We account for the prevalence of these dark pattern types and subtypes in our sample. Finally, we propose recommendations for the design of user-friendly account disabling UIs that aid, rather than undermine, the user in their effort to quit social media. While an earlier version of this work was presented at the 12th International Conference on Social Media and Society (Kelly & Rubin, 2022), this elaborated work offers a fuller methodological explanation and implications for design.

Literature Review

Defining Dark Patterns: Recurrent, Intentional UI Design Strategies that Benefit Online Service Providers

User experience (UX) consultant Harry Brignull coined the term “dark patterns” in 2010 to describe “user interfaces that have been designed to trick users into doing things they wouldn’t otherwise have done.” On his website, darkpatterns.org/ (now https://www.deceptive.design/), Brignull compiled examples of problematic design practices, such as making a service easy to enter into but difficult to leave (Roach Motel), guilting the user into accepting an undesirable option (Confirmshaming), and focusing the user’s attention on a salient site element to distract them from other information (Misdirection). A pattern is defined as “a structured description of an invariant solution to a recurrent problem within a context” (Dearden & Finlay, 2006, p. 50). Dark patterns are distinct from anti-patterns, or design choices with unintended negative effects (Greenberg et al., 2014), because they “are not mistakes”; rather, they are “carefully crafted with a solid understanding of human psychology” and “do not have the user’s interest in mind” (Brignull, 2013).

Much research has conceptualized dark patterns as deceptive UI design strategies. This focus is reflected in Brignull’s (2011, 2013) original descriptions, which emphasize how dark patterns “trick,” “misdirect,” and “confuse” users. Similarly, Gray et al. (2018) characterize dark patterns as “instances where designers use their knowledge of human behavior (e.g., psychology) and the desires of end users to implement deceptive functionality that is not in the user’s best interest” (p. 1) and Di Geronimo et al. (2020) state that dark patterns “deceive users into performing actions they did not mean to do” (p. 1). Deception simply involves causing someone to hold false beliefs, thereby exerting a covert influence on their decision-making process (Susser et al., 2019).

Recently, researchers have recognized forms of dark patterns’ influence beyond deception alone. Mathur et al. (2019) describe dark patterns as “user interface design choices that benefit an online service by coercing, steering, or deceiving users into making decisions that, if fully informed and capable of selecting alternatives, they might not make” (p. 2). To varying degrees, a dark pattern might be asymmetric (placing unequal burdens on the available choices), covert (concealing the mechanism of influence), deceptive (inducing false beliefs), information hiding (obscuring or delaying the display of relevant information), and/or restrictive (restricting the available choices; Mathur et al., 2019, 2021). Despite the lack of a precise, consistent definition of dark patterns across the literature, descriptions tend to coalesce around three key characteristics: a dark pattern is “(a) a recurrent configuration of elements in digital interfaces that is (b) intentionally created by a designer and (c) leads to user behavior that goes against the user’s best interests and towards those of the designer” (Lukoff et al., 2021, p. 2). Users may be encouraged to purchase unneeded goods or reveal personal information (Luguri & Strahilevitz, 2021; Narayanan et al., 2020). In this study, dark patterns are defined as UI strategies deliberately designed to influence users to perform actions or make choices that benefit online service providers.

Researchers have applied theories of decision-making from psychology and behavioral economics to explain why dark patterns work. Many dark patterns target and exploit users’ psychological vulnerabilities, such as cognitive biases and heuristics (Lukoff et al., 2021; Mathur et al., 2019; Waldman, 2020). For example, stressing the losses that a user will incur if they opt out of a service capitalizes on the framing effect and loss aversion (Tversky & Kahneman, 1986). Through the lens of dual-process theory (Kahneman, 2013), dark patterns are believed to work best when users rely on quick, intuitive System 1 thinking processes, rather than the effortful and conscious deliberation that arises in System 2 (Bösch et al., 2016).

The study of dark patterns belongs to a similar line of research as persuasive technology and digital nudging. The possibility of deliberately influencing user behavior through technology design was recognized by B. J. Fogg in the 1990s, and dark patterns “may have resonance with persuasive strategies previously proposed by Fogg, albeit twisted from their original purpose and ethical moorings” (Gray et al., 2018, p. 3). For example, a dark pattern that impedes the user’s task flow to deter them from completing an action (i.e., Gray et al.’s [2018] Obstruction) is an inverse form of Fogg’s (2003) tool of Reduction, which involves making an action easy to perform to increase the likelihood that the user will do so. More recently, researchers have drawn from Thaler and Sunstein’s (2008) seminal work on nudge theory to propose the concept of “digital nudging,” which describes “the use of user-interface design elements to guide people’s behavior in digital choice environments” (Weinmann et al., 2016, p. 433). In essence, dark patterns can be conceptualized as “dark” digital nudges—subtle adjustments to a digital choice architecture that guide users toward actions that benefit the online service provider.

Dark Pattern Typologies in HCI Research, UX Practice, Video Gaming, Shopping Website, Mobile App, Proxemic Interaction, Home Robot, and Privacy Contexts

The patterns library published on Brignull’s website, darkpatterns.org/, represents one of the first attempts to name, assemble, and describe different kinds of problematic UI design practices. In the same year, Conti and Sobiesk (2010) provided an early look at what they call “malicious interface design”—deliberately crafted UIs that “violate design best practices in order to accomplish goals counter to those of the user” (p. 271). Building from examples observed “in the wild,” Conti and Sobiesk present ten categories of malicious UI design techniques. The category of Obfuscation, for instance, refers to “[h]iding desired information and interface elements” (p. 273) and could manifest in a site using a low-contrast color scheme to conceal the “close” button on a video advertisement. The design tactics detailed by Conti and Sobiesk are similar to the phenomena Brignull describes; however, the term “dark patterns,” rather than “malicious interface design,” was eventually adopted by UX practitioners and academic researchers in the field of HCI.

In 2018, Gray et al. consolidated dark patterns identified by UX practitioners into a typology consisting of five broad categories (Nagging, Obstruction, Sneaking, Interface Interference, and Forced Action) and 14 variants. Researchers have also identified dark patterns that arise in specific contexts. Zagal et al. (2013) examine dark patterns in the context of video game design, focusing on strategies that are “used intentionally by a game creator to cause negative experiences for players which are against their best interests and likely to happen without their consent” (p. 7). Similarly, Lewis (2014) codifies motivational design patterns and dark patterns in the context of games and web and mobile applications. Mathur et al. (2019) present dark patterns used on shopping websites, drawing from descriptions and labels introduced in the typologies by Brignull (2010) and Gray et al. (2018) while also introducing new categories relevant to ecommerce (e.g., Social Proof, Scarcity, and Urgency). Meanwhile, Greenberg et al. (2014) describe the use of dark patterns in proxemic interactions, and Lacey and Caudwell (2019) conceptualize “cuteness” in home robots as a dark pattern.

Finally, researchers have proposed typologies of dark patterns that steer users toward privacy-invasive choices online (Bösch et al., 2016; Commission Nationale de l’Informatique et des Libertés [CNIL], 2019; Forbrukerrådet, 2018; Fritsch, 2017). For example, a site may preselect privacy-invasive defaults (Bösch et al., 2016; Forbrukerrådet, 2018), pressure the user into sharing their phone number by claiming that it is needed to secure their account (CNIL, 2019; Fritsch, 2017), or make privacy settings “[unnecessarily] complex, overly fine-grained, or incomprehensible” (Bösch et al., 2016, p. 248).

Dark Pattern Prevalence, Impact, and End-User Resistance

Research has documented the prevalence of dark patterns in several contexts, including websites, mobile apps, and tracking consent pop-ups. Using an automated approach to analyze a data set of approximately 11,000 shopping websites, Mathur et al. (2019) uncovered 1,818 instances of dark patterns across 1,254 of the sites studied. Examining a sample of 240 mobile apps, Di Geronimo et al. (2020) found that 95% contained one or more dark patterns, with an average of 7.4 dark patterns per app. Gunawan et al. (2021) discovered that, in a sample of 105 online services, all contained at least one dark pattern across three modalities (mobile application, mobile browser, and web browser). Surveying the designs of the most commonly used consent management platforms (CMPs) on the top 10,000 websites in the United Kingdom, Nouwens et al. (2020) found that only 11.8% of the sites were compliant with the European Union’s General Data Protection Regulation (GDPR), which meant that they made consent explicit, made rejection of all tracking as easy as acceptance, and had no boxes pre-ticked.

Research has also begun to investigate the impact of dark patterns on user behavior. Nouwens et al. (2020) tested how adjustments to the appearance of tracking consent pop-ups affect users’ actions. A key finding that emerged is that “placing controls or information below the first layer [i.e., of a pop-up] renders it effectively ignored” (p. 10). In a large-scale experiment, Luguri and Strahilevitz (2021) found that “mild” dark patterns more than doubled the percentage of consumers who signed up for a questionable service, while “aggressive” dark patterns almost quadrupled the percentage of consumers who signed up. A study by Graßl et al. (2021) determined that, when interacting with tracking cookie consent requests, participants’ consent behavior did not seem to differ depending on whether dark patterns were present—possibly because people “are conditioned to agree to consent request[s] from their everyday life” (p. 16). A second experiment, however, showed that “bright” patterns, using obstruction and defaults, effectively swayed participants away from consenting. Bright patterns are analogous to digital nudges that steer people toward personally advantageous—rather than detrimental—choices, such as protecting their personal data online (Acquisti et al., 2017; Kroll & Stieglitz, 2019; Warberg et al., 2019).

Finally, research has probed end-users’ perspectives and experiences of dark patterns, including their ability to resist manipulative practices. Early work in this area was completed by Fitton and Read (2019), who determined that 12- and 13-year-old girls relied on various strategies to deal with dark patterns in free-to-play apps. Di Geronimo et al. (2020) found that a majority (55%) of participants were unable to spot malicious designs in an app that contained dark patterns. Meanwhile, a study by Bongard-Blanchy et al. (2021) revealed that a majority (59%) of participants were able to correctly identify five or more dark patterns out of nine interfaces that included them; however, participants remained “only vaguely aware of the entailed concrete harm” (p. 2). Voigt et al. (2021) asked participants to interact with a version of a fictitious online shop that was either “Dark” (containing five dark patterns) or “Clean” (free of dark patterns) and then fill out a questionnaire. Participants who interacted with the “Dark” version reported a higher level of annoyance and lower brand trust than those who interacted with the “Clean” version (Voigt et al., 2021).

Methodology

In this study, our key objectives were to: (1) identify dark patterns in the context of SNS users attempting to disable their accounts (i.e., delete or deactivate them); (2) consolidate these tactics into a typology; and (3) assess their prevalence in our sample.

To meet these objectives, we systematically collected and content analyzed a data set of recordings and associated email exchanges that captured our account disabling processes for a sample of 25 SNSs, namely the most highly ranked sites on Alexa’s May 2020 Top Sites list that fit our inclusion criteria. We considered “account disabling” to refer to any option that allowed the user to withdraw from the service; this encompasses permanent deletion as well as temporary deactivation of the user account. The “account disabling process” refers to all of the steps that must be completed by the user to disable their account. Our work borrows from the methodological approach taken by Di Geronimo et al. (2020), who screen-recorded user interactions with mobile apps and manually reviewed the recordings for instances of dark patterns. This research relies on a mixed methods study design that includes two steps: (1) a qualitative content analysis of the account disabling process for 25 SNSs, leading to the development of a typology of dark patterns employed to deter users from disabling their accounts; and (2) a quantitative content analysis that documents the prevalence of the dark pattern subtypes across our sample of sites.

Sample Selection

We obtained a list of the 50 most popular SNSs in May 2020 from Alexa Top Sites, a web traffic analysis service. Alexa calculates rank by combining “a site’s estimated traffic and visitor engagement over the past three months” (Alexa, 2020).

In total, we removed 23 sites from our sample based on the following exclusion criteria: (1) the site was not in English; (2) the site demanded sensitive personal information in order to create an account; (3) the site did not provide the opportunity to create an account; (4) the site was no longer in operation; or (5) the site did not meet our criteria for an SNS. Adapting the definition by boyd and Ellison (2007), we considered a site to be an SNS if it allowed users to create a profile and interact with other users within the system.

Searching the web address of one site from Alexa’s list, Stumbleupon.com, produced a message that the site had been moved to Mix.com. As a result, we replaced Stumbleupon.com with Mix.com in our remaining sample of 27 sites. Two of the sites from Alexa’s list, Tagged.com and Hi5.com, shared a nearly identical UI; both sites were included in our sample because they required separate account creation.
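The screening logic described in this section can be sketched as a simple filter. The sketch below is illustrative only: the screening in this study was a manual judgment, and the `Site` record, its field names, and the example domains are hypothetical stand-ins rather than the study's data. Criteria are checked in the order in which they are listed above.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Tuple


@dataclass(frozen=True)
class Site:
    """Hypothetical record of the manual screening judgments for one site."""
    domain: str
    in_english: bool = True
    demands_sensitive_info: bool = False
    allows_account_creation: bool = True
    in_operation: bool = True
    is_sns: bool = True  # users can create a profile and interact with others


def exclusion_criterion(site: Site) -> Optional[int]:
    """Return the number (1-5) of the first exclusion criterion met, else None."""
    checks = [
        (1, not site.in_english),
        (2, site.demands_sensitive_info),
        (3, not site.allows_account_creation),
        (4, not site.in_operation),
        (5, not site.is_sns),
    ]
    for number, is_excluded in checks:
        if is_excluded:
            return number
    return None


def screen(sites: List[Site]) -> Tuple[List[Site], Dict[str, int]]:
    """Split candidates into retained sites and {domain: criterion} exclusions."""
    kept: List[Site] = []
    excluded: Dict[str, int] = {}
    for site in sites:
        criterion = exclusion_criterion(site)
        if criterion is None:
            kept.append(site)
        else:
            excluded[site.domain] = criterion
    return kept, excluded


# Made-up candidates for illustration only; these are not sites from the study.
candidates = [
    Site("alpha.example"),
    Site("beta.example", in_english=False),
    Site("gamma.example", in_operation=False),
    Site("delta.example", is_sns=False),
]
kept, excluded = screen(candidates)
print([site.domain for site in kept])
print(excluded)
```

Recording the criterion number alongside each excluded domain keeps the screening auditable, which matters when a site could plausibly fail more than one criterion.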

Data Collection

We registered experimental accounts for the 27 SNSs in our sample using a new Gmail account created for the purpose of the study. Using Windows 10 Game Bar, a type of screen-recording software, we captured videos of our attempts to disable our accounts for all of the sites. This method of data collection is preferable to collecting screenshots because it allows user interactions with site elements to be recorded and analyzed (Di Geronimo et al., 2020).

We started recording immediately before we logged into each site through a desktop browser and stopped recording after we had logged out. To ensure consistency in the actions performed across the different sites, we adhered to the following protocol: (1) we searched for options to initiate the account disabling process in account and/or privacy settings; (2) if no options were found, we navigated to the frequently asked questions (FAQs) and/or Help sections; (3) if again no options were found, we attempted to request account disabling through real-time chat or request forms, when available. In doing so, we simulated the behavior of a user attempting to disable their SNS account. The recordings are uninterrupted and allow time for the user to make choices among available options (e.g., tabs, check boxes, or radio buttons) and read various pop-up screens, notifications, or other legal terms presented to the user by the UI design.
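The three-step search protocol amounts to an ordered fallback: each location is consulted only if the previous one yielded no option. A minimal sketch follows, assuming a hypothetical `found_at` callback that stands in for the researcher's manual inspection of each location; the step labels paraphrase the protocol and are not identifiers used in the study.

```python
from typing import Callable, Optional

# Ordered search locations, paraphrasing the three protocol steps.
PROTOCOL_STEPS = (
    "account and/or privacy settings",
    "FAQ and/or Help sections",
    "real-time chat or request form",
)


def locate_disabling_option(found_at: Callable[[str], bool]) -> Optional[str]:
    """Return the first protocol step at which a disabling option is found.

    `found_at` is a hypothetical callback representing manual inspection.
    Returns None when no step yields an option.
    """
    for step in PROTOCOL_STEPS:
        if found_at(step):
            return step
    return None


# Made-up observations for one imaginary site.
observations = {
    "account and/or privacy settings": False,
    "FAQ and/or Help sections": True,
    "real-time chat or request form": True,
}
result = locate_disabling_option(lambda step: observations.get(step, False))
print(result)
```

Because the loop stops at the first successful step, later locations are never consulted once an option has been found, mirroring the "if no options were found" conditions in the protocol.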

Following our initial attempts to disable accounts, we recorded our attempts to log back into the accounts on the same day to test whether they could be reactivated. If a site claimed that it only offered account reactivation for a fixed period (e.g., 30 days), we also recorded our attempts to log back in after this period had passed. In cases where sites required the user to confirm their decision to disable their account by email, we recorded the process of logging into our Gmail account and responding to each site’s message.

One site (Facebook.com) barred us from accessing our account unless we provided a phone number. Consequently, we were unable to access the site’s account disabling options and we excluded the site from our sample. Another site (Student.com) was also excluded from our sample because its users evidently do not directly interact with each other but rather are encouraged to contact booking consultants as intermediaries. Thus, this site was disqualified by our fifth exclusion criterion, since according to the definition by boyd and Ellison (2007), the site was not deemed a clear case of an SNS. These two exclusions reduced the number of SNSs studied from 27 to 25 (see Appendix A for a list of the 25 sites included in our sample).

To determine whether sites continued to communicate with users, we monitored our Gmail account and screen-captured emails that were sent from sites after the user’s account had been disabled.

The complete data set consists of 62 MP4 video files and 16 PDF email files. Data collection occurred during the period of June 2020 to September 2021. After recording each video, we took notes to document potential dark patterns observed during our interaction with the site. These notes served as the basis for our initial set of codes.

Coding

Manual coding of the recordings was performed following basic steps in the Content Analysis methodology by Klaus Krippendorff (1980, 2004). An initial set of codes was produced by organizing dark patterns noted during data collection into a conceptual model consisting of four broad categories. In formulating this scheme, we drew from dark pattern labels and descriptions identified in earlier works (Brignull, 2010; Conti & Sobiesk, 2010; Gray et al., 2018) and adapted them to the context of user account disabling in SNSs. We also proposed our own labels and descriptions to capture strategies observed during data collection that had not been identified in prior research. The resulting set of codes was used for pilot coding of the media files in NVivo, a software program designed for qualitative data analysis (QSR International, 2022). NVivo allows researchers to assign codes to temporal segments of video files, and therefore allowed us to indicate exactly where a dark pattern occurred within each user interaction with a site.

Close inspection of the media files during pilot coding revealed additional strategies that were missed in our data collection notes. As a result, we added several new dark patterns to our scheme’s existing categories. We also refined the structure of the coding scheme by splitting one category, Delays, into Obfuscation and Inducements to Reconsider. This enabled us to distinguish the dark patterns previously grouped under Delays by their fundamental purposes (i.e., covertly or overtly steering users away from disabling their accounts). The revised coding scheme was used for a second pass at coding in NVivo.

Applying insights from this second pass, we revised the coding scheme a final time, resulting in our Dark Pattern Typology (Kelly & Rubin, 2022). The hierarchical structure of the scheme, which previously consisted of four levels, was simplified to two main levels: types and subtypes. This was achieved by merging unnecessarily granular dark pattern descriptions into the subtypes level of the hierarchy. In the few instances where subtypes remained divided into two options, we ensured that only one option could logically be chosen for each site.

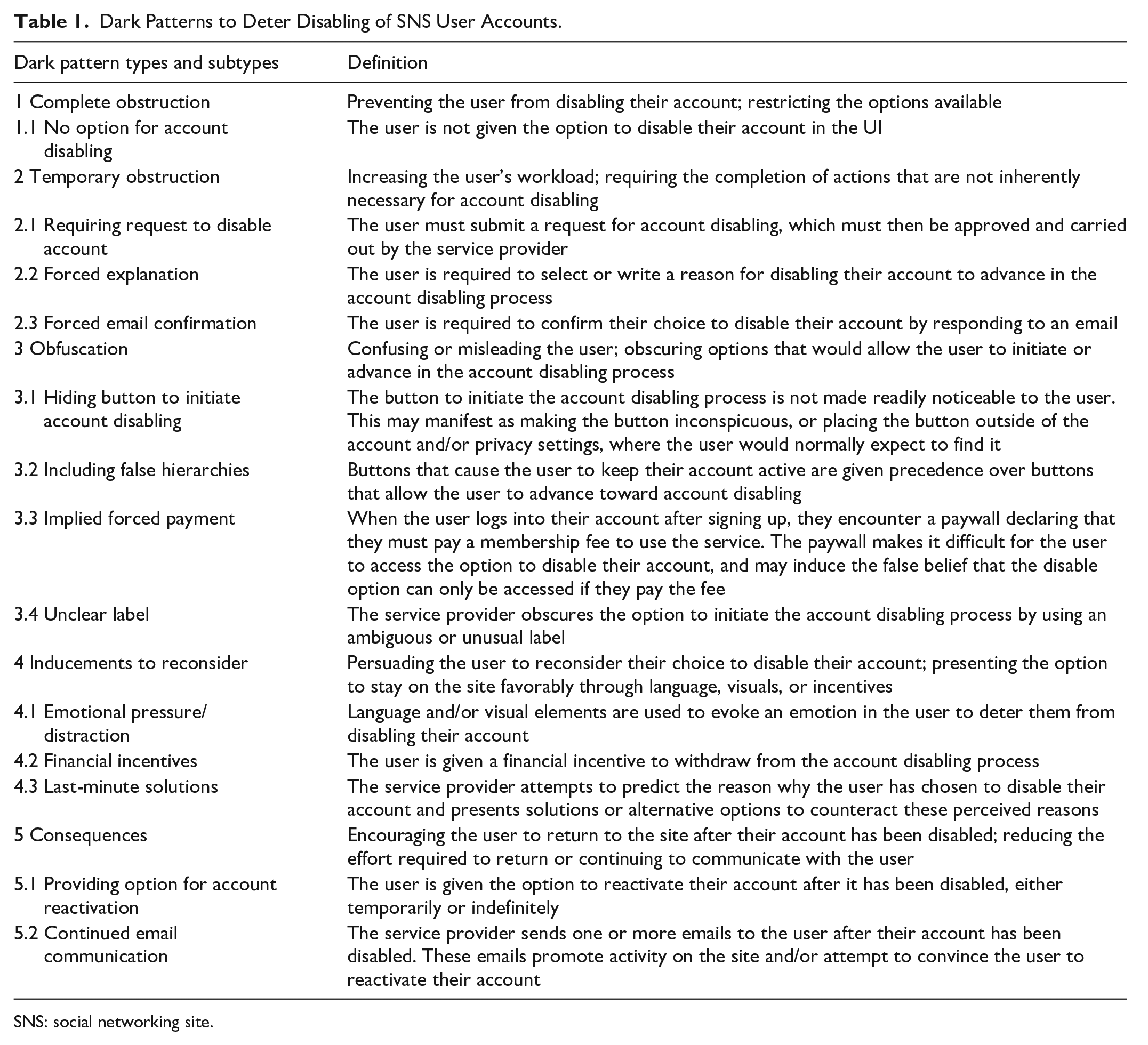

The resulting coding scheme (see Table 1) was used to code the videos in NVivo a final time. Detailed instructions and illustrative examples can be found in Appendix B, which contains the complete codebook used for coding in this study.

Table 1. Dark Patterns to Deter Disabling of SNS User Accounts.

SNS: social networking site.
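To illustrate how a two-level scheme of types and subtypes supports a prevalence count, the sketch below represents each coded stretch of a recording as a record and counts the distinct sites in which each subtype appears. It is a hedged illustration, not the NVivo workflow used in the study: the `CodedSegment` structure, the function names, the site names, and the timestamps are all hypothetical, and the subtype-to-type mapping is deliberately partial, listing only the three subtypes named in this article's subtype headings.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, Iterable, List, Set

# Partial mapping: only subtypes named in the article's subtype headings.
# The remaining subtypes from Table 1 are intentionally omitted.
SUBTYPE_TO_TYPE = {
    "No Option for Account Disabling": "Complete Obstruction",
    "Requiring Request to Disable Account": "Temporary Obstruction",
    "Including False Hierarchies": "Obfuscation",
}


@dataclass(frozen=True)
class CodedSegment:
    """Hypothetical record of one coded stretch of a recording (seconds)."""
    site: str
    subtype: str
    start_s: float
    end_s: float


def sites_per_subtype(segments: Iterable[CodedSegment]) -> Dict[str, Set[str]]:
    """Map each subtype to the set of sites where it was coded at least once."""
    result: Dict[str, Set[str]] = defaultdict(set)
    for segment in segments:
        result[segment.subtype].add(segment.site)
    return dict(result)


def sites_per_type(segments: Iterable[CodedSegment]) -> Dict[str, Set[str]]:
    """Roll subtypes up to their parent type (KeyError on an unmapped subtype)."""
    result: Dict[str, Set[str]] = defaultdict(set)
    for segment in segments:
        result[SUBTYPE_TO_TYPE[segment.subtype]].add(segment.site)
    return dict(result)


def prevalence(segments: Iterable[CodedSegment], n_sites: int) -> Dict[str, float]:
    """Share of sampled sites exhibiting each subtype."""
    by_subtype = sites_per_subtype(segments)
    return {subtype: len(sites) / n_sites for subtype, sites in by_subtype.items()}


# Made-up segments for four imaginary sites; not data from the study.
segments: List[CodedSegment] = [
    CodedSegment("site-a.example", "Including False Hierarchies", 12.0, 20.5),
    CodedSegment("site-a.example", "Including False Hierarchies", 31.0, 40.0),
    CodedSegment("site-b.example", "Including False Hierarchies", 5.0, 9.0),
    CodedSegment("site-c.example", "Requiring Request to Disable Account", 0.0, 60.0),
]
shares = prevalence(segments, n_sites=4)
print(shares)
```

Counting distinct sites rather than segments means that a subtype coded several times within one recording contributes only once to that subtype's prevalence.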

Results

Based on our content analysis of the recordings of the 25 site disabling attempts and associated email exchanges, we present a fivefold typology of UI design strategies, or dark patterns, that specifically deter SNS users from disabling their accounts or encourage reversion after an account has been disabled. Table 1 includes five major types (Complete Obstruction, Temporary Obstruction, Obfuscation, Inducements to Reconsider, and Consequences) and their 13 subtypes.

Qualitative Results

In this section, we describe the five major types of dark patterns, five prominent subtypes, and a specific example of each subtype that was observed in our sample of 25 SNSs. Illustrative screenshots of the subtypes are included. In developing our dark pattern typology, we combined, modified, and extended the dark pattern models from earlier works by Brignull (2010), Conti and Sobiesk (2010), and Gray et al. (2018). This work is specific to the account disabling deterrence strategies employed by SNSs, with a more nuanced discussion of the typology that was initially presented at the 12th International Conference on Social Media and Society (Kelly & Rubin, 2022).

Complete Obstruction

Complete obstruction refers to strategies that make it impossible for the user to disable their account through the site’s UI.

Subtype: No Option for Account Disabling

We observed only one subtype of this dark pattern type in our sample. The subtype operates by excluding any option for the user to disable their account from the site’s UI.

Subtype Example

In our sample, one site provided no option for account disabling in its user account settings (see Figure 1). On a page describing the site’s terms and rules, the site administrators stated that they do not delete accounts at the user’s request because this “would make it too easy for [the user] to return as a different user.”

Figure 1. Example of no option for account disabling from our data set.

Temporary Obstruction

Temporary obstruction refers to strategies that increase the user’s workload during the account disabling process. This is accomplished by requiring the user, in order to proceed in the task flow, to complete actions that are not inherently necessary to account disabling. In contrast to complete obstruction, which presents impassable barriers to account disabling, dark patterns of this type temporarily delay the user’s progression toward the completion of their task.

Subtype: Requiring Request to Disable Account

A notable subtype of this dark pattern type requires the user to submit a request for account disabling, either by filling out an online form or by communicating with a company representative in real time. Because the service provider could simply require the user to click a “Disable account” button and confirm their choice, this dark pattern needlessly increases the user’s workload. Users may also waste time searching for a “Disable account” button in their account and/or privacy settings before realizing that they must manually submit a request.

Subtype Example

One example of this dark pattern subtype in our sample occurred on a site that omitted buttons to initiate the account disabling process from its account settings and “Help” page (see Figure 2). By clicking a “LiveChat” feature in the lower right corner of the screen, the user could request account disabling by typing messages to a “Support Agent.” However, the site did not make it clear that the user needed to enable “LiveChat” to disable their account, and some users might be discouraged from beginning the process by the potential effort inherent to this interaction (e.g., the possibility of a long wait time before the representative responded).

Figure 2. Example of requiring request to disable account from our data set.

Obfuscation

Obfuscation refers to strategies that confuse or mislead the user prior to or during the account disabling process. This is achieved by obscuring information and/or options that would allow the user to initiate or advance in the task flow.

Subtype: Including False Hierarchies

This dark pattern subtype occurs when buttons that cause the user to keep their account active are given precedence over buttons that allow the user to proceed in the account disabling process. Generally, the option preferred by the service provider is made larger or given greater contrast against the background than other options. False hierarchies may cause users to accidentally click the more salient button, to perceive the salient button as the recommended option, or to mistakenly believe that the salient button is the only option available.
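The notion of one button having "greater contrast against the background" can be made concrete with a contrast-ratio calculation. The sketch below is an illustration only: the study coded salience visually and did not compute contrast ratios, the formula is the WCAG 2.x contrast-ratio definition (brought in here as an assumption, not the authors' method), and the colors are hypothetical.

```python
def _linear(channel: int) -> float:
    """Convert an 8-bit sRGB channel to linear light (WCAG 2.x definition)."""
    c = channel / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(rgb: tuple) -> float:
    """Relative luminance of an (R, G, B) color, from 0 (black) to 1 (white)."""
    r, g, b = (_linear(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(color_a: tuple, color_b: tuple) -> float:
    """Contrast ratio between two colors, from 1 (identical) to 21 (black on white)."""
    lum_a = relative_luminance(color_a)
    lum_b = relative_luminance(color_b)
    lighter, darker = max(lum_a, lum_b), min(lum_a, lum_b)
    return (lighter + 0.05) / (darker + 0.05)


# Hypothetical colors, not taken from any site in the sample.
BACKGROUND = (255, 255, 255)       # white page background
STAY_BUTTON = (0, 85, 204)         # saturated blue "Keep my account" button
PROCEED_BUTTON = (221, 221, 221)   # pale gray "Continue" button

stay_contrast = contrast_ratio(STAY_BUTTON, BACKGROUND)
proceed_contrast = contrast_ratio(PROCEED_BUTTON, BACKGROUND)

# A large gap between the two ratios is one possible signal of a false hierarchy.
print(f"stay: {stay_contrast:.2f}, proceed: {proceed_contrast:.2f}")
```

A contrast gap is only one cue; button size and placement also contribute to salience, so a measure like this would supplement rather than replace manual coding.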

Subtype Example

In our sample, one manifestation of this subtype occurred in a site that required the user to click through several pop-ups to complete the account disabling process (see Figure 3). At each step, the button that allowed the user to exit the task flow—and thereby keep their account active—was more salient than the option to proceed toward account disabling. Although the button to exit the task flow was large and colorful, the button to proceed was small and displayed in a low-contrast color that blended in with the background. It would be easy for users to accidentally click the salient button at one of these steps, requiring them to restart the entire account disabling process.

Figure 3. Example of including false hierarchies from our data set.

Inducements to Reconsider

Inducements to Reconsider are strategies that attempt to persuade the user to reconsider their decision to disable their account during the account disabling process. This is accomplished by using language, visuals, or incentives to present the option to stay on the site favorably. Typically, the service provider highlights benefits that the user will accrue if they keep their account active or emphasizes costs that the user will suffer if they disable their account.

Subtype: Emotional Pressure/Distraction

This subtype occurs when language and/or visual elements are used to evoke an emotion in the user, with the aim of deterring them from disabling their account. This manifests in three main forms: (1) invoking worry or fear through language (e.g., “Warning!” or “Danger!”) or visual elements (e.g., large, bright red font or sad faces); (2) making statements that express disappointment (e.g., “It makes us sad to see you go”); or (3) asking questions that cast doubt on the user’s decision (e.g., “Are you sure you want to delete your account?”).

Subtype Example

In one site, we observed this subtype immediately after clicking a “Delete user account” button on the account settings page (see Figure 4). A page appeared with text that cast doubt on the user’s choice (“Are you really, really sure you want to delete your [site name] user account?”) and expressed disappointment (“It would be a shame to see you go!”).

Figure 4. Example of emotional pressure/distraction from our data set.

Consequences

Consequences are strategies that encourage the user to return to the site after their account has been disabled. Dark patterns of this type operate in two ways: by making it easy for the user to reverse their decision to disable their account, or by sending unsolicited messages to the user that promote activity on the site and/or attempt to persuade them to return.

Subtype: Providing Option for Account Reactivation

This subtype occurs when the user is given the option to reactivate their account after it has been disabled. The user may retain the ability to reactivate their account during a temporary wait time, after which the account cannot be recovered, or the user may retain the ability to reactivate their disabled account indefinitely. Whereas temporary obstruction dark patterns increase the effort users must exert to disable their accounts, this subtype makes it possible for users to reverse their decision, and thereby reduces the effort required of users to return.

Subtype Example

One manifestation of this subtype in our sample occurred on a site that presented a message to users immediately after they disabled their account (see Figure 5). The message reminded users that they could reactivate their account at any time by logging in. Together, enabling reactivation through login and offering this option indefinitely make it very easy, and tempting, for the user to reactivate their account.

Figure 5. Example of providing option for account reactivation from our data set.

Quantitative Results

In total, 59 instances of dark patterns were documented in our sample of 25 SNSs, based on our procedure of coding the presence (1) or absence (0) of a dark pattern subtype within each site. The number of sites that employed each dark pattern subtype is summarized in Table 2.

Table 2. Number of Sites That Employed Each Dark Pattern Subtype.

All 25 sampled SNSs used at least one type of dark pattern. Most SNS UIs attempted to manipulate the user once or twice, for example, by providing an option for account reactivation, by applying emotional pressure/distraction, or by hiding the button to initiate account disabling. Five SNSs (5/25, 20%) attempted to manipulate the user five or more times during the account disabling process.

The three most prevalent dark patterns across our sample of 25 SNSs were: emotional pressure/distraction (13/25, 52%); providing an option for account reactivation (10/25, 40%); and last-minute solutions (9/25, 36%).
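The presence/absence coding procedure behind these counts can be sketched in a few lines. The coding sheet below is hypothetical and far smaller than the real data set; the site names, subtype labels, and function names are assumptions made for illustration, and none of the values are the study's data.

```python
from collections import Counter

# Hypothetical coding sheet (illustrative only, NOT the study's data).
# Each site maps to the set of subtypes coded as present (1); a subtype
# missing from a site's set is treated as absent (0).
CODING = {
    "site_a": {"emotional_pressure", "account_reactivation"},
    "site_b": {"emotional_pressure"},
    "site_c": {"emotional_pressure", "forced_explanation", "last_minute_solutions"},
    "site_d": {"account_reactivation"},
}


def total_instances(coding):
    """Count every (site, subtype) pair coded as present."""
    return sum(len(subtypes) for subtypes in coding.values())


def prevalence(coding):
    """Return {subtype: (number_of_sites, share_of_sites)}."""
    counts = Counter(s for subtypes in coding.values() for s in subtypes)
    n_sites = len(coding)
    return {subtype: (count, count / n_sites) for subtype, count in counts.items()}


def sites_with_at_least(coding, k):
    """List the sites on which k or more subtypes were coded as present."""
    return sorted(site for site, subtypes in coding.items() if len(subtypes) >= k)


print(total_instances(CODING))
print(prevalence(CODING))
print(sites_with_at_least(CODING, 3))
```

Because each subtype is counted at most once per site, the total number of instances equals the sum of the per-subtype site counts.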

More than a third of the sites (10/25, 40%) provided users with the option to reactivate disabled accounts. Of these sites, six offered account reactivation during a temporary wait time, while four offered account reactivation indefinitely.

Among the six sites that used the forced explanation dark pattern (6/25, 24%), nearly all (five of the six; 5/25, 20% of the full sample) also used the last-minute solutions dark pattern. In these cases, the two patterns were used in combination: the site forced the user to select a reason for leaving, and then presented solutions to counteract that reason.
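The overlap between the two patterns can be expressed against two different denominators, which is worth keeping distinct. In the sketch below, only the counts (6 sites, 9 sites, 5 overlapping, 25 sampled) come from the text; the site labels and the function name are hypothetical placeholders.

```python
SAMPLE_SIZE = 25  # number of SNSs in the sample

# Placeholder labels: the set sizes mirror the reported counts, but the
# labels do not identify real sites.
forced_explanation = {"s1", "s2", "s3", "s4", "s5", "s6"}
last_minute_solutions = {"s1", "s2", "s3", "s4", "s5", "s7", "s8", "s9", "s10"}


def overlap(pattern_a, pattern_b, sample_size):
    """Return (n_both, share of pattern_a sites, share of the whole sample)."""
    both = pattern_a & pattern_b
    return len(both), len(both) / len(pattern_a), len(both) / sample_size


n_both, share_of_forced, share_of_sample = overlap(
    forced_explanation, last_minute_solutions, SAMPLE_SIZE
)
print(n_both, round(share_of_forced, 2), round(share_of_sample, 2))
```

Five of six is roughly 83% of the forced explanation sites, while the same five sites make up 20% of the full sample.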

Intercoder Reliability Results

We conducted intercoder reliability testing based on two coders’ judgments of whether each of the 13 dark pattern subtypes was present or absent in seven randomly selected SNSs (28% of the total data set of 25). The entire data set of media files for the 25 SNSs was originally collected, reviewed, and thoroughly coded by the first author. She considered the unit of analysis to be a site and counted only the first occurrence of each subtype in each site. She provided a 1.5-hour training session to the second coder, who followed the codebook instructions (see Appendix B). Both coders used the SNS video recordings and screenshots but coded them independently. The second coder reached 90% agreement with the first coder. To account for agreement by chance, we also calculated Cohen’s kappa (κ = 0.65). This result is considered substantial agreement, as per the kappa interpretation table by Landis and Koch (1977; namely, 0.41–0.60 Moderate; 0.61–0.80 Substantial; and 0.81–1.00 Almost perfect).
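For readers unfamiliar with the statistic, Cohen's kappa corrects observed agreement p_o for the agreement p_e expected by chance: kappa = (p_o - p_e) / (1 - p_e). The sketch below computes both quantities for binary presence/absence codes. The two code vectors are invented for illustration and are not the study's judgments (which presumably comprised 13 subtypes across 7 sites); the function names are assumptions.

```python
def percent_agreement(coder1, coder2):
    """Share of items on which the two coders gave the same code."""
    if len(coder1) != len(coder2) or not coder1:
        raise ValueError("coders must rate the same non-empty set of items")
    return sum(a == b for a, b in zip(coder1, coder2)) / len(coder1)


def cohens_kappa(coder1, coder2):
    """Cohen's kappa for two coders assigning binary codes (1 = present, 0 = absent)."""
    n = len(coder1)
    p_o = percent_agreement(coder1, coder2)
    p1 = sum(coder1) / n  # coder 1's rate of "present"
    p2 = sum(coder2) / n  # coder 2's rate of "present"
    # Chance agreement: both say "present" or both say "absent".
    p_e = p1 * p2 + (1 - p1) * (1 - p2)
    if p_e == 1.0:
        return 1.0  # degenerate case: both coders used a single identical code
    return (p_o - p_e) / (1 - p_e)


# Invented judgments for ten (site, subtype) pairs, illustration only.
first_coder = [1, 1, 0, 0, 1, 0, 0, 0, 1, 0]
second_coder = [1, 1, 0, 0, 0, 0, 0, 0, 1, 0]

print(percent_agreement(first_coder, second_coder))
print(cohens_kappa(first_coder, second_coder))
```

Kappa falls below raw agreement whenever chance agreement is above zero, and the gap widens when most codes are "absent," since two coders would then agree often by chance alone.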

Discussion

Comparison to Prior Work

Our study builds on earlier work that has recognized how online service providers may deliberately frustrate users’ attempts to withdraw from their services. Brignull (2010) proposed the term Roach Motel to describe UI designs that make it easy to get into a certain situation, but difficult to leave; for instance, a site might lure the user into accidentally purchasing a subscription, and then require them to fill in and mail a form to receive a rebate. Meanwhile, Bösch et al. (2016) coined the term Immortal Accounts to describe situations where an online service provider makes the account deletion experience unnecessarily complicated or does not provide any account deletion option. The challenge of canceling online subscriptions has received recent attention from consumer protection groups and regulatory bodies (Lomas, 2022; Tomaschek, 2022). In July 2022, for example, Amazon agreed to simplify its Prime membership cancelation process for sites in the European Union following discussions with the European Commission and national consumer protection authorities (European Commission, 2022), and in March 2023, the Federal Trade Commission (FTC, 2023) “proposed a ‘click to cancel’ provision requiring sellers to make it as easy for consumers to cancel their enrollment as it was to sign up.”

Research examining the specific UI strategies employed by online service providers to deter disabling of user accounts is nascent. Comparing the steps required for joining versus quitting 40 online services, Runge et al. (2023) found that “many firms employ tactics that make deleting accounts much harder than creating accounts” (p. 156). These tactics include “requiring repetitive confirmation steps, suggesting deactivation rather than deletion, offering grace periods, or providing excessive details on reasons why one may not want to quit” (Runge et al., 2023, p. 156). Registering an account required an average of 18 clicks, whereas deleting an account required an average of 27 (Runge et al., 2023). The practice of requiring repetitive confirmation steps is similar to the obstruction dark patterns identified in our analysis, which operate by increasing the user’s workload. Offering a grace period before account deletion is recognized in our providing option for account reactivation dark pattern, and providing excessive information about why the user may not want to quit is captured in our inducements to reconsider dark patterns, where users are persuaded to reconsider their choice to disable their account.

In a similar vein, Schaffner et al. (2022) analyzed the account deletion process for 20 social media platforms and found evidence of four types of dark patterns (confirmshaming, forced external steps, immortal accounts, and forced continuity) in the platforms’ interfaces and policies. Confirmshaming, where the user is shamed into keeping their account active, is closely related to our emotional pressure/distraction dark pattern. Meanwhile, forced external steps, where users must “take steps external to the platform to complete the desired task [account deletion]” (Schaffner et al., 2022, p. 9) describes the same phenomenon as our forced email confirmation dark pattern. Schaffner et al. (2022) also identified three account termination options: close (accounts remain recoverable indefinitely), delete after closing (accounts remain recoverable during a waiting period), and delete immediately (there is no waiting period and accounts are not recoverable). We observed all three options in our sample of 25 SNSs, and consider the practice of making accounts recoverable—either during a waiting period or indefinitely—to constitute a dark pattern (providing option for account reactivation).

In an article currently under review, the first author performed a content analysis of dark patterns employed by SNSs to influence users to make privacy-invasive choices (i.e., “privacy dark patterns”) when registering an account, configuring account settings, and logging in and out (Kelly & Burkell, Under Review). Consistent with the strategies we observed in account disabling UIs, Kelly and Burkell identified dark patterns that increased the user’s workload, confused or misled the user, and deployed language and/or visuals to present a particular choice favorably.

Lessons for Design

In conducting this study, we learned the following lessons for ethical UI design practices that avoid manipulating users into staying with the online services they originally signed up for.

First, UI designers should place an account disabling button where the user would reasonably expect to find it: their account and/or privacy settings. The button should be readily noticeable; it should not be faint or tiny, and it should not lack salience relative to other buttons on the settings page (i.e., the option should not be significantly smaller or less colorful than other options, which naturally grab the user’s attention).

Second, once the user clicks this button, the UI designer should require them to confirm their choice. This step protects the user from performing an irreversible action by accident. One approach is to present a neutrally worded pop-up requesting the user’s confirmation. For example, the pop-up could read, “Please confirm that you would like to disable your account,” alongside a “Confirm” button and a “Go back” button that are given the same visual weight. The language on this screen should not cast doubt on the user’s choice (e.g., “Are you really sure you want to disable your account?”).

Another approach to obtaining confirmation is to require the user to enter their password. This action requires minimal effort and doubles as a security measure, ensuring that the user’s account is not maliciously disabled by another person who gains access to it. We observed password confirmation in several sites, but it was usually accompanied by further steps that needlessly increased the user’s workload.

To summarize, a user-friendly account disabling process consists of two simple and transparent steps:

1. Clicking an easy-to-see button located in account or privacy settings.

2. Confirming your choice, either through a neutrally worded confirmation screen or by entering your password.
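The two steps above can be sketched as a small state machine. This is a minimal illustration under stated assumptions rather than a reference implementation: the class, state, and method names are hypothetical, the confirmation wording is the neutral example suggested earlier, and password checking is omitted.

```python
from dataclasses import dataclass
from enum import Enum, auto


class Step(Enum):
    SETTINGS = auto()   # user is viewing account or privacy settings
    CONFIRM = auto()    # neutral confirmation screen is displayed
    DISABLED = auto()   # account has been disabled


# Neutral wording: states the action without casting doubt on the choice.
NEUTRAL_PROMPT = "Please confirm that you would like to disable your account."


@dataclass
class DisableFlow:
    """Hypothetical two-step account disabling flow."""
    step: Step = Step.SETTINGS
    user_actions: int = 0

    def click_disable(self) -> str:
        """Step 1: the user clicks the clearly visible 'Disable account' button."""
        if self.step is not Step.SETTINGS:
            raise RuntimeError("disable button is only available in settings")
        self.step = Step.CONFIRM
        self.user_actions += 1
        return NEUTRAL_PROMPT

    def confirm(self) -> None:
        """Step 2: the user confirms; nothing further is required."""
        if self.step is not Step.CONFIRM:
            raise RuntimeError("nothing to confirm")
        self.step = Step.DISABLED
        self.user_actions += 1

    def go_back(self) -> None:
        """Equal-weight alternative on the confirmation screen."""
        if self.step is Step.CONFIRM:
            self.step = Step.SETTINGS


flow = DisableFlow()
prompt = flow.click_disable()
flow.confirm()
print(prompt, flow.step.name, flow.user_actions)
```

The point of the sketch is what it leaves out: there is no forced explanation, no request form, and no additional screen between confirmation and the disabled state.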

Limitations

Our study has several limitations. For one, the number of sites on which we were able to perform and record the account disabling process was relatively low (25). Of Alexa’s top 50 SNSs, many sites were excluded from our sample because the service provider demanded sensitive personal information for account creation and, to protect our privacy, we did not provide it. Several SNSs with large userbases were captured in our study, including Twitter, LinkedIn, and Pinterest. However, one highly popular SNS—Facebook—was omitted from our sample because a phone number was required for verification when we attempted to log into our account shortly after signing up.

Certain sites were not included in our sample because they were not categorized as “social networking sites” by Alexa. Sites such as Instagram, TikTok, and YouTube—which all claimed high numbers of users as of early 2021 (Auxier & Anderson, 2021)—are arguably SNSs because they allow members to construct profiles and interact with other site members. To our knowledge, Alexa does not state the criteria followed to determine whether a site is classified as an SNS.

Another limitation is that it is difficult, if not impossible, to prove that a UI design was deliberately crafted to deter the user from disabling their account. Intentions have historically been challenged and debated in courts of law and public discourse, yet we had no access to any internal company documentation that could corroborate or disprove manipulative UI design decisions at the company level. Whether intentional or not, however, the consequences of UI implementations are directly observable.

One subtype in our typology, unclear label, captures instances where the service provider obscures the option to initiate the account disabling process by using an ambiguous or unusual name, such as “Change account status.” This language would presumably confuse the user, but it is hard to establish whether this is an intentional dark pattern or an example of poor design.

Moreover, certain strategies in our typology could serve secondary, more benign purposes. For example, allowing the user to reactivate their account during a brief period makes it tempting for the user to reverse their decision, but it also ensures that the user has a way to recover their account if it was accidentally disabled. In our sample, all sites offering account reactivation provided a “grace period” of at least 2 weeks. If a site’s goal is to allow the user to reverse an accidental closure, we contend that 2 weeks is an unnecessarily long grace period. As well, we see little, if any, justification for cases where account reactivation is possible for very long periods (one site in our sample offered it for a year) or where it is offered indefinitely.

We ultimately included any strategies that could plausibly deter the user from disabling their account in our typology. Online services benefit from retaining active users, so it is reasonable to believe that these strategies are deliberate; however, we cannot claim to know the UI designer’s intentions with certainty.

Future Work

Our findings point to several areas for future work. Further studies could connect the dark patterns described here to the specific cognitive biases they exploit and the design attributes they exhibit, as exemplified in the study of shopping websites by Mathur et al. (2019). Using our typology, researchers could document the prevalence of dark patterns to deter user account disabling in a larger sample of SNSs. The typology itself could be extended by identifying tactics employed to interfere with account disabling in a broader range of online services, or with other types of non-preferred actions (e.g., multiple users sharing the same account—a common practice that Netflix recently expressed interest in combatting; Kan, 2022). Building on recent works that have investigated end-user perspectives and experiences of dark patterns (Bongard-Blanchy et al., 2021; Di Geronimo et al., 2020; Fitton & Read, 2019; Schaffner et al., 2022), studies should further examine whether people can recognize the tactics in our typology when encountered, and whether certain strategies are perceived as more or less acceptable than others. Viable future research questions in the dark patterns or digital nudging area(s) of HCI research should include, for example: (1) do users readily recognize dark patterns as manipulative?; (2) do users perceive differences in subtypes of dark patterns and consider some of them more or less acceptable as legitimate business strategies?; and (3) how tolerant are users of attempts to influence their UI interactions with various online services, under similar or different contexts and intentions as studied here?

Conclusion

Critics have implored users to quit or reduce their usage of social media (Lanier, 2018; Newport, 2019), and research shows that users indeed have clear and well-founded reasons to disable their social media accounts (Baumer et al., 2013; Grandhi et al., 2019). Our study exposes how UI design plays a role in keeping users active on SNSs—despite their intentions and efforts to leave.

In this mixed methods study, we performed a systematic Content Analysis (Krippendorff, 1980, 2004) of the disabling process for 25 experimental SNS accounts. Our article makes several contributions to the dark patterns or digital nudging literature in HCI and UI design research and practice. Building on earlier models (Brignull, 2010; Conti & Sobiesk, 2010; Gray et al., 2018), we presented an empirically validated typology of dark patterns employed in a novel context—user account disabling in SNSs—and documented the distribution of our model’s five types and 13 subtypes in our sample. To avoid engaging in manipulative practices and to gain user trust, we propose that online service providers should develop account disabling UIs that consist of two simple and transparent steps: (1) clicking an easy-to-see button for account disabling located in account or privacy settings; and (2) confirming the choice through a neutrally worded confirmation screen or by entering one’s password. Our guidelines aim to help users easily realize their intentions, free from interference that increases their workload, hides relevant options, persuades them to reconsider, or pressures them to return. We recommend these guidelines for social media policies of transparency and fair treatment of users.

Appendix A

Appendix B

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Data Availability Statement

The data that support the findings of this study are available from the corresponding author, upon reasonable request.