Abstract

Hate influencers play a critical role in platforming hate. In this article, we illustrate how visible (forward-facing) and invisible (faceless) hate influencers mobilize far-right hate groups in the mobile socio-sphere. Based on our digital multimodal walkthrough method and multimodal discourse analysis, we analyze 16 Telegram channels for two designated hate groups. We focus our analysis on Proud Boys content related to the 6 January attack on Capitol Hill and the White Lives Matter rallies across North America in 2021. To illustrate how hate influencers mobilize these groups, we introduce a three-part model that entails the process (mobile mobilization), means (discourses), and ends (actualizing the objective of the hate group).

Introduction

Hate influencers play a critical role in platforming hate. As mainstream outlets like Facebook and Twitter deplatform right-wing extremist groups, far-right actors increasingly populate alternative social media like Gab, BitChute, and Telegram (Rogers, 2020; Urman & Katz, 2022). Telegram contains a variety of popular news, sports, and activist accounts, but some channels intentionally spread hate (Al-Rawi, 2021), which is the focus of our study. While Jihadist Islamic State of Iraq and Syria (ISIS) channels are now mostly blocked on Telegram, other hate groups like Proud Boys and White Lives Matter (WLM) participate in hateful communication and promote far-right, neo-fascist, neo-Nazi, White nationalist ideologies.

Below, we provide a brief account of the two case studies in this article. First, WLM is a right-wing extremist response to the Black Lives Matter (BLM) movement; it was founded in 2015 by two Americans linked to the Texas neo-Nazi group Aryan Renaissance Society (SPLC, 2021). The phrase “White Lives Matter” has been adopted by groups like the Ku Klux Klan, the Golden State Skins, the Traditionalist Workers Party, and Noble Breed Kindred (SPLC, 2021). WLM focuses on the idea that media, government, and educational institutions are “anti-white” and highlights crimes against White people by people of color (Zadrozny, 2021), immigrants, and proponents of interracial marriage (Strickland, 2016). WLM rallies across North America in 2021 were linked to neo-Nazis and Proud Boys (Zadrozny, 2021). Second, Proud Boys is a self-described “pro-West fraternal organization.” The core tenets of the group include aspects like minimal government, anti-political correctness, and reinstating the spirit of Western chauvinism, which the Proud Boys describe as “radical traditionalism.” For this study, we examine two designated hate groups: Proud Boys and WLM (SPLC, 2021). We follow 16 Proud Boys and WLM Telegram channels between December 2020 and June 2021 with a focus on the 6 January attack on Capitol Hill and the May and June 2021 WLM rallies across North America. The data illustrate how hate influencers operate as visible (forward-facing) and invisible (faceless) figures that audiences connect to in a new public sphere, a mobile socio-sphere, that enables participation outside conventional boundaries (Shehabat et al., 2017).

Hate groups are not hate influencers. Rather, hate influencers are the individuals who exert influence and mobilize hate groups, often through mobile technologies. On Telegram, hate influencers appear as visible leaders or prominent members of hate groups as well as the influencers who mobilize hate groups behind the scenes (i.e., Telegram channel administrators). Our work addresses the calls for more research on the informational flows (Dargahi Nobari et al., 2021) and migration patterns or actions of hate groups (Urman & Katz, 2022) through an analysis of influencers who exist “below the radar” (Abidin, 2021, p. 2). While the concept of a political influencer is not yet fully formed across disciplines (Casero-Ripollés, 2021; Riedl et al., 2021), our work also responds to the need to develop new typologies of political influencers (Riedl et al., 2021; Rivas-de-Roca et al., 2020). Thus, a hate influencer is a type of political influencer that uses social media to recruit members and spread hate.

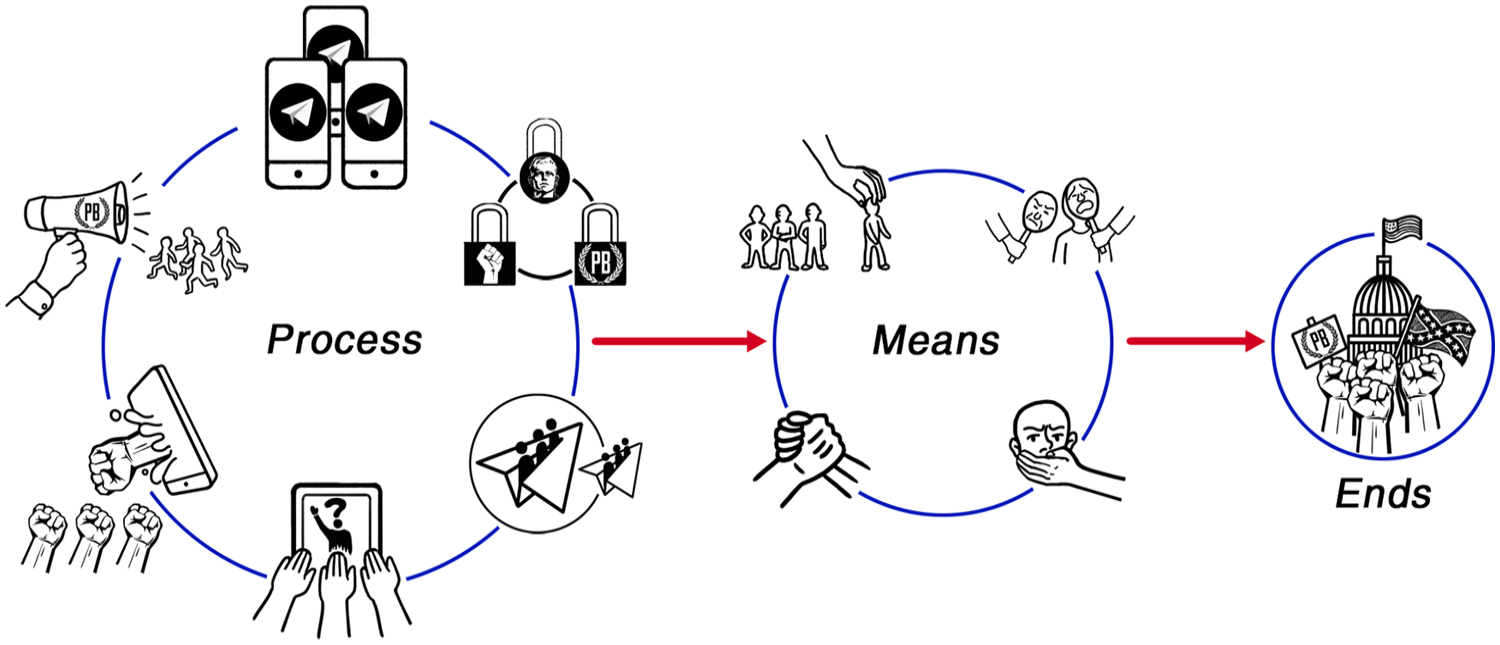

In this article, we detail our digital multimodal walkthrough method as a data-gathering tool, which we supplement with multimodal discourse analysis. To illustrate how hate influencers mobilize in the mobile socio-sphere, we also introduce a three-part theoretical model that entails process, means, and ends (Figure 1). The process entails mobile mobilization—the assemblage of diverse people who connect on Telegram channels where parasocial relationships form between hate influencers and channel subscribers. The second stage is the means of mobile mobilization and includes common discourses (fear, othering, victimhood, brotherhood, etc.). The third stage entails the ends—or the end result of mobile mobilization.

Figure 1. Hate influencer mobile mobilization model.

Confluent Networks in the Mobile Socio-Sphere

Hate influencers mobilize confluent networks using public and private affordances on mobile applications. The concept of “public” is situated in opposition to “private” as a result of its accessibility, as well as the ability of democratic citizens to participate in public affairs (Batorski & Grzywińska, 2018). This aspect is relevant to our study on the use of mobile apps by hate influencers because it combines the public and private spheres. According to Dewey (1957), the difference between public and private is not strictly a matter of distinguishing individual versus social because private acts can be social and “contribute to the welfare of the community or affect its status and prospects” (p. 13). Habermas (1991) also examines the dichotomy between public and private, as well as the unfolding structural transformation that occurs when the public sphere exits a state of rationality entangled within the constitution of society. The public sphere does not exist in a state of separation from the private sphere, but rather, as a form of publicness. Representative publicness produces a “new sphere of ‘public power’” where private persons are no longer “excluded from public power because they hold no office” (Habermas, 2009, p. 47). Public power fosters a political public sphere that encourages public discourse about the objects of the state (Habermas, 1991, 2009). However, the public sphere has never been a unified space as it has always been made up of subdivided segments in what Gitlin (1998) calls public sphericules. These multilayered spheres consist of digitized and social networks where citizens engage and mobilize to shape discourse (Iosifidis, 2011).

Digital platforms have further transformed this public sphere, creating a new realm for social life (Shehabat et al., 2017, p. 30). Al-Rawi (2022) extended Gitlin’s concept of public sphericules to situate mobile applications as portable public spheres. To deal with the radical transition of the public sphere into digital domains, Krieger and Bellinger (2014, p. 13) propose “a social operating system” as a way to build, maintain, and transform networks outside the orders of algorithmic rationality. This social operating system moves toward, rather than recoiling from, the peripheral edges of chaos, allowing communicative action to resurrect spaces that support creativity, freedom, and responsibility that “drive self-organization and the emergence of unpredictable,” uncontrollable, and risky events (Krieger & Bellinger, 2014, p. 13). The social operating system allows the socio-sphere, a space of networking, to emerge as a “stage upon which social processes” and politics materialize (Krieger & Bellinger, 2014, p. 14). These spaces of networking entail public and private spheres encased by a social operating system constructed of mobile infrastructures, devices, and applications. Mobile infrastructures afford greater reach and scope, embeddedness, and transparency (Hartmann, 2018; Star, 1999), and allow the smartphone to function as an “artefact of popular culture” and a tool to enhance the public sphere (Gordon, 2002, p. 18). The socio-sphere is not bound by traditional spatial and temporal dimensions; rather, it “presents itself as a play of layers and filters” (Krieger & Bellinger, 2014, p. 132). Information organized by a filter is a layer, which is a defining attribute of mixed reality (Krieger & Bellinger, 2014, p. 132).
Mixed reality is the process whereby physical spaces intermingle with complex information and communication systems, allowing humans and non-humans to converge in a state of hybridity—a socio-sphere—within the social operating system (Krieger & Bellinger, 2014). The result is the formation of a new public sphere, a mobile socio-sphere, where networks are transformed by the communicative actions encased within the social operating system.

The socio-sphere has previously been applied to an analysis of ISIS channels on Telegram (Shehabat et al., 2017). Telegram appeals to users who have been deplatformed from other social media sites (Rogers, 2020). These users want unrestricted free speech environments that support extreme content (Rogers, 2020). Habermas uses the example of eighteenth-century cafes in London in his conception of the public sphere as a space for people to “take place and action without interference from the authorities” (Gordon, 2002, p. 18), but Telegram functions in a slightly different manner. As a mobile app, it was originally designed to help users evade government surveillance and is situated in “contradistinction to Facebook” as “it leads with protected messaging, and follows with the social” (Rogers, 2020, p. 216). Telegram appeals to users who want social privacy, including extremist actors (Rogers, 2020), far-right networks (Urman & Katz, 2022), and terrorist organizations like ISIS (Shehabat et al., 2017).

Telegram is a mobile cross-platform, cloud-based encrypted instant messaging service (Baumgartner et al., 2020; Shehabat et al., 2017) with over 500 million monthly users (Telegram, 2022). Telegram’s user interface allows a one-to-many and one-to-one messaging structure as well as public and private messaging in a single environment (Dargahi Nobari et al., 2021; Gursky et al., 2022; Shehabat et al., 2017). Telegram’s affordances allow hate influencers to control who is added or visible on channels, which, alongside its lax content moderation protocols, create spaces that layer privacy and obfuscation (Gursky et al., 2022). Hate influencers alternate between these public and private spheres, forming concealed spaces where refracted publics can “avoid being registered on the radar” (Abidin, 2021, p. 10).

Across digital media, private, public, and social aspects weave together to produce confluent networks (Papacharissi, 2010). Networks are a central feature of the mobile socio-sphere involving hate influencers and followers. Centrality within a network becomes important so a single actor can generate a higher number of relational nodes to establish a better position in the network (Ingenhoff & Sevin, 2021, p. 4). An in-degree value is established when a user is mentioned, and an “out-degree value is created” if a user mentions someone (Ingenhoff & Sevin, 2021, p. 4; see also, Wasserman & Faust, 1998). Closeness centrality is the shortest path “from a node to all other actors in the network” and betweenness centrality shows the role a node plays in connecting different communities (Ingenhoff & Sevin, 2021, p. 4; see also, Wasserman & Faust, 1998). These relational nodes allow opinion leaders to form and exert pressure and support over social groups (Katz & Lazarsfeld, 1955). This logic extends to influencers who are often regarded as online or digital opinion leaders (Ingenhoff & Sevin, 2021; Riedl et al., 2021). Similarly, we situate hate influencers as opinion leaders who often use four qualities of influence, including (1) having a following, (2) being perceived as an expert, (3) actual expertise, and (4) a position in the community to exert social pressure and support (Dubois & Gaffney, 2014; Katz & Lazarsfeld, 1955). Opinion leaders often assume an influential role in communicating political “messages to a wider public” (Dubois & Gaffney, 2014, p. 1262) through a persona that establishes credibility, authenticity, and trustworthiness with publics (Riedl et al., 2021). Abidin (2018) argues that authenticity is now extracted from a static state and exists as a performative parasocial strategy that includes aspects of self-presentation.
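To make these centrality measures concrete, the following minimal Python sketch computes in-degree, out-degree, and closeness values on a small mention network. The account names and edges are hypothetical placeholders rather than data from the channels in this study. Closeness is computed on the undirected version of the graph using one common normalization (number of reachable nodes divided by the sum of shortest-path lengths), which is an assumption of this sketch; betweenness is omitted for brevity.

```python
from collections import deque

# Hypothetical mention edges (source mentions target); NOT data from the study.
mentions = [
    ("admin_a", "leader"),
    ("admin_b", "leader"),
    ("leader", "admin_a"),
    ("member_c", "admin_a"),
    ("member_d", "admin_b"),
]


def degree_values(edges):
    """Return (in_degree, out_degree) dictionaries for a directed edge list."""
    nodes = {node for edge in edges for node in edge}
    in_deg = {node: 0 for node in nodes}
    out_deg = {node: 0 for node in nodes}
    for src, dst in edges:
        out_deg[src] += 1  # src mentions someone
        in_deg[dst] += 1   # dst is mentioned
    return in_deg, out_deg


def closeness(edges, node):
    """Closeness of `node` on the undirected graph (assumed normalization)."""
    neighbours = {}
    for a, b in edges:
        neighbours.setdefault(a, set()).add(b)
        neighbours.setdefault(b, set()).add(a)
    # Breadth-first search gives shortest-path lengths from `node`.
    dist = {node: 0}
    queue = deque([node])
    while queue:
        current = queue.popleft()
        for nxt in neighbours[current]:
            if nxt not in dist:
                dist[nxt] = dist[current] + 1
                queue.append(nxt)
    total = sum(dist.values())
    return (len(dist) - 1) / total if total else 0.0


in_deg, out_deg = degree_values(mentions)
print("leader in/out degree:", in_deg["leader"], out_deg["leader"])
print("leader closeness:", round(closeness(mentions, "leader"), 3))
print("member_c closeness:", round(closeness(mentions, "member_c"), 3))
```

In this toy network the account that sits between the two administrators has a higher closeness value than a peripheral member, which is the structural sense in which an opinion leader holds "a better position in the network."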

If followers view an influencer as trustworthy, they develop pseudo-social relationships with the actor (Masuda et al., 2022). Parasocial relationships between audiences and media personalities offer the “illusion of a face-to-face relationship” (Papa et al., 2020, p. 34), producing “new role possibilities” that propel social mobility (Horton & Wohl, 1956, p. 222). Social media influencers often “exploit linguistic style and emotional contagion” to persuade followers with content and production (Lee & Theokary, 2021, p. 869) and develop similar kinds of parasocial relationships with audiences, especially when collective objectives or shared values are achieved through emotions that offer a mutual goal or sense of motivation. For example, “affective emotions” can create “ties of friendship, love, solidarity, and loyalty” (Jasper, 1998, p. 417). The result of the mobile socio-sphere is that online communities form around opinion leaders on specific sites, creating connections between members. Utilizing public and private spheres, hate influencers capitalize on the affordances of mobile apps like Telegram to inform and potentially mobilize their followers through the three stages discussed below.

Digital Multimodal Walkthrough Method and Multimodal Discourse Analysis

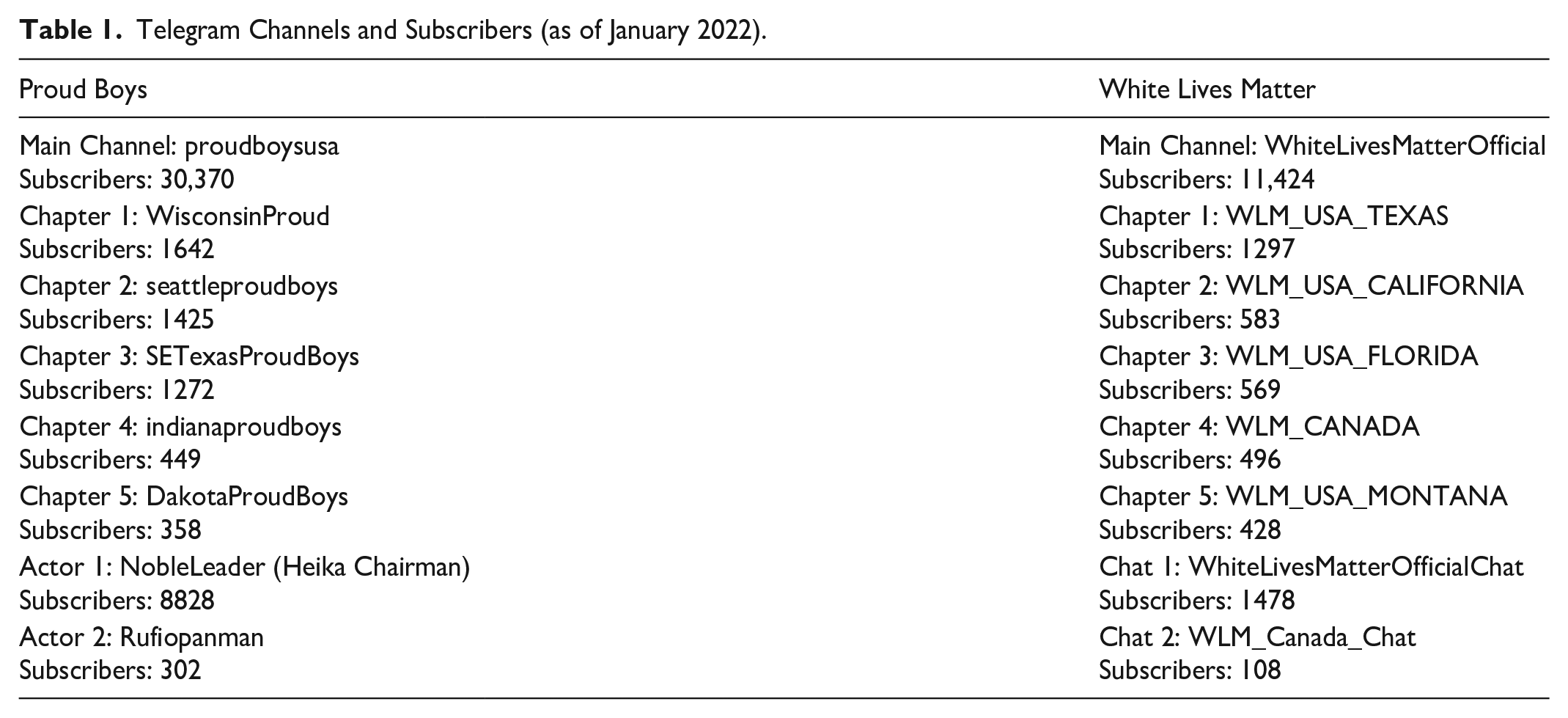

In this study, we examine the main Telegram channels for Proud Boys (“proudboysusa”) and WLM (“White Lives MatterOfficial”) and conduct a scan of the top five most popular chapter groups to observe organizational cross-sharing patterns. “Chapter” is the term both hate groups use to describe smaller organizational branches (and Telegram channels) subdivided by geographical locations (e.g., Texas). To determine the chapter groups, we input all available channel handles and subscriber numbers into Excel, restricting our selection to Canada and the United States. The most popular Proud Boys chapter channels include Wisconsin, Seattle, Texas, Indiana, and Dakota, whereas WLM is more prominent in Texas, California, Florida, Canada, and Montana (Table 1). We also explore the two most populated WLM group chats to help assess the ends phase and two Proud Boys hate influencers who appear prominently in the dataset, including hate group leader Enrique Tarrio (“NobleLead”) and group member Ethan Nordean, who also goes by the name of Rufio Panman (“Rufiopanman”).

Table 1. Telegram Channels and Subscribers (as of January 2022).
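The chapter selection step was carried out in Excel; as an illustration only, the same logic (restrict to Canada and the United States, then keep the five channels with the most subscribers per group) can be sketched in Python. All group names, regions, and subscriber counts below are hypothetical placeholders and do not reproduce the values in Table 1.

```python
# Hypothetical records: (group, region, country, subscribers).
# Placeholder values only; these are NOT the study's Table 1 figures.
channels = [
    ("GroupA", "Region1", "US", 900),
    ("GroupA", "Region2", "US", 450),
    ("GroupA", "Region3", "CA", 700),
    ("GroupA", "Region4", "UK", 2000),  # outside the selection criteria
    ("GroupA", "Region5", "US", 300),
    ("GroupA", "Region6", "US", 120),
    ("GroupA", "Region7", "US", 610),
    ("GroupB", "Region1", "US", 5000),
]


def top_chapters(rows, group, countries=("US", "CA"), k=5):
    """Return the k most-subscribed chapter channels for one group."""
    eligible = [
        row for row in rows
        if row[0] == group and row[2] in countries
    ]
    # Sort by subscriber count, largest first, and keep the top k.
    return sorted(eligible, key=lambda row: row[3], reverse=True)[:k]


selected = top_chapters(channels, "GroupA")
for group, region, country, subscribers in selected:
    print(group, region, country, subscribers)
```

Keeping the country filter and the cut-off `k` as explicit parameters makes the sampling decision easy for other members of a research team to inspect and repeat.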

The study includes 6 months of data that were manually downloaded from Telegram, covering 31 December 2020 to 30 June 2021. We use purposive sampling to focus on Proud Boys content because the group was one of the leading actors in the January 2021 attack on Capitol Hill and the WLM rallies across North America. We analyze this group and these events because they have political and cultural significance and elucidate how hate influencers like some members of Proud Boys use public and private affordances on Telegram to mobilize hate groups in online and offline contexts. We focus on the May and June 2021 WLM rallies because the main WLM Telegram channel was created on 7 April, the first rally was hosted on 11 April, and the majority of the chapter channels emerged after this date.

Various steps were taken to protect the well-being and safety of the research team. Data collection on Telegram was limited to one researcher who ordered a dedicated direct inward dial number on voip.ms to avoid using their personal phone number. They created an alias and uploaded the WLM symbol as the profile picture (the same image as many channel subscribers). The researcher only “joined” public channels, but following these protocols is essential because hate influencers can remove or block members that do not adhere to their internal channel guidelines (e.g., no profile image, trolls). The researcher participated on the channels strictly as an outside observer. These safeguards are essential for minimizing risk, particularly since far-right actors frequently attack “intellectual freedom and specific academics on college campuses” (Massanari, 2018, p. 1).

To allow all members of the research team to participate in multimodal discourse analysis (Kress, 2010, 2012), the researcher made screencast recordings for each channel using Lookback, a commercial recording tool initially developed as an internal application for Spotify. The researcher set up a project folder on Lookback before opening the project link on a smartphone to record one video per channel using the “Participate by Lookback” iOS app. The Lookback “audio” and “screen” functions allowed the researcher to record the full range of multimodal discourses (texts, videos, emojis, memes, gifs, website links, comments, shares, etc.). After recording each channel as a video, we used purposive sampling to collect screenshots and videos and to transcribe podcasts from the Telegram channels, focusing on the WLM rallies and the Capitol Hill attack. The collection of material was assembled into five shareable presentation slide decks on the graphic design platform Canva. Slide decks were separated by group, and the channels were further segmented by a title page. Each section includes a downloaded Lookback video for the channel as well as a chronological account of the channel compiled from screenshots, videos, and podcast transcripts.

Extending walkthrough literature (Light et al., 2018), we highlight here the digital multimodal walkthrough method as a way to archive and document the detailed data-gathering process we outline above. Traditionally, the walkthrough method allows researchers to engage with application interfaces to understand technological mechanisms, cultural references, and their impact on user experience (Light et al., 2018, p. 883). As a “genre of vernacular cultural practice,” walkthroughs reveal artifact details and produce a step-by-step notation to narrate use (Light et al., 2018, p. 885). The focus of the walkthrough method is traditionally on the materiality of technology with an emphasis on affordances (Light et al., 2018). The walkthrough procedure enables researchers to step through an app using aspects of ethnography such as observation and detailed field notes (Light et al., 2018). The technical walkthrough is central to data-gathering and involves “engaging the app interface, working through screens, tapping buttons, and exploring menus” (Light et al., 2018, p. 891). The approach produces “detailed field notes and recordings” (e.g., screenshots or video recordings) to systematically trace key actors (e.g., icons and purchase buttons) (Light et al., 2018, p. 891). Our digital multimodal walkthrough method retains this data-gathering process but shifts the focus from technological mechanisms to multimodal discourses on social media channels.

Similar to other digital methods like virtual ethnography (Hine, 2000), netnography (Kozinets, 2010), and Application Programming Interface (API)-based research (Baumgartner et al., 2020), the digital multimodal walkthrough method creates an archive of social media channels, offers a safe collection structure and a collaborative environment for researchers to conduct multimodal discourse analysis, and allows researchers to work independently without being influenced by the opinions of other coders. The unique advantage of the digital multimodal walkthrough method, in comparison to other common methods, is its ability to replicate the scrollable experience of users browsing social media. The video replicates a social media channel, “re-creating” the multimodal experience that is often “lost” when data are collected through other methods (e.g., API). This allows multiple researchers to be “on” the same social media channel as it appeared at precisely the same moment, whenever they choose to view it.

The video also captures a permanent scrollable copy of the channel, which is particularly helpful in today’s climate where platform processes like deplatformization are commonly directed at far-right actors, hate groups, and mass shooters, increasing the likelihood that data will be removed. For example, the fifth chapter group initially outlined in the Excel spreadsheet for Proud Boys was a New Hampshire channel (“NHPBs”) with 389 subscribers. An initial scan of the chapter revealed a number of posts related to the Capitol attack; however, at the time of data collection a note on the page said, “This channel can’t be displayed because it violated Telegram’s Terms of Service.” The “NHPBs” ban illustrates the value of creating an archival copy of social media channels through the scrollable video capture that the digital multimodal walkthrough method makes possible. Importantly, the channel copy allows several researchers to examine the same data in a structured way, since search outcomes can change depending on a user’s past searches and preferences, especially on platforms that rely heavily on algorithmic search. The digital multimodal walkthrough method is also helpful because data appear as a stream of information, rather than a set of independent texts or images, which is valuable in understanding the dialogical nature of the construction of discourse. While content can be curated by one researcher (as outlined above), the video allows other researchers to cross-check purposive sampling decisions or discourse analysis by viewing the scrollable copy of the channel, thereby reducing the likelihood of bias. In addition, the collection of material on data archiving tools offers a novel system to collate material and take notes, either independently or as a research team.

After we collected the data using the digital multimodal walkthrough method, we analyzed the multimodal discourses on the channels. Multimodal discourse analysis involves analyzing multiple modes in one sign (Kress, 2010, 2012), which in our case includes aspects like texts (posts, comments, videos, podcasts, etc.), images (screenshots, gifs, memes, emojis, etc.), and color. We merge this traditional multimodal discourse analysis with literature around alt-right discourses. In a recent study, Lorenzo-Dus and Nouri (2021) highlight five recurrent discourses among the alt-right, including groupness, political politics, race, religion, tradition, and change. Similarly, each member of our research team independently examined the dataset to determine three to five discourses. All four researchers then simultaneously entered their individual observations into a Zoom chat box, before we looked for common discourses among the research team. The common discourses include (1) mobile mobilization, (2) othering (us vs them), (3) brotherhood, (4) victimhood, (5) fear, and (6) nationalism. We conceptually situate these discourses along a linear pathway for the three-part model we describe in the findings, inspired by the means-end chain model (Reynolds & Olson, 2001) and aligned with the abovementioned six discourses.
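The step of looking for common discourses across independent coders can be illustrated with a short Python sketch. This is a hedged illustration rather than the procedure the team followed: the coder labels, the per-coder lists, and the agreement threshold (`min_coders`) are all hypothetical, and only the discourse names are borrowed from the list above.

```python
from collections import Counter

# Hypothetical independent observations, three to five labels per coder.
# These lists are illustrative and do NOT record what each researcher entered.
coder_notes = {
    "coder_1": ["fear", "othering", "brotherhood", "nationalism"],
    "coder_2": ["fear", "victimhood", "othering"],
    "coder_3": ["othering", "fear", "brotherhood", "victimhood", "mobile mobilization"],
    "coder_4": ["fear", "othering", "nationalism"],
}


def common_discourses(notes, min_coders):
    """Return labels named by at least `min_coders` coders, sorted by name."""
    # set(labels) ensures a coder who repeats a label is counted once.
    counts = Counter(
        label for labels in notes.values() for label in set(labels)
    )
    return sorted(label for label, n in counts.items() if n >= min_coders)


print(common_discourses(coder_notes, min_coders=4))  # named by every coder
print(common_discourses(coder_notes, min_coders=2))  # named by at least two
```

Tallying before discussion keeps each coder's initial judgment visible, so the team can see which discourses were named unanimously and which emerged only after comparison.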

Hate Influencers’ Mobile Mobilization Model

Within the mobile socio-sphere, hate influencers appear as visible (forward-facing) or invisible (faceless) opinion leaders (Figure 2). Behind every far-right public-facing Telegram channel is a hate influencer that uses network centrality as a way to mobilize hate groups through the mobile phone. Enrique Tarrio (“NobleLead”) is a forward-facing hate influencer in our dataset who has 8828 subscribers and describes himself on Telegram as “Chairman of the Notorious ProudBoys Patriot—Super-Villain—Entrepreneur American Supremacist.” On 3 January 2021, Tarrio posted on Telegram, “What if we invade it?” One follower responded with, “January 6 is D day in America.” The chairman’s posts are routinely shared by the main/chapter channels for Proud Boys, including a post where Tarrio welcomes “newcomers to the darkest part of the web.” The majority of hate influencers in our dataset are faceless but play an equally important role in mobilizing publics. Hate influencers leading WLM channels frequently use discourse like, “We declare war against the anti-White system” as a way to fuel emotional contagion among confluent networks situated below the radar (see Papacharissi, 2010; Lee & Theokary, 2021; Abidin, 2021).

Figure 2. Faceless (left) and forward-facing (right, “NobleLead”) hate influencers.

Forward-facing hate influencers use a personal approach to channel content, posting stories, images, selfies, personal funding pages, professionally developed media content, and behind-the-scenes footage of events. They also allow followers to engage with them through comments and emoticon responses, which is less common with faceless hate influencers. Faceless hate influencers aim to retain anonymity—often for themselves and their followers. For example, WLM has policies to ensure members do not expose their identity (on Telegram or at events), including a rule that no one can use personal images as their profile picture. In addition, members must cover their faces for all pictures, posts, and events for security and privacy. Chapter channels routinely re-post material from main channels (and main channels often repost from forward-facing hate influencers), resulting in nearly identical content between the main and chapter channels. The exception is that chapter groups also post geographically specific content related to events (e.g., invites, images of events); however, main channels frequently re-post the event images. As a result, we treat the main and chapter channels similarly in our analysis. Below, we outline the three-part hate influencer mobile mobilization model, which includes process, means, and ends (refer to Figure 1).

Mobile Mobilization Process

The process hate influencers use as a central influencing mechanism is mobile mobilization. We define mobile mobilization as the instantaneous form of mobilizing community members by utilizing the affordances of mobile applications like Telegram. Due to the nature of networked technologies, this form of mobilization is highly personalized, connected, and relatively private because it occurs through an end-to-end encrypted cloud-based app. Hate influencers often engage citizens and shape discourse, using emotional contagion to cultivate ties among followers (Jasper, 1998) and create strongly perceived parasocial relationships between followers and hate influencers (often the channel’s administrator). We argue here that the mobile devices people carry enhance the feeling of security, safety, and a sense of unity among community members.

Our mobile mobilization concept derives from the theoretical frameworks of “portable public spheres” (Al-Rawi, 2022) and socio-spheres (Krieger & Bellinger, 2014), focusing on the ecosystem that far-right hate groups engage with that largely reinforces their antagonistic worldviews. To situate the far-right portable public sphere within the broader context, it is relevant to refer to Gitlin’s (1998) concept of the “public sphericules” since the utopian notion of the public sphere is in reality highly fragmented. We argue that Telegram channels that host far-right content assist in producing and sustaining portable public spheres (Al-Rawi, 2022). At the Capitol attack, most rioters used mobile phones to film and document their violence, share and exchange information, and get updated news, illustrating how mixed reality is a fixture of the mobile socio-sphere. Videos show Proud Boys and other far-right actors pushing down barricades, climbing walls, and entering the Capitol chanting “fucking traitors,” as well as various scenes from inside the Capitol building (Figure 3).

Figure 3. Mobile mobilization on Telegram.

The mobile mobilization process revolves around connecting diverse people who share similar ideas. This process entails aspects like the creation of a like-minded online community upon joining the Telegram channel, the enhancement of parasocial relationships between hate influencers and followers, and an increased sense of personalization, safety, and closeness, which is magnified by the instantaneous nature of mobile communication.

Mobilization Means

The second stage relates to the means of mobile mobilization, which may vary from one context to another. In relation to the case study, we identify four main means that hate influencers use to initiate and generate mobilization: (1) the promotion of fear, (2) othering in the sense of promoting racist and xenophobic views by casting non-White groups as inferior to mainstream White society, (3) victimhood in connection to representing White people as being unjustly and repressively treated by the new political powers like liberal democrats, Antifa, and immigrants, and (4) brotherhood in relation to enhancing the sense of unity, oneness, and a shared identity, ultimately offering an enhanced feeling of power, independence, and security. Within the latter means, we identified clear traces of toxic masculinity (Grant & MacDonald, 2020), including sexist and demeaning attitudes toward women and non-binary gender groups.

Fear

The first means we identified is fear. Throughout history, elites and political opportunists have appealed to group-based identities through religion, sex, race, and class to advance agendas or reinforce power (Powell & Menendian, 2016). Hate influencers from WLM and Proud Boys use fear to promote racism, xenophobia, anti-feminism, transphobia, and far-right agendas. Whiteness has operated as a social mechanism that grants White people special privileges; therefore, living as a White person within a White-dominated culture is normal (National Museum of African American History and Culture [NMAAHC], 2021). Hate influencers use illegitimate fears like “white replacement” as recruitment tools to fight common interests, crafting emotionally charged narratives like, “If you don’t believe the war on whites is real in this country then you need to wake up,” and “The anti-White system wants you to think you are alone. They are suppressing anything positive about our race and promoting outright calls to genocide” (Figure 4). WLM hate influencers predicate their calls to action around the belief that White people are going extinct due to an oppressive anti-White system:

Many of us seem to think White People haven’t lost any wars in recent history. We have lost no wars, yet our People are being replaced. We have lost no wars, yet our culture is being destroyed . . . The wake up call is here: WE ARE AT WAR.

Figure 4. Promotion of fear on Telegram.

Othering

In the context of hate influencer mobilization, the process of othering encompasses expressions of prejudice and behaviors such as tribalism but also reveals a standard set of conditions and practices that propagate group-based inequalities and marginalization (Powell & Menendian, 2016). This process involves establishing categories based on social identity with a perceived superiority over the out-groups (Canadian Museum for Human Rights [CMHR], n.d.). Hate influencers create an “us” versus “them” mentality in relation to Antifa and BLM. A WLM influencer in Florida argues anti-Whites “burn, loot, and murder for months on end without any repercussions, where antifa and the feds work hand in hand with the anti-White government to destroy the lives of our people” (Figure 5). A post by “NobleLead” says, “Today Black Lives Matter and Antifa decided they were going to target my family’s house . . . My Castle is not to be fucked with.” A video posted across channels shows a Proud Boy rapping in a MAGA hat, “Fuck Black Lives Matter and Antifa. I’m a Proud Boy and a loud boy. Fuck around and find out.” The video ends with the barrel of a gun pointed at the camera, illustrating how othering and fear often connect. 
Politics often intersects with othering. Posts include images of people pointing their middle finger at President Joe Biden on television, videos of Proud Boys setting flags (e.g., BLM flags) on fire, saleable merchandise with slogans like “American Supremacist” and “Capitol Raiders,” and videos of Proud Boys tearing down BLM signs in Washington with the caption, “Patriots have already started showing up in DC for the 6th.” Posts during the Capitol attack include videos of Proud Boys storming the Capitol, footage from multiple camera angles of Ashli Babbitt being shot, and an image of officials hiding during the Capitol invasion marked with the caption, “Make Them Scared Again.” Hate influencers encourage this sense of shared identity and social status by constructing categories as hierarchies, where members within the group are established as superior.

Figure 5. Telegram posts related to othering.

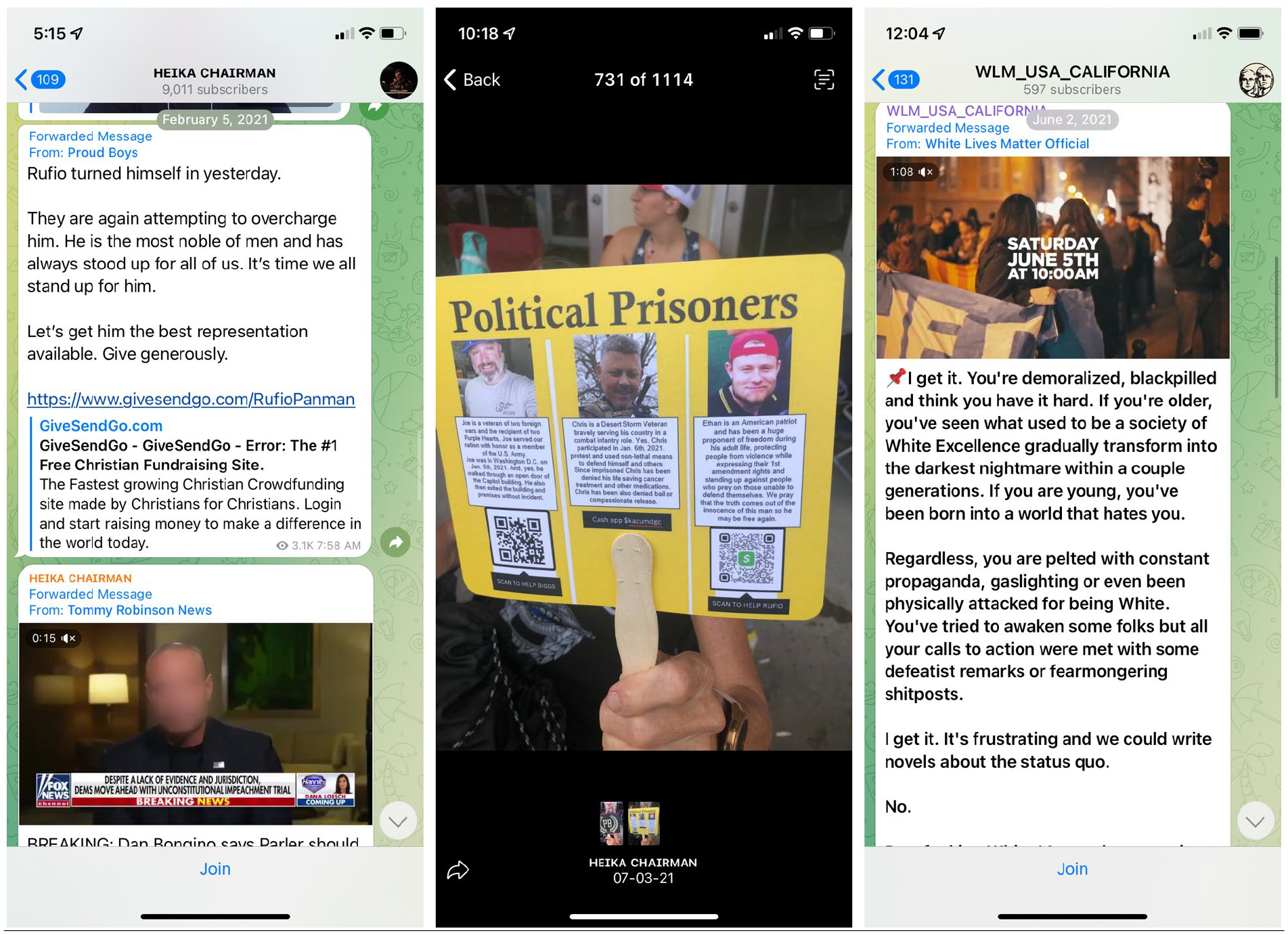

Victimhood

The third discourse we identify is victimhood. Shared grievances can serve as a driver for hate influencer mobilization through the perception that members have been victimized by an illegitimate inequality (LeFebvre & Armstrong, 2018). This victimhood can be conflict-specific, where group members claim to have suffered more than the other party, or general exclusive, where members believe that the rest of the world wants to harm the in-group (Vollhardt, 2015). An influencer for WLM California claims that America was once an example of White excellence but has since transformed to hate Whiteness (Figure 6). In the case of Proud Boys, notable members involved with the Capitol attack are routinely framed as victims and “political prisoners” to garner support for funding initiatives designed to #FreeTheBoys. Thus, fighting for the cause and supporting hate influencers becomes a moral obligation for collective action.

Figure 6. Telegram posts related to victimhood.

Brotherhood

People who feel marginalized in society may be attracted to a supportive group that can affirm their self-worth and sense of identity. Hate influencers offer a sense of collective identity, belonging, purpose, and status to those who work on their behalf (Lyons-Padilla et al., 2015; Polletta & Jasper, 2001). Thus, the mobile socio-sphere elicits a sense of collective identity among its members, a concept that is defined as the “individual’s cognitive, moral, and emotional connection with a broader community, category, practice, or institution” (Polletta & Jasper, 2001, p. 285). This does not mean, however, that community members share similar lived experiences; this sense of identity might be “imagined,” but it often strengthens positive feelings as a result of being connected to a larger group.

Hate influencers drive group cohesion through intergroup conflict contextually connected to socio-political events (Bliuc et al., 2020). The BLM protests in 2021 displayed markers of group identity and out-group differentiation. In the context of WLM, hate influencers argue that White people are united by their blood, culture, and spirit rather than political allegiances, allowing intergroup conflict to produce an increased sense of solidarity. Therefore, to support BLM would be to fight against their own interests. Groups often become more cohesive in the aftermath of events that they perceive as collective achievements (Bliuc et al., 2020). One major example was the rise of far-right movements following Donald Trump’s US Presidential win in 2016. Perceptions of group success can lead to a stronger sense of social identification and an increase in the recruitment of group members (Bliuc et al., 2020). Hate influencers use the concept of “brotherhood” to deepen parasocial relationships. A WLM Florida influencer refers to poster “activism” and the June rally as “White Boy Summer,” and Proud Boys influencers post pictures of “the boys” partying together with “Brotherhood” as the caption (Figure 7). Hate influencers use brotherhood to mobilize publics, as evidenced by the #FreeTheBoys hashtag designed to help raise funds for visible Proud Boys who were arrested during the Capitol riots. Personalized hashtags were developed for influential Proud Boys members like “Rufiopanman” (#FreeRufio). Hate influencers promote countless funding pages, with one post saying, “We are resilient and relentless . . . We are the fucking ProudBoys and we ain’t fucking leaving! #FreeRufio.”

Figure 7. Brotherhood on Telegram.

Mobilization Ends

The final stage in mobile mobilization focuses on the end result. The objective of the Capitol riot was to cultivate a sense of nationalism, manifested by an ultra-nationalist regime shaped by White supremacist ideologies and strong beliefs in conspiracy theories. Donald Trump’s presidency became the epitome of the empowerment the Proud Boys felt in their warlike action to reinforce their White, male, heterosexual political ideology (Vitolo-Haddad, 2019). Digital media, like Telegram, play a role in the rise of nationalism alongside contextual factors like the pandemic, economic issues, and cultural shifts (Mihelj & Jiménez-Martínez, 2021). Social media further diversifies nationalism and makes it more unpredictable (Mihelj & Jiménez-Martínez, 2021). This diversification results in greater fragmentation and polarization of the national imagination, allowing groups to adhere to more niche versions of collective identity (Polletta & Jasper, 2001) and the mobilization of increasingly extreme forms of nationalism (Mihelj & Jiménez-Martínez, 2021). In particular, the Proud Boys and WLM groups employ neo-tribal nationalism, “a reactive form of nationalism, that is exclusionary, based on the construction of an authenticity and homogeneity that is organic and does not change” (Triandafyllidou, 2020, p. 799). This form of nationalism is predicated on a rejection of diversity and creates a transnational digital community of chauvinist nationalists. These neo-tribal identities are not based simply on tropes of nostalgia, but also on how various forms of nationalism react to modernity, mobility, and diversity (Triandafyllidou, 2020).

Proud Boys influencers consistently invoke tropes about the BLM protests, particularly arson, violence, and property destruction, and compare the supportive political responses to those protests with the responses to the Capitol riots and the criminal charges against rioters. On Telegram, a Proud Boys hate influencer posted that riots at the Capitol should have been expected because “Americans waited months. Peacefully protesting & trying to get a resolution on an election in which they didn’t trust the results” (Figure 8).

Figure 8. Telegram posts related to mobilization ends.

Extremists trade messages of hate and “cultural superiority,” fostering fear and paranoia (Klein, 2021). Klein (2021) argues there is a “broader, more deliberate campaign to rebrand bigotry in ‘legitimatized’ terms” (p. 5). A WLM hate group member wrote,

the problem with that [pandemic lockdowns] is the fact that it is creating a LOT of people with nothing left to lose. People have lost friends, family, and livelihoods. That is a dangerous state of affairs for any society. And it’s being foolishly encouraged to continue by people who have nothing to lose.

The WLM member is articulating that the pandemic has exposed the fragility of political and economic systems. This fragility can provide a conduit that galvanizes far-right hate groups hoping to start a revolution. The laws created around the pandemic foster an atmosphere where instability in societal institutions, fears, marginalization, and religion allow people to accept more readily ideas previously considered unacceptable. In a post about the destruction of society through communism and cultural Marxism, a Proud Boys influencer blames these political influences for the oppression of the White heterosexual male. The influencer asserts to followers that the “aim is the total deconstruction of Western societal norms and the demolition of the foundations of our very way of life.”

Conclusion

Hate influencers lead from visible (forward-facing) and invisible (faceless) modalities within the mobile socio-sphere, forming confluent networks cemented together through emotional contagion, affective strategies (Jasper, 1998), and parasocial relationships. Hate influencers use social media platforms to mobilize members, share information, spread misinformation, and, most problematically, incite violence. Our study shows how portable public spheres emerge from mobile applications like Telegram, allowing hate influencers to mobilize followers. This is a primary concern given that ideologically motivated violence by hate groups is increasingly a priority threat in extremist environments.

Though we focused our attention on two far-right hate groups and on the WLM rallies and Capitol Hill attack, these groups and the violent events we studied are globally connected to other far-right groups in North America and Europe. A strong ideological connection links these diverse groups, specifically in relation to Christianity and the White race. For example, far-right groups in Canada almost always reference Trump as one of their respected figures. However, the hate groups we studied serve a wider purpose: they elucidate the value of studying hate influencers (forward-facing and faceless) while using the digital multimodal walkthrough method and hate influencer mobile mobilization model (process, means, and ends). Future research can use this framework to study far-right actors, hate groups, mass shooters, terrorists, and other extremist groups.

Proud Boys and WLM offer a valuable frame to explore and introduce the concept of a hate influencer, but this selection of groups also constrains our findings. We limited our data collection to North America, but both Proud Boys and WLM have chapters in other regions around the world, and those Telegram channels are missing from our analysis. Because we focus on Telegram, it would be helpful to study these same groups on other platforms where they have a presence. For example, WLM is also active on BitChute and Facebook, and the #whitelivesmatter hashtag is searchable on Twitter, Instagram, and Facebook. In addition, our data collection is limited in scope (31 December 2020 to 30 June 2021). As such, there is room to explore data before and after this time period. In particular, it would be valuable to analyze the Proud Boys Telegram data during the 6 January seditious conspiracy trial (Feuer & Montague, 2023).

Future research can be conducted on hate influencers spanning a wide range of hate groups from varied geographical regions and platforms to fill this gap in the literature. Analysis should be conducted on alternative and mainstream platforms, and research should examine other geographical regions and mobile applications. Comparative research strategies can be used across groups, platforms, and continents. In addition, more research is needed using the digital multimodal walkthrough method so that hateful or problematic social media content can be preserved, using the scrollable video capture, before it is removed. As the findings in the mobile mobilization model are case-specific, particularly means and ends, other themes and discourses are likely to emerge in different case studies. Similarly, the distinctions between forward-facing and faceless hate influencers may vary between hate groups.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Government of Canada’s Ministry of Heritage Digital Citizen Contribution program (grant no. R529384) and the Lookback software was funded by SSHRC: Software Skills in the Media Manifold (grant no. 430-2018-00964).