Abstract

We investigate the effect of social media endorsements (likes, retweets, shares) on individuals’ policy preferences. In two pre-registered online experiments (N = 1,384), we exposed participants to non-neutral policy messages about the COVID-19 pandemic (emphasizing either public health or economic activity as a policy priority) while varying the level of endorsements of these messages. Our experimental treatment did not result in aggregate changes to policy views. However, our analysis indicates that active social media users did respond to the variation in engagement metrics. In particular, we find a strong positive treatment effect concentrated on a minority of individuals who correctly answered a factual manipulation check regarding the endorsements. Our results suggest that though only a fraction of individuals appear to pay conscious attention to endorsement metrics, they may be influenced by these social cues.

Introduction

Social media has been hypothesized to have broad effects on politics (Zhuravskaya et al., 2020). However, the magnitude of these effects and the mechanisms through which they arise remain debated. This article studies how social media affects individuals’ policy preferences. In particular, we study endorsements, as evinced by common metrics of engagement: likes, ❤s, retweets, and shares. Social endorsements are a central feature of social media and are observed by billions of individuals around the world, so even small effects may have important consequences for policy-making and political dynamics. Can the perceived support of social media messages affect how individuals evaluate policies?

To answer this question, we conducted two pre-registered online experimental studies in Europe (Ireland, n = 305, and Italy, n = 300) and the United States (n = 779) in the context of the COVID-19 pandemic and its policy trade-offs (public health vs. economic activity, Settele & Shupe, 2022). 1 The experiment allows us to isolate the effects of perceived support for policy choices in a controlled environment different from individuals’ own social media. We study endorsements, a specific but important feature of social media, but without conflating issues of social image, peer effects, or selective exposure. Instead, we exposed individuals to strangers’ tweets and endorsements, and examined their effects on individuals’ policy preferences in an anonymous survey. More specifically, we exposed participants to non-neutral policy messages about the COVID-19 pandemic, manipulated the perceived level of endorsements of these messages, and examined how this affected their policy attitudes.

We find no overall effect of endorsements on policy views: in aggregate, the effect of our treatment on participants’ policy views is a precisely estimated zero. A factual manipulation check suggests that most individuals pay little attention to endorsement metrics, with only one-third of participants correctly answering a post-treatment question about these metrics.

One particular focus of our work is the effect of endorsement metrics on active social media users, whom we define as those who use Facebook or Twitter for at least 1 hr a day. These individuals are frequently exposed to engagement metrics and are thus likely to be sensitive and responsive to likes and retweets on social media posts. In addition, because they use the platforms most frequently, it is especially important to understand how their behavior and attitudes are affected by the online environment.

We find that our experimental treatment shifted the policy views of active social media users by about 0.12 standard deviations, with the effect further concentrated on the minority of individuals who correctly answered the manipulation check. These results suggest that though only a small share of the population appears to pay conscious attention to likes or retweet metrics, they may be influenced by these social cues. These findings can have further implications for policy decision-making, since social media users are known to be more engaged with politics and can have a disproportionate influence on policy agendas (Barberá et al., 2019; Vaccari et al., 2015; Vaccari & Valeriani, 2021).

Our work is related to a growing literature on the relationship between social media and politics (Zhuravskaya et al., 2020). Social media has been shown to affect electoral outcomes (Fujiwara et al., 2021), legislative processes (Barberá et al., 2019), political knowledge (Munger et al., 2022), and protest participation (Enikolopov et al., 2020; Fergusson & Molina, 2019). However, there is still little understanding of how different features of social media affect behavior.

Studies have emphasized how social media exposes individuals to echo-chambers of predominantly like-minded information (Bakshy et al., 2015; Barberá, 2015; Halberstam & Knight, 2016) and amplifies political polarization (Allcott, Braghieri, et al., 2020; Gorodnichenko et al., 2021; Levy, 2021; Settle, 2018; Sunstein, 2018). Media concerns about the influence of social media on elections are also common, 2 yet many contend that these concerns may be overblown (Allcott & Gentzkow, 2017; Boxell et al., 2017; Eady et al., 2019; Gentzkow & Shapiro, 2011; Guess, 2021; Guess et al., 2020; Scharkow et al., 2020). As a way to sharpen our understanding of these issues, we study one precise mechanism, endorsements, through which social media may affect political dynamics.

Given that social pressure is known to shape behavior and views (Bursztyn & Jensen, 2017; Carlson & Settle, 2016; Cialdini & Goldstein, 2004), online social endorsements can be an important channel through which social media may affect policy preferences, especially in situations of evolving public opinion (Bursztyn et al., 2020; Casoria et al., 2021; Hensel et al., 2022). Furthermore, humans are known to rely on heuristics or mental shortcuts to make judgments and decisions (i.e., bounded rationality), especially in situations where information is scarce and uncertainty is high (Kahneman, 2003; Simon, 1955). In the context of our study, there was substantial uncertainty about how to balance minimizing the spread of COVID-19 against preserving economic activity. An opinion that is highly endorsed would appear to have a higher level of credibility (Luo et al., 2022; Shin et al., 2022), thus potentially making it more persuasive to the audience, in what is termed the endorsement heuristic (Hilligoss & Rieh, 2008). Relatedly, bandwagon heuristics, whereby individuals are likely to follow what others do, can also explain these social dynamics (Banerjee, 1992; Bikhchandani et al., 1992; Metzger et al., 2010; Sundar, 2008). The use of heuristics, and thus the effectiveness of social media endorsements in shaping opinion, is expected to be prevalent in settings involving new issues where individuals are yet to form fixed opinions.

Related work has documented that online social endorsements and perceptions of support affect whether individuals select to read content (Anspach, 2017; Messing & Westwood, 2014), like or retweet messages (Alatas et al., 2019; Egebark & Ekström, 2018), and self-report voting (Bond et al., 2012, 2017), and can have broader implications for online political dissent (Morales, 2020). We contribute to this work by studying how the perceived endorsements attached to social media messages affect policy attitudes, and we do so in the context of the COVID-19 pandemic.

Background

Since we present results from studies conducted with nationally representative samples in Ireland, Italy, and the United States, here we briefly describe the social contexts at the time of our experimental intervention and data collection. Our surveys were conducted in July 2020, at the end of the first COVID-19 wave. Overall, there was substantial policy uncertainty and contentious debates regarding the trade-offs between public health and economic activity.

In Ireland, daily confirmed deaths per million had stabilized at 0.26 (Mathieu et al., 2020). The country was at the end of the first period of lockdown, which had a significant negative impact on the economy with unemployment rising up to 28% and gross domestic product (GDP) forecasted to decline by 10.5%. 3 By the time our European data collection started on 8 July, cafés, restaurants, and non-essential retail outlets were allowed to open with social-distancing measures, though there were still restrictions on social gatherings.

Italy, having been severely affected early during the pandemic, was the first country to enact a nationwide lockdown on 9 March 2020. By early July, however, restrictions had gradually been eased and freedom of movement across regions had been restored, as COVID-19 deaths per million had also stabilized at 0.26 (Mathieu et al., 2020). Concerning the economic impact, by this date real GDP was forecasted to fall by over 11% in 2020. 4

In the United States, lockdown policies varied across states with California being the first to issue a statewide stay-at-home order on 19 March, though by early April, about 90% of the US population were living under stay-at-home orders. By May 2020, the unemployment rate had grown to 14.7%, the highest since the Great Depression. While daily confirmed deaths per million was down to 1.55 in early July, by the time our US survey was sent out on 31 July, this metric had risen again to 3.27 (Mathieu et al., 2020). The number of confirmed cases had exceeded 3 million and many states postponed re-opening plans as case numbers rose. 5

Experimental Design

Our main hypothesis is that the policy attitudes of participants are affected by social media metrics. As users conform to others’ preferences, social media affects policy attitudes by informing individuals about others’ views. Specifically, we hypothesize:

H1, Conformity: Individuals conform to views which appear more popular (as evinced by social media support metrics, that is, likes and retweets). 6

We also report here our results from investigating additional sources of heterogeneity. First, we are particularly interested in studying whether social conformity effects arising from endorsement metrics are larger for active social media users, who are frequently exposed to these metrics, are more likely to pay attention to them, and understand their significance. Our definition of active social media users consists of those who use Facebook or Twitter for 1 hr or more each day (combined). 7 Second, through the use of a factual manipulation check, we investigate the role of attention in our findings. In particular, if participants did not observe the endorsement metrics, we would not expect an effect to arise (i.e., this serves as a form of placebo check). We pre-registered these dimensions of potential heterogeneity along with a number of other variables; the results presented should therefore be considered exploratory. These analyses help us understand the mechanisms through which the effects of endorsement metrics arise (or fail to do so).

Finally, we discuss two additional pre-registered hypotheses related to the order in which the messages appear (anchoring), and we present results on one other margin of heterogeneity, pre-treatment attitudes. This last margin of heterogeneity speaks to the literature on social media and political polarization. We find no strong patterns along other margins of heterogeneity; the estimates for all variables specified in our original pre-registration are shown in Appendix Figure A2. 8

Implementation and Design

Our first survey was conducted using nationally representative samples in terms of age, gender, and region in Ireland (n = 305) and Italy (n = 300), and it was sent out on 8 July 2020. Our second survey was conducted in the United States, using a nationally representative sample in terms of age, gender, and census regions (n = 1,519), and was sent out on 31 July 2020. Both surveys were programmed in Qualtrics. The main analyses presented below pool the two surveys, but our main results are quantitatively similar when analyzing the samples separately. 9 Unless otherwise noted, we follow the pre-analysis plans.

To recruit our sample, we contracted Dynata, formerly Research Now SSI, a survey company often used to recruit participants for research in social science (Krupnikov et al., 2021; Snowberg & Yariv, 2021). The company recruits panel members through various marketing channels and collects their sociodemographic information. To obtain a nationally representative sample, survey invitations are sent to potential respondents whose sociodemographic distributions match the one in the latest census data of the country (Bol et al., 2021). Respondents are rewarded for participating in surveys depending on the length and content of the survey. Data quality is ensured by identifying and, after checking, potentially removing random responding, illogical or inconsistent responding, overuse of item non-response (e.g., “don’t know”), and speeding (overly quick survey completion). 10

We first measured participants’ pre-treatment policy attitudes using statements about COVID-19 policy responses, for example “The government’s highest priority should be saving as many lives as possible even if it means the economy will recover more slowly.” 11 Participants indicated their agreement with these statements on a 1 to 7 Likert-type scale. We standardized these responses and coded positive values as being pro-economy. In addition, we combined the questions into one index through principal components analysis.
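As a rough illustration of the index construction described above, the following sketch standardizes Likert responses, reverse-codes a pro-health item so that higher values are pro-economy, and extracts a first principal component. The responses, the second item (`reopen_now`), and the use of power iteration are all assumptions made for illustration; the study's actual items and software are not specified here.

```python
import math
import statistics


def zscore(values):
    """Standardize responses to mean 0 and (population) standard deviation 1."""
    mean = statistics.fmean(values)
    sd = statistics.pstdev(values)
    return [(v - mean) / sd for v in values]


def first_principal_component(columns, iterations=200):
    """Return (loadings, scores) for the first principal component.

    `columns` holds standardized item columns of equal length. Power
    iteration on the item correlation matrix keeps the sketch free of
    third-party dependencies; the sign of a component is arbitrary.
    """
    k, n = len(columns), len(columns[0])
    cov = [[sum(a * b for a, b in zip(columns[i], columns[j])) / n
            for j in range(k)] for i in range(k)]
    w = [1.0 / math.sqrt(k)] * k
    for _ in range(iterations):
        w = [sum(cov[i][j] * w[j] for j in range(k)) for i in range(k)]
        norm = math.sqrt(sum(x * x for x in w))
        w = [x / norm for x in w]
    scores = [sum(w[j] * columns[j][r] for j in range(k)) for r in range(n)]
    return w, scores


# Hypothetical 1-7 Likert responses from six respondents (not study data).
lives_first = [7, 6, 2, 1, 5, 3]  # agreement with a pro-health statement
reopen_now = [2, 1, 6, 7, 3, 4]   # agreement with a made-up pro-economy statement

# Reverse-code the pro-health item (8 - x on a 1-7 scale) so that higher
# values are pro-economy on every item, then standardize each item.
columns = [zscore([8 - x for x in lives_first]), zscore(reopen_now)]
loadings, scores = first_principal_component(columns)
```

With two positively correlated items the loadings are equal, so the index is simply a scaled sum of the two standardized responses; with more items the loadings would generally differ.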

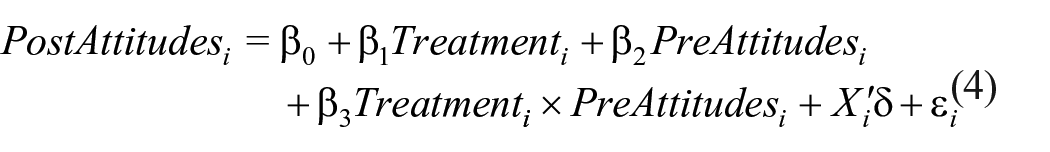

We next randomized participants into one of three treatments: control, pro-economy, or pro-health. 12 In each treatment, participants are shown six tweets from strangers about COVID-19 policies, of which three are pro-economy and three are pro-health. 13 In the control condition, all tweets have low endorsements (a low number of likes and retweets). In the pro-economy condition, the three pro-economy tweets are given high endorsements while the three pro-health tweets are given low endorsements. In the pro-health condition, the three pro-economy tweets are given low endorsements while the three pro-health tweets are given high endorsements.
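The mapping from treatment arm to endorsement levels can be sketched as follows. The tweet identifiers are hypothetical, and simple equal-probability randomization is an assumption; the text does not state the assignment probabilities or the exact like and retweet counts, so levels are kept as the labels "high" and "low".

```python
import random

TREATMENTS = ("control", "pro-economy", "pro-health")

# Three tweets per stance, as described in the text; the IDs are hypothetical.
TWEETS = [
    ("econ_1", "pro-economy"), ("econ_2", "pro-economy"), ("econ_3", "pro-economy"),
    ("health_1", "pro-health"), ("health_2", "pro-health"), ("health_3", "pro-health"),
]


def endorsement_levels(treatment):
    """Map each tweet ID to 'high' or 'low' endorsements for a treatment arm.

    Tweets whose stance matches the arm get high endorsements; in the
    control arm no stance matches, so every tweet is shown with low ones.
    """
    if treatment not in TREATMENTS:
        raise ValueError(f"unknown treatment: {treatment}")
    return {tweet_id: ("high" if stance == treatment else "low")
            for tweet_id, stance in TWEETS}


def assign(participant_ids, seed=0):
    """Assign each participant to an arm with equal probability (an assumption)."""
    rng = random.Random(seed)
    return {pid: rng.choice(TREATMENTS) for pid in participant_ids}
```

For example, `endorsement_levels("pro-health")` marks the three pro-health tweets as "high" and the three pro-economy tweets as "low".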

The tweets were preceded by the following text: The algorithms used on social media may sometimes present you with posts by complete strangers. You will now be shown 6 tweets. As if you were going through your own social media feed (eg Twitter or Facebook), please consider whether you would “like” or “retweet” each of the following 6 tweets.

Figure 1 shows an example of the experimental variation. The tweets were generated using https://www.tweetgen.com/ using the following input:

An additional treatment dimension in the US sample exposed half the participants in each of the three above treatments to an attention prime prior to the six tweets. Participants were shown an unrelated tweet followed by three questions about the content, the timing of this tweet, and (importantly) the number of likes. We designed this manipulation to prime participants into paying careful attention to the subsequent six tweets and their endorsements, since absence of treatment effects can potentially be attributable to participants not noticing the metrics. We find that the prime did not reinforce the expected effect and in fact nullified the effect for social media users. 16 However, this treatment also allowed us to assert that the absence of an effect for non–social media users is not due to them not looking at the endorsement metrics. 17 Our analysis focuses on the non-primed group, n = 779 (out of 1,519) in the United States, and n = 605 in Europe. 18

Figure 1. Example of experimental variation.

After the six tweets, we elicited participants’ post-treatment attitudes using a different set of questions about COVID-19 policy responses. Participants stated their agreement on a 7-point Likert-type scale to a number of policies, such as “Prohibiting gatherings” and “Closing the borders.” 19 We use the first principal component of these responses as an index measure of post-treatment policy attitudes, our main outcome variable. We again defined positive values as being more pro-economy.

After the post-treatment attitude questions, we conducted a factual manipulation check by asking participants, Views about COVID-19 policy response can be roughly split into two: (1) Pro-health: prioritise the elimination of COVID-19 over economic activities, for example by extending lockdown measures despite economic costs. (2) Pro-economy: prioritise economic activities over the elimination of COVID-19, for example by opening up the economy despite risks of a second wave. Which of these two views had more likes in the 6 tweets shown earlier?

Participants selected from “pro-health,” “pro-economy,” “neither (both had about the same number of likes),” or “don’t know.” Participants could not go back to the previous screen to check the number of “likes” on the tweets. 20

Finally, we collected data on education, income, self-reported political ideology on a 0 to 10 left-right scale, party voted for in the last election (or whether they voted), experience of COVID-19, degree of stubbornness measured by the participants’ resistance to change (Oreg, 2003), media consumption, trust in the media and the government, and the frequency with which they discuss policy issues with family and friends (both on and outside of social media). We measured participants’ social media use by asking about time spent per day on the social media platforms Facebook and Twitter and define active social media users as those who spend 1 hr or more daily on Facebook and Twitter combined. 21

Empirical Analysis and Results

Main Analysis

Key summary statistics are shown in Appendix Table A1, for the whole sample and split by social media use. Notably, active users are younger, hold a more right-wing ideology, and tend to support more pro-economy policies pre-treatment. They were also more likely to correctly answer the factual manipulation check at the end of the survey. The proportion of active users is highest in Ireland (30%) and lowest in the US Midwest (17%); in all regions, Facebook use is more common than Twitter.

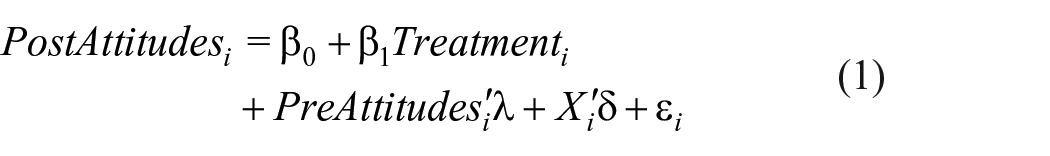

We estimate the effect of social media endorsements on participants’ policy attitudes using ordinary least squares (OLS) as follows

The dependent variable

We measure pre-treatment attitudes in two ways. First, we use the principal component of all the pre-treatment policy attitude questions (as pre-registered). Second, we use the question that has the highest correlation with the post-treatment attitude index to represent participants’ pre-treatment attitudes. In the US sample, the question used is “The government’s highest priority should be saving as many lives as possible even if it means the economy will recover more slowly. What do you think of this statement?” In the European sample, the question used is “Sweden’s government has so far avoided implementing a lockdown in order to keep the economy going. What do you think of this policy?” Although this latter approach differs from the pre-registered specification, we find that the correlation between pre-treatment and post-treatment attitudes is substantially higher when using this measure, potentially better capturing the policy dimension of interest and thus maximizing the statistical gains from our quasi-pre-post design (Clifford et al., 2021). 24 We present results using both measures.

We estimate Model 1 for the whole sample and for active social media users below. The results are shown in Figure 2. Estimates including a fully interacted model that tests for differences between the groups can be found in Appendix Table A2. We observe no overall treatment effect on participants’ policy attitudes. Our estimates suggest a precisely estimated zero effect of endorsements on policy attitudes for our entire sample (left panel). However, we find a differential treatment effect for active social media users (right panel) that suggests these users shift their policy attitudes by about 0.12 standard deviations in response to our treatment. That is, we find evidence consistent with our main hypothesis only for active social media users: endorsement metrics have a significant effect in persuading active users to shift their views in the intended direction.
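To make the estimation step concrete, here is a minimal OLS sketch of a regression of post-treatment attitudes on a treatment variable and a pre-treatment attitude control. It is not the authors' specification: the treatment coding (+1 pro-economy, 0 control, -1 pro-health), the absence of further controls, and the toy data with a planted effect of 0.12 are all assumptions chosen for illustration.

```python
def ols(X, y):
    """Ordinary least squares via the normal equations (X'X) b = X'y.

    X is a list of rows (include a leading 1.0 for the intercept);
    returns the list of coefficients b.
    """
    k = len(X[0])
    # Build the augmented matrix [X'X | X'y].
    A = [[sum(row[i] * row[j] for row in X) for j in range(k)]
         + [sum(row[i] * yi for row, yi in zip(X, y))]
         for i in range(k)]
    # Gauss-Jordan elimination with partial pivoting.
    for col in range(k):
        pivot = max(range(col, k), key=lambda r: abs(A[r][col]))
        A[col], A[pivot] = A[pivot], A[col]
        for r in range(k):
            if r != col:
                factor = A[r][col] / A[col][col]
                A[r] = [a - factor * b for a, b in zip(A[r], A[col])]
    return [A[i][k] / A[i][i] for i in range(k)]


# Toy, noiseless data (not study data). Treatment coding is an assumption:
# +1 = pro-economy arm, 0 = control, -1 = pro-health arm.
treatment = [1, 0, -1, 1, 0, -1]
pre_attitude = [0.5, -1.0, 0.3, -0.2, 1.2, -0.8]  # standardized, pro-economy positive
post_attitude = [0.1 + 0.12 * t + 0.5 * p for t, p in zip(treatment, pre_attitude)]

X = [[1.0, t, p] for t, p in zip(treatment, pre_attitude)]
intercept, beta_treatment, beta_pre = ols(X, post_attitude)
```

Because the toy outcome is generated without noise, `beta_treatment` recovers the planted 0.12; with real data one would also need standard errors, which this sketch omits.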

Figure 2. Treatment effects.

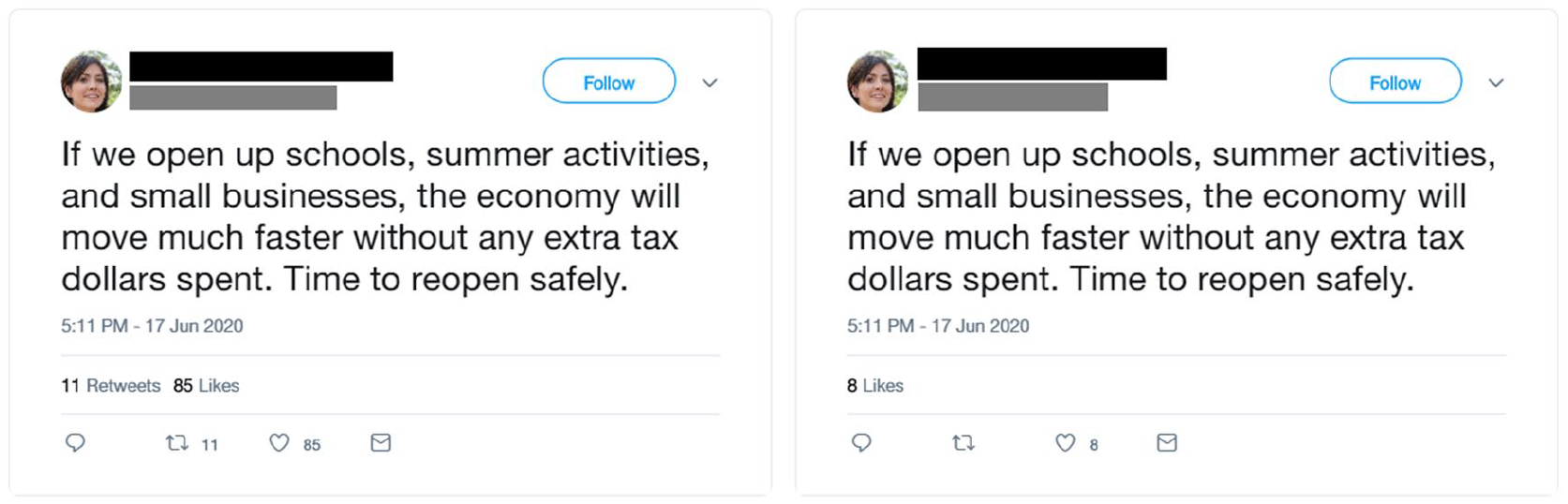

A factual manipulation check allows us to identify individuals who paid conscious attention to the endorsement counts and to study the extent to which the treatment effects are driven by them (Kane & Barabas, 2019). We asked participants, after they had submitted their policy preferences, about the relative levels of “likes” in the tweets they had seen. Overall, only 33.8% of participants answered the manipulation check correctly, with the rest answering incorrectly or “don’t know.” 25 The proportion of correct responders is higher in the group of active social media users than passive/non-users (38.7% vs. 32.1%, t test, p = .0225). Importantly, this post-treatment attention check is endogenous to the extent that attention to (or correct reporting of) the endorsement metrics may be selective (Montgomery et al., 2018). 26

To further explore these findings, we split our sample by both social media use and whether they answered this manipulation check correctly. The results are shown in Figure 3. Estimates are also presented in Appendix Table A6, and a fully interacted model that tests for differences between the groups can be found in Appendix Table A7. We observe that the treatment effect is concentrated on social media users who correctly answered the manipulation check. The coefficients are robust to the addition of controls, suggesting that selective attention is unlikely to explain these findings (Oster, 2019). Instead, the results suggest that a relatively small fraction of participants (about 10%) were sensitive to the social cues provided by the engagement metrics in our experiment; the treatment shifted their policy views by about 0.38 standard deviations (p value < .001).

Figure 3. Treatment effects by social media use and manipulation check.

We also observe patterns suggestive of heterogeneous treatment effects for passive/non–social media users; but these are not robust to the addition of controls, revealing instead that these users may pay selective attention to social cues which match their policy attitudes. In particular, passive/non–social media users who correctly answered the manipulation check were more likely to hold views which aligned with the assigned treatment, while those who did not correctly answer the question were more likely to hold views which differed from their assigned treatment.

As further evidence of potential selection in these subsamples, regressing the treatment assignment on pre-treatment attitudes highlights that correctly answering the manipulation check is potentially endogenous (in Appendix Table A8). The patterns appear particularly stark for passive/non–social media users and suggest that they are more prone to selective attention. To evaluate the extent to which the heterogeneity in Figure 3 may be driven by selective attention, as a further robustness check, we test the sensitivity of our estimates to the addition of controls in a selection on unobservables framework (following Oster, 2019). Our results (shown in Figure 4) corroborate our reading of the results presented here, revealing that the estimates for passive/non–social media users are sensitive to unobservable selection, while those for active social media users are not. 27
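The logic of this robustness check can be illustrated with the commonly used linear approximation to the bias-adjusted coefficient in Oster (2019). This is a sketch under stated assumptions, not the estimator the authors ran: the text does not report their choice of the proportionality parameter (here `delta`) or of the maximum attainable R-squared (here `r2_max`), and the numbers in the usage line are hypothetical.

```python
def oster_adjusted_beta(beta_short, r2_short, beta_long, r2_long,
                        r2_max, delta=1.0):
    """Approximate bias-adjusted coefficient in the spirit of Oster (2019).

    beta_short, r2_short: estimate and R-squared without controls.
    beta_long,  r2_long:  estimate and R-squared with controls.
    r2_max: assumed R-squared if unobservables were also included.
    delta:  assumed ratio of selection on unobservables to observables.
    """
    if r2_long <= r2_short:
        raise ValueError("adding controls must raise R-squared")
    movement = beta_short - beta_long  # how far controls moved the estimate
    scale = (r2_max - r2_long) / (r2_long - r2_short)
    return beta_long - delta * movement * scale


# Hypothetical inputs: controls move the estimate from 0.50 to 0.40 while
# R-squared rises from 0.10 to 0.30; r2_max is set to 1.3 times r2_long,
# a rule of thumb often used with this method.
adjusted = oster_adjusted_beta(0.50, 0.10, 0.40, 0.30, r2_max=0.39)
```

An estimate that barely moves when controls are added stays close to its controlled value after adjustment, which is the sense in which a coefficient is described as insensitive to selection on unobservables.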

Figure 4. Selection-bias-adjusted treatment effects for participants with correct manipulation check.

The analyses presented here suggest that the (relatively small) subset of active social media users who tend to pay conscious attention to endorsement metrics are indeed influenced by these social cues. In contrast, passive/non–social media users are more likely to notice endorsement metrics which reinforce their pre-existing attitudes, but they are on average not influenced by these metrics.

As additional tests, in Appendix Table A9, we split our sample by country and show that, though somewhat noisier, the patterns we documented are largely consistent across countries, with the largest effects concentrated on social media users who correctly answered the manipulation check (row 3, columns 4–6). We also find that our results are stronger when excluding the top and bottom 5% of respondents in terms of study duration (i.e., those who were “rushing through” or taking too long to respond; see Appendix Figure A5 and Appendix Table A18). Finally, the results are shown separately for each post-treatment question in Appendix Figure A3, revealing a general shift in attitudes and showing that our results are not driven by any particular question.

Additional Analyses

Anchoring

In addition to our main hypothesis on endorsement-driven social conformity, we pre-registered two additional hypotheses. First, the nature of the experiment allows us to evaluate the presence of anchoring, the idea that individuals may be disproportionately affected by the views that they first see (Furnham & Boo, 2011; Tversky & Kahneman, 1974). We hypothesize that this heuristic extends to social media settings in which individuals are exposed to different views.

H2, Anchoring: Individuals are anchored (or primed) by what they are first exposed to, so they tend to conform to the first views they observe.

Second, we explore the complementarity between our two hypotheses. In particular, we hypothesize that there are positive complementarities of the two treatments, such that higher endorsement metrics have a differential effect on attitudes when users are exposed to these views first.

H3, Complementarities: Both anchoring and popularity affect individuals’ policy views, and there are positive complementarities between the two: individuals first exposed to popular messages conform most to these views.

The order in which tweets were shown to participants was randomized, which allows us to evaluate our anchoring and complementarities hypotheses. In particular, this randomization allows us to evaluate whether the first post observed affects policy attitudes, by estimating the following model

The indicator variable

In addition, we investigate whether this anchoring effect of the first tweet, and our main treatment, are “complementary.” In particular, we estimate this model

The indicator variable

Our results from these analyses are presented in Figure 5. We find weak and marginally significant anchoring effects that appear to be concentrated on passive/non–social media users. These findings on anchoring therefore have unclear policy implications. In particular, potential policy interventions from social media platforms hoping to exploit these results (e.g., by manipulating the top message shown in a news feed) may prove ineffective, since the effect appears to be present only in passive/non–social media users.

Figure 5. Anchoring effects and complementarities.

In addition, we find no overall complementarity between the content of the first message (pro-econ vs. pro-health) and the engagement metrics, but (conditional on controls) we do find a complementarity for active social media users. This pattern suggests that, for this subset of participants, the content of the first tweet did matter when it had high endorsement metrics. Although these results are less precisely estimated, they appear to confirm our previous findings and suggest once more that active social media users can be influenced by engagement metrics.

Political Polarization

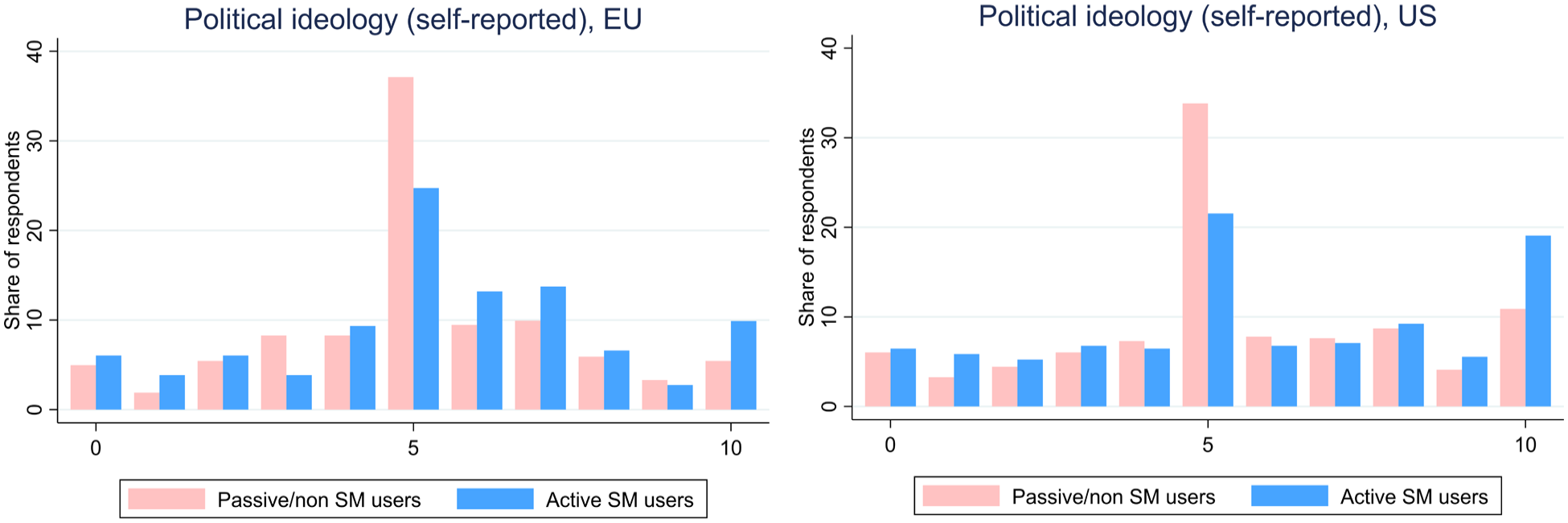

Social media has commonly been associated with an increase in political polarization (Zhuravskaya et al., 2020). In concordance with these worries, active social media users in our survey were less likely to consider themselves politically moderate (Figure 6) and were less likely to hold (pre-treatment) moderate policy views with respect to COVID-19 (Appendix Figure A4). However, these patterns could well be the result of selection into social media use: individuals who hold more polar views tend to be more active on social media, perhaps as an outlet for their extreme opinions. Although recent work documents that deactivating Facebook can indeed reduce individuals’ political polarization (Allcott, Braghieri, et al., 2020), the extent to which, and the precise mechanisms through which, social media causes polarization remain debated.

Figure 6. Self-reported political ideology and social media use.

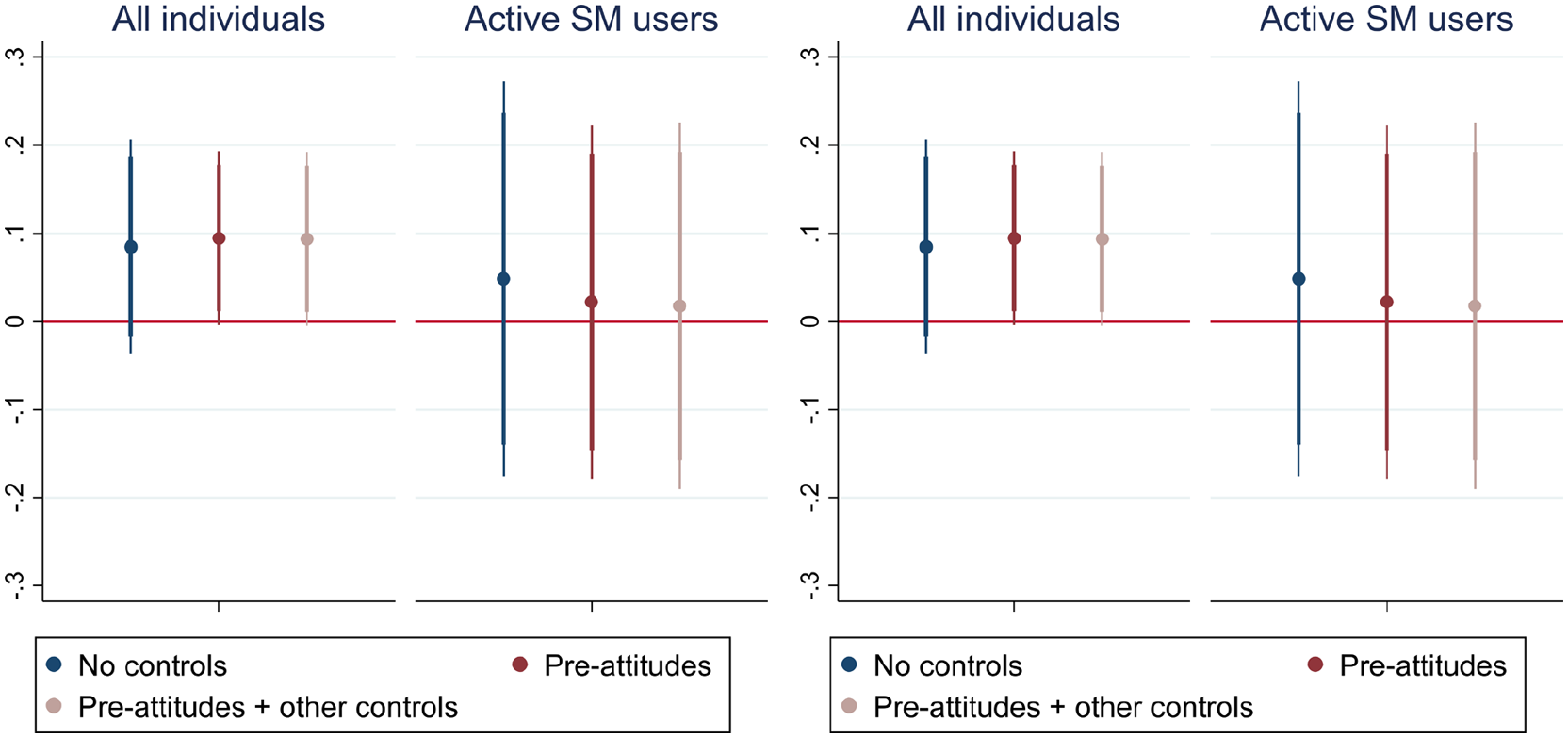

We explored whether our treatment varied with participants’ pre-treatment policy attitudes: Is the effect of endorsements larger for congenial views? This margin of heterogeneity was pre-registered among others, but we do not have enough statistical power to perform multiple hypothesis corrections. For this reason, the results from this section should be viewed as merely exploratory.

We investigate the heterogeneity of our main treatment by pre-treatment attitudes with the following empirical model

and do so specifically for active social media users.

The estimated marginal treatment effects from estimating Model 4 are shown in Figure 7. We find that our treatment differentially affects social media users depending on their pre-existing attitudes. A one standard deviation increase in pre-treatment attitudes leads to an additional change of between 0.11 and 0.16 standard deviations in policy attitudes (depending on how we measure pre-treatment attitudes). Put differently, individuals who held more extreme pre-treatment attitudes were more responsive to the treatment, and the treatment reinforced their pre-treatment attitudes. This pattern is particularly driven by individuals who held relatively more pro-economy views before the treatment. We also present models in which we include triple-interactions of treatment, active social media use, and pre-treatment attitudes (Appendix Table A12). The results suggest that public endorsement metrics may be a mechanism through which social media affects individuals’ policy preferences and could also contribute to polarization, especially since individuals may be more likely to endorse congenial views (Garz et al., 2020).
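The arithmetic behind a marginal treatment effect in an interaction model can be sketched as follows, assuming a linear specification in which the outcome depends on treatment, standardized pre-treatment attitudes, and their product. The functional form and the baseline coefficient of 0.12 are assumptions for illustration; only the interaction range of 0.11 to 0.16 comes from the text.

```python
def marginal_treatment_effect(beta_treatment, beta_interaction, pre_attitude):
    """Treatment effect at a given standardized pre-treatment attitude.

    Under y = a + b*T + c*Pre + d*(T*Pre) + e, the effect of T is b + d*Pre.
    """
    return beta_treatment + beta_interaction * pre_attitude


BETA_TREATMENT = 0.12  # hypothetical effect at the mean attitude (Pre = 0)

# Interaction coefficients at the two ends of the range reported in the text.
effects = {
    theta: [marginal_treatment_effect(BETA_TREATMENT, theta, pre)
            for pre in (-1.0, 0.0, 1.0)]
    for theta in (0.11, 0.16)
}
```

Under these assumed values, a participant one standard deviation above the mean would have an implied effect of 0.23 to 0.28 standard deviations, while one a standard deviation below the mean would have an implied effect close to zero.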

Figure 7. Heterogeneity by pre-treatment attitudes.

Discussion

We hypothesized that online endorsements could affect the formation of policy preferences. Our experiment, in contrast, revealed a precisely estimated null effect of perceived endorsements on our representative samples in the United States and Europe. Furthermore, we found that only about one-third of the experiment participants appeared to pay conscious attention to these engagement metrics. At the same time, we found suggestive evidence of large treatment effects concentrated on a small share of individuals: active social media users who did appear to pay attention to the endorsement metrics (about 10% of all participants). For these individuals, a higher sensitivity to popularity metrics means that the perceived popularity of (even) strangers’ opinions is enough to sway their attitudes. Finally, given our conservative measure of “high” metrics, our results potentially underestimate the impact of endorsements for viral tweets or tweets by famous people, which may attract thousands of likes and retweets or more. This would be an interesting avenue for future research.

That the effects appeared concentrated on only a fraction of participants perhaps suggests that the broader effects of endorsement metrics on politics may be limited. However, social media dynamics could further propagate across society in different ways (Margetts et al., 2015; Tufekci, 2017). Social media engagement is also associated with other forms of political engagement; as such, these individuals could exert disproportionate influence in political processes (Barberá et al., 2019; Vaccari et al., 2015; Vaccari & Valeriani, 2021) and have a broad impact on public opinion (Centola et al., 2018). In our survey, active social media users are significantly more likely to (say they) have voted in the previous election. They also report more frequently discussing policy issues with friends or family members both on and outside of social media (Appendix Table A16). 28

Our micro-level study identifies endorsement metrics as one channel through which social media affects users’ policy attitudes. However, there is a trade-off between isolating a precise causal mechanism in a controlled setting and external validity, and future work should aim to study this relationship in real social media settings. Improved understanding of these effects can inform social media platforms in the design of appropriate interventions to address issues of polarization, misinformation, and foreign influence in politics, among others (since platforms are unlikely to promote account deactivation, as in Allcott, Braghieri, et al., 2020). One further implication is that these social cues may reinforce the effects of selective exposure (as emphasized in Zhuravskaya et al., 2020). If individuals with more extreme preferences are also more likely to “like” content, then not only will social media algorithms expose users to more polarized opinions (Levy, 2021), but such content may also appear to have broader support. In particular, our results may underestimate the true effect of endorsement metrics on social media platforms where individuals are exposed to posts and endorsements by people they know and choose to follow (whose opinions the individual likely agrees with), which, as our exploratory analysis suggests, may further contribute to political polarization. How these different features of social media interact to influence political views, and how persistent the effects are, remain important avenues for future work. Finally, substantial uncertainty surrounding the topic of COVID-19 in the early stages of the pandemic likely made policy attitudes in this respect highly malleable. Future work should also examine whether endorsements can shape the policy attitudes of social media users on more deeply entrenched topics for which views are likely to be more rigid.

Acknowledgements

We thank Anna Dreber Almenberg, Karen Arulsamy, Stefan Müller, and Johannes Wohlfart as well as seminar participants at UCD and Collegio Carlo Alberto for comments. The experiments were approved by University College Dublin Office of Research Ethics (reference numbers: HS-E-20-110-Samahita and HS-E-20-134-Samahita). Authors’ order has been randomized using the AEA Author Randomization Tool (reference: eTjbUATa_zKY), denoted by ⓡ.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Funding from UCD, Collegio Carlo Alberto, and the Einaudi Institute for Economics and Finance is gratefully acknowledged.