Abstract

The COVID-19 pandemic brought about several challenges in addition to the virus itself. The rise of Islamophobic hate speech on social media is one such challenge. As countries were coping with economic collapse due to mass lockdown, hateful people, especially those associated with far-right groups, were targeting and blaming Muslims for the spread of the coronavirus. In India, where intense religious/communal polarization is taking place under the right-wing Bharatiya Janata Party (BJP)-led government, one such prominent instance of Islamophobia—the “Tablighi Jamaat Controversy” (TJC)—occurred. This article analyzes Facebook posts by public groups over a 5-month period (March–August 2020) to find the major actors and track their link-sharing behavior. We found that the Pro-BJP groups with a right-wing ideology spread Islamophobic hate speech, while other groups (anti-hate) worked to counter the hate. We also found that the hate disseminators were extremely active (three times faster) in sharing their content as compared with the anti-hate groups. Finally, our research indicated that the links most widely shared by the hate spreaders were mostly misinformation. These results explain the use of the Facebook platform to spread hate and misinformation, demonstrating how BJP’s pro-Hindu ideology and its attitude toward Muslims is directly and indirectly enabling these actors to spew hate against Muslims with no legal consequences.

Introduction

Islamophobia can be defined as “hatred or fear of Muslims or their politics or culture” (Collins English Dictionary, 2022). Whether Islamophobia should be categorized as a form of racism or xenophobia is the subject of ongoing debate. However, Islamophobia is certainly a social evil that has taken root in the world, especially after the terrorist attacks of September 11, 2001. In countries where the Muslim community has a substantial share of the demographics, conservative political parties have regularly used Islamophobia as a political tool to garner votes (Bukar, 2020). The COVID-19 pandemic provided another opportunity for conservative and right-wing political leaders and their supporters to target Muslims. As a result, Islamophobic hate speech and fake news proliferated on social media, leading not only to physical violence against Muslims but also to boycotts of Muslim businesses, essential workers, and health and relief workers (Soundararajan, 2020). The spread of Islamophobic hate speech on social media has received attention from academics (Burnap & Williams, 2016) and also from the social media platform Twitter (2018). However, Facebook came under scrutiny and is the subject of a lawsuit by a civil rights group for its failure to crack down on hate speech against Muslims (Collins & Guynn, 2021; The Conversation, 2021).

Our research takes the case of the TJC Islamophobic campaign that unfolded in India during the COVID-19 pandemic in order to study Islamophobic hate speech on Facebook. Tablighi Jamaat is a transnational Sunni Islamic missionary movement founded in 1926, and around 80 million Muslims participate in the activities it organizes every year (Pieri, 2021). In early March 2020, a gathering of Tablighi Jamaat took place in New Delhi and became a superspreader event resulting in more than 400 infections (Slater et al., 2020). The organizers defended themselves by citing the Health Ministry of India’s statement that COVID-19 was not a health emergency, and they blamed government “ill-planning” for the situation (Khan, 2020). Nevertheless, this event allowed right-wing, hypernationalist, anti-Muslim groups to spread Islamophobic hate speech during the COVID-19 pandemic in India. Our analysis focuses on the actors that spread this hate. As detailed later, we found that several groups that align with right-wing ideologies and promote pro-Bharatiya Janata Party (BJP) propaganda were spreading hate against Muslims.

BJP is the ruling political party in the current government of India and has been in power in the central government since 2014. It has very close ties to Rashtriya Swayamsevak Sangh (RSS), the right-wing Hindu nationalist organization and proponent of Hindu Rashtra (“Hindu nation”) (Frayer & Khan, 2019). Several prominent leaders of BJP, including the current Prime Minister, Narendra Modi; Home Minister, Amit Shah; and Defense Minister, Rajnath Singh, started their careers as members of RSS and still associate themselves with it. Under the current government, India has morphed into an “ethnic democracy,” which regards the Hindu majority as the only true nationals and relegates Christians and Muslims to the role of second-class citizens (Chatterji et al., 2019; Jaffrelot, 2021).

The polarized social environment of India under the current government is also visible in the virtual/digital world. One look at various social media platforms, such as Twitter, Facebook, WhatsApp, and YouTube, is enough to attest to the changing nature of Indian society (from a liberal democracy to an ethnic democracy). BJP has its so-called IT cell, which runs all kinds of propaganda on social media (Jose, 2021; Mahapatra & Plagemann, 2019; Neyazi, 2020), and the Tablighi Jamaat incident during the early days of the COVID-19 pandemic gave supporters of the Hindutva (anti-Muslim) ideology an opportunity to further polarize the country by spreading online hatred using the tools of misinformation (Arabaghatta Basavaraj et al., 2021). Some examples of the representation of Muslims during TJC are shown in Figure 1. The middle image in Figure 1, in which a Muslim man is represented as an octopus, is similar to an image that was used in Australia in 1886 against Chinese immigrants.¹ This shows how hateful individuals or groups reproduce and recirculate hateful imagery in different contexts, but with the same objective of creating an environment of hate and animosity in society. More than 100 instances of viral, Islamophobic fake news were reported between March and May 2020 (Media Scanner, 2020). At one point during this controversy, even the Indian government was profiling the Tablighi members and explicitly publishing the total number of infections overall and infections among Tablighi members specifically, without giving statistics for any other religious group. This was condemned by the Emergency Program Director of the World Health Organization, Mike Ryan (Jain, 2020). As Figure 2 shows, on April 19, 2020, Prime Minister Narendra Modi tweeted against discrimination during the COVID-19 pandemic. However, this tweet came only after the 57-member Organization of Islamic Cooperation (OIC) criticized the Islamophobic campaign in India that maligned Muslims for the spread of the coronavirus.

Figure 1. Examples of representation of Muslims on social media during TJC.

Figure 2. Tweets by PMO India and OIC-IPHRC.

Investigative journalists and researchers have been writing about how Hindu nationalists (the right-wing and BJP supporters) have spread Islamophobia and hate speech on social media in India (Basu, 2019; Bilaval, 2021; Dotto & Swinnen, 2021; Mirchandani, 2018). However, not much research has been done on Facebook. To the best of our knowledge, our article is the first study to analyze TJC by utilizing Facebook data. Facebook is a critical social media platform in India due to its deep reach in Indian society. India is Facebook’s largest market with 340 million users (Statista, 2021), but internal documents leaked in 2021 (known as the “Facebook Papers”) revealed several concerning details about Facebook’s inability to control the spread of hate speech and misinformation in India due to a lack of resources and expertise (Frenkel & Alba, 2021). Furthermore, these internal documents revealed that a 2019 case study conducted by Facebook to examine “adversarial harm networks in India” by examining RSS feeds found that the organization’s groups and pages were spreading hate speech and misleading content. However, RSS was not penalized due to the “political sensitivities” that could affect Facebook’s operations in India (Frenkel & Alba, 2021; Newton, 2021; Zakrzewski et al., 2021).

Our findings provide similar evidence about the involvement of Hindu nationalist groups and supporters of BJP in spreading hate speech for most of the TJC period. We also noticed that these groups are still active and continue to spread anti-Muslim propaganda and hate on Facebook (as of April 15, 2022). These findings align with previous research by political and social scientists, who suggest that India is becoming a dangerous place for Muslims under the present BJP-led government (Chacko & Talukdar, 2020; Chatterji et al., 2019; Jaffrelot, 2021).

Related Work

The growing significance of social media in our everyday life makes it a critical source for studying social issues and human behavior. This has led to rapid growth in research on social media analysis, and Islamophobic hate speech has accordingly been analyzed from several perspectives. For example, the classification of Islamophobic hate speech on social media has been undertaken by several researchers (Khan & Phillips, 2021; Mehmood et al., 2022; Saha et al., 2019; Vidgen & Yasseri, 2020). Here, we review the literature that seeks to understand Islamophobic hate speech on social media in the context of a specific event (mass shootings, COVID-19, and Brexit) by utilizing computational, qualitative, and quantitative methods, in support of our own examination of the Tablighi Jamaat Controversy (TJC).

During the first half of the last decade, when social media research was still in its nascent stage, Awan (2014) examined tweets and offered a typology of offender characteristics in the aftermath of the Woolwich attack in May 2013, which led to hate crimes against Muslims in the United Kingdom. He argued that although street-level Islamophobia is an important area of investigation, online anti-Muslim abuse is emerging rapidly. He also suggested the adoption of a new international and national online cyber hate strategy. Evolvi (2018) studied Twitter to understand the characteristics of online Islamophobia and the differences between online and offline anti-Muslim narratives in the aftermath of the 2016 Brexit referendum. The author reported that online Islamophobia enhances offline anti-Islam discourses and suggested that it is important to consider the networking and connections facilitated by social media, news platforms, and offline spaces when working to counter online Islamophobia. Awan and Khan-Williams (2020) produced a report titled “Coronavirus, Fear, and How Islamophobia Spreads on Social Media.” In this report, the authors analyzed Twitter and showed how anti-Muslim bigotry was evolving and transforming in the United Kingdom in the context of COVID-19. Similarly, Soundararajan (2020) provided a detailed report on how the hashtag “#Coronajihad” was used during the COVID-19 pandemic to spread Islamophobic hate on various social media platforms in India, which also resulted in physical violence against Muslims. Aguilera-Carnerero and Azeez (2016) collected and analyzed tweets using the hashtag “#jihad” through the lens of Critical Discourse Analysis and Corpus Linguistics methodology. Their research showed that social media discourse amplifies stereotypes and negative representations of Muslims and expresses them more explicitly. Chandra et al. (2021) created the CoronaBias data set of tweets, performed a content analysis using topic modeling, and showed the existence of anti-Muslim rhetoric related to COVID-19 in the Indian sub-continent.

Awan (2016) examined Facebook pages, posts, and comments to understand the characteristics of anti-Muslim hate on Facebook by utilizing qualitative data gathering techniques embedded within grounded theory. The author classified offender behavior into five categories: (1) opportunistic, (2) the deceptive, (3) fantasists, (4) producers, and (5) distributors. Civila et al. (2020) analyzed the use of “#Stopislam” on Instagram through quantitative analysis methods to understand how demonization is used as a weapon of oppression to devalue specific individuals and what role Instagram plays in this process. Rajan and Venkatraman (2021) performed an exploratory inquiry that engaged in a semiotic analysis of the Instagram pages of Hindu Secret and Hindu He Hum, using Stuart Hall’s encoding and decoding theory as a theoretical framework to uncover the visual and textual codes developed on social media to create stigmas and blatant stereotypes that dehumanize and demonize certain communities. They found encoded stereotypes of threat in the use of color, religious structures, clothing, and other physical markers of cultural identity in the content created to engender Islamophobia.

Facebook is an important social media platform in India with a reach of around 330 million people. However, there is a dearth of research that uses state-of-the-art computational tools and methods to analyze Facebook data in the context of Islamophobic hate speech. Our research tries to fill this gap.

Our research tries to answer the following research questions:

Research Question 1 (RQ1). Which Facebook groups or entities spread Islamophobic hate during the TJC?

Research Question 2 (RQ2). How did anti-Muslim and anti-hate groups share links (URLs) during the TJC?

Research Question 3 (RQ3). How did anti-Muslim Facebook groups use misinformation to spread Islamophobic hate during the TJC?

Data and Methodology

Among various social media platforms, Twitter has frequently been used for social media analysis due to the ease of accessing data through Twitter APIs. However, recent CrowdTangle APIs have made Facebook’s “public posts” available to researchers, although researchers using Facebook data still have comparatively less to work with. In addition, India has the highest number of Facebook users in the world, and Facebook is one of the most important channels of communication. For these reasons, we selected Facebook to study TJC.

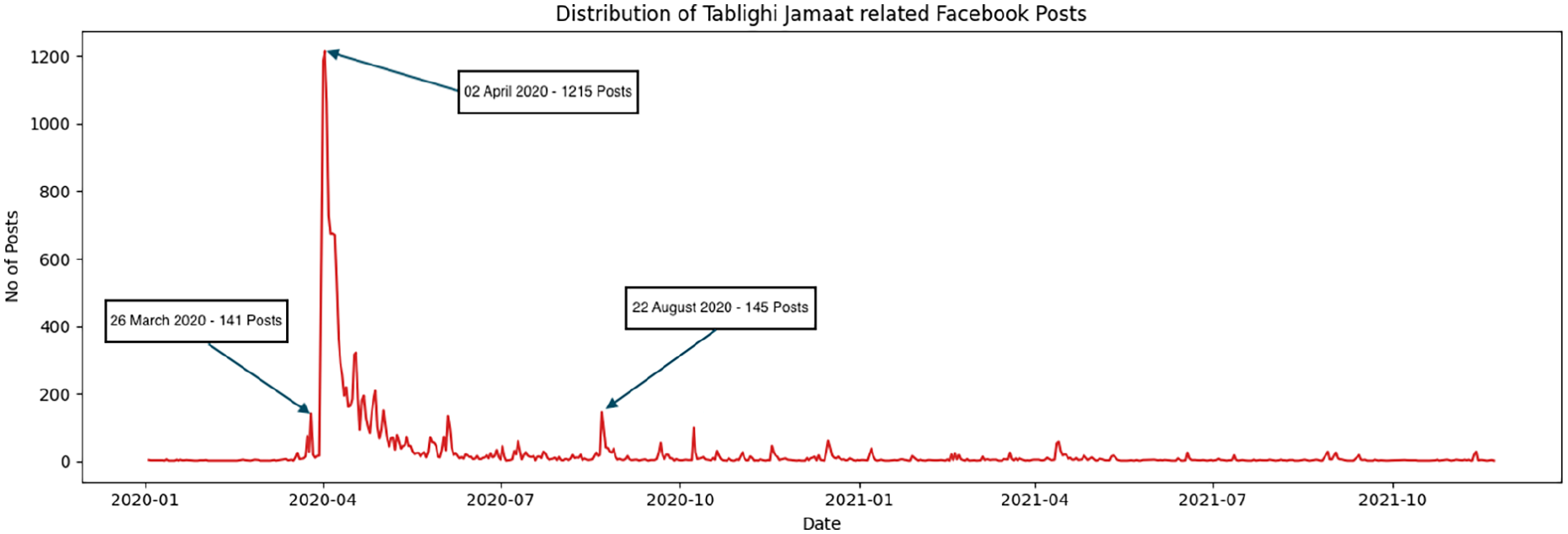

We collected 14,656 posts from public Facebook groups and pages using the keyword “Tablighi” between March 26, 2020, and August 22, 2020, using the CrowdTangle historical data feature (CrowdTangle Team, 2022). CrowdTangle is a public insight tool owned and operated by Facebook that covers public pages, public groups, and verified profiles on Facebook (and Instagram). As shown in Figure 3, we chose this specific period based on the observation that March 26 was the first day when posts related to the controversy (TJC) reached more than 100 hits per day, and August 22 was the last day when posts numbered more than 100 hits per day. While 96.74% of the collected posts came from India, the rest were from the United States (1.16%), Pakistan (1.08%), Bangladesh (0.51%), and Great Britain (0.51%), as shown in Table 1.
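The window-selection rule described above (first and last day on which the daily post count exceeds 100) can be sketched as follows. This is a minimal illustration only: the function name `collection_window` and the daily counts are hypothetical and are not the study's data or code.

```python
from datetime import date


def collection_window(daily_counts, threshold=100):
    """Return (first_day, last_day) on which the count exceeds the threshold.

    daily_counts maps a date to the number of posts on that date.
    Returns None if no day exceeds the threshold.
    """
    busy_days = sorted(day for day, n in daily_counts.items() if n > threshold)
    if not busy_days:
        return None
    return busy_days[0], busy_days[-1]


# Hypothetical daily counts, for illustration only (not the study's data).
example_counts = {
    date(2020, 3, 25): 40,
    date(2020, 3, 26): 130,
    date(2020, 4, 1): 900,
    date(2020, 8, 22): 105,
    date(2020, 8, 23): 60,
}
window = collection_window(example_counts)
```

With these made-up counts the selected window runs from March 26 to August 22, mirroring the period reported in the text.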

Figure 3. Temporal distribution of Tablighi Jamaat–related Facebook posts.

Table 1. Country-Wise Post Distribution.

Next, we identified the highly connected and coordinated entities (groups and pages) that repeatedly shared the same URLs within the coordination interval. We used the CooRnet R package (Giglietto et al., 2019) to detect entities that performed Coordinated Link Sharing Behavior (CLSB) around a set of given URLs. CLSB refers to a specific coordinated activity performed by a network of Facebook pages, groups, and verified public profiles (Facebook public entities) that repeatedly shared the same news articles within a very short time of each other (CooRnet, 2022b). CooRnet performs two main functions. The first, “get_ctshares,” takes a list of URLs with their publication dates and queries the link endpoint of the CrowdTangle API to collect the corresponding social media shares. The second, “get_coord_shares,” takes a data set of CrowdTangle shares and detects networks of entities (pages, verified accounts, and public groups) that performed coordinated link sharing behavior (Giglietto et al., 2020). This produced 1731 entities, which we further filtered by deleting all vertices with a degree of less than 100. This left us with the 168 most-connected Facebook entities. Out of these 168 entities, we identified 11 that were anti-Muslim and 157 that were anti-hate. Out of the 157 anti-hate entities, we then selected the 11 largest in terms of coordinated shares.
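To make the idea of coordinated link sharing concrete, the following Python sketch flags pairs of entities that shared the same URL within a given interval on at least a minimum number of distinct URLs, and then filters the resulting network by degree. It is a simplified stand-in written for illustration, not CooRnet's implementation; the function names, the `min_repetitions` parameter, and the toy share records are all assumptions.

```python
from collections import defaultdict
from itertools import combinations


def coordinated_pairs(shares, interval_seconds, min_repetitions=2):
    """Find entity pairs that repeatedly share the same URL close in time.

    shares: iterable of (entity, url, unix_timestamp) tuples.
    Returns {(entity_a, entity_b): number_of_distinct_urls_co_shared}.
    """
    by_url = defaultdict(list)
    for entity, url, ts in shares:
        by_url[url].append((ts, entity))

    pair_urls = defaultdict(set)
    for url, events in by_url.items():
        events.sort()
        for (t1, e1), (t2, e2) in combinations(events, 2):
            if e1 != e2 and t2 - t1 <= interval_seconds:
                pair_urls[tuple(sorted((e1, e2)))].add(url)

    return {
        pair: len(urls)
        for pair, urls in pair_urls.items()
        if len(urls) >= min_repetitions
    }


def filter_by_degree(edges, min_degree):
    """Keep only edges whose endpoints both have degree >= min_degree."""
    degree = defaultdict(int)
    for a, b in edges:
        degree[a] += 1
        degree[b] += 1
    keep = {node for node, d in degree.items() if d >= min_degree}
    return {(a, b): w for (a, b), w in edges.items() if a in keep and b in keep}


# Toy share records (hypothetical entities and URLs).
toy_shares = [
    ("A", "u1", 0), ("B", "u1", 30), ("C", "u1", 5000),
    ("A", "u2", 1000), ("B", "u2", 1040), ("C", "u2", 1050),
]
toy_edges = coordinated_pairs(toy_shares, interval_seconds=60)
```

In the toy data only the pair A and B co-shares two different URLs within 60 seconds, so it is the only edge retained; the edge weight is the number of co-shared URLs.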

We used our first findings to collect data to determine the coordination factor in order to understand their link-sharing behavior. Here, link-sharing behavior is defined as repeated sharing of the same links within an unusually short period of time by Facebook entities such as pages or groups (Kharazian, 2020). To understand this coordination, we collected all the posts by these 22 groups within the same period, again using the CrowdTangle historical data feature. We collected 2068 and 2801 entries from anti-Muslim and anti-hate groups, respectively.

The first step in our analysis was to find “highly connected coordinated entities” within the body of collected data (i.e., the 14,656 posts returned by CrowdTangle). CooRnet’s “get_urls_from_ct_histdata” function collected all the URLs present in this data set, producing 7448 URLs. Next, the “get_ctshares” function of the CooRnet package collected all the posts that linked to or mentioned these 7448 URLs by querying the CrowdTangle API. Finally, the “get_outputs” function provided us with a list of “highly connected coordinated entities” that shared the URLs in a coordinated way (CooRnet, 2022a). This resulted in 1731 highly connected, coordinated entities. Figure 4 shows the top 10 of these entities (account names) along with the number of coordinated shares, the average subscriber count, degree, and description (the description was written by consulting the “About” section of the Facebook group or page concerned). Out of these 1731 entities, we filtered out entities with a degree of less than 100, which resulted in 168 entities.

Figure 4. Top 10 “highly connected coordinated entities.”

Then, in order to examine link-sharing behavior, we again collected data using CrowdTangle’s historical data feature; this time, however, we collected “Tablighi”-related posts shared by the 22 entities identified previously (11 anti-Muslim and 11 anti-hate). We collected a total of 4869 posts (2068 anti-Muslim posts and 2801 anti-hate posts). We then repeated (as a second iteration) the step described in the previous paragraph to determine link-sharing behavior by calculating coordination intervals. The rationale for calculating the coordination interval is that, while it may be common for several entities to share the same URLs, it is unlikely that this happens repeatedly and within the time threshold unless consistent coordination exists.

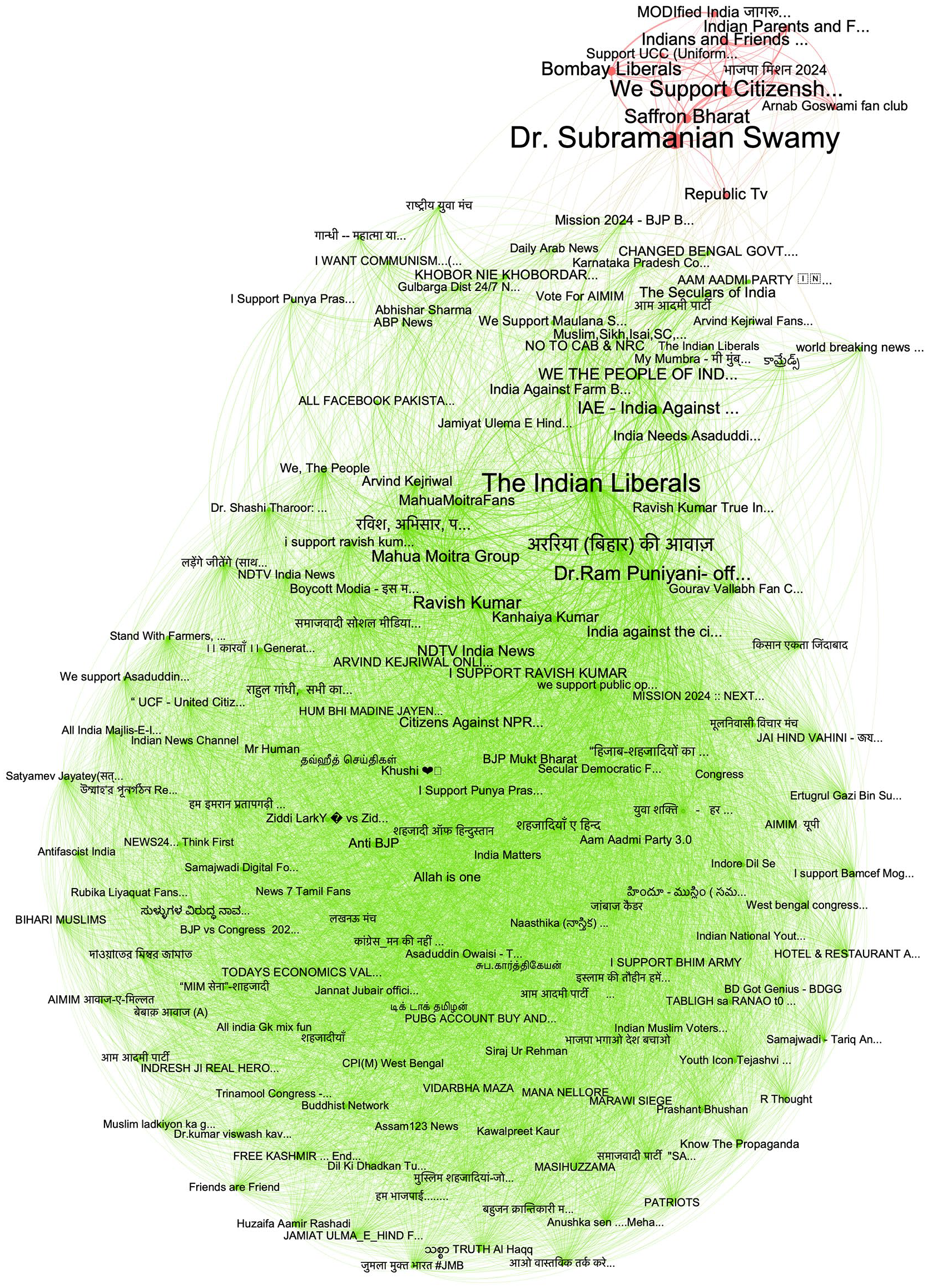

Using the Gephi software with the ForceAtlas2, noverlap, and Label Adjust algorithms, we created graphics to assist in visualizing all the networks (Bastian et al., 2009). Groups are represented by nodes and edges represent the social-media sharing between two groups. The size of the nodes (and label) is proportional to the number of coordinated shares (the higher the number of coordinated shares, the larger the size of node), while edges with a higher weight—more shares between two groups—will be thicker.

In order to check whether or not the shared content is misinformation, we first determined the top 20 shared posts for each of the two groups using the CooRnet package. Then, we manually verified each of the posts. The comment columns of Supplemental Appendices C and D document the verifiability of these posts. Posts that have “Fake news” comments were all identified by Google Fact Check Tools.² Other observations, such as whether or not the source is verifiable or authentic, are duly listed in the comments column.

Results

Finding 1: Anti-Muslim Groups Are Smaller But More Densely Connected

We first created a network of the 168 highly active public Facebook groups found using the aforementioned process. Figure 5 shows the resulting network, which is divided into two distinct clusters. The smaller cluster has 11 nodes (entities/groups), including public groups formed around ideas, parties, and figures that indicate support for the BJP’s Hindu nationalist identity, such as Dr Subramanian Swamy (a BJP politician who is famous or infamous, depending on which side you are on, for his anti-Muslim views), Saffron Bharat (saffron is the chosen color of RSS and the BJP), and MODIfied India (incorporating the Indian PM’s family name). We identified this as the anti-Muslim cluster. The bigger cluster has 157 nodes, with some of the larger nodes including public groups such as Dr Ram Puniyani Official (a well-known human-rights activist involved in opposing Hindu fundamentalism), Ravish Kumar (a Magsaysay Award-winning journalist), and the Mahua Moitra Group (Mahua Moitra is a member of the Lok Sabha and belongs to the All-India Trinamool Congress Party). Most of the public groups in this cluster are against hate and support India’s secular and liberal identity. Hence, we named it the anti-hate cluster. Note that the node size in Figure 5 is proportional to the number of coordinated shares. Out of 168 nodes, the three largest nodes are (1) Dr Subramanian Swamy with 81 coordinated shares, (2) the Indian Liberals with 64 coordinated shares, and (3) We Support Citizenship Amendment Act (CAA) (CAB) & (NRC) with 49 coordinated shares. The first and third belong to the anti-Muslim cluster, while the second belongs to the anti-hate cluster. Figure 6 shows the 11 anti-Muslim and 11 anti-hate entities along with their node designation and their percentage of coordinated shares. Because the anti-hate network was large, keeping only nodes with a degree greater than 100 still produced a network with 973 nodes and 206,888 edges (Figure 9, left side). Next, to pinpoint the core of this network, we used k-core decomposition. At k greater than 900, we obtained a network with 19 nodes and 171 edges (Figure 9, right side). Supplemental Appendix B provides details concerning these 19 highly connected anti-hate nodes (entities). The anti-Muslim network was comparatively small: at a degree greater than 100, it had 200 nodes and 13,108 edges (Figure 8, left side). At degrees greater than 250, we obtained a smaller network of core groups that included 19 nodes and 171 edges (Figure 8, right side). As mentioned previously, we sized the nodes according to the “coordinated shares” parameter and the edges according to their weights.
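The k-core of a graph is the largest subgraph in which every node has at least k neighbors inside that subgraph; it is found by repeatedly removing nodes of lower degree. The sketch below is a plain-Python illustration of that peeling procedure, not the tooling used in the study, and the toy graph and the threshold k = 18 are assumptions chosen for the example (the thresholds reported in the text are not reproduced here). The toy graph is a fully connected set of 19 nodes plus one loosely attached node, since 19 nodes with 171 edges corresponds to every pair being connected (19 × 18 / 2 = 171).

```python
def k_core(adjacency, k):
    """Return the node set of the k-core of an undirected graph.

    adjacency maps each node to the set of its neighbors.
    Nodes with fewer than k remaining neighbors are peeled off repeatedly.
    """
    remaining = {node: set(neigh) for node, neigh in adjacency.items()}
    changed = True
    while changed:
        changed = False
        low = [n for n, neigh in remaining.items() if len(neigh) < k]
        for node in low:
            for other in remaining.pop(node):
                if other in remaining:
                    remaining[other].discard(node)
            changed = True
    return set(remaining)


# Toy graph: 19 mutually connected nodes (0..18) plus a pendant node 19.
toy_adjacency = {i: {j for j in range(19) if j != i} for i in range(19)}
toy_adjacency[19] = {0}
toy_adjacency[0].add(19)

core = k_core(toy_adjacency, 18)
core_edge_count = sum(len(toy_adjacency[n] & core) for n in core) // 2
```

Peeling removes the pendant node and leaves the 19 mutually connected nodes, which share 171 edges.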

Figure 5. Network of highly active and connected public Facebook groups during Tablighi Jamaat controversy.

Figure 6. Anti-Muslim and anti-hate entities.

Another observation from this network is the presence of groups related to the controversial Citizenship Amendment Act (CAA) (Government of India, 2019). The CAA was passed in the Indian parliament on December 11, 2019, and aimed to provide citizenship to persecuted minorities such as Hindus, Christians, Jains, Parsis, Buddhists, and Sikhs from Afghanistan, Bangladesh, and Pakistan. The act does not include Muslims, and it was widely denounced as an anti-Muslim law, creating a huge uproar not only in Indian society but also globally (BBC News, 2019; Gringlas, 2019; Regan et al., 2019). Soon afterward, India and several world cities witnessed massive protests against the act (The Hindu, 2019; Scroll, 2019; The Wire, 2019). This event polarized the country, with liberals and secular people protesting against the act while the supporters of BJP favored it. This polarization was visible on various social media platforms. The time gap between this event and TJC was very narrow; consequently, we found that several of the public Facebook groups (including both supporters and opponents of the act) were already active when TJC occurred. Groups such as “We Support Citizenship Amendment Act (CAA) (CAB) & NRC” started spreading TJC-related anti-Muslim hate soon after the controversy emerged. Meanwhile, groups such as “Boycott CAA/NRC/NPR” and “Citizens Against NPR, CAA and NRC,” which had rejected the idea of persecuting one community through the CAA, posted against targeting Muslims for the spread of the coronavirus.

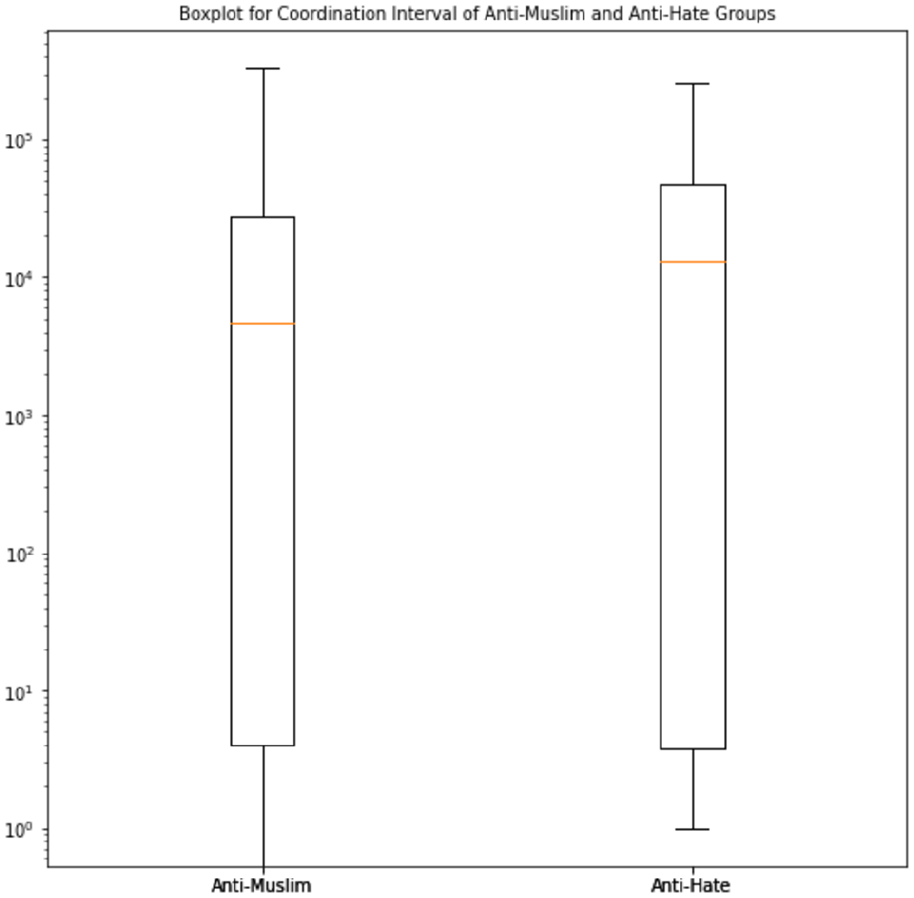

Finding 2: Anti-Muslim Groups Are Faster in Spreading Hate

After selecting the anti-Muslim and anti-hate groups, we collected all the Tablighi Jamaat-related Facebook posts by these groups during the same period (March 26 to August 22, 2020). The 11 anti-Muslim groups published 2068 posts, while the 11 anti-hate groups published 2801 posts. Figure 7 shows the coordination-interval distributions for both groups. On average, the coordination interval for anti-Muslim groups was 77.7 min, while for anti-hate groups, the interval was 218.8 min. This indicates that anti-Muslim groups were approximately three times faster at spreading hate than the other group was at combating it.
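The “approximately three times faster” figure follows directly from the two reported averages (218.8 / 77.7 ≈ 2.8). The sketch below reproduces that ratio and also shows one simple way an average sharing gap could be computed from share timestamps. The gap definition used here (time from the first share of a URL to each later share) is an assumption made for illustration and may differ from how CooRnet estimates the coordination interval; the function name and the toy timestamps are hypothetical.

```python
def mean_gap_minutes(share_times_by_url):
    """Mean gap, in minutes, between the first share of each URL and each
    later share of the same URL. Timestamps are in seconds.
    Returns None when no URL has more than one share.
    """
    gaps = []
    for times in share_times_by_url.values():
        ordered = sorted(times)
        gaps.extend((t - ordered[0]) / 60 for t in ordered[1:])
    return sum(gaps) / len(gaps) if gaps else None


# Ratio of the two averages reported in the text (minutes).
anti_muslim_interval = 77.7
anti_hate_interval = 218.8
speed_ratio = anti_hate_interval / anti_muslim_interval

# Toy timestamps (hypothetical): gaps of 10, 20, and 5 minutes.
toy_gap = mean_gap_minutes({"u1": [0, 600, 1200], "u2": [100, 400]})
```

The ratio works out to roughly 2.8, which the text rounds to three.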

Figure 7. Coordination intervals of anti-Muslim (left) and anti-hate (right) groups.

In order to further examine the core network structure of the two groups, we created a network for each group with k-core decomposition. The core network of anti-Muslim groups is shown in Figure 8, and its anti-hate counterpart is shown in Figure 9. In each figure, the left side depicts the complete network, and the right side depicts the 19 highly connected nodes. Some of the groups identified in our first finding are also present among the 19 core nodes, as they are highly connected groups. Compared to the anti-hate groups’ density of 0.432, the anti-Muslim network has a higher density of 0.659. Supplemental Appendix A provides account names, coordinated shares, number of subscribers, and degrees of the 19 anti-Muslim groups, whereas Supplemental Appendix B provides the same details for the 19 anti-hate groups. Table 2 depicts network features for both anti-Muslim and anti-hate groups.
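For reference, the density of an undirected network without self-loops is the number of edges divided by the number of possible node pairs, 2E / (N(N − 1)). The short sketch below applies this standard formula to the node and edge counts reported for the complete anti-Muslim network (200 nodes, 13,108 edges), which gives about 0.659. That the study computed density in exactly this way is an assumption; applying the same formula to the reported anti-hate counts (973 nodes, 206,888 edges) gives a value near 0.44, close to but not identical with the reported 0.432, so that figure may rest on a slightly different edge count or definition.

```python
def undirected_density(n_nodes, n_edges):
    """Density of an undirected simple graph: edges / possible node pairs."""
    return 2 * n_edges / (n_nodes * (n_nodes - 1))


# Counts reported in the text for the complete anti-Muslim network.
anti_muslim_density = undirected_density(200, 13108)
```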

Table 2. Network Features of Anti-Muslim and Anti-Hate Groups.

Note. The second row corresponds to parameters for k-core decomposition.

Figure 8. Network of anti-Muslim public Facebook groups during the “Tablighi Jamaat controversy.”

Figure 9. Network of anti-hate public Facebook groups during the “Tablighi Jamaat controversy.”

Finding 3: Anti-Muslim Groups Shared Misinformation, While Anti-Hate Groups Shared Authentic and Verified News

In order to determine the kinds of posts shared, we analyzed the 20 most shared posts of each of the two groups. For the anti-Muslim groups, there were 11 unique links shared by 20 unique users. The top-ranked news item was shared 2429 times by the Svn Times account. This article reports on an alleged conspiracy by Tablighi Jamaat members and a famous Muslim leader to kill 100,000 Hindus via the coronavirus. We could find no website for this channel and could not verify the story because the linked page was “not found.” Out of the 11 unique news stories, only three were based on authentic news reports. The rest were fake news, misinformation, or news that could not be independently verified because no source was available (page not found). Some links originated from sources that are notorious for their anti-Muslim views. Also, out of the 20 users, all accounts except four can be identified as supporters of BJP or its leaders. On the other hand, out of the top 20 posts shared by anti-hate groups, 12 posts had a title, five had no title, one was re-shared with an added title, and two reports appeared twice. There were 15 unique accounts, of which eight were credible national-level news sources such as Outlook India, The Wire, Quint, The Lallantop, Newslaundry, and The National Herald. We could verify all 18 unique posts (two were repeated). More details about the anti-Muslim and anti-hate groups are available in Supplemental Appendices C and D, respectively.

This finding shows that anti-Muslim Facebook groups were using inauthentic sources, had a history of fake news, and were involved in pro-BJP and anti-Muslim propaganda. In contrast, anti-hate Facebook groups used authentic sources to counter the hateful fake news or misinformation spread by anti-Muslim groups.

Implications

The 11 public Facebook groups and pages that were identified as spreaders of Islamophobic hate during the TJC can be put on a blacklist so that their activities can be monitored. In order to demonstrate this, we made a “Live Display” using CrowdTangle, which is publicly available online.³ For comparison, we have also included the 11 anti-hate entities that were highly active in countering hate. Using this Live Display, we can monitor these entities’ daily and weekly activities, including which of the identified entities are most active and what kind of posts they are sharing. Also, by continuously monitoring the anti-Muslim groups, we might delay rapid link-sharing activities or alert the relevant authorities to take action against these groups when necessary. While monitoring the 11 anti-Muslim entities, we found that these groups were still actively sharing Islamophobic posts even after the TJC period. Most of the posts are Islamophobic in nature, which shows that these public Facebook groups and pages are inherently Islamophobic, and Facebook should act to stop the spread of Islamophobic hate.

The relationship between the consumption of misinformation and the use of hate speech is a critical question that merits urgent attention. Cinelli et al. (2021) explored this question and provided evidence in favor of a moderate (if not absent) relationship between online hate and misinformation. Our research analyzed the top posts shared by both anti-Muslim and anti-hate groups. Finding 3 reveals that most of the top posts shared by anti-Muslim groups are misinformation or information that came from unverified sources, whereas all of the top posts shared by anti-hate groups are from verified and authentic sources. These findings suggest that misinformation can be used as a weapon for hate speech. Hate speech and misinformation are serious problems at present, and these two evils are being used in tandem by notorious actors to aggravate tension in society. Social media researchers need to investigate this question further and propose possible solutions.

Discussion

Two discussion themes emerge from the findings of this research. The first theme is Facebook’s failure to stop hate and misinformation. As Finding 2 showed, anti-Muslim hate groups and individuals (the supporters of right-wing ideology) on Facebook spread hate-themed misinformation much faster than anti-hate groups spread their factual messaging. However, Facebook does a very poor job of policing and stopping the spread of misinformation, which encouraged harm, including violence, against Muslims in India. The second theme is the proliferation of anti-Muslim content on Facebook by right-wing Hindu-nationalist groups and individuals (who are either affiliated with BJP or are BJP supporters).

In the past, Facebook has been the subject of heavy criticism for several issues, such as misinformation surrounding the 2016 U.S. Presidential election, violations of users’ privacy, the Cambridge Analytica scandal, and psychological tests conducted on 70,000 unconsenting participants in 2012 (Meisenzahl & Canales, 2021). However, the leak of troves of internal Facebook documents in 2021 by Frances Haugen, a former Facebook employee turned whistleblower, led to one of the most scathing criticisms of Facebook. Among other things, these leaked documents revealed the hypocrisy of Facebook’s claims regarding hate speech. Publicly, Facebook claimed that it removes more than 90% of hate speech; however, in private internal communication, the company says the figure is only 3%‒5%. Such deception on an issue as crucial as hate speech was shocking and exposed Facebook’s ineffective content moderation (Giansiracusa, 2021).

In August 2020, Facebook came under fire for not removing anti-Muslim posts by a BJP politician, Mr T. Raja Singh, that flouted its rules against hate speech. Amid the growing political storm over its handling of extremist content, Facebook eventually banned the politician. Facebook’s top policy official in India, Ms Ankhi Das, had opposed banning Mr Singh. In communications to Facebook staff, she said that punishing violations by PM Narendra Modi’s party could hurt the company’s business interests in India. Although, unsurprisingly, Facebook denied all allegations of political bias, Ms Das stepped down some months later, citing personal reasons (Horwitz & Purnell, 2020; Perrigo, 2020a, 2020b; Purnell & Roy, 2020b). However, the October 2021 leak of internal Facebook documents (which later came to be known as “the Facebook Papers”), collected by whistleblower Frances Haugen, once again raised the issue of political bias at Facebook India. These documents showed that Facebook conducted an internal study (Adversarial Harmful Networks: India Case Study) and knew that RSS and its affiliates were pushing anti-Muslim hate content on its platform but did not take any action, again citing its business interests in India (Pahwa, 2021; Sekhose, 2021). Our findings provide further evidence of how pro-BJP Hindu nationalist Facebook groups spread anti-Muslim hate content during the COVID-19 pandemic and show that these groups are still actively spreading the same kind of Islamophobic content. This raises questions about Facebook’s content moderation policy (Facebook, 2022; Patel & Hecht-Felella, 2021) and its lack of expertise and resources to deal with problematic content (especially content containing hate speech) coming from the Indian sub-continent. In December 2021, Facebook released an “Adversarial Threat Report,” an end-of-year report on Coordinated Inauthentic Behavior (CIB) and Brigading and Mass Reporting (Facebook, 2021). It only mentions the removal of CIB from China, Palestine, Poland, and Belarus. India does not feature in this report. Facebook’s approach of protecting its business interests in India by not angering the ruling political party is a serious violation not only of the social media platform’s tenet of non-partisanship but also of the people’s trust. If Facebook is serious about curbing hate speech on its platform, it should show through its policies and behavior that it is ready to take action against anyone involved in spreading hate, irrespective of their political affiliation.

The results of a recent study based on a survey and in-depth interviews of 1056 Muslims in India showed that at least 60% of the participants had come across content on digital platforms (including Twitter, Facebook, WhatsApp, Reddit, and GitHub) that incites violence against Muslims, and that this anti-Muslim hate reflects the broader political ideology of Hindutva, which is rooted in the othering of Muslims (Dutta, 2022; Roy, 2022). Similarly, an investigative report analyzed the accounts of “Prashasak Samiti,” a social media network, on different platforms (it has two Facebook pages, one of which has 1,24,645 followers) and found that on all its social media platforms (Twitter, Facebook, Instagram, Telegram, and WhatsApp), this group is spreading false news and hate speech targeting Indian Muslims and other minority communities (Goyal, 2022). Moreover, this trend of Islamophobic hate speech by Hindu nationalists is not limited to India. Thanks to social media groups dedicated to Indian citizens in the diaspora, antagonism toward Muslims by the supporters of BJP has gone global (Zhou, 2020). Our research also shows that many of the Facebook groups that were actively sharing Islamophobic content, such as Dr Subramanian Swamy, Saffron Bharat, We Support Citizenship Amendment Act (CAA) (CAB) & NRC, and MODIfied India, are supporters of BJP and its anti-Muslim policies.

Later this year (August 15, 2022), India will celebrate the 75th year of its Independence. While India did not break up as Winston Churchill predicted, several major fault lines persist, and one such fault line is religious polarization. Hindutva, the core ideology of BJP, is an ideology of religious polarization. Since BJP came to power in 2014, religious polarization has reached a new level, where supporters of Hindutva ideology try to use even small incidents to further polarize Indian society. These communal actors have used social media platforms to spread hateful ideology and content with speed to the farthest corners of India. On top of that, the inaction of the corporations that own these social media platforms, especially to protect their business interests, has made matters worse. The Indian government’s disregard for religious hate and the lack of strict action by social media platforms (Facebook in the present case) to stop online hate are the two main culprits for the current hateful environment in India. Finally, the growing religious polarization is good neither for India’s global image nor for its economy. In India, the opposition political parties and national and international civil rights organizations should pressure the Indian government to take action against those who spread hate.

Future Research

We collected data for our analysis through Facebook’s metrics platform “CrowdTangle,” which covers only public pages, public groups, and verified profiles on the platform. This restriction is entirely appropriate, as it protects the privacy of Facebook users, but it meant that we were unable to trace the further dissemination of Tablighi Jamaat–related Islamophobic hate through closed groups, direct messaging, or private or semi-private personal profiles (e.g., profiles whose posts are available only to friends or friends of friends). Thus, our analysis is limited to the fully public spaces on the wider Facebook platform. Reddit is another social media platform that can provide interesting insights for hate-speech research; however, we focused on Facebook for this study. In future research, we would like to analyze multiple social media platforms, including Reddit, in order to understand Islamophobic hate speech in India. As hate speech and misinformation on social media platforms are a global problem, it would also be important to conduct a comparative analysis of two or more countries to understand the different discourses and narratives surrounding these issues.

Supplemental Material

Supplemental material, sj-pdf-1-sms-10.1177_20563051221129151 for Rapid Sharing of Islamophobic Hate on Facebook: The Case of the Tablighi Jamaat Controversy by Piyush Ghasiya and Kazutoshi Sasahara in Social Media + Society

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Ethical Approval

All data were collected from Public Facebook Groups or Pages made available by Facebook’s CrowdTangle platform and hence comply with the privacy policy of Facebook.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported by the JST CREST (JPMJCR20D3).

Supplemental Material

Supplemental material for this article is available online.
