Abstract

Recently,

Keywords

Introduction

Social media platforms like Facebook, Instagram, or Twitter have become an established part of the modern media diet (Newman et al., 2017), and communication is now more often than ever mediated through online technologies. However, not all partners in online interactions are honest, or even human. Fully or semi-automated user accounts, so-called

Social bots and their influence are heavily discussed in the context of political manipulation and disinformation (Bessi & Ferrara, 2016; Ferrara et al., 2016; Kollanyi et al., 2016; Ross et al., 2019), leading governments across the globe to strive for regulation. Yet, as the detection of social bots remains a challenge, the actual number of social bots and the details of their realization remain unclear. Quantitatively, different figures exist: Varol et al. (2017) estimated that 9%–15% of active Twitter accounts are social bots, while the platforms themselves report absolute numbers in the millions of accounts (Roth & Harvey, 2018). Both figures should be used with caution, as the underlying detection tools have been found to be insufficiently precise in distinguishing social (spam) bots from other (human) pseudo-users or humans (Cresci et al., 2017; Grimme et al., 2018). Hence, quantitative statements on social bots, whether relative or absolute, remain speculative to some extent.

What is clear, however, is that social bots need a technical infrastructure, which can be broadly understood as the combination of (a) a profile on a social media platform and (b) the technical preconditions for partially automating the account’s behavior through the respective platform’s

Contribution

This article is the first to provide the results of a comprehensive market analysis of the online sales areas for social bots and related commodities in the so-called

Structure

The remainder of this paper is organized as follows. First, we review the literature to define the core concepts of intelligence and automation underlying our work and summarize prior research on social bots. Building on this state of the art, we present an initial investigation of the markets for social bots on (English and German) clearnet and darknet venues. Next, we provide a comprehensive overview of social bot code on free online code sharing platforms. Taken together, this article offers an overview of the social bot ecosystem and the developmental state of social bots, providing much-needed empirical insights for the ongoing debate. We review this debate in light of our findings in the final section.

Related Work

Investigating intelligence in social bots requires a brief discussion of the notion of intelligence and of the general understanding of bots, as well as a summary of important related work, based on a structured literature review, to complement the empirical findings of this article.

Notions of Intelligence and Automation

As stated by many authors and summarized by Legg and Hutter (2007) in a list of popular definitions of intelligence across disciplines, intelligence remains an ambiguous concept. While Boring (1923) defines intelligence in a very indirect (and somewhat circular) way as what is measured by an intelligence test, Bingham (1937) defines it as “the ability of an organism to solve new problems.” In a later definition that summarizes much of the prior development in the field, Gottfredson (1997) broadened the perspective, stating that “Intelligence is a very general mental capability that [ . . . ] involves the ability to reason, plan, solve problems, think abstractly, comprehend complex ideas, learn quickly and learn from experience. [ . . . ] Rather, it reflects a broader and deeper capability for comprehending our surroundings—catching on, making sense of things, or figuring out what to do” (p. 230).

Although many other perspectives from different disciplines exist, see for example, the

Considering intelligence in the context of human–computer interaction, Alan Turing (1950) extensively discussed a communication-level test in his work “Computing Machinery and Intelligence.” Using the term “imitation game,” Turing defined an experiment to challenge machines for their intelligence: with the identity of the communication partner concealed, can an interrogator determine, solely through questions and answers, whether he or she is talking to a human or a machine? Notably, Turing already connected the question of whether machines could pass this test with the notion of learning machines, an idea pursued today in the context of machine learning and AI.

As we deal with bots in this work, we are specifically interested in AI in automation. The term “bot” originates from “robot” and is used synonymously with automation in this article. Here, a bot is not necessarily a physical robot but may be represented solely by a software system.

As motivated earlier, we are especially interested in bots appearing on social media platforms, commonly known as social bots. Social bots are gaining increasing attention in public discussions and in multiple research fields (Ferrara et al., 2016). Broadly, a social bot can be understood as “a super-ordinate concept, which summarizes different types of (semi-) automatic agents. These agents are designed to fulfill a specific purpose by means of one- or many-sided communication in online media” (Grimme et al., 2017).

From this perspective, a

In other contexts, the purposes of social bot employment might be less innocuous. Particularly in political contexts, sometimes the term

Finally, an area of current intelligent bot development is the creation and utilization of

Review on Bot Intelligence

To set a contextual basis for the empirical study, we conducted a structured literature review following vom Brocke et al. (2009). The goal of the review is (a) to establish a common understanding of the intelligence of social bots and (b) to find examples in the literature that discuss the development or evolution of bot intelligence. An investigation of the implications and impacts of (intelligent) bots on society is strongly connected to their technological potential (Millimaggi & Daniel, 2019) but lies beyond the scope of this work. For an interesting and highly interdisciplinary discussion of this topic, we refer the reader to a collection of academic essays edited by Gehl and Bakardjieva (2016/2017).

Using Scopus as an indicator of the academic debate, we searched for all papers containing the terms “intelligent” or “intelligence” in conjunction with “chat bot,” “political bot,” or “social bot.” Overall, 206 papers were identified and supplemented with contextual literature, finally covering the period from Alan Turing’s “Computing Machinery and Intelligence” up to Cresci (2020).

Since Turing’s proposal, creating a computer program able to imitate human-like behavior became the focus of early “chatterbot” development. Such programs were originally constructed to act within a human–computer conversation setting (text- or speech-based) and ultimately to pass the Turing Test within a predefined scope (Abdul-Kader & Woods, 2015; Jafarpour & Burges, 2010). The earliest implementations, such as the famous ELIZA chat bot, were simple rule-based systems that used predefined sentences from a knowledge database to construct responses based on what the interrogator had said (Weizenbaum, 1966). Chat bots are often frameworks composed of different subsystems that interact with each other to create believable human-like output. The most important component is referred to as the

With the rise of social platforms in the early 2000s, a new type of bot, the social bot, came into play. Boshmaf et al. (2011) were among the first to describe social bots as computer-controlled social network accounts that mimic human behavior, similar to chat bots. They also noted that social bots can appear in groups, called botnets, and can be employed to spread spam or to support politicians and others by pushing campaigns. According to Boshmaf et al. (2011), the army of simple bots is steered by a so-called

Most studies describe the intelligence of social bots as limited to malicious spam attacks or the extraction of user data. Spam attacks are carried out by a group of bots, each of which is able to send requests and post or share predefined content (Zhang et al., 2013). To extract user data, bots typically send out friend requests (Wald et al., 2013). Afterwards, data such as birth dates or phone numbers can easily be collected through API calls (Boshmaf et al., 2013). This is comparable to the

Over the years, social bots became increasingly important as political actors (Hegelich & Janetzko, 2016). A spam bot architecture can be used to spread political opinions or disinformation, for example, during election periods. The malicious use of social bots created the need for social bot detection strategies. Although the intelligence of social bots seems limited to easy tasks (such as sharing predefined content and connecting with other users), detecting them is difficult (Ferrara et al., 2016), especially when some “simple” human mimicry, such as a day-and-night rhythm, is added. Researchers approach bot detection by applying machine learning (Dewangan & Kaushal, 2016) or reverse engineering strategies (Freitas et al., 2015).

Because bot detection is difficult, the current level of intelligence in social bots, that is, their ability to create content, often remains vague. According to the literature, human behavior is mimicked by spreading predefined posts, sharing existing posts, or searching the Internet for content to share (Appling & Briscoe, 2017). Beyond such external sources, social bots could be enhanced with chat bot technology (Boshmaf et al., 2011). Ferrara (2017) reports on a dark market where customized social bots can be bought to support campaigns on social media.
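The rule-based approach of early chat bots such as ELIZA (Weizenbaum, 1966), the kind of chat bot technology referred to above, can be illustrated in a few lines of Python. This is a hypothetical sketch, not ELIZA’s original script: the rules, the pronoun reflections, and the function name `respond` are our own assumptions. It only shows how predefined sentence templates are filled with fragments of what the interlocutor said.

```python
import re

# Hypothetical ELIZA-style rules: (pattern, response template).
# A template may reuse the text captured from the user's input.
RULES = [
    (re.compile(r"\bi need (.+)", re.IGNORECASE), "Why do you need {0}?"),
    (re.compile(r"\bi am (.+)", re.IGNORECASE), "How long have you been {0}?"),
    (re.compile(r"\bbecause (.+)", re.IGNORECASE), "Is that the real reason?"),
]

# Swap first- and second-person words so an echoed fragment reads naturally.
REFLECTIONS = {"i": "you", "me": "you", "my": "your", "am": "are", "you": "I", "your": "my"}

FALLBACK = "Please tell me more."


def reflect(fragment: str) -> str:
    """Swap first- and second-person words in a captured fragment."""
    return " ".join(REFLECTIONS.get(word, word) for word in fragment.lower().split())


def respond(utterance: str) -> str:
    """Return the first matching predefined template, filled with the reflected capture."""
    text = utterance.strip().rstrip(".!?")
    for pattern, template in RULES:
        match = pattern.search(text)
        if match:
            captured = [reflect(group) for group in match.groups()]
            # str.format ignores surplus arguments, so templates may omit {0}.
            return template.format(*captured)
    return FALLBACK
```

For instance, `respond("I need my coffee.")` returns "Why do you need your coffee?". Nothing in such a system models meaning, which is why it appears human-like only within a narrow, predefined scope.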

With the rise of neural computing and introduction of sophisticated neural architectures such as Recurrent Neural- and Long Short-Term Memory (LSTM) Networks (Hochreiter & Schmidhuber, 1997), researchers started to build more complex chat bot systems which mainly improved the

While social bot detection mechanisms get more and more sophisticated (e.g.,

Little has been published on the subject of bot technology so far. Notable exceptions are a (very simple) example bot code provided by Grimme et al. (2017), the work of Kollanyi (2016), which partially inspired this study, and a recent paper by Millimaggi and Daniel (2019), which analyzes social bot code for Twitter, focusing on a small, selected set of implementations to extract potentially harmful behavioral patterns.

This study contributes to the literature through both a comprehensive analysis of dark markets and an extensive examination of software repositories, and it seeks to draw conclusions about the intelligence potential of present-day social bots. This may serve as a new building block for discussing the influence of social bots on society.

Clearnet and Darknet Market Places

Data Acquisition

We investigated clearnet and darknet markets in a systematic field study that mirrors the market access of “average” Internet users. Since social bots are highly debated as tools of political manipulation and malicious trading, we focused on the market situation before a large democratic election, namely the German parliamentary election in 2017. Accordingly, the analysis focuses on German and English markets. Markets were identified through a triangulation of the scientific and “grey” literature as well as through specialized online resources (e.g., darknet-related Reddit threads or the now defunct deepdotweb). We included in the database all markets that were online at the time of data collection and traded at least one item related to social bot technology. A total of

Search Terms Used to Determine Clear- and Darknet Markets in Alphabetical Order.

Data Description

Overall, social bot-related market places and commodities are more prevalent in the clearnet as compared to the darknet. Collecting descriptions of all commodities identified on the few darknet markets offering relevant items (

Targeted Social Media Platforms

In both the clearnet and the darknet, social bot-related commodities were available for every major social media platform and online service. Wide-reaching social media sites dominated the market. Of the

Capabilities

Slightly more than one-third (36%) of the commodities advertised specific capabilities, allowing insights into the “intelligence” of the offered service or product. Most of them advertised simple amplification functions such as liking or sharing content (20%), followed by the (technically still rather simple) simulation of social connections through following others or providing fake followers (14%). Only 2% of commodities advertised “intelligent” functionality.

A closer inspection of the latter

The majority (51%) of commodities, however, served criminal purposes not directly related to the kind of manipulation discussed in the context of social bots (e.g., spreading Trojans, exploiting SQL vulnerabilities, or conducting DDoS attacks).

Free Online Code Sharing Platforms

Data Acquisition

We used the Alexa global usage statistics to identify the five most relevant collaboration platforms. The Alexa rank is a metric that can be used to evaluate the importance of a website; it combines traffic measures such as page views and their development over time. Since the collaboration platforms are structured differently, it was infeasible to establish a common and comparable procedure for searching for specific bot programs. The largest platform, GitHub, offers a detailed search engine in which explicit search criteria can be applied to different repository fields, and GitLab offers a similar search API. We selected the search terms to be as generic as possible: we combined the name of each social platform with the term “bot” through a logical AND operator. For GitHub, GitLab, and Bitbucket, a dedicated crawler was programmed that automatically gathered the repositories’ information for all search term combinations. While GitHub and GitLab explicitly provide an external API for searching, Bitbucket is not as easily accessible; we therefore used a web scraping framework to collect the relevant information there. The remaining platforms, SourceForge and Launchpad, were queried manually through their web interfaces because of the low number of matching repositories on those platforms.
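The query construction described above (each social platform name combined with “bot” via a logical AND) might look roughly as follows for GitHub. This is a sketch under assumptions: the platform list is illustrative rather than the study’s exact term list, and the URL targets GitHub’s public repository search endpoint (`https://api.github.com/search/repositories`), whose `q` parameter treats space-separated terms as a conjunction. Pagination loops, authentication, and rate limiting, which a real crawler must handle, are omitted, and no request is actually sent.

```python
from urllib.parse import urlencode

# Illustrative platform names (an assumption, not the study's exact term list).
PLATFORMS = ["facebook", "instagram", "reddit", "telegram", "twitter", "whatsapp"]

GITHUB_SEARCH = "https://api.github.com/search/repositories"


def build_queries(platforms, term="bot"):
    """Combine each platform name with the bot term; GitHub search ANDs space-separated terms."""
    return [f"{platform} {term}" for platform in platforms]


def search_url(query, page=1, per_page=100):
    """Build (but do not send) a repository-search URL for one query and one result page."""
    params = {"q": query, "page": page, "per_page": per_page}
    return f"{GITHUB_SEARCH}?{urlencode(params)}"
```

Calling `search_url(q)` for each `q` in `build_queries(PLATFORMS)` yields one starting URL per search term combination; a crawler would then increment `page` until no further results are returned.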

To allow for time-efficient crawling and to avoid noise in the data set due to temporal developments during data collection, we imposed the following limitations on our gathering process. First, we did not download the actual files (source code) of the repositories, since our analysis is mainly based on metadata. Second, we disregarded the history of individual commits (code contributions) in all repositories. Although these data may provide interesting insights, collecting them would have significantly increased the number of required API requests. Instead, we limited our analysis to the first and last contributions. Due to the heterogeneous structure of the collaboration platforms, we defined a common intermediate schema for data representation (see Supplementary Figure S11). Although some platforms provide metadata (e.g., the location attribute on GitHub) that are not present on other platforms, we include this additional information in our analysis. This especially holds for GitHub, which hosts more than 90% of all repositories.
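The study’s actual intermediate schema is documented in Supplementary Figure S11 and is not reproduced here. As a hypothetical illustration of the idea, a normalized record could hold platform-independent fields, the first and last contribution dates instead of the full commit history, and optional platform-specific metadata such as GitHub’s location attribute. All field names and the `from_github` mapping are assumptions; the first and last contributions are approximated here by GitHub’s `created_at` and `pushed_at` repository timestamps, and the owner’s location is passed in separately because it belongs to the user profile rather than the repository.

```python
from dataclasses import dataclass, field
from datetime import datetime
from typing import Optional


@dataclass
class RepositoryRecord:
    """Hypothetical platform-independent representation of one bot repository."""
    source: str                    # e.g. "github", "gitlab", "bitbucket"
    name: str
    description: str
    language: Optional[str]
    first_contribution: datetime   # approximated by the creation timestamp
    last_contribution: datetime    # approximated by the last push timestamp
    extra: dict = field(default_factory=dict)  # platform-specific metadata


def _parse_ts(value: str) -> datetime:
    """Parse ISO 8601 timestamps such as '2015-06-24T10:00:00Z' into aware datetimes."""
    return datetime.fromisoformat(value.replace("Z", "+00:00"))


def from_github(repo: dict, owner_location: Optional[str] = None) -> RepositoryRecord:
    """Map one GitHub repository-search item onto the hypothetical intermediate schema."""
    extra = {"stars": repo.get("stargazers_count", 0), "forks": repo.get("forks_count", 0)}
    if owner_location:
        # Location is an attribute of the owner's profile, not of the repository.
        extra["location"] = owner_location
    return RepositoryRecord(
        source="github",
        name=repo["full_name"],
        description=repo.get("description") or "",
        language=repo.get("language"),
        first_contribution=_parse_ts(repo["created_at"]),
        last_contribution=_parse_ts(repo["pushed_at"]),
        extra=extra,
    )
```

Analogous `from_gitlab` or `from_bitbucket` mappers would fill the same fields and simply leave `extra` empty where a platform lacks a given attribute.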

Data Description

The data of

Top Five Code Repository Hosting Platforms.

Targeted Social Media Platforms

Across all collaboration platforms, we observed a similar distribution of social bot repositories across the targeted social media platforms. Most bot code was produced for Telegram (52%), followed by Twitter (24.5%), Facebook (9%), Reddit (9%), and Instagram (2%).

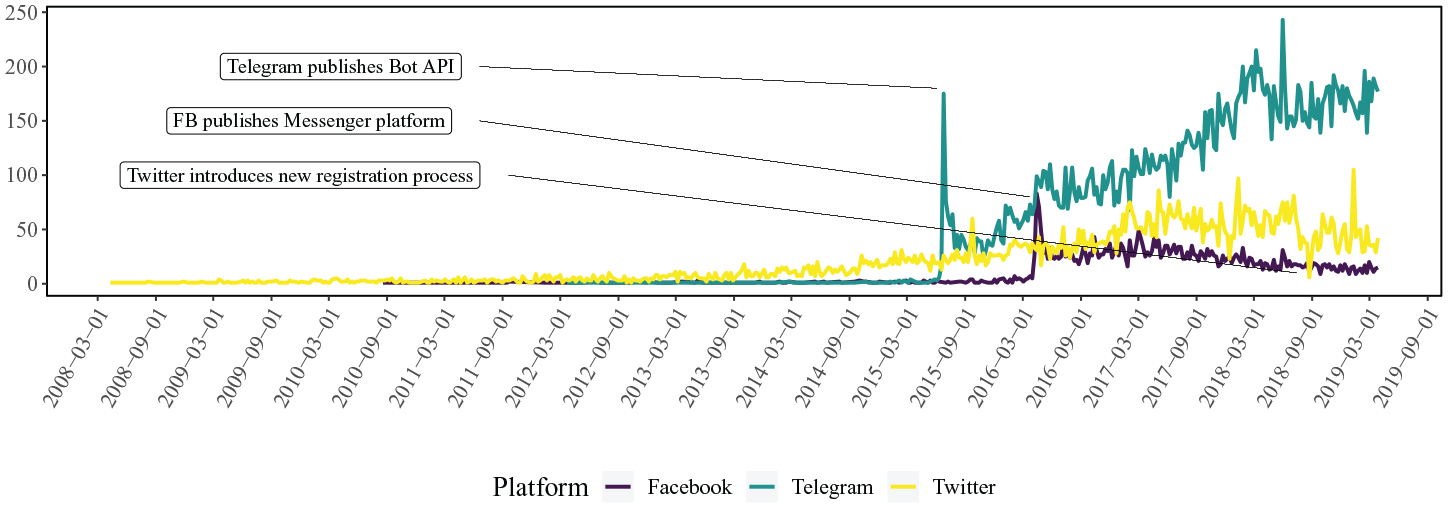

At first sight, this is a surprising result, since Telegram is not considered one of the big social media players and the platform has only existed since 2013. A detailed inspection of the creation dates of Telegram-oriented repositories revealed that until 2015 the platform did not receive much attention. This changed in June and July 2015, when a significant increase in the number of related projects can be observed. We attribute this sudden increase to the fact that Telegram officially launched its open bot platform on 24 June 2015, making it easy for programmers to create automated bot programs through an external API. The reverse effect can be observed for the number of newly created Twitter bot repositories in summer 2018 (see Figure 1). Twitter revised the registration process for developer accounts multiple times, making it successively more difficult to register new applications. While these new restrictions impede the development of new large-scale social bot networks, they also limit the development of sophisticated detection mechanisms that rely on forensic analyses of large amounts of data.
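A weekly series such as the one in Figure 1 amounts to bucketing repository creation dates by calendar week. A minimal sketch, assuming creation timestamps are available as `datetime` objects and using ISO weeks (our assumption; the exact binning behind Figure 1 is not stated), could look as follows.

```python
from collections import Counter
from datetime import datetime
from typing import Dict, Iterable, Tuple


def weekly_counts(created: Iterable[datetime]) -> Dict[Tuple[int, int], int]:
    """Count repositories per ISO (year, week) bucket, sorted chronologically."""
    counts: Counter = Counter()
    for ts in created:
        iso = ts.isocalendar()          # (ISO year, ISO week, ISO weekday)
        counts[(iso[0], iso[1])] += 1
    return dict(sorted(counts.items()))
```

Keying on the ISO year rather than the calendar year keeps weeks that straddle New Year in a single bucket. Weeks without any new repository are simply absent and would need to be filled with zeros before plotting.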

Number of newly created repositories per week.

Across all platforms, we observed a positive Spearman rank correlation between the number of repositories found for a specific social platform and the corresponding level of API support (ρ = .75). All of these examples emphasize the crucial role of technological affordances for the availability of automation tools, showing how platform decisions affect the availability and spread of social bots on individual platforms. From an even broader perspective, and with respect to the targeted social media platforms, we observe that the availability of social bot code on open collaboration platforms runs contrary to the availability of commodities offered on clearnet and darknet marketplaces (see Figure 2). This suggests that the technical accessibility of a social media platform directly determined whether social bot services were predominantly offered as open software or as paid services.
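Spearman’s ρ is Pearson’s correlation computed on ranks, with tied values receiving the average of the ranks they span. The sketch below implements this definition from scratch. How the study encoded the “level of API support” is not specified in this section, so any inputs passed to `spearman_rho` here are toy values for illustration only and do not reproduce the reported ρ = .75.

```python
from math import sqrt
from typing import List, Sequence


def average_ranks(values: Sequence[float]) -> List[float]:
    """Rank values from 1..n, giving tied values the mean of the ranks they span."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values tied with the one at sorted position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1  # sorted positions are 0-based, ranks 1-based
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def spearman_rho(x: Sequence[float], y: Sequence[float]) -> float:
    """Spearman's rank correlation: Pearson correlation of the average ranks."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    rx, ry = average_ranks(x), average_ranks(y)
    mx, my = sum(rx) / len(rx), sum(ry) / len(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sx = sqrt(sum((a - mx) ** 2 for a in rx))
    sy = sqrt(sum((b - my) ** 2 for b in ry))
    if sx == 0 or sy == 0:
        raise ValueError("rho is undefined when one variable is constant")
    return cov / (sx * sy)
```

With an ordinal API-support score, ties are common, which is why the average-rank treatment matters; the shortcut formula 1 − 6Σd²/(n(n² − 1)) is exact only without ties.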

Relative share of bot commodities for the clear/darknet and collaboration platforms.

The APIs of the platforms can be accessed from different programming languages. The most common programming language across all platforms is Python, although JavaScript is also frequently used. This is plausible, as Facebook explicitly provides a JavaScript toolkit. In addition, JavaScript is used to access the web interface of platforms with restricted APIs (e.g., Instagram).

Kollanyi (2016) investigated the geographical origin of Twitter bot repositories. We build on this approach and present an updated version of the contributor distribution in Figure 3. In line with Kollanyi’s work, the majority of Twitter-related repositories originated from the United States. Our updated version reveals that the United Kingdom has caught up with Japan and now follows the United States with the second largest number of bot-related repositories. Going beyond the analysis conducted by Kollanyi, we can directly compare the geographical distribution of repositories across all major social media platforms. Here, we observed marked differences: while Russia did not play an important role in the context of Twitter bots, most Telegram bot code owners were from that country, presumably due to the popularity of Telegram among the Russian population (Karasz, 2018). For WhatsApp-related bot development, India was the main geographical origin of repositories, as this medium was an important information source and networking platform in that region. Overall, we might infer that the regional social media diet is reflected in the geo-spatial distribution of repositories (Raina et al., 2018).
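The per-platform comparison of geographical origins amounts to grouping (platform, country) pairs and normalizing the counts within each platform. The sketch below assumes that each repository has already been mapped to a country code; in practice, free-text profile locations must first be geocoded, a nontrivial step not shown here. Repositories without usable location information are skipped, so the shares refer only to repositories with a known country.

```python
from collections import Counter, defaultdict
from typing import Dict, Iterable, Optional, Tuple


def country_shares(
    records: Iterable[Tuple[str, Optional[str]]]
) -> Dict[str, Dict[str, float]]:
    """Return {platform: {country: share}}; pairs with a missing country are skipped."""
    per_platform: Dict[str, Counter] = defaultdict(Counter)
    for platform, country in records:
        if country is not None:
            per_platform[platform][country] += 1
    shares: Dict[str, Dict[str, float]] = {}
    for platform, counts in per_platform.items():
        total = sum(counts.values())
        # most_common() orders countries from largest to smallest share.
        shares[platform] = {country: n / total for country, n in counts.most_common()}
    return shares
```

Normalizing within each platform makes platforms of very different sizes (e.g., Telegram versus WhatsApp) directly comparable.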

Global geographical distribution of Twitter, Telegram, and WhatsApp repositories determined by evaluating the geographical information provided by the considered software collaboration platforms.

Bot Capabilities

Identifying the capabilities, operational scenarios, and associated implementation effort of automated bot programs in the context of social media platforms is regarded as one of the major goals of this study.

Therefore, the content as well as the overall topics of the available social bot code were of central interest. Since manual inspection of the code base or the description of each repository is infeasible given the number of gathered repositories, we approached the data in two ways. In a first step, we applied dominance filtering from normative decision theory (Peterson, 2009) to restrict the number of “interesting” repositories for detailed manual investigation. This “interest value” of repositories was determined through three different indicators: the

Apart from these native social bot functionalities, the largest proportion (

Visualized LDA results and topic distribution of five identified topics. To plot the topics onto a two-dimensional surface, we applied t-SNE (van der Maaten & Hinton, 2008) on the original documents (project descriptions). All documents were represented as 20-dimensional topic distribution vectors and subsequently transformed into a low dimensional space. We colored each document according to the most dominant topic and explicitly excluded descriptions with uncertain topic belonging. The visualization shows that with the chosen number of topics (

Surprisingly, none of the top representatives among the resulting topics contained concepts related to modern AI or state-of-the-art machine learning algorithms.
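Dominance filtering, as used above, retains only repositories that are not Pareto-dominated, that is, for which no other repository is at least as good on every indicator and strictly better on at least one. Since the specific indicators are not listed here, the indicator tuples in this sketch are hypothetical placeholders, and higher values are assumed to be better. The function `dominant_topic` illustrates, under an assumed probability threshold of 0.5, how each project description can be assigned its most dominant LDA topic while descriptions with uncertain topic membership are excluded, as in the topic visualization above.

```python
from typing import Dict, List, Optional, Sequence


def dominates(a: Sequence[float], b: Sequence[float]) -> bool:
    """True if a is at least as good as b on every indicator and strictly better on one."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))


def non_dominated(indicators: Dict[str, Sequence[float]]) -> List[str]:
    """Names of repositories not dominated by any other repository (simple O(n^2) scan)."""
    return [
        name
        for name, vec in indicators.items()
        if not any(dominates(other, vec) for o, other in indicators.items() if o != name)
    ]


def dominant_topic(distribution: Sequence[float], threshold: float = 0.5) -> Optional[int]:
    """Index of the most probable topic, or None if its probability is below the threshold."""
    best = max(range(len(distribution)), key=lambda k: distribution[k])
    return best if distribution[best] >= threshold else None
```

Identical indicator vectors do not dominate each other, so ties all survive the filter. The quadratic scan is adequate for a few thousand repositories; larger sets would call for a sort-based skyline algorithm.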

Relevance and Methods of Artificial Intelligence

The results of our interest filtering and topic modeling indicate that AI techniques were rarely used and therefore played a minor role in the bot-creation community at the time of data collection. Nonetheless, it is important to identify which techniques are suitable for creating more sophisticated bots. Especially in light of recent advances in natural language generation (NLG; Radford et al., 2019; Vaswani et al., 2017), we wanted to evaluate whether these techniques were already part of the established bot-creation tool set. To provide a more concrete picture of the state of the art of intelligent social bots, we manually extracted and analyzed repositories that contained relevant keywords (e.g., machine learning, deep learning, or AI) or entailed specific algorithms and technologies, for example,

A large fraction (25%) of the identified repositories (

Interestingly, we also discovered that

The remaining

Discussion

The observations from the different descriptive analyses together imply that we can distinguish two scenarios with respect to the effort of social bot development. The first and simple scenario is one in which bot operators aim for trivial usage of the available APIs of social media platforms, performing amplifying actions like posting, favoriting, or sharing. For these purposes, off-the-shelf software is freely available and sufficient. In addition, there is a set of proprietary interfaces and frameworks that easily enable such tasks.

More expensive, though probably still feasible, is the realization of advanced social bots that simulate human behavior at the activity level, but not in interaction with others. Openly available social bot frameworks (some even well established and continuously maintained) allowed ready-to-use building blocks to be enriched with more complex code. Experiments by Grimme et al. (2017, 2018) demonstrated that such extensions are feasible and require only medium technical expertise. It has to be emphasized that even these presumably simple mechanisms can already cause substantial harm to society if they are used at large scale (Ross et al., 2019).

A major gap, however, can be identified between the available open software components and the sometimes postulated existence of intelligently acting bots—that is, bots which are able to produce original content (related to a defined topic), provide reasonable answers to comments, or even discuss topics with other users. The absence of software tools for such (intelligent) tasks can have multiple reasons:

The development of those techniques is too difficult and too costly for them to be provided to the public. This is a plausible explanation; however, we would then expect such software to be offered in commodity markets, either on the clearnet or, more probably, on darknet platforms. Yet we did not find any such commodities in our market analysis and thus assume that the commodities offered comprise tools of simple to medium complexity.

Existing code may be shared in an obfuscated way. This approach would contradict market mechanisms (the goal of earning money with provided service) as well as the paradigm of software sharing. Hiding potent software products is plausible for secret services but not for the developer community or commodity providers.

Techniques for realizing “intelligent” social bots are scientifically too advanced to be the subject of current development. It is plausible to assume that social bots are usually applied by groups or persons who have neither sufficient resources nor time for advanced research in AI. It is thus only pragmatic to assume that hiring human agents who impersonate multiple (fake) accounts is far more time- and cost-effective than developing and controlling intelligent automatons.

Recent reports on experimental intelligent social bots by large software companies support the last assumption. These reports suggest that there is currently no productive “intelligent” software available (Hempel, 2017; Ohlheiser, 2016). Other observations show that “intelligence” at the conversational level is (intentionally) restricted to advanced chat bot capabilities (Stuart-Ulin, 2018), as simple learning approaches are too susceptible to external manipulation (Ohlheiser, 2016). As such, realizing “intelligent” bots can currently be considered infeasible.

Conclusion and Future Perspectives

In this work, we investigated the reported capabilities regarding the intelligence of automated (bot) programs and compared them with existing social bot realizations gathered from code-sharing collaboration platforms as well as with available darknet commodities. Interestingly, we find a clear discrepancy between the theoretical, literature-based degree of intelligence and the degree achieved in practice. While sophisticated chat bot architectures that utilize deep learning techniques do exist and are discussed in the literature, we identified a predominance of rather simple social bot implementations during our data collection. Although both chat bots and social bots operate at the level of human–machine communication, a transfer of AI approaches from chat bots to social or political bots could not be observed.

As mentioned in the previous section, we can only speculate about the reasons for this discrepancy. It may be that the AI techniques available for chat bots are not sufficient for dealing with highly domain-specific content such as political debates. Alternatively, intelligent systems may simply not be needed to achieve the overall goal, namely the manipulation of humans, and simple amplification systems may already satisfy the needs of those who use such bots. As the study focused on the descriptive analysis of open bot repositories as well as clearnet and darknet market commodities, no firm conclusions about causal effects can be drawn; these should be addressed in interdisciplinary future research.

Clearly, technologies will change or advance together with research (e.g., new methods in AI) or with modifications to the technological ecosystem of social media networks (e.g., changes in APIs or the accessibility of data). Beyond one-off analyses of marketplaces and open software for social bot realization, future research should monitor bot capabilities and developments over time to track upcoming trends in social bot creation (Assenmacher et al., 2020). This can guide the development of more sophisticated and multifaceted detection techniques that go beyond the account-based, simplistic, and deterministic techniques applied today. The success of these endeavors, however, also depends on the availability of data for research and thus on the transparency and responsibility of social media platforms. Besides methodological advances in science, it is up to both politics and platform operators to enable research that keeps track of new developments and challenges.

Supplemental Material

Supplemental_Material – Supplemental material for Demystifying Social Bots: On the Intelligence of Automated Social Media Actors

Supplemental material, Supplemental_Material for Demystifying Social Bots: On the Intelligence of Automated Social Media Actors by Dennis Assenmacher, Lena Clever, Lena Frischlich, Thorsten Quandt, Heike Trautmann and Christian Grimme in Social Media + Society

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The authors acknowledge support by the German Federal Ministry of Education and Research (FKZ 16KIS0495K), the Ministry of Culture and Science of the German State of North Rhine-Westphalia (FKZ 005-1709-0001, EFRE- 0801431) and the European Research Center for Information Systems (ERCIS).

Supplemental Material

Supplemental material for this article is available online.

Notes

Author Biographies

References
