Abstract

Drawing on findings from qualitative interviews and photo elicitation, this article explores young people’s experiences of breaches of trust with social media platforms and how comfort is re-established despite continual violations. It provides rich qualitative accounts of users’ habitual relations with social media platforms. In particular, we seek to trace the process by which online affordances create conditions in which “sharing” is regarded as not only routine and benign but pleasurable, while it is the withholding of data that is abnormalized. This process has significant implications for the ethics of data collection, as it problematizes a focus on “consent” to data collection by social media platforms. Active engagement with social media, we argue, is premised on a tentative, temporary, shaky trust that is repeatedly ruptured and repaired. We seek to understand the process by which violations of privacy and trust in social media platforms are remediated by their users and rendered ordinary again through everyday habits. We argue that the processes by which users become comfortable with social media platforms through these routines call for an urgent reimagining of data privacy beyond the limited terms of consent.

Introduction: Social Media and Trust

Scandals relating to Cambridge Analytica’s data brokerage, recurrent stories about Facebook’s privacy violations, and the leaking of data from a gamut of sites ranging from online dating websites to major retailers have all helped reveal the colossal amounts of sensitive information being compiled by large organizations, both public and private, and the dubious nature of their claims to safeguard it. Indeed, it has been claimed that experiencing a data breach is now more likely than rainfall (Brockwell, 2019). How, then, do social networking sites convince users that they will be protected from the deluge of data discomforts (leaked private information, false information, the promotion of harmful content, etc.) that are becoming so frequent? The frequency of high-profile incidents involving mass surveillance has brought issues relating to the ethics of social media data collection to the fore and raised questions about how users come to re-establish trust and familiarity with platforms in such volatile times.

As the group most likely to use social media platforms (Miller-Bakewell et al., 2015; Perrin, 2015), and given the important role online spaces can play in their community building and identity formation (Hanckel et al., 2019; McCann & Southerton, 2019), young people are all too familiar with these periodic exposés. Many scholars have rejected claims that young people are ignorant of the risks of disclosing personal information online. They emphasize instead that young people’s continued participation has more to do with strong social ties formed online that make nonparticipation undesirable, even abnormal, and contend that the structures of the platforms themselves render informed decision-making about privacy difficult (Blank et al., 2014; Hargittai & Marwick, 2016; Marwick & boyd, 2014; Steeves & Regan, 2014). While many scholars have examined the so-called “privacy paradox” (Barnes, 2006; Taddicken, 2014), little is known about the lived experience of the processes by which discomfort with data vulnerability is eased.

The 2019 Edelman Trust Barometer reveals that trust has changed profoundly in the past year. In particular, “low trust” in social media as a source of information has become characteristic of Western countries and regions such as Europe, the United States, and Canada, where trust in social networking sits just over 30%. This decline in trust, however, has not translated into an exodus from social networking sites. Far from it: most major social media platforms have reported continual growth in subscribers. It is now estimated that Facebook has 2.23 billion monthly users; Instagram, 1 billion; WhatsApp, 1.5 billion; and Messenger, 1.3 billion (Constine, 2018). The continued use of social media platforms despite pervasive distrust supports existing findings that users feel “opting out” is not a viable solution to data breaches or privacy concerns (Elmer, 2003; Hargittai & Marwick, 2016). The question of precisely how trust is established, broken, and re-established, then, is a pressing one. Pink et al. (2018) argue that trust is closely connected with the interplay between routine and change, stating that “humans trust when we feel confident enough that any improvisatory action is sufficiently cushioned by the familiarity of process or place” (p. 3). This article examines the habits and routines of young people’s social media use with an orientation toward the question of trust, in order to interrogate these moments of rupture and discomfort with privacy violation. In doing so, we respond to the call of scholars such as Pink et al. (2017), who argue for greater attention to be paid to the mundane and routine practices from which data emerge.

This article is organized into five sections. First, we situate our study within existing discourse examining social media privacy and practices. In the second section, we outline our methodology: a photo-elicitation project, including interviews with young adults, focused on the theme of everyday surveillance. The third section turns to the findings, examining the ways our participants described moments of privacy violation and discomfort with social media data collection or surveillance, as well as the ways in which they became comfortable using the platforms again. In the fourth section, we examine the affordances of social media platforms, which encourage certain practices while discouraging others. We also draw on Deleuzian thinking to conceptualize the ways habits form inclinations and new desires over time, arguing that social media habits are constitutive of familiarity and comfort with the surveillance of the platforms. In the fifth and final section, we argue that the habitual and routine relations that constitute our encounters with surveillance-intensive platforms highlight the inadequacies of approaches to data ethics that remain oriented toward rational choice. We argue for a rethinking of existing approaches to the ethics of data surveillance on social media platforms, one that is capable of considering the habitual conditions from which information disclosure emerges.

Sharing and Privacy on Social Media

The mass collection of data on social media platforms has raised a number of important questions about privacy and about the circumstances under which users’ data can be collected. Contemporary debate around data ethics and privacy has largely remained oriented around the autonomy of the user (Barnard-Wills, 2012; Lyon, 2003), specifically concerned with whether users are or can be appropriately informed about what data will be collected and for what purpose (Fairfield & Shtein, 2014; Mayer-Schönberger & Cukier, 2013; Nissenbaum, 2011). The “notice-and-consent,” or “informed consent,” model remains the standard of most online privacy policies, and contemporary debate has focused on critiquing the key dimensions of this model (Barocas & Nissenbaum, 2009; Bechmann, 2014; Custers, 2016; Nissenbaum, 2011). Notice-and-consent practices require the informed consent of participants based on information provided by the data collector about the way the data will be collected, stored, and disseminated. Crucially, it is the “informed” dimension of consent that is key to this framework, the assumption being that individuals rationally evaluate the information given to them and, on that basis, make an informed choice about whether they wish to participate in the collection of data.

While the informed consent model has been criticized, the focus of this critique has largely been the question of what constitutes properly “informed” consent (Andrejevic, 2010; Bechmann, 2014; Flick, 2016; Fuchs, 2017), without questioning the decision-making subject this focus on consent privileges. Whether individuals can truly consent has been substantially critiqued on the grounds that the desirability of social networking sites renders consent problematic from the outset. Furthermore, the resale of big data and unpredictable future uses of data are argued to be another key concern about whether consent can be considered genuine. Individuals cannot adequately be informed about, and thus consent to, secondary uses of their data that have not even been considered at the time the data are collected or at the time they give consent (Barocas & Nissenbaum, 2009; Custers, 2016; Fairfield & Shtein, 2014; Mayer-Schönberger & Cukier, 2013; Rule, 2012). In addition, as Nissenbaum (2011) points out, privacy policies designed to inform users are often long, wordy documents that are either not read or inadequately understood. It is often unclear to users exactly what they are consenting to, and the information provided can be misleading.

While these criticisms certainly raise important questions, they accept and reinforce the underlying assumptions of the notice-and-consent model that render it inadequate. Once “informed,” individuals are believed to be able to consent to data collection and dissemination based on their evaluation of risks and benefits; the decision to use a service or a device is treated as a choice made by the user. In particular, the assumption that individuals are ultimately intentional subjects, or at least the preservation of this as an ideal, neglects the less conscious aspects of data-generating practices. Furthermore, it ignores the proclivity of surveillance “to quickly fade into the background, quietly habituating new ways of thinking and being” (Taylor, 2018, p. 385). As such, we argue that understanding the affordances of social media platforms and the habits within which they are embedded is key to understanding how and why participants construct a veneer of trust to mask discomfort with data surveillance, even when the routine ruptures in data security suggest that such trust is unwarranted.

Although scholars have identified the limitations of the notion of privacy as it is currently understood (Andrejevic & Burdon, 2015; Lyon et al., 2012), especially with regard to the normalization of surveillance (Lyon, 2018) and the individualization of privacy (Fuchs, 2017; Sarikakis & Winter, 2017), these critiques remain largely oriented toward explicating the power structures acting upon subjects. While such lenses are of crucial importance, they do not account for the co-emergence of human/technological relations. Our complex relations with these data-networking technologies, and indeed the transformations these relations have already elicited, appear at odds with a view of the human as primarily exercising rational choice. Discourses on posthumanism have sometimes prematurely proclaimed, and even celebrated, the end of the sovereign human subject (Haraway, 1991; Hayles, 1999), but it certainly seems that an appeal to a subject who must be capable of providing informed consent (even if present conditions do not make such consent possible) falls severely short of addressing the kinds of transformations made possible by relations with technology. Accounts that retain the subject as autonomous, especially with respect to our relations with technology, fail to comprehend the complex ways in which the human and the technological are co-implicated. As Bernard Stiegler (2013) insists, the human is always already technological, never simple or “naturally” human. Technology is not something we take up as a tool but something wholly prosthetic, with the capacity to transform us in ways that are both constraining and enhancing. If it were simply a matter of human intention motivated by rationality, how could we possibly come to terms with the unexpected transformations that emerge from technological change? How can we reconcile these diverse transformations with the limited focus of current debates?

If we stay within the bounds of the sovereign frame, young people seem inevitably to fail in their responsibility to manage their exposure to privacy risks, especially in the face of repeated privacy scandals. They are commonly depicted as ambivalent or, worse, ignorant of the implications of their everyday practices. Their actions (or inactions), such as their failure to opt out of these services, are seen as proof of their false consciousness (Fuchs, 2011, 2017). Such an approach neglects the ways in which these data are produced, through processes involving complex interrelations of pleasure, intimacy, familiarity, comfort, habits, and inclinations, which cannot be accommodated in this model but are crucial to the production of data.

Methodology

Seeking to examine the micro-level processes by which users cope with relations of comfort and discomfort surrounding privacy on social media, this article draws on a photo-elicitation study conducted with nine young people aged between 19 and 24. Participants for this project were recruited from undergraduate courses at a university in Canberra, Australia. They were either provided with a digital camera or elected to use their own device, and they were asked to take photos that reflected their experiences of surveillance over a period of 1 week. Some participants also elected to include screenshots taken using their personal laptops and smartphones as part of their photo-diary. Participants were instructed that the composition and content of the images, as well as their interpretation of what constitutes surveillance, were entirely up to them. Directions given to participants with regard to the content of the images were deliberately broad to facilitate their own interpretation of the task. Participants were then asked to select 10 images to discuss in an unstructured interview, lasting between 45 and 90 min, in which they offered their reasons for selecting the images as well as their reflections on the experience of taking them.

Each of the participants captured imagery relating to or representing social networking sites and their interaction with them. Participants also raised and discussed social media and privacy in the in-depth interviews that followed their weeklong photo-diary exercise. In particular, participants described being “uncomfortable” and “unsettled” by participating in the study and having their focus drawn to some of the information collected about them through social media platforms. Through the interviews, it became apparent that social media users were able to actively remediate these moments of rupture and, once again, establish the “ordinariness” of social media surveillance. This method offers unique insights into the ways in which social network users construct a veneer of trust and comfort that enables them to participate in the sharing practices necessary to access the platforms.

Findings: Getting Comfortable and Uncomfortable With Social Media

In much the same way as a public data breach scandal, the photo-diary method focused participants’ attention on the flows of their data for brief moments throughout their participation, and we were able to reflect on these moments with them in our interviews. Given that young people experience conflicting desires between their privacy concerns and their deep social connections through social media (Fulton & Kibby, 2017; Lee & Cook, 2014), this method provided a way to draw out the complex and contradictory experiences of our participants. One participant, Emily¹ (19 years old), recounts how she felt after noticing a very personalized advertisement on Facebook during the project:

Just like, shock . . . how in the hell did you get that information? That is just completely tailored to me. Like, yeah. Yeah, just scary and it makes me kind of angry that they’re pushing me to buy that.

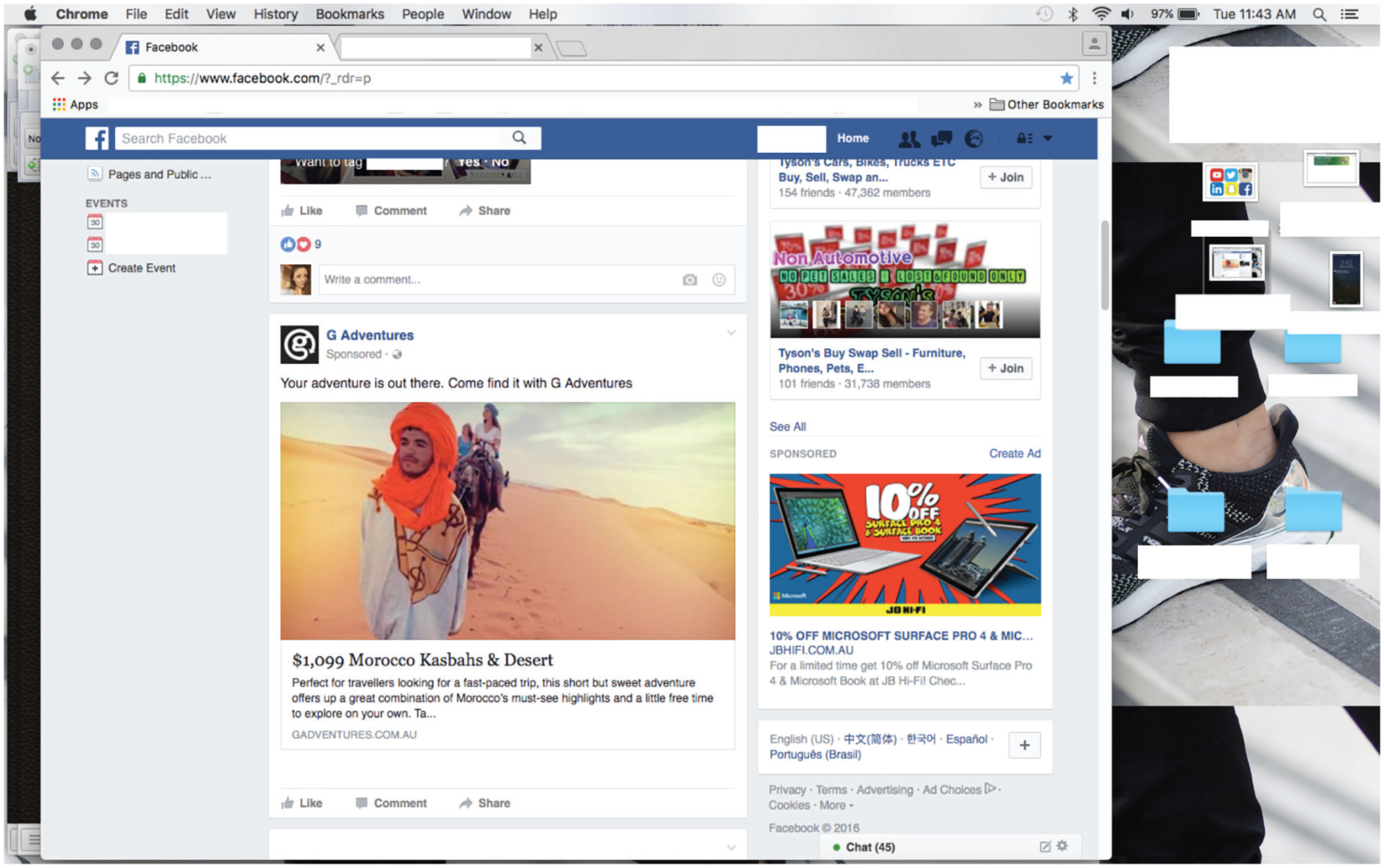

Emily describes the discomfort at the realization of the personalized advertisement. However, as the conversation progresses, she begins to reconstitute this discomfort, first by attributing responsibility to herself and then by reassuring herself that personalized advertisements are not very harmful. “I kind of feel a bit silly because there just has to be a part in that . . . terms and . . . there has to be,” she says, retaining some uncertainty, later adding, “it’s advertisement and it’s crap, and I hate that but . . . but it’s not really having that much of an impact.” At the beginning of the discussion, Emily describes her data being collected, sold, and presented back to her in the form of a personalized advertisement as “scary.” Within a minute, she reframes this reaction with an individualistic focus on her responsibility to resist these processes (Figure 1).

Figure 1. Emily’s photograph of her personalized advertisement.

The photograph Emily captures situates the moment of discomfort, the personalized advertisement, within the ordinariness of her digital life. Her screen-captured image shows her desktop (cluttered with files) and her web browser with multiple tabs open, with the advertisement sitting among a myriad of other Facebook interactions: upcoming events, the offer to tag a friend in a photograph, and a list of friends active on her Messenger chat. Emily’s photograph not only prompts discussion of the way in which web-browsing data are used for targeted advertising but also brings to life the way these tensions and moments of discomfort are embedded within comfortable, domesticated routines.

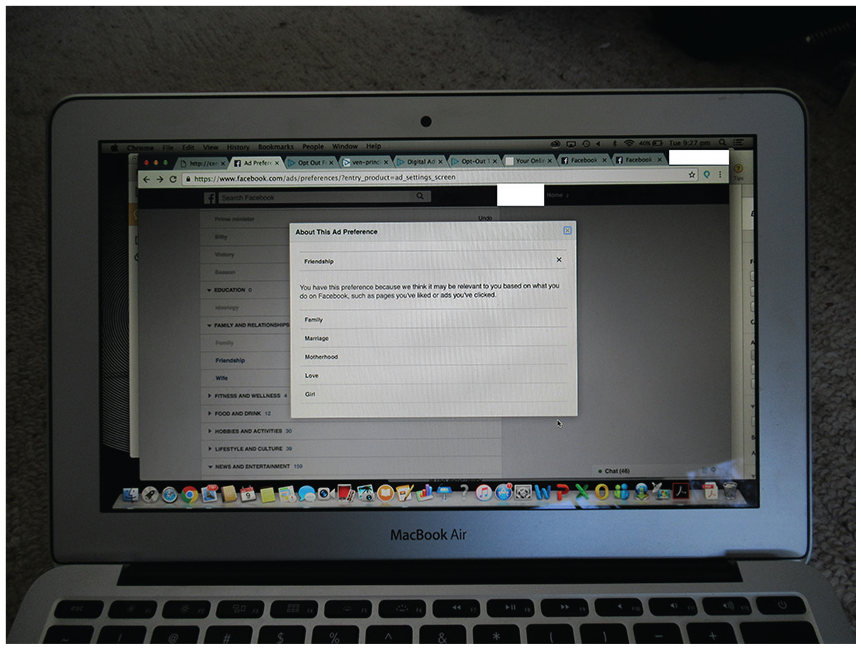

Moments in which participants dwelled on the ways their social media data were collected stood out within their ordinary social media practice, at times jarring with the desire they felt to engage with the platforms. Advertisements on social media were, in particular, a way through which participants’ attention was drawn to the question of privacy and their personal data. One participant, Amelie (24 years old), took a photo to capture a moment during the week when she reviewed her “ad preferences” on Facebook² and, in the interview, reflected on how she felt seeing her personal information as the platform had categorized it (Figure 2):

Umm . . . it said that this is based on either pages that I’ve liked or ads that I’ve clicked on. Another page said it’s like . . . according to internet connection and . . . I guess it doesn’t really make me feel . . . umm . . . even, like . . . oh no it does make me feel scrutinised. But I just . . . I think it’s . . . funny to me now (laughs). Like, it thinks I’m . . . married and I have a child and I associate friendship with girls . . . as well, which I think is interesting. Like, so much of that is wrong.

Figure 2. Amelie’s photograph of her laptop while she looked at her advertising preferences on Facebook.

Here, Amelie attempts to make sense of Facebook’s categorization of her user data, finding humor in the ways the data fall far short of an accurate snapshot of her or her interests. In the second sentence, she begins to say that reflecting on how Facebook advertisers might see her does not bother her, but she pauses and rephrases. She concedes that she feels scrutinized but is able to re-establish distance from this feeling by finding the data inaccurate. However, as the interview continues, she revisits her assessment of how looking at the settings made her feel, explaining, “I guess I do feel uncomfortable about it, like that was probably why I looked into it in the first place.” Earlier in the interview, Amelie had described giving up personal information as justifiable in order to gain access to a service, in reference to using an app that measured her sleep. When the interviewer asks her whether Facebook involves a similar decision, her answer eloquently traces the tension many participants described between their daily practices and moments of violation:

I never really think about what Facebook offers me, personally. It feels more like a compulsion than a service, I guess . . . that I get anything out of (laughs). I guess I kind of have a bit more of a tumultuous relationship with Facebook.

Although much has been made of the exchange process whereby users “sell” their personal information (Carrascal et al., 2013), Amelie’s account suggests that divulging personal information to social media platforms is not simply motivated by a desire for a service but is further complicated by the rituals and routines of engagement associated with the platforms. What Amelie, perhaps playfully, describes as “a compulsion” draws attention to the ways in which social media platforms like Facebook become ordinary and comfortable.

Moments in which privacy is thrust into a user’s awareness can quickly become subsumed by daily routines, as Olivia (19 years old) describes in her interview. When discussing a photo she included of her webcam, Olivia recalls a high-profile incident in which Facebook CEO Mark Zuckerberg’s computer was shown in the background of a promotional photograph for Instagram with his webcam and microphone visibly covered (Hern, 2016). The photograph went viral and prompted many people to cover their own webcams and microphones. She says the photograph “did kind of scare me when I saw that” and describes rethinking her assumptions about what Facebook did with data from the many video calls she had made using its platform, explaining, “it always makes me, like, rethink everything and that Mark Zuckerberg thing really did.” Olivia then describes the loss of momentum as the privacy scandal fades from public attention: “the news story kind of dies and it goes away and then you forget about it.” Her description of the way tension surrounding privacy violations dissipates conveys how discomfort is soon reconstituted by the everyday habits of use that render social media practice ordinary once more.

Participants were aware of the tension they experienced between moments of discomfort when their trust in social media platforms was violated, on one hand, and their embeddedness in routines of social media use on the other. Alfonso (19 years old) references exactly this ordinary aspect of social media use in a discussion about what would motivate him to leave the social media platform Facebook:

I dunno, like, if I read somewhere, if there was a big scandal or something, if all this data . . . being . . . either hacked from Facebook or Facebook were using . . . for . . . umm . . . yeah if Facebook . . . were giving it to other people.

Like if it was being sold?

Yeah, like selling the data. Then maybe delete. But I don’t know if I would! (Laughs).

And what do you think it is about Facebook that makes you not want to delete it?

It’s just . . . I dunno . . . I’ve been using it for too long, it’s too easy . . . [sic]

For Alfonso, the sale or hacking of his personal data on Facebook is certainly a concern but he acknowledges the ease with which he communicates and connects using the platform. He laughs when he reconsiders aloud whether he would indeed delete Facebook after such a violation of trust, a way of momentarily relieving this tension and acknowledging the contradiction playfully (Figure 3).

Figure 3. Alfonso’s photograph depicting the Facebook log out screen.

Alfonso’s photograph that initiated this discussion was an image of the screen Facebook displayed when he logged out. In the interview, he reflected on the rarity of actually logging out and seeing this screen. For Alfonso, the image, despite being of an unfamiliar Facebook page (the log out screen), represented the familiarity of the platform and the normality of consistently sharing personal information by being signed up to the site. As he explains, “It’s just been normalised, Facebook. It just seems so normal and so mundane.” Participants referred to the ease with which social media afford connectivity and become part of everyday routines of communication when they discussed the impossibility of leaving the platforms, Facebook in particular. Despite acknowledging these platforms as somewhat untrustworthy, some participants felt they did not necessarily have any choice but to use them, as Hannah (23 years old) explained:

It’s almost just, like, part of the experience or something. Like you know, like, I know that people collect information about me and I guess I don’t consciously think about it every time I click on something. But . . . yeah. It’s just . . . I dunno how to avoid it [sic].

Hannah identifies the tension between the collection of her personal data and the ways in which this does not register consciously with her in her everyday experiences with the platform. She renders this more ordinary by describing it as “part of the experience” rather than as a notable occurrence. Trust with the platform is established here through routine interaction and the necessity to access connection through the site.

In addition to moments of discomfort in which violations of privacy were brought to their attention, our participants also identified the positive value of sharing and, at times, a social obligation to share. In many discussions about engaging with social media platforms, participants emphasized the pleasures of participation and described the difficulty of abstaining. Olivia (19 years old) describes the pleasures and desirability of sharing, using the example of geotagging on Snapchat (Figure 4):

I feel like . . . it’s almost like a good thing. So you put in a location border when you’re somewhere cool, like we went up to Bondi for the weekend, always doing the things . . . being able to go up to Bondi . . . is almost saying to your friends “Hey look, I was able to go to Bondi,” you know, so it is almost again . . . kinda like . . . powerful to be able to tag yourself in these cool places [sic].

Figure 4. Olivia’s photograph of her Snapchat image.

Olivia describes posting an image of her visit to a popular Australian beach and the social capital attached to this practice. For her, sharing her location and the location-tracking capacity of the platform facilitate interactions that are highly desirable. Olivia also hints at the discomfort and anxiety associated with failing to participate, especially with regard to the expectations of others:

. . . with Facebook and you can see when people are on and when they’re not on, you can never really be switched out of the kind of public sphere, you always have to be kind of switched on. Which . . . like . . . it’s not creating more anxiety, but it does kind of create more urgency . . . to your life . . . because you’re constantly having to reply to people, to be active to be online and . . . you’re not left free time, in a sense.

Olivia describes the daily conditions of interaction, of which social media form a significant part, as constituted by urgency and an obligation to be available, and she connects this to the capacity of Facebook to display who is online and when users last accessed the platform. Over time, the ease of connection facilitated through the platform and the solidifying rituals of checking in cultivate conditions in which a message warrants an immediate response. Earlier in the interview, Olivia describes the “power” of being able to check whether her boyfriend has “read” a message on Facebook Messenger, but her discussion of this shift toward urgency shows that this capacity also shapes her own communicative practices. Her account offers insight into the ways in which the affordances of the platform, such as the capacity to see when someone has read a message, interact with habits to cultivate new expectations and anticipations.

Affording Trust: Social Media Habits

We argue that social media platforms script and incline users toward habitual and routine relations of disclosure and connection through sharing their data. Through this process, a feeling of familiarity develops with the platform and its associated practices (e.g., “liking,” “checking in,” or checking to see whether a friend has seen a message). To understand young people’s encounters with breaches of privacy, and to develop more ethical protocols for data collection, it is important to consider the ways in which social media become embedded in their daily routines. Recent years have seen attention given to the ways in which nonhuman artifacts “script” (Akrich, 1992) actors, “prescribe” conduct, and exert influence over the environment in which they are located. Although affordance theory is strongly contested (Blewett & Hugo, 2016; Davis & Chouinard, 2016; Knappett, 2004), it nevertheless provides a useful concept for analyzing social media practices, as it offers a way to think through the material capacities of the platforms. Originally conceptualized by James Gibson (1986), drawing on Lewin’s Aufforderungscharakter (invitation character), as a way of understanding how material objects project certain potentialities or suggest a set of actions to those coming into contact with them, the phenomenological idea that objects afford specific behaviors has since been adopted and developed by numerous disciplines, from psychology to design and from anthropology to philosophy. Building on affordance theories and challenging pervasive Cartesian dualism, scholars have moved to recognize the agency of nonhumans, overriding a (mainstream) sociological tendency to “discriminate between the human and inhuman” (Latour, 1988, p. 303).

Drawing on this perspective, we argue that specific affordances of social media platforms are conducive to cultivating a sense of familiarity in the user. The platform interfaces incorporate feedback loops to personalize what information the user receives, resulting in each user experiencing an individual version of the platform (Bucher et al., 2017). Platform interfaces also draw on familiar symbols (e.g., heart, thumbs up) and seek to cultivate interaction between users through the quantification of engagement in the form of measures such as “likes,” “retweets,” or “followers” (van Dijck & Poell, 2013). These familiar interactions become further solidified by the habitual participation encouraged by platforms through the design of interfaces that incline users to “refresh” feeds or “update” followers. Further extending this notion of the affordances of social media, we argue that the deep embeddedness of social media in the lives of young people is made possible by habitual and repeated “checking in” and engagement, which at the same time reduces awareness of the intense surveillance of the platform.

Interacting forces of pleasure and desire are enfolded into routines and other processes that are not captured by consciousness, making social media practices much more complex than simply the actions of a sovereign subject following either their own willpower or the power exerted upon them. Exploring habits and repetition allows for a consideration of the nonconscious dimensions of use, acknowledging their productive capacity without subordinating them to conscious processes. While the problem of habit has been explored in social theory, these understandings have been predominantly negative. Considered “mindless,” habit has been seen as symptomatic of deeply embedded regimes of power. Bourdieu’s (1990) concept of habitus, concerned with the bodily manifestation of class structure in the form of tastes, thoughts, and actions, has been highly influential in social scientific thinking about what habit can do. For Bourdieu, habit manifests through external social structures acting upon individuals without their awareness. In this model, habits are the embodiment of false consciousness: individuals believe themselves to be autonomous but are in fact reproducing social inequality through their desires. Habit here cannot produce anything but oppression, and the nonconscious is rendered nothing more than an entry point for manipulation by social structures.

Repetition has long been associated with a deadening of originality and creativity, which are replaced by lifeless and thoughtless action (Malabou, 2008; Sinclair, 2011). In particular, repetitive actions associated with technologies have been seen as symptomatic of the increasingly mechanistic nature of human life as a consequence of technological interventions (Oulasvirta et al., 2011; Rosen, 2012; Salehan & Negahban, 2013). Understanding repetition as fundamentally mechanical and symptomatic of our manipulation, whether by social structures or by social media itself, offers little insight into the ways these actions elicit transformation. In contrast, we argue for an understanding of habit as an important generative force. In recent years, there has been renewed interest in habit as a productive force, particularly following the republication of Felix Ravaisson’s Of Habit in 2008 and renewed attention to thinkers such as Gilles Deleuze and his work on repetition. Ravaisson (1838/2008) proposes that habits are formed through a process of repetition in which conscious awareness diminishes as bodily intelligence develops. Through repetition, actions come to require less effort and become more effective. Beyond making action more effective, habit opens up new capacities in bodies. As Ravaisson (1838/2008) puts it, “habit remains for a change which either is no longer or is not yet; it remains for a possible change” (p. 25).

Going further than treating habit as a productive rather than a destructive force, we argue that habit is the central generative force of life. Deleuze (1968/1994) emphasizes that repetition is ontological, arguing that it is through repetition and habit that difference proliferates, generating life in a constant process of becoming. Central to this claim is Deleuze’s (1968/1994, p. 186) assertion that this generative repetition is not a return of the same. “Bare repetition,” the return of the same, is superficial, mechanical, and observable to us as an action. Deleuze contrasts this kind of repetition with “clothed repetition,” the dynamic repetition that accounts for the production of difference. Clothed repetition is not the return of the same; rather, it facilitates transformation through minute variations and changing inclinations (Deleuze, 1968/1994). Clothed repetition is the basis of Deleuze’s ontology, as it is only through the production of difference that life continues. As such, habits introduce new capacities while dampening others: habits are dynamic transformations.

Thinking of habit as Ravaisson and Deleuze understand it enables us to consider how our participants’ habits of social media use transformed over time to cultivate a temporary and fragile trust in social media platforms. Each day they shared personal information with a social media platform, they cultivated new desires toward sharing and disclosure while diminishing their inhibitions toward transparency. When our participants’ routines were disrupted, whether by participation in the research project itself or by data breach scandals, the discomfort they felt was not simply a conscious reflection on their privacy but also the friction these events caused in their otherwise seamless routines of connection through the platforms. When becoming immersed in social media feels familiar and comfortable, the demand to pause and take stock of how one’s data are being harvested is disruptive, but it remains a singular event among thousands of daily repetitions that restore the feeling of ease. Checking Facebook, using Snapchat, or tweeting cannot simply be understood as the interaction of an individual with a technology; rather, it sits within a history of repeated encounters and inclinations, many of which may lie beneath our conscious awareness.

Rethinking Data Ethics

In light of the complex interrelations that constitute social media use, an ethics attentive to how users become comfortable (and uncomfortable) with social media sharing broadens the kinds of questions we can ask about privacy and data collection. Rather than limiting debate to whether individuals know enough to make rational decisions or whether consent is possible in the present system, such an ethical evaluation remains open to the multiplicity and ambiguity of the situation. Within this kind of ethics, it is untenable to hold to a pre-existing hierarchy of values that might position privacy, for instance, as preferable to exposure. As Nietzsche (1886/1990) contends, things that appear to be opposites may be far more intermingled than they seem. The desire for privacy and the desire for exposure are far more interrelated than current debates about privacy would suggest. Thus, the moment of discomfort associated with a data breach might involve a layering of anxiety about violation with desire and familiarity. These can be held in productive tension and need not be reduced or simplified to an inevitable conclusion that social media is a “social good” or a “social evil.”

This approach represents a shift away from questions about the rights and responsibilities of individuals. Instead, we argue that we should ask what kinds of repetitions and transformations specific instances of data collection elicit. Under this approach, data that are resold multiple times would be considered not in terms of whether the user was adequately informed of these possibilities in order to consent; rather, each instance of data use would need to be evaluated in terms of what it makes possible. Attending to the habits of social media and the affordances of platforms does not mean abandoning critique of these surveillant practices. Crucially, this approach does not place the responsibility on individuals to manage their data and the information they disclose, given that the conditions under which these data are produced are intrinsically bound up with the affordances and habits of social media use. Nor is this approach bound to notions of the rights of (certain) individuals to privacy, and therefore it cannot be satisfied by inadequate notions of consent or by changeable ideas of what privacy is (or should be).

Rather than comparing data collection practices against an existing “ideal” or “acceptable” degree of surveillance, attending to the routine and nonconscious conditions of data disclosure reveals the need to extend our ethical frameworks far beyond the individual and what they may or may not consent to, in order to ask what kinds of data should be able to be collected, bought, and sold. We might ask what social conditions such routines of data collection give rise to, rather than seeking to condemn those who fail to opt out.

Conclusion

Thinking about social media practice as primarily habitual highlights key problems with the way we think about data ethics and privacy, especially regarding the way users remain on platforms in the face of distrust, privacy scandals, and violations. The limitations of existing privacy practices become increasingly apparent as continual revelations of platforms violating users’ privacy fail to significantly affect social media use. The model of notice and consent relies heavily on individuals to take notice and to provide consent if they so desire. Essentially, the responsibility falls on individuals to exercise their will power or suffer the consequences, yet such a perspective cannot account for the way individuals habitually ignore such notices without simply defining them as “unwitting” or ignorant. A focus on informed consent to data collection neglects these habits and the extent to which participation arises from nonconscious repetition rather than conscious contemplation.

Though some scholars identify a lack of information as the source of this problem and propose solutions based on providing more information, this implicitly constructs a subject who knows and navigates the world through conscious rationality (Andrejevic, 2010; Fairfield & Shtein, 2014; Nissenbaum, 2011). This perspective assumes that individuals go through a process of decision-making about generating data whenever they pick up their devices, an assumption that is fundamentally out of touch with the mode in which we produce these data and is tenable only if we neglect the material relations of social media practice.

If we examine social media at the level of the affordances that incline users toward particular practices and the generative habits of routine use, we can better understand the ordinary repetitions that largely form users’ experiences of social media. These forms of dispersed power do not operate coercively and are not possessed by actors; rather, they operate through relations, which are constitutive and transformative (Foucault, 1984). Particularly in the context of the new forms of surveillance-facilitated voyeurism involved in the pleasures of social media (Trottier, 2012), considering surveillance in terms of shifting capacities opens up new questions about pleasure and thus resists the tendency, prevalent in much social science, to treat actors as ill-informed dupes of repressive power. Given that platforms’ continued failure to protect their users’ privacy has not caused a mass departure from social media, new ways of thinking about how privacy is negotiated in everyday life are clearly needed. To develop more ethical platform models, it is imperative that the habitual and ordinary routines of social media use be taken into account, so that approaches to managing privacy do not rest on the unrealistic expectation that individuals will resist their own comfortable and pleasurable social practices.

Acknowledgements

The research would not have been possible without the participants who generously took part in the photo-diary exercise. We sincerely thank them for contributing the photographs they captured to our study and for sharing their insightful views and experiences with us.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was funded by an internal research grant from the College of Arts and Social Sciences at The Australian National University.

Data Availability Statement

Data available on request from the authors.