Abstract

Public health research has heralded social media as a space where sex education can be delivered to young people. However, sexual health organisations are increasingly concerned that restrictive content moderation practices impede their ability to distribute sex education content on social media platforms. To better understand these experiences, this article uses an autoethnographic case study of my experience navigating Meta's content moderation policies and practices when I promoted the Bits and Bods sex education web series. Using conjunctural analysis, I contextualise Bits and Bods's two experiences of content moderation (when our account was deleted from Instagram and advertising was rejected by Facebook) through policy analysis of Meta's content moderation policies. I then conclude by questioning whether public health practitioners should still be conceiving Meta's platforms as a space where they can deliver sex education to young people.

Introduction

For the last decade, public health research has heralded social media as a space where sex education can be delivered to young people (Guse et al., 2012; UNESCO, 2020). Social media was seen as a way to overcome the organisational and political barriers that restrict school-based sex education from covering content relevant to young Australians (Shannon, 2022). However, sexual health educators and organisations are increasingly concerned that content moderation impedes their ability to share sex education content on social media platforms. This means that, instead of social media providing an opportunity to reach and engage young people, sex education content is increasingly deplatformed from social media platforms.

There has been increased media coverage and academic interest in social media platforms’ content moderation policies and practices for ‘sexual’ content since the passage of the Stop Enabling Sex Traffickers Act and the Allow States and Victims to Fight Online Sex Trafficking Act (commonly known as FOSTA-SESTA) by the United States Congress in March 2018. Although digital media outlets have reported that social media platforms are censoring sex education (see Kale, 2018; Moss, 2021), empirical research has predominantly focused on the impact of content moderation on non-educational sexual content targeted at adults (see Are, 2022, 2023; Monea, 2022; Paasonen et al., 2019; Spišák et al., 2021; Tiidenberg and van der Nagel, 2020). As such, there is a limited understanding of how social media's content moderation practices impact the distribution of sex education content.

This article starts to address this empirical gap through an autoethnographic case study of my experience of navigating Meta's content moderation practices while promoting the Bits and Bods (BB) web series to teenage girls and gender-diverse teens in 2019. These experiences are contextualised through conjunctural analysis, a research approach that seeks to understand the context that surrounds a cultural practice at a given point in time in order to determine practical interventions that resist cultural hegemony (see Hall et al., 2013).

In this article, I argue that the opaque and conservative nature of Meta's content moderation practices around sexual content restricted the ability of BB to distribute sex education content. This article is divided into three sections. In the first section, I draw on academic literature to outline the changes that social media platforms made to how sexual content is moderated in 2018. I then outline my approach to studying the BB case study, which included using autoethnography and post-structural policy analysis of Meta's content moderation policies. In the third section, I first undertake a policy analysis of Facebook and Instagram's (the social media platforms where BB's content was distributed) content moderation policies and problematise how they represent sex education content. I then explore what BB's experience of account deletion and rejection of paid advertising suggests about using Meta's platforms to distribute sex education content targeted at teenagers. I argue that these experiences demonstrate the significant resources and expertise needed to effectively navigate Meta's opaque and conservative content moderation practices and how accounts sharing sex education are often faced with the choice between reaching their target audience or circulating engaging content. While this case study advances academic and policy discussion around the content moderation of sex education content, I conclude by questioning whether public health practitioners should still be conceiving Meta's platforms as a space where they can deliver sex education to young people.

Contextualising changes to the social media ecosystem

FOSTA-SESTA is a packaged set of bills passed by the US Congress in March 2018 that amended legislation to enable the prosecution of the providers of websites that are ‘knowingly assisting, facilitating or supporting’ sex trafficking or sex work under existing state and federal criminal and civil law (US Government, 2018: 3). In doing so, the legislation amended Section 230 of the Communications Decency Act of 1996, which had previously meant that online platforms could not be held liable for the actions of their users, to enable prosecution of platforms. This significant change caused Alexander Monea to argue that ‘we can essentially divide content moderation practices into pre-FOSTA and post-FOSTA eras’ (2022: 115). However, as commercial content moderation practices are considered proprietary knowledge, researchers will never know definitively whether FOSTA-SESTA caused the subsequent changes that all major US-based social media platforms made around the moderation of sexual content.

While all US-based social media platforms had prior restrictions on the representation of nudity on social media (Gillespie, 2018), Stephen Molldrem (2019) argued that these platforms significantly tightened existing prohibitions around nudity and moved to proactively delete and filter content that violated their policies in 2018. He described this as the deplatforming of sex which, due to the existing dominance of US-based social media platforms, has impacted the distribution of sexual content across the globe regardless of the local cultures that surround sex work, nudity, and sexuality (Gillespie, 2018).

The deplatformisation of sex from social media occurred in two distinctive waves. The first wave occurred directly after FOSTA-SESTA was signed into law in April 2018 and included the shutdown of websites commonly used by sex workers as well as the removal of accounts of sex workers from mainstream platforms (Liu, 2020; Paasonen et al., 2019; Tiidenberg and van der Nagel, 2020). However, the ability of sexual health organisations to distribute sex education was not affected until the second wave of deplatformisation. This occurred in December 2018 and involved US-based social media platforms revealing expanded restrictions on sexual content posted on their platforms (Spišák et al., 2021). 1 The most notable changes were the announcement that Tumblr was implementing a ban on ‘adult content’ (Pilipets and Paasonen, 2022) 2 and Facebook was now prohibiting sexually suggestive content (Carman, 2018).

In addition to broadening the type of sexual content that was prohibited from social media platforms, platforms moved to proactively enforce their content moderation policies. This change occurred during a period when there was significant pressure on social media platforms to remove terrorist/extremist content, hate speech, and abuse (Gorwa et al., 2020). To deal with the scale of content that had to be assessed for compliance, platforms moved from relying on user flagging of content that they perceive to be non-compliant to automated content moderation that predicts whether the content is compliant with their policies. However, predictive forms of automated content moderation struggle to understand context (Gorwa et al., 2020). This is particularly troublesome for sexual content given its highly subjective nature and the vague descriptions of sexual content included within most platforms’ content moderation policies (Gillespie, 2018; Paasonen et al., 2019). Therefore, the growing reliance on automated content moderation means that all sexual content, regardless of its intent, tends to be deplatformed from social media (Spišák et al., 2021). This was best demonstrated when the ‘glitched realisation’ of Tumblr's ‘adult content’ ban incorrectly flagged clothed individuals, animals, and cartoons as pornography (Pilipets and Paasonen, 2022: 1460).

The implementation of FOSTA-SESTA and the significant changes social media platforms made to what and how sexual content is moderated have created increased interest in the study of content moderation of sexual content. 3 Recent literature has predominantly focused on mapping the material impact that content moderation has on individual users, including sex workers and influencers, who are affected by both the removal of nudity and/or sexually explicit content (see Liu, 2020; Paasonen et al., 2019; Spišák et al., 2021; Tiidenberg and van der Nagel, 2020) and the shadowbanning of ‘sexually suggestive’ content (see Are, 2022; Blunt et al., 2020; Monea, 2022). Pole dancer and content moderation researcher Carolina Are (2023) expanded the academic understanding of content moderation of sexual content with her autoethnographic reflection on her accounts being deleted from TikTok and Instagram. However, despite the political nature of content moderation (Tiidenberg, 2021) and the significant politicisation of sex education (Shannon, 2022), there have been limited studies on how content moderation affects sex education aimed at young people. Interviews with healthcare providers and accredited sexual health educators found that the removal and shadowbanning of sexual and reproductive health content restricted their ability to share sexual health information with young people on TikTok, YouTube, and Instagram (Delmonaco, 2023). Similar conclusions have been drawn from secondary analysis of media reporting on social media censorship of sex education (see Monea, 2022: 124–216; Borrás Pérez, 2021). This article builds on this existing research by providing a new case study that explores how Meta's content moderation policies and practices impact the ability to distribute sex education content to teenagers.

Methods

This article uses conjunctural analysis to develop a holistic understanding of the cultural processes and context that surround the circulation of sex education content on Meta's social media platforms in 2019. Conjunctural analysis seeks to reveal how ‘common sense’ has been constructed around a given cultural practice during a conjuncture where tensions and problems become evident in order to determine possible sites of intervention that can progress specific struggles against the existing cultural hegemony (see Hall et al., 2013). This politically motivated research approach was used to counteract the limited consideration of context evident within automated content moderation of sexual content (see Gorwa et al., 2020). As conjunctural analysis does not have a prescriptive methodology, I used autoethnography and policy analysis to think conjuncturally about the BB case study.

Autoethnography

Ellis et al. described autoethnography as a research approach ‘that seeks to describe and systemically analyse … personal experience … to understand cultural experiences’ (2011: 273). Similar to conjunctural analysis, autoethnography is a political act that is used to make sense of ‘an oppressive cultural and social structure’ (Atay, 2020: 268), such as Meta's content moderation practices (Spišák et al., 2021). Due to the opaque nature of platform content moderation policies and practices, pole dancer and content moderation researcher Carolina Are argued that ‘qualitative, ethnographic and autoethnographic explorations of platform governance are all users currently have to fight and understand their puritan censorship of nudity and sexuality’ (Are, 2023: 3). Inspired by Are's (2022, 2023) autoethnographic studies, this article uses an autoethnographic case study of BB to understand the impact that Meta's content moderation policies and practices have on the circulation of sex education content to teenagers.

BB was an Australian sexual health web series that talked with teen girls and gender-diverse teens (13–18-year-olds) about sex, bodies, and all the awkward bits in between. This volunteer-run project was established in 2015 by a small interdisciplinary team of young women who felt that school-based sex education did not equip teenagers to have pleasurable sex. Drawing on our backgrounds in public health, communications, and art, we produced humorous and aesthetically pleasing sex education content that was not restricted by the postcode someone lived in, the school they went to, or the teacher they were allocated (see Shannon, 2022).

The BB web series was released in November 2019 4 and edited together the stories of 30 diverse young Australians into seven fun and sex-positive videos. We then used $10,000 of paid advertisements to market the content to teen girls on Meta's platforms; this was done to actualise the ‘algorithmic capacities of social media platforms to “push” educational content to target audiences’ (McKee et al., 2018: 4585). The production of the web series and its distribution through paid social media advertisements was funded by grants received from the Victorian Government as well as feminist and LGBTIQ+ organisations.

BB's social media presence has sought to build an audience for its web series since 2016. Between 2016 and 2018, our Instagram account regularly posted high-quality sexually suggestive photos, videos, artworks, and memes. Our content was informed by a sub-culture communications approach, whereby it was aligned to the ‘aesthetic, behavioural and media preferences’ (Ems and Gonzales, 2016: 1761) of our audience. There was a strong appetite for our content; by June 2018, our Instagram account had more than 4000 followers who actively engaged with and shared our content. Due to resource constraints, we did not regularly post content between July 2018 and June 2019 (less than 2 months before our account was removed from Instagram).

In this article, I reflect on my experience as the Director of Strategy at BB from 2016 until the organisation wound up in December 2019. My role primarily involved leading our external engagement (i.e., liaising with funders, video talent, and the sexual health sector) as well as keeping up to date with major changes within the digital and sexual health ecosystems. This included monitoring the algorithmic gossip, which is ‘communally and socially informed theories and strategies’ related to algorithms that are shared and implemented to increase visibility within a social media platform (Bishop, 2019: 2589), around content moderation of sexual content. Additionally, due to the resignation of our Communication Director, I also ran our social media accounts between October and December 2019.

My autoethnography was conducted between March and December 2019. During this period, I wrote memos of experiences that stood out to me as I undertook my day-to-day work. These memos subsequently shaped my PhD research, which undertakes a conjunctural analysis of the production and distribution of pleasure-informed digital sexual health content aimed at young Australians. 5 My memos were supplemented by archival documents from BB, including social media content, emails, and policy and strategy documents, and by interviews with my colleagues that were conducted as part of my PhD research.

The experiences of content moderation that I discuss in this article were two of the three unexpected experiences that occurred during this period. 6 These were experiences that surprised me, my colleagues, and our key stakeholders, as we all assumed that the educational nature of our content would safeguard BB from content moderation. I argue that understanding our experiences can help to expand the academic conceptualisation of content moderation, as there have been limited studies that document experiences of account deletion or the rejection of paid advertising. While Carolina Are (2023) has recently written about her accounts being removed from Instagram and TikTok, research has not yet considered the impact that either account deletion or the rejection of paid advertising has on the distribution of sex education content.

Policy analysis

While most platforms’ content moderation practices tend to be opaque and subjective, Tarleton Gillespie (2018: 73) argued that the vague wording describing sexual content within content moderation policies allows the biases of individual moderators and programmers of automated content moderation to strongly influence what sexual content is deemed as ‘inappropriate’. To understand how the actors involved in content moderation conceptualise sex education content, I use Carol Bacchi's (2009) ‘What's The Problem Represented To Be’ framework to undertake a policy analysis of the Facebook Advertising Policies (Facebook, 2019a) and Community Standards (Facebook, 2019g), and the Instagram Community Guidelines (Instagram, 2019). This post-structuralist approach draws on the work of Foucault, particularly his practices of archaeology and genealogy, to examine the implicit problematisations produced within the studied policies. In doing so, I explore the binaries, silences, and assumptions about sex education within Meta's content moderation policies.

Findings and discussion

Meta's policies about sexual content

To help contextualise BB's two experiences of content moderation, I use Carol Bacchi's (2009) ‘What's The Problem Represented To Be’ approach to analyse Meta's content moderation policies that regulate sex education content. While existing analysis of how sexual content is represented within various platforms’ content moderation policies has focused on organic content (for example Are, 2022; Monea, 2022; Paasonen et al., 2019; Spišák et al., 2021; Tiidenberg and van der Nagel, 2020), interviews with social media workers in 12 UK and Australian sexual health organisations demonstrated that the rejection of paid advertising was the most commonly experienced form of content moderation by sexual health organisations (Williams, 2022). Building on this research, I argue that the Facebook Community Standards and Advertising Policies act as texts where norms, values, and behaviours are articulated with the expectation that Meta's users will normalise towards not distributing sexual content. However, I later demonstrate how this is not practical for sexual health organisations – like BB – who have been specifically funded to distribute sex education content to young people on social media.

Community guidelines

Organic content (i.e., content that is shared in a user's feed, stories, private chats, and groups) posted on Instagram in 2019 was governed by the Instagram Community Guidelines (Instagram, 2019). This policy explained that the platform is a ‘positive, inclusive and safe’ (Instagram, 2019) environment. It outlined what content Meta deems ‘unsafe’ or ‘inappropriate’ for sharing on each platform and that posting ‘inappropriate’ content leads to the removal of content, account deletion, and/or other restrictions. The policy positioned ‘nudity’ as inappropriate content that contains a ‘risk of harm’ (Instagram, 2019) alongside content that contains bullying and harassment, hate speech, self-injury, spam, or violence.

Although not publicly communicated when BB's Instagram account was deleted in August 2019, 7 content posted on Instagram was also governed by the Facebook Community Standards (Facebook, 2019g). These policies provided greater detail on prohibited content than the high-level overview included within Instagram Community Guidelines, and included four Community Standards that affected the distribution of sexuality education aimed at teenagers:

Community Standard on Child Sexual Exploitation, Abuse, and Nudity, which prohibited sexual content aimed at or about minors; Community Standard on Adult Sexual Exploitation, which prohibited sexual content that was obtained or shared without the consent of those it portrays; Community Standard on Adult Nudity and Sexual Activity, which prohibited the representation of adult nudity (i.e., visible genitals, anus, bottom, and ‘female nipples’) and/or sexual activity (Facebook, 2019d); and Community Standard on Sexual Solicitation, which prohibited content that facilitates a sexual encounter, the consumption of pornography, and the use of ‘sexually explicit language’ (Facebook, 2019f).

Despite these significant limitations that Meta placed on the distribution of sexual content, there were exceptions built into these Community Standards that should allow sex education content targeted at adults. For example, the Community Standard on Adult Nudity and Sexual Activity allowed the representation of sexual activity where it was shared for ‘educational, scientific, humorous or satirical purposes’ as well as the representation of implied sexual intercourse or genital stimulation in a ‘sexual health context’ (Facebook, 2019d). However, this Community Standard did not explain how such context could be signalled. Similarly, the Facebook Community Standards were silent on whether sex education aimed at teenagers was explicitly allowed or prohibited on Meta's platforms, despite teenagers traditionally being the recipients of sex education.

While caveats existed that theoretically support the distribution of sex education, the Community Standard on Sexual Solicitation significantly restricted the distribution of discursive sex education content that engages users. This Community Standard was introduced in October 2018 (Carman, 2018) and was explicitly framed as prohibiting the facilitation of sexual encounters between consenting adults (including sex work). In doing so, it also restricted the use of ‘sexually explicit language’ which it described as ‘going beyond a mere reference of a state of sexual arousal … or an act of sexual intercourse’ (Facebook, 2019f). This effectively restricted the ability of sexual health organisations to distribute content that discusses sex (which, I would contend, is the point of sex education). In addition to these restrictions, an unreleased version of this Community Standard was being used between July and September 2019 to prohibit content that included an ‘offer or ask’ for a sexual encounter alongside ‘suggestive elements’ (Turner, 2019). Examples of suggestive elements included ‘contextually specific and commonly sexual emojis or emoji strings’, ‘regional sexualised slang’, or ‘mentions or depictions of sexual activity (including hand-drawn, digital, or real-world art)’ (Facebook, 2019f). However, these ‘suggestive elements’ effectively function as the digital vernacular around sex (Marston, 2020; Tiidenberg and van der Nagel, 2020). Similarly, the ‘offer or ask’ was defined so broadly that explicitly referring people to visit any external website was often perceived as a violation of this policy (see Oversight Board, 2023). Thus, the two prohibitions within the Community Standard on Sexual Solicitation effectively constrained the ability of sexual health organisations to discuss sex in a way that resonates with the digital vernacular of their target audience.

Advertising policies

The Facebook Advertising Policies alluded to all nudity and sexual content being ‘pornographic’ by prohibiting ‘adult services and products’ and ‘adult content’ (Facebook, 2019a). This policy outlined that all proposed advertising must be reviewed for compliance with its content moderation policies before it is circulated on Meta's platforms (Facebook, 2019a). This could explain the almost systematic rejection of sexual health advertisements compared to the more ad hoc experience of content removal experienced by sexual health organisations (Williams, 2022).

Similar to the Community Standard on Adult Nudity and Sexual Activity, the Advertising Policies prohibited using nudity, sexually explicit content, or sexually suggestive content in advertisements. Rather than providing detailed descriptions of what is prohibited, the policy provided examples of prohibited photographs as well as vague and highly subjective descriptions. For example, ‘adult content’ was broadly defined as ‘nudity, depictions of people in explicit or suggestive positions, or activities that are overly suggestive or sexually provocative’ (Facebook, 2019b) without describing what activities and positions would be deemed ‘suggestive’ or ‘provocative’. The highly subjective nature of these terms is evident through the inclusion of a close-up photograph of a woman eating a banana as an example of ‘sexually explicit’ content that cannot be used in advertising. The inclusion of this image – combined with the systematic rejection of sex education advertisements (Williams, 2022) – reinforces to sexual health organisations that Meta takes a conservative stance on adjudicating sex education advertisements.

The Facebook Advertising Policies also contained a caveat that supports the provision of advertisements for sexual health services and products aimed at adults. This caveat was more restrictive than those in the Facebook Community Standards, as advertisements could only be placed behind contraceptives and family planning services. Additionally, the policy prohibited messaging that includes references to sexual pleasure, despite public health research showing that framing sexual health messages around pleasure increases audience engagement and behaviour change (Zaneva et al., 2022).

The problematisation of sex education

Meta consistently represented nudity and sexual activity as ‘the problem’ within its policies. These policies implied that sexual content restricts users’ ability to feel safe enough to authentically engage on the platform. This framing was demonstrated within the rationale provided for the prohibition of sexually explicit language: ‘Some audiences within our global community may be sensitive to this type of content, and it may impede the ability for people to connect with their friends and the broader community’ (Facebook, 2019g). However, these policies were silent on why and to whom sexual content was considered ‘inappropriate’ and ‘sensitive’.

Despite Facebook's assurance that its content moderation policies were ‘fairly and consistently’ enforced (Facebook, 2019g), the vague wording of sexual content within the four Community Standards and the Advertising Policies meant that the biases and values of human moderators and programmers of automated content moderation determined what sexual content was deemed ‘appropriate’ or ‘inappropriate’ (Gillespie, 2018). For example, the Community Standard on Child Sexual Exploitation, Abuse, and Nudity prohibited the ‘sexual exploitation’ (which includes images that are ‘professionally’ shot) and ‘sexualisation’ of ‘minors’ (Facebook, 2019e) without clarifying what any of these terms mean.

Katrin Tiidenberg (2021) argued that the use of highly subjective language to describe sexual content within content moderation policies continues the power structure of the status quo in which platforms operate. Susanna Paasonen et al. (2019: 169) contend that the status quo for Meta is the residual Puritan cultural legacy, which represents sexuality as ‘something to be feared, governed and avoided’. In doing so, this legacy conflates risk (and the absence of safety) with sexuality, which explains the negative framing of sexual content within Meta's content moderation policies. The negative framing of sexual content was evident in the terminology used to classify the four Community Standards that govern sexual content as problems related to ‘safety’ or ‘objectionable content’ (Facebook, 2019g); the latter term is used to imply that all sexual content is intrinsically ‘obscene’ or ‘pornographic’. The allusion to pornographic content and sex work was also evident within the terms used to describe prohibited sexual content within the Facebook Advertising Policies: ‘adult services and products’ and ‘adult content’ (Facebook, 2019a).

By restricting the provision of sex education content to adults (Facebook, 2019d), Meta implies that all sexual content is ‘inappropriate’ for minors. The inclusion of a ‘child’/‘adult’ binary within Facebook's content moderation policies is rationalised as safeguarding ‘innocent’ minors from sexual exploitation by adults (Facebook, 2019e). However, this binary conflates teenagers (13–18 years old) and children (0–12 years old) while ignoring how sexual experimentation between peers is a common and normal part of adolescence and that teenagers already use the internet to learn about sex (Fisher et al., 2020). Rather than safeguarding teenagers from sex, Meta's prohibition on advertising ‘sexual health services and products’ (Facebook, 2019c) to teenagers restricts the ability of sexual health organisations to distribute sex education to a demographic that has high rates of sexually transmitted infections (Australian Department of Health, 2018).

While some age-based restrictions on sexual content are explicitly stated within Meta's content moderation policies, other prohibitions most acutely impact teenagers. For example, the prohibition on using ‘suggestive elements’ (Facebook, 2019f) restricted sexual health organisations from using sexual slang and emojis, which are a core part of the digital sexual cultures of young people within western English-speaking nations (Marston, 2020). Similarly, the prohibition of mentioning sexual pleasure within paid advertisements stops organisations from distributing content on a topic that young people are interested in learning about (Johnson et al., 2016).

While I have outlined the conservative nature of Meta's content moderation policies, what is written in a policy often differs from how it is enforced. In the next section, I analyse the BB case study to understand how Meta's content moderation policies around sex education are enforced.

The deplatforming of BB

Despite our best attempts to produce compliant content on Meta's platforms, BB had two experiences of content moderation in 2019 that significantly restricted our reach and engagement with our audience (13–18-year-old girls). This section documents these experiences. The first experience was in August 2019 when our account was removed from Instagram for violating its Community Guidelines. The second experience was in November 2019 when I struggled to place $10,000 of paid advertising behind our web series on Facebook. I argue that these experiences demonstrate the significant resources and expertise needed to effectively navigate Meta's opaque and conservative content moderation practices and how accounts sharing sex education are often faced with the choice of reaching their target audience or circulating engaging content.

Removal from Instagram

BB was first affected by content moderation practices when our account was deleted from Instagram on 11 August 2019 for violating the Instagram Community Guidelines. This was a surprise as we never shared sexually explicit content. Due to algorithmic gossip circulated during the second wave of the deplatformisation of sex, we had not shared content that included nudity and had shied away from posting sexually suggestive content since December 2018. Additionally, our Communication Director had decided to only repost on our account visual content that had existed on Instagram for several years, as she thought that content that had not been removed from the platform was unlikely to experience content moderation in the future. In addition to this, we both actively searched out algorithmic gossip shared by other accounts that circulated sex education to understand what content would be removed and/or shadowbanned. However, we observed that there was no consistency in what sex education accounts reported to have been removed and/or shadowbanned on Instagram.

What was most surprising to me was that Meta never notified us that our account had been deleted. We only became aware of our deletion when a team member tried to log into our Instagram account and was met with a notification that the account had been disabled for a ‘violation of the community guidelines’. We did not receive an email outlining what picture triggered our deletion from Instagram, which guideline we had broken, or how we could contest this decision. In the absence of information provided by Meta, we assumed the account deletion resulted from the last photo that our account posted. This was a photograph of an adult woman in the snow wearing a woollen costume that was reminiscent of a vulva. We could not understand how this violated the Instagram Community Guidelines as the woman was fully clothed and not involved in sexual activity. Additionally, we had not received any prior warnings that our content was violating community guidelines despite Instagram having recently unveiled a ‘strike’ system that warned users that their account might be deleted (see Kastrenakes, 2019). Without Meta providing a clear explanation of what we were doing wrong before our account was deleted, we were unable to change our content to ensure it complied with the Instagram Community Guidelines.

Our experience of account deletion is similar to Are's (2022) description of the ‘automated powerlessness’ that she experienced when her accounts were erroneously removed from TikTok and Instagram. She described social media platforms’ reliance on automated content moderation, combined with their limited transparency about the decisions made and the process of contesting them, as causing deleted users to feel powerless. I felt powerless as we (unsuccessfully) used a generic form on Instagram's website to appeal this decision. While other team members felt that this form would make Instagram aware of its mistake and reinstate our account, I knew that we did not have the necessary relationships with media outlets and Meta that algorithmic gossip had highlighted as being essential to reversing the erroneous deletion of an account (see Are, 2023). As such, we lost access to three years’ worth of sex education content and the ability to share the web series content, which we had produced based on the affordances and platform vernaculars of Instagram, with our existing audience of 4000 followers and our target audience of teenage girls who primarily used this platform (rather than other platforms that sexual health organisations commonly used to share sex education content).

The deletion of our Instagram account made me question whether going forward social media should be a space for sex education. This concern was further heightened as I watched the continued deplatforming of sex workers and educators on Instagram over the next couple of years (see Are, 2022). Given that our deletion occurred after the post-production of our web series had been finalised and there was no transparency about what had caused it, this experience did not influence how we subsequently produced content. However, it demonstrated to us the significant time and effort needed to understand and navigate Meta's content moderation practices if we wanted to distribute sex education content via Instagram. As a volunteer-run organisation, we understood that we did not have the resources to continue navigating social media platforms’ opaque and constantly changing content moderation practices, which led to us deciding to wind up BB once we spent our paid advertising budget. Additionally, our removal from Instagram significantly restricted our ability to demonstrate to stakeholders our approach to sex education and the credibility of our messages. If we had decided to continue producing content, the inability to demonstrate a following and existing content would likely have significantly constrained our ability to access funding in the future.

Struggle with paid advertising

While we had originally envisioned that the distribution of the short entertaining teaser videos for each of the seven episodes of our web series would occur on Instagram, BB's deletion from Instagram led to our paid advertising campaign being distributed through Facebook, where we had an existing following of 1000 people. These videos were promoted to our target audience (13–18-year-old girls), with each teaser linking to the longer episode uploaded on YouTube. Six teaser videos were produced for each episode, with advertising initially placed behind the video that our team deemed most likely to capture the attention of teenagers.

Putting paid advertising behind these teasers turned out to be a much more challenging process than I had anticipated. When I put our teasers through Facebook's Business Manager, content that I had assumed should be approved under the Facebook Community Standards’ caveats on sex education was consistently rejected for advertising ‘adult goods and services’ and ‘adult content’ (Facebook, 2019a). These decisions made me aware of the Facebook Advertising Policies, which govern paid advertising on Facebook and Instagram. Despite being aware of algorithmic gossip around the sexist restrictions on advertising sex toys on Meta's platforms (see Dame and Unbound, 2019), I had never previously heard of the Facebook Advertising Policies.

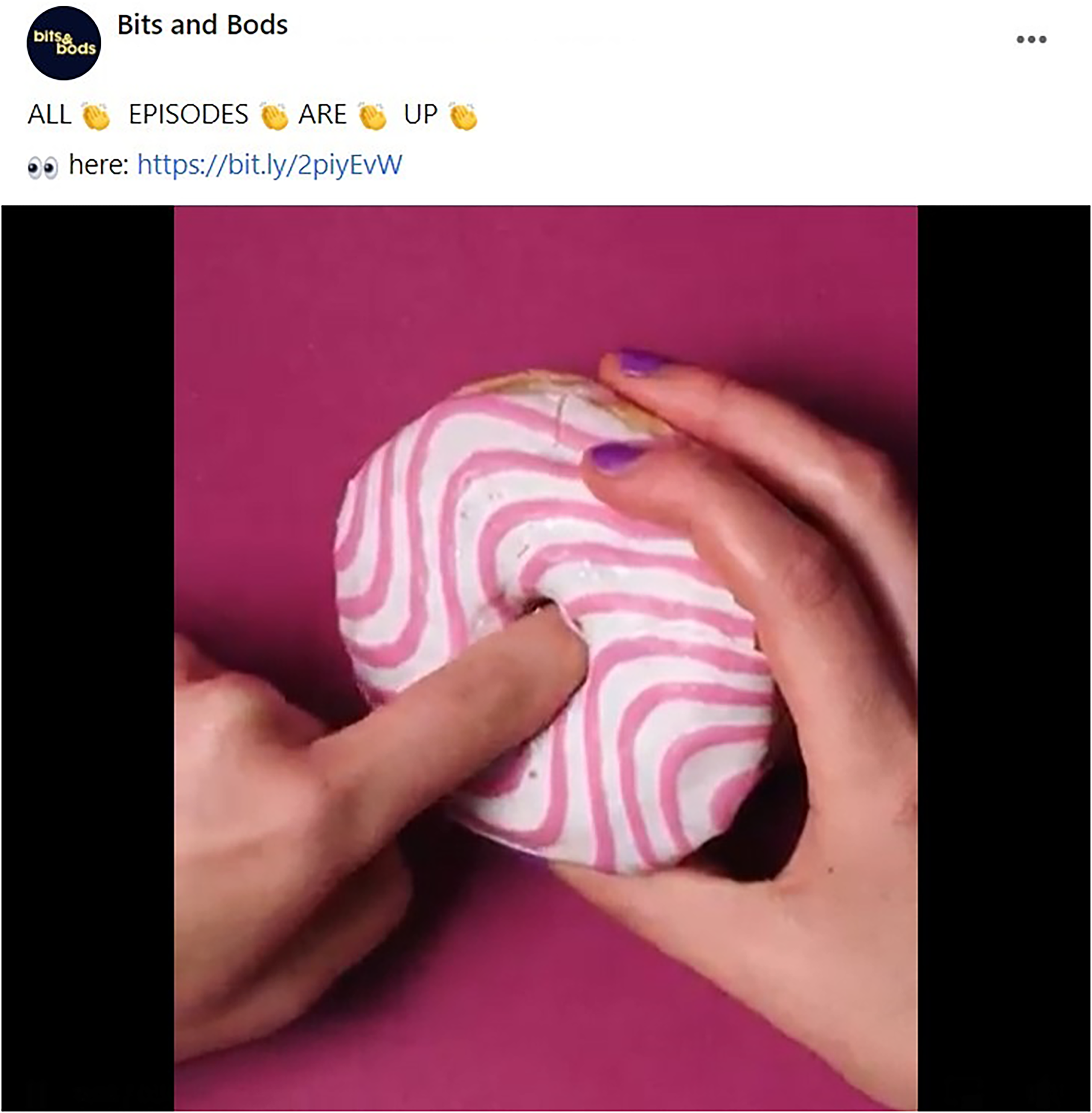

I quickly realised that all our paid advertising would be rejected as BB produced pleasure-informed sex education aimed at 13–18-year-olds. Sexual health messaging that incorporates pleasure and sexual health advertisements targeting minors are the two things that the Facebook Advertising Policies explicitly prohibit. In contrast to the lack of transparency around the deletion of our Instagram account, Meta provided high-level feedback about which part of the Facebook Advertising Policies each advertisement did not comply with. The most common rationale provided for rejecting our advertisements was that they contained ‘sexually suggestive’ content. This was often used to reject videos that included cutaways to inanimate objects, such as doughnuts, fruit, vegetables, tampons, and feather dusters (see Figure 1). Another common rationale for rejection was the inclusion of medically accurate diagrams of genitals, which were not mentioned in Meta's public-facing content moderation policies. Finally, some advertisements were rejected for including profanity (including words such as vagina, pussy, or queer).

Figure 1. Example of Bits and Bods (BB) teaser content rejected for implied sexual activity.

Image description. A screenshot of a BB Facebook post that says ‘All episodes are up’ with clap emojis between the words. This is accompanied by a still of a doughnut with a finger in the doughnut hole (alluding to penis-in-vagina sex).

After a painstaking week of appealing rejections, tweaking copy, and testing videos, I managed to place paid advertising aimed at 13–18-year-old girls behind teasers for all seven episodes. While this was mostly due to an excruciating process of trial and error, I did manage to successfully appeal the rejections of a number of advertisements by communicating that BB was a sexual health charity that was funded by the Victorian Government to provide sex education to young people. I did this to help the human moderators who handle appeals recognise the educational nature of our content, rather than relying on US cultural norms that perceive all sexual content as ‘pornographic’. This recontextualisation of our content would not have been possible if the appeals had been handled by automated content moderation that exclusively scans for flagged visuals (such as a medically accurate diagram of genitals) or words.

While I was relatively successful in appealing most of the advertisements, other advertisements were only approved after I tweaked their copy to better align with the Facebook Advertising Policies. This often involved removing the language and visuals that I knew would most resonate with young people. As XBIZ (an adult industry news publication) reported the historical changes to Facebook's Sexual Solicitation Community Standard a week before our campaign went live (see Turner, 2019), I knew to remove the playful vernacular and innuendo commonly used by young people to discuss sex (such as the peach, eggplant, and water droplets emojis as well as phrases such as ‘cumming soon’ and ‘doing it’) from our captions. I also removed all mentions of sex and substituted words likely to be flagged as ‘inappropriate’ with other euphemisms that automated content moderation and our target audience might not understand (for example, ‘doing it’ became ‘bang’). While I was able to work around the constraints of the Facebook Advertising Policies to reach teenagers, I was only able to share teasers with sterile copy that did not represent the playful and fun approach evident in our longer videos.

There were some advertisements that I struggled to get approved even with significant tweaking of their captions. This was because parts of their audio-visual content were prohibited, such as medically accurate diagrams of genitals or a ‘profane’ word spoken by the video talent. In these cases, I placed advertising behind less engaging teasers that complied with the Facebook Advertising Policies.

As BB wound up after placing these advertisements, the provision of high-level rationales for why each advertisement was rejected by Meta did not influence how we produced subsequent content. However, they provided me with a stronger understanding of where Meta envisions the boundary between ‘compliant’ educational sexual content and prohibited ‘adult content’, which made it easier for me to tweak the copy to comply with the Facebook Advertising Policies. Even so, the approved advertisements felt reminiscent of the sexual health messaging evident in school-based sex education, which is not relevant to the sexual cultures of young people. Thus, our experience highlights not only the significant time and expertise required to navigate Meta's opaque and conservative content moderation practices, but also how accounts sharing sex education are often faced with the choice of reaching their target audience or circulating engaging content.

Conclusion

This article documented two experiences of content moderation that I had in 2019 when I was co-running BB, a sex education web series aimed at teen girls and gender-diverse teens. Despite distributing what we thought was compliant sex education content, our Instagram account was deleted for violating the Instagram Community Guidelines in August 2019. This experience highlighted the significant time and expertise needed to ensure that our educational sexual content could remain on Instagram and reach our existing and target audiences (see Are, 2023). This conclusion was further reinforced when BB's paid advertising was systematically rejected for promoting ‘adult goods and services’ and ‘adult content’ (Facebook, 2019c). I put significant time into ensuring our content could reach teenage girls; however, this involved self-censoring our content by removing all language and imagery that aligned with the digital and sexual cultures of young people. Thus, our experience highlights not only the significant time and expertise required to navigate Meta's opaque and conservative content moderation practices but also how accounts sharing sex education are often faced with the choice of reaching their target audience or circulating engaging content.

While BB was drawn to social media by the promise of using ‘algorithmic capacities of social media platforms to ‘push’ educational [sexual] content to target audiences’ (McKee et al., 2018: 4585), our experiences of deplatformisation made me question whether Meta's platforms could be used to distribute sex education campaigns. This question is particularly poignant as Australian governments now fund sexual health organisations to undertake sexual health promotion campaigns aimed at young people (15–30 year olds) on social media platforms (Australian Department of Health, 2018) with most Australian sexual health organisations distributing this content on Instagram (Williams, 2022). However, BB's experience suggests that other sexual health organisations are likely to be unable to target advertisements to an important segment of young people, their accounts may be deleted at any point, and their content cannot use language and imagery that resonates with the digital and sexual cultures of young people. As such, this case study provides evidence that can be used by sexual health organisations, national governments, and/or inter-governmental organisations to lobby Meta to move to a more localised approach to moderating (educational) sexual content aimed at teenagers.

My discussion of the rejection of paid advertising as a form of content moderation expands the existing understanding of content moderation, which was historically focused on the removal of content. This experience is not limited to BB as the rejection of paid advertising was the most common type of content moderation experienced by sexual health organisations (Williams, 2022). Given the consistent enforcement of Facebook's Advertising Policies and the provision of the rationale behind rejected advertising, studying what advertising is rejected or approved can be used to better understand where Meta places the boundaries around ‘appropriate’ sex education content rather than analysing the vague terminology used within its policies (Gillespie, 2018).

While this study provides much-needed empirical evidence of the interaction between content moderation policies and the deplatformisation of educational sexual content, undertaking a case study limits the generalisability of my findings to other social media platforms. Similarly, staggered amendments to Meta's content moderation policies and the fast-changing nature of the practice of content moderation limit my findings to this specific point in time. Although my analysis focused on six months in 2019, the policies have not substantially changed. Indeed, Caroline Are's (2022, 2023) longitudinal autoethnography on distributing pole-dancing content on Instagram suggests that Meta's content moderation practices are becoming increasingly conservative. Finally, my focus on sex education content targeted at teenagers means that my findings are not necessarily generalisable to sexual health promotion campaigns aimed at adults, which are explicitly allowed within the Facebook Community Standards and Advertising Policies. However, as Australian sexual health organisations are funded to distribute content to both teenagers (15–17 year olds) and young adults (18–30 year olds), the circulation of content that engages teenagers could lead to Australian sexual health organisations being removed from Instagram. This would limit the ability of adults to access sexual health information on Instagram, despite policies specifically allowing the circulation of this information.

Noting these limitations, this study could be used to inform future research that draws on the experiences of diverse sexual health organisations to develop practical strategies that sexual health organisations and content creators can use to resist Meta's opaque and conservative content moderation practices (see Hall et al., 2013). Informed by qualitative research on content creators (Duffy and Meisner, 2023), practical strategies have already been developed based on interviews with employees from sexual health organisations (Williams, 2022, 2023) and content creators (including healthcare professionals and certified sexual health educators) (Delmonaco, 2023) about their experiences with content moderation (including how they use algorithmic gossip to resist content moderation). Identified strategies to resist content moderation of sexual health content include (but are not limited to) partnering with influencers to reach new audiences (i.e., bypassing paid advertising), using algospeak and memes to fly under the radar of automated content moderation, and producing entertaining and shareable social media content (as opposed to content with clear health messaging) (see McKee, 2012).

Acknowledgments

I would like to sincerely thank the team who ran BB, Kath Albury, and Anthony McCosker for supporting the documentation of our experiences. I am so grateful to the Layne Beachley Foundation, GLOBE, Victorian Women's Trust, Victorian Government – HEY Grant, The Channel, LINC, and individual donors for funding the production and distribution of the BB web series. Finally, I am deeply indebted to the feedback that the reviewers, Kath Albury, Taylor Hardwick, Nick Casey, David Williams, and Louise Williams provided on this article.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was conducted by the ARC Centre of Excellence for Automated Decision-Making and Society (CE200100005) and funded by the Australian Government through a Research Training Program (RTP) Scholarship. No additional grants were received in the development of this paper.